Abstract

Some clinical scientists are shifting from research on complete named therapy protocols to a more elemental approach—research on specific therapy components that contribute to therapy goals. To characterize and evaluate this emerging field, we systematically searched PsycINFO and Medline for studies evaluating therapy components. We identified 208 studies. In a scoping review, we map, explain, and critically appraise the seven research strategies employed: (a) expert opinion, (b) shared components, (c) associations between the presence of components and therapy effects, (d) associations between fidelity to components and therapy effects, (e) microtrials, (f) additive and dismantling trials, and (g) factorial experiments. Our examination reveals a need for (a) renewed emphasis on experimental trials (vs. meta-analyses testing associations less rigorously), (b) expanded efforts to locate components within the emerging fields of process-based and principle-guided psychotherapy, and (c) a shift from innovative stand-alone studies to development of a coherent science of therapy components.


Mental health problems are prevalent, burdensome, and costly (Costello et al., 2003; Patel et al., 2016; Whiteford et al., 2013). Although medications are available for some of these problems, therapy is widely used and often recommended as the first-line treatment. Decades of intervention research show that psychological therapies can effectively reduce mental health problems (e.g., Cuijpers, 2017; Cuijpers et al., 2014; Foa et al., 2005; Weisz, Kuppens, et al., 2017). Although this success is notable, numerous clinical scientists have noted that the magnitude of therapy effects is limited, that the mean effects of therapies have not improved measurably across the decades (Wampold et al., 1997; Weisz et al., 2019), that there may be an upper limit to effects that can be achieved with therapies as currently designed (Jones et al., 2019), and that improvement could be achieved by identifying and targeting the core processes underlying mental health problems (Hayes & Hofmann, 2020; Hofmann & Hayes, 2019; Holmes et al., 2014). Authors have emphasized differing paths to such deeper understanding (Weisz & Kazdin, 2017)—each potentially valuable. Here we focus on one specific path: identifying the components of psychological therapy that contribute to desired therapy outcomes. Discerning the components that contribute to desired therapy outcomes, and those that do not, could help make therapies more efficient—and potentially more effective—by informing decisions about which components to alter or eliminate and which to emphasize in treatment. In this scoping review, we map research strategies to identify effective therapy components and critically appraise their potential.

Psychological therapies have traditionally been organized within treatment protocols composed of multiple components: skill units designed to change a client’s way of thinking, feeling, or behaving. Cognitive behavioral therapy protocols for depression, for example, include various combinations of a dozen or more different components, such as psychoeducation about depression, mood monitoring, relaxation and self-calming, behavioral activation, identifying negative cognitive errors, and cognitive restructuring (e.g., Persons et al., 2001). Because evidence is scarce about which components actually contribute to treatment effects, most therapy protocols combine multiple components, sometimes on the basis of an untested assumption that each component adds value and sometimes because of uncertainty about which components might be safely eliminated. The result is complex multisession therapies that often require intensive therapist training and supervision for effective implementation. This adds cost and limits the spread of these therapies, and the length of treatment alone increases the risk that clients will not complete the protocols (Weisz, 2014). Knowing which therapy components drive therapy success could help improve the efficiency and feasibility of therapy and arguably its effectiveness.

Discerning the active ingredients of therapy fits what appears to be an emerging paradigm in intervention research: moving away from complete named therapies toward evidence-based processes linked to discrete evidence-based procedures (i.e., process-based therapy; Hayes & Hofmann, 2020; Hofmann & Hayes, 2019). This paradigm builds on the seminal work of functional analysis and early behavior therapy efforts to isolate active ingredients (for a review, see Haynes & O’Brien, 1990) and on earlier arguments for a return to the search for principles of change (e.g., Klepac et al., 2012; Rosen & Davison, 2003). This shift in focus from full therapy protocols to evidence-based processes may be usefully supported and informed by efforts to investigate and characterize the relevant evidence base and the strategies available to build that evidence base. In the present article, we seek to contribute to process-based thinking by identifying and critically evaluating research strategies for the investigation of intervention components, which could be viewed as building blocks for a process-based model.

Various terminology and definitions are used to refer to discrete parts, components, or aspects of psychological therapies. Many have adopted the term components to refer to therapeutic techniques that are frequently found in effective therapy protocols or manuals (e.g., Chorpita et al., 2005; Murray et al., 2014). Embry and Biglan (2008) used the term kernels to refer to single therapeutic techniques that have their own meaningful function and cannot be further disentangled without losing their function. A recent report by the U.S. Institute of Medicine (2015) referred to evidence-based “elements of interventions” and “elements of therapeutic change” (pp. xiii–xiv). In this article, we use the common term components and define it as discrete parts of therapy protocols that are hypothesized to affect therapy outcome at least partly independently of other components.

The Present Scoping Review

We aimed to map, explain, and critically appraise the research strategies that can be used to identify effective therapy components. To this end, we systematically searched the literature for studies that evaluated the merit of discrete therapy components. We adopted a scoping review approach—a survey and appraisal of research approaches rather than findings. Scoping reviews differ from classical reviews in that they do not intend to include all individual eligible studies and appraise individual study findings. Instead, scoping reviews intend to examine the variety and nature of research in a heterogeneous field, identify strengths and gaps, and guide future research (Tricco et al., 2018). In our case, we span different subfields within psychotherapy research (e.g., from adult depression to adolescent substance abuse and child anxiety) in which different terminology might be applicable. A scoping approach allowed us to globally, but systematically, review these subfields and identify trends over time and between subfields.

Method

Protocol and registration

We followed Preferred Reporting Items for Systematic Reviews and Meta-Analyses (PRISMA) guidelines for scoping reviews (ScR; Tricco et al., 2018) and published our protocol on OSF (https://osf.io/b7r6e) prospectively before study coding was finished on January 22, 2020.

Eligibility criteria

Criteria for study inclusion were (a) therapy was psychological (i.e., nonpharmacological), (b) therapy focused on reducing mental health problems, (c) the effects of one or more discrete (i.e., single) therapy components were evaluated, and (d) the component under evaluation was treatment specific (as opposed to common factors such as therapist skills and placebo). Studies evaluating multiple therapy components were included only if one or more components were evaluated individually. There were no exclusion criteria. We included the full life span (i.e., adults and youths) and all common mental health problems, including those identified as diagnostic categories in the fifth edition of the Diagnostic and Statistical Manual of Mental Disorders (American Psychiatric Association, 2013) and the International Classification of Diseases (World Health Organization, 2018), as well as symptoms of mental health problems identified via an array of standardized measures. We did not place restrictions on study quality or type of design for evaluating the component because variation in research strategies and their rigor was central to our review. No date limitations were placed on the search results.

Information sources

We searched PsycINFO and MEDLINE for publications up to August 2019, using keywords related to components (e.g., “element” and “ingredient”) and psychological therapy (e.g., “intervention” and “treatment”). Our protocol (https://osf.io/b7r6e) includes the full search strategy. We also searched the reference lists of included studies, of relevant review articles and meta-analyses, and of studies that experts alerted us to.

Data-charting process

Studies were coded for their sample (adults or youths), targeted mental health problem (e.g., anxiety, depression, or conduct problems), component under evaluation (e.g., relaxation, behavioral activation, or time-out), level of analysis (within persons, between persons, or between studies), and research strategy. The following design characteristics informed research strategy coding: whether components were evaluated using any quantitative indicator of the relation between the component and therapy outcomes (expert opinion, for example, uses none), whether relations between components and therapy outcomes were correlational (e.g., associations between the presence of components and therapy outcomes) or causal (e.g., manipulation of components and their effects on therapy outcomes), and whether components were implemented in isolation from other components (e.g., microtrials) or in the context of other components (e.g., additive/dismantling trials). We included designs that evaluated components within trials (e.g., dismantling trials) and those that evaluated components by synthesizing across trials (e.g., meta-analyses of associations between the presence of components and outcomes). The strategy categories thus resulted from an iterative process of examining the methods we observed in the literature and attempting to distinguish between these methods in various ways (e.g., correlational vs. experimental evidence; components implemented in isolation vs. in the context of other components).

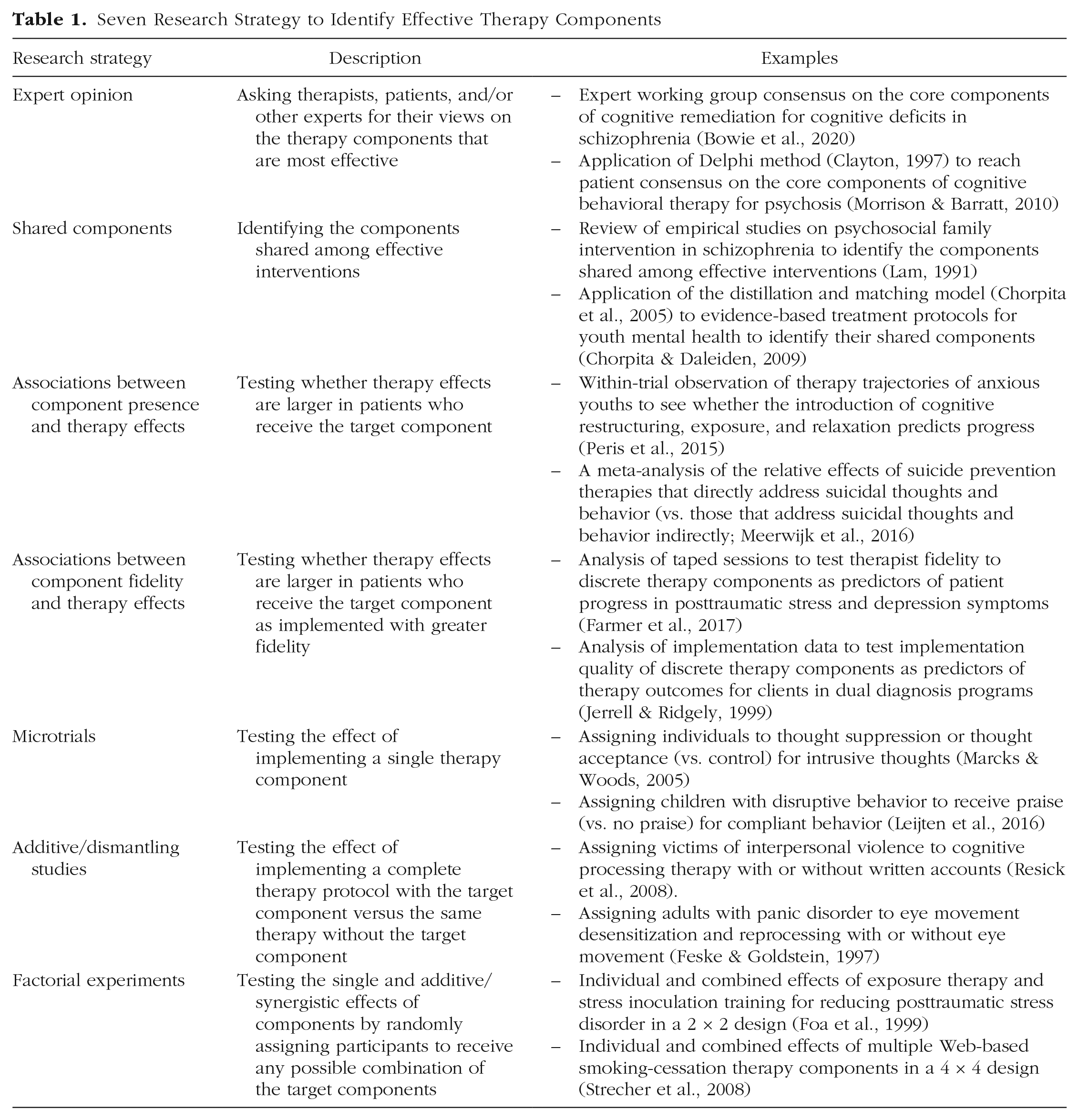

Combinations of these design characteristics led to the seven research strategies explained in Table 1. For our data-charting form, see Table S1 at https://osf.io/b7r6e. A graduate-level second coder coded a random selection of 10% of the studies with good agreement (κ = .92).
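For readers less familiar with the agreement statistic, Cohen’s kappa corrects the observed agreement p_o for the agreement p_e expected by chance from each coder’s marginal code frequencies, κ = (p_o − p_e)/(1 − p_e). The sketch below computes it for two coders assigning one strategy code per study; the function name and the ten codes in the usage example are invented for illustration and are not data from this review.

```python
from collections import Counter

def cohens_kappa(coder_a, coder_b):
    """Cohen's kappa for two coders assigning one nominal code per study."""
    if len(coder_a) != len(coder_b) or not coder_a:
        raise ValueError("coders must rate the same, nonempty set of studies")
    n = len(coder_a)
    # Observed agreement: share of studies given the same code by both coders.
    p_observed = sum(a == b for a, b in zip(coder_a, coder_b)) / n
    # Chance agreement: products of the two coders' marginal proportions, summed over codes.
    freq_a, freq_b = Counter(coder_a), Counter(coder_b)
    p_chance = sum(freq_a[c] * freq_b[c] for c in freq_a) / n**2
    if p_chance == 1:
        return 1.0  # degenerate case: both coders used one identical code throughout
    return (p_observed - p_chance) / (1 - p_chance)

# Invented codes for 10 studies (hypothetical, not from the review's sample).
a = ["micro", "micro", "additive", "additive", "factorial",
     "presence", "micro", "expert", "additive", "micro"]
b = ["micro", "micro", "additive", "presence", "factorial",
     "presence", "micro", "expert", "additive", "micro"]
print(round(cohens_kappa(a, b), 3))  # → 0.865
```

Here the coders agree on 9 of 10 studies (p_o = .90), but chance agreement is .26, so kappa is lower than raw agreement.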

Seven Research Strategies to Identify Effective Therapy Components

Data synthesis

First, we identified the relative use of each research strategy across and within subfields of psychotherapy research. We grouped studies by type of research strategy and conducted basic numerical analysis of the number and percentage of studies employing each research strategy overall and per subfield (i.e., adult and youth therapy and different mental health problems).
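The counting step is simple, but a sketch may help make the two levels of tabulation (overall and within subfield) explicit. The record format, a `strategy` field plus subfield fields such as `sample`, and the example records are hypothetical assumptions; they are not this review’s coding sheet or data.

```python
from collections import Counter, defaultdict

def strategy_shares(studies):
    """Map each research strategy to (number of studies, rounded percentage)."""
    counts = Counter(s["strategy"] for s in studies)
    total = len(studies)
    return {k: (v, round(100 * v / total)) for k, v in counts.items()}

def shares_by_subfield(studies, key):
    """Apply strategy_shares separately within each level of `key` (e.g., "sample")."""
    groups = defaultdict(list)
    for s in studies:
        groups[s[key]].append(s)
    return {level: strategy_shares(group) for level, group in groups.items()}

# Invented example records (hypothetical schema and values).
studies = [
    {"sample": "adults", "problem": "depression", "strategy": "additive/dismantling"},
    {"sample": "adults", "problem": "anxiety", "strategy": "additive/dismantling"},
    {"sample": "adults", "problem": "substance use", "strategy": "factorial"},
    {"sample": "youths", "problem": "conduct problems", "strategy": "microtrial"},
    {"sample": "youths", "problem": "conduct problems", "strategy": "microtrial"},
    {"sample": "youths", "problem": "anxiety", "strategy": "presence-outcome association"},
]
print(strategy_shares(studies))
print(shares_by_subfield(studies, "sample")["youths"])
```

The same helper can be applied with `key="problem"` for mental health problems or with a publication-period field for trends over time.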

Second, we critically appraised the value of each research strategy on three criteria: causal inference, generalizability, and feasibility. (a) Causal inference reflects how well the research strategy allows us to draw conclusions about the causal effects of the component on a relevant therapy outcome (i.e., internal validity). We drew on Rubin’s model of causal inference (e.g., Holland, 1988). (b) Generalizability reflects how well the research strategy permits generalizing from study findings to everyday clinical practice. Although generalizability depends on more than just the research design (e.g., representative sampling and ecologically valid measurement), design features can contribute to the generalizability of the study findings. Lab studies and efficacy studies will, for example, score lower on generalizability than studies implemented in clinical practice contexts because for lab studies and efficacy studies, it often remains unclear how the component will play out in less controlled settings. Likewise, components evaluated in isolation from the rest of therapy (e.g., in microtrials; Howe et al., 2010) will score lower than components evaluated in the context of therapy (e.g., in additive trials) because the former cannot account for interactions with other components in the therapy. (c) Feasibility reflects the complexity and costs of applying the research strategy. Meta-analyses of associations between component implementation and therapy effects, for example, use existing data, contributing to their feasibility, whereas additive and dismantling trials require large new studies to be conducted.
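To illustrate why randomization matters under Rubin’s potential-outcomes model, here is a minimal simulation sketch. Each simulated client has two potential outcomes, only one of which is observed. The data-generating model, the baseline-severity confounder, and the function name are hypothetical assumptions for illustration, not part of the review’s method.

```python
import random

def simulate(n, true_effect, randomized, seed=1):
    """Difference in mean improvement between clients with and without a component.

    Each simulated client has two potential outcomes, y0 (without the component)
    and y1 (with it); only the one matching the received condition is observed.
    """
    rng = random.Random(seed)
    treated, control = [], []
    for _ in range(n):
        severity = rng.gauss(0, 1)        # baseline severity, a potential confounder
        y0 = -severity + rng.gauss(0, 1)  # milder cases improve more, regardless of component
        y1 = y0 + true_effect             # same client, had the component been delivered
        if randomized:
            gets_component = rng.random() < 0.5
        else:
            gets_component = severity < 0  # hypothetical: milder cases receive the component
        if gets_component:
            treated.append(y1)
        else:
            control.append(y0)
    return sum(treated) / len(treated) - sum(control) / len(control)

print(round(simulate(20_000, 0.3, randomized=True), 2))   # close to the true effect, 0.3
print(round(simulate(20_000, 0.3, randomized=False), 2))  # inflated by confounding
```

Under random allocation the simple difference in means recovers the average causal effect; when uptake depends on severity, the same difference mixes the component’s effect with preexisting differences between clients.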

Results

Sources of evidence

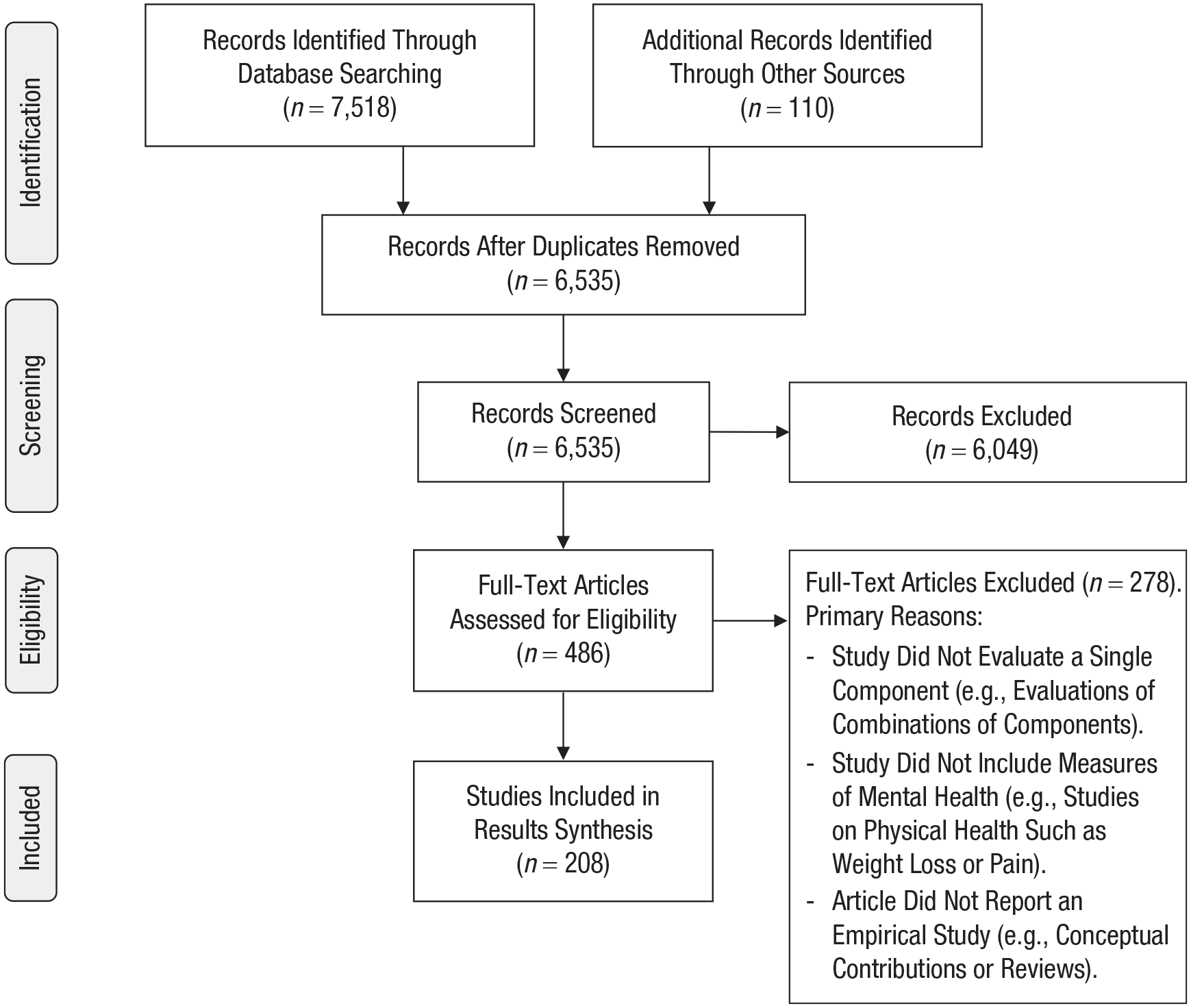

Our systematic search yielded 6,535 unique citations. On the basis of titles and abstracts, we judged 486 articles to be potentially eligible. From these articles and their reference lists, we identified 208 studies that met the inclusion criteria (Fig. 1). For relevant details of the 208 included studies, see Tables S2 and S3 at https://osf.io/b7r6e.

Preferred Reporting Items for Systematic Reviews and Meta-Analyses (PRISMA) flow diagram of included studies.

Studies were published between 1975 and 2019, with an even distribution of studies published before 2000 (n = 69), between 2000 and 2010 (n = 70), and after 2010 (n = 69). The studies covered the broad psychological therapy literature: 59% included adults (n = 123), 35% included youths (n = 72), and 6% included both adults and youths (n = 13). Most studies targeted depression (22%; n = 45), conduct problems (21%; n = 44), anxiety (18%; n = 36), or substance use (15%; n = 31). As expected, terminology differed greatly both between and within subfields of the literature; this was the primary reason for adopting a systematic scoping review rather than a traditional systematic review. In fact, many studies did not include the term component or any synonym, only terms that reflected the component under evaluation (e.g., relaxation, cognitive restructuring). These studies were identified from the reference lists of other studies.

In addition to the primary studies, we identified a review by Michie et al. (2018) of methods used to evaluate the effectiveness of behavior change techniques in health research. Michie and colleagues reviewed a different literature (i.e., health-related behavior), including only studies explicitly mentioning “behavior change techniques” and only studies up to February 2015. Yet their review provides a valuable check on whether a different but related research field covers the same range of strategies to identify effective intervention components. This was not the case: our review uncovered a broader range of strategies (seven compared with three). For how the strategies identified by Michie and colleagues map onto those from the current review, see Table S5 at https://osf.io/b7r6e.

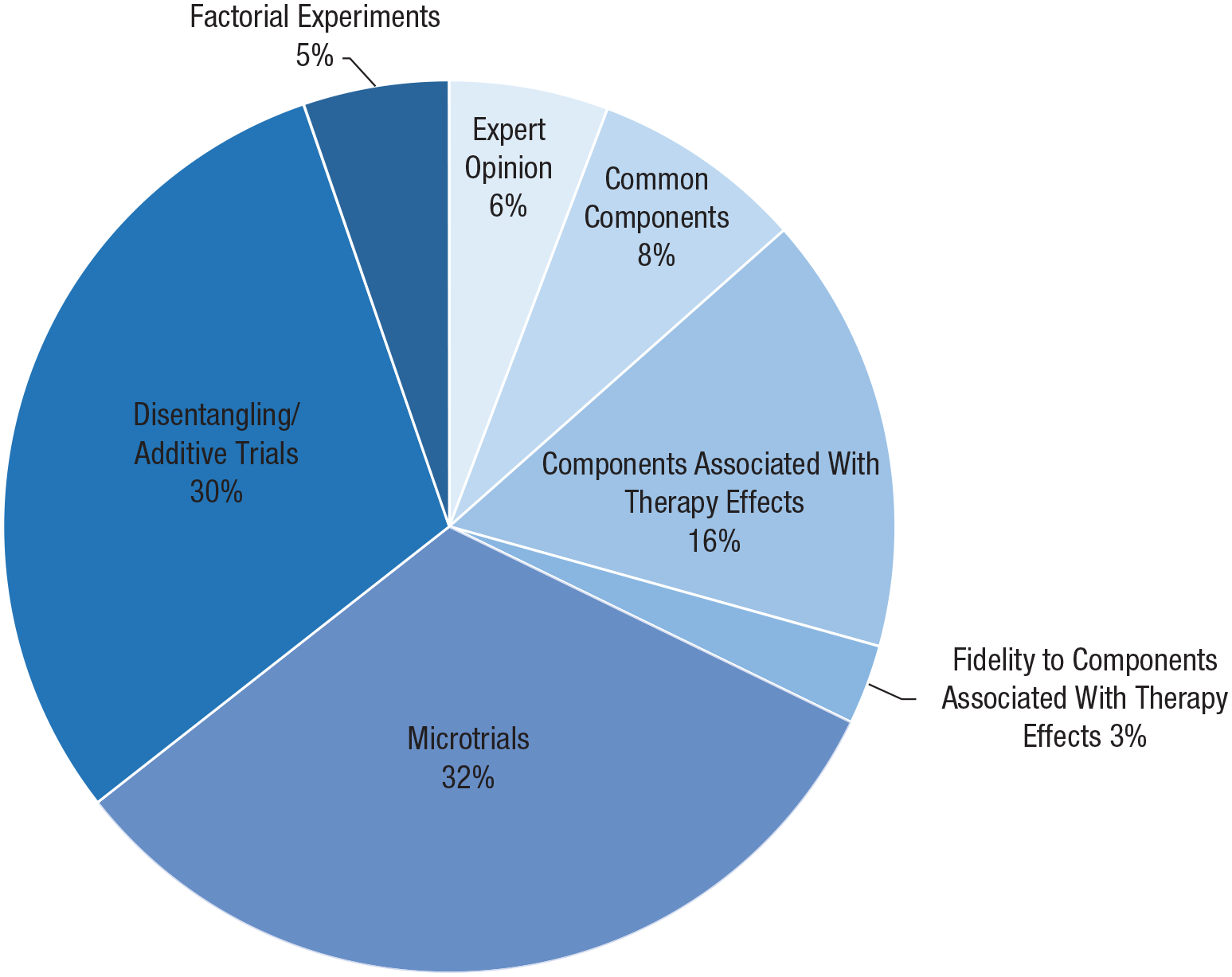

Descriptive analysis

Figure 2 shows the relative use of each strategy. The most frequently used strategies are microtrials (32%; n = 67) and additive/dismantling trials (30%; n = 63). Together with the much less frequently used factorial experiments (5%; n = 11), this means around two thirds of the studies used an experimental approach. Of the nonexperimental approaches, the most frequently used strategy is examining associations between the presence of components and therapy outcomes (16%; n = 33).

Relative use of each strategy.

Differences in research strategies between subfields

For the relative use of each strategy per subfield (by publication year, for adult vs. youth literature, and for different mental health problems), see Tables S4a through S4c at https://osf.io/b7r6e. Here, we highlight three main patterns: First, additive/dismantling trials are more common in the adult literature (42% vs. 8% of studies on youths; n = 52 and n = 6, respectively), and microtrials are more common in the youth literature (57% vs. 21% of studies on adults; n = 41 and n = 26, respectively). In other words, experimental studies are equally common in both literatures, but the dominant type of experimental design differs.

Second, additive/dismantling trials are particularly common in the anxiety and depression literature (56% and 35%, n = 20 and n = 15, respectively, vs. 10%–14%, ns = 3–6 in other fields), microtrials are more common in the conduct problems literature (59%, n = 26 vs. 15%–35%, ns = 6–52 in other fields), and factorial experiments are more common in the substance use literature (19%, n = 6 vs. 2%–7%, ns = 9–14 in other fields). These patterns overlap with the difference between the adult and youth literatures because the majority of studies on anxiety and depression (86%; n = 67) are in adults and the majority of studies on conduct problems (91%; n = 40) are in youths. The relatively frequent use of factorial experiments in the substance use literature seems independent of trends in the adult and youth literatures.

Additive/dismantling trials have become rarer over time (48% of studies before 2000, n = 33; 30% between 2000 and 2010, n = 21; 13% after 2010, n = 9), whereas the number of studies on associations between the presence of components and therapy effect sizes, often studied in systematic reviews and meta-analyses, has increased over time (3% before 2000, n = 2; 20% between 2000 and 2010, n = 14; 25% after 2010, n = 17). In other words, there seems to be a shift from individual experimental trials to systematic reviews and meta-analyses of associations between the presence of components and therapy outcomes across trials.

Critical appraisal of research strategies

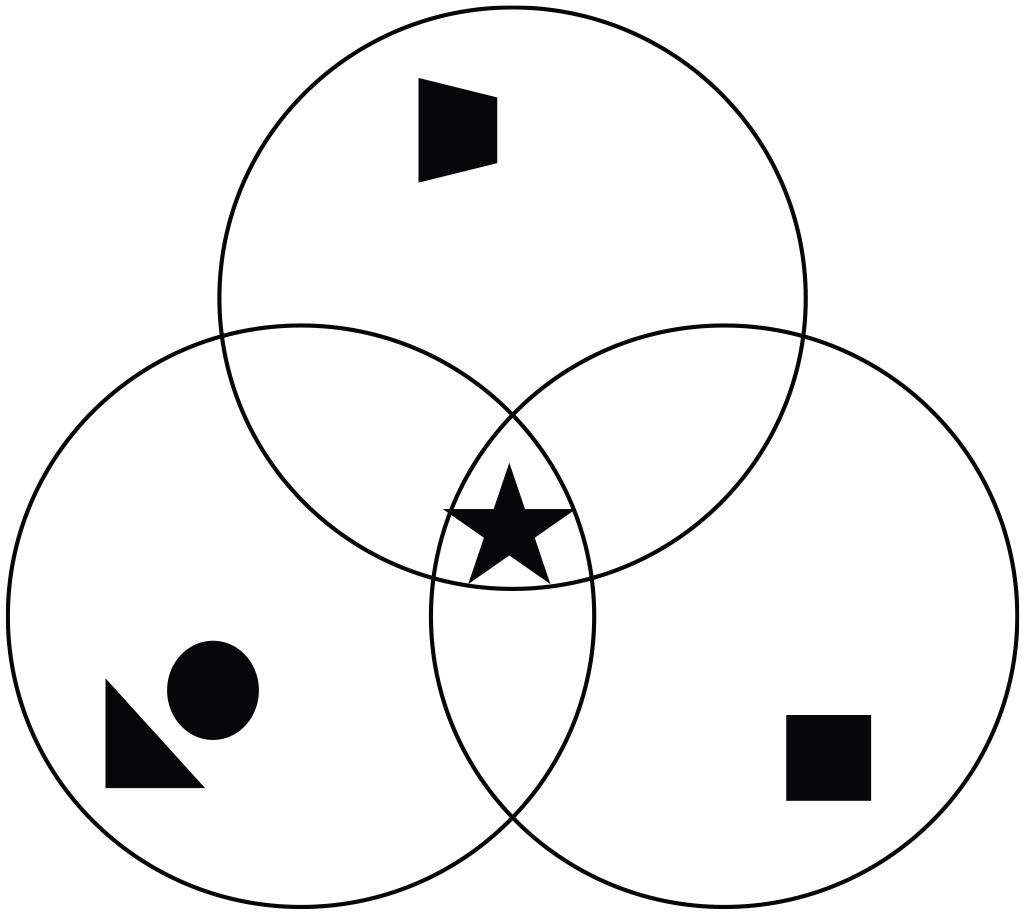

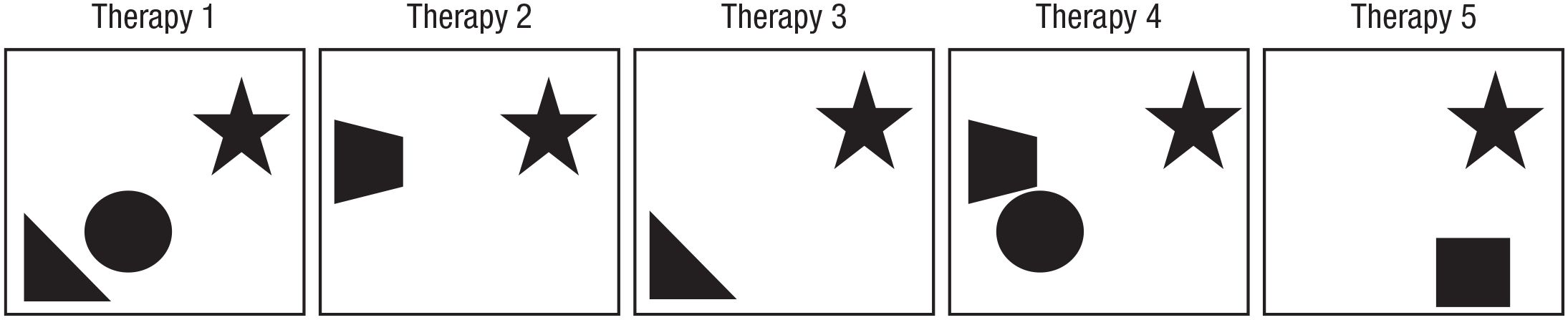

We critically appraised the seven research strategies on three criteria: causal inference, generalizability, and feasibility. In addition, we illustrate the research strategies in Figures 3 through 9. In these figures, the different shapes (e.g., circle, square, triangle) reflect different therapy components, and the star reflects the target component under evaluation.

Strategy 1: expert opinion

Strengths of this strategy (Fig. 3) include its feasibility and ability to bring together information from diverse panels of patients and therapists working in different contexts, contributing to the generalizability of study findings. Limitations include a potential lack of empirical evidence for the opinions put forward—it is unclear whether the components that patients and therapists perceive as most important for therapeutic change are indeed the drivers of therapeutic change. In addition, most Delphi studies have generated lists of dozens of components rather than a carefully distilled intelligible selection of components (e.g., Duncan et al., 2004, and Morrison & Barratt, 2010, each identified more than 70 components). Expert opinion thus may be helpful to start cataloging potentially relevant therapy components, but it is subject to conceptual bias on the part of respondents and cannot actually distinguish between effective and superfluous components.

Using expert opinion to identify effective components. The symbols (star, square, etc.) represent different therapy components; the overlapping circles represent different expert perspectives. Components that experts agree are most important might be effective components. In the example presented here, this is the “star component.”
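As a rough illustration of how expert ratings can be aggregated, the sketch below flags components that a set share of experts rate as important. The 1–5 rating scale, the cutoff of 4, the 75% consensus threshold, and the ratings themselves are all illustrative assumptions, not values from the reviewed Delphi studies.

```python
def consensus_components(ratings, threshold=0.75, cutoff=4):
    """Flag components that a share >= threshold of experts rate >= cutoff.

    ratings: {component: [one rating per expert on a 1-5 scale]} (hypothetical format).
    threshold and cutoff are illustrative conventions, not values from the review.
    """
    flagged = []
    for component, scores in ratings.items():
        share = sum(score >= cutoff for score in scores) / len(scores)
        if share >= threshold:
            flagged.append((component, share))
    # Highest agreement first; ties broken alphabetically for a stable order.
    return sorted(flagged, key=lambda item: (-item[1], item[0]))

ratings = {  # invented ratings from four experts
    "behavioral activation": [5, 5, 4, 4],
    "relaxation": [3, 4, 2, 5],
    "cognitive restructuring": [4, 5, 5, 3],
    "mood monitoring": [2, 3, 3, 4],
}
print(consensus_components(ratings))
```

The output ranks agreement among respondents, not effectiveness, which is the core limitation of this strategy.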

Strategy 2: components shared by effective therapies

Strengths of this strategy (Fig. 4) include its feasibility (i.e., using existing data reduces costs) and the possibility of including wide ranges of therapies and outcomes, contributing to the generalizability of study findings. In addition, this strategy often opens up the black boxes of “branded” therapy protocols that are implemented under different names but largely include the same components (e.g., Sprenkle & Blow, 2004). Limitations include the lack of any assessment of covariation between components and outcomes and the inability to draw causal conclusions about whether the identified components indeed drive the identified effects. In addition, most studies treat all empirically supported therapies similarly even though the magnitude of their effects may differ substantially.

Using shared components of effective therapies to identify effective components. The symbols (star, square, etc.) represent different therapy components; the boxes represent different effective therapies. Components shared across effective therapies might be effective components. In the example presented here, this is the “star component.”
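A minimal sketch of the shared-components logic is a frequency count of components across protocols judged effective. The protocol contents are invented, and `min_share` is a hypothetical parameter; note that nothing in the computation relates components to outcomes, mirroring the limitation noted for this strategy.

```python
from collections import Counter

def shared_components(protocols, min_share=1.0):
    """Components present in at least `min_share` of the listed effective protocols."""
    counts = Counter(c for components in protocols.values() for c in set(components))
    n = len(protocols)
    return sorted(c for c, k in counts.items() if k / n >= min_share)

protocols = {  # invented protocol contents
    "Protocol A": {"psychoeducation", "behavioral activation", "cognitive restructuring"},
    "Protocol B": {"psychoeducation", "behavioral activation", "relaxation"},
    "Protocol C": {"psychoeducation", "behavioral activation", "relaxation", "mood monitoring"},
}
print(shared_components(protocols))                 # present in all three protocols
print(shared_components(protocols, min_share=0.5))  # present in at least half
```

Every protocol here is treated as equally effective, which is the same simplification criticized above.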

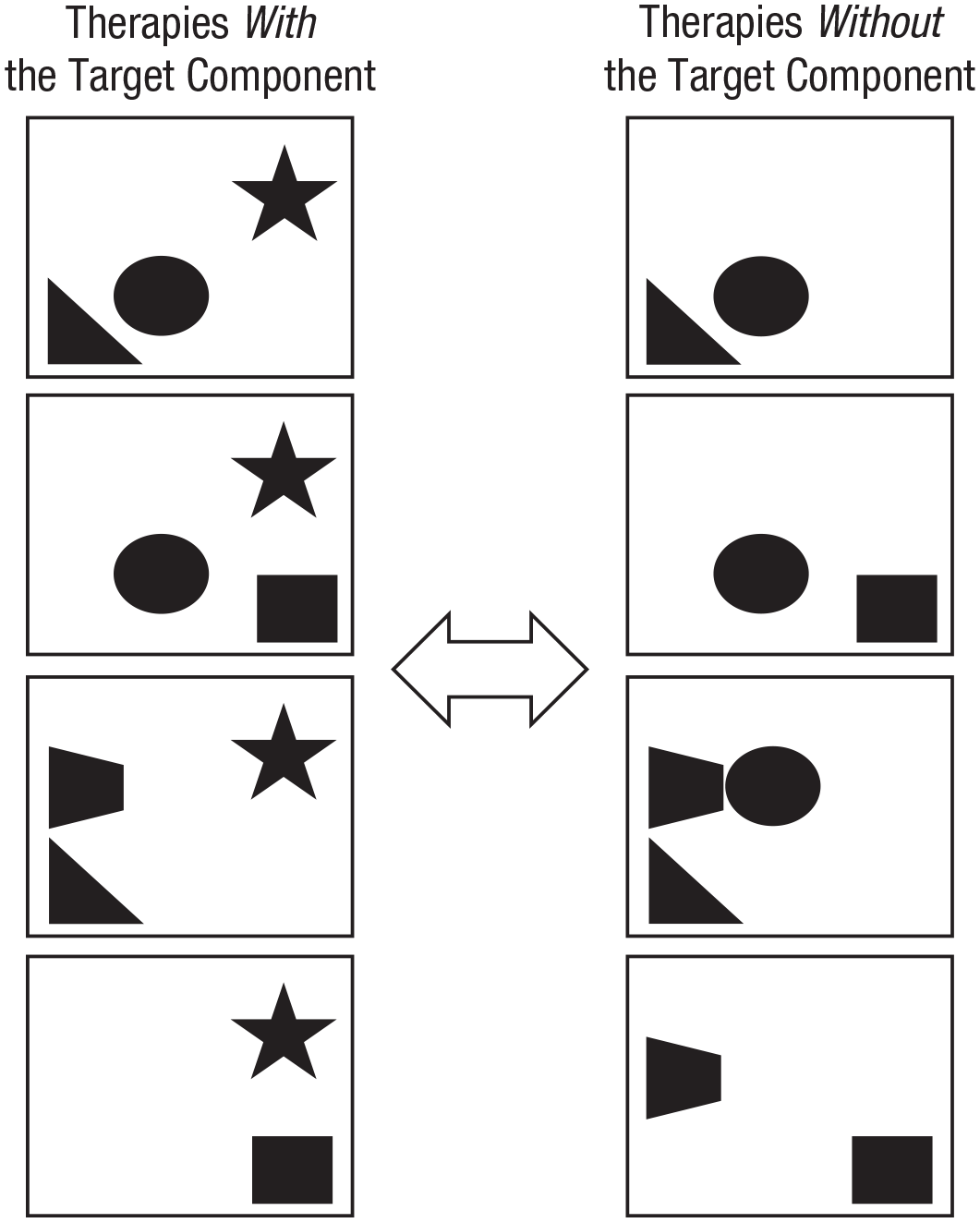

Strategy 3: associations between component implementation and therapy effects

Strengths of this strategy (Fig. 5) include integrating information from a range of therapies, components, studies, and contexts, contributing to the generalizability of study findings. It can also help slim down the often long lists of shared components by identifying components that are not associated with therapy effects. In addition, extending this strategy to evaluate associations between combinations of components and therapy effects (e.g., network meta-analysis) overcomes a limitation of most other strategies: their focus on individual components, even though components may interact in exerting therapy effects. Limitations of these methods, especially when adopting a single-variable approach, include the third variable problem: Implementation of components is not protected by randomization, and components often cluster within trials, leading to confounded associations (Lipsey, 2003; Petticrew et al., 2012).

Testing associations between components and therapy effects in a meta-analysis. The component of interest is the star; this component is present in therapies at the left and absent in therapies at the right. If therapies with the target component are more effective than therapies without the target component, then the target component may be an effective component.
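To make the single-component approach concrete, the sketch below pools trial effect sizes separately for trials with and without a target component and takes the difference. For brevity it assumes a fixed-effect (inverse-variance) model, whereas published meta-analyses commonly fit random-effects meta-regressions; the trial data and function names are hypothetical. Because the contrast is between trials rather than between randomized arms, it remains exposed to the third variable problem.

```python
import math

def pooled(effects):
    """Fixed-effect (inverse-variance) pooled estimate and its standard error."""
    weights = [1 / v for _, v in effects]
    estimate = sum(w * d for w, (d, _) in zip(weights, effects)) / sum(weights)
    return estimate, math.sqrt(1 / sum(weights))

def component_contrast(trials, component):
    """Pooled effect of trials with `component` minus pooled effect of trials without it.

    trials: list of (effect_size, variance, set_of_components) tuples.
    The contrast is an association across trials, not a randomized comparison.
    """
    with_c = [(d, v) for d, v, comps in trials if component in comps]
    without_c = [(d, v) for d, v, comps in trials if component not in comps]
    if not with_c or not without_c:
        raise ValueError("need trials both with and without the component")
    (est1, se1), (est0, se0) = pooled(with_c), pooled(without_c)
    return est1 - est0, math.sqrt(se1**2 + se0**2)

trials = [  # invented (effect size d, sampling variance, components delivered)
    (0.6, 0.04, {"behavioral activation", "relaxation"}),
    (0.5, 0.04, {"behavioral activation"}),
    (0.3, 0.04, {"relaxation"}),
    (0.2, 0.04, {"psychoeducation"}),
]
diff, se = component_contrast(trials, "behavioral activation")
print(round(diff, 2), round(se, 2))
```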

In this strategy, the typically adopted single-component approach (e.g., Leijten et al., 2018, 2019) can be extended to identify combinations of therapy components that yield the strongest effects, using, for example, network meta-analysis (Caldwell et al., 2005; Pompoli et al., 2018), qualitative comparative analysis (Rihoux & Ragin, 2009; Thongseiratch et al., 2020), or meta-analytic classification and regression trees (meta-CART; Breiman et al., 1984). Each of these strategies tests how different combinations of therapy components are associated with therapy effects: network meta-analysis by identifying clusters of components that collectively define a coherent therapy approach, qualitative comparative analysis by identifying combinations of components that are present specifically in more effective therapies, and meta-CART by testing interactions between components.

Some of these strategies partly overcome the third variable problem by involving direct comparisons protected by randomization (i.e., head-to-head comparisons within trials) in addition to comparisons not protected by randomization (i.e., between trials).
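The combination-based methods named above each require dedicated modeling. As a much cruder descriptive stand-in, and explicitly not network meta-analysis, qualitative comparative analysis, or meta-CART, one can tabulate inverse-variance pooled effects by the exact combination of components each trial delivered. The component labels, effect sizes, and function name below are invented.

```python
from collections import defaultdict

def effect_by_combination(trials):
    """Inverse-variance pooled effect for each distinct combination of components.

    trials: list of (effect_size, variance, set_of_components) tuples.
    Returns (components, pooled_effect, n_trials) rows, strongest effect first.
    """
    groups = defaultdict(list)
    for effect, variance, components in trials:
        groups[frozenset(components)].append((effect, variance))
    table = []
    for combo, rows in groups.items():
        weights = [1 / v for _, v in rows]
        pooled = sum(w * d for w, (d, _) in zip(weights, rows)) / sum(weights)
        table.append((sorted(combo), round(pooled, 2), len(rows)))
    return sorted(table, key=lambda row: -row[1])

trials = [  # invented parent-training trials
    (0.7, 0.05, {"praise", "time-out"}),
    (0.5, 0.05, {"praise", "time-out"}),
    (0.4, 0.05, {"praise"}),
    (0.2, 0.05, {"time-out"}),
]
for row in effect_by_combination(trials):
    print(row)
```

Such a table describes which combinations co-occur with larger effects but, like the single-component contrast, rests on between-trial comparisons.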

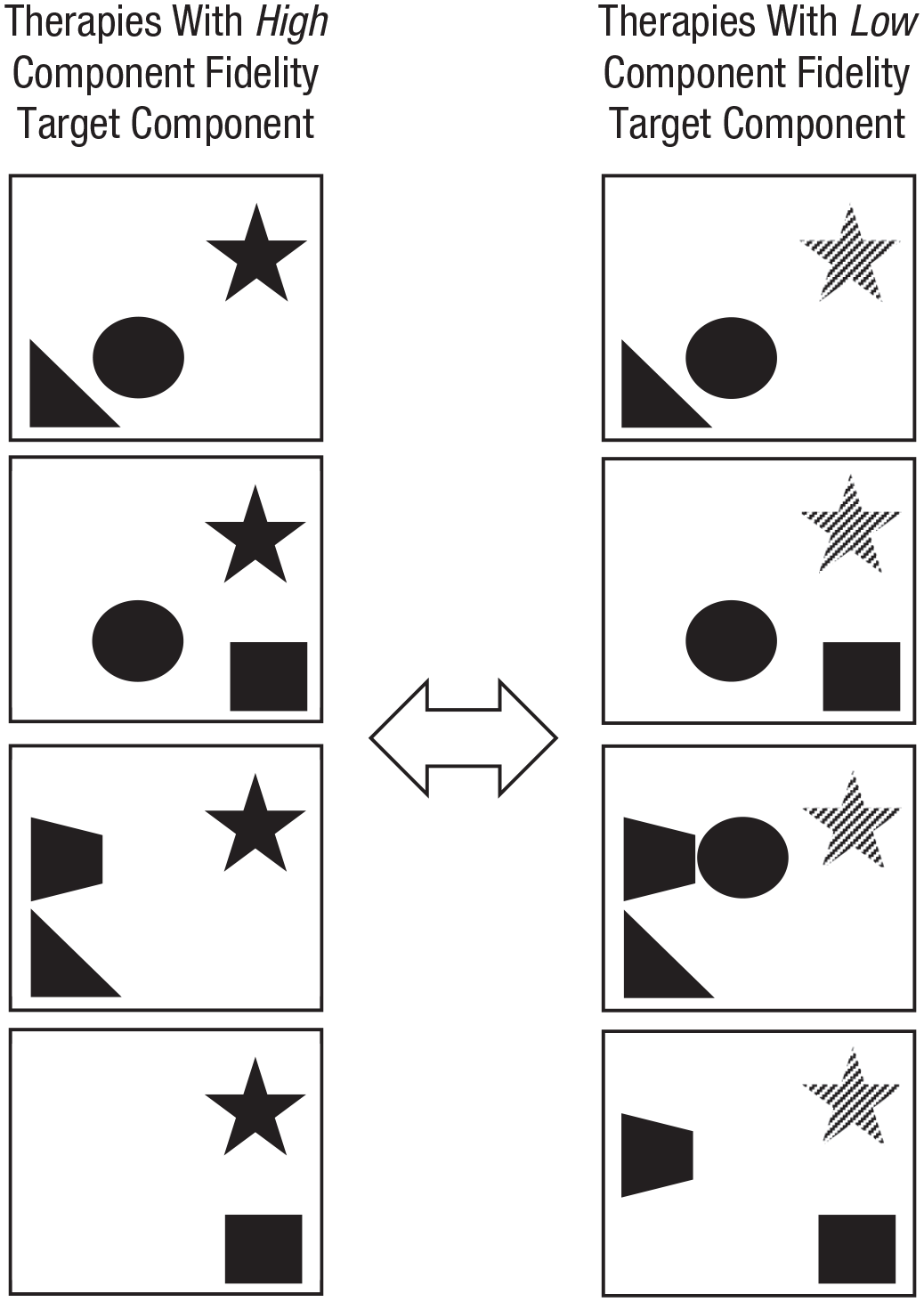

Strategy 4: associations between component fidelity and therapy effects

Strengths and limitations of this strategy (Fig. 6) are similar to those of Strategy 3: Both are relatively feasible because they use existing data, but both rely on correlational rather than causal evidence. Although most published research on fidelity pertains to fidelity at the protocol level rather than the individual-component level, measures of fidelity are almost invariably composed of assessments of adherence to individual components. Making greater use of these more precise component-level data could expand the application of this strategy.

Testing associations between component fidelity and therapy effects in a meta-analysis. The component of interest is the star; dark stars at the left represent high fidelity of therapists to the component, and lighter stars at the right represent lower fidelity to the component. If therapies are more effective when fidelity to the target component is high, then the target component may be an effective component.
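A minimal version of this strategy correlates trial-level fidelity to one component with trial effect sizes. The sketch uses an unweighted Pearson correlation for simplicity (a meta-analysis would typically weight trials by precision), and the fidelity ratings and effect sizes are invented.

```python
import math

def pearson_r(x, y):
    """Pearson correlation between trial-level fidelity ratings and effect sizes."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)

fidelity = [0.9, 0.8, 0.6, 0.5, 0.3]      # invented adherence ratings for one component
effects = [0.70, 0.55, 0.50, 0.35, 0.20]  # invented effect sizes of the same five trials
print(round(pearson_r(fidelity, effects), 2))
```

A strong correlation here would still be compatible with a third variable, such as therapist experience, driving both fidelity and outcomes.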

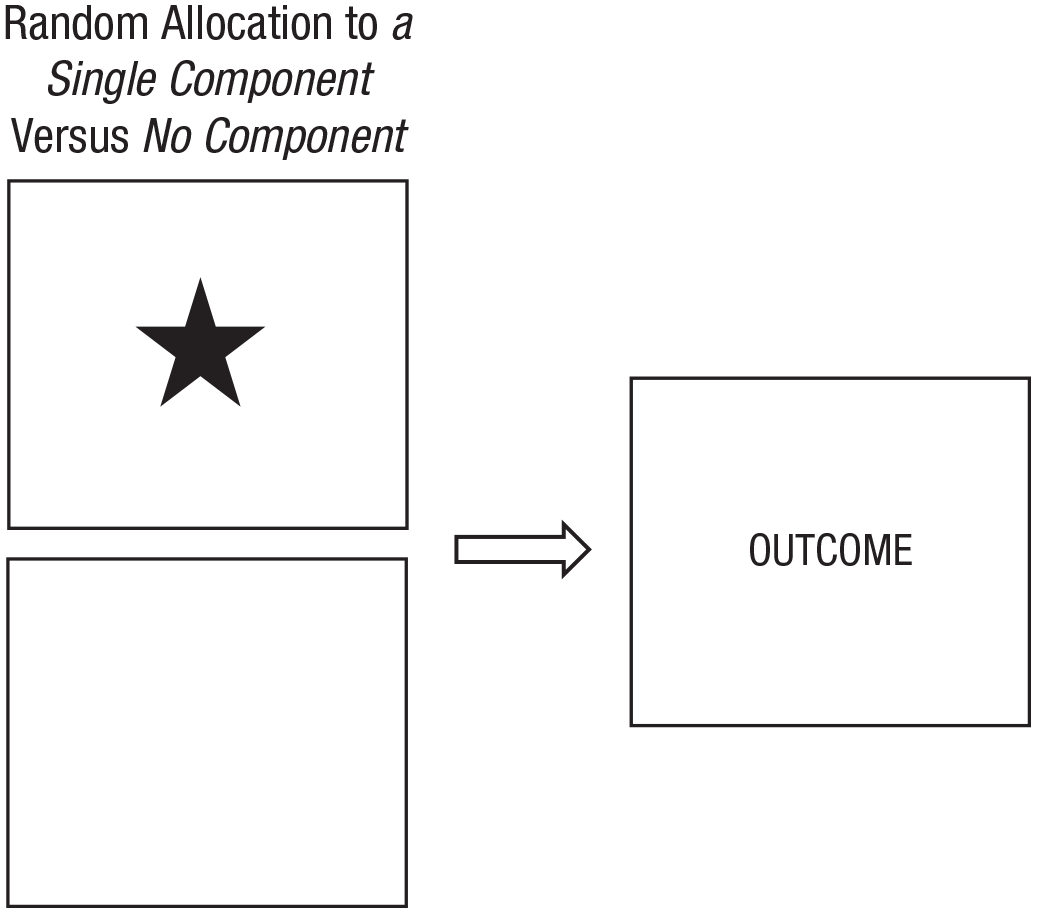

Strategy 5: microtrials

Strengths of this strategy (Fig. 7) include its experimental design, which allows for drawing causal conclusions about component effects. In addition, this strategy is more feasible than other experimental strategies: It tests the effects of implementing one component on a proximal outcome rather than the effects of implementing a comprehensive set of components on a distal outcome. Implementing only one component requires fewer resources, and measuring more proximal outcomes tends to yield larger effects, so modest sample sizes can suffice for rigorous hypothesis testing. The main limitation of this strategy concerns generalizability. Because microtrials test the effects of components in isolation from other components, their effects cannot be easily generalized to therapy practices, or standardized protocols, in which multiple therapy components might interact in shaping therapy effects. Instead, microtrials fill a gap between basic clinical science and intervention science by testing whether proximal factors associated with mental health problems (e.g., negative mood) can be changed by discrete therapy components (e.g., relaxation) and whether such changes in turn affect indicators of mental health outcomes (e.g., depression symptoms; Howe et al., 2010; Leijten et al., 2015). Structurally, microtrials resemble a procedure the U.S. National Institute of Mental Health has called experimental therapeutics, a proposed strategy for identifying changes in proximal factors (psychological and/or biological) that may need to be targeted to produce intervention effects on more distal mental health outcomes (Gordon, 2017).

Testing the individual effects of a target component in a microtrial. The component of interest is the star. Participants are randomly allocated to either receive this therapy component or to receive no therapy components. If the component changes the outcome of interest, the target component is an effective component.
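Because a microtrial randomizes participants to a single component or to no component and measures a proximal outcome, its primary effect estimate can be as simple as a standardized mean difference. The sketch below computes Cohen’s d with a pooled standard deviation; the outcome (post-session reduction in negative mood) and all scores are invented for illustration.

```python
import math
import statistics

def cohens_d(treated, control):
    """Standardized mean difference using the pooled (sample) standard deviation."""
    n1, n0 = len(treated), len(control)
    v1, v0 = statistics.variance(treated), statistics.variance(control)
    pooled_sd = math.sqrt(((n1 - 1) * v1 + (n0 - 1) * v0) / (n1 + n0 - 2))
    return (statistics.mean(treated) - statistics.mean(control)) / pooled_sd

# Invented reductions in negative mood after one session (higher = more improvement).
relaxation = [3, 4, 5, 4, 6, 5]
no_component = [2, 3, 3, 2, 4, 4]
print(round(cohens_d(relaxation, no_component), 2))
```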

Strategy 6: additive and dismantling studies

Strengths of this strategy (Fig. 8) include its experimental design that allows for drawing causal conclusions about component effects. In addition, evaluating the component within the context of treatment protocols contributes to the generalizability of study findings. The main disadvantage of this strategy is the statistical power (and thus large sample sizes) needed to identify the unique contributions of individual components. Meta-analyses of additive and dismantling studies show that target components rarely add significant levels of effectiveness to therapy protocols (Ahn & Wampold, 2001; Bell et al., 2013; Cuijpers et al., 2019). This might in part be due to most additive and dismantling studies being seriously underpowered (Kazdin & Whitley, 2003), putting them at risk for Type 2 errors: falsely concluding that therapy is equally effective with and without the target component. Additive and dismantling studies require sample sizes based on the expected effect size of an individual component above and beyond the effect size of the rest of the therapy; the smaller the expected effect, the larger the required sample. Thus, although additive and dismantling studies are rigorous and potentially valuable, they can be costly to conduct well.

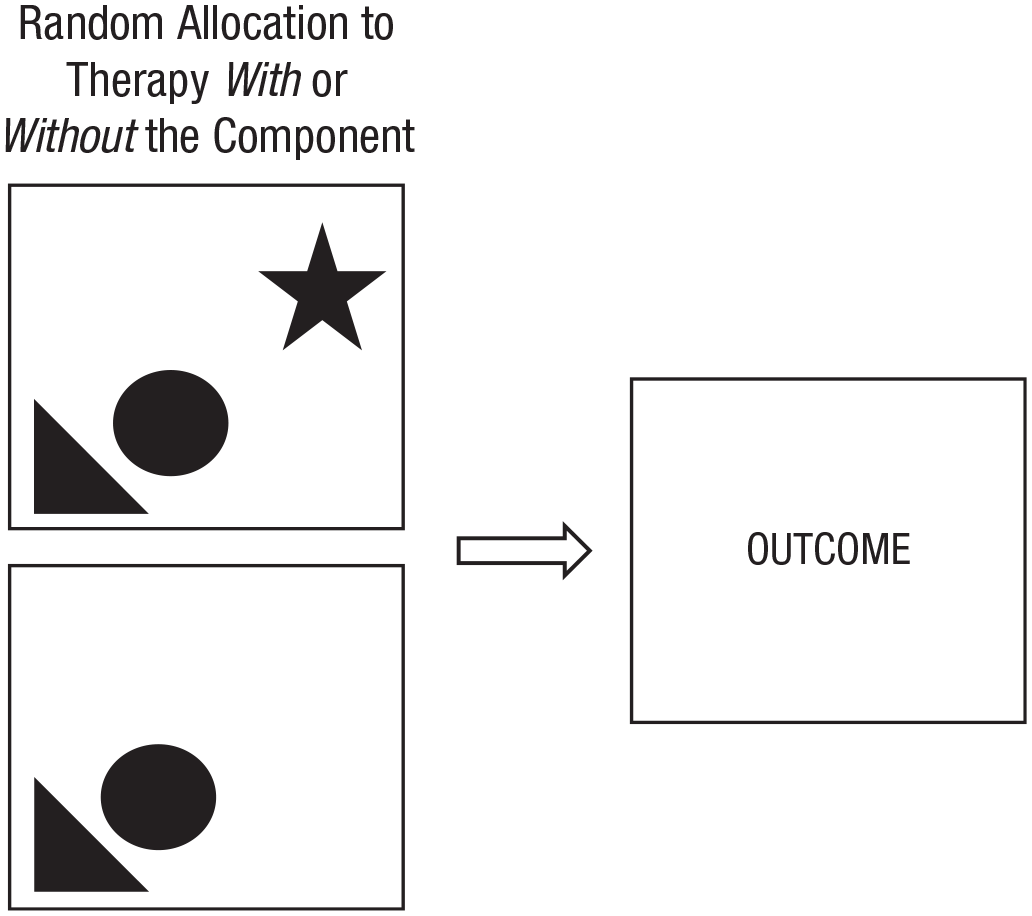

Testing the unique contribution of a target component in an additive or dismantling trial. The component of interest is the star. Participants are randomly allocated to receive either therapy with this component or the same therapy without this component. If therapy with the target component outperforms the same therapy without the target component, then the target component is an effective component.
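The link between expected effect size and required sample can be made explicit with the usual normal-approximation formula for comparing two means, n per arm = 2(z_{1-α/2} + z_{1-β})² / d². The effect sizes in the usage loop are Cohen’s conventional benchmarks, chosen for illustration rather than as estimates of any component’s added contribution, and exact t-test-based calculations give slightly larger numbers.

```python
import math
from statistics import NormalDist

def n_per_arm(d, alpha=0.05, power=0.80):
    """Approximate participants per arm for a two-sided, two-arm comparison of means.

    d is the standardized effect of adding (or removing) the target component.
    """
    z = NormalDist()
    z_alpha = z.inv_cdf(1 - alpha / 2)  # 1.96 for alpha = .05
    z_power = z.inv_cdf(power)          # 0.84 for power = .80
    return math.ceil(2 * (z_alpha + z_power) ** 2 / d ** 2)

for d in (0.8, 0.5, 0.2):
    print(d, n_per_arm(d))
```

With these inputs the loop prints 25, 63, and 393 participants per arm for d = 0.8, 0.5, and 0.2, which shows how quickly the required sample grows when a component adds only a small increment to an already effective protocol.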

Strategy 7: factorial experiments

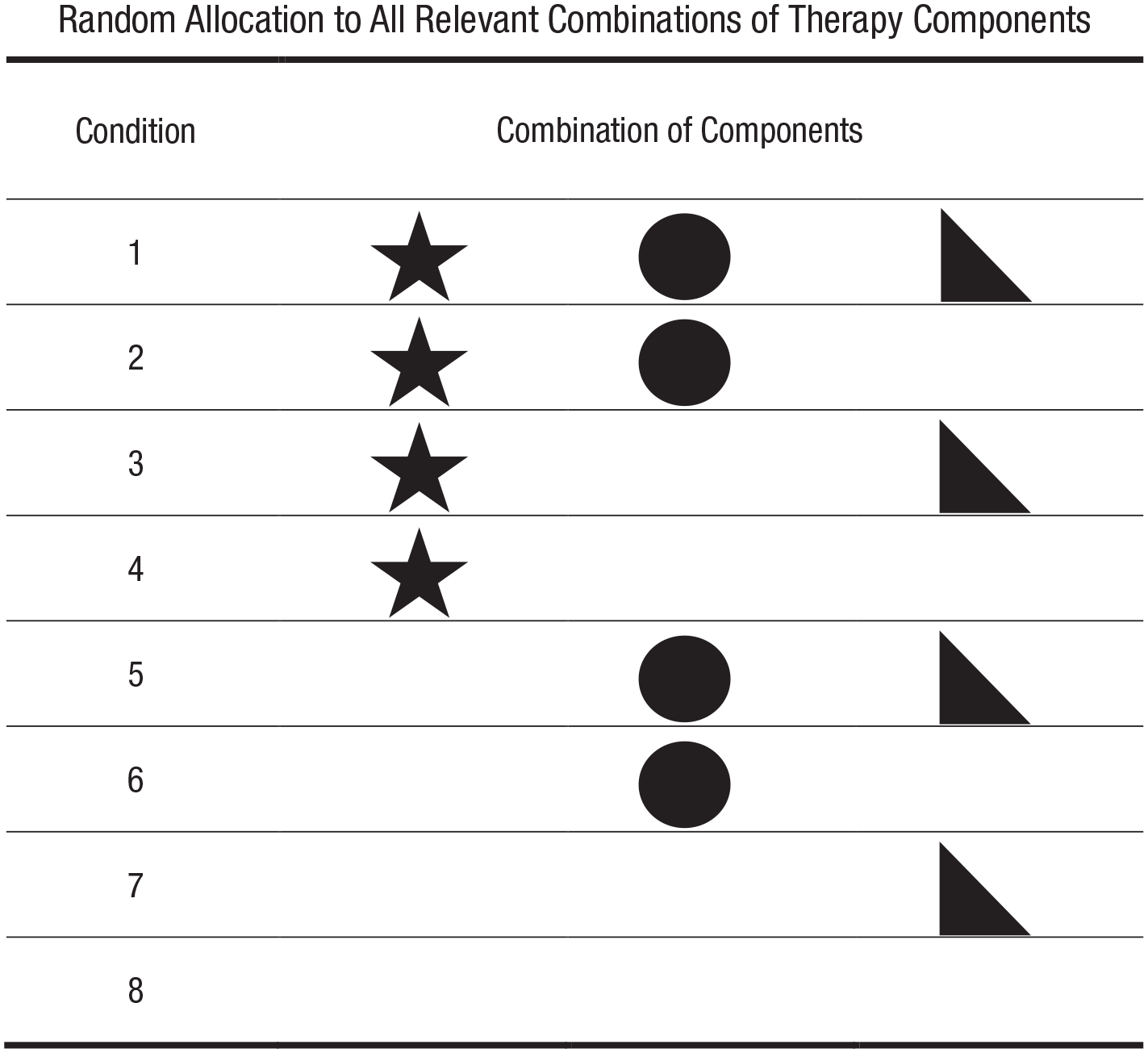

Factorial experiments allow for drawing causal conclusions both about the effects of individual components and about interaction effects between components. Their efficient use of participants and conditions permits testing multiple components in one study without unrealistic sample size requirements (Collins et al., 2014). In Figure 9, for example, the empirical merit of the “star” component is tested by comparing the average improvement of all participants who received the star component (i.e., Conditions 1–4) with the average improvement of all participants who did not receive the star component (i.e., Conditions 5–8). Because data are aggregated across multiple conditions, the number of participants in the individual conditions can be much smaller than in trials that aim to compare individual conditions. If, for example, the design in Figure 9 included 25 participants in each condition, then data from 100 participants (i.e., Conditions 1–4) would be compared with data from 100 participants (i.e., Conditions 5–8) to test the empirical merit of the star component.

Testing the effects of multiple individual components in a factorial experiment. The three components of interest are the star, circle, and triangle. Participants are randomized to conditions that cover all relevant combinations of the target components. To test the effectiveness of each component, the effects of all conditions in which participants received the component are compared with the effects of all conditions in which participants did not receive the component. If, for example, participants in Conditions 1 through 4 improve more than participants in Conditions 5 through 8, then the “star component” is an effective component.
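The aggregation logic of a 2 × 2 × 2 factorial can be sketched in a few lines. Conditions are enumerated so that Conditions 1–4 include the star component, as described for Figure 9; the cell means are invented (generated from an additive model with star +0.5, circle +0.2, triangle +0.0), equal cell sizes are assumed, and names such as `main_effects` are hypothetical.

```python
from itertools import product
from statistics import mean

COMPONENTS = ("star", "circle", "triangle")
# All 2**3 = 8 conditions as (star, circle, triangle) indicators. product((1, 0), ...)
# lists the four star-present conditions first, so indices 0-3 = Conditions 1-4.
CONDITIONS = list(product((1, 0), repeat=len(COMPONENTS)))

def main_effects(cell_means):
    """Mean of all cells with a component minus mean of all cells without it.

    cell_means[i] is the mean improvement in Condition i + 1; equal cell sizes assumed.
    """
    effects = {}
    for j, name in enumerate(COMPONENTS):
        present = [m for cond, m in zip(CONDITIONS, cell_means) if cond[j] == 1]
        absent = [m for cond, m in zip(CONDITIONS, cell_means) if cond[j] == 0]
        effects[name] = mean(present) - mean(absent)
    return effects

# Invented cell means from an additive model: 1.0 + 0.5*star + 0.2*circle + 0.0*triangle.
cell_means = [1.0 + 0.5 * s + 0.2 * c + 0.0 * t for s, c, t in CONDITIONS]
print({k: round(v, 2) for k, v in main_effects(cell_means).items()})

per_cell = 25
n_with_star = per_cell * sum(cond[0] for cond in CONDITIONS)  # participants in Conditions 1-4
print(n_with_star)
```

Each main effect reuses every cell, so all 200 participants inform each of the three component tests, which is the efficiency the text describes.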

Factorial experiments are particularly well suited to test interaction effects between components (i.e., whether the effects of one component depend on the presence or level of another component). Several examples of using factorial experiments for this purpose already exist (e.g., Barlow et al., 1992), and several more trials have been announced (e.g., Lachman et al., 2019). Testing interaction effects between components does require larger sample sizes per condition because data from fewer conditions can be aggregated. For example, to test whether effects of the star component in Figure 9 depend on the presence of the circle component, data from Conditions 1 and 2 are compared with data from Conditions 3 and 4, using only half of the total sample size.

Limitations, at least for in-person intervention, include the risk of contagion of therapy content across conditions: It may be hard for therapists to implement some content with some participants and not with others. In addition, the generalizability of findings from factorial experiments to real-world therapy depends on whether each condition reflects a potentially full treatment package or whether the design mainly tests theoretically relevant combinations of components against each other as a stage before testing full treatment effects (i.e., much like microtrials). That said, the ability to test causal effects of multiple individual therapy components within one study, with efficient use of conditions and participants, makes factorial experiments a powerful tool for discerning active therapy components.

Discussion

Unraveling the effects of discrete psychological therapy components is hard, and some researchers question the potential and usefulness of the effort. For example, research aimed at identifying effective components has been criticized for unwarranted use of “the drug metaphor,” the assumption that psychological therapy works because of a particular set of ingredients supplied by the therapist (Stiles & Shapiro, 1989). Others have argued that the specific components of therapy protocols matter much less than more generic “common factors” (Wampold et al., 1997). Yet numerous clinical psychological scientists argue that understanding of therapy effects can only be advanced if this issue is addressed (Holmes et al., 2014; Mulder et al., 2017), and even prominent advocates of the common factors view have argued for the value of research testing the impact of therapy components (Laska et al., 2014). A case can be made that if one strives to improve the effects of psychological therapy, one needs to know whether particular ingredients make therapy work and if so, which ones. These ingredients could then be used as building blocks for evidence-based procedures to target the core processes underlying mental health problems (e.g., process-based therapy, principle-guided therapy).

We conducted a scoping review of research strategies that can guide these efforts. Four of the identified strategies rely on expert opinion or correlational evidence and can therefore generate hypotheses about effective components: expert opinion, shared components of empirically supported therapies, associations between the presence of components and therapy outcomes, and associations between fidelity to components and therapy outcomes. Three strategies rely on experimental evidence and can therefore test hypotheses about effective components: microtrials, additive and dismantling trials, and factorial experiments. The first four strategies have the advantage of high feasibility, often using existing data from decades of rigorous therapy research. The latter three have the advantage of supporting causal conclusions about the empirical merit of components.

We also identified trends over time and between subfields that help inform how we can move forward as a field. First, over time, there seems to be a shift from individual experimental trials (e.g., additive and dismantling trials and microtrials) to systematic reviews and meta-analyses of associations between the presence of components and therapy outcomes. Systematic reviews and meta-analyses are helpful for synthesizing the ever-expanding therapy literature. However, when associations between components and therapy outcomes are tested across trials, inferences from these relations are not protected by randomization: Trials that include a given component may also differ from trials that omit it in other respects, such as their populations or comparison conditions. We therefore recommend carefully balancing these strategies and ensuring that any further increase in meta-analyses of associations does not come at the cost of rigorous experimental designs.

Second, between subfields, dismantling trials dominate in the adult anxiety and depression literature, microtrials in the youth conduct problem literature, and factorial experiments in the substance use literature. This pattern might merely reflect different research traditions across fields, or it might reflect confounding field characteristics. For example, factorial experiments might be used mainly in the substance use literature because online therapy is particularly common in this field (e.g., Taylor et al., 2017) and because factorial experiments may be easier to implement in online therapies, in which the presence or absence of each component can be controlled more easily across multiple conditions. That said, we hope that identifying the somewhat different approaches adopted in different fields will help experts in each subfield strengthen their evidence base by complementing their current methods with those from related fields.

Third, many included studies appear to be relatively stand-alone efforts by pioneers in different fields rather than part of a coherent science that systematically maps the evidence for relevant therapy components within a given field. To move from scattered research to a coherent science of discrete therapy components, the field needs a research agenda that guides systematic progress from initial insights to the identification of components that drive therapy effects.

One framework that can help set this agenda is process-based therapy. Process-based therapy aims to identify the core processes that should be targeted to improve the mental health of individual clients (Hayes & Hofmann, 2020; Hofmann & Hayes, 2019). Organizing therapy, and therapy research, in terms of such processes instead of complete named protocols provides an infrastructure for systematically mapping the evidence base of discrete therapy components to efficiently and effectively target these processes. For example, if cognitive defusion is identified as a key therapeutic process to reduce depressive symptoms in some clients, the research strategies discussed in this review can be used to evaluate the most promising components for cognitive defusion (e.g., Levin et al., 2012; Watson et al., 2010; Yovel et al., 2014).

A related perspective has been offered by advocates of principle-based approaches to psychotherapy. This work spans four decades (see, e.g., Goldfried, 1980), and its proponents, including members of the Society of Clinical Psychology task force on empirically supported treatments (Tolin et al., 2015), have argued that optimal treatment design may require a focus on empirically supported principles of change (ESPCs; Castonguay & Beutler, 2006; Davison, 2019; Oddli et al., 2016). The notion that ESPCs represent a sweet spot between complete therapy protocols and specific treatment techniques is reflected in a recently developed principle-guided youth treatment protocol, called FIRST (Weisz & Bearman, 2020), which relies on five principles, including calming and cognitive change, with each principle subsuming a set of specific treatment procedures. Initial benchmarking trials comparing FIRST with more complex treatments have shown encouraging clinical outcomes (Cho et al., 2020; Weisz, Bearman, et al., 2017); randomized controlled trials will be needed for more definitive evidence.

Therapy components might interact with each other such that the effects of one component depend on the presence or absence of other components (i.e., moderation between components). Empirically testing these interaction effects adds complexity to the research strategies identified in this review, but some strategies are specifically designed for this purpose, such as factorial experiments and network meta-analysis (an extension of testing basic associations between the presence of components and therapy effects). More participants, or more studies in the case of meta-analyses, will be needed to identify moderator effects between components, because interaction effects tend to be small relative to main effects and substantial statistical power is required to detect them. Examples of studies that examined interaction effects between components include a 2 × 2 factorial experiment comparing the individual and combined effects of relaxation and cognitive therapy for generalized anxiety disorder (Barlow et al., 1992) and a network meta-analysis of the most effective combination of cognitive behavioral therapy components for panic disorder (Pompoli et al., 2018).
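The power cost of testing interactions can be illustrated with the textbook case of a balanced 2 × 2 factorial design. The Python sketch below assumes equal cell sizes n, a common outcome standard deviation sigma, and hypothetical cell means (not values from Barlow et al., 1992, or any other study). It computes the interaction contrast and shows why that contrast is estimated less precisely than a main effect: A main effect compares two marginal means of 2n participants each, whereas the interaction is a difference of differences among four cell means of n participants each, which doubles its standard error.

```python
import math


def interaction_contrast(m00, m10, m01, m11):
    """Difference of differences in a 2 x 2 design.

    m10 is the cell mean with component A only, m01 with component B only,
    m11 with both, and m00 with neither. The result is the effect of A when
    B is present minus the effect of A when B is absent.
    """
    return (m11 - m01) - (m10 - m00)


def standard_errors(sigma, n_per_cell):
    """Standard errors of a main effect and of the interaction contrast in a
    balanced 2 x 2 design with outcome SD sigma and n participants per cell."""
    se_main = sigma / math.sqrt(n_per_cell)       # two marginal means of 2n each
    se_inter = 2 * sigma / math.sqrt(n_per_cell)  # four cell means of n each
    return se_main, se_inter


def sample_size_multiplier(interaction_to_main_ratio):
    """Factor by which the sample must grow for an interaction of the given
    size (relative to a main effect) to reach the same effect-to-standard-error
    ratio as that main effect."""
    return (2 / interaction_to_main_ratio) ** 2


# Hypothetical cell means: A alone adds 2, B alone adds 1, both together add 5.
print(interaction_contrast(m00=10, m10=12, m01=11, m11=15))  # 2
print(standard_errors(sigma=1.0, n_per_cell=25))             # (0.2, 0.4)
print(sample_size_multiplier(1.0))                           # 4.0
print(sample_size_multiplier(0.5))                           # 16.0
```

Under these assumptions, detecting an interaction as large as a main effect with comparable power requires about four times the sample, and detecting one half as large requires about sixteen times the sample.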

Therapy components might also interact with client characteristics such that different individuals benefit from different components. In other words, some clients may benefit more from some components than from other components, depending on, for example, the etiology of their mental health problems or their personal values about specific therapy techniques. In addition, different therapists may differ in their ability to implement some content or procedures. Studying such individual differences is essential for understanding the merit of therapy components. Most of the research strategies we identified allow for moderator analysis to identify individuals who benefit from specific components, and the examination of such individual differences—as well as therapist-level moderators—is an important next step for the field.

Even if the content of therapy protocols affects outcomes, effects are not produced by protocol content alone. The research strategies we discuss in this article to identify effective components can be extended to study other aspects of therapy that contribute to therapy effects, such as therapist skills (e.g., foundational ingredients, Lord et al., 2015; therapist facilitative interpersonal skills, Anderson et al., 2016) and “common factors” that transcend specific protocols, such as the therapeutic alliance (Grencavage & Norcross, 1990; Messer & Wampold, 2002). Several such applications exist. For example, Friedlander et al. (2010) showed that therapist engagement distinguished between less and more successful therapy sessions, and Anderson and colleagues (2016) showed that therapists’ “facilitative interpersonal skills” assessed via a standardized performance task predicted clients’ symptom reduction more than a year later. In addition, meta-analyses testing associations between inclusion of components and therapy effects suggest that computer and Internet-based therapies work better when they include the component of support from a therapist than when they do not (Andersson & Cuijpers, 2009; Richards & Richardson, 2012).

Related to this, we may not always be able to select a priori the components that are most essential for therapy success. In such cases, evaluating more comprehensive therapy packages (instead of discrete therapy components) may help identify the packages most suitable for some individuals, from which the essential therapy components could then be derived. In other words, although the shift to a more elemental approach in therapy evaluation research is valuable in many ways, there will always be value in also examining differential response patterns to comprehensive therapies.

Strengths and limitations

Our scoping review is the first to examine and appraise a broad set of strategies for discerning active therapy ingredients, and it covers many subfields of the psychological therapy literature on discrete therapy components, from childhood conduct problems to adolescent substance use and adult depression. Focusing on the evaluation of discrete therapy components fits the paradigm shift away from complete named therapies toward evidence-based processes and procedures (Hayes & Hofmann, 2020; Hofmann & Hayes, 2019) and may facilitate the development of a coherent clinical psychological science on discerning active therapy ingredients. In addition, our analyses of trends over time provide insight into the development of our field and where it may head if these trends continue: toward more reviews and meta-analyses and fewer new experimental studies. Finally, our analyses of differences between fields shed light on possibilities for cross-fertilization between fields.

In line with scoping review goals, our literature review was systematic but did not necessarily pick up every single eligible study. This is because most studies evaluating discrete therapy components refer only to the content of the components evaluated (e.g., “Does behavioral activation add to the effects of cognitive therapy for depression?”) and not to the fact that a component is being evaluated (e.g., “Is behavioral activation an active therapy ingredient for depression?”). In other words, the terminology used is too heterogeneous to identify all relevant studies in a comprehensive way. This also means we cannot exclude the possibility that some of the trends we identified over time or between subfields are influenced by our ability to identify work published in different periods or subfields. For example, the oldest studies we identified were published in the 1970s, but there may be older studies that our search terms did not pick up. In addition, we evaluated the strengths and limitations of each research strategy but did not examine the individual studies to assess how the design strengths and limitations of each strategy are borne out in practice. Next steps in this work include evaluating how the identified research strategies are being used in the field to refine theories and interventions.

Conclusion

This scoping review identified seven research strategies to discern active therapy ingredients. A mature clinical psychological science of therapy components will need to explicitly capitalize on the strengths of the different strategies and track the field’s progress in understanding the components that drive therapy effects. The evidence base this will yield for effective therapy components can be used in approaches that move from named therapy protocols to discrete therapeutic processes.

Footnotes

Transparency

Action Editor: Stefan G. Hofmann

Editor: Kenneth J. Sher

Author Contributions

P. Leijten initiated the manuscript. P. Leijten and F. Gardner developed the underlying ideas as part of a systematic review of evidence for the effectiveness of discrete parenting program components for disruptive child behavior problems. All authors contributed to its conceptual development. P. Leijten drafted the manuscript, and J. R. Weisz and F. Gardner provided critical revisions on multiple versions. All of the authors approved the final manuscript for submission.