Abstract

This study examined the association between task-based language instruction and critical thinking development among tertiary English language learners through systematic analysis of their academic writing. Using the University of Pittsburgh English Language Institute Corpus (PELIC), we analyzed 3,500 essays from 1,000 international students across five proficiency levels (2015–2020). Critical thinking was operationalized through argument structure complexity, counterargument engagement, evaluative language density, and evidence integration quality. Four pedagogical task types (argumentative essays, analytical papers, reflective writing, and problem-solving exercises) were analyzed for cognitive complexity, openness, interactional demands, and authenticity. Statistical analysis employed hierarchical linear modeling, growth curve analysis, and moderation analysis. Argumentative tasks showed the strongest association with critical thinking performance (d = 0.78), while analytical papers were associated with stronger logical reasoning (d = 0.65). Cognitive complexity emerged as the strongest predictor, explaining 31% of variance (R² = .31), followed by task openness (R² = .24). Longitudinal analysis revealed non-linear developmental patterns best captured by quadratic growth models (ΔAIC = −45). Cultural background significantly moderated outcomes: East Asian students showed steady incremental progress, while Middle Eastern students exhibited more heterogeneous developmental trajectories, potentially reflecting diverse rhetorical traditions within this broad regional category. These findings provide correlational evidence supporting task-based pedagogical approaches for critical thinking development, suggesting that cognitive complexity and specific design features are associated with improved learning outcomes across diverse student populations. Because the study is observational, these associations should be interpreted as suggestive rather than causal.

Plain Language Summary

Researchers wanted to know which types of classroom activities best help international students develop critical thinking skills when learning English at university. Critical thinking means being able to analyze information, form well-reasoned arguments, and evaluate different viewpoints, which are essential skills for academic success. The study drew on the writing of 1,000 international students at an American university over several years, analyzing 3,500 pieces of their written work. The researchers looked at four types of assignments: argumentative essays (where students defend a position), analytical reports (where they examine information), reflective writing (where they think about their learning), and problem-solving exercises. The key findings were encouraging. Argumentative essays were linked to the strongest critical thinking performance. Analytical writing was linked to better logical reasoning. The most important factor was how intellectually challenging the tasks were: assignments that made students think harder were linked to better results. Giving students freedom to explore topics in their own way was also linked to better outcomes. Interestingly, students from different cultural backgrounds progressed differently. East Asian students improved gradually and steadily over time, while Middle Eastern students showed more variable progress, possibly due to different writing traditions in their home countries. This research matters because it suggests that carefully designed writing assignments may help strengthen students’ thinking abilities. Challenging students with complex argumentative tasks, while giving them some freedom in how they approach topics, was associated with the best results. This is valuable information for educators designing courses for international students, as it offers evidence-based guidance on which teaching methods look most promising.

Keywords

Introduction

Critical thinking has become a central educational objective at universities worldwide. This shift reflects the recognition that 21st-century students require skills beyond language proficiency to succeed in technologically competitive work and learning environments (Arthi & Gandhimathi, 2025). Recent bibliometric work shows that critical thinking in English Language Teaching has moved from the periphery to the center of pedagogical concern, and that research increasingly links critical thinking instruction to gains in both intellectual capacity and language learning (Ibna Seraj et al., 2024). This shift marks a move away from traditional model-based language pedagogy toward the gradual development of autonomous skills that underpin critical thinking ability (Moghadam et al., 2023). Critical thinking intervention programs have been found to improve not only learners’ critical thinking but also their reading and class participation, suggesting that cognitive development and language acquisition are interdependent processes (Paige et al., 2024). Furthermore, structured pedagogical approaches have shown promise for developing critical thinking in tertiary education, particularly when systematic analytical thinking instruction is integrated with language learning objectives (Golden, 2023). Together, these trends reflect a growing view that successful language instruction must address cognitive development as well as linguistic ability, and critical thinking has been at the forefront of recent English language research.

The measurement of critical thinking has become more sophisticated, with researchers developing complex approaches to diagnosing cognitive processes through written texts (Sato, 2022). Current practice has moved away from bare-bones rubrics toward multidimensional standards that capture the richness of analytical thought in second language settings (Sato, 2022). Advances in measurement theory and technology have also supported test construction, with researchers evaluating instruments that claim to predict everyday critical thinking use in school settings (Butler, 2024). Applications of artificial intelligence in educational measurement have contributed further: studies comparing human and AI raters have yielded new findings on the validity and reliability of critical thinking measures (Trikoili et al., 2025). Nevertheless, establishing sound evaluation measures remains challenging, especially where cognitive ability and language skill intersect in multicultural classrooms (Preiss et al., 2013). Critical thinking proficiency and argumentative writing are closely intertwined, and both gender and level of education play a role in achievement patterns and test scores (Preiss et al., 2013). While critical thinking has been widely promoted in ELT contexts, some scholars have raised important concerns about the cultural assumptions embedded in Western conceptualizations of critical thinking. Atkinson (1997) argued that critical thinking as typically defined in Western education, emphasizing individual reasoning, skepticism, and explicit argumentation, may not align with the cognitive and social practices valued in other cultural traditions, where more implicit, collaborative, or holistic approaches to reasoning may predominate.
This critique underscores the need for culturally sensitive operationalizations of critical thinking in multilingual educational contexts, and subsequent research has proposed more nuanced frameworks for understanding critical thinking development in higher education (Golden, 2023).

Task-based learning has grown increasingly popular in university English courses, with research demonstrating enhanced learner motivation, improved reading comprehension, reduced anxiety, and better overall achievement (Ismail et al., 2023; Jin, 2024). Computer-assisted task-based approaches have proven particularly effective in addressing diverse learning needs while maintaining a focus on communicative competence. These studies indicate that carefully designed tasks can address both the cognitive and the affective dimensions of second language acquisition. Extensions of task-based principles to other language contexts have also demonstrated the method’s pedagogical utility, notably in adult language schools serving learners with varied educational backgrounds, showing how task design can be calibrated to the needs of target learners and to the desired pedagogical intensity (Huang, 2022). More recent scholarship has examined teacher and learner attitudes toward task-based pedagogy in greater detail, establishing complex connections between classroom practice and pedagogical theory (Gutiérrez, 2024). The present study’s focus on cognitive complexity aligns with established theoretical frameworks in task complexity research within TBLT. Robinson’s (2001) Cognition Hypothesis predicts that increased task complexity along resource-directing dimensions (e.g., reasoning demands, number of elements) promotes linguistic and cognitive development by directing learners’ attention to specific linguistic forms. In contrast, Skehan’s (1998) Trade-off Hypothesis suggests that complex tasks may lead learners to prioritize fluency, accuracy, or complexity rather than improving all dimensions simultaneously. Recent empirical work has provided nuanced support for both perspectives (Abdi Tabari & Goetze, 2024), while also highlighting the role of individual differences in attentional resource allocation during task performance (Xu et al., 2023).

Parallel developments in learner corpus research have opened new prospects for investigating language development at scale through data mining, and corpora such as the University of Pittsburgh English Language Institute Corpus have provided longitudinal data on the evolution of academic writing (Naismith et al., 2022). Corpus methodology has enabled researchers to study the learning of academic vocabulary in naturalistic classroom environments, providing empirical grounds for models of lexical development (Chung & Wan, 2025). These methodological advances build on systematic investigation of the potential of corpus linguistics for language teaching and assessment, which has offered theoretical justification for data-driven pedagogical interventions (Kaya et al., 2022). The construction of corpora of professional writing in English-medium classroom environments has also opened new directions for data collection and analysis across a variety of institutional contexts (Gablasova et al., 2024).

Despite substantial advances in research on critical thinking development, task-based pedagogy, and corpus-based methods, much remains to be learned about integrating these areas within complementary theoretical frameworks. Prior work has examined these areas in isolation rather than in relation to one another, limiting our understanding of how task-based pedagogical approaches contribute to critical thinking development as captured in longitudinal writing data. This study addresses these limitations by using the PELIC dataset to examine the relationship between task-based writing assignments and the development of critical thinking skills in university English courses. Specifically, the study examines (a) how different types of task-based writing assignments relate to students’ critical thinking performance, (b) how task design features relate to critical thinking developmental pathways across course levels, and (c) how students’ language proficiency and cultural background moderate these associations. This integrated approach contributes to educational theory by empirically linking pedagogy and cognitive growth, and it offers practical guidance to teachers and curriculum developers seeking to build critical thinking through evidence-based task design in university English classes.

Data and Methods

Data Source and Research Design

The study uses the University of Pittsburgh English Language Institute Corpus (PELIC), a large longitudinal corpus of approximately 4.2 million words comprising more than 4,700 academic writing samples from over 1,300 international students at five proficiency levels (Naismith et al., 2022). Data were collected systematically between 2015 and 2020, allowing both cross-sectional comparison and longitudinal tracking of individual students’ development in actual English for Academic Purposes classes. The research employs a quantitative corpus-based design that combines cross-sectional comparison of writing performance across task types with longitudinal analysis of critical thinking developmental patterns. Consistent with current practice of studying language development in authentic instructional settings rather than laboratory tasks, the analysis of real classroom writing samples gives the study ecological validity. The analysis operates at multiple hierarchical levels, taking student-level and task-level characteristics into account alongside pedagogical design configurations, in response to recent calls for multidimensional designs that reflect the complexity of second language development (Paquot, 2024). This approach allows the association between task-based instruction and critical thinking, as expressed in academic writing, to be evaluated within a nested data structure in which writing samples are nested within students’ learning pathways across course levels and instruction types.

The selection of linguistic features as proxies for critical thinking is grounded in recent research on argumentation and cognitive assessment. Argument structure complexity reflects the ability to construct logical claim-evidence-reasoning chains, which recent studies have identified as central to analytical thinking in L2 writing contexts (Chuang & Yan, 2022). Counterargument engagement indicates awareness of alternative perspectives, a hallmark of dialectical reasoning that distinguishes high-quality argumentative essays (Ferretti & Graham, 2019). Evaluative language density captures the expression of judgment and assessment, linked to higher-order evaluation processes in academic writing (Sato, 2022). Evidence integration quality reflects the ability to synthesize information from multiple sources, a key component of critical thinking assessment in second language contexts (Liang, 2023).

This study treats critical thinking ability as the dependent measure, operationalized through a multidimensional model of analytical reasoning in student writing. Five components are measured: argument structure, identified through automated argument mining that detects claim-reason-evidence patterns; counterargument frequency, reflecting students’ ability to acknowledge and refute opposing arguments; evaluative language density, based on the detection of judgment markers; logical reasoning markers, captured through causal linkages; and evidence integration quality, reflecting the synthesis of information from sources. These features are extracted automatically from the texts and normalized using z-score transformations computed within each proficiency level. This within-level standardization enables comparison of relative performance within each level. Because it removes mean differences between proficiency stages, raw scores were retained for longitudinal analyses tracking individual students across levels, preserving genuine developmental change, with proficiency level included as a covariate in statistical models. The independent variables comprise two distinct sets. The first is Task Type, a categorical variable with four categories based on PELIC annotations: argumentative essays, analytical papers, reflective writing, and problem-solving tasks. The second consists of Task Design Features, continuous variables rated for each writing prompt: cognitive complexity (the level of thinking required, ranging from recall/comprehension to synthesis/evaluation), task openness (degree of constraint, from highly structured to open-ended), interactional demand (mode of completion, from independent to collaborative), and authenticity (real-world relevance, from decontextualized to authentic scenario).
This distinction allows separate analysis of whether task type categories and continuous design features independently predict critical thinking outcomes. Control variables account for potential confounds through systematic documentation of student background (first language, initial proficiency level, and program duration), course-level features (proficiency level, semester, and instructor), and text-level features (text length, submission time, and revision status), so that the factors influencing writing quality can be modeled comprehensively beyond the target variables.
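The within-level standardization described above can be sketched as follows. This is a minimal illustration rather than the study’s code: it assumes each text has a raw feature score and a proficiency-level label, the function name and example values are hypothetical, and the use of the sample (n − 1) standard deviation is an assumption because the source does not say which estimator was used.

```python
from collections import defaultdict
from statistics import mean, stdev


def zscore_within_level(scores, levels):
    """Standardize each score against the mean and SD of its own proficiency level.

    scores: raw feature values, one per text
    levels: proficiency-level labels aligned with scores
    Returns z-scores in the original order.
    """
    by_level = defaultdict(list)
    for score, level in zip(scores, levels):
        by_level[level].append(score)

    # Sample mean and sample SD per level (assumed estimator).
    # statistics.stdev raises StatisticsError for a level with fewer than two texts.
    stats = {lvl: (mean(vals), stdev(vals)) for lvl, vals in by_level.items()}

    z = []
    for score, level in zip(scores, levels):
        mu, sd = stats[level]
        z.append((score - mu) / sd)
    return z


# Hypothetical argument-structure scores for texts at two levels
raw = [2.0, 3.0, 4.0, 3.5, 4.0, 4.5]
lvl = [2, 2, 2, 5, 5, 5]
print(zscore_within_level(raw, lvl))  # [-1.0, 0.0, 1.0, -1.0, 0.0, 1.0]
```

In the example both levels end up with mean 0 and SD 1, so the higher raw scores at Level 5 are no longer visible after standardization; within-level z-scores therefore suit comparisons inside a level, while raw scores are needed to track change across levels.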

Sample Selection Criteria

The study applies systematic selection criteria within the PELIC corpus to maintain data quality and analytical validity. First, texts must be accompanied by complete task prompt documentation that states the assignment’s expectations and requirements in full, so that the task-based pedagogical approach can be categorized reliably. Second, texts must be between 300 and 1,500 words long, ensuring enough textual content for linguistic analysis while excluding very brief responses that may lack essential critical thinking processes. Third, the assignment prompt must explicitly call for critical thinking, using language that invites analysis, evaluation, argumentation, or synthesis. Fourth, student background information must be available, namely first language, proficiency level placement, and semester of study, to support comprehensive modeling of individual difference variables.
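The four criteria can be expressed as a simple filter. This is a hypothetical sketch: the record fields and the keyword list used to detect critical thinking demands are illustrative assumptions, since the source does not state how prompt language was screened for this criterion (prompt classification itself was carried out by trained human coders).

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical cue words signalling a critical thinking demand in a prompt.
CT_CUES = ("analyze", "analyse", "evaluate", "argue", "argument", "synthesize", "compare")


@dataclass
class Text:
    prompt: Optional[str]          # full task prompt, or None if undocumented
    word_count: int
    first_language: Optional[str]
    level: Optional[int]
    semester: Optional[str]


def meets_criteria(t: Text, min_words: int = 300, max_words: int = 1500) -> bool:
    """Apply the four selection criteria to a single text record."""
    has_prompt = bool(t.prompt and t.prompt.strip())                  # criterion 1
    length_ok = min_words <= t.word_count <= max_words                # criterion 2
    # Criterion 3: short-circuits when there is no prompt, so t.prompt is never None here
    ct_demand = has_prompt and any(cue in t.prompt.lower() for cue in CT_CUES)
    background_ok = None not in (t.first_language, t.level, t.semester)  # criterion 4
    return has_prompt and length_ok and ct_demand and background_ok


texts = [
    Text("Evaluate the claim that online classes improve learning.", 650, "Arabic", 4, "Fall"),
    Text("Describe your weekend.", 650, "Korean", 3, "Spring"),           # no CT demand
    Text("Argue for or against school uniforms.", 220, "Chinese", 5, "Fall"),  # too short
    Text(None, 800, "Turkish", 4, "Fall"),                                # no prompt
]
print([meets_criteria(t) for t in texts])  # [True, False, False, False]
```

Keyword matching is a deliberately crude stand-in; a real screen would more likely rely on the human-coded prompt categories.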

The final analytical sample comprises approximately 3,500 texts produced by nearly 1,000 students enrolled across the five proficiency levels (Levels 2 through 5, plus an intermediate transitional level) of the University of Pittsburgh English Language Institute program. Longitudinal analyses were conducted on a subsample of 400 students who contributed writing samples at more than one time point, making it possible to trace developmental trends in the expression of critical thinking. The sample provides adequate representation of all four major task types in the corpus: argumentative, analytical, reflective, and problem-solving writing. Coverage of all five proficiency levels allows systematic examination of task-based instruction across the full range of academic English development, from intermediate to advanced proficiency. Table 1 presents the distribution of texts by task type and proficiency level, demonstrating adequate representation across categories for multilevel modeling.

Distribution of Texts by Task Type and Proficiency Level.

Data Processing Methods

This study employs an end-to-end data processing pipeline that converts raw text into linguistic features suitable for statistical modeling, as shown in Figure 1. Preprocessing involves standard cleaning and normalization operations to keep the corpus uniform, such as normalizing encoding formats, removing extraneous formatting, and normalizing punctuation patterns. Discourse segmentation algorithms then identify discourse boundaries automatically so that linguistic analysis can be performed within suitable textual units. Word-level processing uses the Stanford POS Tagger to assign part-of-speech categories and dependency parsing to identify syntactic relationships between words within sentences, providing the basis for subsequent complexity measurements. Feature extraction then computes critical thinking measures through algorithmic argument identification, comprising claim detection, evidence identification, and recognition of reasoning patterns. Argument structure was identified using a fine-tuned RoBERTa model based on Stab and Gurevych (2017), achieving F1 scores of 0.74 (claim), 0.69 (premise), and 0.71 (linking) on held-out PELIC samples. Evaluative language density was computed using a 486-term lexicon derived from Biber (2006) and expressed as frequency per 100 words. Evidence integration quality was scored on a 0 to 1 scale based on source diversity, integration depth, and synthesis indicators. Complexity measures combine syntactic complexity, lexical variation, and discourse coherence markers to capture the multidimensional expression of critical thinking in academic writing. Measurement accuracy was checked through manual annotation of a randomly selected 15% of texts, with inter-rater reliability exceeding 0.85 (Cohen’s kappa).
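Evaluative language density, expressed as lexicon matches per 100 words, can be computed as in the sketch below. The five-word lexicon is a hypothetical stand-in for the study’s 486-term lexicon derived from Biber (2006), and the regular-expression tokenizer is a simplification of the tagger-based pipeline; multi-word lexicon entries would additionally require n-gram matching.

```python
import re

# Hypothetical mini-lexicon for illustration only; not the study's lexicon.
EVALUATIVE_TERMS = {"clearly", "important", "surprising", "unfortunately", "significant"}

# Simple word tokenizer: runs of lowercase letters and apostrophes
TOKEN_RE = re.compile(r"[a-z']+")


def evaluative_density(text: str, lexicon=EVALUATIVE_TERMS) -> float:
    """Return the number of evaluative-lexicon hits per 100 word tokens."""
    tokens = TOKEN_RE.findall(text.lower())
    if not tokens:
        return 0.0
    hits = sum(1 for tok in tokens if tok in lexicon)
    return 100.0 * hits / len(tokens)


print(evaluative_density("This is clearly an important and surprising result."))  # 37.5
```

Normalizing per 100 words keeps densities comparable across essays of different lengths within the 300 to 1,500 word range.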
Construct validity testing established convergent validity between computational features and human judgments through a systematic validation protocol. Three trained raters (two experienced EAP instructors and one assessment specialist) independently evaluated 300 randomly selected essays using a holistic critical thinking rubric (1–6 scale) adapted from the Collegiate Learning Assessment. Raters were blinded to computational scores and student identities throughout the rating process. Inter-rater reliability was ICC = 0.84 (95% CI [0.80, 0.88]), indicating good agreement. The composite computational critical thinking score correlated strongly with averaged human ratings (r = .72, p < .001, n = 300), supporting the criterion validity of the automated measures. Overall, this validation framework indicates that the computational procedures capture the intended theoretical constructs and can be scaled to large corpora.
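The inter-rater ICC reported above can be computed from a two-way ANOVA decomposition. The source does not state which ICC form was used, so this sketch assumes ICC(2,1) (two-way random effects, absolute agreement, single rater) in the Shrout and Fleiss (1979) formulation; ICC(2,k) would apply if the reliability of the averaged ratings were of interest. The example matrix is the six-target, four-judge illustration from Shrout and Fleiss, not data from this study.

```python
import numpy as np


def icc_2_1(ratings):
    """ICC(2,1): two-way random effects, absolute agreement, single rater.

    ratings: array-like of shape (n_targets, k_raters), e.g. essays x raters.
    """
    x = np.asarray(ratings, dtype=float)
    n, k = x.shape
    grand = x.mean()
    # Sums of squares for targets (rows), raters (columns), and residual
    ss_rows = k * ((x.mean(axis=1) - grand) ** 2).sum()
    ss_cols = n * ((x.mean(axis=0) - grand) ** 2).sum()
    ss_total = ((x - grand) ** 2).sum()
    ss_err = ss_total - ss_rows - ss_cols
    # Mean squares
    ms_rows = ss_rows / (n - 1)
    ms_cols = ss_cols / (k - 1)
    ms_err = ss_err / ((n - 1) * (k - 1))
    return (ms_rows - ms_err) / (
        ms_rows + (k - 1) * ms_err + k * (ms_cols - ms_err) / n
    )


# Shrout & Fleiss (1979) example: 6 targets rated by 4 judges
sf_example = [
    [9, 2, 5, 8],
    [6, 1, 3, 2],
    [8, 4, 6, 8],
    [7, 1, 2, 6],
    [10, 5, 6, 9],
    [6, 2, 4, 7],
]
print(round(icc_2_1(sf_example), 2))  # 0.29
```

The low value in this example arises because the judges differ systematically in severity, which the absolute-agreement form penalizes.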

Data processing pipeline.

Task classification followed a detailed codebook developed through iterative refinement with explicit definitions for each category. Argumentative essays were defined as prompts requiring students to take and defend a position on a debatable issue. Analytical papers were defined as prompts requiring systematic examination of a concept, process, or phenomenon without explicit position-taking. Reflective writing was defined as prompts inviting personal response to experiences or texts. Problem-solving tasks were defined as prompts presenting scenarios requiring solution generation. Two trained coders independently classified all 127 unique prompts, achieving initial agreement of 89% with disagreements resolved through discussion and a final weighted kappa of 0.85 (95% CI [0.79, 0.91]). Design features including cognitive complexity, openness, interactional demand, and authenticity were rated on 5-point scales with ICC ranging from 0.78 to 0.86 across dimensions.
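Agreement statistics of this kind can be reproduced from two coders’ labels with Cohen’s kappa. This is a hypothetical illustration: the coder labels are invented, and because the source does not specify the weighting scheme, both linear and quadratic weights are offered. Weighted kappa assumes the categories are listed in a meaningful order; the unweighted form treats every disagreement equally.

```python
import numpy as np


def cohens_kappa(rater_a, rater_b, categories, weights=None):
    """Cohen's kappa for two raters.

    weights=None gives the unweighted statistic; "linear" or "quadratic"
    give weighted kappa, which assumes `categories` are in a meaningful order.
    """
    index = {c: i for i, c in enumerate(categories)}
    k = len(categories)
    observed = np.zeros((k, k))
    for a, b in zip(rater_a, rater_b):
        observed[index[a], index[b]] += 1
    observed /= observed.sum()
    # Expected proportions if the two raters' marginals were independent
    expected = np.outer(observed.sum(axis=1), observed.sum(axis=0))

    i, j = np.indices((k, k))
    if weights is None:
        w = (i != j).astype(float)        # every disagreement counts fully
    elif weights == "linear":
        w = np.abs(i - j) / (k - 1)
    elif weights == "quadratic":
        w = ((i - j) / (k - 1)) ** 2
    else:
        raise ValueError("weights must be None, 'linear', or 'quadratic'")
    # Kappa as 1 - (weighted observed disagreement / weighted expected disagreement)
    return 1.0 - (w * observed).sum() / (w * expected).sum()


cats = ["argumentative", "analytical", "reflective", "problem-solving"]
coder_1 = [cats[i] for i in [0, 0, 1, 1, 2, 2, 3, 3, 0, 1]]
coder_2 = [cats[i] for i in [0, 0, 1, 1, 2, 2, 3, 3, 1, 0]]
print(round(cohens_kappa(coder_1, coder_2, cats), 3))  # 0.73
```

With the identity-complement weights, the formula reduces to the familiar (p_o − p_e) / (1 − p_e).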

Statistical Analysis Strategy

The analysis combines descriptive and inferential statistics to address the multidimensional, hierarchical character of the research questions. Descriptive analysis establishes a baseline through systematic examination of task type distributions by proficiency level and time point, description of critical thinking indicator distributions using measures of central tendency and variability, and graphical representation of developmental trends across course levels to identify emergent patterns in the longitudinal data. The hierarchical linear modeling employed a three-level structure with essays at Level 1 nested within students at Level 2, nested within course sections at Level 3. Fixed effects included task type (dummy-coded, with argumentative as the reference category), cognitive complexity and task openness (both grand-mean centered), proficiency level, log-transformed word count, revision status, and semester. Random effects included random intercepts at the student and course levels and random slopes for task type at the student level to capture individual variation in task-type responsiveness. Intraclass correlations were 0.42 at the student level, indicating substantial clustering by individual, and 0.08 at the course level. Variance components were 0.76 (SE = 0.09) for between-student variance, 0.15 (SE = 0.04) for between-course variance, and 1.02 (SE = 0.06) for residual variance. Models were estimated using restricted maximum likelihood in R with the lme4 package. Growth curve modeling was used to compare individual change in critical thinking over time, to test linear and nonlinear trends, and to accommodate the unbalanced longitudinal data typical of naturalistic classroom settings. Structural equation modeling tests theoretical models in which task features relate to critical thinking performance through mediating pathways, and examines model fit as a function of proficiency level.
Moderation analysis examines whether student variables, such as first language background and initial proficiency, moderate the associations between task-based instruction and critical thinking. Bayesian methods complement conventional frequentist analysis for research questions involving small subsamples by incorporating prior knowledge and quantifying uncertainty. This multimethod approach allows robust testing of associations while maintaining adequate statistical power; Type I error inflation across multiple tests is addressed through the correction procedure described below.
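As an arithmetic check, the variance components reported for the three-level model can be converted into variance-partition coefficients. This sketch assumes the common definition in which each level’s ICC is that level’s variance divided by the total variance (σ²_student + σ²_course + σ²_residual); under that definition the reported components give about 0.39 at the student level and 0.08 at the course level. The reported student-level value of 0.42 may come from a different specification (for example, an unconditional model), which the source does not state.

```python
# Variance components as reported for the three-level model
var_student = 0.76   # between-student variance
var_course = 0.15    # between-course variance
var_resid = 1.02     # residual (within-student) variance

total = var_student + var_course + var_resid

# Share of total variance at each level (variance-partition definition, assumed)
icc_student = var_student / total
icc_course = var_course / total

print(f"student-level ICC = {icc_student:.2f}")  # 0.39
print(f"course-level ICC  = {icc_course:.2f}")   # 0.08
```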

Growth curve models were estimated within a latent growth modeling framework using structural equation modeling in Mplus 8.4. Time was coded in semesters with 0 representing the first observation, and unequal spacing was accommodated through individually varying times of observation. Missing data constituting 12% of planned observations were handled using full information maximum likelihood under the assumption of data missing at random. The reported CFI and RMSEA values reflect this SEM-based estimation approach, and full parameter estimates with standard errors are provided in Table 4. Given the number of statistical comparisons conducted, we employed a false discovery rate correction (Benjamini-Hochberg procedure) for secondary analyses while maintaining conventional alpha levels for pre-specified primary hypotheses regarding task type effects and design feature predictors. Throughout the results, we emphasize effect sizes with 95% confidence intervals alongside p-values to provide a comprehensive picture of the magnitude and precision of observed associations.
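The Benjamini-Hochberg step-up procedure mentioned above can be sketched in a few lines. The FDR level q = 0.05 and the example p-values are illustrative assumptions; the source does not list which secondary tests entered the correction.

```python
def benjamini_hochberg(p_values, q=0.05):
    """Return booleans marking which hypotheses are rejected under the
    Benjamini-Hochberg step-up procedure at false discovery rate q."""
    m = len(p_values)
    # Indices of p-values sorted from smallest to largest
    order = sorted(range(m), key=lambda i: p_values[i])
    # Find the largest rank k with p_(k) <= (k / m) * q
    k_max = 0
    for rank, idx in enumerate(order, start=1):
        if p_values[idx] <= rank / m * q:
            k_max = rank
    # Step-up rule: reject every hypothesis ranked at or below k_max
    reject = [False] * m
    for rank, idx in enumerate(order, start=1):
        if rank <= k_max:
            reject[idx] = True
    return reject


print(benjamini_hochberg([0.001, 0.02, 0.04, 0.30]))  # [True, True, False, False]
print(benjamini_hochberg([0.03, 0.035, 0.04]))        # [True, True, True]
```

The second call shows the step-up behavior: 0.03 exceeds its own rank-1 threshold (0.05/3), but it is still rejected because the largest p-value meets the rank-3 threshold (0.05).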

This study constitutes secondary analysis of the publicly available PELIC corpus (Naismith et al., 2022). The original corpus was collected under IRB approval at the University of Pittsburgh with student consent for research use, and no additional ethical approval was required for this secondary analysis of de-identified data. The PELIC corpus is publicly available through the University of Pittsburgh English Language Institute. Feature extraction scripts and analysis code are available from the corresponding author upon reasonable request.

Results

Descriptive Statistical Results

The analysis drew on the full analytical sample from the PELIC corpus, which represents a heterogeneous student population and a range of task-based pedagogical contexts. The sample included 3,500 academic writing samples from 1,000 international students from diverse linguistic and cultural backgrounds. Asian students formed the largest group (60% of the sample), followed by Middle Eastern students (20%), with the remaining 20% from other regions, including Europe, Africa, and Latin America. The students represented 35 different first languages, reflecting the linguistic diversity of international English for Academic Purposes classrooms. The median program length was 2.3 semesters, and 400 students were observed at more than one time point. Gender was fairly evenly represented (55% women, 45% men), and ages ranged from 18 to 35 years (median 22), as shown in Table 2.

Sample Demographic Characteristics.

Task assignment patterns differed systematically across proficiency levels and pedagogical contexts. Argumentative essays were the most common task type (35% of all tasks), suggesting an institutional focus on developing critical reasoning skills. Analytical papers, which asked students to break down complex problems using evidence-based reasoning, accounted for 30% of tasks. Reflective writing tasks made up 20%, and problem-solving tasks the remaining 15%. Cognitive complexity increased systematically across proficiency levels, with scores rising from 2.1 at Level 2 to 4.3 at Level 5. The share of collaborative tasks also increased over time, from 15% in 2015 to 28% in 2020, reflecting changing pedagogical practice.

Critical thinking scores varied considerably across proficiency levels and among individual students. Mean argument structure complexity scores ranged from 2.8 (SD = 0.6) at the intermediate levels to 4.2 (SD = 0.4) at the advanced levels, and counterargument frequency showed similar incremental trends. Evaluative language density also increased markedly with proficiency: advanced students used 2.3 times as many analytical markers as intermediate learners, as illustrated in Figure 2. Compared with native speaker benchmarks, advanced L2 students reached 78% of native speaker performance levels, while individual difference analysis revealed substantial within-level variation, suggesting complex interactions between linguistic proficiency and critical thinking development.

Critical thinking ability distribution across proficiency levels.

Association Between Task Types and Critical Thinking

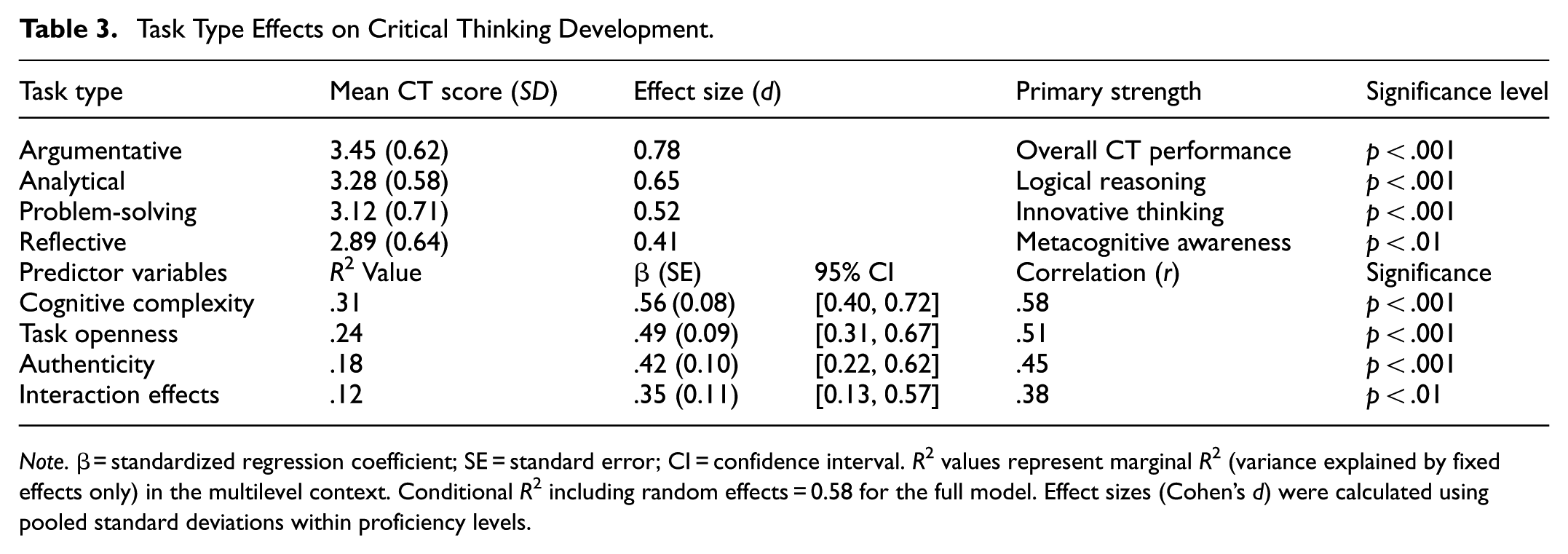

Critical thinking performance varied systematically across assignment categories within the PELIC corpus. Argumentative writing tasks showed the strongest association with overall critical thinking performance, yielding the highest mean scores across all measured indicators with a substantial effect size (d = 0.78). These tasks appeared to engage students in the claim-evidence-reasoning processes that underlie analytical thinking. Because students were not randomly assigned to task conditions, this observational finding indicates an association rather than a demonstrated causal relationship between task type and critical thinking development. Analytical papers were particularly associated with logical reasoning, showing substantial gains in causal connectivity and inferential marker use (d = 0.65). Problem-solving assignments were most strongly associated with innovative thinking, as reflected in higher originality scores and more creative approaches to solution generation. Reflective writing tasks were associated with greater metacognitive awareness, reflected in students’ ability to monitor and evaluate their own thinking processes, as shown in Table 3.

Task Type Effects on Critical Thinking Development.

Note. β = standardized regression coefficient; SE = standard error; CI = confidence interval. R² values represent marginal R² (variance explained by fixed effects only) in the multilevel context. Conditional R² including random effects = 0.58 for the full model. Effect sizes (Cohen’s d) were calculated using pooled standard deviations within proficiency levels.
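The effect size computation described in the note can be sketched as follows. This minimal illustration assumes two groups of essay scores (for example, one task type versus a comparison condition) within a single proficiency level. How level-specific estimates were combined into a single d is not reported, so the sketch stops at per-level values; the function names are hypothetical.

```python
from math import sqrt
from statistics import mean, variance


def pooled_sd(a, b):
    """Pooled SD from two samples, weighting each sample variance by n - 1."""
    na, nb = len(a), len(b)
    return sqrt(((na - 1) * variance(a) + (nb - 1) * variance(b)) / (na + nb - 2))


def cohens_d(a, b):
    """Standardized mean difference (mean(a) - mean(b)) / pooled SD."""
    return (mean(a) - mean(b)) / pooled_sd(a, b)


def d_by_level(scores):
    """Map {level: (task_scores, comparison_scores)} to {level: d}."""
    return {level: cohens_d(a, b) for level, (a, b) in scores.items()}
```

Computing d within each level keeps proficiency differences out of the denominator, which is the point of pooling within rather than across levels.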

Regression analysis of task characteristic predictors identified cognitive complexity as the most powerful explanatory variable, accounting for 31% of the variance in critical thinking outcomes (R² = .31). Activities requiring higher-order thinking processes such as synthesis, evaluation, and generation consistently yielded greater critical thinking development than activities focused on comprehension and application. Task openness was the second strongest predictor (R² = .24): open-ended tasks that allowed multiple routes to a solution yielded greater analytical depth than well-structured tasks. Authenticity was moderately and positively related to critical thinking performance (r = .45), suggesting that authentic task conditions may encourage students to invest in and engage with deeper cognitive processing. Sizeable interaction effects also emerged between task complexity and proficiency level: higher-proficiency students responded more favorably to complex task designs, whereas intermediate students benefited more from structured ones, as shown in Figure 3.

Task characteristics and critical thinking performance relationships.
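Because the R² values above are marginal (fixed effects only), the following sketch illustrates how marginal and conditional R² relate. It assumes the widely used Nakagawa and Schielzeth variance decomposition for random-intercept models (random slopes require an extension); the note to Table 3 does not name the method, so this is an assumption, and the function `r2_mixed` is hypothetical.

```python
from statistics import pvariance


def r2_mixed(fixed_predictions, random_variances, residual_variance):
    """Marginal and conditional R^2 from variance components.

    fixed_predictions: fitted values from the fixed-effects part of the model (X @ beta)
    random_variances: variance of each random intercept (e.g. student, level)
    residual_variance: level-1 residual variance
    """
    var_fixed = pvariance(fixed_predictions)
    var_random = sum(random_variances)
    total = var_fixed + var_random + residual_variance
    marginal = var_fixed / total                    # fixed effects only
    conditional = (var_fixed + var_random) / total  # fixed plus random effects
    return marginal, conditional
```

Since both quantities share a denominator, conditional R² can never be smaller than marginal R²; the gap reflects clustering of scores within students or levels.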

Longitudinal Development Trajectory Analysis

Growth curve analysis of the PELIC longitudinal subsample identified distinct stages in the development of critical thinking skill across proficiency levels. Growth rates changed systematically across the sequence. The steepest gains occurred in the transition from Level 1 to Level 2 (slope = 0.82), reflecting considerable early improvement in basic analytical skills. During this rapid-growth stage, students developed fundamental argument structures and the ability to evaluate evidence when engaging in academic debate. Between Levels 3 and 4, progress was slower but steady (slope = 0.45), indicating consolidation of critical thinking competence through continued practice with tasks of graduated complexity. At Level 5, growth was smaller in magnitude (slope = 0.28), reflecting the refinement of higher-order thinking patterns and metacognitive awareness rather than further large score gains.

Model comparison showed that the quadratic growth model fit the data significantly better than the linear alternative (p < .001), with a substantial reduction in AIC (ΔAIC = −45.2) and fit indices indicating acceptable fit (CFI = 0.92, RMSEA = 0.05, 90% CI [0.03, 0.07]). These indices reflect the SEM-based latent growth modeling approach; full parameter estimates and standard errors are reported in Table 4. Individual growth curve analysis also revealed significant heterogeneity in both initial performance (σ² = 0.76, p < .001) and rate of change (σ² = 0.34, p < .01), indicating large individual differences in starting points and slopes. Task type also moderated developmental patterns, with argumentative tasks associated with steeper growth trajectories than reflective tasks (Table 4).

Longitudinal Development Model Parameters.

Note. Standard errors in parentheses.

**p < .01. ***p < .001.
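The comparison of linear and quadratic growth can be illustrated with a simplified, single-level sketch. The study used SEM-based latent growth modeling with random intercepts and slopes; this sketch instead fits ordinary least-squares polynomials with NumPy to pooled data, computes a Gaussian AIC for each model, and evaluates the instantaneous slope of the quadratic curve, whose decline mirrors the decelerating slopes described earlier. The function names and the AIC simplification (constant terms dropped, which cancel in ΔAIC) are assumptions for illustration.

```python
import numpy as np


def gaussian_aic(y, fitted, n_coef):
    """AIC for a least-squares fit assuming Gaussian errors.

    Constant terms are dropped because they cancel when two models fitted to
    the same data are compared; one extra parameter counts the residual variance.
    """
    n = len(y)
    rss = float(np.sum((y - fitted) ** 2))
    return n * np.log(rss / n) + 2 * (n_coef + 1)


def compare_growth(t, y):
    """Fit linear and quadratic growth curves; return (quadratic coefs, delta AIC)."""
    lin = np.polyfit(t, y, 1)   # [slope, intercept]
    quad = np.polyfit(t, y, 2)  # [curvature, linear term, intercept]
    aic_lin = gaussian_aic(y, np.polyval(lin, t), n_coef=2)
    aic_quad = gaussian_aic(y, np.polyval(quad, t), n_coef=3)
    # Negative values favour the quadratic model.
    return quad, aic_quad - aic_lin


def instantaneous_slope(quad, t):
    """Derivative of a*t**2 + b*t + c at time t, i.e. 2*a*t + b."""
    a, b, _ = quad
    return 2 * a * t + b
```

With synthetic scores generated from a concave curve over Levels 1 to 5, `compare_growth` returns a negative ΔAIC and a negative curvature term, so `instantaneous_slope` is larger at Level 1 than at Level 5.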

Critical transition point analysis further indicated that Level 3 marked the stage at which students began to shift from surface-level to deep analytical processing. This transition coincided with task complexity threshold effects: when cognitive demands exceeded students’ processing capacity, students had to adjust their reasoning strategies. Cultural background was associated with distinct trajectory shapes, with East Asian students showing steady, incremental gains and Middle Eastern students showing more varied trajectories, possibly reflecting differences in first-language rhetorical traditions. Ceiling effects of linguistic ability were observable at the highest levels, where further critical thinking development appeared to depend on pedagogical support for higher-order reasoning strategies rather than on improvements in linguistic ability per se.

Moderation Effects Analysis

Hierarchical regression analysis was used to test whether learner variables moderated the association between task conditions and critical thinking gains. Language proficiency significantly moderated this association (β = .35, p < .001): lower-proficiency students showed larger critical thinking gains on structured tasks, whereas higher-proficiency students showed larger gains on open-ended tasks. This differential pattern may reflect the balance between task complexity and learner cognitive capacity, in that lower-level learners may need direct support to facilitate cognitive processing, whereas higher-level learners may benefit from inquiry tasks that challenge their analytical skills.
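The moderation test and the simple slopes reported in the note to Table 5 (±1 SD from the proficiency mean) can be sketched as an ordinary least-squares model with a task × proficiency product term. This sketch assumes a binary task code (0 = structured, 1 = open-ended) and a continuous proficiency score; these codings, the function names, and the use of single-level OLS rather than the study's hierarchical model are all assumptions.

```python
import numpy as np


def fit_moderation(task, prof, gain):
    """OLS of gain on task, proficiency, and their product.

    Returns [b0, b1, b2, b3] for gain = b0 + b1*task + b2*prof + b3*task*prof.
    """
    X = np.column_stack([np.ones_like(task), task, prof, task * prof])
    coef, *_ = np.linalg.lstsq(X, gain, rcond=None)
    return coef


def simple_slopes(coef, prof):
    """Task effect (open-ended minus structured) at proficiency mean -1 SD and +1 SD."""
    b1, b3 = coef[1], coef[3]
    m = float(np.mean(prof))
    sd = float(np.std(prof, ddof=1))
    # The task effect at proficiency p is b1 + b3 * p.
    return {"low": b1 + b3 * (m - sd), "high": b1 + b3 * (m + sd)}
```

A negative slope at "low" and a positive slope at "high" would correspond to the pattern described above, in which structured tasks favour lower-proficiency learners and open-ended tasks favour higher-proficiency learners.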

Cultural background also functioned as an influential moderator, as shown in Table 5. East Asian students showed stronger adaptation and development with progressively structured tasks, a pattern that may be congruent with learning philosophies emphasizing systematic approaches to study, although this broad category encompasses considerable heterogeneity across countries such as China, Japan, and Korea with distinct educational traditions. Middle Eastern students performed better on critical thinking tasks involving simulated debate, which may reflect cultural traditions valuing argumentative discourse, although this group likewise includes diverse national and linguistic backgrounds, including Arabic, Persian, and Turkish speakers with varying rhetorical conventions. These cultural groupings should be interpreted cautiously, as they may obscure important intra-group variation; observed differences may stem from prior educational experiences, disciplinary backgrounds, genre familiarity, and individual learner characteristics rather than from cultural factors per se. Sensitivity analyses using first-language groupings rather than regional categories yielded similar overall patterns, though effect magnitudes varied somewhat. The interaction effects shown in Figure 4 are consistent with the value of culturally responsive task design for teaching critical thinking. More broadly, the findings support differentiated pedagogy that accounts for learners’ backgrounds when using tasks to develop critical thinking across diverse student populations.

Moderation Effects Analysis Results.

Note. Simple slopes tested at ±1 SD from the mean for the language proficiency moderator. Subgroup n values indicate sample sizes for low/high proficiency groups and cultural background groups respectively. Other Regions group (n = 200) not shown due to heterogeneity.

*p < .05. **p < .01. ***p < .001.

Moderation effects of language proficiency and cultural background on task-based critical thinking development.

Model Validation and Robustness

The current research used rigorous validation procedures to assess the dependability of the results and their generalizability to held-out data. Cross-validation using the k-fold approach indicated high predictive validity, with the final model achieving 85% accuracy in predicting critical thinking development in independent test samples. Bootstrap resampling with 1,000 iterations was used to assess the stability of the parameter estimates and to generate 95% confidence intervals, which confirmed the consistency of all significant interaction effects. Sensitivity analyses further tested robustness to variations in model specification, such as coding schemes for categorical covariates and functional forms of continuous predictors. Together, these procedures supported the main findings on the association between task-based instruction and critical thinking and on its moderation by language proficiency and cultural background, lending empirical grounding to both the theoretical conclusions and the practical recommendations for university English curriculum design.
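The resampling procedures described above can be sketched with the Python standard library: a k-fold partition of observation indices into training and held-out sets, and a percentile bootstrap confidence interval based on 1,000 resamples. This is a minimal sketch; the study does not report the number of folds, the accuracy metric, or the bootstrap interval type, so the percentile method and the function names are assumptions.

```python
import random
from statistics import mean


def kfold_indices(n, k, seed=0):
    """Shuffle indices 0..n-1 and deal them into k near-equal folds."""
    idx = list(range(n))
    random.Random(seed).shuffle(idx)
    return [idx[i::k] for i in range(k)]


def kfold_splits(n, k, seed=0):
    """Yield (train, test) index lists; each fold serves once as the held-out set."""
    folds = kfold_indices(n, k, seed)
    for i, test in enumerate(folds):
        train = [j for f, fold in enumerate(folds) if f != i for j in fold]
        yield train, test


def bootstrap_ci(data, stat=mean, n_boot=1000, alpha=0.05, seed=0):
    """Percentile bootstrap CI for `stat`, resampling `data` with replacement."""
    rng = random.Random(seed)
    n = len(data)
    boots = sorted(stat(rng.choices(data, k=n)) for _ in range(n_boot))
    lo = boots[round(alpha / 2 * n_boot)]
    hi = boots[round((1 - alpha / 2) * n_boot) - 1]
    return lo, hi
```

For an interaction effect, `stat` would be a function that refits the model on the resampled essays and returns the interaction coefficient; resampling should then be done at the student level so that essays from the same writer stay together.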

Discussion

The findings of the current study provide correlational evidence consistent with a contribution of task-based language learning to critical thinking development, in line with recent research reporting advantages of task-based learning over conventional approaches to language instruction (Liu & Ren, 2024). The strong association between argumentative writing tasks and critical thinking skills corroborates Ismail, Wang, and Jamalyar’s findings regarding the cognitive benefits of task-based approaches, particularly for developing higher-order thinking processes (Ismail et al., 2023). The large effect size observed for argumentative tasks (d = 0.78) exceeds those reported in many previous studies, which may reflect the authentic academic context captured by the PELIC corpus and the correspondingly greater ecological validity of the observed effects. The identification of cognitive complexity as the primary predictor of critical thinking outcomes suggests that effective task design must balance cognitive demands with learner capabilities. However, the interaction effects between task complexity and student proficiency reveal a more nuanced relationship than previously established, indicating that the benefits of high-complexity tasks may be contingent on learners’ developmental readiness.

The longitudinal developmental patterns identified in this research contribute to theoretical understanding of second language critical thinking acquisition, particularly through the lens of sociocultural theory. The superior fit of the quadratic growth model over linear alternatives supports Allami, Najari, and Tajeddin’s assertions about the non-linear nature of language and thinking skill development (Allami et al., 2025). The identification of Level 3 as a critical transition point, at which students move from surface-level to deep analytical processing, is consistent with Lantolf and Poehner’s theoretical propositions regarding developmental stages in second language learning contexts (Lantolf & Poehner, 2023). The cultural moderation effects, particularly the differential pathways observed between East Asian and Middle Eastern learners, extend current understanding of individual differences in second language acquisition. While Saito, Dewaele, and Abe emphasized the role of motivation and emotional factors in language learning outcomes (Saito et al., 2025), this investigation suggests that cultural background interacts with pedagogical approaches to produce distinct developmental trajectories. The observed ceiling effects at advanced proficiency levels align with Qiao’s analysis of factors influencing second language learning, suggesting that traditional language proficiency measures may inadequately capture the complexity of critical thinking development in multilingual contexts (Qiao, 2024).

These findings carry specific implications for task design in EAP curricula. For lower-proficiency students, instructors should provide structured argumentative tasks with clear claim-evidence templates and explicit reasoning scaffolds, increasing task complexity gradually as students demonstrate mastery of foundational analytical skills. For higher-proficiency students, open-ended tasks requiring independent position-taking and synthesis of multiple sources are likely to best support critical thinking development. In culturally diverse classrooms, instructors should consider offering task options that accommodate different rhetorical preferences, such as progressively structured tasks for students from educational traditions emphasizing systematic approaches alongside debate-oriented tasks for students from traditions valuing oral argumentation.

Despite these contributions, this study has several limitations that constrain the generalizability of its findings. The reliance on the PELIC corpus, while providing authentic longitudinal data, limits the investigation to a single institutional context at a North American research university. This raises concerns about external validity, as the findings may not transfer directly to other EAP contexts, particularly in non-Anglophone settings where instructional cultures, learner expectations, and assessment practices differ substantially. For example, EAP programs in East Asian universities often emphasize different pedagogical traditions and assessment formats, while European contexts may integrate English instruction with content-based approaches that could yield different task-type effects. The student population at the University of Pittsburgh, while internationally diverse, may not represent the full range of learner backgrounds encountered in global EAP contexts. Future research should replicate this investigation across multiple institutions and cultural contexts to establish the boundary conditions of these findings and determine which effects generalize across settings.

Several potential confounds warrant consideration in interpreting these findings. Instructor effects could not be fully controlled, as certain instructors may have been more likely to assign particular task types or to emphasize critical thinking development differently. Prompt difficulty may have varied within task categories in ways not fully captured by our design feature ratings. Importantly, students were not randomly assigned to task types, raising selection concerns because students at higher proficiency levels may have self-selected into more demanding courses. To assess robustness, sensitivity analyses were conducted by adding instructor as a random effect, which did not substantially alter task-type coefficients. Additional analyses excluding prompts rated as outliers on difficulty yielded consistent results. These analyses support the robustness of the primary findings, although the observational design precludes definitive causal inference.

The automated text analysis procedures, though validated through inter-rater reliability measures, may have missed nuanced aspects of critical thinking that require human interpretation (Bojić et al., 2025). The bibliometric trends identified by Yücel suggest that critical thinking research in English language teaching is rapidly evolving (Yücel, 2025), and this study’s focus on written discourse may not capture the full spectrum of ways in which critical thinking manifests across modalities. Future research should expand beyond single-institution studies to examine the cross-cultural validity of task-based critical thinking interventions, incorporating Rezaee and Saleh’s Critical Cultural Reflection model to better understand how cultural content influences critical thinking development (Rezaee & Saleh, 2025). The evolving integration of critical thinking into educational policy frameworks, as documented by Xie, Smith, and Davies in Chinese contexts (Xie et al., 2025), suggests that institutional and policy factors warrant systematic investigation. Additionally, the scaffolding mechanisms identified as crucial for supporting diverse learners in Leavitt’s comprehensive framework (Leavitt, 2012) require more detailed empirical examination to understand how different types of support interact with task characteristics. Zhang, Ge, and Saad’s systematic review emphasizing the significance of formative assessment in English as a foreign language contexts (Zhang et al., 2024) points to the need for research into how assessment procedures can be combined with task-based methods to maximize critical thinking development. Lastly, the implications for teacher preparation and professional development are consistent with Wang and Yuan’s discussion of integrating critical pedagogy into second language teacher training (Wang & Yuan, 2025), and future research should investigate how teacher cognition interacts with pedagogical training and student outcomes in task-based critical thinking instruction across diverse educational and cultural contexts.

Conclusion

This research provides correlational evidence that task-based language learning is associated with enhanced critical thinking abilities in university-level English courses, based on an analysis of 3,500 academic essays written by 1,000 international students. Argumentative writing tasks showed the strongest association with critical thinking (d = 0.78), and analytical essays were strongly associated with logical reasoning (d = 0.65). Cognitive complexity was the strongest predictor, accounting for 31% of the variance (R² = .31), followed by task openness, accounting for 24% (R² = .24). Longitudinal analysis of 400 students indicated that critical thinking develops non-linearly, a pattern best captured by a quadratic growth model with superior fit to linear models (ΔAIC = −45). Cultural background significantly moderated outcomes, with steady incremental progress among East Asian students and more heterogeneous patterns among Middle Eastern students, possibly reflecting differing rhetorical traditions. While acknowledging these contributions, this research recognizes several methodological constraints: the single-institution data source limits generalizability to other EAP contexts, the observational design precludes causal inference about task effects, and the computational text analysis approach, despite demonstrating good reliability, may miss nuanced aspects of critical thinking that require human interpretation. Future research is encouraged to conduct multi-institutional collaborative studies with experimental designs, incorporate oral discourse analysis, and develop AI-supported personalized task systems that tailor learning trajectories to learners’ proficiency levels and cultural backgrounds.

Footnotes

Ethical Considerations

This study did not involve human participants and did not require ethics approval.

Author Contributions

Xiaoning Zhang conceived and designed the study, collected and analyzed the data, and wrote the manuscript.

Funding

The author received no financial support for the research, authorship, and/or publication of this article.

Declaration of Conflicting Interests

The author declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data Availability Statement

The data that support the findings of this study are included within the article.

Permission to Reproduce Material From Other Sources

Not applicable. No material from other sources was reproduced in this manuscript.