Abstract

Self-assessment of support needs is a relatively new and under-researched phenomenon in domiciliary aged care. This article outlines the results of a comparative study focusing on whether a self-assessment approach assists clients to identify support needs and the degree to which self-assessed needs differ from an assessment conducted by community care professionals. A total of 48 older people and their case managers completed a needs assessment tool. Twenty-two semi-structured interviews were used to ascertain older people’s views and preferences regarding the self-assessment process. The study suggests that while a co-assessment approach as outlined in this article has the potential to assist older people to gain a better understanding of their care needs as well as the assessment process and its ramifications, client self-assessment should be seen as part of a co-assessment process involving care professionals. Such a co-assessment process allows older people to gain a better understanding of their support needs and the wider community aged care context. The article suggests that a co-assessment process involving both clients and care professionals contains features that have the capacity to enhance domiciliary aged care.

Keywords

Introduction

Client self-assessment of support needs is a relatively novel phenomenon in domiciliary aged care (Abendstern, Hughes, Clarkson, Tucker, & Challis, 2011; Challis et al., 2009; Griffiths, Ullman, & Harris, 2005). To date, there is limited evidence regarding the role and effectiveness of such a self-assessment process within a domiciliary aged care context. This article presents the findings of a pilot study that investigated (a) whether such a self-assessment approach assists older people to identify their care needs, (b) the degree to which self-assessed needs differ from an assessment conducted by professionals, and (c) whether the assistance of care professionals during the self-assessment process affects outcome scores. Following a short review of the literature, this article provides an introduction to domiciliary aged care assessment within the Australian context, outlines the aims of the project this study is based on, followed by a summary of the methodology and methods used, an overview of the findings, and their discussion. The article suggests that while a co-assessment approach has the potential to assist older people to gain a better understanding of their health and social care needs as well as the assessment process and its wider ramifications, client self-assessment should be seen as part of a co-assessment process involving both clients and care professionals. The article outlines a range of issues that need to be systematically addressed by researchers to gain a better understanding of the utility of co-assessment within a domiciliary aged care context.

Review of the Literature

Self-assessment is increasingly relied on by policy makers and care professionals in a range of health and human services settings; it is used, for example, to assess the eligibility of clients or to determine their support needs (Griffiths et al., 2005). However, the use of self-assessment methodologies within a community aged care context is a more recent phenomenon and there is little research evidence to inform policy or practice. Griffiths et al. (2005) conducted a major review of the literature focusing on self-assessment within an aged care context. The authors highlighted major gaps in the evidence base on self-assessment. They concluded that self-assessment should not be seen as a replacement for a professional assessment but rather as a supplement that helps to generate a more holistic perspective. However, their study did not yield any examples of needs-focused self-assessment within a community aged care context.

In the United Kingdom, where client self-assessment has become part of a larger personalization agenda (Xie, Hughes, Sutcliffe, Chester, & Challis, 2012), self-assessment has been piloted and evaluated in 11 English authorities (Abendstern et al., 2011; Challis et al., 2009; Challis et al., 2008). The evidence that can be derived from this pilot has limitations that are commonly found in social care implementation studies (limited sample sizes, uneven implementation of the intervention in pilot sites including differences regarding the definition of self-assessment, and self-selection of participants, among other things) and that are associated with the usual contextual constraints. Findings from this evaluation suggest that users had no preference when it comes to case manager-led assessment or self-assessment. As long as they were conducted face-to-face, users were extremely satisfied with either option (Challis et al., 2009; Challis et al., 2008). However, users of online self-assessment found the experience less satisfying than either face-to-face option (for a contrasting view, see Purdie, 2003). Moreover, people from a “British Asian” background, people with cognitive issues, and those who rated their health “less than very good” found self-assessment more difficult than other users (Challis et al., 2009; Challis et al., 2008). People with “low mood” and males found the process less satisfactory but not more difficult (Challis et al., 2009; Challis et al., 2008). In terms of its application, the evaluation team found that self-assessment requires appropriate targeting and a consensus within service provider organizations as to what the process should achieve, how it is to be used, and how it is to be integrated into the existing service context (Abendstern et al., 2011). The overall conclusion of the evaluation team mirrors that of Griffiths et al. (2005) suggesting that self-assessment adds a client perspective and “appears to have greatest utility when it complements existing processes, rather than to substitute them” (Challis et al., 2008). The authors further suggest that self-assessment benefits from the presence of a mediator or facilitator and/or a staff member to translate the “assessment into an appropriate response” (Challis et al., 2008). What remains unclear from this literature is whether the involvement of such a mediator (in many instances, a case manager) in the client self-assessment process influences the outcome score.

Context, Scope, and Role of Self-Assessment in Australia

The Australian Government is currently in the process of converting the conventional community aged care packages into consumer-directed care packages. This transition is to be completed during the 2014-2015 financial period. While older Australians will have greater control over, and input into, the decision-making process underpinning their care arrangements, this does not extend to the assessment process. However, this reform agenda did not affect the policy context in which the research was being conducted.

Within the policy context that framed the research, assessment of community aged care needs and resource allocation decisions typically involved three steps:

The Australian Government delegated to Aged Care Assessment Teams (ACATs) the responsibility of approving people for subsidized care under the Aged Care Act 1997. According to the guidelines issued by the Department of Health and Ageing (DoHA), ACATs are to utilize screening tools measuring medical, physical, social, and psychological needs to determine whether an older person is eligible for a low care or high care Commonwealth-funded aged care package. In practice, tools are used on an “as needed” basis and only some of the tools are systematically used with all clients (Moore, Haralambous, & Xiaoping, 2009). Following ACAT approval, applicants are placed on a waitlist from which they are selected by service provider agencies.

Care packages are held and administered by community aged care providers. Once a package becomes available, the service provider agency reviews the waitlist. Clients who are not within the service provider’s target group are screened out. Subsequently, client needs (low/medium/high) are reviewed against the resources the agency has at its disposal (i.e., type of care package that is available, financial resources, case management skills). If more than one match is identified, a person’s priority rating and length of time on the waitlist are taken into account before an offer is made. In other words, during the client selection process, needs-based priorities established by ACATs are re-ordered by the operational priorities of service providers (Moore et al., 2009).

While the initial ACAT assessment is intended to provide an indication regarding resource needs and inform the development of a care plan by community aged care providers, this is the case only to a limited degree. Service providers tend to make unsystematic use of ACAT assessment data. A Victorian study suggests that fewer than 50% of Victorian service providers routinely use the health and social context assessment provided by ACATs to allocate resources (Moore et al., 2009). Indeed, service providers often rely on an informal resource allocation process that takes into account the overall budget position of the agency. This process determines how much of a package is to be retained by the agency for overheads and other allocations, how much is passed on to a client to pay for direct services, and how much is to be placed in a pool of funds to cross-subsidize other clients. To make resource allocation decisions, case managers often use their expert opinion to re-assess client needs against a framework of operational imperatives and the ill-articulated needs of other clients within the organization.

Researchers have argued for some time that this amalgam of client needs and operational imperatives not only compromises the needs-led quality of the assessment but also undermines the whole assessment process by replacing the validated measures at its core with an expert opinion assessment based on a subjectively defined notion of “need” (Caldock, 1994; Ellis, 1993; Hardy, Young, & Wistow, 1999; Richards, 2000); unsurprisingly, it has been found that this kind of approach leads to inconsistent care outcomes (Caldock, 1994; Ellis, 1993; Hardy et al., 1999; Richards, 2000). Self- or co-assessment approaches hold the promise of addressing some of these issues. While experiments with self-assessment in community aged care have shown some promise in the United Kingdom (Abendstern et al., 2011; Challis et al., 2009; Challis et al., 2008), in Australia self- and co-assessment approaches have not been investigated within this context.

About the Study

This pilot study was designed to explore the application of self-assessment within an Australian community care context. In this study, the self-assessment process substituted the re-assessment process conducted by service providers. Its role was both instrumental and didactic. The aim was to introduce a simple, coherent, and transparent system that could be used as an indicative measure of a client’s needs

Method

The research questions addressed by this study were the following:

To systematically explore these questions, we used a mixed-methods evaluation involving a self-assessment survey comparing the assessment scores of older people with those of “expert” aged care case managers, semi-structured interviews, as well as demographic data and an audit tool. The demographic data were collected as part of a larger project evaluation focusing on a consumer-directed care project (Ottmann, Laragy, & Allen, 2012).

Nature of the Intervention

The self-assessment process was evaluated as part of a larger research project (Ottmann et al., 2012). Participants were asked by their case managers to complete the self-assessment questionnaire to assess their perceived level of need for seven domains (Question 1 [Q1] meeting personal care needs, Question 2 [Q2] meeting nutritional needs, Question 3 [Q3] practical aspects of daily living, Question 4 [Q4] physical & mental health & well-being, Question 5 [Q5] relationships & social inclusion, Question 6 [Q6] choice & control, and Question 7 [Q7] risk) by associating their condition with given statements (e.g., “I need help with doing some things around the home”). In addition, their case managers were asked by the research team to complete the same questionnaire assessing their client’s needs. Case managers were instructed to support their clients in this process only if required. The case managers had an in-depth knowledge of the needs of these clients as they had been assisting them over a period of at least 6 months prior to the commencement of the study. Case managers were asked to forward a copy of the completed assessment forms to the research team.

Moreover, case managers were instructed to use the scores generated by this co-assessment process as the basis for a discussion with their clients. Scores were to be compared and differences mediated, leading to a potential revision of scores. In addition, this assessment-focused discussion was to take into account contextual factors influencing the client’s needs not captured by the assessment tool. The aggregated score was used to determine the resources to be allocated to clients to purchase services to meet their assessed needs. The allocated amount was proportional to their level of need (i.e., the greater their care needs, the higher the amount of funds allocated). The funding level could be adjusted by conducting a re-assessment. Clients could query the self-assessment outcomes by submitting a complaint to management requesting a review of the process. The process was to be repeated whenever a client’s support needs changed significantly.

The template underpinning the self-assessment tool used in this study was developed by In Control UK and consisted of the Self-Assessment Questionnaire 1 (Older Adults-RAS4). The template was adapted to the Australian context making use of a coproduction process (Ottmann, Allen, Laragy, & Feldman, 2011) involving 13 community aged care recipients and their carers as well as seven case managers involved in the larger evaluation. The self-assessment tool was introduced to these two groups. Its members deliberated over the utility of its content and submitted requests for changes to the researchers who moderated the groups. Changes were incorporated until the assessment form was endorsed by both groups.

Data Collection

Participants formed part of a larger consumer-directed care evaluation (Ottmann et al., 2012). Participants were recruited between July and October 2010. Case managers provided around 600 eligible community aged care clients with information regarding the larger project and asked interested parties for permission to forward contact details to the research team. A total of 87 participants enrolled in an intervention group at baseline, which represents an uptake of approximately 14.5%.

Semi-structured interview transcripts, demographic data, and assessment data were linked by means of the project identification number. Only 22 semi-structured interviews (out of a total of 56 conducted as part of the larger study) could be linked to the assessment and demographic data. The remaining 23 participants withdrew from the study and 3 were too unwell and could not be interviewed. This attrition rate of 46.0% is substantially higher than that of the larger study (38.4%). This is probably due to the fact that this project attracted a disproportionately high number of carers.

Data Analysis

Of 64 completed records, 16 were completed by one party (participant or case manager) only and were removed, leaving 48 completed records. The weighted outcomes captured by the assessment forms were translated into scores on linear 4- or 5-point scales. It is important to note that these scales express a wide spectrum of care needs ranging from minimal or no assistance required to assistance required with most activities of daily living. Hence, a divergence of one level on the scales implies markedly different care needs.

To explore the overall agreement (total care need scores) between participant and case manager samples, a Bland–Altman plot was generated. Altman and Bland (1983) suggested an approach to investigating the magnitude of agreement between two methods of assessment involving graphic presentation and straightforward calculations. The Bland–Altman plot charts the difference in participant and case manager assessments on the vertical axis against the average of the two assessment scores on the horizontal axis. The average of the two assessments for each individual is considered a better estimate of the actual support need than either assessment alone. Hence, the Bland–Altman plot enables a visual inspection of the association between the difference in assessments and the magnitude of support needs. We also computed Spearman’s rank correlation coefficient to examine the magnitude of correlation between the two assessment scores. In addition, to analyze the inter-observer agreement, we calculated the Kappa Statistic for each of the domains (Viera & Garrett, 2005). The Kappa Statistic is an appropriate method when analyzing inter-observer variation of nominal and ordinal categorical data when two or more observers use the same measurement technique. Table 1 provides a guide to how Kappa scores can be interpreted. To analyze patterns in demographic data, we conducted

The study was approved by Deakin University’s Human Ethics Committee.

Findings

The following section presents the findings of the study. A demographic overview of participants is provided before describing the assessment form data and the degree of agreement between the two samples. Finally, the key themes derived from the semi-structured interviews are introduced.

Participants

The sample of participants (

Comparing Aggregate Client and Case Manager Scores

A total of 48 assessment forms were completed by both client and case manager. Aggregate scores consisted of a total of 335 individual ratings where differences could have occurred. Overall, they were closely matched. The mean scores generated were 19.9 (clients) and 20.3 (case managers) out of a possible total of 32. This suggests that most participants required at least some assistance (e.g., some assistance with meals preparation, laundry, shopping, etc.) with their activities of daily living. A total of 23 identical and 25 diverging aggregate scores were recorded.

A scatterplot of aggregate care needs assessment scores generated by participants and case managers is presented in the left hand section of Figure 1. The 45 degree slope (the line of equality) represents perfect agreement between the two assessments. If all points in the scatterplot were on the line of equality both response groups would be in perfect agreement. The right hand section of Figure 1 features a Bland–Altman plot of

Figure 1. Scatterplot of total care need scores assessed by participant against case manager, with the line of equality indicated (left); Bland–Altman plot of difference in total care need scores (participant assessment minus case manager assessment) against the mean of the two assessments (right).

Aggregate score differences ranged from −7 to +6 (negative numbers denote that the clients assessed their needs lower than the case manager). A total of 15 aggregate client scores were lower than the scores generated by their case managers and nine client scores were higher than their case manager scores. Two client/case manager pairs differed in the way they assessed needs but arrived at the same total score. Of the assessments resulting in different scores, 11 differed by one point (−1 or 1), 6 by two points (−2 or 2) and 7 differed by more than two points.
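The Bland–Altman quantities described under Data Analysis can be illustrated with a minimal sketch. It assumes the conventional formulation, in which the mean difference (bias) is reported together with limits of agreement at 1.96 standard deviations on either side; the source does not state which software or exact formula was used. The function name `bland_altman` and the sample scores are hypothetical and are not data from the study.

```python
from statistics import mean, stdev


def bland_altman(client_scores, manager_scores):
    """Return plot points, bias, and conventional 95% limits of agreement."""
    if len(client_scores) != len(manager_scores):
        raise ValueError("score lists must be paired")
    pairs = list(zip(client_scores, manager_scores))
    # Sign convention from the text: client score minus case manager score,
    # so negative values mean the client rated their needs lower.
    diffs = [c - m for c, m in pairs]
    # The mean of each pair goes on the horizontal axis of the plot.
    means = [(c + m) / 2 for c, m in pairs]
    bias = mean(diffs)
    spread = stdev(diffs)  # sample standard deviation of the differences
    return {
        "points": list(zip(means, diffs)),
        "bias": bias,
        "lower": bias - 1.96 * spread,  # assumed conventional multiplier
        "upper": bias + 1.96 * spread,
    }


# Hypothetical aggregate scores, not taken from the study.
clients = [18, 20, 22, 15, 25, 19]
managers = [19, 20, 21, 17, 25, 22]
result = bland_altman(clients, managers)
print(result["bias"], result["lower"], result["upper"])
```

Each entry in `points` corresponds to one client/case manager pair and could be passed to any plotting library to reproduce the right-hand panel of such a figure.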

Comparing Individual Domain Scores

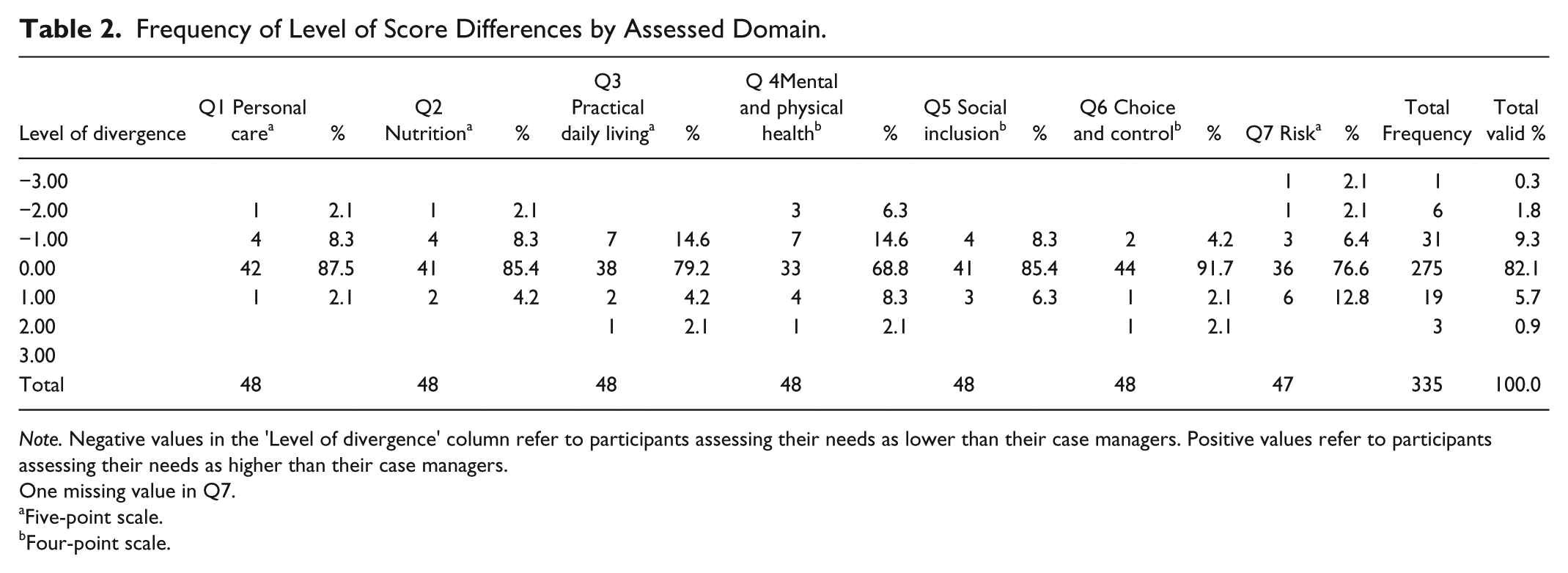

Overall, identical client and case manager scores constituted 82.1% of all scores. Table 2 demonstrates that client and case manager scores were closely matched for the domains of Q1 (meeting personal care needs), Q2 (nutritional needs), Q5 (relationships and social inclusion), and Q6 (choice and control) with identical scores making up between 85.4% and 91.7% of the scores. The range of the score differences was typically between +1 and −1 level on the 4- or 5-point scales. A score difference greater than 1 was produced on three occasions.

Table 2. Frequency of Level of Score Differences by Assessed Domain.

One missing value in Q7.

Five-point scale.

Four-point scale.

The domains of Q3 (practical aspects of daily living), Q4 (physical and mental health and well-being), and Q7 (risk) differed more substantially with identical scores making up between 68.8% and 79.2% of the total scores in each domain. In other words, more than 20% of clients produced scores that differed from those of their case manager. The domain of Q4 generated the largest percentage (31.2%) of differing scores. Also, Q4 produced the highest number of scores (4) differing by more than one level. Q7 was the only domain in response to which clients were more likely to score their needs higher than the scores produced by their case managers.

Kappa Statistics were calculated to analyze inter-observer agreements by domain. The Kappa provides a “quantitative measure of the magnitude of agreement between observers” taking into account that some agreement occurs purely by chance (Viera & Garrett, 2005). A Kappa of 1 expresses perfect agreement, whereas a Kappa of 0 expresses an agreement equivalent to chance. Negative values express systematic disagreement between observers. The Kappa scores reflect the findings presented above. While the domains of Q1 (meeting personal care needs), Q2 (nutritional needs), Q5 (relationships and social inclusion), and Q6 (choice and control) generated Kappa scores greater than .8 (almost perfect agreement), Q3 (practical aspects of daily living), Q4 (physical and mental health and well-being), and Q7 (risk) produced Kappa scores signaling a more moderate agreement only. Table 3 provides an overview of the Kappa scores by domain.
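To make the chance correction concrete, the following sketch computes an unweighted Cohen's Kappa for two raters. This is an assumption made for illustration: the source does not specify whether a weighted or unweighted Kappa was calculated. The function name `cohen_kappa` and the example ratings are hypothetical and are not study data.

```python
from collections import Counter


def cohen_kappa(ratings_a, ratings_b):
    """Unweighted Cohen's Kappa for two raters scoring the same cases."""
    if len(ratings_a) != len(ratings_b) or not ratings_a:
        raise ValueError("ratings must be non-empty and paired")
    n = len(ratings_a)
    # Observed agreement: share of cases where both raters chose the same level.
    observed = sum(a == b for a, b in zip(ratings_a, ratings_b)) / n
    # Expected agreement by chance, from each rater's marginal frequencies.
    count_a = Counter(ratings_a)
    count_b = Counter(ratings_b)
    expected = sum(count_a[level] * count_b[level] for level in count_a) / (n * n)
    if expected == 1.0:
        raise ValueError("Kappa is undefined when chance agreement is 1")
    return (observed - expected) / (1 - expected)


# Hypothetical domain ratings on a 4-point scale, not taken from the study.
client = [1, 2, 2, 3, 3, 3, 4, 4]
manager = [1, 2, 3, 3, 3, 3, 4, 3]
kappa = cohen_kappa(client, manager)
print(round(kappa, 2))
```

In this example the two raters agree on 6 of 8 cases (75%), yet Kappa is lower than that figure because part of the agreement would be expected by chance alone.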

Table 3. Kappa Statistics by Assessment Domain.

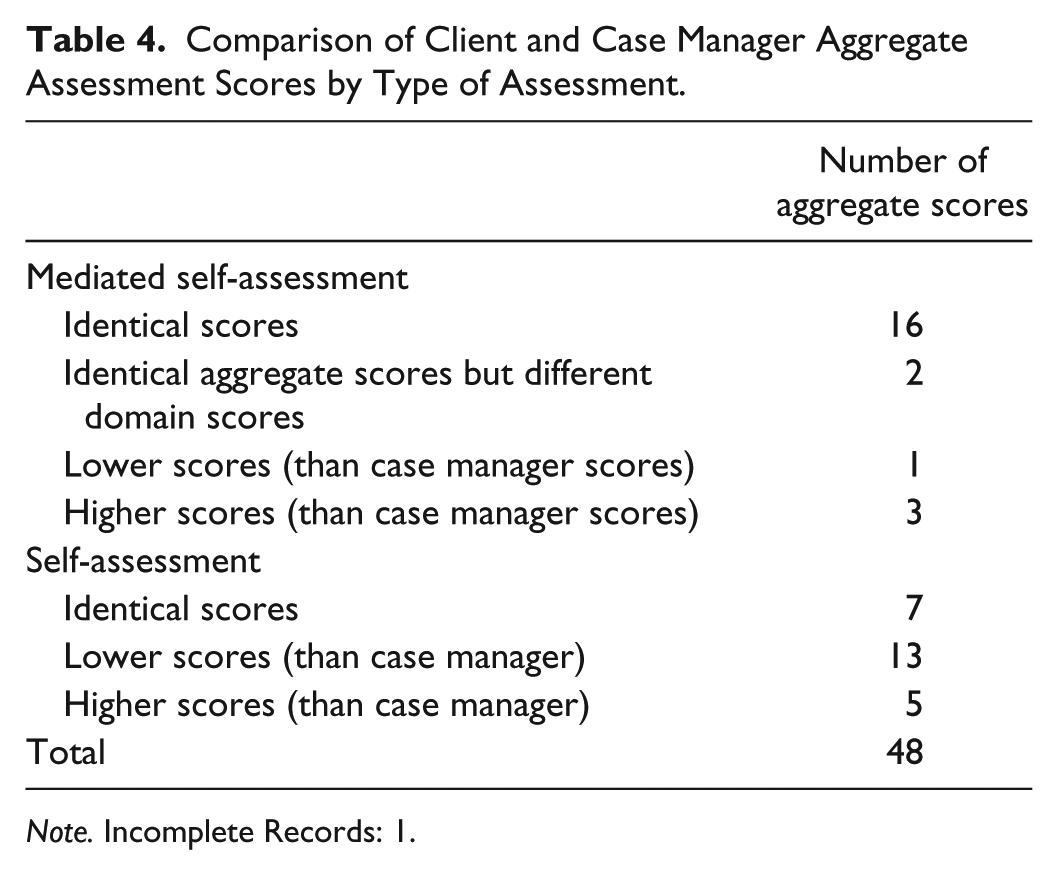

Assisted Self-Assessment Versus Un-Assisted Self-Assessment

A total of 20 clients (41.7%) received assistance with the self-assessment form from their case managers. With 4 exceptions, all of the scores of this subset of clients and case managers were identical. Two of the client self-assessment forms were completed by case managers and family carers. By contrast, only 7 of the 28 scores generated

Table 4. Comparison of Client and Case Manager Aggregate Assessment Scores by Type of Assessment.

Semi-Structured Interviews

The qualitative aspects of this study focused on the client experience of the self-assessment process. Clients were asked whether they found the process useful. If they did, they were asked to explain why they thought the process to be useful. Of the 48 clients, 5 withdrew from the study due to illness, transfer into a residential aged care facility, or death before an interview could be conducted. A further 3 were too ill to be interviewed. Of the remaining 40 clients, a total of 19 clients and 3 carers participated in a larger study that collected qualitative information about client satisfaction with the self-assessment process. Three carers responded on behalf of clients who were either too ill or cognitively impaired to be personally interviewed.

Half the participants (11) found the self-assessment process to be positive and helpful. Five of these had been assisted by their case managers. Clients explained that the process assisted them in “becoming aware of what was available in terms of services and equipment” (SA020), raised issues they may not have thought about when asked about their own needs, and helped to clarify their expectations of what the service agency needed to know to deliver targeted services. Clients also thought that the assessment process gave the agency a clearer picture of their needs, what a “client can and cannot do” (SA020), and allowed clients to compare and track changes in their needs over time (SA050). The process was described as “straightforward” (SA033) and “quite easy to do” (SA007). One client mentioned that she became aware that it was not in her interest to over-emphasize her capacity as this may reduce the amount of resources available to her (SA037).

The interview excerpts contained in Table 5 provide an overview of client responses regarding the utility of the self-assessment form and their experience of the overall co-assessment process.

Table 5. Interview Excerpts Highlighting Client Experience and Utility of Assessment Process.

Two clients and two carers were either ambivalent about the experience or regarded the experience as negative. They regarded the process as “confusing” (SA002, SA014) due to the “terminology” used (SA014) or because “it did not cover” items such as medical issues (SA002). One client commented that the form was easy to complete but was not particularly helpful as it failed to highlight the client’s needs (SA046). Two of these clients and one carer had received the assistance of a case manager to complete the form. One client indicated that she would like to repeat the self-assessment process because she was not in the right “frame of mind” when she was first asked to complete it (SA022).

Five participants were unable to recall the self-assessment process. Four of these clients had been assisted by their case managers to complete the form. Three of these had cognitive impairments. Two carers who were interviewed on behalf of a client were unable to answer questions regarding the self-assessment form as they had not participated in the assessment process.

In most cases, disagreements between clients and case managers regarding assessment scores could be resolved by means of a reflective discussion. None of the clients made use of the complaints mechanism demanding a review of the process. Only one case manager reported that she was unable to reach an agreement with a client concerning an assessment score differential of one level.

Discussion

This section outlines the limitations of this study, followed by a discussion of the level of agreement between client and case manager scores, client under-assessment of their care needs, assisted self-assessment, and the experience and utility of the co-assessment process.

Limitations

The most important limitation of this study is its relatively small sample size. This limitation renders this article’s findings tentative and in need of confirmation. While the findings of the present study largely resonate with research conducted outside Australia, it is desirable to validate the findings of this study with research involving a larger sample. Indeed, a larger mixed-methods follow-up study (projected

Level of Agreement Between Client and Case Manager Scores

Overall, client assessment scores and case manager assessment scores resulted in a high level of agreement. In part, the considerable level of agreement between clients and case managers is due to the fact that case managers assisted almost half of the clients in this sample. Also, the self-assessment form converts a wide range of client needs (no care needs to assistance with all activities of daily living) into narrow 4- or 5-point scales. This means that even a score difference of one represents a markedly diverging representation of a client’s care needs. Furthermore, it is important to bear in mind that score divergences are potentially amplified when scores are weighted. Indeed, although the confidence interval (−3.96 to 3.23) appears relatively narrow at first sight, the width of the interval translates into a 22.5% score difference of total possible scores. Within the context of the larger project, this translates into a difference of around Aus$1,350 per annum (low care package) and Aus$3,600 (high care) in direct services funds for clients located at the opposing outer limits of the confidence interval. Hence, while the statistics demonstrated considerable agreement, the impact of the remaining differences in real terms is serious. Given the magnitude of dollar value differentials the interval represents, a much narrower confidence interval would have been desirable.
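The 22.5% figure can be retraced with simple arithmetic. The sketch below uses only values reported in this article (the interval bounds and the maximum aggregate score of 32); the variable names are illustrative.

```python
# Interval bounds and maximum aggregate score as reported in the article.
lower, upper = -3.96, 3.23
max_total = 32

# Width of the interval in score points.
width = upper - lower

# Width expressed as a share of the total possible score.
share = width / max_total

print(f"{width:.2f} points = {share:.1%} of the maximum score")
```

The interval spans 7.19 score points, which is roughly 22.5% of the 32 points available.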

Three of the seven domains (Q3—practical aspects of daily living, Q4—physical and mental health and well-being, and Q7—risk) were less closely matched generating Kappa scores between .56 and .7. The domain of Q4 stands out as it only generated 68.8% of equal scores (Kappa = .56). Of these identical scores, 16 were generated with the assistance of case managers. Hence, only slightly more than half of the un-assisted client and case manager assessments for Q4 resulted in identical scores. This clearly demonstrates the value of obtaining both client and care professional perspectives (see also Abendstern et al., 2011; Challis et al., 2009; Griffiths et al., 2005).

The domain of physical and mental health & well-being (Q4) is clearly the domain that is most likely to generate differences in the way clients and case managers view client needs. This is also exemplified by the level of score divergences. While on most occasions where scores diverged (14.9% of all cases) client self-assessment scores varied by one evaluation level, on 10 occasions client assessments differed by more than one level, suggesting profound differences in the interpretation of needs between case managers and clients. The domain of Q4 attracted the highest proportion of score differences greater than one level. More research is required to illuminate the factors that exacerbate scoring variance in client self-assessments focusing on physical and mental health and well-being.

Client Under-Assessment of Needs

The findings presented in this article resonate with studies suggesting that older people have a tendency to assess their own care needs as lower than assessed by their case managers (Challis, 2008). On average, client aggregate scores were 0.36 lower than case manager scores. On 11.4% of all occasions clients assessed their needs lower and on 6.6% higher than their case managers. This could indicate that clients felt more independent and had a more positive view of their health and well-being than their case managers. Indeed, a growing body of research suggests that for a number of reasons older people tend to rate their own health and well-being higher than younger people (Fastame & Penna, 2012). Interestingly, however, the domain Q7 (risk) produced an outcome that contradicts this hypothesis inasmuch as diverging client self-assessment scores tended to be higher than those of their case managers. Perhaps unexpectedly, given the research on the impact of the social desirability effect on the self-assessment of older people (Fastame & Penna, 2012), on 22 occasions, clients assessed their needs higher than evaluated by their case managers. The client interviews seem to suggest that at least some of these clients were dissatisfied with the level of resources available to them. This may have prompted them to self-advocate and assess their needs higher than their case manager’s score.

Assisted Self-Assessment

This study suggests that case manager-assisted client self-assessment gives rise to more identical assessment scores, whereas un-assisted client self-assessment leads to greater variance in assessment scores. This scoping study did not allow us to determine the degree to which differences in opinions emerged in case manager-assisted self-assessments and how such differences were voiced and mitigated. Future research should focus on how potential differences in needs assessments are mitigated during a case manager-assisted client self-assessment process. Furthermore, we were unable to reliably ascertain the degree to which clients had assistance from family caregivers when completing the self-assessment questionnaire. Future research should establish the degree to which the assistance of family caregivers has an impact on client assessment scores. What is more, follow-up discussions with case managers revealed that case manager assistance was not necessarily a result of client need but an outcome of a case manager’s approach to professional practice. For example, one case manager provided assistance to most of her clients despite the fact that they were higher functioning clients. This led us to the conclusion that many clients who actually did not need an assisted self-assessment process were provided with assistance. More research is required to illuminate the relationship between client need and case manager assistance.

Utility of and Experience of Co-Assessment

Although the co-assessment process used in this study did not provide a detailed clinical assessment, it served well as a screening tool able to provide a rough indication of a person’s support needs. The self-assessment element allowed clients to make a dignified acknowledgment of some limitations, without capitulating to a full admission of functional losses. This invited case managers to discuss the assessment of needs and its consequences and, thus, played an important didactic role. Indeed, for about half of the clients participating in the qualitative part of the evaluation, the co-assessment process raised awareness of the assessment process, its criteria, and available service options, or helped to clarify client needs. Further research is required to validate these outcomes.

The qualitative evaluation conducted seems to suggest that case manager-assisted assessment was slightly more positively regarded. Whereas five clients who received case manager assistance during the assessment process commented positively on the impact of the self-assessment process, three provided negative or ambivalent feedback. While the sample size is clearly too small to be representative, the outcome resonates with larger studies conducted in the United Kingdom (Challis et al., 2009; Challis et al., 2008).

Although the assessment tool used in our study was relatively comprehensive, some clients required case manager assistance to complete the form. In particular, people with cognitive issues, those facing significant challenges regarding their health, and those who were uncomfortable with the English language required extensive case management support. This echoes the findings of Challis et al. (2008, 2009). Interestingly, bilingual case managers were unable to translate the self-assessment questions for their clients. This suggests that questionnaires have to be translated into the required languages and need to be sensitive to cultural differences.

This pilot has important ramifications for the follow-up study. It suggests that the role of carers and care professionals during the client self-assessment process needs to be carefully mapped, capturing the level of involvement and assistance provided. Moreover, a participant observation component would be useful to elucidate the interaction between clients and case managers during an assisted self-assessment, highlighting how differences of opinion, insofar as they occur, are mediated. Also, the intervention needs to be carefully implemented to ensure consistency. Finally, participating care professionals require a thorough introduction to the research process to appreciate the need for data integrity and adherence to implementation guidelines.

Conclusion

This pilot study suggests that while a co-assessment approach as outlined in this article has the potential to assist older people to gain a better understanding of their care needs as well as the assessment process and its ramifications, client self-assessment should be seen as part of a co-assessment process involving care professionals. The pilot study suggests that it might be valuable to obtain information regarding care needs from both clients and care professionals. The study also suggests that direct case manager assistance in the client self-assessment process is much more likely to generate identical scores than an unassisted client self-assessment. More research is required to explore how differences between client and case manager assessment scores are mitigated within this context. While the participants in this study tended to score their support needs lower than their case managers did, this was not the case for the domain of “risk.” A co-assessment process in which clients and case managers determine the client’s care needs independently and moderate differences in a subsequent discussion contains features that are likely to benefit domiciliary aged care clients.

Footnotes

Acknowledgements

We would like to acknowledge the people who generously donated their time to this project. We also thank Brotherhood Community Care Services, Uniting

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: the project received financial support from the Australian Research Council, Helen Macpherson Smith Trust, Percy Baxter Charitable Trust, B. B. Hutchings Bequest, and the John William Fleming Trust.