Abstract

There is growing use of communication technology in Aotearoa New Zealand. How it is used between midwives and pregnant people is unknown. Surveys are ideal for gathering information when little is known about a phenomenon. Aligning questions to a midwifery-informed framework provides an innovative approach to exploring this issue. The aim was to assess the reliability and validity of questions for two online surveys using a tool created for an expert advisory group of midwives with experience in survey design and midwifery practice. Questions for the two online surveys were validated by an expert advisory group of seven midwifery academic researchers with experience in both quantitative and qualitative research designs, and midwifery practice. The group were asked to rate items using a 4-point rating scale ranging from strongly agree to strongly disagree. Analysis of the scoring was undertaken using Content Validity Index, Cronbach’s alpha coefficient and review of comments by the group. Quantitative scoring indicated that both survey instruments were valid and reliable. The overall Content Validity Index score was 0.92 (midwives) and 0.93 (pregnant people). The overall Cronbach’s alpha coefficient score was .78 (midwives) and .83 (pregnant people). Qualitative comments reinforced the validity and reliability of survey questions. An innovative approach was taken in assessing the reliability and validity of two online surveys using a midwifery expert advisory group and a midwifery framework to situate the surveys within a midwifery body of expertise and knowledge. The comments made by midwifery experts provided an extra layer in the validation of survey instruments using Content Validity Index and Cronbach’s alpha coefficient scoring. Creating a tool for validating questions developed by midwives for an expert group of midwives recognises the potential patriarchal roots of knowledge production and dissemination and enables marginalised voices to be heard.

Introduction

The continuity of care model of midwifery practice within Aotearoa New Zealand (NZ) is unique. It is based on partnership, in which the midwife (known as a Lead Maternity Carer), the childbearing person and their family work together, sharing knowledge, decision making and trust to enable the best outcomes for mother and baby (Guilliland and Pairman, 1994). This model of care was legislated within Aotearoa New Zealand with the passing of the Nurses Amendment Act in 1990, which enabled midwives to practise in any setting, whether home or hospital, without medical supervision (Department of Health, 1990). Care is provided by the midwife from early pregnancy, during labour and birth, through to 6 weeks postpartum (New Zealand College of Midwives, 2015) and is recognised internationally as providing one of the best systems of maternity care in the world (Ministry of Health, 2013). There were 58,659 live births registered in Aotearoa New Zealand in 2021 (Stats, 2022), with 93% of childbearing people receiving care from a Lead Maternity Carer midwife (LMC) (Ministry of Health|Manatū Hauora, 2022). Midwives working as LMCs make up 38% of the midwifery workforce in Aotearoa NZ (Midwifery Council of New Zealand|Te Tatau o te Whare Kahu, 2022).

There is growing use of communication technology in Aotearoa NZ. Ninety-one percent of adults aged between 18 and 34 years own a smart phone (Research New Zealand, 2015) and 89% are active internet users (Hughes, 2019). Childbearing people are increasingly connecting with their midwife using communication technology during their pregnancy. What is unknown, however, is how this technology is being used between midwives and pregnant people to ensure quality maternal and newborn care within a continuity model of midwifery care.

Surveys offer an opportunity to gather information on attitudes, beliefs, opinions, behaviours, and characteristics of an area of interest and are therefore ideal to use when little is known of a phenomenon (Borbasi and Jackson, 2016; Safdar et al., 2016). Many problems have been identified with instrument design, such as questions that are poorly worded, vague or long, or that have inappropriate response options (Sue and Ritter, 2007; Sullivan and Artino, 2017). Using an expert panel of reviewers with expertise in a particular topic area is one way to evaluate the reliability and validity of an instrument design (Davis, 1992; Lynn, 1986). Reliability refers to the consistency of survey responses over time, while validity refers to the extent to which the measurements of the survey provide the information needed to meet the study’s purpose (Tavakol and Dennick, 2011). While using a tool for validating question design within midwifery is not new (Jenkinson et al., 2021; Milne et al., 2016), its application within a midwifery continuity of care context, whilst aligning questions to an internationally recognised evidence-informed Quality Maternal and Newborn Care (QMNC) framework developed by leading global midwifery researchers (Renfrew et al., 2014), is less well known. The QMNC framework focuses on strengthening people’s capabilities and ensuring care is tailored to meet their needs. It does this through identifying what is needed within a healthcare system to provide high quality care (Renfrew et al., 2014). Communication technology is widely used throughout Aotearoa (Hughes, 2019; Research New Zealand, 2015), and communication is an important aspect of the midwife/pregnant person relationship within a continuity model of midwifery care (Midwifery Council of New Zealand|Te Tatau o te Whare Kahu, n.d.).
Identifying which aspects of communication technology are working well, and which communication technology services need improving and how, will be important to ensure services are meeting the needs of the pregnant person. Using an expert advisory group (EAG) of midwives with expertise in survey design and midwifery knowledge adds to the building of scholarship within the midwifery research community. It does this by situating the survey designed by midwives for midwives and pregnant people within a midwifery body of expertise and knowledge. Content Validity Index and Cronbach’s alpha coefficient provide a reliable way to critique the validity and reliability of the question design for use in online surveys with LMC midwives and pregnant people in Aotearoa New Zealand. This is validated further by qualitative comments provided by the EAG, to give robustness and certainty to the two survey instruments. The purpose of this paper is to discuss an innovative approach for assessing the reliability and validity of questions for use in online surveys and the contribution this makes to instrument validation within a midwifery context. It does this by asking midwifery experts to use a tool alongside their review of questions for two online surveys.

Aim

To assess reliability and validity of questions designed for use in two online surveys to explore how communication technology is used between LMC midwives and pregnant people in Aotearoa New Zealand. The validation of questions was undertaken using a tool created specifically for use by a panel of midwifery experts with experience in survey design and midwifery practice. Analysis of the tool using Content Validity Index (CVI), Cronbach’s alpha coefficient (CAC) and comments by the expert midwifery group are used to ensure the survey instruments are both valid and reliable.

Method

Innovative method for validating surveys

The innovative approach taken to validating the two online surveys adds qualitative rigour to what would otherwise be considered a quantitative approach. In so doing, it ‘recognises the patriarchal and colonial roots of knowledge production and dissemination’ (Newnham and Rothman, 2022: 178) that are otherwise associated with quantitative survey designs. This is achieved through using an expert advisory group of midwives with experience in survey design and midwifery practice to rate questions used in a survey, along with providing comments to support their scoring. This gives voice to the expertise of midwifery knowledge and builds a community of scholarship within the midwifery domain, to inform midwifery research. The questions for the survey have been specifically designed by midwives, for midwives and pregnant people in Aotearoa NZ within the context of an internationally respected model of midwifery care. These questions were then validated by midwives using a tool to provide a level of expertise and rigour that would otherwise not be possible to achieve. Questions designed for midwives and pregnant people are aligned with a midwifery evidence-informed quality maternal and newborn care framework, which was developed by midwifery researchers and is the first such midwifery framework to be published in The Lancet (Renfrew et al., 2014). The development and validation of the survey questions are outlined below.

Development and validation of the survey questions

The development and validation of the two online surveys was carried out using a two-stage process. (1) Questions were developed from findings of an integrative literature review, mapped with the Quality Maternal and Newborn Care (QMNC) framework (Renfrew et al., 2014) and (2) a tool was developed for use in validating questions by an expert advisory group (EAG) of midwifery academics and analysed using Content Validity Index (CVI), Cronbach’s alpha coefficient (CAC) and review of comments made by the EAG. Content validity is the extent to which the items of an instrument adequately represent the construct being measured (Pallant, 2016). It asks the question, ‘Does it accurately measure what you want it to?’ Cronbach’s alpha tests the reliability of the instrument, asking the question, ‘Does it return the same or similar results each time it is used?’ The higher the Cronbach’s alpha, the greater the internal consistency (reliability) of the instrument (Pallant, 2016). A Cronbach’s alpha of greater than 0.8 is preferable.

Stage 1: Development of survey questions

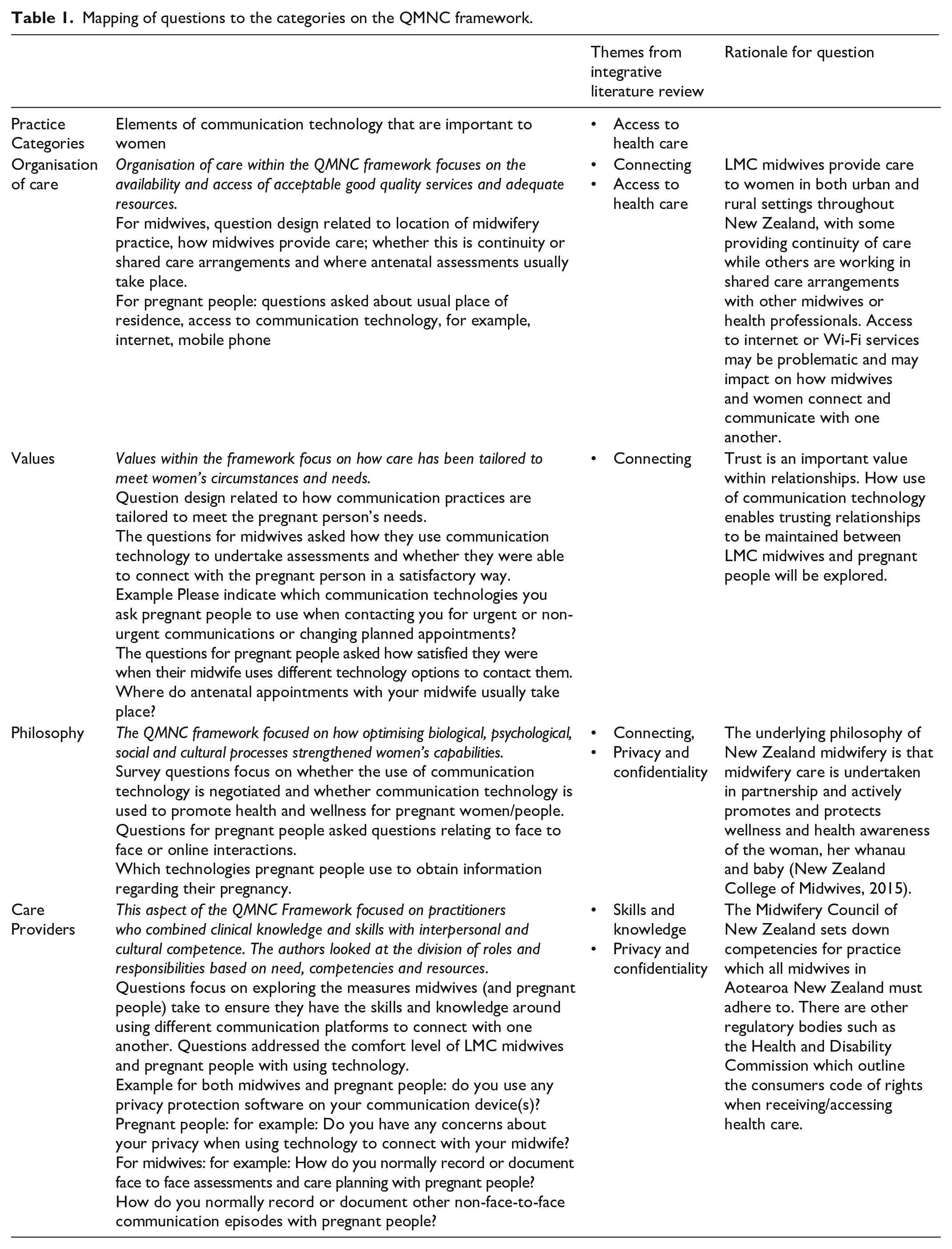

In stage 1, development of questions for the two online surveys was informed by findings of an integrative literature review conducted in preparation for the study (Wakelin et al., 2022) and then mapped with the QMNC framework (Table 1). The QMNC framework was selected specifically as it was developed by midwifery researchers for use within a maternity setting and offers an opportunity to explore how LMC midwives and pregnant people are using communication technology when communicating with one another within a continuity model of midwifery care. Questions were designed to sit within each of the four categories of the QMNC framework: organisation of care, values, philosophy and care providers.

Table 1. Mapping of questions to the categories on the QMNC framework.

Organisation of care

The themes from the integrative literature review were mapped across the ‘organisation of care’ category related to ‘connecting’ and ‘access to health care’. Questions around location of care and access to care were addressed.

Values

Questions were designed to focus on how communication technology had been tailored to meet the pregnant person’s needs, and how satisfied midwives and pregnant people were with the way they each responded to one another using the various communication technology platforms.

Philosophy

To highlight the importance of communication and connection, the questions sought to investigate how communication technology is used to promote health and wellness for pregnant people. Relevant themes from the integrative review include connecting, privacy and confidentiality.

Care providers

The category ‘care providers’ is concerned with how practitioners combine clinical knowledge and skills with interpersonal and cultural competence. Questions were designed to explore measures that midwives and pregnant people would take to ensure they had the necessary skills and knowledge when using communication technology. The findings from the integrative literature review identified both midwives and pregnant women lacked skills when using communication technology and had concerns around privacy and confidentiality.

Stage 2: Validation of questions for use in the two online surveys

A tool for validating questions is recommended to assess whether survey instruments are both valid and reliable (Tavakol and Dennick, 2011). Content validity refers to how well an instrument measures the construct under study (Zamanzadeh et al., 2014). The validity of the instruments is discussed using the Content Validity Index (CVI). Polit et al. (2007) discuss the use of a CVI for individual items as well as the content validity of an overall scale. Experts are asked to rate each item using a rating scale to enable reviewers to assess each item separately (Davis, 1992). This is achieved through scoring obtained from each item within an instrument and can be used to evaluate the clarity of the question used in a survey instrument (Polit and Beck, 2006).

Reliability of a survey instrument refers to the consistency of measurement and whether the items of the scale are all measuring the same underlying construct (Pallant, 2016). Reliability of the two survey instruments is discussed using Cronbach’s alpha coefficient (CAC). Cronbach’s alpha coefficient is commonly reported on ‘as an indicator of instrument or scale reliability or internal consistency’ (Taber, 2018: 1284) and provides ‘a measure of the internal consistency of a scale and is expressed as a number between 0 and 1’ (Tavakol and Dennick, 2011: 53).

Once the questions for the survey were developed, the tool for validating questions was created and emailed to an expert advisory group (EAG) consisting of seven midwifery academic research colleagues with experience in both quantitative and qualitative research designs, and midwifery practice. An EAG consisting of between 3 and 10 reviewers is considered reasonable when reviewing a survey instrument (Lynn, 1986). Davis (1992) suggests using experts with experience in instrument construction techniques or with experience in a particular topic area as this ‘maximises the likelihood of having an instrument that is both well-constructed and content-valid’ (p. 194).

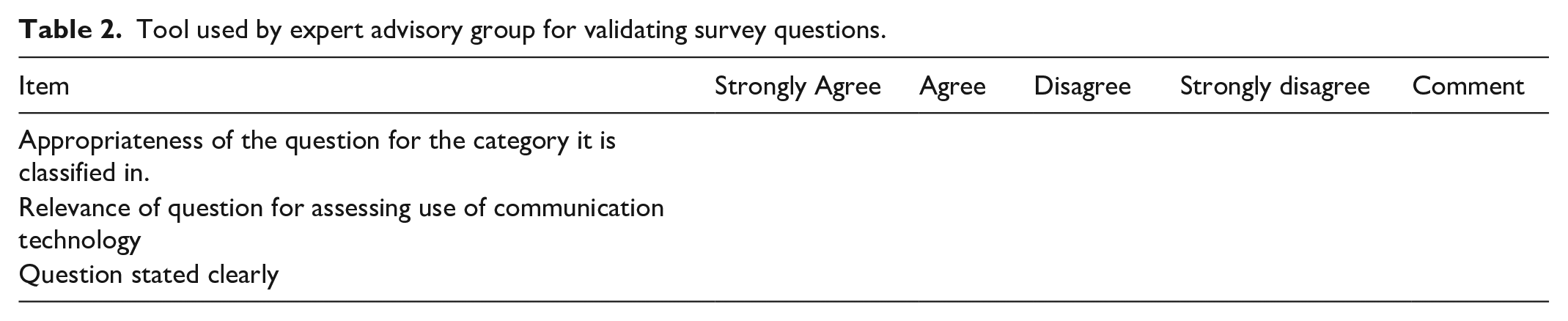

When deciding on what questions to retain in a survey, there seems to be some agreement to retain questions if reviewers reach agreement on questions rated as quite relevant or highly relevant (Davis, 1992; Lynn, 1986). For this tool, strongly agree – agree was used as an indication for retaining questions (De Castellarnau, 2018). A 4-point rating scale ranging from strongly agree to strongly disagree was used by the EAG to evaluate the level of agreement or disagreement with each of the survey questions. For the two instruments, reviewers from the EAG were provided with a tool for validating questions and asked to score each question based on three categories or items: (1) appropriateness of question, (2) relevance of question and (3) question stated clearly (Table 2). In determining whether the question was appropriate, relevant or stated clearly, scoring is based on whether reviewers ‘agree’ or ‘strongly agree’ with each item.

Table 2. Tool used by expert advisory group for validating survey questions.

There are two ways of calculating the CVI score: one method relies on universal agreement between reviewers for each item and the second relies on an average item score (Polit and Beck, 2006). For the purposes of this tool an average item score was used alongside review of comments from the EAG. Addressing the validity of the question was undertaken in three ways. (1) An individual item score (i-CVI) was calculated by counting the number of reviewers who agreed/strongly agreed with each individual item and then dividing the count by the number of reviewers, (2) a question score (q-CVI) was calculated by adding up the three individual item CVI scores for each question and dividing by three (this then gives an overall score for the validity of the question) and (3) an overall scale score (s-CVI) was calculated by adding up each of the overall q-CVI scores and dividing by the number of questions. This gave an overall instrument score. Polit and Beck (2006) comment that different approaches can lead to different values and that the CVI is therefore not always straightforward to calculate. To continue with the innovation of the method, a column for comments was added to the tool, which enabled the EAG to provide feedback or comments on the wording of the question (Table 2). DeVellis (2017) recommends that experts review the survey to rate the relevance of each question, to evaluate the clarity and conciseness of the questions and to provide further comments on other options that may have been missed. Using midwifery expert knowledge in this way contributes to the validation of the survey instruments by giving a voice to midwives, and ensures questions are relevant and appropriate.

An individual item (i-CVI) score of 0.78 and above with six or more reviewers is considered acceptable (Davis, 1992; Lynn, 1986) while an overall scale (s-CVI) score of ⩾0.9 is considered acceptable (Polit and Beck, 2006). For this reason, any questions scoring below 0.78 for the i-CVI or 0.9 for the q-CVI and s-CVI have been highlighted in Tables 3 and 4.
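The three scores described above can be sketched in a few lines of Python. This is a minimal illustration rather than the procedure actually used for the study: the numeric coding of the 4-point scale (1 = strongly disagree to 4 = strongly agree), the function names and the example ratings are all assumptions made for the sketch.

```python
from statistics import mean

# The three items each reviewer scores for every question (from the tool).
ITEMS = ("appropriate", "relevant", "clear")

# Assumed coding: 1 = strongly disagree, 2 = disagree, 3 = agree, 4 = strongly agree.
AGREE = 3


def i_cvi(item_ratings):
    """Share of reviewers who rated the item agree or strongly agree."""
    return sum(r >= AGREE for r in item_ratings) / len(item_ratings)


def q_cvi(question):
    """Mean of the three item scores for a single question."""
    return mean(i_cvi(question[item]) for item in ITEMS)


def s_cvi(questions):
    """Mean of the question scores across the whole instrument."""
    return mean(q_cvi(q) for q in questions.values())


def flagged(questions, item_cut=0.78, question_cut=0.9):
    """List the items and questions that fall below the cut-offs."""
    low_items = [(name, item) for name, q in questions.items()
                 for item in ITEMS if i_cvi(q[item]) < item_cut]
    low_questions = [name for name, q in questions.items()
                     if q_cvi(q) < question_cut]
    return low_items, low_questions


# Made-up ratings from seven reviewers for two hypothetical questions.
example = {
    "Q1": {"appropriate": [4, 4, 3, 4, 3, 4, 4],
           "relevant":    [4, 3, 4, 4, 4, 3, 2],
           "clear":       [4, 4, 4, 3, 3, 4, 4]},
    "Q2": {"appropriate": [3, 2, 4, 2, 3, 4, 3],
           "relevant":    [4, 4, 3, 3, 4, 4, 4],
           "clear":       [2, 3, 2, 4, 3, 3, 4]},
}
```

Calling `flagged(example)` returns the items and questions that would be highlighted for review against the 0.78 and 0.9 cut-offs, which mirrors how low-scoring questions were picked out for rewording or removal.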

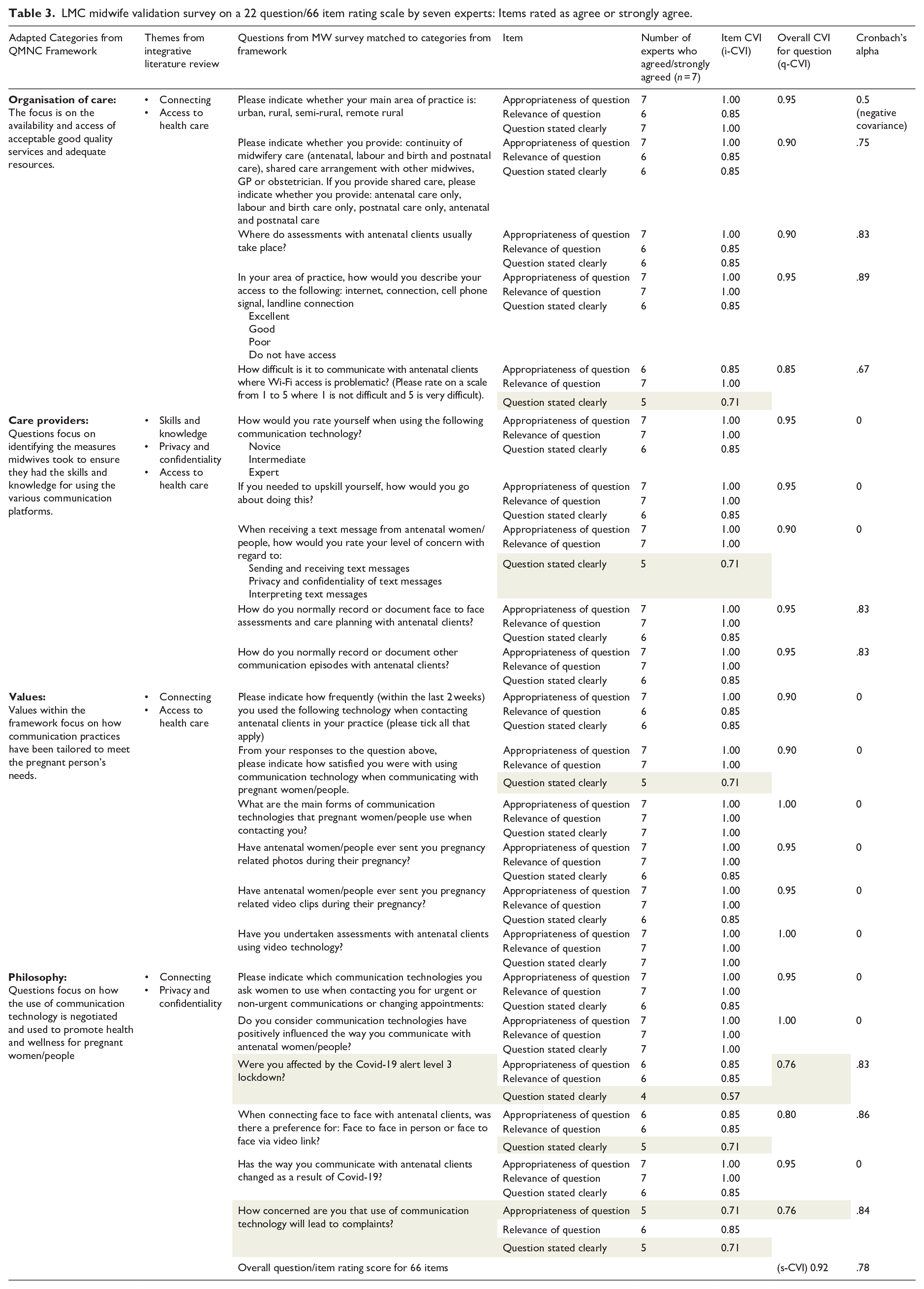

Table 3. LMC midwife validation survey on a 22 question/66 item rating scale by seven experts: Items rated as agree or strongly agree.

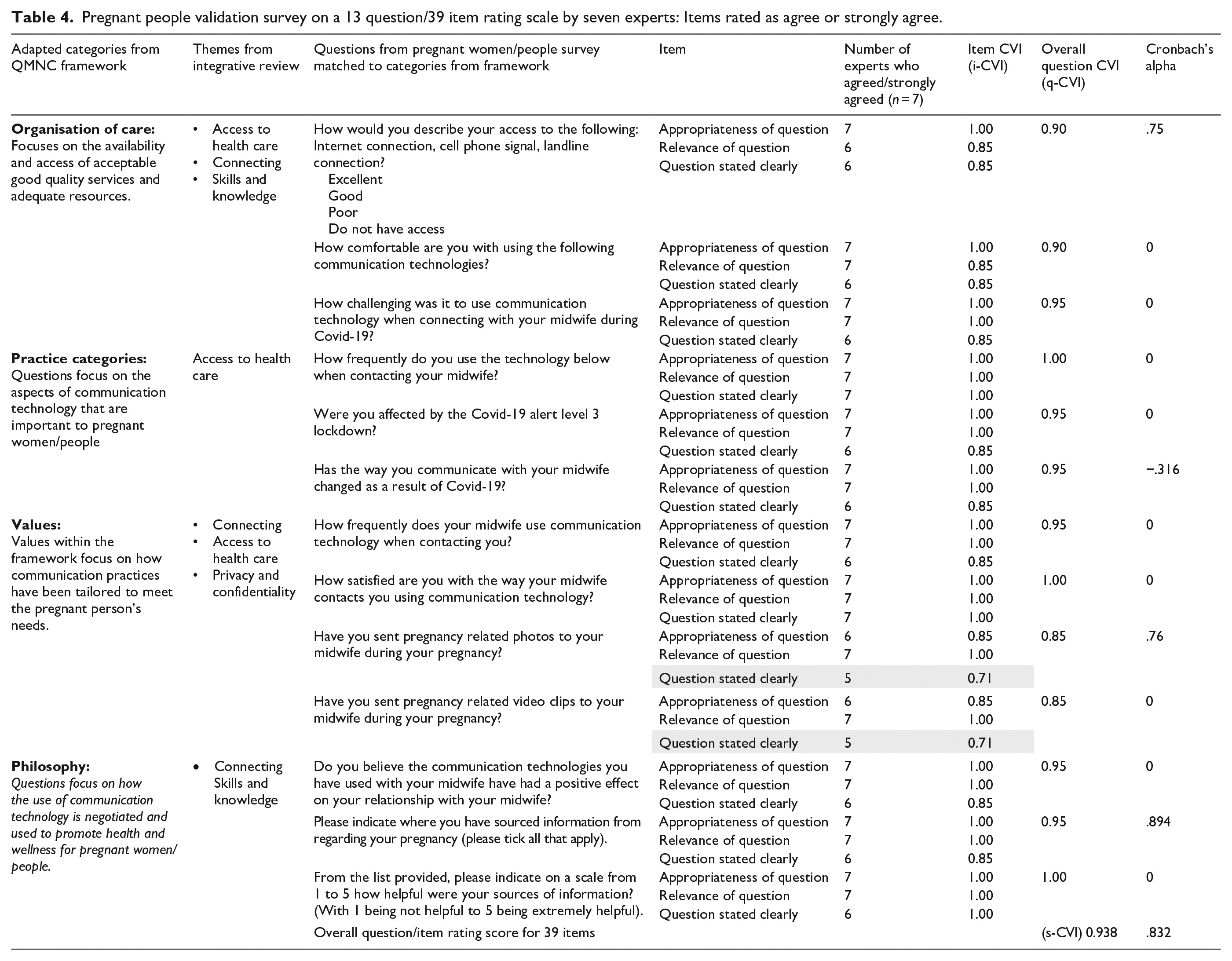

Table 4. Pregnant people validation survey on a 13 question/39 item rating scale by seven experts: Items rated as agree or strongly agree.

The responses from the EAG were initially recorded in Excel and then transposed into SPSS version 27 for analysis. The initial plan to assess reliability was to use Cronbach’s alpha coefficient (CAC), which would provide an indication of the average correlation among the items in the scale (Pallant, 2016). When CAC was applied to the surveys for LMC midwives and pregnant people respectively, the scoring was more difficult to interpret, possibly due to only having three items per question. Pallant (2016) suggests that scales with fewer than 10 items can produce low alpha values. In view of this, CVI was used in conjunction with CAC and comments from the EAG to provide a better overall analysis of results.
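For readers without access to SPSS, the standard formula for Cronbach’s alpha can be sketched as follows. This is an illustrative sketch only, not the SPSS procedure used in the study: it assumes each reviewer is treated as a case (row) and each item as a variable (column), and the function name and example ratings are hypothetical.

```python
from statistics import variance


def cronbach_alpha(scores):
    """Cronbach's alpha for a table with one row per reviewer and one column per item.

    alpha = k / (k - 1) * (1 - sum of item variances / variance of row totals)
    """
    k = len(scores[0])
    if k < 2:
        raise ValueError("alpha needs at least two items")
    # Sample variance of each item (column) across reviewers.
    item_variances = [variance(column) for column in zip(*scores)]
    # Sample variance of each reviewer's total score.
    total_variance = variance([sum(row) for row in scores])
    if total_variance == 0:
        raise ValueError("alpha is undefined when total scores do not vary")
    return (k / (k - 1)) * (1 - sum(item_variances) / total_variance)


# Hypothetical ratings: four reviewers scoring the three items of one question.
consistent = [[4, 4, 4], [3, 3, 3], [4, 4, 4], [3, 3, 3]]
inconsistent = [[4, 3, 4], [3, 4, 3], [4, 4, 3], [3, 3, 4]]
```

In the `consistent` example every reviewer gives the same rating to all three items, so the items move together perfectly. In the `inconsistent` example the ratings do not move together and the coefficient drops sharply, which illustrates why alpha calculated on only three items can be hard to interpret.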

Results

The EAG were asked to review 22 questions from the survey developed for LMC midwives (Table 3) and 13 questions for the survey developed for pregnant people (Table 4). For each question, the EAG were asked to evaluate the question based on (1) the appropriateness of the category it was classified in, (2) the relevance of the question and (3) whether the question was stated clearly.

The survey developed for LMC midwives included 22 questions, with three items per question, giving a total of 66 items. Seven of the 66 items scored under the acceptable i-CVI score of 0.78. The overall question (q-CVI) score reached the acceptable level of ⩾0.9 for all but three questions, where scores ranged from 0.76 to 0.85. The overall scale (s-CVI) score for all 66 items was 0.92. When Cronbach’s alpha coefficient was applied to the whole survey (66 items), an overall score of 0.78 was achieved.

The survey developed for pregnant people included 13 questions, with three items per question giving a total of 39 items. An item (i-CVI) score was given for each item, along with an overall question (q-CVI) score for each question. Only two items from a total of 39 items scored under the accepted score of 0.78. The overall q-CVI score for each of the questions reached the acceptable level of ⩾0.9 except for two questions, where a score of 0.85 was achieved. The overall scale (s-CVI) score for all 39 items was 0.938. When CAC was applied to the whole survey (39 items), an overall score of 0.83 was achieved. While Pallant (2016) suggests a score of 0.7 or above will provide reliability and validation for a question, due to the small number of items per question being measured, the accuracy is less clear.
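With a panel of seven reviewers, the cut-offs translate into simple counts, because an i-CVI can only take the values 0/7, 1/7, ... 7/7 and a q-CVI (the mean of three i-CVI scores) is the share of agreeing ratings out of 21. The short sketch below works this out; the function name is hypothetical and the code is offered only as an aid to reading the tables.

```python
def min_agreeing(n_ratings, threshold):
    """Smallest number of agree/strongly agree ratings that meets the threshold."""
    return next(k for k in range(n_ratings + 1) if k / n_ratings >= threshold)


# An item is rated by seven reviewers; a question collects 3 x 7 = 21 ratings.
item_minimum = min_agreeing(7, 0.78)       # ratings needed for an acceptable i-CVI
question_minimum = min_agreeing(21, 0.9)   # ratings needed for an acceptable q-CVI
```

Under these definitions an item reaches 0.78 only when at least six of the seven reviewers agree or strongly agree (5/7 is about 0.71, 6/7 is about 0.86), and a question reaches 0.9 only when at least 19 of its 21 ratings do so.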

Where scoring on CVI and CAC did not reach acceptable levels, comments and suggestions on wording made by the EAG enabled the question to be either reworded or removed altogether. For example, a question asked of pregnant people: Have you sent pregnancy related photos to your midwife during your pregnancy?

This required a yes or no response. If ‘yes’ was indicated, a following question would ask ‘how did your midwife respond’, with options provided such as ‘via text, phone call or not at all’. Following feedback from the EAG, it was decided to include a text box within the survey as this would enable participants to provide information if they had sent more than one pregnancy related photo.

Similarly, an initial question in the survey for midwives would require a yes or no response: Do you use any privacy protection software on your communication devices?

Following feedback and suggestions from the EAG, if ‘yes’ was indicated, participants would have an option to indicate in a text box any privacy protection measures that they used.

Discussion

Using a tool to validate questions for use in two online surveys by an expert advisory group of midwives was a first step in a larger mixed methods multiphase study which seeks to explore how communication technology is used between LMC midwives and pregnant people in Aotearoa New Zealand.

Polit and Beck (2006) discuss two concepts behind the development and validation of instruments: (1) the developer has conceptualised and analysed the items to be used in the instrument and (2) the evaluation of the relevance of the instrument using an expert panel. The conceptualisation of the two survey instruments was informed by findings from an integrative literature review undertaken as part of the multi-phase study (Wakelin et al., 2022) and then mapped against the midwifery evidence-informed Quality Maternal and Newborn Care framework (Renfrew et al., 2014). The two instruments were evaluated using Content Validity Index (CVI) and Cronbach’s alpha coefficient (CAC) scoring, alongside comments from the EAG. Using content validity index scoring to rate individual item scores (i-CVI) and overall question scores (q-CVI) provided an opportunity to review and revise the few questions which did not achieve the acceptable validity score. These questions required minor tweaking or removal and were considered alongside comments and suggestions from the EAG. The overall scale CVI score (s-CVI) for both instruments achieved the acceptable level of ⩾0.9 (Polit and Beck, 2006), giving confidence that overall the instrument design was valid.

Interpreting results using CAC was challenging, given that there were several items where the overall reliability score was 0. Difficulty in interpreting results using CAC has been reported when small numbers of items are used (Pallant, 2016; Taber, 2018). Each question had only three items, which may explain the inaccuracies when analysing results using CAC. Taber (2018) suggests that, for this reason, it is sometimes used alongside other tools, as was done in this case with CVI. Calculating the CVI score was straightforward and does not require statistical knowledge, which adds to the ease of interpreting results. Using CVI alongside comments from the EAG of midwives provided an additional layer in which to consider each question, and therefore added to the validity and reliability of the two survey instruments.

A challenge in the development of questions was around whether to include questions on Covid-19. While the analysis of the survey questions based on scoring from CVI and CAC would suggest the two surveys were valid and reliable, consideration was also given to comments made by the EAG. For example, there were initially a series of questions related to Covid-19.

For LMC midwives: Were you affected by the Covid-19 alert level 3 lockdown? and Has the way you communicate with antenatal clients changed as a result of Covid-19? For pregnant people: Were you affected by the Covid-19 alert level 3 lockdown? Has the way you communicated with your midwife changed as a result of Covid-19? How challenging was it to use communication technology when connecting with your midwife during Covid-19?

The comments and suggestions made by the EAG were that these questions could lead to confusion, given that New Zealand was not in a lockdown at the time the survey instrument was constructed, and therefore the questions may not be relevant.

Another question asked under the heading of Covid-19 was ‘how concerned are you that use of communication technology will lead to complaints?’ The i-CVI score was lower than 0.78 on both the appropriateness of the question and the clarity of the question. The q-CVI for the question also scored lower than 0.9. The EAG were able to make suggestions for either rewording or removing the question altogether. As a result of this feedback, the sub heading of Covid-19 was removed and instead the question was reworded to ‘How concerned are you that using communication technology with pregnant people may lead to complaints to Midwifery Council?’

Taking into consideration comments from the EAG provided greater insight into the scoring and was an important step in the design of the two online survey questions. Polit et al. (2007) suggest qualitative feedback can be indicative of content capability and commitment to the project. We would argue it is more than this. Increasingly looking to expertise within professions that have not traditionally been used to validate tools establishes a scholarly body of knowledge for those professions. The development of this tool was innovative in its approach of seeking the midwifery voice to validate tools and thus begin a scholarly body of knowledge. In this instance, an EAG of New Zealand midwives reviewed questions for two online surveys designed by New Zealand midwives for use within a New Zealand midwifery continuity model of care using a QMNC framework developed by leading midwifery researchers. The validation of the tool using Content Validity Index and Cronbach’s alpha coefficient scoring was further validated by comments from midwifery experts in survey question design and midwifery practice. This, in effect, provided an extra layer in the validation of survey instruments. It also builds a community of scholarship within midwifery (Newnham and Rothman, 2022). A similar approach was taken by Milne et al. (2016) in using cultural Indigenous experts in the development and validation of questions for an online survey which sought to measure nursing and midwifery academics’ awareness of cultural safety. This approach is equally applicable to other smaller allied health professions that seek to establish a scholarly body of knowledge within their respective professions. Newnham and Rothman (2022) argue that the quantification of midwifery research is limiting midwifery knowledge, and therefore research methods which seek to give voice to, and hold space for, qualitative expertise are for the betterment of the profession.
The feedback from the EAG enabled validation of the survey questions and provided a level of reliability and certainty that the questions would elicit appropriate responses. As the overall scoring of the survey instruments was considered within an acceptable range for validity and reliability, revalidation of the survey instruments was not sought from the expert advisory group.

Limitations

Analysis of results using CAC was at times difficult to interpret due to the small number of items within each question. It was not possible to seek feedback from pregnant people on the survey design; however, the EAG were able to offer constructive feedback with their expert midwifery knowledge and experience of survey instrument design. A further limitation is acknowledged in not seeking further validation from the EAG once changes to the wording of questions were undertaken. This information could have provided further evidence of the validity and reliability of items which had not initially met the acceptable scoring range.

Conclusion

Creating a tool for validating questions developed by midwives for an expert group of midwives recognises and values the knowledge and expertise from this professional group, and gives voice to midwifery, which traditionally has been marginalised by more patriarchal research paradigms. The findings from the EAG were analysed using Content Validity Index and Cronbach’s alpha coefficient scoring. This provided validation that the questions were appropriate, relevant and stated clearly. Further validation was provided by comments and feedback from the EAG of midwives which added an extra layer of confidence with tweaking the final rendition of the two online surveys. This provided the assurance needed to move to the data collection phase of the study to explore how communication technology is used between LMC midwives and pregnant people in Aotearoa New Zealand.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.