Abstract

In times of social distancing, we need to adapt some of our research methods. Methodologies in field research can be partly replaced by a combination of online methods, which will often include online interviews. Technologically, there are few limitations to conducting interviews online, but there are side effects to digital mediation: privacy-related concerns, technology hiccups, and physical distance may be barriers to disclosure for the interviewee. A survey among master’s students who had conducted interviews online confirmed these negative effects on the flow of interviews. Barriers to disclosure may be overcome by introducing familiarity and role-sharing. We tested the methodology of duo interviews via the online video-calling tool Skype. In duo interviews, two respondents who know each other interview each other in the absence of a researcher. This explorative study investigated the effects of digital mediation on the flow of interviews and possible mitigation by familiarity between interviewer and interviewee. The qualitative study’s respondents were mostly experienced interviewers who knew each other well and were also experienced in using online video-calling tools, which reduced the influence of variation in technical and interviewing skills. The focus of the study was on finding conditions for the use of the familiarity strategy in online interviews. While familiarity between interview participants was reported to positively affect disclosure, the use of this method is limited to specific interview purposes. An unexpected finding was that the absent researcher was, in fact, present in the interview due to the element of video recording. We list recommendations and conditions for conducting duo interviews over online video-calling tools, as well as limitations.

Introduction

While there is no replacement for the rich and detailed data collected in field research and qualitative interviews, circumstances sometimes do not permit an adequate collection of such data. The COVID-19 pandemic is a case in point. Field studies during the COVID-19 pandemic involving in-person contact were nearly impossible, as Howlett (2021: 2) noted, affecting both researchers and the societies and people they study, and moving both “away from the physical realm and into a digital reality.” Under circumstances of forced physical distance, classical interpretative face-to-face methodologies can be partly replaced by online research methodologies. A combination of online methods can indeed generate data that approximate insights from field research. For example, online surveys combined with in-depth online interviews, document analysis, and auto-ethnographic diaries can provide detailed insights into people’s attitudes, opinions, and experiences. In most such combinations of distanced alternatives, online in-depth interviews will be an important element. However, online interviews are mediated by digital video-conferencing tools, like Skype or Zoom, and such mediation may affect data collection in several ways.

Years before widespread confinement measures disrupted research designs, Deakin and Wakefield (2014) already affirmed that online video-calling tools provide opportunities to interview otherwise inaccessible respondents. Rowley (2012) puts forward that Skype interviews save traveling time, and if traveling becomes a non-issue, this might remove some researcher bias caused by practical considerations in the selection of respondents. Moreover, if interviewees can stay in the comfort of their own environment, look things up there, show artifacts or practices, and exchange digital documents, photos, and videos in real-time, this can be an important advantage of conducting interviews online (Bryman, 2016; Lo Iacono et al., 2016).

Nevertheless, some aspects of the interview will inevitably be lost when it is conducted online compared to a face-to-face interview. For instance, elements of the environment, such as background noise or the presence of others, will be different for both the interviewer and interviewee. Since the interviewer and interviewee are not in the same physical space, the interviewer has little control over the offline environment of the other and cannot always respond adequately to interference in the space of the interviewee. Information about the interviewee’s body language and natural behavior will also remain inaccessible to the interviewer (Howlett, 2021; Rowley, 2012). Finally, aside from effects caused by physical distance, there may be technological interference: online connections may be interrupted or cause delays, disrupting the flow of the conversation. Such interference or distortion of information can affect the quality of the interview, though the extent of the “damage” will naturally depend on the goals and context of the interview.

An online interview means there is physical distance between interviewer and interviewee, but it also creates emotional distance. The lack of intimacy that characterizes interviews over online video-calling tools can make it more difficult to discuss personal topics, and interviewees may be more reluctant to discuss sensitive issues (Lo Iacono et al., 2016; Seitz, 2016). One reason for reticence may be discomfort over the recording of digital video. Audio and even video recording of interviews is not uncommon, but the digital environment can lead to additional awareness and privacy concerns. As Boyd (2011) points out, networked technologies can lead digital data astray: digital information is persistent, easily replicable, searchable, and scalable, thus potentially reaching unintended audiences faster and more easily than offline audio recordings. Some respondents may be more attuned to the perceived risks of digital technologies than others, which may lead some to censor themselves.

To what extent do interferences and personal concerns hamper disclosure by interviewees in interviews mediated by video-calling technology? What are possible mitigation strategies? We attempted to answer these questions in two ways. We included several statements on online interviewing in a survey among student researchers in confinement who had been forced to adapt their planned research strategies to online methods. Subsequently, we invited more experienced researchers to share their expert views on the effects of digital mediation of interviews in a qualitative study with open questions. We then looked to a possible mitigation strategy: “duo interviews.”

The method of duo interviews can be helpful in overcoming obstacles to disclosure. In duo interviews, respondents are interviewed by a familiar person: a peer, a colleague, a fellow member of the same community. Also known as “reciprocal peer interviewing” (Porter et al., 2009) and similar to “peer interviewing” (Elliott et al., 2002), these types of interviews allow participants more space to express themselves, while the researcher withdraws into the background. Familiarity between interviewer and interviewee creates a more intimate atmosphere for the interview despite physical distance. In addition, the fact that both participants are interviewer as well as interviewee, alternating roles in the conversation, eliminates perceptions of social distance between the researcher and “the researched.” This may lower psychological barriers to disclosure (Porter et al., 2009).

In the explorative study presented here, we aimed to find out whether duo interviews can overcome some of the drawbacks of online video interviews. The focus of our research into the effects of technological mediation in online interviews was on two types of effects: (I) adverse effects on interviews caused by the digital mediation of online video interviewing tools, and (II) mitigating effects of familiarity between participants. In this paper, we will first discuss these possible effects in more detail, before explaining our methodology and presenting the findings. Finally, we will end with a set of recommendations for online duo interviews, an overview of their limitations, and a discussion of areas for further research.

Influences on the flow of online video interviews

Interviews cannot simply be taken online without consequences. While ethical issues for Skype interviews may be the same as for face-to-face interviews (Janghorban et al., 2014), ethical neutrality does not preclude technological or psychological effects. These effects may lead to sampling biases. The online environment will exclude respondents who have no access to the technologies required for online interviews, such as a computer, camera, microphone, or internet connection, or who lack the necessary skills to use these technologies. An online set-up may also attract a different type of respondent due to concerns about digital technologies among some members of the target group (Lo Iacono et al., 2016). These potential biases should prompt careful researchers to anticipate such effects in their research plans.

In this section, we will first discuss conventional approaches to (face-to-face) in-depth interviewing before we go into two aspects of online video interviews that may affect outcomes: technological mediation and digital video recording. We will conclude this section by discussing potentially mitigating effects of familiarity between interviewer and interviewee.

Conventional approaches to interviewing

Research interviews differ from conversations in that they are formalized exchanges. The researcher sets the topic and purposes, and what is communicated will be used as research data interpreted by the researcher, which means that (most of) what is said is “on the record” (Denscombe, 2014; Kvale, 2006). Interviews are particularly suited to research into complex, subtle phenomena that call for a detailed understanding (Denscombe, 2014; Rowley, 2012). For instance, interviews are the research method of choice when the researcher wants to explore motivations, opinions, and emotions in depth. Qualitative interviews typically emphasize the interviewee’s own point of view (Bryman, 2016).

Interviews are conventionally classified into three types: structured, semi-structured, and unstructured. Structured interviews are tightly controlled and similar to questionnaires, with limited response options for the interviewee. These are typically used in quantitative research designs. Qualitative semi-structured interviews leave more flexibility for both the interviewer and interviewee: there is a clear list of topics the interviewer wants to discuss, but the order of questions is less rigid, and the interviewee can answer more freely. In this way, the semi-structured interview leaves room for further elaboration of issues of interest. In unstructured interviews, the researcher is completely non-directive and lets respondents develop their own line of thought (Denscombe, 2014).

While interviews are formalized exchanges controlled by the interviewer, they are like conversations in the sense that participants interact and respond to each other’s perceived personalities. Social researchers have long been aware of the so-called “psychodynamic aspects of interviewing” (Laslett and Rapoport, 1975). This refers to the notion that the personalities and emotions of interviewers, as well as their rapport with interviewees, will influence the flow of interviews. Performing the roles of interviewer and interviewee creates social distance between interview participants. The interview format creates a relation of power in which one person “studies” the other or in which one person is “granted” time and access to privileged information from the other (Kvale, 2006; Lyons and Chipperfield, 2000). Nevertheless, feminist researchers prefer qualitative in-depth interviews because of the high level of rapport between interviewer and interviewee, reciprocity, and non-hierarchical relationship (Bryman, 2016).

Gender, hierarchical status, seniority, ethnic background, knowledgeability, and other characteristics of the participants will, however, influence role perceptions in interviews (Kvale, 2006; Meuser and Nagel, 2009; Mikecz, 2012; Welch et al., 2002). The interviewer’s personality is of particular importance when the interview goes into sensitive topics. If interviewees feel uncomfortable during the interview, they may give evasive answers or try to give socially desirable answers in line with the interviewer’s (perceived) expectations. Interviewers have an important role in this: they should be active listeners, flexible and non-judgmental, as (dis)agreement with participants might influence their responses (Bryman, 2016). Denscombe (2014) therefore advises social researchers to consider the social status, educational qualifications, and professional expertise of the interviewee, the age gap between interviewer and interviewee, and differences in gender or ethnicity between interviewer and interviewee. Researchers are conventionally advised to present themselves in a neutral fashion, cloaking their personality and opinions as much as possible. Through a neutral stance and appearance, the researcher should aim to instill trust and establish rapport with the interviewee.

Paradoxically, researchers are often advised against interviewing people with whom they are familiar. Interviewers may be disinclined to explore familiar interviewees’ responses in depth because they believe they already know a lot about their interviewees’ experiences, backgrounds, and motivations. This may lead them to ask fewer follow-up questions. This advice comes from a positivist perspective on interviewing, in which interviews are fact-finding missions leading to a neutral account of an objective state of affairs (Bogner and Menz, 2009). The problem with this perspective is that research interviews are often not fact-finding missions. Interviews, especially semi-structured and unstructured interviews, are mainly used to discuss interviewees’ opinions, experiences, values, and attitudes. If the researcher aims for less subjective, factual information, surveys are a more appropriate research method (Rowley, 2012). However, when it comes to opinions, experiences, values, or motivations, there is no objective truth.

Another reason why interviewing familiar people is discouraged is that sensitivities between people who know each other differ from sensitivities between people who do not know each other. People who know each other will consider the consequences of each interaction for future interactions and may therefore be less open about certain topics, or reluctant to press for more disclosure. On the other hand, being comfortable around the interviewer may also relax interviewees and lead them to open up more. In other words, some topics are better discussed with strangers, while other topics are better discussed with friends or family. Rather than a discouragement, this suggests a strategic approach to interviewing (un)familiar people, depending on the research topic.

That strategic approach fits within a constructionist perspective on interviewing. In this perspective, interview data are co-constructed by interviewer and interviewee, who together make sense of a research topic and narrative (Rowley, 2012). Bogner and Menz (2009), for example, argue that so-called situative effects—how the interview participants respond to each other’s personalities—should be used productively to co-construct the process of data collection. According to Blichfeldt and Heldbjerg (2011: 5), “researchers who refer to reality as being socially constructed through interaction among individuals and their life-worlds of subjective interpretations may generate especially valuable results by interviewing friends and/or acquaintances.” In their study, most respondents reported a lower sense of formality in being interviewed by someone they knew, which made them open up more about certain topics. Only two interviewees in that study suggested they would rather discuss specific topics with strangers. These findings align with the notion of a strategic approach to familiarity between interviewer and interviewee.

Strategies aimed at gaining interviewees’ trust to get them to open up more have been criticized as manipulative and ethically dubious (Kvale, 2006). Naturally, we would not endorse a strategy that aims to obtain personal information from respondents which they will later regret disclosing. Exploitative practices aside, we would argue that interviews work better for both parties if there is comfort and (justified) trust between interviewer and interviewee. Especially in situations of familiarity, when there is empathy and a long-term interest in maintaining good relations between interviewer and interviewee, trust can be established.

Our study focused on semi-structured or unstructured interviews, as these in particular may suffer from effects of physical and emotional distance between interviewer and interviewee. If the interview outcomes depend on the interviewee’s sense of comfort, technological mediation is an important element to consider in the research design.

Effects of technological mediation

Bryman (2016) identifies several advantages of online Skype interviews: more flexible scheduling, saving time and costs as there is no need to travel, and easy access to geographically dispersed audiences. Online interviews might also be more convenient for interviewees as they can participate in their own secure environments. Of course, there are also limitations. Obvious technological challenges of online video interviewing are presented by a lack of technology and a lack of digital skills. Interviewees need to have access to specific electronic devices, need to be able to install the software and adjust settings, and need to ensure a reliable internet connection. During the COVID-19 pandemic, as distancing measures were prolonged, more and more people acquired both the technologies and the skills to communicate over video-calling tools. As more of people’s lives became virtual, more people also became habituated to using digital platforms in their everyday lives (Howlett, 2021).

However, even when devices and software are available, and respondents are perfectly comfortable using online video-calling tools, there may be unintended effects. For instance, Schoenenberg et al. (2014) found that callers may misattribute the effects of a delay in a telephone conversation to the person at the other end. Delays in calls may lead to unintended interruptions, confusion, and longer response times. Interruptions may create an impression of impoliteness; confusion may lead people to believe that their conversational partner does not understand well, and longer response times may create an impression of hesitation. Callers experiencing delays may also feel that their conversational partners are not paying attention. The experiments conducted by Schoenenberg et al. (2014) show that during the first contact with someone over an online tool, people’s perception of the unfamiliar caller’s personality differs significantly if there is a delay on the line, compared to a non-delay situation. If there was a delay, callers perceived their counterparts to be less friendly, less active, less cheerful, less self-efficient, less achievement-striving, and less self-disciplined. This misattribution effect was particularly noticeable when interruptions were more frequent.

In another example of misattribution, Cramton (2001) found that different interpretations of silence are a major difficulty for teams collaborating online. Silence can mean many different things to different team members, from dissent to indifference or tacit consent. Interpretations of another’s silence may therefore also differ from that person’s intention. In Cramton’s (2001) study, silence due to technical problems was sometimes interpreted as intentional non-participation. Unfortunately, silence and facial expressions are often used as techniques by interviewers to prompt interviewees to elaborate on their answers. Misinterpretation of these techniques in online interviews can therefore have negative consequences.

As the examples of misattribution of technological issues to another’s personality make clear, technological mediation may have several effects on the flow of an online interview. These effects appear especially impactful in cases where the conversational partners do not know each other well. Specifically for interviews, misattribution effects may hamper the rapport and trust between interviewer and interviewee and increase perceptions of social distance. There may also be positive effects, in the sense that the interviewer may ask more questions if the interviewee appears hesitant, or the interviewee may provide more explanations when the interviewer does not seem to understand. Overall, though, it stands to reason that the quality of an interview will be affected if the interviewee perceives an unfamiliar interviewer as impolite, unfriendly, or indifferent.

Concerns related to online video communications

An advantage of using online video-calling tools for interviews is that recording is easy. Skype, the tool most discussed in previous research into online interviews, is one of a small number of video-calling tools that allow for recording. While video recording is easy, some interviewees may feel uncomfortable being on camera. For this reason, interviewees may be more reluctant to discuss sensitive issues over Skype (Howlett, 2021; Lo Iacono et al., 2016). Seitz (2016) reports that a student researcher who studied young women’s dating experiences encountered problems with Skype recording because some interviewees feared videos of the discussion could be shared with others.

Concerns related to online privacy mostly boil down to the fear that a recorded interview can end up with unintended third parties. This may happen because of incautious processing or storage by the researcher, or because of hacking. Digital video recording can compound such concerns because digital data are distributed and copied more easily and persist longer (Boyd, 2011). Apart from the researcher, other parties will have access to the recording despite stringent data protection measures. Lo Iacono et al. (2016) warn that it remains unclear how data are processed by Skype’s owner Microsoft and cloud service providers like Google. Additionally, intelligence services have been shown to monitor online communications, as was most notably disclosed by Edward Snowden in 2013.

Another effect of being on camera, not related to recording, has to do with interviewees’ self-conscious feelings. Seeing oneself on screen can be a distraction (Deakin and Wakefield, 2014). During the COVID-19 pandemic, when many forms of communication moved online, the distraction of seeing oneself during a video call did indeed become a popular theme. Interview participants may feel uncomfortable when reminded of their appearance on camera. In many video-conferencing tools, there is a constant reminder of being on camera in the user’s field of vision. Knowing that one is observed can also induce a “chilling effect” (Schauer, 1978). People act in a restricted manner when they know or feel that someone is watching them (the “Hawthorne effect”), a phenomenon that Reiman (1995) refers to as “an extrinsic loss of freedom.” People who feel observed will imagine viewing their behavior through the observer’s eyes and censor themselves to meet the norms they suppose their observer to have (Foucault, 1975). Consequently, interviewees in a recorded video call may not just imagine the viewpoint of the researcher but also feel scrutinized by unintended third parties, and therefore behave in what they consider to be socially desirable ways. Interviewers themselves may feel observed as well in an online video call and similarly be affected in the performance of their role.

In contrast, Howlett (2021) remarks about online interviewing during the pandemic: “Markedly, most online participants appeared to forget over time that the conversations were being recorded, however, those I met in-person were much more careful with their words, especially at the beginning of the conversations, and sometimes glanced at the [recording] device before speaking.” In a similar vein, Seitz (2016) suggests that introverts may be more likely to open up in an online interview, referring to Orchard and Fullwood (2010), who discuss several studies showing that introverts feel less inhibited when socializing online.

While concerns about privacy and appearance will not affect everyone and can be mitigated, they cannot be taken away entirely. Some people will decline to participate in an online video interview if it is recorded (Howlett, 2021). Some interviewees may censor themselves, and self-censorship is not easy to detect by an outside observer. However, even if privacy risks and related concerns cannot be discarded entirely, their effects may be diminished in a situation of familiarity.

Effects of familiarity

As we have seen, the comfort that participants will feel in an interview is affected by the relationship between interviewer and interviewee. Bogner and Menz (2009) argue that the debate about interactive effects that “distort” the interview rests on the idea that interviews uncover accurate and objective, context-independent information. In reality, there will always be interaction in an interview. Interactivity is one of the core characteristics of qualitative interviews, together with flexibility and a non-directive interview style. The interviewer tries to steer the conversation in as limited a way as possible and focuses on the interviewee’s story (Mortelmans, 2009). Situative effects can be used productively and even constitute the process of data collection in the interview. The interviewer is never invisible, and what is said, is said to him or her. The interview takes place on a social dimension in a specific interaction framework. The interviewee reacts to that and plays his or her role in it.

In light of these notions, familiarity between interviewer and interviewee can be both an advantage and a disadvantage, depending on what sort of information the interviewer wishes to co-construct with the interviewee. Being interviewed by someone they know may put interviewees more at ease because they are already familiar with the personality of their interviewer and know from experience how comfortable they feel talking to this person. As discussed above, when the interviewer and interviewee are unfamiliar with each other, they will tend to ascribe technical problems to the other’s personality. In addition, when the interview is recorded, interviewees may feel a sense of discomfort due to privacy concerns.

To prevent such discomfort, researchers studying sensitive personal subjects related to health issues or drug abuse have turned to “peer interviewing.” Peer interviewers are members of the same community as interviewees. Discussing sensitive issues with peers can lower psychological barriers to disclosure (e.g. Elliott et al., 2002; Kuebler and Hausser, 1997). On the other hand, when a person interviews a friend or close colleague, he or she is careful to make the experience pleasant, not only during the interview but also with a view to the future maintenance of the relationship. This human tendency can limit the topics that are deemed suitable for the interview. It can also lead to either the interviewer or the interviewee avoiding further questioning. In addition, the format of an interview can feel unnatural to friends or peers. Consequently, both interviewers and interviewees may prefer an objective, “distanced” interview relationship when the research topic may lead them to personal revelations (Kvale, 2006; Lyons and Chipperfield, 2000).

Taking these considerations into account, exploring whether duo interviews between people who know each other can mitigate adverse effects of digital mediation appears to be a valuable contribution to the evaluation of online research methodologies. The focus of such an exploration should be on the type of suitable interview topics, the extent to which participants will discuss them online, and in general on how familiarity between interviewer and interviewee influences the situation.

Methodology

Situation

Due to the abrupt introduction of COVID-19 pandemic measures in Belgium in the spring of 2020, all staff of a medium-sized university research center were forced to work from home, with little time for preparation. This sudden change interrupted several ongoing studies that involved meeting respondents in person and prompted a somewhat urgent search for alternative online methodologies. At the same time, university students planning thesis research were similarly required to change their research plans to replace in-person methodologies with online methodologies. At the end of the semester, a survey was held among bachelor’s and master’s students in social sciences to investigate the impact of the lockdown on their research. Subsequently, a qualitative study was held among experienced researchers to collect their experiences with online interviewing and to test duo interviews. This qualitative study involved experienced research staff of the research institute who had been working from home for several weeks using online video-conferencing tools for meetings and collaboration.

Recruitment

Students conducting thesis research were recruited through a short initial call for participants on the university’s course management system, Canvas, followed by three email reminders. A duration of 5 minutes was indicated for the survey, which consisted of various questions on online methodologies and challenges related to conducting research from home. A total of 340 students responded to the survey, 123 of whom answered the questions about conducting online interviews.

Participants for the qualitative study were recruited via a short, organization-wide invitation over email and a message on the professional communication platform Slack. In the invitation, the reason and goal of the study were set out, and an indication of the time investment was given (20 minutes). Next, interested participants were asked to find their own interview partners. Four pairs responded and were sent more instructions over email. These instructions included a request to set a time and date for the interview between the participants, a link to a webpage explaining the recording of Skype interviews, an explanation of why and how the recordings and other personal data would be handled, and a quick overview of the different steps in the study. Attached to the email was a consent form for review. All participants agreed to proceed with the study and informed the researcher of the time of their interviews.

Previous research with “reciprocal peer interviewers” or “privileged access interviewers” has emphasized the need to train such interviewers (e.g. Elliott et al., 2002; Kuebler and Hausser, 1997; Porter et al., 2009), but in this case, all but one of the participants were experienced research interviewers. These participants were also familiar with the methodological challenges of interviewing and qualitative research.

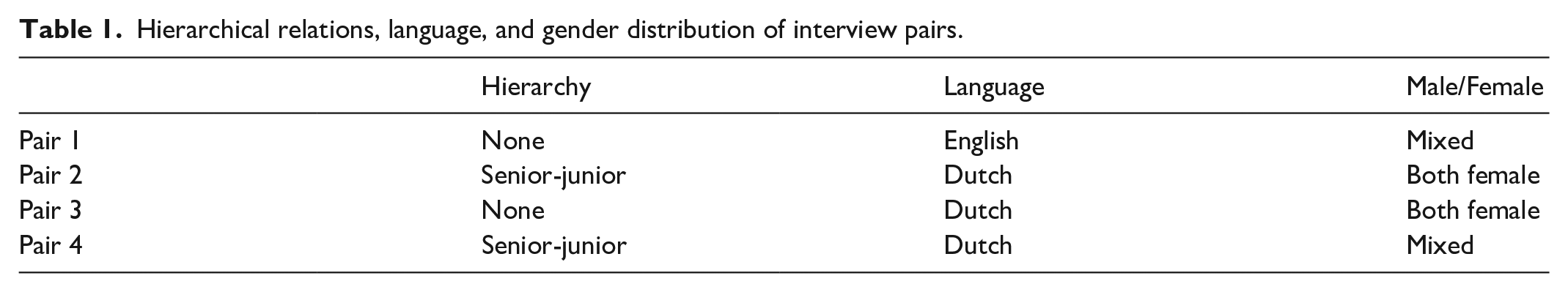

Of the four pairs, two were peers in a hierarchical sense, and two combined a senior and a junior researcher. In one of the latter pairs, the junior researcher reported directly to the senior researcher. For three of the pairs, both participants were native speakers of Dutch; in those cases, the interviews were conducted in Dutch to maintain the element of familiarity and comfort. Only two of the eight participants were male, and they were not in the same interview. Table 1 offers an overview.

Table 1. Hierarchical relations, language, and gender distribution of interview pairs.

Technical aspects

As the student survey took place after they had finished their research, no instructions had been given beforehand about the tools students should use for online interviews. Students who responded to the survey were not asked about the exact software they had used to conduct online interviews, so there may be variation in the video-calling tools they adopted. Research participants in the qualitative study were asked to use only Skype for their interviews. Using the same tool made it possible to compare experiences between the pairs. The reason to select Skype was also to allow for potential comparison with previous studies (Deakin and Wakefield, 2014; Janghorban et al., 2014; Lo Iacono et al., 2016; Seitz, 2016), which had focused on Skype, as this is one of the oldest and most established online video-calling tools available. In the months-long confinement period, several online collaboration tools had been used by the staff, including various chat applications and video-conferencing software. By the time the explorative study was conducted, everyone in the research institute had built up ample experience in video calls and similar technology. Many had implemented a home office environment with required technology comfortably in place.

Survey, interview questions, and evaluation form

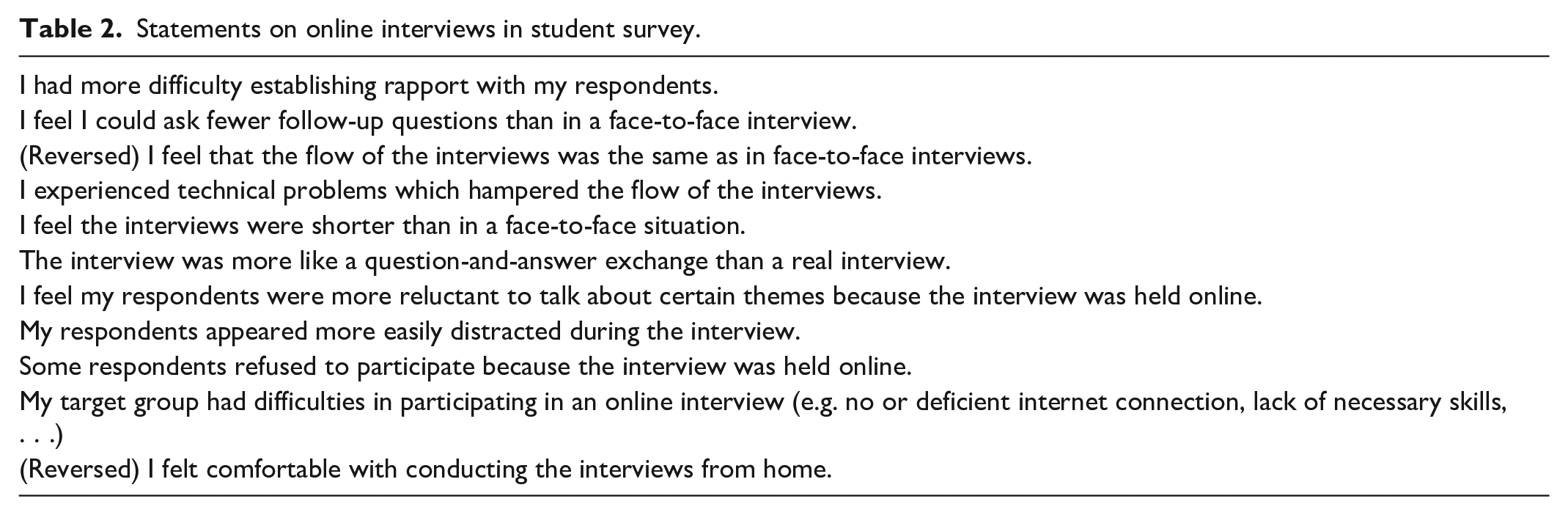

The student survey asked students to reflect on interviews that had already taken place. Within the survey, 11 statements were presented to the students about their experiences conducting interviews online (Table 2). Response alternatives were on a five-point Likert scale, from “completely disagree” to “completely agree.”

Table 2. Statements on online interviews in student survey.

The subsequent qualitative study with experienced researchers consisted of two parts: conducting online duo interviews first, then evaluating the experience and the effects of online video-calling interviews in general. The questions to be asked in the duo interviews were therefore not about conducting interviews. Instead, a different subject was found to test the appropriateness of duo interviews for different topics. To draw out elements of familiarity and appropriateness, the interview questions were intended to balance on the edge of professional and personal topics, since the respondents were each other’s colleagues. The questions were thematically centered around the participants’ work-life balance during the pandemic-induced confinement period.

The semi-structured interviews consisted of four questions which built up to more disclosure. The first three questions escalated from general politeness to asking about personal impressions. As for the fourth question, participants were instructed to devise one “provocative” question of their own, based on what their interview partners had told them in their answers to the prescribed questions. The provocation in the fourth question was specifically intended to draw out the element of appropriateness, to induce reflection in both interviewer and interviewee on what would be acceptable to ask and to answer. Participants were not explicitly instructed to ask follow-up questions, but as they were experienced interviewers, they did so out of habit.

The three prescribed interview questions were:

How are you doing today?

Can you walk me through what your working day looks like during this pandemic? Chronologically or thematically, whichever you prefer.

What are the three most important differences between this situation and how you worked before the pandemic?

Participants took turns in their roles, meaning participant A would first interview participant B, and subsequently, participant B would interview participant A.

After the interviews were done, participants were sent an evaluation form with open questions, which focused on their experiences and asked them to evaluate online video interviews in general and the duo interview format in particular. The evaluation form contained the following questions:

Did you experience any technical issues? If so, what were the issues?

Was the interview in any other way affected (positively or negatively) by the technology? If so, how?

Do you think there are topics people would not discuss over a Skype interview? If so, what kinds of topics?

What kinds of reasons could people have not to discuss things in this set-up?

In your opinion, what kinds of people would not like to participate in such a method?

Does it matter whether you are interviewed by a colleague, a superior, or a “neutral” outside interviewer? If so, how?

What other comments do you have about this methodology?

Data protection

Students participating in the survey were asked to consent to the processing of their personal data for the purposes of the survey. The survey among students was carried out using Qualtrics, which processes personal data as a joint controller with the research institution using its services.

Participants in the second study received a consent request form beforehand to review, explaining the data protection procedures and their rights, and providing contact details of the data protection officer and the researcher. They were also informed that they did not have to use their own Skype account for the interview; they could set up a special temporary account and delete it after the interview. Additionally, they were informed about government agencies potentially monitoring their communications. As for the recording, participants were asked to password-protect the recording and send it to the researcher over Firefox Send, 7 a file transfer service that offered end-to-end encryption. The password was sent to the researcher over another channel. The videos were stored on the researcher’s password-protected laptop and eventually transcribed, at which point the video files were deleted.

The participants were not only familiar with each other but also with the researcher carrying out the study, who was a colleague of theirs. The researcher’s reputation as a privacy-conscious individual may have eased privacy concerns but also heightened privacy awareness among participants.

Analysis

The analysis of the 11 statements on online interviews in the student survey was part of a broader analysis of the complete survey. Since there were no follow-up questions, the results consisted only of the ordinal responses to the statements on 5-point Likert scales. Students were not asked to estimate their technical skills or experience, and they were all at the end of their study programs. We therefore concluded that there were no independent variables relevant for causal analysis. Advanced statistical analysis was deemed inappropriate due to the relatively low number of respondents (n = 123).
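A descriptive treatment of ordinal Likert responses of the kind described above can be sketched as follows. This is a minimal illustration, not the procedure actually used in the study: the function name `describe` and the example answers are hypothetical, and the sketch assumes that each response is stored as one of the five scale labels.

```python
from collections import Counter
from statistics import median_low

# The five response alternatives, ordered from lowest to highest agreement.
SCALE = ["completely disagree", "disagree", "neutral", "agree", "completely agree"]


def describe(responses):
    """Return the percentage per scale point and the median category."""
    counts = Counter(responses)
    n = len(responses)
    percentages = {label: round(100 * counts[label] / n, 1) for label in SCALE}
    # Ordinal data: use the rank of each label and take the lower median,
    # so the result is always an existing category rather than an average.
    ranks = [SCALE.index(r) for r in responses]
    median_label = SCALE[median_low(ranks)]
    return percentages, median_label


# Hypothetical answers, invented purely for illustration (not study data).
example = [
    "agree", "agree", "neutral", "disagree", "completely agree",
    "agree", "disagree", "neutral", "agree", "completely disagree",
]
percentages, median_label = describe(example)
print(percentages)
print(median_label)
```

Reporting frequencies and a median category, rather than means or regression coefficients, keeps the summary within what ordinal data and a small sample can support.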

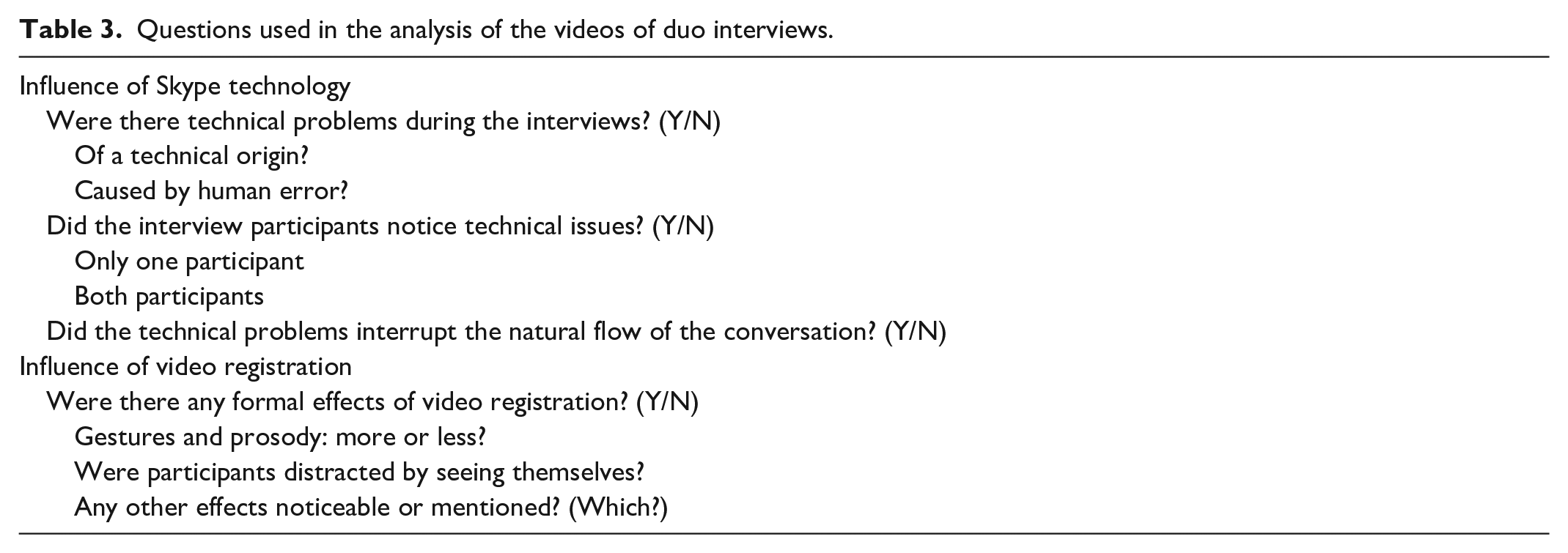

The analysis of the qualitative study consisted of two steps: (I) an analysis of the videos of the interviews between the experts and (II) an analysis of the answers to the open questions in the evaluation forms given to the experts. Two researchers independently analyzed the interview videos and the answers to the open questions in the evaluation forms. They subsequently evaluated the methodology together. The analysis of the videos in the first step consisted of answering the questions in Table 3. The question about “formal effects” was phrased to focus the analysts’ attention not on the content of the discussion but on what was visible in the video: Was there any effect on the form of the interview?

Table 3. Questions used in the analysis of the videos of duo interviews.

Naturally, the participants sometimes also commented during their interviews on aspects that were part of the analysis in the second step. For instance, one participant remarked during the interview, when asked about differences in working before and during the lockdown: “When I discuss things or issues with people via online calls, I don’t know, for me, it is not the same as seeing someone in person. I don’t have a feeling like we can easily solve issues.” These remarks were also noted down and added to the analysis of the experts’ evaluation.

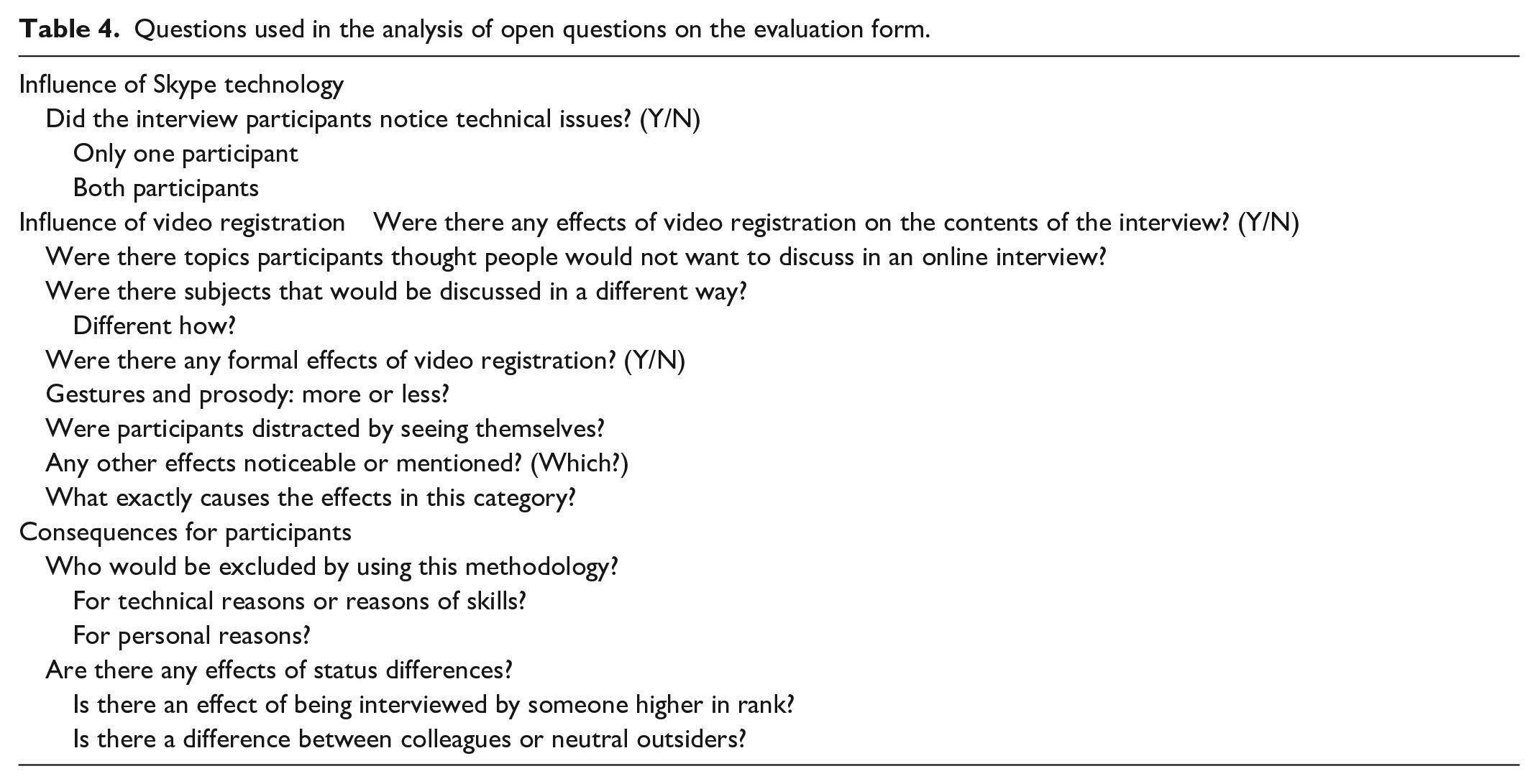

The analysis of the open questions in the evaluation forms consisted of finding direct remarks or elements that answered the questions in Table 4.

Table 4. Questions used in the analysis of open questions on the evaluation form.

Results

Technical issues

Student survey

A majority of the 123 respondents in the student survey (59.3%) indicated that they had experienced technical problems that hampered their interviews. In response to the statement “My target group had difficulties in participating in an online interview (e.g. no or deficient internet connection, lack of required skills, . . .),” 49.6% disagreed or completely disagreed, while 20.3% agreed there were difficulties on the part of the respondents. 26.8% gave a neutral answer on the 5-point scale, with the remaining 3.3% completely agreeing with respondent difficulties. In other words, while the majority experienced technical problems in conducting online interviews, for only some student researchers did those problems stem from issues on the respondents’ side.

The survey also contained a statement about interviewees being distracted. The most frequent response was disagreement (38.2%), while 21.1% were neutral on the subject, and 29.3% of the students agreed that their interviewees appeared easily distracted. Equal percentages of students completely agreed or completely disagreed (5.7%).

Duo interviews

In the duo interview study, one aspect of technological mediation that immediately became apparent in the videos was that all participants tended to look away from the screen while talking, in a marked deviation from face-to-face conversations. Why this occurred would require further investigation, since no participant commented on it. Other than that, participants did not appear to be distracted by seeing themselves (though two participants commented about this in the evaluation, see below). In two interviews, participants appeared to be gesticulating intentionally in front of the camera, while one participant mentioned on the evaluation form having gesticulated less.

All videos of the duo interviews showed slight delays, with one video showing significant disruptions in the form of “frozen screens” and pixelated images. However, participants ignored the delays in their conversations. In the cases with only slight delays, these did not even appear to be noticed in the course of the conversation.

The answers to the evaluation forms showed that the two participants in the significantly disrupted interview did notice delays and technical issues. One of these two participants remarked: “The unstable connection impacts the flow of the conversation. Trying to repeat words over again to make sure the message comes across.” The same participant also mentioned during the interview:

“Normally I feel that the quality of calls is lower than the quality of in-person meetings because it is difficult to observe what the other person is doing. And what I do notice very much is, because I know the people I work with very well, that this is not a problem, because I can assess from their voices and behavior, so to say, what their situation is. If I were new, in a new situation, this would have been unpleasant, because then you have no idea.” (Translated from Dutch.)

In other words, while the participant did notice the technical disruption to the flow of the interview, she stated that this does not hamper conversations with peers she knows.

Several other participants also noticed slight disruptions, but “not more than usual.” Possibly, most participants ignored or did not even notice the delays that the videos showed due to their extensive experience with online video calls. One of the participants remarked during the interview: “I am so used to talking through web conference systems these days,” while another participant mentioned feeling “drained out because of video calls” on the evaluation form. Two participants remarked upon the effect of seeing themselves on camera (“you are also more aware of yourself with this method because you literally see yourself”).

Digital mediation

Student survey

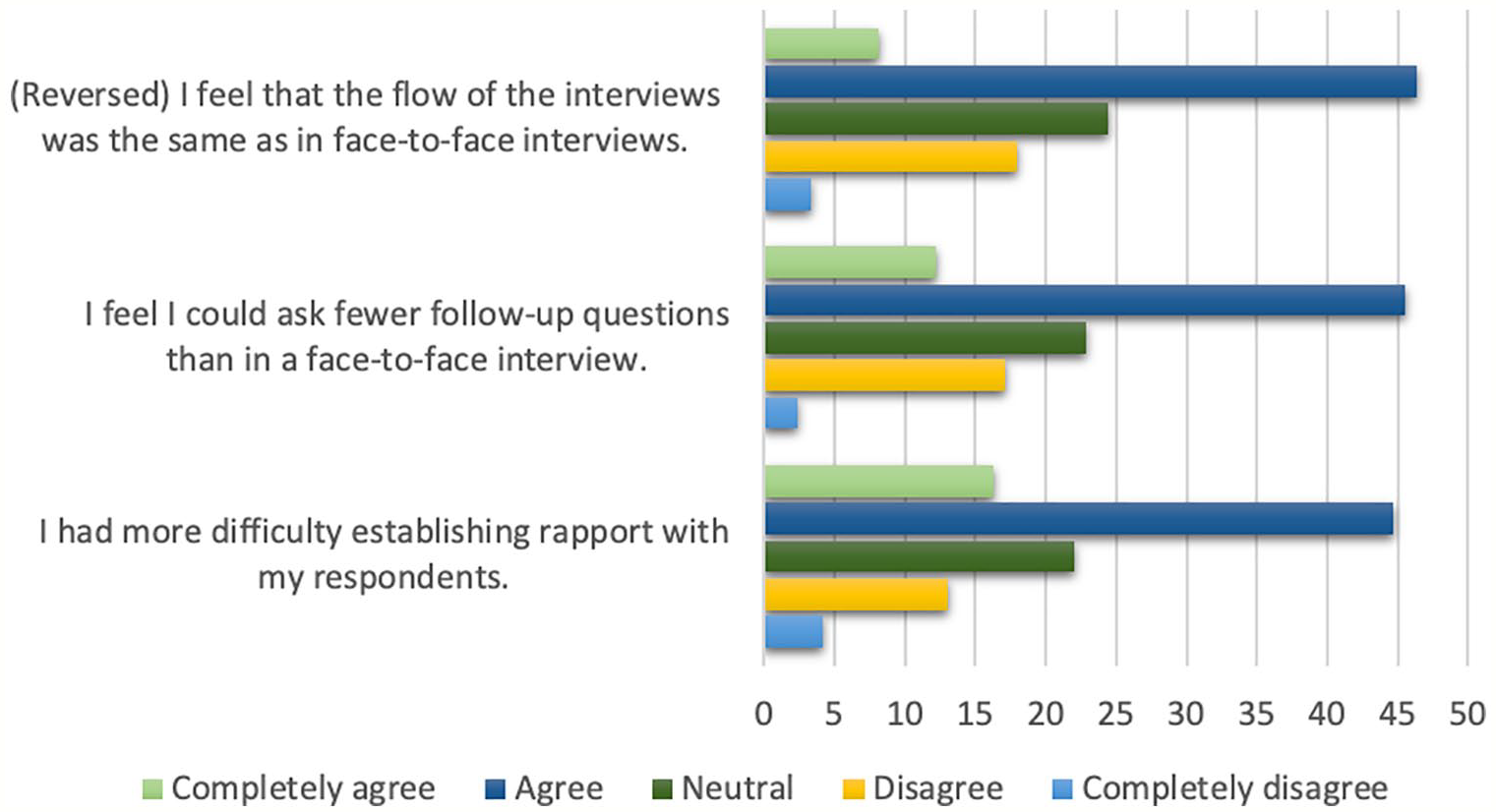

In the student survey, the responses to three statements stood out (Figure 1).

Figure 1. Responses to statements in student survey about the flow of interviews, follow-up questions and interviewer-interviewee rapport.

Clear majorities of students felt that the flow of online interviews was not the same as for face-to-face interviews, they could ask fewer follow-up questions than in face-to-face interviews, and they had more difficulty establishing rapport with their respondents. There could be several explanations for the perceived lack of rapport and the perceived lack of opportunity for follow-up questions. The context of a pandemic, for example, may also have had its effects on interviewees’ willingness to open up.

A slight majority of the students felt that the online interviews were more like a question-and-answer exchange than an actual interview: 39.8% agreed with that statement and 10.6% completely agreed, while about a quarter of the students were neutral (24.4%), and a similar segment disagreed (20.3%) or completely disagreed (4.9%). Most students also felt that the interviews were shorter than they might have been in a face-to-face setting: 42.3% agreed and 12.2% completely agreed (28.5% disagreed, 2.4% completely disagreed). Did the student researchers feel that holding the interviews online led to a sampling bias? On balance, no. Nearly half of the students disagreed (35%) or completely disagreed (13.8%) with a statement about potential interviewees refusing to participate because the interviews were held online (22.8% agreed and 9.8% completely agreed; 18.7% of the students did not know or were neutral). The largest group of students (39%) disagreed or completely disagreed with the statement that their interviewees were more reluctant to talk about certain themes because the interview was held online; 35.8% agreed or completely agreed, while 25.2% were neutral.
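The grouping of five-point responses into broader bands, as done in the paragraph above, can be sketched as follows. The percentages are those reported for the question-and-answer statement; the function name `collapse` and the 50% threshold check are illustrative assumptions rather than part of the original analysis.

```python
# Reported distribution (percent of the 123 respondents) for the statement
# that online interviews felt more like a question-and-answer exchange.
reported = {
    "completely disagree": 4.9,
    "disagree": 20.3,
    "neutral": 24.4,
    "agree": 39.8,
    "completely agree": 10.6,
}


def collapse(dist):
    """Collapse a five-point Likert distribution into three bands."""
    return {
        "disagree": round(dist["completely disagree"] + dist["disagree"], 1),
        "neutral": round(dist["neutral"], 1),
        "agree": round(dist["agree"] + dist["completely agree"], 1),
    }


collapsed = collapse(reported)
# The five reported shares should add up to (roughly) 100 percent.
total = round(sum(reported.values()), 1)
# A band is a majority only if it exceeds half of all respondents.
is_majority = collapsed["agree"] > 50.0
print(collapsed, total, is_majority)
```

Collapsing the scale makes it easy to see whether a band exceeds half of the respondents or is merely the largest group, a distinction that matters when a neutral share is sizeable.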

Duo interviews

For the duo interviews, the duration was more or less given beforehand (20 minutes, though most ran up to 30 minutes, including good-byes). Since the participants in the duo interviews were given only four questions to ask, the duration and intensity of the duo interviews were not comparable to the interviews conducted by the students (30% of whom were conducting in-depth interviews). One expert interviewer complained during the interview that it was not clear from the instructions whether follow-up questions could be asked, and then went ahead and asked them. The other three pairs simply asked follow-up questions. Two pairs recapped questions to make sure that all had been answered.

Several participants acknowledged the researcher’s presence-in-absence during the interview. We should note here that the researcher was also a colleague and peer of the participants. In three out of four interviews, participants greeted the researcher (“Weird, it feels a bit like [the researcher] is here in the room with us [. . .] Hello!”) or addressed her during the interview. Some made remarks and comments about the methodology during the interview, clearly displaying an awareness that there were not just two active participants in the interview, but also an observer. For example, one participant remarked: “[The researcher] also knows a lot about my personal life now.”

The second question on the evaluation form was whether the interview was affected by the technology. One participant answered: “I do not think so. I mean, it’s good that we could do it online, but I was not happy with the fact that I have to record it via Skype. I despise Skype and I never use it unless absolutely no other means is available/possible. [. . .] However, I don’t think that at that moment that affected my behavior or my conversation, I completely disregard my annoyance with the tool.” The other participants also answered that they did not think the digital tool affected the interview in other than technical ways. One other participant mentioned: “As with all video calls, it is useful to first have some social talk, because in online meetings there is the tendency to immediately discuss the agenda topics.”

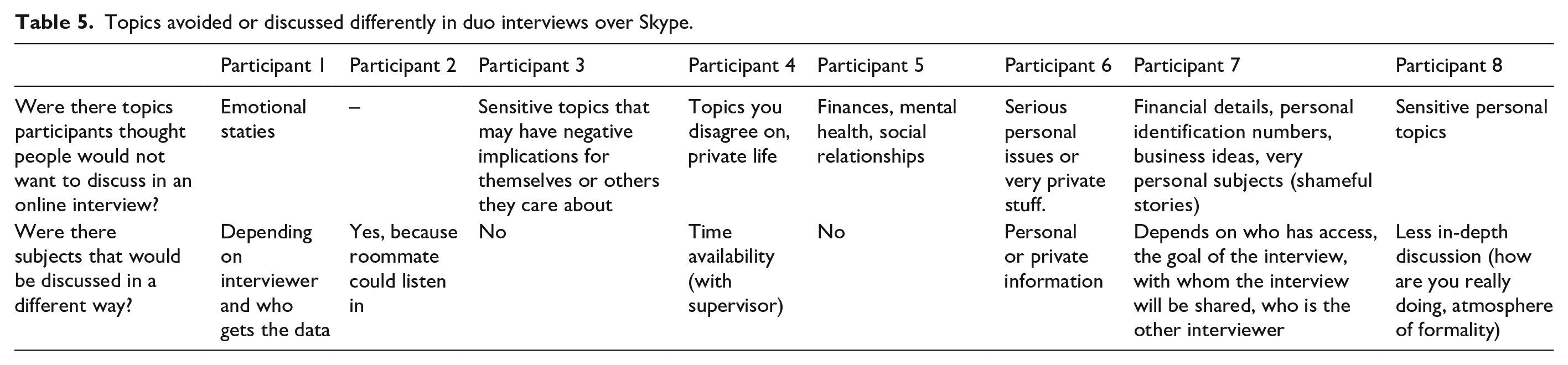

The third question in the evaluation form was about topics people might not want to discuss over online video calls. Table 5 shows an overview of the responses. The responses provided not only a list of topics people might not want to discuss but also topics that people may discuss differently.

Table 5. Topics avoided or discussed differently in duo interviews over Skype.

The video recordings showed one participant noticeably retracting a potentially sensitive, personal question during the interview, saying: “How are the relations with your children? No, it should be work-related.”

Not all participants thought they could speak less freely during an online interview. One participant mentioned a tendency to talk more freely in online video calls: “My personal experience is that I tend to share more through technology. Not being over-stimulated by non-verbal communication might lead to more time, hence less pressure, and more thoughtful enunciation of ideas. [. . .] But I have to add, that it probably works best with people you already know.”

Question four in the evaluation form then asked which reasons people might have to withhold information during an online interview. In the responses to this question, the potential influence of digital video recording became apparent. Seven out of eight participants specifically mentioned the fact that the interview was recorded as a reason to withhold information, making remarks such as: “it can be recorded and used against you,” “there is a trail of this information now,” and “I feel a different kind of invasiveness between video-recording versus audio-recording.” Some participants mentioned security or privacy reasons in more general terms. Others referred to the recording ending up with unintended third parties. One participant also mentioned that digital videos can be manipulated easily.

Other reasons the participants mentioned for withholding information:

the possible presence of other people in the room during the interview (“my roommate can listen in on the conversation, I am aware of this and slightly change my answers”),

not wanting to disclose personal information in general,

not wanting to disclose personal information to colleagues,

a lack of trust in the platform itself (in this case, Skype) or an intermediate platform,

not liking the interview task,

not being able to read the body language of the interviewer (“[when interviews are face-to-face] the interviewee can use visual cues and intonation to assess the intent of the interviewer”), and

not feeling connected with the interviewer (“the distance, not feeling ‘literally’ connected to the person could influence the interaction (positively as well as negatively)”).

The question on what kinds of participants would be excluded by using this methodology (question 5) yielded answers in three categories:

People with no access to the required technology or lacking the required digital skills,

People who avoid technology or being recorded for privacy or safety reasons,

People whose professions or status preclude participating in a recorded interview.

The first category refers to technological mediation, while the latter two categories refer to the recording aspect of the methodology.

Notably, no participant had created a separate Skype account just for the study; they used the personal accounts they already had.

Familiarity and status differences

Since familiarity was not an explicit element in the interviews conducted by students, there were no statements in the survey on this topic. However, there was a statement about their sense of comfort in conducting interviews from home. Almost half of the students (48.8%) felt comfortable with that situation; only 12.2% disagreed, and 4.9% completely disagreed.

As for the duo interviews, all participants agreed that there is an effect of status differences in an interview. Naturally, given this study’s context, power asymmetries on the work floor were top of mind. A participant who was interviewed by a superior remarked: “I found it harder to admit that I have not been as efficient in working to [the interviewer] compared to other more equal colleagues.” A participant who was interviewed by someone in an equal position answered: “With superiors I think you want to present yourself in a professional positive light and would probably not be as honest or open about any negative impacts on your work.” A third participant highlighted another relation of power, the relationship between researcher and researched, again referring to the absent researcher: “Type of (power) relationship is important but also other factors might play a role in this, the fact that you know that another colleague will watch the video for example.”

Participants were remarkably consistent in their answers about being interviewed by a familiar person, with all but one participant responding positively. Only one participant did not discuss the element of being interviewed by a close colleague at all. The others all mentioned positive effects, for example: “Because I was talking with a person that I’m close with, that I can feel relaxed with, I was more free and playful,” and “I can imagine that people could feel uncomfortable being asked personal questions over a digital medium. However, because I know [my interview partner], I didn’t experience any of that.” One participant gave a more nuanced answer: “With colleagues, it depends what category of colleague: With [my interview partner] I feel quite close and would discuss things more informally and in line to my real feelings.”

In comparison, being interviewed by a neutral outsider was discussed in less favorable terms. “Neutral outsiders, [I] keep my conversations more neutral. This does not mean that they are more truthful, they might be just more factual. With a colleague or friend, I would probably elaborate too much on details, but that can make the data richer,” was the response of one participant, while another answered: “With a neutral outside interviewer I would also act more formal because you don’t have an established relationship.” One participant indicated possible adverse effects: “In the situation of a ‘neutral’ person, I assume that the interviewee has no to limited knowledge about this person. Therefore, possibly more critical observation by the interviewee is required to assess the intention of the interviewer.”

Two participants suggested a strategic approach to familiarity between interview partners. One mentioned: “It would be good to think if it’s smart to limit who can interview whom – in some situations two close colleagues interviewing each other might be better, in others being interviewed by a colleague you’re not that close with might be more beneficial.” The other remarked that it might be good for some research questions, but was “not sure if it’s an adequate method for studies on actual behavior or attitudes.”

Other aspects of duo interviews

The last question on the evaluation form for the participants in the duo interviews allowed them to express their ideas about other aspects of the methodology that had not been addressed yet. During the duo interviews, participants also sometimes made remarks about the methodology. For instance, one participant commented during the interview about working online: “If you sometimes want to discuss more difficult things, then you lack physical interaction.” One participant suggested an alternative research design to prevent the interview partners from knowing each other’s questions beforehand: “Would it maybe also work if each partner has a specific question, ask it and then reply also with how they would reply to it from their own perspective? Just to add a bit more surprise.” There was also a comment on the data protection measures: “I found the password protection option for zip-files surprisingly complicated [on an Apple MacBook] and hope that in the future it becomes easier to secure your files.”

Discussion

In summary, the main findings of both studies combined were:

Technical issues

Technical problems that hamper online interviews do still occur and will often not be due to issues on the interviewee’s part alone. However, when interview participants are experienced in using online tools, they tend to disregard delays and technical hiccups. When participants (I) know each other well and (II) are skilled in using online video calling tools, there appears to be little negative effect of minor technical issues on the flow of the interview or the rapport between the interview partners.

Interviewers and interviewees with experience in using online tools do not report being, or appear to be, distracted in video calls, though the duo interview participants looked away from the screen, which may be a coping strategy to avoid being distracted by seeing oneself on screen. Two of them did mention being more aware of themselves because they saw themselves on screen. Further research will be needed on this point, as it concerns not only the interviewer but also the interviewee.

The social distancing measures imposed during the COVID-19 pandemic may have lowered barriers to online video interviewing, as many people have gained skills and become more accustomed to working with online video-calling tools.

Digital mediation

Compared to face-to-face interviews, students found that:

the flow of the interviews was not the same;

they could ask fewer follow-up questions;

they had more difficulty establishing rapport with interviewees;

interviews were more like a question-and-answer exchange than an actual interview;

interviews were shorter.

Students and experienced interviewers disagreed on the exclusion of potential respondents or the types of topics that could be discussed. While students saw no issues, the experienced interviewers responded that:

three types of respondents may opt out of this specific type of interview: people with no access to the required technology or lacking the necessary skills, people who avoid technology or being recorded for privacy or safety reasons, or people whose professions or status preclude recorded interviews;

interviewees may censor themselves on a list of topics (see Table 5), from emotions and “very private stuff” to novel business ideas;

concerns about privacy and data protection are the reasons mentioned most often for why people may censor themselves during recorded online video interviews.

Remarkably, to a direct question about the digital mediation of video-calling tools on interviews, all experienced interviewers answered that there were no (major) effects.

A notable finding for the method of duo interviews is that the absent researcher is still a passive participant in the interview. The active participants in this study were clearly aware of having an audience. Daynes and Williams (2018: 81) compare this situation to quantum physics: the presence of an observer changes what she or he is observing. Unfortunately, the element of the influence of the observer-researcher on the recorded interview was not addressed in the evaluation form for the experienced interviewers but evidently should be studied in more detail.

Familiarity and status differences

Participants in duo interviews reported:

being more reluctant to disclose information on certain topics to superiors;

feeling more relaxed and free being interviewed by a familiar person, and more amenable to talking about personal experiences in detail;

it would be more difficult for a neutral outsider to establish rapport;

people who know each other may avoid topics they know the other may disagree on.

As some participants pointed out, this method would have to be used judiciously, in a strategic consideration of limitations regarding topics and participants.

Given that the aspects of familiarity and status turn out to have a substantial effect on willingness to disclose personal details, researchers using the method of duo interviews may want to consider creating a short profile of the type of interview partner participants should look for to have more control over the levels of familiarity and status. To allow for the development of such profiles, the evaluation form should contain more extensive questioning about the bond between peer interviewers.

In addition, while these participants were mostly experienced interviewers and users of video calling tools, interview training would have to be provided for inexperienced participants, which may also have to include some training in the use of video calling software and elements of data protection. Only one participant in this study commented on password protection in the evaluation forms, but the researcher also received questions from the other participants after the interviews about password protection. No other remarks or comments were made about these elements of data protection in the evaluation. However, in the discussion between the researchers about the analysis, it was noted that the extensive information about data protection measures in the recruitment might have heightened participants’ sensitivity toward privacy and security.

Another aspect that needs to be further explored is whether participants feel more comfortable with the situation as the interview progresses. For instance, one participant remarked: “As participant you notice a difference because of the ‘actual’ recording [. . .] there is a sign that constantly reminds you of the fact it is recording. However, after a few minutes, you already feel more at ease.”

Conclusion

The advantages of online video interviews are clear, especially in overcoming barriers of distance and in saving time and money. Using online video calling tools may expand the potential sample of participants but also constrict it, as the list of potentially excluded categories of participants in the discussion demonstrates. Online interviews also might make it difficult to create rapport between interviewer and interviewee when they are unfamiliar with each other. The lack of face-to-face contact, misinterpretation of pauses, interruptions and technical delays, and self-consciousness and privacy concerns influence interviewees’ feelings of comfort. Online video interviews may also lead interviewees to be more cautious when discussing certain topics.

Interviewers who wish to counter these issues can use duo interviews to collect more extensive information and details about people’s personal experiences and viewpoints. This approach puts interviewees at ease and lowers barriers to disclosure. However, several limitations of duo interviews have come up: participants need to be trained in interviewing skills and in using video-calling tools, and participants may avoid topics they know the other to disagree on. In general, certain topics may be more easily discussed with strangers. In addition, follow-up interviews by the researcher may be needed for additional questions. After all, in duo interviews by peers, the researcher has no influence over follow-up questions. This drawback can also be countered by explaining the research goals to the interview participants, if possible.

Finally, while being interviewed by a familiar person does lower barriers to disclosure, duo interviews do not eliminate the effects of a relation of power between researcher and researched, as interviewees are aware of the researcher’s gaze. This might even lead to a reactive effect, induced by the researcher’s virtual presence. In this study, the fact that the researcher was also familiar to the interview participants might have increased privacy concerns, as the participants were aware of disclosing personal information to a particular person. Further research will have to explore whether this effect is decreased when the researcher is an unknown observer. In any case, the power relationship between researcher and researched is by no means resolved in duo interviews between peers.

To allow for a more strategic approach to familiarity between interviewer and interviewee, more research will be needed on appropriate types of topics. Further research would also be welcome on different levels of familiarity (between the duo-interview participants and the researcher), the influence of the passive presence of the researcher, the influence of data protection information on sensitivity to privacy and security aspects, and a potential increase of informality or ease with the situation as the interview progresses.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.