Abstract

Background

If screening participants do not trust computerized decision-making, screening participation may be affected by the introduction of such methods.

Purpose

To survey breast cancer screening participants’ attitudes towards potential future uses of computerization.

Material and Methods

A survey was constructed. Women in a breast cancer screening program were invited via the final report letter to participate. Data were collected from February 2018 to March 2019 and 2196 surveys were completed. Questions asked participants to rate propositions using Likert scales. Data analysis was done using χ2 and logistic regression tests.

Results

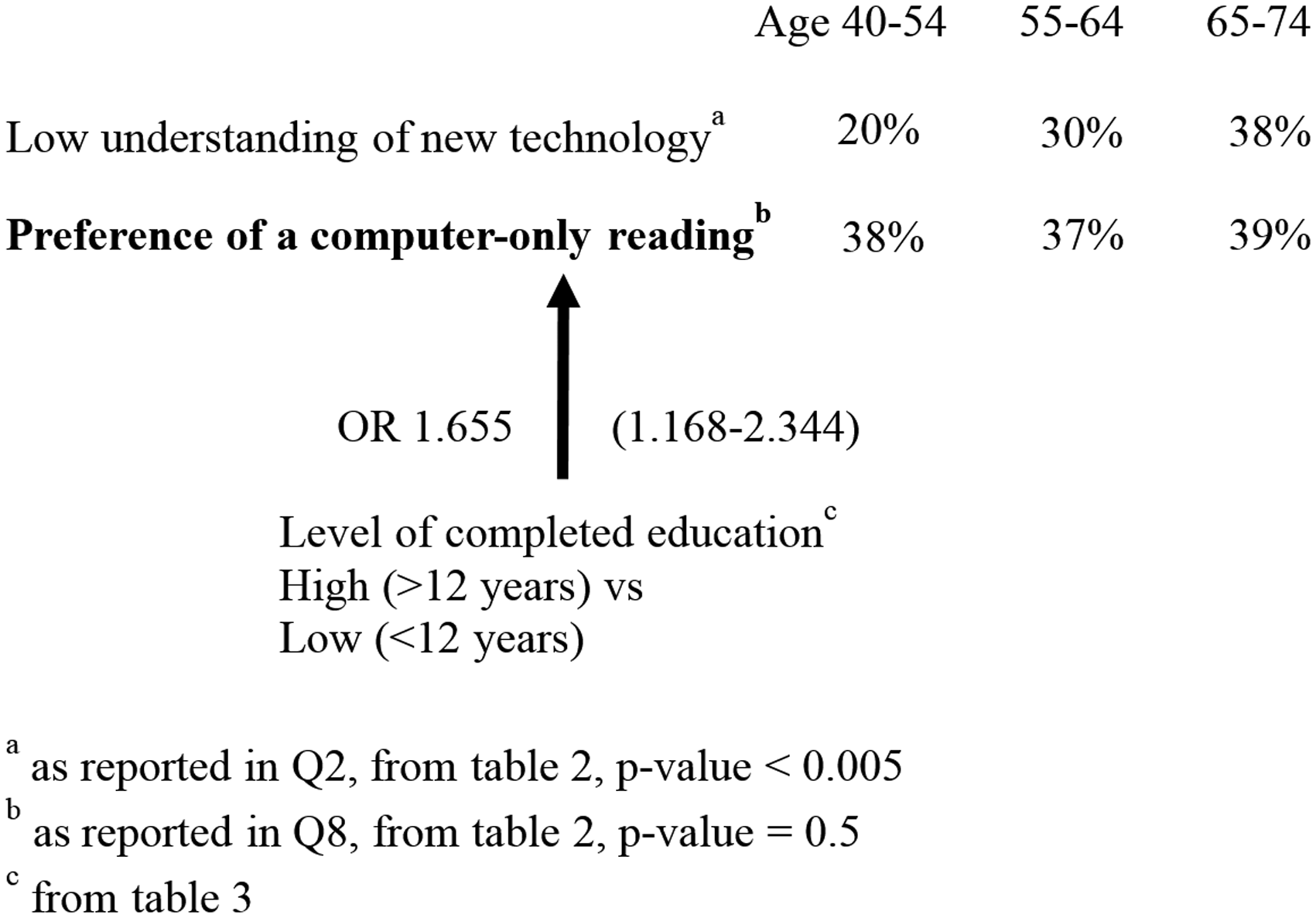

The mean age of participants was 61 years. Response rate was 1.3%. Of the submitted surveys, 97.5% were complete; 38% of respondents reported a preference for a computer-only examination. The highest level of confidence was given to a computer-only reading followed by a physician reading. Participants with > 12 years of education were more likely to prefer a computer-only reading (odds ratio [OR] 1.655, 95% confidence interval [CI] 1.168–2.344) and had greater trust in letting a computer determine screening intervals and the need for a supplemental MRI (OR 1.606, 95% CI 1.171–2.202 and OR 1.577, 95% CI 1.107–2.247, respectively). Age was not found to be a significant predictor.

Conclusion

A high level of trust in computerized decision-making was expressed. Higher age was associated with a lower understanding of technology but did not affect attitudes to computerized decision-making. A lower level of education was associated with a lower trust in computerization. This may be valuable knowledge for future studies.

Introduction

Artificial intelligence (AI), particularly deep learning, is currently being developed in an effort to assist and possibly even replace radiologists. One primary target is breast cancer screening, where there is a shortage of radiologists and a large examination volume (1). The success of a public screening program is dependent on high rates of participation (2). Participation has been argued to be driven by participants’ understanding of the benefits of screening (3). The future introduction of AI and computerized decision-making in breast cancer screening could jeopardize participation rates if women do not trust AI examinations.

We hypothesized that younger and well-educated participants would be more open to the possible future uses of AI. We also hypothesized that a history of cancer or chronic disease would influence participants’ trust in and application of AI in mammography. We have been unable to find similar studies, although a recent publication on physicians’ confidence in artificial intelligence refers to a news report in Korean claiming that Korean patients would follow AI advice over a doctor’s advice with regard to cancer treatment (4). The aim of this study was to survey screening participants’ attitudes towards the use of AI in the future.

Material and Methods

The study was a prospective survey. A questionnaire was developed collaboratively by the authors and written in Swedish. It was constructed in Google Forms (Google LLC) and accessed via a dedicated web page hosted by our institution. On the web page, potential participants were provided with written information about the study and informed that participation was anonymous and optional. Participation in the survey was considered informed consent. The study was performed according to the Declaration of Helsinki, and ethical approval was waived by the local ethics committee (project number: 2017/1979-31).

The underlying screening population was women living in Stockholm who were invited to partake in the national breast cancer screening program. In Sweden, mammography screening is offered to women aged 40–74 years. Women with a negative examination between February 2018 and March 2019 were invited to participate in the study. An invitation was included as an addendum to the final report letter that was mailed to the women. Specifically, the invitation letter asked the woman if she would like to influence how screening is done in the future, then directed her to the survey on our website; see Supplemental Material for a Swedish and translated version of the modified standardized final report letter. Only women with an initial negative screening were invited. This was done to avoid putting additional stress on recalled participants or participants with a recent cancer diagnosis.

The questionnaire was composed of 29 multiple-choice questions; see Supplemental Material for a Swedish and translated version of the questionnaire. A few possible future scenarios where AI could be used in mammography examinations were described briefly and followed by questions. We also queried interest and understanding of new technology, as well as gathering basic data on demographics: age; living situation; level of education; occupation; income; and a brief medical history asking about previous cancer, previous breast cancer, cancer in a close relative, and presence of chronic disease.

Questions were designed to force participants to choose a positive or negative stance to the presented scenarios by use of four-point Likert scales (5). One question (Q8, Table 1) was designed to force the participant to choose between a computer-only reading and a traditional two-physician reading. Responses to this question were used to divide respondents into two groups, “tech-trusters” and “tech-skeptics”.

Demographics of tech-trusters and tech-skeptics.*

*Respondents split into tech-trusters and tech-skeptics based on answers to Q8: Given the computer is at least as good as the average physician, what would you prefer?

†Statistics from Statistics Sweden (www.statistikdatabasen.scb.se).

‡Percentages are related to the total number of respondents. These do not always add up to 100%. This is caused by rounding and missing responses. In particular, 50 participants did not answer the question of age and 25 participants reported an age > 74 years.

§An estimated prevalence of at least 50% based on this age group and available data (14).

The questionnaire was initially tested in a pilot study over six weeks with 188 responses (results not reported). The questionnaire underwent minor revisions during this process.

The distribution of responses in the initial pilot study indicated that 1000 responses would be a reasonable volume for statistical analysis, and this was chosen as the minimum sample size. No power analysis was done. Data analysis was performed using Past 3.21 software (Ø. Hammer, https://folk.uio.no/ohammer/past/, 2018) and PSPP 1.2.0 (GNU Project, 2019) and verified by an external party. To facilitate interpretation, responses were tested with respect to dichotomized Likert scores, with the two lower scores grouped together and the two higher scores grouped together. Logistic regression was done to study the potential predictors age and education level with respect to three questions in the survey. The level of statistical significance was set to

Results

The first 1000 responses were collected by mid-September 2018. An additional 1196 questionnaires were collected during the write-up of this article. In total, 164,444 women were examined and had normal mammograms and thus were invited to participate in the survey. This corresponds to a response rate of 1.3%. Out of the submitted surveys, 97.5% were complete, with answers to all 29 questions. The question with the most omitted answers (2.5%) asked about the age of the participant. The mean age of participants was 61 years. Table 1 shows detailed demographic characteristics of the respondents divided into the two groups described under “Material and Methods” and labeled “Tech-trusters” and “Tech-skeptics”. General Stockholm population statistics are also included, as well as the results of the χ2 tests.
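The response-rate arithmetic above can be reproduced in a few lines. This is only a check of the figures quoted in the text; the variable names are illustrative and not taken from the study's analysis files.

```python
# Counts as quoted in the Results text.
first_batch = 1000      # responses collected by mid-September 2018
second_batch = 1196     # responses collected afterwards
invited = 164_444       # women with normal mammograms who received the invitation

completed = first_batch + second_batch
response_rate = completed / invited

print(f"completed surveys: {completed}")
print(f"response rate: {response_rate:.1%}")
```

Run as written, this gives 2196 completed surveys and a response rate that rounds to 1.3%, matching the reported values.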

Scenario-based questions, responses with respect to age groups, questions 1–16.

Respondents divided by age groups do not add up to the corresponding total numbers in the left-most columns. This is due to age data missing for 55 respondents and recorded as > 74 years in 25 cases.

A higher level of education, as defined by completion of > 12 years of education, was statistically associated with the trust group, with 68% reporting higher education in comparison to 59% in the group of skeptics (

Table 2 presents responses to scenario-based questions for all respondents grouped as one as well as divided by age group. Of the respondents, 38% reported a preference for a computer-only examination given that the computer is at least as good as the average physician (Q8). A computer-only exam was given the two higher Likert scores, indicating certainty, by 59% (Q4). This increased to 97% when a physician exam was added (Q7). Letting a computer determine screening frequency was well trusted by the respondents, with 62% reporting Likert scores of 3 or 4 (Q10). Trust in letting a computer determine the need of supplemental magnetic resonance imaging (MRI) received Likert scores of 3 or 4 by 77% of the study population (Q14).
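The percentages "reporting Likert scores of 3 or 4" rest on a simple dichotomization of the four-point scale. A minimal sketch of that step follows; the toy responses are hypothetical, and excluding missing answers from the denominator is an assumption made here for illustration, not a detail confirmed by the study.

```python
def dichotomize(score: int) -> int:
    """Map a four-point Likert score to 0 (scores 1-2) or 1 (scores 3-4)."""
    if score not in (1, 2, 3, 4):
        raise ValueError(f"not a four-point Likert score: {score!r}")
    return 1 if score >= 3 else 0


def share_high(scores):
    """Fraction of answered items with score 3 or 4.

    None marks a missing answer and is dropped from the denominator
    (an assumption; the study may have handled missing data differently).
    """
    answered = [s for s in scores if s is not None]
    if not answered:
        raise ValueError("no answered items")
    return sum(dichotomize(s) for s in answered) / len(answered)


# Hypothetical toy data, NOT study data.
toy_responses = [4, 3, 2, None, 1, 3, 4, 2, 3, 3]
print(f"{share_high(toy_responses):.0%} gave a score of 3 or 4")
```

With no midpoint on a four-point scale, every answered item falls on one side of the split, which is what makes the forced positive or negative stance possible.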

Most participants reported a willingness to wait ≥30 min, with about 20% willing to wait 2–3 h, for a final report. The willingness to wait for a final report was significantly lower in the group of women aged 55–64 years but similar between the younger and older patient groups (Q6). In a separate analysis, the willingness to wait was not found to differ among respondents who reported a preference of a computer-reading only and those who prefer a two-physician reading (χ2 test with a

Comparing the three age groups, we found that the older age groups reported a lower level of technical understanding and interest (Q1 and Q2). The two older age groups were more negative than the youngest age group to not being offered an MRI exam (Q16). Responses to other questions did not differ significantly based on age of the respondents. Thus, interest in technology and understanding of technology did not affect preference of a computer-only reading (Q8).

Table 3 presents the results of logistic regression modeling. Age and level of education were tested as predictors of outcome with respect to questions Q8, Q10, and Q14. For the two latter questions, responses were dichotomized to 0 for Likert scores 1 and 2, and 1 for scores 3 and 4. Age was reported in five-year brackets in the survey and respondents were further aggregated into three groups: 40–54 years; 55–64 years; and 65–74 years. We found that higher education was a positive predictor of preference of a computer-only examination (odds ratio [OR] = 1.655, 95% confidence interval [CI] = 1.168–2.344), a higher level of trust in letting a computer determine screening intervals (OR = 1.606, 95% CI = 1.171–2.202), and trust in letting a computer determine the need of an MRI (OR = 1.577, 95% CI = 1.107–2.247). A high self-rated understanding of new technology was also a positive predictor with respect to the same three questions (OR = 1.547, 95% CI = 1.276–1.876; OR = 1.747, 95% CI = 1.451–2.102; and OR = 1.755, 95% CI = 1.414–2.178, respectively). Age did not predict any preference of, or higher level of trust in, the same computerized decisions.
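For readers who want to relate the reported odds ratios to the underlying regression coefficients, the sketch below back-calculates the log-odds coefficient and its standard error from each interval for education. It assumes the intervals are Wald intervals, i.e. exp(β ± 1.96·SE), which is the common default but is not stated in the text; the function name is illustrative.

```python
import math

Z_95 = 1.959964  # two-sided 95% quantile of the standard normal distribution


def wald_from_ci(lower: float, upper: float):
    """Recover (beta, se) on the log-odds scale from a 95% CI for an odds ratio.

    Assumes a Wald interval, which is symmetric around beta on the log scale.
    """
    log_lo, log_hi = math.log(lower), math.log(upper)
    beta = (log_lo + log_hi) / 2
    se = (log_hi - log_lo) / (2 * Z_95)
    return beta, se


# Education as predictor; values (OR, lower, upper) as reported in the text.
reported = {
    "Q8": (1.655, 1.168, 2.344),
    "Q10": (1.606, 1.171, 2.202),
    "Q14": (1.577, 1.107, 2.247),
}

for question, (odds_ratio, lower, upper) in reported.items():
    beta, se = wald_from_ci(lower, upper)
    print(f"{question}: reported OR {odds_ratio}, "
          f"OR implied by CI midpoint {math.exp(beta):.3f}, "
          f"log-odds SE {se:.3f}")
```

If the Wald assumption holds, the odds ratio implied by the midpoint of each interval on the log scale should agree with the reported odds ratio up to rounding, which gives a quick internal consistency check on the table.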

Logistic regression, results.

*Based on Q8, Given the computer is at least as good as the average physician, what would you prefer?

†Determined by Likert scores 3 and 4 to Q10, How well would you trust a computer to determine frequency of screening mammograms?

‡Determined by Likert scores 3 and 4 to Q14, How well would you trust a decision of a need for MRI determined by a computer?

Figure 1 summarizes key findings from Tables 1 and 3.

Discussion

The response rate in this study is lower than that reported for other similar online surveys; for example, 7.5% was reported in a German study (6). A low response rate may be due to sending the link by regular mail rather than email. It may also be due to a lack of interest in the subject, which is a well-known reason for not responding (7). One anecdotal report online states that interviewed “patients commonly expressed a belief that an increased implementation of AI-driven technology in the medical field remains both ‘inevitable’ and ‘the way of the future’” (8). Such a mindset would, it seems, influence participation in our study. Recruitment for a survey via a final mammography screening report letter has, to our knowledge, not been studied before. Potential participants had to read the final report letter to the end and then manually enter a URL on a device with an Internet connection. It is likely that both these hurdles contribute significantly to the low response rate. This methodology most certainly imposes a significant selection bias toward technologically interested women, which is also seen in responses to Q1. To possibly counteract this effect, the invitation to partake was formulated in very general terms, with no references to computers or AI. In addition, the web address and questionnaire were simplified as much as possible and made accessible using computers as well as smartphones.

A recent article studying resistance to medical AI found, using extensive tests on college students, that “resistance to medical AI is eliminated when AI provides care (a) that is framed as personalized […], (b) to consumers other than the self […], or (c) that only supports, rather than replaces, a decision made by a human healthcare provider” (9). Our study was not nearly as extensive as the studies described in that article when it comes to analyzing willingness to trust a computer decision. Instead, our study found more general results, with participants reporting an overall positive attitude towards the scenarios where computers fully or partly replace humans in decision-making within breast cancer screening. Although a computer-only decision received high levels of trust, the addition of a reading by a physician increased trust to the highest levels for any of the presented scenarios. We interpret this as confirmation that participants in the screening program think that the combination of a physician and computer exam, also known as computer-aided detection, is a promising method. This supports current developments, for example in the USA, where computer-aided diagnostics is routinely used in mammography and where studies find equivalent or improved lesion detection with only small increases in recall rates (10).

Using a computer to determine the need of supplemental MRI was the hypothetical computerized task that received the highest ratings of trust. MRI is not a standardized part of the current screening program and is possibly seen as a risk-free added option. The questionnaire does not make it possible to distinguish a preference for this computerized task from one driven by a physician recommendation. The positive response does, however, support the use of computerization for this task in future studies.

Respondents reported a willingness to remain on site to wait for a final combined human and computer report, as compared to a final report based on two physicians’ readings, which is the current situation. The willingness to wait was not shown to be related to reported preference of a computer reading. The question was instead included in anticipation of a seemingly likely development, in which one physician working in collaboration with a computerized reading could enable a very quick workflow. The questionnaire did not further investigate the reasons for this willingness to wait, although a wish to minimize the time of psychological distress is likely. Waiting for a final report has been found to be stressful, and women with false-positive initial mammograms, i.e. those recalled for additional examination but without findings of cancer after these additional examinations, have been shown to be distressed in particular (11). Since we did not ask specifically about distress and women with a recall decision were excluded from our study, we cannot analyze the role of distress further.

We expected more positive attitudes and a higher level of trust in computerization in the younger, more technologically accustomed group. Age, however, was not shown to affect respondents’ views on possible future developments. Older women had a more negative attitude and lower understanding of new technology but were not less prone to accept computerized decision-making in the screening setting. We have found no prior studies of women partaking in screening, but a similar lack of association with age can be found in a recent study on physician confidence in AI (4) as well as in a few very old studies on medical professionals’ attitudes towards computerization in general (12,13).

Two strengths of our study are the large number of invited participants, over 100,000, and the high rate of completed surveys with answers to all 29 questions. An obvious weakness of our method of recruitment is the low response rate. External validity has been checked by comparing participants with the Stockholm general population data with respect to age, living situation, level of education, and presence of chronic disease. More extensive checks, for example by letting screening participants fill out printed versions of the questionnaire, would be valuable. For this study, it means that the results do not necessarily reflect those of the entire screening population and must be interpreted with caution.

In conclusion, women in breast cancer screening who chose to participate in our survey reported high levels of trust in computerized decision-making. They reported the highest level of trust when a computer examination was combined with that of a physician, and the majority reported a willingness to wait at the clinic if this would enable same-day results. Despite a more negative attitude and lower understanding of new technology, older participants were not less prone to accept computerized decision-making in the screening setting. A higher level of education was associated with greater trust in a computer-only exam, which suggests that particular care should be given to informing and educating women with a lower education level when introducing AI and computerized decision-making in screening.

Supplemental Material

Supplemental material, ARR880315 Supplemental Material for The future of breast cancer screening: what do participants in a breast cancer screening program think about automation using artificial intelligence? by Olof Jonmarker, Fredrik Strand, Yvonne Brandberg and Peter Lindholm in Acta Radiologica Open

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received the following financial support for the research, authorship, and/or publication of this article: Vinnova, grant no. 2017-01382.

Supplemental material

Supplemental material for this article is available online.

References
