Abstract

Healthcare professionals are increasingly expected to use research evidence to improve healthcare outcomes. The aim of this study was to examine change in beliefs and implementation of evidence-based practice (EBP) one year after participation in a 15 ECTS postgraduate educational program in EBP. We conducted a before-and-after study among 158 healthcare professionals in a postgraduate program in EBP. Participants completed the Norwegian version of the EBP Beliefs Scale and the EBP Implementation Scale at baseline and one year after the program. Results indicated increased scores from pre-test to one-year follow-up on the EBP Beliefs Scale and the EBP Implementation Scale. Participants in evidence-based network groups reported higher scores on the EBP Implementation Scale than those who did not participate in these groups. Further research should examine other factors that may facilitate implementation of evidence-based practice.

Introduction

Evidence-based practice (EBP) has been on the international agenda for the past decades, and healthcare professionals are increasingly expected to use research evidence to improve healthcare outcomes. Evidence-based practice is an approach where clinical expertise acquired through clinical practice and experience is integrated with current best available research evidence and patient preferences, within the context of available resources. 1 This involves finding, assessing and interpreting research evidence, implementing evidence findings and evaluating performance. To deliver practice based on evidence, healthcare professionals need to build competence. Today, EBP is taught to all levels of students and practitioners through workshops, journal clubs, courses, seminars, conferences, various educational programs and internet-based interventions. It is no longer a question of whether to teach EBP, but how to teach it. 2

Teaching EBP typically involves the five essential steps of EBP: 1) asking an answerable question, 2) searching for the best available evidence, 3) critically appraising the evidence for its validity, clinical relevance and applicability, 4) applying the results in practice and 5) evaluating performance. 1 However, people with differing roles and levels of responsibility require different skills for EBP and different types of evidence. 1 Clinicians may incorporate evidence into their practice by carrying out the steps of EBP, by using pre-appraised evidence summaries or by replicating recommendations and decisions of respected EBP leaders.2,3 These approaches require different use of time, skills and knowledge. Ideally, educational aims should be matched to the needs and characteristics of the learners. 4

According to Khan and Coomarasamy's ‘hierarchy of effective teaching and learning’, the best way to learn EBP is through interactive teaching and learning activities clinically integrated in practice. 5 Using this approach, students will learn not only the principles and skills of EBP, but also how to incorporate these skills with their own life-long learning into patient care. 1 However, other teaching and learning approaches may be suitable for learners who have to acquire a baseline or pre-requisite knowledge and encouragement in EBP. 5 For these learners, interactive classroom-based teaching or didactic clinically integrated teaching may also be appropriate.

Several systematic reviews have summarized the effect of postgraduate teaching in EBP.5–11 The interventions reported significant gains in EBP knowledge and skills. Some evidence found changes in EBP attitude and behavior when teaching was integrated into clinical practice.5,6 However, caution is warranted due to variations in methodological quality, outcome measures, populations and educational interventions. In addition, few of the included studies used validated measurement instruments.8,9,12

To build competence in EBP, Bergen University College established a postgraduate educational program in EBP in 2004. The purpose of this program was to promote the use of research evidence in teaching and in clinical practice, make healthcare professionals aware of existing access to evidence, and provide implementation strategies. 13 The program was organized into one semester, with a total of 42 hours distributed over seven days. Various learning strategies, such as lectures, group discussions, skills training and assessments, were used in the program. The target group was teachers within healthcare professions (nursing, physiotherapy, occupational therapy, radiography and social work), professional development specialists, and healthcare professionals from clinical practice who wanted to practice based on evidence. 13

Much of the literature on postgraduate teaching in EBP describes educational interventions of shorter duration. The format of most EBP programs has varied: sessions lasting from a few hours to a few days, one-hour weekly sessions over a period of weeks, or one-hour monthly sessions for a year.6,8,9 There is a need to understand how more comprehensive postgraduate educational programs affect healthcare professionals' EBP over time. The aim of this study was to examine changes in EBP beliefs and implementation among healthcare professionals one year after participating in a 42-hour postgraduate educational program in EBP. A secondary question explored whether the change in scores was associated with participation in EBP network groups.

Methods and materials

Research design

We used a pre- and post-test survey design with the Norwegian version of the EBP Beliefs Scale and the EBP Implementation Scale. Data were collected before and one year after participation in a postgraduate educational program in EBP.

Sample

The sample consisted of 203 healthcare professionals attending postgraduate education during the period 2009 to 2011. The participants belonged to six cohorts that went through the program at different times. Only participants who had passed the final examination were eligible to participate. Participants who followed the postgraduate program without taking the exam and participants who failed the exam were excluded from the study.

Procedure

All participants (n = 203) completed the questionnaires before teaching started on the first day. One year after the program, the 158 participants who had completed the course and passed the examination received a postal questionnaire and a reply envelope. The participants' anonymity was ensured by means of numbered questionnaires, and the researcher was blinded to participants. We sent out two reminders.

The postgraduate program in EBP

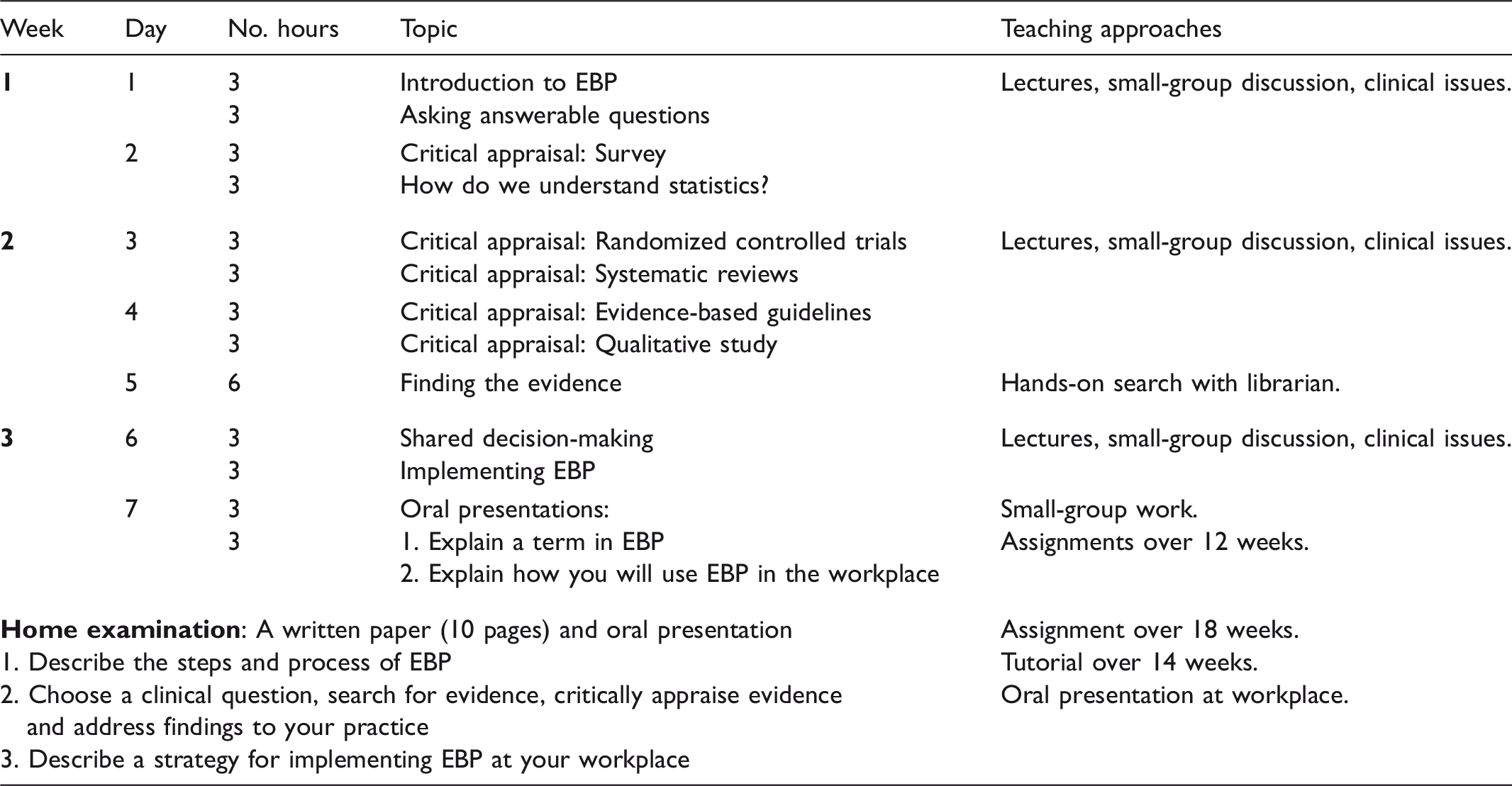

The core content of the postgraduate program was based on the steps of EBP. 14 The students were taught how to reflect on clinical practice and ask answerable clinical questions; search for evidence; critically appraise evidence for its validity, importance, and applicability; apply evidence to practice; and evaluate performance.1,14 The teaching used principles from adult learning theory 15 and the Critical Appraisal Skills Programme. 16 The program used a combination of various learning strategies, such as interactive and didactic lectures, small-group discussions, problem-based learning, skills training, and oral and written assessments. To ensure a close relationship to clinical practice, clinical issues and research articles relevant to the students' practices were incorporated throughout the course.

Content of the postgraduate educational program in evidence-based practice (EBP).

To sustain knowledge and skills obtained at the postgraduate program, the students were encouraged to participate in evidence-based network groups while working in clinical practice. In these network groups, which may have been pre-existing or established during follow up, healthcare professionals critically appraised articles in journal clubs, produced clinical guidelines in accordance with the Appraisal of Guidelines Research and Evaluation (AGREE) tool in clinical guideline groups or gained new knowledge by following the steps of EBP in ‘clinical circles in EBP’.

This postgraduate module was worth 15 ECTS credits. The European Credit Transfer and Accumulation System (ECTS) is the credit system used in the European Higher Education Area. 17 It is a tool that helps to design, describe, and deliver study programs and award higher education qualifications. In this system, one full-time academic year corresponds to 60 ECTS credits, and one ECTS credit corresponds to 25–30 hours of work. 17
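The credit arithmetic above can be made concrete with a short sketch. It uses only the figures quoted in the text (15 credits, 60 credits per year, 25–30 hours per credit, 42 taught hours); the function and constant names are illustrative and not part of the study.

```python
# ECTS workload arithmetic, using only the figures quoted in the text.
ECTS_PER_FULL_YEAR = 60        # one full-time academic year
HOURS_PER_CREDIT = (25, 30)    # stated range of work per credit
MODULE_CREDITS = 15            # size of this postgraduate module
TAUGHT_HOURS = 42              # scheduled teaching over seven days


def workload_range(credits, hours_per_credit=HOURS_PER_CREDIT):
    """Return (min_hours, max_hours) of total student work for `credits`."""
    low, high = hours_per_credit
    return credits * low, credits * high


low, high = workload_range(MODULE_CREDITS)
share_of_year = MODULE_CREDITS / ECTS_PER_FULL_YEAR

print(f"Total workload: {low}-{high} hours")              # 375-450 hours
print(f"Share of a full-time year: {share_of_year:.0%}")  # 25%
print(f"Taught hours as share of workload: "
      f"{TAUGHT_HOURS / high:.0%}-{TAUGHT_HOURS / low:.0%}")  # 9%-11%
```

On these figures, the 42 taught hours make up roughly a tenth of the nominal workload, so most of the credited work would fall outside the scheduled teaching days.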

Outcome measures

We used the Norwegian version of the Evidence-Based Practice Beliefs Scale (EBP Beliefs Scale) and the Evidence-Based Practice Implementation Scale (EBP Implementation Scale). For the post measurement, we also included a questionnaire examining demographic data and participation in evidence-based network groups. The EBP Beliefs Scale and the EBP Implementation Scale were translated into Norwegian in accordance with the World Health Organization's principles of forward and backward translation. 18 Nina Rydland Olsen at Bergen University College performed the translation, in collaboration with the authors of the original scales (not published; available from author). For the original scales in English, construct and criterion validity have been established through factor analysis, 19 and good or excellent Cronbach's alpha values have been consistently reported.19–25 In this study, post-course values of Cronbach's alpha were 0.86 for the EBP Beliefs Scale and 0.88 for the EBP Implementation Scale.
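For readers unfamiliar with Cronbach's alpha, the following is a minimal sketch of the standard formula, alpha = k/(k − 1) × (1 − sum of item variances / variance of total scores). The data are invented toy values, not study data, and the function name is illustrative; the study itself computed alpha in SPSS.

```python
from statistics import variance


def cronbach_alpha(rows):
    """Cronbach's alpha for complete data.

    rows: one list of item scores per respondent, all of equal length.
    """
    k = len(rows[0])
    if k < 2:
        raise ValueError("alpha needs at least two items")
    # Sample variance of each item across respondents.
    item_vars = [variance([row[i] for row in rows]) for i in range(k)]
    # Sample variance of the respondents' total scores.
    total_var = variance([sum(row) for row in rows])
    return k / (k - 1) * (1 - sum(item_vars) / total_var)


# Toy example: four respondents, three items (invented values).
toy = [[4, 5, 4], [2, 3, 2], [3, 3, 4], [5, 4, 5]]
print(round(cronbach_alpha(toy), 3))  # 0.875
```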

The EBP Beliefs Scale consists of 16 items and measures the respondents’ self-reported beliefs about the value of EBP and their confidence in EBP knowledge and skills. Respondents indicate their agreement with the items on a five-point Likert scale ranging from 1 (‘strongly disagree’) to 5 (‘strongly agree’). The total score for the scale ranges between 16 and 80, with high scores indicating positive beliefs.

The EBP Implementation Scale consists of 18 items and measures how often the respondents engage in behavior relevant to EBP. On a five-point frequency scale, where 0 = ‘0 times’, 1 = ‘1–3 times’, 2 = ‘4–6 times’, 3 = ‘6–8 times’ and 4 = ‘>8 times’, the respondents report how often they have performed specific activities related to EBP within the past eight weeks. The total score for the scale ranges between 0 and 72, with high scores reflecting a more frequent use of EBP behaviors and skills.
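The total-score ranges for the two scales follow directly from the number of items and the response options. The sketch below shows that arithmetic and a simple sum score with input checks; the names are illustrative, and the example responses are invented.

```python
# (number of items, lowest response code, highest response code)
BELIEFS = (16, 1, 5)         # EBP Beliefs Scale: 16 items scored 1-5
IMPLEMENTATION = (18, 0, 4)  # EBP Implementation Scale: 18 items scored 0-4


def score_range(scale):
    """Return the (minimum, maximum) possible total score for a scale."""
    n_items, lo, hi = scale
    return n_items * lo, n_items * hi


def total_score(responses, scale):
    """Sum a complete set of item responses after validating them."""
    n_items, lo, hi = scale
    if len(responses) != n_items:
        raise ValueError(f"expected {n_items} responses, got {len(responses)}")
    if any(not lo <= r <= hi for r in responses):
        raise ValueError(f"responses must lie between {lo} and {hi}")
    return sum(responses)


print(score_range(BELIEFS))                   # (16, 80)
print(score_range(IMPLEMENTATION))            # (0, 72)
print(total_score([4] * 16, BELIEFS))         # 64
print(total_score([1] * 18, IMPLEMENTATION))  # 18
```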

Analysis

Random checks were performed to ensure accurate data entry. Two negatively phrased items in the EBP Beliefs Scale were reversed before analysis. Mean substitution was used for the scales if the respondent answered more than 80% of the questions.
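The two preparation steps described above can be sketched as follows. Two points are assumptions rather than details from the study: the positions of the negatively phrased items are hypothetical placeholders, and "mean substitution" is interpreted here as replacing a missing item with the respondent's own mean over answered items (item-mean substitution across respondents is another common reading).

```python
# Hypothetical zero-based positions of the two negatively phrased items;
# the text does not say which items they are.
REVERSED_ITEMS = {10, 12}
MIN_ANSWERED = 0.80  # strictly more than 80% of items must be answered


def reverse_score(value, lo=1, hi=5):
    """Reverse a Likert response, e.g. 1 <-> 5 and 2 <-> 4 on a 1-5 scale."""
    return lo + hi - value


def prepare_items(responses, reversed_items=REVERSED_ITEMS):
    """Reverse-code, then fill gaps with the respondent's own mean.

    responses: item scores with None for missing answers.
    Returns the completed list, or None if too few items were answered.
    """
    recoded = [
        None if r is None else (reverse_score(r) if i in reversed_items else r)
        for i, r in enumerate(responses)
    ]
    answered = [r for r in recoded if r is not None]
    if len(answered) <= MIN_ANSWERED * len(recoded):
        return None  # too much missing data: no scale score is computed
    person_mean = sum(answered) / len(answered)
    return [person_mean if r is None else r for r in recoded]


one_missing = [None] + [4] * 15       # 15 of 16 answered (about 94%)
four_missing = [None] * 4 + [4] * 12  # 12 of 16 answered (75%)
print(prepare_items(one_missing)[10])  # 2
print(prepare_items(four_missing))     # None
```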

Descriptive statistics were used to summarize baseline characteristics. We used paired-samples t-tests to compare mean scores on the scales before and one year after the postgraduate program. To adjust for age, years in the present job and participation in evidence-based network groups, we used generalized estimating equations (GEE) regression analysis. 26 An unstructured working correlation structure was applied in the GEE analysis to adjust for within-participant correlation.
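As an illustration of the paired comparison, the sketch below computes the mean difference and the paired t statistic, t = mean(d) / (sd(d) / √n), from within-person differences d. The scores are invented toy values, not study data; the p-value lookup and the GEE adjustment are outside the scope of this sketch and would normally be done in a statistics package, as the authors did in SPSS.

```python
from math import sqrt
from statistics import mean, stdev


def paired_t(pre, post):
    """Return (mean difference, t statistic, degrees of freedom).

    pre and post hold scores for the same respondents in the same order.
    """
    if len(pre) != len(post):
        raise ValueError("pre and post must have the same length")
    diffs = [after - before for before, after in zip(pre, post)]
    n = len(diffs)
    mean_diff = mean(diffs)
    standard_error = stdev(diffs) / sqrt(n)
    return mean_diff, mean_diff / standard_error, n - 1


# Toy implementation scores for five respondents (invented values).
pre_scores = [8, 10, 7, 12, 9]
post_scores = [11, 12, 9, 15, 10]
md, t, df = paired_t(pre_scores, post_scores)
print(f"MD = {md:.1f}, t({df}) = {t:.2f}")  # MD = 2.2, t(4) = 5.88
```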

The Wilcoxon signed-rank test was used to test changes in pre and post measurements for each item of the scales. Further, we calculated item-specific percentages for study participants who reported higher, equivalent or lower scores, comparing the scores one year after the postgraduate program with the scores at baseline.
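The item-specific percentages can be sketched as below: for one item, each respondent's follow-up score is compared with the baseline score and classified as higher, equivalent or lower. The data are invented, the function name is illustrative, and dropping pairs with a missing value is an assumption, since the text does not describe how item-level missing data were handled.

```python
def change_percentages(pre, post):
    """Percentage of respondents scoring higher, equal or lower on one item.

    pre and post hold one item's scores per respondent (None if missing).
    Pairs with a missing value are dropped (an assumption of this sketch).
    """
    pairs = [(a, b) for a, b in zip(pre, post) if a is not None and b is not None]
    n = len(pairs)
    if n == 0:
        raise ValueError("no complete pairs")
    higher = sum(b > a for a, b in pairs)
    lower = sum(b < a for a, b in pairs)
    equal = n - higher - lower
    return {
        "higher": 100 * higher / n,
        "equal": 100 * equal / n,
        "lower": 100 * lower / n,
    }


# Toy data for a single item and five respondents (invented values).
print(change_percentages([3, 4, 2, 5, 3], [4, 4, 3, 4, 5]))
# {'higher': 60.0, 'equal': 20.0, 'lower': 20.0}
```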

Analysis of variance (ANOVA) with adjustment for baseline values was performed to compare mean scores on the scales at one year for those who participated and did not participate in evidence-based network groups after the program. Estimated mean values are presented. P-values less than 0.05 indicated statistical significance. IBM SPSS Statistics version 20 was used for the statistical analysis.

Ethics statement

The survey was voluntary, and consent for participation was given through completion and return of the questionnaires. We treated the data confidentially. The Regional Committee for Medical and Health Research Ethics (REC), Western Norway, exempted this study from review, because the study did not include medical or biomedical aspects. The Norwegian Social Science Data Services (NSD) approved the study (reference number 22883).

Results

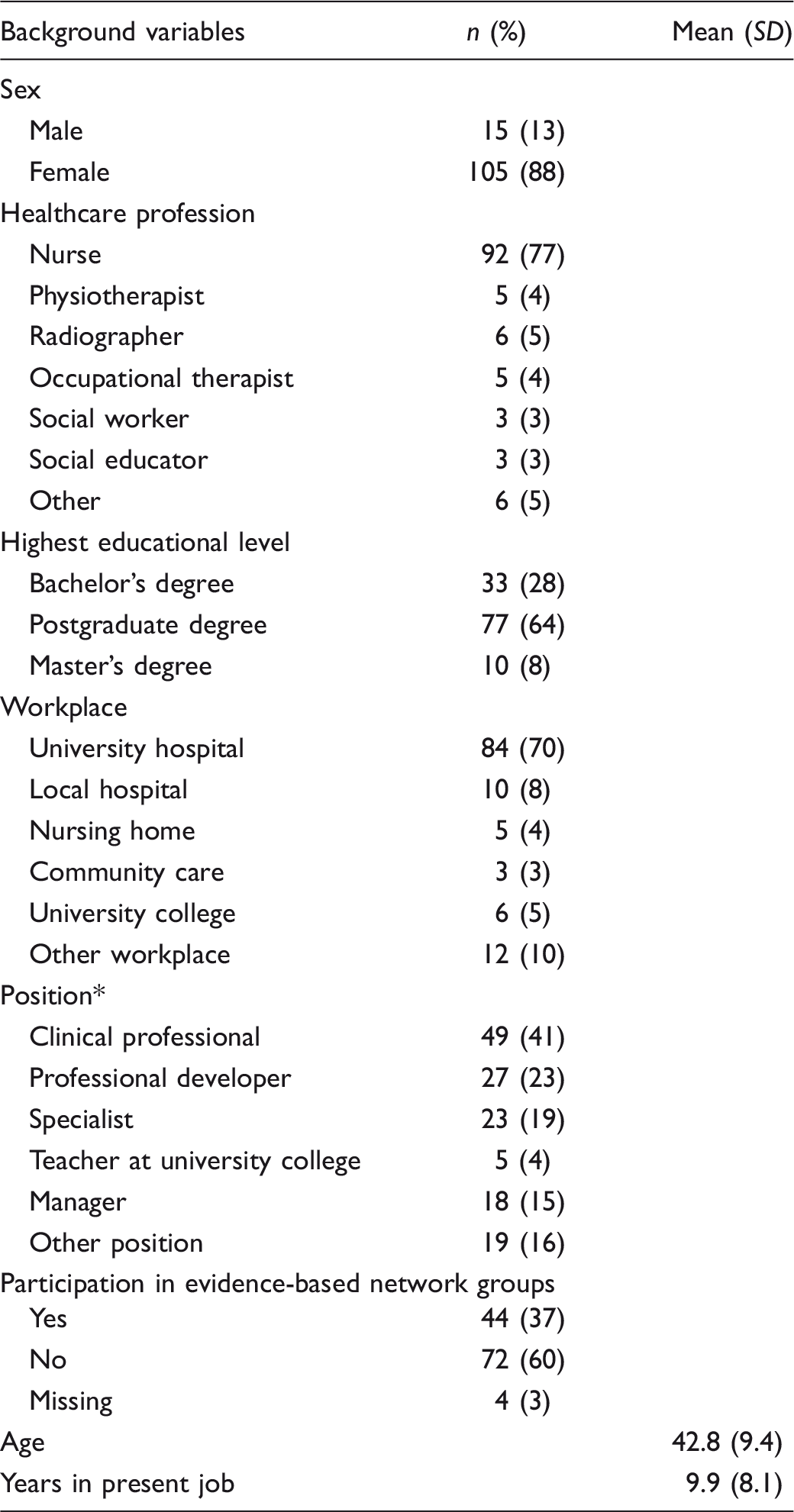

Background variables for participants (n = 120).

Some held more than one position.

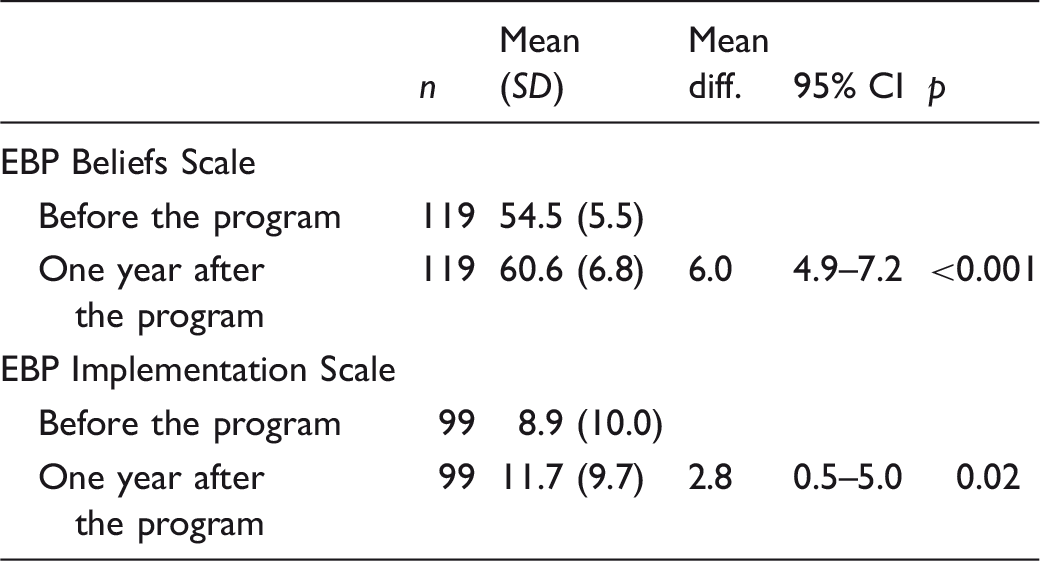

Changes in EBP belief scores

Mean differences for the EBP Beliefs Scale and the EBP Implementation Scale.

Note: Analyzed with paired t-test.

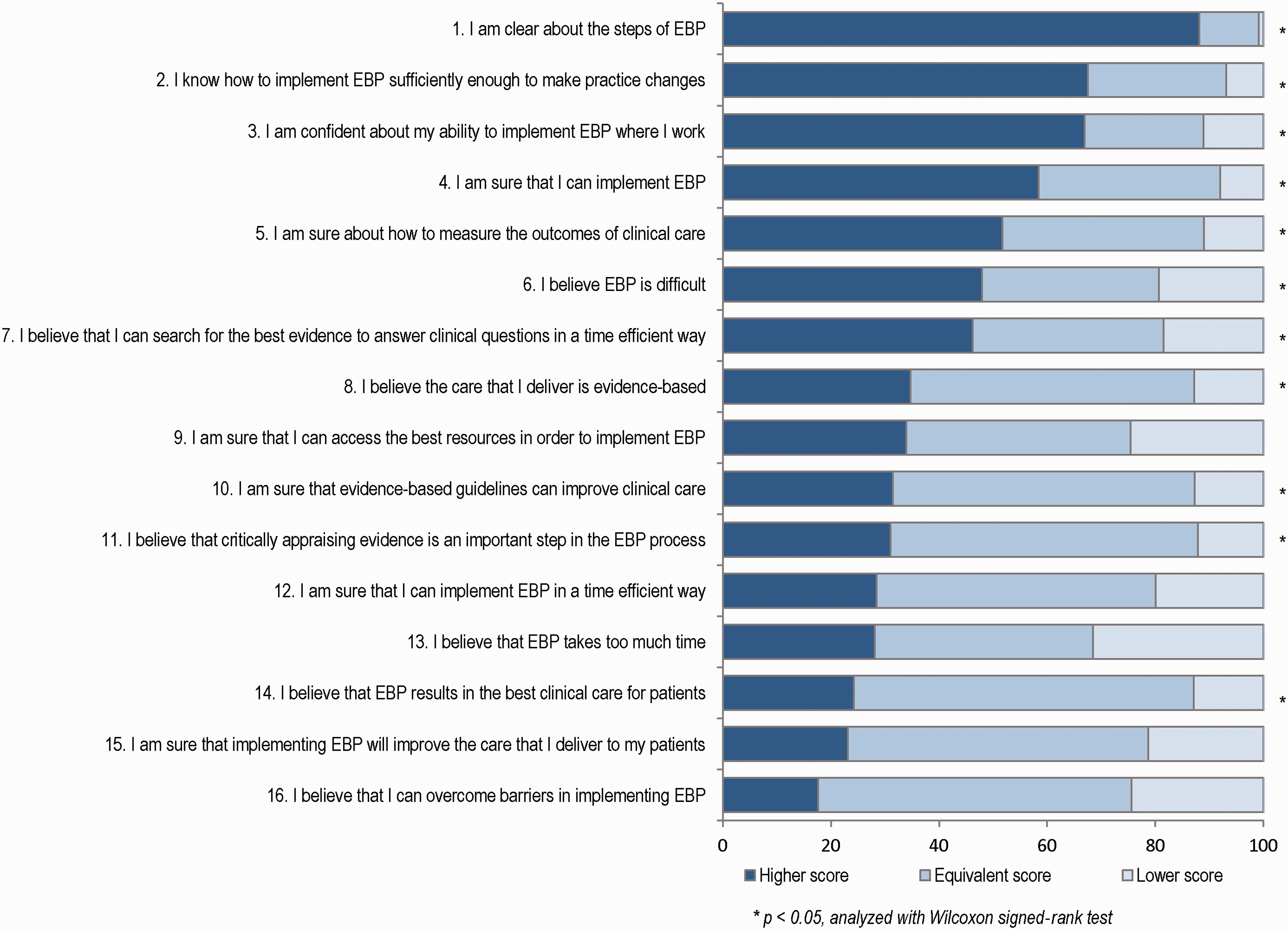

For 11 of the 16 items on the EBP Beliefs Scale, the results indicated a statistically significant positive change in scores reported before and one year after the postgraduate program (Figure 1). The largest percentages of respondents with improved scores were for the items ‘I am clear about the steps of EBP’ (88%), ‘I know how to implement EBP sufficiently enough to make practice changes’ (68%) and ‘I am confident about my ability to implement EBP where I work’ (67%). More than 80% of the participants answered ‘agree’ or ‘strongly agree’ before the program started for five items (items 10, 11, 14–16). For the remaining items, less than 50% answered ‘agree’ or ‘strongly agree’ before the program started.

Changes in scores (%) on the EBP Beliefs Scale one year after the postgraduate education.

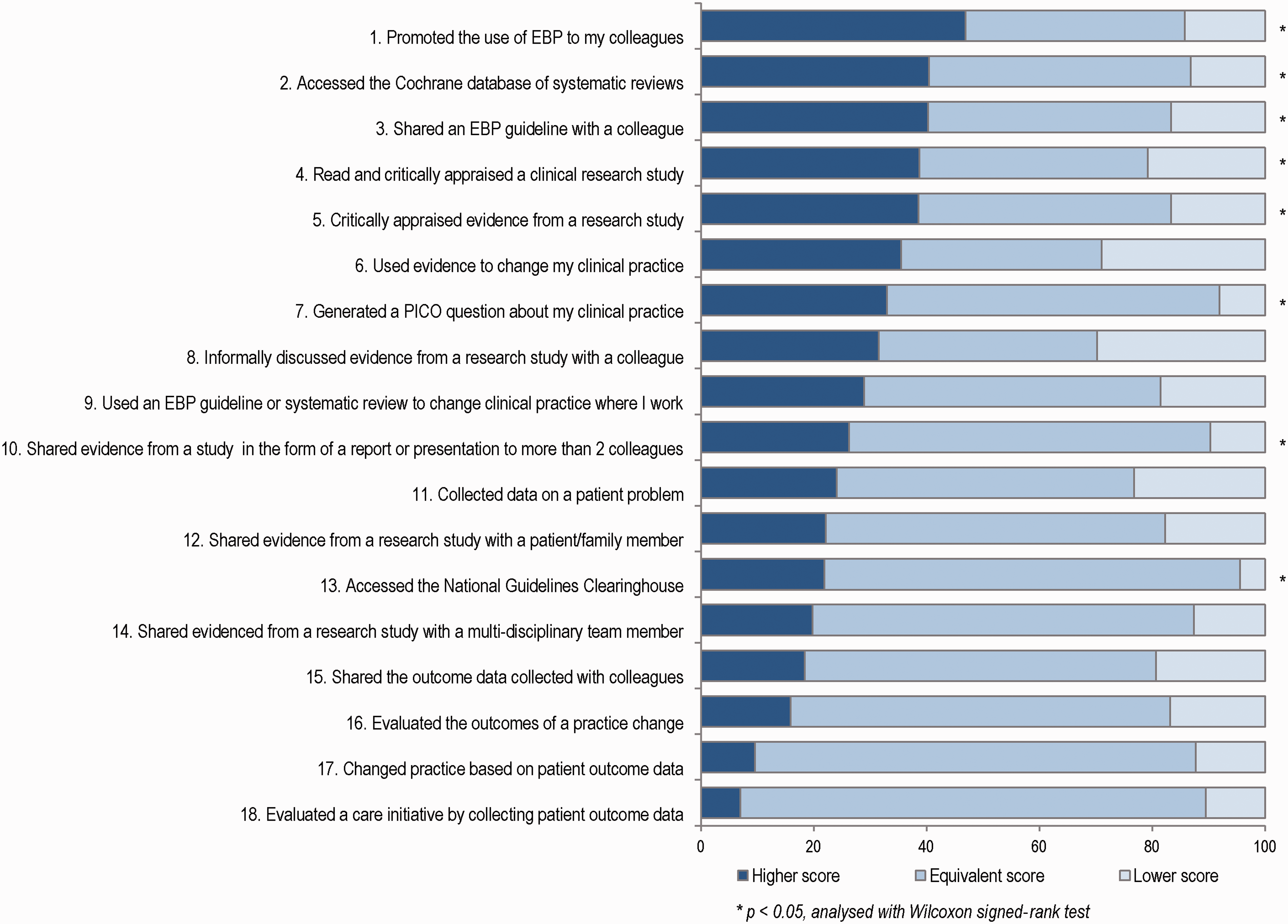

Changes in EBP implementation scores

The mean overall implementation score differed before (M = 8.9) and one year after (M = 11.7) the postgraduate program (MD = 2.8) (Table 3). This difference persisted after the same adjustment as for the beliefs scores. For eight of the 18 items on the EBP Implementation Scale, the results indicated a statistically significant positive change in scores reported before and one year after the postgraduate program (Figure 2). The largest percentage of respondents with improved scores was for the item ‘Promoted the use of EBP to my colleagues’ (47%). In addition, 40% reported higher scores for the items ‘Accessed the Cochrane database of systematic reviews’ and ‘Shared an EBP guideline with a colleague’, while 39% improved their scores for critical appraisal of research studies.

Changes in scores (%) on the EBP Implementation Scale one year after the postgraduate education.

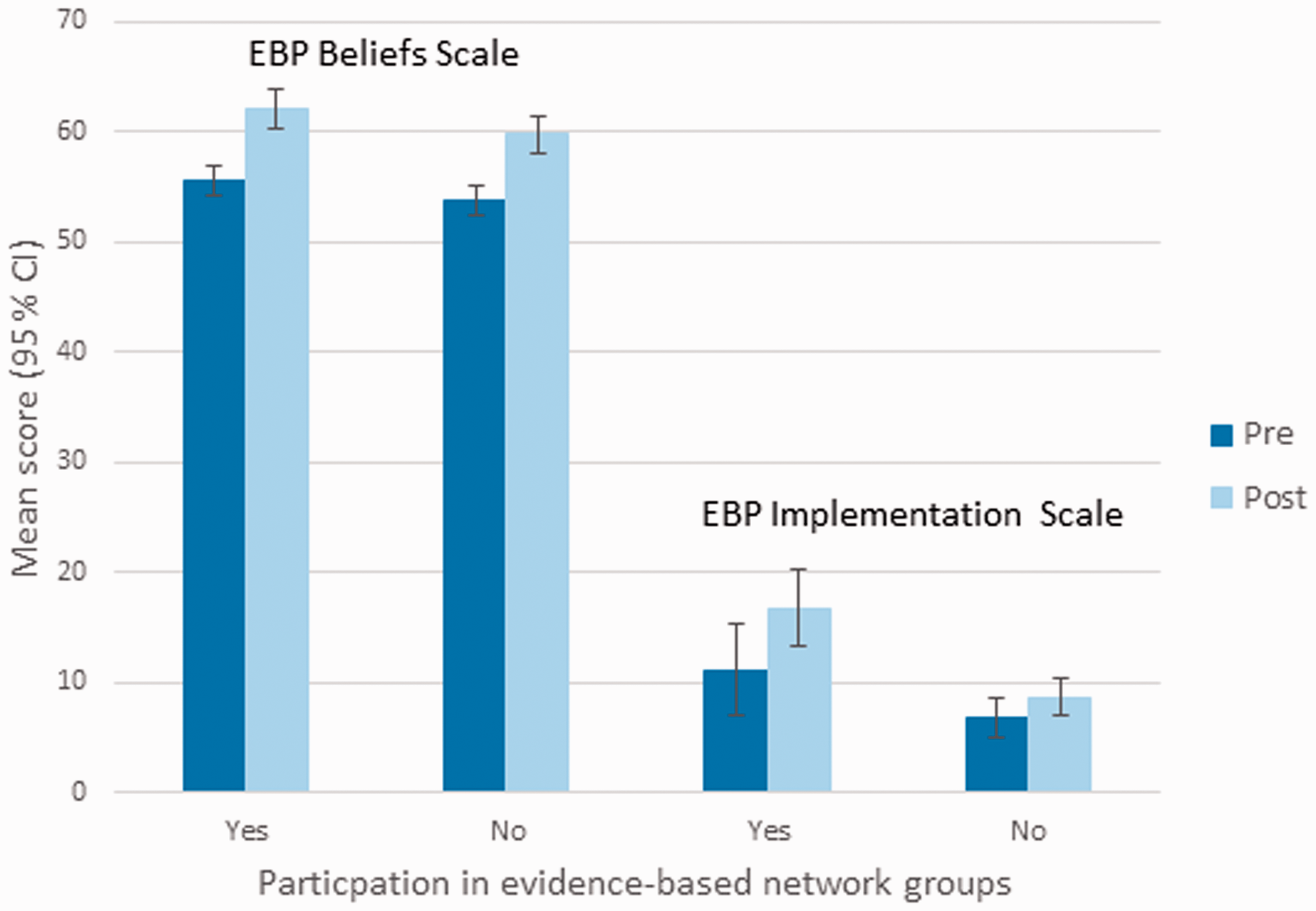

Evidence-based practice implementation and evidence-based network groups

Figure 3 shows mean values for the EBP Beliefs Scale and the EBP Implementation Scale pre and post measurements among those participating or not participating in evidence-based network groups. Healthcare professionals (N = 44) who participated in evidence-based network groups reported higher scores one year after the program than healthcare professionals (N = 72) who did not participate in these groups. This was statistically significant for the EBP Implementation Scale (non-participants: M = 9.2; participants: M = 16.2; p = 0.001), but not for the EBP Beliefs Scale (non-participants: M = 60.1; participants: M = 61.4; p = 0.3).

Pre and post mean scores for the EBP Beliefs Scale and the EBP Implementation Scale among those participating or not participating in evidence-based network groups.

Discussion

This study showed a positive change in healthcare professionals' EBP beliefs and EBP implementation when scores before and one year after participation in a 15-ECTS-credit (total of 42 hours) postgraduate EBP program were compared. Improvement was strongest in EBP beliefs. Furthermore, healthcare professionals who participated in evidence-based network groups at follow up performed more activities related to EBP compared with healthcare professionals who did not participate in these groups.

Comparing our results with previous follow-up studies using the EBP Beliefs Scale and the EBP Implementation Scale is challenging, as the studies differ in interventions, samples, follow up and context. In previous studies, the education interventions in EBP have been part of a strategic plan.21,22,24,25,27 The samples have been nurses targeted for EBP mentor programs,21,25 nurses chosen to become EBP champions, 24 nurses regardless of job roles partaking in an implementation program,22,27 or health professionals attending a workshop in EBP. 28 In addition, most follow ups have been performed in continuation of an organized strategic EBP implementation, rather than one year after as in our study. Only one study measured EBP implementation six months after an intensive educational workshop. 28

The results of our study revealed a statistically significant positive change in EBP beliefs one year after the postgraduate program. Previous studies have also reported an increase in mean EBP beliefs score,21,22,24,25,27 but only three studies explicitly reported a statistically significant increase in EBP beliefs after an educational intervention.21,24,27 Our observed mean difference in EBP beliefs at one-year follow-up was similar to changes reported in studies educating EBP mentors and champions over a similar timeframe.21,24,25 Furthermore, our post measurement total mean score was similar to the total mean scores reported in studies where the educational program was part of an organized intervention for implementing EBP in clinical practice.21,24,25,27 Compared with previous studies, we found our participants to hold strong beliefs in EBP.

Our results also revealed a statistically significant change in implementation score after the postgraduate program. There are studies reporting larger increases in mean EBP implementation scores,21,22,24,25 but only two studies explicitly reported a statistically significant increase in EBP implementation.21,24 Recent studies assessing the EBP Implementation Scale have not obtained significant changes in EBP implementation after an educational intervention.27,28 We observed a smaller total mean EBP implementation score than the studies on educating EBP mentors.21,24,25 Only one previous study has reported an equivalent implementation score to ours after a teaching intervention. 22

It is not surprising that the EBP implementation score in our study was lower than the scores reported in studies where participants were supported in mentorship programs. Our participants came from different workplaces, and their barriers to implementing EBP in clinical practice likely varied. Barriers frequently reported for implementing EBP are related to organizational issues, such as lack of staff experienced in EBP, lack of supportive leadership, lack of resources and lack of time to read research.29–31 Context and organizational culture are essential for EBP implementation, and practitioners' use of research will be encouraged in cultures where particular ideas, activities, or events are more highly valued than others. 32 Thus, implementing EBP may require contextual system changes, involving changes at the level of the organization, team, and individuals. 33 Our postgraduate program provided individualized training in EBP and was not part of an organized intervention for implementing EBP in clinical practice. As sole representatives, our participants faced a challenging task implementing EBP in practice.

In our study, healthcare professionals who participated in evidence-based network groups implemented EBP more often than the healthcare professionals who did not attend these network groups. These findings are consistent with systematic reviews indicating that postgraduate teaching in EBP that is clinically integrated in practice may improve clinicians’ EBP implementation.6,34 In evidence-based network groups participants meet regularly to critically appraise articles, produce guidelines or gain new knowledge by following the steps of EBP, as in journal clubs, clinical guideline groups or ‘clinical circles in EBP’. Even though research on journal clubs is limited and mainly based on self-reported questionnaires, Honey and Baker claim there is growing evidence indicating that journal clubs may increase clinicians’ knowledge and confidence such that clinicians include new skills and interventions in clinical practice. 35 However, another systematic review could not conclude that journal clubs were effective as decision support in clinical practice. 36 Thus, obtaining solid knowledge of how evidence-based network groups may change EBP beliefs and EBP implementation over time requires further studies.

Our postgraduate program used various combinations of learning strategies, such as lectures, computer lab sessions, appraisal of various study types, small-group discussions, use of real clinical issues, and assessments over 18 weeks. The educational program was not integrated into clinical practice, although such integration is recommended in a recent review of reviews about teaching evidence-based healthcare. 34 Nonetheless, the participants were working in clinical practice, paid by their employer to take part in the program and encouraged to carry out discussions, assignments and papers related to their own clinical practice. Although not clinically integrated, our teaching and learning strategies are in accordance with a recent overview of systematic reviews, which found that multifaceted, clinically integrated interventions, with assessment, led to improvements in knowledge, skills and attitudes. 34

The postgraduate program aspired to teach all the steps of EBP to a broad range of healthcare professionals. Nevertheless, the curriculum devoted five days to asking clinical questions, finding evidence and critical appraisal, whereas only one day was dedicated to implementation of EBP and shared decision-making. This may explain the smaller change for items on the EBP Implementation Scale related to evaluation of practice. Developing individuals' skills in critical appraisal will not automatically lead to greater evidence use. 33

We used the EBP Beliefs Scale and the EBP Implementation Scale because they were found to be valid and reliable in previous studies. 19 A possible limitation of this study is that the EBP Beliefs Scale and the EBP Implementation Scale have not been validated for healthcare professionals in Norway. In addition to the shortcomings of any self-report scale, we question a possible ceiling effect of some of the items in the EBP Implementation Scale. For example, we question how realistic it is for participants to report that they have used an EBP guideline or systematic review to change clinical practice eight or more times in the past eight weeks.

As in pre- and post-test survey studies in general, changes may occur as a function of time. In our study, it is possible that the participants over-reported engagement in EBP behavior at baseline. They may have perceived that they were engaged in EBP activities at baseline, but through the postgraduate program gained a better understanding of what is required in EBP implementation. A similar observation has been reported in a previous study. 28

We did not have a control group and the effect of the program should be interpreted with caution. We lacked the information to analyze non-responders and dropouts. However, the study had a high response rate. By including six cohorts going through the program at different periods and performing the second measure after one year, we have examined changes in healthcare professionals' EBP beliefs and EBP implementation over a longer period of time than previous studies.

Conclusion

This study examined changes in EBP beliefs and EBP implementation one year after participation in a postgraduate educational program in EBP. The results showed a positive change in EBP beliefs and EBP implementation when scores before and one year after participation in the program were compared. The change was strongest in EBP beliefs. In addition, healthcare professionals who participated in evidence-based network groups at follow-up performed more activities related to EBP compared with healthcare professionals who did not participate in these groups. Further research is required to examine factors that may facilitate implementation of EBP among healthcare workers attending postgraduate programs in EBP, and to consider changes in skills and behavior after such educational interventions.

Footnotes

Author contributions

Anne Kristin Snibsøer led the development of the manuscript, including collecting and analyzing data. Birgitte Espehaug participated in analyzing and interpreting data. All authors were involved in drafting the manuscript and critically revising the text. All authors read and approved the final manuscript.

Funding

This research received no specific grant from any agency in the public, commercial, or not-for-profit sectors.

Conflict of interest

The authors declare that there is no conflict of interest.