Abstract

With the rapid spread of artificial intelligence (AI) across many fields, AI ethics and related incidents have aroused concern and prompted wide discussion in both society and academia around the world. In this paper, we discuss AI ethics and governance from the public's perspective. Based on the existing literature, policies, and guidelines on AI ethics, we sorted AI ethics concerns into eight dimensions: safety, transparency, fairness, personal data protection, liability, truthfulness, human autonomy, and human dignity. Combining online survey data with social media data, we quantified people's concerns about each dimension and their attitudes toward AI governance policies and goals. The results shed light on how the public understands and views AI ethics and related governance. Finally, we propose several future directions for the development of AI ethics.

Keywords

Introduction

Over the past decade, artificial intelligence (AI) has profoundly transformed people's daily lives in fields such as business, communication, and government. This transformation has largely been driven by the availability of massive quantities of data, coupled with steady gains in computer processing power and advances in AI algorithms. Specifically, the ubiquity of the internet, mobile devices, and sensors has generated vast amounts of data, including text, voice recordings, and records of human behavior and environments. These data are the fuel for AI algorithms. Meanwhile, upgraded microprocessors provide the hardware foundation for faster data processing and algorithmic computation. Consequently, AI algorithms have advanced explosively and have excelled in areas such as natural language processing (Devlin et al., 2019; Lan et al., 2020; Vaswani et al., 2017; Wallach, 2006), speech recognition (Cooke et al., 2006; Park et al., 2019; Yu and Deng, 2015), and computer vision (Goodfellow et al., 2020; He et al., 2016; Krizhevsky et al., 2017; Oquab et al., 2015), as well as planning and decision-making systems (Bahrammirzaee, 2010; Brynjolfsson and McElheran, 2016; Brynjolfsson et al., 2011; Jordan and Mitchell, 2015; Kleinberg et al., 2017; Silver et al., 2016; Tantalaki et al., 2019; Tulabandhula and Rudin, 2014).

Meanwhile, as AI products spread from daily life into more sensitive arenas such as military and governance contexts, concern over their ethical implications is growing. AI researchers, governments, and civil society groups have all expressed apprehension about AI's safety (Amodei et al., 2016; Russell, 2019), its potential for discrimination and racial bias (Barocas et al., 2019; Noble, 2018), and its risks to personal privacy (Brundage et al., 2018; Hoff and Bashir, 2015).

To regulate AI and advocate for its ethical development, academic institutions, international governance bodies, and leading tech companies have published dozens of AI ethics frameworks and principles over the past few years (Fjeld et al., 2020). For example, the Institute of Electrical and Electronics Engineers (IEEE) established the Global Initiative on Ethics of Autonomous and Intelligent Systems in 2016. Subsequently, the US Association for Computing Machinery (ACM) unveiled principles emphasizing algorithmic transparency and accountability (Garfinkel et al., 2017). Other initiatives from academic entities include the Asilomar Principles, crafted at the 2017 Asilomar Conference for Beneficial AI (Future of Life Institute, 2017), and the Montreal Declaration for Responsible AI (University of Montreal, 2018). These frameworks all emphasize prioritizing human well-being, fairness, transparency, and accountability, and highlight the need for interdisciplinary collaboration.

Beyond academia, international governance bodies such as the Organisation for Economic Co-operation and Development (OECD), G20, and the European Commission have all published AI ethics guidelines that call for human-centered AI and adherence to fundamental rights and ethical principles (G20 Information Centre, 2019; The European Commission, 2019; Yeung, 2020). In the tech industry, leading companies including IBM, Microsoft, and Google have also set out frameworks to guide their use of AI and have established research units dedicated to AI ethics (Butcher and Beridze, 2019). In addition, a number of review studies have analyzed the array of statements and declarations issued by these various stakeholders (Floridi and Cowls, 2019; Greene et al., 2019; Hagendorff, 2020; Jobin et al., 2019; Lo Piano, 2020).

Research in AI ethics has produced comprehensive AI guidelines, but most of this work is prescriptive: it outlines what an ideal ethical AI system should entail. Although these principles, such as respecting human rights or preserving privacy, aim to protect the public's welfare, few studies examine how the public perceives these ethical considerations. Public input matters in practice because the public are the direct consumers of AI products. Understanding these views is therefore crucial if policymakers and tech companies are to align AI systems with the public's preferences and anticipate societal reactions to AI-related issues (Zhang, 2021). In this study, we aim to bridge this gap by empirically investigating how the Chinese public perceives AI ethics and what it prefers in AI governance. Specifically, we take eight core principles from the abovementioned declarations and guidelines: human dignity, autonomy, fairness, transparency, personal privacy, security, liability, and truthfulness. We examine public opinion on these eight dimensions across different AI applications and investigate whether these ethical concerns vary among survey respondents.

Several existing studies indicate that public attitudes toward AI applications and related policies do vary. Demographically, race, political party affiliation, and age have been identified as significant determinants of people's support for facial recognition in the USA (Smith, 2019). In another nationally representative US survey and two cross-national surveys, higher levels of education and income are consistently associated with greater support for AI development (Johnson and Tyson, 2020; Neudert et al., 2020; Zhang and Dafoe, 2019). Among these, Johnson and Tyson (2020) found that in 15 of the 20 countries surveyed, men and younger adults held more positive views of AI. Geographically, region and culture play important roles in shaping people's moral preferences concerning autonomous vehicles (Awad et al., 2018). Several cross-national surveys have consistently found that East Asians have greater trust in AI than people from other regions (Johnson and Tyson, 2020; Kelley et al., 2021; Neudert et al., 2020). In contrast, people in the USA and the European Union (EU) lean toward stricter AI regulation, indicating a mixed or cautious stance on AI's rapid development (European Commission, Directorate-General for Communications Networks, Content and Technology, 2017; Zhang and Dafoe, 2020).

Although empirical findings have highlighted the diversity in public attitudes toward AI, more comprehensive studies are needed to verify this diversity. This paper focuses on the Chinese context and aims to engage in dialogue with the aforementioned surveys. We seek to ascertain whether public preferences on AI ethics and governance follow the trends noted above or reflect nuances specific to the Chinese setting. Because the field currently lacks theoretical frameworks that can explain the heterogeneity in public attitudes toward AI, our analysis is exploratory and aims to provide insights that may pave the way for subsequent theoretical research. The paper is organized as follows. The next section outlines our analytical framework of AI ethics and defines each principle and its implications. The subsequent section presents an empirical analysis of public perceptions of AI ethics across AI applications. The penultimate section presents empirical results on public perceptions of AI governance, and the final section concludes.

Definition and analytical framework

Although public awareness of AI largely began with the Go-playing program AlphaGo's 2016 victory over top professional Go player Lee Sedol, the concept of AI was proposed far earlier, at the 1956 Dartmouth Conference, where the attending scientists described AI as machines that could imitate human learning and other aspects of human intelligence. While many definitions of AI have emerged over the past few decades, we follow John McCarthy's (2007) definition: “It is the science and engineering of making intelligent machines, especially intelligent computer programs. It is related to the similar task of using computers to understand human intelligence, but AI does not have to confine itself to methods that are biologically observable.”

The development of AI has brought profound ethical challenges. Based on the existing literature, this study focuses on eight frequently discussed ethical values relating to AI: human dignity, autonomy, fairness, transparency, personal privacy, security (also referred to as safety), responsibility (also referred to as liability), and authenticity (also referred to as truthfulness). The following subsections discuss each dimension in greater detail.

Human dignity

Following existing studies, we define human dignity in AI ethics from two perspectives: development and usage. The former mainly refers to ensuring that AI serves humans and conforms to human values and humanity's overall interests. The latter has several meanings: AI should not be used to control human behavior in the excessive pursuit of efficiency and convenience; human status should not be weakened or replaced by AI; AI should not be used to confront, exploit, or harm humans; and people who use AI as a tool should be able to live fulfilling lives both materially and spiritually.

Human autonomy

The core of human autonomy is respect for human beings and their right to make decisions. Human autonomy in the use of AI therefore means that everyone who interacts with AI systems should retain sufficient and effective power to decide which course of action to take (Floridi and Cowls, 2019). AI users should be aware of the risk that AI may manipulate human decisions and emotions, and of the risk of excessive dependence on AI. It is also crucial to consider who should take which measures against such risks. Where AI systems are linked with the human brain and body, particular care should be taken that individual autonomy is not violated (Zeng et al., 2018).

Fairness

Drawing on discussions in the existing literature (Huang and Sun, 2004; Meng, 2012), we divide “fairness” in AI ethics into four aspects: procedural fairness, opportunity fairness, interaction fairness, and outcome fairness. Procedural fairness requires that the process of making AI-related policies or systems, and the procedural safeguards around it, be fair. Opportunity fairness means that all members of society, including the elderly and minorities, should have equal access to AI and related products. Interaction fairness means that, when AI-related decisions are being executed, the way people interact with one another should strengthen their sense of fairness. It includes two related concepts: “interpersonal fairness”, under which decision makers should be in alignment with decision recipients and should consider and respect the recipients' dignity; and “information fairness”, under which decision makers should give decision recipients enough information and explanation, for example why a certain program is executed or why resources are allocated in a specific way. Outcome fairness pursues substantive fairness (Meng, 2012): valuable resources related to AI and its products should be distributed equally among all members of society.

Transparency

Transparency is crucial for anyone concerned with ethics and morality because it involves not only the content of the information we convey to others but also the form and nature of our interactions with them: it is a matter not just of what we say, but of why and even how we say it (Plaisance, 2007). That is, transparency covers both the accessibility (open visibility) and the comprehensibility of information (Mittelstadt et al., 2016). Because algorithms are at the core of all AI, AI decision makers are essentially algorithms in which the intentions of multiple parties are embedded. In this study, we therefore divide the transparency principle in AI ethics into three aspects: information transparency, concept transparency, and program transparency (Chen and Zhang, 2020). Information transparency covers (1) the data and non-data information used by AI; (2) the internal structure of AI; (3) the principles AI uses for automated decision-making; (4) information about interactions between AI and other parties; and (5) the information involved in the process of disclosing AI-related information. Concept transparency means that the values and ethical concepts involved in implementing AI are transparent. Program transparency means that the operating procedures for implementing AI remain transparent.

Personal information protection

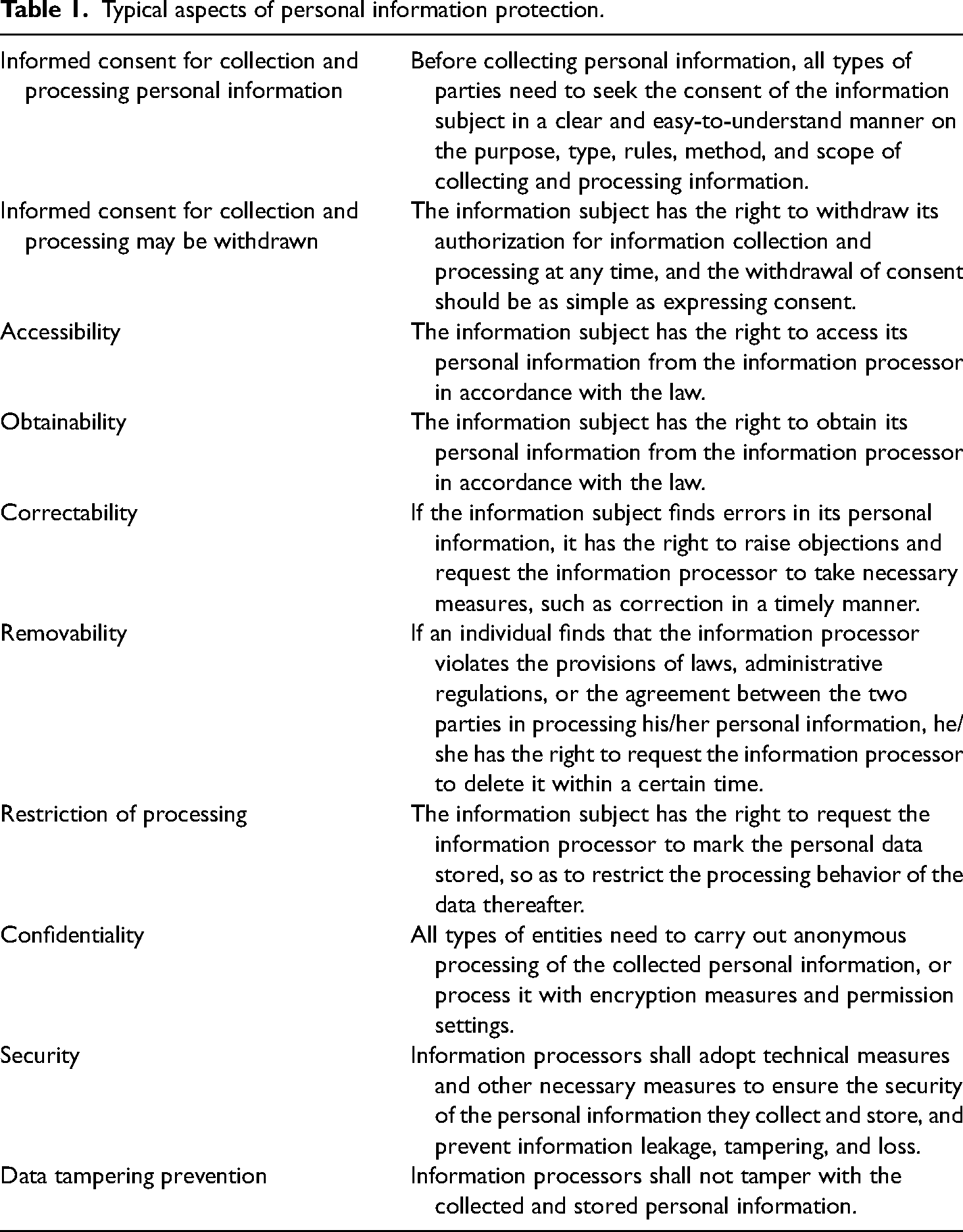

Personal information refers to any information, recorded electronically or by other means, that can identify a specific natural person either alone or in combination with other information, including a natural person's name, date of birth, identity document number, biometric information, address, telephone number, email address, health information, and travel itinerary. Although AI technology has facilitated the digital representation, storage, and use of personal information, it has also made that information harder to protect. Drawing on the existing literature, AI ethics guidelines, and legal provisions, we summarize the various aspects of personal information protection in Table 1.

Typical aspects of personal information protection.

Security

From the perspective of international relations, AI security means that a country has absolute control and ownership of its domestic AI technology and related products. With regard to the technical system itself and external threats, the security principle can be divided into internal security (safety) and external security (security). Internal security means that the relevant parties should test and verify an AI system's operation throughout its entire life cycle so that it operates normally in all respects, robustly and controllably. External security mainly refers to using technical means to strengthen AI systems and keep related information confidential, so that they are not compromised by external attacks (Zeng et al., 2018).

Responsibility

Liability rules are one element of a legal framework for AI (Zech, 2021). However, liability for AI is the subject of lively debate. The main controversy concerns the implication that the creators of AI software or hardware are not liable for any related harm as long as their products were non-defective when made. As in other product liability cases, whether an AI product is defective depends on prevailing industry standards (Long, 2021). Liability creates incentives for risk control, but to do so effectively, liability rules must take into account who is actually able to exercise control.

Authenticity

The value of authenticity is rooted in respect for human dignity and autonomy. Obtaining true information is a basic human need: only with true information can people make choices that autonomously reflect their own values. In society, practicing truthfulness is essential for various actors to fulfill their functions and responsibilities; in media and communications, for example, truthfulness is the primary ethical principle for news professionals. By the same logic, truthfulness in AI ethics is the expectation that all entities adhere to the principle of truthfulness when designing and using AI for production activities. We emphasize two aspects: program truthfulness and content truthfulness. Program truthfulness refers to truthful disclosure of AI program designs and algorithm implementations. Content truthfulness means that content generated by AI should be true.

Empirical evidence on public attitudes toward artificial intelligence ethics

In this section, we discuss AI ethics in terms of the eight dimensions defined in the second section. Using both online survey and social media data, we investigate the public's understanding of AI ethics and their attitudes toward each dimension.

Results of the online survey

Data and descriptive statistics

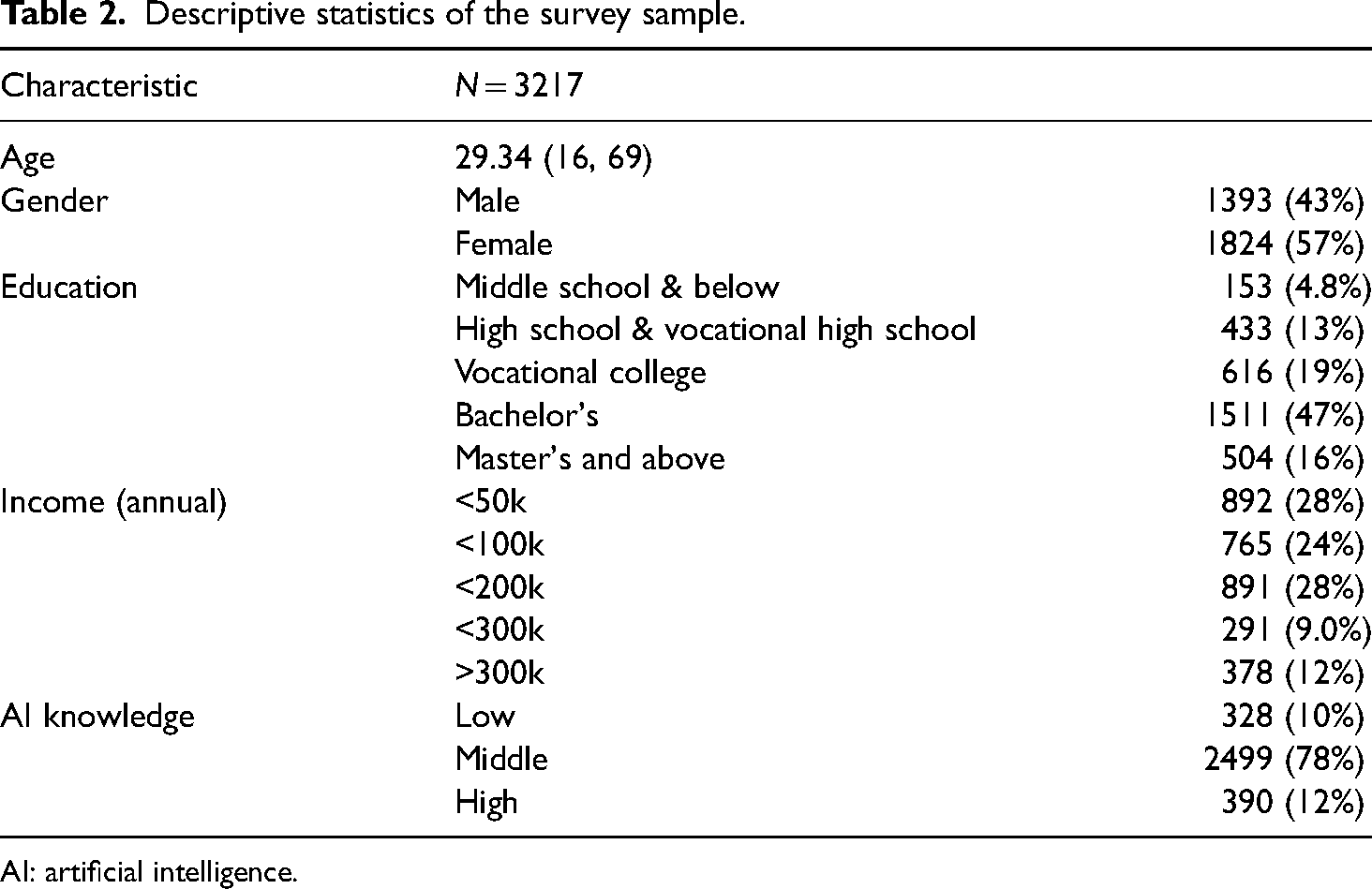

The online survey “Public Perception of AI Applications” was conducted by the Data Governance Research Center of Tsinghua University (THU-CDG) in July 2021 in two waves, which yielded 3822 responses in total. After excluding invalid responses (those completed in less than 10 minutes), we obtained 3217 valid questionnaires. Table 2 lists the characteristics of the sample. Education levels are categorized as low (middle school and below), middle (high school and vocational high school), vocational college, bachelor's degree, and master's degree or above (pro). Knowledge of AI is measured as each respondent's average score across several questions on their familiarity with AI/information technology (IT) skills. Respondents whose score is one standard deviation or more below the mean are categorized as having low-level knowledge, those one standard deviation or more above the mean as having high-level knowledge, and the rest as having middle-level knowledge.
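The two preprocessing steps described above (dropping responses completed in under 10 minutes and binning AI knowledge at one standard deviation from the mean) can be sketched as follows. This is a minimal illustration, not the authors' code: the record layout (`minutes`, `familiarity`), the use of the sample standard deviation, and the inclusive cut-offs are all assumptions.

```python
import statistics


def clean_and_categorize(responses, min_minutes=10.0):
    """Keep responses taking at least `min_minutes` and bin AI knowledge.

    Assumed (hypothetical) record layout:
        {"minutes": completion time, "familiarity": [item scores]}
    Adds a "knowledge" key ("low" / "middle" / "high") to each kept record.
    """
    # Step 1: drop responses completed too quickly to be treated as valid.
    valid = [r for r in responses if r["minutes"] >= min_minutes]

    # Step 2: knowledge score = mean of the respondent's familiarity items.
    scores = [statistics.mean(r["familiarity"]) for r in valid]
    mu = statistics.mean(scores)
    sd = statistics.stdev(scores)  # sample SD; an assumption

    # Step 3: +/- 1 SD cut-offs; inclusive boundaries are an assumption.
    for record, score in zip(valid, scores):
        if score <= mu - sd:
            record["knowledge"] = "low"
        elif score >= mu + sd:
            record["knowledge"] = "high"
        else:
            record["knowledge"] = "middle"
    return valid


# Invented toy data, not survey records.
sample = [
    {"minutes": 5, "familiarity": [4, 4]},  # under 10 minutes: excluded
    {"minutes": 12, "familiarity": [1, 1]},
    {"minutes": 15, "familiarity": [2, 2]},
    {"minutes": 20, "familiarity": [1, 3]},
    {"minutes": 30, "familiarity": [2, 2]},
    {"minutes": 11, "familiarity": [3, 3]},
]
kept = clean_and_categorize(sample)
print([r["knowledge"] for r in kept])  # ['low', 'middle', 'middle', 'middle', 'high']
```

Averaging the familiarity items first means a respondent with mixed answers (here `[1, 3]`) lands in the same bin as one who answered `2` throughout.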

Descriptive statistics of the survey sample.

AI: artificial intelligence.

AI ethics across eight essential dimensions

Based on the analytical framework defined above, we designed eight questions, each corresponding to one of the eight dimensions of AI ethics. For each question, we asked respondents to what extent they were concerned about the following scenarios.

Transparency: “AI algorithms are a ‘black box’”. Security: “AI has security risks”. Fairness: “AI causes discrimination, for example, big-data discriminatory pricing (BBDDP)”. Personal data protection: “AI may invade personal privacy”. Liability: “It is difficult to determine the responsibility for an accident caused by AI, for example, in the case of self-driving cars”. Truthfulness: “AI has the potential to create false information and deceive users”. Human autonomy: “AI may surpass humans and one day humans will become slaves of machines”. Human dignity: “AI is becoming more and more advanced, and normal people will play a more and more minor role in society”.

Respondents rated their level of concern on a Likert scale: (1) not worried at all; (2) not too worried; (3) worried; and (4) very worried.

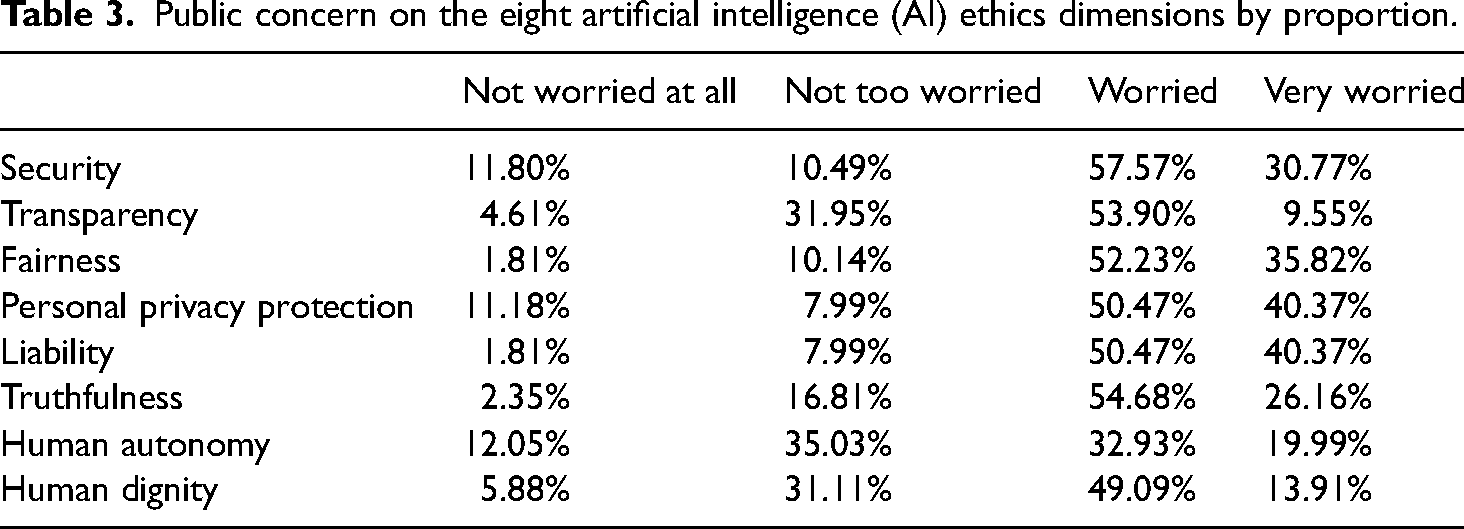

Table 3 shows that people are generally concerned about AI ethics issues. In every scenario, more than 50% of respondents expressed worry (“worried” or “very worried”). Among the eight dimensions, respondents were most worried about security, fairness, personal privacy, liability, and truthfulness; for these items, the proportion choosing “worried” or “very worried” was markedly higher than the proportion choosing “not worried at all” or “not too worried”. The possibility of discriminatory pricing, which would violate the principle of fairness, was the most concerning issue, with 90.84% of respondents choosing “worried” or “very worried”. Security and liability came next, with 88.345% of respondents choosing “worried” or “very worried”.

Public concern on the eight artificial intelligence (AI) ethics dimensions by proportion.

In contrast, respondents were less concerned about transparency, human autonomy, and human dignity, and least concerned about human autonomy. In other words, respondents are currently not especially worried that humans will one day become slaves of machines.

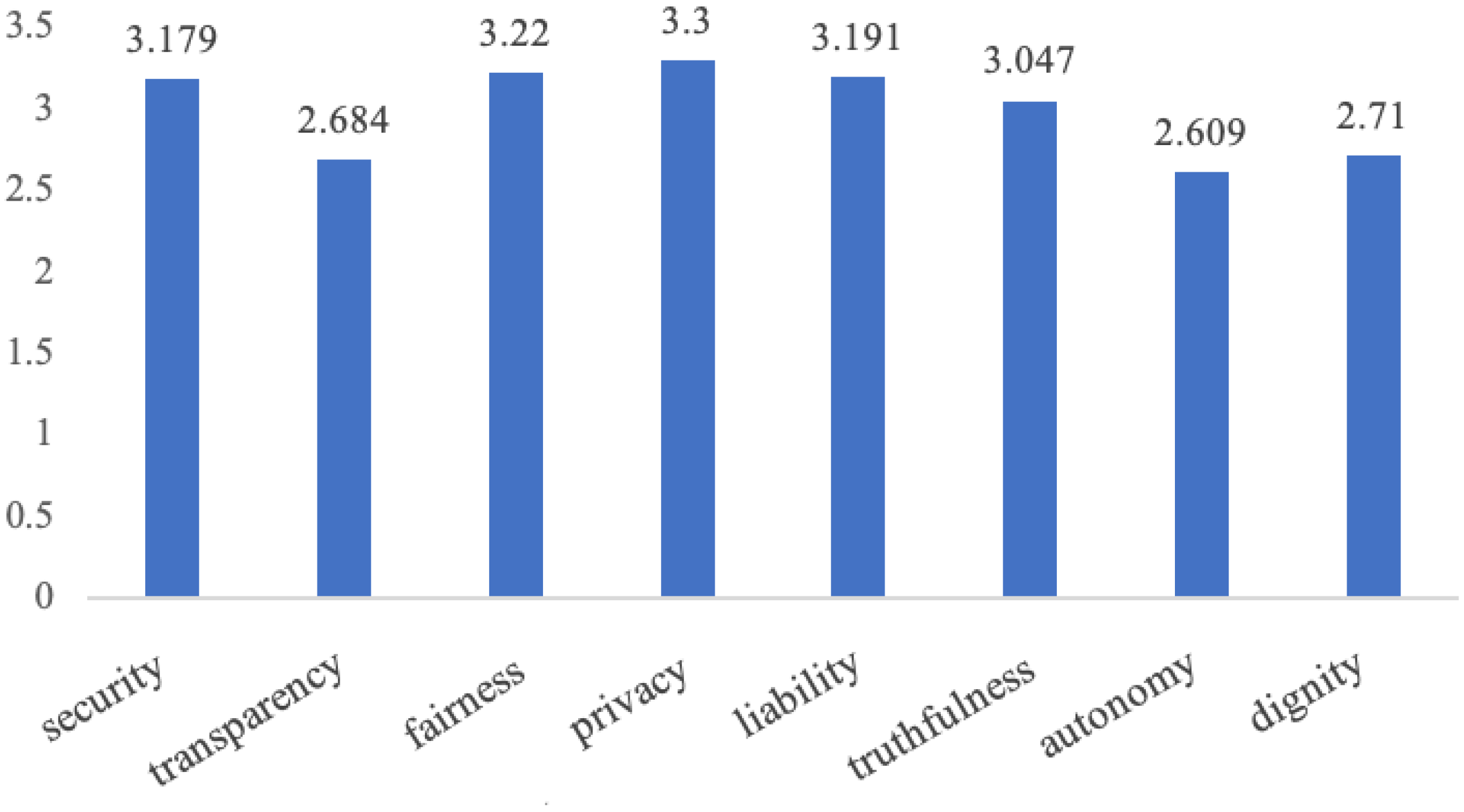

Finally, we calculated the average score for each dimension. Coding “not worried at all” as 1 and “very worried” as 4, we computed the mean level of concern for each ethical issue. Figure 1 shows that the means for safety, fairness, personal data protection, responsibility, and authenticity are higher, while respondents paid slightly less attention to transparency, human autonomy, and human dignity. Overall, respondents expressed a high degree of concern on all ethical dimensions: every dimension's mean exceeds 2.5, the midpoint of the 1–4 scale.
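The mean-score comparison, including the check against the scale midpoint of 2.5, can be sketched as below. This assumes each dimension's ratings are stored as a list of 1–4 codes; the toy values are invented.

```python
import statistics

SCALE_MIN, SCALE_MAX = 1, 4
MIDPOINT = (SCALE_MIN + SCALE_MAX) / 2  # 2.5 for a 1-4 scale


def mean_concern(ratings):
    """Mean concern on the 1-4 scale (1 = not worried at all, 4 = very worried)."""
    return statistics.fmean(ratings)


def dimensions_above_midpoint(ratings_by_dimension):
    """Names of dimensions whose mean concern strictly exceeds the midpoint."""
    return [
        dim
        for dim, ratings in ratings_by_dimension.items()
        if mean_concern(ratings) > MIDPOINT
    ]


# Invented toy ratings.
toy = {"privacy": [4, 3, 3, 2], "dignity": [2, 3, 2, 3]}
print({d: mean_concern(r) for d, r in toy.items()})  # {'privacy': 3.0, 'dignity': 2.5}
print(dimensions_above_midpoint(toy))  # ['privacy']
```

Using the midpoint as the threshold makes “exceeding 2.5” mean that the average respondent leans toward the worried half of the scale.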

Average scores for the level of concern on eight dimensions of artificial intelligence ethics.

The above results show that people are broadly concerned about AI ethics, especially matters relating to safety, discrimination, privacy, and liability. These issues should be the focus of current AI governance.

Ethics in common AI applications

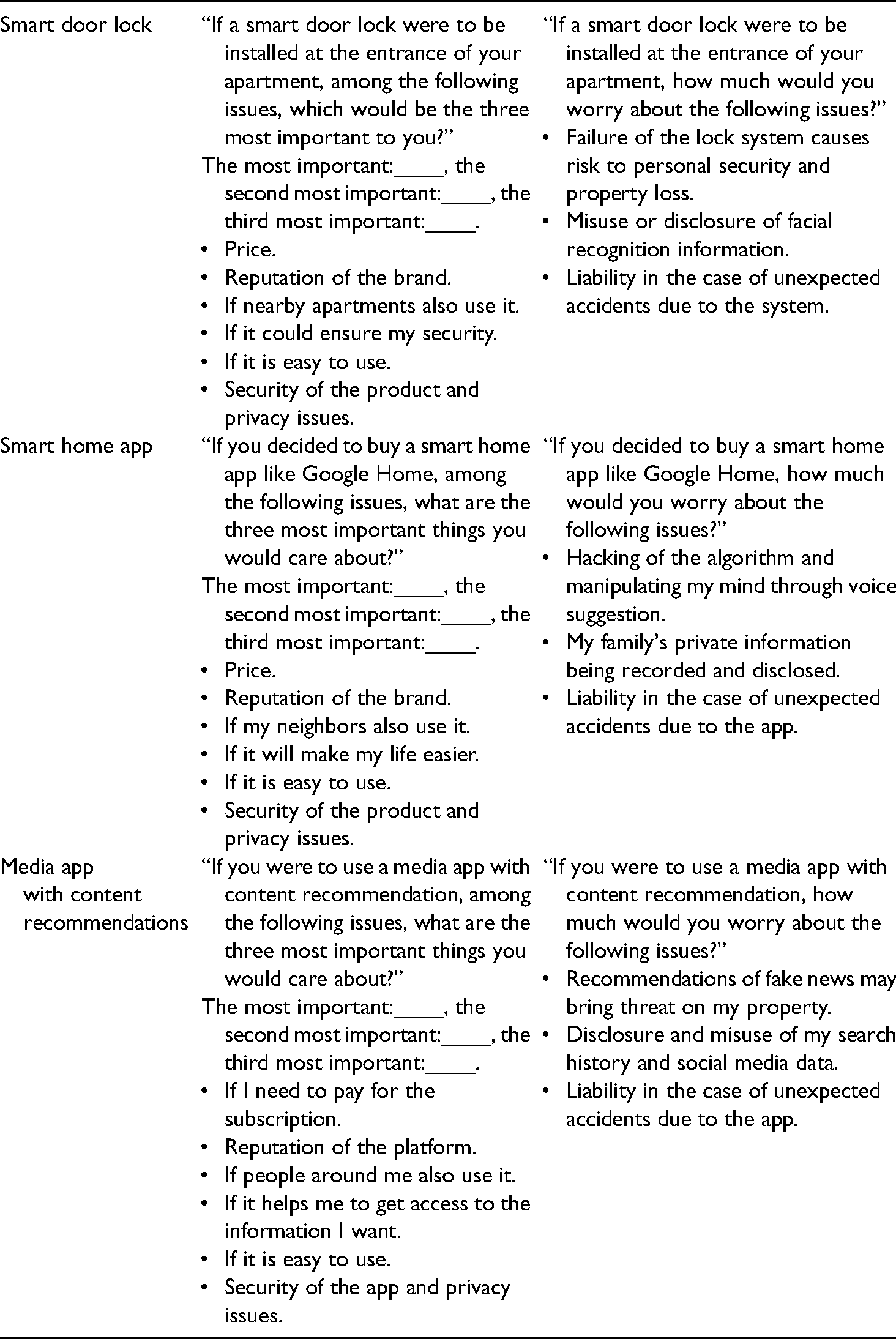

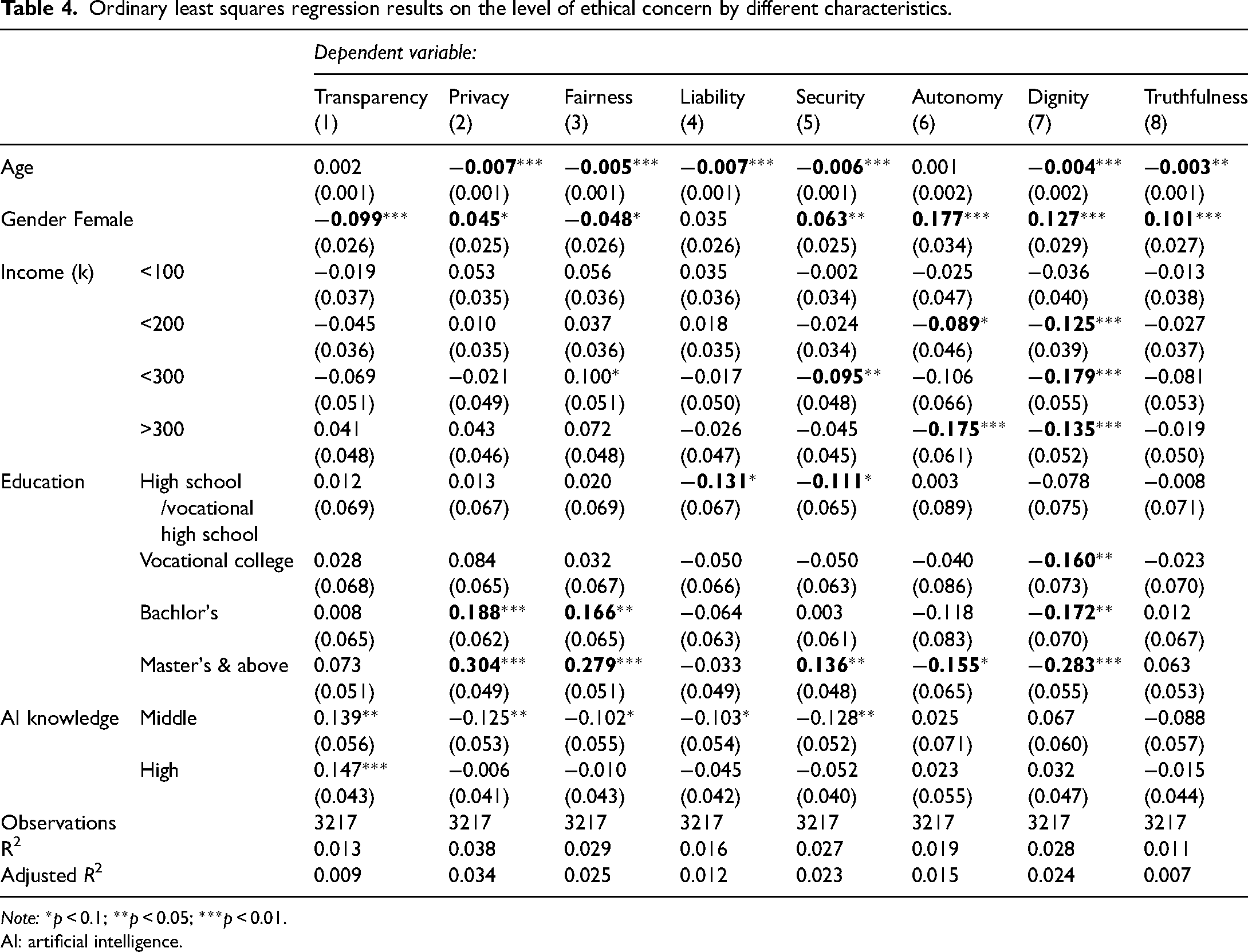

To compare people's ethical concerns about AI with other common considerations, we selected several AI products: smart door locks, smart home apps, and media apps with personalized content recommendations. Each respondent was randomly assigned one of these products, and for each product we placed the ethical issues side by side with other important considerations: price, brand, trends, usefulness, and usability. For each product, we designed a corresponding scenario to measure these considerations and sought to make the questions comparable across the three scenarios. Although our analytical framework includes eight ethical dimensions, we selected three of the most prominent concerns identified in the previous analysis (safety, personal data privacy, and liability) and framed a specific concern for each in every scenario. For example, for smart door locks, the most prominent safety issue is “the access control system fails and causes risk to personal safety and property loss”. The questions for each of the three scenarios are shown below.

The results show that, when installing a smart door lock, people care most about usefulness (whether it can ensure my security), usability (whether it is easy to use), and ethical issues (product security and privacy). In contrast, price, brand, and trends are the least important considerations. Most people rank usefulness as the most important issue, indicating that the functional attributes of AI products are valued most. Ethical issues rank second, indicating that people pay close attention to security and privacy in AI products; indeed, most people ranked ethical issues as their second or third most important consideration.

When choosing a smart home app such as Google Home, most respondents ranked ethical issues (product security and privacy) as the most important consideration. Respondents also showed relatively high concern for the product's usefulness (whether it will make my life easier) and price. Overall, ethical issues and usefulness were the top two considerations in the smart home app scenario.

For media apps with content recommendations, respondents showed the highest level of concern for ethical issues (app security and privacy). Figure 2 shows that the proportion of people choosing ethical issues as their top concern was substantially larger than for any other issue. People also show some concern for the app's usefulness (whether it helps me access information), and price and usability are also relatively important.

Score distribution of three artificial intelligence products.

We calculated a weighted average score for each consideration in the three scenarios, assigning a weight of 3 to a consideration ranked “most important”, 2 to “second most important”, and 1 to “third most important”. As Figure 2 shows, usefulness and ethical issues are the most important factors shaping respondents' perceptions of smart products in all scenarios. For the smart door lock, usefulness had the highest average score (2.28), followed by ethical issues (1.86). For the smart home and AI-powered media apps, ethical issues scored highest among all factors. In contrast, brand, trends, and ease of use were never the top-ranked factors in any scenario.
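The 3/2/1 weighting can be sketched as follows. The text does not say how unranked considerations are treated; this sketch assumes they contribute 0 and that the average is taken over all respondents in a scenario, which bounds every score between 0 and 3. Factor names and rankings are invented.

```python
# Rank position -> weight: most important = 3, second = 2, third = 1.
RANK_WEIGHTS = (3, 2, 1)


def weighted_scores(rankings, factors):
    """Average weighted score per factor over all respondents in a scenario.

    rankings: one list per respondent, ordered [first, second, third].
    Factors a respondent did not rank contribute 0 (an assumption).
    """
    if not rankings:
        raise ValueError("rankings must be non-empty")
    totals = {factor: 0 for factor in factors}
    for ranking in rankings:
        for weight, factor in zip(RANK_WEIGHTS, ranking):
            totals[factor] += weight
    return {factor: totals[factor] / len(rankings) for factor in factors}


FACTORS = ["price", "brand", "trends", "usefulness", "usability", "ethics"]
toy_rankings = [
    ["usefulness", "ethics", "usability"],
    ["ethics", "usefulness", "price"],
]
print(weighted_scores(toy_rankings, FACTORS))
# usefulness and ethics 2.5; usability and price 0.5; brand and trends 0.0
```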

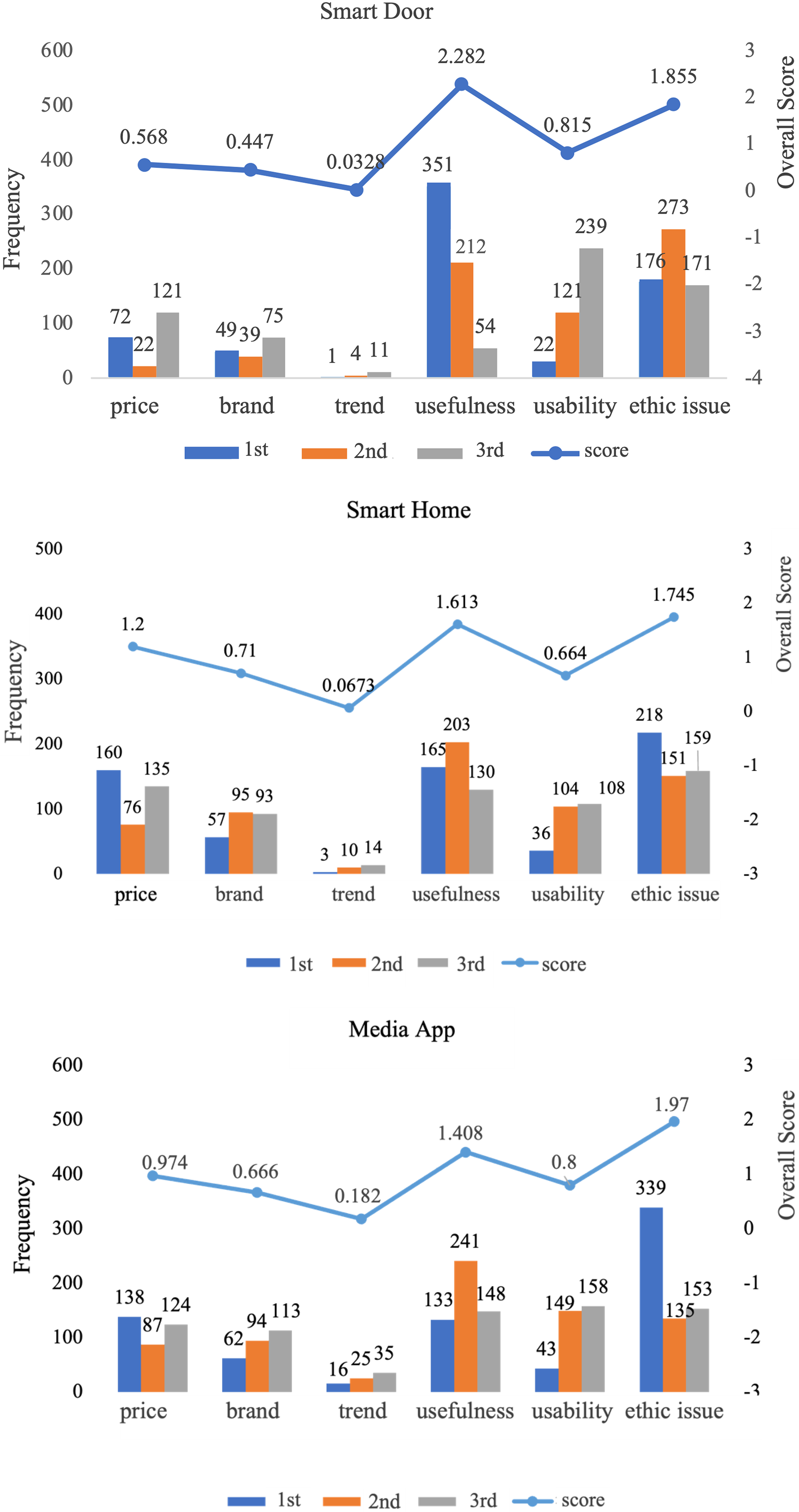

In addition, in each of the three smart product scenarios, we asked respondents to rate their concern about three specific ethical issues (safety, personal data privacy, and liability) on a 0–10 scale, where 0 means “no concern at all” and 10 means “very concerned”. In all three scenarios, responses skewed toward 10 (“very concerned”). As Figure 3(a) shows, security was a greater concern in the smart door lock scenario than in the media app scenario, and respondents were relatively less concerned about security in smart home products. Respondents showed concern about personal data protection in all three scenarios; for media apps in particular, they were very worried that automatically recommended fake news could threaten their property. Liability was most concerning for media apps and smart home products, indicating that respondents are worried about who will be held responsible for accidents caused by AI products.

Distribution of level of ethical concerns for three artificial intelligence products.

In summary, the respondents were broadly concerned about AI ethics. Ethical issues were important factors affecting respondents’ attitudes to using various AI products. In particular, safety, personal data protection, and liability were generally areas of concern for the respondents.

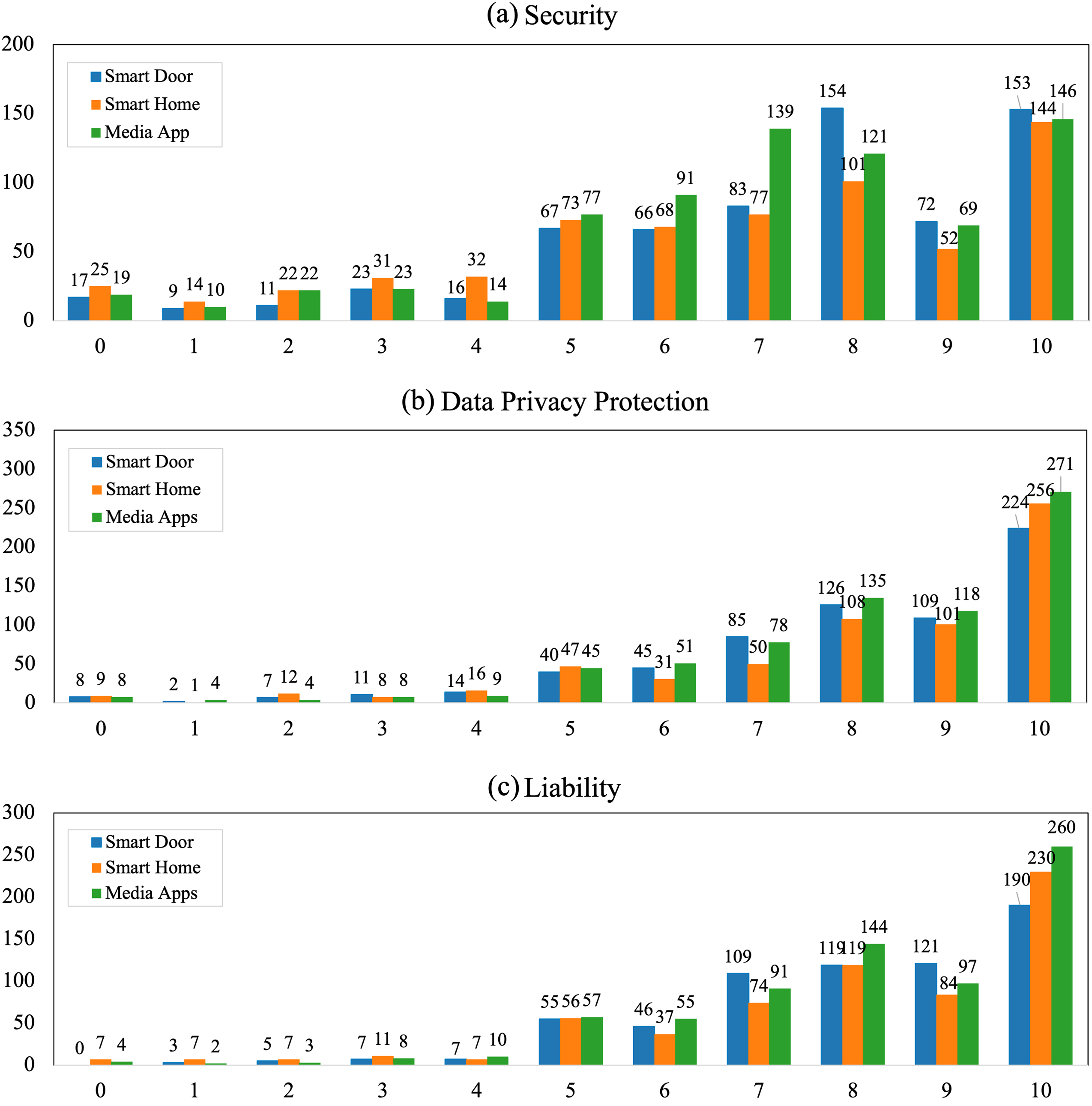

Group heterogeneity in AI ethics concerns

Existing studies have shown that social subgroups may hold different opinions on AI (Miller and Keiser, 2021). We therefore examine heterogeneity in the level of AI ethics concern by respondents' gender, age, income, education level, and knowledge of AI, running an ordinary least squares (OLS) regression for each of the eight ethical dimensions. Table 4 shows that, except for transparency and autonomy, younger people are generally significantly less concerned about ethical issues around AI, which is consistent with the results of existing surveys. Women are less concerned about transparency and fairness but more concerned about all the other ethical issues. Higher-income people are less worried about autonomy and human dignity, and income shows no significant association with concern about truthfulness. As education level increases, people worry more about privacy and fairness but less about dignity. Greater knowledge of AI, by contrast, is associated with greater concern about transparency.
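Each of the eight models regresses a 1–4 concern score on respondent characteristics. The sketch below fits one such model with NumPy's least-squares solver and reports classical (homoskedastic) standard errors. The covariate names (`female`, `age`), the synthetic data, and the variance estimator are assumptions for illustration; the paper's exact specification is not stated here.

```python
import numpy as np


def ols(y, X):
    """OLS fit of y on X with an intercept.

    Returns (coefficients, standard_errors); index 0 is the intercept.
    Standard errors are classical (homoskedastic), an assumption.
    """
    X = np.asarray(X, dtype=float)
    y = np.asarray(y, dtype=float)
    design = np.column_stack([np.ones(len(y)), X])
    coef, *_ = np.linalg.lstsq(design, y, rcond=None)
    residuals = y - design @ coef
    n, k = design.shape
    sigma2 = residuals @ residuals / (n - k)  # residual variance
    cov = sigma2 * np.linalg.inv(design.T @ design)
    return coef, np.sqrt(np.diag(cov))


# Synthetic example: hypothetical covariates, not survey data.
rng = np.random.default_rng(0)
n = 200
female = rng.integers(0, 2, size=n)
age = rng.integers(18, 70, size=n)
concern = 2.0 + 0.3 * female + 0.01 * age + rng.normal(0, 0.5, size=n)
coef, se = ols(concern, np.column_stack([female, age]))
print(np.round(coef, 2), np.round(coef / se, 1))  # estimates and t-statistics
```

In practice one would fit this once per ethical dimension, swapping in that dimension's concern score as `y` while keeping the same covariate matrix.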

Ordinary least squares regression results on the level of ethical concern by different characteristics.

Note: *p < 0.1; **p < 0.05; ***p < 0.01.

AI: artificial intelligence.

In conclusion, people's ethical concerns vary considerably with demographic characteristics. More educated respondents tend to worry more about concrete issues such as privacy and fairness, and those with more knowledge of AI worry more about transparency. We have also identified specific, granular concerns people have about AI, supplementing the existing literature on attitudes toward AI. In other words, it is not that specific groups (men, higher-income people, more educated people) are universally optimistic about AI; rather, their concerns diverge depending on the specific issues they prioritize.

Results from social media data

To investigate how the public views AI ethics, this study also analyzes posts from Sina Weibo. Founded in 2009, Sina Weibo had 530 million monthly active users and 230 million daily active users as of March 2021, making it one of China's largest social media platforms. Analyzing Weibo posts therefore gives a more comprehensive picture of public attitudes toward AI. Specifically, THU-CDG cooperated with the Sina Research Center of Big Data and, using keyword extraction, filtered 44.96 million AI-related posts published between 1 May 2020 and 31 May 2021. After removing advertisements and other irrelevant data, we obtained 40.52 million Weibo posts, on which this section is based. We first analyze trends over time and across user types, and then analyze the content of the posts along the eight dimensions of AI ethics discussed in the previous sections.
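The keyword-based filtering step can be sketched as a two-stage substring filter: keep posts that mention an AI keyword, then drop posts containing advertising markers. The keyword lists below are hypothetical placeholders (the study's actual list is not reproduced here), and the real advertisement removal was likely more involved than simple marker matching.

```python
# Hypothetical keyword lists, for illustration only.
AI_KEYWORDS = ("人工智能", "机器学习", "算法")  # "artificial intelligence", "machine learning", "algorithm"
AD_MARKERS = ("广告", "优惠券")  # "advertisement", "coupon"


def is_ai_related(text):
    """True if any AI keyword occurs as a substring of the post."""
    return any(keyword in text for keyword in AI_KEYWORDS)


def looks_like_ad(text):
    """True if any advertising marker occurs as a substring of the post."""
    return any(marker in text for marker in AD_MARKERS)


def filter_posts(posts):
    """Keep AI-related posts that do not look like advertisements."""
    return [p for p in posts if is_ai_related(p) and not looks_like_ad(p)]


posts = [
    "人工智能正在改变生活",  # AI-related: kept
    "今天天气很好",  # unrelated: dropped
    "算法推荐 优惠券 限时领取",  # AI keyword but ad marker: dropped
]
print(filter_posts(posts))  # ['人工智能正在改变生活']
```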

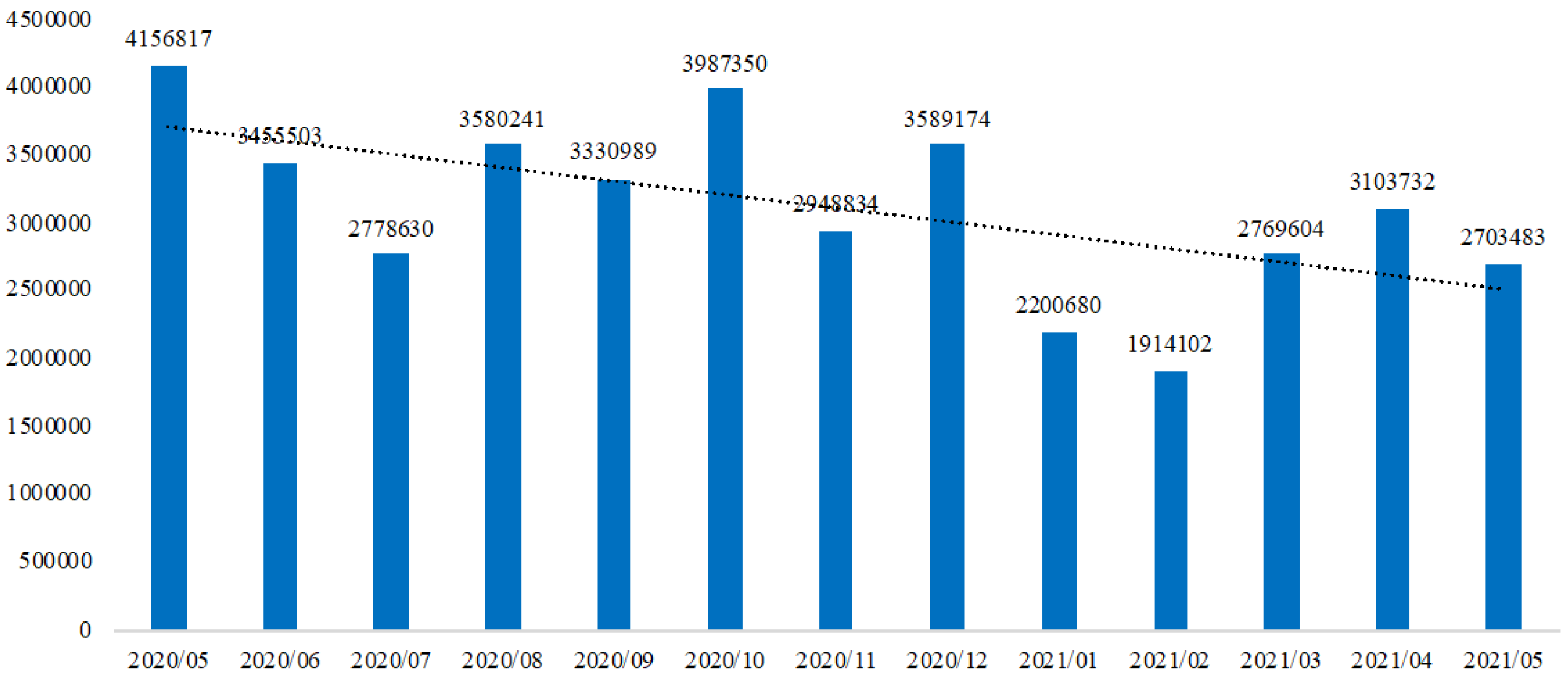

Overall, the number of AI-related posts fluctuated over the 13 months covered by our dataset, and the intensity of discussion declined over that period (see Figure 4). From May 2020 to May 2021, Weibo had an average of 3.116 million AI-related posts per month. The months with the most posts were May 2020 and October 2020, with 4.147 million and 3.987 million posts, respectively; those with the fewest were February 2021 and January 2021, with 1.914 million and 2.201 million posts, respectively. Public attention to AI in recent years has concentrated mainly on new AI-related laws and regulations and on ethical problems caused by AI, which may explain the large month-to-month fluctuation in the number of related posts.

Number of artificial intelligence-related posts on Weibo over time.

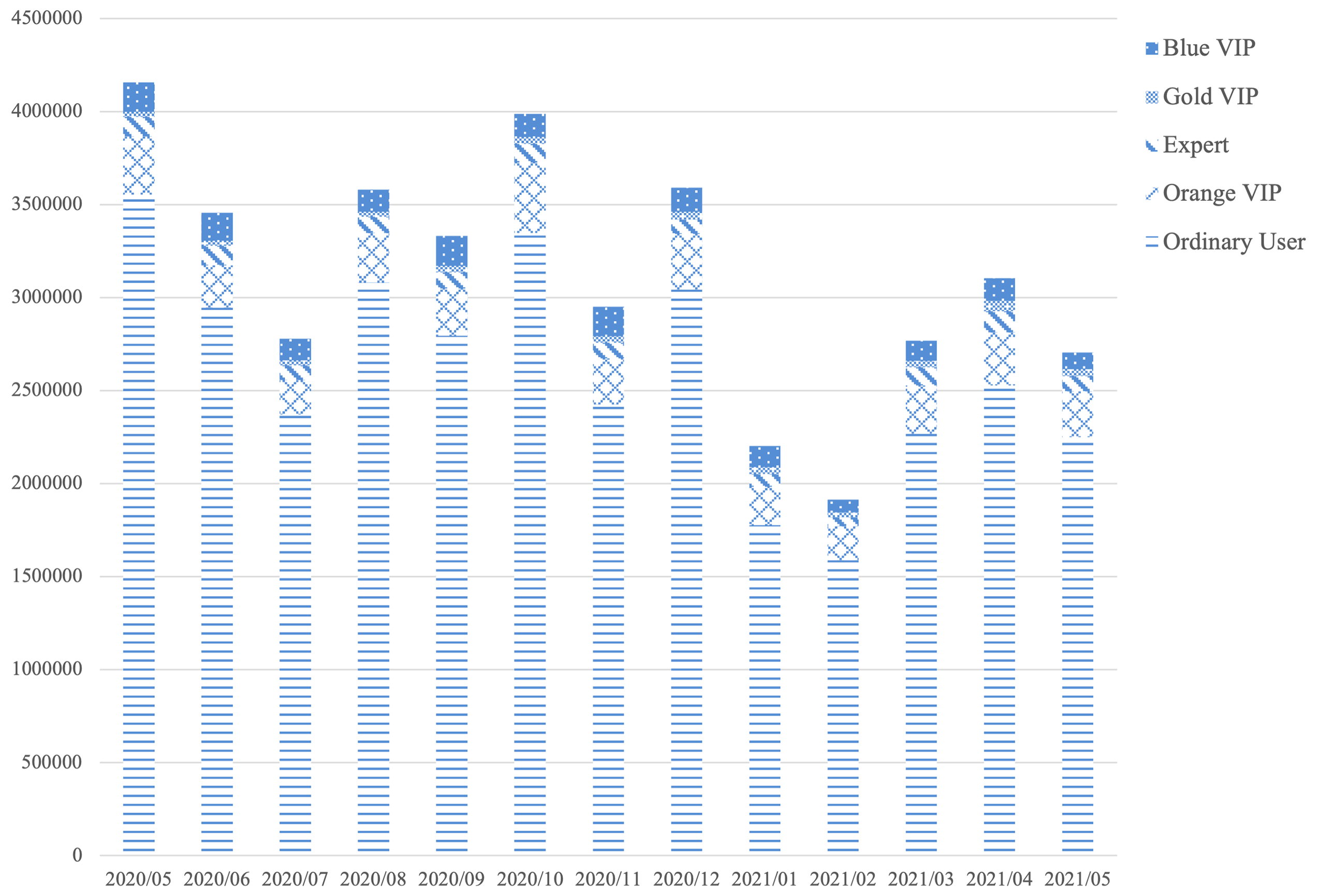

Weibo has several classes of users: ordinary users, orange VIP users, gold VIP users, expert users, and blue VIP users. Ordinary users have not been certified by Weibo. Orange VIP certification requires more than 50 followers and at least two mutual followers who are themselves orange VIP users. Expert certification required users to remain active and was closed in May 2017. Gold VIP certification requires more than 10 million views and no fewer than 10,000 followers within a month. Blue VIP certification is open only to enterprises, governments, and news media. Over the 13 months covered by our dataset, ordinary users accounted for 83.7% of AI-related posts (33.964 million posts); orange VIP users for 8% (3.35 million); blue VIP users for 4% (1.62 million); and expert users and gold VIP users for 2.8% (1.13 million) and 1.1% (440,000), respectively (see Figure 5).

Artificial intelligence-related posts by different user types.

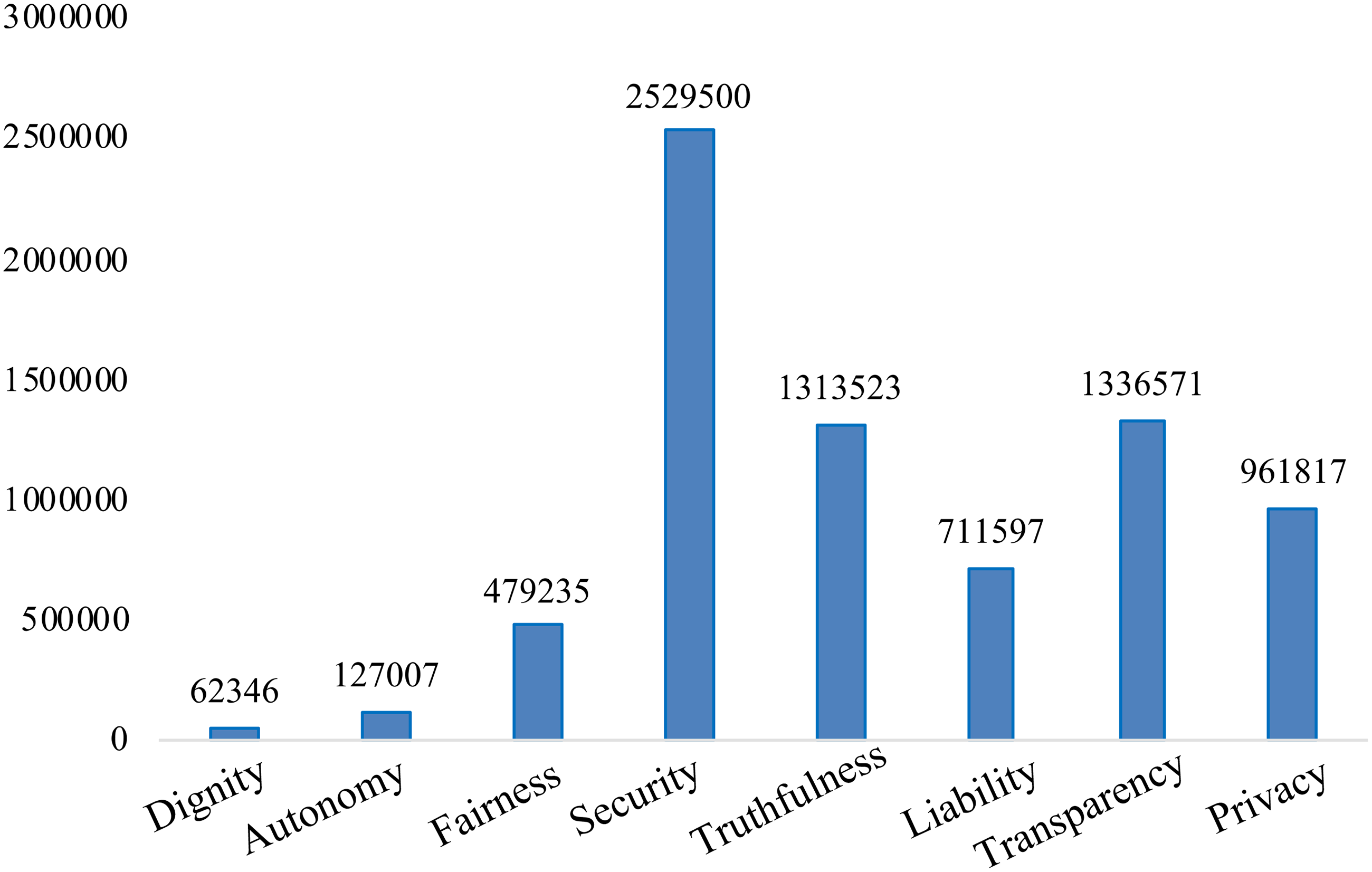

We also labeled posts by keyword to analyze the eight dimensions of AI ethics. A single post may touch on several dimensions at once, or on none of them. Figure 6 shows how often each dimension was discussed; in total, 6.56 million posts were labeled. Among the eight dimensions, the public is most concerned about the safety of AI, with 2.53 million posts on this topic, or 6.24% of all AI-related posts. The public's other major concerns are transparency, truthfulness, and privacy protection, with 1.337 million (3.30%), 1.312 million (3.24%), and 962,000 (2.37%) posts, respectively. The public is less concerned about the liability, fairness, human autonomy, and human dignity dimensions of AI, with 710,000 (1.76%), 480,000 (1.18%), 127,000 (0.31%), and 62,000 (0.15%) posts, respectively.
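The keyword labeling described above is a multi-label tagging step: each post is checked against one keyword list per dimension and may receive several labels or none. The Python sketch below illustrates that logic under stated assumptions. The keyword lists, the four-dimension subset, and the names `label_post` and `count_dimensions` are hypothetical; the study's actual dictionaries are not reported here, and real Weibo posts would call for Chinese keywords (and possibly word segmentation) rather than the English substrings used for readability.

```python
from collections import Counter

# HYPOTHETICAL keyword lists for four of the eight dimensions, for illustration only.
# The study's actual (Chinese-language) keyword dictionaries are not reproduced here.
DIMENSION_KEYWORDS = {
    "safety": ["safety", "accident", "hack"],
    "transparency": ["black box", "explainable", "transparent"],
    "privacy": ["privacy", "personal data", "face data"],
    "fairness": ["bias", "discrimination", "fair"],
}


def label_post(text):
    """Return the set of dimensions whose keywords occur in the post (possibly empty).

    Uses plain case-insensitive substring matching, so a post can match
    several dimensions at once or none at all.
    """
    lowered = text.lower()
    return {
        dim
        for dim, words in DIMENSION_KEYWORDS.items()
        if any(word in lowered for word in words)
    }


def count_dimensions(posts):
    """Count posts per dimension and the number of posts with at least one label."""
    per_dimension = Counter()
    labeled_posts = 0
    for post in posts:
        dims = label_post(post)
        per_dimension.update(dims)  # one count per dimension the post touches
        if dims:
            labeled_posts += 1
    return per_dimension, labeled_posts


if __name__ == "__main__":
    sample = [
        "Face data leaks raise privacy and safety worries",
        "Is the recommendation algorithm a black box?",
        "New AI chip released today",
    ]
    counts, labeled = count_dimensions(sample)
    print(dict(counts), labeled)
```

Because one post can carry several labels, per-dimension counts can add up to more than the number of labeled posts. This matches the figures above: the eight dimension counts total about 7.52 million, while 6.56 million posts were labeled. Plain substring matching can also over-match (for example, "fair" inside "affair"), which a production dictionary would need to guard against.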

Number of Weibo posts on each ethics issue.

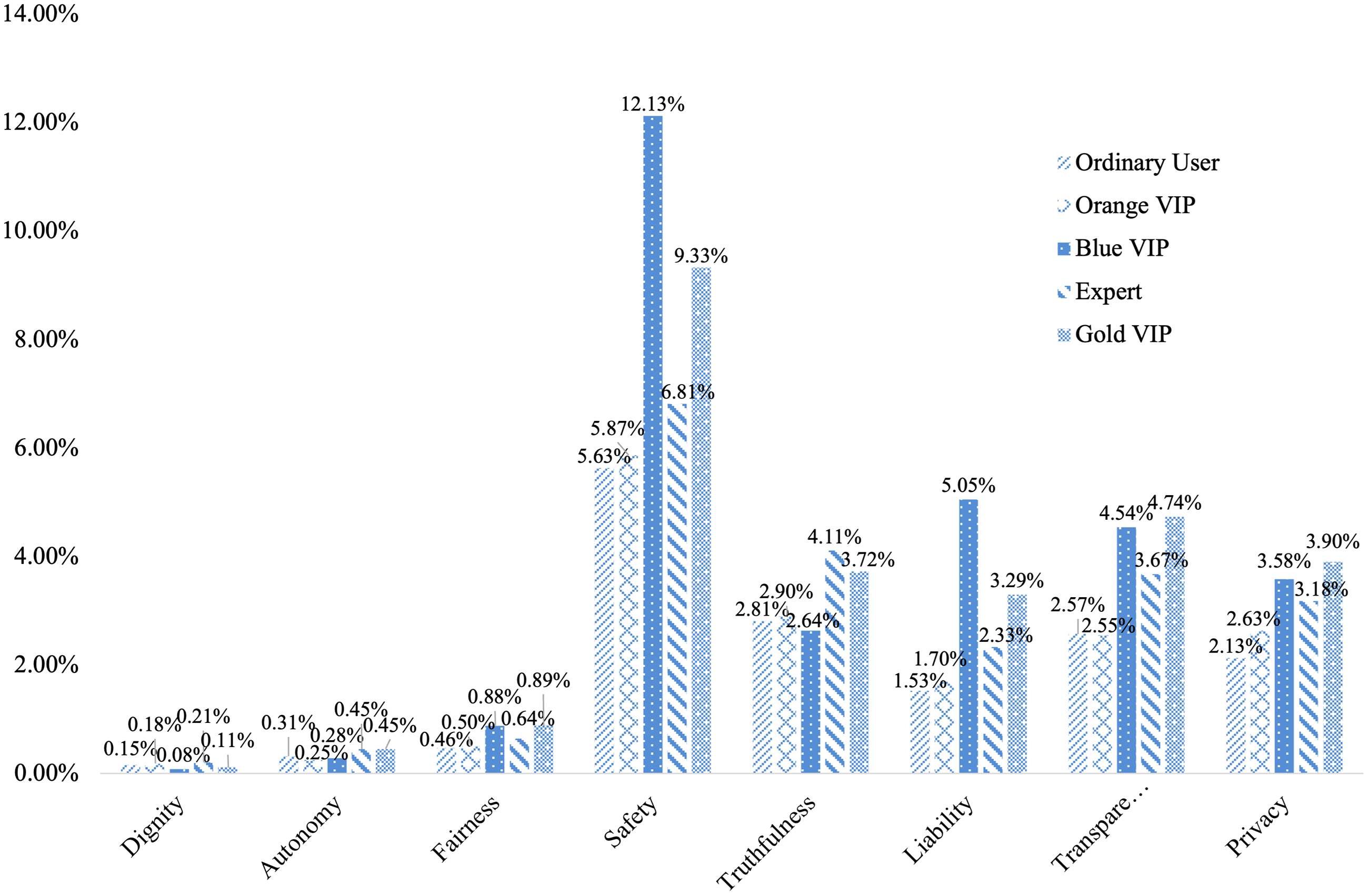

Different types of users also show heterogeneous attention to AI and its related ethical issues, as Figure 7 shows. Overall, blue VIP users show the highest attention to AI, followed by gold VIP users, expert users, and orange VIP users, with ordinary users showing the least. For the safety and liability dimensions, blue VIP users paid the most attention, followed by gold VIP, expert, and orange VIP users. Compared with these groups, ordinary users paid little attention to AI safety, with only 5.63% of their AI-related posts addressing this topic. For transparency and privacy, gold VIP users showed the most attention, with 4.74% and 3.90% of their AI-related posts, respectively, involving these two aspects. For truthfulness, expert users (3.72% of their AI-related posts) and gold VIP users (3.25%) showed the most attention, compared with only 2.64% for blue VIP users. Overall, the public paid the least attention to the fairness, human autonomy, and human dignity dimensions. These patterns suggest that blue VIP users, such as government bodies and enterprises, are more concerned with the development of AI, possibly because they play a leading role in the sector, whereas individual VIP users are more interested in AI ethics issues.

Attention to artificial intelligence ethics topics by user type.

Empirical evidence on public opinions on artificial intelligence governance

Approaches for AI governance

Using the same online survey data described in the previous section, this section empirically examines public preferences for AI governance.

To investigate public attitudes toward various methods for AI governance and regulation, we asked the respondents the following question: “For various existing problems with AI applications, which governance methods do you think are more effective? (You can choose up to 5 options)”. The list of methods is as follows: (1) strengthening regulation of the AI industry; (2) promoting legislation in the field of AI; (3) developing technical ethics regulations for AI scientists; (4) urging the association of AI industries to strengthen self-discipline; (5) cultivating the corporate social responsibility of AI enterprises; (6) enhancing the public's AI literacy; (7) providing the public with channels for supervising AI; (8) encouraging international communication and cooperation in the governance of AI.

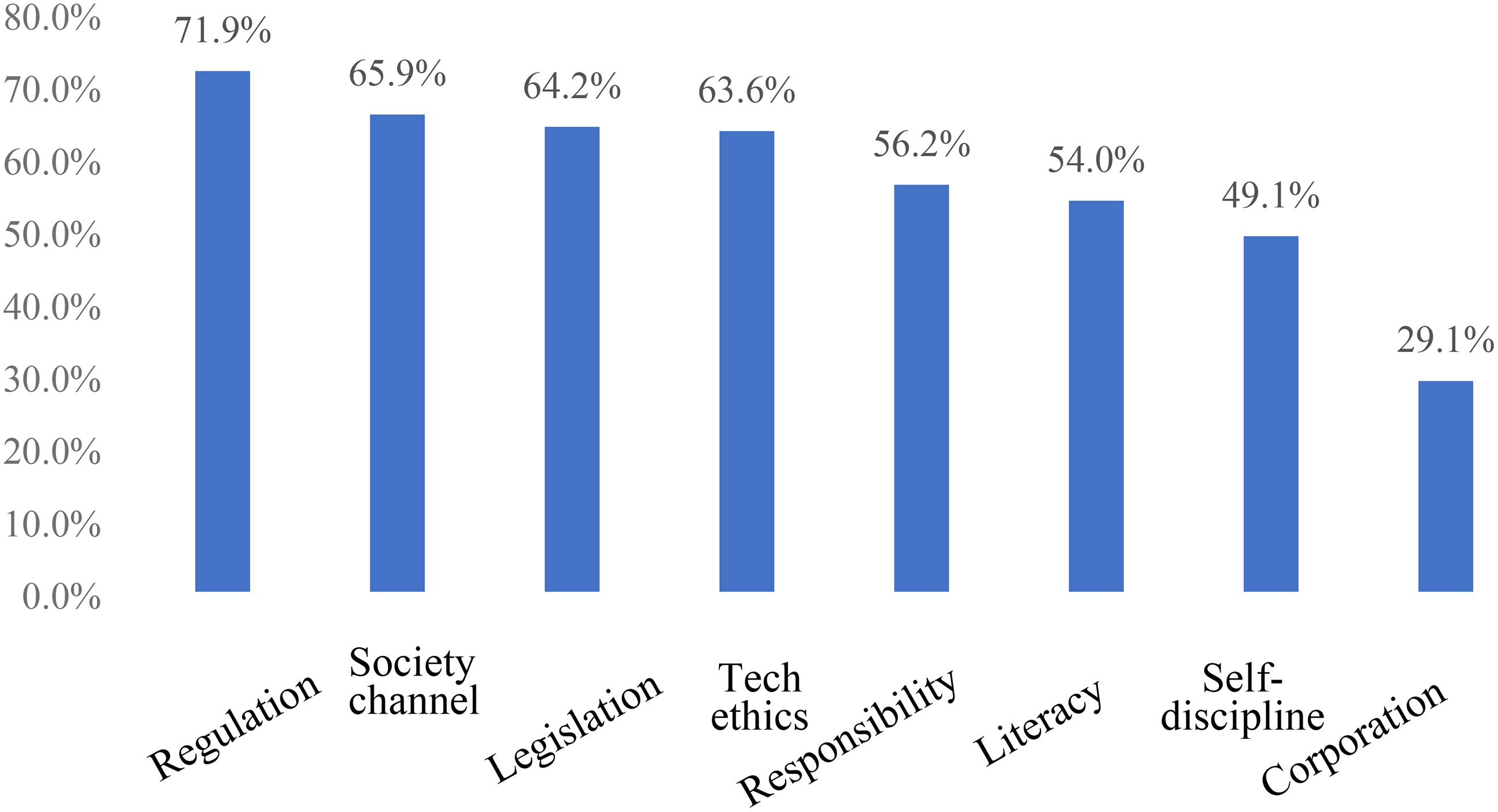

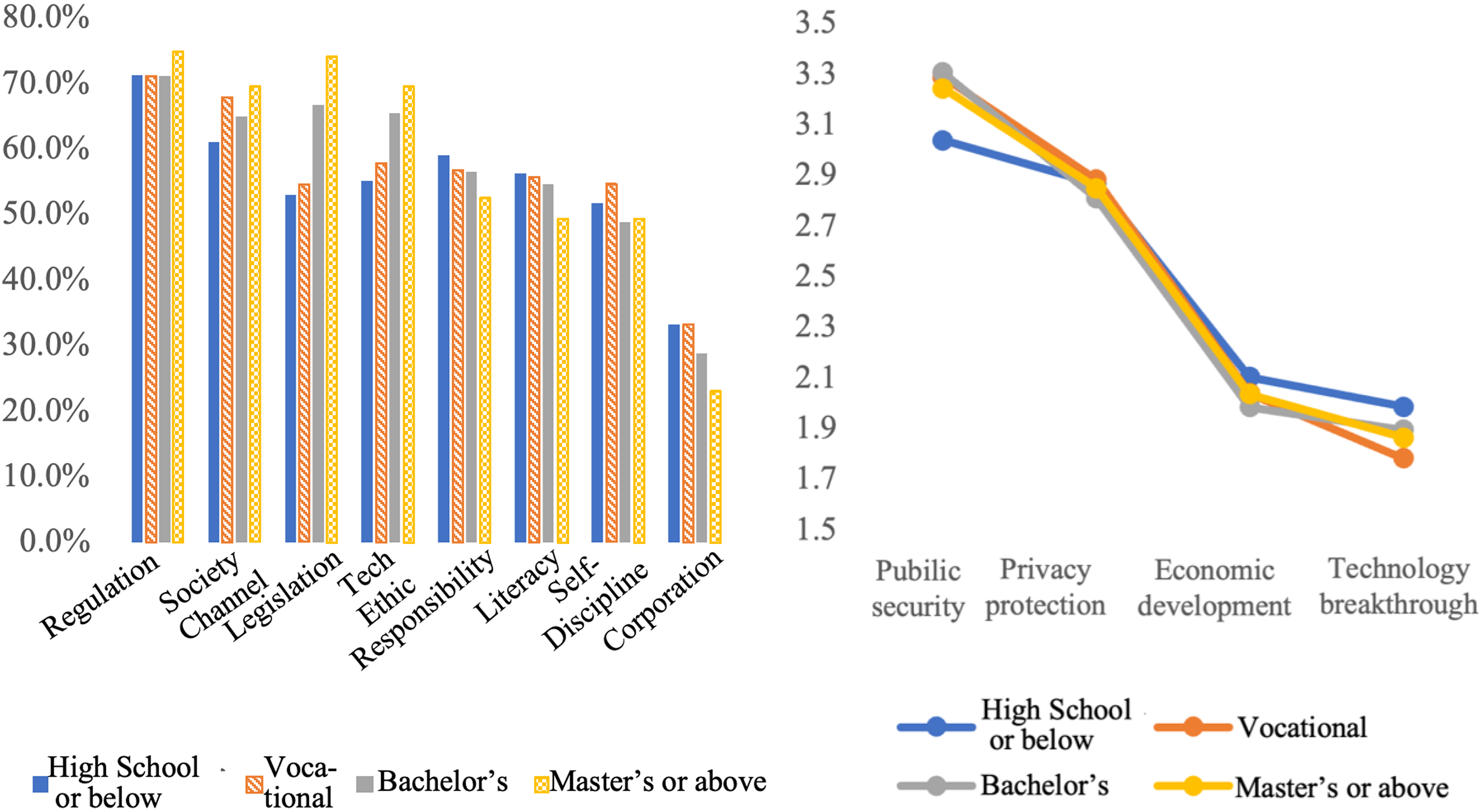

The results show that the five methods the public prefers most are, in declining order of preference, strengthening industry regulation, providing social supervision channels, promoting legislation, developing technical ethics regulations, and cultivating corporate social responsibility. As Figure 8 shows, strengthening regulation of the AI industry received the strongest endorsement, chosen by 71.9% of respondents. Providing social supervision channels and promoting legislation follow, chosen by 65.9% and 64.2% of respondents, respectively. Establishing technical ethics regulations and cultivating corporate social responsibility rank fourth and fifth, chosen by 63.6% and 56.2% of respondents, respectively. Enhancing public AI literacy, urging industry self-discipline, and encouraging international communication and cooperation were chosen by 54.0%, 49.1%, and 29.1% of respondents, respectively.
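Because respondents could choose up to five methods, each percentage is the share of respondents selecting that option, so the eight shares need not sum to 100%. Assuming all shares are computed over the same set of respondents, their sum divided by 100 gives the average number of methods selected per respondent. A minimal Python check using only the figures reported above (the variable and function names are illustrative):

```python
# Shares (%) of respondents choosing each governance method, as reported in the text.
REPORTED_SHARES = {
    "strengthening industry regulation": 71.9,
    "providing social supervision channels": 65.9,
    "promoting legislation": 64.2,
    "developing technical ethics regulations": 63.6,
    "cultivating corporate social responsibility": 56.2,
    "enhancing public AI literacy": 54.0,
    "urging industry self-discipline": 49.1,
    "encouraging international cooperation": 29.1,
}

MAX_CHOICES = 5  # the survey allowed up to five selections


def mean_selections(shares_percent):
    """Average number of options chosen per respondent implied by per-option shares.

    Each respondent adds one selection for every option they pick, so the sum of
    the per-option shares (as fractions) equals the mean number of selections,
    assuming all shares are computed over the same respondent base.
    """
    return sum(shares_percent.values()) / 100.0


if __name__ == "__main__":
    mean = mean_selections(REPORTED_SHARES)
    print(f"implied mean selections per respondent: {mean:.2f}")
    assert mean <= MAX_CHOICES, "shares imply more selections than the cap allows"
```

The reported shares sum to 454.0%, implying that respondents chose about 4.54 methods on average, within the five-option cap.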

Public preferences for artificial intelligence governance approaches.

The ranking results suggest that the public expects a collaborative approach to governing the problems raised by AI. On the one hand, respondents hope that the government will regulate the development of AI directly, from the top down; on the other, they hope that the government will provide effective channels for public supervision, enabling bottom-up social oversight. The public also strongly endorses accelerating AI legislation and establishing ethical norms for industry practitioners. The former is a form of external regulation of the AI industry, while the latter uses ethical norms to guide practitioners' behavior from within so as to avoid social risks. These tasks require not only government effort but also active participation from practitioners within the industry.

Goals of governance

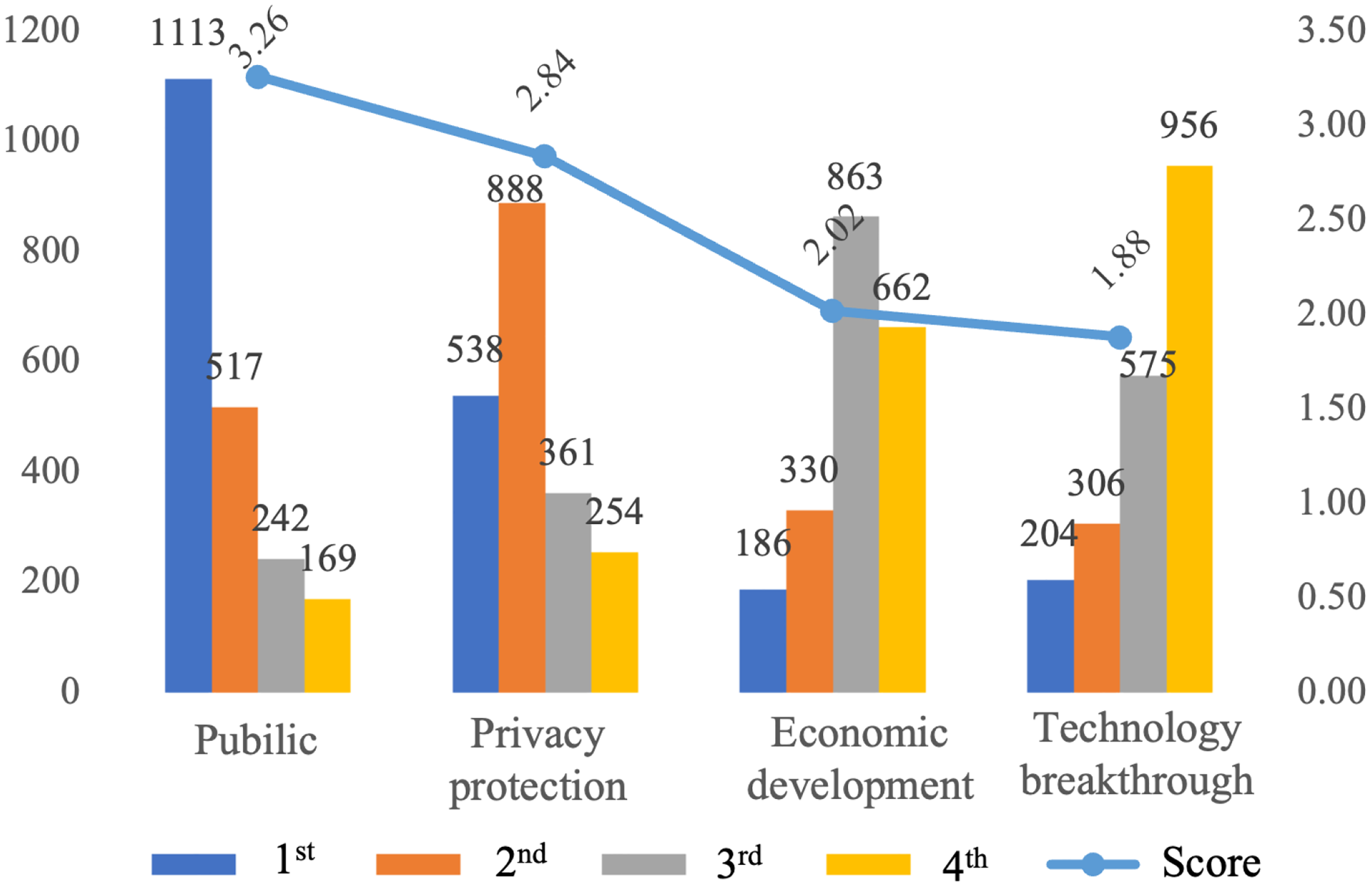

Policy is an administrative tool through which the government pursues development. Public preferences for AI policies reflect not only current concerns about AI development but also expectations of what those policies should achieve. Our survey therefore also investigated respondents' perceptions of the goals of AI governance. Specifically, we asked respondents to rank the importance of “public safety”, “privacy protection”, “economic development”, and “technological breakthroughs” in response to the question: “What do you think the current policy goals for artificial intelligence in China should be? (Rank the following options from 1 to 4)”. We then converted the rankings into scores, assigning 4 points to a first-place ranking, 3 points to second, 2 points to third, and 1 point to fourth, and averaged the points across respondents. Figure 9 shows the resulting scores for the four policy goals. Overall, the public is most concerned about public safety: 1113 respondents ranked it as the most important governance goal, 517 ranked it second, and 242 and 169 ranked it third and fourth, respectively, giving an overall score of 3.26. Privacy protection ranks second: 538 respondents placed it first, 888 second, 361 third, and 254 fourth, for an overall score of 2.84. Economic development and technological breakthroughs rank third and fourth, respectively, with little difference between their scores (2.02 and 1.88 points). Economic development was more often placed third, whereas technological breakthroughs were more often placed fourth.
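The scoring rule described above is a Borda-style count: for each goal, score = (4·n1 + 3·n2 + 2·n3 + 1·n4) / (n1 + n2 + n3 + n4), where nk is the number of respondents ranking that goal in position k. The Python sketch below reproduces the two scores whose rank counts are given in the text; the function and variable names are illustrative.

```python
# Rank counts reported in the text: respondents placing each goal 1st, 2nd, 3rd, 4th.
RANK_COUNTS = {
    "public safety": [1113, 517, 242, 169],
    "privacy protection": [538, 888, 361, 254],
}

# Points by rank position: 1st -> 4, 2nd -> 3, 3rd -> 2, 4th -> 1.
POINTS = [4, 3, 2, 1]

# Average scores for all four goals as reported in the text.
REPORTED_SCORES = {
    "public safety": 3.26,
    "privacy protection": 2.84,
    "economic development": 2.02,
    "technological breakthroughs": 1.88,
}


def ranking_score(counts, points=POINTS):
    """Mean points per respondent for one goal (a Borda-style score)."""
    total_points = sum(n * p for n, p in zip(counts, points))
    return total_points / sum(counts)


if __name__ == "__main__":
    for goal, counts in RANK_COUNTS.items():
        print(goal, sum(counts), round(ranking_score(counts), 2))
    # Each respondent hands out 4 + 3 + 2 + 1 = 10 points, so the means sum to 10.
    print(round(sum(REPORTED_SCORES.values()), 2))
```

Both goals' counts sum to 2,041 respondents, and the sketch yields 3.26 for public safety and 2.84 for privacy protection, matching the reported values. Since every respondent distributes 4 + 3 + 2 + 1 = 10 points across the four goals, the four average scores must sum to 10, and the reported 3.26, 2.84, 2.02, and 1.88 do.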

Ranking scores for the artificial intelligence governance goals.

Overall, the public prioritizes public safety and personal data protection as goals of AI governance, two important dimensions of AI development. To respond to these social and public demands, the government should give particular weight to public safety and privacy protection when formulating AI policies.

Group heterogeneity in methods and goals for AI governance

Because different subgroups may hold different views on AI governance, we divide the respondents by gender, age, education level, place of residence, and income to examine whether their preferences for the methods and goals of AI governance differ.
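The subgroup comparisons that follow rest on a cross-tabulation: within each subgroup, the share of respondents who selected each method. A minimal Python sketch, assuming each respondent record stores a subgroup label and the set of methods chosen; the records, field names, and method labels below are hypothetical and are not the study's data.

```python
from collections import defaultdict

# HYPOTHETICAL respondent records (not the study's data): a subgroup label plus
# the set of governance methods each respondent selected.
RESPONDENTS = [
    {"gender": "male", "methods": {"regulation", "legislation", "technical ethics"}},
    {"gender": "male", "methods": {"regulation", "supervision channels"}},
    {"gender": "female", "methods": {"supervision channels", "public literacy"}},
    {"gender": "female", "methods": {"regulation", "supervision channels", "international cooperation"}},
]


def support_rates(records, group_key):
    """Return {subgroup: {method: share of that subgroup selecting the method}}."""
    group_sizes = defaultdict(int)
    selections = defaultdict(lambda: defaultdict(int))
    for record in records:
        group = record[group_key]
        group_sizes[group] += 1
        for method in record["methods"]:
            selections[group][method] += 1
    # Divide by subgroup size (not by total selections), since choices are multi-select.
    return {
        group: {method: n / size for method, n in selections[group].items()}
        for group, size in group_sizes.items()
    }


if __name__ == "__main__":
    for group, rates in sorted(support_rates(RESPONDENTS, "gender").items()):
        print(group, sorted(rates.items()))
```

Passing another field as `group_key` (for example, a hypothetical `"age"` or `"income"` field on each record) would produce the analogous breakdowns by age, education, region, or income.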

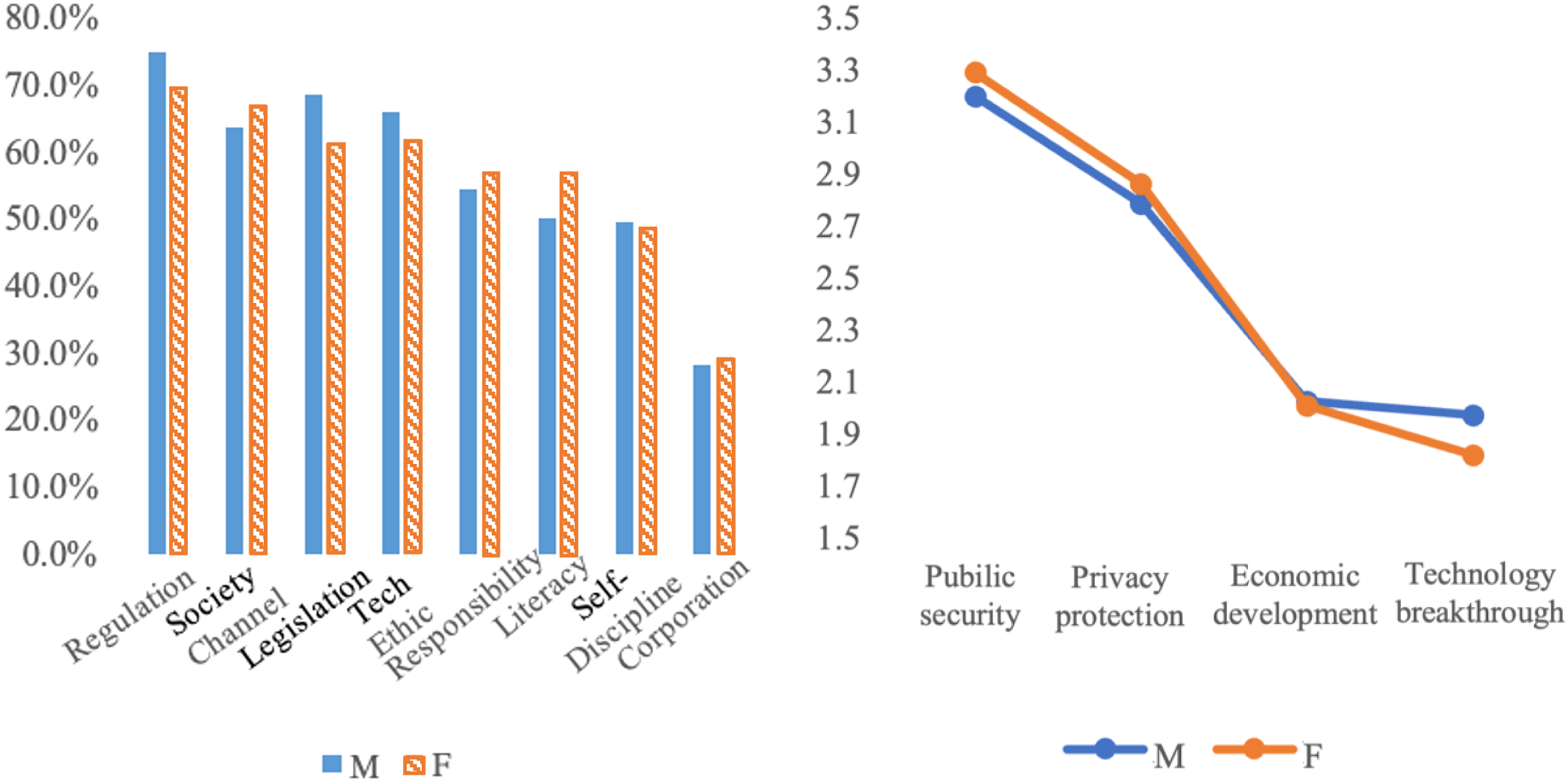

Regardless of gender, strengthening industry regulation, providing social supervision channels, promoting government legislation, establishing technical ethics, and cultivating corporate social responsibility are the five most endorsed governance methods. However, men and women differ in their support for individual options (see Figure 10). A higher share of men than women chose strengthening industry regulation, promoting government legislation, establishing technical ethics, and urging industry self-discipline, whereas a higher share of women chose providing social supervision channels, establishing ethical norms, improving public knowledge literacy, and encouraging international exchanges and cooperation. Although men and women perceive the four governance goals in broadly similar ways, women attach more importance to public safety, while men give higher scores to technological breakthroughs.

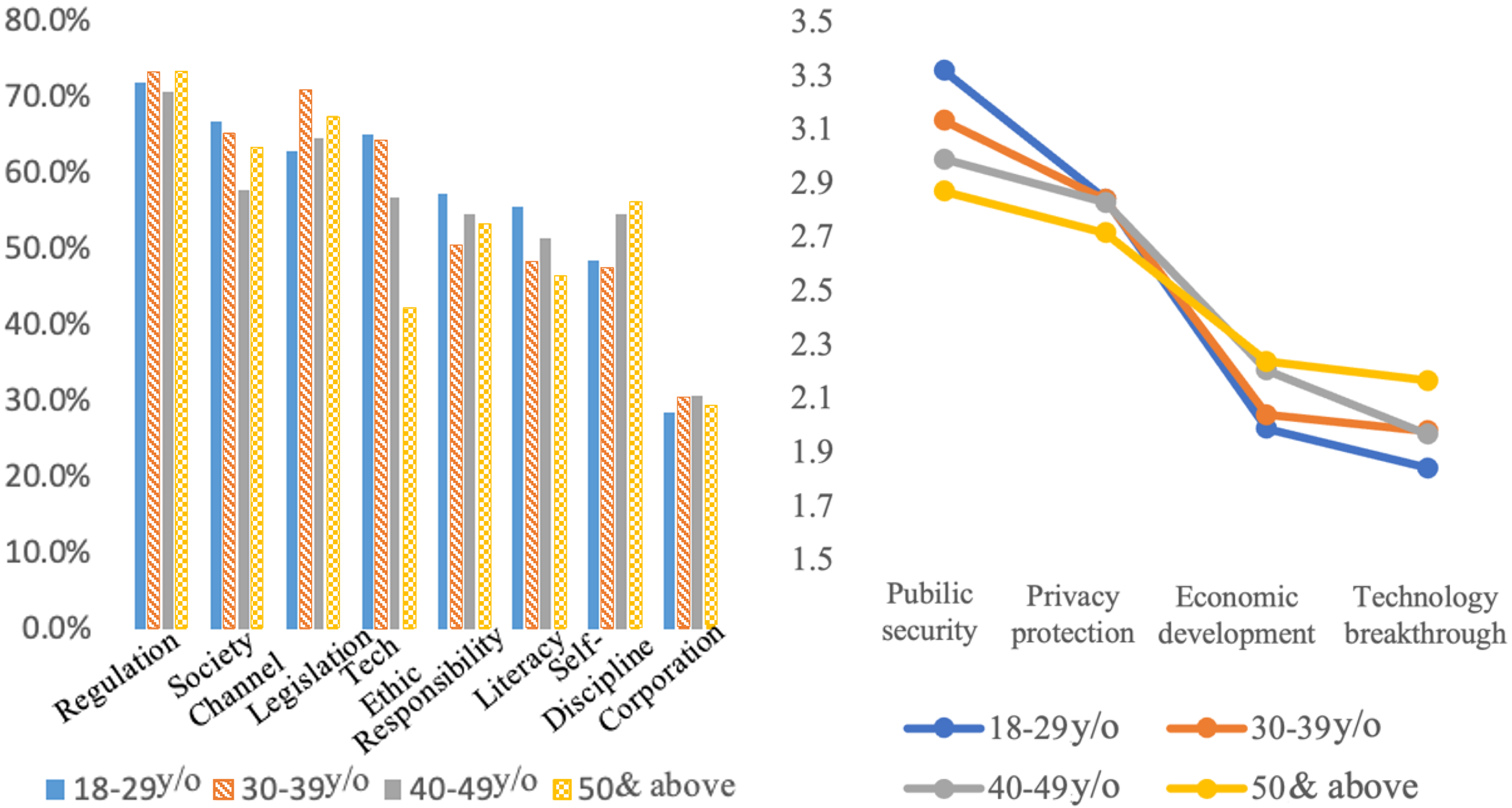

Differences in artificial intelligence governance preferences by gender.

Preferences for AI governance methods differ significantly across age groups (see Figure 11). All age groups rank strengthening industry regulation, providing social supervision channels, and promoting government legislation as the three most effective methods. Support for strengthening industry regulation exceeds 70% in every age group and is strongest in the over-50 group. Endorsement of providing social supervision channels was highest in the 18–29 group and lowest in the 40–49 group. For promoting government legislation, it was highest in the 30–39 group and lowest in the 18–29 group. For establishing ethical norms, it was highest in the 18–29 group and lowest in the over-50 group. For cultivating corporate social responsibility, it was highest in the 18–29 group and lowest in the 30–39 group. For improving public knowledge literacy, it was highest in the 18–29 group and lowest in the over-50 group. For urging industry self-discipline, it was highest in the over-50 group and lowest in the 30–39 group. Endorsement of encouraging international exchanges and cooperation varied little across age groups, at around 30%. These patterns suggest that younger groups place more trust in autonomous, pluralistic governance involving enterprises, users, and society, while older groups rely more on government administrative supervision.

Differences in artificial intelligence governance preferences by age.

Although all age groups rank the four goals in the same order, the scores for each goal vary across age groups. Public safety scored highest in the 18–29 group and relatively low in the over-50 group. Privacy protection also scored relatively low in the over-50 group, while the other age groups gave it roughly similar scores. This suggests that older people are less aware of the safety risks of AI products than younger people. Because older people may also be at a disadvantage in mastering new technology, this lower awareness may expose them to greater risk. By contrast, economic development and technological breakthroughs received relatively high scores from the over-50 group and lower scores from younger groups.

Respondents with different educational backgrounds also differ significantly in which governance methods they consider more effective (see Figure 12). Although all groups broadly agree on strengthening industry regulation, the bachelor's degree and above group ranks promoting government legislation and establishing technical ethics second and third, respectively; the vocational degree group ranks providing social supervision channels and establishing technical ethics second and third; and the below-high-school group ranks providing social supervision channels and cultivating corporate social responsibility second and third. In addition, support for certain governance methods rises with education level. In the master's degree and above group, strengthening industry regulation and promoting government legislation each have a support rate above 70%, and establishing technical ethics and providing social supervision channels each have a support rate of around 70%. In the bachelor's degree group, all four of these options also exceed 60%. In the below-high-school group, by contrast, only strengthening industry regulation and providing social supervision channels exceed 60%, and both receive less support than in the more educated groups. Educational background is also associated with some differences in the choice of AI governance goals: although all education groups rank the goals in the same order, the below-high-school group gives public safety a significantly lower score.

Differences in artificial intelligence governance preferences by education.

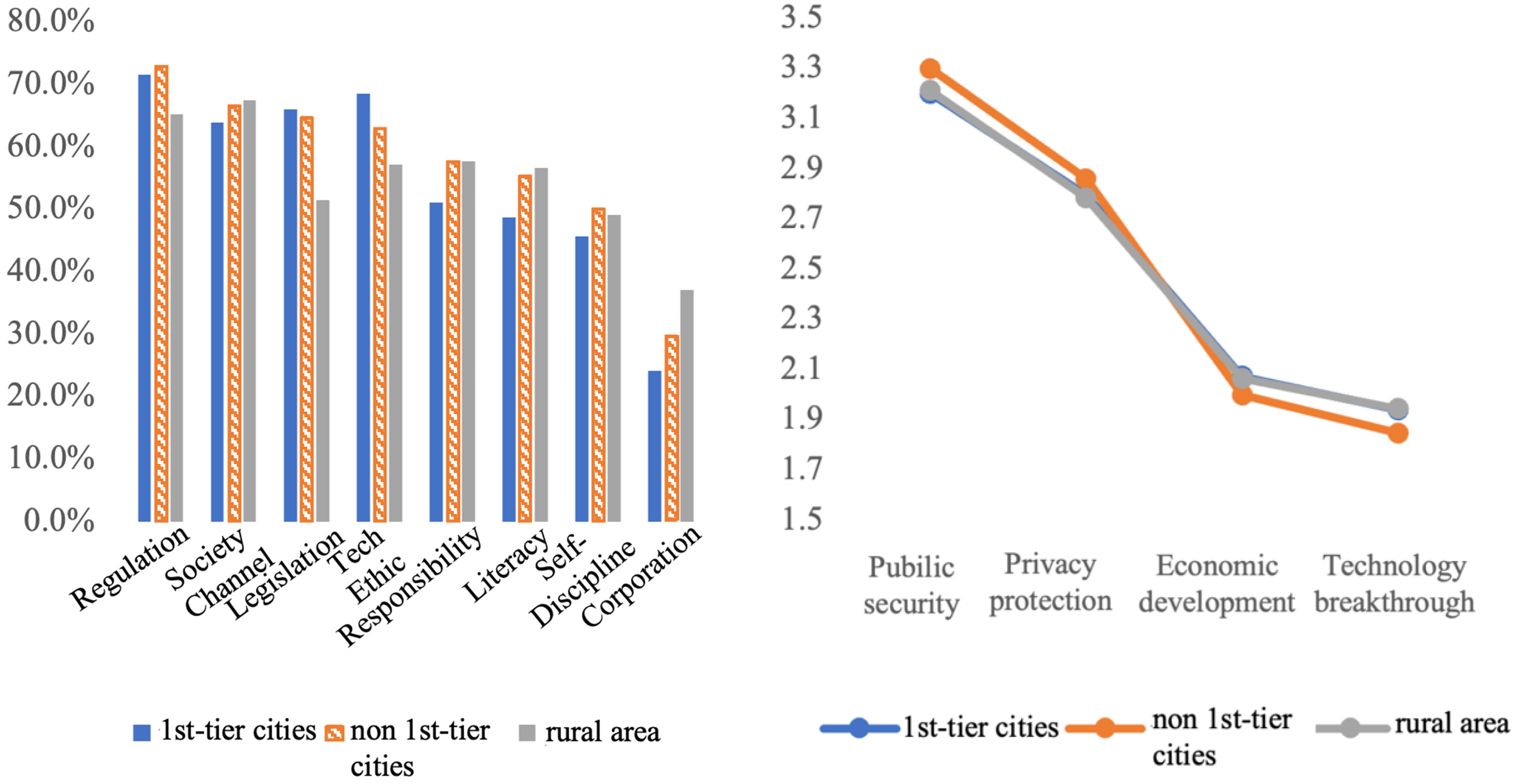

We also examine differences in governance methods and goals by place of residence (see Figure 13). Urban respondents, whether in first-tier or non-first-tier cities, hold relatively consistent preferences for governance methods: they generally agree that strengthening industry regulation, providing social supervision channels, promoting government legislation, and establishing technical ethics are more effective. Respondents in first-tier cities such as Beijing, Shanghai, Guangzhou, and Shenzhen attach more importance to establishing technical ethics and promoting government legislation, ranking them second and third, respectively. Rural respondents hold more divergent views: among them, only strengthening industry regulation and providing social supervision channels exceed a 60% support rate. Views on governance goals are relatively consistent across the three types of respondents. Compared with respondents in first-tier cities and rural areas, respondents in non-first-tier cities attach more importance to public safety but give a lower score to technological breakthroughs.

Differences in artificial intelligence governance preferences by region.

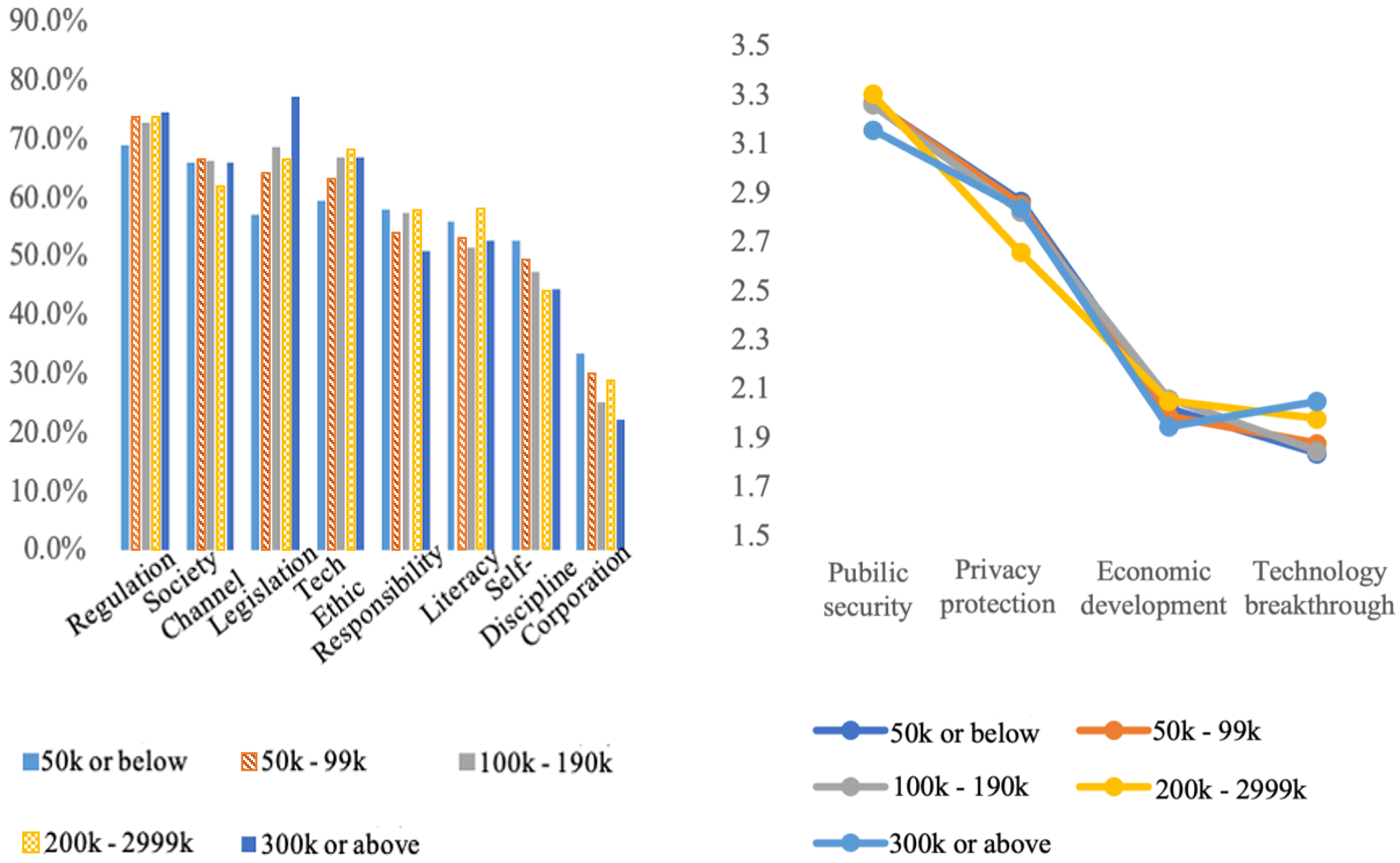

Perceptions of AI governance also differ significantly across income groups (see Figure 14). Respondents with an annual income above 300,000 yuan consider promoting government legislation the most effective governance method, whereas the other income groups favor strengthening industry supervision. Overall, preferences for governance methods become more consistent as family income rises. In the group with an annual income above 300,000 yuan, more than 70% of respondents chose promoting government legislation and strengthening industry supervision, and more than 60% chose establishing ethical norms and providing social supervision channels. In groups with an income below 50,000 yuan, by contrast, only strengthening industry supervision and providing social supervision channels exceeded 60% support.

Differences in artificial intelligence governance preferences by income.

In terms of governance goals, the highest-income group pays more attention to technological breakthroughs than the other income groups do: in this group, technological breakthroughs score higher than economic development, ranking third among the four goals. Apart from this, the income groups rank the governance goals in broadly the same way, with “public safety” and “privacy protection” ranked first or second in every group.

In general, our study finds that respondents with higher education levels, from more developed cities, and with higher incomes place more value on technical ethics and legislation to regulate AI.

Conclusion

In this paper, we discussed AI ethics and governance from the public's perspective. Based on the existing literature, policies, and guidelines on AI ethics, we sorted AI ethics into eight dimensions: safety, transparency, fairness, personal data protection, liability, truthfulness, human autonomy, and human dignity. Combining online survey data with social media data, we quantified people's concerns on each dimension. The results show that people are highly concerned about AI ethics. When it comes to using smart AI products in daily life, safety, liability, and privacy are the highest-ranking concerns. The study also shows that people's ethical concerns are heterogeneous. For example, AI-adjacent professionals believe that more effort should be made to improve AI transparency, fairness, and personal information protection. Our results also indicate that education on AI is crucial, so that people can become more aware of the actual risks of using AI products. We also investigated the public's policy preferences for AI governance. The results are relatively consistent: people expect both top-down regulation and bottom-up supervision from society, and there is a consensus that public safety and personal privacy protection are the two most important goals of AI governance.

AI is currently transforming all aspects of human social life. While AI promotes social and economic development and improves quality of life, we should also pay close attention to its regulation. This paper indicates that not only governments and AI-related organizations but also ordinary citizens are widely concerned about AI ethics issues. Policy makers should therefore take further steps in AI governance on two fronts: on the government side, by promoting AI legislation and developing clear technical and ethical regulations for AI; and on the societal side, by setting up channels for social supervision and enhancing public AI literacy.

Footnotes

Contributorship

Tianguang Meng conceived the study design, collected the data, and made the key research findings. Jiongyi Cao contributed to drafting and revising the English manuscript. Both authors were responsible for the preliminary data analysis, including the statistical analyses and the interpretation of results.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the China Social Science Foundation (grant number 18ZDA110).