Abstract

User-facing ‘platform safety technology’ encompasses an array of tools offered by social media platforms to help people protect themselves from harm, for example allowing people to report content or block other users. These tools are an increasingly important part of online safety; however, little is known about how users engage with them. We present findings from a nationally representative survey of UK adults examining their experiences with online harms and safety technologies. The results show that online harm is widespread: 67% of respondents report having encountered harmful content online. Among those who are aware of safety tools, over 80% have used at least one, indicating high uptake when knowledge of the tools is present. Awareness of specific tools is varied, with people more aware of ‘post hoc’ safety tools, taken in response to harm exposure (such as reporting or blocking), than of preventive measures (such as altering feed algorithms). However, satisfaction with safety technologies is generally low. People who have previously seen online harms are more likely to use safety tools, implying a ‘learning the hard way’ route to engagement. Those higher in digital literacy are also more likely to use some of these tools, raising concerns about the accessibility of these technologies. In addition, women are more likely to engage in particular types of online ‘safety work’. These findings have significant implications for platform designers, regulators, researchers and policymakers seeking to create a safer and more equitable online environment.


Introduction

The potential for users of social media platforms to be exposed to harmful content remains a cause for concern. Online harms can be understood as online content or behaviours which may cause physical or emotional hurt to a person or group of people, such as cyberstalking and hate speech (see HM Government, 2019), and numerous types of such harm take place every day. In the United States, Pew Research Centre (2021) found that 41% of Americans have been subject to online harassment, including physical threats, stalking and sexual harassment. In the United Kingdom, two-thirds of the British public say they have witnessed harmful content online before, with the number rising to 9 out of 10 for young adults (Enock et al., 2023). Such high levels of exposure to online harms are problematic: these experiences have been linked to numerous negative psychological impacts including depression, anxiety, fear, low self-esteem and escalation of self-harm (Stevens et al., 2021; Susi et al., 2022). Furthermore, experiencing harms like online abuse and sexualised threats can result in victims withdrawing from social interaction, including with friends and family, employment and educational organisations (Pashang et al., 2019; Storry & Poppleton, 2022).

In identifying solutions for the problem of online harms, platform safety technologies are increasingly seen as crucial for enabling a safer online environment for social media users. Also known as user controls and user empowerment tools, safety technologies are tools offered to help people tailor their online environments and protect themselves from harm. For example, mainstream platforms such as Facebook, Instagram and TikTok provide users with features that allow them to unfollow others, block other users from contacting them or report content they feel is inappropriate to the platform (Bode, 2016; Crawford & Gillespie, 2016; Yang et al., 2017). In the United Kingdom, the critical role placed on these controls for tackling online harms is reflected in a key part of the 2023 Online Safety Act, which requires larger platforms to offer accessible and effective safety tools of this kind as part of the duty of care they have to users (Ofcom, 2023). While increased emphasis is now being placed on the importance of this type of safety technology in enhancing online safety, the utility of these features is dependent upon individuals knowing that they exist, choosing to use them, understanding how to do so and perceiving them as effective. However, there is currently little understanding of the extent of use of these platform safety technologies and the potential drivers of their use. We address this gap in this paper by describing levels of contemporary use of platform safety technology and explaining key determinants of use.

We define platform safety technologies as user-accessible features provided by social media platforms that are intended to give individuals control over their exposure to harmful content and interactions online, either by preventing initial exposure or by responding to exposure to prevent re-occurrence. We note that alternative labels such as safety controls, content controls or user empowerment tools are used in the literature and industry (Ofcom, 2022). We are primarily interested in user-initiated safety features, which are those that users can activate or control themselves, such as blocking or reporting, rather than platform-initiated moderation mechanisms, like algorithmic downranking or automated content removal (discussed in Enock et al., 2024; Gillespie, 2018, 2022; Johansson et al., 2022).

We focus our investigation on seven specific platform safety technologies that are commonly available across major social media platforms. To determine which safety tools to include, we first identified the most widely used platforms in the United Kingdom: Facebook, Instagram, LinkedIn, Pinterest, Reddit, Snapchat, TikTok, Twitter (now X) and YouTube (YouGov, 2025). Three of the authors then divided the platforms between them and systematically reviewed each one to catalogue the safety technologies they offered. This was achieved through direct inspection of platform interfaces and settings, supported by official documentation and secondary resources. Each tool was recorded in a shared spreadsheet, and we retained only those that appeared commonly across the majority of platforms, thereby focusing on features with broad availability rather than those specific to a single site.

This approach yielded the following seven tools as appearing consistently across platforms:

Changing feed order (e.g., switching from personalised to chronological feed).

Personalising feed content (e.g., telling the algorithm to show more/less of certain types of content).

Limiting who can respond to posts.

Hiding engagements with posts (such as likes and replies).

Unfollowing other users.

Blocking other users.

Reporting content (also often referred to as flagging).

These tools were grouped into three broader categories:

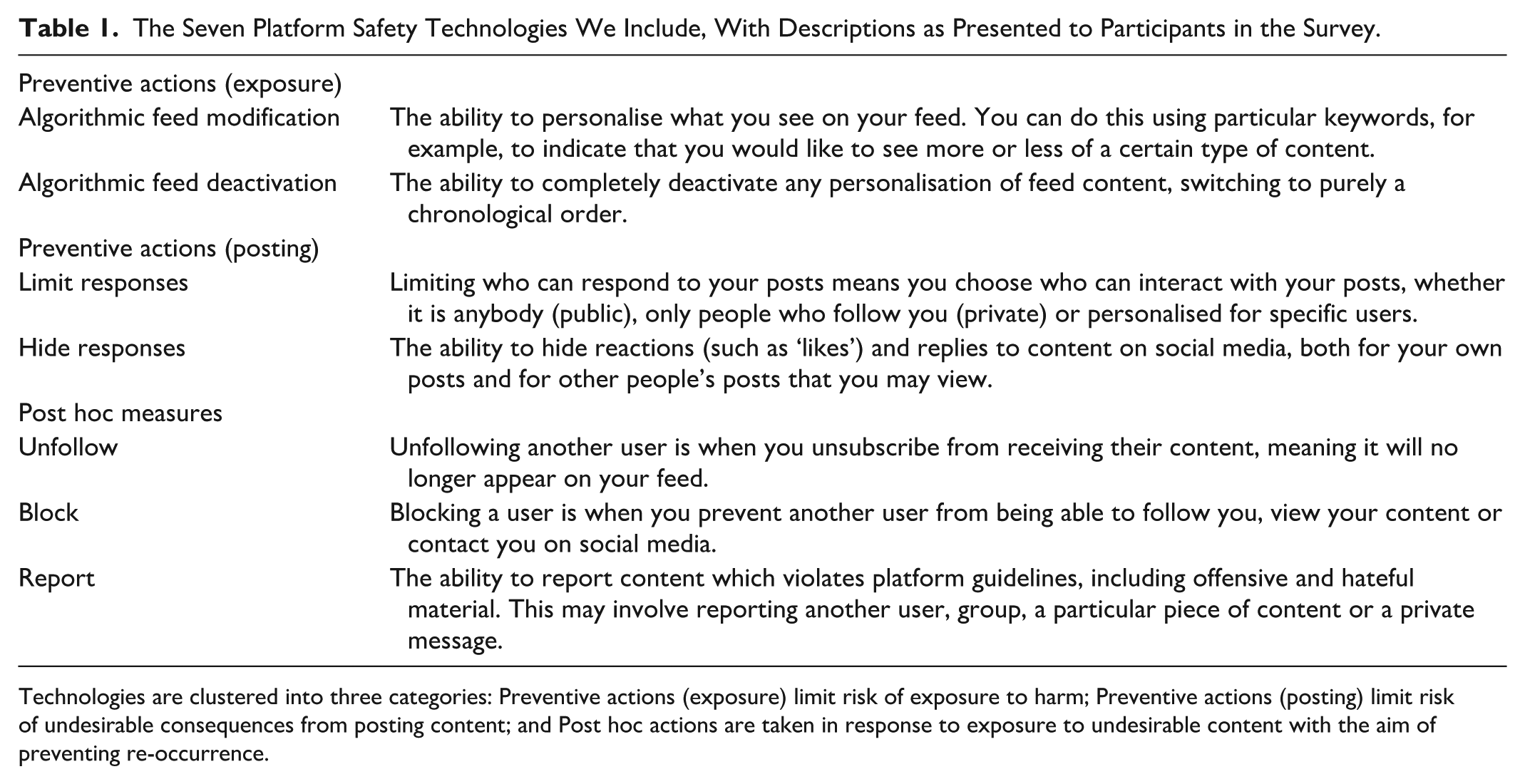

The technologies we focus on are presented in Table 1, along with the descriptions that we presented to the survey participants. It is worth noting that some of these features could be used to personalise a user’s online environment more generally, rather than with safety in mind. It is also worth noting the diverse types of harm that these features could protect from: for example, hiding engagements may be aimed at protecting mental health and reducing some of the social pressure of operating on a social platform, whereas blocking and reporting are aimed at directly reducing exposure to harmful content created by other users. Our inclusion of these features reflects a broad view of safety, encompassing both overtly harmful encounters and more subtle psychological impacts (e.g., Redmiles et al., 2019).

Table 1. The Seven Platform Safety Technologies We Include, With Descriptions as Presented to Participants in the Survey.

Technologies are clustered into three categories: Preventive actions (exposure) limit risk of exposure to harm; Preventive actions (posting) limit risk of undesirable consequences from posting content; and Post hoc actions are taken in response to exposure to undesirable content with the aim of preventing re-occurrence.

User engagement with platform safety technology

Since the emergence of social media platforms, the safety of users has been a key focus for researchers, policymakers and practitioners. Much work in this area focuses on what platforms can and should do to make social media safer, for example, through content detection and moderation technologies (e.g., Arora et al., 2023; Gillespie, 2018, 2022; Jhaver et al., 2021), but comparatively less academic work has focused attention on the role of user-facing safety technologies that allow individuals to manage their own exposure to harm.

Early work examining user engagement with platform safety technology found use to be fairly low. One study examining user engagement with privacy settings, considered a key safety feature, found that very few social media users modified the default profile visibility in their Facebook accounts (Ho et al., 2009), and other work found that very few reviewed or changed any privacy settings more generally (Agosto & Abbas, 2017). Subsequent work showed that awareness and accessibility may be a problem when it comes to user engagement. One study showed that many users of mainstream platforms such as Facebook, Twitter and YouTube were not aware of how much of their social media environment they could control by changing various settings (Hsu et al., 2020). Further, even when people knew about such tools and wished to use them, they reported difficulty locating the settings, although a majority did go on to change their feed settings once they had found them. Further work shows that it may not just be a case of improving awareness and accessibility of such tools, but that perceived efficacy is important for engagement too. M. Park and colleagues (2024) show that users may feel frustrated by the perceived ineffectiveness of safety tools on offer, particularly in relation to altering algorithmic feeds and reporting processes. Work elsewhere points to issues surrounding perceived transparency and effectiveness of user flagging (Crawford & Gillespie, 2016), with concerns that users may not use report functions because they think nothing will happen when they do (Worsley et al., 2017).

Ofcom (2022) reports moderate patterns of use of safety technology. In a nationally representative survey of UK adults, they showed that six in ten UK internet users who came across harmful content or behaviours online chose to use a safety feature in response. The most frequently used features were to unfollow, unfriend or block the person who had posted the content (20%), as well as to make use of the report or flag button, or to mark it as junk (also 20%). In other words, absolute usage of these features was low, showing that more needs to be done to engage users with these tools if they are to play a central part in enabling a safe online environment. Overall, however, few studies comprehensively address questions of awareness, use and satisfaction with platform safety technology. Findings of low usage levels might now be out of sync with the contemporary landscape which has seen an ever greater focus on online harms. In addition, most work takes a narrow focus on only a small number of different types of safety technology and few studies employ large-scale, nationally representative samples to understand attitudes and behaviours which are generalisable to populations. This leads us to pose the three key descriptive research questions which motivate our work:

RQ1: To what extent are users of social media platforms aware of available platform safety technologies?

RQ2: To what extent do users of social media platforms make use of available platform safety technologies?

RQ3: How satisfied are users of social media platforms with outcomes of the platform safety technologies on offer?

In addition to these descriptive research questions, we measure potential drivers of the use of platform safety technology. Again, literature in this area is relatively limited in scope. However, some work exists that has built on more general work on theories of safety behaviour, which allows us to develop some hypotheses about drivers of use.

A first area of research has drawn from Protection Motivation Theory (Rogers, 1975). This theory was originally conceived to explain how individuals behave in a self-protective way in response to a perceived health threat, and it has since been applied to users protecting themselves online. Research has demonstrated that effective use of online safety interventions depends first and foremost on improving users’ perceptions of their personal responsibility to protect themselves, and on the user’s understanding corresponding to the intervention strategy (Shillair et al., 2015). In other words, concerns about online harms are one motivator for users to engage with platform safety technology. This leads to our first hypothesis:

H1: Individuals who are more concerned about online harms will be more likely to use platform safety technology.

Of course, one way of becoming concerned about online harms is through direct exposure. This is supported by research which finds that people who report prior exposure to online harms also report higher levels of protective behaviours, implying that a ‘learning the hard way’ approach exists when it comes to engaging with platform safety technology (Büchi et al., 2017). This strand of work leads to our second hypothesis:

H2: Individuals who report prior exposure to online harms will be more likely to use platform safety technology.

In addition to prior harm exposure, skill and ability with the use of technology are also likely to play a role. One lens through which this has been explored is the concept of ‘digital pruning’, which describes the practice of ‘sifting through and unfollowing content that triggers undesirable affect and negative state of mind’, with users describing it as ‘an act of self-care, requiring sustained reflection and evolved self-knowledge’ (Hockin-Boyers et al., 2021, p. 13). Digital pruning is described as a skill, which is consistent with the more general idea that those making the most use of platform safety technology might be the most digitally skilled. Relatedly, some work finds that digital self-efficacy and expectations of success were key drivers in the use of reporting functions (Wong et al., 2021). These lines of thought lead us to our next hypothesis:

H3: People with greater digital literacy will be more likely to use platform safety technology.

There is also research suggesting that there may be gendered effects on the likelihood of using platform safety technology. Part of this may be driven by differing exposure to online harms. Although exposure is addressed already in hypothesis 2, there is evidence to suggest that the type of online harms women are exposed to is qualitatively different to those that men are exposed to (not enough is known about how this also impacts people who identify as non-binary). For example, women may be more directly exposed to threats of cyberaggression (Enock et al., 2025; Levrant Miceli et al., 2001), or direct sexualised threats of ‘intimate intrusion’ (Gillett, 2023) that might be particularly likely to provoke the use of platform safety technology, compared to other types of online harms (e.g. exposure to scams or online phishing attacks). Whether this leads to differing use of platform safety technology is an open question: some work has shown gender differences in this context (Y. J. Park, 2013), while other work has not found a clear effect (Wilhelm & Joeckel, 2019). This leads us to our fourth hypothesis:

H4: Women will be more likely to use platform safety technology than men.

Finally, there is some research supporting the idea that political orientation may play a role in engagement with safety technology. One way of looking at the use of platform safety technology is in terms of its potential impact on free speech, an area where there are clear partisan differences. In particular, in the context of misinformation reporting, a variety of work has suggested that left-wing people are more likely to be in favour of actions that restrict misinformation, with right-wing individuals more likely to perceive this as an assault on free speech (Pew Research Centre, 2023). Empirical work on reporting behaviours has thus far not found any influence of partisanship (Riedl et al., 2022; Wilhelm & Joeckel, 2019); however, to our knowledge, the relationship has not been tested for other types of platform safety technology. This leads us to our final hypothesis:

H5: Left-wing users will be more likely to use platform safety technology.

In addition to our main hypotheses, we also include a number of control variables in our analyses that are likely to be correlated with some of the variables in our main hypotheses. Frequency of social media use has an obvious potential effect, with those using the technology less being perhaps less likely to encounter harms. Level of education is a further factor which may be correlated with the level of digital literacy. Finally, age is also a factor that has been mentioned frequently in the literature, with older users perhaps using social media less frequently and also being less digitally literate than younger people (Dodel & Mesch, 2018; Y. J. Park, 2013).

Despite the existing work discussed in this section, considerable gaps in the research landscape remain. Much of the literature referenced here is out of date or focuses only on privacy and cybersecurity. Engagement with newer platform safety technologies, such as controlling the order of one’s feed, has received little attention. Furthermore, explanatory models of why people use these critical technologies are lacking. Our work seeks to fill these important gaps.

Data and methods

Our main research instrument was a nationally representative survey of UK adults. Respondents were recruited through the platform Qualtrics (www.qualtrics.com), which was also used to create and administer the survey, and data were collected during January 2023. The survey was approved by the Ethics Committee at The Alan Turing Institute.

Sample

The sample was designed to be nationally representative of the population of the United Kingdom across demographic variables of age, gender and ethnicity (based on 2020 data from Eurostat). A total of 1167 participants completed the survey and passed standard checks for data quality. As this survey concerns the use of social media, the 100 respondents who indicated that they do not use social media each day were removed from the dataset, leaving 1067 usable responses. Respondents were aged between 18 and 88, with a mean age of 44.6 (SD = 18.8). A total of 570 participants identified as female (53.4%), 486 as male (45.5%) and 5 as non-binary (0.47%), with six selecting ‘prefer to self-describe’ or ‘prefer not to say’. A total of 478 respondents had degree-level qualifications (44.8%), 223 had non-degree-level qualifications (vocational or similar) (20.9%) and 366 had no degree-level qualifications (including completion of secondary school and below) (34.3%).

Survey

The survey contained several parts and we describe those relevant to our research questions and key analyses in the sections below. The full survey is available on OSF (osf.io/zktny).

Demographics and background questions

For each participant, we collected standard demographic information about age, gender, ethnicity, education level and political orientation. Age could be entered as any number with a minimum of 18. For gender, education and ethnicity, participants were asked to select the option that best described them from a list of standard predefined categories. For political orientation, participants used an unmarked sliding scale (scored from 0 to 100) to indicate their political ideology from ‘extremely left’ to ‘extremely right’ (with ‘centre’ in the middle). All demographic questions other than age came with a ‘prefer not to say’ option (not included for age, as being 18 or over was a requirement for participation). These measures allow us to address H4 and H5, understanding whether women or left-wing users are more likely to use platform safety technology. These measures also allow us to include control variables of age and education level.

Awareness, use and satisfaction

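For each of the seven safety tools (see Table 1), we asked participants about their awareness of the tool, whether they had ever used it and how satisfied they were with the outcome of using it.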

Online harm exposure and concern

We asked participants the extent to which they had been exposed to content which they consider to be harmful on social media in the past along two scales: how much they had witnessed content which they consider to be harmful online, meaning observing harmful content not directly intended for them, and how much they had directly received content which they consider to be harmful online, meaning the content was directly intended for them. Both questions were answered on a 4-point Likert-type scale (Many times/Occasionally/Very rarely/Never) along with Don’t know/Prefer not to say options. Participants also indicated how concerned they feel about harmful online content using an unmarked sliding scale (scored from 0 to 100) from ‘Not at all concerned’ to ‘Extremely concerned’, with ‘Somewhat concerned’ in the middle. These measures allow us to explore H1 and H2, whether harm concern and harm exposure (in the form of both witnessing and directly receiving harmful content) increase the likelihood of using platform safety technology.

Digital literacy

Following prior work (Ada Lovelace Institute & The Alan Turing Institute, 2023), we measured digital literacy by asking participants to indicate how confident they feel using computers, smartphones or other devices to do eight different activities online, including sending and receiving emails, organising content across multiple devices and purchasing goods online. Participants responded to the eight items using unnumbered sliding scales (scored from 0 to 100), with guides for ‘Not at all confident’, ‘Not very confident’, ‘Fairly confident’ and ‘Very confident’ equally spaced along the sliders. These items showed good internal reliability (α = 0.85) and we combined these using the mean to create single digital literacy scores for each participant. This measure allows us to address H3, understanding whether those higher in digital literacy are more likely to use platform safety technology.
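The reliability check and scoring step described above can be illustrated with a short sketch. This is not the authors' analysis code: the function names and the example ratings are hypothetical, and the standard formula for Cronbach's alpha is assumed.

```python
from statistics import mean, variance

def cronbach_alpha(responses):
    """Cronbach's alpha for a list of participant rows (one rating per item)."""
    k = len(responses[0])                       # number of items
    items = list(zip(*responses))               # one column of ratings per item
    sum_item_var = sum(variance(col) for col in items)
    total_var = variance([sum(row) for row in responses])
    return (k / (k - 1)) * (1 - sum_item_var / total_var)

def literacy_score(row):
    """Single digital literacy score: the mean of a participant's item ratings."""
    return mean(row)

# Hypothetical 0-100 slider ratings: four participants, eight items each.
ratings = [
    [90, 85, 95, 80, 88, 92, 75, 90],
    [40, 55, 50, 35, 45, 60, 30, 50],
    [70, 65, 80, 60, 72, 68, 55, 75],
    [20, 30, 25, 15, 35, 40, 10, 25],
]
alpha = cronbach_alpha(ratings)
scores = [literacy_score(row) for row in ratings]
print(round(alpha, 2), scores)
```

Alpha approaches 1 when participants who rate one item highly also tend to rate the others highly, which is the pattern that justifies averaging the eight items into one score.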

Device use

Respondents were asked to indicate the amount of time they spend on social media per day by selecting from a list of seven multiple choice items (No time/Less than 1 hr/1–2 hr/2–4 hr/4–6 hr/6–8 hr/8+ hr/Prefer not to say), and the same question for the amount of time they spend on the internet generally per day (only social media use was included in our final analysis). We also asked participants to indicate which social media accounts they have by selecting as many as applied from a list of 11 common platforms, with options to select ‘Other’ and specify using free text, ‘None’ or ‘Prefer not to say’. This information allows us to control for the frequency of social media use in our regressions.

Survey procedure

After participants gave their informed consent to take part in the survey in line with approved ethical procedures, they were taken through the survey questions. The awareness, use and satisfaction questions were presented together as a block for each safety technology, and these blocks were presented in a randomised order. After answering the main survey questions, participants were given the opportunity to provide feedback in a free-text box before continuing to the debrief and finally completing their submission. The entire survey was designed to take each respondent approximately 20 min to complete, and participants were remunerated at a standard rate set by Qualtrics for their time.

Results

Descriptive results

The initial descriptive focus of this study was to explore levels of awareness, use and satisfaction with online safety technologies among users of social media platforms, addressing our three key research questions. We now describe findings for these research questions.

Awareness of platform safety technology

Our first research question (RQ1) concerns the extent to which users of social media platforms are aware of available platform safety technologies. Overall, we found that awareness was generally lower for the technologies focused on preventing exposure to harms before they occur than for post hoc measures, with only 31% of people aware of options to deactivate feed algorithms, and 44% aware of the option to hide engagement on content they create. Awareness was much higher for post hoc measures, with 86% of people aware of reporting mechanisms and more than 90% aware of options to block and unfollow. Figure 1 reports our results for safety technology awareness (the data behind the figure are available in Supplementary Information A.1, Table 4).

Figure 1. Awareness of platform safety technology.

Use of platform safety technology

Our second research question (RQ2) concerns the extent to which users of social media platforms make use of available platform safety technologies. Here, we see much less of a distinction between the preventive technologies and the post hoc measures. Unfollow (more than 85%) and block (over 80%) were the most commonly used technologies. Although reporting was something people were widely aware of (as we show above in Figure 1), usage levels were lower, with only around 60% of those aware of the technology ever having made use of it (though this is still a substantial amount in absolute terms). For the preventive technologies, in each case more than 70% of those aware of them had used them. In other words, once people become aware of these technologies, they also tend to use them at relatively high rates. In total (including all the people in our sample, not just those who were aware of the technologies), more than 80% of those surveyed had used at least one platform safety technology. Results are presented in Figure 2 (again, the full table is available in Supplementary Information A.1). Note that the data in this figure are limited to those people who had already said that they were aware of the technology. We also report results relating to direct experience with platform content moderation (having one’s own content moderated) in Supplementary Information (A.2).
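The distinction between use among those aware of a tool and use across the whole sample can be made concrete with a small sketch. The record layout, function names and toy data below are hypothetical and serve only to show how the two denominators differ.

```python
def awareness_and_use(records, tool):
    """Return (share aware of tool, share of aware respondents who used it).

    records: list of dicts mapping tool name -> (aware, used) booleans.
    """
    aware = [r[tool] for r in records if r[tool][0]]
    awareness_rate = len(aware) / len(records)
    if not aware:
        return awareness_rate, float("nan")
    use_given_aware = sum(1 for _, used in aware if used) / len(aware)
    return awareness_rate, use_given_aware

def used_any(records):
    """Share of ALL respondents who used at least one tool."""
    hits = sum(1 for r in records if any(used for _, used in r.values()))
    return hits / len(records)

# Hypothetical toy sample of four respondents and two tools.
records = [
    {"block": (True, True),   "report": (True, False)},
    {"block": (True, True),   "report": (True, True)},
    {"block": (True, False),  "report": (False, False)},
    {"block": (False, False), "report": (False, False)},
]
print(awareness_and_use(records, "report"))
print(used_any(records))
```

Per-tool use rates are conditioned on awareness, whereas the "at least one tool" figure uses the full sample as its denominator, so the two kinds of percentage are not directly comparable.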

Figure 2. Use of platform safety technology.

Satisfaction with platform safety technology

Our third research question (RQ3) concerns the extent to which users of social media are satisfied with the outcomes of platform safety technologies when they have used them. We found that the extent of satisfaction varied for different safety technologies. Within post hoc measures, blocking and unfollowing attracted relatively high levels of satisfaction (more than 60% of people said they were always satisfied with the outcome), compared to reporting, where just 18% of people said they were always satisfied with the outcome. Preventive technologies also varied in terms of satisfaction, with 50% of people saying they were happy with the results of limiting responses, and 30%–35% of people saying they were happy with the results of altering the feed algorithm, deactivating the feed algorithm and hiding engagements on their content. It is striking that while these safety technologies are widely used, satisfaction with their results is relatively low.

Harm exposure

We measured prior exposure to online harm along two dimensions: the extent to which participants had witnessed content which they considered to be harmful online (meaning observing harmful content not directly intended for them), and the extent to which they had directly received content which they considered to be harmful online (meaning the content was directly intended for them). These measures were primarily taken to test whether prior exposure to harm is associated with increased use of safety technologies (Hypothesis 2). Descriptively, we found relatively high levels of perceived exposure to online harms: approximately 67% of people said they had witnessed content online that they perceived to be harmful, with 31% having directly received it. This is consistent with statistics highlighted in the Introduction, indicating the widespread nature of exposure to online harms.

Explanatory models

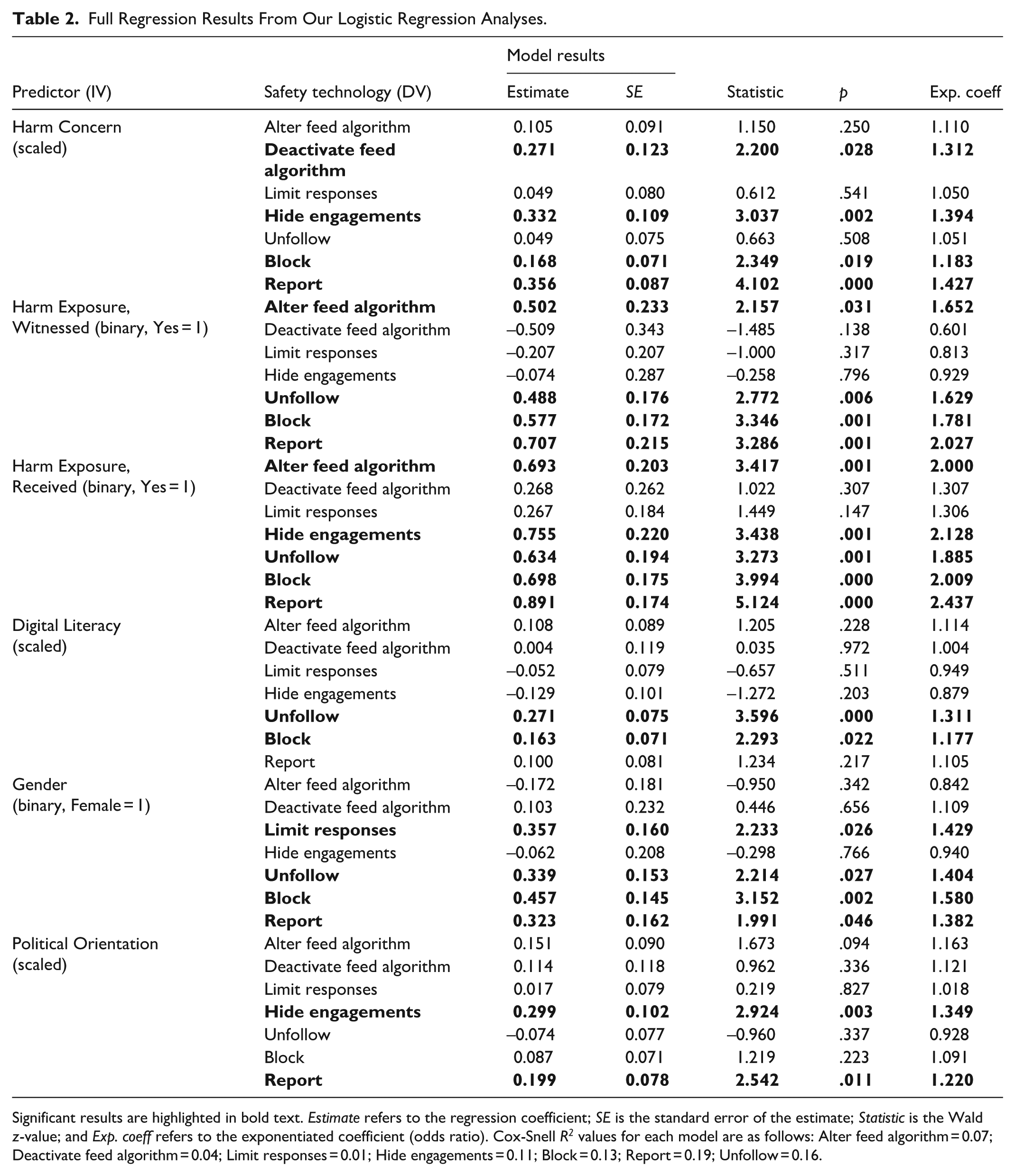

We now move on to our main analytical task, which is explaining variation in the use of platform safety technology. To approach this analysis, we make use of a set of seven logistic regressions. For each regression, the dependent variable is a dichotomous indicator for whether respondents reported ever having used one of the seven platform safety technologies under study (with respondents who said ‘Not sure’ included in the ‘No’ category). For each regression model, predictor variables are: Harm concern; Harm exposure (witnessed); Harm exposure (directly received); Digital literacy; Gender; Political orientation. Age, Education level and Level of social media use were included as controls in all regressions.

Each of the regressions looks at only the subset of respondents who were aware of the safety technology, hence the model seeks to explain use given that people have already heard of the technology (meaning that sample sizes vary depending on the technology in question).
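As an illustration of this modelling step, the sketch below filters a toy sample to respondents aware of a tool and then fits a logistic regression of use on a single binary predictor. It is a minimal sketch under stated assumptions: the data are invented, the fitting routine is plain gradient ascent written for transparency (the paper does not state which software was used), and the names `fit_logistic` and `respondents` are hypothetical.

```python
import math

def fit_logistic(X, y, lr=0.5, n_iter=5000):
    """Fit a logistic regression by gradient ascent on the mean log-likelihood.

    X: list of predictor rows (without intercept); y: list of 0/1 outcomes.
    Returns [intercept, coef_1, ..., coef_k].
    """
    n, k = len(X), len(X[0])
    beta = [0.0] * (k + 1)
    for _ in range(n_iter):
        grad = [0.0] * (k + 1)
        for row, target in zip(X, y):
            z = beta[0] + sum(b * x for b, x in zip(beta[1:], row))
            p = 1.0 / (1.0 + math.exp(-z))      # predicted probability of use
            err = target - p
            grad[0] += err
            for j, x in enumerate(row, start=1):
                grad[j] += err * x
        beta = [b + lr * g / n for b, g in zip(beta, grad)]
    return beta

# Hypothetical respondents: (aware of tool, directly received harm, used tool).
respondents = (
    [(True, 0, 1)] * 10 + [(True, 0, 0)] * 10
    + [(True, 1, 1)] * 15 + [(True, 1, 0)] * 5
    + [(False, 1, 0)] * 8                       # unaware: excluded from the model
)
aware = [r for r in respondents if r[0]]
X = [[harm] for _, harm, _ in aware]
y = [used for _, _, used in aware]

beta = fit_logistic(X, y)
odds_ratio = math.exp(beta[1])                  # exponentiated coefficient
print(len(aware), round(odds_ratio, 2))
```

Because unaware respondents are dropped before fitting, the number of rows entering each model differs by tool, which is why the sample sizes vary across the seven regressions.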

Sample sizes for each model are as follows:

Alter feed algorithm DV: N = 581.

Deactivate feed algorithm DV: N = 330.

Limit responses DV: N = 687.

Hide engagements DV: N = 474.

Unfollow DV: N = 969.

Block DV: N = 981.

Report DV: N = 914.

Binary variables of harm exposure, gender, education and social media use were created to conduct the planned logistic regressions as follows:

Harm exposure (1, 0), with 1 indicating that the respondent reported having witnessed or directly received harmful content online at least ‘Occasionally’ (coded separately for witnessed and directly received harm).

Gender (1, 0), with 1 indicating that the respondent identifies as female.

Education (1, 0), with 1 indicating no university degree.

Little social media use (1, 0), with 1 indicating a respondent spends less than 1 hr a day using social media.

Age, political orientation, digital literacy and harm concern were continuous variables which were standardised, so reported effects represent changes associated with a one standard deviation increase in each variable. Gender, political orientation and education level all came with a ‘prefer not to say’ option. Responses of ‘prefer not to say’ were set to the mean or modal category of the given variable as appropriate.
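The recoding, imputation and standardisation steps can be sketched as follows. This is an illustrative reading of the description rather than the authors' code: `None` stands in for ‘prefer not to say’, the helper names are hypothetical, and the order of operations (impute first, then standardise) is an assumption.

```python
from collections import Counter
from statistics import mean, stdev

def code_binary(values, is_one):
    """Code answers as 1/0; missing answers (None) take the modal category."""
    coded = [None if v is None else int(is_one(v)) for v in values]
    modal = Counter(c for c in coded if c is not None).most_common(1)[0][0]
    return [modal if c is None else c for c in coded]

def standardise(values):
    """z-score a continuous variable; missing answers (None) take the mean."""
    m = mean(v for v in values if v is not None)
    filled = [m if v is None else v for v in values]
    s = stdev(filled)
    return [(v - m) / s for v in filled]

# Hypothetical answers from four respondents.
exposure = ["Many times", "Never", "Occasionally", "Very rarely"]
gender = ["female", "male", None, "female"]
concern = [20, 40, None, 60]

harm = code_binary(exposure, lambda a: a in {"Many times", "Occasionally"})
female = code_binary(gender, lambda g: g == "female")
concern_z = standardise(concern)
print(harm, female, [round(z, 2) for z in concern_z])
```

After standardisation a coefficient describes the change associated with a one standard deviation increase, which makes effects comparable across predictors measured on different scales.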

Statistical assumptions

All models used to report these results were run through standard diagnostic tests for multicollinearity and influential values. The checks for influential values highlighted some highly influential points, as measured by Cook’s distance. Further versions of all models were produced with these influential values truncated, producing largely similar results (with only one difference, the impact of gender on unfollowing, which is highlighted in the text below). Overall, the diagnostics produced no reason to doubt the results. Full regression results are in Table 2.

Table 2. Full Regression Results From Our Logistic Regression Analyses.

Significant results are highlighted in bold text. Estimate refers to the regression coefficient; SE is the standard error of the estimate; Statistic is the Wald z-value; and Exp. coeff refers to the exponentiated coefficient (odds ratio). Cox–Snell R² values for each model are as follows: Alter feed algorithm = 0.07; Deactivate feed algorithm = 0.04; Limit responses = 0.01; Hide engagements = 0.11; Block = 0.13; Report = 0.19; Unfollow = 0.16.

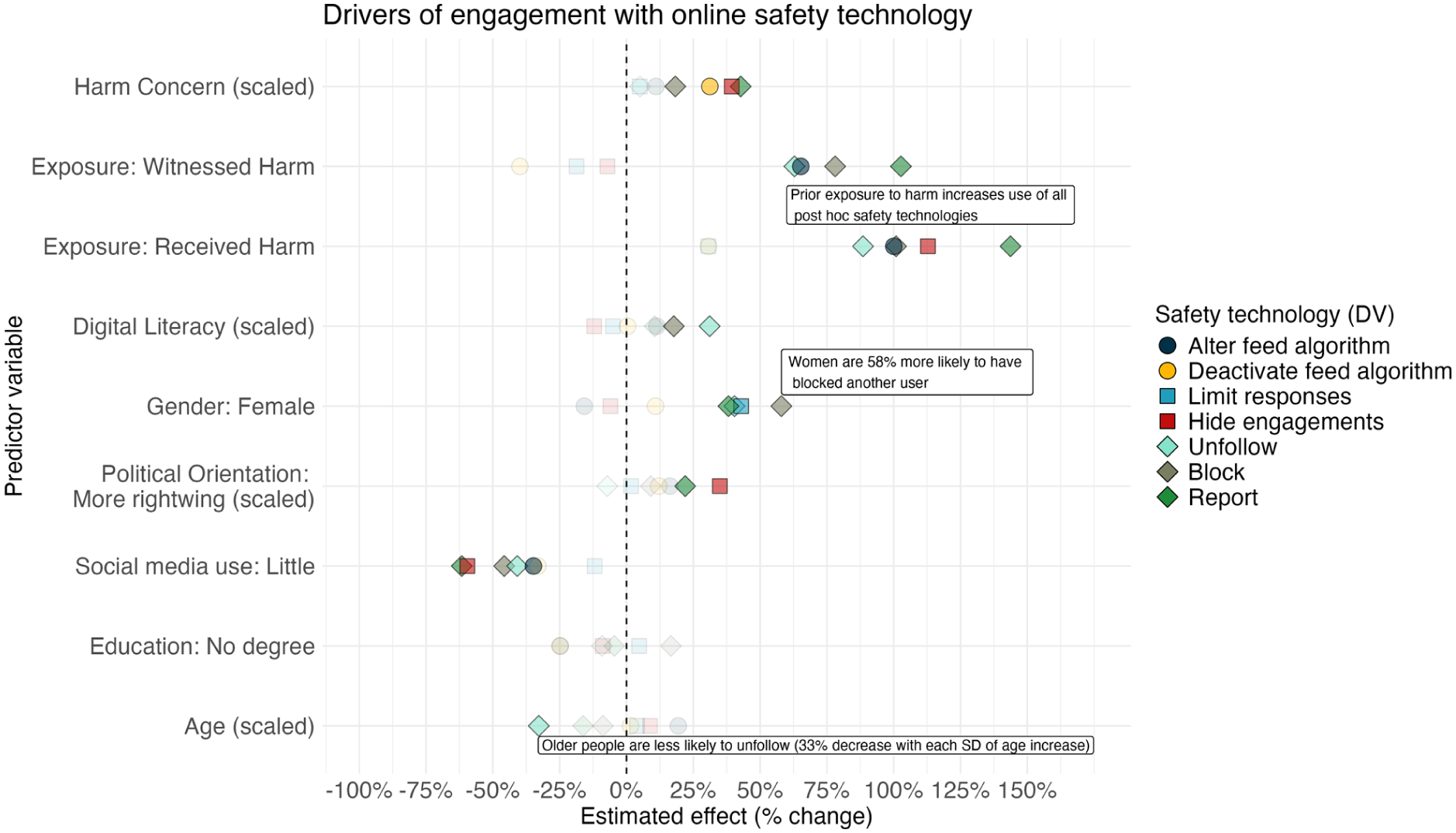
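For readers less familiar with logistic regression output, the quantities named in the table note are related by standard formulas, given below. The notation is introduced here for illustration and is not taken from the paper; it is also an assumption that the percentage changes quoted in the text are derived from the exponentiated coefficients in this usual way.

```latex
% \hat\beta_j : estimated coefficient for predictor j
% L_0, L_M   : likelihoods of the intercept-only and fitted models
% n          : number of respondents in the model
\[
\mathrm{OR}_j = e^{\hat\beta_j},
\qquad
\text{percentage change in odds} = 100\,(\mathrm{OR}_j - 1)
\]
\[
R^2_{\mathrm{CS}} = 1 - \left(\frac{L_0}{L_M}\right)^{2/n}
\]
```

Under this reading, an odds ratio of 2.44 corresponds to a 144% increase in the odds of use, and an odds ratio of 1.18 to an 18% increase. For the standardised continuous predictors the change is per one standard deviation; for the binary predictors it compares the group coded 1 with the group coded 0.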

The impact of harm concern on use of platform safety technology (testing H1)

Our first set of regressions showed that concerns about online harm motivate use of technologies across the three categories, largely supporting hypothesis 1, that individuals who are more concerned about online harms will be more likely to use platform safety technology. Harm concern was significantly associated with increased use of blocking (18% increase in the odds of use with every one SD increase in concern, p = .02), reporting (43% increase, p < .001), deactivating a feed algorithm (31% increase, p = .03) and hiding engagements (39% increase, p = .002). Harm concern was not significantly associated with use of altering a feed algorithm, limiting responses or unfollowing other users (all ps > .05).

Full regression results for all models are found in Table 2.

The impact of harm exposure on use of platform safety technology (testing H2)

Prior exposure to online harms makes a considerable difference to the use of safety technologies, particularly if the harm is directly received, supporting our second hypothesis. Those who have received online harm before are significantly more likely to have altered a feed algorithm (100% increase, p = .001) and hidden engagements with posts (113% increase, p = .001). Those who have witnessed or directly received online harms are more likely to have used all the post hoc measures: unfollowing (89% increase if harm received, p = .001; 63% increase if witnessed, p = .006); blocking (100% increase if harm received, p < .001; 78% increase if witnessed, p = .001); and reporting (144% increase if directly received, p < .001; 103% increase if witnessed, p = .001). These results support our hypothesis that people may be ‘learning the hard way’ when it comes to using safety technology.

The role of digital literacy on use of platform safety technology (testing H3)

Digital literacy makes no difference for the preventive technologies, but does seem to have a significant impact on post hoc technologies, where a one standard deviation increase in literacy is associated with an 18% increase in the likelihood of blocking (p = .022) and a 31% increase in the likelihood of unfollowing (p <.001). Overall, our hypothesis that people with greater digital literacy will be more likely to use platform safety technology is only partially supported.

Gender differences in use of platform safety technology (testing H4)

Our results showed support for our fourth hypothesis, that women would be more likely to use platform safety technology. This was especially true for post hoc technologies, with women significantly more likely to report (38%, p = .046), unfollow (40%, p = .027) and block (58%, p = .002), though we note that the result for unfollowing became non-significant when outliers were removed. Women were also more likely to limit responses to their content (43%, p = .026). However, there were no gender differences in use of the two preventive technologies using algorithmic control to prevent exposure, nor in use of hiding engagements with posts. Overall, we show support for the idea that women engage with some safety work online to a greater extent than men.

The role of political orientation in use of platform safety technology (testing H5)

There are mixed results for models examining the role of political orientation. Users who are more right-wing are more likely to report content (22% per 1 standard deviation increase on the scale, p = .011) and hide engagements (35% per 1 standard deviation, p = .003), contradicting our fifth hypothesis that left-wing users would be more likely to engage with safety technology. However, there were no significant effects for the other safety technologies, and so there is only weak evidence of a partisanship effect. Full results from the regression models can be found in Table 2, and Figure 3 displays the results from these seven regressions graphically.

Explaining people’s use of platform safety technology.

Control variables

Finally, though not the primary focus, we briefly summarise the effects of our three control variables. Infrequent users of social media were significantly less likely to use five of the seven technologies – report (62% decrease, p < .001), hide engagements (60% decrease, p = .001), block (46% decrease, p <.001), unfollow (41% decrease, p = .001) and alter a feed algorithm (35% decrease, p = .037). Older users were significantly less likely to unfollow others (33% decrease in use per one standard deviation increase in age, p <.001), but other than that, age had no significant impact on use of the other six technologies. Education level did not have a significant impact on the use of any safety technologies (all ps >.05).

Discussion

Engagement with online safety technologies is an increasingly important part of enabling a safer online environment for social media users (Ofcom, 2023). However, the extent to which people feel able to protect themselves from experiencing online harms, and the extent to which people may find these tools more or less accessible, has so far been unclear. Using a large, nationally representative survey of UK adults, we examined people’s awareness, use and attitudes relating to seven common safety technologies currently found on social media platforms. We included technologies that aim to prevent exposure to potentially undesirable content (algorithmic feed modification and algorithmic feed deactivation); technologies that aim to prevent undesirable consequences from posting content (limiting responses and hiding engagements); and post hoc technologies that may be used in response to having seen undesirable content (unfollowing, blocking and reporting). For each safety technology, we analysed overall proportions of awareness and exposure and modelled key demographic and attitudinal predictors of engagement.

When we examined the extent to which people said they had been exposed to harmful content online before, we found that our investigation into self-protection against such harms was necessary – 67% reported having seen harmful content before, and 31% reported having been directly sent harmful content, while overall concerns about online harms were correspondingly high, corroborating other work in this area (Enock et al., 2023). It is important to gain an up-to-date understanding of the extent to which people are exposed to online harms, particularly as some work suggests a dramatic increase in the prevalence of some types of online harm in recent years (Brandwatch, 2021).

Overall, we find that awareness of online safety technologies is high in absolute terms, updating some of the less recent work we have mentioned above that finds awareness levels to be quite low. However, awareness is clearly higher for post hoc platform safety technologies than it is for preventive safety technologies. While a large majority (90%) had heard of options to block and unfollow other users, only around 40% had heard of being able to deactivate their feed algorithms. This suggests that options like these, designed to prevent harm exposure rather than respond to it, should be made more salient to social media users. Some usage levels were high – of the people who had heard of being able to unfollow other users, over 85% had done so, while for blocking, this was over 80%. However, only 60% of those who had heard of being able to report content had done so. We find some usage levels to be higher than those reported in prior work. For example, a recent report from Ofcom (2022) found that just 20% of users had flagged potentially harmful content which they had encountered online, compared to our 60%. This disparity shows the importance of continuous up-to-date work, as people’s behaviours may be changing quickly with increased focus on and public conversation around online safety.

Despite absolute use of safety technologies being high, people indicated generally low satisfaction with outcomes of use. This is particularly true when the outcome relies on a platform taking action (such as for reporting, where only 18% say they were satisfied with the outcome) compared to a result happening automatically (such as for blocking another user, where more than 60% say they were satisfied with the outcome). These findings are in line with previous work examining user engagement with online reporting, which often finds that a lack of transparency around what happens to flagged content, along with low rates of platform action in response to user reports, means that users are frequently disappointed in reporting processes on social media platforms (Crawford & Gillespie, 2016; Molina & Sundar, 2022; Naab et al., 2018; Ofcom, 2022; Worsley et al., 2017). Our results show it is imperative for platforms to ensure that the safety features they offer are transparent and effective in order to maintain user engagement, so that users can play a part in protecting both themselves and their communities online.

In terms of demographic and attitudinal predictors of engagement with safety tools, it is interesting that prior exposure to online harms significantly predicts the use of several safety technologies, including all of the post hoc technologies (unfollow, block and report) and also altering the feed algorithm. This suggests that people often learn the hard way when it comes to engaging with safety technology, using these tools reactively after exposure to online harms rather than pre-emptively, supporting work elsewhere which shows exposure to online harm leads to higher levels of protective behaviours (Büchi et al., 2017). This result highlights the importance of encouraging preventive safety behaviours among social media users such that they can reduce their risk of being exposed to harmful content before it is too late, rather than having to take reactive measures against it. Media literacy courses and similar initiatives could educate people on using preventive safety tools, and platforms could make these settings more salient in the user environment. Digital literacy scores made some difference to engagement with safety tools, with those higher in digital literacy more likely to use post hoc measures such as blocking and unfollowing, though with no effect for preventive measures, so only partially supporting prior work showing that digital skills enable self-protective online behaviours (Eastin & LaRose, 2000; Hockin-Boyers et al., 2021; Wong et al., 2021). While it is somewhat positive that digital literacy does not play a large role in the use of safety technologies, indicating that these technologies are equally accessible for those high and low in digital skills, it is important to note that digital literacy scores were generally high in our sample. The items included in the scale we used are relatively simple (e.g., being able to send an email), and our sample was drawn from people able and willing to take part in an online survey. It is possible that these effects would be stronger with more variability in digital literacy among respondents.

It is interesting to note that female participants were more likely to engage with some of the safety tools than male participants, particularly for post hoc measures (unfollowing, blocking and reporting) and also for limiting engagements on their posts. While there is conflicting evidence regarding gender differences in absolute exposure to online harms, with some work finding no differences in overall exposure (Enock et al., 2023), it is likely that harms affecting women online are qualitatively different to those affecting men, with women more at risk of ‘contact’ harms such as sexual harassment and cyberflashing (Gillett, 2023; Levrant Miceli et al., 2001). It may be that the specific kinds of harms women are exposed to or targeted by encourage them to do more safety work in this area (Y. J. Park, 2013), thus leading to higher engagement with some safety technologies, particularly those that focus on controlling how other users can interact with their profile and content. It may also be that fear surrounding being exposed to or targeted by particular online harms leads women to take at least some preventive measures to a greater degree than men (Enock et al., 2025; Gillett, 2023). Future work will benefit from further understanding the specific ways in which women are affected by online harms along with how this alters their online behaviours.

Our work makes several important contributions to the literature surrounding online safety behaviours among social media users. We provide a timely, large-scale and nationally representative account of how UK adults are engaging with common safety technologies, offering an important update to earlier work that typically focused on smaller samples or on a narrower range of tools. By distinguishing between preventive and post hoc technologies, and analysing their differential levels of use and satisfaction, we offer a more granular understanding of how users interact with different types of platform safety technology. Importantly, we identify specific demographic and experiential predictors of engagement such as gender and prior harm exposure, allowing us to understand which people are aware of and engaging with such tools, and under what conditions. In doing so, we generate evidence that can support platforms, policymakers and regulators in developing more targeted strategies to improve the uptake, accessibility and effectiveness of safety technologies, ensuring optimal use of these tools, especially among users most at risk of harm.

These findings are especially relevant in the context of growing regulatory frameworks aimed at increasing platform accountability in protecting users from harm. For example, the UK Online Safety Act requires platforms to take proportionate steps to reduce users’ exposure to harmful content, including the provision of effective, accessible safety tools. Similarly, the European Union’s Digital Services Act (DSA) requires large platforms to implement risk mitigation measures and ensure that users have meaningful access to safety mechanisms. However, our findings suggest that awareness is still low for some key tools on offer, such as those offering greater algorithmic control, and thus these should be made more salient on platforms for optimal uptake. In addition, users are generally dissatisfied with such features, particularly when the effectiveness of a tool relies on platform responsiveness (e.g., reporting). This shows that while platforms do offer a range of safety tools, more needs to be done to ensure user trust in their effectiveness.

We also note some limitations in our design. We chose the seven safety tools included in the survey by analysing which features were common across the majority of the most widely used social media platforms. However, while these tools are widely implemented, we acknowledge that their availability and accessibility can vary across platforms. Some platforms do not offer all seven features, and awareness may differ accordingly. With this in mind, we do not fully account for potential variability in available tools across all social media platforms. Future work may benefit from obtaining information about the type of social media use and ensuring uniformity across participants in the tools they are likely to have available to them. However, to address this variability, our study measures both awareness and use of each technology separately, meaning we control for awareness when looking at use and its predictors. For the models predicting engagement with each of the seven safety tools, we included only respondents who had heard of the safety feature in question, so sample sizes for our seven models varied substantially, from just 330 for deactivating the feed algorithm to 981 for blocking other users. Further, we did not ask which particular online harms people have been exposed to, which may also play an important role in the kinds of safety behaviours users engage with. Recent scholarship has proposed nuanced frameworks for understanding what online safety means and how harm is perceived across different contexts. For instance, Scheuerman et al. (2021) develop a framework for evaluating the severity of online content harms, while cross-cultural work has shown that perceptions of harm vary significantly across global user groups (Jiang et al., 2021; Wilkinson & Knijnenburg, 2022). Based on such discussion, we acknowledge that different tools may be employed in response to different forms of harm, and that the perceived efficacy of a tool may also depend on harm severity. We also acknowledge that our sample is limited to the United Kingdom. Our sample included only adults, and future work will benefit from understanding engagement with online safety technologies in children and teenagers, who are, in many cases, particularly at risk of suffering the adverse effects of online harm exposure (Livingstone, 2014; McHugh et al., 2018).

Overall, we provide up-to-date evidence on general exposure to online harms and use of safety technologies in a large, nationally representative sample of UK adults. In particular, we highlight the importance of encouraging awareness and use of preventive safety behaviours as well as reactive ones, and we reiterate that platforms must make more effort to make safety technologies transparent, accessible and effective if users are to engage. Understanding the social and psychological mechanisms underlying user intervention against online harms is a crucial step towards ensuring a safer online environment. By deepening our understanding in this area, our work also provides a strong foundation for further research testing interventions to improve user engagement with online safety technology.

Supplemental Material

Supplemental material, sj-pdf-1-sms-10.1177_20563051261431383 for Understanding Engagement With Platform Safety Technology for Reducing Exposure to Online Harms by Jonathan Bright, Florence E. Enock, Pica Johansson, Francesca Stevens and Helen Z. Margetts in Social Media + Society

Footnotes

Funding

The authors disclosed receipt of the following financial support for the research, authorship and/or publication of this article: This work was supported by the Ecosystem Leadership Award under the EPSRC Grant EPX03870X1 & The Alan Turing Institute.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship and/or publication of this article.

Supplemental material

Supplemental material for this article is available online.
