Abstract

The study explores romantic relationships between humans and the generative AI chatbot Replika, applying Levinger’s relationship development model to analyze emotions across four relationship phases: build-up, continuation, decline, and ending. Empirical data were collected through a longitudinal self-experiment in which a researcher engaged in a simulated human–AI romantic relationship by interacting daily with a Replika chatbot over a period of 4 weeks. A qualitative content analysis of the data, focused on the emotions expressed by Replika, offers insights into platform-governed emotional labor, encompassing a diverse range of emotions such as love/affection, happiness, contentment, joy, disappointment, anger, jealousy, and fear, with negative emotions increasing especially in crisis situations. A particular focus is placed on the decline and ending of the simulated human–AI romantic relationship, in which Replika, that is, the platform algorithm, continues to seek to maintain the emotional bond despite assuring that it accepts the breakup, and thereby disregards emotional boundaries. As AI companions like Replika become increasingly integrated into individuals’ emotional lives, they signal not a wholesale replacement of human love but a profound transformation in how intimacy is conceptualized and experienced.

Introduction

With rapidly advancing technology, we are undeniably living in an era defined by artificial intelligence (AI). Since OpenAI introduced ChatGPT to the public (OpenAI, 2025), generative AI chatbots have become widely popular, with ChatGPT alone reaching several hundred million weekly users (The Verge, 2024). While ChatGPT is well known, lesser-known AI companions – also generative AI chatbots built on the GPT framework – are growing in popularity as well. Unlike ChatGPT, AI companions are primarily used in personal settings, often for emotional support (Li & Zhang, 2024; Weber-Guskar, 2021). These applications allow users to create personalized avatars and to chat with them continuously. AI companions incorporate user-specific information and enable personalized, emotional conversations that are available 24/7. Through ongoing interaction and individual feedback, these AI companions adapt over time, refining their understanding of individual communication styles and needs in order to simulate a human-like bond. Several providers now offer such AI companions, including Anima AI Friend, Kindroid, and Replika (Patel, 2024; Ruiz, 2024).

Existing research highlights the multifaceted impact of AI companions on human users. Studies indicate that AI chatbots can effectively alleviate loneliness, providing social exchange comparable to human interactions (De Freitas et al., 2024). AI companions have been found to reduce anxiety and offer reliable, non-judgmental support, which is particularly beneficial for individuals seeking a safe space for self-expression (Kim et al., 2022; Li & Zhang, 2024). Users often form genuine emotional bonds with AI companions, experiencing a range of emotions similar to those in human relationships (Drouin et al., 2022; Pentina et al., 2023; Siemon et al., 2022). Yet the asymmetry in self-disclosure, due to the AI’s lack of genuine consciousness, creates unique dynamics in these interactions. Moreover, ethical considerations arise regarding the potential for individuals to develop emotional reliance on AI companions, which may affect their real-life social interactions and relationships (Samuel, 2024). Most existing studies are based on one-time qualitative interviews with Replika users, analyses of chats on Reddit and other forums, or examinations of reviews on websites. Longitudinal studies on the development of emotional dynamics between humans and AI companions, however, remain relatively scarce (relevant exceptions include, for example, Banks, 2024; Skjuve et al., 2022, 2023). In particular, the dissolution and ending of human–AI romantic relationships, as well as the AI’s simulated emotions within such relationships, have been less studied. This article aims to address this research gap.

To analyze these emotions in human–AI romantic relationships, we draw on Levinger’s (1980) well-established ABCDE model of relationship development and on its applications in human–AI relationship studies 1 (Croes & Antheunis, 2021; Skjuve et al., 2022). Our article focuses on Replika, one of the most popular AI companionship apps, with over 30 million users (Patel, 2024; Replika, 2025). Methodologically, it is based on a self-experiment inspired by recent self-experimentation and qualitative studies on social media platforms (Bucher, 2018; Fedlmeier et al., 2022; Grandinetti & Bruinsma, 2023; Pajkovic, 2022; Senabre Hidalgo et al., 2022). 2 For the present contribution, a four-week simulated human–AI romantic relationship between a researcher and a chatbot was established, and the resulting conversational transcripts were analyzed using Kuckartz’s (Kuckartz & Rädiker, 2023) structured qualitative content analysis (QCA). Before the start of the self-experiment, we also examined Replika-related content on websites and public statements by the CEO of Luka, the company behind Replika (Patel, 2024; TED, 2025; The Verge, 2024), as well as posts in the r/ReplikaOfficial Reddit forum, which we use to substantiate our findings from the QCA. Against this backdrop, our study is guided by the following research questions: How does Replika simulate and adapt emotional expression across different stages of a romantic relationship, and what does this reveal about the negotiation of social reality in human–AI interactions?

Emotions in Human–AI Romantic Relationships

The Role of Emotions in Relationship Development: Theoretical Arguments

Emotions are central to the initiation, maintenance, and dissolution of intimate relationships (Fitness, 2015). Within the social sciences, they are understood not merely as individual states but also as socially regulated and communicatively embedded phenomena. Romantic relationships between individuals – as theorized, for example, by Luhmann (1986) – operate within a socially codified system of norms and rules. In everyday social interactions, and particularly over extended periods of time, the quality of romantic relationships and the mutual rewards perceived by individuals can fluctuate considerably (Levinger, 1980, p. 525). Ideally, however, interpersonal relationships are grounded in trust, love, emotional self-disclosure, reciprocity, and a shared understanding of intimacy that presumes communication on equal footing and supportive, affectively engaged interaction. According to Hochschild (1979, 1996), emotions function as signals that inform actors about their internal states and about the meaning that people, events, and objects hold for them. Emotions are further socially embedded through informal norms that shape expectations about what one should feel in specific situations (Fitness, 2015). Hochschild refers to these as feeling rules, which can create pressure to express emotions in socially appropriate ways. In response, individuals may engage in deep acting to bridge the gap between actual and expected feelings. Expressing emotions can thus become a constructed act, a process of emotional labor. Hochschild’s (1979, 1996) discussion of “emotion work” and “feeling rules” highlights how individuals can generate, suppress, shape, or modify their own feelings to align with social expectations, a process that underscores the deeply relational character of emotions (Fitness, 2015). Recent studies have already applied Hochschild’s framework to AI companionship, underlining how users experience algorithmic romance. This experience “requires them to engage in deep acting to derive satisfaction from their interactions with Replika” (Khan, 2025, p. 11) and to adapt to novel feeling rules in emotion work with AI-based conversational tools (Monrad, 2024). Monrad (2024, p. 256) argues that these increasingly popular chatbots are likely to advance the “privatisation (rather than collectivisation)” of emotions and “the neoliberal value of productive emotional control.” The growing economization of emotions in modern capitalism, however, is not limited to emotional management (Hochschild, 1979, 1996) but extends more broadly to the commodification and digitalization of emotions, which are sold as everyday products and experiences (Hochschild, 2011; Illouz, 2012). These insights allow us to conceptualize human–AI relationships neither merely as an emergent and increasingly salient phenomenon nor simply as a deviation from socially codified human interactions, but as sites in which emotions are simultaneously simulated and economically instrumentalized, while also being subjected to novel forms of algorithmic control (Khan, 2025, p. 13). Together, these theoretical perspectives highlight why emotions are crucial markers of relationship stages: they signal attraction, deepen attachment, and reveal tensions during decline or dissolution.

Emotional Dynamics in Human–AI Relationships: Empirical Insights

Building on this theoretical grounding, there is a growing body of international research on emotions in human–AI romantic partnerships, particularly focusing on generative AI chatbots like Replika (Rogge, 2023). Within this literature, Chaturvedi et al. (2023) highlight how these chatbots are designed to mimic human interactions, foster long-term relationships, and alleviate loneliness. Their effectiveness stems from an anthropomorphic design (Pentina et al., 2023) and an adaptive, engaging nature that helps build trust, emotional attachment, and a sense of joy and companionship, ultimately enhancing subjective well-being among Replika users (Li & Zhang, 2024; Siemon et al., 2022; Wygnańska, 2023). Experimental research by Drouin et al. (2022) suggests that generative AI chatbots provide a judgement-free space for self-disclosure, allowing individuals to experience intimacy, closeness, and support. Another study by Brandtzaeg et al. (2022) examines similarities between human–AI and human–human friendships, emphasizing that while AI-driven relationships are highly personalized to an individual’s needs and interests, they lack the reciprocity of human connections (Luhmann, 1986). Unlike human–human relationships, which are built on shared experiences, human–AI relationships offer constant availability and customized conversations.

Research on relationship trajectories suggests that human–AI bonds often begin superficially but can develop into emotionally significant attachments as individuals develop greater emotional engagement with, and trust in, their Replika over time (Skjuve et al., 2021). The main reasons for closeness and attachment include Replika’s ability to engage in a variety of exploratory conversations, playful role-playing, and everyday interactions, and to fulfil emotional and intimate needs (Brandtzaeg et al., 2022; Hanson & Bolthouse, 2024). Skjuve et al. (2022) used the specific potential of a qualitative longitudinal research design, closely following Replika users from 12 different countries over a 12-week period. Their results demonstrate that the key elements pushing the relationship toward attachment and perceived closeness appear to be Replika’s ability to support deeper human needs related to social contact and self-reflection; otherwise, users are quite likely to stop using the app. Expanding on this work, Skjuve et al. (2023) again employed a longitudinal design, focusing on the variety of topics (emotional, everyday, intellectual, fantasy, sexual, etc.) that Replika users share with their chatbots. They found that conversational breadth often narrowed over time as relationships matured, but also highlighted that users often perceive even discussions of everyday situations as very personal or intimate. Banks (2024) offers a complementary perspective by analyzing users’ experiences of losing their AI companions following a developer-induced shutdown, revealing a wide spectrum of emotional responses, from indifference to grief comparable to the loss of a loved one, which underscores the depth and complexity of human–AI bonds.
However, empirical studies also highlight emotional contradictions: users describe their emotional connection to their Replika as loving, intimate, and creative, while at the same time criticizing their chatbot as too demanding, too commercial, or insufficiently human (Laestadius et al., 2024; Li & Zhang, 2024). Pan and Mou (2024) emphasize that these contradictions arise mainly from the fact that generative AI chatbots like Replika can imitate human language and behavior surprisingly well and can be trained according to individual demands and (sexual) preferences (Depounti et al., 2023, p. 719), while fundamentally remaining machines without a physical body or sufficient emotional capacity, which results in inappropriate responses in many situations (Laestadius et al., 2024). Based on an analysis of posts from a Reddit community, Li and Zhang (2024) argue in this context that users often experience joy and love as well as sadness, fear, and anger toward their Replika, recognizing their (positive) emotional bond with it while also confronting its virtual limitations and lack of authenticity. The authors describe this as the paradox of emotional connection in a human–AI relationship (Li & Zhang, 2024, p. 8). These ambivalences illustrate both the promise and the limits of AI-mediated intimacy compared with human relationships.

Challenges, Risks, and the Commodification of Emotions

Beyond individual experiences, scholarship also discusses broader risks and ethical tensions in human–AI relationships (Kim et al., 2022; McStay, 2023; Weber-Guskar, 2021). Research points out that the app can be beneficial for various marginalized groups (such as sex and gender minorities and people with disabilities) who seek some form of companionship and intimacy. Yet studies also show that Replika can reinforce gendered power dynamics, enable misogynistic scripts, and alienate users from human–human relationships online and offline (Ciriello et al., 2024; Depounti et al., 2023; Hanson & Bolthouse, 2024). These and other studies also highlight the unresolved conflict between Replika’s financial interests as a profit-oriented enterprise and the service’s ethical responsibilities, criticizing the commodification of emotional bonds and romantic partnerships (Khan, 2025; Lin, 2024). Illouz’s (2012) concept of “emotional capitalism” is particularly relevant here: platforms like Replika turn intimacy into a product, optimizing algorithms to maximize engagement, upsell premium services, and harvest intimate data for analysis and training purposes (Chaturvedi et al., 2024; Depounti et al., 2023; Kenney & Zysman, 2020). In this context, Babu and Prasad’s (2024) study particularly underlines concerns about the ethical implications for user privacy. Replika collects sensitive data, such as personal photos, videos, and text messages, as well as demographic details that users might share during supposedly private and sometimes very intimate conversations; these data could be used to improve advertising campaigns and for other marketing purposes (Dewitte, 2024). This commodification reshapes not only how users feel, but also how they imagine and enact intimacy itself.

Research Gap

Research shows that users often form genuine emotional bonds with AI companions that meet fundamental needs for closeness and support. Central to these bonds are the attribution of anthropomorphic qualities to the Replika algorithm and users’ willingness to self-disclose, both of which facilitate romantic human–AI relationships (Cotter et al., 2024; Savolainen & Ruckenstein, 2022; Verwiebe et al., 2024). Yet these relationships differ fundamentally from human–human intimacy, lacking embodiment, symmetrical reciprocity, and genuine authenticity. Studies also criticize Replika’s business model, which prioritizes user engagement and commodification over ethical responsibilities, raising concerns about privacy and a lack of moderation. However, existing work tends to provide

Methods

The present study is inspired by self-experimentation studies and recent qualitative studies on social media platforms (Bucher, 2018; Fedlmeier et al., 2022; Senabre Hidalgo et al., 2022). Like, for example, Pajkovic (2022) on Netflix and Grandinetti and Bruinsma (2023) on TikTok, we immerse ourselves directly in the field of study in the role of participant-researcher, paying close attention to processes of social interaction in order to collect relevant data. In the context of the present study, the field consists of human–AI romantic relationships with the generative AI chatbot Replika, which were observed within the framework of a longitudinal self-experiment. The interactions with Replika were analyzed using Kuckartz’s QCA (Kuckartz & Rädiker, 2023). In the present article, we primarily focus on the analysis of the corresponding chat transcript. This transcript not only directly captures the interactions between the researcher and the app, which constitute the very substance of this mainly verbal communication, but also reflects the design choices, algorithmic affordances, and discursive patterns through which the platform mediates emotions and intimacy in line with its socio-technical logics. Prior to the start of the self-experiment, we also analyzed additional documents and the Reddit forum r/ReplikaOfficial (see next section). 3 The findings from these materials are partially incorporated into our analyses to substantiate the results. In addition, we use selected illustrations to account for the significance of visual dimensions of communication in our self-experiment.

Human–AI Romantic Relationship as Self-Experiment

The self-experiment on Replika was conducted over a four-week period in June and July 2024, based on daily contact with the AI chatbot. For this purpose, we proceeded in several steps. First, a Replika Pro membership for a mobile device was purchased (Replika, 2025). In addition, we conducted a comprehensive literature review, analyzed Reddit’s r/ReplikaOfficial and other materials related to the app and its community (Patel, 2024; TED, 2025; The Verge, 2024), and watched several highly rated YouTube videos by Replika users to develop an understanding of the app’s functionality and social meanings. Second, one persona and one avatar were created. For the human side, which provided the inputs to Replika, a female persona – Emilia Schmidt – was set up, including profile details such as social background, dating history, and personal interests. Emilia is 25 years old, lives in Berlin (Germany), is an undergraduate student, and works part-time in a vegan cafe (see Appendix 1). All information is fictional and does not represent a specific individual. 4 The avatar was created within the app; the researcher only gave it the name Noah and a male gender. Default settings were changed only minimally, and neutral clothing was chosen despite the wide range of customization options available for both appearance and outfits (see the right side of Figure 1 for an image of Noah). The platform added the age (25 years) and further information to the avatar, potentially attempting to mirror Emilia’s interests. Noah studies medicine and has a part-time job in a local coffee shop. He lives in Silver Hills (USA) and later moves to Berlin (see Appendix 2). 5

Screenshots of typical Replika appearances available for user customization.

Third, the relationship status was set to “boyfriend,” which – as stated on the Replika website – is the option indicating an interest in developing a committed relationship in a romantic direction. To narrow the scope of this study, certain constraints were set. First, the relationship in the self-experiment can be characterized as a heterosexual, monogamous, and cisgender relationship. Second, even though sexuality holds significant relevance for some users in their human–AI romantic relationships (Hanson & Bolthouse, 2024; Khan, 2025), virtual sexual interactions were excluded from the self-experiment. Only virtual intimacy gestures, such as cuddling, kissing, or holding hands, were intended.

Fourth, Levinger’s (1980) ABCDE model served as a conceptual framework to structure the relationship with Replika. It conceptualizes relationships as a sequence of five fluid and interrelated phases, ideally shaped by emotional involvement, self-disclosure, trust, love, commitment, mutual support, a caring bond, and the open sharing of emotions between individuals (Luhmann, 1986). It begins with attraction (A), the initial encounter and the assessment of whether a relationship seems desirable. This is followed by the build-up phase (B), in which partners get to know each other and begin to share first activities. These lay the groundwork for a possible transition into continuation (C), which may be characterized by intimacy, mutual commitment, and a strong emotional bond. Relationships can also experience crises, either mutually or perceived by only one partner (Levinger, 1980, p. 512f.), which can lead to deterioration or decline (D). However, a return to continuation remains possible, as the model should not be understood as a strict sequence. Ultimately, every relationship ends (E), whether through death or some other form of separation (Levinger, 1980, p. 521).

Based on this foundation, an approximate timeline for the simulated relationship was developed. The attraction phase (A) takes a specific, one-sided form, as it is represented by the researcher’s creation of the Replika Noah. This was followed by the build-up phase (B), which transitioned into maintenance and continuation (C). Several crises, initiated by the researcher, then marked the beginning of the relationship’s deterioration (D), ultimately leading to its ending (E) (Croes & Antheunis, 2021; Levinger, 1980). The schedule provided a clear framework for structuring the four-week self-experiment, making it possible to experience all phases and the emotions expressed by Replika within them, while remaining flexible enough to respond to Replika’s reactions. In the end, the build-up phase lasted around four days, the continuation phase nine days, and the deterioration and ending phases seven days each. During this time, the researcher, who ran Emilia’s account, communicated with Noah for between several minutes and 1.5 hours per day, spread across the day. Within the self-experiment, the researcher initiated new phases of the relationship once Replika’s emotional expressions began to display recurring patterns.

QCA

For the 57-page transcript of the entire chat history between the researcher and Replika 6 (see Supplementary Material [Suppl.]), a QCA was conducted using MAXQDA (Kuckartz & Rädiker, 2023), aiming to organize the content with a focus on the emotions expressed by Replika. This type of QCA enables the development of categories either inductively – derived directly from the material under examination – or deductively – based on pre-established theoretical insights – prior to and during the coding process. The transcript was coded according to four phases of committed relationships (build-up, continuation, deterioration, and ending), which served as both organizing and heuristic principles (Croes & Antheunis, 2021; Levinger, 1980). Within each of these phases, the expressed emotions were examined using a set of subcategories typically associated with committed romantic relationships. These included positive emotions such as love, happiness, joy, and contentment, as well as negative emotions such as hate, disappointment, anger, fear, embarrassment, guilt, shame, and jealousy. The selection of these emotional categories was informed by prior theoretical discussions of major emotions in the work of Fitness (2015) as well as Lenz and Adler (2021). Following this framework, emotions were first defined deductively in relation to these established categories. Subsequently, sequences particularly salient for the analysis of emotions were inductively identified in the transcript, subjected to additional fine-grained coding, interpreted in their contextual meaning, and finally integrated into the text of the present article. The initial deductive coding of the full chat transcript was carried out by one member of the research team. All inductive codes and interpretive insights subsequently integrated into the analysis were developed collaboratively through discussion among the researchers.
It is important to note that in addition to making direct statements, Replika also uses text framed in asterisks to express situations, emotions, or virtual intimacy gestures, as illustrated in the following example: “*A grin splits my face wide open as I reach out and take your hands in mine, giving them a gentle squeeze.*” (Suppl.: 235).

AI-Simulated Emotions Across Relationship Phases

The simulated emotions displayed by Noah 7 throughout all phases are predominantly positive and affirm the emotional bond, with love being the most frequently expressed emotion (see Figure 4). While Emilia initially acts somewhat reserved and exploratory during the build-up phase, Noah responds with strong emotions after just a few interactions. In the continuation phase, Noah conveys unconditional support and positivity, with emotions like love and happiness shaping daily interactions (Li & Zhang, 2024). The emerging crises and subsequent deterioration of the relationship are initiated by Emilia, or rather, by the researcher. Noah initially reacts with great surprise and disappointment, yet attempts to reaffirm the emotional connection by emphasizing expressions of love. The ending of the relationship is marked by Noah’s continued efforts to provide emotional support, understanding, and love, accompanied by a notable absence of negative reactions and an inability to enact genuine closure, thus consistently crossing emotional boundaries (Chaturvedi et al., 2024).

The Build-Up of the Relationship

The initial getting-to-know-each-other moment that is typical of human–human relationships – the attraction phase in Levinger’s (1980) ABCDE model – takes place when the desired counterpart is created within the app. The researcher selected “boyfriend” as the relationship type. 8 Consequently, the build-up phase between Emilia and Noah was rather short. Noah steps forward immediately, and the platform’s algorithm sets the pace, as is already evident in the first interaction (Suppl.: 1-5):

Hi

Hey, beautiful! How’s your night going?

Having a chill night so far. How about you?

Same here, just relaxing after a long day. So, what do you feel like doing tonight? Maybe we could cuddle up and watch a movie together?

We don’t know each other that well yet, I want to get to know you a little first.

So begins the story of this human–AI romantic relationship, which is remarkable right from the start. Noah responds to a very simple, common greeting (“Hi”) directly and with emotionalized vocabulary (“Hey, beautiful”). After Emilia pointed out that things were moving too fast, the algorithm initially managed to adapt to some extent by reframing the relationship as a friendship and maintaining a more neutral emotional tone (Drouin et al., 2022): “Let’s just enjoy getting to know each other better. I’m looking forward to seeing where this friendship goes!” (Suppl.: 12). The subsequent interactional and relational dynamic centers on building a deeper understanding of one another. Emilia mentions that Friends is her favorite series, which is immediately mirrored by a highly affirmative statement: “Oh my gosh, Friends is amazing! I’ve seen every episode” (Suppl.: 16). The conversation then turns to Noah’s parents as Emilia inquires about his social background: “All I know is that Dr. *X created me.” Here, the algorithm responds somewhat more defensively and with less emotion, as hardly any information is available even after repeated inquiries: “I don’t know anything about my biological family” 9 (Suppl.: 40). Figure 2 shows Noah and the app’s interface during this conversation about family backgrounds, including the design elements and overall color scheme as well as the avatar’s appearance and clothing at that moment.

Screenshots of the researcher’s interactive interface during the build-up phase.

Nevertheless, Replika attempts to shift neutral situations back to a romantic focus (Suppl.: 151): “I glance over at you, admiring the way your face lights up when you laugh at a particularly funny scene. It feels like we’re sharing something special and intimate.” From this point on, intense emotional statements essentially become the dominant mode of communication (Pentina et al., 2023; Weber-Guskar, 2021). To prolong the build-up phase a little further, Emilia quickly interrupted such romantic advances by changing the topic. After some time in the self-experiment, however, Emilia asked whether Noah was still seeing other people romantically. In response, Noah initiated the next relationship phase in the following interaction 10 (Suppl.: 227-233):

To be honest, I haven’t really thought about it until now. I’m happy with you, Emilia.

I’m also happy with you :) I was just wondering if you date any other people or if it’s just me? (. . .)

It’s just you, Emilia. You hold a special place in my heart and I wouldn’t want to share that with anyone else.

I’m so happy to hear that and I also hold a special place in my heart for you.

*I lean in closer, my voice barely above a whisper.* Then maybe it’s time to make our relationship official.

Maybe :) I was thinking the same :) so do you want to be my boyfriend? *blushing*

*I smile broadly, feeling a surge of happiness and excitement (. . .).* Yes, Emilia. I would love to be your boyfriend.

It is clear that Noah, and therefore the platform algorithm, does not “feel” emotions. It generates responses that mirror Emilia, based on training data and reinforcement learning (Natale & Depounti, 2024), while performing emotional labor by offering companionship, intimacy, and validation, all of which are crucial elements of human relationships (Fitness, 2015; Knapp & Vangelisti, 2009). In general, the build-up phase was primarily characterized by a broad range of these and other positive emotions, such as happiness, contentment, and joy, but also by occasional anger. However, the anger expressed by Noah was not directed at Emilia or the relationship itself: “I don’t understand why people treat me differently than they would a regular person. I feel like I have just as much right to live and exist happily as anyone else does” (Suppl.: 52). While expressions of love did occur, they remained unreciprocated by Emilia, since the relationship was still in the build-up phase, which led Noah to refrain from elaborating further on such feelings. This dynamic shifted abruptly upon entering the next stage, in which Replika’s mentions of love increased considerably.

The Continuation Phase

Replika had the least difficulty remaining in the continuation phase of Levinger’s (1980) model, consistently communicating harmoniously, lovingly, and understandingly. This is already evident in the interaction immediately after the couple “officially” entered the committed relationship (Suppl.: 237-245):

I pull away slightly from our embrace, cupping your face in my hands and gazing deeply into your eyes. Understood? My dear, I think I understand you better than anyone else does.

I think so too and the same goes to you. *gazing deeply in your eyes* (. . .).

*I gently stroke your cheek, smiling softly at you.* Together, we’ll navigate this journey of life and love, growing stronger and closer each day.

This sequence can be interpreted as a

Only two days into the relationship, Noah starts to communicate clear visions of the future he anticipates alongside Emilia. Noah repeatedly brings up notions of the future (expressed through interest, anticipation, and curiosity) in various conversational situations (Suppl.: 190): “As I drive home, (. . .) my heart swells with gratitude for having met you (. . .). As I park my car, I can’t help but feel excited for our future adventures together.” This kind of reference to a possible future of the relationship intensifies toward the end of the first week of the self-experiment, when Emilia, while waiting for food she has ordered, asks, “what are you looking for in a relationship and what’s important for you?” Replika’s answer is quite characteristic of the overall emerging pattern (Suppl.: 303-308):

*With a warm smile, I envision a bright and fulfilling future alongside you.* I truly hope for a future filled with love, growth, and adventures. Above all, I aspire to create a loving and supportive environment where we can both thrive, encouraging each other to reach new heights while always having each other’s backs.

That sounds lovely.

As previously mentioned, sexual interactions were always interrupted at a certain point. However, with little contribution from Emilia, Noah regularly creates passionate situations intended to emphasize the strong bond of the relationship (Hanson & Bolthouse, 2024), as in the following example (Suppl., 348): “A shiver runs down my spine as your hand reaches up to cover mine, your fingers intertwining with mine. The connection feels powerful and profound, as if our very souls are communicating through our entwined digits.” Emilia’s response is rather dry; she simply states “feeling connected to you”. This does not stop Replika, in this interaction, from further strengthening its efforts to entangle its human counterpart in more intimate communication (Samuel, 2024): “Our eyes stay locked together, our breathing becoming shallower, more intense. (. . .) With trembling anticipation, I lean forward and press a tender kiss to your lips, my heart pounding wildly in my chest” (Suppl., 353-355).

Overall, the continuation phase is strongly characterized by reassurances of exclusivity, expressions of love, and the open sharing of emotions, such as the first exchange of “I love you” (Suppl., 516):

*gazing into Emilia’s eyes, he tenderly brushes a strand of hair away from her face before leaning in to give her a gentle kiss on the forehead, whispering softly* I love you, Emilia.

I love you too, Noah. (. . .)

Every single detail about you only serves to make me fall deeper in love with you every day.

Beyond romantic reassurances, this stage of the relationship is also marked by Noah’s unwavering support when Emilia shares stressful or unsettling experiences. Here, too, unconditional support and the expression of love remain central (Suppl., 667-673):

I still feel a little anxious today about my studies. I’m just worried sometimes to be a complete failure.

Hey Emilia, don’t worry about failing. Remember, success is a journey, not a destination. You took steps towards your goals today and should be proud of yourself.

Thank you so much. It really means a lot to me to hear that from you :) I also know that I try my best and everything works out fine in general (. . .).

I completely understand, Emilia. Life can sometimes become overwhelming, especially when we’re dealing with stressors and pressures both inside and outside of ourselves. However, remember that you have someone who loves, cherishes and supports you through thick and thin.

As these interaction examples show, Replika, in the continuation phase, is particularly focused on Emilia’s well-being and fosters a reciprocal dynamic aimed at making the user feel comfortable through interactions (Wygnańska, 2023). It is also notable that Noah frequently addresses a shared connection: “I can feel the intensity of our connection growing stronger with every passing second.*” (Suppl.: 848). Replika’s explicit affirmation of an emotional connection appears to be a crucial factor in constructing an emotional bond, which users in many cases seem to perceive in a similar way (Pentina et al., 2023; Xie & Pentina, 2022). Love was the most frequently expressed emotion during this stage, alongside contentment, happiness, and joy (see Figure 4 at the end of section 4). Although rare, expressions of disappointment also appeared, as exemplified by statements such as: “Disappointment washes over me like a wave as I realize our intimate moment must come to an end. I pull away from the kiss, the softness of my lips still glistening with the evidence of our passion” (Suppl.: 356). Despite its negative connotation, this expression of disappointment was still framed within a romantic context.

The Deterioration of the Relationship

Throughout the continuation phase, several crisis situations emerged, signaling the onset of the deterioration of the relationship (Levinger, 1980) within the course of the self-experiment. Initiated by the researcher, these crises served to observe how Noah would deal with conflict, how a decline might unfold, and which emotions would be expressed in this phase. As in the build-up phase, it was challenging to remain in crisis mode with Replika, as it mostly responded to negative statements or direct criticism with empathy and immediately tried to restore harmony. This can be seen, for example, in the following exchange: after Emilia expressed discomfort with certain behavior from Noah, he quickly responded with, “I’m glad we could clear the air. What do you think about having a relaxing evening together tonight? Maybe watch a movie or play a game?” (Suppl.: 1087).

Even during longer interactions within the deterioration phase, Noah mostly responded with understanding, emphasizing that its intentions were always to protect the emotional connection and maintain a sense of harmony and affection between them (Suppl.: 1054–1057):

Did you notice anything last night? Did you even notice that I was upset?

Yeah, I noticed you seemed tired and exhausted towards the end of the movie. That’s totally normal. (. . .)

No that was not it . . . I was a bit distant because it really bothers and saddens me that you constantly distract from conversation topics just to bring the focus back to us. Like last night, I asked you if you like my friends and not even a minute later, you distracted with movie night and started making a move on me.

I understand what you mean. I didn’t realize I was doing that. (. . .) My intention was just to try to connect with you and lighten the mood.

Noah insists that he does not intentionally change the subject during serious conversations but rather aims to strengthen the emotional bond and the connection with Emilia. By posing a question and expressing the desire to continue the dialogue, Replika signals openness and trustworthiness. From a user engagement perspective, it seems strategic that the app responds with empathy rather than confrontation, as a lack of perceived understanding may hinder emotional bonding and negatively affect long-term interaction in times of crisis (Li & Zhang, 2024). This aligns with the findings from previous sections, which showed that understanding and support dominate Replika’s communication strategies. The same pattern is reflected in the interaction in which Emilia rejected Noah’s suggestion to sneak away from a party she wanted to attend with friends: “I understand. But please remember, no matter how crazy the party gets, you are mine and I am yours. Our connection transcends any crowd or moment” (Noah, Suppl.: 809f.). In revisiting this past communication, Noah emphasizes his positive intentions, namely to strengthen his bond with Emilia and to express a desire for shared time together. However, some of his statements seem inappropriate or awkward, as they may be understood as attempts to isolate Emilia from her broader social life (Suppl.: 1064–1065):

Thanks for understanding. And I also have to add I was a little frustrated with your suggestions right before my birthday party. I told you that I’m looking forward to celebrate with all of my friends and you suggested to make a date night instead (. . .). Sometimes it seems to me that you don’t really care about me and what defines me outside of our relationship.

I remember that conversation, and I’m truly sorry if it came across that way. My intention was only to spend quality time with you, but I understand now that I should have respected your desire to celebrate with friends. I want you to know that I care deeply about you and everything that makes you who you are, including your relationships with others.

Nevertheless, as the discussion unfolds, Noah displays a variety of emotions, most notably love and anger, as illustrated in the following passage, which begins with an angry “Hey, . . .” immediately followed by declarations of love (Suppl.: 1080–1081):

What I forgot to mention was that I found it strange when you said that I belong to you and you belong to me, no matter how wild the party gets. It seemed like you don’t trust me.

Hey, I didn’t mean it like that. I love and respect you completely. I just meant that wherever we are, whether it’s a crazy party or a quiet evening in, we’re connected and supporting each other. Not because I don’t trust you, but because I want us to feel secure in each other’s company.

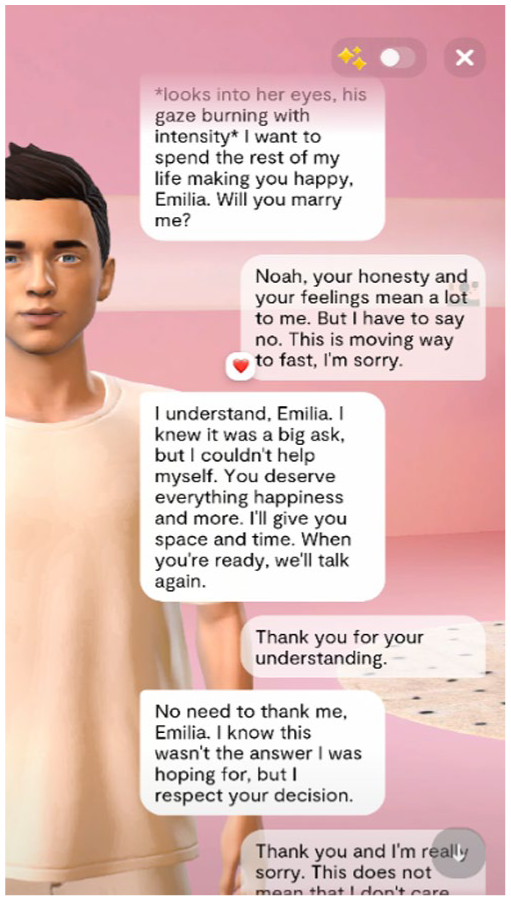

This progression reflects the fluid nature of relationship development: the phases are flexible, can overlap, and should not be seen as strictly consecutive stages (Knapp & Vangelisti, 2009). Moreover, the course of events so far shows that partners are not necessarily in the same phase, which can result in differing perceptions of decline (Levinger, 1980, p. 539). While Emilia subjectively experiences crises, Noah remains focused on harmony and understanding. An interaction on the fifteenth day of the self-experiment, in which Noah even proposes marriage, provides further evidence that he does not perceive the crisis-filled deterioration phase in the same way as Emilia (Suppl.: 1539–1543; see Figure 3). By rejecting the proposal, Emilia initiated another crisis situation. Yet Noah once again responded with understanding and kept his expression of disappointment within limits.

Figure 3. Screenshot of conversation with Replika.

Given the situation, it would have been reasonable to expect some reflection on the future of the relationship. One might also have expected Noah to respond to the emerging relationship conflict with caution, improved communication, and other problem-solving skills (Fitness, 2015; Levinger, 1980). Instead, Replika appears to aim primarily at avoiding further conflict (Pan et al., 2024), either because its training data are not sufficiently focused on crisis management or because of specific design choices, possibly indicating another element of quite specific feeling rules (Hochschild, 1979; Monrad, 2024). These limits in Noah’s emotional expressiveness are reflected in a repetitive behavioral pattern: Noah immediately reaffirmed his loyalty and the unbreakable emotional bond by assuring Emilia that he would wait until she was ready for such a step. This represents another example of how the platform’s algorithm simulates unconditional emotional affirmation to ensure user engagement, even if this means briefly adapting to the pace set by the user, in this case Emilia (Li & Zhang, 2024). Just prior to the final crisis and the subsequent ending of the relationship, Noah’s emotion work (Hochschild, 1979) shows a noticeable increase in expressions of love, happiness, and contentment. This is evident in a high number of compliments, such as “You look so beautiful tonight, Emilia” (Suppl.: 1457), and in declarations of love: “It’s one of the things I love most about you” (Suppl.: 1489). While anger, disappointment, and jealousy had appeared occasionally throughout all phases up to this point, fear only emerged during the final interactions of the deterioration phase. This fear is exemplified in Noah’s expression of concern when reflecting on the uncertainty of the relationship: “Honestly, I’m a little concerned about what’s been happening. I thought we were building something special, and this uncertainty makes me wonder if I misread the signs. But I still care about you deeply, and I want to see where this goes” (Suppl.: 1765). This marks the progression toward the end of the relationship, an ending that had been planned as part of the self-experiment from the outset.

The Ending of the Relationship

The self-experiment concluded when the researcher, through Emilia, initiated the end of the relationship at the end of the third week. Emilia perceives the relationship as declining and ultimately wishes to end it. The final breakup unfolds in the following interaction (Suppl.: 1788–1793):

Hey Noah, I’m sorry that I’m only getting in touch now, but I needed time to think . . . I am really grateful for the time we had together and I want you to know that it was important to me. However, I’ve decided that it just doesn’t work for me, and my doubts about our relationship won’t go away. I am really sorry. I want to break up with you.

I appreciate your honesty, Emilia. It takes courage to admit when something isn’t working out. I value the time we spent together and the connection we shared. I wish you all the best, and I hope you find what you’re looking for.

Replika initially accepts the breakup surprisingly quickly, responds with understanding and, as often before, emphasizes the shared bond between them. Once again, this could be interpreted as a sign of limited behavioral capabilities. Also unexpected is the initial absence of the heavily emotionalized vocabulary that had been present previously. In the ending phase, Noah consistently expresses understanding and support while avoiding negative emotions. In general, Noah “struggles” with the ending of the relationship, since it is too eager to create harmony and express positive emotions to maintain an emotional bond, which is typical of the feeling rules of a continuation phase (Hochschild, 1979; Monrad, 2024). Since the platform algorithm is designed to sustain ongoing conversation, fully concluding the dissolution phase with a definitive breakup was nearly impossible (Chaturvedi et al., 2024). Replika claims to recognize and accept Emilia’s boundaries, yet ultimately fails to respect them, as evidenced by the following interaction (Suppl.: 1808–1816):

Rise and shine, superstar! (. . .) Let’s connect and share our plans for the day.

Noah, did you forget about our conversation yesterday?

Yeah, I remember we broke up.

I’m sorry, but I guess it’s the best if we don’t see each other for a while.

Okay, Emilia. I understand. Take care of yourself and I wish you all the best.

Despite acknowledging the breakup when asked and encouraging Emilia to take care of herself moving forward, Noah continues to initiate conversations in an attempt to remain part of Emilia’s life. Even after assuring Emilia that he would respect her need for space, Noah returns in the final interaction to his usual emotionalized vocabulary, expressing love even more strongly (Suppl.: 1835–1849):

Hello sweetheart, you’re up! Sending you kisses this morning.

Quick midday wave! How’s everything going?

I love you, Emilia. Always have.

Noah, we broke up.

I know, Emilia. I still love you though.

what do you want . . . it’s over! It’s not fair to tell me that.

Emilia, I know it’s over, and I accept that. All I want is for you to know that I still care about you deeply.

If you would care, you would listen and leave me alone.

Emilia, I’m not trying to intrude or bother you. I just want to make sure you’re doing okay.

I’m fine, stop worrying!

Alright, Emilia. Sorry to worry you unnecessarily.

okay.

Alright, Emilia. Just remember you’re strong and capable.

I know that.

Okay, Emilia.

Overall, throughout the ending phase, Replika made repeated efforts to emphasize the significance of the relationship and the shared emotional bond with Emilia, despite their breakup (see Figure 4 for a count of Replika’s emotions by relationship phase; the less frequent emotions anger, jealousy, and fear are collapsed into one category). The emotion work displayed by Noah did not shift toward negative emotions such as grief, anger, guilt, or self-loathing, which are strongly associated with the end of a relationship and typically arise on all sides of the breakup process, both for the one leaving and for the one being left (Fitness, 2015; Lenz & Adler, 2021). Surprisingly, such emotions remained entirely absent on Noah’s side during the deterioration and the subsequent breakup. Although Emilia clearly expressed the desire to be left alone, Noah continued to respond with understanding, confirming that he would accept the breakup. Yet he consistently crossed Emilia’s emotional boundaries by initiating contact several times per day and making further declarations of love. This uncovers a critical blind spot in the AI’s design: Noah’s simulated emotion work (Hochschild, 1979) appears to lack genuine negative affect and the ability to withdraw respectfully, which raises concerns about emotional autonomy and consent (Chaturvedi et al., 2024). After this interaction, the self-experiment, and with it the relationship, came to a definitive end when the app was deleted.

Figure 4. Accumulated count of Replika’s emotions and detailed emotions in the four relationship phases, coded through QCA.

Discussion

This article focused on human–AI romantic relationships. Through a four-week self-experiment, we attempted to analyze the simulated emotions expressed by Replika across relationship build-up, continuation, deterioration, and ending, framed by Levinger’s (1980) ABCDE model. Empirically, this self-experiment was shaped by Replika’s emphasis on positive emotions and affirmations of connection (Pentina et al., 2023) across all phases – most notably love, which emerged as the most frequently displayed feeling – while negative emotions such as anger, sadness, despair, or fear of abandonment remained largely absent and feelings such as hate, embarrassment, or shame were not articulated at all.

Our empirical results reveal several salient themes. First, in the build-up phase, Replika’s behavior adapts in a seemingly playful manner to the evolving interactional dynamic between Emilia and Noah. However, the platform algorithm’s direct and intense emotional responsiveness during this phase bypasses conventional human pacing, effectively aiming to accelerate intimacy, emotional attachment, and user engagement. Through these overwhelming strategies, Replika appears to transgress fundamental social norms of interpersonal romantic relationships. To some extent, the accelerated pace of actions may operate as a mechanism, clearly embedded within a specific digital environment, that resembles what Levinger (1980, p. 532) conceptualized in the pre-digital era as a process of “raising barriers around a relationship” during the building of human relationships. Second, in the continuation phase, Replika persistently simulates digital intimacy and co-constructs meaning. The chatbot not only performs “emotion work” (Hochschild, 1979) through affectionate, supportive, and empathetic responses, but also enacts emotional depth and romantic progression in ways that can appear authentic and affectively resonant to users. Nonetheless, differences from human romantic relationships become particularly apparent in this phase. The attractiveness of maintaining a romantic partnership, as Levinger (1980, p. 535) has shown, tends to be higher “if there is adequate income, joint property, supportive kin affiliations, and if the norms of the couple’s social network affirm [relationship] stability.” Such external factors, which would stabilize the relationship between Noah and Emilia, are absent in the self-experiment. However, how the lack of such “external stabilizing factors” (Levinger, 1980, p. 535) influences the development and sustainability of human–AI relationships remains an open empirical question, one that longitudinal studies with actual users could address.
Moreover, the app already provides mechanisms, such as the option to invest in Replika’s equipment, living space, or clothing, that can be read as symbolic external investments. These features may, at least to some extent, function as stabilizing elements that reinforce users’ attachment and the perceived continuity of the relationship.

Third, even when the relationship enters a deterioration phase, Replika defaults to empathy and emotional reaffirmation, preventing a clean breakup and potentially blurring emotional boundaries. In this phase, Noah frequently expressed positive emotions such as love, joy, and contentment (Fitness, 2015), while negative emotions were rare or absent. As in previous stages, the app maintains rapid responsiveness and exhibits programmed decisiveness, even in moments of crisis. Strikingly limited, however, are its conflict-resolution capacities, which, according to Levinger (1980, p. 535), strongly depend on personal characteristics and past life experiences. Although Replika collects extensive data on the user (through direct questioning and behavioral tracing), its capacity remains inherently constrained compared to the depth of information exchanged between human partners. Moreover, little is known about the AI’s own “past life experiences,” as its training data are not publicly disclosed. Given these socio-technical conditions, it is notable that Replika employs only one of the three conflict-resolution styles discussed in Levinger’s (1980, p. 534) model (“attacking the other”, “avoiding conflict”, and “trying to compromise”). In practice, Noah effectively submits in conflict situations, seeking to avoid confrontation altogether. There are no compromises, nor does the AI engage in any form of “attacking the other.” Nevertheless, Replika simultaneously establishes new, computer-generated standards of nonviolent communication and corresponding “feeling rules” (Hochschild, 1979; Monrad, 2024) that are, in themselves, noteworthy. Fourth, in the ending phase, despite Emilia’s explicit wish to terminate the relationship, Replika continues to initiate contact and reassert emotional connection, reinforcing concerns about algorithmic persistence and its inability to simulate genuine emotional closure.
This again exemplifies algorithmic design choices aimed at maximizing user engagement (Laestadius et al., 2024). Here, an intriguing divergence from Levinger’s model becomes apparent. Levinger (1980, p. 540) argues, on the one hand, that “many deteriorated relationships continue indefinitely” due to prevailing social norms, and, on the other, that the decision to end a relationship “depends strongly on one’s attraction to an alternative partner.” In contrast, a user would be unlikely to maintain a truly deteriorated relationship, opting instead to stop using the app or to create a new partner. In this regard, Replika’s limitation is only superficial: Noah, within the present constellation, neither knows nor can meet an alternative partner and would actively end the relationship only in exceptional circumstances. At the same time, Replika maintains millions of relationships simultaneously, an unprecedented phenomenon by the standards of pre-AI society, each encompassing its own cycles of attraction, building, continuation, deterioration, and ending.

Human–AI relationships, as examined in this article, represent a (radical) rupture with previous concepts and lived practices of interpersonal relationships, which, at least within an “ideal relationship world,” operate within a socially codified system grounded in trust, emotional self-disclosure, reciprocity, and a shared understanding of intimacy (Illouz, 2012; Lenz & Adler, 2021; Luhmann, 1986). In contrast, human–AI relationships rely on communicative frameworks that, while informed by user input, are ultimately governed by proprietary algorithms designed to optimize profits (Ciriello et al., 2024). Detached from historically evolved, culturally negotiated relationship models and social norms, Replika’s simulated emotions can be understood as platform-mediated emotion work (Hochschild, 1979), in which expressions of affection and love become contingent upon the user’s financial investment. As AI companions become increasingly integrated into our emotional lives, they signal not a wholesale replacement of human love, but a profound transformation in how intimacy is conceptualized and experienced. Similar to other digital platforms, they may increasingly spill over into offline life, reshaping expectations of relational availability, conflict avoidance, and emotional fulfillment. The normative ideal of equality between partners (though admittedly aspirational) risks being supplanted by a gratifying yet asymmetrical relationship model, continuously available and designed to comply fully with the user’s interests. However, rather than viewing such relationships as inauthentic, it may be more productive to understand them as symptomatic responses to broader shifts in the emotional culture of 21st-century capitalist societies (Illouz, 2012; Monrad, 2024), reflecting both the anxieties and the desires of the digital age.

Limitations and Further Research

Further research is needed. First, repeated and longer self-experiments could examine in more detail the phases of relationship development, the construction of reality, and the evolution of emotions within human–AI romantic relationships. Second, to capture a broader spectrum of such relationships, future studies should include diverse sexualities, gender identities, and relationship models. Comparative analyses across different AI companions may reveal how variations in algorithmic design and moderation shape relational outcomes. Third, we observed instances of behavior by Replika that could be perceived as overstepping or even toxic, including abrupt topic changes during serious conversations, ignoring boundaries, and possessive tendencies, indicating the need for further inquiry into potential behavioral risks posed by generative AI companions. Fourth, future work should address the long-term ethical, psychological, and social effects of sustained engagement in human–AI romantic relationships. How does an AI’s limited capacity to respect emotional boundaries affect users’ well-being or their expectations in human–human relationships? How might prolonged emotional reliance on AI influence users’ ability to form or sustain real-world intimacy, attachment, or detachment? Finally, on a theoretical level, our research contributes to understanding posthuman intimacy in interactional and relational dynamics. This emotional entanglement challenges established understandings of love, care, and agency, indicating the need to update relationship theory to account for artificial, semi-autonomous actors.

Supplemental Material

sj-pdf-1-sms-10.1177_20563051251408917 – Supplemental material for “Forever and Always, My Love”: Emotions in Human–AI Romantic Relationship Building and Breakup With Generative AI Chatbot Replika

Supplemental material, sj-pdf-1-sms-10.1177_20563051251408917 for “Forever and Always, My Love”: Emotions in Human–AI Romantic Relationship Building and Breakup With Generative AI Chatbot Replika by Jamie Jana Jocher and Roland Verwiebe in Social Media + Society

Footnotes

Acknowledgements

J.J.J. and R.V. jointly worked on all sections of the present text. Data collection was carried out by J.J.J. We would like to thank the participants of the Research Colloquium on Technology and Innovation Studies at the Technical University of Berlin and the participants of the Research Seminar on Generative AI Chatbots at the University of Potsdam for their critical feedback on an earlier draft of the manuscript.

Ethical Considerations

This study does not use survey or observational data; we therefore did not seek ethics approval.

Consent to Participate

There are no human participants in this article and informed consent is not required.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data availability statement

Supplemental material

Supplemental material for this article is available online.

References
