Abstract

The media landscape now contains a growing mix of real and synthetic videos, presenting either authentic or false content. Drawing on the heuristic–systematic model, we conducted a mixed-design survey experiment in China to examine how perceived technical quality and content familiarity influence individuals’ perceptions of and performance in detecting real and synthetic videos. Multilevel analyses based on participant evaluations revealed that both perceived technical quality and content familiarity were positively associated with perceived video realness and trust in the vlogger. Higher perceived technical quality improved detection accuracy for real videos but decreased accuracy for synthetic videos. In addition, the positive association between content familiarity and trust in the vlogger was stronger for synthetic videos than for real videos. These findings deepen our understanding of how heuristic processing shapes perceptions and discernment of potentially synthetic videos and offer practical insights for mitigating the risks associated with AI-generated visual deepfakes.

The rapid advancement of artificial intelligence (AI) has fueled the generation and dissemination of AI-powered synthetic videos, which have raised considerable concerns because of their hyper-realistic features (Hancock & Bailenson, 2021). Such videos include manipulated and synthetic audio-visual content, often characterized by imitations of real individuals. In the past few years, synthetic videos generated using face-swapping technology have gained traction, attracting views from users on Chinese platforms such as Douyin (Park, 2024). Unlike in some Western countries, where many deepfakes target political figures, most synthetic videos on Chinese platforms cover general topics, such as health, technology, and other areas of information sharing.

Regardless of topic, synthetic videos can lead to a variety of harms and risks, such as the spread of false information (Dobber et al., 2021) and financial scams (Noto, 2024), resulting in an erosion of citizens’ trust in the media and news (Vaccari & Chadwick, 2020). It is thus important to improve citizens’ resilience to mitigate the potential risks of synthetic videos. Although empirical studies on this topic are growing, most of them focus on Western societies such as the United States and European countries (Birrer & Just, 2024). Moreover, although automated detection models are being developed (e.g. Li et al., 2020) to identify synthetic videos, these tools may not be widely integrated into media users’ devices. In reality, viewers usually depend on their own senses to perceive and detect whether videos are synthetic (Köbis et al., 2021). Therefore, exploring how individuals’ direct-viewing responses and intuitions shape their perceptions and detection judgments is crucial. This research focus is in line with the call for an audience-centered solution in developing resilience to synthetic videos (Hancock & Bailenson, 2021).

According to the heuristic–systematic model (HSM), individuals form judgments through two processing routes: heuristic processing, which relies on simple cues in the message that activate corresponding heuristics, or mental shortcuts, for making quick judgments, and systematic processing, which involves careful and effortful evaluation of message content. In this research, we focused on heuristic cues, which have been shown to strongly influence individuals’ ability to detect information of questionable veracity (Köbis et al., 2021). Specifically, considering the unique features of online videos, we explored how perceived technical quality and content familiarity, as heuristic cues, shape audiences’ perceptions and detection judgments of videos.

The technical quality and individuals’ familiarity with the content of the video are two core factors that can affect people’s processing of videos. Some scholars have called for research to consider synthetic videos with varying levels of quality (Dobber et al., 2021; Peng et al., 2023). Research has revealed that individuals’ prior involvement with the video content elements could affect their detection performance and credibility judgments (Diel et al., 2024). Moreover, audiences may come across both real and synthetic videos containing authentic or false content. More research is thus needed on whether the process wherein viewers form perceptions differs across different video types.

Based on the HSM, we conducted a mixed-design survey experiment in China to examine how perceived technical quality and content familiarity affect individuals’ perceptions and detection judgements towards short videos with non-political topics. The findings contribute to our understanding of how heuristic processing influences individuals’ reactions to synthetic videos in Eastern countries.

Literature Review

Previous Studies on the Detection and Perceptions of Synthetic Videos

Previous studies have explored people’s detection and perceptions of synthetic videos. However, limitations in the stimuli used and the contexts studied mean that further research is needed. Some studies used videos from open-access datasets to examine individuals’ detection performance and found that laypeople’s detection accuracy ranged from a relatively low to a moderate level (e.g. Groh et al., 2022; Köbis et al., 2021). However, it should be noted that those videos contained little information, making it difficult to infer whether the findings from these studies could apply to videos containing richer content (S. Y. Shin & Lee, 2022). Moreover, synthetic videos can convey either authentic or false information. It is therefore important to include content-related factors, such as participants’ previous involvement with the content, when exploring people’s detection and perceptions.

Studies using videos with richer content (e.g. featuring public figures) have provided more nuanced evidence for the persuasive effects of synthetic videos. On the one hand, some studies (Hwang et al., 2021; J. Lee & Shin, 2022) found that synthetic videos are perceived as more credible than less rich modalities, such as text, implying that deepfakes have greater deceptive potential such that “what is seen is believed.” On the other hand, Hameleers et al. (2022, 2024) found that synthetic videos are not more credible or persuasive than disinformation in other modalities. This inconsistency may be partly attributed to the fact that most of these studies focused on political issues. Participants’ perceptions of politically related deepfakes are highly sensitive to factors such as their own partisanship, political interest, and familiarity with the politicians (Ahmed, 2021; Appel & Prietzel, 2022). There is little evidence regarding detection and perceptions of videos with non-political topics.

Another line of research has investigated the effectiveness of prebunking messages in cultivating individuals’ resilience to synthetic videos. Studies showed that pre-emptive messages such as false tags (J. Lee & Shin, 2022) and media literacy education (Hwang et al., 2021) could reduce individuals’ engagement and sharing intention with synthetic videos; however, a forewarning did not effectively improve participants’ detection accuracy when both synthetic and real videos were provided (Lewis et al., 2023; Ternovski et al., 2022). These studies offered noteworthy insights into improving detection performance from a prevention-oriented perspective. However, in the real-world context of individuals’ video consumption, such a pre-emptive message may be absent. Because people may encounter both synthetic and real videos in the digital information environment, it is imperative to examine how people process various cues presented in the videos and form judgments of the authenticity of the videos.

HSM

The HSM posits that there are two distinct routes (i.e. heuristic and systematic) underlying people’s information processing (Chaiken, 1980). The heuristic route is more intuitive and faster: individuals make judgments based on simple rules with less effort (Bohner et al., 1995). In contrast, the systematic route involves analytical processing based on deep reasoning (S. Chen & Chaiken, 1999). The HSM has been used in previous studies as a framework to understand how individuals perceive and distinguish false information (D. Shin et al., 2024; Sundar et al., 2021).

When processing misinformation, heuristic processing involves less reflection regarding the core aspects of messages, while systematic processing indicates a thorough evaluation of the validity and veracity of messages (Koch et al., 2023). The two processing routes can not only occur in parallel but also coexist in a way that one route lays the foundation for the other (S. Chen et al., 1999). Specifically, the heuristic processing can affect the formation of systematic processing (Maheswaran & Chaiken, 1991) and even bias the outcomes from the latter (Chaiken & Maheswaran, 1994). Individuals tend to first evaluate a message based on the heuristic route, which requires less mental effort (Chaiken et al., 1989). This aligns with the idea that because of limited cognitive capacity, people are more likely to rely on mental shortcuts (Fiske & Russell, 2010). In this study, perceived technical quality and content familiarity are two heuristic cues. This study aimed to investigate how these heuristic cues affect realness perception and authenticity judgements of both synthetic and real videos.

Synthetic Versus Real Videos

The current media landscape contains various videos, including both real and synthetic types with either authentic or false content. Whether and how audiences can discern real videos from synthetic content is a crucial question (Birrer & Just, 2024). According to the “realism heuristic” argument (Sundar, 2008), viewers are more inclined to trust videos because they mirror real-world experiences. Thus, video forgery could be a powerful contributor to the dissemination of false information (Weikmann et al., 2025). Through a series of experiments, Groh et al. (2024) found that individuals mainly rely on audio-visual cues to make their judgments, supporting the realism heuristic.

The current study aimed to examine viewers’ judgments towards synthetic and real videos. Without revealing the video nature, we had each participant watch and assess three types of videos (i.e. real videos with authentic information, synthetic videos with authentic information, and deepfakes with false information). We first compared people’s perceptions and detection accuracy between the first two types of videos, which differed only in whether the facial information was synthetic. We then explored the effects of perceived technical quality and content familiarity on perceptions and detection and investigated whether such effects varied between the two video types. In addition, we examined whether the findings were consistent for deepfakes with false information.

Individuals are not entirely defenseless against synthetic technology. An optimistic viewpoint indicates that the current synthetic technology is not able to completely deceive viewers (Lyu, 2020). Previous studies have provided empirical evidence for individuals’ resilience to synthetic videos. S. Y. Shin and Lee (2022) found that participants reported higher perceived credibility for real videos than for deepfake videos. Groh et al. (2022) found that participants were skilled at noticing flickering faces in synthetic videos. In a subsequent study, Groh et al. (2024) further showed that people performed better at distinguishing real from fabricated political speeches through auditory and visual modalities. Accordingly, it is reasonable to predict that individuals may notice unnatural manipulations in synthetic videos, leading to decreases in their realness perception and trust. Similarly, the detection accuracies for synthetic videos may be higher than those for real videos when other elements are consistent. When facing real videos, it is possible that individuals will experience doubt if they fail to detect such signs, leading to hesitancy in judgment-making (Groh et al., 2022). Thus, we propose the following hypotheses:

H1: Compared with real videos, people report lower (a) perceived video realness and (b) trust in the vlogger and have higher (c) detection accuracy for synthetic videos.

Perceived Technical Quality

The perceived technical quality of videos refers to “people’s perception of how much effort has gone into creating the videos, as well as the technical attributes of the videos” (G. M. Chen et al., 2017, p. 20). It concerns people’s perceptions of a video’s attributes, such as its resolution, definition, contrast, and sharpness (Chikkerur et al., 2011). Technical quality is crucial in shaping individuals’ judgments and perceptions regarding video modalities (H. Lee et al., 2010; Peng et al., 2023). A high level of technical quality of a video is associated not only with credibility and realness perceptions but also with the persuasiveness of the messages conveyed (Hautz et al., 2014; Slater & Rouner, 1996). People tend to regard modalities that better resemble the real world as more credible (Sundar, 2008). Thus, a high level of quality of the video may lead individuals to believe it is real.

Individuals’ perceived trustworthiness regarding other aspects can also be influenced by the perceived quality of the video. For example, exposure to a higher-quality news video will lead the audience to believe that the news organization is more trustworthy (G. M. Chen et al., 2017). In the context of online science news videos, perceived quality can enhance viewers’ trust in the presenter in the video (Michalovich & Hershkovitz, 2020). We thus predict that a higher level of perceived technical quality will also lead individuals to regard the videos as more credible and to place more trust in the vlogger. The following hypotheses are proposed:

H2: Perceived technical quality is positively related to (a) perceived video realness and (b) trust in the vlogger.

The positive association between perceived technical quality and perceived video realness and trust in the vlogger may be more pronounced for synthetic videos. When individuals view synthetic videos with low technical quality, evident unnatural manipulations may make them unlikely to develop realness and trustworthiness perceptions (S. Y. Shin & Lee, 2022). However, as technical quality improves, the absence of unnatural cues in synthetic videos may lead to higher perceived realness and trust, in a way similar to real videos. In other words, perceived technical quality may play a more decisive role in shaping video realness and trust in the vlogger for synthetic videos. Accordingly, the following hypotheses are proposed:

H3: Video type moderates the associations between perceived technical quality and (a) perceived video realness and (b) trust in the vlogger, such that these relationships are more pronounced for synthetic videos than for real videos.

Given its positive association with realness perception, perceived technical quality may affect detection accuracy differently for synthetic and real videos. For synthetic videos, perceived technical quality may negatively affect detection accuracy. Unnatural transformations in videos are key indicators of the synthetic nature of the content (Johnson & Johnson, 2023). These signs of incongruity are often associated with low technical quality. More importantly, they lead the audience to conclude that the videos are synthetic (S. Y. Shin & Lee, 2022). However, as technical quality improves, highly realistic synthetic videos are more likely to be mistakenly regarded as real because they have fewer obvious unnatural elements, lowering detection accuracy. This aligns with a previous study showing that people can be deceived by high-definition synthetic videos (Jin et al., 2023). Conversely, perceived technical quality may enhance detection accuracy for real videos. High-quality audio-visual content may reinforce the realism heuristic, leading the audience to judge the videos as real, improving the detection performance. Thus, we propose the following hypotheses:

H4: Video type moderates the association between perceived technical quality and detection accuracy, such that (a) for real videos, perceived technical quality is positively associated with detection accuracy, and (b) for synthetic videos, perceived technical quality is negatively associated with detection accuracy.

Content Familiarity

Regarding information-sharing videos, viewers’ prior familiarity with the content may play an important role in shaping their perceptions. According to the illusory truth effect (ITE), people are more likely to perceive a message as true when they have been exposed to it before (Hassan & Barber, 2021), which is supported by a line of research (e.g. Pennycook et al., 2018). If individuals are familiar with the content, processing becomes easier because it requires less mental effort. Under such accelerated processing, individuals may misinterpret processing fluency as a sign of authenticity and reliability (Ahmed et al., 2024). Such a familiarity effect may be more pronounced in the video context because richer modalities can more easily trigger prior associations with the content and strengthen confirmation bias (Sundar et al., 2021). The following hypotheses are proposed:

H5: Content familiarity is positively related to (a) perceived video realness and (b) trust in the vlogger.

The role of content familiarity may be more pronounced for synthetic videos. Synthetic videos contain more unnatural cues and manipulation signals, increasing audiences’ uncertainty and leading them to question the authenticity (Vaccari & Chadwick, 2020). When making judgments under uncertainty, people tend to rely heavily on heuristics (Raue & Scholl, 2018). Therefore, content familiarity may play a stronger role in affecting perceptions of trustworthiness for synthetic videos. Thus, we propose the following research hypotheses:

H6: Video type moderates the associations between content familiarity and (a) perceived video realness and (b) trust in the vlogger, such that these relationships are more pronounced for synthetic videos than for real videos.

The role of content familiarity in relation to detection accuracy may vary between synthetic and real videos. Individuals tend to perceive videos as real when they are more familiar with the content. Thus, content familiarity helps users make accurate judgments for real videos. However, individuals may be more prone to making false inferences regarding synthetic videos as their judgments are biased by their familiarity with the content. This is supported by previous studies (e.g. S. Y. Shin & Lee, 2022) showing that people are more likely to perceive synthetic videos as real if the topic and content align with their existing beliefs and knowledge. We propose the following hypotheses:

H7: Video type moderates the association between content familiarity and detection accuracy, such that (a) for real videos, content familiarity is positively associated with detection accuracy, and (b) for synthetic videos, content familiarity is negatively associated with detection accuracy.

Method

An online survey experiment with a one-factor (video type: real videos with authentic information, synthetic videos with authentic information, and deepfakes with false information) mixed design was conducted in China between May and June 2024.

Materials

For real videos with authentic information, we selected and downloaded 12 short videos from Chinese platforms such as Weibo and Douyin, each lasting approximately 60 s. The content of these videos involved vloggers sharing knowledge on daily topics such as health care and technology. During the selection, we only chose videos whose information and content had already been verified as authentic by authoritative organizations or research. To avoid confounding effects, we excluded popular videos with a high number of likes or shares. Moreover, we concealed any labels or texts in the videos that could reveal the vlogger’s identity (e.g. name or occupation description). We numbered these real videos from 1 to 12.

We then created synthetic versions of these videos by replacing the faces in the original videos with uploaded images. We used a publicly available tool, Qianhe (https://www.qianheai.com/), and applied its advanced deep learning algorithms to generate synthetic videos by detecting facial features in the original videos and implementing seamless face-swapping effects. The face-swapped videos were then reviewed by the research team. In selecting photos for synthetic videos, we chose publicly available online images and ensured that the faces in the photos matched the sound in the original video. These synthetic videos were numbered from 13 to 24. The real and the synthetic videos were consistent in terms of audio information and the content conveyed; they differed only in whether the facial information was synthetic.

To create deepfakes containing false information, we first selected nine pieces of debunked fake news or false common knowledge. Then, we recruited volunteers with experience in public knowledge sharing, and each member independently recorded one or two videos in a manner similar to Videos 1–12. In these videos, the members appeared on camera to share the false information as if it were true. Subsequently, we performed face swapping on these videos. These nine deepfake videos with false information were numbered from 25 to 33.

We embedded these videos into the online survey and divided the 33 videos (12 real videos, 12 synthetic videos, and 9 deepfakes) into three groups. Each group included four real videos, four synthetic videos, and three deepfake videos. We ensured that an original real video and its synthetic version, as well as any two deepfake videos recorded by the same volunteer, were not shown in the same group. Each participant was randomly assigned to one of the three video groups for evaluation.
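The grouping constraints above can be illustrated with a small validity check. This is a minimal sketch, not the authors' procedure: the specific group assignments and the volunteer-to-video mapping (`volunteer_of`) are hypothetical, and the pairing rule (synthetic video i + 12 is the face-swapped version of real video i) is an assumption based on the numbering described in the Materials section.

```python
from typing import Dict, List

N_PAIRS = 12  # assumption: synthetic video i + 12 is the face-swapped version of real video i


def is_valid_grouping(groups: List[List[int]], volunteer_of: Dict[int, str]) -> bool:
    """Check the group composition and non-overlap constraints described in the text."""
    for group in groups:
        real = [v for v in group if 1 <= v <= 12]
        synthetic = [v for v in group if 13 <= v <= 24]
        deepfake = [v for v in group if 25 <= v <= 33]
        # Each group holds four real, four synthetic, and three deepfake videos.
        if (len(real), len(synthetic), len(deepfake)) != (4, 4, 3):
            return False
        # A real video and its synthetic version must not share a group.
        if any(v + N_PAIRS in synthetic for v in real):
            return False
        # Two deepfakes recorded by the same volunteer must not share a group.
        volunteers = [volunteer_of[v] for v in deepfake]
        if len(set(volunteers)) != len(volunteers):
            return False
    # Every one of the 33 videos appears exactly once across the groups.
    return sorted(v for g in groups for v in g) == list(range(1, 34))


# Hypothetical mapping: four volunteers recorded two videos each, one recorded one.
volunteer_of = {25: "A", 26: "A", 27: "B", 28: "B", 29: "C",
                30: "C", 31: "D", 32: "D", 33: "E"}

# Hypothetical grouping that satisfies the constraints.
groups = [
    [1, 2, 3, 4, 17, 18, 19, 20, 25, 28, 31],
    [5, 6, 7, 8, 21, 22, 23, 24, 26, 29, 32],
    [9, 10, 11, 12, 13, 14, 15, 16, 27, 30, 33],
]
print(is_valid_grouping(groups, volunteer_of))
```

Rotating the synthetic versions by one group relative to their originals is one simple way to keep each original and its face-swapped version apart; other assignments would satisfy the same constraints.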

Participants and Procedures

We created an online survey link and collected data using the services of Wenjuanxing, a professional survey company in China. The company maintained a panel database of over six million users, and we set the sampling quotas to ensure that the age and gender distributions reflected those of the Chinese adult population. After excluding participants who failed attention checks, 298 valid responses were retained for analysis. The final sample covered a broad range of regions across China, with a mean age of 40 years (SD = 14.78). Most participants (73.5%) held a bachelor’s degree or higher. The sample skewed toward higher education levels, possibly because more educated individuals were more willing to complete the demanding task of evaluating 11 information-rich videos. Gender distribution was balanced (49.3% female, 50.7% male).

After entering the survey link and indicating their consent to participate, the participants were asked to provide their demographic information. They were then informed that they would be presented with 11 videos, which they were instructed to watch carefully. The participants were then randomly assigned to one of the three groups. During the video evaluations, each video and its related measurement items were displayed on the same page. For each video, we also included a question asking the participants whether they had seen the video before survey participation. The 11 video pages were shown in random order. After submitting all responses, participants were debriefed about which specific pieces of information were false.

Measures

For the independent variables, to measure perceived technical quality, we asked the participants to evaluate the technical quality of each video (M = 4.98, SD = 1.45) on a 7-point Likert-type scale ranging from 1 (very poor) to 7 (very good). Regarding content familiarity, the participants were asked how familiar they were with the content of the video (M = 3.79, SD = 1.71), with responses ranging from 1 (very unfamiliar) to 7 (very familiar).

To measure detection accuracy, following Groh et al. (2022), we added a slider that allowed the participants to report their judgment for each video. The slider ranged from “100% confidence this is a synthetic video” at the left endpoint to “100% confidence this is not a synthetic video” at the right endpoint, with “just as likely a synthetic video as not” at the midpoint. One increment to the left of the midpoint indicated “51% confidence this is a synthetic video,” and one increment to the right indicated “51% confidence this is not a synthetic video.” Detection accuracy (M = 50.24, SD = 34.55) was scored on a range from 0 to 100 based on the participant’s response. For example, for a synthetic video, a response of “100% confidence this is a synthetic video” (the left endpoint) was scored as 100, “100% confidence this is not a synthetic video” (the right endpoint) was scored as 0, and a response of “60% confidence this is a synthetic video” was scored as 60.
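The scoring rule above can be sketched as a small function. The text gives the scoring only for synthetic videos; the mirrored rule for real videos (scoring confidence that the video is not synthetic) is an assumption here, as are the function and parameter names.

```python
def detection_accuracy(confidence_synthetic: float, is_synthetic: bool) -> float:
    """Score a slider response on a 0-100 accuracy scale.

    confidence_synthetic: the participant's confidence (0-100) that the video
    is synthetic; 100 is the left endpoint, 0 the right endpoint, 50 the midpoint.
    is_synthetic: the true nature of the video.
    """
    if not 0 <= confidence_synthetic <= 100:
        raise ValueError("confidence must lie between 0 and 100")
    if is_synthetic:
        # Correct answer is "synthetic": accuracy equals confidence in "synthetic".
        return confidence_synthetic
    # Assumed mirror rule for real videos: accuracy equals confidence in "not synthetic".
    return 100 - confidence_synthetic


print(detection_accuracy(60, is_synthetic=True))   # the worked example from the text
print(detection_accuracy(60, is_synthetic=False))  # same response to a real video
```

Under this rule a midpoint response scores 50 for either video type, so the reported mean of 50.24 sits close to what uniformly uncertain responding would produce.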

The items for other dependent variables were measured using 7-point Likert-type scales. For perceived video realness (M = 4.97, SD = 1.53), following Luo et al. (2022), we included one item asking the participants to rate the degree to which they believed that each video was synthetic or real, ranging from 1 (definitely synthetic) to 7 (definitely real). For trust in the vlogger, we included items for both cognitive trust and emotional trust. The participants evaluated their agreement with the statements on a scale ranging from 1 (strongly disagree) to 7 (strongly agree). Cognitive trust and emotional trust were each measured using three items adapted from a previous study (Komiak & Benbasat, 2006). The six items demonstrated high reliability, and we calculated the average score of these items as a composite measure for trust in the vlogger (Cronbach’s alpha = .96, M = 4.88, SD = 1.48).
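For readers unfamiliar with the reliability statistic, the sketch below shows how a composite score and Cronbach’s alpha can be computed from item-level ratings. It uses the standard alpha formula with sample variances; the function names and the toy data are illustrative and are not taken from the study.

```python
from statistics import mean, variance
from typing import List


def cronbach_alpha(items: List[List[float]]) -> float:
    """Cronbach's alpha; items[k] holds the ratings on item k, one per evaluation."""
    k = len(items)
    if k < 2:
        raise ValueError("alpha needs at least two items")
    # Total score per evaluation, summing across the k items.
    totals = [sum(ratings) for ratings in zip(*items)]
    item_variance_sum = sum(variance(ratings) for ratings in items)
    return k / (k - 1) * (1 - item_variance_sum / variance(totals))


def composite_trust(item_ratings: List[float]) -> float:
    """Composite trust for one evaluation: the mean of its item ratings."""
    return mean(item_ratings)


# Toy data: three perfectly consistent items rated on four evaluations.
toy_items = [[1, 2, 3, 4], [1, 2, 3, 4], [1, 2, 3, 4]]
print(cronbach_alpha(toy_items))
print(composite_trust([5, 6, 5, 4, 6, 4]))
```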

Analysis

Because each participant evaluated multiple videos, the data were naturally structured for multilevel analysis, with video evaluations nested within each participant. Accordingly, we used hierarchical linear modeling (HLM) to test the hypotheses. The evaluations for which the participants indicated they had previously watched the video were excluded. In our data analysis, the unit of analysis at Level 1 was video evaluation (N = 2954) and the unit at Level 2 was participant (N = 298). Level 1 variables were perceived technical quality, content familiarity, video type, and the dependent variables. Level 2 variables were the demographic factors, namely, age, gender (0 = male, 1 = female), and education level, which were included as control variables.
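The data preparation implied by this paragraph can be sketched as follows: drop evaluations of previously seen videos, then grand-mean center the continuous Level 1 predictors (centering is reported in the note to the multilevel results table). The record layout and field names (`pid`, `ptq`, `cf`, `seen_before`) are hypothetical, and centering after exclusion is an assumption rather than a documented step.

```python
from statistics import mean
from typing import Dict, List


def prepare_level1(evaluations: List[Dict]) -> List[Dict]:
    """Exclude previously seen videos and grand-mean center PTQ and CF."""
    # Keep only evaluations of videos the participant had not seen before.
    kept = [dict(e) for e in evaluations if not e["seen_before"]]
    for var in ("ptq", "cf"):
        # Grand mean: computed over all retained evaluations, ignoring participant.
        grand_mean = mean(e[var] for e in kept)
        for e in kept:
            e[var + "_c"] = e[var] - grand_mean
    return kept


# Toy records; "pid" identifies the Level 2 unit (participant).
toy = [
    {"pid": 1, "ptq": 4, "cf": 2, "seen_before": False},
    {"pid": 1, "ptq": 6, "cf": 4, "seen_before": False},
    {"pid": 2, "ptq": 7, "cf": 7, "seen_before": True},
]
prepared = prepare_level1(toy)
print([(e["pid"], e["ptq_c"], e["cf_c"]) for e in prepared])
```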

We first examined all of the hypotheses on the evaluations of videos with authentic information. Regarding H1, H2, and H5, we included perceived technical quality, content familiarity, video type (0 = real, 1 = synthetic), and Level 2 variables in the model (as Model 1) for each dependent variable. For the rest of the hypotheses, we further included the interaction between perceived technical quality and video type and the interaction between content familiarity and video type in the model (as Model 2). Moreover, we additionally ran HLM analyses for the evaluations of the deepfake videos with false information, including perceived technical quality, content familiarity, and demographic variables in the models.
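The two models described above can be written out explicitly. The following is a sketch under stated assumptions, not the authors' exact specification: it assumes a random-intercept model (the text does not say whether any slopes were allowed to vary across participants), and the subscripted symbols are introduced here for illustration. $Y_{ij}$ denotes the outcome for evaluation $i$ by participant $j$; PTQ, CF, and VT follow the abbreviations used in the table notes.

```latex
% Model 1 (main effects). Level 1: evaluation i nested in participant j
\begin{align}
Y_{ij} &= \beta_{0j} + \gamma_{10}\,\mathrm{PTQ}_{ij} + \gamma_{20}\,\mathrm{CF}_{ij}
          + \gamma_{30}\,\mathrm{VT}_{ij} + r_{ij} \\
% Level 2: participant-level controls and random intercept
\beta_{0j} &= \gamma_{00} + \gamma_{01}\,\mathrm{Age}_{j} + \gamma_{02}\,\mathrm{Gender}_{j}
          + \gamma_{03}\,\mathrm{Edu}_{j} + u_{0j}
\end{align}

% Model 2 adds the two cross-product terms to the Level 1 equation
\begin{align}
Y_{ij} &= \beta_{0j} + \gamma_{10}\,\mathrm{PTQ}_{ij} + \gamma_{20}\,\mathrm{CF}_{ij}
          + \gamma_{30}\,\mathrm{VT}_{ij}
          + \gamma_{40}\,(\mathrm{PTQ}_{ij} \times \mathrm{VT}_{ij})
          + \gamma_{50}\,(\mathrm{CF}_{ij} \times \mathrm{VT}_{ij}) + r_{ij}
\end{align}

% Simple slopes of PTQ implied by Model 2
\begin{align}
\text{real videos } (\mathrm{VT}=0):&\quad \gamma_{10} \\
\text{synthetic videos } (\mathrm{VT}=1):&\quad \gamma_{10} + \gamma_{40}
\end{align}
```

Under this coding, a moderation hypothesis such as H4 is tested through $\gamma_{40}$: a negative $\gamma_{40}$ means the PTQ slope is lower for synthetic videos than for real videos, and the slope for synthetic videos is itself negative only if $\gamma_{10} + \gamma_{40} < 0$.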

Results

Synthetic and Real Videos With Authentic Information

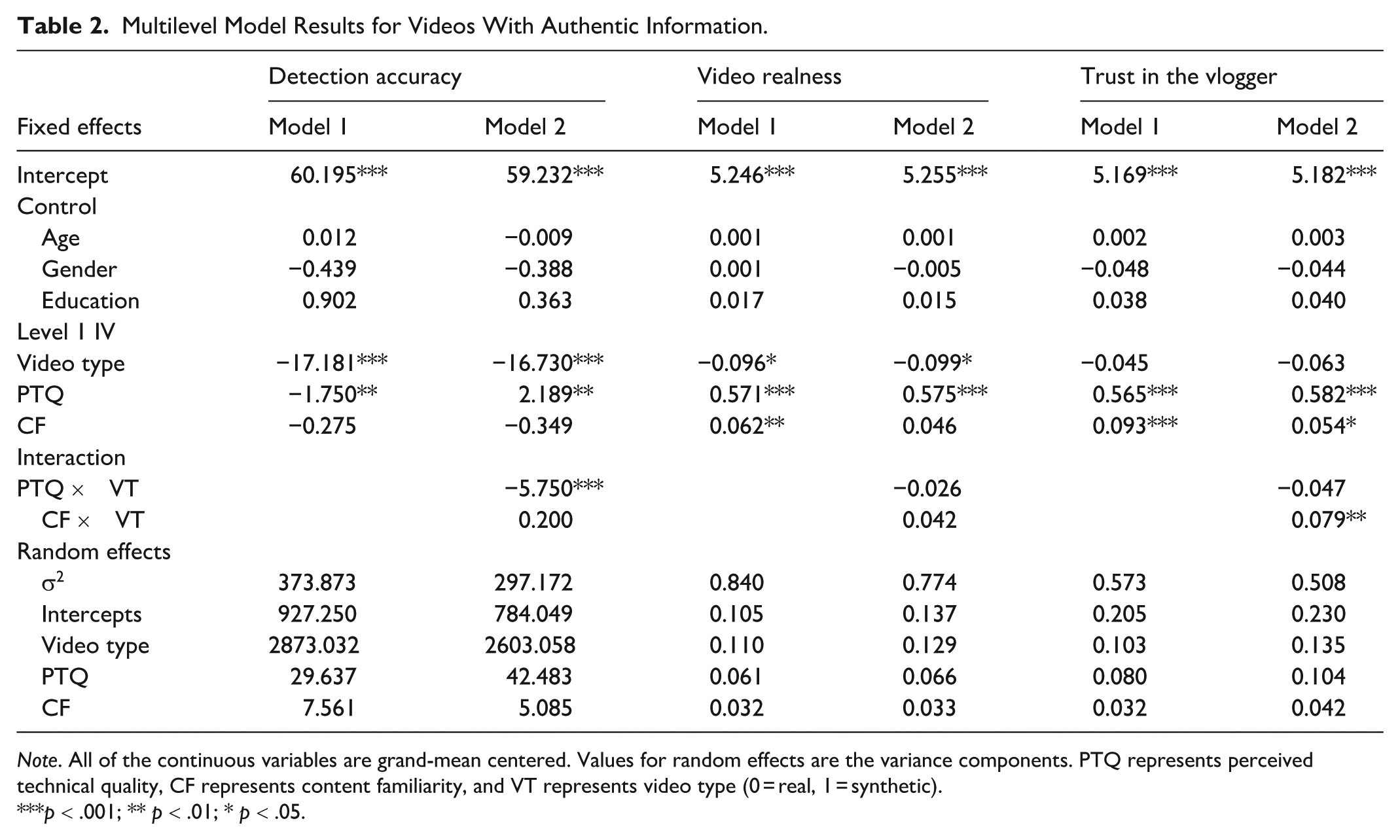

Table 1 shows the means and standard deviations of the dependent variables for the three types of videos. The HLM results are shown in Table 2. For videos with authentic information, none of the demographic variables exerted significant effects on dependent variables.

Table 1. Descriptives of the Dependent Variables for the Three Video Types.

Note. Mean values are shown with standard deviations in parentheses.

Table 2. Multilevel Model Results for Videos With Authentic Information.

Note. All of the continuous variables are grand-mean centered. Values for random effects are the variance components. PTQ represents perceived technical quality, CF represents content familiarity, and VT represents video type (0 = real, 1 = synthetic).

*** p < .001; ** p < .01; * p < .05.

Video type was negatively related to perceived video realness (H1a, γ = −.096, p = .040) and detection accuracy (H1c, γ = −17.181, p < .001). However, we did not find a significant association between video type and trust in the vlogger (H1b, γ = −.045, p = .257). Thus, compared with real videos, synthetic videos were perceived as less real, consistent with H1a, but were also detected less accurately, which is the opposite of the direction predicted in H1c. The two types did not differ significantly in trust in the vlogger.

Perceived technical quality was positively related to perceived video realness (H2a, γ = .571, p < .001) and trust in the vlogger (H2b, γ = .565, p < .001). Videos with higher perceived technical quality were regarded as more real and trustworthy. Moreover, video type did not moderate the association of perceived technical quality with either perceived video realness (H3a, γ = −.026, p = .518) or trust in the vlogger (H3b, γ = −.047, p = .190). The effects of perceived technical quality on these two variables did not differ significantly between synthetic videos and real videos. However, the interaction between perceived technical quality and video type was negatively associated with detection accuracy (H4, γ = −5.750, p < .001). As Figure 1 shows, the association between perceived technical quality and detection accuracy was positive for real videos (H4a) and negative for synthetic videos (H4b).

Figure 1. Interaction effect of perceived technical quality (PTQ) and video type on detection accuracy for videos with authentic information.

Content familiarity was positively related to perceived video realness (H5a, γ = .062, p = .002) and trust in the vlogger (H5b, γ = .093, p < .001). Individuals who were more familiar with the video content regarded the videos as more real and trustworthy. Video type did not moderate the association of content familiarity with either perceived video realness (H6a, γ = .042, p = .210) or detection accuracy (H7, γ = .200, p = .816). The effects of content familiarity on these two variables did not differ significantly between synthetic videos and real videos. However, the interaction between content familiarity and video type was positively associated with trust in the vlogger (H6b, γ = .079, p = .006). As Figure 2 shows, the positive association between content familiarity and trust in the vlogger was more pronounced for synthetic videos than for real videos.

Figure 2. Interaction effect of content familiarity and video type on trust in the vlogger for videos with authentic information.

Deepfakes With False Information

The results of the analyses for the deepfakes with false information are shown in Table 3. Regarding the control variables from Level 2, age and education level had significant negative associations with both perceived video realness and trust in the vlogger. The findings for the effects of perceived technical quality and content familiarity were consistent with the results for videos with authentic information. Specifically, perceived technical quality was positively related to perceived video realness (γ = .621, p < .001) and trust in the vlogger (γ = .632, p < .001). Perceived technical quality was negatively related to detection accuracy (γ = −3.984, p < .001). Content familiarity was positively associated with perceived video realness (γ = .161, p < .001) and trust in the vlogger (γ = .159, p < .001).

Table 3. Multilevel Model Results for Synthetic Videos With False Information.

*** p < .001; ** p < .01; * p < .05.
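The size of the detection-accuracy coefficient reported above is easier to read on the odds scale. The following sketch assumes, without confirmation from this section, that detection accuracy is a binary outcome (correct or incorrect) modeled with a logit link, so that γ is a change in log-odds per one-unit increase in perceived technical quality. The function name `odds_ratio` is hypothetical.

```python
import math

# Illustrative sketch: converting a log-odds coefficient into an odds ratio.
# ASSUMPTION (not confirmed by the text): detection accuracy is binary and was
# modeled with a logit link, so gamma is expressed in log-odds units.

GAMMA_PTQ_ACCURACY = -3.984  # coefficient reported in the text


def odds_ratio(log_odds_coefficient: float) -> float:
    """Exponentiate a log-odds coefficient to obtain an odds ratio."""
    return math.exp(log_odds_coefficient)


ratio = odds_ratio(GAMMA_PTQ_ACCURACY)
print(f"odds ratio: {ratio:.4f}")
```

If the logit assumption holds, each one-unit increase in perceived technical quality would multiply the odds of correctly identifying a deepfake by roughly 0.02, that is, reduce them by about 98%.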

Discussion

The media landscape now contains a growing mix of real and synthetic videos presenting either authentic or false content. We conducted an experiment in China to examine people’s perceptions of and detection accuracy for different types of videos on general topics. The results showed that, compared with real videos, people perceived synthetic videos as less real but were worse at identifying them. However, there was no significant difference in trust in the vlogger between real videos and their synthetic counterparts. As for the heuristic processing routes, as perceived technical quality and content familiarity increased, people were more likely to perceive the videos as real and to place greater trust in the vlogger. This pattern held regardless of whether the video’s content was authentic or false. Perceived technical quality improved detection accuracy for real videos but reduced accuracy for synthetic videos. In addition, the positive relationship between content familiarity and trust in the vlogger was more pronounced for synthetic videos than for real videos.

Regarding the comparison between synthetic and real videos, participants reported lower realness for synthetic videos, suggesting that they could recognize manipulation cues in these videos. However, this decrease in perceived video realness did not translate into higher detection accuracy. Contrary to our hypothesis, the participants’ detection accuracy for synthetic videos was lower than that for real videos, indicating that identifying synthetic videos is more challenging. This finding reveals a tendency among participants to regard videos as real rather than synthetic, which can be explained by truth-default theory (Levine, 2014). According to this theory, people are usually truth-biased in their information consumption. Because of this bias, they may be more accurate in recognizing real videos but, at the same time, more vulnerable to being deceived by synthetic ones. Several studies provide further support for this view. In a study where most participants were from Western societies (Groh et al., 2022), subjects were also less accurate at detecting deepfakes than real videos. In general, unless people detect evident manipulation signals and feel confident about their judgment, they tend to perceive what they see as authentic (Farid, 2019). Another study conducted primarily with British citizens also found that people were more likely to mistake synthetic videos for real ones than the other way around (Köbis et al., 2021).

Intriguingly, there was no significant difference in trust in the vlogger between the synthetic and real videos. Although previous studies (e.g. Hameleers et al., 2022) suggest that people’s standpoints may influence their perceptions of politically related deepfakes, such factors likely had minimal impact in our research because our videos did not involve political content. Moreover, when creating the synthetic versions, we only swapped the original faces with new faces from other non-celebrities, and we removed from the data analysis the evaluations of participants who had previously watched the videos. As a result, the participants’ assessments of the vlogger’s trustworthiness in this study focused on the content per se and may be more impartial. This finding may also be attributable to the specific socio-cultural context in China. First, due to regulatory implementation, AI-generated videos that appear on Chinese social media platforms are often designed for entertainment or science popularization purposes, rather than for the dissemination of false information. Second, prior surveys indicate that Chinese users generally exhibit a more tolerant and optimistic attitude toward AI technologies (KPMG, 2025). Together, these factors may help explain why, upon recognizing a video as AI-synthetic, Chinese audiences do not automatically associate the vlogger with an intention to mislead, and hence why trust in the vlogger did not differ significantly between real and synthetic videos.

The different effects of video type on perceived realness and trust in the vlogger suggest that source trustworthiness and message believability are distinct perceptions. According to Metzger et al. (2003), source credibility and message credibility are two independent constructs that consist of different dimensions and may follow different formation processes. Subsequent studies provide additional supporting evidence. For instance, Foy et al. (2017) found that participants trusted conflicting material if it seemed logical, regardless of source credibility, and Shen et al. (2025) showed that neutral fact-checking exposure increased source credibility but not message credibility. Our findings add video-based evidence to this line of research, indicating that judgments of facial manipulation differ from trust in the vlogger.

As a heuristic cue, perceived technical quality was positively associated with perceived video realness and trust in the vlogger, consistent with previous studies (e.g. Michalovich & Hershkovitz, 2020). Notably, these effects remained stable and did not differ significantly between synthetic and real videos. Participants were not skilled at detecting synthetic videos. In the absence of clear labels indicating a video’s synthetic nature, they may rely on heuristic cues to evaluate credibility in the same way for both synthetic and real videos. This finding is also consistent with a recent study (Weikmann et al., 2025) showing that when participants were not informed about the veracity of the videos, they rated the credibility of synthetic videos similarly to that of real videos. Because perceived technical quality consistently increased perceived video realness, it improved detection accuracy for real videos but decreased accuracy for synthetic videos. This study adds a novel perspective to prior studies by exploring how perceived technical quality influences the detection accuracy of videos. It should be noted, however, that our sample was skewed toward the higher-educated end. Previous studies have shown that knowledgeable individuals and experts may rely more on heuristic processing due to their better-established knowledge structures (Evans & Stanovich, 2013). The stable heuristic effect found in this study may therefore be partly attributable to the educational background of the sample.

Content familiarity, another heuristic processing cue, was positively associated with both perceived video realness and trust in the vlogger, aligning with previous studies (e.g. Ahmed et al., 2024). However, it did not influence detection accuracy. Content familiarity involves individuals’ feelings regarding the content, whereas determining whether a video is synthetic requires judgments of visual elements (Groh et al., 2022). Thus, although content familiarity increased perceived video realness to some extent, it had limited influence on detection accuracy. Interestingly, the positive effect of content familiarity on trust in the vlogger was more pronounced for synthetic videos than for real videos. In synthetic videos, unnatural manipulation cues can create uncertainty (Vaccari & Chadwick, 2020). Thus, viewers may rely more on familiarity heuristics to assess the vlogger’s trustworthiness. Research has suggested that people feel more confident when engaging with topics they consider themselves to be knowledgeable about (Huberman, 2001). Previous studies (Ladouceur et al., 1986; Shavit et al., 2016) also found that as familiarity increases, individuals are more likely to tolerate and even embrace potential risks. Accordingly, familiarity exerts a stronger effect on trust in the vlogger for synthetic videos.

For deepfake videos with false information, the positive effects of perceived technical quality and content familiarity on realness and trust in the vlogger were consistent with those for videos with authentic information. Previous studies (Dobber et al., 2021; Hameleers, 2022) suggest that individuals are not powerless against deepfakes with false information. Our study extends the extant research by demonstrating that audiences apply similar heuristic patterns to different types of videos. Truth-default theory (Levine, 2014) posits that people tend to assume that information is truthful because of their limited ability to evaluate content systematically. This effect may be particularly strong for deepfake videos compared with other modalities because of their high perceptual richness (Weikmann & Lecheler, 2023). It is plausible that audiences presuppose the information in deepfakes to be real and base their judgments on perceived technical quality and content familiarity even when the information is false. Notably, older participants and those with higher education perceived deepfakes with false information as less real and placed less trust in the vlogger, a pattern that was not observed for videos with authentic information. The topics of the videos in our study were related to health and life knowledge. More educated and older participants may have greater knowledge in these fields and may therefore be more likely to recognize misinformation and question the trustworthiness of these deepfakes.

Theoretical and Practical Implications

This research extends the HSM to the context of synthetic video discernment. We found that heuristic processing routes exert a stable and consistent influence on users’ evaluation and detection of videos that may be synthetic and convey false information. This result provides empirical support for the role of heuristic processing proposed by the HSM (Chaiken & Maheswaran, 1994). To the best of our knowledge, this study is among the first to explore the effects of heuristic processing in the context of synthetic video detection under the HSM. Our study also offers support for the realism heuristic, as it demonstrates that videos appearing more realistic are perceived as more trustworthy and credible.

This research also contributes to the growing body of literature on deepfake detection. We investigated the factors influencing individuals’ judgments of videos on general topics, thereby supplementing previous studies (e.g. Appel & Prietzel, 2022) that have primarily focused on political contexts. Furthermore, this research provides empirical evidence from China, where synthetic videos are becoming increasingly prevalent, adding a comparative perspective to studies predominantly conducted in Western societies. Moreover, by incorporating three types of videos, this study not only validates prior findings but also offers more nuanced insights into how individuals process and assess synthetic media.

This study offers practical implications for platforms, policymakers, and educators. Since perceived technical quality boosts both video realness and trust in the vlogger, interventions should go beyond voluntary disclaimers. Platforms could adopt standardized provenance signals, as in China’s “deep synthesis” rules, to ensure synthetic videos cannot circulate without clear labeling. Media literacy efforts should highlight that technological advances enable highly realistic synthetic videos and warn against over-relying on familiarity cues. Tailored interventions for younger and less-educated audiences, using accessible formats, can further strengthen resilience.

Our findings also suggest pro-social uses of synthetic media. Because trust in vloggers does not differ between real and synthetic videos when content is truthful, creators could use this technology for health and science communication, provided transparency mechanisms are in place. Finally, regulation should be context-sensitive: strict measures for harmful uses and proportionate labeling for positive applications, balancing risks with benefits.

Limitations

This study had some limitations that warrant attention in future research. First, the face-swapping technology used in this study reflected the tools available at the time of data collection. As AI technologies continue to advance, it is unknown whether the findings of this study can be generalized to newer forms of synthetic content. Future research should examine these effects in updated technological contexts. Second, this study relied on participants’ self-reported assessments of perceived technical quality and familiarity. Future research could incorporate objective manipulations to examine how variations in technical quality and content familiarity influence credibility perceptions, and could explore whether objective quality and subjective quality perceptions have different effects on audiences’ detection of synthetic videos. Third, our study did not incorporate direct measures of the processing mode. To strengthen the theoretical foundation of future research grounded in the HSM, we recommend the inclusion of targeted measures of processing depth. Fourth, our sample was skewed toward the higher-educated end. Caution should therefore be exercised when generalizing the findings, and we suggest that future studies replicate them with a more representative sample. Fifth, for videos containing false information, we recruited volunteers with knowledge-sharing experience to record the content. Future studies could consider involving professional vloggers to enhance the ecological validity of the findings. Finally, we focused on processing and judgment formation during participants’ first encounter with the video and excluded cases with prior exposure from the data analysis to ensure internal validity. Future studies could examine how prior or repeated exposure shapes judgments of synthetic and real videos.

Despite these limitations, our findings highlight the crucial role of heuristic processing in shaping audiences’ perceptions of short videos. Researchers, users, and platform regulators should therefore pay particular attention to heuristic cues such as video quality and content familiarity in the context of synthetic video detection.

Footnotes

Acknowledgements

The authors would like to thank Dr Anfan Chen and Dr Yuheng Wu for their help in preparing the video stimuli for the experiment.

Ethical approval

This study has been approved by the Human Research Ethics Committee of the corresponding author’s university (Approval No. HU-STA-00001434). The procedures for human participants involved in this study are consistent with the ethical standards of the corresponding author’s institution.

Consent to Participate

Informed consent was obtained from all participants included in the study.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was partially supported by the University Grants Committee of Hong Kong (Project No. 6000856) and City University of Hong Kong (Project No. 7005700). Open access made possible with partial support from the Open Access Publishing Fund of City University of Hong Kong.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data availability statement

The datasets generated during and/or analyzed during the current study are available from the corresponding author on reasonable request.