Abstract

This paper examines how aspirational content creators (ACCs) on the r/NewTubers subreddit forum understand and discuss YouTube’s algorithm. This study employs thematic analysis of 144 r/NewTubers posts that explicitly mentioned algorithms. The analysis reveals four main themes: mythologizing and anthropomorphism, demystification of the algorithm, platform gossip, and community backlash. NewTubers often personify the algorithm, attributing human-like qualities and agency to it. This anthropomorphism, however, is frequently challenged by other NewTubers who emphasize the algorithm’s technical nature and the importance of content quality. These narratives highlight the formation of public, non-institutional algorithmic literacies among ACCs as they negotiate the opaque world of platform algorithms.

Introduction

Algorithms are central to online life. From a technical perspective, algorithms are computational operations that transform inputs into outputs. Social media platforms use algorithms to sort and classify online content (e.g. Shmargad & Klar, 2020), thereby guiding the circulation of content and the structure of user-to-user interaction. Because the technical workings of algorithms are obscured for proprietary reasons and by functional illiteracy (Burrell, 2016), users must imagine algorithms (Bucher, 2018). As a consequence, users often share narratives describing algorithms in a variety of ways, including what other researchers have identified as algorithmic gossip (Bishop, 2019), algorithmic lore (Bishop, 2020; MacDonald, 2021), algorithmic folklore (Eslami et al., 2016; Ytre-Arne & Moe, 2021), algorithmic religious significance (Cotter et al., 2024), and algorithmic logics (Gaw, 2022; Lobato, 2016). Regardless of their accuracy, content creators’ personal narratives about algorithms can affect how they produce content because these discussions shape how they imagine algorithms.

Identifying and studying algorithmic discussions propelled by aspirational content creators (ACCs) gives researchers an unvarnished understanding of the strategies creators use to try to build online followings, as well as the debates that surround those strategies. While everyday users (e.g. Bucher, 2018; Eslami et al., 2015), micro-celebrities (e.g. Maddox, 2022), power users (e.g. Gallagher, 2020), journalists (e.g. Christin, 2020; Petre, 2021), and established influencers (e.g. Duffy, 2017, 2020; Hund, 2023) have been studied for their perceptions of algorithms, studying ACCs offers distinct insight because, unlike studies of established creators, it is not subject to survivor bias. Researching ACCs also sheds light on deliberate strategies for attracting attention, which everyday users may have little interest in. Furthermore, studying ACCs documents germinal algorithmic perceptions and content strategies that are not necessarily successful but that still aim to be commodified and circulated.

Researchers have focused on ACCs by interviewing them about their algorithmic discussions, including mythmaking and gossip practices, thereby spotlighting the difficulties, pressures, and stresses that these creators experience from corporate platforms and their algorithms. In separate ethnographic and interview-based studies of creators, Bishop (2019) and Glatt (2022) underscore the remarkable precarity of being an ACC. Likewise, Duffy (2017) found similar precarity among more established creators and influencers. Precarity gives ACCs incentives to seek and share stories from other creators because they lack formal support structures, such as editorial teams or professional institutions. Without these structures, ACCs gather to share their collective knowledge of their respective platforms. For example, at a 40-person face-to-face meeting, Glatt (2022) observed that participants attended in order “to learn how to grow and monetise their YouTube channels” (p. 9). These participants shared stories about how they did or did not attain algorithmic visibility, and these stories often shaded into various forms of hearsay and gossip.

The precarious nature of ACCs, derived from the constant “algorithmic change” on platforms (Duffy, 2020, p. 104), motivates them to find public routes for sharing their stories with one another. Without income or access to support, these creators are likely to search online for public spaces to share their stories and seek advice about algorithms (Haapoja et al., 2024). Examining public stories and advice about algorithms in online forums complements interview- and ethnographic-based work because these stories represent deliberately crafted narratives for broad, unfiltered audiences. By virtue of being online, such narratives have the potential to represent diverse ACCs who may in turn disagree with one another’s stories, thereby revealing algorithmic experiences as well as the contestation of those experiences by others on internet forums. Narratives on open online forums, in contrast to private face-to-face networks, may thus reveal more public understandings of algorithms.

To study such public narratives, this study focuses on the website Reddit. We examine the ways aspirational YouTube creators share their experiences with the YouTube algorithm on a Reddit forum called r/NewTubers. The article has six parts. First, we describe the scholarly conversation about algorithms and content creators, including imagined algorithmic audiences, which leads us to the r/NewTubers forum as a site for studying this phenomenon. Second, we describe our methods for data collection and qualitative analysis of 144 posts about algorithms from the forum. Third, we describe the themes identified in these 144 posts. Fourth, we synthesize these themes to discuss the ways that aspirational YouTubers mythologize algorithms and platforms. Fifth, we discuss future directions for this study and for studies of ACCs more generally. Finally, we conclude with remarks about the formation of non-institutional algorithmic literacies based on the public narratives we analyzed.

Content creators’ imagining algorithms as audiences

Social networking platforms, such as YouTube, TikTok, Facebook, and X (formerly Twitter), dominate the internet landscape. In broad strokes, these platforms operate under a capitalist logic, referred to as platform capitalism and surveillance capitalism (Srnicek, 2017; Zuboff, 2019), which seeks to maximize profit by predicting user behaviors on these websites and selling those predictions to advertisers. To do so, these platforms do not produce commodities of their own but rather exploit users to create financial value for their owners (Arvidsson & Colleoni, 2016). To keep users on their websites, platforms entice users to produce user-generated content, or UGC (Eichhorn, 2022). Users in turn can receive payment for their content via advertising revenue or through sponsorships. These payments have drawn creators into an influencer economy (e.g. Abidin, 2016; Christin & Lu, 2023; Hund, 2023), which engenders a culture of aspiration (Duffy, 2016).

Because platforms use algorithms to automate the circulation and distribution of content (Eubanks, 2018; Noble, 2018; Pasquale, 2015), creators within this influencer economy have developed what other researchers call algorithmic knowledges (Cotter, 2022; Cotter et al., 2024; Cotter & Reisdorf, 2020) and literacies (Oeldorf-Hirsch & Neubaum, 2023). Very broadly, algorithmic knowledges and literacies are the ways that users operating within platforms learn, explicitly or tacitly, to behave under the logics of automated filtering and sorting techniques. As an example, Gallagher (2020) found that journalists use “algorithmic timing” to learn when content is most likely to be shared. Other studies have made similar findings about the ways algorithms and related technologies influence journalists’ writing practices and their strategies for posting social media content (Christin, 2020; Nelson, 2021; Petre, 2021).

Algorithmic knowledge and literacy are contested, however, because the actual mechanisms of algorithms are not verifiable. As Jenna Burrell (2016) has argued, the inner workings of algorithms are opaque “black boxes” for three reasons. First, companies keep algorithms secret for proprietary reasons. Second, algorithms are obscured by functional illiteracy: even if most people were exposed to the technical details, they would not understand them. Third, algorithms operate at massive scales, making their decisions uninterpretable at a human scale (Burrell, 2016, pp. 3–5).

Because users cannot directly determine how algorithms will circulate their content, many content creators begin to imagine algorithms as additional audiences for their content alongside the audience of human platform users. Imagining algorithms as audiences is a way for users to make sense of algorithms that they cannot fully understand from their experiences. As Litt writes, “The imagined audience is the mental conceptualization of the people with whom we are communicating, our audience . . .. The less an actual audience is visible or known, the more individuals become dependent on their imagination” (p. 331). Through our analysis of the aspiring YouTuber community on Reddit, we argue that aspiring content creators must imagine algorithms because (a) algorithms cannot be known, (b) algorithms change as platforms update them, and (c) algorithms are the primary sources of distribution and circulation for social media content. However, aspiring content creators do not imagine the algorithm without evidence; rather, they experiment with content production, determining which content is viewed more or less frequently, typically through metrics that platforms make available, such as likes, comments, and views (Beer, 2016; Christin, 2020; Dogruel et al., 2022; Edwards, 2018; MacDonald, 2021; Petre, 2021). Imagined algorithmic audiences are also likely to be unstable (Litt & Hargittai, 2016, p. 8), resulting in various debates and disagreements among content creators.

While imagined algorithmic audiences are unstable, creators are not free to imagine algorithms in any way they choose. They must imagine them based on other voices, including advice from social media platform representatives (Gillespie, 2014), self-proclaimed algorithmic experts (Bishop, 2020), and, as we will show later in this article, one another. Moreover, because creators do not have access to the technical workings of algorithms, they use figurative language to understand them. The term lore is one such example: when creators cannot determine which advice and strategies are useful, or which mechanisms benefit specific users, they create stories by generalizing from anecdote, personal experience, or gossip (Bishop, 2019). As we found in our thematic analysis, the creators in this study created stories by using figurative language to anthropomorphize algorithms and the YouTube platform to which those algorithms belong.

The NewTuber subreddit: algorithmic discussions of aspirational content creators

Finding public forums online where creators discuss and share stories about content-production strategies assists with identifying ways that users imagine algorithms, including the various repertoires around algorithms. Aspirational here denotes creators who are just beginning to create content; they are novices. ACCs, in the context of this article, aim to increase the circulation of their content through metrics, such as number of views and length-of-view (the latter being a metric of time valued by YouTube).

ACCs in public forums are, as noted earlier, likely to represent a wide range of debate, including the sharing of unsuccessful strategies. As a result, they offer an inverse perspective to that of expert creators. In a study of self-proclaimed algorithmic experts who sell their advice on YouTube, Sophia Bishop (2020) has written, “Experts, then, are not only invested in teaching users how to ‘win’ at the YouTube algorithm, but their lessons and feedback are intertwined with moralistic judgments about what is good content” (p. 4; emphasis in original). We argue that ACC forums, notably those away from the platform itself, can offer insight into ACCs’ perceptions of “good” and “bad” content, incorrect strategies that algorithms do not reward, and ACCs’ personal feelings about algorithms. Where experts teach users how to win, ACCs teach each other how to avoid losing.

As a result, in this study, we posed the following research question: what are the themes of the debates that ACCs have about algorithms in a publicly accessible online forum? To answer this question, we studied the subreddit r/NewTubers. The forum is dedicated to helping new, aspiring YouTube creators craft their content. The forum is described as a community “. . . to allow up-and-coming channels to improve with resources, critiques, and cooperation among tens of thousands of peers! We teach you how to Start, Build, and Sustain your Content Career!” (NewTuber subreddit). We will refer to the users in this study as NewTubers.

In our observations, the NewTuber subreddit occupies a transitional point for ACCs. While the forum has hundreds of thousands of subscribers, many members appear to us to leave the online community. We suggest this turnover stems from the forum’s informal limit: the forum is designed for YouTubers with fewer than 5000 subscribers on their profile, or channel. In other words, the forum’s large membership likely includes many people who no longer participate because (a) they achieved success and moved on, (b) they stopped being YouTubers, or (c) they found the subreddit’s knowledge less helpful as they gained experience. The forum’s rules also prohibit direct promotion, such as asking for subscribers. Members are not supposed to link to their YouTube videos except in special weekly threads meant for channel critique. The forum is framed by an explicit rule: it is not a place to “create a plug post,” that is, a post advertising one’s YouTube channel. For these reasons, the forum appears to value a type of anonymity in which members can be more direct and vulnerable without the risk of, or pressure toward, promoting their content. Because the space is transitional, ACCs on the NewTuber forum are often not monetized and, from our observations, do not yet make money from their content, despite hoping they may one day do so.

The NewTuber subreddit is rife with in-depth stories about YouTube’s algorithm. As we describe in the next section, the posts we collected about algorithms were written in vignette form, with personal stories, anecdotes, and self-help advice. The public nature of the subreddit, as well as its large potential audience, encourages this narrative element. This publicness is what differentiates these narratives from the qualitative data gathered in ethnographic or interview studies: because any visitor to Reddit can read the posts, other users can push back against algorithmic discussions, including their imaginaries, gossip, and lore. Indeed, as we found in our thematic analysis, some NewTubers posted expressions of anger and frustration with the ways their fellow members wrote about and portrayed algorithms.

Methods

Using the Python Reddit API Wrapper (PRAW) library, we scraped (Gallagher & Beveridge, 2021; Mancosu & Vegetti, 2020; Marres & Weltevrede, 2013) the subreddit at the time of collection (March 2024). Because of the API’s limits, we could collect at most 1000 r/NewTubers posts, along with about 100,000 comments on those posts. We scraped 11 fields: post ID, post URL, post title, post text, post author, post creation time, comment ID, comment parent, comment body, comment author, and comment creation time. All of this information was organized into a pandas DataFrame for easy access, and all texts were then cleaned of special characters.
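The collection step can be sketched as follows. This is a minimal sketch under stated assumptions, illustrating the procedure rather than reproducing the script used in this study: the row keys are hypothetical labels for the 11 fields, and calling `collect` assumes PRAW is installed and that Reddit API credentials (client ID, secret, user agent) are available. The `flatten` helper produces one row per comment, repeating the post fields, so it can be tested without network access.

```python
from datetime import datetime, timezone

# Hypothetical column names for the five comment-level fields.
COMMENT_KEYS = ("comment_id", "comment_parent", "comment_body",
                "comment_author", "comment_created")


def author_name(author):
    """PRAW reports deleted accounts as None; otherwise str() gives the username."""
    return "[deleted]" if author is None else str(author)


def to_iso(created_utc):
    """Convert a Unix timestamp (seconds, UTC) to an ISO 8601 string."""
    return datetime.fromtimestamp(created_utc, tz=timezone.utc).isoformat()


def flatten(submissions):
    """Return one row per comment, repeating the six post-level fields.

    Posts without comments still get one row, with empty comment fields,
    so that no post is dropped before filtering.
    """
    rows = []
    for post in submissions:
        post_fields = {
            "post_id": post.id,
            "post_url": post.url,
            "post_title": post.title,
            "post_text": post.selftext,
            "post_author": author_name(post.author),
            "post_created": to_iso(post.created_utc),
        }
        comments = post.comments.list()
        if not comments:
            rows.append({**post_fields, **dict.fromkeys(COMMENT_KEYS)})
        for comment in comments:
            rows.append({
                **post_fields,
                "comment_id": comment.id,
                "comment_parent": comment.parent_id,
                "comment_body": comment.body,
                "comment_author": author_name(comment.author),
                "comment_created": to_iso(comment.created_utc),
            })
    return rows


def collect(client_id, client_secret, user_agent, limit=1000):
    """Fetch the newest posts on r/NewTubers with all of their comments."""
    import praw  # imported here so flatten() can be reused without PRAW installed

    reddit = praw.Reddit(client_id=client_id, client_secret=client_secret,
                         user_agent=user_agent)
    submissions = []
    # Reddit listings stop at roughly 1000 items, matching the cap noted above.
    for post in reddit.subreddit("NewTubers").new(limit=limit):
        # Expand every "load more comments" stub; this is slow on large threads.
        post.comments.replace_more(limit=None)
        submissions.append(post)
    return flatten(submissions)
```

The resulting list of dictionaries can be passed to `pandas.DataFrame(rows)` to obtain a table with one column per field.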

The posts we gathered were dated between March 29, 2018, and April 8, 2024. While we did collect the comments on each post, these are beyond the scope of this project because comments largely respond to posts and to other comments, which would blur the focus of a thematic analysis. Likewise, because our research inquired into ACCs’ perceptions of algorithms, we did not analyze upvotes or downvotes; doing so would in some ways privilege popular or dominant narratives over less visible but equally meaningful perspectives from ACCs. As we note in the limitations section, both comments and voting metrics are valuable for future study.

To answer our research question from the previous section, we limited our analysis to posts (excluding comments) in which the word “algorithm” was mentioned explicitly in either (a) the post text or (b) the title. To do so, we filtered the original DataFrame using Python’s regular expressions library, which matches patterns within strings of text. Our regular expression accounted for all variations of the word algorithm, including “algorithms,” “algorithm’s,” and “algorithmic.” This process yielded 144 posts (average length: 835 words).
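The filtering step can be sketched as below. This is a minimal sketch that assumes rows shaped like a one-row-per-comment table with hypothetical keys; the pattern `\balgorithm` with case-insensitive matching is our assumption about how the variations can be captured. Matching only the stem at a word boundary covers “algorithms,” “algorithm’s,” and “algorithmic,” though it would miss a spelling fused to a preceding word, such as a hashtag like #YouTubeAlgorithm.

```python
import re

# Assumed pattern: the stem "algorithm" at the start of a word, any case.
# Because only the stem must match, "algorithms", "algorithm's",
# "algorithm’s", and "algorithmic" are all caught.
ALGORITHM_RE = re.compile(r"\balgorithm", re.IGNORECASE)


def mentions_algorithm(title, text):
    """True if the stem appears in either the title or the body text."""
    return any(ALGORITHM_RE.search(field or "") for field in (title, text))


def filter_posts(rows):
    """Keep one record per post whose title or body mentions the stem.

    Post fields repeat on every comment row, so each post ID is checked
    only once, and comment text is never searched.
    """
    seen = set()
    kept = []
    for row in rows:
        if row["post_id"] in seen:
            continue
        seen.add(row["post_id"])
        if mentions_algorithm(row["post_title"], row["post_text"]):
            kept.append({key: row[key] for key in
                         ("post_id", "post_url", "post_title", "post_text")})
    return kept
```

With pandas, an equivalent mask could be built from `df["post_title"].str.contains(r"\balgorithm", case=False, na=False)` combined with the same test on `df["post_text"]`, followed by `drop_duplicates(subset="post_id")`.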

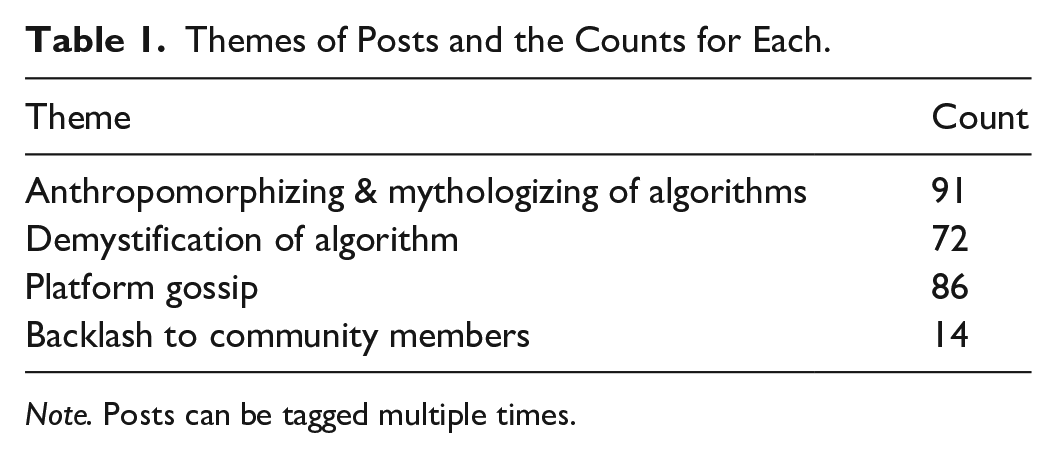

For qualitative thematic analysis, we transferred the URLs, titles, and body texts of these posts into a Google Spreadsheet, where we analyzed the data using a modified grounded theory approach (Strauss & Corbin, 1998): we first created a set of open codes and then conducted a thematic analysis (Braun & Clarke, 2019, 2022). We held an initial meeting, split the work, and discussed a coding structure in our document. During our second meeting, we reviewed the 30+ codes, modifying them iteratively so that broader themes could be established. We subsequently created a codebook and a theme book and coded the posts, allowing each post to receive multiple codes. During weekly meetings in June 2024, we discussed and iterated on our themes, updating the theme book as needed. Using our codebook and theme book, we identified four major themes, based on recurring codes and ideas that we both saw across posts in our dataset, along with seven genres of posts (Tables 1 and 2, respectively). Although this is a qualitative study, reporting counts helps show how frequently each theme appeared. We present the genres of posts to note that the most dominant form was algorithm advice, often couched in either a success or failure story about whether the content (a) received a satisfactory number of views or (b) yielded subscribers.
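Because a post can be tagged with more than one theme or genre, the counts in the tables need not sum to 144. A minimal sketch of such a tally follows; the post IDs and code labels are hypothetical placeholders, not our data, and the sketch does not describe how our spreadsheet was tallied.

```python
from collections import Counter


def tally(codings):
    """Count how many posts carry each label.

    `codings` maps a post ID to the labels assigned to it. A label is counted
    at most once per post, but a post may carry several labels, so the counts
    can add up to more than the number of posts.
    """
    counts = Counter()
    for labels in codings.values():
        counts.update(set(labels))
    return counts


# Hypothetical example: three posts, two labels.
example = {"post_1": ["code_a", "code_b"], "post_2": ["code_b", "code_b"], "post_3": []}
print(tally(example))  # → Counter({'code_b': 2, 'code_a': 1})
```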

Table 1. Themes of posts and the counts for each.

Note. Posts can be tagged multiple times.

Table 2. Genres of posts and the counts for each.

Note. Posts can be tagged multiple times.

Thematic analysis

Theme 1: users anthropomorphized algorithms and platforms, often through figurative language

The most prevalent theme in our thematic analysis was the anthropomorphizing of algorithms and of the platform that hosts them, YouTube. NewTubers commonly ascribed actions and emotions to these entities as a rhetorical strategy for understanding their experiences with content creation. For example, they repeatedly used the verbs “push” or “pushes” (e.g. “the algorithm pushes long-form content”) to describe types of content that would perform well. They also described the algorithm with the verbs “notices,” “picks up,” and “promotes.” In addition, NewTubers attributed opinions to both YouTube itself (the platform) and its algorithm, using such phrases as “YouTube likes,” “YouTube hates,” or “the algorithm likes.” This language, in our view, allows for authoritative claims about what content will and will not circulate successfully by implying an intimate understanding of the algorithm’s personality. Writing technically about an algorithm or social media platform (detailing the underlying mathematical equations and code) is difficult when it is a “black box” whose inner workings are largely unknown or inaccessible to most people. Anthropomorphizing therefore enables NewTubers to make sense of YouTube and its algorithm through narrative and to guide themselves toward success when creating content.

NewTubers’ anthropomorphizing of the algorithm tended toward mythologizing: they described it much as one would describe a magical, godlike, or superhuman entity. They shared narratives that emphasized the power, superior intelligence, randomness, and enigmatic nature of YouTube and its algorithm, often to an exaggerated degree. Many posters attributed their success to the algorithm “blessing” them, and the words “magical,” “mysterious,” and “almighty” appeared often.

This characterization of the algorithm underscores a power imbalance between creators and the algorithm, in which the algorithm holds ultimate control over creators’ fates. Several posts argued the algorithm was “testing” them. One creator wrote:

Once everything has been going smoothly for some time (it could be days, it could be years), then the algorithm will start aggressively testing your channel to see if you’re worth promoting to an audience that you couldn’t reach on your own.

This post and others characterize the algorithm as dominating and critical when “testing” them.

Viewing the algorithm in this way, NewTubers used metaphors drawn from collaboration and from game playing to describe the difficulty of content creation on the platform. Some NewTubers suggested a collaborative process, or more accurately a process of appeasement, by noting the importance of “working with” the algorithm. Many noted they were trying to “please” the algorithm, a finding that a recent study of the forum confirms (Haapoja et al., 2024). Others framed themselves as engaged in an imbalanced, gamified struggle with the algorithm, using phrases such as “gaming the algorithm,” “beating the algorithm,” “cheating the algorithm,” “manipulating the algorithm,” or, most commonly, “cracking the algorithm” to signal that they had achieved success.

In addition to promoting a competitive culture of algorithm vs. creator, figurative descriptions of the algorithm emphasized luck as a key component of success. One NewTuber framed the algorithm as making dreams come true, invoking mysticism, but only “maybe” and only after an extended time: “And after we grind away for months and years, maybe, just maybe, the algorithm will pick us up and make our dreams come true. But until then keep knocking on those doors!” As this post shows, to avoid hopelessness in the face of the algorithm’s perceived randomness, NewTubers implored creators to work hard and persevere while they waited for their content to perform well. Another post with a similar, but more optimistic, message read:

What I think you should take away from this little story is that you will get your chance from the algorithm. The people who succeed are the ones who know how to make the most of this chance. I thought I would be reac[h]ing the milestone of 30k in april time if I was lucky but now I might be reac[h]ing it in a few days. Keep grinding, you will most definitely get your chance.

The word “grind,” used often, made legible the necessity of frequent and consistent content creation and uploads for platform success, which generally signaled the importance of effort. This post’s writer frames success as attainable for “the ones who know how to make the most of this chance,” that is, those who capitalize on it with more uploads and more effort.

The tone or content of several posts in this theme indicated an awareness of anthropomorphizing the algorithm. Many posts framed the algorithm in these ways ironically, and some used and pointed to algorithmic myths and narratives to offer concrete content-creation advice. Below is an emblematic post of this strategy:

The algorithm is smarter than you. Don’t try to play games or cheat the algorithm . . .. The algorithm is more intelligent and sophisticated than most people, and is learning all the time on its own. Just assume for all intents and purposes a really smart human is evaluating every one of your videos and scouring them for signs of you trying to cheat the algorithm. “Oh this person tried to tag Ukraine and Fortnite in the same video? I see, they’re trying to outsmart me and get their video trending. Well, I won’t rank you for either of those terms then. . .how you like them apples?”

The algorithm is framed as smarter than creators and as refusing to reward attempts to manipulate tags (here, pairing unrelated trending tags, Ukraine and the video game Fortnite, on a single video). The algorithm is “sophisticated” and imagined as “scouring” videos for signs of cheating; even “cheating” is a metaphor. The poster, though, appears aware that anthropomorphizing algorithms is not accurate and that the algorithm is not literally intelligent. The point of the post is to use figurative language, for ease of accessibility, to help other ACCs better optimize their content. In other words, figurative language serves the practical purposes of (a) discouraging inaccurate tags on videos and (b) encouraging creators to think in terms of optimization.

Theme 2: NewTubers demystified algorithms, often in reaction to perceptions of algorithms being anthropomorphized and personified

In response to the previous theme, many NewTubers attempted to debunk the figurative language they had seen other ACCs post in the forum. NewTubers reacted against wording that suggested the algorithm was opinionated, critical, or emotional. In turn, they challenged adversarial narratives proposed by others on the forum. One NewTuber advocated for the impartiality of the algorithm by writing: “no algorithm hates you, in [my] opinion i think youtube actually has one of the best algorithms for social presence. there is no one to blame but yourself.” Another criticized the view that the algorithm favored some creators over others:

I see a lot of people on this subreddit who claim that there is so much luck involved in gaining traction. They blame it on the algorithm deciding to favour others over them or just by random chance these other creators are bigger . . .. Whenever I check out the YouTube channels of the people who say these things, I can always identify a reason for why they’re not doing so well.

Posts such as these critique narratives for “blaming” the algorithm, or projecting responsibility for a lack of platform success outwardly onto it rather than looking inwardly to oneself.

Also featured in the previous example, and prevalent throughout posts demystifying the algorithm, was a critique of perceiving platform success as a matter of random chance and luck. Another poster wrote, similarly:

Stop worrying if you are “lucky enough” and work towards analyzing what’s working, what doesn’t, and how to improve. Try to find creative ways to keep people watching and having them want to come back. Keep trying to learn and understand that no video is ever good enough.

As with posts arguing for the algorithm’s impartiality and unemotional nature, posts critiquing the emphasis on luck argued for shifting responsibility for content outcomes onto creators rather than the algorithm. The refrain here is continual improvement: because “no video is ever good enough,” creators must keep finding creative ways to get users to watch their content and come back.

Other posters took a more forceful approach, critiquing the religious language of algorithms “blessing” certain content creators.

When i first started on my journey, i read countless times about the algorithm randomly blessing your channel, and that nobody really knew how or why it did it, it just sorta happens to you down the line. And im not saying this DOESNT happen. But the youtube algorithm is its viewers. If viewers respond to a video, youtube promotes it, and suggests it to more people who are likely to watch. Youtube is an app too at the end of the day, and ALL the apps are fighting to keep people on their platform the longest. The percentage base of your subs that do see your video is “Your algorithm.” They ultimately decide if your video gets promoted to a larger range of people. Your title and thumbnails are what reels somebody in, and your view duration is the final piece to if youtube sees it suitable to promote.

This post represents a common trend of demystification. The post dismisses the idea that an algorithm randomly “blesses” content creators (while still allowing for that possibility). It then transitions into concrete techniques for building content: titles and thumbnails that reel viewers in, and view duration that determines whether YouTube sees a video as suitable to promote. This transition from demystification to concrete advice about content creation was a common strategy we observed. As the following poster argued, the algorithm is not a person but a thing that makes decisions based on metrics:

You have to remember: the YouTube algorithm is unemotional. It does not care who you are. It just operates on the above three metrics. So save yourself a tonne of emotions, and just focus on making more videos people want to see.

As with the previous post, the NewTuber demystifies the algorithm and then replaces anthropomorphism with a mechanical, quantified understanding of the algorithm.

NewTubers frequently offered technical, concrete advice about the algorithm when demystifying. One creator posted granular, specific advice related to engagement of videos with respect to length.

Some people don’t understand. Watchtime is not seen by the algorithm as a percentage as much as raw time. It’s better to have a 60% retention on a 10 minute video getting 6 minutes of watch time than having a 90% retention on a 2 minute video getting 1m 48sec of watch time.

This poster offers an equation, of sorts, for getting “seen” by the algorithm. While the post still personifies the algorithm, its figurative language breaks down into metrics (raw watch time as opposed to retention percentage).
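The poster’s comparison can be restated as simple arithmetic, writing watch time per view $W$ as retention rate $r$ times video length $L$. This restates the poster’s own numbers only; it is not evidence about how YouTube actually weighs raw watch time against retention.

```latex
\begin{align*}
W &= r \times L \\
W_{10\,\text{min}} &= 0.60 \times 600\ \text{s} = 360\ \text{s} = 6\ \text{min} \\
W_{2\,\text{min}} &= 0.90 \times 120\ \text{s} = 108\ \text{s} = 1\ \text{min}\ 48\ \text{s}
\end{align*}
```

On the poster’s reasoning, the longer video yields more than three times the watch time per view (360 s vs. 108 s) despite its lower retention rate.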

Theme 3: NewTubers drew on platform gossip about YouTube, frequently to elaborate on demystification of algorithms

Whether doing so through the approach of demystifying or anthropomorphizing, NewTubers simultaneously made arguments and gave advice about how they believed the YouTube platform circulated content. They shared common strategies such as (a) making niche content, (b) content creation using search engine optimization (SEO) techniques, (c) consistency of posting, and (d) garnering retention. Some posts expressed negative feelings toward YouTube, often complaining about the commodification of content, such as the need to use SEO techniques.

Posts in this theme underscore the values that NewTubers attribute to YouTube as a corporate platform. One poster locates these values in connections between viewers, writing, “Youtube’s recommendation system learns what the audience wants by seeing what other videos people are watching.” Another perceives the value of regular uploads: “Another thing to note is that [these videos] are all one month apart, give or take a view days, which is perfect for the YouTube algorithm.” Yet another challenges the idea that a following is measured in subscribers, arguing instead that active, participatory audiences matter more than a raw subscriber count: “The YouTube algorithms don’t care how many subscribers you have, they care how ACTIVE your subscribers are.”

The critical aspect of this theme is the connection of the algorithm to YouTube’s values as a platform. Algorithmic knowledge is bound up with platform knowledge and perceptions of platforms. NewTubers prioritize YouTube’s values as they perceive them, and they bring these perceived values to the ACC forum. While we discuss this further in the conclusion, for now we underscore that the posts in this theme show creators attempting to translate YouTube’s values into concrete strategies for the YouTube algorithm.

Theme 4: some creators responded to other ACCs with a backlash to anthropomorphizing and other figurative language about algorithms, often criticizing fellow community members for not creating quality content

In what is clearly the most aggressive theme, many NewTubers criticized fellow members for “blaming” the algorithm for their failure. These posters accused other NewTubers of blaming algorithms for the poor performance of content that was not of high enough quality. They argued that a lack of platform success resulted from a lack of creator talent and effort, and they implored creators to focus on self-improvement. As a case in point, one wrote:

Commiseration (people feeling sorry for you/with you) gets you absolutely nowhere. Get off your butt and start improving your craft. Stop looking for people to validate your complaints that your channel is being overlooked by the YouTube algorithm. Make a large base of content. Watch how it becomes a timeline that reveals your growth. Learn as you go. And always remember, YouTube is merely a venue for creativity to be shared. You as a person, the skills you sharpen, and the network you cultivate—those are the real and important things.

This post, in addition to illustrating a self-reliance shared by many NewTubers, is positioned as a backlash against the complaining that occurs on the NewTuber forum. The writer seems to have become annoyed with community members venting their frustrations with the algorithm, and counters the perception that the algorithm is at fault by implying that the quality of these creators’ videos is, in fact, not high enough. This creator wanted the communal blaming of the algorithm to stop.

Backlash against community members often extended beyond criticism of “blaming” the algorithm to criticism of algorithmic misinformation. This backlash appeared throughout our data, with creators calling the advice on the forum incorrect or “stupid.” As one wrote, “I come here to answer the occasional question from time to time and I’m often amazed at the amount of misinformation on display in this sub. The vast majority of it is either, wrong, misinterpreted or just outright stupid.” Another dismissed the advice shared on the forum for pandering to the algorithm, arguing:

. . . 90%+ of the posts I see on this sub are those of you focusing/worrying about shit that doesn’t matter. Things that aren’t worth getting worried about or focusing on. Who cares if you got one random dislike? Who cares about the algorithm? Who cares if you got a spam comment? Who cares about what time of the day you upload videos or what day of the week you post them on?

The “who cares” refrain in this post attests to the creator’s perception that the forum contains many posts in which people complain about their lack of success. As researchers, we can confirm that this perception is grounded in evidence.

Discussion

In our estimation, a typical experience expressed in these narratives is as follows. When ACCs begin to make content, they have little guidance and few ways to consider their audience. Without much guidance beyond the commonly recommended platitude of “making quality content” and optimizing content for search (such as relevant tags, titles, and descriptions), the NewTuber ends up experiencing the “whims” of YouTube’s algorithm. They either (a) experience difficulties when trying to grow their channel’s reach or (b) are unsure why they were successful. They then come to the NewTuber forum and share their experiences. Others read these stories and offer recommendations, and this advice is often contradictory from one post to the next. Some narratives recommend “pleasing” the algorithm, some recommend ignoring the algorithm, some recommend following trends, and others recommend making content creation a hobby or “doing it for the love” of making content. As a result, these creators remain unsure of their audience (Marwick & boyd, 2011). This uncertainty persists even though ACCs have a variety of ways of identifying their audiences: they can use metrics, read comments from fellow YouTubers, and seek advice from the NewTuber forum.

As a result of this advice, ACCs imagine algorithms and, in our view, understand the YouTube algorithm as a type of audience for their content. They aspire for their content to be viewed widely and therefore both seek and share strategies for going viral. This imagined algorithmic audience relies both on anthropomorphizing algorithms as a sociotechnical concept and on the corporate values of YouTube as a platform. The algorithm and the platform are deeply connected in these narratives, indicating that ACCs have developed a relationship with YouTube as both algorithm and platform.

NewTubers used extended metaphors and figurative language to understand the relationship between themselves and the algorithm, as well as YouTube as a platform. Notably, we were struck by the religious language, both explicit and tacit, used to describe this relationship. One post even went so far as to compare the work of content creators to that of missionaries: the more attention you draw to yourself, the more people will ignore you.

Another wrote, “. . . don’t watch others, the amount of time they are spending on their videos doesn’t impact you. don’t be envy [sic], don’t expect the same treatment as them, the algorithm is gonna bless you eventually.” In the first example, the narrative describes a creator who needs to engage with users individually in order to attract the algorithm’s attention, implicitly framing the algorithm as a religious figure. The second example frames the algorithm as ineffable; according to the writer, one should not worry about how others are treated but should trust that creators will eventually be blessed. This explicit religious framing mirrors the tacit framing of the first example: the algorithm is an all-seeing, all-watching entity, possibly analogous to a “god” or a similar concept.

At the same time, the “backlash” narrative expresses antipathy toward anthropomorphizing the algorithm, which indicates contestation over the ways that algorithms can or should be imagined. Part of this conflict concerned the very purpose of content creation: a tension between (a) monetization and (b) enjoyment of content creation. Creators appeared to have an end goal of monetization, but there was a frequent refrain that creators need to enjoy making content and the process of doing so. Enjoying the process was also a way to deal with emotional disappointment when content did not go viral or receive much viewership. While many posts shared stories of achieving success, defined as going viral or accruing large metrics, many other posts argued that creating good content could still be rewarding even without algorithmic success. This tension was also apparent in our genre counts (Table 2), in which some posts advocated ignoring algorithms.

Across all of the themes from our analysis, creators voiced contestation over algorithmic knowledge and literacies. There was never one correct piece of algorithmic advice or one “right” metaphor. Much of this advice was about resisting YouTube’s corporate control even while operating within that corporate space. This tension underscores the importance of enjoying the process of content creation. If algorithmic success could not be guaranteed, as many of the NewTuber posts indicated, then focusing on the process of creation could itself be a form of success. As one creator wrote, “focus more on the content you love with passion than on metric and statistics as a new content creator.” We do not view this post as naïve. Rather, the sentiment is a way of addressing the precarity of being an ACC in an algorithmically driven space. If there are no right strategies for “appeasing” the algorithm (indeed, corporations change their algorithms frequently), then the process itself becomes the strategy.

Limitations and future research

Because this article reports on part of a larger study, its main limitation is that it does not fully analyze all the posts from the NewTuber subreddit or the comments on the analyzed posts. We reported on only 144 of the more than 1,000 posts made to the NewTuber forum in our dataset, which also contains over 100,000 comments. Our selection principles likely excluded further algorithm-related themes and strategies that remain to be identified. As noted in our methods section, our main principle of data selection was explicit use of the terms algorithm and algorithmic. We may therefore have excluded some narratives that were implicitly about algorithms. For example, if an ACC writes that “posting Shorts will give you more subscribers,” then they are likely making a claim about the algorithm even though they never mention it explicitly. There are thus likely tacit and implicit findings in the remaining 861 posts. Future work will include analysis of these remaining posts and comments, as well as analysis of post popularity, that is, upvotes and downvotes.

Future studies could expand on this work by collecting data from similar forums about platforms other than YouTube, such as the TikTokhelp subreddit, which is dedicated to helping ACCs new to TikTok. Thematic analysis of posts on TikTokhelp could expand the scope of this article. Other avenues include surveying and interviewing ACCs, which we attempted with IRB approval, but we were unable to recruit participants. Finally, researchers could take a more quantitative and/or computational approach to analyzing how ACCs discuss algorithms. We have begun this type of analysis for the comments in our data.

Conclusion: public non-institutional algorithmic literacies

NewTubers imagine algorithms as audiences, seeking to accommodate this audience, challenge it, circumvent it, and possibly ignore it. Because the forum is public, these ACCs interact with one another, repeatedly layering their various knowledge, experiences, and advice. They create opposing, contradictory, and complex narratives about the algorithm to support their advice and complaints regarding content creation and platform success.

As a result, the community on this forum trains creators in informal, non-institutional, and public algorithmic literacy. The NewTuber community functions, to varying degrees, in a pedagogical, if agonistic, manner. This public algorithmic literacy is thus based not merely on concrete, verifiable knowledge about algorithms but also on (a) anthropomorphizing algorithms, (b) perceptions of platforms, in terms of gossip and corporate framing, and (c) individuals sharing stories of success and failure. Because the algorithm is largely a “black box,” it incites gossip and debate within the NewTuber forum. A duality exists here that we wish to underscore. These aspirational creators seek success on platforms; NewTubers want success on YouTube. They want legibility from the algorithm. Yet because they turn to an off-platform forum, the NewTuber subreddit, their discussions are formulated outside that platform’s corporate control. This process reveals how public algorithmic literacies are influenced by corporate power while underscoring the ways individual creators claim agency when creating content.