Abstract

Emotionality is a well-established strategy for boosting audience engagement on social media. While fact-checking is positioned to provide objective information, fact-checking posts on social media often involve heightened emotionality. How much emotionality is present and how emotionality influences audience engagement and public sentiment toward fact-checked targets remain largely understudied. Informed by social psychological frameworks explicating message-level factors influencing public engagement and sentiment, the present study examines emotionality in 49,270 fact-checking posts created by 10 United States fact-checking organizations on Facebook from 2017 to 2022. Results showed that emotionality in fact-checking posts significantly increased by 13.5% over the years. Editorial fact-checkers (e.g., Washington Post) used higher levels of emotionality than independent fact-checkers (e.g., snopes.com). Emotionality positively indicated public engagement as predicted. However, in both fact-checked true and false information, emotionality was negatively associated with the public’s sentiment toward fact-checked targets, suggesting a potential spillover effect on stories verified to be true. This study reveals that emotionality in fact-checking posts boosts social media engagement yet with the potential of compromising fact-checking effectiveness.

Misinformation poses significant threats to individuals and society at large, altering beliefs, norms, and behaviors across realms of politics, health, and science (Lewandowsky et al., 2013). Fact-checking, defined as performing systematic validation of claims, myths, and rumors, is one of a few crucial misinformation-intervention practices in correcting misperceptions and disseminating true information (Walter et al., 2019). A great number of legacy news organizations have established editorial fact-checking divisions (e.g., AP Fact Check), while independent fact-checking organizations operated by professional organizations or universities have emerged as well (e.g., FullFact, PolitiFact). According to the International Fact-Checking Network (IFCN, 2023) at Poynter, there are 109 verified fact-checking organizations globally in 2023.

While fact-checking has been consistently shown to be an effective approach in correcting misinformation in general (Porter & Wood, 2021), the public’s awareness of and engagement with fact-checking organizations and their posts remain low (Brandtzaeg et al., 2017; C. T. Robertson et al., 2020). In comparison to exposure to and engagement with regular news content, the public’s consumption of and interaction with fact-checking posts are much lower (X. Zhang & Fu, 2022). This challenge is further compounded by the rapidly evolving policies of social media platforms. Following Elon Musk’s change to the social media platform X, on 7 January 2025, Meta announced the termination of its third-party fact-checking program, replacing it with a community-based model (Kaplan, 2025). More platforms may shift to similar policies. Such changes will inevitably reduce the presence of fact-checking posts and their spread through social media, leading to a more complex information ecosystem already plagued by misinformation and disinformation.

Fact-checking organizations thus face a pressing need to increase publicity and public engagement. While editorial fact-checkers such as Reuters and the Washington Post tend to have larger audiences because of their established news media channels, the majority of independent fact-checking organizations have very limited public visibility and rely heavily on coverage from mainstream news media and social media channels to reach a wider audience (Graves & Cherubini, 2016).

To increase the visibility of fact-checking posts on social media with engagement-prioritizing algorithms, fact-checking organizations have embraced emotionality to increase engagement, quantified in terms of social media metrics, such as the number of views, likes, comments, and shares (Brown et al., 2020; Wahl-Jorgensen, 2020; X. Zhang & Zhu, 2022). A recent study on three fact-checking organizations on Twitter revealed the presence of heightened emotionality in fact-checking Tweets, indicating that fact-checkers indeed use emotionality on social media (Lee & Britt, 2023). Similarly, an analysis of 12 professional fact-checking organizations in the United States found that nearly one-fifth of the social media posts published by these organizations contained click-bait elements with elevated emotionality (X. Zhang & Fu, 2022).

The literature on social media virality has consistently shown the benefits of using emotionality in boosting engagement (e.g., Berger & Milkman, 2012) and provoking citizen participation (e.g., Hasell & Weeks, 2016). Even journalism that traditionally emphasizes objectivity over emotionality has been embracing an “emotional turn” (Wahl-Jorgensen, 2020, p. 175), using increased emotional frames and styles to cater to the audience’s preferences (Peters, 2011). Scholars have noted that this shift toward emotional content is not solely a product of market-driven trends resulting from the attention economy but also due to its additional benefits. For instance, emotionality has the potential to enhance the audience’s understanding of news articles (Wahl-Jorgensen, 2020), bridge the public’s education gaps (Bas & Grabe, 2015), and encourage political participation (Grabe et al., 2017).

However, the aforementioned benefits of using emotionality may not hold for fact-checking, a form of reporting meant to restore journalism’s truth-seeking role and objectivity (Graves, 2016, p. 187). In this context where objectivity is even more valued, the presence of emotionality could be perceived as a derogation of news quality and a deviation from journalistic objectivity (Burgers & de Graaf, 2013; Plasser, 2005; Xue et al., 2024). In addition, informed by emotional contagion theory (Barsade, 2002) and affective process theory (Elfenbein, 2014), we argue that emotionality may be contagious and intensify the public’s sentiment toward fact-checked targets. Fact-checked targets are entities mentioned in a given fact-checking post, such as specific names, organizations, and objects (e.g., in a fact-checking post falsifying a politician’s statement on COVID-19 vaccine misinformation, the targets are the politician and the COVID-19 vaccine). It remains unknown what role emotionality in fact-checking posts plays in influencing fact-checking effectiveness and the public’s attitudinal sentiment toward fact-checked targets. How would emotionality influence engagement with fact-checking posts and the public’s attitudinal sentiment toward the fact-checked targets? Our research thus aims to delineate the differential influences of emotionality on public engagement and sentiment evaluation of fact-checked targets.

While considering emotionality’s impact on engagement and attitudinal outcomes, another important angle is to take on the perspective of message production and journalistic practice. Specifically, negativity bias in fact-checking posts may confound the interpretation of the emotionality’s impact. Negative entities are more impactful than positive entities in terms of individual perceptions (Rozin & Royzman, 2016). Negative events spread faster than positive events (Bebbington et al., 2017), and false news spreads faster than true news on social media (Vosoughi et al., 2018). The tendency to prioritize negative information consumption and transmission can in turn promote negativity bias in journalistic decisions. Prominent examples include the use of sensationalistic fact-checking labels (Xue et al., 2024) and the over-reporting of violence (Soroka & Carbone, 2022). In the fact-checking context, fact-checkers pay more attention to fact-checked false information than rumors that turn out to be true. Negativity bias, while being reflected in news event coverage, can also exist in nuanced language choices (Bebbington et al., 2017), such that more words emitting emotionality are used to cover fact-checked false information than true information. So far, this phenomenon has been overlooked, and it is unclear whether emotionality varies across fact-checking stories about true versus false information and how the impact of emotionality on public engagement and sentiment can be moderated by the veracity of fact-checked information.

Grounded in emotional contagion theory (Barsade, 2002) and affective process theory (Elfenbein, 2014), we inquire into the impacts and drivers of emotionality in fact-checking posts on social media. Relying on fact-checking posts (N = 49,270) and comments (N = 525,604) collected from 10 IFCN-verified U.S. fact-checking organizations on Facebook, we investigate (a) the association between emotionality in fact-checking posts and their social media engagement, (b) the association between emotionality in fact-checking posts and the public’s sentiment toward fact-checked targets, and (c) how negativity bias moderates these relations. Utilizing large-scale social media datasets and entity-targeted sentiment analysis, our computational analyses and findings contribute important observational evidence illuminating emotionality’s role in communicating fact-checks on social media, with a specific focus on negativity bias. Both theoretical contributions and practical implications can advance research in digital journalism, fact-checking, and social media analytics.

Fact-Checking Emotionality and Public Engagement With Fact-Checking Posts

Public engagement is an essential goal of journalism, not only for profit but also for reach and impact (Nelson, 2021). This is especially true for fact-checking organizations with limited public reach and visibility (Soo et al., 2023). To increase public engagement at the network level, independent fact-checking organizations have been incorporating multiple communication channels to reach the public (Dafonte-Gómez et al., 2022). At the content level, leveraging emotionality is a common journalistic practice to engage the audience and increase visibility, especially on social media with engagement-driven algorithms (Wahl-Jorgensen, 2020). As the framework of viral emotion suggests, emotionality can provoke public engagement by activating the audience’s neural system (Berger & Milkman, 2012), with high-arousal emotions more likely to promote information sharing (Berger, 2011). In the fact-checking context, a recent study found that anger and anxiety in fact-checking Tweets were positively associated with the number of replies (Lee & Britt, 2023).

Previous studies are often constrained in their analytical scope by focusing on discrete emotional dimensions or isolated social media metrics. In this study, we aim to provide a holistic understanding of emotionality as an overarching fact-checking practice without pinpointing specific emotion dimensions or categories. Thus, we examine the effect of overall emotionality as a fact-checking strategy, regardless of emotional valence, arousal, or discrete emotions. On engagement, we consider all social media engagement metrics because they represent different perspectives of public engagement and contribute to the overall visibility of fact-checking posts (C. Kim & Yang, 2017). For example, emotionality has been found to facilitate engagement with posts on social media in terms of the number of likes, comments, and shares (Leppert et al., 2022). Consequently, we choose to use a composite measure that incorporates all social media engagement metrics. Based on empirical findings and theoretical predictions from emotional contagion, we hypothesize that emotionality would be positively associated with social media engagement.

Hypothesis (H1). Emotionality in fact-checking posts is positively associated with social media engagement with fact-checking posts.

Fact-Checking Emotionality and Public Sentiment Toward Fact-Checked Targets

Fact-checking posts typically serve both informational and persuasive purposes (Graves et al., 2023). For individuals without specific prior attitudes toward fact-checked targets, these posts can capture public attention, elicit public sentiment, and even shape new attitudes without backfire effects (Ecker et al., 2020). However, for those with strong pre-existing beliefs associated with the fact-checked targets, the exposure to and processing of certain fact-checking posts can be influenced and distorted by those prior beliefs and group identities (Jennings & Stroud, 2023; Nyhan, 2021). Although strong, consistent findings have concluded that fact-checking can effectively correct and reduce misperceptions, the effects of fact-checking on attitudinal change are less clear. The few studies measuring attitudes toward fact-checked targets have shown inconsistent findings. For instance, Zhang and colleagues (2021) found that reading a fact-checking tweet on vaccines increased participants’ pro-vaccine attitudes in comparison to reading a misinformation tweet. In the political context, however, a series of studies have identified the continued influence effect of misinformation and limited attitude change despite fact-checking (see Walter & Tukachinsky, 2020, for a review).

Several message-processing mechanisms can influence attitudinal change. First, when people encounter a fact-checked target—whether concerning an individual or an object—they often assume that something must be controversial or wrong based on the mere act of fact-checking. This perception aligns with the adage “where there’s smoke, there’s fire,” suggesting that there must be a legitimate reason for that scrutiny. This is related to the Implied Truth Effect, which posits that “false headlines that fail to get tagged are considered validated and thus are seen as more accurate” (Pennycook et al., 2020, p. 4944), whereas tagged headlines are seen as less credible. This bias stems from confirmation bias, “an inclination to retain, or a disclination to abandon, a currently favored hypothesis” (Klayman, 1995, p. 386), with which people make swift judgments based on pre-existing attitudes. Consequently, even before reading a fact-check, individuals may already possess negative attitudes and a presumption of guilt toward the fact-checked target, assuming that there must be some questionable action or statement at play to be fact-checked. For instance, Pennycook and colleagues (2020) found that individuals perceived half of the false headlines that are tagged as fact-checked to be less accurate than those untagged, despite all being factually false. This initial bias can shape the impact and interpretation of fact-checking. Furthermore, one recent discouraging observation is that fact-checking, regardless of the formatting of corrective messages, tends to diminish public trust in the media overall and foster a broader sense of skepticism (Hoes et al., 2024).

More intense emotionality embedded in fact-checking posts may amplify the aforementioned mechanisms. One explanation is that emotionality functions as an emotional cue that activates emotional contagion. The affective process theory identifies emotional expressions as a trigger of emotional contagion through individual interpretations of others’ emotional expressions (Elfenbein, 2014). More intense emotional words or phrases used in fact-checking posts can more easily trigger affective responses, possibly reducing objective and rational processing of the fact-checking post’s core arguments, consistent with accounts of limited cognitive capacity and dual information processing (Chaiken & Ledgerwood, 2012). Such expressed emotionality can thus indirectly influence the audience’s sentimental reactions to the fact-checked targets. The limited literature from other contexts shows that emotional expressions in advertisements can impact attitudes toward the featured entity (Hasford et al., 2015; S. J. Kim & Niederdeppe, 2014). In addition, certain emotional appeals used in health misinformation corrections have been shown to be more effective than other emotions or no emotions (Sangalang et al., 2019; Tao et al., 2023). In the current study, we do not intend to examine concrete, discrete emotional appeals. Instead, we take a broader perspective and ask whether emotionality embedded in fact-checking posts is associated with expressed attitudinal sentiments toward the fact-checked targets. Answering this question provides important evidence on how emotional fact-checking practices may have unintended consequences for the public’s attitudinal sentiment.

Research Question 1 (RQ1). How is emotionality associated with the public’s sentiment toward fact-checked targets?

Negativity Bias and Heightened Emotionality in Fact-Checked False Information

The strategic usage of emotionality in fact-checking posts may vary depending on the type of information being checked. Although fact-checking mostly targets rumors and misinformation by default, information under investigation can also turn out to be true, false, mixture (a mixture of true and false information), or remain unclear and unknown, depending on the availability of evidence.

As discussed earlier, negativity bias is a perceptual bias that drives people to pay more attention to negative events than positive ones (Rozin & Royzman, 2016; Soroka et al., 2019). The fact that negative events such as violence are over-featured in news reporting is evidence of negativity bias at the content-production level (van der Meer et al., 2019). At the consumption level, a study found that negative words in news headlines increased online click-through rates while positive words decreased consumption rates (C. E. Robertson et al., 2023). In the social media context, social media posts written for news articles contain more subjectivity and emotionality, with a goal that is more in line with the logic of social media algorithms for attracting audience attention (Welbers & Opgenhaffen, 2019).

In the social media fact-checking context, fact-checked false information can be more prevalent than fact-checked true information. Furthermore, fact-checking posts that falsify information may contain more emotionality than those that verify true information due to the psychological and social dynamics at play. When fact-checkers report falsehoods, especially those that are emotionally charged, the fact-checking post can engage with the strong emotions initially provoked by the misinformation. Consequently, the debunking process can be more emotionally charged and more intense. Conversely, fact-checking true information may involve less emotionality. For instance, a fact-checking post falsifying a piece of misinformation reads, “We also fact-checked President Trump’s claim that Philadelphia poll watchers ‘were thrown out.’ Trump’s accusation is full of misinformation.” By contrast, a post verifying true information reads, “Yes, former U.S. President Donald Trump called Russian President Vladimir Putin’s stated Ukraine strategy ‘savvy’ and ‘genius.’” The word choices in these two types of fact-checking posts differ widely in emotionality, and we reason that the veracity of the fact-checked information could function as a cue for negativity bias and influence the level of emotionality in fact-checking posts. We thus hypothesize that the level of emotionality would be higher for fact-checked false information than for true information:

Hypothesis (H2). Emotionality varies across fact-checked information veracity, and false information is covered with more emotionality than true information.

Method

Data Collection

To collect fact-checking posts and comments on social media, we first selected a list of U.S. fact-checking organizations verified with IFCN. Among 14 verified fact-checking organizations in the United States, we included 10 organizations that have been actively reporting fact-checking stories in English. Half of the 10 fact-checking organizations are independent fact-checking organizations, including snopes.com, Check Your Fact, PolitiFact, Lead Stories, and Fact-check.org; the other half are in-house fact-checkers of established news organizations, including Reuters, The Dispatch, Washington Post, USA TODAY, and AP. We excluded four organizations for non-English content, permanent shutdown, or lack of fact-checking content at the time of data collection. Table 1 reports summary statistics on the number of fact-checking posts, comments, and followers in this dataset across 10 organizations.

Table 1. Descriptions of Ten Fact-Checking Organizations and Fact-Checking Posts, Retrieved From October 2017 to October 2022.

The number of comments associated with fact-checking posts.

The number of comments retrieved for analysis. The top 25 most relevant comments were retrieved for each fact-checking post.

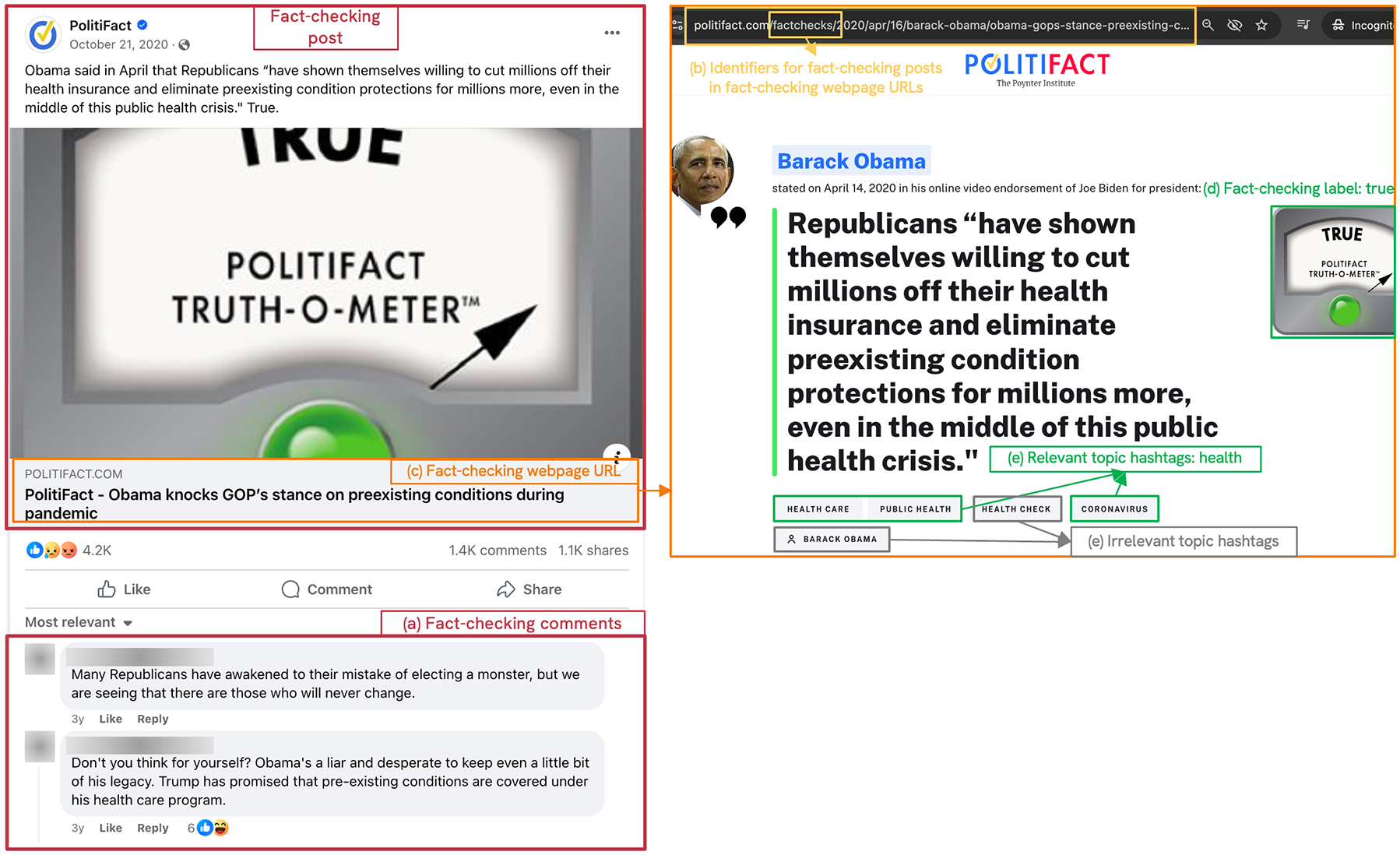

Second, we used CrowdTangle, a data-monitoring platform owned by Meta, to collect all historical posts (n = 367,907) created by the 10 fact-checking organizations on Facebook from October 2017 to October 2022. This timeframe was chosen to capture the escalation of misinformation following the 2016 presidential election and during the COVID-19 pandemic (Alba, 2020). See Figure 1 for the flow diagram of data collection, preprocessing, and annotation. We narrowed the dataset to fact-checking posts only, excluding irrelevant posts such as organizational updates or job advertisements, by keeping posts whose linked webpage URLs contained the fact-check-related keywords that each organization uses when publishing a fact-checking story. Table S1 in the Supplemental Information presents the keyword variations that were used as fact-checking identifiers across organizations. For instance, “factcheck” is a consistent keyword appearing in URLs for all fact-checking posts published by Reuters, whereas “hoax-alert” is the keyword used by Lead Stories for their fact-checking posts. In total, we collected 49,270 fact-checking posts. Figure 2 presents an example fact-checking post on Facebook, with data processing details visualized.
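The URL-based filtering step can be illustrated with a minimal sketch. It assumes each post record carries an organization name and a linked URL; the record layout, the function name `is_fact_check_post`, and the example URLs are hypothetical. Only the two keywords named in the text are included; the full keyword list is in Table S1.

```python
from urllib.parse import urlparse

# Keyword identifiers per organization. "factcheck" (Reuters) and "hoax-alert"
# (Lead Stories) are the two named in the text; other organizations are omitted.
FACT_CHECK_KEYWORDS = {
    "Reuters": ["factcheck"],
    "Lead Stories": ["hoax-alert"],
}


def is_fact_check_post(organization, url):
    """Return True if the linked URL contains one of the organization's keywords."""
    if not url:
        return False
    parsed = urlparse(url.lower())
    # Match on host and path only, so query strings cannot trigger a match.
    haystack = parsed.netloc + parsed.path
    keywords = FACT_CHECK_KEYWORDS.get(organization, [])
    return any(keyword in haystack for keyword in keywords)


# Hypothetical records standing in for rows of a post export.
posts = [
    {"org": "Reuters", "url": "https://www.reuters.com/article/factcheck-example-claim"},
    {"org": "Reuters", "url": "https://www.reuters.com/careers/open-roles"},
    {"org": "Lead Stories", "url": "https://leadstories.com/hoax-alert/2021/01/example.html"},
    {"org": "Lead Stories", "url": None},
]
fact_checks = [p for p in posts if is_fact_check_post(p["org"], p["url"])]
```

Keeping the keywords in a per-organization mapping mirrors the description that identifiers vary across organizations.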

Figure 1. Flow diagram of data extraction, processing, and annotation processes.

Figure 2. An illustration of five kinds of data associated with a fact-checking post: (a) comments, (b) the associated URL and fact-checking identifier (i.e., fact-checks), (c) the associated fact-checking webpage, (d) fact-checking labels retrieved on the webpage, and (e) fact-checking topic hashtags retrieved on the webpage, including both relevant and irrelevant hashtags.

Third, since CrowdTangle does not provide comment data, we used Facepager (Jünger & Keyling, 2019), a web-scraping tool, to retrieve the top 25 most relevant comments on each post with a newly created Facebook account. According to Facebook (2024), the most relevant comments include comments (a) from friends, (b) from verified Facebook profiles and pages, and (c) that have received the most likes and replies. While this approach does not capture all comments, it provides a sample of the public comments that are shown by default to potential viewers and are thus widely viewed and engaged with. We retrieved 525,604 comments attached to 40,395 fact-checking posts. Eighteen percent of posts did not have comments or only had non-public comments. On average, 13 comments were retrieved for every fact-checking post (M = 13.01, SD = 9.27; min. = 1; max. = 25).

Finally, we employed Beautiful Soup (Richardson, 2007), a web-scraping library in Python, to scrape the structured fact-checking label and topic hashtag data on webpages linked to URLs on the fact-checking post (see Figure 2 for illustration). Regarding fact-checking labels, 42,126 out of N = 49,270 fact-checking posts from five fact-checking organizations (i.e., Check Your Fact, snopes.com, PolitiFact, Lead Stories, and USA TODAY) used fact-checking labels. The other five organizations do not use fact-checking labels, and therefore, 14.5% of the posts (n = 7,144) did not include any fact-checking label. From this dataset, we identified 1,832 unique fact-checking labels. The majority of these labels are used to signal and categorize the veracity of fact-checked claims, such as true, false, and mixture. Regarding fact-checking topic hashtags, 25,686 out of N = 49,270 (52.1%) fact-checking posts contained topic hashtags, from which we retrieved 1,976 unique topic hashtags encompassing various topics, public figures, and public organizations. However, irrelevant labels and hashtags also exist in the dataset. Therefore, to further refine these data, we conducted a human annotation process as follows.
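To make the label and hashtag extraction concrete, the following is a minimal sketch of pulling text out of elements with a given CSS class. It deliberately uses the Python standard library's `html.parser` rather than Beautiful Soup so that it runs without extra dependencies. The class names `rating-label` and `topic-tag` and the sample markup are hypothetical; each organization's pages have their own structure.

```python
from html.parser import HTMLParser


class ClassTextParser(HTMLParser):
    """Collect the text of every element carrying a given CSS class."""

    def __init__(self, css_class):
        super().__init__()
        self.css_class = css_class
        self.texts = []
        self._depth = 0    # > 0 while inside a matching element
        self._buffer = []

    def handle_starttag(self, tag, attrs):
        classes = (dict(attrs).get("class") or "").split()
        if self._depth:
            # Nested tag inside a match. Unclosed void tags such as <br>
            # would need special handling that this sketch omits.
            self._depth += 1
        elif self.css_class in classes:
            self._depth = 1
            self._buffer = []

    def handle_endtag(self, tag):
        if self._depth:
            self._depth -= 1
            if self._depth == 0:
                text = "".join(self._buffer).strip()
                if text:
                    self.texts.append(text)

    def handle_data(self, data):
        if self._depth:
            self._buffer.append(data)


def extract_text_by_class(html, css_class):
    parser = ClassTextParser(css_class)
    parser.feed(html)
    parser.close()
    return parser.texts


# Hypothetical fact-checking page fragment.
page = (
    '<div class="rating"><span class="rating-label"> False </span></div>'
    '<a class="topic-tag">Vaccine</a><a class="topic-tag">COVID-19</a>'
)
labels = extract_text_by_class(page, "rating-label")
hashtags = extract_text_by_class(page, "topic-tag")
```

In practice, a dedicated library such as Beautiful Soup handles malformed markup more gracefully than this hand-rolled parser.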

Annotation of Fact-Checking Labels and Topics

Annotation of Fact-Checking Labels

The purpose of this annotation task was two-fold: (a) identify the validity of a fact-checking label (i.e., whether it signals information veracity or not) and (b) categorize the veracity of labels (i.e., false, mixture, true, and unknown). For validity, labels that denote information veracity (e.g., false, true, manipulated) were considered valid, while labels that do not indicate information veracity (e.g., satire, lost legend) were considered invalid. Of the 1,832 unique fact-checking labels, only those that have been used at least five times (n = 63) were annotated. We excluded less frequently used labels (n = 1,769; 96.6% of all unique labels) because they tend to be context-specific, ungeneralizable phrases describing fact-checking explanations (e.g., no confession, never there). This exclusion had minimal influence on the study result because these labels were used in n = 2,033 fact-checking posts, accounting for only 4.3% of the whole dataset (N = 49,270).

Among the general labels (n = 63) that have been used at least five times, two trained research assistants annotated a label’s (a) validity (Krippendorff’s α = .77) and (b) veracity (Krippendorff’s α = .87). The two research assistants achieved acceptable intercoder consistency and resolved all disagreements through discussion. Finally, we retrieved 45 valid fact-checking labels, comprising 25 denoting false (e.g., altered, full-flop), 13 denoting mixture (e.g., misattributed, half-true), three denoting true (e.g., true, legit), and four denoting unknown (e.g., unproven, research-in-progress). See Supplemental Table S2 for the full list of 45 labels and Supplemental Table S3 for descriptive statistics of the 12 most frequently used fact-checking labels.
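For readers unfamiliar with the agreement statistic, the sketch below computes Krippendorff’s α for nominal codes from two coders with no missing values, using α = 1 − D_o / D_e (observed over expected disagreement). It is an illustration under those assumptions, not the software used in the study, and the example codes are invented.

```python
from collections import Counter


def krippendorff_alpha_nominal(coder_a, coder_b):
    """Krippendorff's alpha for two coders, nominal data, no missing values."""
    if len(coder_a) != len(coder_b) or len(coder_a) == 0:
        raise ValueError("Both coders must rate the same non-empty set of units.")
    n_units = len(coder_a)
    n_values = 2 * n_units  # total number of pairable values

    # Observed disagreement: share of units on which the coders differ.
    observed = sum(a != b for a, b in zip(coder_a, coder_b)) / n_units

    # Expected disagreement: probability that two values drawn without
    # replacement from the pooled codes differ.
    counts = Counter(coder_a) + Counter(coder_b)
    unequal_pairs = n_values ** 2 - sum(c * c for c in counts.values())
    expected = unequal_pairs / (n_values * (n_values - 1))

    if expected == 0:
        # Only one category was ever used; alpha is undefined in this case,
        # and returning 1.0 is a convention chosen for this sketch.
        return 1.0
    return 1 - observed / expected


# Invented validity codes for four labels.
coder_1 = ["valid", "valid", "invalid", "invalid"]
coder_2 = ["valid", "invalid", "invalid", "invalid"]
alpha = krippendorff_alpha_nominal(coder_1, coder_2)
```

With one disagreement in four units, the observed disagreement is 0.25 and the expected disagreement is 30/56, which gives α = 8/15 (about .53).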

Annotation of Fact-Checking Topic Hashtags

Similarly, the same trained research assistants annotated fact-checking topic hashtags to remove context-irrelevant hashtags (Krippendorff’s α = .84). We excluded hashtags that did not pertain to any specific topic, such as public figures (e.g., Barack Obama) or topic-irrelevant organizations (e.g., CDC), which could apply to multiple categories and thus were not considered independently relevant. Among 1,976 unique hashtags, 1,143 hashtags were deemed relevant. Next, these relevant hashtags were classified into general topic categories emerging from the data: politics, health, science, entertainment, and others (Krippendorff’s α = .84). Among relevant hashtags, 606 hashtags are politics-related (e.g., agriculture, BLM, migration), 164 are health-related (e.g., alcohol, vaccine, WHO), 129 are science-related (e.g., green energy, glacier, coal), and 52 are entertainment-related (e.g., celebrity, baseball). Others include topics such as history and religion. See Supplemental Table S4 for summary statistics.

Measures

Emotionality of Fact-Checking Posts

The emotionality of fact-checking posts is operationalized as the sentiment magnitude, a language variable calculated using the Google Cloud Natural Language Application Programming Interface (API; Google, 2022). This tool uses machine learning and natural language processing methods to calculate the overall strength of emotions expressed in a given text, which ranges from 0 (i.e., no emotion expressed) to positive infinity (i.e., extreme emotion expressed), regardless of positive or negative emotions. The emotionality of fact-checking posts ranged from 0 to 16.3 (M = 0.52, SD = 0.43).

Engagement With Fact-Checking Posts

We measured the public’s engagement with fact-checking posts considering all engagement behaviors documented on Facebook: the number of likes, comments, shares, and six emotional reactions such as Angry, Wow, and Love. All engagement behaviors are aggregated because they all contribute to the visibility of fact-checking posts (C. Kim & Yang, 2017). A composite variable summing all nine engagement behaviors is created to measure the overall engagement level. On average, a fact-checking post had an engagement score of 504.69 (SD = 1,565.40).
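The composite score can be sketched as a simple sum over the nine metrics. The field names below are hypothetical, and the three reactions not named in the text (Haha, Sad, Care) are an assumption about which six reactions were counted.

```python
# Nine engagement metrics: likes, comments, shares, and six reactions.
# "love", "wow", and "angry" are named in the text; "haha", "sad", and
# "care" are assumed here to complete the set of six.
ENGAGEMENT_FIELDS = (
    "likes", "comments", "shares",
    "love", "wow", "haha", "sad", "angry", "care",
)


def engagement_score(post):
    """Sum all nine engagement counts, treating missing values as zero."""
    return sum(int(post.get(field) or 0) for field in ENGAGEMENT_FIELDS)


# Hypothetical post record.
example_post = {"likes": 120, "comments": 30, "shares": 45, "angry": 12, "wow": 3}
score = engagement_score(example_post)
```

An unweighted sum treats a share and a like as equally informative, which matches the description of the composite as an aggregate of all engagement behaviors.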

The Public’s Sentiment Toward Fact-Checked Targets

To measure the public’s sentiment toward fact-checked targets, we employed entity-targeted sentiment analysis by Google Cloud Natural Language API to (a) identify fact-checked targets and (b) estimate the aggregate-level sentiment toward fact-checked targets (Google, 2022). First, we employed Google Cloud Natural Language API to identify and extract entities in fact-checking posts and comments. Entities refer to proper (e.g., CDC, Barack Obama) and common nouns (e.g., president, doctor) mentioned in a given text; these entities fall into seven broad categories: person, location, organization, event, artwork, consumer product, and others (Google, 2022). See Supplemental Table S5 for summary statistics of different types of entities in fact-checking posts and comments. We define fact-checked targets as entities that appear in both a fact-checking post and its comments, representing entities that are of common interest and focus of the discourse of a fact-checking post and the audience’s comments (see Figure 3 for illustration). We were able to identify at least one fact-checked target from 18,611 fact-checking posts and their comments. In total, we retrieved 8,608 unique fact-checked targets, such as trump, facebook, and covid. The most commonly mentioned fact-checked targets were Trump (mentioned in n = 1,074 fact-checking posts), evidence (n = 378), Facebook (n = 369), Biden (n = 315), president (n = 278), and COVID (n = 205). On average, every fact-checking post and its comments mentioned 2.1 fact-checked targets (median = 2; SD = 1.50).
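The definition of a fact-checked target as an entity appearing in both a post and its comments amounts to a set intersection. The sketch below assumes entity names have already been extracted (here as plain strings) and normalizes them by lowercasing, which is an assumption suggested by the lowercase examples in the text; the function name and sample entities are hypothetical.

```python
def fact_checked_targets(post_entities, comment_entities):
    """Return entities mentioned in the post AND in at least one comment.

    post_entities: list of entity names found in the fact-checking post.
    comment_entities: list of lists, one list of entity names per comment.
    """
    post_set = {name.strip().lower() for name in post_entities}
    comment_set = {
        name.strip().lower()
        for entities in comment_entities
        for name in entities
    }
    return post_set & comment_set


# Hypothetical extracted entities for one post and two of its comments.
post_entities = ["Trump", "Philadelphia", "poll watchers"]
comment_entities = [["Trump", "election"], ["philadelphia", "evidence"]]
targets = fact_checked_targets(post_entities, comment_entities)
```

Entities that appear only in the post or only in the comments are dropped, so a post with no overlapping entity yields no fact-checked target.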

Figure 3. An illustration of retrieving fact-checked targets in a fact-checking post and associated comments.

The public’s sentiment toward fact-checked targets is then operationalized as the entity-targeted sentiment score, defined as “the overall emotional leaning in the text toward the specific entity” (Google, 2022). It ranges from −1 to 1, where −1 indicates extreme negativity, and 1 indicates extreme positivity. The interpretation of a sentiment score of 0 depends on a closely related variable, the sentiment magnitude, which quantifies the overall strength of emotions toward a specific entity. With a sentiment magnitude of 0, no public emotion is expressed toward a specific entity; therefore, a sentiment score of 0 represents the absence of emotion. Conversely, with a non-zero sentiment magnitude, a sentiment score of 0 reflects a balanced appearance of both positive and negative emotions associated with an entity, canceling each other out. Thus, the entity-targeted sentiment score can be interpreted as the public’s sentiment toward fact-checked targets. Overall, the public’s sentiment toward fact-checked targets was reflected in the comments with moderately strong sentiment magnitude (M = 0.30, SD = 0.50) and negative-leaning sentiment score (M = −0.04, SD = 0.17).
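The joint reading of score and magnitude described above can be written as a small decision rule. The function name, the category names, and the numerical tolerance are choices made for this sketch rather than part of the API or the study.

```python
def interpret_sentiment(score, magnitude, tol=1e-9):
    """Classify an entity-level (score, magnitude) pair.

    score: emotional leaning in [-1, 1]; magnitude: emotional strength >= 0.
    """
    if not -1 <= score <= 1:
        raise ValueError("score must lie in [-1, 1]")
    if magnitude < 0:
        raise ValueError("magnitude must be non-negative")
    if magnitude <= tol:
        return "none"      # no emotion expressed toward the entity
    if abs(score) <= tol:
        return "mixed"     # positive and negative emotions cancel out
    return "positive" if score > 0 else "negative"
```

For example, `interpret_sentiment(0.0, 0.0)` falls in the "none" category, whereas `interpret_sentiment(0.0, 1.2)` falls in "mixed", which is the distinction the magnitude variable is needed for.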

Covariates

We include three important covariates, discussed in the following sections, in this study because they may potentially influence and confound the engagement with and the effectiveness of fact-checking posts.

Fact-Checking Post Length

The length of a fact-checking post is calculated as the number of words in the text section of a fact-checking post on Facebook (M = 11.91, SD = 4.05).

Posting Time

The year that a fact-checking post was posted is included as a covariate because fact-checking posts tend to be time-sensitive.

Followers at Posting

The number of followers that a fact-checking organization had at the time of posting is included to control for the influence of a fact-checking organization’s popularity (M = 788,751, SD = 855,532).

Statistical Analysis

To test H1 about the positive relationship between emotionality and public engagement with fact-checking posts, we fit a negative binomial regression model with the sum of nine engagement metrics on Facebook as the dependent variable and the emotionality in fact-checking posts as the independent variable. We chose negative binomial models because the data are overdispersed (dispersion = 1731.15, p < .001) and only 2.91% (n = 1,435) of the dataset has zero engagement values, which suggests that excess zeros were not a concern. In addition to the unadjusted model, we included five covariates in the adjusted model: fact-checking post length, posting time, the number of followers, fact-checking label veracity, and fact-checking topic.
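The two diagnostics mentioned above, overdispersion and the share of zero counts, can be illustrated with a quick check on count data. This is a rough sketch, not the test used in the study: it uses the simple variance-to-mean ratio (values well above 1 suggest overdispersion relative to a Poisson model), and the function name and the engagement counts are hypothetical.

```python
from statistics import mean, variance


def dispersion_summary(counts):
    """Return (variance-to-mean ratio, share of zero counts).

    The variance-to-mean ratio is a simple heuristic; it is an
    assumption here and not the dispersion statistic reported above.
    """
    avg = mean(counts)
    if avg == 0:
        raise ValueError("all counts are zero; ratio is undefined")
    ratio = variance(counts) / avg
    zero_share = sum(1 for c in counts if c == 0) / len(counts)
    return ratio, zero_share


# Hypothetical total-engagement counts for ten posts.
engagement = [0, 3, 12, 45, 7, 230, 18, 2, 1500, 64]
ratio, zero_share = dispersion_summary(engagement)
print(f"variance/mean = {ratio:.1f}, zero share = {zero_share:.0%}")
```

A large ratio combined with a small zero share is the pattern that typically motivates a negative binomial model over a Poisson or zero-inflated alternative.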

To answer RQ1 about the relationship between emotionality and the public’s sentiment toward fact-checked targets, we fit a linear regression model, with the public’s sentiment toward fact-checked targets in comments as the dependent variable and emotionality as the independent variable. In the adjusted model, in addition to the five control variables mentioned earlier, we included the sentiment toward fact-checked targets expressed in the fact-checking posts themselves, calculated with the same approach used for public sentiment in the comments.

To test H2 about the variation of emotionality across fact-checked information veracity, we fit a linear regression model, with emotionality as the dependent variable and fact-checked information veracity as the independent variable. The same five control variables were included in the adjusted model.

Results

From 2017 to 2022, we observed a small increase in the emotionality level in fact-checking posts on Facebook (b = 0.0003, SE = 0.0001, 95% CI = [0.0001, 0.0004], p = .006, n = 265), indicating that emotionality increased by 13.5% over the study period (Figure 4). Moreover, we observed several local maxima of emotionality: spikes coincided with exogenous events such as the presidential and midterm elections and the outbreak of COVID-19. In addition, emotionality in fact-checking posts varied across the two fact-checking organization types. A two-sample t-test showed that editorial organizations (M = 0.64, SD = 0.44) used more emotionality than independent organizations (M = 0.52, SD = 0.43; p < .001). Furthermore, a linear regression confirmed the lower emotionality in fact-checking posts from independent organizations (b = −0.13, SE = 0.04, 95% CI = [−0.21, −0.05], p = .001), when post length, posting time, and followers at posting were controlled.

The changes in emotionality in fact-checking posts over time (October 2017–October 2022).
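How a per-period slope translates into a percentage change is not spelled out in the text. One common approach, sketched below, fits an ordinary least-squares trend line and compares the fitted value at the last period with the fitted value at the first. This is an assumption about a plausible derivation and not necessarily the computation behind the reported figure; the function name and the toy series are hypothetical.

```python
def fitted_percent_change(values):
    """Fit y = intercept + slope * t by least squares (t = 0, 1, ...)
    and return (slope, percent change from first to last fitted value).
    """
    n = len(values)
    if n < 2:
        raise ValueError("need at least two observations")
    t_bar = (n - 1) / 2
    y_bar = sum(values) / n
    sxx = sum((t - t_bar) ** 2 for t in range(n))
    sxy = sum((t - t_bar) * (y - y_bar) for t, y in enumerate(values))
    slope = sxy / sxx
    intercept = y_bar - slope * t_bar
    if intercept == 0:
        raise ValueError("fitted starting value is zero; change undefined")
    start = intercept                  # fitted value at t = 0
    end = intercept + slope * (n - 1)  # fitted value at the last period
    return slope, 100 * (end - start) / start


# Hypothetical per-period emotionality averages, not study data.
series = [0.50, 0.52, 0.54, 0.56]
slope, pct = fitted_percent_change(series)
print(f"slope = {slope:.3f} per period, change = {pct:.1f}%")
```

Because the percentage depends on the fitted starting level as well as on the slope, a very small slope can still correspond to a double-digit relative change over several hundred periods.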

H1 hypothesized that emotionality is positively associated with public engagement. Results of negative binomial regression models showed that emotionality was positively associated with overall engagement with fact-checking posts, with or without covariates controlled. See Figure 5 for illustration and Supplemental Table S6 for model coefficients. For exploratory purposes, we also examined the association between emotionality and each of the nine engagement metrics separately. Emotionality positively predicted every engagement metric in both the adjusted and unadjusted models, with one exception: it did not significantly predict the “Love” reaction when covariates were not included (Supplemental Table S7). Overall, H1 was supported.

Regression coefficients and confidence intervals of emotionality in fact-checking posts on public engagement.

RQ1 asked about the relationship between emotionality and the public’s sentiment toward fact-checked targets. Results showed that emotionality was negatively associated with the public’s sentiment toward fact-checked targets, consistently across the unadjusted and adjusted models. See Figure 6 for illustration and Supplemental Table S8 for model coefficients.

Regression coefficients and confidence intervals of emotionality in fact-checking posts on public’s sentiment toward fact-checked targets, when all fact-checking information veracity categories are included (i.e., combined), and when it comes to fact-checked false, mixture, true, and unknown information.

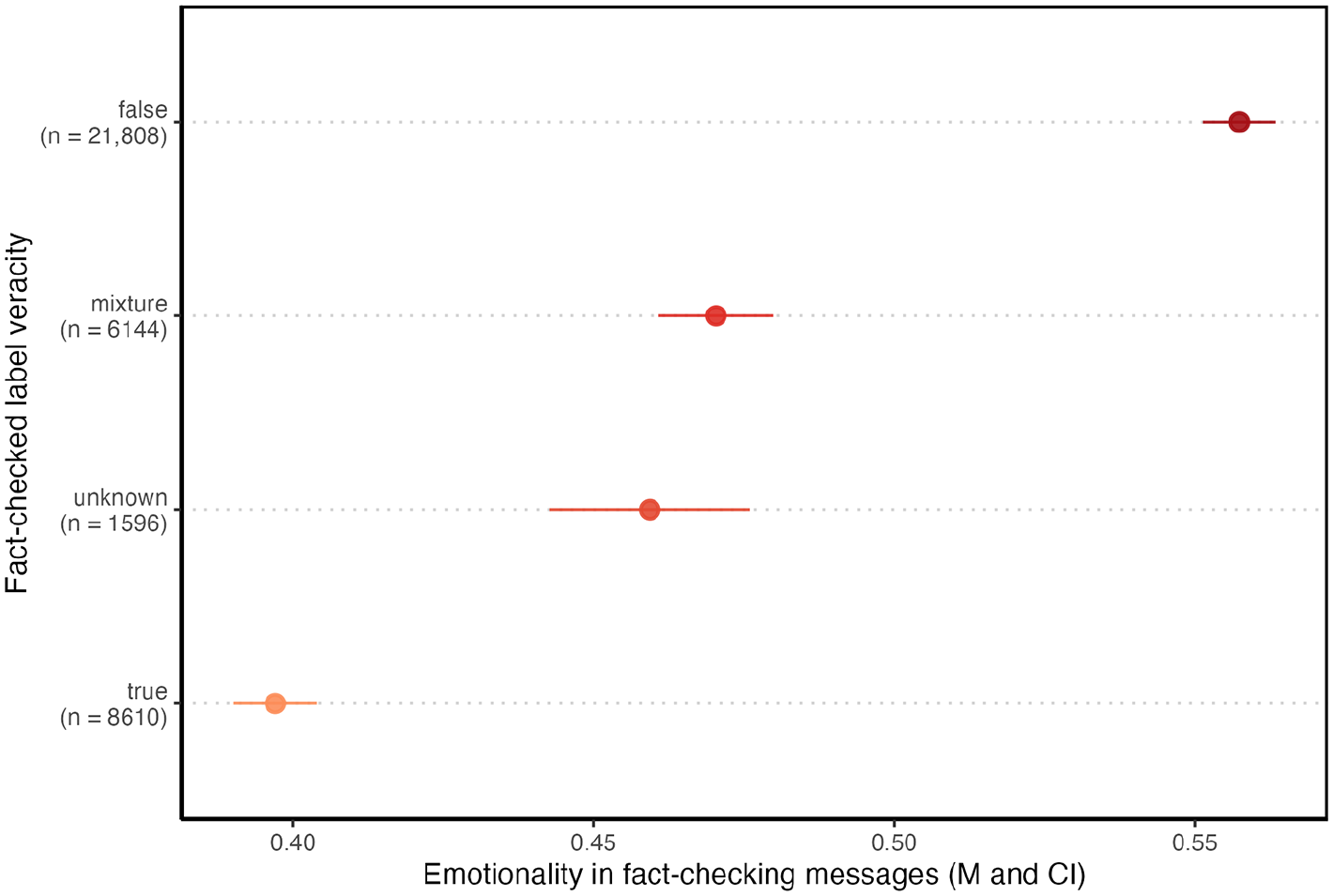

H2 hypothesized that emotionality varies across fact-checking information veracity, such that false information is covered with more emotionality than true information. See Figure 7 for illustration and Supplemental Table S9 for model coefficients. Linear regression models supported this hypothesis: Fact-checked true information had lower levels of emotionality (b = −0.22, SE = 0.01, 95% CI = [−0.24, −0.21], p < .001) than fact-checked false information, with or without covariates adjusted. Emotionality for false information (M = 0.55, SD = 0.46) was considerably higher than emotionality for true information (M = 0.40, SD = 0.33), mixture (M = 0.47, SD = 0.38), or unknown information (M = 0.46, SD = 0.33). H2 was supported.

Means and confidence intervals of emotionality in fact-checking posts across fact-checked information veracity.

Furthermore, we investigated how fact-checking information veracity moderated the relationships of emotionality with public engagement (Supplemental Table S10) and with public sentiment (Supplemental Table S11). The positive relationship between engagement and emotionality was significantly smaller for true and unknown information. Surprisingly, we found that emotionality was negatively associated with the public’s sentiment toward fact-checked targets for both fact-checked true and false information, while emotionality was positively associated with public sentiment only for unknown information. This result suggests that the use of emotional language in fact-checks may lower the public’s sentiment toward fact-checked true information. We further examined this relationship across different topics (Supplemental Figure S1) and found that emotionality was consistently negatively associated with public sentiment across four topics. Within the politics, entertainment, and science topics, however, this negative association held only for fact-checked false information. Additional sensitivity analyses using different annotation schemes of the label veracity showed consistent findings (see Supplemental Tables S12–S17 for details).

Discussion

The present study is motivated by the pressing debate concerning to what extent professional communicators like fact-checking organizations should use emotional language to engage the public while maintaining professionalism and cultivating an informed public. While related studies have examined the tactics and strategies for engaging the public on social media by journalists, public campaign organizers, and governmental officials, the present study extends this line of inquiry to the context of fact-checking organizations (H. S. Kim et al., 2022; Lee & Britt, 2023; X. Zhang & Fu, 2022). We conducted an observational study that examined how emotionality in fact-checking posts was associated with public engagement and public sentiments toward fact-checked targets from 2017 to 2022, using a comprehensive dataset of 49,270 fact-checking posts and associated comments on Facebook from 10 IFCN-signified fact-checking organizations in the United States. Our research design provides the most comprehensive fact-checking dataset to date, including both social media posts and comments over 5 years. Through theory-driven manual annotation and automated text analyses, we reveal the following empirical insights and elaborate on their theoretical and practical implications.

First, our results reveal a rising trend of using emotionality in fact-checking reporting. This finding corroborates the emotional turn of journalism (Wahl-Jorgensen, 2020), providing observational evidence on the softening of journalism on social media (Lamot, 2022). This finding provides intuitive evidence of the impact of social media metrics on reporting, which influences journalistic decisions not only on issue coverage (e.g., Mukerjee et al., 2023) but also on language use. Meanwhile, an unexpected finding is that editorial fact-checkers used more emotional language than independent fact-checkers. This contradicts prior assumptions that editorial fact-checkers uphold a higher level of journalistic standards (Humprecht, 2020), while independent fact-checkers with fewer resources are expected to adopt a more aggressive approach on social media (Dafonte-Gómez et al., 2022). One plausible explanation is that news organizations exhibit more partisan inclinations, and their media products, whether news stories or fact-checking reports, are partisan-laden. For example, Soo and colleagues (2023) found that the BBC, an established editorial fact-checker in the UK, focused more on political issues and produced fact-checking ratings and interpretations that aligned with mainstream political agendas. Such an effort could be reflected in emotional fact-checking posts with clear partisanship. In contrast, independent fact-checkers approach misinformation “beyond the political party’s political agenda” (Soo et al., 2023, p. 475), with more financial independence and editorial transparency (Humprecht, 2020). Independent fact-checkers, which are less institutionally established, may adhere more closely to traditional journalistic conventions to solidify their credibility among audiences. This adherence may stem from their business and professional contexts. As Mahl et al. (2024) highlight, independent fact-checkers often rely on diverse funding sources such as partnerships with digital platforms, consulting, or academia, which impose multiple constraints on their operations, including topic selection and language use. Conversely, established fact-checking operations within resource-rich news organizations benefit from greater institutional trust and stability than independent news workers (Lindner et al., 2015), allowing for greater flexibility, including the adoption of more emotional tones that may align with their editorial stances. For example, editorially aligned outlets may even openly endorse political candidates, which could foster a more expressive communication style. These factors illustrate the nuanced professional and economic realities that shape the observed patterns in fact-checker emotionality.

Second, we found that emotionality in fact-checking posts is positively associated with public engagement, consistently across nine Facebook engagement metrics. This finding is consistent with the framework of viral emotion (e.g., Berger, 2011; Nelson-Field et al., 2013; Stieglitz & Dang-Xuan, 2013). It can be explained by the heuristic-systematic model, in that people pay more attention to messages with higher and more arousing emotions, especially in the fast-paced social media environment. Specifically, among all nine engagement metrics, sharing shows one of the strongest positive associations with emotionality, which highlights the role of emotionality in activating individual behaviors such as information sharing (Berger & Milkman, 2012). With a more comprehensive dataset in terms of data volume, time span, and fact-checking organization variety, this study corroborates and expands a recent finding that emotionality in fact-checking Tweets was positively associated with the number of replies (Lee & Britt, 2023). Overall, emotionality as a fact-checking strategy is effective in increasing public reach, visibility, and engagement. Meanwhile, it is necessary to recognize that emotionality can draw attention to both factual information and misinformation within fact-checking posts. To address potential spillover effects stemming from repeated exposure to misinformation, fact-checking organizations should carefully balance the goal of increasing visibility against the risk of unintended consequences.

We observed a negative association between emotionality and the public’s sentiment toward fact-checked targets. Relying on entity-targeted sentiment analysis, we provide precise insights into how emotionality can systematically influence the public’s sentiment toward the fact-checked targets: higher emotionality was associated with more negative sentiments toward the target. Given that the majority of fact-checked claims are indeed false, this sentiment-dampening effect could be argued to be beneficial for persuasion against misinformation claims.

However, we observed the same negative association between emotionality and public sentiment when fact-checked claims turned out to be true. Although fact-checking organizations reveal the authenticity of fact-checked information, we observed more negative public sentiment toward fact-checked targets when higher emotionality was used in fact-checking posts. This finding suggests a potential spillover effect of emotionality in intensifying the public’s pre-existing negative evaluations of rumors that turn out to be true. This spillover effect might be explained by the expectancy violation theory (Burgoon, 2015). People often possess a cognitive bias to expect fact-checked claims to be false or at least suspicious (Park et al., 2021). When these claims are confirmed to be true, people experience an expectation violation and consequent negative emotions and sentiments, and they are motivated to elaborate on the message (Siegel & Burgoon, 2002). In this process, emotionality in fact-checking posts may intensify the expectation violation and dissonance, leading to a more general negative attitude.

Our study supports the negativity bias hypothesis, such that fact-checkers covered false information with more emotionality than factually true information on social media. In addition to the editorial agenda mentioned earlier, this finding reveals another important factor influencing fact-checking practices. It extends the previous finding that negative events are overreported in the news (Soroka & Carbone, 2022) by showing that negativity bias also exists in the language used in fact-checking practices. Although negativity bias is a cognitive bias inherent in human nature, this bias reflected in fact-checking practices may elicit a spillover effect for fact-checked true information. Taken together, these findings suggest that emotion-laden messages may add unnecessary complexity and undermine fact-checking’s credibility and effectiveness.

Our findings have implications for how fact-checking organizations communicate their results, particularly in the emotionally charged social media environment. While emotionality may enhance the salience of misinformation, our results suggest that its overuse could unintentionally intensify negativity toward fact-checked entities, even when the claims are true. This spillover effect underscores the need for a balanced approach in fact-checking communication. Drawing from Schudson’s (1998) notion of monitorial citizens—who are less likely to participate in conventional political activities yet critical of the political system and remain interested in politics and public life—we advocate for messaging strategies that engage the public without fostering excessive skepticism or distrust. A communication style overly driven by negativity bias, especially one influenced by engagement-driven algorithms, would harm public trust in both power elites and the institutions that monitor them. Thus, fact-checking organizations should consider the nuanced impacts of their emotional tone to sustain public confidence in truthful information and the broader fact-checking process.

Our findings can be understood in the broader political and journalistic environment of the fact-checking industry in recent years. In a recent interview study with fact-checking practitioners, Graves et al. (2023) found that the fact-checking industry is shifting from the public-informed model to the public health model; that is, fact-checkers should not only help the public distinguish truth from falsehood but also police the complex information environment, preventing the public from being exposed to misinformation. In such a process, the fact-checkers may “manage” the audience’s behaviors rather than simply “inform” the audience (Graves et al., 2023, p. 14). Furthermore, our study suggests that fact-checkers have become more proactive in the debunking process, as reflected by the usage of stronger emotional language. While such a practice raises the profile of fact-checking organizations and attracts public attention to combat misinformation, heightening the emotionality may elicit unintended effects and compromise fact-checking effectiveness, especially for fact-checked true information. Therefore, we call for more attention to this emotional turn driven by social media and larger political and economic pressures. The findings from this research may help design and implement more evidence-based fact-checking strategies for the public good.

Furthermore, the present study enriches an intellectual deliberation on the role of public engagement and publicity in the battle against misinformation. Our discussion on the activating role of emotionality in promoting public engagement is based on a controversial assumption: public visibility and engagement are desirable for fact-checking organizations as important players in the public communication sector (Lewis & Molyneux, 2018). Yet, this logic is questionable in the current digital public sphere, as it remains debatable whether fact-checkers should actualize the democratic role by deferring to the platform’s metrics that are controlled and (could be) manipulated by private companies (van Dijck et al., 2018). In addition, extensive exposure to fact-checking posts and repetition of misinformation may elicit potential spillover effects, increasing misbeliefs while decreasing general trust in the news industry and public institutions at large (van der Meer et al., 2023).

Limitations and Future Directions

This study is not without limitations. First, our operationalization of public engagement as engagement metrics on Facebook is not comprehensive and is limited within the social media sphere. Public engagement is a broader term in journalism, comprising both reception-oriented and production-oriented audience engagement (Nelson, 2021). However, social media metrics capture reception-oriented engagement only, while production-oriented public engagement with fact-checking and journalism is not considered. With the rise of grassroots fact-checking and citizen journalism (Chang et al., 2021; Zeng et al., 2019), future studies may incorporate citizen fact-checking productions as an important element of public engagement.

Second, we use entity-targeted sentiment analysis to narrow down and approximate the public’s sentiment toward fact-checked targets using comments attached to the posts. While this tool has been used in previous research (e.g., Ding et al., 2018), the calculated sentiment score does not capture the full attitudinal space. Besides, while this approach provides an accurate estimate of public opinion through entity-targeted sentiment analysis, it is still limited in its ability to capture contextual meanings and sentiment-irrelevant public opinions. Despite challenges in identifying fact-checking targets with ambiguous references in comments, it still represents an automated, accurate approach to capturing public opinion. Based on the study’s findings and methodological limitations, future research should employ mixed-method approaches such as surveys, experiments, and qualitative approaches to deepen our understanding of audiences’ complex reactions and processing of emotional markers in fact-checking messages on social media. Human annotation of more comprehensive samples of comments (e.g., using the Meta Content Library API) can also be implemented to enhance the validity and robustness of future research.

The third limitation of this study lies in the imbalanced data distribution. The substantial difference in the number of fact-checking posts across editorial (e.g., Reuters) and independent (e.g., Snopes) fact-checking organizations may constrain the generalizability of the study’s findings. While such disparity reflects real-world distribution, the study’s findings are predominantly influenced by data from independent fact-checking organizations, such as Snopes.com. Besides, the comparison across veracity labels could be constrained by the imbalanced distribution of false and true labels. Future research could build on this study by incorporating a more balanced and comprehensive dataset to provide a broader and more representative overview of engagement patterns across organizations or fact-checking labels.

Finally, this study is an observational analysis of social media data, providing correlational evidence on the role of emotionality in fact-checking posts. Future research may provide more causal evidence on the effects of emotionality in the fact-checking context with experiments.

Conclusion

Our study on fact-checking posts and comments on Facebook from 2017 to 2022 revealed significant associations between emotionality, public engagement, and public sentiment, as well as the moderating influence of fact-checked label veracity. These findings suggest that using emotionality to increase public engagement may compromise the original function of fact-checking and its effectiveness. As we observe the increasing emotional turn in social media news and fact-checking reporting, the findings call for more attention from both academia and the industry to critically assess both the foreseeable and unintended effects of fact-checking on individuals and society at large. More research and practical efforts are needed to better illuminate the roles of social media fact-checking in influencing public knowledge and opinion and to inform more effective designs of fact-checking practices and policies in an increasingly complex information ecosystem.

Supplemental Material

sj-docx-1-sms-10.1177_20563051251318172 – Supplemental material for Facts or Feelings? Leveraging Emotionality as a Fact-Checking Strategy on Social Media in the United States

Supplemental material, sj-docx-1-sms-10.1177_20563051251318172 for Facts or Feelings? Leveraging Emotionality as a Fact-Checking Strategy on Social Media in the United States by Haoning Xue, Jingwen Zhang and Xinzhi Zhang in Social Media + Society

Footnotes

Data Availability Statement

The data underlying this article will be shared on request to the corresponding author.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Supplemental Material

Supplemental material for this article is available online.
