Abstract

The stigmatized nature of nonsuicidal self-injury may render TikTok, a short-form, video-sharing social media platform, appealing to individuals who engage in this behavior. Because this community faces biased scrutiny rooted in the stigmatization of mental health, individuals who engage in nonsuicidal self-injury may turn to TikTok, which offers a space to discuss nonsuicidal self-injury, exchange social support, experience validation with little fear of stigmatization, and share harm reduction strategies. While TikTok’s Community Guidelines permit users to share personal experiences with mental health topics, TikTok explicitly bans content that shows, promotes, or shares plans for self-harm. As such, TikTok may moderate user-generated content, leading to exclusion and marginalization in this digital space. Through semi-structured interviews with 8 TikTok users and a content analysis of 150 TikTok videos, we explore how users with a history of nonsuicidal self-injury experience TikTok’s algorithm to engage with content on nonsuicidal self-injury. Findings demonstrate that users understand how to circumnavigate TikTok’s algorithm through hashtags, signaling, and algospeak to maintain visibility while evading algorithmic detection on the platform. Furthermore, findings emphasize that users actively engage in self-surveillance, self-censorship, and self-policing to create a safe online community of care. Content moderation, however, can ultimately hinder progress toward the destigmatization of nonsuicidal self-injury.

TikTok is a short-form, video-sharing social media platform designed for users to interact with and through user-generated content. TikTok’s recommendation algorithm curates a collection of videos for an individual user’s “For You Page” through user engagements with videos, including lingering rates, likes, comments, and shares (TikTok, 2019). By constantly learning users’ content preferences, TikTok’s recommendation algorithm becomes visible to users through customized content feeds. In doing so, TikTok blurs the distinction between consumers and producers, meaning users of the social media platform are equally producers and users of content who observe and experience the algorithm (Klug et al., 2021; Omar & Dequan, 2020).

While TikTok’s recommendation algorithm increases the discoverability of user-generated content, it strips users of a level of control over what they may view on their “For You Page” (Simpson & Semaan, 2021). Studies have shown that TikTok may restrict access to content, block users through the practice of reduction (Gillespie, 2022), and suppress marginalized populations by quelling content from creators who identify as LGBTQIA+ (Simpson & Semaan, 2021), Black (Brown, 2021), and disabled (Botella, 2019) as an anti-cyberbullying measure (MacKinnon et al., 2021), leading to algorithmic exclusion in this digital space. Algorithmic exclusion describes how algorithms adversely impact populations who do not engage in participatory norms (Simpson & Semaan, 2021), suggesting that marginalized populations will always need to navigate barriers to access and engage with information on any social media platform, with TikTok merely the latest iteration.

One population that may experience algorithmic exclusion on TikTok is individuals who engage in nonsuicidal self-injury (NSSI), who commonly turn to social media platforms as a response to stigma in their offline environment (Seko & Lewis, 2018). NSSI is the act of intentionally injuring one’s body tissue through cutting, scratching, burning, or bruising for nonsuicidal purposes (Klonsky et al., 2014). Social media platforms like TikTok have long afforded individuals who engage in NSSI a refuge to engage in discussions of NSSI, exchange social support, experience validation with little fear of stigmatization, and facilitate harm reduction (Dyson et al., 2016). However, like any algorithm mediating a social media platform, TikTok’s algorithm includes biases and reinforces social norms and stereotypes, including social contagion claims.

Social contagion suggests that exposure to NSSI content on social media can cause users to engage in self-injurious behaviors. While previous literature has suggested that social contagion may increase the risk of initial engagement in NSSI (Jarvi et al., 2013), social contagion may not be unidirectional, as the relationship between social media and NSSI is influenced by both the exposure to online NSSI content and users’ pre-existing behaviors (Lavis & Winter, 2020; Sedgwick et al., 2019). Consequently, it is essential to contextualize users’ engagements with NSSI content on social media, which may be rooted in offline experiences. Contextualizing users’ online engagements may then instead point to the relevance of Joiner’s (2003) assortative relating model, which posits that users who possess similar qualities or experiences, including NSSI, are more likely to form relationships. Even so, TikTok may moderate user-generated NSSI content based on a social contagion model, similar to other platforms such as Instagram and Facebook, which explicitly ban content that depicts NSSI (Smith & Cipolli, 2022). According to TikTok’s Community Guidelines, the platform does not allow physical acts, promotion, or expression of plans to engage in NSSI, including any challenges, dares, games, hoaxes, and pacts related to participating in NSSI. While the platform states that users are permitted to share messages and stories of lived experiences in overcoming NSSI struggles and urges (e.g., instilling hope and sharing recovery stories), as well as prevention strategies (e.g., warning signs and where to seek help) (Thorn et al., 2023; TikTok, 2023a), binarizing NSSI content into either helpful or harmful based on perceived outcomes can venture into deficit framing, as NSSI content is highly contextual. In doing so, TikTok undermines the validity of individuals’ experiences with NSSI, further reifying deficit-based notions that view these individuals as failed bodies.

Literature Review

NSSI and Social Media Platforms

Research has long documented adolescents and young adults as the most at-risk population for NSSI (Klonsky et al., 2014; Seko & Lewis, 2018), while current research has reported elevated risks among LGBTQIA+ youth (Seko et al., 2015). While there are multiple, simultaneous, and evolving reasons behind engaging in NSSI, the behavior is primarily considered a personal coping mechanism used to regulate unwanted affective experiences (Edmondson et al., 2016). Self-injurious behavior is highly stigmatized (Seko et al., 2015). Studies have suggested that individuals engaging in NSSI are reluctant to seek help from formal services or health professionals and instead commonly turn to informal networks consisting of peers (Fortune et al., 2008). The sensitive and stigmatized nature of NSSI may thus render social media and the formation of online “interest-driven communities” (boyd & Ellison, 2007) appealing to individuals who engage in the behavior, particularly as social media platforms offer the potential for anonymity, assurance, support, and the creation of communities of users who are coping with similar experiences (Alvarez, 2021; Guccini & McKinley, 2022; Michelmore & Hindley, 2012).

Information created and shared within these online communities often serves as a means to connect with other users and exchange social support. Social media, therefore, can be considered an atmosphere that channels different affects because it allows people to convene on topics that may otherwise be silenced offline. Affect is a subconscious influence that molds an individual’s experience between and among others through media (Hynnä et al., 2019). Understanding the power of existing in a spatialized atmosphere (e.g., TikTok), whereby users venture into an online environment and come across other users, is a precursor to understanding how social media is used in mental health care (McCosker, 2018; Tucker & Goodings, 2017), as seen on platforms like Elefriends (based in the United Kingdom) and beyondblue (based in Australia). Although it takes new users time to acclimate to the online community, as in any other atmosphere, Elefriends was designed to provide an open forum for users to feel supported and validated (Tucker & Goodings, 2017). Similarly, beyondblue establishes peer mentors as mental health influencers to provide empathetic non-professional care, reframe recovery strategies, and generate rapport among users. These two online communities provide evidence of the benefits of a digital atmosphere for users struggling with similar mental health issues in providing a community of care, social support, and peer advocacy (McCosker, 2018).

Previous studies have examined NSSI communities and the content they produce and reproduce across various social media platforms, often framing NSSI content into potential benefits and harms (Dyson et al., 2016; Lewis & Seko, 2016). Researchers exploring the benefits of NSSI communities have suggested that information created and shared within these communities commonly focuses on disclosing experiences of NSSI (Guccini & McKinley, 2022), sharing stories of recovery (Seko & Lewis, 2018), and seeking and providing emotional support (Lavis & Winter, 2020). Alternatively, researchers have positioned the potential harms of online NSSI communities through digital self-harm. Digital self-harm conceptualizes the online communication and activity that leads to, supports, or exacerbates nonsuicidal but intentional harm (Pater & Mynatt, 2017). Social media platforms, such as Instagram and Tumblr, have been cited in the literature as mediums that may exacerbate digital self-harm by influencing viewers to partake in NSSI. For example, users may normalize acts of NSSI to cope with mental health issues or describe the best instruments to use (Pater & Mynatt, 2017). Users may not intend to harm their viewers directly by creating NSSI content. Instead, these users may flock to social media for the betterment of the community to share recovery stories, raise awareness, or feel a connection with like-minded individuals.

While some users create content that pathologizes NSSI (Pater & Mynatt, 2017), others may post content about harm reduction strategies (Preston & West, 2022; Woolley, 2015). Harm reduction is a public health intervention that ensures all persons are given safer opportunities to engage in high-risk behaviors. Many harm reduction programs already exist, such as free syringe service programs to allow injection drug users access to clean needles to reduce the spread of blood-borne pathogens (Substance Abuse and Mental Health Services Administration, 2022), fentanyl testing strips to check for the presence of fentanyl in a substance before ingestion (Centers for Disease Control and Prevention, 2022), or naloxone kits to revive users who may have overdosed (Patel, 2020). These strategies are no different from wearing a seatbelt in the car or wearing a helmet while riding a bike. Within NSSI communities, harm reduction strategies include sterilizing the skin and instruments, dressing wounds appropriately, and making surface-level cuts rather than deep cuts (Woolley, 2015). Harm reduction strategies can reduce stigma, increase education and awareness, connect users to support groups and medical services, and allow NSSI to be practiced safely (Schroeder & Zapata-Alma, n.d.). Rather than treating NSSI as a moral failing and censoring NSSI content on social media, platforms should turn to a harm reduction approach to acknowledge these users’ feelings and reduce biases associated with NSSI (Coulson & Hartman, 2022). Harm reduction strategies do not encourage people to engage in these behaviors more but rather recognize that people who are committed to engaging in certain behaviors will still participate regardless of the presence of safety measures (Coulson & Hartman, 2022). Differentiating between users’ intent behind NSSI content is paramount to creating safer online platforms and reducing stigmatization among NSSI communities (Pater & Mynatt, 2017).

Stigma of NSSI

To understand why some identities and behaviors are stigmatized, we must first understand the structural preconditions of stigma. Perceived characteristics that are considered “not normal” according to socially constructed norms are deemed shameful or stigmatized, such as skin color, mental illness, physical disability, prostitution, sexual orientation, or drug addiction (Goffman, 1986). The creation of stigma stems from four converging factors. First, stigma can be considered a marker to label members of a society that deviate from established norms. Second, these labels link certain social demographics, deformities, illnesses, or behaviors to feelings of prejudice toward individuals possessing traits that are considered abnormal within a society. Third, this prejudice leads to the creation of a stigmatized segment of society, fabricating an “us-versus-them” mentality. Fourth, the stigmatized nature surrounding those labeled as “them” leads to disadvantage and discrimination (Lucas & Phelan, 2012). In essence, stigma is about control of power in which one group successfully holds power over another group via devaluation, dehumanization, scapegoating, or oppression, showcasing the social inequality created by power differentials (Tyler, 2020). Thus, stigma ties together the psychology of stigma (e.g., effect on members of a society) to the sociology of stigma (e.g., the political state of society) that perpetuates the labeling, stereotyping, separating, and discriminating against others (Link & Phelan, 2014). Through stigmatization, people in power create three outcomes in society: keeping people down, keeping people in, and keeping people away. These three motivations are the foundation of the stigma we see today on how people should physically look and act (Link & Phelan, 2014).

With the history of stigma attached to mental illness, NSSI communities continue to face scrutiny (Burke et al., 2019). In response, these communities have established their own collective norms of information practices, which are determined by the social context in which the community is situated. Users who engage in NSSI must be discreet about seeking, sharing, creating, and using NSSI content in online spaces. Since content moderators and moderation algorithms are known to remove, flag, or discriminate against stigmatized individuals and content (Haimson et al., 2021), users in NSSI communities must avoid algorithmic detection by using informed strategies to maintain visibility online (Lingel & boyd, 2013).

Content Moderation of NSSI on TikTok

TikTok’s Algorithm as We Understand It

There are limited studies focused on analyzing TikTok’s “For You Page” algorithm (Bandy & Diakopoulos, 2020; Klug et al., 2021; Simpson & Semaan, 2021). Unlike other social media platforms, TikTok does not generate content based on the accounts a user follows. Instead, TikTok’s recommendation algorithm curates the content on an individual user’s “For You Page.” The “For You Page” is personalized for each user and is shaped using a combination of factors, including user interactions on the platform, video information, and account settings. These factors are processed and weighted by the recommendation algorithm to determine the likelihood of a user’s interest in a particular type of content and then ranked and delivered to a user’s “For You Page” (TikTok, 2019). The algorithm uses natural language processing to classify text and audio elements in videos and computer vision technology to locate and categorize visual objects. In combination with hashtags and captions, this information is used to determine whether a video is recommended to other users. Initially, new videos are shown to a small group of users to gauge their interactions, and if they respond favorably, the video is recommended to a larger audience (Klug et al., 2021).
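The weighting-and-ranking step described above can be illustrated with a toy sketch. It is not TikTok's implementation, which is not public: the signal names, the weights, and the helper functions `interest_score` and `rank_for_you` are all hypothetical, chosen only to show how weighted engagement signals could be combined into a single score and used to order candidate videos.

```python
def interest_score(signals, weights):
    """Combine per-video engagement signals into one weighted score.

    Both arguments map hypothetical signal names to numbers; a signal
    missing from `signals` counts as 0.
    """
    return sum(weights[name] * signals.get(name, 0.0) for name in weights)


def rank_for_you(candidates, weights):
    """Return candidate video IDs ordered from highest to lowest score."""
    return sorted(
        candidates,
        key=lambda video_id: interest_score(candidates[video_id], weights),
        reverse=True,
    )


# Hypothetical weights and signals, for illustration only.
weights = {"watch_time": 0.6, "liked": 0.4}
candidates = {
    "video_a": {"watch_time": 0.2, "liked": 1.0},
    "video_b": {"watch_time": 0.9},
}
ranking = rank_for_you(candidates, weights)
```

In this toy example, a video watched almost to the end but not liked outranks one that was liked but quickly skipped, which mirrors participants' later accounts of lingering time shaping their feeds.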

Recent legislative developments, including the Digital Services Act (DSA) in Europe, introduce a new dimension to our understanding of TikTok’s recommendation algorithm. The DSA enables European users to turn off personalization so that their “For You Page” instead recommends a mixture of locally relevant and globally popular videos rather than content based on personal interests (Moncrieff, 2023). This significant shift in user control over content recommendations is intended to provide users with additional tools and greater choice over their experience on digital platforms like TikTok, including enhanced transparency of platforms’ approaches to areas such as recommendation systems and content moderation. The DSA’s impact on the moderation and recommendation of NSSI content on TikTok is nuanced. While the DSA requires large online platforms like TikTok to manage the systemic risk of disseminating harmful content, allowing users to disable personalization may make it more difficult for TikTok’s moderation algorithm to use individualized data to filter out potentially harmful content. However, the DSA also offers greater transparency in content moderation and options to appeal content moderation decisions. The DSA’s focus on transparency and accessible dispute mechanisms not only enhances users’ abilities to understand and refute content moderation decisions but also holds platforms like TikTok accountable for their practices (Moncrieff, 2023).

Content Moderation and TikTok’s Community Guidelines

Developed by the China-based company ByteDance, TikTok has become the largest social media platform for adolescents and young adults in the United States today (Anderson et al., 2023). With about 60% of its users born after 1996, safety and privacy concerns have grown (Franqueira et al., 2022), particularly surrounding minors (De Leyn et al., 2022). To address these concerns, TikTok has begun producing quarterly reports on videos that have been removed from its platform. In 2022, TikTok reported minor safety as the largest category of Community Guidelines violations, with suicide, self-harm, and disordered eating accounting for approximately 2.8% of violations. Of these violations, suicide and self-harm accounted for 96.9% of content removal, with disordered eating accounting for the remaining 3.1% (TikTok, 2023b).

Content moderation is the “organized practice of screening user-generated content posted to Internet sites, social media, and other online outlets” (Roberts, 2014, p. 12). TikTok employs a commercial approach to moderation, wherein moderation is applied platform-wide to users universally. TikTok uses a combination of automated and manual moderation to enforce its Community Guidelines. Videos uploaded to TikTok are first screened by automated systems to check for potential violations of Community Guidelines by analyzing elements such as text, images, and audio. According to TikTok (2024), if a clear violation is detected, the content is automatically removed. If additional review is necessary, the content is sent to human moderators. Some content may be temporarily restricted until human moderators can review it. Human moderators review content that has been detected by the automated moderation system, as well as content flagged by users. Moderators assess whether the reported content violates TikTok’s Community Guidelines and recommend further actions, such as removing or restricting access to the content.
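The two-stage flow described above (automated screening first, human review where the automated result is not clear-cut) can be sketched as a simple triage function. This is a minimal illustration under stated assumptions: the numeric `violation_score`, both thresholds, and the `Decision` labels are hypothetical, since TikTok does not publish how its automated systems decide what counts as a clear violation.

```python
from enum import Enum


class Decision(Enum):
    PUBLISH = "publish"
    HUMAN_REVIEW = "human_review"
    REMOVE = "remove"


def triage(violation_score, clear_threshold=0.9, review_threshold=0.5):
    """Route one video based on a hypothetical automated violation score.

    Scores at or above `clear_threshold` are treated as clear violations
    and removed automatically; scores between the two thresholds are sent
    to human moderators; everything else is published.
    """
    if not 0.0 <= violation_score <= 1.0:
        raise ValueError("violation_score must be between 0 and 1")
    if violation_score >= clear_threshold:
        return Decision.REMOVE
    if violation_score >= review_threshold:
        return Decision.HUMAN_REVIEW
    return Decision.PUBLISH
```

The sketch makes one design consequence visible: any fixed threshold collapses contextual content into a small set of outcomes, which is the binary representation that later sections of this article problematize.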

Social media users remain critical to the functioning of content moderation (Crawford & Gillespie, 2016), often due to the black-boxed nature of moderation algorithms (West, 2018). Platforms like TikTok primarily rely on black-box machine learning mechanisms to predict, classify, and filter content, which may introduce errors (Zeng & Kaye, 2022), as these automated content detection tools focus on a binary representation that is not precise enough to understand the nuances of user-generated content (Scott et al., 2023). The often-broad nature of community guidelines, coupled with a lack of transparency, may cause users who experience content moderation to express uncertainty, confusion, or frustration about the process (Ma et al., 2023; West, 2018).

TikTok’s Community Guidelines include policies on NSSI content and specify that the company moderates content that shows, promotes, or shares plans for self-harm (TikTok, 2023a). While TikTok’s Community Guidelines specify that the platform permits messages of hope and stories of personal experiences overcoming NSSI urges, TikTok still restricts access to NSSI content, including referring users to suicide hotline numbers when they search for NSSI-related content (such as searching #selfharm in the Discover feature) due to falsely conflating NSSI with suicidal ideation and spurious connections of NSSI to discredited social contagion claims (Lavis & Winter, 2020; Sedgwick et al., 2019). While risks of trauma or re-traumatization must be considered in content moderation policies and practices, it is imperative that social media platforms have more nuanced understandings of content type and intention as the effect social media content has on a user is dependent on the context, goal, and set interactions between a user and a system (Karim et al., 2020; Matz & Germanakos, 2016). Considering these contexts, other platforms such as Instagram have integrated a Sensitive Content Control, which allows users to control how much content they see that is considered sensitive or potentially triggering as opposed to moderating the content completely (Instagram, 2022).

TikTok’s overarching definition of NSSI may remain unclear to TikTok users, particularly when users violate these policies on the platform. When creating or engaging with NSSI content, users may experience visibility moderation, the process through which TikTok’s platform manipulates the reach of user-generated content through algorithmic or regulatory means (Zeng & Kaye, 2022). Visibility moderation practices, such as reduction (i.e., reducing the visibility or reach of problematic content) (Gillespie, 2022), can contribute to the marginalization and exclusion of NSSI communities in this digital space. In this sense, TikTok exercises power through the “threat of invisibility” (Bucher, 2012, p. 1171), wherein users must adjust their behaviors on the platform to “play by the rules” to maintain visibility.

Because TikTok’s algorithm is an invisible mechanism that affects what users can see and share, we do not fully understand how users who engage in NSSI portray and discover NSSI content on TikTok. This lack of understanding may lead users who engage in NSSI to feel oppressed and marginalized, similar to users within other stigmatized communities (Karizat et al., 2021). Our study responds to this research gap by exploring how users embrace alternate forms of value and authority as contextually informed responses to marginalization on the social media platform TikTok. Specifically, we investigate how TikTok users experience the algorithm to engage with content on NSSI. We address the following research question: How do users circumnavigate algorithmic barriers to seek, share, use, and create nonsuicidal self-injury content on TikTok?

Method

Interviews

This work focuses on how users experience TikTok’s recommendation algorithm to engage with NSSI content. This study received approval from the University of South Carolina’s Institutional Review Board (IRB) (Pro00112972). Prior to beginning each interview, we sought verbal consent to audio-record the interview; all participants consented and agreed to continued contact with us to receive copies of their interview transcripts for member-checking. Per IRB protocol, participants were also provided contact information for national mental health services and resources immediately following each interview.

Recruitment

We created a TikTok account for recruitment purposes. We posted a 1-min-long recruitment video directly soliciting responses on TikTok, in which we introduced ourselves and the study’s goals, similar to other studies recruiting TikTok users (Simpson & Semaan, 2021). In the video, we directed anyone interested in participating to a link to the pre-screening questionnaire, which contained additional information about the study. The video was tagged with popular NSSI hashtags identified in a previous study of NSSI content on TikTok (Lookingbill, 2022) to ensure the recruitment video would gain visibility. We shared the recruitment video on our personal TikTok, Twitter, Instagram, and Reddit accounts to broaden the scope of our recruitment. Two weeks after the initial posting, we reshared the recruitment video on each respective account and posted physical flyers around the University of South Carolina campus.

Pre-Screening Questionnaire

The pre-screening questionnaire asked respondents for basic demographic information, their country of origin, their average time spent on TikTok in a week, and whether they currently engage in or have ever engaged in NSSI. Respondents did not meet our study’s minimum eligibility criteria if they reported being located outside of the United States, spending less than 2 hr a week on TikTok, being younger than 18, or having no history of NSSI. We received 25 responses to the pre-screening questionnaire, of which 11 respondents did not meet our study’s minimum eligibility criteria. We scheduled interviews with the remaining 14 respondents and, following attrition, conducted eight interviews.
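Read as a screening rule, the eligibility criteria above amount to a conjunction of four conditions. The sketch below assumes that failing any single criterion was enough to exclude a respondent; the `Response` record and its field names are hypothetical and do not reflect the actual questionnaire items.

```python
from dataclasses import dataclass


@dataclass
class Response:
    """One pre-screening response (field names are hypothetical)."""
    in_united_states: bool
    weekly_tiktok_hours: float
    age: int
    history_of_nssi: bool


def is_eligible(response):
    """Return True only if every minimum eligibility criterion is met."""
    return (
        response.in_united_states
        and response.weekly_tiktok_hours >= 2
        and response.age >= 18
        and response.history_of_nssi
    )
```

For example, a respondent in the United States who is 30 years old and has a history of NSSI but spends 1.5 hr a week on TikTok would be screened out under this reading.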

Data Collection

We conducted eight semi-structured interviews over Zoom between 21 October and 11 November 2022. Participation was voluntary, and participants received US$25.00 as compensation for their time. Our interview protocol guided participants through a series of questions related to their perception of TikTok’s algorithm, their experiences and engagements with NSSI content on TikTok, and how they navigated the platform to seek and create NSSI content. In addition, we asked participants probing questions regarding any obstacles they faced when accessing or creating content. With participant consent, the interviews were audio-recorded and transcribed for analysis. We engaged in member-checking by sending participants their transcripts and fieldnotes to request the removal of potentially identifying contextual information and comment on how well the transcripts reflected their lived experiences.

Data Analysis

All interviews were audio-recorded, transcribed verbatim, and coded using NVivo 12 qualitative coding software. We conducted open coding at weekly meetings to discuss initial codes in-depth. This process included refining and solidifying codes and connections between the codes throughout the process. Data analysis also followed an emic/etic approach, in which emergent inductive codes were generated from the semi-structured interview transcripts and matched to higher-level etic codes deductively applied from prior theoretical and conceptual work (Guba & Lincoln, 1994; Miles et al., 2019). These codes were then merged into larger thematic categories. Our analysis resulted in three high-level categories aligned with the research question: (1) information practices, (2) user perceptions of TikTok’s algorithm, and (3) portrayal of NSSI content on TikTok. We organized 20 codes under these categories: 11 for information practices, 4 for user perceptions of TikTok’s algorithm, and 5 for portrayal of NSSI content on TikTok.

Qualitative Content Analysis

Data Collection

We identified and collected posts tagged with three popular NSSI hashtags (i.e., #sh, #shawareness, and #scars) identified in a previous study of NSSI content on TikTok (Lookingbill, 2022) using TikTok’s Discover feature. We downloaded the first-appearing 50 videos under each hashtag for a total of 150 videos, which is comparable to sample sizes of previous studies conducted on TikTok (Fraticelli et al., 2021; Herrick et al., 2021; Kong et al., 2021). All videos were publicly accessible. However, this article includes no identifying information, such as screenshots and usernames, to protect users’ privacy. Following data collection, we assigned each video a unique ID and recorded the URL, a description of the video (i.e., textual, visual, and auditory elements), and caption in an Excel spreadsheet. We then imported the Excel spreadsheet into NVivo 12 for analysis.

Data Analysis

After gathering the 150 TikTok videos, we employed a deductive approach to qualitative content analysis by applying codes generated from participant interviews. The first set of 50 videos included the hashtag #scars; both authors independently coded these videos and reached substantial intercoder reliability, as measured by Cohen’s kappa (κ = .74) (McHugh, 2012). Next, we divided the remainder of the data, wherein the first author coded the 50 videos with the hashtag #sh while the second author coded the remaining 50 videos with the hashtag #shawareness. After coding was complete, we reconvened for weekly meetings and discussed the codes that emerged and whether any codes needed adjustment. Building on the interview codebook described above, we refined existing codes and added four codes to better capture the themes found in each TikTok video: one for information practices and three for the portrayal of NSSI on TikTok.
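For readers unfamiliar with the statistic, Cohen's kappa compares the agreement two coders actually reached with the agreement expected by chance: κ = (p_o − p_e) / (1 − p_e), where p_o is the observed proportion of agreement and p_e is the chance agreement implied by each coder's label frequencies. The sketch below is a generic illustration, not the procedure run in NVivo; it assumes each coder assigns exactly one label per video, and the example labels are invented rather than drawn from the study data.

```python
from collections import Counter


def cohens_kappa(coder_a, coder_b):
    """Cohen's kappa for two coders who each assign one label per item."""
    if len(coder_a) != len(coder_b) or not coder_a:
        raise ValueError("both coders must label the same non-empty set of items")
    n = len(coder_a)
    # Observed agreement: share of items given the same label by both coders.
    p_observed = sum(a == b for a, b in zip(coder_a, coder_b)) / n
    # Chance agreement: sum over labels of the product of marginal proportions.
    counts_a, counts_b = Counter(coder_a), Counter(coder_b)
    p_expected = sum(counts_a[label] * counts_b[label] for label in counts_a) / (n * n)
    if p_expected == 1.0:
        return 1.0  # both coders used one identical label for every item
    return (p_observed - p_expected) / (1 - p_expected)


# Invented labels for four videos, for illustration only.
first_coder = ["recovery", "recovery", "support", "support"]
second_coder = ["recovery", "recovery", "support", "recovery"]
kappa = cohens_kappa(first_coder, second_coder)
```

Here the coders agree on three of four videos (p_o = .75) while chance agreement is .50, giving κ = .50, which shows why kappa is typically lower than raw percent agreement.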

Positionality Statement

Care was taken in this study to ensure our positionality would not impact the research. Some identities represented in our research team included White and Vietnamese-American cisgender women. In addition, the first author has previous experience with engaging in NSSI, thus making her relationship with the NSSI community close. Both members were familiar with TikTok. While personal relationships informed the interviews and data analysis, we included memos and debriefing meetings to interrogate biases, presumptions, and positionality throughout the research process.

Findings

After analyzing our data from the interview transcripts and content analysis, we found three major themes from the codebook that informed users’ strategies for evading content moderation: (1) user perceptions of TikTok’s “For You Page” algorithm, (2) algospeak, and (3) signaling and self-policing. We illustrate these findings with data from our interview participants and qualitative content analysis of the creators in conjunction with a discussion of these themes.

Participants

Interview Participants

Of our eight interview participants, four self-identified as Black, three self-identified as White, and one self-identified as Aboriginal. Most participants identified as female. Participants were prompted to input a pseudonym on the pre-screening questionnaire. Please refer to Table 1 for a detailed demographics breakdown.

Interview Participant Demographics.

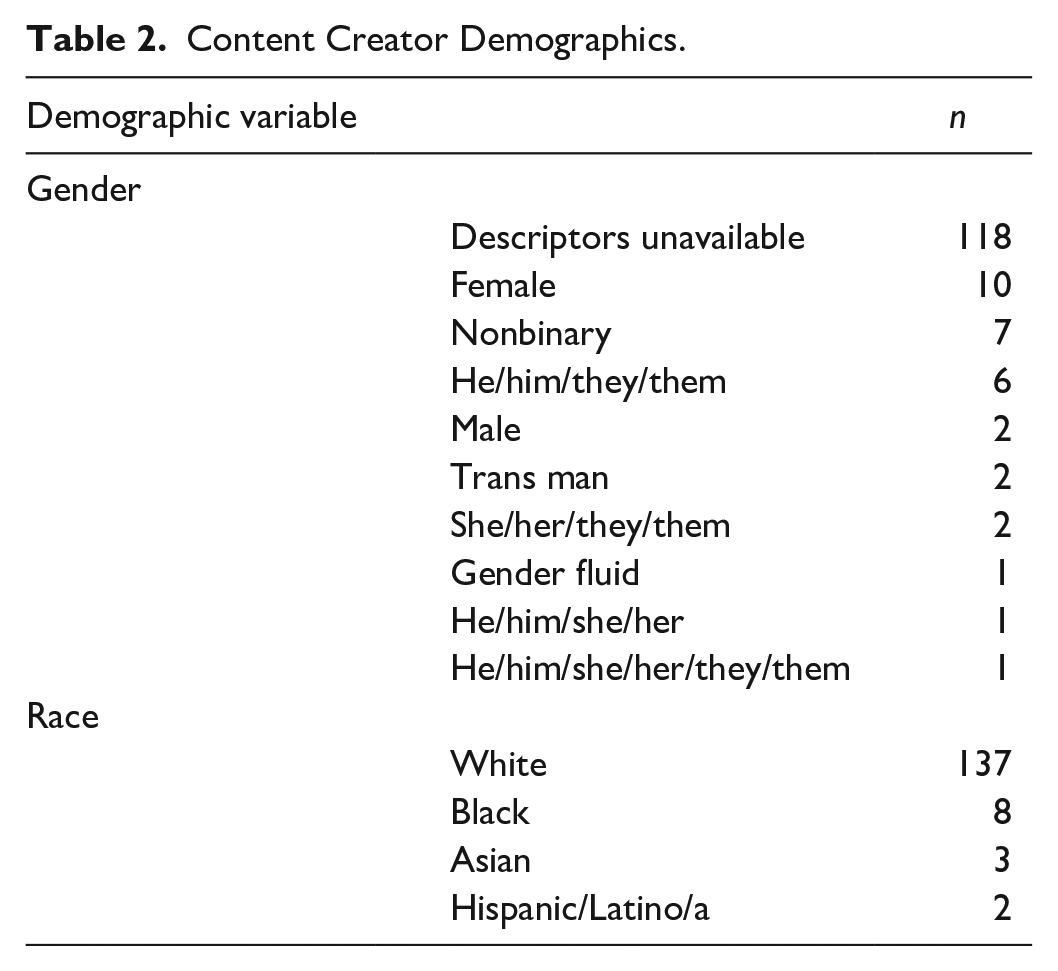

Qualitative Content Analysis Content Creators

As we did not engage with content creators directly, we could not assuredly capture creators’ gender and racial identities. When describing creators’ gender identities, we used relevant descriptors present in creators’ profiles, including pronouns. If descriptors were unavailable, we made presumptions about race and defaulted to gender-neutral pronouns. However, we recognize that these labels may not accurately reflect creators’ identities, especially as these identities are, to an extent, socially constructed (Table 2).

Content Creator Demographics.

Strategies for Content Moderation Evasion

As social media platforms become more ubiquitous in our everyday lives, it is vital to understand how algorithms shape what users can consume and create online. In this section, we discuss how TikTok’s algorithm plays a significant role in dichotomizing content as “safe” and “unsafe” and explain how this division creates barriers for users in the NSSI community who may be seeking social connection. In response to our research question, we further discuss how users perceive TikTok’s algorithm and how the algorithm impacts NSSI content on the platform.

Interview participants expressed concerns with TikTok’s Community Guidelines and described how the platform should view NSSI content as a source of communal support and a form of coping. Participants then detailed strategies to circumnavigate algorithmic barriers to engage with NSSI content on TikTok, indicating that TikTok content creators draw on their folk theories to circumvent platform guidelines without explicitly violating them. Folk theories refer to the “informal, socially-constructed, affect-influenced conceptions of how a platform works” (DeVito, 2022, p. 2). Folk theorization shapes user expectations of how a platform operates (DeVito et al., 2017), driving users to adapt to and around the algorithmic components of social media platforms, including content moderation components. Previous studies have indicated that marginalized populations, including people of color (Karizat et al., 2021) and LGBTQIA+ communities (DeVito, 2022; Karizat et al., 2021), hold folk theories that TikTok suppresses marginalized identities, leading these populations to engage in specific and informed strategies to maintain visibility.

User Perceptions of TikTok’s “For You Page” Algorithm

All eight interview participants perceived TikTok’s “For You Page” algorithm as adaptive and described how the algorithm appeared to learn users’ behaviors, which would, in turn, shape the content that would appear on their feed. For instance, Megan (26-year-old Black female) believed that viewing a creator’s profile would alter the videos on her “For You Page.” Megan stated, “If I’m just on the homepage and I’m just scrolling up and down, then it’ll show different things over and over again. But if I click on a profile, and I view a lot of [the creator’s] videos, then afterwards I’ll notice that the algorithm kind of switches, and it’ll be geared towards things that are of that topic.”

Interview participants believed that when users engage with a creator, TikTok’s algorithm will “make note” (Kora, 20-year-old White female) and adapt to deliver similar content on the user’s “For You Page.” Similarly, participants speculated that engaging with videos in multiple ways (e.g., liking and commenting) influenced content in users’ feeds and could alter the virality of a post, increasing the chances of a video appearing on their feed and on other users’ feeds as well. Maylin (23-year-old Black female) stated, “Sometimes if I ‘like’ [a video], it’s because I find ‘likes’ to be a way to show ‘yes, I support what you’re doing.’ I ‘like’ things like that, and I recognize that it kind of contributes to my algorithm.”

Similarly, Megan (26-year-old Black female) thought of engaging with videos as a means of supporting creators but also recognized that not engaging with content through likes or comments would prevent unwanted content from appearing on her “For You Page”: “If I don’t like something, then I won’t ‘like’ it because I don’t want to see things like it again. But if I do ‘like’ it, then it’s mainly just me saying, or I guess in my small way, trying to say to the creator that I support it.”

While participants noted that TikTok’s algorithm adapted based on engagements with content, they also expressed how the algorithm seemingly learned users’ interests and experiences on its own, almost as if TikTok was listening to participants through their devices (Drew, 26-year-old Black female). Participants also described the “ebb and flow” (Morgan, 26-year-old White female) of the “For You Page,” particularly in that the algorithm seemed to “know” where they were “at in life” so that content would appear and reappear to reflect their current life experiences. The unpredictable and adaptable “For You Page” algorithm creates a personalized viewing experience for both creators and consumers of content. Thus, participants found consuming NSSI content on TikTok easier than on other platforms. For instance, Kora (20-year-old White female) described TikTok as a quicker tool than other platforms for finding help-seeking information: “[TikTok is] quicker for the amount of content you get. Because on Facebook, you only get one post, and you just keep scrolling and scrolling. With TikTok, you can have up to, like, five-minute videos. But it’s also faster than sitting down and watching a whole Netflix TV show, you know? And I’m not constantly waiting to see what’s coming next. [TikTok] could be another creative outlet for [NSSI]. I depended on creative outlets just to get through that part of my life.”

While TikTok is not a dedicated mental health support space, participants found TikTok to be a tool for recovery from NSSI by cultivating a sense of community. When viewing NSSI content on TikTok, participants like Morgan (26-year-old White female) felt that the content “more so promotes community and care, rather than promotes the act itself.” Similarly, participants like Savannah (25-year-old Black female) expressed that TikTok enables her to “talk or relate to people who have also self-harmed.” In a sense, participants use TikTok as a support group, with Megan (26-year-old Black female) stating how viewing NSSI content “kind of reminds me of like how far I’ve come and how people are on different journeys, so it kind of lets me know that I’m not the only person that went through this.” Maylin (23-year-old Black female) supported the idea that TikTok can be “unifying because everyone [is] kind of struggling with stuff nowadays.”

Algospeak

The primary way that content is distributed on TikTok is through the “For You Page,” an algorithmically curated feed. Thus, unlike other social media platforms, having followers does not necessarily mean users will see creators’ content. This algorithmic distribution of content has led to a shift in creators’ information creation practices, namely that creators tailor videos toward the algorithm rather than a following, which means that abiding by content moderation policies is more important than ever.

Interview participants shared common views about TikTok’s Community Guidelines and the company’s responsibility to its users. There was a shared belief that TikTok’s Community Guidelines were not created to be specific but were instead “all-encompassing” over potentially harmful content. This sentiment caused users to feel that the guidelines were more like an “umbrella” that led to content getting flagged or removed without a transparent reason, where “creators on TikTok get their videos taken down for random stuff” (Kora, 20-year-old White female). The lack of transparency with violation policies shows an absence of both understanding and communication on TikTok’s part, often making users feel as if there is no context behind moderation. For instance, Kora said, “The TikTok algorithm is so strict that sometimes it picks up things that aren’t exactly what it’s trying to wean out.”

Morgan (26-year-old White female) expressed perceptions that simply mentioning NSSI could result in the removal of a video, saying that the wording of the Community Guidelines leads users to believe that “videos can be reported for talking about self-harm.” Participants emphasized that the guidelines were developed for TikTok to “stay out of liability problems” and, in doing so, allow the company to “do the bare minimum” (Megan, 26-year-old Black female).

The algorithm’s strictness and lack of contextualization lead users to carefully consider their content creation strategies, where users “always have a way to get around the guidelines” to avoid detection (Megan, 26-year-old Black female). Interview participants noted strategies such as algospeak (i.e., codewords or turns of phrase) (Lorenz, 2022) to avoid detection and subsequent content moderation. Megan stated, “On TikTok, people find a way to get around those guidelines by using specific words. Like I know you’re not allowed to say kill or murder. You can’t say it, so people say unalived.” Strategies like algospeak enable users to “play by the rules” of the platform and thus be “rewarded” with visibility, aligning with previous studies of NSSI content on TikTok (Lookingbill, 2022) and Instagram (Fulcher et al., 2020), which found users purposely misspelling words or replacing letters with symbols and numbers. While TikTok’s Community Guidelines may present a challenge to creating and accessing NSSI content, the guidelines are not entirely effective in controlling user behavior (Anderson, 2020). Participants expressed that multiple communities on TikTok, including those beyond NSSI, use similar methods to avoid algorithmic detection and content flagging. For instance, algospeak is a common strategy among content creators posting about stigmatized health information. Following the overturning of Roe v. Wade, a landmark decision of the U.S. Supreme Court that protected pregnant individuals’ rights to an abortion, TikTok users began sharing “camping” references to discuss abortion-related issues. For weeks after the Supreme Court ruling, creators posted that if a user needed to go “camping” (i.e., have an abortion), “campsites” (i.e., abortion clinics) were available in a particular region (Delkic, 2022; Levine, 2022). Social media users are increasingly using algospeak to avoid detection by content moderation algorithms. While machine learning can spot overt material, it can be more difficult to read between the lines on euphemisms or phrases (Levine, 2022).

As further evidenced by the content analysis, NSSI community members commonly engage in algospeak to reference NSSI broadly, the act of cutting, and the presence of scars.

For instance, one content creator engaged in algospeak to discuss the “reality of [NSSI]” by altering letters in moderated words like self-harm to numbers and symbols as they showed the text: “It becomes an add1cti0n.” Similarly, another creator engaged in algospeak by using abbreviations for moderated NSSI terms to share “unhelpful things to say to someone who [self-harms],” as they pointed to each text as they appeared on screen: “That’s so attention seeking omg. You’re too pretty to be doing that. Just stop for me.” While the tactic of algospeak is common on TikTok to allow users to talk freely about topics while avoiding algorithmic intervention, interview participants also noted that new ways of evading content moderation might be needed. Kora (20-year-old White female) expressed, “I’ve been seeing a little bit less of [algospeak]. So, I think TikTok is starting to kind of catch on to it.”

Signaling and Self-Policing

Interview participants also referenced the complex interplay between signaling and self-policing tactics as another popular strategy to avoid content flagging or moderation. Signaling is shaped by community norms, which users abide by to understand how to interact with each other within the community context. Participants explained that it is easy for insiders of online NSSI communities to understand implicit references to NSSI, meaning content creators do not need to include NSSI-related words or phrases like “self-harm” or “scars” to reach community members. Instead, lived experiences and hashtags provide contextual clues for NSSI content. Maylin (23-year-old Black female) noted: “I’ve seen people who have posted videos . . . but they’re not directly talking about it. But then the whole comment section is kind of related to that anyway, so it’s kind of obvious.”

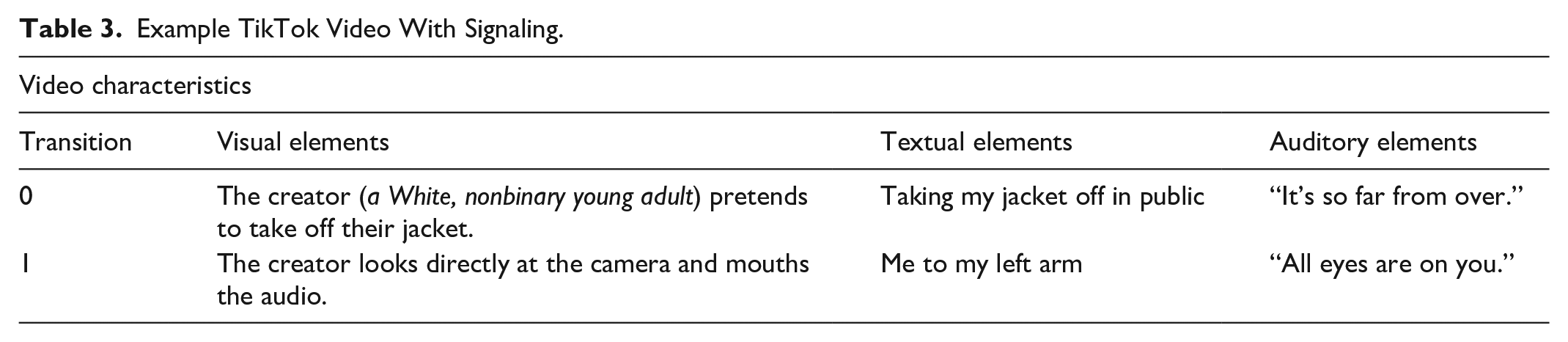

Signaling was a commonly identified strategy in the content analysis videos. Content creators often referenced their arms, implying the presence of NSSI scars without visibly showing them. For example, creators commonly referred to the attention they receive when their bare arms are visible (Table 3).

Table 3. Example TikTok Video With Signaling.

In addition to referencing lived experiences with NSSI, users engage in signaling to reference harm reduction strategies (Table 4).

Table 4. Example TikTok Video With Harm Reduction Strategy.

The above example illustrates a common harm reduction strategy wherein individuals snap hair ties or rubber bands against their wrists to resist NSSI urges. While signaling serves to implicitly reference lived experiences with NSSI, signaling also works to implicitly reference harm reduction strategies specific to NSSI communities.

In line with signaling, lexical variations of moderated hashtags are used heavily to find and share NSSI content. Hashtags are a visible form of communication on social media platforms. Hashtags act as information anchors (Potnis & Tahamtan, 2021) for online communities to discover, disseminate, and gatekeep information (Tahamtan et al., 2021). Previous studies (Feuston et al., 2020; Herrick et al., 2021) have examined similar hashtag use within other stigmatized communities. For instance, eating disorder communities have been found to adopt lexical variants of moderated tags by deliberately misspelling words (e.g., anoraxic, thynspyration) to increase visibility of the moderated content within the community while simultaneously being cautious of moderation algorithms (McCosker & Gerrard, 2021).

While signaling tactics serve as a nuanced means for communication within NSSI communities, they are part of a broader framework of community norms that extends into self-policing. Self-policing is not just a reactionary measure but a proactive approach to community care. Similar to other stigmatized populations (Lingel & boyd, 2013), members of NSSI communities engage in self-policing tactics, which work to challenge linear framing of social contagion claims (Sedgwick et al., 2019; Staniland et al., 2021). Our analysis indicated that TikTok users were cognizant of the potential harms of NSSI content and concerned themselves with reducing potential harms to protect themselves and other members of their community. Interview participants, for instance, were aware of their own triggers and purposely did not engage with content that could cause them distress while simultaneously recognizing that triggering content for them might serve as a coping mechanism for other users. Interview participants noted that content creators on TikTok engaged in self-policing tactics, mimicking users in NSSI communities on other social media platforms like Tumblr, Twitter, Reddit, and Instagram who engage in self-surveillance and self-censorship to protect others within the community (Guccini & McKinley, 2022; Lavis & Winter, 2020).

As evidenced by the content analysis, these self-policing tactics commonly consisted of labeling videos with trigger warnings to reduce potential harm. Most videos included either a general trigger warning as “TW” or referenced a specific trigger, such as “TW: scars,” “TW: talk of [self-harm],” or “TW: do not watch if u [sic] don’t wanna [sic] see scars.” Some content creators emphasized the importance of trigger warnings in their own videos, with one creator (Black, nonbinary person) saying in a video: “I cannot believe I’m on this app talking about this again but stop making jokes and videos about [NSSI] without trigger warnings. It’s not that hard. I don’t care if you’re ‘not responsible’ for my triggers. Everyone knows that [NSSI] is incredibly competitive and triggering. Just stop.” Here, definitions of triggering content are not limited to imagery, such as visible NSSI scars, but also extend to discussions of NSSI, which may re-traumatize users or cause distress. TikTok users have a sense of “social responsibility” (Seko et al., 2015, p. 1342) in that they feel they are morally obligated to protect vulnerable viewers. Actual benefits arising from the use of trigger warnings, however, have been contested in the literature: some studies demonstrated that while trigger warnings may increase users’ agency in making informed decisions about engaging with potentially traumatizing content, trigger warnings may also increase anxiety among vulnerable populations (Bridgland et al., 2023; Charles et al., 2022).

Limitations

This study had three main limitations. First, it was challenging for the recruitment video to reach widely within the U.S.-based NSSI community on TikTok. When creating the recruitment video, we carefully considered TikTok’s Community Guidelines to avoid potential violations. We used the term “mental health” to verbally describe the focus of the study rather than terms such as “self-injury” or “self-harm.” We included an image of the recruitment flyer, where we specified that eligible participants should have a history of engaging in “self-h@rm.” We purposefully altered the phrase “self-harm” to avoid any potential visual detection of NSSI language. After posting the video, we received several international responses. We reviewed participants’ time zones, which were generated with the Zoom web conference link for the interview, as a second eligibility measure. If a participant’s time zone indicated that they were outside the United States, we emailed the participant and canceled the interview. Second, due to IRB requirements and ethical concerns, we did not interview participants under the age of 18. Adolescents comprise a large portion of TikTok’s userbase (Anderson et al., 2023), and adolescence is the most prevalent age for the onset of NSSI. Many of our interview participants reported that while they engaged in NSSI during their adolescence, they no longer engaged in NSSI. Our results may be limited to how users with previous experiences with NSSI engage with content on TikTok and may not be generalizable to younger users. Finally, the data collected for the content analysis were limited to searchable content tagged with one of three hashtags (#sh, #shawareness, and #scars). As stigmatized populations have been found to un-tag content to circumvent hashtag moderation (Gerrard, 2018), this study’s findings do not document the full landscape of NSSI content likely present on TikTok.

Conclusion

Our study presents a nuanced account of users’ experiences with NSSI content on the algorithmic system TikTok. Our findings emphasize the importance of community and suggest that TikTok users actively work to resist content moderation to establish a safe and destigmatized space for themselves and other users who are engaging in or recovering from NSSI. While TikTok encourages supportive interactions among people with mental illnesses, TikTok’s enforcement and rationale of content moderation remain ambiguous to users, necessitating a way to balance the needs of NSSI communities more effectively and transparently on the social media platform. Ultimately, our study underscores the power dynamics inherent in social media platforms and their impact on individuals within the NSSI community.

Practical implications of this research include trauma-informed design recommendations that appreciate the individual impact of technological “solutions,” in contrast to current broad, population-level approaches in which mental illness is categorized as harmful content (Feuston et al., 2020). Trauma-informed design approaches aim to improve technologies by explicitly acknowledging trauma and its impact, recognizing that technologies can both cause and exacerbate trauma (Chen et al., 2022). The principles of trauma-informed design emphasize how product design teams should commit to a trauma-informed viewpoint and acknowledge their role in building technology that minimizes trauma and re-traumatization (Randazzo & Ammari, 2023). Design affordances such as content moderation, which perpetuates social control structures, have a documented history of causing harm, including trauma (Chen et al., 2022; Scott et al., 2023). However, as trauma-informed design considers outcomes of technological affordances, this approach can inform fairer content moderation practices of NSSI content on TikTok. For instance, a trauma-informed design of TikTok may increase the transparency of NSSI content moderation to increase user perceptions of fairness or may provide context moderation wherein moderation considers the content creator, their target audience, and their relationship. Context is imperative in trauma-informed design as online trauma cannot be understood separate from the inherently social context in which trauma occurs (Scott et al., 2023).

By contextualizing the information produced and reproduced within NSSI communities, product design teams can better understand the normalcy and disruption for the individual (Feuston et al., 2020) and attend to the ways that design for these communities fails. In this sense, product design teams should not focus on “fixing” NSSI, such as solely providing mental health resources or erasing its existence entirely, but instead should focus on supporting users’ experiences. Product design teams may attend to these design failures by integrating community members’ voices into the design process, such as through processes like co-design. In doing so, product designers can employ a stress case approach to highlight the sociocultural discourses embedded into technologies and account for the marginalizing outcomes produced by technological affordances (Kitzie, 2019).

Finally, our research can contribute recommendations for content moderation policy change. As it stands, TikTok may be failing users within NSSI communities via its Community Guidelines. TikTok presents issues of autonomy within the bounded sphere of its Community Guidelines, wherein users’ autonomy and consent are undermined (Gray & Witt, 2021). TikTok fails to acknowledge the duty of care to enable destigmatized conversations of NSSI within context and to allow users to express and share experiential knowledge within these communities. In line with previous work on mental health content on TikTok (Milton et al., 2023), our research includes recommendations that, instead of sweeping policies, TikTok should establish more nuanced attempts to understand content type and intention. In doing so, TikTok’s content moderation policies should consider NSSI content within context to ensure the platform can function as a safe, destigmatized space to engage in discussions of NSSI.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.