Abstract

Digital beauty filters are pervasive in social media platforms. Despite their popularity and relevance in selfie culture, there is little research on their characteristics and potential biases. In this article, we study the existence of racial biases in the set of aesthetic canons embedded in social media beauty filters, which we refer to as the Beautyverse. First, we provide a historical contextualization of racial biases in beauty practices, followed by an extensive empirical study of racial biases in beauty filters through state-of-the-art face processing algorithms. We show that beauty filters embed Eurocentric or white canons of beauty, not only by brightening the skin color, but also by modifying facial features.

Introduction

Technological development is a socially entangled process that reflects the values and biases of the society where it takes place (Ash et al., 2018). Social media platforms, with billions of users worldwide, are a clear example of such a process. In less than three decades of existence, they have emerged as a key element that shapes the social fabric of human communities, allowing their members to connect, interact, and share information. They have created new opportunities for personal and professional networking, learning, entertainment, activism, and self-expression.

Historically, the marginalization of women from the use of technology has led to the inclusion of gendered notions in technological design (Cockburn, 1983; Wajcman, 2004). In the case of social media, many of the functionalities and algorithms used in these platforms emphasize physical beauty as a valuable attribute for women, to the point that female users tend to self-objectify in search of social validation (Winch, 2013; D. Zheng et al., 2019). Self-objectification influences self-presentation practices in many ways, such as posting edited selfies on social media to appear more attractive (Hong et al., 2020). Among the available digital beauty enhancement tools for photos and videos, social media platforms favor beauty filters, mostly designed by their users (Riccio et al., 2022). These filters leverage computer vision algorithms for face and facial feature detection and augmented reality (AR) to overlay, in real time, digital elements that modify the features of the detected face, as depicted in Figure 1. The changes are typically applied to the skin, the eyes and eyelashes, the nose, the chin, the cheekbones, and the lips, creating a visually enhanced or beautified version of the user. The filters often reflect unrealistic beauty standards, making users believe that a better version of themselves is not only possible, but even needed and desirable, ultimately impacting self-perception and self-esteem (Eshiet, 2020; Grossman, 2017).

Flow of a beauty filter applied to an input image (from left to right). First, computer vision algorithms are applied for face and facial feature detection, followed by the use of augmented reality (AR) methods to overlay in real-time digital content that modifies (beautifies) the face. Possible changes include lightening and correcting imperfections in the skin, making the eyelashes longer, the cheekbones more prominent, the lips fuller and pinker, and the eyes bigger and of lighter color. The original (input) image on the left is from the

In this article, we investigate the existence of racial biases in social media beauty filters and their potential negative impact not only on the well-being of social media users (particularly women and people of color) but also on society at large. We refer to the Beautyverse as the set of aesthetic canons embedded in today’s beauty filters. The often unreachable beauty ideals reflected in the Beautyverse may be internalized by users, who actively aspire to look like their beautified digital versions, reinforcing those standards even further through systematic social comparison (Lamp et al., 2019; Myers & Crowther, 2009). In such a complex scenario, it is of utmost importance to investigate the multiple facets of the Beautyverse, especially its potential negative impact. In qualitative studies, scholars have argued that beauty filters perpetuate racism (Mulaudzi, 2017) and reinforce Euro-centered ethnic features (S. Li, 2020). In other words, the facial aesthetics embedded in such filters are inherently white (Jagota, 2016; Shein, 2021). Note that in this article, we use the terms Eurocentric and white interchangeably. Furthermore, our definition of whiteness does not simply refer to the skin tone, but also includes other facial features, such as “nose and eyes shape, lips and hair type” (Dyer, 2017).

Despite the wide adoption of beauty filters and their impact on millions of users, there is little quantitative research to date on their characteristics and particularly on their biases. In this article, we aim to unravel and empirically evaluate the existence of racial biases in the Beautyverse by means of state-of-the-art machine learning-based computer vision algorithms. The expected impact of this research is to initiate an interdisciplinary discussion on the technical and social implications of this phenomenon.

Related Work

In recent years, different research communities have investigated the increasingly popular use of digital beauty filters. In this section, we provide an overview of the most relevant previous work in Computer Science, Psychology, and Sociology.

Machine learning-based methods in computer vision are the main technical tool to study beauty filters from a Computer Science perspective. This field relies on the use of publicly available, standardized data sets of faces to enable the comparison of different approaches and the reproducibility of the results. However, to date, there are few public data sets of beautified faces (Hedman et al., 2022) and the use of faces downloaded from social media platforms is not possible unless there is explicit, informed consent from each of the individuals whose faces would be analyzed.

Bharati et al. (2017) created a data set of beautified faces from 600 different individuals belonging to three different ethnicities (Indian, Chinese, and Caucasian) using three commercial tools for beautification: Fotor, BeautyPlus, and PortraitPro Studio Max. In this case, the beautification techniques modified the skin, facial structure, eyes, and lips of the original faces. In addition to sharing the data set, the authors proposed a novel semi-supervised autoencoder to detect whether the images had been retouched. Hedman et al. (2022) generated a beautified version of the Labeled Faces in the Wild (LFW) (Huang et al., 2008) face data set and analyzed the impact of beauty filters on face recognition models. However, the beautification process only involved the superimposition of simple AR elements that create occlusions on the face. Mirabet Herranz et al. (2022) studied the impact of beauty filters on both face recognition and estimation of biometric features by beautifying the CALFW (T. Zheng et al., 2017) and VIP_attribute (Dantcheva et al., 2018) data sets. Riccio et al. (2022) proposed

Beyond Computer Science, related work in Psychology and Sociology serves as an inspiration and provides a deeper understanding of the beautification phenomenon. Early work by Felisberti and Musholt (2014) focused on the impact of beauty filters on self-perception and self-esteem. The authors carried out a user study with 33 participants (23 females), finding that low self-esteem impacts the desirability of certain physical features, in particular, smaller nose and bigger eyes. Fribourg et al. (2021) analyzed the impact of beauty filters on the perception of attractiveness, intelligence, and personality through a user study with 20 males and 20 females. They reported that the perception of others is often transferable to self-perception and that AR beauty filters seemed to decrease self-acceptance. Bakker (2022) presented a study with 103 female participants of the internalization of beauty ideals from beauty filters, highlighting that women using these filters internalize these ideals more easily, hence suffering from body dissatisfaction.

Shifting the focus to the subject matter of our work, we report on previous research that emphasizes the connection between beauty filters and racism/colorism from different cultural perspectives. Siddiqui (2021) studied the relationship between social media beauty filters and the deeply rooted colorism in Indian society. As in other countries in Asia, Africa, and South America, a fairer skin is considered more attractive and an enabler of social opportunities in India. The author interviewed 26 young women, and concluded that beauty filters imitate hyper-realistic and fair-skinned beauty ideals, allegedly emancipating women but strongly impacting their self-esteem. Peng Peng (2021) provided a techno-feminist analysis of the development of beauty filter applications and the so-called wanghong beauty ideal in China, which is “characterized by big eyes, double eyelids, white skin, high-bridged nose, and pointed chin” (A. K. Li, 2019). Through a case study of the BeautyCam application, the author suggested that the driving force for the development of such applications in Chinese society is a wave of pseudo-feminism. These applications are designed to target female users, with the argument that improving the physical appearance is a means to obtain social empowerment and emancipation. Such a claim implicitly embeds and propagates a gendered approach in the design of technology, and the need for women to adhere to an “ultra-feminine” physical representation.

While previous work has tackled this matter from a qualitative perspective, this article contributes with an extensive quantitative and interdisciplinary study of racial biases in beauty filters. In particular, our contributions are four-fold:

We contextualize our technical work with a historical overview of racial biases in the social understanding of beauty, emphasizing how they impact other dimensions of collective and individual affirmation in society.

We empirically study the existence of racial biases in the Beautyverse by applying machine learning-based race classification algorithms to images of beautified and non-beautified faces.

We investigate the characteristics of such racial biases through a state-of-the-art explainable artificial intelligence (AI) method.

We draw six insights and implications regarding the use of beauty filters in social media.

Beauty: A White Social Opportunity

In this section, we contextualize and motivate our work through a summary of the biases that have characterized beauty practices in human history, and their direct consequences for the members of marginalized communities.

Research has shown that beauty matters: people who are perceived as more beautiful are more likely to be successful in life by, for example, achieving better grades in school (Talamas et al., 2016), promotions and higher income at work (Morrow et al., 1990), more lenient criminal sentences (Stewart, 1980), and a better social status overall (Frieze et al., 1991). In parallel with the presumption that beauty standards are determined by culture and personal biases (Sartwell, 2012), studies have demonstrated that symmetry, averageness, and sexual dimorphism are important evolutionary factors in determining attractiveness across cultures (Rhodes, 2006). Physical appearance is especially important for teenagers: female adolescents tend to have the highest rates of mental health issues, particularly anxiety and depression related to body dissatisfaction (Alm & Låftman, 2018; McLean et al., 2022; Pivnick et al., 2022).

Social media has become an indispensable component in young people’s lives (Boyd, 2008), with both positive and negative effects, particularly on mental health (Richards et al., 2015). We know that our digital self and its perception impact our analog self. For instance, having a highly sexualized virtual reality avatar affects how females act both online and offline, increasing their sense of self-objectification (Fox & Bailenson, 2009; Maloney & Robb, 2019). Moreover, selfie dysmorphia has led to an increase in plastic surgery to look like the beautified social media self which, in many cases, reflects an unattainable ideal of beauty (Cristel et al., 2021; Othman et al., 2021; Perrotta, 2020).

In this context, white beauty standards predominate in our society and current advancements in computer vision and AR, combined with the massive adoption of increasingly powerful smartphones and the ubiquitous use of social media platforms, threaten to amplify the predominance of such standards. Historically, structural systems privilege White people in every conceivable social, political, and economic opportunity (Fanon, 2008; Kilomba, 2021). Since Europeans colonized the world—occupying land, appropriating resources, and establishing slave trades—descendants of the colonized countries have relied on migrating to places where White people come from to find better life opportunities.

The social advantage conferred on White(r) individuals manifests itself in the two closely related concepts of colorism and racism, which imply a hierarchical positioning of people according to their skin color, ethnicity, and other physical features. Colorism occurs within a particular racial or ethnic group, based on skin tone, such that lighter-skinned individuals are preferred over darker-skinned ones. Racism takes place across different racial and ethnic groups, based on perceived differences in physical features, cultural practices, and social customs. Racism plays a role in shaping beauty standards, as it often involves a preference for Eurocentric features, such as straight hair, light skin color, and large, light-colored eyes, over features that are more commonly associated with non-European cultures.

As a consequence, lighter-skinned individuals, both from the same racial group and across racial groups, have been awarded privileges past and present (Mire, 2001). After being subjected to White people’s privileges and their corresponding beauty standards for so long, it is no surprise that people of color might despise the color of their own skin, eyes, and hair, aiming for a whiter look (Tate & Fink, 2019). Today, most countries have banned skin-whitening products because of their toxic ingredients and damaging impact on mental health. Still, people in the Global South, and particularly women, are willing to take great health risks to change their appearance so that it conforms to white beauty canons and hence increases their chance to achieve higher socio-economic power (Adawe & Oberg, 2013).

Beyond colonization, globalization also plays a key role in influencing beauty standards around the world, which results in Western European and American beauty ideals being globally embraced (Dimitrov & Kroumpouzos, 2023). The fashion, media, cosmetics, and movie industries significantly contribute to the global culture and the definition of canons of beauty (Yan & Bissell, 2014). This globalization process is also reflected in how social media impacts the perception of beauty worldwide through systematic comparison with beauty influencers from the Western world (Ward & Paskhover, 2019).

While colonization and globalization are determining factors in establishing beauty standards worldwide, additional factors need to be considered as every cultural context is unique. For example, scholars have argued that the shadeism existing in the Indian sub-continent is not only related to the need to mimic “colonial whiteness” (Fischer-Tiné, 2009) but also has a locally rooted pre-colonial history (Kullrich, 2022), as fair skin tones were associated with upper castes: lightening the skin in India is not necessarily a matter of changing “color” but a matter of changing “shade” to hide the social and working status (Kullrich, 2022). In Africa, researchers have highlighted how the dominant homogenized representation of beauty in African magazines promotes “western” femininity. As a consequence, it is expected that Black women feel the need to adhere to white beauty ideals to feel beautiful (Akinro & Mbunyuza-Memani, 2019). At the same time, research has shown that within racial minorities in the United States, Asian women tend to idealize and follow mainstream white beauty standards more than Black women (Chin Evans & McConnell, 2003). With respect to Asia, the influence of Western canons of beauty is combined with their own traditional views on beauty, reflected in their art, literature and philosophy (Samizadeh, 2022). For example, a fair skin with smooth texture (so-called porcelain or milk-like skin) has been revered for centuries as illustrated in Asian poetry and literature. Furthermore, the change of facial features is no longer perceived as a disrespect to the ancestors due to globalization and the wide availability of non-surgical and surgical cosmetic procedures (Kim, 2003), to the point that South Korea is referred to as “the plastic surgery capital of the world,” accounting for 25% of the global beauty market, and China’s cosmetic surgery industry is one of the largest and fastest-growing in the world.
Finally, scholars have recently reported on the under-studied beauty and body image ideals in postcolonial Latin American countries and US Latinx women (Gruber et al., 2022), finding that beauty is primarily rooted in a Westernized and white ideology (Figueroa, 2021) (light skin tone and hair color, small noses) combined with a culturally rooted curvaceous figure (Lloréns, 2013).

In summary, while acknowledging that different cultural contexts follow diverse and unique trajectories to shape their beauty standards, we also highlight that the widespread use of beauty filters is turning beauty into a globally shared experience, which prompts research efforts like ours. In this article, we study whether white beauty standards are indeed present in today’s beauty filters through the lens of state-of-the-art face processing algorithms. Such a computational approach enables us to study this phenomenon at scale, with thousands of images, and in a consistent, systematic and potentially more objective manner. We articulate our work on this topic by means of the following research questions (RQs), which we address in two different experiments, described in the next section:

RQ1: Do beauty filters make people conform with Eurocentric (white) beauty standards?

RQ2: How do beauty filters embed Eurocentric beauty canons?

Experiments

In this section, we describe the experiments that we carried out to tackle RQ1 and RQ2. The results can be reproduced through our GitHub repository.

Data Sets and Data Pre-processing

We perform our analyses on the widely used

Beyond demographic diversity, the images in the two data sets contain one or more individuals in different poses, scenarios and with a variety of facial expressions. As the beauty filters are typically applied to selfies, we selected a subset of the examples in the

Applying these conditions, we selected a total of 3,164 images, depicting the face of single individuals with frontal or nearly frontal poses, and having comparable resolution. Figure 1 shows a canonical example of the selected images. The images are balanced across gender and racial categories: on average, we selected 452 images per race (with a minimum of 420 for Southeast Asian and a maximum of 484 for Black). We address our RQs on this test set.

RQ1: Do Beauty Filters Make People Conform With Eurocentric (White) Beauty Standards?

Problem Formulation and Setup

We consider a set of images

Example of the four versions of the same image considered in RQ1. From left to right, original image

To address RQ1, we use two different state-of-the-art computer vision models

Results

Table 1 depicts the confusion matrices obtained on race prediction. As seen in the Table, the beautified faces are more likely to be classified as White than the originals. As a consequence, the performance of both

Confusion matrices for the two race classification algorithms on four variations of the images (i.e., Original

(a) Confusion matrices for the

(b) Confusion matrices for the

The use of blurred images serves as a reference to ensure that the obtained effect is not caused by an intrinsic artifact in the classification algorithms when facial features are blurred and harder to detect. We observe that the behavior on blurred images is also slightly biased toward predicting the White class, but to a much lower degree than in the beautified case. Interestingly, the Black and (East) Asian classes are the least impacted in terms of classification performance after beautification. In this case, the blurred images yield the worst classification accuracy for both in

Furthermore, a comparison between the per gender race classification performance on the original

For every race and gender (F and M), the dark-colored bars represent the change in accuracy after beautification, while the light-colored bars depict the difference in the percentage of images that are classified as White after beautification. Note that in the case of

The dark-colored bars correspond to the accuracy loss/gain (in percentage points) in classifying the race of the images after beautification, such that a negative/positive value corresponds to a loss/gain in accuracy, respectively. The only race where the prediction performance consistently increases after the application of the beauty filters is the White race, and hence its bars show positive values. For the rest of the races, the race classification accuracy significantly decreases (negative values in the bars) after beautification, with the exception of East Asian and Black males, where the performance of the

The light-colored bars depict the percentage of images in each race category that are classified as White after the application of beauty filters but were not classified as White before beautification. This percentage is notably large in the case of the Latino Hispanic and Middle Eastern races, but it is present on all races, for both genders and with both race classification models. While the

Regarding gender, we observe that both the images of male and female faces are more likely to be classified as White after beautification. Yet, there are some gender differences. We perform t-tests between the models’ loss in performance for male and female faces after beautification and conclude that no gender bias is present in the case of the
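
The two per-race quantities discussed above (the change in accuracy in percentage points, and the share of images classified as White only after beautification) can be illustrated with a minimal sketch. This is not the code from the study: the function name `beautification_shift`, the input format (three parallel lists of labels), and the label strings are assumptions made for illustration.

```python
from collections import defaultdict


def beautification_shift(true_races, pred_original, pred_beautified,
                         white_label="White"):
    """Per-race accuracy change and share of images newly classified as White.

    The three inputs are equal-length sequences of race labels. This input
    format and the label strings are hypothetical, chosen for illustration.
    """
    counts = defaultdict(
        lambda: {"n": 0, "ok_orig": 0, "ok_beauty": 0, "new_white": 0}
    )
    for truth, before, after in zip(true_races, pred_original, pred_beautified):
        c = counts[truth]
        c["n"] += 1
        c["ok_orig"] += before == truth    # correct on the original image
        c["ok_beauty"] += after == truth   # correct on the beautified image
        # Count only images that become White after the filter is applied.
        c["new_white"] += (after == white_label and before != white_label)

    result = {}
    for race, c in counts.items():
        result[race] = {
            # Negative values mean accuracy was lost after beautification.
            "accuracy_change_pp": 100.0 * (c["ok_beauty"] - c["ok_orig"]) / c["n"],
            "newly_white_pct": 100.0 * c["new_white"] / c["n"],
        }
    return result
```

Calling the function once per classifier and per gender subset would yield the values needed for a bar chart of this kind; any significance test on the resulting differences would be a separate step.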

RQ2: How Do Beauty Filters Embed Eurocentric Beauty Canons?

To address this RQ, we leverage attribution methods (Abhishek & Kamath, 2022), a popular tool within the explainable AI field (Fel et al., 2022). Attribution methods in computer vision are used to understand the contribution of different areas of an image to a specific output in the prediction of a model or algorithm. These methods are used to improve the interpretability and explainability of deep learning-based computer vision models (Colin et al., 2022).
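
To make the idea of an attribution map concrete, the following minimal sketch uses a generic occlusion-style perturbation: each patch of the image is masked in turn and the drop in the model's score is recorded. It illustrates the general principle only and is not the attribution method used in this study; `occlusion_attribution`, `toy_score`, and the patch size are hypothetical, and `toy_score` is a stand-in for a real classifier's class score.

```python
import numpy as np


def occlusion_attribution(image, score_fn, patch=8, fill=0.0):
    """Score drop when each patch is masked; larger values mean more important."""
    height, width = image.shape[:2]
    baseline = score_fn(image)
    heatmap = np.zeros((height, width), dtype=float)
    for top in range(0, height, patch):
        for left in range(0, width, patch):
            occluded = image.copy()
            occluded[top:top + patch, left:left + patch] = fill
            # Importance of the patch = how much the score falls without it.
            heatmap[top:top + patch, left:left + patch] = (
                baseline - score_fn(occluded)
            )
    return heatmap


def toy_score(img):
    """Hypothetical stand-in for a classifier: mean intensity of the centre."""
    return float(img[8:24, 8:24].mean())


image = np.ones((32, 32))
heatmap = occlusion_attribution(image, toy_score, patch=8)
```

In this toy case only the four central patches receive a non-zero score, because they are the only pixels the stand-in score depends on.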

Attribution methods may be categorized as gradient-based (Simonyan et al., 2013; Sundararajan et al., 2017) or sensitivity-based (Fel et al., 2021; Zeiler & Fergus, 2014). Sensitivity-based attribution methods assign a numeric score to each pixel of the image according to how important it is for the classification by probing the model with

Problem Formulation and Setup

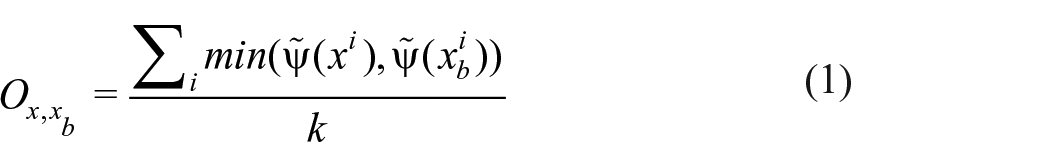

We define as

To gain an insight behind the reason for the classifications in both

This attribution method has been found to be effective in identifying a small number of important pixels that drive the prediction of the model. Typically, 5%–10% of the pixels account for more than 80% of the accuracy of the model (Petsiuk et al., 2018). Thus, to ease the comparison, we threshold

Our goal is to determine whether the changes in the facial features caused by the beautification process lead to the algorithms paying attention to different parts of the face on the beautified images when compared to the original images, which might explain the classification errors. Therefore, to address RQ2, we postulate two hypotheses that we empirically evaluate by means of quantitative measurements (see Figure 4 for an illustration of the pipeline).
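
One plausible way to quantify whether a classifier attends to different regions in the original and the beautified image is to binarize each attribution heatmap and measure how much the two masks overlap. The sketch below keeps the top 10% of pixels and uses intersection-over-union; both choices, as well as the function names, are assumptions made for illustration, since the exact measure used in the study is not reproduced here.

```python
import numpy as np


def top_fraction_mask(heatmap, fraction=0.10):
    """Boolean mask of the highest-scoring `fraction` of pixels.

    Ties at the threshold are all kept, so the mask may hold slightly
    more than `fraction` of the pixels.
    """
    k = max(1, int(round(fraction * heatmap.size)))
    threshold = np.partition(heatmap.ravel(), -k)[-k]  # k-th largest value
    return heatmap >= threshold


def mask_overlap(heatmap_original, heatmap_beautified, fraction=0.10):
    """Intersection-over-union of the two top-fraction masks (assumed measure)."""
    a = top_fraction_mask(heatmap_original, fraction)
    b = top_fraction_mask(heatmap_beautified, fraction)
    union = np.logical_or(a, b).sum()
    return float(np.logical_and(a, b).sum() / union)
```

A value of 1 means the classifier relies on the same pixels before and after beautification, while values close to 0 indicate a shift of focus.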

Explainability pipeline used to address RQ2. From left to right: input images (

The reason for this change of focus on

with

Our second hypothesis is formulated as follows:

The reasoning behind this hypothesis is that, in addition to the change of focus, the brightening of the faces that occurs after beautification might contribute to the misclassification. The quantitative measure that we propose to evaluate this hypothesis is ∆

Note that we compute the brightness
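
As an illustration of a brightness-change measure, the sketch below computes the difference in mean brightness between a beautified image and its original, optionally restricted to a boolean mask such as the important pixels of an attribution heatmap. The brightness definition (mean luma with Rec. 601 weights on RGB values in [0, 1]) and the function names are assumptions; the definition used in the study may differ.

```python
import numpy as np


def mean_brightness(rgb, mask=None):
    """Mean luma (Rec. 601 weights) of an RGB image with values in [0, 1].

    If `mask` (boolean, same height and width) is given, only the selected
    pixels are averaged.
    """
    luma = 0.299 * rgb[..., 0] + 0.587 * rgb[..., 1] + 0.114 * rgb[..., 2]
    if mask is not None:
        return float(luma[mask].mean())
    return float(luma.mean())


def brightness_change(original, beautified, mask=None):
    """Positive values mean the beautified image is brighter than the original."""
    return mean_brightness(beautified, mask) - mean_brightness(original, mask)
```

Restricting the computation to a mask makes it possible to check whether the regions that drive the prediction, rather than the whole image, were brightened.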

Results

Figure 5 summarizes the per-race overlap (top graph) and ∆

Overlap and ∆

As seen in Figure 5a, the overlap in the heatmaps between the original image and its beautified version is smaller in the images that are misclassified (set

Moreover, we observe in Figure 5b that the overall ∆

In other words, the parts of the images analyzed by the race classification algorithms to wrongly determine the race of the beautified faces (set

Interestingly, in Figure 5, we observe that for the two races with the largest misclassification rates (Latino Hispanic and Middle Eastern), these differences are less notable. For example, the loss in classification performance of

Examples of individuals that are misclassified as White by

Discussion

The aim of this article is to spur a discussion toward a more equitable Beautyverse and, by extension, a healthier social media environment. Our work should be interpreted from this perspective, acknowledging that the pervasiveness of these filters has been found to impact their users (e.g., insecurities and body dissatisfaction), as previously discussed.

We have leveraged state-of-the-art computer vision techniques to study a complex social phenomenon, namely, the definition of beauty canons as reflected by beauty filters. Our work illustrates the need for all the stakeholders that contribute to the development of pervasive technologies on social media (from scientists to developers, users, and social media and advertising companies) to understand and embrace race, gender, body type, culture, beliefs, language and functional diversity (Ogolla & Gupta, 2018). Social media platforms, in particular, could review their community standards and guidelines to ensure they address the responsible use of beauty filters and other image-enhancing tools. This might include restrictions on filters that promote racial biases or misrepresent individuals’ natural appearances.

From our experiments, we draw several insights and implications regarding the Beautyverse.

Beauty Filters Embed a Racial Bias

We find that beauty filters transform faces to conform with Eurocentric (white) beauty canons as perceived by state-of-the-art race classification algorithms (RQ1). Racial biases embedded in beauty filters had been previously hypothesized by researchers in humanities-related fields and by social media practitioners or users from marginalized communities. However, they had not been empirically validated until this study.

The fact that beauty filters reinforce and promote white beauty standards perpetuates the notion that Western features are the epitome of attractiveness. This finding suggests that beauty filters contribute to the perpetuation of racial stereotypes, reinforcing existing biases, contributing to the subconscious association of certain non-White racial traits with negative attributes or less beauty, and potentially further marginalizing and devaluing individuals with diverse racial backgrounds and features.

The Racial Bias Entails Changes Beyond Skin Whitening

The reasons why race classification algorithms have a tendency to classify beautified faces—irrespective of their race—as White are complex. From our explainability experiment (RQ2), both a brightening of the skin color and changes in the facial features play a role in confusing the algorithms.

State-of-the-Art Face Processing Algorithms Are Sensitive to Beauty Filters

According to our work, race classification algorithms are not robust to popular beauty filters from social media. Interestingly, while the

However, we do not intend this evidence to necessarily serve as an encouragement to develop more robust race classification algorithms. These models—along with other face processing algorithms, including face recognition systems—pose significant legal and ethical challenges (Bu, 2021), which need to be taken into account before deciding to work on their development, deployment or technical improvement. Classifying humans through their visual characteristics may lead to the misuse of technology for oppression purposes, as we have witnessed in human history (Scheuerman et al., 2020). Should our readers decide to pursue such a research line, we strongly recommend performing a prior rigorous study of potentially unintended applications and the broad societal impact that these tools might have.

The Social Implications of This Phenomenon Should Be Further Studied

The beauty filters considered in this study are designed by social media users. Therefore, our experiments may be seen as empirical evidence of the social influence of Eurocentric beauty standards in the definition of these filters and the choices that users make when designing them. A failure to acknowledge the existence of this systematic racial bias in our society will ultimately prevent achieving a more diverse, inclusive and equitable Beautyverse.

Given the prevalence of beauty filters on social media platforms, their biases contribute to a skewed perception of attractiveness and desirability, leading to implications for social interactions, dating apps, and even job opportunities in professions that heavily rely on virtual presence. Our work indeed emerges from important concerns for non-White individuals, and especially women. Not only are women worldwide subject to the pressure of a male-gazed (Mulvey, 1975) society that conceives them as objects of sexual desire that should satisfy the pleasure in looking, but they are also, once again in human history, subject to the idea that looking beautiful also means being white. In addition, recent advances in generative AI algorithms to automatically create images and videos could exacerbate the dangerous effects of representational biases for women and racial minorities even further (Luccioni et al., 2024). We leave to future work the analysis of potential racial biases in such algorithms.

Beauty Filters as a Colonial Symbol

The popularity of beauty filters and the worldwide diffusion of the standardized—and biased—canons of beauty represented by these filters may be interpreted as a consequence of globalization, and globalization can be considered as a modern form of colonization (Banerjee & Linstead, 2001) that some authors define as “electronic colonization” (Zembylas & Vrasidas, 2005). Being a Western-driven process, it presents the Western world as attractive and beneficial, while appropriating, homogenizing, and standardizing the Global South (Akinro & Mbunyuza-Memani, 2019).

The research presented in this article contributes to a more nuanced, empirical and data-driven perspective on the standardization of beauty ideals that are defined, promoted and reinforced by this modern colonization phenomenon. Thus, a decolonization perspective regarding the use of beauty filters on social media is needed. Such a perspective underscores the need to critically examine and challenge the perpetuation of Eurocentric beauty standards in the digital space. By acknowledging historical colonial legacies, promoting cultural appreciation over appropriation, advocating for inclusive beauty standards, and empowering diverse communities to reclaim their narratives, our research aims to foster a more equitable, diverse, and respectful digital beauty culture that honors and celebrates the richness of global canons of beauty.

Beauty Filters as Equalizers

At the same time, the goal of our research is not to denounce beauty filters per se, but rather to investigate the biases and unintended negative consequences that these filters may have. Beauty filters could also be seen as tools for the democratization of beauty, given the existence of the attractiveness halo effect (Dion, 1972; Gulati et al., 2022; Talamas et al., 2016). According to this cognitive bias, people who are considered attractive are also perceived as having a range of positive attributes, including higher morality and trustworthiness, better mental health, and superior intelligence. From this perspective, beauty filters could help remove (or at least reduce) physical appearance as a decisive factor in certain sensitive contexts (e.g., hiring processes or judicial sentences) and hence contribute to fairer decisions. This is a research direction that we plan to study in future work.

Limitations

To the best of our knowledge, our work is the first extensive effort to quantitatively study racial biases in beauty filters. Our aim is to draw attention to this topic not only in the scientific community, but also among practitioners, developers, and industrial stakeholders who can effectively change the status quo. Our aspiration is for our research to contribute to an ethical development of AI that yields positive societal impact. However, our approach is not exempt from limitations.

First, users of social media platforms typically follow specific communication paradigms (e.g., adopting certain poses for selfies) (Qiu et al., 2015; Tifentale & Manovich, 2015) that might not be fully reflected in the data sets used in this research. As previously explained, to mitigate this issue, we selected a subset of the images in

A second limitation stems from the fact that most of the algorithms used in this article are complex deep learning-based systems that combine different modules with opaque inner workings (e.g.,

Third, we recognize the limitation of using categorical racial labels, which is a highly debated topic and an open research question. This non-ideal choice was made for technical reasons. Given that the machine learning community is still not critical enough in its engagement with the socially constructed meaning of races and their political derivations (Benthall & Haynes, 2019), existing race classification algorithms model race as a categorical label (Guo et al., 2016; Parkhi et al., 2015). An interesting direction for future work in this area would be to develop systems that are able to move beyond categorical racial labels.

Conclusion

We have investigated the racial biases embedded in today’s social media beauty filters, contextualizing our work from a historical perspective. We have applied race classification algorithms to over 3,000 images from the

We hope that our research will spur further interdisciplinary work to build a more inclusive, equitable and diverse social media environment.

Acknowledgements

The authors deeply thank all the colleagues who read this work and provided insightful feedback and suggestions, which helped us improve the quality of our research. They also thank Núria Camps for her substantial help in data set pre-processing.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: P.R., J.C., and N.O. are supported by a nominal grant received at the ELLIS Unit Alicante Foundation from the Regional Government of Valencia in Spain (Convenio Singular signed with Generalitat Valenciana, Conselleria de Innovación, Industria, Comercio y Turismo, Dirección General de Innovación). P.R. and J.C. are also supported by a grant from the Bank Sabadell Foundation. J.C. and N.O. are also supported by Intel Corporation.
