Abstract

This study analyzes how vaccine-opposed users on Instagram share anti-vaccine content despite facing growing moderation attempts by the platform. Through a thematic analysis of Instagram content (in-feed and ephemeral “stories”) of a sample of vaccine-opposed Instagram users, we explore the observable tactics deployed by vaccine-opposed users in their attempts to avoid content moderation and amplify anti-vaccination content. Tactics range from lexical variations to encode vaccine-related keywords, to the creative use of Instagram features and affordances. The emergence of such tactics exists as a type of “folk theorization”—the cultivation of non-professional knowledge of how visibility on the platform works. Findings highlight the complications of content moderation as a route to minimizing misinformation, the consequences of algorithmic opacity and knowledge-building within problematic online communities.

Vaccine-opposed activists¹ use social networking platforms to share anti-vaccine content and misinformation (Koltai et al., 2022; Yang et al., 2021). Although vaccine-related misinformation far predates the COVID-19 pandemic (Shelby & Ernst, 2003), the current global urgency surrounding combating vaccine misinformation is unprecedented. In response, large social media sites including Twitter, Facebook, and Instagram have been under increased pressure to moderate COVID-19 and vaccine-related misinformation, and to remove offending content and accounts from their digital spaces (Bickert, 2021). We define content moderation as monitoring and vetting user-generated content for social media platforms of all types, in order to ensure that the content complies with legal and regulatory exigencies, site/community guidelines, user agreements, and falls within norms of taste and acceptability for that site and its cultural context (Roberts, 2019).

Content moderation is often semi-automated (Gillespie, 2020), using a combination of human moderators and automated moderating systems to flag, delete, or interfere with spread or engagement based on whether this content aligns with community guidelines or not. This process has been a salient topic of study as content moderation is extremely complex on large platforms such as Instagram and the process is rife with discriminatory practices (Duguay et al., 2020; Gerrard & Thornham, 2020; Haimson et al., 2021).

A broader public focus on content moderation as the solution for the misinformation crisis has been divisive, leaving some to feel as though they are being censored unfairly (Frenkel, 2021), and others frustrated that platforms are not moderating effectively enough (Krishnan et al., 2021). Vaccine-opposed social media users have developed theories about how content moderation works, creating tactics to avoid moderation on platforms where their content violates platform policy and community guidelines (Virality Project, 2021). This article analyzes how vaccine-opposed users on Instagram share and spread anti-vaccine content, despite growing moderation attempts by the platform. Building on a body of work on “folk theorization” (DeVito et al., 2017) of social media algorithms, we explore the observable tactics that emerge out of folk theorization and discuss how vaccine-opposed influencers theorize platform rules, features and affordances in ways that allow them to avoid content moderation and amplify anti-vaccination content.

This article begins by synthesizing literature on social media use, focusing on the use of algorithmic folk theories as a way of understanding how users navigate social media spaces and find utility and visibility online. Studying the photo-sharing platform Instagram, we explore how users employ the technical features and affordances of social media to amplify their content, and the tensions of seeking amplification in light of increasing content moderation. We present the methods for this study which collated Instagram posts and ephemeral Instagram Story content from vaccine-opposed Instagram users using a snowball sampling approach. The findings section illuminates the strategies emergent from the dataset, cataloging the diversity of tactics Instagram users deploy to avoid moderation and amplify vaccine-opposed content. Finally, we discuss how this folk theorization of Instagram’s algorithmic moderation paves the way for the effective proliferation of vaccine misinformation and conclude with recommendations for future research further exploring the algorithmic knowledge of social media creators and its implications for information disorder, which generally refers to pollution of our information environment including misinformation and disinformation (Wardle & Derakhshan, 2017).

Folk Theorization of Social Media Use

The secrecy and technical complexity of social media sites has led to what Bishop (2019) calls “mystification”—an inability for users to gain a full or substantive understanding of the online spaces they inhabit. Users navigate this lack of knowledge by developing their own folk theories of how social media sites work (DeVito et al., 2018) and how to increase their visibility on these platforms (Karizat et al., 2021). This bridging of knowledge gaps constitutes an “algorithmic imaginary” (Bucher, 2017), or an arrangement of folk theories that determine how individuals engage with social media algorithms, often guided by exception moments “when an algorithmic recommendation is either a little bit too accurate or jarringly inaccurate” (Bishop, 2019, p. 2591). Folk theorization is both individualized and socially constructed—fathomed through experiences of sharing and consuming content and (un)met expectations that emerge out of these activities.

Emergent folk theories are not necessarily accurate depictions of how algorithms are actually designed and deployed but instead exist as heuristics that allow users to explain their personal experiences online. As DeVito (2021) highlights, folk theories “do not require full mechanistic detail or even dense mechanistic knowledge to be useful” (p. 2), nor must they be empirically correct to retain utility. Often algorithmic folk theories emerge from expectancy violation, for example, not getting as many “likes” on posted content as one expects to, or not seeing a friend’s content show up on your feed. They thus perform a range of functions beyond just explaining how platform features have been designed to work, including reifying community, structuring future use, and managing the affective dimensions of social media use. These outcomes do not necessarily hinge on how accurately a theory captures the existence and outcomes of particular algorithms, but on how useful the theory is in explaining and/or enhancing a user’s overall experience on the social media site.

Much of the academic exploration of folk theorization of algorithms and social media sites has focused on two elements: (1) the creation of algorithmic knowledge by a growing cohort of professional social media creators and (2) the cultivation of folk theories by marginalized communities about how to achieve visibility on social media. The former body of research looks at the professionalization of social media spaces, attending to novel cohorts of online labor such as influencers, YouTubers, and social media content creators (Arriagada & Ibáñez, 2020; Bishop, 2020). Research examines the growing realm of cultural production on social media spaces and how content creators “game” the algorithmic systems of social media sites and search engines to gain “algorithmic visibility” despite a lack of formal knowledge of the proprietary algorithms they encounter (Petre et al., 2019). YouTube content creators, for example, engage in “algorithmic gossip” to share their experiences and tactics to navigate visibility on the video platform (Bishop, 2019).

A parallel body of research looks at the algorithmic knowledge built by marginalized communities through their social media use. This research illuminates the consequences of algorithmic invisibility—how algorithmic systems, in this case social media content moderating systems, deny access to visibility and engagement to users with marginalized identities and how those impacted theorize the mechanisms and motivations around this invisibility (Bucher, 2012; Cotter, 2019). It is, however, important to note that visibility can lead to exposure to harm, therefore leaving vulnerable groups experiencing harm from both invisibility and hypervisibility (Dinar, 2021; Díaz & Hecht-Felella, 2021; Marshall, 2021; Siapera, 2022). Counterbalancing the empirically proven algorithmic oppression of historically marginalized identities on digital platforms (Noble, 2018), “algorithmic privilege” has emerged as a framework for understanding users who are “positioned to benefit from how an algorithm operates on the basis of identity” (Karizat et al., 2021, p. 3).

This is further complicated by users’ perception of their own marginalization. Recent research on removals shows evidence that the groups that experience the highest rates of removals on social media are transgender users, Black users, and politically conservative users (Haimson et al., 2021). This highlights a tension in which both historically marginalized groups and politically conservative users perceive themselves to be experiencing discrimination. Because content moderation is participatory, content that contains non-normative ideologies is often most likely to be flagged regardless of whether it violates community guidelines (Crawford & Gillespie, 2016; Marshall, 2021). However, politically conservative users’ content was found to violate community guidelines, often for hate speech and misinformation, particularly COVID-related misinformation. In contrast, transgender users’ and Black users’ content was often incorrectly removed. In the absence of platform transparency, the underpinnings and outcomes of how algorithms construct, privilege, and oppress identity are negotiated through folk theorization within and between communities based on their digital experiences on social media.

Navigating (In)visibility on Instagram

The range of technical features on social media sites offers up plentiful opportunities for users to engage in folk theorization. Users navigate how their social media feeds are organized—what content is (de)prioritized and how—the emergence of new features such as ephemeral content (including Instagram and Facebook “stories”) and how to connect with other users both within their established and new networks. This article focuses on one social media site—Instagram—and the folk theories that emerge around the algorithmic structure of the visual social media site. Instagram, like the text-based site Twitter, is not structured around formalized communities (Chancellor et al., 2016). Instead, communities form around individual accounts and public hashtags. Popularity on Instagram is highly assigned to “the algorithm” and an individual’s ability to curate their content in ways that play into what the algorithm determines to be worthy of visibility. Instagram has offered users insights into how their algorithms actually work, and in particular how users can boost the chances of their content being featured prominently on other users’ “explore” pages (Mosseri, 2021).

There thus exists a personification of “the algorithm” as a central agent within the folk theories surrounding Instagram, wherein audiences demonize, celebrate, and attempt to ingratiate themselves with the mysterious algorithm to enhance their desired outcomes on the social media site. Folk theorization exists as a necessary framework for understanding tried-and-tested methods that work, or definitively don’t work, to please the algorithm. Furthermore, perceived algorithmic dependency has led to a homogenization of the aesthetic of Instagram: a rise of a specific, highly curated, and predominantly feminized aesthetic that is theorized to be the most popular style for Instagram posts. This aesthetic has spread beyond mainstream social media topics to also take hold in more niche topic-based communities. For instance, mental health content on the platform is often seen to take on a familiar “Instagram aesthetic,” with mental health advice presented in pastel colors on short, pithy “explainer carousels” (Montell & Medina, 2021). While this may be characterized positively, such aesthetics have also taken hold in more controversial spaces. The phenomenon of “pastel QAnon” (Argentino, 2021), the spread of QAnon conspiracy theory content through aesthetically pleasing Instagram posts, typifies how the visual branding of toxic content and misinformation can afford content and users algorithmic visibility.

In response to this “gaming of the system” (Shepherd, 2020) social media sites are constantly altering algorithms and investing in content moderation (both human-led and algorithmic) to minimize the spread and elevation of problematic content (Allcott et al., 2019). Content moderation has taken a multitude of forms on social media sites, including algorithmic deprioritization (commonly known as “shadow banning”) and the banning and removal of certain content. Instagram has engaged publicly in content moderation on a range of controversial topics, for example, banning pro-eating disorder (pro-ED)-related hashtags (Chancellor et al., 2016), and limiting the visibility of QAnon and #SaveTheChildren related hashtags (Moran et al., 2021). However, little clarity exists from the platform itself over how content moderation decisions are made, leading to backlash from audiences. Accordingly, algorithmic folk theorization has emerged around content moderation on social media platforms, as users grapple with opacity from platforms over what content falls foul of community guidelines and the consequences for posting such content.

Speculating About Content Moderation (and How to Avoid it)

Cotter (2021) coined the term “black box gaslighting” to capture the asymmetry of power relations between users and platforms regarding algorithmic moderation. This asymmetry has fueled folk theorization, as users look to understand and communicate around platform decision-making and content moderation. For example, work by Gerrard (2018) highlights how pro-ED communities on Instagram evade moderation by devising new signals to identify themselves as pro-ED without using already moderated hashtags. Olszanowski’s (2014) research into Instagram’s moderation of nudity highlights further tactics of circumvention such as timed removal of posts and visually obscuring potentially controversial images.

Current research around content moderation has focused on marginalized groups for whom online moderation represents a further compounding of offline structures of marginalization and harm, notably the moderation of sex workers and adjacent labor (Are, 2021), fat bodies (Botella, 2019) and racial minorities (McCluskey, 2020). However, one of the backfire effects of content moderation has been the cultivation of martyrdom by afflicted accounts—for instance, popular anti-vaccine proponents have centered their banning from social media as evidence of “big tech censorship” and overreach (Garcia-Navarro, 2021). More work is necessary to understand folk theories of moderation (and circumvention) within communities that are producing problematic online content. Given the growing focus of social media sites on misinformation—particularly related to the COVID-19 pandemic and global elections—this article focuses on the folk theorization exhibited by problematic information sharers, specifically the anti-vaccination movement. In doing so, we explore how creators produce and disseminate anti-vaccine content and theorize routes to amplifying content and avoiding content moderation on Instagram.

The Continued Presence of Anti-Vaccination Content on Instagram

The emergence of the COVID-19 pandemic and the subsequent availability of COVID-19 vaccines have amplified anti-vaccine conversation and put social media platforms under pressure to address vaccine-related misinformation. In response, Meta (formerly Facebook) announced it would ban users of its sites (Facebook and Instagram) who routinely shared vaccine misinformation, in addition to taking down groups that used the platform to organize against vaccines (Heilweil, 2021). The efficacy of such moves is debatable. Journalists Ben Collins and Brandy Zadrozny highlighted the existence of numerous large Facebook groups that evaded the ban by changing their group names to euphemisms like “Dance Party” or “Dinner Party,” using coded language that allowed them to talk about vaccines without being picked up by automated moderation technologies (Collins & Zadrozny, 2021). Polarization around vaccine sentiment has led anti-vaccine advocates to cement their use of social media platforms, either by moving their communities to less prominent platforms like Telegram and Gab or by cultivating ban-evasion efforts that they hope will allow them to stay on larger sites like Facebook and Instagram. The anti-vaccine community is thus a ripe case study for exploring folk theorization around social media visibility and moderation. This article therefore explores the following research questions.

RQ1. What tactics do vaccine-opposed Instagram users create to avoid content moderation?

RQ2. What strategies do vaccine-opposed Instagram users create to amplify anti-vaccination content?

RQ3. How do vaccine-opposed Instagram users theorize algorithmic moderation attempts?

Method

Research relied on a qualitative observational approach that identified Instagram users who share anti-vaccine messaging and observed the tactics and theories around content moderation avoidance they exhibit. Prior to collecting data, researchers used a walkthrough method to understand how users share and engage with content on Instagram. A walkthrough method, or engaging with the platform intentionally as a user might through a defined step-by-step process, allows researchers to utilize the daily practices of the users within their community of interest to investigate a particular social or cultural phenomenon (Light et al., 2018). This highlighted the need to observe static in-feed posts as well as ephemeral “Instagram Stories” content, in addition to necessitating a snowball sampling method to capture how users discover new accounts within certain communities. Researchers created a new email address and Instagram account to collect data and used an iPad to access the Instagram app and a screen recording application to record the researcher’s interaction with the site. Data collection ran over the course of 14 days in late September and early October 2021.² Researchers accessed Instagram every 24 hours during the data collection. This interval was chosen because 24 hours is the length of time ephemeral content (“Instagram Stories”) remains accessible after a user uploads it. Two screen recordings were generated each day, one to collect the data within Instagram Stories and the other to collect the data within the newsfeed. Data collection ended after 14 days because the researchers collecting Instagram content agreed that a saturation point had been reached: they were seeing the same types of content, the same tactics for avoiding content moderation, and the same accounts being suggested repeatedly.

Snowball Sampling Procedure

To find Instagram users that share vaccine-opposed content, we followed a snowball sampling method. From the newly formed Instagram account, researchers followed all members of the “Disinformation Dozen” who had active Instagram accounts. The Disinformation Dozen are widely known vaccine-opposed individuals who routinely share anti-vaccination content (Center for Countering Digital Hate, 2021). This included public figures such as Dr Joseph Mercola, Ty and Charlene Bollinger, Sherri Tenpenny, and Rizza Islam. We also included other prominent anti-vaccine activists, determined by their coverage within media discussion on the anti-vaccination movement, such as Del Bigtree, Catie Clobes, Alec Zeck, and Polly Tommey. Our initial seed accounts totaled 13. Our first collection day captured content from these accounts and also snowballed to follow and capture content produced by accounts recommended or endorsed by the initial seed set. Endorsement was defined as the poster explicitly telling followers to follow a particular account. Following this snowballing method, by the final data collection day the research account followed 137 Instagram accounts, 100 of which posted either an Instagram story or in-feed post on the final day of data collection.
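The snowball procedure described above can be sketched as a breadth-first expansion from the seed accounts. This is an illustrative simplification under stated assumptions, not the study's actual tooling (data were collected manually through screen recordings): the `endorsements` mapping, the account names, and the idea that one expansion round corresponds to one collection day are all hypothetical.

```python
from collections import deque

def snowball_sample(seeds, endorsements, max_rounds):
    """Return accounts reached from `seeds` by following endorsements.

    `endorsements` is a hypothetical mapping from an account to the
    accounts it recommends or explicitly tells followers to follow.
    """
    followed = list(dict.fromkeys(seeds))  # keep order, drop duplicate seeds
    seen = set(followed)
    frontier = deque(followed)
    for _ in range(max_rounds):  # assumption: one round per collection day
        next_frontier = deque()
        while frontier:
            account = frontier.popleft()
            for endorsed in endorsements.get(account, []):
                if endorsed not in seen:  # follow each account only once
                    seen.add(endorsed)
                    followed.append(endorsed)
                    next_frontier.append(endorsed)
        frontier = next_frontier
    return followed

# Toy data with made-up account names.
toy_endorsements = {
    "seed_a": ["acct_1", "acct_2"],
    "seed_b": ["acct_2"],
    "acct_1": ["acct_3"],
}
sample = snowball_sample(["seed_a", "seed_b"], toy_endorsements, max_rounds=2)
```

In the study itself, any followed account could endorse new accounts on any collection day, so the frontier-only expansion here understates how the followed list could grow.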

Analytical Method

Using an inductive approach (Charmaz, 2014; Glaser & Strauss, 1967), a team of three researchers reviewed the recorded data for emergent tactics and content themes and captured relevant visual examples for secondary review. Each researcher analyzed a different day of content and kept memos of recurring visual and rhetorical tactics used by Instagram accounts in the sharing of their content and any explicit comments users made around understanding Instagram’s algorithm and/or content moderation. The research team then met to participate in a collaborative clustering activity (McClure Haughey et al., 2020) to cluster together related screenshots and distill emergent tactics and themes related to folk theorization.

Regarding the ethical challenges that arise from using social media data (Crawford & boyd, 2012)—collected without the direct consent of users—we anonymize the account names and other identifiable features of the content presented within this study when the account in question has a small following and the user is not a public figure. Data collected from highly visible public accounts, that is, those that (1) have over 10,000 followers, (2) are verified users by Instagram, or (3) are owned by known public figures with an existing media presence, are not anonymized.
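The anonymization rule above amounts to a simple decision: an account is left identifiable only if it meets at least one of the three visibility criteria. A minimal sketch follows, assuming a hypothetical `Account` record whose field names are ours rather than the study's.

```python
from dataclasses import dataclass

FOLLOWER_THRESHOLD = 10_000  # criterion (1): "over 10,000 followers"

@dataclass
class Account:
    # Hypothetical record; field names are illustrative assumptions.
    handle: str
    followers: int
    verified: bool = False       # criterion (2): verified by Instagram
    public_figure: bool = False  # criterion (3): known public figure

def should_anonymize(account: Account) -> bool:
    """Anonymize unless the account meets any of the three criteria."""
    highly_visible = (
        account.followers > FOLLOWER_THRESHOLD
        or account.verified
        or account.public_figure
    )
    return not highly_visible
```

Note that the threshold is strict: an account with exactly 10,000 followers that is neither verified nor a public figure would still be anonymized under this reading.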

Findings

Due to the snowball sampling, the length of time data collection took rose dramatically from the first to the last day. The length of recording time similarly reflects the sheer amount of content (everyday Instagram content, vaccine opposition, and vaccine misinformation) produced by vaccine-opposed Instagram users. Moreover, it highlights how Instagram users can spend significant amounts of time consuming vaccine-opposed messaging on the site (see Table 1). The data also illuminate an asymmetry between the quantity of content users published on the static Instagram feed and on the more heavily used Instagram stories.

Table 1. Quantitative Measures of Instagram Posts and Stories Made by Accounts Followed Using the Snowball Sampling Method Outlined.

The following section highlights content moderation avoidance tactics that emerged from the observational data.

RQ1: What Tactics Do Vaccine-Opposed Instagram Users Create to Avoid Content Moderation?

Users developed visual tactics that they believe minimize their propensity to be moderated by Instagram. Three primary visual tactics emerged—(1) blocking out/covering up COVID-19 and vaccine-related keywords on text content, (2) blurring or covering their faces and identifying features, and (3) purposefully switching back and forth between vaccine-related content and “normal” content (e.g., nature and food photos). These tactics were presented as a route to avoiding automated content moderation, suggesting through their continued use, that visually blocking potentially triggering content or “hiding” it among Instagram-approved content would allow it to remain on the platform.

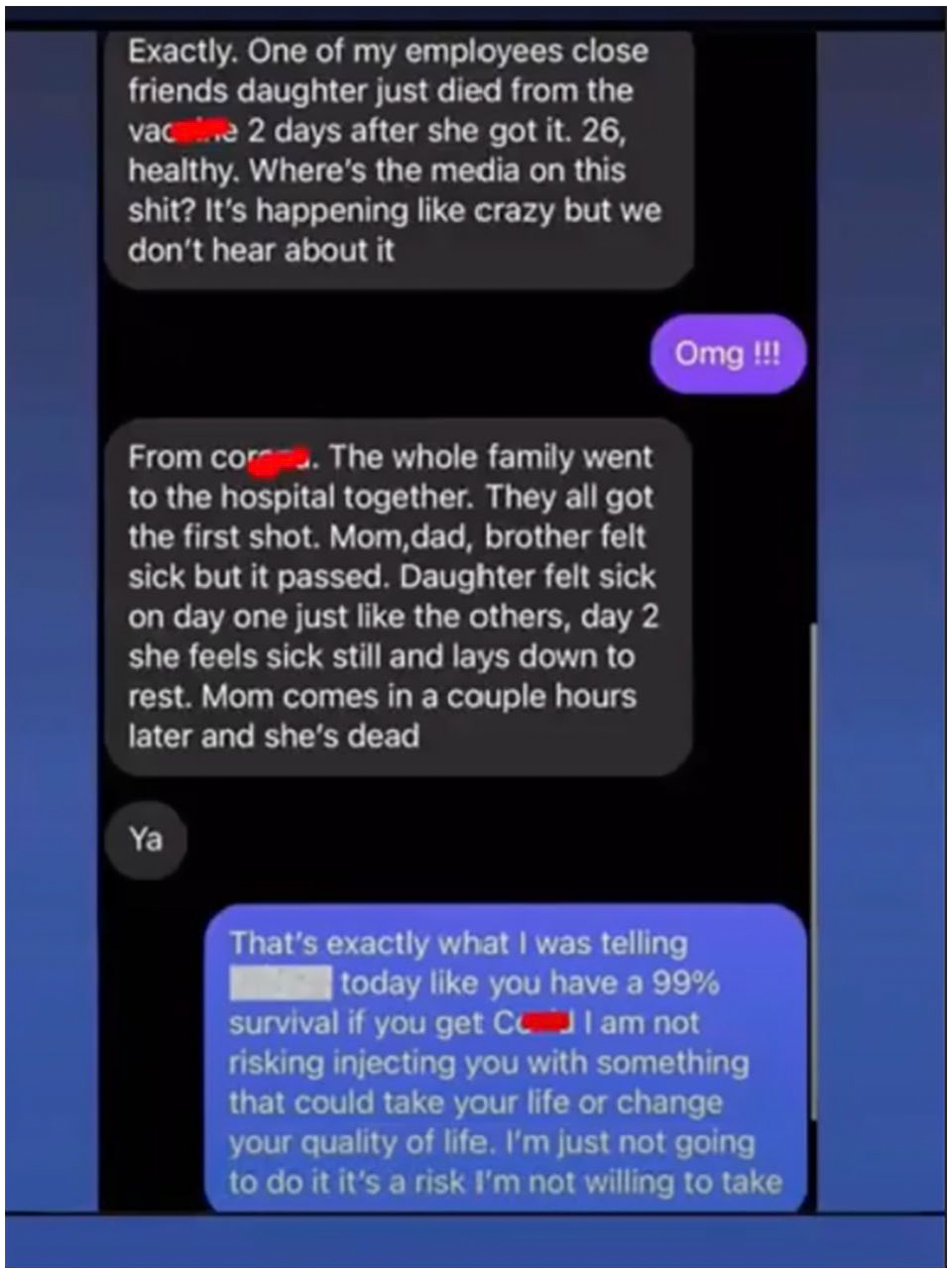

The most popular visual tactic was the blocking out of vaccine-related keywords (see Figure 1). This was usually done fairly crudely, with creators using the “paint” feature of Instagram stories to draw lines over potentially triggering keywords. While this practice was widespread across the data sample, its application was far from uniform. There was a lack of consistency among users regarding when they chose to block out vaccine-related words and when they left them visible, and a lack of consensus across the sample data over what words should be covered. In the main, users chose to cover up the word “vaccine” with some consistency but differed over whether to cover up “Covid-19,” “shot,” and other related keywords (Figure 1).

Figure 1. An Instagram user blocks out the words “vaccine” and “Covid” with crudely applied red lines.

A less widely used visual tactic emerged that involved users covering their faces and obscuring their profile pictures to mask their identity. Alec Zeck, for example, appeared in numerous livestreams and Instagram stories covering half their face with a piece of paper and even putting a red “X” through their profile picture, claiming that they were being censored and thus needed to mask their identity to overcome this censoring. A more widespread visual tactic, particularly used by vaccine-opposed users who built their platforms on a broader range of topics (e.g., health and wellness influencers), was to switch between vaccine and “normal” content. Users oscillated between posting about vaccine and vaccine-mandate-related information and posting inoffensive pictures of sunsets, their family and pets, and the foods they ate. This visual tactic emerged in response to Instagram limiting accounts that had been flagged by others for posting misinformation—users pointedly suggested that bulking out their Instagram stories with normal content that could not be flagged to Instagram helped improve the overall reputation of their account in the eyes of the platform’s content moderation algorithms and allowed them to post more vaccine-related information without negative consequences.

Lexical Variation and Coded Language

Lexical variation has become a widely documented tactic (Collins & Zadrozny, 2021) to avoid content moderation, emerging out of folk theorization that moderation focuses on keywords, and that obfuscating these keywords is thus effective at circumventing “the algorithm.” This appeared in the form of avoiding particular words, misspelling them, or substituting portions of words and/or phrases with emojis or symbols. Users often avoided using the word “vaccine,” instead replacing it with “Maxine,” “poked,” a “v-vaccine” emoji, and a unicorn emoji, among others. New iterations and types of lexical variations emerged over the 2-week period of our data collection, highlighting the flexible nature of folk theorization as users engaged in a cat-and-mouse type dynamic with “the algorithm” to avoid moderation. The avoidance of using the word “vaccine” even occurred in video content where a user would pause mid-sentence to imply the word vaccine without explicitly saying it.
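The folk theory at work here, that moderation depends on exact keywords, can be illustrated with a toy filter. This sketch is purely hypothetical: the keyword list and matching logic are assumptions made for illustration and say nothing about how Instagram's moderation systems actually operate.

```python
# Hypothetical keyword list; not Instagram's actual moderation vocabulary.
BLOCKLIST = ("vaccine", "covid")

def naive_keyword_flag(text: str) -> bool:
    """Flag text if it contains any blocklisted keyword (case-insensitive)."""
    lowered = text.lower()
    return any(keyword in lowered for keyword in BLOCKLIST)

examples = [
    "New vaccine mandate announced",
    "New Maxine mandate announced",
    "Got poked yesterday",
]
flags = [naive_keyword_flag(text) for text in examples]
```

Under this toy model only the first example is flagged, which matches the logic users appear to attribute to “the algorithm.” A filter of this kind would need constant updating to keep pace with new substitutions, consistent with the cat-and-mouse dynamic described above.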

Leveraging Instagram’s Technical Features

Another emergent tactic was to make use of the variety of technical features available in Instagram stories to achieve amplification or at least avoid content moderation. We consider the use of Instagram stories, a way of sharing content temporarily, to be a tactic in itself, as the “temporary” nature of the content appeared more attractive than permanent in-feed posts. The reliance on ephemeral tools suggests folk theorization exists around the comparative propensity for ephemeral content to fall foul of algorithmic moderation. For example, one user in our data set shared one static post of herself doing yoga on the beach to her newsfeed while posting over 20 posts to her stories that included both “lifestyle” content and vaccine-opposed messaging. Within Instagram stories, several features were heavily used by vaccine-opposed accounts. These include features to share links outside of the platform, overlaid text, images and illustrated “stickers,” and the ability to repost Instagram posts from other accounts. Notably, Instagram allows out-of-platform links to be inserted into stories but not grid posts. This allowed creators to link to their content on other platforms that may be less heavily moderated, such as Telegram, YouTube, personal websites, and podcasts. Further tactics developed within the use of these additional features; for example, overlaid images and text were often rotated upside down to try to make moderation more difficult.

As the pandemic persisted, Instagram created a digital “sticker” containing the phrase “Let’s Get Vaccinated” that users could add on top of their Instagram stories. If users added this sticker, their story was collated with other users who similarly used the sticker, and this collated “story” was prioritized by Instagram on a user’s story feed. The first “story” on their Instagram feed would then be a collection of their friends’ stories all using the “let’s get vaccinated” sticker, with the story outlines highlighted in a different color with a heart emblem attached (see Figure 2). Within the dataset, users would often use this sticker to reap the benefits of prioritization, but then fill the page with vaccine-opposed content and vaccine-related misinformation in a similar manner to hashtag hijacking. Some would partially cover the sticker with text so it would say a different message, (e.g., “let’s get educated”). In addition to gaming algorithmic prioritization in this way, users theorized that utilizing Instagram’s pro-vaccine sticker would minimize the chance of their content being moderated.

Figure 2. A thumbnail of an Instagram user’s profile picture highlighted purple with a heart logo, indicating that their stories contain vaccine-related information.

Instagram also allows users to post multiple photos in one in-feed post, known as a “carousel.” Vaccine-opposed accounts used this feature heavily in a specific manner: the first photo, which is displayed when scrolling through the newsfeed or accessing an individual account’s profile, consisted of non-offensive content (for example, sunsets, yoga, or food pictures), with anti-vaccine content embedded in the subsequent photos in the carousel. In a similar vein of “hiding” anti-vaccination content among “normal” content, vaccine-opposed users also utilized Instagram’s video features, in particular “Lives” (live-streamed video) and short videos known as “reels,” to embed vaccine-opposed messaging, suggesting that video content is assumed to be a less heavily moderated form, or that videos allowed vaccine-opposed users to mask banned content more effectively. For example, a video posted to one user’s grid was labeled “Let’s Roast Together” and featured the user discussing health while cooking. Twenty-three minutes into the 42-min-long video, the user discusses their opposition to vaccine mandates and recommends that their audience listen to their podcast on Spotify for more concrete details.

Finally, users would often use Instagram’s “link in bio” feature to direct users to anti-vaccine content off-platform through a URL embedded in their biography. Users would then simply allude to vaccine opposition, avoiding contravening Instagram’s Community Guidelines, and direct followers to commonly utilized link collation sites such as LinkTree where they house external links to anti-vaccine content. Such a tactic suggests that users believe that the “link in bio” feature is not moderated by Instagram.

RQ2: What Strategies do Vaccine-Opposed Instagram Users Create to Amplify Anti-Vaccination Content?

RQ2 questions how anti-vaccine advocates use Instagram to amplify vaccine-opposed content despite it contravening the platform’s rules and guidelines. Content spread requires a two-pronged approach—first, the avoidance of content moderation, so that content (and user accounts) remains active and visible on the platform and, second, specific strategies to bring in new audiences either through building follower lists or by getting content reshared by other users, that is, amplifying content reach. However, the data collection highlighted an immense difficulty in separating amplification from moderation avoidance, suggesting that Instagram users see them as entwined goals. The emergent tactics explored in the above section highlight the range of strategies theorized to achieve either avoidance of moderation or amplification (or a combination of both). This research is limited by its observational method as users rarely explain precisely why they are engaging in a certain tactic, or what they believe a certain strategy will do. Accordingly, we do not know the exact reasonings behind each tactic, particularly whether it is designed to circumvent moderation or amplify. However, we can hypothesize these motivations through an analysis of the continued and varied use of each strategy, combined with other emergent narratives around algorithmic visibility on the platform.

The diversity of tactics and inconsistencies in their application by users affords insights into theories of how Instagram works and how to “game the system” to ensure visibility. In particular, the inconsistent application of visual blocking and lexical variation of COVID-19-related keywords highlights uncertainty within the community as to how the Instagram algorithm interacts with potentially triggering content such as COVID-19 information. For users who consistently practice these strategies, the algorithm is depicted as dependent on keywords for content moderation; hiding these words therefore allows users to share information undetected. Inconsistent application by users can be explained through its combination with other Instagram folk theories. For example, as further explored below, Instagram users often posted stories lamenting that their content had been reported by other users, or that Instagram flagged their captions as similar to those that had been reported in the past. Users are thus very aware that content moderation happens in a multitude of ways, including through human-led flagging processes, which undermines the effectiveness of visual blocking and lexical variation strategies. Despite this awareness, users continue to practice blocking and coding strategies, and often accompany them with other rhetorical strategies aimed at highlighting their perceived censorship on the platform. Moreover, they capitalize on such perceptions by using the features of Instagram that are designed for community building to amplify censorship complaints and draw further attention to their broader anti-vaccination content. For example, users tagged fellow “censored” accounts in Instagram stories, allowing the tagged users to repost their content to novel audiences. Furthermore, posters used the comment features of Instagram posts to ask followers to tag other friends to see their content to “counter” the censorship they were experiencing.
In this way, vaccine-opposed content creators capitalize on content moderation against them, turning it into an amplification strategy. They theorize that perceptions of marginalization on Instagram can actually serve to enhance their credibility and visibility as others reshare their content to “break the algorithm” and overcome Instagram’s censorship of fellow creators. Accordingly, amplification is achieved through a combination of strategies designed to circumvent moderation, draw attention to moderation attempts as unethical, and game the algorithmic systems that enhance content visibility. The perceived success of this amplification relies upon the broader audience sharing the same theorization of why certain content is moderated, and agreeing on the normativity of such activities, as further explored by RQ3.

RQ3: How Do Vaccine-Opposed Instagram Users Theorize Algorithmic Moderation Attempts?

The flexibility and adaptability of folk theories of content moderation allow for the strategies developed and deployed by vaccine-opposed content creators to serve multiple purposes including circumventing automated moderation, amplifying content, and enhancing credibility. The findings in RQ1 and RQ2 highlight folk theorization around how to achieve certain goals, that is, theorized strategies designed to amplify content without triggering automated or human-led moderation. However, there also exists theorization around the why of moderation that questions the motivations platforms have for pursuing certain content or communities for moderation.

As such, folk theorization happened around why users believed they were being subject to content moderation, specifically tying a growing rhetorical narrative of marginalization used by the anti-vaccination movement (see McCarthy, 2022) to “censorship” by technology companies like Instagram. Users argued that the moderation of their content and limitations placed upon their accounts such as limiting their ability to go live, caps on the number of times they could post within a day, and algorithmic de-prioritization of their content on other people’s feeds constituted “censorship” and was evidence of marginalization. This was tied to other rhetorical strategies from the broader anti-vaccination movement including narratives that vaccine mandates were akin to Nazi Germany, or transphobic arguments mocking trans identities by claiming to “identify as vaccinated.” The resulting folk theorization thus claimed that vaccine-opposed users are part of a marginalized group being explicitly targeted by Instagram’s content moderation systems. Users provided “evidence” of this marginalization by sharing screenshots of warning messages from Instagram and posting stories that their viewing numbers were down and thus they must be “shadow banned.” A common practice also emerged of maintaining several backup accounts that users routinely directed their followers to “just in case” their main accounts were taken down. Many users also posted in their profiles that they were “axed” at a particular user number (see Figure 3).

Figure 3. Numerous examples of claimed “censorship” from anti-vaccination accounts and proposed strategies to mitigate moderation.

As noted above, the flexibility and adaptability of folk theories of content moderation allow the strategies developed and deployed by vaccine-opposed content creators to serve multiple purposes, including circumventing automated moderation, amplifying content, and enhancing credibility. The differential application of these strategies represents a richness of this theorization, cementing previous elaborations of folk theories as a route to effective platform engagement and highlighting how theorization differs even within a community.

Discussion

Our analysis highlights the development of a variety of tactics thought to allow users to post and spread vaccine-opposed content and related misinformation without falling foul of Instagram’s content moderation practices. The emergence of such tactics exists as a type of folk theorization on the platform, that is, the cultivation of non-professional knowledge of how visibility on the platform is designed to work. Given the opaque nature of Instagram, this folk theorization serves to make the platform useful for vaccine-opposed users, especially given increasing attempts by the platform to curtail vaccine misinformation.

Circumvention and the Downstream Effects of Moderation

While the tactics documented by this observational study emerge from a specific community, they are not unique to this particular online conversation but are instead the very same tactics utilized by marginalized groups that disproportionately experience discriminatory content moderation. It is therefore important to resist a normative framing of folk theorization, as the theories and tactics that emerge out of folk theories of social media use are not necessarily harmful or productive in and of themselves. Instead, they lead to the amplification or spread of content that, in this case (anti-vaccine misinformation and vaccine opposition), has the potential to cause harm.

Past research highlights how marginalized and underrepresented groups are disproportionately moderated on mainstream social media platforms, and Instagram specifically, for talking about topics that are deemed controversial including sex education, racism, White supremacy, colonialism, gender and sexuality and LGBTQ+ issues more broadly (Are, 2021). In response, users wanting to engage in a spectrum of “controversial” conversations have theorized routes to communicating and tactics to achieve visibility and avoid moderation on Instagram (Moss, 2021; Stokel-Walker, 2022).

The speed, creativity, and flexibility of folk theorization around social media match that of algorithmic change, meaning that additional content moderation measures spur new theories and tactics for circumvention. Furthermore, the engagement economy structure of Instagram and other social media platforms unfortunately pairs well with the needs of misinformation amplification. The features and affordances that the highlighted folk theories rely upon to circumvent moderation and achieve amplification were originally designed to benefit the Instagram creators that make the site a financial success. Any feature created to help users amplify their content, such as stickers, links to other platforms, or the ability for multiple creators to go live together, can be, and is (as documented in this study), used to amplify and spread vaccine-related misinformation within the networks of anti-vaccine advocates. This highlights the potential misalignment between the engagement-led profit structure of social media and routes to minimizing the spread and impact of misinformation online.

Folk Theories Around Moderation

The theorization emergent from the analysis highlights how vaccine-opposed Instagram users cultivate theories around why they are (allegedly) being targeted for moderation, how this moderation occurs and strategies to avoid it. This extends knowledge around folk theories of social media by (1) focusing on mechanisms of content removal in addition to routes to visibility and (2) expanding theorization to include problematic and extreme communities on social media.

The research findings cement notions of flexibility and utility central to folk theorization. Researchers were able to quickly amass a growing sample of vaccine-opposed Instagram users who actively share anti-vaccination messaging and misinformation with little to no effective moderation, highlighting inefficiencies in Instagram’s moderation strategies and the usefulness of folk theorization for the vaccine-opposed. Their continued presence on Instagram and their ability to share guideline-violating posts can be used as evidence within the community that moderation-avoidance strategies are effective, regardless of whether the strategy rests on a correct analysis of Instagram’s algorithms or motivations. Indeed, the inconsistent application of circumvention strategies, and the sheer diversity and rapid changing of tactics, point toward a mismatch between the mechanistic theorization behind each tactic and the reality of Instagram’s moderation approach. For example, if users were correct that Instagram was moderating all vaccine-related keywords and that blocking the keywords disrupted this process, then all content related to vaccination (pro- or anti-) that left keywords uncovered would be impacted. Instead, anti-vaccination messaging that did not cover up keywords was consistently, and successfully, shared within the dataset.

Accordingly, folk theorization within vaccine-opposed communities produced tactics that achieved the desires of the community, that is, message amplification, without resting on complete or even correct mechanistic knowledge. Instead, folk theorization attended not only to the technical underpinnings of visibility but also to the cultural ones, through the weaponization of marginalization and “censorship” as a route to achieving message credibility. The tactics developed were effective not necessarily because they helped users avoid moderation but because visible engagement with tactics to avoid moderation cemented the narrative that vaccine-opposed users were being unfairly targeted by Instagram.

The literature around folk theories has, in the main, focused on communities that experience marginalization both offline and online. Folk theories thus attend to both a lack of technical knowledge and a community’s need to remain connected within discriminatory or unfair societal structures. The findings from this research, however, extend the boundaries of folk theorization by applying it to communities that contentiously claim marginalization and whose content specifically violates content moderation policies of social media platforms because it is deemed to be socially problematic. There is a distinct difference between structurally marginalized content creators (e.g., queer, disabled), whose presence on a platform does not contradict community guidelines, and misinformation-embedded communities, whose presence contradicts the rules of the platform. Accordingly, the algorithmic understanding of vaccine-opposed communities is framed around hostility to Instagram and a belief that algorithmic moderation is specifically designed to find and attack their accounts and content.

Conclusion

This research highlights that despite visible attempts at content moderation and changes to policy, anti-vaccination messaging is still prevalent on Instagram. Vaccine-opposed Instagram users have developed a diverse range of tactics they believe allow them to share anti-vaccination content and misinformation without it being taken down through content moderation. Vaccine-opposed users use folk theorization to understand their experiences within an increasingly hostile digital communications environment, theorizing the motivations behind algorithmic content moderation, the methods it uses, and tactics to avoid it. The utility of these theories lies not in their technical explanation of the mechanisms behind Instagram but as a route to understanding how to effectively communicate and “game” the system as it currently exists. Accordingly, overcoming the spread of misinformation by these users is complicated. The flexibility of folk theories allows users to constantly evolve circumvention tactics and even weaponize failure (i.e., characterize the moderation of their content as “censorship”) to achieve visibility and credibility. In addition to amplifying the current backfire effects of content moderation, further moderation attempts may also reinforce the algorithmic oppression already experienced by historically marginalized groups online.

While the tactics observed within this study are analytically rich, observational methods are subjective in nature. Consequently, future research to bolster these findings should expand methodologically. For example, computational text analysis could be used to confirm findings around lexical variation used in video and live content. Further survey and interview methods would be useful for disentangling the concrete underpinnings of circumvention strategies to better understand why vaccine-opposed users believe they work and differences within the community over their use or perceived value. Findings also highlighted the use of live streaming as a moderation evasion technique; future research should concentrate analysis specifically on Instagram’s (and other platforms’) live streaming features, and how users perceive the platform’s ability to moderate live content. Furthermore, while this research highlights the necessity of extending conversations of algorithmic knowledge to problematic communities, future work should look to evaluate comparatively the folk theorization undertaken within different communities. Given the importance of perceptions of marginalization and the utility of arguments of “censorship” that support the folk theories of vaccine-opposed communities, future research should consider the differences in strategies and outcomes of online groups that do experience marginalization compared with those whose content explicitly contravenes platform rules.

In sum, this article highlights how, in the absence of concrete knowledge about the algorithmic content moderation strategies of social media sites like Instagram, users develop folk theories that allow them to navigate visibility and achieve their desired outcomes online. As a result, problematic communities, like those sharing anti-vaccination messaging, cultivate tactics to share and amplify vaccine-opposed messaging despite active moderation attempts.

Acknowledgements

This work was also made possible thanks to support from the Center for an Informed Public at UW, the John S. and James L. Knight Foundation, and Microsoft’s “Defending Democracy” program.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This material is based upon work supported by the National Science Foundation Graduate Research Fellowship Program under Grant No. DGE-1762114. Any opinions, findings, and conclusions or recommendations expressed in this material are those of the author(s) and do not necessarily reflect the views of the National Science Foundation.