Abstract

In the social media marketplace of ideas during the 2020 coronavirus pandemic, epidemiologists and other scientific and medical experts competed for attention with news media, government agencies, politicians, celebrities, and rank conspiracy theorists. However, not everyone with a Twitter account was equally qualified to speak knowledgeably about critical issues related to the outbreak, such as prevention and treatment, and accurate information from informed sources can mean the difference between life and death. Our exploratory study addresses a simple but important question: whose messages about the efficacy of hydroxychloroquine as a treatment for coronavirus were getting the most attention on Twitter? We provide a data visualization of Twitter activity for the period of 21 January through 21 May 2020 that shows users who tweeted about hydroxychloroquine, as well as who interacted with each of them (through likes, comments, retweets, etc.), to determine who were the most prominent voices on the network during a critical juncture of the outbreak. From our analysis, it appears that President Donald Trump’s handle (@realDonaldTrump) and other pro-Trump accounts were the most influential voices on Twitter during this time of the crisis, rather than those of relevant experts, such as the Centers for Disease Control and Prevention (@CDCgov) or the National Institute of Allergy and Infectious Diseases (@NIAIDnews).

In times of crisis, such as the coronavirus pandemic, accurate, reliable scientific information from qualified experts is crucial to fully understanding and effectively managing the threat. The public needs whole truths from the appropriate experts (as awful, inconvenient, and disheartening as those truths may be), put simply and in layman’s terms. Baseless theories, misinformation, or simply manipulating the truth for political gain can be deadly. For instance, an Arizona man died after attempting to self-medicate against the coronavirus by taking chloroquine phosphate after his wife (who also took the substance and was hospitalized in critical condition) heard then US President Donald Trump tout chloroquine as a possible remedy for COVID-19 (see Waldrup et al., 2020). President Trump had also promoted hydroxychloroquine (which is closely related to chloroquine) in a 21 March tweet as potentially useful in fighting the virus. By the time of this writing, that tweet had received over 387,000 likes and over 103,000 retweets. 1 However, actual medical research on hydroxychloroquine as a potential treatment for coronavirus was only in its very early stages, and claims about the drug’s effectiveness remain unproven. Moreover, that tweet was posted during the first wave of the virus’s spread in the United States, when information (and misinformation) was arguably most critical.

Who was leading the conversation in this broader marketplace of ideas where news media compete against a wider range of voices, including scientific experts, government agencies, politicians, celebrities, and other members of the public with a Twitter account? The answer is more vital than it might appear at first glance. As McAweeney (2020) noted, we know the ultimate costs of inaccurate medical information during a pandemic when “people die from misleading hope about a fake COVID-19 cure,” such as the usefulness of hydroxychloroquine as a safe and effective treatment. So, exactly whose voices were being heard about hydroxychloroquine on Twitter during the coronavirus outbreak? The data visualizations we present in this exploratory research may help answer that question by demonstrating the most dominant voices on Twitter and providing further insight into the lifespan of a single piece of misinformation during the first wave of a global pandemic.

In the marketplace of ideas that is social media, epidemiologists and other scientific and medical experts compete for attention with news media, government agencies, politicians, and rank conspiracy theorists. One of the more enduring and factually unfounded claims made by QAnon, and perpetuated on social media by celebrities such as Woody Harrelson and John Cusack, is that 5G wireless networks cause coronavirus (see Brown, 2020; Kaur, 2020). Incidentally, it is wireless networks that many Americans now depend on more than ever for communication, information, work, and education while following stay-at-home orders during the pandemic.

Another, related piece of misinformation that began to spread was that African Americans were somehow immune to COVID-19 (see Kertscher, 2020; Palma, 2020). At the same time, ProPublica reported that Blacks in the United States were contracting COVID-19 and dying from the virus at a higher rate than other racial groups within the same demographic areas (A. Johnson & Buford, 2020). Much of the misinformation about the coronavirus centered on hydroxychloroquine, a drug used to prevent malaria and to treat arthritis and lupus. In response to the misinformation, state governments began to stockpile the medication as individual consumers began seeking prescriptions for the drug (E. Cohen & Bruer, 2020). This had a dire effect on Latinx and African American communities, as both groups have a higher prevalence of lupus and worse effects from arthritis than White Americans (Feldman et al., 2013; Greenberg et al., 2013). Many members of these communities were unable to fill their prescriptions (H. Cohen, 2020; Koziatek, 2020; Sisson, 2020), which led to heightened stress and anxiety, as well as worse medical outcomes.

On 11 March, when many were just starting to realize the seriousness of the coronavirus outbreak, Alex Jones’ Infowars broadcast furthered another specious claim: that COVID-19 is an American-made biological weapon (T. Johnson, 2020). Jones later promoted a brand of toothpaste and other products, which he claimed (without proof) prevented or cured coronavirus (Porter, 2020). Although Jones has been banned from prominent social media outlets, including Facebook, Twitter, and YouTube, personalities associated with his website and others regularly post Jones’ Infowars content on their own social media accounts, thus circumventing the ban (Berr, 2019). Moreover, his Infowars website regularly attracts more viewers than mainstream news outlets, such as the Economist, Newsweek, and even the conservative journal National Review (Beauchamp, 2016). It is amid this kind of media maelstrom that Dr Anthony Fauci, Director of the National Institute of Allergy and Infectious Diseases, as well as other medical experts and epidemiologists, had to shout into the wind of patently false assertions perpetuated by fringe conspiracy theorists, celebrities, and President Trump.

The first time President Trump promoted the use of antimalarial drugs (chloroquine and hydroxychloroquine) as a treatment for coronavirus was during a press briefing on 19 March 2020. By that evening, according to a New York Times analysis of prescription data, first-time orders for the drug came into retail pharmacies at more than 46 times the rate of the average weekday (see Gabler & Keller, 2020, pp. 1, 6). Clearly, President Trump’s suggestion about the efficacy of chloroquine and hydroxychloroquine was taken as credible in spite of medical experts’ warnings about the dangerous side effects associated with these drugs. It should be noted, however, that more than 40,000 health care practitioners (including dermatologists, podiatrists, and psychiatrists) prescribed these drugs for the first time after President Trump’s statement (see Gabler & Keller, 2020, pp. 1, 6). Despite mischaracterizing limited pieces of scientific information, President Trump was seen as a credible source about the uses and effects of prescription drugs.

Meanwhile, Fox News Channel and its primetime opinion program hosts, such as Sean Hannity, often asserted during the early weeks of the coronavirus outbreak in the United States that the media and Democrats were blowing the threat posed by the virus out of proportion, and characterized it as a “hoax.” These kinds of routine dismissals and mischaracterizations by Fox News prompted an open letter to Fox Corporation Chairman Rupert Murdoch and CEO Lachlan Murdoch, written by Columbia School of Journalism professor Todd Gitlin and signed by over 200 journalists and journalism professors (including one of the authors of this study), reprimanding the network for violating the “elementary cannons of journalism” and urging that its future coverage of the pandemic be “based on scientific facts” (see Gitlin et al., 2020). A poll conducted by The Economist/YouGov (2020) showed that Fox News viewers were less likely than others to be worried about the coronavirus outbreak, and a Pew Research study (see Jurkowitz & Mitchell, 2020) found that 79% of Fox News’ audience surveyed believed that risks posed by the virus were being exaggerated in the media. Fox News is now facing an array of potential lawsuits based on its misleading coverage of the pandemic (see Ecarma, 2020). While The Economist/YouGov and Pew Research studies are a good start toward understanding the effect that cable news outlets have on public understanding of the risks associated with the coronavirus outbreak, the data visualizations presented here are designed to further our understanding of the prevalence of different voices within the expanse of social media (particularly Twitter), as well as to contextualize some of the interrelationships between Twitter, politicians, experts, and news media.

Ciphering Medical Misinformation on Twitter: Expertise, Politics, and Bullshit

When ciphering claims about COVID-19 on Twitter, it is easy to sort the sources of those claims into categories of expert organizations, politicians, news media, celebrities, and others. However, it is less clear what the cacophony of tweets from this wide array of sources collectively means. How are we to understand epistemic authority and decipher truth and falsity amid the barrage of discordant claims about medical information on Twitter?

This is a particularly frustrating question when placed in the context of social media platforms because, as Nielsen (2015) has argued, most of what is posted on social media is (epistemically speaking) “bullshit,” in that these are statements offered without any real regard for truth or falsity. Furthermore, Nielsen (2015) suggested that “bullshit” is especially common on social media for two reasons: people using social media platforms are often insufficiently informed about whatever it is that they are tweeting about, or they are making claims that are not based upon agreed forms of justification.

Nielsen’s argument about truth claims on social media presents an interesting suggestion when applied to our study of which voices were leading the conversation about COVID-19 on Twitter. If expert sources of information are equally weighted with all others on Twitter in terms of credibility, or all information, no matter the source, is taken as “bullshit” (using Nielsen’s vernacular), then it stands to reason that the most prominent voices (based on volume of tweets, retweets, likes, etc.) are the de facto leading voices. Thereby, false and misleading claims about coronaviruses on Twitter become all the more epistemologically problematic whether they are equally weighted with credible ones from expert sources, or expert knowledge is equally regarded as “bullshit” along with all of the hack conspiracy theories. As Nielsen (2015) stated:

This raises the usual questions about the relationship between power and knowledge, about the relationship between folk theories, professional forms of knowledge, and various kinds of science, as well as the interests involved and who bears the consequences of acting upon or espousing bullshit. (p. 2)

In the history of US jurisprudence, the “marketplace of ideas” metaphor is often invoked as a way to address truth and falsity within public expression. However, within the social media marketplace of ideas, the epistemological struggle between influence and information has become more troubling, as demonstrated by Russia’s placement of fake stories on social media during the US presidential election in 2016:

In the realm of social media it seems that the nature of fake news has vexed the very notion of truth itself and the validity of certain forms of knowledge over others. While institutions of journalism employ empiricism in the reporting of information and the presentation of facts, fake news comes in a barrage of memes and tweets and provides a form of discourse in which no perspective is any more or less credible than any other. In post-truth everything is relative; there is no right or wrong, fact or falsity, truth or lie. In which case, we might reasonably ask if the marketplace of ideas metaphor has outlived its usefulness? (Blevins, 2019)

Without a self-regulating marketplace of ideas, expert knowledge is put at a disadvantage.

The National Institute of Health (2020) released an online set of resources to help scientists and members of the medical community digest peer-reviewed studies related to COVID-19. While the tool is freely available online, the studies it provides access to are not likely to be easily understood by lay members of the public, who are not versed in scientific methods and are thus more likely to be susceptible to false and misleading information related to COVID-19.

At the same time, online social networks tend to be prime venues where people not only obtain background information but also (and, perhaps, more significantly) form emotional attitudes about public health issues (see Liang et al., 2019). In their study of information spreading patterns on Twitter during the 2014–2016 Ebola outbreak, Liang et al. (2019) found that Twitter influencers were more successful at broadcasting information and getting retweets of their posts, and thus were the most prominent figures in the social media network; they suggested that these influencers could be useful in disseminating public health information. However, what if those influencers are purveyors of misinformation, or are simply misinformed?

Twitter (2020) released a statement claiming that it was monitoring more than 1.5 million accounts, “which were targeting discussions around COVID-19 with spammy [sic] or manipulative behaviors,” and would require people to remove tweets that appeared to “influence people into acting against recommended guidance” by health authorities; described cures that “are known to be ineffective” or “harmful”; denied “established scientific facts about transmission”; as well as other claims encouraging people to engage in risky behavior; impersonating government health officials or organizations; provided misleading diagnostic criteria or procedures for COVID-19; and claims that specific groups or nationalities were more or less susceptible to the disease. Twitter’s efforts seemed to focus on what Jamison et al. (2019) described as “malicious actors” on Twitter, such as spambots and human trolls, whose activities can have a negative impact during public health crises. Contemporary studies of other health communication issues, including antivaccination debates, had suggested that Russian-based bots and trolls were behind disinformation campaigns on Twitter (see Broniatowski et al., 2018; Walter et al., 2020). As “disinformation” campaigns, these were intended to be misleading. Whether intentional or not though, the lack of accurate medical information during a pandemic can be consequential. Toward this end, Twitter sought to address any “misinformation” on its network. That is, Twitter sought to correct any false information without regard to whether the poster intended to mislead.

While “disinformation” may have a more negative connotation than “misinformation” because it implies intentionality in making false claims, “misinformation,” like “bullshit,” is arguably more problematic because it obscures the very essence of epistemic reasoning. And, despite Twitter’s efforts to address the proliferation of misinformation on its network, the result was not foolproof, as conversations taking place on the network continued to actively engage the type of claims it proposed to prevent. For instance, a video originating from Breitbart’s Facebook account that showed lab-coated doctors making specious claims about hydroxychloroquine as a treatment for COVID-19 was shared by President Trump with his 84+ million Twitter followers before it was taken down, and was distributed even further based (at least in part) on Trump’s promotion of the statements (Gilbert, 2020).

Accordingly, despite the best efforts of medical experts to make available scientifically based information about COVID-19, and Twitter’s attempt to retroactively delete false and misleading claims, our study addresses a simple but important question: whose messages about COVID-19 received the most attention on Twitter during the early stages of the outbreak, when there was a critical need for accurate information? While our research question may seem deceptively simple at first blush, social media outlets in the United States are afforded broad immunity from legal liability for third-party content posted on their networks, thanks to Section 230 of the Communications Decency Act (1996). Nonetheless, there remain grave ethical concerns about the harm inflicted by misinformation (as well as disinformation) on popular social media outlets. While social media content may too often be dismissed as “bullshit,” what happens when misinformation disseminated on those platforms is acted upon as truth? For example, the riot on 6 January 2021 demonstrated the potential consequences of widespread falsehoods, as thousands of Trump supporters stormed the US Capitol to disrupt the electoral college vote count, motivated by the #StopTheSteal campaign and believing (falsely) that the 2020 presidential election had been stolen (see Penazola, 2021; Steakin et al., 2021). After Twitter and other social media platforms banned Trump and several of his allies from their networks, a study by Zignal Labs showed that false claims about election fraud dropped from 2.5 million to 688,000 (about 73%) in the following week from 9 to 15 January 2021 (see Dwoskin & Timberg, 2021). Our study is positioned to demonstrate the most influential voices on Twitter about another (and arguably equally significant) bit of misinformation: hydroxychloroquine as a safe and effective treatment for coronavirus.
The 2020 coronavirus pandemic provides a ripe case for understanding who among the cacophony of voices (scientific experts, government agencies, politicians, celebrities, and other members of the public with a Twitter account) was most heard within this broader social media marketplace of ideas.

Procedures for Analysis: Visualizing Twitter Networks During the First Wave of COVID-19 Outbreaks in the United States

We used the COVID-19 Twitter dataset collected by researchers at the University of Southern California Information Sciences Institute (https://publichealth.jmir.org/2020/2/e19273/ and https://github.com/echen102/COVID-19-TweetIDs), which provides an ongoing collection of tweet IDs related to COVID-19 since 21 January 2020. From it, we rehydrated, searched, and visualized two subsets to construct our analysis (see “Data Visualization 1” and “Data Visualization 2” sections). We rehydrated the tweets using twarc and uploaded them to an Elasticsearch server hosted on AWS with the document mapping described in Appendix A. This dataset contains 141,010,038 tweets and 24,005,457 unique users for the defined time period of 21 January through 21 May 2020. 2

The Elasticsearch server allowed us to run and filter complex content queries for our research. We searched the Elasticsearch database for all tweets containing the term “hydroxychloroquine” within our time range, resulting in 212,445 tweets and 137,746 unique users (see Appendix B). With the tweets from this search, we created a filtered graph structure to highlight the interactions that occurred among these users and the most influential users in the entire conversation.
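The search described above can be sketched as an Elasticsearch Query DSL body. This is only an illustration under stated assumptions: the actual query is the one given in Appendix B, and the field names `full_text` and `created_at` are hypothetical stand-ins for whatever the Appendix A mapping defines.

```python
from datetime import date


def build_term_query(term, start, end,
                     text_field="full_text", date_field="created_at"):
    """Build a query body matching `term` within an inclusive date range.

    `text_field` and `date_field` are assumed names, not taken from the
    authors' mapping.
    """
    return {
        "query": {
            "bool": {
                # Full-text match on the tweet text.
                "must": [{"match": {text_field: term}}],
                # Restrict to the study window without affecting scoring.
                "filter": [{
                    "range": {
                        date_field: {
                            "gte": start.isoformat(),
                            "lte": end.isoformat(),
                        }
                    }
                }],
            }
        }
    }


query = build_term_query("hydroxychloroquine",
                         date(2020, 1, 21), date(2020, 5, 21))
```

The resulting dictionary could be passed as the request body of a search call; placing the date range in `filter` rather than `must` keeps it out of relevance scoring.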

Using the igraph Python library, we created graphs where nodes correspond to unique users and links correspond to the existence of any interaction between users (retweet, reply, or quote). To isolate the most important network for visualization and analysis within this query, we first removed all users with one or fewer links and then removed any users left with zero links. Following this filtering step, we isolated the largest connected component of the graph to visualize and analyze, resulting in 31,147 unique users. We calculated the degree centrality and the betweenness centrality for each node, preserving the top 500 users from each measure. Finally, we performed fastgreedy community detection on the isolated network to create 10 communities within the network. We precalculated the layout of these networks using the Distributed Recursive Layout algorithm to determine node locations in the final visualization.
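The filtering steps above can be illustrated with a small library-free sketch. It is not the authors' igraph code: it assumes hydrated tweets in the Twitter v1.1 JSON shape (`user.screen_name`, `retweeted_status`, `in_reply_to_screen_name`, `quoted_status`), and the function names and the `min_degree` parameter are hypothetical.

```python
from collections import defaultdict, deque


def interaction_edges(tweets):
    """Yield (source, target) screen names for retweets, replies, and quotes."""
    for tw in tweets:
        src = tw["user"]["screen_name"]
        targets = [
            (tw.get("retweeted_status") or {}).get("user", {}).get("screen_name"),
            tw.get("in_reply_to_screen_name"),
            (tw.get("quoted_status") or {}).get("user", {}).get("screen_name"),
        ]
        for dst in targets:
            if dst and dst != src:  # skip missing targets and self-interactions
                yield src, dst


def build_graph(edges):
    """Undirected adjacency sets: one link per interacting pair of users."""
    adj = defaultdict(set)
    for a, b in edges:
        adj[a].add(b)
        adj[b].add(a)
    return adj


def prune(adj, min_degree=2):
    """Drop users with fewer than `min_degree` links, then users left with none."""
    keep = {u for u, nbrs in adj.items() if len(nbrs) >= min_degree}
    pruned = {u: adj[u] & keep for u in keep}
    return {u: nbrs for u, nbrs in pruned.items() if nbrs}


def largest_component(adj):
    """Return the subgraph induced by the largest connected component."""
    seen, best = set(), set()
    for start in adj:
        if start in seen:
            continue
        comp, queue = {start}, deque([start])
        while queue:  # breadth-first search from `start`
            u = queue.popleft()
            for v in adj[u]:
                if v not in comp:
                    comp.add(v)
                    queue.append(v)
        seen |= comp
        if len(comp) > len(best):
            best = comp
    return {u: adj[u] & best for u in best}


def top_by_degree(adj, k=500):
    """Users with the most links, ties broken alphabetically."""
    return sorted(adj, key=lambda u: (-len(adj[u]), u))[:k]
```

In this sketch the second pruning pass matters: a user whose only neighbours all had a single link keeps no links after the first pass and is removed as well. Betweenness centrality, community detection, and layout are left to a graph library such as igraph.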

Our choice of community detection algorithm hinged on speed of processing and quality of communities created (see Wagenseller & Wang, 2017; Yang et al., 2016). We tested the hydroxychloroquine network with edge_community_betweenness, fastgreedy, random_walktrap, and pagerank, settling on fastgreedy for its speed in calculation and the evenness of the communities created.
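For context, the fastgreedy method (Clauset, Newman, and Moore) works by greedily merging communities so as to increase modularity. A minimal sketch of how the modularity of a given partition can be computed is shown below, assuming an undirected, unweighted graph stored as symmetric adjacency sets; the function name and data layout are illustrative and are not the authors' code.

```python
from collections import defaultdict


def modularity(adj, membership):
    """Modularity Q of a partition of an undirected, unweighted graph.

    adj:        dict mapping node -> set of neighbours (symmetric)
    membership: dict mapping node -> community label
    """
    two_m = sum(len(nbrs) for nbrs in adj.values())  # twice the edge count
    if two_m == 0:
        return 0.0
    internal = defaultdict(int)    # within-community edge ends (2 per edge)
    degree_sum = defaultdict(int)  # total degree of each community
    for u, nbrs in adj.items():
        cu = membership[u]
        degree_sum[cu] += len(nbrs)
        for v in nbrs:
            if membership[v] == cu:
                internal[cu] += 1
    # Q = sum over communities of (share of edges inside the community)
    #     minus (expected share under random rewiring with the same degrees).
    return sum(internal[c] / two_m - (degree_sum[c] / two_m) ** 2
               for c in degree_sum)
```

A score like this gives one way to compare candidate partitions on the same network; the evenness of community sizes would need a separate measure.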

The layout algorithm choice relied on those same heuristics, with the Distributed Recursive Layout algorithm creating the most easily interpretable layouts. We tested the following layout algorithms: Fruchterman–Reingold force-directed, Distributed Recursive Layout, Kamada–Kawai force-directed, and Large Graph Layout.

To create a picture of the general COVID-19 Twitter conversation for comparison, we queried 1 million random tweets from this same date range and ran the preceding steps on that dataset as well, creating a filtered graph of 76,469 unique users.
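The text does not say how the random tweets were drawn. One common way to take a uniform random sample from a collection too large to hold in memory is reservoir sampling, sketched here as an assumption rather than as the authors' procedure; the function name and `seed` parameter are hypothetical.

```python
import random


def reservoir_sample(stream, k, seed=0):
    """Return k items drawn uniformly at random from an iterable of unknown length."""
    rng = random.Random(seed)  # fixed seed so the sample is reproducible
    sample = []
    for i, item in enumerate(stream):
        if i < k:
            sample.append(item)       # fill the reservoir first
        else:
            j = rng.randint(0, i)     # inclusive on both ends
            if j < k:
                sample[j] = item      # replace with probability k / (i + 1)
    return sample
```

For the comparison network, `k` would be 1,000,000 and `stream` an iterator over the tweets in the chosen date range.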

The visualization was constructed using d3.js and Three.js. We built a quick-rendering and interactive web-based tool capable of representing millions of points and edges. Each user in the layout is represented by a point in a Three.js point cloud, with a sprite image scaled to the number of tweets that user made within the dates and using the queried term (if applicable) and colored by the community membership calculated previously. We connected this visualization to Elasticsearch using a Django backend to allow for quick fetching of the tweets each user made, and built in interactivity using indexed objects of users representing vertices within the point cloud and edge objects.

The hashtags shown at the top of the network figures below represent the top hashtags used in all tweets from the specific query.

Data Visualization 1: All Mentions of Hydroxychloroquine on Twitter

The following link shows Twitter interaction networks for all mentions of “hydroxychloroquine” on Twitter from 21 January through 21 May 2020 (see http://socialmedia.modelofmodels.io/twitter_network/webgl_network?identifier=hydroxychloroquinefastgreedy). This network visualization shows user Twitter handles as nodes and links between them indicate a retweet, quote or reply as a measure of interaction. We removed all users with no links and calculated the 500 users with the top degree and betweenness centralities (see Figure 1(a)). When interacting with this network visualization, mouse over a node to see the Twitter handle, and click on a node to highlight the user who interacted with it. Click on a user in the centrality table to highlight that user or search a user to highlight them. Selecting a user will populate a table of the tweets that user made with the searched terms.

Figure 1. (a) Hydroxychloroquine network most central nodes. (b) Hydroxychloroquine Twitter network (21 January 2020 to 21 May 2020). (c) @IngramAngle hydroxychloroquine network (21 January 2020 to 21 May 2020). (d) @cjtruth hydroxychloroquine network (21 January 2020 to 21 May 2020). (e) @wdunlap hydroxychloroquine network (21 January 2020 to 21 May 2020). (f) @realDonaldTrump hydroxychloroquine network (21 January 2020 to 21 May 2020).

When isolating mentions of hydroxychloroquine, the Twitter handle for Fox News Channel opinion host Laura Ingraham (@IngramAngle) is the most central node in the network, followed by Michael Coudrey (@michaelcoudrey), an ardent Trump supporter and proponent of hydroxychloroquine (see Nguyen, 2020). The third most central Twitter account, @niro60487270, was created in March 2020 and branded itself as “Hydroxychloroquine News.” This mysterious account was suspended by Twitter in November 2020 (just 7 months after it was created) and was suspected of being a QAnon-supported account. Trump’s account (@realDonaldTrump) is the seventh most central node in the network, but the significance of its relationship to the other nodes is notable in Figure 1(b).

In Figure 1(b), we can see that @realDonaldTrump’s location on the network is actually closer to the mainstream media cluster, which is most likely due to mentions of him as a newsmaker in his role as President, and perhaps, more generally regarding his handling of the pandemic and suggestion of hydroxychloroquine as a coronavirus treatment. However, @IngramAngle is firmly in the cluster on the right, near the @niro60487270 node, which is rife with hydroxychloroquine misinformation.

The role of another Twitter handle, @JamesTodaroMD, also becomes more prominent in this community. The @JamesTodaroMD handle belonged to a blockchain investor who graduated from medical school before going into business (see Gallagher, 2020). Interestingly, Todaro was listed as a co-author of a paper promoting hydroxychloroquine as a treatment for COVID-19 that appeared on Google, and which he tweeted out on 13 March 2020.

Google later removed the document after a segment on Tucker Carlson’s Fox News program in which Gregory Rigano (who was introduced as an adviser to Stanford University’s School of Medicine) promoted chloroquine as a successful treatment for COVID-19 and claimed that a “well controlled peer reviewed study carried out by the most imminent infectious disease experts in the world . . . showed a 100 percent cure rate against coronavirus” (see Rogers, 2020). Stanford denied Rigano’s affiliation with its medical school and emphasized that no one from Stanford was involved in the study Rigano promoted on Tucker Carlson’s program (Rogers, 2020).

Around the same period of time President Trump started promoting chloroquine as a treatment therapy, Fox News continued to mention it throughout its news programming (see Bump, 2020; Rowland, 2020), creating a feedback loop of sorts between the two entities—Fox News and President Trump. As President Trump’s infamous 21 March 2020 tweet (see https://twitter.com/realdonaldtrump/status/1241367239900778501?lang=en) alone collected over 378,000 likes and over 130,000 retweets, Fox News promoted hydroxychloroquine nearly 300 times during the 2-week period around the president’s tweet (Media Matters, 2020a).

Figure 1(c) shows the users who interacted with @IngramAngle’s tweets about hydroxychloroquine from 21 January to 21 May 2020. Interestingly, the @IngramAngle account never specifically retweets or interacts with the @realDonaldTrump handle. However, she primarily tweets news stories that support President Trump’s claims about hydroxychloroquine’s usefulness as a treatment for COVID-19. Also, the majority of her most-interacted-with tweets were posted after March, when misinformation about hydroxychloroquine had already spread.

In Figure 1(d) and (e), we can see the important role that suspect Twitter accounts and other social media influencers played in spreading misinformation about hydroxychloroquine. For instance, the @cjtruth account (which has since been deactivated) is clearly a driver of QAnon conspiracies about COVID-19 and misinformation about hydroxychloroquine (see Figure 1(d)). The @JuliansRum and @prayingmedic accounts have frequent connections with @realDonaldTrump’s cluster and spread a notable number of tweets with misinformation about hydroxychloroquine (see Figure 1(d)). Both of these accounts have since been suspended from Twitter. The @prayingmedic account was linked to an influential QAnon follower (see Ruelas, 2020). Figure 1(e) shows that the Twitter account of a popular travel blogger, @wdunlap, was also considerably influential in spreading misinformation about hydroxychloroquine. Between 21 January and 21 May 2020, he tweeted at a large number of Twitter users on the political right and repeated a few pieces of misinformation over and over in almost bot-like behavior.

Focusing on @realDonaldTrump’s connections alone (see Figure 1(f)), we can see that just three specific tweets about hydroxychloroquine were influential in the overall network. While any astute observer would predict that Trump’s Twitter account would be influential among the political right on Twitter, here we can see that the green cluster he attracts in Figure 1(f) tends to be very anti-Trump. Furthermore, @realDonaldTrump’s location in proximity to the mainstream news and political-left Twitter clusters shows that Trump was generating significant conversation (both in favor of and skeptical about hydroxychloroquine). Most of the political left’s ire about hydroxychloroquine misinformation is directed at him, rather than at the other influential misinformation spreaders within the network.

While Donald Trump’s account, @realDonaldTrump, is the seventh most central node in the hydroxychloroquine network, its prevalence becomes even clearer in the second data visualization network described below, when we isolate the earliest (and arguably most critical) 2 months of the “first wave” of information spread about coronavirus and hydroxychloroquine in the United States.

Data Visualization 2: Random Selection of 1 Million Tweets in the Network

In this data visualization, we randomly selected 1 million tweets within the network from 21 January to 21 March 2020 to capture the earliest 2 months of the “first wave” of information about coronavirus as misinformation about hydroxychloroquine was just beginning to emerge (see http://socialmedia.modelofmodels.io/twitter_network/webgl_network?identifier=fastgreedy). Similar to Data Visualization 1, this interactive data set includes networks of users with links between them indicating a retweet, quote, or reply. Users with no links were removed and the top 500 users were calculated based on the highest degree and betweenness centralities (see Figure 2(a)). To interact with the data set, just mouse over a node to see the Twitter handle associated with the account and click on a node to highlight which users interacted with it. You can click on the user in the centrality table to highlight that user or search a user to highlight them. Selecting a user will populate a table of the tweets that user made with the searched terms.

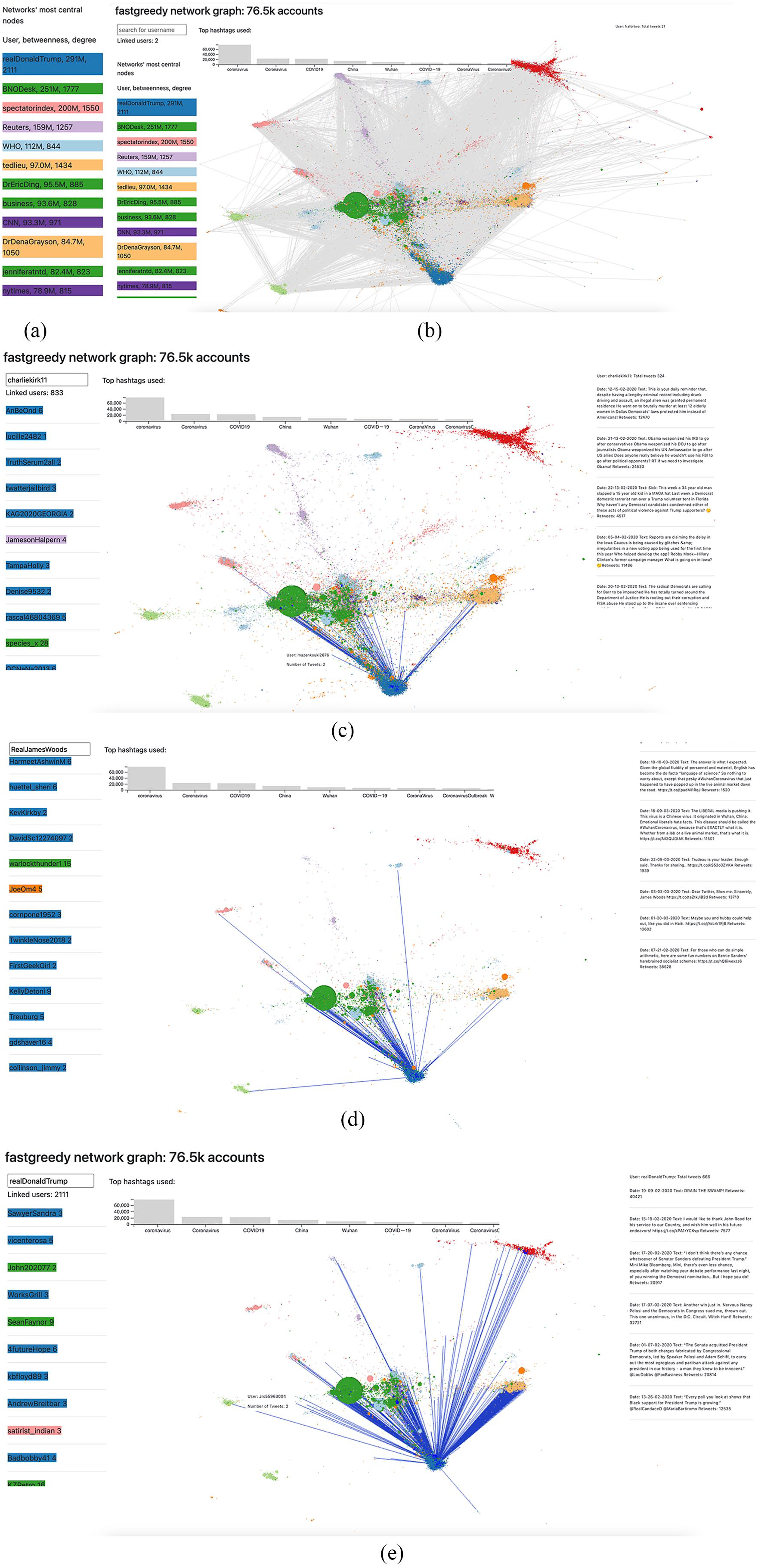

Figure 2. (a) Most central nodes from 1 million tweet random sample (21 January 2020 to 21 March 2020). (b) 1 million tweet random sample network (21 January 2020 to 21 March 2020). (c) @CharlieKirk 1 million tweet random sample network (21 January 2020 to 21 March 2020). (d) @realJamesWoods 1 million tweet random sample network (21 January 2020 to 21 March 2020). (e) @realDonaldTrump 1 million tweet random sample network (21 January 2020 to 21 March 2020).

From this 1 million tweet sample in the earliest part of the “first wave” of information about coronavirus, when hydroxychloroquine was introduced as a potential treatment, President Trump’s Twitter account (@realDonaldTrump) is clearly the most central node in the network (see Figure 2(a)). Moreover, none of the most prominent nodes in Figure 1(a), including right-wing pundits, Trump supporters, and suspected QAnon-backed accounts, crack the top 10 in this very early sample in Figure 2(a). This suggests that these groups only became central to the overall network after Trump’s leading tweets about hydroxychloroquine. Furthermore, in Figure 2(a), Trump’s closest competitors for centrality in the network are the Twitter accounts of mainstream news outlets, such as Reuters, CNN, and The New York Times. However, these accounts were far less prominent in Figure 1(a), where they are replaced by an array of Trump acolytes.

It is also worth noting here that the World Health Organization’s (WHO) Twitter activity is less than half of Trump’s (see Figure 2(a)). Although the @WHO handle is, overall, rather centrally located within the network, it is part of a different cluster and does not interact with the larger body of activity that includes misinformation about hydroxychloroquine. More credible medical and health expertise accounts, like @WHO, are completely absent from the hydroxychloroquine network in Figure 1(a).

In Figure 2(b), we can see that while more activity amasses around mainstream news organizations (represented by the green cluster), the @realDonaldTrump account has more engagements throughout the network. Various networked communities from the political left and right cite tweets from the news organizations, which explains why the green cluster is centrally located in the overall network. However, @realDonaldTrump still has more connections overall and is clearly the most connected single user within the network.

In this network visualization that focuses on the early months of coronavirus information and hydroxychloroquine misinformation, we can also see the significant role played by a pundit from the political right (see Figure 2(c)). Charlie Kirk (@CharlieKirk) tweeted several political attacks during this time period that are technically unrelated to the COVID-19 pandemic and hydroxychloroquine treatment for coronavirus, but his invective includes some intense anti-China and xenophobic references. What is likely is that the majority of political exchanges on Twitter included President Trump, and thus Kirk’s tweets (although not specifically related to coronavirus or hydroxychloroquine) were probably caught up in the crossfire of political engagements about Trump and/or COVID-19. Note that the top hashtags in Figure 2(c) and (d) were all related to coronavirus. Like Kirk’s, the account of actor James Woods (@realJamesWoods) includes unrelated political acrimony and plays an important overall role in the centrality of the network (see Figure 2(d)).

What is further evident in Figure 2(e) is that the closest lines of engagement occur between President Trump’s official Twitter account (@realDonaldTrump) and other significant nodes within the network. All other nodes lag in comparison to the strength of President Trump’s connections with the more politically oriented nodes within the network.

Discussion, Limitations, and Conclusions: Politics, Expertise, Misinformation, and the Struggle for Truth on Social Media

Our study further aimed to contribute to the literature about misinformation on Twitter by describing who the most influential actors on Twitter were in disseminating information (and misinformation) in the early period of the 2020 novel coronavirus pandemic. While it may not be surprising to astute observers of social media that the top tweets from 21 January through 21 May 2020 were from Trump, QAnon conspiracy theorists, and pundits from the political right, rather than arguably better-informed entities, such as the Centers for Disease Control and Prevention (CDC), we believe it is nonetheless important to illustrate this phenomenon empirically.

Moreover, our study contributed to the still nascent understanding of social media and the epistemic problem of “bullshit” by focusing on an important and timely case that might otherwise be dismissed with sweeping generalizations about the role of the media, when instead specific actors can be pinpointed and the interrelationships among those parties across mediated platforms can be better contextualized. Of note in this case is the feedback loop that developed between Trump and Fox News, in which each cited the other’s content relating to the coronavirus pandemic (Trump on Twitter and Fox News on its cable network).

In the case presented here, we illustrated who dominated the discourse on social media about an important health issue. Oftentimes, “the media” is broadly blamed for the perpetuation of misinformation without any delineation of specific sources (e.g., cable news opinion programs, a particular broadcast network, newspaper, tweet from a Russian troll, or just someone’s hot take on Facebook). In this case, misinformation about hydroxychloroquine as a treatment for coronavirus was perpetuated neither by the news media (broadly speaking) nor by a particular journalistic outlet. And, contrary to previous studies that demonstrated the primary involvement of Russian spambots on Twitter in other public health cases (see Broniatowski et al., 2018; Walter et al., 2020), our study showed how the social media network around a single politician can provide a powerful interconnection among otherwise unconnected communities on Twitter. The misperceptions circulating on Twitter about hydroxychloroquine were primarily the work of a particular political interest on the right, and President Trump’s tweets about COVID-19 and hydroxychloroquine amplified those claims in a way that mostly played out as a political issue on the social media network, rather than a health and safety issue.

While the Twitter accounts of mainstream news outlets appeared within our data visualizations, we reject the idea that it was “the media” that was inducing panic about the pandemic on social media. Rather, the data visualizations presented here show that the media’s role (overall) was to scrutinize the claims being forwarded by President Trump about hydroxychloroquine as a treatment for coronavirus. The unsubstantiated assertions about hydroxychloroquine’s efficacy as a treatment for COVID-19 originated primarily from QAnon-backed accounts and were repeated by President Trump and by media outlets oriented toward the political right. That said, news outlets, especially those oriented toward the political left, tended to focus their criticism on President Trump for spreading the falsehoods, rather than on the entities that originated them.

Even if that criticism was misplaced, however, it is certainly a far cry from inducing a public panic about the disease (and it is beyond the scope of our study here to address any measure of panic itself). The least our study has shown is that claims about news media creating a panic, or about social media sowing misinformation, need significant contextualization. There is not a monolithic “social media” or even a “Twitter” that spread false information about hydroxychloroquine; rather, specific actors and networked communities on Twitter did this.

Moreover, there were several instances in which President Trump downplayed the seriousness of the COVID-19 outbreak by stating that mainstream news outlets exaggerated the coronavirus threat and by quoting reporting from Fox News that mainstream news outlets were trying to stoke a “national panic” over the novel virus (see Naughtie, 2020).

Ironically, proponents of “it’s the media’s fault” for causing a panic about the pandemic were a narrow segment within the media itself. For instance, comedian John Oliver blasted Fox News for airing program segments titled “Coronavirus hysteria!” and “Liberal media hoax backfires!” (Media Matters, 2020b). Fox News’ repeated ad hominem attacks on the so-called “liberal media” in conjunction with Trump’s messaging contributed to the political feedback loop of making the coronavirus pandemic a political issue, rather than a medical or scientific one.

While a broader comparative analysis of Twitter activity from left-leaning and right-leaning media outlets about the coronavirus is outside the scope of the study presented here, we believe further research on this topic is warranted. Toward this end, our study also demonstrated the application of data visualizations to significant social, political, and public health phenomena. Data visualization methodologies and text-mining tools applied to social media data have transcendent applications that are ripe for further development across disciplines (Blevins et al., 2019). In future work in this area, our team hopes to build edge weighting into the visualization, using different weights for different types of engagement between users; due to constraints on our schedule and processing power, we chose to weight all edges equally in this study. At the time of this writing, we are also continuing our work to operationalize the creation of these networks into a web platform, so that others can utilize these network creation and visualization techniques with ease.
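To make the idea of edge weighting concrete, the following is a minimal sketch of one way it could work; it does not describe an implemented feature. It assumes interactions are available as hypothetical `(source, target, kind)` tuples, and the numeric weights in `ENGAGEMENT_WEIGHTS` are placeholders chosen purely for illustration, not values proposed or validated by this study.

```python
from collections import defaultdict

# Placeholder weights per engagement type (illustrative assumption only).
ENGAGEMENT_WEIGHTS = {"retweet": 1.0, "quote": 2.0, "reply": 3.0}


def weighted_edges(interactions, weights=ENGAGEMENT_WEIGHTS, default=1.0):
    """Sum engagement weights for each undirected pair of users."""
    edges = defaultdict(float)
    for source, target, kind in interactions:
        if source == target:  # skip self-replies
            continue
        pair = tuple(sorted((source, target)))  # same key for both directions
        edges[pair] += weights.get(kind, default)
    return dict(edges)


def strength(edges):
    """Weighted degree: total weight of all edges touching each user."""
    totals = defaultdict(float)
    for (u, v), weight in edges.items():
        totals[u] += weight
        totals[v] += weight
    return dict(totals)
```

Passing an empty mapping as `weights` makes every interaction count as 1.0, which corresponds to treating all engagement types equally.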

For this study, following Liang et al.’s (2019) study of identifying social media influencers during a public health crisis, our research team was able to identify key voices that amplified COVID-19 misinformation on Twitter during the 2020 worldwide pandemic. Unfortunately, the leading voices in this case were the ones spreading the vast majority of misinformation that portrayed the coronavirus as an exaggerated threat while suggesting unproven treatments. Yet, at the time of this writing, the coronavirus pandemic has caused over 24 million infections and over 500,000 deaths in the United States alone. 3 Epidemiological science about COVID-19, treatments, and vaccines is fluid and rapidly developing. As networked societies become more accustomed to relying on information from varying sources on social media outlets and other cyberspaces (even for critical medical knowledge), the implications of how they interpret and apply that information in physical spaces are a significant consideration. Not all sources of information are equally informed, and the variations between truth, falsity, and “bullshit” may also be the difference between life and death.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: The authors are grateful for support from the Andrew W. Mellon Foundation’s Scholarly Communications program.