Abstract

This article analyses the role of Telegram in orienting, amplifying, and normalizing the non-consensual diffusion of intimate images (NCII). We focus on the sense of anonymity, the platform’s weak regulation, and the possibility of creating large male communities, arguing that these affordances are “gendered affordances” as they orient male participants’ harassment behaviors and, in concert with an established misogynist culture, contribute to the reinstatement of hegemonic masculinity. The research draws on data collected through an online covert ethnography of Italian Telegram channels and groups.

Introduction

This article discusses the non-consensual dissemination of intimate images (NCII) across Telegram groups and channels. NCII, most commonly referred to as “revenge porn,” consists of the distribution of sexually explicit and private content on the Internet and across social media without the depicted subject’s consent (Maddocks, 2018). The technologically mediated diffusion and circulation of non-consensual intimate content, together with the psychological harm inflicted on the subjects depicted (Uhl et al., 2018), make NCII a relevant topic to address at the intersection of gender-based violence and the non-gender-neutral structures of the Internet (Eikren & Ingram-Waters, 2016). The role played by men in the sharing of non-consensual images has recently gained increasing scholarly attention (Hall & Hearn, 2019; Uhl et al., 2018). However, there is still no analysis of how digital affordances work in concert with gendered cultural repertoires and orient discourses about gender-based violence, promoting the enactment of hegemonic masculinity through harassment practices (Flood, 2008).

To address this gap, we turn to Telegram, a messaging app that allows users to communicate both through public channels and closed groups. Unlike other messaging apps (such as WhatsApp) and social media sites, Telegram offers the possibility of creating large groups and channels while preserving user anonymity and security, and is characterized by a loose regulatory framework enforced by its administrators. 1 Given these peculiar features, Telegram has become a privileged app for the spreading of violent content (Mazzoni, 2019; Shehabat et al., 2017) and for allowing misogynist discourses to flourish (Zorloni, 2019). In particular, we explore the diffusion of non-consensual images on Italian Telegram channels and groups. NCII became an issue of public debate in the Italian context in 2016, after the release of sexually explicit videos by her ex-boyfriend led Tiziana Cantone to commit suicide (Il Post, 2016). More recently, a campaign that proposed to criminalize the non-consensual diffusion of intimate images has reignited interest in the phenomenon, leading to the inclusion of NCII as a criminal offense in the “Red Code,” which is aimed at protecting women from gender violence and became law in April 2019 (Tidman, 2019). However, despite such increasing attention, research about NCII in the Italian context is still scarce.

We argue that Telegram affordances play an important role in orienting and amplifying NCII practices, as they offer the possibility of systematizing the diffusion of non-consensual content and, consequently, of gender-based violence. The sense of anonymity, the weak regulation and the possibility of creating male homosocial bonds afforded by the platform contribute to the creation of an environment where men’s culpability is downplayed, and harassment is normalized. The analytical lens of gendered affordances (Schwartz & Neff, 2019) is particularly apt to analyze the practices and discourses around NCII as it allows for an emphasis on how the platform’s architecture orients male participants’ harassment behaviors and, in concert with an established misogynist culture, contributes to the reinstatement of hegemonic masculinity, defined as the configuration of gendered practices which guarantee the dominant position of men in society (Connell, 2005).

To address these issues, we rely on data collected through a qualitative approach inspired by digital ethnographic research (Caliandro & Gandini, 2017). Specifically, given Telegram’s role in promoting a form of private sociality (Papacharissi, 2014) through the creation of closed groups, and the particular nature of the object of study, we performed a covert ethnography (O’Reilly, 2008) to access and analyze our data. We extensively discuss the ethical implications in a dedicated methodological section. Following our object of study ethnographically (Caliandro, 2017), we were able to collect a considerable number of Telegram channels and groups sharing non-consensual images and perform an ethnographic content analysis of the main themes addressed (Altheide, 1987). Drawing on these data, we show how Telegram affordances orient the distribution of non-consensual images as characterized by the negotiation of consent, the categorization and objectification of women, and the creation of homosocial bonds based on solidarity.

The Non-Consensual Diffusion of Intimate Images Phenomenon (NCII)

Contextualizing NCII

The non-consensual diffusion of intimate images is a hotly debated topic in the study of Internet cultures. Among its many implications, it represents one of the ways in which gender-based violence has been exacerbated as a result of the diffusion of technologically mediated forms of sociality and the deluge of user-generated content (Eikren & Ingram-Waters, 2016). 2 Existing literature and media coverage have often addressed the issue in terms of “revenge porn,” thus framing the dissemination of intimate material as an act performed by a malicious ex-partner seeking revenge. Academics and practitioners (see Maddocks, 2018, for a review), however, have questioned the expression “revenge porn” as problematic, for two main reasons. On the one hand, the focus on pornography risks overshadowing the non-consensual and intimate nature of the images shared. On the other hand, the idea of revenge assumes that victims have committed some kind of act which needs to be punished, thus leaving room for forms of victim-blaming or slut-shaming (Gong & Hoffman, 2012) and contributing to the legitimization of the phenomenon (McGlynn & Rackley, 2017). Instead, it is more appropriate to frame the issue in terms of image-based sexual abuse (McGlynn & Rackley, 2017), technology-facilitated sexual violence (Henry & Powell, 2015), and NCII (European Women’s Lobby [EWL], 2018). Despite the different nuances in meaning, as Maddocks (2018) notes, these terms all consider the diffusion of intimate images as a gender-based and technology-facilitated harm. In line with this debate, this article adopts the wide-ranging concept of NCII, which allows us to take into consideration the diffusion of intimate images both as a privacy violation, specifically a violation of what Citron (2019) calls sexual privacy, and as a form of gender-based violence related to technology (Luchadoras, 2017).

This discussion reflects the increasing attention devoted to the topic in recent times across different countries 3 and in different fields, from legal (Citron & Franks, 2014) to sociological research (e.g., Hall & Hearn, 2017, 2019). Despite this interest, research about NCII is still in its infancy and there are few studies directly addressing this issue in the Italian context (Caletti, 2019). Significantly, a recent survey conducted by Ipsos Mori and Amnesty International stressed the relevance of the topic in Italy, as 33% of interviewed women declared that they were victims of online harassment on a daily basis (Amnesty International, 2017). Italian scholars also point out the persistence of sexist discourses (Bandelli & Porcelli, 2016), and the obstinacy of a rape culture which helps to normalize acts of gender violence (Giomi & Magaraggia, 2017), both propelled and legitimized by Italian media and politics. Similar tendencies also fuel the exacerbation of misogynist discourses on the Internet and social media (Rossitto & Uccello, 2019).

A considerable body of literature has so far understood NCII as a backlash of sexting (Henry & Powell, 2015; Salter, 2016). Such a framework, however, tends to stress the role of women and the behavioral norms they should follow to reduce the risks of sexual harm or reputational damage (Albury & Crawford, 2012), while obscuring the role of male perpetrators (Eikren & Ingram-Waters, 2016). In this context, we argue that specific attention must be given to men’s practices of collecting and sharing intimate images, and to the implications of NCII and harassment in the performance of masculinity in a homosocial, digital environment.

Men, Homosociality, and the Performance of Masculinities

Recent research has begun to address the NCII phenomenon from the perpetrators’ perspective, paying particular attention to the dimension of power, control, and revenge (Hall & Hearn, 2017, 2019; Uhl et al., 2018). By considering men posting explicit images of women, mostly ex-partners, on ad hoc websites (e.g., MyEx.com), scholars highlight that most of the content shared aims to shift the blame toward the victims (Uhl et al., 2018) and represents a way of regaining control over the female subject (Hall & Hearn, 2019). These practices have been framed in terms of compensatory manhood acts (Schwalbe, 2014): the dissemination of intimate images becomes a way of overcompensating for and rehabilitating men’s manhood, and of hurting as well as controlling the women in question. According to Hall and Hearn (2017), these practices can be interpreted as ways of recovering lost external control over women as well as re-enhancing the man’s status, in a context where new forms of masculinities built around claims to victimhood and aggrieved entitlement are increasingly widespread across online domains (Banet-Weiser & Miltner, 2016; Kimmel, 1994). 4 Moreover, existing research also points out that the non-consensual material posted online becomes an object of scrutiny and judgment through evaluating, ranking, and commenting practices (Uhl et al., 2018), while tagging behavior results in the creation of a folksonomy of misogyny (Thompson & Wood, 2018), thus contributing to the further objectification of the female subject (Rodriguez & Hernandez, 2018). The presence of these behaviors overall reflects the persistence of sexual harassment as a means of performing masculinities (Quinn, 2002; Rodriguez & Hernandez, 2018).
Girl-watching practices (Quinn, 2002) and ritualized harassment (Flood, 2008), although commonly dismissed as “only play,” work instead as mechanisms through which male-to-male interactions are established (Flood, 2008), hegemonic masculinities performed (Connell, 2005), and power inequalities maintained (Bird, 1996). Previous research also testifies that homosocial bonds (Rosen et al., 2003), solidarity (Grazian, 2007), and competition (Kimmel, 1994) among men are largely imbricated with the performance of violent behaviors against women. Similar practices can also be found in digital environments, such as in online forums, where users actively participate in jokes and banter connected to the performance of masculinity (Kendall, 2002).

Accordingly, scholars have argued for a powerful link between the performance of masculinity and male homosociality (Bird, 1996), stressing that men’s practices of doing gender can be considered as a homosocial enactment in which the performance of manhood is staged in front of, and granted by, other men (Kimmel, 1994). Therefore, in line with Bird (1996), we consider men’s homosociality as a key concept to understand the performance of masculinities in online environments and how hegemonic masculinity is enacted and reinforced through male-to-male interactions.

Despite the critique of the concept of hegemonic masculinity (see, for example, Demetriou, 2001), we agree with Connell (2005) that the relevance of the concept cannot be dismissed. We contend that the theorization of hegemonic masculinity as “the pattern of practice [. . .] that allowed men’s dominance over women to continue” (Connell & Messerschmidt, 2005, p. 832) is particularly adequate to analyze NCII on Telegram. It is, therefore, important to delve deeper into the practices of doing masculinity through mediated technologies to unravel how NCII is imbricated with the performance of masculinities. To do so, we look at the role played by Telegram chat groups as mostly male homosocial environments in the making of such performances.

Telegram and Gendered Affordances

To account for NCII in its complexity, we turn to Telegram, looking at how its affordances, platform politics, and consequent assumption of use may orient the diffusion of misogynistic practices and the performance of hegemonic masculinity (Massanari, 2017). Building on Schwartz and Neff (2019), we contend that Telegram affordances can be considered as gendered affordances, as they may suggest different behaviors to different users according to their gender, while contributing to reinforcing already existing gendered power hierarchies. Notably, the actions fostered by the platform affordances draw on cultural and institutional structures of gender reflected in interactional and individual gendered performances (Schwartz & Neff, 2019). The theoretical framework of gendered affordances allows us to take into consideration affordances not only as the socio-technical architecture of digital media which sets specific opportunities and constraints on users’ actions and interactions (boyd & Ellison, 2011), but also as elements rooted in cultural and institutional legitimacy (Davis & Chouinard, 2016) and deeply embedded in structural and contextual factors (Banet-Weiser & Miltner, 2016). This definition stresses the role of affordances as gendered (Schwartz & Neff, 2019) as well as imagined (Nagy & Neff, 2015) conduits to social interaction, taking into account the web of relations among users’ perceptions and expectations, the materiality of artifacts, and the intentions of designers (Nagy & Neff, 2015). Our proposition, therefore, aims to contribute to the debate on how social media platforms orient users’ behaviors “through the mutual constitution of social affordances and social structures” (Schwartz & Neff, 2019, p. 3), with a specific focus on the gender dimension. This is pivotal to understanding the concept of gendered affordances without falling into forms of determinism, while preserving a constructivist perspective (Schwartz & Neff, 2019).

The role played by platforms’ architectures in promoting harassment and misogynist practices has already been highlighted (Massanari, 2017), with a specific focus on anonymity and scalability (Jeong, 2015). Yet, we claim that Telegram deserves particular attention for its distinguishing affordances and the implications these can have for the spreading of NCII. In fact, Telegram allows for the creation of two types of sociality: channels and groups (Mazzoni, 2019). In the first case, the number of participants is unlimited, and information is broadcast in a one-to-many logic. Groups, by contrast, can include up to 200,000 users, and allow for a large-scale private sociality (Papacharissi, 2014), thus creating a suitable environment for the diffusion of non-consensual images and for the creation of male homosocial bonds. Moreover, Telegram promotes itself as a secure and anonymous platform (Telegram FAQ), prioritizing both (a) its users’ security, as all the information is stored on a built-in, distributed, and encrypted cloud backup, and secret chats characterized by end-to-end data encryption can be created; and (b) users’ perception of privacy and anonymity, given that, although a phone number is needed to register an account, Telegram allows users to participate in channels and/or groups without having their phone number shown. According to its creators, these features make Telegram a safer platform compared to other messaging apps such as WhatsApp. 5 This, in turn, facilitates the use of Telegram to share violent content as exemplified by the diffusion of ISIS-related channels (Shehabat et al., 2017), Alt-Right extremism (Mazzoni, 2019), as well as other pornographic and non-consensual material of a sexual nature (Zorloni, 2019). In addition, Telegram’s loose regulatory framework for content moderation, which relies primarily on individual flagging, makes it a preferred platform for the dissemination of non-consensual intimate material.
Such a role has been especially enhanced since other platforms such as Facebook began to enforce more restrictive policies leading to the removal of a considerable number of accounts sharing pornographic material (Hopkins & Solon, 2017). At the same time, porn websites that were used as platforms to share revenge porn (e.g., MyEx, see Hall & Hearn, 2019) have also been shut down, leaving room for Telegram to become a space for deplatformed users (Rogers, 2020).

The sense of security and anonymity, together with the ambiguous regimentation provided by the platform, arguably makes Telegram a highly suitable context for the diffusion of NCII and the perpetration of harassment as a normalized and trivialized practice of male-to-male interaction. The possibility of creating large groups and channels, together with the ease of sharing visual and audio material, fosters the creation of a mediated homosocial environment in which participants can share behaviors and build homosocial bonds, while enacting practices in line with hegemonic masculinity. Accordingly, understanding Telegram affordances as gendered allows for a better understanding of the performances of hegemonic masculinities through the sharing of non-consensual intimate pictures, by linking the platform’s architecture, the social and cultural gendered scripts, and individual gendered practices.

Data and Method

To conduct our research, inspired by a qualitative, digital ethnographic approach (Caliandro & Gandini, 2017), we explored discussions among users on Telegram groups and channels related to NCII content. This entailed undertaking a kind of qualitative observation akin to a covert ethnography (O’Reilly, 2008), meaning that participants were unaware that they were observed by researchers. Considering the implications that concern covert ethnographic research, the use of this practice in our case requires further justification. Bulmer (1993/2001), for instance, underlines how covert ethnography is “clearly a violation of informed consent” and entails “out-and-out deception” (p. 55). Yet, although it is distanced from the usual norms of social research, he also observes that there are “highly exceptional circumstances” in which its use can be justified. We consider this a case of “highly exceptional circumstance,” for two reasons. First, we considered this the most (to some degree, perhaps the only) suitable option to conduct a study of this kind. Because we needed to gain access to closed groups and private channels to observe internal dynamics and conversations about such a sensitive topic, revealing the purpose of our presence to participants would have effectively made this research impossible to conduct, as we would have been immediately expelled from these groups. Hence, we decided to conduct a non-participant observation (Mills et al., 2009). Second, doing covert ethnography presents specific advantages that are peculiar to the purpose of this study, such as allowing the observation of behaviors that cannot be studied otherwise and avoiding the Hawthorne Effect, whereby individuals modify an aspect of their behavior in response to their awareness of being observed (Merrett, 2006).
However, the use of covert ethnography involves some ethical issues regarding the lack of informed consent which is entangled with the access, collection, and analysis of digital conversations. We discuss this further in the section “Ethical Issues”.

Data Collection and Access

The first step in our research was to collect a selection of channels and groups that allowed us to delve into NCII practices. Channels and groups can be both public and private. To gain access to public groups and channels, one can use the Telegram search bar using keywords, hashtags, and usernames, but to gain access to private groups, users need to receive an encrypted invitation link and the approval of group administrators or specific bots (Mazzoni, 2019).

Data were collected between January and March 2019. Our research began from the Telegram channels listed on a famous Italian porn website, Phica.net, which is known for being a preferred platform for sharing NCII (Mollica, 2018). We subscribed with a gender-neutral nickname and chatted with the web administrators, asking them about the existence of Telegram channels or groups where forms of NCII were shared. Administrators never asked us to identify ourselves and immediately provided the invitation link for a first private group. Our first impression was therefore that users were not too concerned with preserving the security of the groups but were rather interested in creating large communities for collecting more material.

Starting from these initial groups, in line with the suggestion of “following the thing, the medium and the natives” (Caliandro, 2017, p. 10), we followed the conversations and the invitation links to find other groups and channels to analyze. Following this procedure, we were able to obtain a sample of 50 channels and groups.

Method

After gaining access to the sampled groups and channels, we used an in-built Telegram feature that allows users to export their whole chat history in HTML and then conducted an ethnographic content analysis (ECA), which was oriented to check and supplement prior theoretical claims, allowing “a reflexive movement between concept development [ . . . ] data analysis and interpretation” (Altheide, 1987, p. 68). As a first step in the analysis, we wanted to highlight the different forms of NCII that take place on and are fostered by Telegram. To do so, we delved into each chat and classified it according to two criteria: first, the type of communication allowed by the platform (group, channel, or bot) and second, the different types of material shared among its participants. This procedure resulted in a categorization of NCII practices, which is reported in the section “NCII on Telegram: A Typology of Practices”. To delve deeper, we then performed an ECA (Altheide, 1987) of a defined number of chats (n = 4). We focused on “explicit image-based sexual abuse” practices (see section “NCII on Telegram: A Typology of Practices”), and selected three big groups, relevant for their popularity, according to the number of users involved and level of engagement (everyday activity). Moreover, we also analyzed a bot, as a peculiar form of automated NCII afforded by the platform. The ECA approach was useful to account for the discourses around NCII and users’ perceptions of the platform’s affordances. The analysis was based on the identification of analytic categories as they emerged while reading the texts, using keywords to spot relevant conversations (e.g., ex-girlfriend, telegram, revenge porn, anonymity).

In addition, inspired by the “walkthrough method” (see Light et al., 2018), we also collected a set of screenshots during the qualitative analysis as a sort of set of ethnographic notes. The combination of these methods was useful to provide us with different types of digital materials that could be compared and analyzed to obtain a deeper understanding of the phenomenon.

Ethical Issues

The ethical implications that concerned the undertaking of this work were also addressed. The question of how to research closed groups is still debated (Zimmer, 2010) and the boundaries of users’ online privacy remain blurred in social research (Markham, 2012). We acknowledge the importance of respecting users’ privacy and asking for informed consent in online research (Franzke et al., 2020) but justify our decision to conduct online covert ethnography as ethical. First, doing covert ethnography is considered ethical when it prevents the risk of loss of the object of study and when the very success of the research depends on it (Economic and Social Research Council, 2015). As mentioned, in our case, undertaking a covert ethnography was the most suitable method to gather information on this phenomenon, since we aimed to study violent behaviors in closed groups. Given that covert observation is regarded as ethical when it is the only available method to conduct research (Bulmer, 1993/2001; O’Reilly, 2008), we argue that the same should apply to social research in online environments. In addition, we contend that the discussed results hold serious public relevance, especially considering the lack of Italian data on NCII perpetrators or any empirical evidence on this new frontier of violence.

However, to counterbalance the ethical concerns related to this kind of research, we anonymized the identities of perpetrators and victims through a non-literal translation from Italian to English and by removing any in-text personal details or references. To further strengthen this anonymization work, we followed the “ethical fabrication” approach suggested by Markham (2012), by partially altering users’ quotes without losing their significance. This allowed us to reproduce the essential elements of the conversations, without running any risk of participants being recognized. Finally, we do not mention the names of the channels to protect users’ anonymity and prevent an increase in their visibility. We only mention “La Bibbia” (see section “Categorization and Objectification of the Female Subject”) because it is an already well-known archive in Italy that has gained considerable media attention (Mollica, 2018).

NCII on Telegram: A Typology of Practices

In this section, we discuss the different NCII practices that take place on Telegram. Accounting for the forms of sociality involved (groups, channels, bots), they include the following:

(a) Explicit image-based sexual abuse: Channels and groups in which the aim of sharing non-consensual intimate material is made clear, often stating in the chat info box that the reason for their existence is doxing 6 and image-abusing girls. These include both big groups that exceed 30,000 users and are active on a daily basis, in which the only rule is sending pictures of (ex) girlfriends, friends, or acquaintances (for failing to comply with this rule, we were kicked out from three groups), as well as small groups that target specific girls and generally do not exceed 20 users, resembling chats among close friends more than large communities.

(b) General porn: Channels and groups in which non-consensual intimate images are shared together with mainstream porn. In these channels and groups, non-consensual images are often consumed and perceived as mainstream porn, and the number of participants reaches 60,000 users.

(c) Spy mode: Channels and groups created with the aim of sharing pictures and videos made with hidden cameras. Girls and women are recorded and photographed during sexual acts, as well as during daily actions, for example, at the beach, in shops, in the street, and so on. Here, the number of participants often reached 60,000 users.

(d) Linking: Channels and groups that share intimate pictures taken from different social networks, especially Instagram and Facebook. Pictures were consensually taken and posted on social media by the depicted subjects, yet were non-consensually screenshotted and posted on Telegram by other users, shedding light on the problem of cross-platform NCII.

(e) Automated NCII: Bots created with the goal of gathering, storing, and sharing non-consensual intimate material and girls’ personal information, automatizing the spread of NCII among channels and groups.

Such categorizations account for a variety of ways in which Telegram orients the sharing of non-consensual intimate material through the forms of sociality it allows. Moreover, the different kinds of content diffused by users allow mapping out different practices of non-consensual dissemination of content thus moving beyond the restrictive concept of “revenge porn” (McGlynn & Rackley, 2017).

“Thanks God There’s Telegram!”

As a second step in our analysis, we delved deeper into the content shared in explicit image-based sexual abuse groups, with the aim of analyzing the discourses and practices enacted by users. Using ECA (Altheide, 1987), it was possible to delineate three main themes: (1) the negotiation of consent, (2) the categorization and objectification of the female subject, and (3) the performances of masculinity through male homosocial interactions in relation to Telegram affordances.

The Negotiation of Consent

In all the groups we analyzed, two practices were recurrent: the collection of intimate pictures and the negotiation of the non-consensual status of such material among participants. As the following excerpts show, users engage in a constant request for sharing sexually explicit content. They themselves acknowledge that the images shared are non-consensual in their nature, but the lack of the depicted subject’s consent becomes one of the rules of the game to adhere to, to be part of the group:

User1: Photos of ex, slutty friends . . . who wanna start?

User2: Probably we all have a friend we’ve seen in this group

User3: The entry fee for the group is a picture of your ex!

In some cases, users do recognize that the dissemination of pictures without the represented subject’s authorization can be an improper and immoral act. Yet, the issue of consent is immediately dismissed through irony and women’s denigration: as one participant states, “consensual or not, what matters is that they’re bitches.” In line with victim-blaming behaviors (Eikren & Ingram-Waters, 2016), in this case, users also tend to shift the blame to the victim and to downplay their culpability. The diffusion of non-consensual intimate content becomes highly normalized by the majority of participants, and is thus considered neither a form of privacy violation nor an act of gendered abuse. This mirrors an already known tendency to trivialize crimes that disproportionately target women (Citron & Franks, 2014).

The theme of consent only emerges as an issue when participants are confronted with the possible consequences of their sharing practices, both in terms of the legal implications they can face, and of the consequences related to the violation of Telegram’s Terms of Service (ToS). In fact, participants frequently discuss the legal implications of their behaviors, especially the possibility of being denounced for sharing (often paedo-) pornographic material:

User4: If some hoes voluntarily send around some pics, why should we be blamed?

User5: I think it is illegal to share their pics without consent

User6: If a girl sends the file, the receiver can do whatever he wants with it. It was the girl who agreed to share it!

User7: I’m not sure, I know of people being arrested for sharing private photos

Users also grapple with Telegram’s architecture and affordances, discussing how the platform regulates illegal pornographic content-sharing, the possibilities of being banned, and the functioning of content-detection algorithms:

User8: Guys, as soon as the system will notice we’re sharing porn it will delete the channel Just a warning

User9: But they cannot ban us, right?

User10: At worst, we can create the channel again!

User11: I saw an article saying there are algorithms automatically reporting pornographic content Don’t know if it’s true

Participants thus seem to feel protected by the platform’s promises to preserve their anonymity and by a regulation that relies mostly on users’ flagging of prohibited content, instead of a strict control operated by the platform administrators themselves. The sense of anonymity provided by the end-to-end encryption and this loose regulation, together with the possibility of creating new groups and/or channels and making conversation back-ups, allows the perception of Telegram as a safe space where individuals feel free to share, comment, and denigrate the female subjects. Thus, Telegram users are constantly negotiating the possibilities for sharing NCII fostered by the platform, taking into consideration not only the platform’s ToS, but also Italian legislation. These negotiations show that Telegram affordances are indeed imagined (Nagy & Neff, 2015), as they are as much constructed by users’ perceptions and the discourses around the platform as they are technologically configured. Moreover, our results show that Telegram’s architecture, in concert with already established gendered scripts, contributes to creating a discourse that ends up normalizing NCII practices. The perceived lack of punishment and the downplaying of any culpability orient male participants to reinstate themselves in a power position over the unaware female subjects, thus reaffirming their masculinity as hegemonic (Connell, 2005).

Categorization and Objectification of the Female Subject

Another practice characterizing NCII Telegram groups is the categorization and objectification of the female body. Not only do female subjects become objects of scrutiny through denigrating comments, but they are also objectified by means of a detailed practice of classification according to different categories such as body type and shape, age, and country or city of origin. When asking for images to be shared, participants add specific qualities and prerequisites about the type of material (e.g., amateur video, hacked photos, rape videos) and the type of girls they would like to see (e.g., big breasts, under-age).

User12: Does anyone know *name* *surname* and have her hacked pics?

User13: Rape videos anyone?

User14: Guys we want the 2000–2001 girls!

Participants are also asked to share girls’ personal details along with the pictures (e.g., name, city, phone number, and links to social media profiles), thus mixing the diffusion of intimate images with doxing practices:

User15: if you know the girls’ names don’t be shy and tell ‘em!

User16: please share the girls’ city when you send pics

Categorization and objectification practices are also evident in the analysis of bots. As previously outlined, bots are used to collect, categorize, and archive intimate content, but also as a means to retrieve new material which is then re-shared across closed groups and channels. In this sense, they simultaneously respond to and nourish the need for content classification and women’s objectification. With just one click, the bot displays the intimate photos of a girl with all the available details about her (Figure 1).

Figure 1. A bot on Telegram.

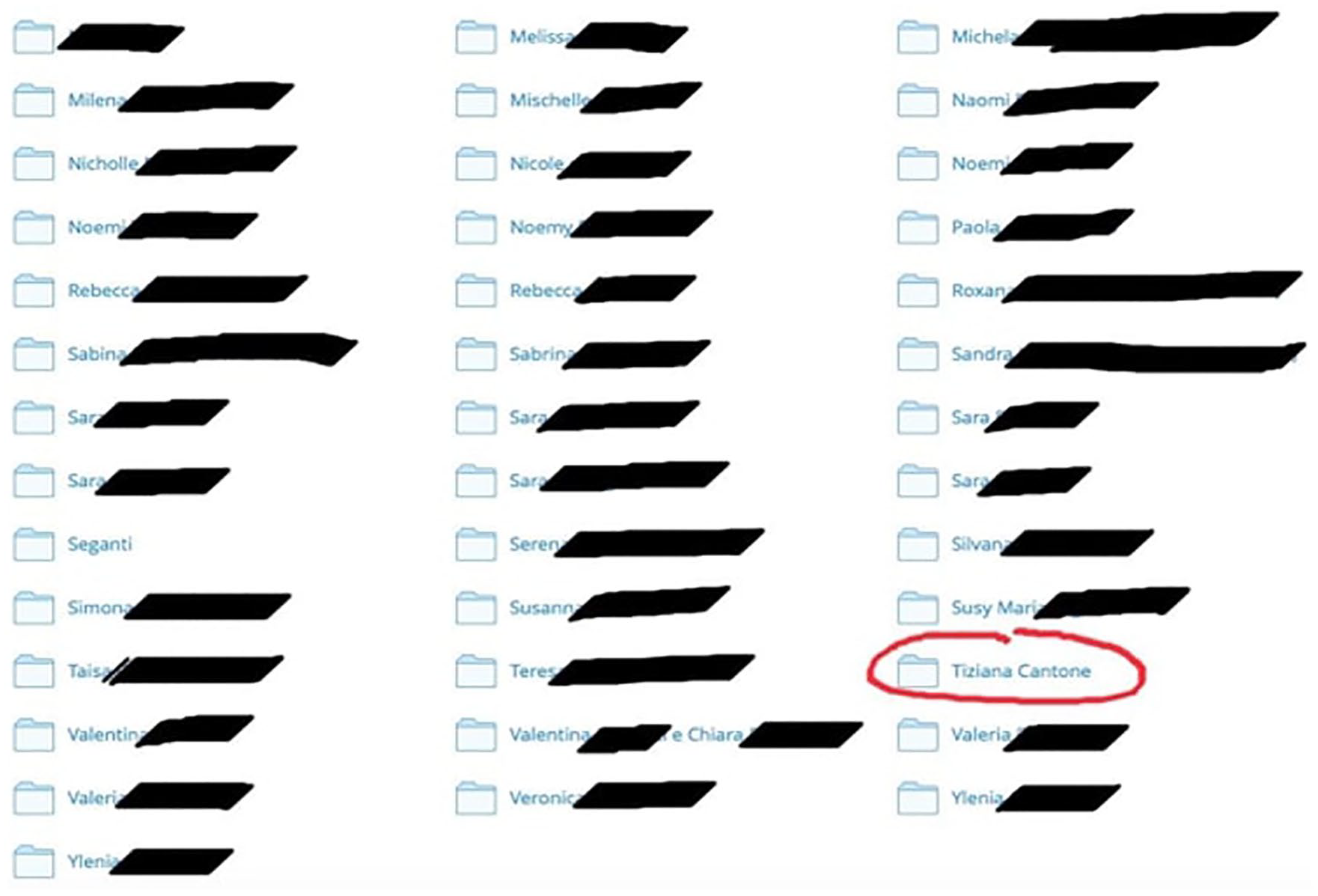

Categorization processes are taken to the extreme through the creation of a series of archives known as “The Bible.” The Bible is an Italian digital archive used to collect hundreds of non-consensual intimate pictures and videos organized in different folders. Within this archive, women are once again classified and grouped into specific folders (Figure 2). Notably, through the processes of classification and objectification, women become raw material and objects of consumption available to satisfy men’s heterosexual desires (Flood, 2008). The results presented here suggest that NCII can be interpreted as a group practice of denigration, humiliation, and derision in a male homosocial environment (Flood, 2008), rather than an attempt to seek revenge on an ex-partner.

Figure 2. Screenshot from “La Bibbia.”

Moreover, the analysis of The Bible sheds light not only on the relevance attributed to such an archive, but also on its persistence through time and its cross-platform nature. In fact, after The Bible’s creators were arrested in 2018 (La Repubblica, 2018), the archive was publicly prohibited. At the time of this research, however, it was still hosted by different providers (such as MegaSync and Dropbox) and could be freely downloaded by anyone with access to the link. In the analyzed groups, users often asked where they could find the link to access The Bible or stated their willingness to create a new repository from scratch. Significantly, the ways in which users frame and name such archives (e.g., The Bible, The Gospel) seem to suggest that these repositories are perceived as something to be protected, worshipped, and transmitted to others.

The objectification of the female body is not a new phenomenon (Fredrickson & Roberts, 1997); rather it is a widespread practice in male homosocial environments, both online and offline (Flood, 2008; Thompson & Wood, 2018) as well as in the pornography domain (Attwood, 2004). However, in this specific context, categorization and objectification practices are further amplified by peculiar interactions between users and the platform affordances, specifically the creation of bots and external archives to classify and store content. In line with existing research, in this case, objectification practices can also be considered as a means through which hegemonic masculinity is enacted and heterosexuality affirmed as a norm (Rodriguez & Hernandez, 2018). The data thus point to the presence of a Telegram-mediated form of homosociality, which offers innovative ways to objectify women and exponentially increase the harm related to NCII.

Men’s Interactions, Homosociality, and Solidarity

The analysis of Telegram groups also provides interesting insights about how masculinities are performed in male homosocial contexts. In particular, the possibility of creating closed groups helps to generate a sense of community among users, which is often framed in terms of solidarity:

User17: I feel like I finally found my place, with new friends and an incredible private collection of Italian sluts

User18: oh yes! I love male solidarity in these moments

User19: I’m on a mission to gather some new material on the chat bot

User20: this is what I wanna hear, I admire you

User21: great job, soldier!

Two aspects can be noticed here. First, there are practices aimed at gaining a large audience and conveying this to the group page. This mostly happens when a new group is created, often from the ashes of a previous one closed by Telegram administrators. Second, the incitement to share intimate material is considered a form of solidarity among men and reinforces a sense of fellowship and brotherhood. This also becomes clear in the kind of language used, which alludes to the military realm by calling the collection and diffusion of intimate content a “mission.” Solidarity among men also emerges when participants ask for help and support in perpetrating NCII in its different forms. Notably, groups are used as a means to coordinate so-called “shitstorm actions,” that is, joint actions aimed at harassing and stalking a specific victim:

User22: Follow her and her [Instagram] stories, she is such a bitch! For sure someone has pictures of her I count on you guys

User23: Come on guys, let’s do some shitstorm!

Moreover, given the interactive form assumed by Telegram groups, participants often drift onto unrelated topics or engage in insulting and trolling each other. However, at some point someone recalls the very nature and scope of the group, as one of the users makes clear: “I’d rather see more pussy than talks.” In line with an extended idea of revenge pornography (Hall & Hearn, 2017), shitstorm practices and revenge pornography represent just a fraction of the broader practice of non-consensual diffusion of intimate images across Telegram groups. In addition, the material shared on Telegram is often framed in terms of pornography consumption. Telegram is considered a suitable means to share pornographic material, to the point that some users see it as a valid alternative to traditional websites such as YouPorn:

User24: Thanks God there’s Telegram!

User25: Normal websites aren’t worth a fig anymore!!

The results show that the collection and diffusion of non-consensual material ultimately works as a bonding practice in the homosocial context of Telegram groups. The possibility of creating large communities allowed by the platform contributes to the creation of an environment suitable for male bonding practices. Therefore, in line with Flood (2008), in the case of Telegram groups, male bonding also feeds harassment practices, and harassment in turn feeds male bonding. Thus, the link between homosociality and hegemonic masculinity is once more reaffirmed in digital environments (Bird, 1996; Kendall, 2002).

Conclusion

This article aimed to dissect the different forms of non-consensual diffusion of intimate images as they spread across Telegram groups and channels, and how masculinities are performed through girl-watching (Quinn, 2002) and harassment practices (Flood, 2008) in a platform-mediated environment. Specifically, we were able to observe that homosocial bonds were constructed through the negotiation of victims’ consent, victim-blaming, “toxic brotherhood” (Cook, 2015), and Telegram’s imagined affordances.

The results show that Telegram affordances contribute to the normalization of NCII, thanks to the sense of anonymity and community fostered by the platform. In this sense, we consider Telegram’s affordances as gendered (Schwartz & Neff, 2019), as they shape patterned behaviors according to one’s gender. Accordingly, our results show that Telegram channels and groups can be considered as venues where hegemonic masculinity is reaffirmed through homosocial bonding practices. Indeed, women’s bodies serve materially as a site for male homosociality (Flood, 2008), and girl-watching works as a means by which hegemonic masculinity is produced (Quinn, 2002). These practices reflect and at the same time reinforce a still persistent Italian misogynist culture, together with some stereotypical forms of performing masculinity. This opens up broader questions about masculinity and social media, since the understanding of online misogyny as intertwined with already existing forms of gender inequalities is important to contextualize the role of digital platforms in the construction of a fairer digital society.

We acknowledge the limits of our research: our data and results are limited to the Italian cultural context and are too specific to allow for broader generalizations. For this reason, we encourage similar research in other national contexts to enable comparison.

Furthermore, we are aware that the lack of socio-demographic information about users limits our understanding of the type of men who choose to participate in such groups and channels. Further research is therefore needed to fully unravel these questions, as well as to understand how Telegram groups and channels are regulated by their formal or informal administrators.

Other questions might concern the role played by female users in NCII practices or the role of “blacklist channels.” On another note, NCII dissemination through digital means should be monitored over time and across platforms, paying attention to how the increasing attention and visibility given to this kind of practice may affect users’ behaviors and sharing practices.

Finally, since we did not undertake an analysis of deep fake porn, which was sometimes shared in the analyzed groups, we encourage further research to consider this new frontier of violence against women and girls while growing the conversation on ethical boundaries that should be imposed upon digital platforms.

Acknowledgements

The authors thank the reviewers for their insightful guidance. They also wish to express their gratitude to Alessandro Gandini, Richard Rogers, Raffaella Ferrero Camoletto, Valerio Mazzoni, Enrica Beccalli, and Greta Tosoni for their generous help and thoughtful suggestions and conversations.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.