Abstract

Introduction

Technological advances have allowed for the estimation of physiological indicators from video data. FaceReader™ is an automated facial analysis software that has been used widely in studies of facial expressions of emotion and was recently updated to allow for the estimation of heart rate (HR) using remote photoplethysmography (rPPG). We investigated FaceReader™-based heart rate and pain expression estimations in older adults in relation to manual coding by experts.

Methods

Using a video dataset of older adult patients with and without dementia, we assessed the relationship between FaceReader’s™ HR estimations and those of a well-established Video Magnification (VM) algorithm during baseline and pain conditions. Furthermore, we examined the correspondence between the Facial Action Coding System (FACS)-based pain scores obtained through FaceReader™ and manual coding.

Results

FaceReader’s™ HR estimations were correlated with those of the VM algorithm in baseline and pain conditions. Non-verbal FaceReader™ pain scores and manual coding were also highly correlated, despite discrepancies between FaceReader™ and manual coding in the absolute value of scores based on pain-related facial action coding of the events preceding and following the pain response.

Conclusions

Compared to expert manual FACS coding and an optimized VM algorithm, FaceReader™ showed good results in estimating HR values and non-verbal pain scores.

Introduction

The collection and analysis of large amounts of data is cumbersome, time consuming, and expensive, particularly when the data are derived from momentary changes in behavior or physiological activity. Publicly available datasets have allowed researchers to address previously inaccessible research questions and to complete projects that otherwise would not have been possible. In particular, facial expression video datasets1–5 have been used in scientific and clinical research6–9 and for the development and improvement of automated facial recognition technologies.10–12 Patient recruitment and development of video datasets can take years to complete depending on the nature of the samples. Thus, the ability to extract information from existing databases is of great importance. Two promising avenues for the development of automated computer vision systems involve efforts to recognize emotions from facial expressions13–16 and the estimation of physiological indicators (e.g., heart rate, blood pressure) based on video data.17–19

The gold standard for facial expression analysis relies on manual coding by trained coders using the anatomically-based, atheoretical and objective Facial Action Coding System (FACS), 20 through which trained raters reliably record the occurrence of specific facial actions (e.g., orbit tightening, cheek raising) known as Action Units (AUs). Frame-by-frame facial analysis using this method is rigorous but labor-intensive. Moreover, because the analysis is human observer-dependent, results are not immune to biases and errors. Similarly, the conventional gold standard for measuring heart rate involves the use of psychophysiological recording equipment21–23; however, relevant heart rate information may be missing from existing video datasets. As a result, the possibility of estimating patient heart rate based on videos is of interest.

A commercial automated facial analysis software, FaceReader™ by Noldus Information Technology, 24 has been used widely in studies of facial expressions of emotion and is associated with a growing base of published research in support of its validity in recognizing and monitoring emotional expressions and FACS AUs. 10,13,16,25–27 For instance, Lewinski, Den Uyl, and Butler 10 found that FaceReader™ was able to recognize 88% of human annotated emotional labels from two available datasets. Growing interest has also resulted in an influx of its use in scientific research.28–32 In particular, there is a growing use of FaceReader™ in the pain context28,33,34; however, analysis and direct estimation of pain are not currently embedded into the Noldus program. 24 The literature on FACS AUs and nonverbal expressions related to pain is robust.35–39 Nonverbal expressions of pain (e.g., facial expressions) are integral to pain assessment because, relative to verbal report, they are less likely to be influenced by situational and cognitive executive factors. 40 This is especially crucial in the assessment of pain in individuals with limited ability to verbally communicate due to severe cognitive impairments related to dementia. 41 , 42 Validating the feasibility of automated estimations of pain based on FaceReader™ output is particularly advantageous as the manually coded FACS-based approach is very labor-intensive.

Most recently, FaceReader™ has been updated to allow for computerized estimation of heart rate through video processing. The heart rate module of FaceReader™ uses a remote photoplethysmography (rPPG) system. 24 , 43 The rPPG technique is a non-contact method of measuring cardiac activity: variations in blood flow in the tissues affect the light transmission and reflectance properties of the skin, and these changes are captured by a standard camera. 44 , 45 These changes and properties allow researchers to remotely examine specific parts of the skin and infer heart rate estimations. 46 , 47 Two published studies have used the rPPG system by FaceReader™. 48 , 49 Benedetto and colleagues 48 found that FaceReader’s™ rPPG system calculated insufficiently accurate measurements for low and high heart rates when assessed against the gold standard electrocardiogram (ECG). Given the paucity of research, further examination and improvement of this system are necessary. The addition of physiological estimations to FaceReader’s™ current domain can allow for more comprehensive and simultaneous analyses that reflect the multifaceted nature and complexity of emotions.

These two growing avenues (i.e., assessment of pain expression and heart rate estimation) for the use of FaceReader™ are important. Physiological measures and cardiovascular functions have been examined to expand the understanding of the experience of pain. Moreover, autonomic responses have been examined as non-verbal components of the pain experience.50–53 Similar to facial expressions, physiological measures (e.g., heart rate) have also provided substantial information about the experience of pain in older adults with and without dementia.54–56 In older adults with limited abilities to verbally communicate, physiological measures may provide information in addition to behavioral and social indicators to aid in the evaluation of the pain experience. For instance, blunted autonomic responses in people with dementia have been reported 55 ; however, extensive examination of the significance of autonomic responses to pain is needed. Many of the methods used to obtain physiological information (e.g., ECG) can be invasive, intrusive, or bothersome. As such, the feasibility of an automated and commercial system in estimating heart rate during pain is of interest to expand the information and use of existing video data relating to pain patients. 1

Validation of automatic facial analysis software such as FaceReader™ on existing datasets can expand its utility beyond real-time facial analysis to the collection of robust information from archival video datasets. Although there has been an exponential increase in the use of FaceReader™ in various fields, investigations validating the use of the software are limited. In addition, previous studies examining FaceReader™ performance have mainly focused on the recognition of emotion and AU targets. The primary goal of the study is to assess the feasibility of automated software (i.e., FaceReader™) in retrospectively extracting information from existing video datasets. In particular, the present study aims to: 1) contribute to the current knowledge validating the rPPG FaceReader™ system; and 2) examine the feasibility of FaceReader™ in quantifying pain estimation scores. We examined the validity and feasibility of FaceReader’s™ automated system by examining heart rate during painful situations in a video dataset of older adult patients. In the current study, we assessed FaceReader’s™ heart rate estimations and pain-related facial action coding against an established, but more labor-intensive, optimized Video Magnification (VM) heart rate algorithm57–59 and human annotated FACS data. Moreover, we investigated FaceReader’s™ performance by comparing the aggregate non-verbal scores calculated by FaceReader™ with those obtained through manual coding by trained coders. Validation of FaceReader™ in estimating heart rate and pain would allow researchers to further investigate and retrospectively analyze the vast corpus of archival video data available. We anticipated significant associations between results of manual coding and FaceReader™ outputs for AUs that are associated with pain and for heart rate estimations.

Methods

Measures

Facial action coding system

The Facial Action Coding System (FACS) is an objective measure of facial expression and activity. 20 FACS determines expression in terms of 44 action units (AU); AUs (e.g., lid tightening, brow lowering) represent discrete muscle movements, or combinations of facial muscle movements. 20 Using FACS, trained coders code AUs based on frequency (i.e., presence or absence) and intensity (i.e., from 0 to 5). 20 The FACS has been found to be highly reliable and valid in examinations of non-verbal pain behavior in older adult populations with dementia. 41 , 60 The six most consistent FACS AUs associated with pain are: brow lowering (AU 4), cheek raising and lid compression (AU 6), lid tightening (AU 7), nose wrinkling (AU 9), upper lip raising (AU 10) and eye closure (AU 43). 37 , 39

Prkachin and Solomon 39 proposed a FACS-based approach to quantify pain expressions by aggregating the scores of the FACS AUs that are consistently associated with pain into four categories of pain-related facial actions: brow lowering (AU 4), orbit tightening (AU 6/AU 7), levator tightening (AU 9/AU 10), and eye closure (AU 43). The intensity scores for brow lowering, orbit tightening, and levator tightening can be quantified using alphabetic codes (i.e., A to E) and then converted to numeric codes (i.e., 1 to 5; no action coded = 0), while eye closure is coded as 0 (i.e., no eye closure) or 1 (i.e., eye closure). 39 Intensity scores for each category are summed to indicate a total non-verbal pain expression score (i.e., ranging from 0 to 16) for each participant. 37 , 39 This approach has been previously validated in various populations including older adults with and without dementia 8 , 28 , 39 and was used for the purposes of this study.
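The scoring rule described above can be sketched in a few lines of Python. This is a minimal illustration rather than the coding procedure actually used in the study; the names `LETTER_TO_SCORE` and `pain_expression_score` are hypothetical, and the example codes are invented.

```python
# Letter intensity codes (A to E) map to 1-5; None means no action was coded (0).
LETTER_TO_SCORE = {None: 0, "A": 1, "B": 2, "C": 3, "D": 4, "E": 5}


def pain_expression_score(brow, orbit, levator, eyes_closed):
    """Sum the four pain-related facial action categories (range 0 to 16).

    brow, orbit, levator: intensity letter "A".."E", or None if not coded.
    eyes_closed: True if eye closure (AU 43) was coded, else False.
    """
    total = sum(LETTER_TO_SCORE[code] for code in (brow, orbit, levator))
    return total + (1 if eyes_closed else 0)


# Invented example: slight brow lowering (B), pronounced orbit tightening (C),
# no levator tightening, eyes closed.
score = pain_expression_score("B", "C", None, True)
print(score)  # 2 + 3 + 0 + 1 = 6
```

The maximum of 16 arises from three categories scored up to 5 each plus the dichotomous eye closure item.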

FaceReader™

FaceReader™ 24 is a commercially available automated facial expression analysis software that is capable of recognizing and analyzing the six basic emotions (i.e., happy, sad, angry, surprised, scared, disgusted) based on 20 FACS AUs described by Ekman, Friesen, and Hager. 20 FaceReader™ analyzes facial expressions by initially finding the position of the face 61 and then concurrently using two methods of face classification (i.e., creating a face model through an Active Appearance Model [AAM] 62 and a deep artificial neural network 63 ) to produce an output and calculate AU intensities (see FaceReader™ manual 64 ). FaceReader™ codes AU intensity according to the classifications described by Ekman and colleagues 20 from A to E (i.e., trace, slight, pronounced, severe, maximum), which correspond to a continuous scale from 0 (absence) to 5 (maximum intensity). 64

FaceReader™ offers additional modules that vary in functionality. The remote photoplethysmography (rPPG) module 24 is able to analyze heart rate and heart rate variability from videos by quantifying the amount of light reflected by the face, which relates to cardiac cycles and changes in blood volume, based on video captured by a camera. 43 , 65 Gudi et al. 66 tested the rPPG system used in FaceReader™ and found that repetitive facial movements (i.e., in talking conditions) can affect the performance of the system by compromising accurate face modelling and estimations. However, when validated against ECG measurements in video datasets of participants under various conditions (e.g., resting, walking, post-workout), the rPPG system showed promising results. 66 FaceReader’s™ heart rate analysis follows 3 phases: calibration (i.e., an 8.5 s period where the signal of the skin is initially sampled and processed), calculation (i.e., pulses over the previous 10 s are calculated and converted to beats per minute; bpm), and post-processing (i.e., processed to improve accuracy) 64 (for a detailed description of the remote PPG algorithm, see Gudi et al. 66 ).

Facial Expression Subscale of the Pain Assessment Checklist for Seniors with Limited Ability to Communicate-II

The Pain Assessment Checklist for Seniors with Limited Ability to Communicate-II (PACSLAC-II) is an easy-to-use observational pain assessment checklist created to assess nonverbal pain behaviors in individuals with dementia. 67 The PACSLAC-II consists of 31 pain behaviors coded as either present (1) or absent (0) and 6 subscales that correspond to the 6 non-verbal domains recommended by the American Geriatrics Society for consideration in non-verbal pain assessment (i.e., facial expressions, verbalizations and vocalizations, body movements, changes in activity patterns and routines, changes in interpersonal interactions and mental status changes). 67 , 68 The tool has been shown to be valid and reliable and to account for the variance in distinguishing painful and non-painful situations. 67 , 69 For the purposes of this study, the PACSLAC-II Facial Expression subscale was examined with items such as “tighter face” and “wincing”.

Video magnification

The Video Magnification (VM) algorithm57–59 can be used to magnify the color component of a video to detect subtle variations in color in a specific region of interest. When applied to the skin, it can estimate heart rate by quantifying the changes in skin color due to blood flow. The algorithm has numerous parameters that have to be optimized for the present study, such as filter parameters, choice of color, and magnification factor. The artifacts due to motion or changes in light conditions that can lead to erroneous estimates of the heart rate have been analyzed. 66 , 70 As such, a number of algorithms have been proposed to mitigate these artifacts. For example, motion tracking approaches have been proposed to overcome this problem 71 , 72 ; however, moving the face introduces other artifacts due to corresponding changes in light conditions. Given that our focus is to investigate FaceReader’s™ heart rate and pain expression estimations in relation to manual coding by experts, we selected video segments and skin regions with no movement to estimate the heart rate. The VM algorithm used in this study 58 , 73 , 74 has been verified through a comparison with wearable devices such as photoplethysmography (PPG) sensors. In the current study, in order to improve reliability, the VM algorithm was applied to different areas of the body such as the face and hands. As a result, light changes or motion would not affect all areas similarly.

Participants

We used a total of four participants (for in-depth analysis) from a larger, pre-existing dataset. Two participants were long-term care (LTC) residents with dementia (mean age = 87.5, SD = 4.95; 2 females) and two were independent community-dwelling older adults (mean age = 78.50, SD = 3.54; 2 females). The data for this investigation were collected as part of a larger study. 8 The study was approved by the institutional ethics review board. The obtained consent included permission to use the video data for future analysis by laboratory researchers. Additional approval for the current study, which was consistent with the original consents that were obtained, was specifically granted. Proxy consent was obtained from family members or legal guardians of LTC participants. If LTC participants demonstrated any behavioral or verbal unwillingness to participate in the study, they did not participate. For their participation, $20 was set aside for each resident to purchase items that, according to caregivers or legal guardians, the patient would enjoy (e.g., flowers, music CDs). Community participants were offered $20 for their participation. All participants and/or proxies were given an information package and opportunities to ask questions about the study. The dataset from which the four participants were derived comprises older adults with moderate to severe dementia, recruited from local LTC facilities, as well as older adults over 65 years of age, without dementia, who were living independently in the community (for a detailed explanation of the data collection procedure and participant characteristics see Hadjistavropoulos et al. 8 ).

Two types of videos were available for each participant: a baseline video and a video from a standardized physiotherapy examination which was designed to identify painful areas.75–77 For the baseline condition, the participants were video recorded as they were lying still on a bed or examination table. Following the baseline period, the participants were filmed as they engaged in a movement protocol (described by Husebo et al. 75 ) designed to identify painful areas. The baseline and examination conditions each took approximately 5 minutes.

The collected video data were manually coded frame-by-frame by trained coders using the FACS-based approach described above. A different trained coder coded the data using the PACSLAC-II. This resulted in approximately 5000 frames per baseline and examination segment. Interrater reliability for the manual FACS-based coding was based on the entire dataset and was excellent (baseline Pearson r = 0.99; exam r = 0.94). 11 Similarly, the interrater reliability of the PACSLAC-II coding by trained coders for this dataset was also excellent (baseline k = 0.92; exam k = 0.86). 11

Procedure

The four videos for this study were chosen from the larger datasets of LTC and community participants based on optimal video quality: in focus, well-lit, and appropriate filming angle. For each participant, a no-pain segment and a pain segment were identified from the baseline and examination videos, respectively. The no-pain segment (mean length = 8.05 s) was a segment from the baseline condition video that did not have any facial action or expression. The pain video segment for each participant was determined by initially scoping the PACSLAC-II and FACS-based manual coding of the examination condition dataset for the presence of pain instances. The criteria for the presence of a pain expression were as follows: coded to have a “pain expression” (P3) and at least one other facial cue present using the PACSLAC-II Facial Expression Subscale, and coded to have at least one facial action associated with pain (i.e., brow lowering, orbit tightening, levator tightening, eye closure) present. The pain segment (mean length = 8.05 s) for each participant included a pain expression sequence (mean length = 2.05 s) and the 3 s preceding and following the pain expression. Previous research has found the average length of a pain expression to be approximately 2 seconds. 78 For the purposes of this study, the front view baseline and examination videos were cropped to allow for optimal analysis of the profile view of the face in FaceReader™. The videos for each participant were then analyzed using FaceReader™ version 8 and the rPPG and Action Unit modules. This resulted in a total of approximately 1000 analyzed frames. To set an individual calibration for each participant, a neutral frame from each participant’s baseline video was chosen.

The VM algorithm was used to estimate heart rate measurements during no-pain and pain segments of the baseline and examination conditions. To improve the reliability of the VM algorithm, we applied it to different areas of the body such as the face, hands, or feet. Heart rate was estimated from video data using the VM algorithm as follows: 1) the start and end times of the video segment to be analyzed were determined; 2) a region of interest was selected from an uncovered area of the skin that remained steady throughout the segment; 3) the window length for each processing cycle was set (2 to 6 seconds); 4) the overlap factor between window processing cycles was set (10 to 90%; a higher overlap percentage improves the resolution but increases the processing time); 5) the frequency range to be processed was determined; and 6) the color component to be processed was chosen (green, red or blue). 73 , 79 We determined that green provided the most reliable estimate followed by red. Moreover, in line with our systematic approach, we chose a longer window for each processing cycle for better frequency resolution. The window has to be large enough to include at least two heart beats; however, longer windows may also mask fast variations in beats per minute (bpm). Similarly, although a narrow frequency range removes noise and artifacts, it risks missing the actual heart rate. As a result, our adaptive approach began with a wide frequency range to obtain a noisy estimate of the heart rate, then repeated the test with a narrower frequency range around the noisy estimate. 73 , 79 Based on the heart rate estimates calculated frame-by-frame by FaceReader™ and the VM algorithm, a mean heart rate value was produced for each participant for each of the no-pain and pain segments (i.e., calculated by summing the heart rate estimates [bpm] of each frame and dividing by the total number of frames per segment). We omitted a total of 17 (1.74%) frames from the FaceReader™ calculation, where FaceReader™ was not able to estimate a heart rate value because of the nature of the filming (e.g., patient movement).
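The windowing and wide-then-narrow frequency search described above can be illustrated with a simplified spectral-peak estimator. The sketch below is not the VM algorithm used in the study: it skips the magnification step entirely and simply locates the strongest frequency in the mean green-channel trace of a region of interest. The function names, the 40 to 180 bpm wide band, the 15 bpm half-width, and the synthetic 72 bpm signal are all assumptions made for illustration.

```python
import numpy as np


def dominant_bpm(signal, fps, low_bpm, high_bpm):
    """Return the strongest spectral peak (in bpm) inside [low_bpm, high_bpm]."""
    x = np.asarray(signal, dtype=float)
    x = x - x.mean()                    # remove the constant skin-tone level
    x = x * np.hanning(len(x))          # taper to limit spectral leakage
    n_fft = 8 * len(x)                  # zero-padding only interpolates the spectrum
    spectrum = np.abs(np.fft.rfft(x, n=n_fft))
    freqs_bpm = np.fft.rfftfreq(n_fft, d=1.0 / fps) * 60.0
    band = (freqs_bpm >= low_bpm) & (freqs_bpm <= high_bpm)
    if not band.any():
        raise ValueError("frequency band contains no FFT bins")
    return float(freqs_bpm[band][np.argmax(spectrum[band])])


def adaptive_bpm(signal, fps, window_s=6.0, overlap=0.9,
                 wide=(40.0, 180.0), half_width=15.0):
    """Coarse estimate on the whole segment, then narrow-band estimates per window."""
    x = np.asarray(signal, dtype=float)
    coarse = dominant_bpm(x, fps, *wide)            # wide range: noisy first guess
    low = max(wide[0], coarse - half_width)         # narrow range around the guess
    high = min(wide[1], coarse + half_width)
    size = int(round(window_s * fps))
    step = max(1, int(round(size * (1.0 - overlap))))
    per_window = [dominant_bpm(x[i:i + size], fps, low, high)
                  for i in range(0, len(x) - size + 1, step)]
    return coarse, per_window


# Synthetic green-channel trace: 8 s at 15 fps, 72 bpm pulse plus mild noise.
fps = 15
t = np.arange(0, 8, 1.0 / fps)
rng = np.random.default_rng(0)
green = (120.0 + 0.5 * np.sin(2 * np.pi * (72 / 60.0) * t)
         + 0.05 * rng.standard_normal(t.size))

coarse, per_window = adaptive_bpm(green, fps)
mean_bpm = float(np.mean(per_window))   # segment mean, as in the averaging step above
print(round(coarse, 1), [round(b, 1) for b in per_window], round(mean_bpm, 1))
```

The trade-off mentioned in the text follows from the window length: without zero-padding, a window of T seconds resolves frequencies no finer than 1/T Hz, which is 60/T bpm (10 bpm for a 6 s window, 30 bpm for a 2 s window), and a 2 s window contains two full beats only when the heart rate is at least 60 bpm.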

Using trained coder results as the comparison criterion (coder obtained FACS-based scores), we examined FaceReader’s™ non-verbal pain score and pain intensity ratings during the examination (i.e., pain) period. In order to obtain a comparable scale using the FACS-based score calculation method by Prkachin and Solomon, 39 the FaceReader™ data were transformed as follows: AU 4 was coded as brow lowering; in the presence of both AU 6 and AU 7, the AU with the higher intensity score was used to obtain a score for orbit tightening; in the presence of both AU 9 and AU 10, the AU with the higher intensity score was used to obtain a score for levator tightening; and AU 43 was coded as either present (1) or absent (0). Accordingly, for the FaceReader™ and manually coded data, a total non-verbal pain score for each frame (i.e., from 0 to 16) was calculated. For each participant the following scores were calculated for both the FaceReader™ and manual coding: an aggregate non-verbal pain score (i.e., obtained by summing the non-verbal pain scores of each frame for a segment), a mean facial action intensity score (i.e., calculated by summing the facial action intensity scores of each frame and dividing by the total number of frames per segment), and the percent agreement of the coding for the presence and absence of each facial action (i.e., the proportion of frames where FaceReader™ coding matched trained coder coding).
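The transformation and the three summary indices can be expressed compactly with NumPy. The sketch below is an illustration under stated assumptions, not the study's analysis script: the function names are hypothetical, the per-frame values are invented, and treating any non-zero intensity as "present" (for AU 43 and for percent agreement) is an assumption, since the text does not state a threshold.

```python
import numpy as np


def framewise_pain_scores(au4, au6, au7, au9, au10, au43):
    """Per-frame non-verbal pain score (0 to 16) from AU intensity arrays."""
    brow = np.asarray(au4, dtype=float)
    orbit = np.maximum(au6, au7)        # higher of AU 6 and AU 7
    levator = np.maximum(au9, au10)     # higher of AU 9 and AU 10
    # Assumption: any non-zero AU 43 intensity counts as eye closure present.
    eye = (np.asarray(au43, dtype=float) > 0).astype(float)
    return brow + orbit + levator + eye


def percent_agreement(auto_intensity, manual_intensity):
    """Percent of frames where both codings agree on presence vs. absence."""
    auto_present = np.asarray(auto_intensity, dtype=float) > 0
    manual_present = np.asarray(manual_intensity, dtype=float) > 0
    return 100.0 * float(np.mean(auto_present == manual_present))


# Invented four-frame example of automated AU intensities (0 to 5 scale).
scores = framewise_pain_scores(
    au4=[0, 1, 2, 0], au6=[0, 2, 3, 1], au7=[1, 1, 4, 0],
    au9=[0, 0, 1, 0], au10=[0, 0, 2, 0], au43=[0, 0, 1, 0],
)
aggregate = float(scores.sum())         # aggregate non-verbal pain score
mean_score = float(scores.mean())       # mean score per frame
brow_agreement = percent_agreement([0, 1, 2, 0], [0, 0, 2, 0])
print(scores.tolist(), aggregate, mean_score, brow_agreement)
# [1.0, 3.0, 9.0, 1.0] 14.0 3.5 75.0
```

Because the aggregate is a sum over frames, it grows with segment length, which is one reason the mean per-frame score is reported alongside it.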

Analysis

We calculated two correlation coefficients to determine the relationship between FaceReader™ and VM algorithm’s mean heart rate estimations during the no-pain and pain conditions. To examine the relationship between the FaceReader™ and manual coding to detect pain-related facial actions, the correspondence between FaceReader’s™ pain coding and two criterion indices (i.e., trained coder aggregate non-verbal pain score and mean facial action intensity score) was examined. We calculated two correlation coefficients for the aggregate non-verbal pain score of FaceReader™ and manual coding: 1) the correspondence of the aggregate non-verbal pain scores for the pain segment (i.e., including the 3 s preceding and following the pain expression); and 2) the correspondence of the aggregate non-verbal pain scores during the pain expression. Similarly, two correlation coefficients were calculated for the mean facial action intensity to assess the relationship between the results that were based on FaceReader™ and those that were based on trained coders for the pain segment and during the pain expression. We also calculated difference scores based on the mean aggregate non-verbal pain scores. Moreover, the mean percent agreement was calculated by comparing the number of instances where FaceReader’s™ coding matched the presence and absence of a facial action according to the coders’ FACS-based results.
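With four participants, each correlation rests on four pairs of values (df = 2). A short sketch of the Pearson computation is given below; the function names and the bpm values are invented for illustration and are not the study's data. For df = 2 specifically, the two-sided p value from the t distribution reduces to 1 − |r|, so significance at p < 0.05 requires |r| > 0.95 and p < 0.01 requires |r| > 0.99.

```python
import math


def pearson_r(x, y):
    """Pearson product-moment correlation of two equal-length sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def two_sided_p_df2(r):
    """Two-sided p value for a Pearson r based on n = 4 pairs (df = 2).

    With df = 2 the t-distribution CDF has a closed form, and substituting
    t = r * sqrt(2) / sqrt(1 - r**2) simplifies the p value to 1 - |r|.
    """
    return 1.0 - abs(r)


# Invented mean heart rates (bpm) for four participants under two methods.
method_a = [60.0, 70.0, 80.0, 90.0]
method_b = [62.0, 69.0, 81.0, 91.0]

r = pearson_r(method_a, method_b)
p = two_sided_p_df2(r)
print(round(r, 3), round(p, 4))
```

This makes plain how demanding the significance thresholds are with so few pairs, and why the correlations should be read alongside the difference scores.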

Results

FaceReader™ and VM algorithm’s heart rate estimations

Table 1 shows the relationship between the mean heart rate estimations obtained through FaceReader™ and VM algorithm during no pain (baseline) and pain conditions. The Pearson correlations for heart rate estimations during no-pain and pain conditions were large and significant.

Relationship between the mean heart rate estimations (bpm) obtained through FaceReader™ and VM algorithm during no pain and pain conditions.

Note: Pearson correlations (r) are significant at p < 0.01. Each correlation coefficient reflects 4 pairs of values (i.e., the FaceReader and VM algorithm heart rate estimates during no pain and pain states). df = 2.

LTC: long-term care; VM: Video Magnification; bpm: beats per minute.

FaceReader™ and manually coded pain data

Tables 2 and 3 show the difference and correspondence scores of FaceReader™ and trained coder aggregate non-verbal pain scores for the pain segment (i.e., including 3 s before and after the pain expression) and during the facial pain expression. Pearson correlation coefficients between FaceReader™ and manually coded data for the pain segment and during the pain expression were both large and significant, with the relationship between FaceReader™ and manually coded data during the pain expression being slightly stronger than the relationship of non-verbal pain scores for the entire pain segment (i.e., the segment that included the 3 s before and after the pain expression).

Relationship between the mean non-verbal pain scores obtained through FaceReader™ and manual coding for the videos incorporating the pain expression as well as preceding and subsequent 3-s periods.

LTC: long-term care; M: mean; SD: standard deviation.

Relationship between the mean non-verbal pain scores obtained through FaceReader™ and manual coding during the facial pain expression (excluding the preceding and subsequent 3-s periods).

LTC: long-term care; M: mean; SD: standard deviation.

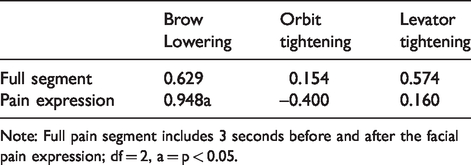

We also calculated Pearson correlation coefficients to determine the relationship between FaceReader™ and trained coders’ mean pain intensity ratings for the three components of the facial actions of the pain index (brow lowering, orbit tightening, levator tightening) during the full pain segment and when pain was expressed; the results demonstrated great variability and mostly nonsignificant relationships (Table 4). Eye closure intensity was not examined due to the dichotomous (0 or 1) nature of the score, which often resulted in 0 variance. Table 5 shows the mean percent agreement of facial action presence and absence. The degree of agreement where FaceReader™ coding matched manually coded data during the pain segment ranged from 42% to 79%. FaceReader™ showed greater correspondence to manual coding during the instance when pain was expressed, with level of agreement as high as 85%. FaceReader™ matched the coder 30–79% of the time for presence and absence of facial actions before and after the pain expression.

Pearson correlations (r) for FaceReader™ and manual coding’s mean pain related facial action intensity scores.

Note: Full pain segment includes 3 seconds before and after the facial pain expression; df = 2, a = p < 0.05.

Mean percent agreement of facial action presence and absence between FaceReader™ and manual coding.

Discussion

We aimed to evaluate the feasibility of FaceReader™ in retrospectively extracting information from an existing video dataset of older adults with dementia. With respect to our objective of further validating the rPPG FaceReader™ system, to the best of our knowledge, this study represents the first published attempt to validate the FaceReader™ heart rate module against manual coding by an expert who used the well-established VM algorithm and optimized its parameters to the video segment being analyzed. Our results support the validity of the FaceReader™ heart rate module, which demonstrated good correlations with our heart rate estimates. The key to our VM analysis was selecting video segments that had minimal movement and changes in light conditions. This finding corroborates the results of a yet-to-be-published study conducted by the developers of the system testing the current version of the FaceReader™ heart rate module. 24 A previous investigation by Benedetto et al. 48 led to the conclusion that FaceReader™ overestimated lower heart rates and underestimated higher heart rate measurements. The discrepancy between our study and Benedetto et al.’s 48 could possibly be due to methodological differences including the method used to capture facial expressions and possibly differences in FaceReader™ versions. Although more research is required, our findings add confidence to FaceReader’s™ ability to evaluate heart rate using video of older adult participants.

Regarding our effort to validate FaceReader™ pain assessment estimates against manual coding of non-verbal expressions, we obtained high correlations that supported the validity of FaceReader™. This supports the efficacy of fine-grained analysis (e.g., frame-by-frame) methods to recognize facial pain responses and provide substantial information. 8 Moreover, our investigation adds to the growing body of literature demonstrating the performance of FaceReader™. 10 ,13,14,25–27

To the best of our knowledge, this is the first investigation of FaceReader’s™ heart rate module during painful conditions in older adults. Our results support the feasibility of FaceReader™ to measure heart rate during pain situations. Although physiological responses during pain have shown considerable promise in our understanding of the experience,50–53, 80 research on clinical application of such responses has been very limited. An example of a potential application might be during psychological interventions (e.g., distraction strategies) designed to mitigate negative emotional states (e.g., fear) during acute pain. 81 , 82 Monitoring physiological correlates (e.g., heart rate) of fear and fear of pain 83 could facilitate the direct evaluation of such interventions. More research in this area is needed.

A caveat was that despite the high correlations, the absolute values of pain scores based on FaceReader™ versus manual coding were discrepant during segments that included the run-up to and the denouement of a pain expression. This is evident in the mean differences between the non-verbal pain scores calculated by FaceReader™ and manual coding for the full pain segment and when pain is expressed. This suggests that FaceReader™ coded more pain-related AUs than trained coders. Specifically, FaceReader™-based scores were higher than those of trained coders; however, this discrepancy effectively disappeared when only examining the scores for the target pain response (i.e., precise period of pain expression on the video). The discrepancy suggests that FaceReader™ may be detecting additional facial movements that may not have been deemed to be pain indicators in the manual coding. For example, degree of coding agreement was lowest for instances before and after the pain response for brow lowering, orbit tightening, and eye closure in comparison to the specific pain segment. Although videos were cropped to allow for optimal FaceReader™ analysis and specific pain segments were determined in order to remove extraneous artifacts (i.e., other facial expressions), a possible explanation for this discrepancy could be that FaceReader™ is based on an algorithm that is subject to different rules than manual coding. As such, FaceReader™ may be picking up incipient movements in the anticipatory window and/or vestigial movements in the denouement window throughout the instance that pain is experienced. The movements could be FACS AUs to which the algorithm may be more sensitive or which the algorithm weighs differently.

Limitations and future directions

We recognize that the small number of videos analyzed in this study limits the generalizability of our findings. In addition, we recognize that there may be a bias towards higher quality videos, as our analysis was limited by the video quality parameters of the FaceReader™ system and by our ability to manually obtain reliable heart rate results that were consistent with each other when examining each patient’s face and hands. Although this limits the generalizability of retrospective video analysis, future work could test the efficacy of FaceReader™ in videos of less optimal quality against the VM algorithm and manual FACS-based scores.

Moreover, although manual coding was set as the criterion for this study, human coders are prone to biases and errors. In addition, although the reliability of the manual coding for this dataset was supported, reliability merely sets an upper limit for validity and should therefore be interpreted cautiously. Nonetheless, we believe that having individual proficient coders use two analysis methods (i.e., FACS and PACSLAC-II) to define the pain segments adequately addresses the manual coding validity issue. Discrepancies in scores between FaceReader™ and manual pain AU coding could also be a result of the non-facial movements (e.g., head movements) that typically accompany a pain response and that could have produced “noise” in the automated coding.84 Future research should investigate the scores calculated by FaceReader™ in more controlled and static expressions of pain.

Another limitation of our study was the nature of the archival videos themselves. We had little control over technical features inherent to this dataset that may have affected the analysis. For example, the FaceReader™ manual64 outlines recommended settings for optimal heart rate analysis (e.g., a frame rate of at least 15 frames per second (fps) and preferably 30 fps). Although this dataset had a frame rate of 15 fps, a higher frame rate may have yielded more accurate estimations. In addition, in contrast to studies of rPPG systems that analyze a wide range of heart rates and employ larger sample sizes,85 our study examined only segments of approximately 8.05 s and 4 participants. Although this length was deliberately chosen because of the brief nature of pain expressions (e.g., lasting an average of 2 s), longer time periods may yield more comprehensive measurements. Future research should examine the efficacy of FaceReader’s™ rPPG system in relation to pain in larger samples and over longer durations, while adhering to the recommended technical features of the camera, movements, and lighting outlined by Noldus.64
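The effect of segment length can be made concrete with a short calculation. The sketch below assumes a generic frequency-domain heart rate estimate, in which the pulse is read from the peak of a discrete Fourier spectrum; this is an assumption for illustration and is not a description of FaceReader's™ internal method, and the function name `segment_properties` is hypothetical. Under that assumption, the spacing between adjacent frequency bins is the reciprocal of the segment duration, so short segments give coarse heart rate resolution unless interpolation or zero-padding is used.

```python
def segment_properties(duration_s, fps):
    """Basic sampling properties of a video segment for spectral HR estimation.

    Assumes a plain DFT over the whole segment (an illustrative assumption,
    not the documented FaceReader method).
    """
    n_frames = int(duration_s * fps)          # whole frames in the segment
    freq_resolution_hz = 1.0 / duration_s     # spacing between DFT bins
    return {
        "n_frames": n_frames,
        "bpm_resolution": 60.0 * freq_resolution_hz,  # bin spacing in bpm
        "nyquist_bpm": 60.0 * fps / 2.0,              # highest resolvable rate
    }


# Segment length and frame rate reported for this dataset.
props = segment_properties(duration_s=8.05, fps=15)
for name, value in props.items():
    print(f"{name}: {value:.2f}")
```

With 8.05-s segments the bin spacing is roughly 7.5 bpm regardless of frame rate, whereas a 30-s segment would give 2 bpm under the same assumption. The sketch addresses only segment duration; it does not model the other ways in which frame rate, movement, and lighting can affect rPPG accuracy.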

The validity and feasibility of FaceReader™ as a heart rate and pain estimation tool can help expand the information that can be extracted from existing video datasets. For instance, this would be helpful for researchers who want to retrospectively examine physiological estimations using FaceReader’s™ rPPG system. In addition, the findings give confidence in the growing use of FaceReader™ for the estimation and detection of pain from video data. Future investigations may incorporate FaceReader™ pain estimation scores based on facial expressions in studies of pain in other populations. Moreover, estimating the intensity of pain from facial expressions using an automated system can aid in the detection and recognition of nonverbal pain indicators in older adults with severe dementia who have difficulty verbally communicating their pain. This avenue could also guide the development and testing of automated pain detection systems.12 In addition, the findings lend support to the potential use of FaceReader™ in studies examining physiological reactions during pain in retrospective analysis or when direct estimation may not be feasible. Future investigations into this promising avenue of data collection and analysis will attempt to strengthen our current findings regarding concordance and reliability and to incorporate previously unavailable heart rate data into the understanding of pain and functioning in older adults. The use of FaceReader™ technology to examine archival video data brings previously inaccessible research questions and projects within reach, such as further delineating the role of autonomic responses in the experience of pain, and creates opportunities to more fully utilize the extensive archives of video data available to researchers.

Conclusion

The concordance of FaceReader™ with an optimized VM algorithm for estimated heart rate values, and with standard manual FACS coding for non-verbal pain scores, in older adults with and without dementia was supported. The results of our investigation add to the growing body of literature demonstrating the validity of FaceReader’s™ rPPG system and AU recognition. Discrepancies in the calculated non-verbal pain scores between FaceReader™ and trained coders indicate that future research should assess the correspondence of FaceReader’s™ non-verbal pain estimations with manually coded data in images of facial pain expressions, in larger samples, and over longer periods. The concordance and effectiveness of FaceReader™ as a heart rate estimation tool are promising, particularly as a means of accessing health information in vulnerable and under-treated populations such as older adults.

Footnotes

Acknowledgements

Findings within this paper were previously presented at AGE-WELL’s 5th Annual Conference, New Brunswick, 2019. This work was supported, in part, through funding from the AGE-WELL Network of National Centres of Excellence and the Canadian Institutes of Health Research.

Contributorship

LIRC drafted the manuscript and completed the statistical analysis and most of the literature review. She also contributed to the study conceptualization. MEB contributed to the write-up of the manuscript and the approach to coding. TH contributed to the study conceptualization and manuscript write-up, including interpretation of results. His grant also contributed to the funding of this project. KMP contributed to the manuscript preparation and the approach to coding of the pain data. RG analyzed the heart rate data and contributed to manuscript preparation and conceptualization, including interpretation of the data.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

This work was supported through a grant from the AGE-WELL Network of Centres of Excellence.

Guarantor

TH.