Abstract

Background

Despite the proven effectiveness of traditional screening devices in Early Hearing Detection and Intervention (EHDI) programs, the costs of these instruments are too high for them to be a sustainable solution in low- and middle-income countries. Demand is therefore increasing for accessible, affordable and scalable alternatives, and Mobile Health (mHealth) hearing screeners are one such alternative for supporting EHDI programs.

Objective

This review reports on the validity, costs and status (commercially or non-commercially available) of mHealth hearing screeners available globally for the paediatric population.

Method

A systematic search of PubMed, Scopus and Google Scholar was conducted. Articles published from 2014 onwards with study populations aged 0–18 years were eligible. Thirty-eight studies met the inclusion criteria, and 27 mHealth screeners were identified in this review.

Results

Three objective screeners were identified: OAEBuds, hearOAE and Off the Shelf OAE. Twenty-four subjective screeners were identified, mainly using pure-tone audiometry, game-based tests and speech-in-noise tests. The subjective screeners mostly target children above 3 years of age. Sensitivity and specificity were comparable to those of traditional screeners, although validation methodologies varied. Only one objective screener is commercially available. Few screeners are available free of cost or as open-source software. Unlike previous reviews of mHealth devices, this review also captured objective hearing screeners.

Conclusion

The review highlights the need for more mHealth-based screeners for younger age groups, particularly infants and toddlers, as most of the identified screeners catered to children older than three years.

Introduction

Early detection of hearing loss is critical for timely intervention through appropriate hearing device fitting and habilitation, which in turn supports optimal auditory, speech and language development. 1 Objective hearing tests, such as Otoacoustic Emissions (OAE) and Automated Auditory Brainstem Response (AABR), 2 are commonly used for newborn hearing screening. For older children, hearing screening at school entry or paediatric screening using pure-tone audiometry and tympanometry 3 are effective complementary strategies. Together, these approaches have consistently demonstrated efficacy in the early identification and management of hearing impairment.

Despite their proven effectiveness, these screening programs remain limited in hospitals providing newborn hearing screening and in schools, particularly in low- and middle-income countries (LMICs). Access to hearing care via digital health platforms has the potential to address the rising out-of-pocket healthcare costs 4 documented in developing countries.

Mobile Health (mHealth) is a component of eHealth and is defined as medical or public health practice supported by mobile devices, such as mobile phones, patient monitoring devices, personal digital assistants and other wireless devices. 5 The mHealth service delivery model offers several advantages, including accessibility, convenience, adaptability, patient-centeredness, data insights, efficiency and effectiveness. 6 It also supports flexible administration, from self-administration to screening conducted by community health workers, facilitating large-scale program implementation. Integrating health data with cloud-based data systems and electronic health records enables effective referral and follow-up. 7 According to a recent global report, almost 4 billion people have access to a smartphone with the Internet. 8 In India, 9 around 94.4% of the rural population and 96% of the urban population own a smartphone, and more than 90% have access to the Internet. This widespread digital reach positions mHealth as a powerful tool for bridging healthcare delivery gaps, particularly in underserved regions. mHealth technology also removes the dependence on bulky hardware, offering portable handheld devices and enhanced data management for delivering healthcare services. Furthermore, the integration of artificial intelligence (AI) further strengthens mHealth services. 10

Since 2012, there has been a growing trend in the adoption of mHealth technologies for hearing care services, which are used for health promotion, screening, diagnosis, treatment and support. 11 However, recent systematic and scoping reviews revealed a paucity of validated, paediatric-specific mHealth-based hearing screening tools.11–14 Bright and Pallawella 15 reviewed the validity of mHealth ear and hearing screeners available through commercial app stores. Most of the applications lacked validation in peer-reviewed journals, and there was no evidence of validity specific to the paediatric population. Chen et al. 16 performed a meta-analysis to determine the accuracy of smartphone-based audiometry and speech recognition tests and reported high pooled diagnostic accuracy. However, that review primarily reflected adult populations and did not address test paradigms for younger children. Neither review reported the availability of objective mHealth applications.

Abbey and Douglas 14 and Frisby et al. 11 conducted comprehensive reviews of the mHealth applications available globally. The review by Abbey and Douglas 14 did not include paediatric-specific hearing screeners, whereas Frisby et al. 11 mapped the use of mHealth-supported hearing care services across the continuum of care, encompassing health promotion, screening, diagnosis, treatment and support. Frisby et al. also reported that hearing diagnostic and treatment applications were more available in high-income countries, whereas screening devices were more available in LMICs. Although comprehensive in scope, neither review evaluated the validity of the identified mHealth applications or provided information on their commercial availability.

Hearing screening technologies can be classified by measurement method as objective or subjective. Objective measures assess auditory function without requiring a behavioural response from the patient/child and rely on physiological signals such as OAE or auditory evoked potentials.17,18 Subjective measures depend on the patient's/child's perceptual or motor responses to auditory stimuli. Conditioned play audiometry, visual reinforcement audiometry and behavioural observation audiometry are examples of subjective hearing tests. 19

In terms of service delivery, traditional hearing screening devices are stand-alone or desktop-based systems interfaced with a computer. 20 mHealth hearing screening refers to hearing screening supported by mobile devices such as smartphones and tablets, in which the mobile device is portable and serves as the primary interface for test administration, data processing and result storage.7,14,15,21,22

This review therefore sheds light on recent innovations and advancements in mobile phone–interfaced hearing screeners tailored for paediatric populations. It also captures the nature of the tests (objective/subjective) and provides insights into the commercial availability and costs of these screeners. Understanding these technological developments is important because it helps identify gaps, avoid duplication of existing efforts and guide the translation of validated, cost-effective paediatric hearing screening tools into scalable solutions.

This systematic review aims to report mHealth/mobile phone or tablet-interfaced innovations that support paediatric hearing screening developed in the period of 2014–2024. Furthermore, the validity and cost of such innovations are described in detail.

Methodology

Protocol and registration

The systematic review protocol was registered in the Prospective Register of Systematic Reviews (PROSPERO) under the registration number CRD42024536576 (https://www.crd.york.ac.uk/PROSPERO/view/CRD42024536576).

This systematic review followed the Preferred Reporting Items for Systematic Reviews and Meta-Analyses (PRISMA) guidelines. 23 The checklist is provided as Appendix-4 in the Supplementary Material.

Search strategy

The PubMed, Scopus and Google Scholar databases were searched using a search strategy reviewed by all authors before the database search. These databases were chosen based on their availability and accessibility. In addition, the bibliographies of papers selected against the eligibility criteria were searched manually to identify further relevant research, which was subjected to the same screening and selection process.

Search strategies were tailored for each of the above-mentioned databases, incorporating MeSH terms and Boolean operators. A pilot search was conducted in each database to refine the keywords and identify synonyms for the search strategy. The finalised search strategy was shared with all authors, and discussions on the inclusions and exclusions of the search terms were held to reach a consensus.

Details of the search strings are provided in the supplementary file (Appendix-1). Title screening was carried out from April to June 2024, abstract screening was completed in November 2024 and full-text screening was completed in March 2025. One relevant article published during the review process was identified through hand searching and included.

Inclusion criteria

Studies of any design reporting quantitative data were included, with eligibility defined using the PICO framework. 24

Additionally, studies were only included if they were determined to have low bias based on the Joanna Briggs Institute (JBI) quality assessment checklist ratings (refer to Appendix-3).

Screening studies for eligibility

The title, abstract and full-text screening of the studies were conducted using the web-based Rayyan software (premium license was purchased). All duplicates were automatically flagged by the software, and the first author reviewed them before confirming their removal.

The titles and abstracts of the studies were screened against the inclusion and exclusion criteria by the first author (VS). After random allocation, 40% of the articles were screened by the second author (VR). Any discrepancies that arose were discussed among the reviewers, leading to a common consensus.

Two reviewers (VS and VR) screened the full texts for inclusion, and any discrepancies between their decisions were discussed and resolved. Both reviewers were blinded to each other's screening decisions in the Rayyan software.

Data extraction and synthesis

The data extraction categories were prepared and reviewed by all reviewers. Google Sheets was used to document data extracted from the full-length articles (refer to Appendix 2). The extracted results were synthesised using a narrative approach.

The cost and commercial availability of the identified screeners were manually searched on the Internet, Google Play Store and App Store. Additionally, email communications were sent to the authors to obtain further information regarding the screeners whenever necessary.

Results

Quality assessment

Following data extraction, the JBI critical appraisal checklist was applied. 25 The reviewers rated each checklist item as Yes, No or Unclear. If a study's overall appraisal had two or more 'No' responses, the study was considered to have 'high bias' and was excluded from the review. Overall bias was considered 'low' if no more than one item was rated 'unclear/high bias', 'medium' if two items were rated 'unclear/high bias' and 'high' if three or more items were rated 'unclear/high bias'. Only studies with low and medium bias were included for data extraction; studies considered to have a high risk of bias were excluded from the review. Google Sheets was used to rate the studies. Two reviewers (VS/VR) appraised the articles independently, and consensus discussions among the authors resolved any disagreements.
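The counting rule above can be expressed as a small function. This is a minimal sketch, assuming that items rated 'No' and 'Unclear' both count as items of concern and that two or more 'No' ratings mark a study as high bias regardless of the total; the function names are hypothetical and are not part of any JBI tool.

```python
from collections import Counter


def overall_bias(ratings):
    """Map per-item JBI ratings ('Yes', 'No', 'Unclear') to an overall bias level.

    Assumption: 'No' and 'Unclear' both count as items of concern, and two or
    more 'No' ratings mark the study as high bias regardless of the total.
    """
    counts = Counter(r.strip().lower() for r in ratings)
    if counts["no"] >= 2:
        return "high"
    concerns = counts["no"] + counts["unclear"]
    if concerns <= 1:
        return "low"
    if concerns == 2:
        return "medium"
    return "high"


def eligible_for_extraction(ratings):
    """Only low- and medium-bias studies proceed to data extraction."""
    return overall_bias(ratings) in {"low", "medium"}


# Hypothetical ratings for an eight-item cross-sectional checklist.
example = ["Yes"] * 6 + ["Unclear", "Yes"]
print(overall_bias(example), eligible_for_extraction(example))  # low True
```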

All studies had a cross-sectional design, so only the JBI cross-sectional appraisal tool was used. All studies included at the full-text stage were rated as having low bias and were included (refer to Appendix-3).

The search yielded a total of 4020 articles (PubMed: 3280; Scopus: 605; Google Scholar: 134; hand searched: 1). During title and abstract screening, 186 duplicate articles were removed. Of the remaining 3834 articles, 3698 were excluded for reasons such as irrelevance to the study topic, inadequate data, absence of a validity measure and not being an mHealth technology. In total, 136 articles were selected for full-text screening.
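The record counts reported above can be re-derived with simple arithmetic, a quick consistency check when filling in a PRISMA flowchart. The variable names are illustrative only.

```python
# Records identified per source, as reported in the text.
identified = {"PubMed": 3280, "Scopus": 605, "Google Scholar": 134, "Hand search": 1}
duplicates_removed = 186
excluded_at_title_abstract = 3698

total_identified = sum(identified.values())
after_deduplication = total_identified - duplicates_removed
sent_to_full_text = after_deduplication - excluded_at_title_abstract

print(total_identified, after_deduplication, sent_to_full_text)  # 4020 3834 136
```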

Preferred Reporting Items for Systematic Reviews and Meta-Analyses chart

Of the 136 articles assessed at full text, 38 were included in this systematic review. The remaining articles were excluded for reasons such as population mismatch (n = 27), lack of validation/screening focus (n = 24), inappropriate study design (n = 10), lack of mHealth technology (n = 11), inappropriate study focus (n = 20) and publication before 2014 (n = 1). The inclusion process is depicted in a PRISMA flowchart 26 (refer to Figure 1).

Preferred Reporting Items for Systematic Reviews and Meta-Analyses (PRISMA) chart.

Overview of articles included

The studies were predominantly from the USA (n = 8)27–34 and South Africa (n = 6).35–40 Three studies each were conducted in India,41–43 Australia 44–46 and Brazil;47–49 two studies each in Indonesia,50,51 Thailand,51,52 China 53,54 and multiple countries;55,56 and one study each in Nicaragua, 57 Denmark, 58 Taiwan, 59 Turkey, 60 the Netherlands, 61 Belgium 62 and the United Kingdom. 63

Mobile-interfaced objective and subjective hearing screening tools for the paediatric population

Of the 38 included studies, four described objective hearing screeners, and 35 described subjective hearing screening tools/devices. However, only 27 of these tools were validated as mobile-interfaced paediatric hearing screening tools.

Classification of objective and subjective screeners

Objective hearing screeners

Number of identified screeners: Objective hearing screeners accounted for only three of the 27 validated screeners, all of which were OAE-based (OAEBuds, 28 Off the Shelf OAE 27,29 and hearOAE 35).

Testing site: These tools were validated in hospitals (n = 2)27,35 and in quiet rooms (n = 2).28,29

Test personnel: The instruments were operated by study coordinators (n = 2)27,29 and by an audiologist (n = 1); 35 one study did not report the screening personnel (n = 1).28

Population: The ages of participants tested for validation ranged from birth to 32 years. However, most participants were newborns and infants, which makes these tools well suited to newborn hearing screening, as the tests do not require any behavioural participation.

Test paradigms: Two studies described DPOAE,27,28 one study described TEOAE 29 and one study included both DPOAE and TEOAE. 35

Subjective hearing screeners

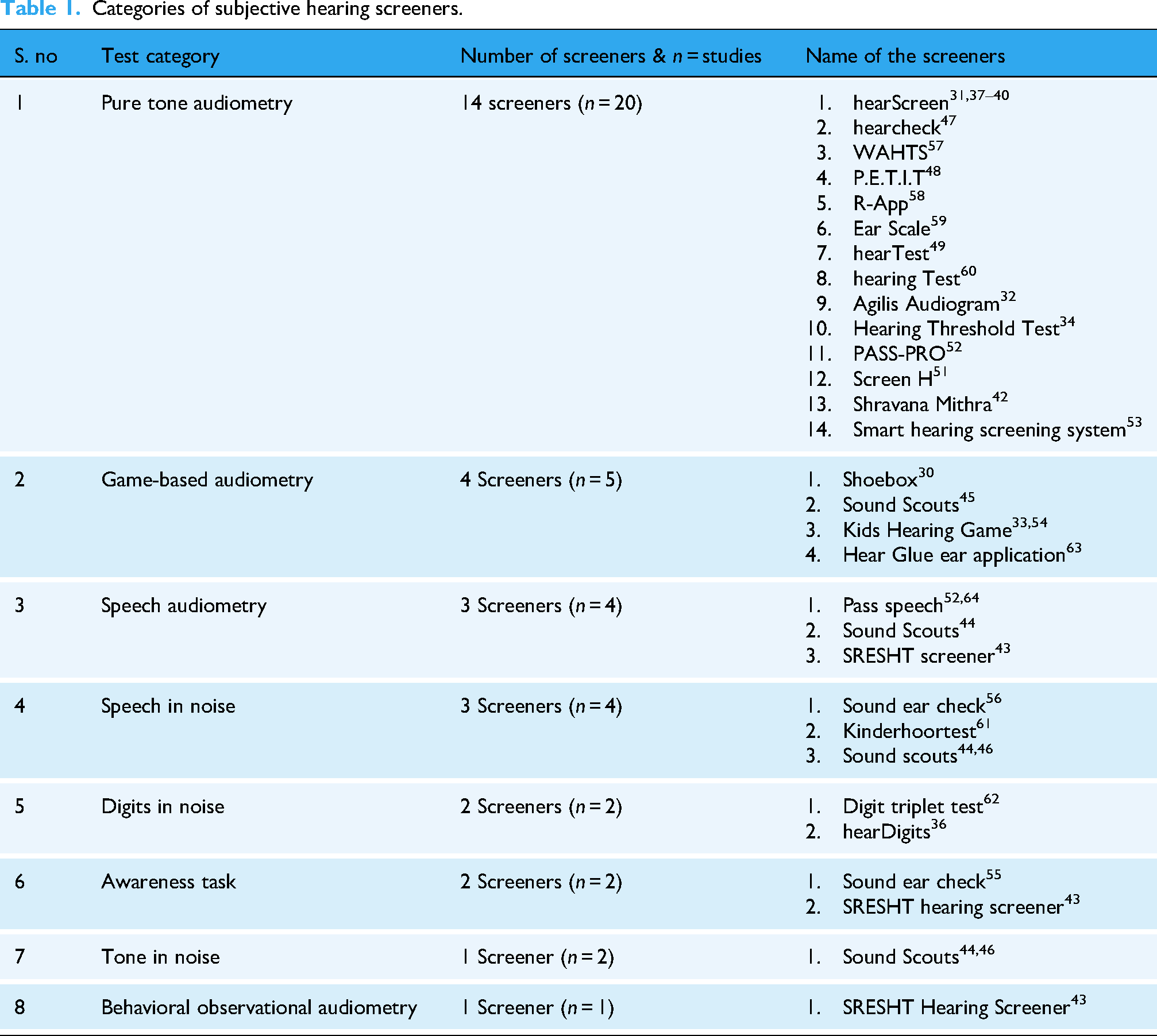

Number of identified screeners: Subjective screeners accounted for 24 of the 27 screeners and used multiple test paradigms. These tools relied on the active participation of children (refer to Table 1).

Categories of subjective hearing screeners.

Testing site: Studies were conducted mainly in schools (n = 13),38,40,44–46,48,52,53,56,57,59,61,62 quiet rooms at community sites (n = 7)33,36,37,41,42,55,60 and quiet rooms in hospitals (n = 7),39,43,49,54,55,58,64 followed by sound booths (n = 5),30–32,34,63 a sound booth with simulated noise (n = 1) 51 and an unreported site (n = 1). 47

Test personnel: Most tests were self-administered by the child (n = 18),30,32–34,39,44–46,49,54–56,58,59,61–64 followed by audiologists (n = 4),43,48,49,53 community health workers (n = 2),31,57 audiology students (n = 2),37,40 an ENT assistant (n = 1) 41 and a researcher (n = 1); 36 six studies did not report the personnel (n = 6).42,47,50–52,60

Population: The ages of participants tested for validation ranged from 2 days to 97 years. Because different screeners were validated on different age ranges, Table 2 shows the minimum age at which each screener can screen for hearing loss.

Minimum age at which the subjective hearing screeners can screen for hearing loss.

These tools are predominantly aimed at preschool and school-aged children, enabling mass screening in community, school or home environments. Unlike objective screeners, subjective screeners require the active participation of children, and most have been validated only for children above three years of age.

Commercial availability of hearing screeners

The commercial availability of the 27 identified mHealth screeners was assessed. Among the objective hearing screeners, hearOAE is commercially available. Off the Shelf OAE, described in two studies, 27,29 is available as open source, so only its manufacturing cost was reported. OAEBuds 28 are not commercially available, but their manufacturing costs have been reported; hence, both Off the Shelf OAE and OAEBuds were considered prototypes.

Among the subjective hearing screeners (n = 24), 15 were commercially available, one (Shoebox) had discontinued its services for the paediatric population and no information on commercial availability was found for the remaining eight.

The cost profiles of the screeners were compiled from publicly available sources. Authors and vendors were also contacted to obtain quotations for the screeners (refer to Table 3).

Cost and commercial availability of the screeners.

Validity of the screening tools

Different validity measures were used for the mHealth screeners. For objective hearing screeners, sensitivity and specificity (n = 3) were assessed against commercial OAE screeners, 27–29 and concordance (n = 1) was assessed against a diagnostic OAE instrument. 35 For subjective hearing screeners, the measures were sensitivity and specificity (n = 21), Cohen's kappa (n = 1), Pearson correlation (n = 3), Spearman rank correlation (n = 1), absolute value comparisons (n = 2) and mean values (n = 3) (refer to Tables 4 and 5).
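Most of these measures derive from a 2 × 2 table of screener outcome against the reference standard. The sketch below, using hypothetical counts that are not taken from any included study, shows how sensitivity, specificity, positive predictive value (PPV) and Cohen's kappa relate to that table. The class name is illustrative only.

```python
from dataclasses import dataclass


@dataclass
class ScreeningTable:
    """Screener result (refer/pass) against a reference standard such as diagnostic PTA."""
    tp: int  # refer, hearing loss confirmed
    fp: int  # refer, normal hearing
    fn: int  # pass, hearing loss confirmed
    tn: int  # pass, normal hearing

    @property
    def n(self) -> int:
        return self.tp + self.fp + self.fn + self.tn

    def sensitivity(self) -> float:
        return self.tp / (self.tp + self.fn)

    def specificity(self) -> float:
        return self.tn / (self.tn + self.fp)

    def ppv(self) -> float:
        return self.tp / (self.tp + self.fp)

    def cohens_kappa(self) -> float:
        # Observed agreement corrected for the agreement expected by chance.
        p_observed = (self.tp + self.tn) / self.n
        p_refer = (self.tp + self.fp) / self.n  # screener refer rate
        p_loss = (self.tp + self.fn) / self.n   # reference hearing-loss rate
        p_chance = p_refer * p_loss + (1 - p_refer) * (1 - p_loss)
        return (p_observed - p_chance) / (1 - p_chance)


table = ScreeningTable(tp=18, fp=8, fn=2, tn=72)  # hypothetical counts
print(f"sens={table.sensitivity():.2f} spec={table.specificity():.2f} "
      f"ppv={table.ppv():.2f} kappa={table.cohens_kappa():.2f}")
# sens=0.90 spec=0.90 ppv=0.69 kappa=0.72
```

PPV depends on the prevalence of hearing loss in the sample, whereas sensitivity and specificity do not, so PPV values from different study populations are not directly comparable.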

Validity of objective hearing screeners.

Validity of subjective hearing screeners.

The validity of all screeners was categorised based on their commercial availability.

Commercially available validated mHealth Screeners

Objective hearing screeners:

Only hearOAE was commercially available, and it offers two test types. For DPOAE, the pass criterion is an SNR greater than 6 dB in four out of six frequencies; for TEOAE, it is an SNR of 3 dB or more in three out of five frequencies. 35 The study reported a concordance of 89.7% for DPOAE and 85% for TEOAE. 35
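The hearOAE pass rules are "k of n" criteria on per-frequency SNR. The following is a minimal sketch, assuming that "four out of six" means at least four frequencies, and using hypothetical SNR values; it is not hearOAE's own software, and the function names are illustrative.

```python
def count_bands(snr_db, threshold_db, inclusive):
    """Count frequency bands whose SNR clears the threshold."""
    if inclusive:
        return sum(1 for s in snr_db if s >= threshold_db)
    return sum(1 for s in snr_db if s > threshold_db)


def dpoae_pass(snr_db):
    """SNR greater than 6 dB in at least four of six DPOAE frequencies."""
    if len(snr_db) != 6:
        raise ValueError("expected SNRs for six DPOAE frequencies")
    return count_bands(snr_db, 6.0, inclusive=False) >= 4


def teoae_pass(snr_db):
    """SNR of 3 dB or more in at least three of five TEOAE frequency bands."""
    if len(snr_db) != 5:
        raise ValueError("expected SNRs for five TEOAE bands")
    return count_bands(snr_db, 3.0, inclusive=True) >= 3


print(dpoae_pass([7.5, 8.0, 6.5, 9.1, 2.0, 1.2]))  # True: four bands above 6 dB
print(teoae_pass([3.0, 2.9, 3.4, 0.5, 1.0]))        # False: only two bands reach 3 dB
```

The distinction between "greater than" and "or more" matters at the boundary: a band at exactly 6 dB SNR does not count toward the DPOAE rule, whereas a band at exactly 3 dB counts toward the TEOAE rule.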

Subjective hearing screeners

Because subjective hearing screeners use a variety of test paradigms, their validity is described under separate headings. Pass criteria differed between screeners, so validity is reported for each pass criterion.

Pure tone audiometry (n = 8)

hearScreen, WAHTS, PASS-PRO, Ear Scale, Hearing Test, Hearing Threshold Test, Shravana Mithra and hearTest are commercially available screeners. The screeners were validated against diagnostic pure-tone audiometry. Some screeners compared the results with otoscopy and tympanometry.

hearTest 49: The pass criterion is <30 dB HL. Sensitivity was 100% and specificity 98% for disabling hearing loss, and sensitivity was 100% and specificity 90.2% for any type of hearing loss.

WAHTS, 57 hearScreen, 37–40 Ear Scale 59 and PASS-PRO 52: The pass criterion is <25 dB HL. WAHTS 57 reported only specificity (99.6%) and positive predictive value (77%). For the other screeners, sensitivity ranged from 62.5% 40 to 100% 59 and specificity from 81.9% 52 to 98.5%. 38

hearScreen 31 and Hearing Threshold Test 34: The pass criterion was <20 dB HL. hearScreen 31 reported a sensitivity of 85% and specificity of 41%, and the Hearing Threshold Test 34 reported a sensitivity and specificity of 96%.

Hearing Test 60 and Shravana Mithra 42: The pass criterion was <15 dB HL. Hearing Test 60 reported a kappa coefficient of 0.059. Shravana Mithra 42 reported that 99% of air-conduction and 97% of bone-conduction results were within 10 dB of standard pure-tone audiometry.
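The pure-tone screeners above differ mainly in their pass criterion (<15 to <30 dB HL). The sketch below assumes that a pass requires every tested frequency in both ears to be strictly below the criterion, and uses a hypothetical audiogram and function name, to show how the same thresholds can pass or refer depending on the criterion chosen.

```python
def pure_tone_screen(thresholds_db_hl, criterion_db_hl):
    """Return ('pass' | 'refer', failing frequencies per ear).

    Assumption: a pass requires every tested threshold in every ear to be
    strictly below the criterion (e.g. <25 dB HL).
    """
    failing = {
        ear: sorted(freq for freq, level in ear_thresholds.items() if level >= criterion_db_hl)
        for ear, ear_thresholds in thresholds_db_hl.items()
    }
    outcome = "refer" if any(failing.values()) else "pass"
    return outcome, failing


# Hypothetical screening thresholds (Hz -> dB HL) for one child.
child = {
    "left": {1000: 20, 2000: 25, 4000: 20},
    "right": {1000: 15, 2000: 20, 4000: 25},
}

for criterion in (15, 20, 25, 30):
    print(criterion, pure_tone_screen(child, criterion))
```

With these thresholds the child is referred at 15, 20 and 25 dB HL but passes at 30 dB HL, which is one reason sensitivity and specificity values obtained under different pass criteria are not directly comparable.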

Game-based audiometry (n = 3)

Kids Hearing Game, 33,54 the Hear Glue Ear application 63 and Sound Scouts 45 are commercially available game-based audiometry screeners:

Kids Hearing Game/Tablet Hearing Game: The pass criterion is <20 dB HL at all frequencies. For children above 4 years, sensitivity was 91% and specificity 74%; for children below 4 years, sensitivity was 100% and specificity 29%. 54 Mean results were statistically equivalent (p < 0.050) except at 500 Hz (p = 0.101). 33

Sound Scouts: The pass criterion is a four-frequency average (500 Hz, 1 kHz, 2 kHz and 4 kHz) of <30 dB HL. Sensitivity and specificity were both reported as 88%. 45

Hear Glue Ear application: The pass/refer criterion was not reported; however, stimuli were presented in the range of 20–80 dB HL and compared with previous diagnostic thresholds. Validity was measured with Spearman rank correlation (r = −0.656), which indicates a strong correlation.
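The Sound Scouts game-based criterion is expressed as a four-frequency average (4FA). The following is a minimal sketch with hypothetical thresholds and helper names; applying the cut-off to each ear separately is an assumption.

```python
FOUR_FREQUENCIES_HZ = (500, 1000, 2000, 4000)


def four_frequency_average(thresholds_db_hl):
    """Mean of the 0.5, 1, 2 and 4 kHz thresholds (dB HL) for one ear."""
    return sum(thresholds_db_hl[f] for f in FOUR_FREQUENCIES_HZ) / len(FOUR_FREQUENCIES_HZ)


def below_4fa_cutoff(ears, cutoff_db_hl=30.0):
    """True when every ear's 4FA is below the cut-off (assumed per-ear rule)."""
    return all(four_frequency_average(t) < cutoff_db_hl for t in ears.values())


# Hypothetical audiogram: the right ear sits exactly at the cut-off.
ears = {
    "left": {500: 20, 1000: 25, 2000: 30, 4000: 35},   # 4FA = 27.5 dB HL
    "right": {500: 30, 1000: 35, 2000: 30, 4000: 25},  # 4FA = 30.0 dB HL
}
print(below_4fa_cutoff(ears))  # False
```

Because the average smooths across frequencies, a single elevated threshold (here 35 dB HL at 4 kHz in the left ear) does not by itself fail the 4FA rule, unlike an all-frequency rule such as <20 dB HL at every frequency.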

Speech audiometry (n = 3)

The speech audiometry screeners were the SRESHT Hearing Screener, 43 PASS-PRO (n = 2) 52,64 and Sound Scouts. 46

All screeners compared their results with gold-standard pure-tone audiometry:

PASS-PRO speech testing 52,64 used a 50% psychometric function with stimuli presented at 20 dB HL and reported a sensitivity of 90% and specificity of 93.4%. Sound Scouts 46 used an H metric referenced to pure-tone values, with a pass at −1.8 SRT and a refer at −2.5 SRT. Only the overall validity of the tool was reported: 85% sensitivity and 98% specificity after inconclusive results were excluded. The SRESHT Hearing Screener 43 used correct responses to 5/6 words in each ear (80% psychometric function) as the pass criterion, which reportedly yielded an overall kappa of 82% for the screener.

Speech in noise (n = 2)

Kinderhoortest 61 and Sound Scouts 44 are the commercially available speech-in-noise screeners. Both were compared with gold-standard pure-tone audiometry, and Sound Scouts 44 additionally used otoscopy and tympanometry results.

Sound Scouts: The scoring bands are fail (0–70), borderline (71–78.4) and pass (79–145+); children with a four-frequency average hearing loss (4FAHL; average of 0.5, 1, 2 and 4 kHz) of 30 dB HL in at least one ear will reliably score fail or borderline. Only the overall validity of the tool was reported: 85% sensitivity and 98% specificity after inconclusive results were excluded. 46 Another article reported a specificity of 94.99% (false-positive rate on rescreen, 5.01%). 44

Kinderhoortest: The outcome measure was the speech reception threshold (SRT, in dB SNR) at which 50% of the material was correctly understood. The age-related mean score was −14.4 dB SNR (SD 1.6). The presentation device (computer or smartphone) did not affect test scores.

Digits in noise (n = 2)

The Digit Triplet Test 62 and hearDigits 36 are commercially available. Both screeners were validated against pure-tone audiometry, and hearDigits additionally used otoscopy and tympanometry:

Digit Triplet Test 62: The pass criterion is an SRT better than −7.2 dB SNR for younger children (9–12 years) and −8.5 dB SNR for older children (13–16 years). Validity was assessed with Pearson correlation, which showed a strong correlation for the left ear (r = 0.65) and a moderate correlation for the right ear (r = 0.59).

hearDigits 36: The pass criterion is 50% of digits recognised correctly. Age-specific norms were calculated: the mean diotic SRT was −9.2 dB SNR (range −12.4 to −6.8 dB SNR) and the mean antiphasic SRT was −16.4 dB SNR (range −20.6 to −11.2 dB SNR), with SRTs improving by 0.15 dB and 0.35 dB SNR per year of age.
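The Digit Triplet Test cut-offs are age-specific, and a lower (more negative) SRT means better performance. The following is a minimal sketch of applying them; the function name is hypothetical, ages are assumed to be in completed years and ages outside 9–16 years are rejected because no cut-off is reported for them.

```python
def digit_triplet_pass(srt_db_snr: float, age_years: int) -> bool:
    """Pass if the SRT is better (more negative) than the age-specific cut-off."""
    if 9 <= age_years <= 12:
        cutoff_db_snr = -7.2
    elif 13 <= age_years <= 16:
        cutoff_db_snr = -8.5
    else:
        raise ValueError("cut-offs are reported only for 9-16 years")
    return srt_db_snr < cutoff_db_snr


print(digit_triplet_pass(-8.0, 10))  # True: better than -7.2 dB SNR
print(digit_triplet_pass(-8.0, 14))  # False: not better than -8.5 dB SNR
```

The same measured SRT can therefore pass in a 10-year-old and refer in a 14-year-old, reflecting the age-related improvement in digits-in-noise performance.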

Awareness tasks (n = 1)

The SRESHT Hearing Screener 43 is the only commercially available screener in this category. It uses the Speech Spectrum Awareness Task to screen for disabling hearing loss (>60 dB HL) and was validated against pure-tone audiometry.

SRESHT Hearing Screener criterion: Responses to three out of four stimuli at 60 dB HL are considered a pass. Only the overall kappa value for the screener was available (82%).

Tone in noise (n = 1)

Sound Scouts, which is commercially available, was the only tone-in-noise screener and was described in two studies. 44,46 It combines multiple tests (speech-in-quiet, speech-in-noise and tone-in-noise) to give an overall score. The results were compared with gold-standard pure-tone audiometry, 44 with additional otoscopy and tympanometry.

The two studies used different metrics to refer children. Dillon et al. 46 used the H metric as a reference of normal limits: a lower H metric indicated better speech perception at lower SNRs, and a higher H metric indicated poorer performance at lower SNRs. A result was classified as a pass if the H metric was −1.8 SRT and as a refer if it was −2.5 SRT. Bowers et al. 44 used a cumulative scoring pattern with three bands: fail (0–70), borderline (71–78.4) and pass (79–145+). Children with a 4FAHL (average of 0.5, 1, 2 and 4 kHz) of 30 dB HL in at least one ear will reliably score fail or borderline. Only the overall validity of the tool was reported: Dillon et al. 46 reported a sensitivity of 85% and specificity of 98% when inconclusive results were excluded, and Bowers et al. 44 reported an overall specificity of 94.99%, based on a 5.01% false-positive rate.
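The Bowers et al. banding can be applied as sketched below. The reported bands leave small gaps (between 70 and 71, and between 78.4 and 79), so this sketch assumes that scores up to 70 are fail, scores above 70 and below 79 are borderline, and that both fail and borderline lead to referral. The function names are hypothetical.

```python
def sound_scouts_band(score: float) -> str:
    """Classify a cumulative score as fail (0-70), borderline (71-78.4) or pass (79+).

    Assumption: scores in the unreported gaps (70-71 and 78.4-79) are
    treated as borderline.
    """
    if score < 0:
        raise ValueError("score must be non-negative")
    if score <= 70:
        return "fail"
    if score < 79:
        return "borderline"
    return "pass"


def needs_referral(score: float) -> bool:
    # Fail and borderline scores are both treated as referrals here.
    return sound_scouts_band(score) in {"fail", "borderline"}


for s in (65, 70.5, 78.4, 79, 120):
    print(s, sound_scouts_band(s), needs_referral(s))
```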

Behavioural observation audiometry (n = 1)

The SRESHT Hearing Screener 43 explored behavioural observation audiometry as a screening module for infants and toddlers aged 0–1 year, with a mean age of 6 months for children with normal hearing and 8 months for children with hearing impairment. The screener was compared with pure-tone audiometry, and behavioural responses such as eye blinking, eye widening, searching and head turning towards the sound source were counted as responses. An infant or toddler who responds correctly to three out of four stimuli passes. Module-specific validity was not available; the overall kappa value was 82%.

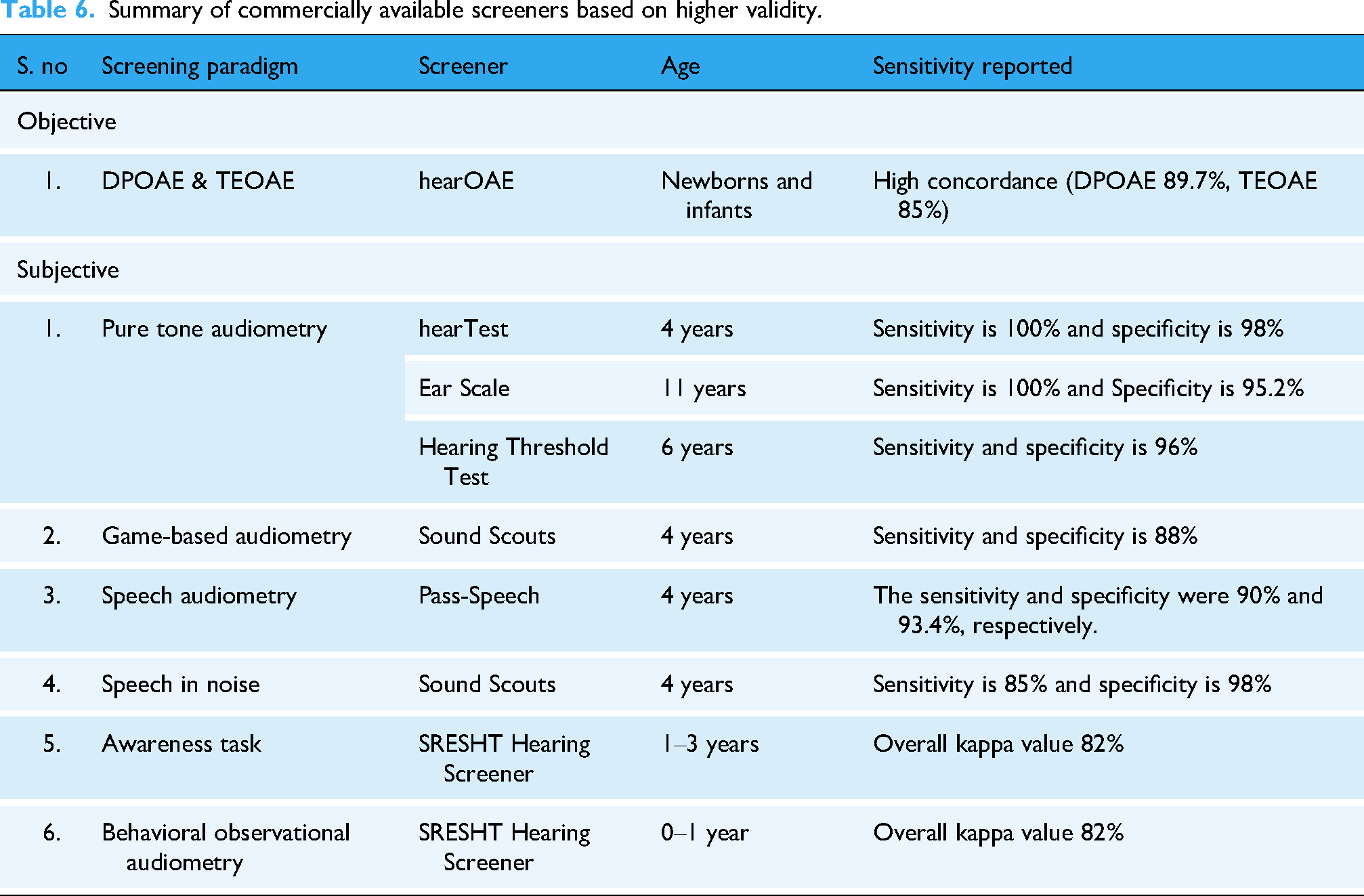

Among the commercially available tools (refer Table 6), the hearTest, Ear Scale and Hearing Threshold Test demonstrated the highest sensitivity for pure-tone-based screening, whereas Sound Scouts consistently showed acceptable sensitivity across multiple subjective paradigms, including game-based and speech-in-noise screening.

Summary of commercially available screeners based on higher validity.

2. Non-commercially available/prototype validated mHealth screeners

Objective hearing screeners

Both OAEBuds 28 and Off the Shelf OAE 27,29 were published as open-source code; hence, they are not commercially available and were validated as prototypes.

OAEBuds 28 provide TEOAE screening and Off the Shelf OAE 27,29 provides DPOAE screening; both devices were validated against commercial OAE screeners.

Off the Shelf OAE: The pass criterion for DPOAEs required an SNR greater than 5 dB in three out of four frequencies. 27,29 The reported sensitivity was 100% 27,29 and specificity 88%.

OAEBuds: For TEOAEs, the pass criterion required any two frequencies exceeding 8 dB SNR and an emission amplitude greater than −10 dB SPL. The study reported a sensitivity of 100% and specificity of 89.7%. 28
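The two prototype criteria can be written as simple checks on per-frequency measurements. This is a sketch under stated assumptions: "three out of four" is read as at least three, and the OAEBuds amplitude condition is assumed to apply within each qualifying frequency band. The measurement values and function names are hypothetical.

```python
def off_the_shelf_dpoae_pass(snr_db):
    """DPOAE: SNR greater than 5 dB in at least three of four frequencies."""
    if len(snr_db) != 4:
        raise ValueError("expected SNRs for four DPOAE frequencies")
    return sum(1 for s in snr_db if s > 5.0) >= 3


def oaebuds_teoae_pass(bands):
    """TEOAE: at least two bands with SNR > 8 dB and amplitude > -10 dB SPL.

    bands: iterable of (snr_db, amplitude_db_spl) pairs, one per frequency band.
    Assumption: both conditions are checked within the same band.
    """
    qualifying = sum(1 for snr, amp in bands if snr > 8.0 and amp > -10.0)
    return qualifying >= 2


print(off_the_shelf_dpoae_pass([6.2, 7.0, 5.5, 2.1]))                 # True
print(oaebuds_teoae_pass([(9.5, -4.0), (8.4, -12.0), (3.0, -20.0)]))  # False
```

In the second example, the band at 8.4 dB SNR fails only on amplitude, so a single qualifying band remains and the ear does not pass.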

Subjective hearing screeners:

Pure tone audiometry (n = 7): All screeners' results were compared with gold-standard pure-tone audiometry, and some screeners were additionally compared with otoscopy, tympanometry and ENT diagnosis. Shoebox has discontinued its services for paediatric populations; however, because the tool has been validated, it is reported here.

Screen-H 50: The pass criterion was <35 dB HL; sensitivity ranged from 80.8% to 82.8% and specificity from 93.9% to 94.1%.

R-App 58: The pass criterion was <25 dB HL. The absolute difference between the screener and a manual audiometer was between 4.5 and 6.2 dB.

Smart Hearing Screening 53 and Screen-H 50: The pass criterion was <25 dB HL; sensitivity ranged from 37.5% to 82.8% and specificity from 92.6% to 98.5%.

Ouviu 47 and P.E.T.I.T 48: The pass criterion was <20 dB HL, except at 500 Hz, where it was <30 dB HL for P.E.T.I.T 48 and <40 dB HL for Ouviu. 47 Sensitivity ranged from 95.7% 48 to 97.1% 47 and specificity from 81% 48 to 96.6%. 47

Shoebox 41 and Agilis Audiogram 32: The pass criterion was <15 dB HL. Shoebox 41 reported a sensitivity of 89% and specificity of 70%, and the Agilis Audiogram 32 reported a Pearson correlation of 0.9.

Game-based audiometry (n = 1): Shoebox was validated against air-conduction audiometry at 0.5, 1, 2, 4, 6 and 8 kHz.

Shoebox: Sensitivity was not reported because of the small sample size. Specificity was 37% for a criterion of ≥16 dB HL and 52% for ≥21 dB HL. 30

Speech in noise (n = 1): The Sound Ear Check 56 was validated against diagnostic pure-tone audiometry.

Sound Ear Check criterion: A pass requires a standard deviation of more than 2.5 dB SNR over the last 15 trials. Overall sensitivity and specificity were 69% and 65% at the 20 dB HL criterion, 79% and 78% at 30 dB HL and 90% and 81% at 40 dB HL, respectively.

Awareness tasks (n = 1): The Sound Ear Check 55 was compared with pure-tone audiometry.

Sound Ear Check criterion: In Phase I (sound acclimatisation), stimuli were presented dichotically with noise fixed at 65 dB SPL and 0 dB SNR, repeated until correctly identified. In Phase II (training, 21 trials), the noise remained fixed at 65 dB SPL while the stimulus level was varied with an up-down method in 2 dB steps, targeting 71% correct identification. For the SEC (ref) test, sensitivity was 63%, 72% and 89% and specificity was 58%, 68% and 79% at 20, 30 and 40 dB HL, respectively. For the SEC (aph) test, sensitivity was 63%, 69% and 80% and specificity was 67%, 65% and 76% at 20, 30 and 40 dB HL, respectively, for the combined types of hearing loss.
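The Phase II training uses an adaptive up-down procedure in 2 dB steps that targets about 71% correct. The text does not state the exact tracking rule; this sketch assumes Levitt's 2-down/1-up rule, which converges on approximately 70.7% correct, starts at 0 dB SNR as in Phase I, and uses a hypothetical deterministic listener in place of a child's responses. Because the noise is fixed at 65 dB SPL, each change in SNR corresponds to a change in stimulus level.

```python
from typing import Callable, List


def two_down_one_up(
    responds_correctly: Callable[[float], bool],
    start_snr_db: float = 0.0,
    step_db: float = 2.0,
    n_trials: int = 21,
) -> List[float]:
    """Run a 2-down/1-up staircase and return the SNR presented on each trial.

    Two consecutive correct responses make the task harder (SNR down by one
    step); any error makes it easier (SNR up by one step).
    """
    snr = start_snr_db
    correct_in_a_row = 0
    presented = []
    for _ in range(n_trials):
        presented.append(snr)
        if responds_correctly(snr):
            correct_in_a_row += 1
            if correct_in_a_row == 2:
                snr -= step_db
                correct_in_a_row = 0
        else:
            snr += step_db
            correct_in_a_row = 0
    return presented


# Hypothetical listener who identifies the stimulus whenever SNR >= -6 dB.
track = two_down_one_up(lambda snr: snr >= -6.0)
print(track)
```

With this deterministic listener, the track descends in pairs of trials and then oscillates between −6 and −8 dB SNR around the point where responses switch from correct to incorrect; with real, probabilistic responses, the reversal points would instead cluster around the SNR yielding roughly 70.7% correct.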

Prototype and non-commercial objective hearing screening tools (refer Table 7), such as OAEBuds and Off the Shelf OAE, demonstrated good sensitivity, comparable to or exceeding that of commercially available devices. However, their lack of market availability limits immediate scalability, reinforcing the need to distinguish between technical validity and implementation readiness.

Summary of non-commercially available/prototype screeners based on higher validity.

Similarly, subjective mHealth screeners demonstrated high to acceptable sensitivity and strong agreement with diagnostic pure-tone audiometry across multiple test paradigms. Nonetheless, heterogeneity in validation metrics, pass criteria and the absence of commercial availability limits their immediate applicability beyond research settings.

A compilation of all validity data is available in Table 4 for objective mHealth screeners and Table 5 for subjective mHealth screeners.

Hardware and transducers used

The included studies used mHealth screeners interfaced with different hardware to present stimuli. Among objective screeners, OAEBuds 28 innovatively used wireless technology, whereas the others used a wired probe connected to a controller interfaced with a smartphone for display.

For subjective hearing screening, most studies used tablets and smartphones interfaced with standard headphones, while a few articles (n = 9) used off-the-shelf headphones to present stimuli 33,42,43,51,53,54,59–61 (see Table 8).

Classification of hardware interfaced transducers.

Discussion

This systematic review focused on validated mHealth screening applications that have become available for the paediatric population over the past decade (since 2014). The findings highlight that, while mHealth-based paediatric hearing screeners have expanded in scope, they remain unevenly distributed across age groups and test paradigms. Most of the validated tools identified in this review were subjective hearing screeners, available mainly for children above 3 years of age; only a few objective, OAE-based screeners were identified, and no mHealth-based objective screeners using other techniques were found.

In this review, we identified three mHealth-based objective OAE screeners, OAEBuds, 28 Off the shelf OAE 27,29 and hearOAE, 35 which demonstrated higher sensitivity and comparable specificity relative to conventional OAE screeners. 65,66 These validity measures indicate the technical feasibility of mobile-integrated objective hearing screeners. However, two of the screeners were non-commercial prototypes, and only hearOAE 35 was commercially available at the time of this study. This suggests that, although analytical validity has been demonstrated, its translation into commercial screeners remains limited. The review did not identify any mHealth-based AABR screening tools. AABR is used particularly for high-risk neonates, to identify neurologic disorders. 17 This absence highlights a critical gap in mHealth-based early detection. Two globally available mHealth systems exist, Otonova by Otodynamics, 67 which integrates both OAE and AABR, and AScreen by Neurosoft, 68 which has both TEOAE and DPOAE modules, but no validation data for either were identified in the peer-reviewed literature.

In contrast to the objective screeners, most of the validated tools identified were subjective hearing assessments. Many of these tools demonstrated acceptable to high sensitivity or strong agreement with diagnostic pure-tone audiometry, suggesting utility similar to that of conventional hearing screening methods. However, their validity depends on the age of the child: tools validated in older children (typically 4 years and above) showed higher validity metrics than those validated in younger age groups. This is in line with the findings of Dawood et al., 69 who screened children aged 3–10 years and compared the effectiveness of community health workers and school health nurses. They reported a 0.214-times increase in refer results for each year of younger age. This highlights an important limitation of subjective mHealth screening approaches for preschool-aged children and reinforces the need for innovative subjective and objective screening solutions for younger populations.

Audiological testing generally requires soundproof rooms, in which the maximum permissible ambient noise levels are in the range of 21–37 dB SPL, 70 to achieve good validity. In this review, nearly 80% of the studies used quiet rooms in schools, communities and hospitals and achieved good screening validity. However, this review did not capture how the screeners function under different ambient room noise conditions.
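
One way a screener could address ambient noise is to check it before or during testing. The minimal Python sketch below is an assumption, not a description of any reviewed tool: it converts microphone sample frames to an estimated broadband level in dB SPL using a hypothetical device calibration offset, and compares the loudest frame with a limit. The 37 dB SPL limit is taken from the upper end of the cited range purely for illustration; maximum permissible ambient noise levels are specified per frequency band, which this broadband sketch does not implement.

```python
import math
from typing import Iterable, Sequence, Tuple


def rms(samples: Sequence[float]) -> float:
    """Root-mean-square amplitude of one frame of samples (full scale = 1.0)."""
    return math.sqrt(sum(s * s for s in samples) / len(samples))


def level_db_spl(samples: Sequence[float], cal_offset_db: float) -> float:
    """Estimated broadband level of one frame in dB SPL.

    cal_offset_db is a hypothetical, device-specific calibration constant:
    the dB SPL that a full-scale (RMS = 1.0) signal corresponds to.
    """
    r = rms(samples)
    return 20 * math.log10(r) + cal_offset_db if r > 0 else float("-inf")


def ambient_noise_ok(frames: Iterable[Sequence[float]], cal_offset_db: float,
                     limit_db_spl: float) -> Tuple[bool, float]:
    """Return (ok, worst_level); ok is False if any frame exceeds the limit."""
    worst = max(level_db_spl(f, cal_offset_db) for f in frames)
    return worst <= limit_db_spl, worst


# Hypothetical frames: square waves with RMS 0.001 (-60 dBFS) and 0.01 (-40 dBFS),
# a hypothetical 94 dB SPL calibration offset and an illustrative 37 dB SPL limit.
quiet = [[0.001, -0.001] * 512]
noisy = quiet + [[0.01, -0.01] * 512]
ok, worst = ambient_noise_ok(quiet, cal_offset_db=94.0, limit_db_spl=37.0)
print(ok, round(worst, 1))  # True 34.0
ok, worst = ambient_noise_ok(noisy, cal_offset_db=94.0, limit_db_spl=37.0)
print(ok, round(worst, 1))  # False 54.0
```

Taking the loudest frame rather than the average is a deliberately conservative choice: a brief loud event during a test trial can mask a soft stimulus even if the room is quiet on average.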

Only 16 of the 27 screeners were commercially available; the others were prototypes or had been discontinued. This matters because validation alone does not equate to market readiness: screeners also require country-specific regulatory approvals, long-term support and integration into health systems. Most screeners were developed and validated in high-income or upper-middle-income countries. Eksteen et al. 71 reported a cost analysis of a combined hearing and vision mHealth screening program in which community health workers screened children aged 4–7 years using hearScreen for hearing screening and Peek Acuity for vision screening. The program running cost was $5.63 per child; the cost of running such a program for children younger than 4 years is unknown. Some mHealth tools use a cloud-based data management system that requires an annual subscription. Although such systems offer advantages such as improved follow-up, data traceability and advanced reporting, 22 their upkeep and program costs need to be studied.
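
Because a subscription fee recurs every year while a device can be amortised, its effect on per-child cost depends on screening volume. The minimal Python sketch below assumes straight-line amortisation with no discounting and a fixed annual staff cost; every figure in it is hypothetical and unrelated to the $5.63 reported by Eksteen et al.

```python
def cost_per_child(device_cost: float, device_life_years: float,
                   annual_subscription: float, annual_staff_cost: float,
                   children_per_year: int) -> float:
    """Annual program cost divided by the number of children screened.

    Assumes straight-line amortisation of the device, no discounting and a
    fixed annual staff cost; all inputs are hypothetical.
    """
    annual_cost = (device_cost / device_life_years
                   + annual_subscription + annual_staff_cost)
    return annual_cost / children_per_year


for children in (600, 1200, 2400):
    with_cloud = cost_per_child(500, 5, 300, 2000, children)
    without_cloud = cost_per_child(500, 5, 0, 2000, children)
    print(f"{children} children/year: {with_cloud:.2f} with subscription, "
          f"{without_cloud:.2f} without")
```

Under these assumptions the subscription adds 0.50 per child at 600 children a year but only 0.125 at 2,400, so the same tool can look expensive in a small pilot and affordable at program scale.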

In this review, most mHealth tools were validated with community health workers or nurses as testers, 12,57,72,73 an approach intended to increase coverage of and access to hearing screening. While this model succeeds in terms of the number of children reached and followed up, the workload, digital literacy and training needs of these community health workers must also be considered. 74

While mHealth-based OAE hearing screeners are emerging, this systematic review identified no validated mHealth-based ABR screeners. Pass/refer criteria also lack standardization in both objective and subjective screeners; standardized criteria are needed to improve comparability and program consistency across screeners.
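
The effect of non-standardized criteria can be seen with a common form of OAE pass rule: a minimum signal-to-noise ratio (SNR) at a minimum number of test frequencies. In the minimal Python sketch below, the three criteria and the SNR values for the ear are hypothetical and not taken from any reviewed screener; the same recording passes or refers depending only on the rule applied.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass(frozen=True)
class PassCriterion:
    """Hypothetical OAE pass rule: min_snr_db met at >= min_frequencies frequencies."""
    min_snr_db: float
    min_frequencies: int


def screen(snr_by_freq_hz: Dict[int, float], criterion: PassCriterion) -> str:
    """Return 'pass' or 'refer' for one ear under the given criterion."""
    meeting = sum(1 for snr in snr_by_freq_hz.values() if snr >= criterion.min_snr_db)
    return "pass" if meeting >= criterion.min_frequencies else "refer"


# Hypothetical emission SNRs (dB) for one ear at four test frequencies (Hz).
ear = {2000: 8.0, 3000: 7.5, 4000: 5.0, 5000: 4.0}
criteria = {
    "A: 6 dB at 2 of 4": PassCriterion(6.0, 2),
    "B: 6 dB at 3 of 4": PassCriterion(6.0, 3),
    "C: 4 dB at 3 of 4": PassCriterion(4.0, 3),
}
for name, rule in criteria.items():
    print(name, "->", screen(ear, rule))
```

Here criteria A and C pass the ear while B refers it, so referral rates, and with them sensitivity and specificity, are only comparable across screeners that share the same rule.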

Despite the recent growth of AI and machine learning (ML), which could enhance screening accuracy and automate referrals and follow-ups, none of the paediatric mHealth hearing screeners identified in this review had embedded this technology. Such innovations are yet to be translated into paediatric mHealth hearing screeners.

This review has some limitations. The included articles were highly heterogeneous in their validation protocols and outcome measures; hence, a meta-analysis could not be performed. Additionally, the review did not examine in depth the test metrics, test–retest reliability or device calibration procedures. Finally, only English-language papers were included, which may have excluded publications on innovations from non-English-speaking contexts.

All included studies required a quiet room or sound booth to conduct tests, but ambient noise levels can be high during mass screening. It is therefore essential to explore screening methods that remain valid in noisy environments. Moreover, integrating AI into both objective (digital signal processing) and subjective assessments could help minimize human error, provided that data privacy is ensured.

Conclusion

This systematic review demonstrates that mHealth paediatric hearing screeners can achieve validity comparable to that of conventional screening methods, particularly for older children. However, the current evidence base is uneven, with limited availability of objective mHealth hearing screeners.

Although several screeners achieved adequate validity, only 16 of the 27 were commercially available, and few were available free of cost or as open source. Future research should emphasize the following: a standard validation protocol across age groups and contexts, the development of more objective hearing screeners and the adoption of the latest technological innovations, such as AI and machine learning, to increase the efficacy of these instruments.

Supplemental Material


Supplemental material, sj-docx-1-dhj-10.1177_20552076261434816 for A systematic review of mHealth-based paediatric hearing screening tools/devices by Vishnu Saravana, Vidya Ramkumar and Arivudai Nambi Pitchaimuthu in DIGITAL HEALTH

Footnotes

Acknowledgements

The authors thank Prof. Ashok Jhunjhunwala for his advisory role. Quillbot grammar checker and Paperpal were used to curate the manuscript.

Ethical approval

Funding

The authors disclosed receipt of the following financial support for the research, authorship and/or publication of this article: This work was supported by the Prime Minister Fellowship for Doctoral Research – ANRF, Shri N.P.V Ramasamy Udayar Research Fellowship (grant number Batch 19, U022300983).

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship and/or publication of this article.

Data availability

All data analysed in this study is available in Scopus, PubMed and Google Scholar.

Supplemental material

Supplemental material for this article is available online.

References
