Abstract

Objective

Deliberate practice (DP) for psychological therapists involves using objective, corrective feedback to identify and improve individualised skill deficits, alongside iterative practice opportunities. Automated, prognostic feedback on session contents could enhance personalisation of DP across therapy professions and modalities. This study assessed the feasibility, acceptability and initial clinical utility of a 10-week therapist intervention integrating automated feedback on predicted prognosis with DP of individualised therapeutic skill deficits.

Methods

Participants were 97 therapy clients seen by nine therapists in the 10 weeks preceding intervention and 79 clients seen by the same therapists during the 10-week intervention period. Participating therapists, representing diverse professional backgrounds, invited their clients to consent to have sessions recorded and automatically assessed for predicted prognosis. Prognostic feedback was integrated into DP training and practice, comprising a total of 32 h intervention. Assessments of intervention feasibility, acceptability, credibility, outcome expectancy and therapists’ therapeutic skills were taken alongside qualitative interviews at baseline, 5- and 10-week follow-up. Reliable improvement in depression and anxiety was compared between clients receiving therapy in the pre-intervention and intervention periods.

Results

Findings indicated significant pre-post improvements in intervention acceptability (dRM = 2.25, p = .008), credibility (dRM = 1.11, p = .039) and therapist skills (total score: dRM = 2.57, p = .008), with non-significant improvements in feasibility (dRM = 0.67, p = .268) and clinical outcomes, including a 3 percentage-point increase in the proportion of clients reporting reliable improvement for depression (58% to 61%) and a 10 percentage-point increase for anxiety (65% to 75%). However, client uptake of automated feedback was low due to concerns about artificial intelligence and related trust in the system.

Conclusion

Automated feedback and DP become more acceptable to therapists through engagement, with potential to improve therapeutic skills and effectiveness. However, addressing client concerns about how technology is used for automated feedback is essential to enhance participation.

Psychological therapies for common mental health problems

Anxiety and depressive disorders are common and disabling conditions in the general population that are often recurrent with a chronic course.1–3 Psychological therapies are effective, first-line treatments for a number of anxiety and depressive disorders. 4 However, despite strong evidence of effectiveness, psychological therapies are unlikely to have met their full potential to improve health. 5 Compared to treatments for many physical health conditions, the overall effects of psychological therapies have not grown across time and, in some cases, have declined.6–8 Although the majority of people completing a course of psychological therapy report recovery or reliable improvement in mental wellbeing, 5–10% report deterioration in spite of therapy. 9 Furthermore, approximately 20% of people attending psychological therapy drop out prematurely, 10 making improvement much less likely. 11

A key cause for the lack of improvement in effectiveness over time is the absence of a systematic, objective, routine means of measuring the quality of psychological therapy contents.12–14 As a result, it is harder to tell at any given time whether therapy is having the desired effect. Without such timely, content-oriented feedback, it is also harder for therapists to adjust treatment to remediate difficulties. Therapist training suffers too, because trainers may struggle to identify specific areas where trainees need to improve their practice to gain greater effectiveness.

Therapist-level differences in effectiveness

Potentially related to the lack of routine quality assessment in psychological therapy content, there are significant differences in clinical effectiveness between individual therapists and between different psychological therapy services.15–17 Importantly, the differences between therapists become more pronounced when therapy is shorter and/or with patients who have more severe or complex difficulties. 18 Furthermore, individual therapists themselves do not become more effective with time or experience, suggesting that current established support mechanisms are insufficient to promote improved effectiveness. 19

Current research has focused on in-session interaction types as a key influence on therapist effectiveness and client prognosis in psychological therapy. The most effective therapists have proficient facilitative interpersonal skills, particularly in challenging therapeutic situations, compared to those achieving poorer outcomes.20,21 The importance of interaction style and interaction type has been reinforced by work using natural language processing and machine learning. In a machine learning analysis of text-messaging-based therapy for anxiety and depression, specific therapist interaction types (e.g. therapeutic praise) predicted greater outcome improvement, whilst others (e.g. interactions unrelated to therapeutic activity) predicted poorer prognosis. 22 A series of recent systematic reviews and meta-analyses has also identified that specific in-session interactions predict poorer health outcomes in addictions and anxiety disorders.23–25

Deliberate practice for therapists

A recently developed training and practice improvement method called deliberate practice (DP) shows promise as an approach that may help therapists enhance their therapeutic skills.26–29 In psychological therapies, DP involves establishing baseline effectiveness and then combining objective feedback with iterative practice to address individualised skill deficits. 30 The two systematic reviews on DP in psychological therapies suggest that it can improve therapeutic skills more effectively than traditional training and supervision, 31 but that most studies have not included all components of DP (including individualised learning objectives, external expert support, feedback and iterative repetition). 32 Despite evidence that DP can help improve clinical effectiveness, 33 unanswered questions remain.

Current best practice recommends identifying therapist skill deficits through analysis of previous therapy episodes, using Routine Outcome Monitoring (ROM) to detect non-random therapist error patterns among clients with poorer outcomes. 34 Engaging in DP without external feedback relies on therapists’ intuitions to choose which skills to practise. However, therapists’ intuitions are often inaccurate and could lead to less skill development.35,36 While methods like outcome analysis reduce this risk, they also involve rigorous processes that may delay access to DP for interested therapists.

Machine learning-enhanced DP

A potential route to balancing accurately targeted practice with accessibility in DP is to apply machine learning to therapy contents, providing automated, prognostic feedback based on what therapists say in each session. Such systems have been deployed to evaluate sessional language and offer competency feedback, although, like much of the current DP literature, they have focussed on specific therapy modalities. 37 However, scaling DP for broader use requires accommodating therapists from diverse professional backgrounds and supporting the delivery of multiple or integrative therapy models. Additionally, DP demands significant time and effort, but it is unclear what duration of practice is needed to achieve the benefits. Such evidence is crucial for estimating DP's clinical and cost-effectiveness.

The ongoing development of ambient scribe technology (automatic production of clinical records from recordings of health consultations) has, so far, primarily focused on the benefits of reduced administrative burden. 38 However, combining automated prognostic feedback on recorded session contents with ambient scribe technology has the potential to substantially enhance the clinical benefits realised.

Study objectives

The current study assessed the feasibility, acceptability and initial clinical utility of a transtheoretical DP intervention incorporating automated, sessional feedback on prognosis based on machine learning applied to in-session linguistic content (automated feedback and deliberate practice: FDP). FDP aims to enable rapid access to DP while retaining its data-driven focus on non-random errors.

Method

Design

This study applies Bowen et al.'s 39 theoretical framework for investigating the feasibility of evidence-based interventions. In particular, this approach recommends comprehensive, multilevel evaluation of feasibility and acceptability, especially with interventions that may have multiple effects. This is suitable for early-stage development of a staff training intervention, to enable adjustment and adaptation prior to more formal evaluation of efficacy.

The study employed a quasi-experimental, 40 mixed-methods 41 feasibility design with repeated measures, examining changes over time in perceived feasibility, acceptability, therapeutic skills and clinical effectiveness (CONsolidated Standards Of Reporting Trials (CONSORT) checklist: Supplemental Table 1). Participants were psychological therapists from three National Health Service (NHS) services in England and their clients: one service for mild-to-moderate depression and anxiety, and two services offering psychological therapies for people with specific long-term conditions and comorbid moderate-to-severe anxiety or depression. Services were selected for their commitment to ROM and therapist development, alongside a geographic focus on the Midlands region, enabling participants to attend in-person activities.

Participants

Eligible therapists were qualified and registered with the UK Health and Care Professions Council or the British Association for Behavioural and Cognitive Psychotherapies and had been in post and treating clients in their respective services for at least 3 months. Eligible therapists also had to have managerial agreement to commit time to intervention activities, including consent to routinely record therapy sessions (audio or video), and be competent to give written informed consent. Therapists were excluded if they were currently engaged in a disciplinary or fitness to practise review, were working less than 50% whole time equivalent hours or were not routinely monitoring outcomes with all clients. These criteria were used by clinical leads to identify and invite eligible therapists. Participating therapists were offered the FDP intervention. Clients of participating therapists were eligible if their sessions were conducted in English, they were aged 18 or older, and they were competent to give informed consent. The study was conducted in the East Midlands region of England between March and July 2024.

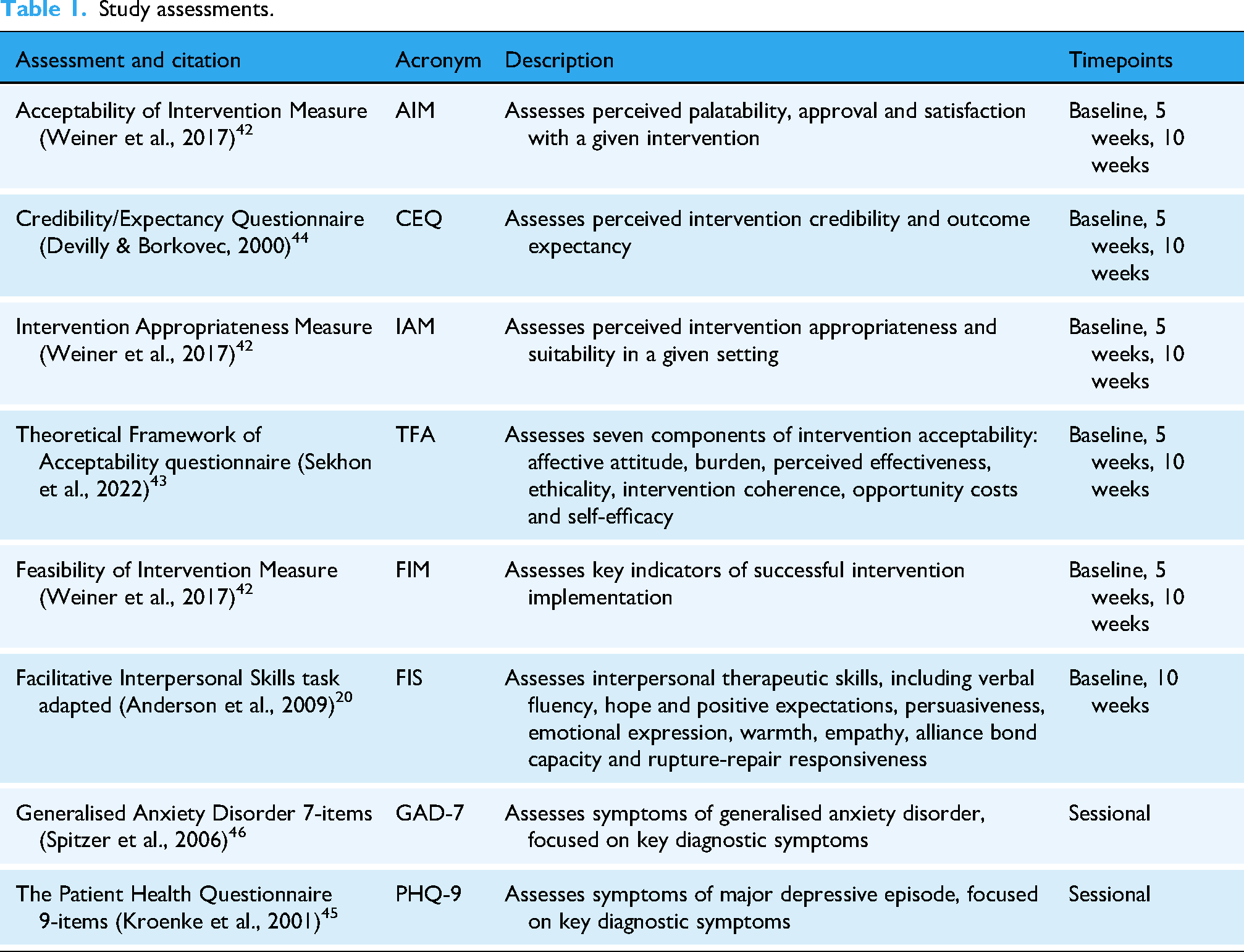

Measures

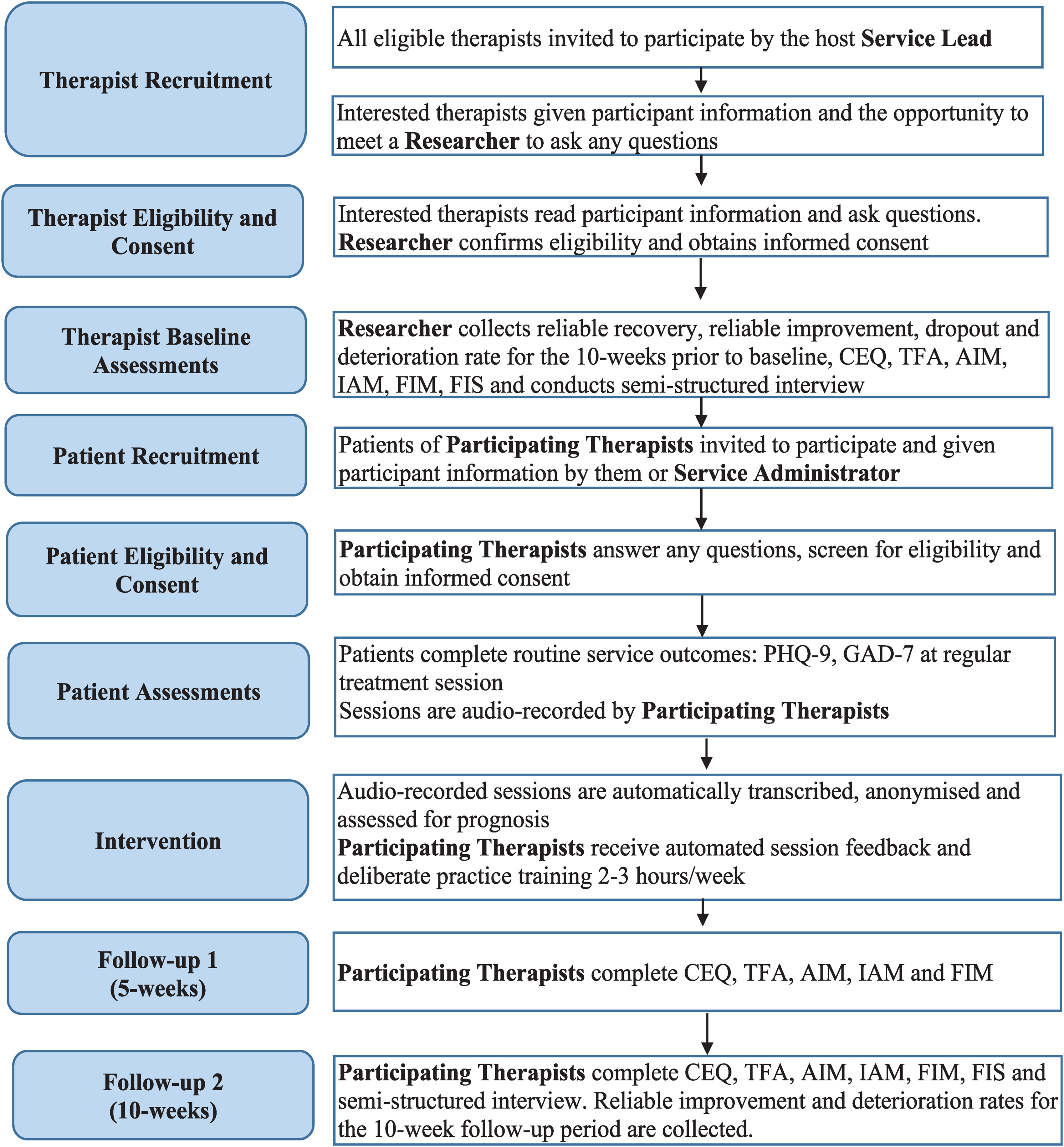

A set of validated, standardised assessments were conducted at baseline, 5- and 10-week follow-up to evaluate feasibility (Feasibility of Intervention Measure, FIM 42 ), acceptability (Theoretical Framework of Acceptability, TFA; Acceptability of Intervention Measure, AIM; Intervention Appropriateness Measure, IAM 42,43 ), credibility and outcome expectancy (Credibility/Expectancy Questionnaire, CEQ 44 ), transtheoretical therapeutic skills associated with clinical effectiveness (adapted Facilitative Interpersonal Skills task 20 ) and clinical effectiveness (Patient Health Questionnaire 9-items, PHQ-9; Generalised Anxiety Disorder 7-items, GAD-7 45,46 ) over time (Table 1, Figure 1). Therapist demographics were also collected at baseline. Standard assessment procedures were conducted for all assessments apart from the Facilitative Interpersonal Skills task, where standard rating processes were used on recently developed stimulus videos.

Figure 1. Flowchart of study processes.

Table 1. Study assessments.

Feasibility was also assessed through: (1) the proportion of interested therapists who consented to participate; (2) the ability to recruit to target (n = 9–12 therapists); (3) the intervention completion rate (therapists attending ≥50% FDP sessions) and (4) the number of therapists’ clients consenting to participate.

Anonymised clinical outcomes for all clients seen by participating therapists were collected, comparing outcomes from the 10 weeks before FDP intervention (n = 97) with the 10 weeks of FDP intervention (n = 79). Semi-structured qualitative interviews with therapists at baseline and 10 weeks explored intervention expectations and experiences.

All standardised assessments used in the study are presented in the Supplemental Materials. The questionnaires used were either in the public domain or were made freely available by the respective copyright holders for non-commercial research. With the Facilitative Interpersonal Skills task (FIS), the published methodology was adapted without using the proprietary stimuli, which did not require permission, and the FIS team was contacted about the current study.

Interventions

All interventions were developed with ongoing consultation with and involvement of patients, therapists, therapist trainers and therapy service managers.

Automated feedback

Therapists recorded therapy sessions with consenting clients. These recordings were transcribed and anonymised, using Microsoft Azure's AI Speech Services Batch Transcription. Python code was used to prepare uploaded session recordings as mono .mp3 files and then request diarized transcription of the prepared files, which distinguishes between speakers. Transcripts were then rated for prognosis, based on in-session language patterns using secure automated feedback software. The natural language processing model that was used for automated feedback was a Bidirectional Encoder Representations from Transformers (BERT) model, pre-trained on clinical consultation transcripts (ClinicalBERT 47 ). This model was selected after evaluating the performance of a range of models on their ability to predict clinical outcomes of psychological therapy from transcripts of early sessions. The model was then trained on transcripts of therapy session recordings from two clinical trials of psychological therapies, 48,49 with linked pre- and post-therapy PHQ-9 and GAD-7 scores for participants to classify them into those who reliably improved and those who did not. This process trained the ClinicalBERT model to identify natural language patterns associated with clinical improvements or otherwise. The software provided client prognosis predictions (‘on-track’ for reliable improvement vs. ‘not-on-track’) based on the likelihood of achieving the national standard for reliable improvement on PHQ-9 or GAD-7 (reduction in PHQ-9 score ≥6, or ≥4 on GAD-7 50 ).
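As an illustration of the reliable improvement criterion described above, the following minimal Python sketch labels a client from pre- and post-therapy scores. It is not the study's code: the function names `reliably_improved` and `training_label` are hypothetical, the scores are invented, and combining the two measures with a logical "or" is an assumption based on the wording "on PHQ-9 or GAD-7".

```python
# Thresholds as stated in the text: a reduction of >= 6 points on PHQ-9
# or >= 4 points on GAD-7 counts as reliable improvement.
PHQ9_THRESHOLD = 6
GAD7_THRESHOLD = 4


def reliably_improved(pre_score: int, post_score: int, threshold: int) -> bool:
    """Return True when the pre-to-post reduction meets the threshold."""
    return (pre_score - post_score) >= threshold


def training_label(phq9_pre: int, phq9_post: int,
                   gad7_pre: int, gad7_post: int) -> int:
    """Hypothetical helper: 1 = reliably improved on either measure, 0 = not.

    Combining the two measures with "or" is an assumption made here.
    """
    improved = (
        reliably_improved(phq9_pre, phq9_post, PHQ9_THRESHOLD)
        or reliably_improved(gad7_pre, gad7_post, GAD7_THRESHOLD)
    )
    return 1 if improved else 0


# Illustrative (invented) scores, not study data.
# PHQ-9 falls by 5 (below threshold); GAD-7 falls by 4 (meets threshold).
print(training_label(phq9_pre=15, phq9_post=10, gad7_pre=12, gad7_post=8))  # prints 1
```

A binary label of this kind could serve as the target for a transcript classifier, although how labels were constructed in practice is not detailed in the text.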

Deliberate practice

Study interventions began with a 2-day training, introducing participants to DP, its evidence base, and practical exercises following transtheoretical methods (see 30 ). The week after training, participants engaged in a 4-week schedule of DP activities, rotated twice:

Week 1: Ninety-minute individual supervision, including review of feedback received and associated session recording segments; review and revision of personalised learning objectives; individualised DP applying exercises focused on personalised learning objectives, and agreement on follow-up activities.

Week 2: Two-hour peer-supported DP in groups of four, facilitated by the intervention trainer. Sessions offered 30 min focused on each therapist, wherein they described what they were learning about their practice and the skills they were working on. Following feedback from peers, each therapist chose how to use their peers and DP to support their learning, typically including a DP role-play with peer feedback and repeated iterations.

Week 3: Two-hour individual reflection and review of targeted session recordings. Therapists reviewed automated feedback, identified skill development areas and practised identified skills through self-recording or peer review.

Week 4: Three-hour in-person progress review with the participant cohort. Sessions focused on embedding learning into routine practice and reflecting on the alignment of DP with therapists’ broader development and professional roles. Reflections were then translated into action plans for the following month.

Procedure

Interested therapists were screened by service leads, and consent-to-contact from the research team was obtained. Study researchers provided participant information and contacted potential participants via telephone or video call. The aims, methods, anticipated benefits and potential hazards of the study were explained, and any questions were answered. Then, written informed consent was obtained electronically and confirmed by email. Baseline assessments were then completed. Follow-up assessments were completed by participants with the same researcher. Participating therapists approached clients on their caseload, providing written participant information to those interested and obtaining written informed consent.

Statistical analysis

As a feasibility study, analyses were primarily descriptive, focusing on feasibility, acceptability and initial indications of clinical utility rather than formal hypothesis testing. Quantitative analyses followed the pre-registered Statistical Analysis Plan (OSF registration: osf.io/e85wx). All analyses were conducted in IBM SPSS Statistics (version 29). Descriptive statistics were calculated to summarise sample characteristics, feasibility indicators and acceptability measures. Feasibility was operationalised as recruitment, retention and adherence rates; acceptability was indexed through scores on the AIM, IAM, FIM and TFA. Given the small sample size, inferential comparisons across timepoints (baseline, mid- and post-intervention) were conducted using non-parametric Wilcoxon signed-rank tests. Changes in acceptability, feasibility, credibility, outcome expectancy and therapeutic skill (FIS) scores were tested using the completer sample (n = 8). For each comparison, repeated-measures effect sizes were calculated as Cohen's dRM, adjusted for within-subject dependency, with corresponding 95% confidence intervals (CIs). For clinical outcomes, anonymised PHQ-9 and GAD-7 data were analysed for all clients seen by participating therapists during the 10 weeks pre-intervention and during the 10-week FDP period. Reliable change indices were computed, applying NHS Talking Therapies thresholds for reliable improvement and deterioration. 50 The proportions of clients meeting reliable improvement, no change and reliable deterioration criteria were compared between pre-intervention and intervention periods using Fisher's exact tests (Fisher–Freeman–Halton extension for multi-category tables).
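To make the small-sample logic of the Wilcoxon signed-rank test concrete, the sketch below computes an exact two-sided p value by enumerating every possible sign pattern. This is an illustrative pure-Python implementation, not the SPSS procedure used in the study; the function names and the example scores are hypothetical, and pairs with zero difference are dropped (one common convention, assumed here).

```python
from itertools import product


def average_ranks(values):
    """Rank values from 1..n, giving tied values their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1  # mean of positions i..j, converted to 1-based
        for k in range(i, j + 1):
            ranks[order[k]] = avg
        i = j + 1
    return ranks


def wilcoxon_exact(pre, post):
    """Return (W+, exact two-sided p) for paired scores."""
    diffs = [b - a for a, b in zip(pre, post) if b != a]  # drop zero differences
    if not diffs:
        raise ValueError("all paired differences are zero")
    ranks = average_ranks([abs(d) for d in diffs])
    w_plus = sum(r for r, d in zip(ranks, diffs) if d > 0)
    half = sum(ranks) / 2  # expected W+ under the null hypothesis
    observed = abs(w_plus - half)
    # Enumerate all 2**n equally likely sign assignments under the null.
    extreme = 0
    for signs in product((0, 1), repeat=len(ranks)):
        w = sum(r for r, s in zip(ranks, signs) if s)
        if abs(w - half) >= observed - 1e-9:
            extreme += 1
    return w_plus, extreme / 2 ** len(ranks)


# Invented scores for eight therapists who all improve (not study data).
pre = [10, 11, 12, 13, 14, 15, 16, 17]
post = [11, 13, 15, 17, 19, 21, 23, 25]
print(wilcoxon_exact(pre, post))  # prints (36.0, 0.0078125)
```

With eight pairs there are only 256 sign patterns, so the smallest attainable exact two-sided p value is 2/256 ≈ .008, reached when every participant changes in the same direction.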

Qualitative analysis

Qualitative data from semi-structured interviews were analysed using framework analysis, structured around the domains of the TFA.43,51 This approach aims to identify, define and interpret key patterns in emergent themes by creating and then applying an analytical framework. Quantitative and qualitative findings were integrated narratively to aid the interpretation of acceptability and feasibility outcomes.

Ethical approval and pre-registration

Ethical approval was obtained from the Health Research Authority East Midlands - Leicester Central Research Ethics Committee (reference 24/EM/0073). The study was pre-registered prior to recruitment. 52

Results

Participants

All therapists expressing interest were recruited, and the study achieved recruitment within the target range (n = 9). Participants were from a range of professional backgrounds, including practitioner psychologists and psychotherapists applying Cognitive Behavioural Therapy, Acceptance and Commitment Therapy, Compassion-Focused Therapy, Person-Centred Experiential Therapy and integrative approaches (incorporating multiple therapeutic modalities). Participants were predominantly female (n = 7) and White British (n = 6), had a median age of 41 years (range = 29–55) and had been qualified for a median of 1.5 years (range = 0–12 years).

Feasibility

Therapist recruitment, retention and adherence

Eight participants (89%) completed the 10-week intervention. However, use of the automated feedback component varied due to time constraints, technical difficulties and challenges in obtaining recordings, partly influenced by limited client uptake.

Client uptake

Fewer than two clients-per-therapist consented to use of automated feedback (n = 14), whereas outcomes were reported by a median of 15 clients-per-therapist in the same timeframe. In interviews, therapists noted that declining clients expressed concerns about the potential role of Artificial Intelligence (AI) in therapy: ‘A couple of patients said, “Are you going to be replaced by AI? I only ever want to see a real person”’ [P7].

Therapist assessments

Baseline therapist assessments on the AIM, IAM and FIM were below typical mean scores from previous research (baseline range = 14.9–16.1, typical M > 16.5; e.g. 53 ). Post-intervention, mean scores for all three measures rose to exceed this typical benchmark (Post M range = 16.6–18.9) (Supplemental Figure 1).

Statistically, this shift represented a significant improvement in perceived acceptability (Z = 2.57, p = .008, dRM = 2.25, 95% CI [1.04, 3.47]). Improvements in appropriateness and feasibility were non-significant (ps = .060 and .268, respectively). Regarding the CEQ, therapist-perceived intervention credibility demonstrated significant improvement (Z = 2.11, p = .039, dRM = 1.11, 95% CI [0.13, 2.09]), while outcome expectancy showed non-significant improvement (p = .148) (Supplemental Figure 2; Table 2).

Table 2. Changes in intervention acceptability, credibility and therapist facilitative interpersonal skills.

Note: Subscales of AIM, IAM, and FIM are scored out of 20, CEQ subscales out of 27, and Facilitative Interpersonal Skills subscales out of 5. Wilcoxon signed-rank tests were conducted on the completer sample (n = 8) as per the preregistered analysis plan. Means, standard deviations, and Cohen's d effect sizes are provided for precision and transferability. Effect sizes are reported as Cohen's dRM (repeated measures Cohen's d), where the denominator is the pre-intervention standard deviation, and the effect size is adjusted for dependency by incorporating the pre–post correlation. The confidence intervals for dRM reflect this adjustment to account for the dependency between measurements. Interpretation of Cohen's d: 0.20 = ‘small’, 0.50 = ‘moderate’, 0.80 = ‘large’. AIM = Acceptability of Intervention Measure, IAM = Intervention Appropriateness Measure, FIM = Feasibility of Intervention Measure, CEQ = Credibility/Expectancy Questionnaire.

*p < .05, **p < .01.

Acceptability

The TFA combined quantitative data from modal appraisals of each domain of feasibility and acceptability with qualitative feedback from therapists to aid understanding of how the intervention was experienced over time. Appraisals of acceptability on the TFA showed improvement over the course of the intervention, particularly in perceptions of opportunity costs, affective attitude and general acceptability (Table 3). Early neutral responses shifted to positive endorsements as participants experienced the intervention's benefits.

Table 3. Therapist appraisals of intervention acceptability.

Note: Modal responses shown in cells. Key assessment area highlighted in bold. Responses indicating high acceptability in increasingly dark green, low acceptability responses in increasingly dark red; neutral responses in orange.

Framework analysis of therapist interview data (Table 4) revealed high acceptability of the intervention, aligning with the TFA. Emotional responses (affective attitude) were overwhelmingly positive by the end of the intervention, reflecting high satisfaction with group learning and professional development, though therapists found automated feedback less useful and expressed a preference for it to offer more detail. Despite the intervention's benefits, participation was deemed demanding throughout, requiring significant time, cognitive and emotional effort, with logistical and recruitment challenges. Ethicality was noted in the alignment with therapists’ values, particularly the autonomy to adapt the intervention to individual therapeutic modalities and approaches.

Table 4. Acceptability of automated feedback and deliberate practice in terms of the theoretical framework of acceptability.

Note: Px = Participant identification code.

However, concerns were raised about client scepticism toward AI. Intervention coherence was strong for DP principles but weaker for automated feedback, as more detailed feedback was desired to inform DP. Therapists acknowledged opportunity costs (the need to sacrifice other activities for intervention engagement) but valued the intervention's impact, reporting increased self-awareness, confidence and work engagement. Self-efficacy grew as participants gained proficiency with DP procedures, supported by a collaborative learning environment. Finally, therapists expressed clear intentions to integrate the approach into future practice.

Clinical utility

Therapist skills

All domains of the adapted facilitative interpersonal skills task significantly improved from baseline to post-intervention (Z range = 2.20–2.54, all ps < .05), demonstrating large effect sizes across subscales (dRM range = 1.18–2.13). The total FIS score reflected this overall enhancement in therapeutic skills (Z = 2.52, p = .008, dRM = 2.57, 95% CI [1.21, 3.94]; Supplemental Figure 3).

Client outcomes

Outcomes for participating therapists’ clients were compared between 97 clients reporting outcomes in the 10 weeks directly prior to intervention (baseline) and 79 clients reporting outcomes in the 10-week intervention period (intervention). There was a median of 18 clients-per-therapist in the baseline period and 15 clients-per-therapist in the intervention period. The proportion of clients reporting reliable improvement in depression (PHQ-9) increased from 58% to 61%, and reliable improvement in anxiety (GAD-7) rose from 65% to 75%. 54 However, these changes were non-significant for both measures (Fisher–Freeman–Halton exact test PHQ-9, p = .568; GAD-7, p = .448; Figure 2).
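The exact-test logic behind a comparison of this kind can be sketched for the simpler 2 × 2 case (improved vs. not improved, by period). The study itself used the Fisher–Freeman–Halton extension for multi-category tables, so the following is a simplification; the function name is hypothetical and the counts are invented for illustration, not taken from the study.

```python
from math import comb


def fisher_exact_2x2(a: int, b: int, c: int, d: int) -> float:
    """Two-sided Fisher's exact p value for the table [[a, b], [c, d]].

    Sums the hypergeometric probabilities of every table with the same
    margins that is no more probable than the observed table.
    """
    row1, row2 = a + b, c + d
    col1 = a + c
    n = row1 + row2

    def prob(x: int) -> float:
        # Probability that the top-left cell equals x given fixed margins.
        return comb(row1, x) * comb(row2, col1 - x) / comb(n, col1)

    lowest = max(0, col1 - row2)
    highest = min(row1, col1)
    p_observed = prob(a)
    total = sum(
        prob(x)
        for x in range(lowest, highest + 1)
        if prob(x) <= p_observed * (1 + 1e-9)  # tolerance for float ties
    )
    return min(1.0, total)


# Invented counts (not study data): rows = period, columns = improved / not.
print(round(fisher_exact_2x2(3, 1, 1, 3), 4))  # prints 0.4857
```

In this invented example the p value equals 34/70, because four of the five possible tables are no more probable than the observed one.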

Figure 2. Average change in therapist outcomes from baseline to intervention period.

Discussion

This study aimed to assess the feasibility and acceptability of offering automated, prognostic feedback to psychological therapists and providing a structured DP training programme. Overall, findings suggest that the intervention was acceptable to participating therapists and feasible to fit alongside their clinical practice commitments, with some specific time protected for the intervention. However, the intervention was less acceptable to therapists’ clients, with low uptake of the automated feedback component. By the end of the intervention, therapists from a range of professional backgrounds found the FDP intervention highly acceptable, with improvements in perceived opportunity costs, affective attitude and general acceptability over time. Qualitative interviews supported these changes with participants identifying increased work engagement and enjoyment as a result of their participation. Yet, qualitative reports also highlighted mixed experiences with automated feedback that may have limited any benefits.

Initial clinical utility was indicated with significant improvements in therapists’ facilitative interpersonal skills, abilities that are associated with greater clinical effectiveness among therapists. 21 In addition, proportionally more clients reliably improved in depression and anxiety symptoms. The difference in impact on depression (3 percentage points more clients improving) versus anxiety (10 percentage points more clients improving) could be due to differences in FDP effects on depression versus anxiety symptoms, psychometric differences between PHQ-9 and GAD-7, or simply a chance difference given that the changes were non-significant. While feasibility was demonstrated by high therapist retention and adherence, low client uptake and mixed experiences with the automated feedback component highlight the need for further development of this component.

This study is consistent with prior research showing that DP improves therapists’ skills 31 and adds initial evidence that DP may also enhance clinical effectiveness over a relatively short, intensive training period. This suggests that this kind of intervention could play a role in helping to achieve the improvements in the effectiveness of psychological therapies that have eluded the field for long periods. 21 Extending beyond DP studies focussed on modality-specific competencies, 27 these findings suggest that skill and effectiveness gains have the potential to be achieved across multiple and integrated therapeutic modalities within a data-driven, personalised approach. This study also highlights the less commonly discussed issue of the practical and emotional impact of DP on practitioners. Although the challenges of using DP in psychotherapy have been identified previously, 55 this may be a topic that requires greater discussion in future research to optimise the beneficial effects observed on therapists’ wellbeing and work engagement, alongside minimising the burden that comes with consistent focus on skill limitations.

Limitations

As a feasibility study, the results obtained are insufficient for reliable conclusions on effectiveness to be drawn. Furthermore, the absence of any comparator group makes it unclear whether the changes observed would occur under conditions without the intervention. The absence of a sample size calculation identified a priori also reduces the confidence that can be placed on the results observed. By comparison to other therapist-level interventions, this study had a small sample size, and a larger sample would be required to obtain sufficient variability in the sample for generalisability. The short-term follow-up period means the durability of any effects also remains unclear. Future research that is powered to detect specific effects, follows participants over the longer term and includes a randomised comparator group would produce more dependable results. The low acceptability and mixed evaluation of the automated feedback indicate that improvements in its design, such as more detailed prognostic feedback and clearer explanations, are needed. Alternatively, established automated feedback methods for specific therapeutic models could serve as a foundation for developing transtheoretical feedback, offering sufficiently generalisable insights to support personalisation across modalities. 37 These adjustments would be required before integration with any ambient scribe technology to facilitate and support adoption and implementation. Although improvements in therapist skills were observed in this study, the use of different stimulus videos as part of a standardised assessment may threaten the validity of these findings.

Future research

Future research should evaluate the FDP intervention in a randomised controlled trial that is powered to detect differences in therapist skills and effectiveness. This would give a clearer understanding of clinically important effects, the size of any effects and the dosage of intervention required to achieve them. If found to be effective, this type of study would enable services to more readily estimate the return on investment of time for therapists to apply FDP. Adaptive trial designs could be applied in future research to enable within-study adaptation and improvement of the automated feedback system using pre-specified rules. 56 Future research could also assess and identify active ingredients of FDP by comparing different automated feedback systems, with and without the transtheoretical DP approach. All future studies should include longer follow-up periods to better understand the durability of effects over time.

Implications

This study suggests that it may be possible for therapists to improve their skills and effectiveness over time with an intervention targeting individualised skill deficits. It also indicates that, in practice, there may be impacts on therapists beyond clinical effectiveness and skills, including potential impacts on their satisfaction with and enjoyment of work. Conversely, the intervention can be emotionally and cognitively demanding, and its time demands can have an impact even when protected time is allocated. Therefore, future implementation of related interventions should account for the breadth of impacts, positive and negative, that therapists may experience to enhance acceptability. It is notable that measures of intervention acceptability improved over the 10 weeks of intervention. This suggests that evaluating the impact of FDP over time may be more meaningful than relying on initial perceptions alone, given that perceptions may change with use of the intervention. Furthermore, co-design of automated feedback systems and integration of processes that make AI and machine learning more understandable and explainable could help address the concerns raised by therapists and clients about their use in therapy. 57 This study highlights the value of the ‘human in the loop’ approach to responsible health developments involving AI; accelerating and scaling up technology of this kind is greatly enhanced by human feedback to support acceptable and responsible improvement. This type of development could support larger-scale application of FDP in training and practice.

Conclusion

This study suggests that FDP can be feasibly and acceptably applied with therapists from a range of theoretical orientations, providing therapy to varied client populations. This intervention has the potential to improve therapeutic skills and clinical effectiveness, though further study is required to formally evaluate this and, if found to be effective, to estimate the size and durability of the effects. Clients’ concerns about the application of AI in this context must be addressed to increase uptake, and close attention should be paid to the processes through which clients are socialised to the approach.

Supplemental Material

sj-docx-1-dhj-10.1177_20552076251413316 - Supplemental material for The feasibility and acceptability of automated feedback and deliberate practice in psychological therapies for anxiety and depression

Supplemental material, sj-docx-1-dhj-10.1177_20552076251413316 for The feasibility and acceptability of automated feedback and deliberate practice in psychological therapies for anxiety and depression by Sam Malins, Grazziela Figueredo, David Saxon, Kate Horton, Jeremie Clos, Thomas Trimble, Kavan Fatehi, David Waldram, Fred Higton, Gillian E Hardy, Michael Barkham, Jonathan Couldridge and Nima Moghaddam in DIGITAL HEALTH

Supplemental Material

sj-docx-2-dhj-10.1177_20552076251413316 - Supplemental material for The feasibility and acceptability of automated feedback and deliberate practice in psychological therapies for anxiety and depression

Supplemental material, sj-docx-2-dhj-10.1177_20552076251413316 for The feasibility and acceptability of automated feedback and deliberate practice in psychological therapies for anxiety and depression by Sam Malins, Grazziela Figueredo, David Saxon, Kate Horton, Jeremie Clos, Thomas Trimble, Kavan Fatehi, David Waldram, Fred Higton, Gillian E Hardy, Michael Barkham, Jonathan Couldridge and Nima Moghaddam in DIGITAL HEALTH

Footnotes

Ethical considerations

Ethical approval was obtained from The Health Research Authority East Midlands – Leicester Central Research Ethics Committee, 26 March 2024 (Reference 24/EM/0073).

Author contributions

Sam Malins: conceptualisation, methodology, data curation, writing–original draft, and writing–review and editing. Grazziela Figueredo: conceptualisation, writing–original draft, and writing–review and editing. David Saxon: data curation, supervision, writing–original draft, and writing–review and editing. Kate Horton: conceptualisation, methodology, writing–original draft, and writing–review and editing. Jeremie Clos: conceptualisation, writing–original draft, and writing–review and editing. Thomas Trimble: software, validation, formal analysis, and writing–review and editing. Kavan Fatehi: software, validation, formal analysis, and writing–review and editing. David Waldram: conceptualisation, methodology, writing–original draft, and writing–review and editing. Fred Higton: conceptualisation, methodology, writing–original draft, and writing–review and editing. Gillian E Hardy: conceptualisation, data curation, supervision, writing–original draft, and writing–review and editing. Michael Barkham: conceptualisation, data curation, supervision, writing–original draft, and writing–review and editing. Jonathan Couldridge: software, validation, formal analysis, and writing–review and editing. Nima Moghaddam: conceptualisation, methodology, formal analysis, investigation, visualisation, data curation, writing–original draft, and writing–review and editing.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and publication of this article: This study was funded through a Health Education England (HEE)/National Institute for Health and Care Research (NIHR) Clinical Lectureship (Sam Malins, NIHR301292). The views expressed are those of the authors and not necessarily those of the NIHR, the National Health Service or the Department of Health and Social Care.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data availability statement

The data that support the findings of this study are available on request from the corresponding author. The data are not publicly available due to privacy or ethical restrictions.

Supplemental material

Supplemental material for this article is available online.

References
