Abstract

Objective

The growing rehabilitation demands driven by global aging and the increasing prevalence of chronic diseases underscore the limitations of conventional therapies, highlighting robot-assisted training as a promising alternative. However, public awareness remains limited, and the quality of health information on short-video platforms is highly variable. This study aimed to evaluate the quality and reliability of Chinese-language videos pertaining to robot-assisted rehabilitation on Douyin and BiliBili.

Methods

A cross-sectional analysis was conducted on 5 February 2025, involving the selection of the top 100 videos related to “robotic rehabilitation” from each of two platforms: Douyin and BiliBili. The Global Quality Score (GQS) and modified DISCERN tool (mDISCERN) were employed to assess the quality and reliability of the video content. Videos were categorized by their source and content type. Meanwhile, their interaction metrics (including likes, comments, shares, and favorites) as well as basic characteristics (such as upload time and duration) were extracted. Cohen's κ was used to evaluate inter-rater reliability. Spearman correlation analysis was performed to explore the relationships between variables. Additionally, multiple linear regression analysis was conducted to identify factors influencing the quality and reliability of the videos.

Results

A total of 200 videos were included in the study (100 from each platform). Videos on Douyin were shorter in duration but garnered significantly higher user engagement (all p < 0.001 for likes, comments, shares, and favorites), whereas videos on BiliBili were longer and featured more academic resource citations. Median scores for video quality and reliability on both platforms were moderate (GQS = 2, mDISCERN = 2), yet BiliBili had a higher proportion of high-quality videos. Content created by experts exhibited greater informational value, while commercially promoted videos demonstrated lower credibility. Multiple regression analysis revealed that sources from academic and medical institutions, content types focused on science communication/education and expert explanations, video duration, and daily share rate were significant positive predictors of both GQS and mDISCERN scores.

Conclusion

Short-video platforms serve as valuable channels for disseminating information on robot-assisted rehabilitation; however, significant variability in content quality necessitates critical evaluation by users. Individuals should verify the scientific validity of such information before applying it in health-related decision making.

Introduction

Robot-assisted training (RAT) is a cutting-edge, exercise-based technology that utilizes computer-assisted devices to provide patients with targeted functional exercise patterns. These include repetitive training, intensive practice, and task-specific training formats. 1 RAT has been demonstrated to promote the remodeling of the musculoskeletal system, spinal motor neurons, and intraneuronal systems, enhancing neuroplasticity through goal-directed training programs. Consequently, it facilitates motor relearning, increases muscle strength, and improves movement disorders.2,3 Additionally, RAT's dynamic human–machine interaction capabilities offer real-time training feedback, showcasing significant advantages in enhancing patient compliance and motivation compared to traditional rehabilitation methods. 4 With the global aging population, alongside an increase in chronic disease sufferers and disabled individuals, the demand for rehabilitation services is on the rise.5–8 Traditional rehabilitation approaches suffer from inefficiencies and lack personalization, failing to meet the diverse needs of patients. 9 Robot-assisted rehabilitation offers personalized and precise rehabilitation solutions, significantly improving recovery efficiency.10–12 In the realm of cardiopulmonary rehabilitation, robotic technologies have shown substantial benefits. They can design tailored exercise programs based on individual patient conditions and monitor vital physiological data in real-time during training sessions. Guiding patients through aerobic exercises and respiratory training greatly enhances cardiopulmonary function while alleviating fatigue during rehabilitation. 13 A systematic review and network meta-analysis revealed that RAT combined with conventional rehabilitation therapies effectively improves upper limb motor functions and activities of daily living for stroke survivors. 9 Moreover, some rehabilitation robots integrate virtual reality technology, employing gamified training modes to significantly boost patient engagement and motivation. 14

Despite the promising potential of robot-assisted rehabilitation in enhancing recovery efficacy and patient functionality, its clinical application faces several challenges. Public perception and acceptance of robot-assisted healthcare remain relatively low due to varying viewpoints. 15 Currently, public understanding of this technology largely relies on media reports and online information. 16 The advent of internet technology has revolutionized how people access information, shifting from paper-based to electronic formats, which are now mainstream. 17 Engaging, interesting, and visually appealing video content is particularly popular compared to lengthy traditional texts, contributing to the rapid rise of short video sharing platforms. This trend has led to a significant increase in the availability of health-related short videos, facilitating more efficient and widespread dissemination of health knowledge.18,19 For instance, a foreign study found that social media-based interventions, such as a liver cancer prevention program via Kakao Talk, could promote hepatitis B virus monitoring and liver cancer prevention. 20 However, the unregulated upload of videos without scientific scrutiny often results in variable quality and reliability on these platforms. Misleading or deceptive information can thus be disseminated, increasing the risk of users making health decisions based on inaccurate information. 21 Douyin and BiliBili, as two major short video platforms in China with extensive user bases and influence,8,22 were evaluated in our study. We assessed the top 100 RAT-related videos on each platform using the Global Quality Score (GQS) for video quality and a modified DISCERN (mDISCERN) score for reliability. Our analysis also explored the correlation between video quality and sources, content, duration, likes, comments, shares, and saves. 
This study aims to evaluate the content, quality, and reliability of robot-assisted training (RAT)-related videos on Douyin and BiliBili. To our knowledge, this study is among the first focused analyses of RAT-related video content on short video platforms.23–25 As pioneering research in this field, it addresses a critical gap in understanding how rehabilitation robotics is portrayed in digital media. This work highlights the need for accurate, high-quality health information in robot-assisted rehabilitation, setting a benchmark for future studies and emphasizing its growing role in patient care and recovery.

Methods

Ethical considerations

This cross-sectional study was completed on 5 February 2025. All data were extracted from publicly available videos on Douyin and BiliBili; no clinical records, human or animal specimens were used, and no personally identifiable information was collected. As there was no interaction with users, ethical review was waived in accordance with the exemption provisions of the Declaration of Helsinki.

Search strategy and data collection

In this cross-sectional study, we searched for videos related to “机器人” (Robotics) and “康复” (Rehabilitation) on Douyin and BiliBili on 5 February 2025, aiming to identify the top 100 robot-assisted rehabilitation videos on each platform (Figure 1 in the attachment).

Data collection flow chart.

To minimize personalized recommendation algorithm bias, new user accounts were registered and logged into each platform, with no search filters applied. Videos were ranked via the platforms’ “comprehensive ranking mechanism,” which integrates video completion rate (proportion of users who watched >5 s), like rate, comment rate, follow rate, and upload time—prioritizing recent and popular content.

During screening, non-Chinese videos, duplicates (identical content from different uploaders), videos without uploader info or titles, and those unrelated to robot-assisted rehabilitation were excluded. The top 100 videos per platform were finally selected for analysis; this sample size was based on previous studies confirming negligible impact of videos beyond the top 100 on results.26,27

To reduce temporal bias from content updates, baseline information of all included videos was uniformly extracted on 5 February 2025, including source, theme, duration (seconds), days since upload, and engagement metrics (e.g., favorites, likes, comments, and shares). All data were recorded in Microsoft Excel (Microsoft Corp.) and collected in strict adherence to Douyin and BiliBili's API policies, with no content downloaded or personal information stored.

Video classification

We categorized the videos into five groups based on their sources and six groups according to their content. The classification criteria for video sources are as follows: (1) medical institutions and related personnel, (2) academic institutions and research teams, (3) individual users, (4) business and advertising, and (5) news reports. For content categorization, the videos were classified into the following categories: (1) introduction to rehabilitation technologies, (2) demonstration of rehabilitation training, (3) patient experience sharing, (4) expert explanations and recommendations, (5) product promotion and publicity, and (6) science communication and education. Detailed classification criteria are shown in Table 1 in the annex. This methodology ensures a systematic approach to analyzing the videos, providing clear distinctions based on both origin and subject matter. Such categorizations facilitate a nuanced understanding of the distribution and nature of robot-assisted rehabilitation content across different platforms, thereby enhancing the robustness of our analysis. The delineation of specific criteria for each category aims to uphold the standards of accuracy and reliability expected in scientific inquiry.

Video classification.

Video quality assessment

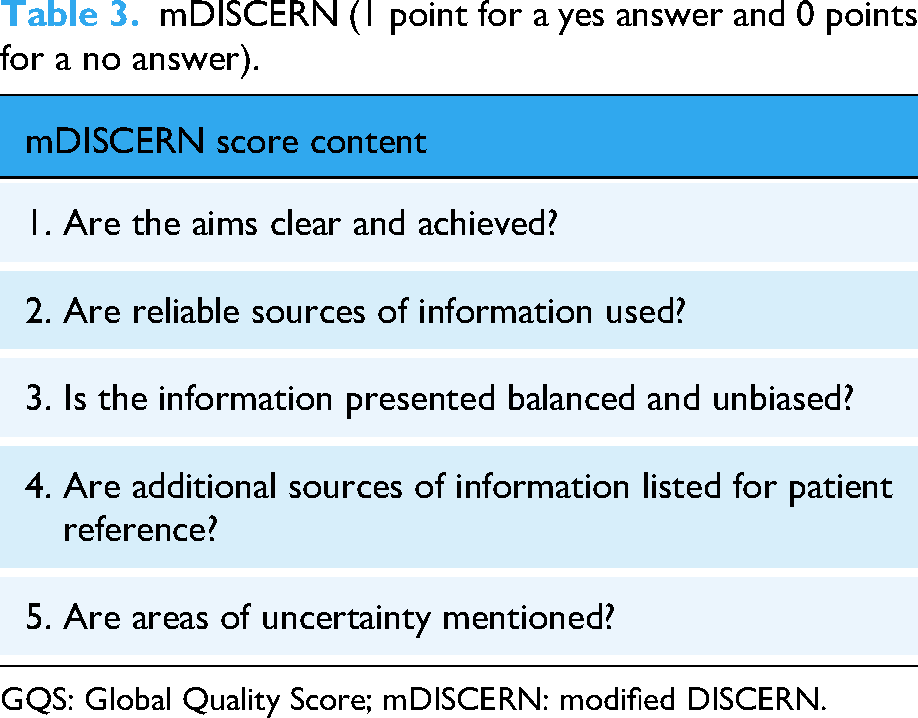

The quality of information in the videos was assessed using the GQS, while reliability was evaluated through the mDISCERN tool, both of which have been validated in previous studies.28,29 GQS evaluates dimensions such as quality, flow, comprehensiveness, and usefulness. It consists of five criteria rated on a scale from 1 to 5, with higher scores indicating superior quality. 30 The mDISCERN tool, designed to assess the reliability and quality of videos, comprises five questions, each scored with 1 point for “yes” and 0 points for “no,” resulting in a total score ranging from 0 to 5. Detailed grading criteria for GQS and mDISCERN are presented in appendix Tables 2 and 3, respectively. Scores from GQS and mDISCERN were categorized into five levels as presented in appendix Table 4. Video links were provided to two raters in tabular format; these raters were rehabilitation physicians with expertise in therapeutic interventions. To minimize rating bias, the video links were presented in a randomized order. Prior to evaluating the videos, both raters thoroughly reviewed the scoring details of GQS and mDISCERN. Working independently over the same period, they watched the videos, scored them, and classified them by source and content. Where the two raters’ scores differed, the discrepancy was discussed with an additional observer until consensus was reached.

Global quality score (GQS) (1–5 points).

mDISCERN (1 point for a yes answer and 0 points for a no answer).

GQS: Global Quality Score; mDISCERN: modified DISCERN.

Description of the GQS and mDISCERN tool scores.

GQS: Global Quality Score; mDISCERN: modified DISCERN.

Statistical analysis

The Shapiro–Wilk test was used to assess the normality of continuous variables. Normally distributed continuous variables are presented as mean ± standard deviation (SD), while non-normally distributed variables are presented as median (interquartile range, IQR) together with the minimum–maximum range. Differences between groups were assessed with the Mann–Whitney U test. Cohen's κ was used to quantify the agreement between the two raters, and Spearman correlation analysis was used to evaluate the relationships between quantitative variables. A P value < 0.05 was considered statistically significant. All statistical analyses were performed in SPSS 26. Because the videos differed in lifespan (“Days since published”), raw cumulative interactions (e.g., total likes) were biased toward videos that had been online longer. To control for this temporal confounding, we calculated daily average rates (e.g., Likes per day = Total likes/Days since published), enabling a fair comparison of engagement efficiency.
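The time normalization described above can be sketched in a few lines of Python. This is a minimal illustration rather than the authors' SPSS workflow: the function name `daily_rate`, the example numbers, and the choice to count a video published on the collection day as one day old are all assumptions made for the example.

```python
def daily_rate(total_interactions: int, days_since_published: int) -> float:
    """Average interactions per day since upload.

    Assumption (not stated in the paper): a video uploaded on the day of
    data collection is treated as one day old to avoid division by zero.
    """
    days = max(days_since_published, 1)
    return total_interactions / days


# Hypothetical videos, not study data: identical raw likes, different ages.
videos = [
    {"platform": "Douyin", "likes": 1200, "days": 60},
    {"platform": "BiliBili", "likes": 1200, "days": 600},
]
for video in videos:
    video["likes_per_day"] = daily_rate(video["likes"], video["days"])
    print(video["platform"], video["likes_per_day"])
```

In this made-up pair the raw like counts are equal, but the per-day rate differs tenfold, which is the kind of difference the normalization is meant to expose.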

Results

Video features

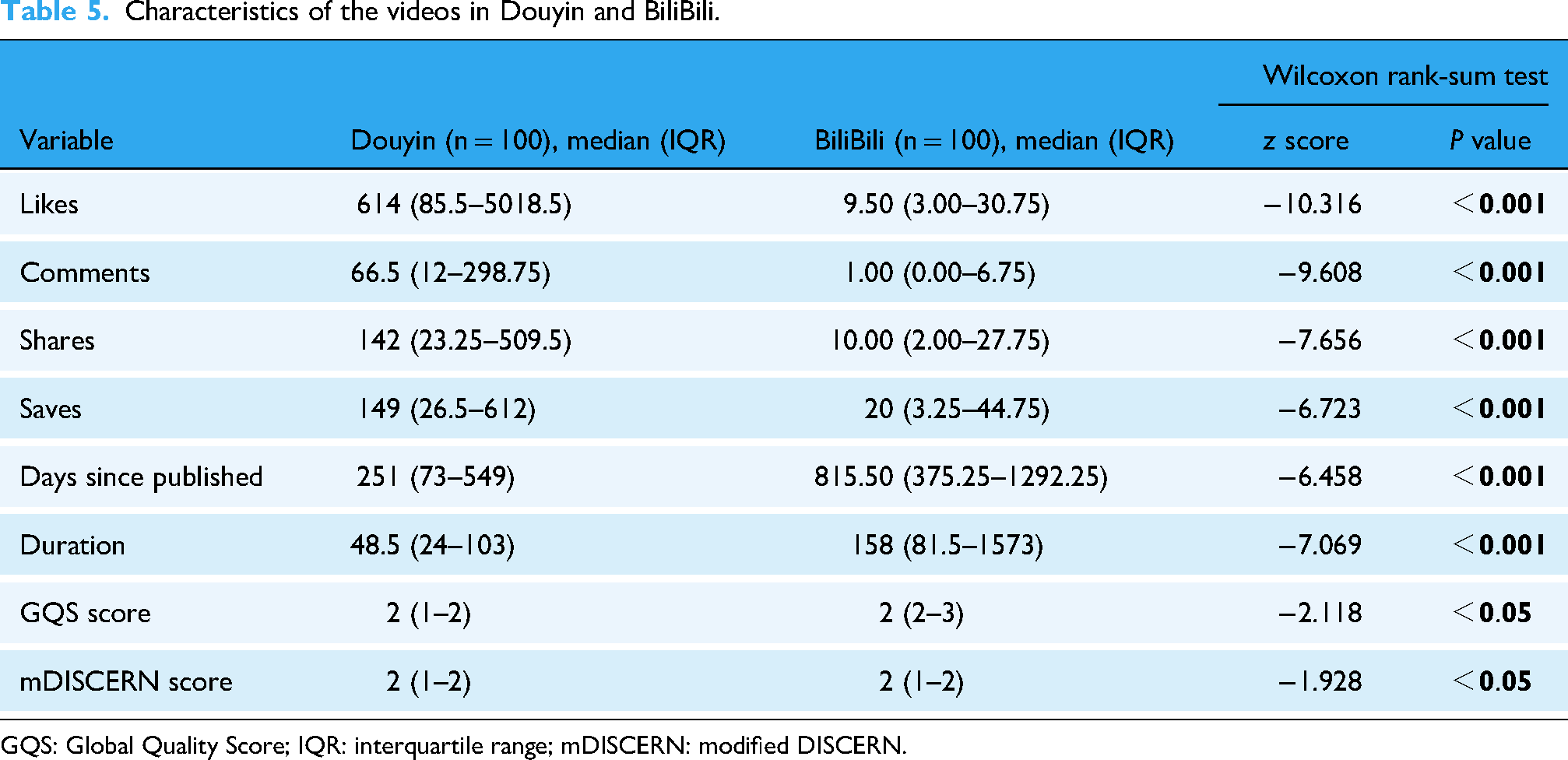

In this study, a total of 200 videos were retrieved and analyzed based on keyword searches, with 100 videos sourced from Douyin and another 100 from BiliBili. As summarized in Table 5, the general characteristics of these videos revealed that Douyin videos garnered significantly more likes, comments, shares, and saves compared to those on BiliBili (all P < 0.001). In contrast, videos on BiliBili were notably longer in duration (P < 0.001) and had been published for a significantly greater number of days (P < 0.001) than those on Douyin. Additionally, the distribution of GQS was wider on BiliBili, suggesting a potentially higher proportion of videos with superior quality on this platform.

Characteristics of the videos in Douyin and BiliBili.

GQS: Global Quality Score; IQR: interquartile range; mDISCERN: modified DISCERN.

However, given the substantial difference in video longevity (“Days since published,” Table 5), direct comparison of raw interaction counts may be biased, as older videos inherently have more time to accumulate engagement. To account for this, daily average interaction metrics were calculated and compared (Table 6). After standardization by time, Douyin videos still exhibited significantly higher per-day averages in likes, comments, shares, and saves (all P < 0.001). This refined analysis confirms that user engagement with rehabilitation robot-related videos remains higher on Douyin even after adjusting for the time since publication.

Daily interaction metrics data across platforms.

IQR: interquartile range.

An analysis of Tables 7 and 8 and Figure 2 in the attachment reveals significant differences in video sources and content features between Douyin and BiliBili. On Douyin, individual users generate most videos (58%), while medical institutions, academic institutions, news reports, and business sources contribute far less. Conversely, BiliBili's content distribution is more balanced, with heavier contributions from medical and academic institutions. Douyin, with its core focus on short-duration, high-frequency interactions, excels particularly in sharing (with a median of 1499 shares for medical content) and saving (a median of 928 saves for medical content). The platform's content tends to favor lightweight, rapidly disseminated formats, typically ranging from 41.5 to 146 seconds in length, with a relatively brief lifecycle (median posting duration concentrated between 84 and 789 days), aligning well with its entertainment-oriented, fragmented-content positioning. In contrast, BiliBili places a greater emphasis on depth and professional engagement. Videos from academic and medical institutions are notably longer (with a median length of 2690 seconds for medical institution videos). Users on BiliBili demonstrate a stronger inclination towards commenting (a median of 13.5 comments for medical institution videos) and long-term saving (a median of 107.5 saves for medical content). Additionally, the quality of content, as assessed by GQS and mDISCERN scores, is generally higher on BiliBili (for instance, a median GQS score of 3 for academic institution videos), reflecting user recognition of professional content. Moreover, BiliBili exhibits a pronounced long-tail effect in content dissemination (with a median posting duration of 1536 days for medical institution videos). The platform's ecosystem fosters community-based discussions and the dissemination of high-quality knowledge, whereas Douyin relies more on instant dissemination and high-efficiency interactions.

Percentage of rehabilitation robot videos from different sources and different content in Douyin and BiliBili. (A) Sources of Douyin videos; (B) sources of BiliBili videos; (C) content classification of Douyin videos; and (D) content classification of BiliBili videos.

Video features of video sources and content in Douyin.

GQS: Global Quality Score; IQR: interquartile range; mDISCERN: modified DISCERN.

Video features of video sources and content in BiliBili.

GQS: Global Quality Score; IQR: interquartile range; mDISCERN: modified DISCERN.

Video quality and reliability assessment

In this study, the κ value used to assess interobserver reliability was 0.78. This result indicates a high degree of agreement in judgments between two observers. Based on the data analysis of Table 9 and Figure 3 in the attachment, significant disparities are observed between Douyin and BiliBili regarding the GQS and mDISCERN ratings of short videos related to rehabilitation robots. In terms of GQS, a striking 79% of videos on Douyin were categorized as low quality, compared to 51% on BiliBili. BiliBili demonstrated superior performance across the “fair,” “good,” and “excellent” categories, with Douyin lacking any videos in the “excellent” category. Regarding mDISCERN scores, 97% of videos on Douyin were rated as having low reliability, whereas this figure was 87% for BiliBili. Videos on BiliBili showed greater reliability, performing better in the “highly reliable,” “moderately reliable,” and “reliable” categories, while no videos on Douyin achieved the “reliable” rating. Overall, BiliBili outperforms Douyin in both video quality and information reliability, likely attributable to differences in content review mechanisms or user demographics.
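For readers unfamiliar with the agreement statistic reported above, the following is a minimal sketch of unweighted Cohen's κ. The paper does not state whether a weighted or unweighted variant was used, so the unweighted form is an assumption here, and the ratings below are invented for illustration.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Unweighted Cohen's kappa: (p_o - p_e) / (1 - p_e)."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("both raters must score the same non-empty set of items")
    n = len(rater_a)
    # Observed agreement: share of items given the same score by both raters.
    p_observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Chance agreement: product of each rater's marginal proportions per score.
    counts_a, counts_b = Counter(rater_a), Counter(rater_b)
    p_expected = sum(counts_a[c] * counts_b[c] for c in counts_a) / (n * n)
    if p_expected == 1.0:
        return 1.0  # both raters used a single identical score throughout
    return (p_observed - p_expected) / (1 - p_expected)


# Hypothetical GQS ratings for ten videos (not study data).
rater_1 = [1, 2, 2, 3, 3, 3, 4, 2, 1, 3]
rater_2 = [1, 2, 3, 3, 3, 3, 4, 2, 2, 3]
kappa = cohens_kappa(rater_1, rater_2)
print(round(kappa, 3))
```

Here the raters agree on 8 of 10 videos, chance agreement is 0.32, and κ is therefore 0.48/0.68, roughly 0.71.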

Statistical analysis of GQS scores and mDISCERN scores of short videos related to rehabilitation robots on Douyin and BiliBili: (A) comparison of GQS scores of videos on Douyin and BiliBili; (B) GQS scores of videos on Douyin and BiliBili; (C) comparison of mDISCERN scores of videos on Douyin and BiliBili; (D) mDISCERN scores of videos on Douyin and BiliBili. GQS: Global Quality Score; mDISCERN: modified DISCERN.

GQS and mDISCERN scores for Douyin and BiliBili videos related to rehabilitation robots.

GQS: Global Quality Score; mDISCERN: modified DISCERN.

Spearman correlation analysis

To investigate the associations between video metrics (interaction data, longevity, and duration) and quality scores (GQS and mDISCERN), Spearman correlation analyses were conducted on both the raw metrics (Table 10) and the standardized daily interaction data (Table 11). A Bonferroni correction was applied to adjust for multiple comparisons, resulting in a revised significance threshold of α’ = 0.0018. Only correlations with P < α’ were considered statistically significant.

Spearman correlation analysis of Douyin and BiliBili short video platform (N = 200).

After Bonferroni correction, the significance level is α’ = α/k = 0.05/28 ≈ 0.0018; “*” indicates statistically significant results.

GQS: Global Quality Score; mDISCERN: modified DISCERN.

Spearman correlation analysis of the daily interaction data of Douyin and BiliBili short video platform (N = 200).

After Bonferroni correction, the significance level is α’ = α/k = 0.05/28 ≈ 0.0018; “*” indicates statistically significant results.

GQS: Global Quality Score; mDISCERN: modified DISCERN.

Analysis of the raw interaction metrics (Table 10) revealed strong positive correlations among likes, comments, shares, and saves (all P < 0.0018). A notably high correlation was also found between “Days since published” and “Duration” (P < 0.0018). However, no significant correlations were observed between any raw interaction metric and the quality scores (GQS and mDISCERN) after correction. Additionally, raw interaction counts showed significant positive correlations with both “Days since published” and “Duration” (e.g., Shares vs. Days since published: P < 0.0018; Saves vs. Duration: P < 0.0018), indicating that videos available for a longer period accrued higher absolute interaction numbers.

Analysis of the standardized daily interaction data (Table 11), which accounts for variations in video lifespan, demonstrated that daily averages of likes, comments, shares, and saves remained strongly intercorrelated (all P < 0.0018). The correlation between “Days since published” and “Duration” remained significant (P < 0.0018). Importantly, after temporal standardization, no significant correlations were found between any daily interaction metric and the mDISCERN score—all corresponding P-values exceeded the corrected threshold. Similarly, no significant correlations emerged between the standardized daily metrics and the GQS score. A strong positive correlation was confirmed between GQS and mDISCERN scores (P < 0.0018).
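The corrected threshold and the rank correlation used in this section can be sketched as follows. The sketch assumes that the 28 comparisons correspond to all pairs among eight variables (four interaction metrics, days since published, duration, GQS, and mDISCERN); the paper gives k = 28 but does not list the pairs. The helper names are illustrative, and P values are deliberately omitted.

```python
from math import comb, sqrt


def average_ranks(values):
    """1-based ranks; tied values share the mean of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        i = j + 1
    return ranks


def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    return cov / sqrt(var_x * var_y)


def spearman_rho(x, y):
    """Spearman's rho is the Pearson correlation of the rank vectors."""
    return pearson(average_ranks(x), average_ranks(y))


# Assumed variable list; 8 variables give C(8, 2) = 28 pairwise tests.
variables = ["likes", "comments", "shares", "saves",
             "days_since_published", "duration", "gqs", "mdiscern"]
n_tests = comb(len(variables), 2)
alpha_corrected = 0.05 / n_tests
print(n_tests, round(alpha_corrected, 4))
```

A correlation would then be flagged only if its P value falls below `alpha_corrected`, which matches the α’ ≈ 0.0018 threshold stated in the table notes.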

Regression analysis

GQS score regression analysis

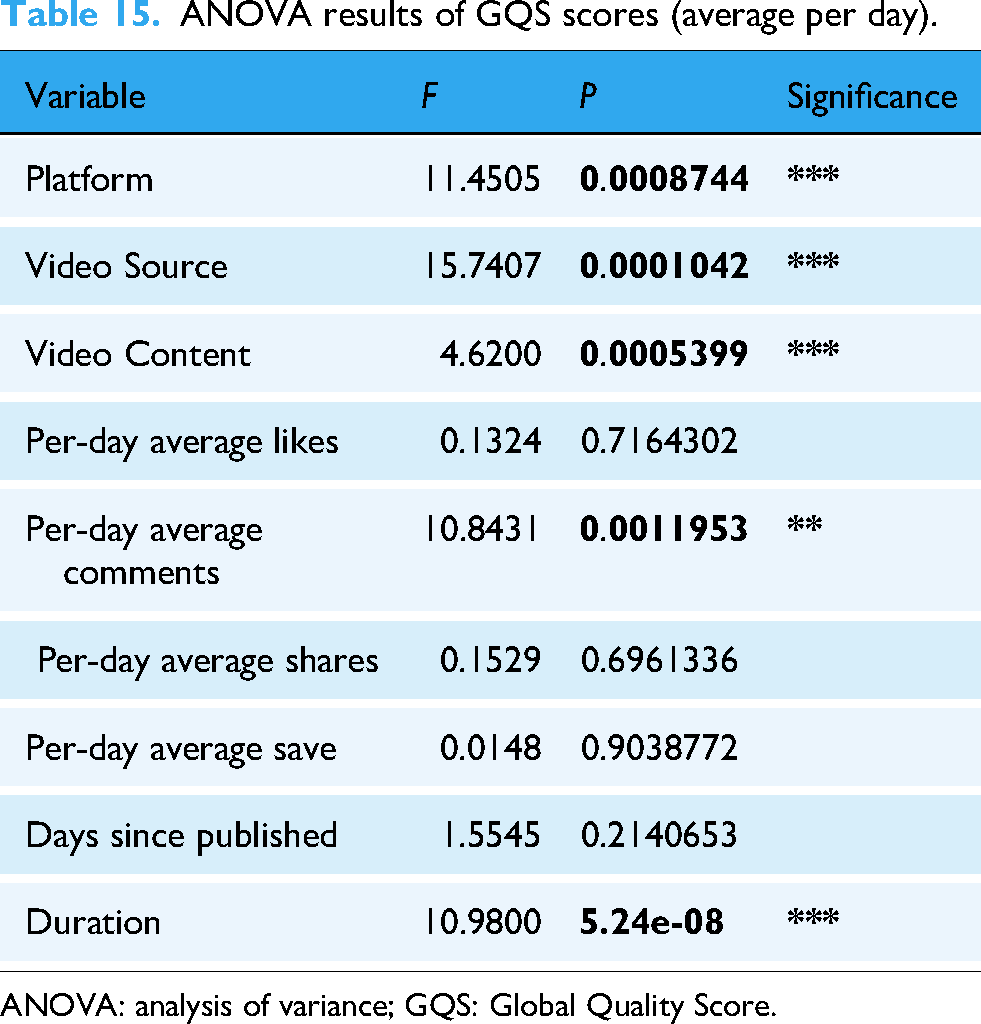

Based on multiple linear regression analyses, several factors were identified as significant predictors of GQS (Tables 12 and 13). Videos from academic institutions (β = 0.700, P = 0.003) and medical institutions (β = 0.615, P = 0.004) demonstrated significantly higher GQS scores compared to other sources. In terms of content type, science communication videos (β = 1.000, P < 0.001) and expert explanations (β = 1.090, P = 0.002) showed the strongest positive effects on quality scores. The analysis of standardized daily metrics revealed that the daily average share rate was a particularly strong predictor (β = 0.840, P < 0.001), indicating that frequently shared videos were associated with higher quality ratings. Video duration maintained a consistent positive relationship with GQS across both models (β = 0.00045, P < 0.001 for raw metrics; β = −0.000309, P = 0.000206 for daily metrics), while longer time since publication showed a negative association (β = −0.000848, P < 0.001 for raw metrics; β = −0.00076, P = 0.0022 for daily metrics). Analysis of variance (ANOVA) results confirmed the overall significance of these models (Tables 14 and 15), with platform (P = 0.000999), video source (P < 0.001), video content (P < 0.001), and duration (P < 0.001) all contributing significantly to explaining variance in GQS scores.
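To make the β coefficients for categorical predictors easier to interpret, the sketch below fits an ordinary least-squares model with dummy-coded video source and a continuous duration term. It is a hedged illustration only: the analysis in the paper was run in SPSS, the reference category ("individual") is an assumption, the three-level source list is shortened for brevity, and the six videos are fabricated so that the true coefficients are known in advance.

```python
# Illustrative only: made-up videos, not data from the study.
SOURCES = ["individual", "medical", "academic"]  # first entry = reference level (assumed)


def design_row(source, duration_s):
    """Intercept, one 0/1 dummy per non-reference source, then duration in seconds."""
    dummies = [1.0 if source == s else 0.0 for s in SOURCES[1:]]
    return [1.0] + dummies + [float(duration_s)]


def ols(X, y):
    """Least-squares coefficients from the normal equations (X'X) b = X'y."""
    p = len(X[0])
    # Augmented matrix [X'X | X'y].
    A = [
        [sum(row[i] * row[j] for row in X) for j in range(p)]
        + [sum(row[i] * yi for row, yi in zip(X, y))]
        for i in range(p)
    ]
    # Gauss-Jordan elimination with partial pivoting.
    for col in range(p):
        pivot = max(range(col, p), key=lambda r: abs(A[r][col]))
        if abs(A[pivot][col]) < 1e-12:
            raise ValueError("design matrix is singular")
        A[col], A[pivot] = A[pivot], A[col]
        pivot_value = A[col][col]
        A[col] = [v / pivot_value for v in A[col]]
        for r in range(p):
            if r != col:
                factor = A[r][col]
                A[r] = [vr - factor * vc for vr, vc in zip(A[r], A[col])]
    return [A[i][p] for i in range(p)]


# Scores generated as 2.0 + 0.5*medical + 1.0*academic + 0.001*duration.
videos = [
    ("individual", 100, 2.1), ("individual", 300, 2.3),
    ("medical", 200, 2.7), ("medical", 1000, 3.5),
    ("academic", 500, 3.5), ("academic", 1500, 4.5),
]
X = [design_row(source, duration) for source, duration, _ in videos]
y = [score for _, _, score in videos]
intercept, b_medical, b_academic, b_duration = ols(X, y)
print(round(intercept, 3), round(b_medical, 3), round(b_academic, 3), round(b_duration, 5))
```

Under this coding, each source coefficient is the expected difference in score relative to the reference source at equal duration, and the duration coefficient is the change in score per additional second, which is why its magnitude is small.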

Multiple linear regression analysis of GQS scores.

GQS: Global Quality Score.

Multiple linear regression analysis of GQS scores (average per day).

GQS: Global Quality Score.

ANOVA results of GQS scores.

ANOVA: analysis of variance; GQS: Global Quality Score.

ANOVA results of GQS scores (average per day).

ANOVA: analysis of variance; GQS: Global Quality Score.

mDISCERN score regression analysis

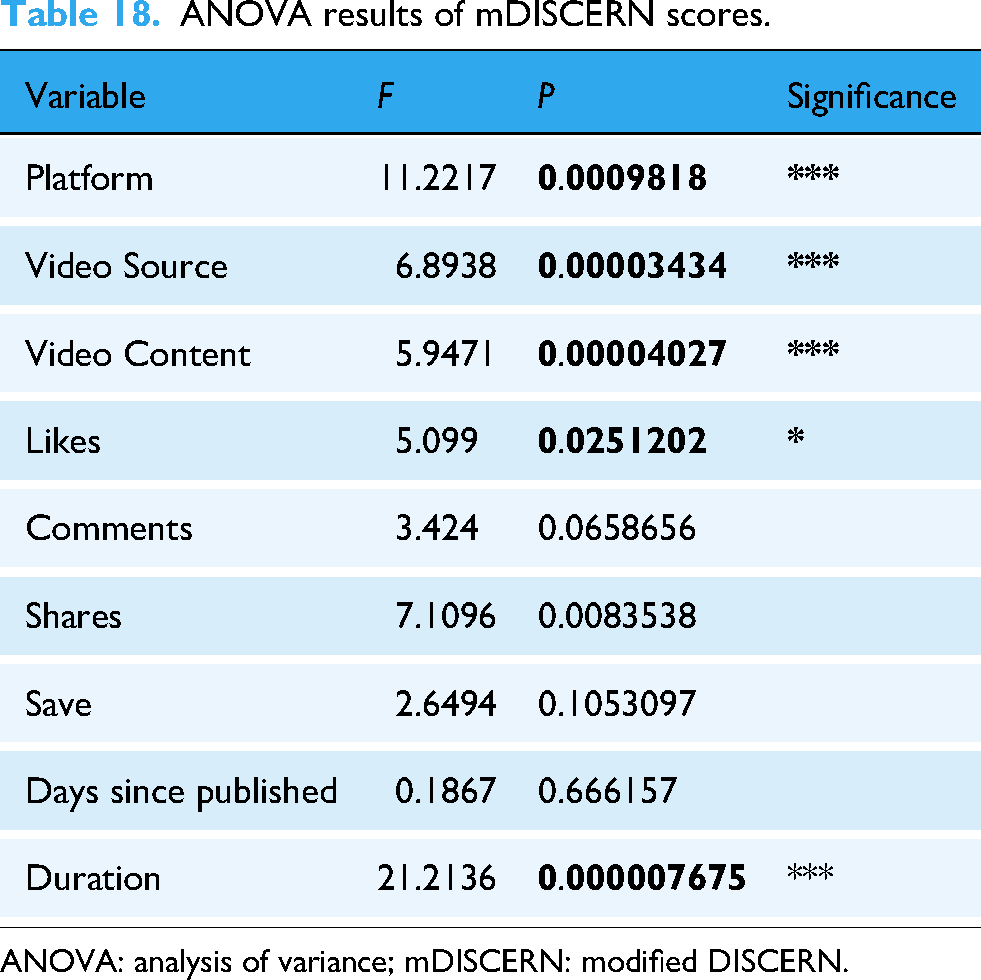

Based on the multiple linear regression analyses of mDISCERN scores (Tables 16 and 17), several significant predictors of information reliability were identified. Commercial and advertising sources showed a substantial positive effect (β = 0.452, P = 0.007), alongside academic institutions (β = 0.399, P = 0.041) and medical institutions (β = 0.447, P = 0.011). Among content types, expert explanations and recommendations demonstrated the strongest positive association with reliability scores (β = 1.230, P < 0.001), followed by science communication and education content (β = 0.863, P < 0.001). Video duration consistently showed a positive relationship with mDISCERN scores across both raw (β = 0.000476, P < 0.001) and standardized models (β = 0.045, P < 0.001), while longer time since publication exhibited a negative association (β = −0.000845, P < 0.001 for raw metrics; β = −0.00078, P < 0.001 for daily metrics). The analysis of standardized daily metrics revealed that daily average comments significantly predicted reliability scores (β = −0.635, P = 0.034). ANOVA results (Tables 18 and 19) confirmed the overall significance of these relationships, with platform (P < 0.001), video source (P < 0.001), video content (P < 0.001), and duration (P < 0.001) all contributing significantly to explaining variance in mDISCERN scores. These findings indicate that professionally sourced, educational content in longer formats tends to receive higher reliability ratings, while longer exposure time appears to negatively impact perceived reliability.

Multiple linear regression analysis of mDISCERN scores.

mDISCERN: modified DISCERN.

Multiple linear regression analysis of mDISCERN scores (average per day).

mDISCERN: modified DISCERN.

ANOVA results of mDISCERN scores.

ANOVA: analysis of variance; mDISCERN: modified DISCERN.

ANOVA results of mDISCERN scores (average per day).

ANOVA: analysis of variance; mDISCERN: modified DISCERN.

Discussion

This study systematically evaluated the content quality and reliability of videos related to “robot-assisted rehabilitation” on two major short-video platforms in China: Douyin and BiliBili. While our findings confirm the enormous potential of short-video platforms in health information dissemination, their content ecosystems also reveal structural issues closely linked to digital health literacy and the challenges of misinformation spread.

Content source and platform ecology shape information quality

Although Douyin videos garnered significantly more raw interactions (likes, comments, and shares), this higher engagement did not correspond to better quality or reliability. Regression analysis indicated that the daily share rate was a positive predictor of GQS (β = 0.840, P < 0.001), implying that content worth sharing tends to be of higher quality. However, no significant correlation was observed between daily interaction rates and mDISCERN scores after time-normalization. This disconnect emphasizes that popularity does not equate to credibility—a crucial consideration for public health education, as users may erroneously associate high visibility with trustworthiness.21,31

Content type significantly influences perceived value

Videos featuring science communication (β = 1.000, P < 0.001) or expert explanations (β = 1.090, P = 0.002) were strong predictors of higher GQS scores. Similarly, content from academic and medical institutions received elevated mDISCERN scores. In contrast, commercially promoted videos consistently underperformed. These results underscore the importance of source credibility and communicative intent in health-related content. 32 They also point to a worrying trend: promotional materials often prioritize esthetic appeal and persuasive messaging over educational value, which may mislead audiences.

Duration and recency matter in content utility

Longer videos were consistently associated with higher GQS and mDISCERN scores, likely because they allow more comprehensive explanations—essential for complex topics like rehabilitation robotics. Conversely, videos that had been online for extended periods scored lower on both scales, possibly due to outdated information or algorithmic neglect. These findings suggest that platforms and creators should prioritize both informational depth and temporal relevance to enhance public understanding.

Educational deficiencies of commercial content and algorithmic amplification effect

Videos produced or promoted by commercial entities consistently underperformed in both GQS and mDISCERN evaluations. This can be attributed to a fundamental misalignment of incentives: whereas educational and scientific content aims to inform, commercial content is primarily designed to promote products or services, capture attention, and drive conversions. Typically centered on product marketing, brand exposure, or traffic conversion, such content prioritizes visual appeal and emotional arousal while neglecting scientific rigor and educational depth. For instance, some videos deliberately construct an image of “pseudo-authority” to mislead viewers by exaggerating rehabilitation efficacy, concealing indications and limitations, or employing marketing rhetoric in the form of “patient testimonials.”

More alarmingly, platform algorithms may inadvertently facilitate the dissemination of such content. Owing to their higher production quality, stronger emotional resonance, and clearer audience targeting, commercial videos often garner greater initial user engagement, thereby meeting the criteria for priority recommendation by algorithms. This occurs because recommendation systems are generally optimized for maximizing user retention and interaction—metrics that persuasive, emotionally charged, and well-produced commercial content readily achieves. As a result, algorithm-driven platforms often inherently favor promotive material over educational integrity. This “traffic-first” logic not only squeezes the survival space of high-quality educational content but also further undermines the public's ability to distinguish the authenticity of health information.

Implications for public health and policy

With rapid population aging and increasing rehabilitation needs, accessible and accurate information on technologies like robot-assisted training is essential. While short videos can effectively disseminate knowledge, unregulated content may perpetuate misinformation. Recent Chinese policies aimed at improving health information quality are a step in the right direction.8,33 To further enhance the reliability and utility of health-related video content, we propose the following evidence-based strategies: (1) Platforms should introduce a mandatory verification system where content from medical institutions, accredited professionals, and academic sources receives visible authentication labels (e.g., “Verified Medical Source” and “Academic-Endorsed”). Additionally, a traffic-weighting mechanism could be established to prioritize the distribution of verified content in user recommendations, increasing its reach and impact while demoting unverified or commercial promotional material. (2) Creating detailed, platform-specific content guidelines for health science communication, covering citation standards, disclosure requirements, and balanced presentation of scientific information, can significantly improve quality. Platforms could collaborate with health authorities to offer training and certification programs for creators, especially those producing content in high-demand areas like rehabilitation and assistive technologies. (3) To address issues of outdated or misleading content, platforms should implement timeliness alerts (e.g., “Uploaded over 2 years ago: content may not reflect latest standards”) and integrate rapid public feedback mechanisms such as “Accuracy Flags” where users or experts can highlight questionable claims for third-party review. (4) Encouraging structured collaborations between healthcare professionals and digital content creators can bridge the gap between scientific accuracy and public engagement. Platforms can sponsor “creator-researcher pairing” programs, support the production of dual-version videos (both expert and public-friendly), and feature these collaborations prominently to set quality benchmarks. (5) Embedding simple, interactive checklists or prompting questions next to health videos (such as “Is the source cited?”, “Are benefits and limitations explained?”, and “Is this advice applicable to your condition?”) can cultivate critical appraisal skills among viewers. These tools can be designed in a gamified format to encourage user participation without being overly burdensome.

By adopting these strategies, platforms, creators, and regulators can collectively foster a more trustworthy and educative digital environment—enabling patients, caregivers, and the general public to access robot-assisted rehabilitation information that is not only engaging but also scientifically sound and ethically communicated.

Limitations

The present study is subject to five main limitations: (1) Reliance on publicly available videos exposed the sample to algorithmic popularity bias and survivorship bias: high-quality but slow-spreading content in the “long tail” was inevitably omitted. Although we normalized engagement metrics by video age (i.e., calculated daily average rates), the data still reflect only the most visible RAT-related content on the platforms at the time of data collection. A time-bounded data collection approach (e.g., including all videos posted within a single year) would yield a corpus that is more comprehensive and less dependent on recommendation algorithms. (2) The exclusive focus on Chinese-language videos limits the cross-linguistic and cross-cultural generalizability of the findings. Future research should conduct multilingual replications to verify the conclusions. (3) Both the GQS and modified DISCERN (mDISCERN) were originally developed as text-based assessment tools and thus cannot fully capture the audiovisual attributes of videos, such as narrative coherence, visual clarity, and production sophistication. (4) Despite high inter-rater agreement (Cohen's κ = 0.78), the ratings remain inherently subjective. (5) The small number of videos from academic institutions and medical facilities reduced the precision of the associated subgroup analyses. Future studies should expand the sample to include more of these underrepresented video sources.
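For readers who wish to reproduce the two quantities mentioned in limitations (1) and (4), the following is a minimal Python sketch rather than the analysis code used in this study. It assumes that a daily average rate is the raw count divided by the number of days between upload and data collection, and that agreement is measured with unweighted Cohen's κ; the function names `daily_rate` and `cohen_kappa` are hypothetical, and the study may have used a weighted variant or statistical software instead.

```python
from collections import Counter
from datetime import date


def daily_rate(count: int, uploaded: date, collected: date) -> float:
    """Average number of engagement events per day a video has been online.

    Assumption: video age is the whole number of days between upload and
    data collection.
    """
    # Clamp to one day so a video uploaded on the collection date
    # does not cause a division by zero.
    days_online = max((collected - uploaded).days, 1)
    return count / days_online


def cohen_kappa(rater_a: list, rater_b: list) -> float:
    """Unweighted Cohen's kappa for two raters scoring the same items."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("both raters must score the same non-empty item set")
    n = len(rater_a)
    # Observed agreement: share of items given identical scores.
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Chance agreement: product of the raters' marginal proportions,
    # summed over score categories.
    freq_a, freq_b = Counter(rater_a), Counter(rater_b)
    expected = sum(freq_a[c] * freq_b[c] for c in freq_a) / (n * n)
    if expected == 1.0:
        # Both raters used a single identical category throughout.
        return 1.0
    return (observed - expected) / (1.0 - expected)


if __name__ == "__main__":
    # Illustrative values only; these are not data from the study.
    print(daily_rate(732, date(2024, 2, 5), date(2025, 2, 5)))
    print(cohen_kappa([1, 2, 3, 2, 1], [1, 2, 3, 3, 1]))
```

Because GQS and mDISCERN are ordinal scales, a weighted κ that penalizes large disagreements more than small ones may be a reasonable alternative to the unweighted form sketched here.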

Conclusion

This study is among the first focused analyses of RAT-related video content on Chinese short-video platforms. While both Douyin and BiliBili show potential for disseminating health information, the overall quality of content remains moderate. BiliBili demonstrated higher quality and reliability, likely due to its user base and content moderation mechanisms. Content creators are encouraged to emphasize accuracy, clarity, and scientific rigor. Users should critically evaluate information and consult healthcare professionals when making medical decisions. These findings highlight the need for improved content regulation and effective science communication strategies in the digital age.

Footnotes

Ethical approval

This study analyzed publicly available video metadata (e.g., likes and shares) and did not involve direct interaction with human participants. Ethical approval was waived per national guidelines for noninterventional research using anonymized public data.

Informed consent

This cross-sectional study was completed on 5 February 2025. All study data were extracted from publicly accessible videos on the Douyin and BiliBili platforms. Notably, no clinical records, human specimens, or animal specimens were used in the study, and no personally identifiable information (PII) of any individual was collected during the data extraction process. Since there was no direct or indirect interaction with platform users and no involvement of human subjects as defined by ethical guidelines, ethical review was exempted in accordance with the exemption provisions outlined in the Declaration of Helsinki.

Contributorship

XRL proposed the project and wrote the manuscript; CMZ and YJX conducted data analysis; LW, SJW, and XL provided suggestions for the revision of the article; and CZ supervised the project and interpreted the data. All authors reviewed and edited the manuscript.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Special Project for Central Government-Guided Local Sci-Tech Development in Sichuan Province [grant number 2024ZYD0269], the Sichuan Provincial Science and Technology Department [grant number 2024YFHZ0050], the Luzhou City Science and Technology Bureau [grant numbers 2024LZXNYDJ035 and 2020LZXNYDJ14], and the Cooperation Project between the Second People's Hospital of Deyang and Southwest Medical University [grant number 2022DYEXNYD002].

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data availability statement

All data are derived from public platforms (Douyin and BiliBili) and are accessible through the platforms' search functions. Raw video links and metadata are available upon reasonable request.

Guarantor

CZ.

Peer review

Dr. Gaoyang Pang, University of Sydney, reviewed this manuscript.