Abstract

Objective

To examine usability characteristics/attributes and evaluation methods incorporated in the design and/or evaluation of computer-based digital technologies for caregivers of people with chronic progressive conditions.

Methods

We searched MEDLINE (Ovid), PsycINFO (Ovid), CINAHL (EBSCO), and Web of Science Core Collection to identify relevant studies published from 2012 to May 2024. Two reviewers screened studies for eligibility and extracted and synthesized data.

Results

Across 71 included studies, sample sizes ranged between 5 and 127. Most participants were caregivers of people with dementia (n = 52, 73.2%). There was a mix of caregiving relationships across the 55 studies (77.5%) reporting this variable, with spouses most frequently included (n = 51, 92.7%). Samples were predominantly female (72.1%), with mean ages between 43.0 and 76.7 years old. Most technologies were Website/Internet-based (n = 43, 60.6%). Across the studies, we identified 31 distinct usability characteristics/attributes, with more than half of the studies (n = 45, 63.4%) including at least three characteristics/attributes. However, nearly half of the studies (n = 32, 45.1%) used a single usability evaluation method, predominantly inquiry-based interviews (n = 15, 21.1%).

Conclusion

Findings reflect a narrow focus on middle-aged, female, and spousal caregivers, limiting the utility of digital health technologies for more diverse groups of caregivers. In addition to commonly used inquiry-based usability evaluation methods, user-testing, heuristic evaluation, and analytic modelling may offer more adaptive and holistic approaches to address a wider range of digital technologies.

Introduction

Chronic progressive conditions are incurable diseases with significant morbidity in the latter stages of illness. 1 These conditions may include degenerative neurological diseases (e.g. multiple sclerosis, Alzheimer's disease); irreversible organ failure (e.g. liver failure); widespread malignant disease (e.g. cancers); and acquired immune deficiency syndrome. Family caregivers are vital in providing ongoing and often complex care for individuals living with chronic progressive conditions. 2 These caregivers are the backbone of natural support in the community, bridging the gap between what formal health and social care systems can provide and the amount of care required by their care-recipients.3,4 Family caregiving is a multidimensional experience wherein caregivers may derive value and positive benefits from providing care and simultaneously be vulnerable to the role's negative physical, psychological, financial, and social impacts.4–6 Indeed, providing ongoing support for people with chronic progressive conditions is often associated with high levels of stress, poor physical and psychological outcomes, increased risk of morbidity and mortality, reduced opportunities for employment and participation, and poor quality of life.7,8 Thus, supporting family caregiver well-being has been identified as a priority for sustaining this valuable care resource. 9

A wide array of digital health technologies—including computer-based platforms, mobile apps, wearable devices, and telehealth tools—has emerged as a promising means of support for caregivers. With the rapid development of ubiquitous smart devices and advances in artificial intelligence over the past decade, digital health tools have become increasingly prevalent. 10 These technologies offer flexible, accessible support that can overcome time and geographic barriers, supplementing traditional in-person services. 11 Indeed, leveraging cost-effective digital tools for caregiver support holds potential to reduce healthcare utilization (e.g. preventing hospitalizations) while empowering caregivers in their role. 10

Several prior reviews have synthesized the growing landscape of digital health tools for family caregivers, offering an important foundation for the field.10,12–15 Together, these reviews have demonstrated that digital health tools can improve caregiver health and well-being outcomes, and that caregivers often report satisfaction and comfort with these tools, especially when human-centered design principles are applied. However, existing reviews have important limitations. Many are narrow in scope, targeting a single condition (e.g. dementia or cancer), specific modalities (e.g. web-based programs), or specific outcomes (e.g. cost-effectiveness of implementation). More importantly, no review of caregiver digital health tools in chronic progressive conditions has systematically examined how user-oriented factors, such as usability, are evaluated and reported.

Usability, described as the extent to which specified users can use a system/tool/product/service in a specified context to achieve their specified goals with effectiveness, efficiency, and satisfaction, 16 is increasingly recognized as a key determinant of digital health adoption and long-term success.17–19 Incorporating strong usability principles into the design and evaluation of caregiver-focused technologies is fundamental to ensuring these tools truly meet caregivers’ needs and are embraced in routine care. 20 Good usability characteristics or attributes (e.g. comfort, ease of use, accessibility, flexibility, performance, satisfaction) are critical to driving adoption and improving user compliance with a system or product.21–23 A variety of robust usability evaluation methods (i.e. inquiry-based, user testing, inspection, and analytical modelling) have been developed to test the usability of digital technologies/tools. 24 Inquiry-based methods involve gathering quantitative and qualitative data from users through questionnaires, interviews and focus groups. In contrast, user-testing methods may include think-aloud protocols, performance measurements (e.g. app performance in a given learning context), and log analysis (e.g. frequency of error message occurrence). Inspection methods include heuristic evaluation and cognitive walk-through, and analytical modelling methods can include cognitive task analysis and task environment analysis.20,24

This review addresses an underexplored gap: while we know that digital health tools can work, we know much less about how usable and user-centered these tools are for diverse caregivers in practice. By mapping how usability and related factors have been assessed and operationalized across studies, we aimed to offer new insights to inform the design, evaluation, and implementation of caregiver-focused digital health tools. Addressing this gap is timely: as digital health tools for caregivers proliferate, synthesizing knowledge on usability can guide developers and clinicians toward more user-friendly, acceptable, and impactful solutions. By focusing on how studies describe and evaluate usability, we can show whether current caregiver digital tools align with end-user needs and where design improvements are needed to support adoption. We therefore sought to answer two questions:

(1) What usability characteristics/attributes (e.g. comfort, ease of use, accessibility, flexibility) are incorporated in the design and/or evaluation of computer-based digital technologies for caregivers in the context of chronic progressive conditions?

(2) What evaluation methods (e.g. questionnaires, task completion, “Think-Aloud” protocol, interviews, heuristic testing, focus groups) are incorporated in the design and/or evaluation of computer-based digital technologies for caregivers in the context of chronic progressive conditions?

Methods

As described in the published study protocol, 25 the scoping review was conducted using the Joanna Briggs Institute (JBI) methodology. 26 The scoping review was registered on the Open Science Framework (https://doi.org/10.17605/OSF.IO/W4VK5). We report this review according to the PRISMA Extension for Scoping Reviews 27 —see Supplemental File 2.

Literature search and study selection

A health sciences librarian (ARW) developed the preliminary search strategy. An initial search was undertaken in the MEDLINE (Ovid) platform to ensure relevant articles were captured using the preliminary strategy. Members of the research team provided feedback for further development of the preliminary strategy. Once refined, the strategy was reviewed by an independent team of medical librarians according to the criteria outlined in the Peer Review of Electronic Search Strategies (PRESS) guideline. 28 Additional changes were made in collaboration with members of the research team. Using feedback from these two groups, a final strategy was developed for MEDLINE (Ovid), using text words in the titles, abstracts, and index terms used to describe relevant articles (see Supplemental File 1). The librarian translated the search strategy for the remaining databases: PsycINFO (Ovid), CINAHL (EBSCO), and Web of Science Core Collection. Database searches were conducted in November 2022 and rerun in May 2024.

Study selection

All citations were exported into Covidence systematic review software (Veritas Health Innovation, Melbourne, Australia) for deduplication and removal of known exclusions (e.g. conference abstracts). Two reviewers then independently pilot-tested the screening process on a random sample of 10 citations. Following the pilot, two or more reviewers independently screened the remaining titles and abstracts against the eligibility criteria outlined below, and then retrieved and screened the full-text articles. At both stages of selection, disagreements between reviewers were resolved through consensus.

Eligibility criteria

Using the Population Concept Context framework recommended by the JBI, 26 the following eligibility criteria were applied to identify studies for inclusion in this review.

Inclusion criteria:

Population: Studies had to focus on adult (≥18 years old) family caregivers of people with chronic progressive conditions. We used the definition of a family caregiver offered by the Canadian Centre for Caregiving Excellence: an unpaid family member or friend who provides help or assistance for an individual who needs care due to disabilities, medical conditions, or aging-related needs. 29

We broadly defined chronic progressive conditions as conditions that have no cure and cause significant morbidity in the latter stages of illness. These conditions may include degenerative neurological diseases (e.g. multiple sclerosis, Alzheimer's disease), irreversible organ failure (e.g. liver failure), widespread malignant disease (e.g. cancers), and acquired immune deficiency syndrome. 1

Concept: Studies had to describe usability characteristics/attributes and/or usability evaluation methods of computer-based digital technologies aimed at educating family caregivers or enhancing their skills and/or experiences, including, but not limited to, web-based tools, mobile phone and smartphone-based tools, virtual reality, and wearable technologies.20,24,30

Context: Studies conducted in any geographic location and setting (e.g. hospital/clinic, community, university research laboratory, or home) and published from 2012 to the present were included. This time frame was chosen to encompass studies that first conceptualized a new digital paradigm in the healthcare industry,31,32 and that corresponded with the emergence of new knowledge about the deleterious and positive effects of caregiving in the context of chronic progressive conditions. 33 Given the pace of updates in this field, the time frame also allowed the capture of technology that is still accessible/available, ensuring that we excluded obsolete technology with limited relevance in today's world.

Type of sources: We included peer-reviewed quantitative (e.g. descriptive observational study designs, cross-sectional survey studies, randomized controlled trials), qualitative (e.g. interview-based, focus groups and other methods generating narrative data), and mixed methods study designs. We recognize that computer-based digital technology development may be conducted outside of academia and published in non-traditional or non-peer-reviewed outlets, but in the context of providing evidence-based information for health and social care providers and researchers, peer-reviewed evidence is considered the gold standard. 34

Exclusion criteria

Population: We excluded studies that focused on care-recipients, care providers (i.e. individuals who provide trained and paid care), or family caregivers of children, as the relationship of a paid care provider or a parent caring for a child with a chronic condition is considered qualitatively different from the adult family caregiver care-recipient relationship.35,36 Studies that focused on dyads (i.e. any combination of family caregivers/formal care providers/researchers and care-recipients), where family caregiver data could not be separated from other data, were also excluded.

Concept: We excluded studies that focused on electronic medical records, medical monitoring devices, biomedical apps, systems for intelligent processing of genetic data, assistive devices, etc. that are designed for care-recipients only. Multi-component studies in which data on the computer-based digital technology component could not be extracted and studies that only include general perspectives on mHealth/technology were also excluded.

Context: Studies published before 2012 were excluded.

Type of sources: We excluded conference abstracts, systematic and non-systematic literature reviews, opinion pieces, case studies, study protocols, letters to the editor, non-peer-reviewed articles, and gray literature.

Data extraction

We used a data extraction tool developed for this study. The data extraction tool was independently pilot-tested on a random sample of 10 full-text articles before the final data extraction. Based on the CONSORT-EHEALTH guidelines, 37 we extracted the following information from the included studies: (i) study details (author(s), publication title, source and year, country/continent of origin, purpose, design); (ii) participant characteristics (age, gender, education, caregiver relationship to care-recipient, care-recipient chronic progressive condition); (iii) characteristics of the computer-based digital technology (type of technology and delivery platform, development stage, theoretical framework underpinning development/evaluation); and (iv) usability characteristics/attributes, evaluation methods, and evaluator characteristics. Because no standardized descriptions of usability characteristics/attributes exist, we used the descriptions reported in previous literature on mobile apps. 24 Two independent reviewers extracted relevant information and deductively categorized usability characteristics/attributes based on the descriptions offered in each included study. Disagreements were resolved through consensus-based discussion.

Data analysis and presentation

Data were synthesized using Microsoft Excel (Microsoft Office, 2019) and IBM SPSS Statistics for Windows, Version 28.0 (IBM Corp, Armonk, NY, USA). No formal measures of agreement were calculated during data extraction because minor differences in capitalization and punctuation were flagged as inconsistencies even when the critical content was the same between reviewers. Our focus was on synthesizing descriptive features of the studies relative to usability, not on synthesizing study results/outcomes. We used descriptive statistics (n, %) to summarize study characteristics (publication year, country, study design); participant characteristics (gender, relationship to care-recipient, and care-recipient chronic progressive condition, along with mean or median age as reported in the included studies); computer-based digital technologies (technology type, delivery setting, stage of development, and theoretical framework); and usability evaluation (usability characteristics, evaluation methods, questionnaires used, and technical knowledge of evaluators). The results are presented in narrative and tabular formats.
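As an illustration of the n (%) summaries described above, the following is a minimal Python sketch, assuming each percentage is a count divided by its relevant denominator and rounded to one decimal place. The function names and constants are hypothetical and do not represent the authors' workflow, which used Excel and SPSS. The counts come from the Results: 43 of 71 studies evaluated Website/Internet technologies, and 51 of the 55 studies that reported caregiving relationships included spouses.

```python
# Illustrative only: reproduces "n (%)" summaries; not the authors' Excel/SPSS workflow.


def percent(n: int, denominator: int) -> float:
    """Return n as a percentage of denominator, rounded to one decimal place."""
    if denominator <= 0:
        raise ValueError("denominator must be positive")
    if not 0 <= n <= denominator:
        raise ValueError("n must lie between 0 and the denominator")
    return round(100 * n / denominator, 1)


def summarize(label: str, n: int, denominator: int) -> str:
    """Format a count in the 'label (n = x, y%)' style used in the Results."""
    return f"{label} (n = {n}, {percent(n, denominator)}%)"


INCLUDED_STUDIES = 71       # all included studies
REPORTED_RELATIONSHIP = 55  # hypothetical name: studies that reported caregiving relationships

if __name__ == "__main__":
    # Percentage of all included studies
    print(summarize("Website/Internet technologies", 43, INCLUDED_STUDIES))
    # Percentage of a reporting subset: a different denominator gives a different share
    print(summarize("studies including spousal caregivers", 51, REPORTED_RELATIONSHIP))
```

Keeping the denominator explicit matters here because some percentages in this review are calculated over all 71 studies and others over a reporting subset.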

Results

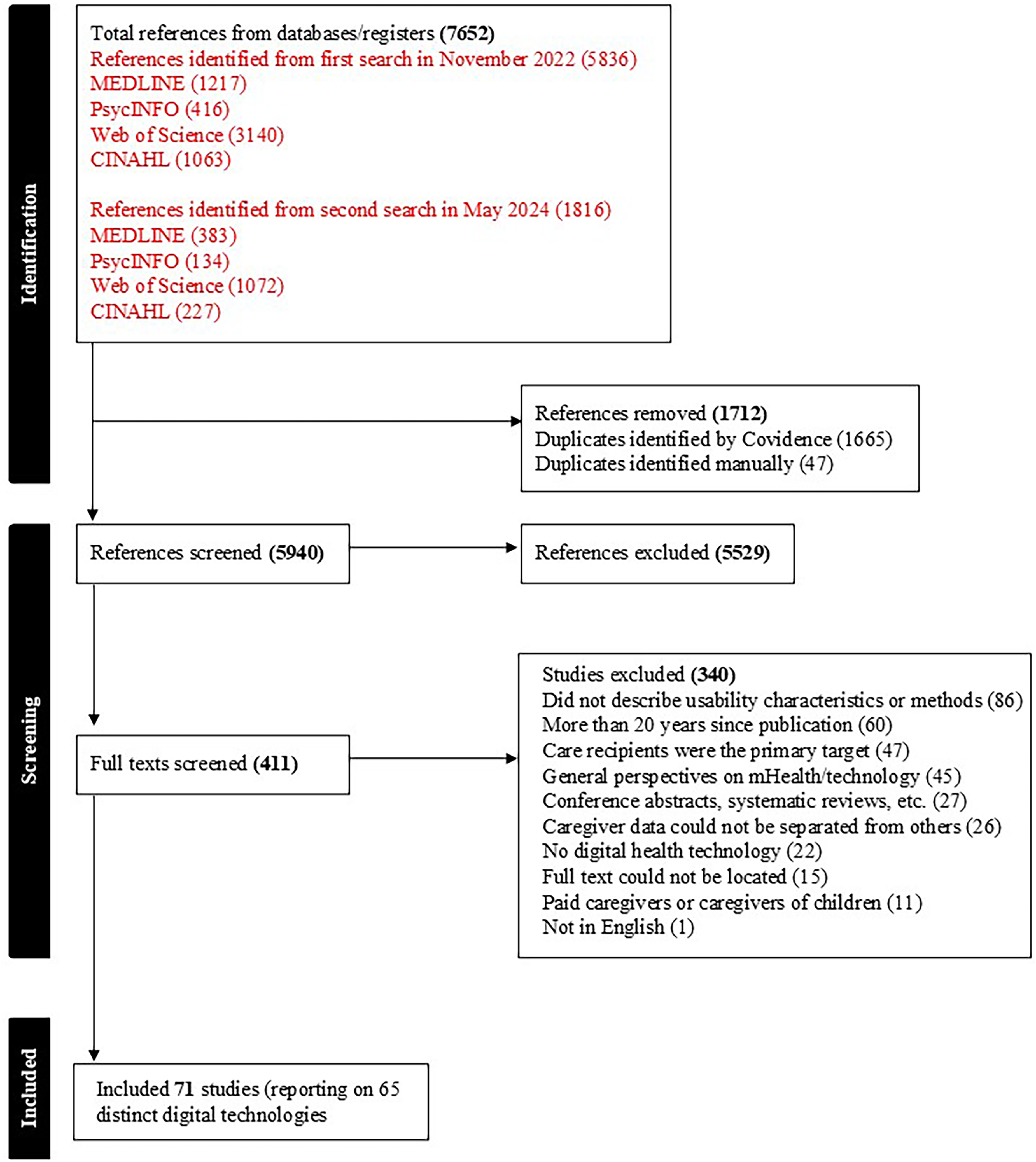

The database searches yielded 7652 citations (5836 from the initial search in November 2022 and 1816 from the second search in May 2024). After removing 1665 duplicates identified by Covidence and 47 identified manually, we screened the titles and abstracts of 5940 citations (see Figure 1 for the PRISMA flow chart). Following title and abstract screening, 411 citations remained for full-text screening, of which 340 were excluded. The most common reason for exclusion at the full-text stage was a lack of description of usability characteristics/attributes and/or evaluation methods (n = 86, 25.3%). Although six technologies were each reported in two (n = 5) or three (n = 1) studies, these studies differed in focus, stage of technology development, and participants, so each was retained. In total, 65 unique digital technologies reported in 71 articles were included in the review.

PRISMA flow diagram.
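The screening counts reported above can be reconciled by simple subtraction. The following is a minimal Python sketch, assuming every record removed at a stage is counted exactly once. The function name and dictionary keys are hypothetical, and the number of citations excluded at title and abstract screening (5529) is derived here by subtraction rather than taken from the text.

```python
# Illustrative only: reconciles the screening counts reported for this review.


def prisma_flow(search_yields: list[int], auto_duplicates: int, manual_duplicates: int,
                full_text_assessed: int, full_text_excluded: int) -> dict[str, int]:
    """Derive each PRISMA stage by subtraction, assuming each record is counted once."""
    identified = sum(search_yields)
    screened = identified - auto_duplicates - manual_duplicates
    excluded_title_abstract = screened - full_text_assessed  # derived, not reported
    included = full_text_assessed - full_text_excluded
    counts = {
        "identified": identified,
        "screened": screened,
        "excluded_title_abstract": excluded_title_abstract,
        "full_text_assessed": full_text_assessed,
        "included": included,
    }
    # A negative count would indicate an inconsistency among the inputs
    if any(value < 0 for value in counts.values()):
        raise ValueError(f"inconsistent flow counts: {counts}")
    return counts


if __name__ == "__main__":
    # November 2022 and May 2024 searches; duplicates found by Covidence and manually
    flow = prisma_flow([5836, 1816], 1665, 47, 411, 340)
    for stage, count in flow.items():
        print(f"{stage}: {count}")
```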

Characteristics of included studies and participants

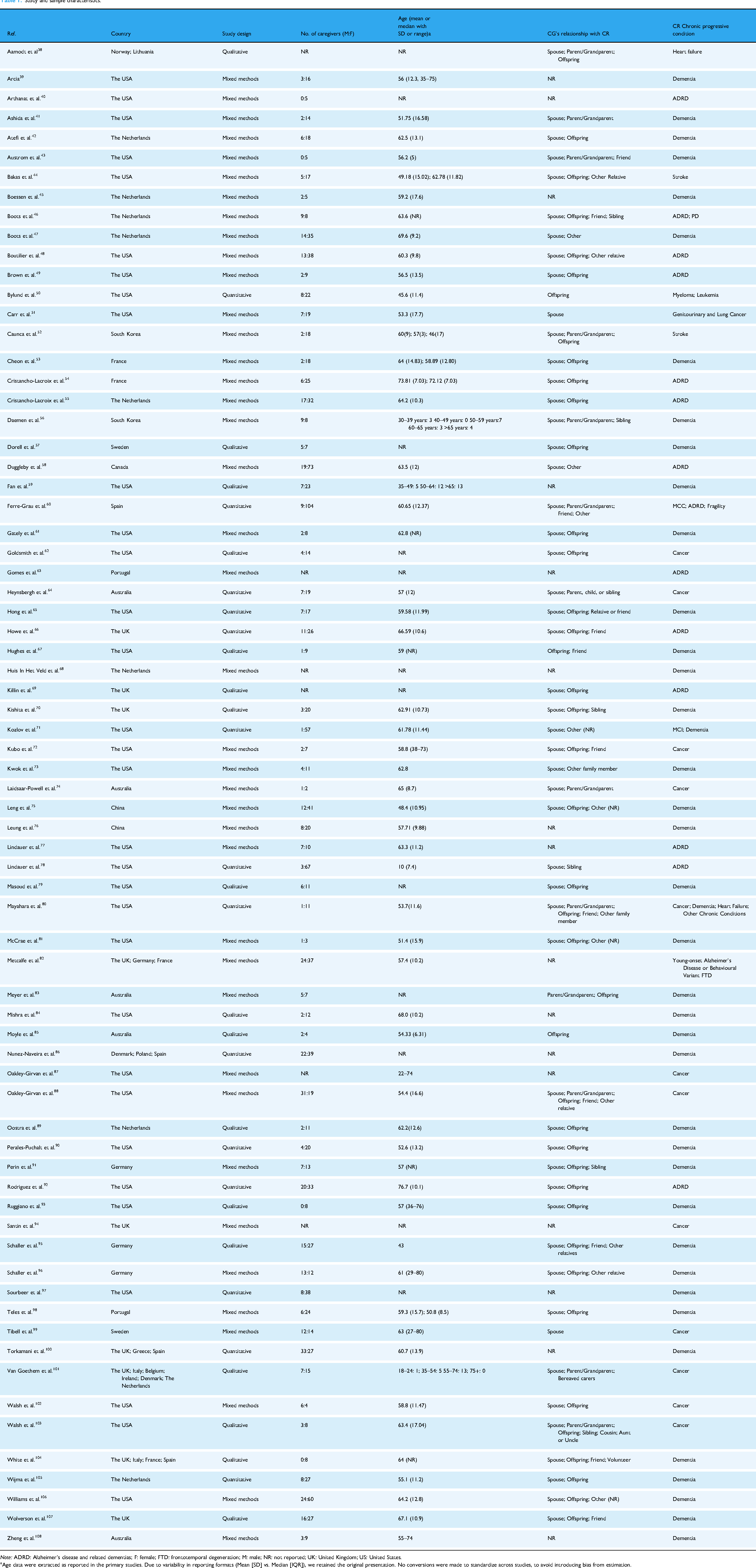

Table 1 summarizes the key characteristics of the included studies and participants. Most studies (n = 55, 77.5%) were published between 2019 and 2024. Studies were conducted across 21 different countries, with six studies (8.5%) involving multi-country investigations. Findings were published in 48 different journals, 38 of which contained only a single article. The three most common journals in which articles were published included Journal of Medical Internet Research (n = 6), JMIR Aging (n = 6), and Dementia (n = 4). Most studies (n = 40, 56.3%) used mixed methods, followed by qualitative (n = 17, 23.9%), and quantitative (n = 14, 19.7%) designs.

Study and sample characteristics.

Note: ADRD: Alzheimer's disease and related dementias; F: female; FTD: frontotemporal degeneration; M: male; NR: not reported; UK: United Kingdom; US: United States.

Age data were extracted as reported in the primary studies. Due to variability in reporting formats (Mean [SD] vs. Median [IQR]), we retained the original presentation. No conversions were made to standardize across studies, to avoid introducing bias from estimation.

Study samples ranged from 5 to 127 participants, with a median sample size of 26 (IQR = 27). Six (8.5%) of the 71 studies provided no participant demographic data. In the remaining 65 studies, most participants were female (n = 1460, 72.1%). Most studies (n = 50, 70.4%) reported participants’ mean age, which ranged from 43.0 to 76.7 years. Across the 55 studies (77.5%) that reported caregiving relationships, there was a mix of relationships, with spousal caregivers included most frequently (in 51 of these studies, 92.7%).

Over 70% of the studies (n = 52, 73.2%) targeted caregivers of people with Alzheimer's disease and related dementias. Other conditions represented were cancer (n = 13, 18.3%), stroke (n = 2, 2.8%), and heart failure (n = 1, 1.4%). Three studies (4.2%) included caregivers of people with multiple chronic conditions.

Characteristics of digital technologies

Table 2 summarizes the characteristics of the computer-based digital technologies in the included studies. Almost all studies (n = 63, 88.7%) included a single type of technology, most commonly Website/Internet (n = 43, 60.6%), followed by application-based (App) technologies (n = 15, 21.1%). The most common delivery setting was the participant's home (n = 46, 64.8%). Ten studies included two settings: university research laboratory and home (n = 5), clinic and home (n = 4), or university research laboratory and community center (n = 1). Seven studies (9.9%) did not report the setting. In terms of stage of development, most technologies were in the initial development and testing stage (n = 12, 16.9%) or the testing-only stage (n = 37, 52.1%), with fewer than 5% (n = 3, 4.2%) labelled as final versions. Thirteen studies (18.3%) did not report the stage of technology development. Over 60% of the studies (n = 45, 63.4%) did not report using any theoretical framework to guide technology development and/or evaluation. Across the 26 studies that reported using theory, the theories varied widely, limiting the ability to summarize findings. For example, three studies (4.2% of all included studies) were based on the Technology Acceptance Model, while two studies (2.8%) used both Stress and Coping Theory and Bandura's Self-Efficacy Model. Each of the other theories was mentioned only once across the remaining 21 studies.

Technology characteristics.

Note: NR: not reported; HAAT: human activity assistive technology; MRC: medical research council; PEO: person-environment-occupation; UTAUT: unified theory of acceptance and use of technology; TAM: technology acceptance model; VERA: validation, emotion, reassurance, activity.

Website/Internet (e.g. online portal providing educational content and self-management resources); App (e.g. smartphone application for symptom tracking and caregiver reminders); Telehealth system (e.g. video-based consultation platform for remote interaction with healthcare providers); Wearables (e.g. smartwatch used to monitor sleep or physical activity of the care-recipient); Home automation technologies (e.g. in-home motion sensors that track daily routines and alert caregivers to irregularities).

Usability characteristics/attributes and evaluation methods

Table 3 summarizes the usability characteristics/attributes and evaluation methods applied across the studies. Across the studies, we identified 31 distinct usability characteristics/attributes, with more than half of the studies (n = 45, 63.4%) including at least three characteristics/attributes. The most commonly included characteristics/attributes were “ease of use” (n = 43, 60.6%), followed by “usefulness” (n = 20, 28.2%), “relevance” (n = 16, 22.5%), and “satisfaction” (n = 15, 21.1%). Each of the remaining characteristics/attributes was reported in fewer than 20% of studies.

Usability characteristics/attributes, evaluation methods and evaluators.

Note: NA: not applicable, a questionnaire was not used.

The assessment of evaluators’ technical knowledge reflects criteria defined by the original study authors. These requirements were not set by the authors of this review but are reported here to accurately represent the methodological approaches used in the primary studies.

Not all usability evaluations reported in the included studies involved direct input from caregiver end-users. In some cases, evaluations were conducted by heuristic experts, researchers, or interdisciplinary team members. These evaluator types reflect the methodological choices of the primary study authors and were retained in this review to accurately represent the range of approaches used.

The methods used to evaluate usability were split almost evenly: 32 studies (45.1%) used a single method of evaluation, while the remaining 39 studies used two (n = 29, 40.8%) or more than two (n = 10, 14.1%) methods. Usability was most commonly evaluated using inquiry-based methods, alone or in combination with user testing methods. These included questionnaires/surveys and interviews (n = 15, 21.1%), interviews alone (n = 15, 21.1%), questionnaires/surveys alone (n = 13, 18.3%), focus groups alone (n = 4, 5.6%), or questionnaires/surveys and task completion tests (n = 4, 5.6%). Within the 47 studies that used questionnaires/surveys alone or in combination, the measures varied, with the largest group (n = 22; 31.0% of all included studies) using scales developed by the research team. Among studies that included a standardized usability questionnaire, the System Usability Scale 109 was the most commonly used. Nine studies used the System Usability Scale alone, while two studies combined it with other scales (i.e. scales developed by the research team and the Mobile App Rating Scale 110).

Finally, about one quarter of the included studies (n = 19, 26.8%) assessed the technical knowledge of the individuals who evaluated the usability of the digital technology. This requirement was established by the authors of these studies, not by the authors of this review. Methods and tools of assessment varied: two studies used the eHealth Literacy Scale (eHEALS), 111 one used the Media and Technology Usage and Attitudes Scale, 112 and the remaining 16 studies used various self-reported measures (e.g. questions about Internet experience and frequency of Internet use).

Discussion

This scoping review sought to identify the usability characteristics/attributes and evaluation methods reported in studies of computer-based digital technologies for caregivers in the context of chronic progressive conditions. We also reported on the characteristics of the technology and the target population. Below, we discuss and situate our key findings within the extant usability literature and make recommendations for future work to advance the design, evaluation, and implementation of computer-based digital technologies for caregivers of persons with chronic progressive conditions.

Key findings related to characteristics of included studies and participants

We found that most included studies of computer-based digital technologies for family caregivers of people with chronic progressive conditions (n = 55) were published from 2019 onward, and that studies were conducted in 21 different countries. This finding may reflect the current global health and social care climate following the impetus created by COVID-19 to invest in technology-supported mechanisms to promote population health and well-being.113,114 Given that most of the technologies included in this review were in the initial development and/or testing stage, digital technology tools can be expected to continue playing a significant role in supporting population health and well-being for the foreseeable future.

A striking pattern in this review is the disproportionate representation of studies focused on family caregivers of people with Alzheimer's disease and related dementias: over 70% of the included studies targeted this population. Alzheimer's disease and related dementias are among the most common chronic progressive conditions globally and place a considerable burden on family caregivers. However, the global prevalence of other chronic progressive conditions, such as multiple sclerosis and Parkinson's disease, is also rising, with increasing numbers of people living with these conditions requiring ongoing family caregiver support to manage complex care needs.115,116 While it reflects the global burden of Alzheimer's disease, the concentration of studies on digital technologies for caregivers of persons with this condition raises important concerns about the generalizability of findings to other caregiving contexts. Specifically, this narrow focus risks reinforcing a one-size-fits-all approach to the design of digital technologies for caregivers. Caregivers supporting individuals with multiple sclerosis, for example, often contend with unpredictable symptom trajectories and variable disease progression, 117 which may demand different types of technological support from those designed for dementia caregiving. Without a broader evidence base that includes caregivers across diverse chronic progressive conditions, the development of digital health technologies will remain biased toward a relatively narrow subset of caregiving experiences. This not only limits innovation but also risks reproducing inequities in access, engagement, and usability of caregiver technologies. Future research should diversify its focus, with greater attention to caregivers supporting people with multiple chronic conditions and with chronic progressive conditions other than dementia.

It is also important to consider how well the demographic profile of participant samples in the included studies reflects the broader caregiving population. While caregivers of people with chronic progressive conditions are still predominantly women, typically spouses or adult daughters,118,119 the demographic profile of caregivers is shifting to include men, grandchildren, sons, and other non-spousal family members.120–122 As such, the predominance of female participants in the reviewed studies may not fully capture the changing landscape of caregiving relationships. With increasing demands on formal healthcare systems/structures and shrinking nuclear family size, the involvement of diverse members of a care-recipient's social network, such as close friends and siblings, is expected to grow further. 123 However, the usability of digital technologies among underrepresented caregiver groups, such as male caregivers, young adult offspring, and sibling caregivers, remains underexplored. Future research should prioritize the inclusion of these diverse caregiver identities to ensure that digital health innovations are equitably designed, rigorously tested, and responsive to the needs, preferences, and caregiving dynamics of the full spectrum of individuals supporting those living with chronic progressive conditions.

Key findings related to characteristics of digital technologies

A key finding from this review is that most of the technologies examined were in the initial development and/or testing phase. Fewer than 5% of technologies were classified as final versions ready for implementation. This pattern reflects a broader trend in digital health research in which innovation often outpaces evaluation and implementation. 124 While early-stage testing is essential for refining usability, feasibility, and acceptability, the limited number of mature technologies raises important questions about real-world adoption and sustained use. Technologies in early development typically lack the robustness and user-informed design refinements necessary for successful implementation in real-world contexts. Moreover, early-phase technologies are often tested under controlled conditions or with small, homogeneous samples, which may not reflect the complexity of caregiving environments and characteristics, particularly where caregivers face fluctuating demands, limited digital literacy, or multiple competing responsibilities. Long-term effectiveness is influenced not only by whether a technology works, but also by how it continues to meet evolving caregiver needs. In the context of caregiving in chronic progressive conditions, technologies may need to adapt to changes in care-recipient disease trajectory, caregiving intensity, and relationship dynamics. Yet, most early-stage technologies do not incorporate mechanisms for this kind of adaptability. Therefore, it remains unclear whether short-term usability metrics translate into long-term effectiveness of these technologies.

To bridge the gap between development and real-world effectiveness, studies should adopt a life-cycle evaluation approach that follows technologies from development through to implementation and sustainment. This approach should include ongoing partner engagement, particularly with caregivers themselves, to ensure technologies remain responsive and relevant. Co-design with end users can not only improve initial usability but also inform iterative updates that reflect changing needs and contexts. Furthermore, partnerships with health and community organizations can facilitate real-world testing in naturalistic settings and support integration into existing care pathways. 125 As technologies move beyond initial usability testing, they must also be evaluated in terms of accessibility, cost-effectiveness, data privacy, and equity, particularly for underserved or marginalized caregiving populations.

We found limited use of theoretical frameworks in the design and evaluation of the computer-based digital technologies included in this review. Few studies explicitly referenced conceptual or theoretical models to guide technology development, usability assessment, or interpretation of results. This gap presents a critical missed opportunity. The integration of theoretical frameworks is widely recognized as essential for informing the structure, implementation, and evaluation of digital health tools, ensuring that design decisions are grounded in evidence and aligned with user needs. 124 Theoretical frameworks can also help make explicit the assumptions underlying technology design and user interaction, offering structured ways to identify relevant constructs, guide the selection of usability attributes, and inform the tailoring of digital tools. In the context of caregiving—particularly among those supporting people with chronic progressive conditions—theoretical frameworks can be helpful for addressing the dynamic nature of caregiver roles, psychosocial demands, and relational factors that shape how technology is adopted and used. For example, User-Centered Design and Participatory Design approaches, while not formal theories per se, are grounded in principles of human-computer interaction and are increasingly paired with behaviour change theories to improve acceptability and usability. 126 The Technology Acceptance Model and its derivatives, such as the Unified Theory of Acceptance and Use of Technology, offer useful lenses to understand and predict caregiver technology uptake based on perceived ease of use, usefulness, and effort expectancy. 127 Yet, our findings suggest that these models are rarely used for computer-based digital technologies for caregivers of people with chronic progressive conditions.

Importantly, the lack of theoretical underpinning may partially explain the variability in usability characteristics/attributes evaluated. Tools developed without a theoretical foundation may prioritize surface-level usability (e.g. navigation) but fail to address deeper motivational, contextual, and relational drivers of engagement and sustained use. Moreover, theoretical grounding is essential when evaluating digital interventions across diverse caregiving contexts, helping researchers and developers interpret why certain design features may work well in one context but not in another. To enhance future work in this area, we recommend that researchers and developers adopt a theory-informed approach to usability evaluation and digital health design. Behaviour change and implementation frameworks such as the Theoretical Domains Framework 128 and the Behaviour Change Wheel 129 can be especially useful for mapping caregiver behaviours, identifying barriers and facilitators to engagement, and linking design features to evidence-based strategies for change. Integrating such frameworks from the outset can strengthen methodological rigor, increase intervention coherence, and ultimately improve technology adoption and caregiver outcomes. 130

Key findings related to usability characteristics/attributes and evaluation methods

Only 71 of the 411 full-text studies we reviewed, spanning the search period from 2012 to May 2024, provided information about usability, reflecting limited consideration of this important topic within the context of digital tools for family caregivers of persons with chronic progressive conditions. In terms of usability characteristics/attributes, “ease of use,” “usefulness,” and “relevance” were each evaluated in roughly 20% to 60% of included studies, highlighting an opportunity to further explore under-utilized characteristics/attributes such as “efficiency,” “learning performance,” and “cognitive load,” which could provide a more comprehensive understanding of usability. 24 This is particularly relevant for digital technologies used in home environments, where multiple distractions and daily tasks may influence cognitive load, learning performance, efficiency, and overall user experience.

Of note, several studies did not explicitly articulate the usability characteristics/attributes being evaluated. Instead, they used language such as “amount of time spent and duration to complete the task” and “perceived impact” to reflect the usability characteristics/attributes of “efficiency” and “effectiveness,” respectively. The lack of consistent and standardized usability language across studies limits the ability to summarize the existing literature effectively, offers limited direction to researchers, and obscures which aspects of usability to focus on when developing, evaluating, and implementing computer-based digital technologies for caregivers of people with chronic progressive conditions. To advance the field, there is a clear need for greater harmonization of usability terminology, frameworks, and evaluation standards. Organizations with mandates to set digital health standards are well-positioned to lead or support these efforts. For instance, the International Organization for Standardization (ISO; https://www.iso.org/home.html) has published guidelines (e.g. ISO 9241-11:2018) that inform standardized usability language for researchers and developers. Interdisciplinary collaborations through national digital health strategy bodies, including the US Office of the National Coordinator for Health Information Technology (https://www.healthit.gov/) and Canada Health Infoway (https://www.infoway-inforoute.ca/en/), and research networks, such as the International Medical Informatics Association Human Factors Engineering for Healthcare Informatics Working Group (https://imia-medinfo.org/wp/human-factors-engineering-for-healthcare-informatics/), could be leveraged to co-develop consensus-driven taxonomies and field-specific guidance for usability evaluations in health contexts.

In parallel, health policy organizations and major research funders can play a critical role by incentivizing the adoption of shared usability definitions and reporting practices through funding criteria and knowledge translation initiatives. Collectively, such efforts would support more coherent design, reporting, and evaluation of caregiver-focused technologies that align with broader digital health standards and guidelines.

Across the studies, usability evaluations were mainly conducted using inquiry-based methods, consistent with a previous review of mobile apps. 24 While these methods may provide relevant and valuable information, relying solely on subjective measures can be problematic because self-reports are susceptible to response biases. Further, of the 47 studies that used a questionnaire, alone or in combination with other methods, almost half (n = 22) used non-validated questionnaires developed by the research team rather than psychometrically tested usability questionnaires such as the System Usability Scale. 109 While the use of psychometrically sound usability questionnaires will support the advancement of the field, 131 it is important to note that self-reported questionnaires such as the System Usability Scale do not provide a comprehensive approach to evaluating usability 132 and are neither specific to nor adapted for a particular computer-based digital technology. These issues limit the ability to evaluate specific usability characteristics/attributes beyond general usefulness and satisfaction. 131 Combining inquiry-based methods with other evaluation methods, including user-testing (e.g. think-aloud protocols), inspection (e.g. heuristic evaluation), and analytical modelling (e.g. task environment analysis), could provide a more holistic view of usability in future technology development and evaluation efforts.
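To illustrate what a psychometrically tested questionnaire yields compared with an ad hoc one, the System Usability Scale applies a fixed scoring rule to ten items rated 1 to 5: odd-numbered (positively worded) items contribute the rating minus 1, even-numbered (negatively worded) items contribute 5 minus the rating, and the summed contributions are multiplied by 2.5 to give a score from 0 to 100. The Python sketch below implements that standard rule; the function name `sus_score` and the example responses are hypothetical and are not drawn from any included study.

```python
from typing import Sequence


def sus_score(responses: Sequence[int]) -> float:
    """Return a System Usability Scale score (0-100) from ten ratings of 1-5.

    Responses are in questionnaire order: items 1, 3, 5, 7, 9 are positively
    worded and items 2, 4, 6, 8, 10 are negatively worded.
    """
    if len(responses) != 10:
        raise ValueError("SUS requires exactly 10 item responses")
    if any(r < 1 or r > 5 for r in responses):
        raise ValueError("each SUS response must be between 1 and 5")

    total = 0
    for item_number, rating in enumerate(responses, start=1):
        if item_number % 2 == 1:
            total += rating - 1  # odd, positively worded item
        else:
            total += 5 - rating  # even, negatively worded item
    # Scale the 0-40 raw sum to the conventional 0-100 range
    return total * 2.5


# Hypothetical responses from a single caregiver participant
example = [4, 2, 4, 1, 5, 2, 4, 2, 4, 2]
print(sus_score(example))  # 80.0
```

Note that the resulting score is a single global index, not a percentage, which is consistent with the point above that such questionnaires say little about specific characteristics/attributes such as efficiency or cognitive load.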

Regarding the setting of usability evaluations, most technologies were evaluated in participants' homes, with a few studies (n = 10) including evaluations in both a research laboratory and the field. While it is important to conduct field testing in real-life dynamic environments, such as participants' homes, where digital tools will most likely be used, testing in a variety of settings may be beneficial. Such an approach is particularly relevant in the early development phase, when initial laboratory testing may provide critical and rapid feedback that can be used to improve the technology within a feasible and efficient timeframe. Some researchers have suggested that it is beneficial to invest time and resources in small-scale laboratory testing before conducting field testing and implementation trials,17–19 and we echo this recommendation.

It is important to note that not all usability evaluations included direct input from caregivers, who were the intended end-users of the technologies. Several primary studies included in this review relied on evaluations conducted by researchers, heuristic experts, or healthcare providers. These expert-based methods (e.g. heuristic evaluations, cognitive walkthroughs) are commonly used in early-stage design to identify usability issues before engaging target users. 133 While such approaches can provide valuable technical and design insights, they do not replace the lived experience or contextual knowledge of caregiver end-users. This underscores the need for future evaluations to prioritize meaningful engagement with caregivers throughout the design and testing process.

Finally, the inconsistent assessment of evaluators’ technical knowledge across studies presents a potential source of bias in reported usability findings. When technical literacy is assessed using varied or non-standardized methods, it becomes difficult to determine whether usability feedback reflects the actual accessibility and design of the technology or the prior digital experience of the evaluator. For instance, users with higher technical literacy may navigate digital tools more easily, potentially overestimating usability, while those with lower technical confidence may report greater difficulty, regardless of the technology's actual design. 134 Moreover, studies that require participants to meet a certain threshold of technical proficiency may introduce selection bias, systematically excluding caregivers who are older, less digitally literate, or otherwise underserved—groups who often stand to benefit most from accessible digital health tools. 135

Strengths and limitations

In this scoping review, we present a novel contribution by focusing specifically on the role of usability in caregiver-centered digital health tools in chronic progressive conditions. We charted the specific usability characteristics considered (e.g. comfort, accessibility, flexibility) and the methods used to evaluate usability (e.g. usability questionnaires, task completion metrics, heuristic testing, and user interviews) across a range of digital platforms. By comprehensively mapping these elements, our review clarifies how usability has been integrated into the design, development, and assessment of technologies supporting caregivers of people with chronic progressive conditions. This work builds upon prior systematic reviews of caregiver digital tools, which confirmed general benefits and advocated for user-centered design.10,12–15 We further extend previous work by providing the first holistic overview of when, where, and how usability enters the picture in the creation and testing of these tools. Such insights are crucial for advancing the next generation of digital health innovations: ones that are not only effective but also responsive to unique caregiver needs. Ultimately, our findings may inform researchers, developers, and healthcare providers on best practices for incorporating usability into the development pipeline, thereby improving the likelihood that digital health solutions for caregivers will be embraced and sustained in real-world care settings.

However, several limitations warrant consideration. First, scoping reviews usually include a wider spectrum of evidence gathered from various sources, such as gray literature. We limited our search to peer-reviewed published literature and therefore may have missed other relevant types of literature; these sources should be examined in future work as this area develops further. A second, related limitation is that we used search limiters to restrict citations to those with full text published in English. Although we did not intend to disregard publications in other languages, this decision reflected the global status of English in modern science, particularly in peer-reviewed published literature, and our lack of resources for scientific translation. Third, data extraction of usability characteristics/attributes was challenging because “usability” has multiple meanings that do not always relate to usability testing. For instance, the term is sometimes used to describe general experiences or perspectives of tools without any connection to the development and/or evaluation of the tool. There is therefore potential for errors in categorizing the characteristics/attributes described in the included studies and for missing sources that use different terminology. We attempted to mitigate this risk by involving two reviewers in the categorization process and holding consensus-based discussions to ensure we captured relevant characteristics/attributes.

Conclusion

This review examined usability characteristics/attributes and evaluation methods reported in studies of computer-based digital technologies for caregivers in the context of chronic progressive conditions. We also examined the characteristics of the technologies and the target populations. The take-home message for developers and researchers interested in developing and evaluating computer-based digital technologies for caregivers of people with chronic progressive conditions is clear. There is a great need to expand the target audience for such technologies beyond caregivers of people with Alzheimer's disease and dementia and to consider usability characteristics/attributes at all stages from development to implementation. We also need to consider the potential advantages of expanding the types of usability characteristics/attributes evaluated and combining different usability evaluation methods, including both subjective and objective usability measures, to develop a more holistic and comprehensive understanding of usability in this context. Furthermore, psychometrically valid measures of usability should be prioritized in future studies to enhance the comparability of findings across studies and to ensure that usability evaluations meaningfully capture user experiences in the context of caregiving for individuals with chronic progressive conditions. Investing time and effort in such work is vital for the planning and conduct of future usability studies and for advancing the use of computer-based digital technologies to support the health and well-being of caregivers of people with chronic progressive conditions.

Supplemental Material

sj-doc-1-dhj-10.1177_20552076251365141 - Supplemental material for Exploring usability characteristics in computer-based digital health technologies for family caregivers of people with chronic progressive conditions: A scoping review

Supplemental material, sj-doc-1-dhj-10.1177_20552076251365141 for Exploring usability characteristics in computer-based digital health technologies for family caregivers of people with chronic progressive conditions: A scoping review by Afolasade Fakolade, Katherine L. Cardwell, Emma Chow, Amanda Ross-White and Lara A. Pilutti in DIGITAL HEALTH

sj-docx-2-dhj-10.1177_20552076251365141 - Supplemental material for Exploring usability characteristics in computer-based digital health technologies for family caregivers of people with chronic progressive conditions: A scoping review

Supplemental material, sj-docx-2-dhj-10.1177_20552076251365141 for Exploring usability characteristics in computer-based digital health technologies for family caregivers of people with chronic progressive conditions: A scoping review by Afolasade Fakolade, Katherine L. Cardwell, Emma Chow, Amanda Ross-White and Lara A. Pilutti in DIGITAL HEALTH

Footnotes

Acknowledgments

The authors thank Emily Broitman, Taylor A. Hume, Mariah Keeling, Julia Ludgate, Isabelle Cardos, and Michelle Bromberg for contributing to the screening process.

Ethical approval

Ethical approval was not required because this was a scoping review without primary data collection.

Author contributions

Afolasade Fakolade contributed to conceptualization, methodology, data curation, supervision, and writing‒original draft. Katherine L. Cardwell contributed to conceptualization, methodology, data curation, and writing‒original draft. Emma Chow contributed to data curation and writing‒review and editing. Amanda Ross-White contributed to methodology and writing‒review and editing. Lara A. Pilutti contributed to conceptualization, methodology, supervision, and writing‒review and editing.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Supplemental material

Supplemental material for this article is available online.

References
