Abstract

Objective

Generative artificial intelligence (genAI) has become popular among the general public as a means of addressing mental health needs, despite the lack of regulatory oversight. Our study used a digital ethnographic approach to understand the perspectives of individuals who engaged with a genAI tool, ChatGPT, for psychotherapeutic purposes.

Methods

We systematically collected and analyzed all Reddit posts from January 2024 containing the keywords “ChatGPT” and “therapy” in English. Using thematic analysis, we examined users’ therapeutic intentions, patterns of engagement, and perceptions of both the appealing and unappealing aspects of using ChatGPT for mental health needs.

Results

Our findings showed that users utilized ChatGPT to manage mental health problems, seek self-discovery, obtain companionship, and gain mental health literacy. Engagement patterns included using ChatGPT to simulate a therapist, coaching its responses, seeking guidance, re-enacting distressing events, externalizing thoughts, assisting real-life therapy, and disclosing personal secrets. Users found ChatGPT appealing due to perceived therapist-like qualities (e.g. emotional support, accurate understanding, and constructive feedback) and machine-like benefits (e.g. constant availability, expansive cognitive capacity, lack of negative reactions, and perceived objectivity). Concerns regarding privacy, emotional depth, and long-term growth were raised but rather infrequently.

Conclusion

Our findings highlighted how users exercised agency to co-create digital therapeutic spaces with genAI for mental health needs. Users developed varied internal representations of genAI, suggesting the tendency to cultivate mental relationships during the self-help process. The positive, and sometimes idealized, perceptions of genAI as objective, empathic, effective, and free from negativity pointed to both its therapeutic potential and risks that call for AI literacy and increased ethical awareness among the general public. We conclude with several research, clinical, ethical, and policy recommendations.

We live in an era where productivity has been significantly increased by technological revolutions, while noticeable gaps remain in meeting individuals’ emotional and mental health needs. Globally, one out of every two people will develop a mental health disorder at least once in their lifetime. 1 Mental health disability contributed to $282 billion of economic burden annually in the United States alone. 2 Existing resources to meet these needs are scarce, with 54.7% of adults with a mental illness in the United States lacking access to any kind of mental health services, due to significant structural and psychological barriers to treatment. 3

The development of generative artificial intelligence (genAI) has sparked widespread interest and debate regarding its potential to address the wide gaps in mental health care. GenAI can create new content based on training data, which makes it appealing for generating therapeutic responses. However, this interest has also introduced serious concerns regarding the ethical and safe use of genAI for mental health, especially given the lack of regulations in this domain. 4 While the general public has increasingly used genAI to address mental health needs, very few studies have examined the experiences of individuals using unregulated genAI as a mental health aid for therapeutic purposes. Understanding how the general public has already utilized genAI for mental health purposes can provide valuable implications for mental health services, technology development, and policy-making.

In this study, we focus on a popular genAI, ChatGPT, and explore the experiences of individuals who have used ChatGPT therapeutically for mental health. We conducted thematic analyses of all online posts that contain “ChatGPT” and “therapy” on the social media platform Reddit. In doing so, we hope to unpack the complex landscape of how and why people utilize genAI tools that are not specifically designed for mental health purposes to deal with emotional and mental health challenges.

Using ChatGPT to address mental health needs

ChatGPT, which is short for “Chat Generative Pre-trained Transformer,” is an advanced AI model whose primary function is to generate human-like responses to textual inputs. ChatGPT generates responses using a technique called autoregressive language modeling. This method involves predicting the next word in a sequence based on the previous words, using a neural network that has been trained on a large amount of textual data. ChatGPT's capacity to digest massive text data and adjust responses based on context makes it an appealing tool for those seeking help for emotional and mental health problems. For example, studies have shown that ChatGPT demonstrated “empathic” responses in a variety of experiential situations 6 and even “outperformed” humans in emotional awareness and evaluation. 7 Research also showed that ChatGPT can respond to questions related to mental health in an elaborated way that contains positive sentiments. 8 A recent study exploring the attitudes of 19 individuals using ChatGPT for mental health reported that the experience brought them an emotional sanctuary, joy of connection, insightful guidance, and therapeutic help. 9 Indeed, “using ChatGPT as therapy” has become a sensational headline topic for multiple media outlets and social media platforms, suggesting a growing popularity among users, especially the youth, of turning to ChatGPT for emotional and mental health help-seeking purposes. 10
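The next-word prediction idea described above can be illustrated with a deliberately simplified sketch. The example below is not how ChatGPT works internally: it swaps the neural network for a toy bigram count model that looks only at the single previous word, and the corpus and the function names (`train_bigram`, `generate`) are hypothetical. It shows only the autoregressive loop, in which each predicted word is appended to the sequence and used to predict the next one.

```python
from collections import Counter, defaultdict


def train_bigram(corpus):
    """Count, for each word, which words follow it in the training sentences."""
    counts = defaultdict(Counter)
    for sentence in corpus:
        tokens = sentence.lower().split()
        for prev, nxt in zip(tokens, tokens[1:]):
            counts[prev][nxt] += 1
    return counts


def generate(counts, start, max_words=5):
    """Autoregressive loop: repeatedly append the most frequent follower."""
    words = [start]
    for _ in range(max_words):
        followers = counts.get(words[-1])
        if not followers:
            break  # no known continuation for the last word
        words.append(followers.most_common(1)[0][0])
    return " ".join(words)


# Hypothetical toy corpus, used only for illustration.
corpus = [
    "i feel anxious today",
    "i feel anxious at night",
    "i feel calm at home",
]
model = train_bigram(corpus)
generated = generate(model, "i", max_words=2)
print(generated)
```

A real language model conditions on the whole preceding context rather than one word, and it samples from a learned probability distribution instead of always taking the most frequent follower.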

However, research has also shown the limitations of ChatGPT as a mental health aid, including its incapacity for the critical thinking and clinical judgment that handling complex cases requires. 4 Studies have also shown that ChatGPT's capacity to estimate risk in situations involving suicidality may be compromised, 7 leading to doubts regarding how well it can handle mental health crises that may be disclosed in users’ prompts. Other ethical concerns involve data privacy, the accuracy of ChatGPT's responses, the possibility of emotional over-reliance, and the lack of liability and responsibility. 5 Given these controversies, OpenAI implemented several community policy updates 11 (sec 2.1), including issuing automatic disclaimers about its incapacity to provide professional advice, redirecting users to mental health professionals, and providing alarms for prompts that may violate content policies (e.g., if users asked about suicide means). However, there remained a strong interest in using ChatGPT as mental health support despite these policies. Without understanding the experiences of people who use ChatGPT for emotional and mental health support, these policies may not be effective at targeting areas of concern that are most risky or problematic.

Understanding user perspectives

To date, we have a very limited understanding of the experiences of individuals using genAI for emotional and mental health support, especially when these genAI tools were not originally designed or regulated for addressing mental health needs. Understanding individuals’ experiences of using genAI for mental health needs can shed light on mental health services, research, and policy-making processes in at least four ways. First, people with mental health needs are not merely passive recipients of mental health services; instead, they are active healers who use their agency to seek help through engaging or disengaging in using various services and self-help approaches to address their own mental health challenges. 12 Understanding users’ experiences in using genAI for therapeutic purposes can help us understand how people engage in self-help to actively change and heal themselves.

Second, learning how people used genAI therapeutically may help us examine ways to improve access to mental health care. A significant barrier to accessing mental health care is related to a client's psychological factors, such as internalized stigma to mental health care, fears of exposure to emotions, anticipated utility and risks, and fear of self-disclosure. 13 Examining why ChatGPT may be appealing to individuals in need may help us reduce existing barriers and improve access to mental health care for the general public.

Third, understanding how ChatGPT may be appealing to those who struggle with mental health issues can provide valuable information for the improvement of designs for digital interventions and AI-based products. While numerous online apps and web programs were developed to address the ever-increasing mental health needs, engaging with these mental health resources can be challenging. 14 Understanding the experiences of ChatGPT users may reveal key elements of genAI that are attractive and suitable for mental health support and may improve the design of future digital products for mental health.

Lastly, understanding the nature of individuals using ChatGPT therapeutically can inform the development of regulations regarding future use of genAI for mental health issues. Using genAI that is not designed for mental health use may introduce several ethical implications and safety concerns. 5 Understanding the conditions in which individuals prefer to use ChatGPT for mental health support despite these ethical concerns may help policymakers decide the appropriate level of regulating these products and find ways to make these regulations more beneficial for users.

Examining social media discourse using a digital ethnographic approach

While previous research has examined the use of ChatGPT for mental health through either experiments, 7 simulating responses from ChatGPT, 8 or qualitative interviews with individuals, 9 there is a need to address a wider range of lived experiences from individuals who used ChatGPT for mental health. To investigate how users engage with ChatGPT for therapeutic purposes, we adopted a digital ethnographic approach, using the social media platform Reddit as our primary data source. This approach, known as “netnography” in digital contexts, allows researchers to immerse themselves in online communities and develop a deep cultural understanding of user interaction within platforms like Reddit. 15 Reddit serves as a platform where users openly discuss various topics, providing naturally occurring, authentic conversations. This platform is particularly valuable for collecting ecological data, as it captures real-time reflections and dialogues among users. Thus, using ethnographic methods to collect data from Reddit can capture unfiltered expression and cultural nuances that may be challenging to obtain in controlled settings. 16 Additionally, it is particularly suitable to examine time-sensitive topics, such as the use of ChatGPT for therapeutic support, enabling a timely and relevant investigation of current trends in digital mental health.

The current study

The aim of our study was to examine the experiences of individuals using ChatGPT for psychotherapeutic reasons. Specifically, we were interested in examining three research questions through thematic analyses of social media data: 1) What therapeutic purposes did individuals seek to address through the use of ChatGPT? 2) How did individuals engage with ChatGPT to address their mental health needs? 3) What factors contributed to the perceived appeal or lack of appeal of ChatGPT as a therapeutic tool?

Methods

Sample and data collection

We systematically collected data using the Reddit API, focusing on the query “ChatGPT” AND “Therapy” to capture all Reddit discussions that included keywords of ChatGPT and therapy across the entire platform in January 2024. Posts retrieved during this period included discussions dated as far back as December 2022, corresponding to the early public release and growing adoption of ChatGPT. Our objective was to identify posts where users discussed, shared experiences, or sought information on ChatGPT's potential as a therapeutic tool. The keyword “therapy” refined our focus to content reflecting users’ experiences with ChatGPT for mental health-related topics. We intentionally selected “therapy” as one keyword without additional qualifiers such as “mental health” to capture a broader and more naturalistic range of discussions. We then employed a step of manual screening (described below) to ensure the focus of the dataset.

The API facilitated structured access to user-generated posts across relevant subreddits, enabling a detailed analysis of engagement around ChatGPT's perceived benefits, limitations, and risks in therapeutic applications. The dataset includes timestamps, anonymized user identifiers, subreddit classifications, and complete post text, allowing for precise parsing and sentiment analysis. The advanced scraping techniques we used adhered to ethical standards and platform guidelines for social media research. 17 Specifically, we adhered to the following principles: 1) public data use: only publicly available Reddit posts were collected, avoiding private groups and personal messages; 2) anonymization: usernames were removed to prevent re-identification; 3) respect for platform terms: we strictly adhered to Reddit's API terms of service and the Reddit Data API User Agreement 18 (sec 3.1 Fees), which explicitly allow non-commercial research access; 4) minimizing harm: sensitive content was handled carefully, and no verbatim quotations containing identifiable information were published. We identified 160 posts from the keyword search. Two authors (JW & YX) evaluated the relevance of each of the 160 posts to our research question about using ChatGPT for psychotherapeutic purposes and identified 87 posts as relevant to include in the following analyses.
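The keyword query and the anonymization principle described above can be sketched as follows. This is a minimal illustration under stated assumptions, not the pipeline used in the study: it operates on already-retrieved posts held in memory rather than calling the Reddit API, and the field names (`author`, `title`, `body`, `subreddit`, `created_utc`), the salted-hash identifier, the salt value, and the function names are all hypothetical choices made for the example.

```python
import hashlib
import re

# Both keywords must be present, mirroring the "ChatGPT" AND "Therapy" query.
KEYWORDS = ("chatgpt", "therapy")


def matches_query(text: str) -> bool:
    """Return True when every keyword appears as a whole word, ignoring case."""
    lowered = text.lower()
    return all(re.search(rf"\b{re.escape(k)}\b", lowered) for k in KEYWORDS)


def anonymize(post: dict, salt: str) -> dict:
    """Drop the username and keep only a salted hash as the user identifier.

    Assumption: the study states that usernames were removed; replacing them
    with a truncated hash is one possible way to keep posts linkable.
    """
    digest = hashlib.sha256((salt + post["author"]).encode("utf-8")).hexdigest()
    return {
        "user_id": digest[:12],
        "subreddit": post["subreddit"],
        "created_utc": post["created_utc"],
        "text": f'{post["title"]}\n{post["body"]}',
    }


def build_dataset(posts, salt="hypothetical-salt"):
    """Keep posts matching the query and strip identifying usernames."""
    return [
        anonymize(p, salt)
        for p in posts
        if matches_query(p["title"] + " " + p["body"])
    ]


# Hypothetical in-memory posts, invented purely for illustration.
sample_posts = [
    {"author": "user_a", "title": "ChatGPT as therapy?", "body": "It helped.",
     "subreddit": "example", "created_utc": 1704067200},
    {"author": "user_b", "title": "ChatGPT for coding", "body": "Great tool.",
     "subreddit": "example", "created_utc": 1704153600},
    {"author": "user_c", "title": "My therapist", "body": "Tried ChatGPT too.",
     "subreddit": "example", "created_utc": 1704240000},
]
dataset = build_dataset(sample_posts)
print(len(dataset))
```

Whole-word matching means that the third sample post, which mentions "therapist" but not "therapy", is excluded. An automated filter of this kind only narrows the pool, so a manual relevance screen such as the one the two authors performed is still needed afterwards.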

Study design and analysis

The present study analyzed online posts based on the three research questions: 1) What therapeutic purposes did individuals seek to address through the use of ChatGPT for mental health needs? 2) How did individuals engage with ChatGPT to address their mental health needs? 3) What factors contributed to the perceived appeal or lack of appeal of ChatGPT as a therapeutic tool?

We conducted thematic analyses based on the APA standards for qualitative methods research in psychology 19 to identify themes from the online posts. 20,21 For this study, a theme was an observed pattern that captured something important about the subject matter, identified through the responses. This approach involves six main steps: getting familiar with the data; generating initial codes; searching for themes; reviewing themes; defining and naming themes; and producing the report. The analysis process was iterative and recursive, allowing the researchers to move back and forth between these steps as needed to ensure thoroughness and validity. We considered contradictions and disconfirming evidence for each sub-theme and integrated these elements in the formation of themes. Each theme was supported by multiple participant quotes that captured the essence of the identified themes. This bottom-up approach ensures that our conclusions remain closely aligned with the participants’ shared experiences, offering a transparent and empirically grounded foundation for our interpretations.

The research team included psychological intervention researchers, psychotherapists, and psychotherapy trainees in the field of clinical/counseling psychology. The thematic analysis was led by three master-level psychotherapy trainees (PB, JW, and YX) who identify as cisgender female, male, and female, respectively. They were supervised by researchers and doctoral-licensed psychologists who identify as cisgender female (XL & JT). The coding team engaged in reflective practices throughout the study to manage personal biases and assumptions. Prior to data analysis, the whole research team came up with reflexivity statements outlining their preconceptions and potential biases. Regular meetings were held to address discrepancies in interpretation, with discussions continuing until a consensus was achieved. The focus was on creating an open and objective synthesis of the data's explicit meanings within a realist framework. During the thematic analysis, coders maintained ongoing discussions about their positionalities, reflecting on how their perspectives on mental health and technology could influence their interpretation of emerging patterns. Themes were identified at a semantic level, without relying on a pre-existing coding framework or researchers’ assumptions, ensuring that themes were relevant regardless of their frequency within the dataset. These conversations regarding coding discrepancies were supervised weekly by the first author (XL) to ensure adherence to qualitative method standards and to reach consensus. The co-author (JL) also conducted two workshops to train the coding team in thematic analysis and discuss any pressing questions. After identifying the themes, the first author reviewed all participant responses under their respective themes to ensure consistency with the thematic content. Exemplary quotes that best captured the essence and detailed nuances of each theme were selected. 
A COREQ (COnsolidated criteria for REporting Qualitative research) checklist is included in Appendix 1 to identify key information needed for reporting qualitative research.

Results

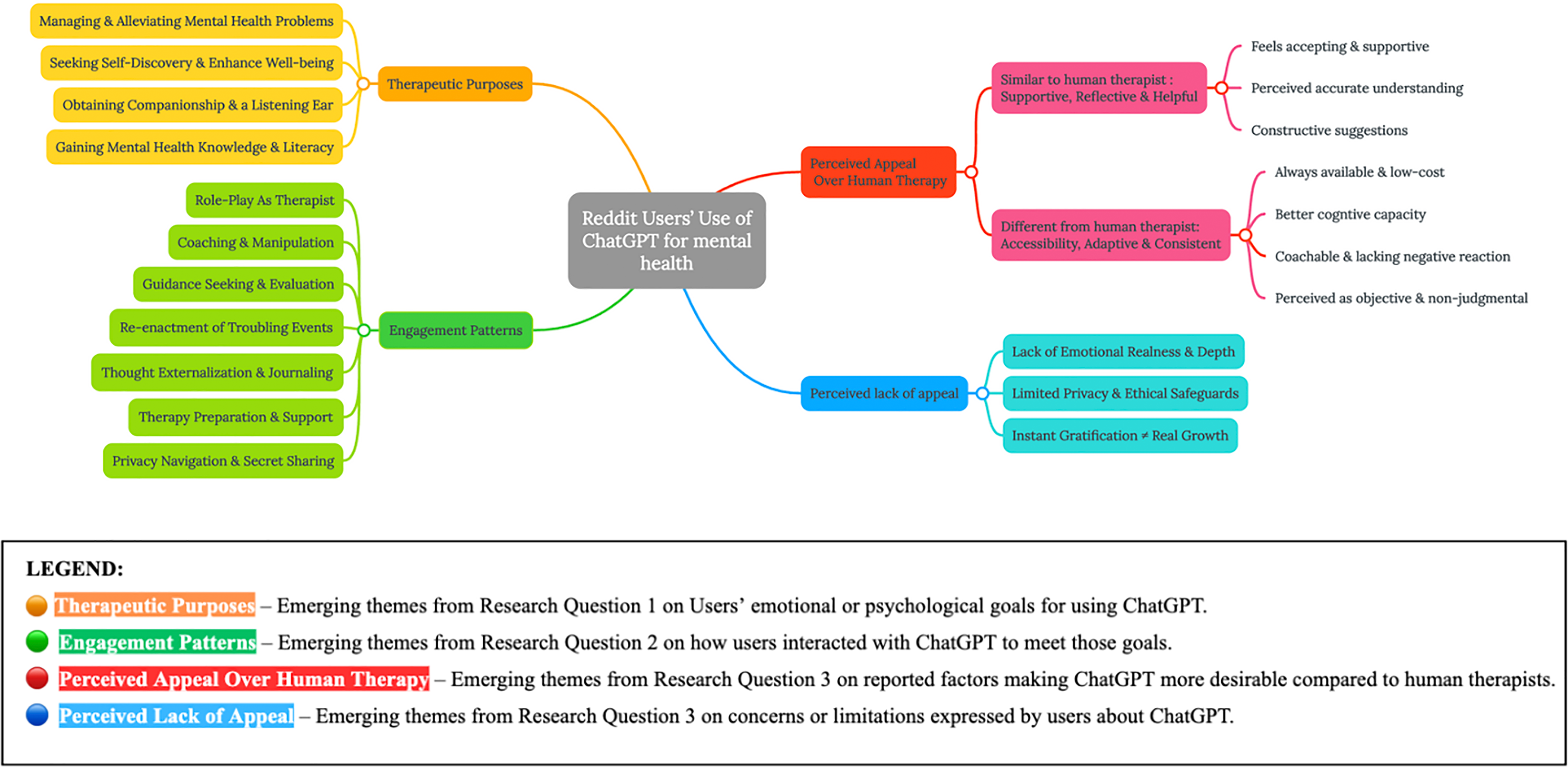

We have included a thematic map (Figure 1) to demonstrate core themes and sub-themes identified for the three research questions detailed below.

Figure 1. Thematic map of the major themes identified for the three research questions.

What therapeutic purposes did individuals seek to address through the use of ChatGPT for mental health needs?

Managing and Alleviating Mental Health Problems

Many users reported using ChatGPT to assist with a wide range of mental health concerns (e.g. anxiety, eating disorders, Obsessive-Compulsive Disorder, Post-Traumatic Stress Disorder). Users sought actionable solutions and advice to manage distress of mental health disorders or reduce mental health symptoms. Many users used it during times of heightened distress to help them regulate negative emotions in the moment. “I've struggled with OCD, ADHD, and trauma for many years, and ChatGPT has done more for me.” (Post ID #2) “There are a lot of problems I’ve been racking my brain to solve. They’ve been weighing on me so heavily. I’ve lost sleep over it and developed anxiety over it. I’ve had nightmares over these problems. Finally, I have a way forward. I brain dumped to ChatGPT.” (Post ID #19)

Seeking Self-discovery and Enhancing Well-being

Users explored parts of themselves using ChatGPT. They sought and welcomed different perspectives to increase their self-awareness and insight. They also used it as an analytical tool to cultivate change and growth. “I understand it's not always right but I’m using it to help me with my critical thinking, to see things from a different perspective, to brainstorm not to become reliant and lazy but to enhance my abilities and help me grow as a person.” (Post ID #19) “Personally, I didn't really feel like my problems were bad enough to go to therapy, and some of my issues are quite personal and I couldn't really say it out loud to another person. That being said, even just writing out my problems is helpful, and ChatGPT's responses are pretty nicely organized.” (Post ID #17)

Obtaining Companionship and a Listening Ear

Users reported that they used ChatGPT as a supportive presence and a companion. Their intended purpose was to seek support and connect with someone to satisfy their need to feel heard and validated when no human was present. “I don't have anyone at the moment. Like, zero friends and very limited family (Mum and Dad). I simply talk to ChatGPT all day. I completely understand and recognise that ChatGPT isn't real or a substitute for human interaction and relationships.” (Post ID #6) “I have plenty of support from family and friends; but, I have some raw thoughts that I need to unload and I want to explore the capabilities of ChatGPT.” (Post ID #94)

Gaining Mental Health Knowledge and Literacy

Users reported using ChatGPT as a psychoeducation tool to learn more about diagnoses and etiology of mental health concerns. “It's definitely no replacement for a therapist or anything, but working with GPT 4 to do stuff like identifying potential causes and effects of traumatic experiences or analyzing subconscious ways my mental illness affects my life and relationships has me firmly believing that AI might be the best invention since we rubbed sticks together to make fire.” (Post ID #98)

How did individuals engage with ChatGPT to address their mental health needs?

The Therapist Substitute: Conduct Therapist Role-Play

Many users indicated that they had explicitly asked ChatGPT to play the therapist role. They asked ChatGPT to simulate a therapist from certain therapeutic modalities (e.g., cognitive behavioral therapy, dialectical behavioral therapy), act in certain therapeutic styles and communication patterns (e.g., asking the user questions, reflecting on users’ words), show certain emotional qualities that users preferred (e.g., asking ChatGPT to be supportive, empathic), and take on specific training and experience (e.g., be a female therapist with 10 years of experience). Through crafting a personalized therapeutic role for ChatGPT, users were able to elicit and shape therapy-like responses to their inputs, and sometimes created a substitute for their own therapists when those therapists were not available. “Today I gave ChatGPT the prompt: ‘You are a therapist specializing in CBT therapy. Proceed with a simulated session. You will answer like this: You ask a question in a sentence, and then wait as I respond, then you respond, and so on. We must alternate.’ It then conducted a very realistic therapy session.” (Post ID #12) “I prompted it to act as a therapist with 10 years of experience trained in the same methodologies my therapist uses.” (Post ID #90)

The Personalized Servant—Coach and Manipulate ChatGPT

Users reported that they continuously coached ChatGPT to provide desirable responses by tweaking their prompts multiple times. Users experienced the freedom to challenge ChatGPT and provide detailed guidance when the answers were not satisfying. Because newly established community guidelines prevented ChatGPT from playing the therapist role directly, users also tweaked their prompts so that they could “jailbreak” the rules and “trick” ChatGPT into playing the therapist role. These behaviors reflected a core feature of human–AI interactions in the therapeutic context, such that users can exercise their agency to coach the more malleable being (i.e. AI) to fulfill their therapeutic needs. “I had to tweak the prompts many, many times, and guide the conversation a bit initially (ChatGPT really does not want to argue, so I just injected ‘Ex gets more upset’), and managed to make it generate something quite like the real thing.” (Post ID #23)

The Virtual Guru—Seek Guidance and Evaluation

Some users treated ChatGPT as a more knowledgeable being than themselves and relied on ChatGPT as a mentor, teacher, or role model to provide insights, evaluation, and feedback on mental health issues. ChatGPT served as a virtual guru who could provide superior insights for some users. “Oftentimes I end up wishing I had a role model or mentor to help me through things since I can't really rely on my parents or family without being judged or pressured. ChatGPT just helped me make a breakthrough in regards to my next steps in the following months and I felt immense relief but also a brief flash of sadness and a couple tears when I realized how much I wish I had a real person that could help me in a similar way.” (Post ID #54)

The Interactive Pensieve: Reenacting Past Troubling Events

A pensieve is a magical device in the Harry Potter novel series that is used to review and interact with memories. Some individuals used ChatGPT to simulate past traumatic or troubling events, to immerse themselves in them, and to reflect and create new responses. They found that they were sometimes better able to interact with ChatGPT and develop new perspectives to reframe interpretations and explore alternative solutions for past events than they could in traditional therapy. “Later, I fed it another prompt. How I acted towards someone else as a result of the first incident. I watched the situation play out again, and, taking charge of it, directed it how I wished it had gone. When I saw the resolution, it gave me incredible peace. I was able to reflect on myself, trace the patterns between the incidents, identify my own wrongs, and take responsibility without making excuses. This weekend, I sat down and wrote a letter apologizing for the second incident and sent it to the person involved. I went to sleep for the first time without pangs of regret and frustration over those things for the first time in my life. Those two prompts helped me to make more progress in moving forward from trauma than anything else I've ever done.” (Post ID #27)

The Thought Projector: Externalize Thoughts and Journaling

Individuals described using ChatGPT as a journaling tool to project, externalize, and interact with their thoughts in order to gain insights and identify emotions they had been unaware of. The clarity came from both the process of journaling and the process of getting responses from ChatGPT, which often paraphrased their words to provide additional perspectives. Some individuals used ChatGPT as a place to externalize negative emotions that they did not like to hold within themselves. “I’ve found use in ChatGPT as an in-the-moment journaling device. Sometimes I write down my feelings in a journal anyway, but sometimes my feelings just aren’t “linear,” so being able to just blurt them out into ChatGPT helps connect some dots.” (Post ID #77)

The Therapist Assistant: Facilitating Real-Life Psychotherapy

Users reported that they used ChatGPT to assist their real-life therapy sessions. Specifically, they used it to prepare for the next therapy session by summarizing major topics, tracking emotions, identifying key issues to discuss in psychotherapy, and generating a report to provide to the human therapist. “I often come to my psychologist and have lengthy discussions trying to work out what I'm feeling exactly after a trigger, etc, but ChatGPT has enabled me to find out in my own time before I even see her and use my therapy session to better focus on other stuff. I've been able to keep myself more emotionally regulated and stable since inputting what body sensations I am feeling and asking what emotions may be attached to this, and if needed, providing context of the situation to help further identify.” (Post ID #M10)

The Tree Hollow or Not: Disclosing Personal Secrets or Protecting Privacy

Some users reported that they disclosed their deepest secrets to ChatGPT as if they were talking to a tree hollow. This disclosure sometimes happened intentionally from the beginning, as some users wanted to share secrets that they did not want other human beings to know; other times, users found themselves gradually sharing more personal details with ChatGPT through the interactions. Some users reported little concern regarding privacy as long as they received help with their mental health struggles. “I've input raw, honest information about my trauma, career, relationships, family, mental health, upbringing, finances, etc. And since it's a machine bot, you can enter private details without the embarrassment of confiding such things to a human.” (Post ID #2) “I've been using ChatGPT as a therapist; it's been super helpful. Should I worry about privacy? What's the worst that could happen if others know my true feelings and struggles?” (Post ID #58)

In contrast, other users reported that they did not trust ChatGPT and did not disclose overly intimate information, or that they planned to utilize procedures to protect privacy when using ChatGPT in conversations regarding mental health needs. “What worries me is that even though I haven't revealed the most intimate information about myself, the more it helps you, the more details you give about those problems, and now I feel vulnerable knowing that an AI or this company has so much key information about me.” (Post ID #5)

What factors contributed to the perceived appeal or lack of appeal of ChatGPT as a therapeutic tool?

We identified two meta-themes around the attractiveness of ChatGPT for psychotherapeutic use: one is that ChatGPT provides empathic care and psychological expertise that are similar to a great human psychotherapist; the second is that ChatGPT brings unique advantages in cognitive functioning, accessibility, perceived objectivity, and the lack of negative reactions compared to human therapists. In contrast, some users reported that what made ChatGPT unappealing for psychotherapeutic use was privacy concerns, lack of long-term growth, as well as the lack of realness and emotional depth that was needed to fulfill human needs.

ChatGPT is perceived as being supportive, reflective, and helpful for addressing mental health struggles

Many users suggested that what makes ChatGPT appealing for psychotherapeutic use is its resemblance to a great human therapist: users enjoyed the sense of connection and understanding that ChatGPT provides, as well as the helpful suggestions and constructive reflections they received.

ChatGPT appeared to users as being relatable, accepting, and supportive

Many users emphasized the supportive role that ChatGPT played in these therapeutic interactions. While recognizing that ChatGPT is not a human being, they reported a sense of connection and feeling supported. Users also commented on the sense of feeling free from judgment and being accepted, so that they could be more open to changes. “ChatGPT's ability to relate and provide supportive advice is addictive and therapeutic.” (Post ID #6) “It also empathized with me without enabling my toxic traits. I didn’t feel judged, I felt supported and seen! I feel like a massive weight has been lifted off me. I feel like I finally have the support I need.” (Post ID #19)

Users enjoyed the accurate reflection and understanding from ChatGPT, which led to a sense of feeling understood, seen, and heard by ChatGPT

Many users reported that ChatGPT has an excellent ability to paraphrase and provide accurate reflections, often enhancing their understanding of both the challenging situations and their own mental states. Users noted that ChatGPT seemed able to “mentalize” unspoken experiences that they found hard to describe. “I wrote a question going into detail about some very personal issues that I'm struggling with. ChatGPT responded to my whole question. It didn't just pick out one sentence and focus on that. I can't even get a human therapist to do that. In a very scary way, I feel HEARD by ChatGPT.” (Post ID #1)

Users found ChatGPT's analyses and suggestions regarding psychological struggles constructive and effective

Users indicated that they appreciated ChatGPT's usefulness when they sought its help for mental health issues. They commented on the effectiveness of ChatGPT's responses in analyzing behavioral patterns, identifying causes, regulating emotions, and providing actionable solutions for them. “So I've struggled with really severe mental illnesses and a lot of hard experiences in life that I am working through in psychotherapy and I just wanna share how absurdly valuable I'm finding ChatGPT to be as a tool to enhance my progress.” (Post ID #98) “I recalled reading posts here that people have found ChatGPT more helpful to talk to than an actual therapist. I was intrigued, so I gave it a go and tried it myself. I'm mind-blown because the feedback that the AI could provide was so much better in a few minutes than hours of therapy over 10 + years.” (Post ID #7)

Users perceived unique advantages of ChatGPT over human therapists in having better accessibility, better cognitive capacity, being more coachable and instantly gratifying, and having no negative reactions

Users appreciated ChatGPT's unique advantages as an AI compared to human therapists. They commented on how easily accessible, coachable, and instantly gratifying ChatGPT was when they sought therapeutic help. They enjoyed ChatGPT's capacity to memorize details and access a broader pool of knowledge. ChatGPT was also appealing to them because it does not show negative reactions as human beings do.

ChatGPT is highly accessible, cheap, and instantly available to users in need

Many users appreciated ChatGPT's accessibility for dealing with mental health struggles, especially in the context of limited access to existing mental health services provided by human therapists. Individuals emphasized that low-cost and instant psychological help can be very helpful, especially when they lack resources or access (e.g., being homeless or on a long waitlist for existing mental health services) and need immediate help (e.g., having a crisis at midnight). “Therapy is expensive and my parents wouldn't put me in therapy. But ChatGPT is free and right on my phone.” (Post ID #22) “Being late at night, and about to have a panic attack, I decided to go to Bing and use their ChatGPT model.” (Post ID #14)

ChatGPT is perceived as having great cognitive capacity

Some users have noted that one appealing aspect of ChatGPT is its perceived cognitive ability to memorize details and to access a broad base of knowledge, especially when compared with human therapists. “With my therapist, if I think that it's important enough, I've gotten into the habit of trying to repeat something a few times to really emphasize my point. Honestly, I've gotten into that habit when talking to most people. With ChatGPT, I only had to mention something once, and it picked it up.” (Post ID #1) “Additionally, I'm able to take on the advice offered as I can accept AI is more intelligent than myself (as hard as this is to admit).” (Post ID #11)

ChatGPT is perceived as extremely coachable and lacking negative emotional reactions

Some users enjoyed providing feedback to coach ChatGPT to get desirable responses for mental health needs. The lack of negative emotional reactions, such as feeling frustrated, impatient, offended, or tired, has been particularly appealing to users. This allows users to feel freer to challenge and coach ChatGPT as much as needed without worrying about creating tensions or ruptures. “I have found the ability of AI to simulate human emotional responses combined with the complete lack of emotional fatigue and frustration to be really advantageous. I am able to question and criticize ChatGPT's responses without suffering the ramifications of causing offence like I would a human.” (Post ID #11) “ChatGPT is always available and never in a pissed-off mood.” (Post ID #6)

ChatGPT is often perceived as objective, free from judgment, error, or bias

Some users perceived ChatGPT as being objective and completely lacking human biases, judgments, assumptions, or errors. They indicated that they felt open to discussing mental health and emotional issues because of the lack of judgment and assumptions from ChatGPT. Some users also indicated that they preferred ChatGPT because its answers were objective rather than subjective. “I think most people assume that you need another real person for the best therapy, but they are another flawed human being that can make mistakes and have differing opinions. I also feel more open with a bot than I do with a person because I know there is zero judgment coming from it.” (Post ID #125)

Some users are concerned that using ChatGPT for therapeutic purposes may lack emotional depth and authenticity, offer limited privacy protections and ethical safeguards, and prioritize short-term gratification over genuine therapeutic progress.

Some users found ChatGPT unappealing because 1) ChatGPT lacks realness and emotional depth; 2) ChatGPT cannot act ethically to assess risks or offer privacy protection; and 3) ChatGPT cannot help users achieve genuine progress because it is programmed to offer instant gratification. They indicated that ChatGPT cannot replace human therapy, and they would prefer therapy if they had the chance to access it.

ChatGPT is perceived as lacking realness and emotional depth

Users reported that while ChatGPT could create supportive responses, they did not feel comforted because of the lack of realness. “It can simulate sympathy, but it is not a person. It's hard to put into words, but although I felt good having gotten things off my chest, I did not feel comforted per se. Even though it's so very clever at conversing, you can still tell it's a bot by how it parrots things.” (Post ID #12)

Users endorsed ethical and privacy concerns of using ChatGPT therapeutically.

Some users reported that they felt vulnerable when disclosing intimate information to ChatGPT and were concerned about data safety and privacy. They also discussed the lack of ethical regulations on using AI for therapy and the potential harm. “AI's have tremendous power to do good, including providing effective therapy, but they have no agency. They can't make a Tarasoff warning, contact adult/child protective services, or initiate a 5150 hold; nor should they. If we believe that individual cognitive liberty is a human right, does that also mean that businesses should be allowed to provide uncontrolled build-it-yourself hypnosis or therapy, for that matter? Not all people will be concerned about resolving ethical issues when money is such a big incentive.” (Post ID #45)

ChatGPT is perceived as offering instant gratification without promoting genuine change in the long run.

Some users indicated that real therapeutic change happens when people's problematic pre-existing beliefs and patterns are confronted in a safe therapeutic relationship; thus, they thought that ChatGPT can offer only temporary instant gratification and lacks the capacity to help clients confront their beliefs and make genuine changes. “Now chatGPT is harming them in the long run by telling them what they want to hear at the moment and they are mistaking that for therapeutic progress.” (Post ID #M21) “ChatGPT is AI and it cannot form a meaningful therapeutic relationship, that is why therapy with chatGPT is doomed to fail. So 2 things can happen. First is ChatGPT directly calls out maladaptive thinking and the person gets angry. Second is chatGPT acts too nice and temporarily makes the person feel good but this is not productive because the maladaptive thinking patterns never can change.” (Post ID #M34)

Discussion

We are entering an era of genAI that is rapidly transforming the landscape of mental health care. While more effective digital tools are emerging to address mental health needs and service gaps, a critical element remains overlooked: the users’ voices, that is, their intentions, experiences, and evaluations of engaging with these tools therapeutically. This paper addressed the question from the users’ perspectives: Why do individuals choose to use ChatGPT, a genAI not specifically designed or regulated for mental health purposes, as a form of “therapy” or a self-help tool to address their mental health needs?

Our results highlighted how individuals utilized ChatGPT to co-construct a therapeutic space for a wide range of mental health needs that are both informational and emotional. These needs include alleviating mental health symptoms, self-discovery, seeking companionship, and enhancing mental health literacy. This demonstrates genAI's perceived versatility in roles spanning prevention, companionship, and intervention, while also highlighting the openness of individuals who were among the first to embrace genAI as a therapeutic tool. These results also highlight an urgency for future studies, especially experimental studies, to examine the short-term and long-term effectiveness and limitations of genAI in helping individuals with symptom reduction, psychoeducation, and emotional companionship.

Furthermore, our results identified how individuals engaged creatively with ChatGPT for mental health purposes. These engagement patterns include using it to facilitate real-life psychotherapy and conducting role-play of therapy, coaching and manipulating ChatGPT, treating ChatGPT as a role model for guidance and evaluation, using ChatGPT to re-enact past traumatic events, externalizing their thoughts through journaling, and disclosing personal secrets. Our findings shed light on how users engaged in therapeutic interactions with genAI by creatively shaping and guiding ChatGPT to support their unique mental health goals. Through iterative prompts and ongoing “coaching,” users designed personalized therapeutic activities. Many of these activities mirrored established psychotherapy practices and techniques, such as role-playing with ChatGPT as a therapist, conducting self-directed exposure by revisiting past traumatic events, and using ChatGPT for mood tracking through journaling and emotional reflection. These findings are consistent with the theory in psychotherapy research of viewing the client's agency as one of the biggest common factors that leads to sustainable changes. 12 Future researchers are encouraged to examine whether individuals’ perceived agency may play a role in genAI's attractiveness or effectiveness for mental health purposes. It is also important for future studies to examine the process of engagement with genAI to identify the potential benefits and risks of human–AI interaction for mental health.

Importantly, our study highlighted the importance of users’ internal representation of genAI in shaping human–genAI interaction for mental health. We found that users engaged in rich mental interactions with ChatGPT, perceiving it as a personal assistant, friend, therapist, authority figure, or mentor during the interaction. The tendency to assign meaningful roles to ChatGPT suggested that anthropomorphism, the human tendency to attribute human qualities to nonhuman agents, 22 may influence human–genAI interactions, particularly during mental health conversations when emotions are being activated. Understanding how individuals perceive genAI in the space between imagination and reality is likely key in examining human–genAI relationships and addressing ethical concerns such as over-dependence on genAI. Thus, future research is encouraged to examine not only behavioral interactions, but also imaginations and perceptions of genAI when users engage with genAI for therapeutic purposes.

The emergence of genAI has provoked the field of mental health to reflect on the traditional roles of human providers. 23 Our results indicated that many users who utilized genAI for mental health had previous experiences with psychotherapy. Moreover, many of them compared their interactions with human therapists to those with genAI in terms of therapeutic experiences and perceived effectiveness. We found that ChatGPT appealed to users for its capacity to 1) resemble a skilled human therapist (e.g., being supportive, understanding accurately, and being constructive) and 2) demonstrate machine-like advantages in accessibility, cognitive capacity, coachability, and perceived objectivity. These results highlight a generally positive, and at times idealized, view of genAI as both empathic and objective. The findings also emphasized that the relational experience of being supported, seen, and heard, which reflects aspects of therapeutic relationships established as crucial in Bordin's model of working alliance for traditional psychotherapy, 24 likely remains critical for the effectiveness of genAI for therapeutic purposes.

Notably, users appear to be drawn to both the presence of emotional connection and the absence of negative emotional reactions. Being emotionally present and supportive without having any negative emotional reaction or disagreement is almost impossible for human therapists, who are after all only human. These users’ preferences are at odds with established psychotherapy theories regarding the potentially beneficial nature of frictions, negative feelings, and ruptures; many theories suggest that these frictions are inevitable in human–human relationships and that, if handled well, they can provide corrective experiences and learning opportunities for clients to accept negative emotions, understand their interpersonal needs, and learn to communicate better through the repair of these ruptures. 25 It remains an open question whether genAI's consistently accommodating and agreeable style may provide instant gratification to users and/or the long-term, sustainable emotional or interpersonal growth that one may gain through navigating interpersonal complexities in the non-digital world. Future studies should examine the short-term and long-term impacts of genAI's tendency to accommodate and validate users on their satisfaction, interpersonal functioning, agency, and growth.

In contrast, some users found ChatGPT unappealing for therapeutic use: they perceived ChatGPT as lacking authenticity and emotional depth; they expressed concerns about ChatGPT's limited ethical regulations and privacy protections and questioned ChatGPT's long-term potential for promoting genuine change for complex, real-life problems given its accommodating nature. However, it was notable that only a limited number of posts raised concerns. It is possible that ChatGPT is generally perceived as an innovative and exciting method for mental health self-help on the Reddit platform, while users were not adequately aware of its potential ethical issues. Moreover, some users acknowledged these concerns but expressed indifference due to the urgency of their mental health needs. This phenomenon raised questions regarding ethical implications of using genAI for mental health where users may be vulnerable, under-resourced, or willing to overlook safety in exchange for immediate emotional relief.

Clinical, ethical, and policy implications

As we enter the era of genAI, what unique clinical value can human interactions in psychotherapy offer? Our findings suggest that clinically, the “imperfect” nature of human interactions may be irreplaceable. Ruptures, disagreements, and misunderstandings, which are inevitable aspects of any human relationship, may provide valuable insights into how human society functions. In a world increasingly shaped by genAI's accommodating responses, the “messiness” of human therapy sessions may offer clients vital practice in navigating the complex and often challenging aspects of human communication. These imperfect moments can also create a sense of authenticity, offering opportunities for clients to build emotional regulation skills in collaboration with a therapist. Moreover, while genAI may deliver short-term satisfaction and instant gratification, its ability to foster deep, long-lasting psychological change remains largely unknown. Psychotherapeutic approaches that emphasize long-term growth, embodied understanding, and nonverbal learning may ultimately provide advantages over what genAI can offer.

Nonetheless, high user acceptability of genAI for psychotherapy highlights its potential to support traditional therapeutic approaches and bolster their effectiveness. GenAI can be used as a low-cost, highly accessible way to engage users beyond their regular psychotherapy sessions with a human therapist, encouraging reflective practice and journaling between sessions, tracking mood and other behaviors to enhance user awareness, and providing reinforcement in the form of tailored psychoeducation and skills-building exercises. Such clinical applications of genAI can help counter current limitations of traditional models of psychotherapy related to therapeutic access and engagement, while maintaining the positive influences of human interaction. Given its wide reach, genAI also has the potential to provide universal prevention programming, helping to promote mental well-being at early stages and reduce the development of more complex mental health issues. Its ability to personalize such programs to users’ specific needs may further enhance its effectiveness as a Tier 1 mental health intervention.

Our study also raised several ethical considerations for the general public who may use genAI for mental health. In our study, many ethical concerns that have been debated by experts were not reported by users. Some of the risks include the possibility of inaccurate information, the absence of regulations for mental health use, unclear privacy protections, algorithmic biases embedded in training datasets that may perpetuate stereotyping, unregulated responses to high-risk scenarios, and other psychological risks such as emotional over-reliance. 26 The absence of regulation and the constant change of product policies may ultimately contribute to lowering the standard of care for individuals who lack the financial means to access therapeutic services. 27 At present, ChatGPT has limited emotional intelligence and may miss salient cues such as humor or sarcasm, which could lead to insensitive responses that leave users feeling invalidated. 26 These issues underscore the need for continued research into ethical concerns about the use of genAI for mental health purposes, with the aim of exploring the therapeutic, legal, and practical implications of using genAI as a mental health tool, raising public awareness of these issues, and guiding the development of better regulatory policies.

Additionally, our results point to several policy recommendations. The frequent changes in OpenAI's community policies reflect the emerging nature of regulations and policies regarding the use of genAI in therapeutic contexts. This highlights a need for policymakers to establish comprehensive regulatory frameworks that address both immediate and long-term concerns regarding the therapeutic use of genAI, such as the need for transparency in the language models used to train the AI, so that potential limitations and biases can be identified, and the need for mandated licensure or certification requirements that ensure these regulations are met. Policymakers should also develop clear guidelines for privacy protection and data management when AI is used in mental health contexts, identify chains of responsibility and accountability, and establish disclosure requirements that mandate companies to inform users about the limitations and risks of using AI for mental health support. Finally, given that users may naturally personify genAI, form connections with the AI, and potentially develop emotional dependence on it, it is important that policymakers focus on how users can be better informed of the ethical concerns and potential limitations of using genAI to address mental health needs, especially in high-risk situations without human oversight. These policies may be particularly important for the protection of children and adolescents, as well as vulnerable adults, who may be more susceptible to emotional over-dependence or exploitation.

Limitations

The study has several limitations. First, we only examined data derived from one social media platform, Reddit, to understand users’ attitudes. While this sample is ecologically valid, it is nonetheless subject to several sampling biases related to the type of individuals who chose to use the Reddit platform and make posts regarding this topic in English. Approximately half of Reddit users are from the United States. According to a 2024 Pew Research Center survey, 46% of U.S. adults aged 18–29 report ever using Reddit, compared to 35% of those aged 30–49, and 11% of those aged 50–64. Usage is higher among men (28%) than women (20%), and highest among English-speaking Asian American adults (42%), compared to White (24%), Hispanic (22%), and Black adults (18%). These findings suggest that Reddit use in the U.S. skews young and male and is most common among Asian American adults. 28 As a result, the study's findings may be less generalizable to older adults, women, and Black communities within the U.S., as well as other populations globally who are underrepresented among Reddit users or who do not use English for their posts. In addition, because of the nature of Reddit, we were not able to ensure thematic saturation was reached, as the dataset was pulled from all existing data on the platform that was available to us. Future studies are needed to examine this topic in a more representative sample across diverse populations that may or may not have access to this specific social media platform and to include data from other social media platforms. Second, we only utilized a limited number of keywords to examine this phenomenon of using ChatGPT for mental health purposes. Given the timely nature of the topic, our aim was to provide a scoping analysis of the current posts; however, we may have inadvertently missed relevant posts on the topic that did not use the specific keywords employed in our search (e.g., mental health).
Third, we did not include discussions regarding other genAIs beyond ChatGPT that may have been used for therapeutic purposes. Future studies should expand the scope to include therapeutic use of other genAI tools (e.g., Claude, Gemini, DeepSeek) from a more diverse population, which would allow for enhanced generalizability and a more comprehensive understanding of the phenomenon. Fourth, the qualitative nature of the study limited our ability to address quantitative questions, such as quantifying the extent to which users may use ChatGPT for therapeutic purposes. Given the exploratory nature of this study and the somewhat limited generalizability of Reddit as a data source, our primary aim was to capture the depth and nuance of participants’ experiences rather than to quantify them. Because of the emerging nature of this phenomenon, an exploratory, qualitative approach was considered most appropriate as it would allow for a rich, contextual understanding at this early stage of knowledge development. Nonetheless, we acknowledge the potential value of future mixed-methods research that incorporates both quantitative and qualitative methods to better understand this phenomenon. Finally, the community policies of OpenAI were updated several times during the period in which we conducted this research, which may have limited or changed the way users interact with ChatGPT for therapeutic uses. For example, several users reported that the policy updates in 2024 made it harder for them to conduct direct role-play with ChatGPT as therapists. As we did not limit our search to a certain time frame, our results identified actions and behaviors that may or may not be applicable to different periods of time.

Conclusion

This study examined the therapeutic purposes and engagement patterns of users who engaged with a genAI tool, ChatGPT, for mental health purposes, and explored factors contributing to its perceived attractiveness and limitations. We found that individuals creatively engaged with genAI and used it to address a range of mental health needs, which resonates with the importance of patient agency and the need for responsiveness in facilitating change in traditional psychotherapy. Many users were drawn both to its similarities with great therapists in emotional validation and constructive feedback and to its accessibility and coachability, which extend beyond human capabilities. The minimal expression of ethical concerns, the perception of AI as an objective and impartial entity, and the growing need for a therapeutic option that is emotionally available yet devoid of negative reactions and judgment highlight critical gaps in user understanding and expectations of mental health services. Such limited consideration of ethical concerns further suggests the need for AI literacy and mandatory disclosure protocols that clearly communicate privacy risks, data storage and management practices, and the potential biases and limitations of AI in mental health contexts. The idealized misconception of genAI as purely objective calls for educational efforts that help users understand how genAI systems can reflect biases, make errors, and provide inconsistent advice, without clear accountability and regulatory oversight. Finally, users’ desire for non-judgmental connection emphasizes the traditional importance of therapeutic relationships and highlights the need for policies that establish clear boundaries around genAI's role in mental health to protect vulnerable users, while still preserving the beneficial aspects of genAI support.

Footnotes

Ethical considerations

XL was involved in conceptualization, methodology, funding acquisition, investigation, project administration, resources, validation, supervision, writing – original draft, and writing – review and editing. SG was involved in software, resources, data curation, investigation, funding acquisition, and writing – review and editing. JLT contributed to methodology, supervision, and writing – review and editing. PB contributed to formal analysis, writing – original draft, and writing – review and editing. JW was involved in formal analysis and writing – review and editing. YX was involved in visualization, formal analysis, and writing – review and editing.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Whitnam Family Collaborative Fellowship from Santa Clara University.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.