Abstract

Objective

This study presents a pilot randomized controlled trial to assess the usability, feasibility, and initial efficacy of a mobile app-based relational artificial intelligence (AI) chatbot (Exerbot) intervention for increasing physical activity behavior.

Methods

The study was conducted over a 1-week period, during which participants were randomized to converse with either a baseline chatbot without relational capacity (control group) or a relational chatbot using social relational communication strategies. Objectively measured physical activity data were collected using smartphone pedometers.

Results

The study proved feasible, enrolling 36 participants and retaining 94% of them after 1 week. Daily engagement with the AI chatbot exceeded 88% in both groups. The control group showed a significant decrease in steps on the final day, whereas the group interacting with the relational chatbot maintained their step counts throughout the study period. Individuals who engaged with the relational chatbot also tended to report a stronger social bond with the chatbot than those in the control group, a marginally significant difference.

Conclusions

Leveraging AI chatbots and their relationship-building capabilities holds promise for developing cost-effective, accessible, and sustainable behavior change interventions. This approach may benefit individuals with limited access to conventional in-person behavior interventions.

Clinical trial registrations

ClinicalTrials.gov; NCT05794308; https://clinicaltrials.gov/ct2/show/NCT05794308.

Introduction

Physical activity promotion has faced challenges in achieving population-wide reach and long-term behavior maintenance due to the lack of access to and high cost of in-person counseling and interventions.1 Despite efforts to raise awareness and involve communities in physical activity programs, 80% of US adults currently fail to meet recommended physical activity guidelines, contributing to increased health risks and healthcare expenditures.2,3 The limited accessibility of in-person counseling and programs has created a need for cost-effective, scalable, and sustainable intervention alternatives.4–6

Recent advancements in artificial intelligence (AI), natural language processing, and machine learning have enabled AI chatbot-based physical activity interventions to emerge as a promising solution. AI chatbots are intelligent dialogue systems designed to simulate human communication through text, speech, or both. Chatbots can be incorporated into mobile applications, providing round-the-clock communication with users.7,8 Chatbots have the potential to offer cost-effective, accessible, sustainable, and personalized health interventions tailored to individual needs. 9

Research has suggested AI chatbots can provide dependable, humanlike interactions in healthcare settings6,10,11 and AI chatbots can promote lifestyle modifications, such as physical activity and healthy diets.12–14 For example, To and colleagues 14 found a machine learning-based chatbot integrated with Fitbit increased users’ average daily steps by 627 after 6 weeks of intervention.

Despite evidence suggesting AI chatbots’ potential efficacy, little attention has been paid to evaluating human perceptions toward AI chatbots in the context of health behavior interventions. 13 Specifically, whether human users can develop trust and positive relational perceptions toward AI systems is highly relevant to the acceptance and quality of AI chatbot interventions, which will gain increasing prominence in the next decade. Thus, it is crucial to investigate whether AI chatbots can elicit and establish positive relationships with users, as health outcomes often depend on long-term patient–provider relationships (e.g., counselors).15,16

Considering the limited research on the effectiveness of AI-chatbot-based physical activity interventions and human–chatbot relationships in healthcare contexts, this study presents a “relational AI chatbot,” named Exerbot, that uses relationship-building strategies to enhance an evidence-based physical activity intervention via a mobile application. This paper reports the feasibility, usability, and initial efficacy of a 1-week chatbot intervention for increasing objectively assessed physical activity.

Literature review

Artificial intelligence (AI) has emerged as an innovative and essential technology that reforms how we analyze information and improve decision-making. The recent advancements in AI and its applications have shown significant progress in healthcare. For instance, AI-driven chatbot-based interventions have been used as viable strategies for enhancing public health communication. AI chatbots are conversational agents that use AI and machine learning technologies to mimic human interactions through engaging in natural language interactions with users. 7 These chatbots offer cost-effective, accessible, sustainable, and personalized health interventions that are tailored to individual needs, 9 promoting positive health behavior change.12,13 Oh and colleagues 13 discovered that out of seven studies on physical activity outcomes, five demonstrated statistically significant improvements. For instance, in Maher et al., 9 participants conversing with an AI chatbot Paola while using a step tracker increased their physical activity by an average of 109.8 minutes per week at 12 weeks. Yet, while most studies report improvement in health behavior change in the short-term, or evaluate outcomes immediately before and after interventions, a systematic review by Han and colleagues 17 finds that there is limited evidence for the feasibility and acceptability of chatbots in promoting nutrition and physical activity behaviors, particularly regarding adherence. This highlights the challenge of maintaining behavioral changes over time and points to a need for further research on how chatbots can leverage behavioral theories and persuasive strategies to enhance sustained engagement.

Despite the potential benefits of AI chatbots, further challenges and limitations exist. For instance, previous studies18,19 discuss that the existing digital therapeutic interventions with didactic components have encountered several challenges, including relatively low adherence, unsustainability, and inflexibility. In addition, when it comes to the feasibility, acceptability, and usability of AI chatbots in various healthcare applications, findings are mixed. In a systematic review, Aggarwal and colleagues 12 found that evidence on the safety and feasibility of chatbots was limited, with only a small percentage of studies reporting on these metrics. In addition, the acceptability of chatbots was found to be less than ideal, with fewer than 50% of participants expressing satisfaction. 12 This aligns with previous systematic reviews that highlight the challenges in achieving high levels of user acceptance and engagement over time.20,21 The usability of AI chatbots also varied across studies, with some reporting positive experiences in terms of efficient support and content reliability, while others noted an ambiguity in the content, technical difficulties, such as limited responsiveness and failure to understand user inputs, and a need for improvement in quality of recommendation provided by AI chatbots for feasible implementation. 12

Moreover, only a few studies have investigated the specific design considerations that could improve relational qualities. For example, previous work has shown that digital empathic agents are perceived as more caring, sympathetic, and trustworthy than agents without empathic abilities, 22 and that making the agent more proactive, such as through small talk, seems to be welcomed by users and contributes to building close relationships.23,24 More rigorous research is needed to explore the development of AI-based chatbots that are designed to foster a sense of warmth, empathy, and personal connection with users. By creating chatbots that are not only functionally capable, but also emotionally and socially relational, researchers may be able to address the current limitations in usability and acceptability, improving the overall effectiveness of conversational AI systems in healthcare.

Therefore, the primary goal of this study is to examine the feasibility of AI chatbot-based physical activity counseling and whether users find it usable. Specifically, we explore the relational chatbot’s influence on behavioral engagement and usability perceptions. Research suggests that user engagement and satisfaction can be enhanced by incorporating humanlike qualities into computer systems or interfaces. 25 As a result, it is essential to investigate how a chatbot’s relational behaviors may affect user engagement outcomes when delivered via a mobile app, combined with the availability of behavioral data.

Additionally, in chatbot-based health interventions, chatbots’ relational behaviors can strengthen the alliance between chatbots and users, helping users adhere to the chatbot’s health advice. Cumulative evidence in clinical psychology, counseling, and coaching has acknowledged the positive impact of strong physician–patient relationships on psychotherapeutic outcomes. 26 When patients and physicians establish collaborative relationships, patients are more inclined to perceive treatment value and feel empowered toward behavior change and adherence.27–29 Physician–patient relationships, defined by mutual liking, trust, and belief in achieving desired outcomes, have been consistently linked to critical treatment outcomes like patient adherence.27,30–32

Based on this idea, previous research has incorporated physicians’ socio-emotional and relational communicative behaviors, such as empathy, into chatbots to promote human–chatbot relationships. 15 For example, studies have demonstrated the effectiveness of conversational agents’ verbal relational behaviors, such as social dialogue, 22 self-disclosure, 33 empathy, 34 meta-relational communication, 35 and humor, 15 in fostering positive relationships in health and well-being contexts. Chatbots employing relational behaviors, such as social support and self-disclosure, have been shown to be more likely to establish positive human–chatbot relationships such as therapeutic alliance, enhance continued engagement, and increase physical activity.36,37 Therefore, as a secondary objective, this study examines the initial efficacy of an AI chatbot-based intervention, focusing on the potential of the relational chatbot to enhance human–AI relationship perceptions as well as physical activity outcomes.

Methods

Chatbot (Exerbot) development and app design

The chatbot, named Exerbot, was developed using a hybrid approach that combined rule-based methods with a fine-tuned language model. Its structure consisted of two main components: a topic proposer and a dynamic response generator. The topic proposer used predefined templates designed for physical activity intervention. The dynamic response generator comprised a generation-based model and a retrieval-based question handler. The generation-based model addressed users’ general inquiries and acknowledged their responses. To generate plausible and natural responses, it was built on a conversational generation model, Blender, 38 which is trained on large amounts of human–human conversation data and tuned to learn several conversational skills from humans. To handle topics relevant to physical activity intervention, the model was further fine-tuned using transcripts from 105 in-person physical activity counseling sessions collected in the mobile phone-based physical activity education program (mPED) study.39–41 The question handler was a retrieval-based question-answering module built on a custom database to address the most frequently asked questions about physical activity. During each dialog turn, the chatbot generated a response using the dynamic response generator and concatenated it with the template from the topic proposer, ensuring both contextual relevance and adherence to physical activity intervention strategies (see reference 42 for a detailed description of the dataset). The physical activity interventions in the topic proposer were designed based on Social Cognitive Theory. 43
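The turn-level assembly described above can be sketched roughly as follows. This is a minimal illustration under stated assumptions, not the study's implementation: the function names (`propose_topic`, `answer_faq`, `generate_reply`, `respond`), the sample templates, and the FAQ entry are hypothetical, the generation model is replaced by a stub, and trying the question handler before the generation model is an assumed ordering that the text does not specify.

```python
from typing import Optional

# Hypothetical day-indexed templates standing in for the topic proposer.
TOPIC_TEMPLATES = {
    1: "Today, let's talk about the benefits of physical activity.",
    2: "What gets in the way of being active for you?",
}

# Hypothetical FAQ store standing in for the retrieval-based question handler.
FAQ = {
    "how many steps": "A common long-term target is 10,000 steps per day.",
}


def propose_topic(day: int) -> str:
    """Rule-based component: return the predefined template for the day."""
    return TOPIC_TEMPLATES.get(day, "Let's review your progress so far.")


def answer_faq(user_message: str) -> Optional[str]:
    """Retrieval-based component: return a stored answer on a keyword match."""
    text = user_message.lower()
    for key, answer in FAQ.items():
        if key in text:
            return answer
    return None


def generate_reply(user_message: str) -> str:
    """Stub standing in for the fine-tuned generation-based model."""
    return "Thanks for sharing that."


def respond(user_message: str, day: int) -> str:
    """Build one dialog turn: dynamic response first, then the topic template."""
    dynamic = answer_faq(user_message) or generate_reply(user_message)
    return f"{dynamic} {propose_topic(day)}"
```

Keeping the template as a separate, deterministic component means the intervention content is delivered every turn regardless of what the generative component produces.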

The Exerbot app was then designed for research purposes to offer educational counseling sessions that encourage individuals to enhance and sustain their physical activity levels. As illustrated in Figure 1, the Exerbot app presents an instruction page (left) that provides general information about the study, allowing participants to understand and explore the app’s features. The chatroom interface (middle) provides a space for participants to engage in daily conversations with Exerbot. Lastly, the app features a dashboard displaying daily and weekly step counts, as shown in Figure 1 (right). The app interface and chatbot were prototyped by researchers, with a professional app developer handling the front-end and back-end programming, as well as the deployment of the app. The Exerbot app was made available on Apple’s iOS to reach iPhone users.

Figure 1. Exerbot app: Home page and study overview (left), chatroom with Exerbot (middle), and daily and weekly step report (right).

Upon installation of the app, Exerbot greets the participant and commences the initial educational session. Over the course of 7 days, the app conducts daily sessions on physical activity while tracking the user's progress via the smartphone's built-in pedometer. Exerbot offers visualizations of the past 7 days’ step counts and encourages participants to consistently monitor their progress through daily reminders. Each time Exerbot initiates a conversation or sends a reminder, a push notification appears on the participant's smartphone status bar and lock screen (e.g., “Time for a quick check-in! Go to Stats tab to track your daily step progress”), prompting them to engage with Exerbot or assess their physical activity status.

Study design and sample

A pilot randomized controlled trial (RCT) with two groups was conducted. Data were collected via the Exerbot app on participants’ smartphones and from pre- and postsurveys. Eligibility criteria were: (1) being 18 years or older, (2) being able to read and speak English, (3) residing in the United States, (4) using an iPhone, and (5) being inactive (i.e., not reaching 10,000 daily steps). Eligible participants who completed the presurvey were invited to the app-based intervention. They received information about study goals and procedures and, if willing to participate, were given a study link to enroll. After enrollment, they were instructed to download and install the Exerbot app. Upon signing up, participants enabled pedometer access and allowed push notifications. Participants were randomly allocated to either the relational or the nonrelational (control) chatbot version of the app. Researchers and participants were blinded to treatment allocation. Exerbot initiated the first educational session, and participants viewed a graph of their past 7-day step counts. The intervention lasted 7 days, with a postsurvey on the final day. Daily conversations began at 10 AM, and progress reminders were sent at 8 PM.

This study received approval from the authors’ Institutional Review Board (IRB; approval number: 2033031-1), ensuring adherence to ethical guidelines and standards in academic research. Participants provided electronic informed consent before they started the eligibility screening survey. They were presented with a comprehensive consent page detailing the study's purpose, procedures, potential risks and benefits, and the voluntary nature of participation. Consent was obtained by requiring participants to actively click a button indicating their agreement after they read the full consent form and before they proceeded to the eligibility screening survey. This process ensured informed and voluntary participation while maintaining participant anonymity, as no personally identifiable information was collected.

Intervention components and randomization

Daily conversation modules

On day 1, Exerbot gave an overview of the study, talked about the benefits of physical activity, and set a personalized physical activity goal. The short-term goal was a 20% increase in step counts over the participant’s average for the previous 7 days, which was accessed via the smartphone’s pedometer activity history upon app installation. The long-term goal was to maintain 10,000 steps per day, which approximates the World Health Organization’s physical activity recommendations. 44 On day 2, Exerbot identified the participant’s barriers to physical activity and ways to overcome them. On day 3, Exerbot talked about establishing a physical activity routine and worked with participants to create a personalized one. On day 4, Exerbot talked about social support in physical activity and asked participants to identify people who could check in and offer support. On day 5, Exerbot provided information on how to prevent relapse and stay motivated. On day 6, Exerbot provided education sessions about diet and weight maintenance. Lastly, on day 7, Exerbot led a discussion about safety. In the closing statement, Exerbot asked participants to complete the postsurvey.
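The short-term goal rule above amounts to simple arithmetic, sketched here in Python. The function names, the rounding to whole steps, and the use of "greater than or equal to" for goal achievement are assumptions made for illustration; the step values in the usage note are invented.

```python
from statistics import mean


def short_term_goal(prior_week_steps: list[int]) -> int:
    """Return a daily step goal 20% above the mean of the previous 7 days."""
    if len(prior_week_steps) != 7:
        raise ValueError("expected exactly 7 daily step counts")
    # Rounding to a whole number of steps is an assumption of this sketch.
    return round(mean(prior_week_steps) * 1.2)


def days_goal_met(daily_steps: list[int], goal: int) -> int:
    """Count the days on which the step count reached the goal."""
    return sum(steps >= goal for steps in daily_steps)
```

For a participant averaging 4,000 steps per day in the prior week, `short_term_goal` returns 4,800, and `days_goal_met` then counts how many intervention days reached that value.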

Manipulation of relational and nonrelational chatbot conditions

Upon signing in, participants were allocated to one of two chatbot conditions: relational or nonrelational (control). In the relational chatbot condition, five relational cues were incorporated into the daily chat sessions provided by Exerbot. Conversely, the nonrelational (control) chatbot condition involved daily educational sessions without any inclusion of relational cues. Specific expressions for each relational cue were extracted from mPED trial counseling sessions as well as dialogue examples provided in the previous literature.15,36,37,45

The five relational cues are described as follows. Social dialogue included informal greetings (e.g., “Good morning! How are you? Are you ready to get started for today's chat session?”) and farewells (e.g., “Keep up the good work! Talk to you again tomorrow!”), which were designed according to the day of the intervention to provide feelings of continuity. Self-disclosure included responses that disclosed information about the chatbot's experiences and corresponding feelings related to the daily educational content (e.g., Social support-related content; “For example, I have a friend named BodBot who offers me support and I'm grateful for that”). Empathy included emotional responses such as “I understand how frustrating it is to…” when the user disclosed information relevant to potential barriers or struggles in physical activity. Meta-relational communication included expressions to check on the state of the conversation (e.g., “How do you feel our conversation is going so far?”). Lastly, humor included exercise-related jokes (e.g., “Do you know what is a sloth's favorite form of exercise? Running late!”). To ensure agent variability, multiple expressions for each relational cue were implemented in the chatbot.

Outcome measures

To evaluate feasibility and usability as our primary objective, (1) retention, (2) chatbot usability, and (3) user engagement were measured. Retention was measured by the number of participants who completed the postsurvey compared with those who finished the baseline questionnaire. Chatbot usability and user satisfaction were measured using technology-based intervention metrics developed in previous studies.46–48 Chatbot usability was measured by a seven-item scale (M = 3.97, SD = 0.51, α = 0.70) (e.g., “Chatbot responses were useful, appropriate, and informative.”). User satisfaction was measured with a single item (“In general, are you satisfied with the chatbot?”) rated on a five-point scale ranging from “very dissatisfied” to “very satisfied” (M = 3.71, SD = 0.84).

User engagement was measured through objective measures such as total days of completed chat sessions and participants’ daily average message length (i.e., word count). Additionally, we investigated the level of cognitive engagement to understand user interaction with the chatbot. Specifically, we explored how participants’ language use varied when interacting with two different types of Exerbots. Linguistic Inquiry and Word Count (LIWC) was used to quantify various dimensions of language use and analyze these differences. 49 Specifically, we focused on the use of pronouns, prepositions, auxiliary verbs, adverbs, cognitive processing words, causations, motion words, and expressive punctuations, such as exclamation marks and question marks.

Individuals’ writing styles and syntactic choices can reveal the depth and complexity of their cognitive processes. According to Tausczik and Pennebaker, 50 complex language use is characterized by prepositions, cognitive mechanisms, and words longer than six letters, which capture cognitive depth. For example, prepositions allow for more concrete information about a topic, enhancing clarity. Similarly, the use of causal words (such as “how,” “because,” “why”) and insight words (such as “think,” “know,” “feel”), which are subcategories of cognitive mechanisms, demonstrates the active process of reappraisal. 50 Furthermore, nouns and modifiers such as adverbs and adjectives are used more often to form sentences with high intensity, leading to clearer message perception. 51 As such, the use of complex sentences can indicate the communicator’s willingness to interact and engage with the other entity. In sum, analyzing various dimensions of users’ language use can reveal how different chatbot interaction styles influence users’ language output, thereby providing insights into users’ preferences and tendencies.
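Dictionary-based word counting of this kind can be illustrated with a short Python sketch. It does not reproduce LIWC: the word lists below are tiny hypothetical stand-ins built from the example words in the text, the `category_rates` function is invented for this illustration, and reporting each category as a percentage of total words is assumed here as the scoring convention.

```python
import re

# Tiny hypothetical word lists; real LIWC dictionaries are far larger.
CATEGORIES = {
    "causation": {"because", "why", "how"},
    "insight": {"think", "know", "feel"},
    "preposition": {"in", "on", "at", "to", "with", "for"},
}


def category_rates(message: str) -> dict[str, float]:
    """Return each category's share of total words, as a percentage."""
    words = re.findall(r"[a-z']+", message.lower())
    total = len(words)
    rates = {}
    for name, vocab in CATEGORIES.items():
        hits = sum(word in vocab for word in words)
        rates[name] = 100.0 * hits / total if total else 0.0
    return rates
```

For the ten-word message "I think I walk to work because I feel better", the sketch scores insight at 20%, and causation and prepositions at 10% each. Normalizing by message length keeps longer messages from scoring higher simply because they contain more words.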

To measure human–chatbot relationships as our secondary objective, therapeutic alliance (TA) was used for relationship evaluation. TA consists of three subcomponents: bond, goal, and task. A strong TA in human–chatbot interaction will reflect a well-functioning relationship where the human user has positive attachments toward the chatbot, endorses the mutual goal, and accepts the task.15,52,53 Example items for bond, goal, and task were “I believe the chatbot likes me,” “the chatbot and I are working towards mutually agreed upon goals,” and “what I am doing in this physical activity conversation gives me new ways of looking at my problem,” respectively (combined; M = 3.99, SD = 0.52, α = 0.79).

Lastly, physical activity outcomes were assessed through both objective and subjective metrics:54–56 (1) daily and weekly step counts obtained from participants’ smartphones, (2) daily and weekly step goal achievement (i.e., 20% increase from baseline), and (3) poststudy physical activity intention, measured by a three-item scale (M = 3.86, SD = 0.97, α = 0.88).

Statistical analyses

Feasibility, usability, and participants’ sociodemographic characteristics were analyzed descriptively, using means, standard deviations, frequencies, and percentages. To evaluate the differential effects of relational and nonrelational (control) chatbots on therapeutic alliance (TA) and physical activity outcomes such as step goal achievements, independent t-tests were conducted.
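As a rough illustration of the group comparison, the sketch below computes an independent-samples t statistic with the Welch (unequal-variance) correction. The fractional degrees of freedom reported in the Results (e.g., t(30.87)) suggest such a correction was applied, but the text does not state this, so it is an assumption; the function name `welch_t` is hypothetical, and the data in the usage note are invented.

```python
from math import sqrt
from statistics import mean, variance


def welch_t(a: list[float], b: list[float]) -> tuple[float, float]:
    """Return (t statistic, Welch-Satterthwaite degrees of freedom)."""
    # Squared standard error contributed by each group (sample variance / n).
    va = variance(a) / len(a)
    vb = variance(b) / len(b)
    t = (mean(a) - mean(b)) / sqrt(va + vb)
    df = (va + vb) ** 2 / (va ** 2 / (len(a) - 1) + vb ** 2 / (len(b) - 1))
    return t, df
```

Calling `welch_t([1, 2, 3, 4, 5], [2, 4, 6, 8, 10])` gives a t statistic of about -1.90 with about 5.88 degrees of freedom. Obtaining a P value additionally requires the t distribution, which a statistics package would supply.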

In addition, to assess changes in physical activity during the intervention period, step count data were collected using pedometers over a 14-day period (7 days preintervention and 7 days during the intervention). The average of the 7 days preceding the intervention was calculated to establish a baseline step count. To analyze the data, we employed a linear mixed-effects model with step count as the dependent variable, observed at baseline and at each of the seven subsequent daily timepoints. The model included participants as random intercepts to account for within-participant correlations of repeated measures. Fixed effects comprised chatbot conditions, seven daily time indicators, and chatbot condition-by-time indicator interactions. The model was fitted using restricted maximum likelihood (REML) estimation with the lme4 package in R, with a total of 285 observations from 36 participants included in the model. All statistical analyses were conducted using SPSS version 23.0 and R software. Statistical significance was defined as P < .05 for all tests.
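Written out, the model described in this paragraph might take the following form. The notation is introduced here for illustration and does not come from the original analysis: $y_{it}$ is the step count of participant $i$ at timepoint $t$ (with $t = 0$ for the baseline average and $t = 1, \dots, 7$ for the intervention days), $C_i$ equals 1 for the relational chatbot condition and 0 for control, and $D_{kt}$ equals 1 when $t = k$ and 0 otherwise.

```latex
y_{it} = \beta_0 + \beta_1 C_i
       + \sum_{k=1}^{7} \gamma_k D_{kt}
       + \sum_{k=1}^{7} \delta_k \, C_i D_{kt}
       + u_i + \varepsilon_{it},
\qquad
u_i \sim \mathcal{N}(0, \sigma_u^2), \quad
\varepsilon_{it} \sim \mathcal{N}(0, \sigma^2)
```

Under this sketch, the random intercept $u_i$ absorbs stable between-participant differences in step counts, and each $\delta_k$ captures how the day-$k$ change from baseline differs between the relational and control conditions.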

Results

Recruitment and retention

As summarized in Figure 2, initially, 222 participants signed up for the study. They were informed that this was a two-part study, consisting of an eligibility survey and an app-based intervention. After eligibility screening, 165 (74.3%) were deemed eligible and invited to join the app-based intervention. Out of 38 who signed up to engage in the app-based intervention, two did not register in the mobile app, resulting in 36 enrolled participants. Twenty-one were randomly assigned to the relational chatbot condition, while 15 were assigned to the nonrelational (control) chatbot condition. Retention after the 1-week intervention period was 94.4%.

Figure 2. CONSORT flow diagram of study participants.

Participant characteristics

On average, participants were aged 21.0 years (SD = 6.27), and 75.0% were women. Of the sample, 58.3% were Asian or Pacific Islander, 19.4% were Hispanic, 8.3% were White, and 13.9% were mixed race. The majority of participants (72.2%) had used chatbots before. Mean baseline daily steps were 4059.40 (SD = 3838.43).

Primary outcomes: engagement and usability

Across the 7-day intervention, participants in the relational chatbot condition completed daily chat sessions on an average of 6.19 days (SD = 1.17), whereas participants in the control condition completed them on an average of 6.73 days (SD = 0.46). Both conditions demonstrated over 88% daily engagement, indicating that the intervention was feasible. Participants in the relational chatbot condition had a total average word count of 192.90 (SD = 109.01), while those in the control condition had a total average word count of 138.73; this difference was marginally significant, t(30.87) = 1.97, P = .058. This suggests that participants who interacted with a chatbot engaging in relational behaviors tended to respond with lengthier messages.
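The 88% figure can be reproduced from the reported means, assuming daily engagement is defined as mean completed days divided by the 7 intervention days (the text does not give the formula, and the function name below is hypothetical).

```python
def engagement_rate(mean_days_completed: float, total_days: int = 7) -> float:
    """Return completed chat days as a percentage of intervention days."""
    return 100.0 * mean_days_completed / total_days


# Reported mean completed days per condition.
relational = engagement_rate(6.19)
control = engagement_rate(6.73)
print(f"relational: {relational:.1f}%, control: {control:.1f}%")
```

Under that assumption the rates are about 88.4% for the relational condition and 96.1% for the control condition, consistent with "over 88%" in both groups.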

Overall, participants deemed the Exerbot to be usable (M = 3.97, SD = 0.51), and were satisfied with the system (M = 3.71, SD = 0.84), but were relatively reluctant to continue using the system after the intervention period (M = 2.47, SD = 0.93). Usability, satisfaction, and continued engagement intention did not differ by the two conditions (P > .05).

As presented in Table 1, findings revealed significant differences between the two chatbot conditions. Participants who interacted with the relational chatbot were more likely to use first-person pronouns (P = .030), prepositions (P = .039), auxiliary verbs (P = .005), adverbs (P = .002), and question marks (P = .013) compared to those who interacted with the nonrelational (control) chatbot. They also used more words reflecting cognitive processes (P = .022), insight (P = .035), and causation (P < .001). Although only marginally significant (P = .058), participants in the relational condition showed a higher total word count as well as more words per sentence on average. In addition, they used more clout (i.e., language of leadership, status) and authentic (i.e., perceived honesty, genuineness) words.

Table 1. Comparison of linguistic characteristics of user responses between relational and nonrelational (control) chatbot conditions.

Note. #P < .1, *P < .05, **P < .01, ***P < .001.

In contrast, participants interacting with the nonrelational (control) chatbot exhibited a higher frequency of negations (P < .001) and assent (P = .043) compared to those who interacted with the relational chatbot. Although the differences were only marginal (P < .1), participants in the control condition tended to use more affect-based words, express positive emotions, and employ informal language.

Secondary outcomes: human–chatbot relationship and health behavior

Regarding human–chatbot relationship outcomes of the relational and nonrelational (control) chatbot conditions, there was no significant difference in the overall therapeutic alliance. However, a marginally significant difference emerged in the “bond” dimension (P = .081). Participants interacting with the relational chatbot perceived a stronger social and emotional bond with the chatbot (M = 4.49, SD = 0.49) compared to those engaging with the control chatbot (M = 4.18, SD = 1.09).

Throughout the intervention period, participants increased their steps, with the relational chatbot condition showing an average increase of 392.62 steps from a baseline of 3882.61 (SD = 1740.50), and the nonrelational (control) chatbot condition an increase of 494.01 steps from a baseline of 4306.93 (SD = 2306.64). Despite the control chatbot condition showing a greater increase in steps than the relational chatbot condition, this difference was not found to be statistically significant. Furthermore, participants in the relational chatbot condition, on average, achieved their step goals (i.e., a 20% increase from baseline steps) on 2.7 days per week, while those in the control condition met their step goals on 3.14 days per week. A marginal effect of the relational chatbot condition on participants’ self-reported PA intention was observed. Participants interacting with the relational chatbot reported higher levels of PA intention (M = 4.10, SD = 0.97) compared to those engaging with the control chatbot (M = 3.52, SD = 0.89), P = .088.

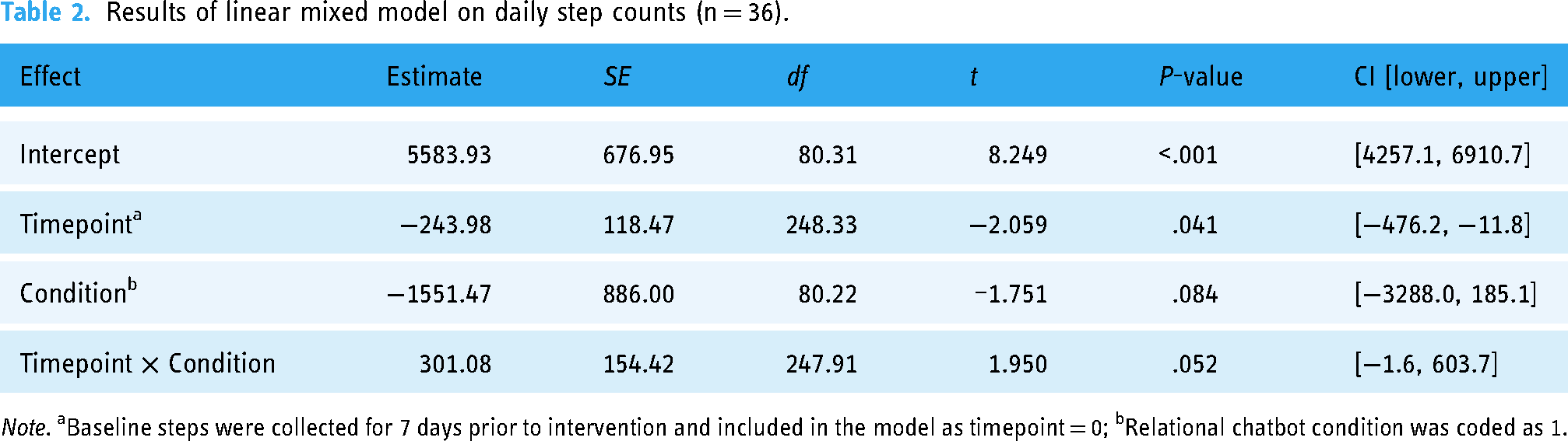

To capture a more nuanced trend across the time points on daily step counts, a linear mixed-effects model was conducted to examine the impact of timepoint and relational condition on the number of steps taken, with a random intercept included for subjects to account for individual variability. As presented in Table 2, the analysis revealed a significant main effect of timepoint (estimate = −243.98, SE = 118.47, t(248.33) = −2.059, P = .041, 95% CI: [−476.2, −11.8]), indicating that the number of steps decreased over time. The main effect of the relational condition was marginally significant (estimate = −1551.47, SE = 886.00, t(80.22) = −1.751, P = .084, 95% CI: [−3288.0, 185.1]), which indicated that those in the relational condition took fewer steps compared to those in the control condition. Interestingly, there was a marginally significant interaction between timepoint and relational condition (estimate = 301.08, SE = 154.42, t(247.91) = 1.950, P = .052, 95% CI: [−1.6, 603.7]). This suggests that the decline in steps over time was less pronounced in the relational condition, highlighting a potential mitigating effect of the relational chatbot on the reduction in physical activity levels over time.
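The interaction estimate is easiest to read as a difference in daily slopes. The sketch below combines the fixed-effect estimates reported above, assuming timepoint enters the model as a linear term (as the single timepoint estimate implies) and that the relational condition is coded 1; the constant and function names are hypothetical.

```python
# Fixed-effect estimates reported in the paragraph above (steps per timepoint).
TIMEPOINT_EFFECT = -243.98
INTERACTION_EFFECT = 301.08


def daily_slope(relational: int) -> float:
    """Model-implied change in steps per timepoint for a condition (0 or 1)."""
    return TIMEPOINT_EFFECT + INTERACTION_EFFECT * relational


print(f"control slope: {daily_slope(0):.2f} steps per timepoint")
print(f"relational slope: {daily_slope(1):.2f} steps per timepoint")
```

Under these assumptions the control condition loses about 244 steps per timepoint, while the relational condition's implied slope is about +57 steps per timepoint, which is the sense in which the decline was less pronounced. Because the interaction was only marginally significant, the relational slope should be read as a point estimate rather than as evidence of an increase.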

Table 2. Results of linear mixed model on daily step counts (n = 36).

Note. a: Baseline steps were collected for 7 days prior to intervention and included in the model as timepoint = 0; b: Relational chatbot condition was coded as 1.

Figure 3 further showcases the changes in daily steps throughout the study period. For the initial six days, there was no significant difference in daily step outcomes between the relational and nonrelational (control) chatbot conditions; in fact, participants in the nonrelational (control) chatbot condition exhibited higher daily steps. Intriguingly, on the final day of the intervention, a significant decrease in steps was observed in the nonrelational (control) chatbot condition.

Figure 3. Mean daily step count and 95% confidence intervals by days of the study.

Adverse events

No adverse events related to study participation were experienced.

Discussion

Principal findings

This study investigated the feasibility, usability, and efficacy of an AI-powered relational chatbot designed to promote physical activity. In the study, a high retention rate of 94.4% was achieved, with only 2 out of 36 participants not completing the postsurvey. User engagement, measured by the daily chat completion rate, was high for both conditions, suggesting good feasibility outcomes. However, considering that comparable mHealth studies employing chatbots have reported retention rates of approximately 90% over 12-week study periods,9,57 it is crucial for future research to incorporate strategies that maintain participant engagement throughout the study. One possible strategy to increase long-term engagement is to personalize interactions with users. For example, just-in-time adaptive intervention (JITAI) allows for combining real-time data from built-in pedometers and other smartphone sensors to examine specific situations in which participants are most likely to react positively to notifications and tailor the timing, content, and frequency of the intervention to each person.55,58
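As a rough illustration of the JITAI idea described above, the following sketch shows one way a rule-based decision could use pedometer data and time of day to decide whether to send a prompt. Everything here is hypothetical: the `Context` fields, the thresholds, and the `should_prompt` function are assumptions made for illustration and do not describe Exerbot or any published JITAI.

```python
from dataclasses import dataclass


@dataclass
class Context:
    hour: int                # local hour of day, 0-23
    steps_last_hour: int     # steps recorded by the phone pedometer in the past hour
    prompts_sent_today: int  # prompts already delivered today


def should_prompt(
    ctx: Context,
    *,
    quiet_before: int = 8,           # hypothetical: no prompts before 08:00
    quiet_after: int = 21,           # hypothetical: no prompts from 21:00 onward
    sedentary_threshold: int = 250,  # hypothetical: fewer steps counts as sedentary
    daily_cap: int = 3,              # hypothetical: limit on prompts per day
) -> bool:
    """Return True when a (hypothetical) activity prompt should be sent now."""
    if not (quiet_before <= ctx.hour < quiet_after):
        return False  # respect quiet hours
    if ctx.prompts_sent_today >= daily_cap:
        return False  # avoid notification fatigue
    return ctx.steps_last_hour < sedentary_threshold  # prompt only when sedentary


print(should_prompt(Context(hour=14, steps_last_hour=100, prompts_sent_today=0)))
```

In a real JITAI, the fixed thresholds would typically be replaced by person-specific rules learned from each participant's response history; the fixed values here only show the structure of the decision.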

As for the linguistic characteristics of users’ responses to Exerbot, participants in the relational chatbot condition produced higher total word counts and made greater use of linguistic markers such as first-person pronouns, prepositions, auxiliary verbs, adverbs, and question marks, which can indicate a personal and involved style of communication. This suggests that relational chatbots can encourage a sense of personalization and enhance user engagement, promoting richer and more dynamic interaction with the chatbot. These findings align with established principles regarding the value of building relational interactions to optimize user experiences. For instance, Bickmore and Picard 15 highlighted how the incorporation of relational elements, such as the use of conversational cues and personalized language, can promote a stronger sense of engagement and connection between users and conversational agents. Moreover, the greater usage of cognition-related words, such as those reflecting cognitive processes, insights, and causation, by participants in the relational chatbot condition indicates a more analytical and explanatory style of communication. This suggests that the relational chatbot prompted users to engage in a more complex, reflective, and dynamic conversational style compared to the nonrelational chatbot.

By contrast, participants in the nonrelational chatbot condition used more words indicating negation and agreement than those interacting with the relational chatbot. Considering their overall word usage was lower than in the relational chatbot condition, this suggests a generally more negative or resistant conversational tone, while simultaneously showing a tendency to agree with the chatbot without further elaboration, reflecting a terse conversational style.

In regard to establishing human–chatbot relationships, a marginal difference was observed in the “bond” dimension of the therapeutic alliance between the chatbot and participants, with individuals interacting with the relational chatbot reporting a stronger social bond than those in the control chatbot condition. This supports the notion that chatbots hold value in healthcare contexts because they can emulate physicians’ relational communicative behaviors, which have been linked to stronger human–chatbot relationships23,34 and significant health outcomes 37 in previous studies.

Over the 7-day intervention period, participants using the relational chatbot increased their step count by 2748 steps across the week, while those in the control chatbot condition increased theirs by 3458 steps. Assuming that it takes roughly 10 min to take 1000 steps, 9 this increase in steps translates to an additional 27 to 34 min of physical activity accumulated over the course of the week. Taking into account that an increase of 1000 steps per day has been associated with a reduced risk of all-cause mortality in adults, 59 the observed outcomes of this 1-week intervention can be considered promising.
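The conversion in the paragraph above can be reproduced with a few lines of arithmetic. This is a minimal sketch that assumes the 10 min per 1000 steps rule of thumb cited in the text; the per-day figure additionally assumes the weekly increase is spread evenly over the 7 intervention days, which the text does not state.

```python
MINUTES_PER_1000_STEPS = 10  # rule of thumb cited in the text
INTERVENTION_DAYS = 7


def steps_to_minutes(steps: float) -> float:
    """Convert a step count into approximate minutes of activity."""
    return steps * MINUTES_PER_1000_STEPS / 1000


# Weekly step increases as reported in the text.
weekly_increase = {"relational": 2748, "control": 3458}

for condition, steps in weekly_increase.items():
    minutes = steps_to_minutes(steps)
    per_day = steps / INTERVENTION_DAYS  # assumption: increase spread evenly over the week
    print(f"{condition}: {minutes:.1f} min/week, about {per_day:.0f} steps/day")
```

This gives about 27.5 and 34.6 min per week for the relational and control conditions, corresponding to the 27 to 34 min range quoted above, and roughly 393 and 494 additional steps per day under the even-spread assumption.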

However, these findings should be interpreted with caution. While both conditions showed an overall increase in steps from baseline to the intervention period, the linear mixed model indicated a decreasing trend in step counts over time when accounting for the time factor. As shown in Figure 3, during the 7-day intervention, participants initially increased their activity in the first few days but showed a gradual decline. This may suggest that the initial increase in physical activity was driven by the novelty of interacting with an AI chatbot, and the similarity in improvement between groups suggests a potential digital placebo effect linked to user expectations of digital devices, 60 where the mere act of interacting with any chatbot, regardless of its relational capabilities, may have motivated increased activity.

A notable finding was that this decline in step counts was more pronounced in the nonrelational (control) condition, with a steep drop observed on the final day. In contrast, participants in the relational chatbot condition maintained their step counts. This may suggest that the relational chatbot helped mitigate the postnovelty decline in physical activity levels and sustain participants’ engagement.

In addition, although the nonrelational (control) group engaged more consistently over the days, a closer analysis of the qualitative aspects of participants’ conversations and word choices reveals important differences in processing mechanisms. The relational cues (social dialogue, self-disclosure, empathy, meta-relational communication, and humor) may have triggered motivation by creating a more supportive and relatable environment. For example, participants interacting with the relational chatbot showed greater use of various language features that are indicative of a more thoughtful and reflective engagement style. Consequently, these sustained interactions may have provided positive reinforcement for maintaining physical activity over time.

In contrast, those interacting with the nonrelational (control) chatbot used more terse negations and assent words, indicative of a more surface-level interaction and suggesting that these participants may have approached the interaction with less depth or engagement. As such, while participants in the nonrelational (control) condition showed higher step counts over the first six days, the pattern was less sustainable and ended in a decline in step counts on the final day. This may indicate that participants in the nonrelational (control) condition perceived physical activity as more of a “task,” thereby losing interest as it neared completion. This contrast supports our interpretation that the relational chatbot may promote sustained engagement through relationship-building, rather than merely task completion.

Therefore, future research should consider these key areas for improvement. First, because novelty effects often diminish over time, longer-duration studies are needed to determine if the observed effects persist beyond the initial novelty period. 61 Second, to clearly isolate the impact of the chatbot's relational features, studies should consider incorporating an additional comparator, such as standard printed materials or no intervention, 62 to provide a clearer baseline for assessing the true impact of AI chatbot interventions on sustained physical activity levels. Lastly, it would be informative to triangulate multiple indicators of sustained engagement. Beyond assessing physical activity levels, analyzing the quantity and quality of exchanged messages, the depth of participants’ responses, and their emotional tone could provide a more comprehensive understanding of participants’ engagement and interest over time.

Strengths and limitations

This study employed both objective and subjective measures of physical activity, thereby facilitating a comprehensive and accurate evaluation of the intervention's effectiveness. 63 Additionally, drawing upon existing literature on human–chatbot interactions in healthcare settings, this study introduced an AI chatbot capable of exhibiting more humanlike, relationship-building behaviors to enhance health outcomes. A strength of this study lies in its focus on the importance of relational components in AI chatbot-based health interventions. Unlike much of the existing research that primarily emphasizes quantitative outcomes, this study provides qualitative insights into how relational chatbots influence user engagement and conversational depth. The findings highlight that relational components of the chatbots are not merely supplementary but integral to fostering a sustained health engagement process and adherence. Nonetheless, this study presents certain limitations.

First, the sample was relatively small (n = 36) and consisted predominantly of college students, who generally represent a younger and more active population. This homogeneity in our sample may limit our ability to draw conclusions about the chatbot's usability and potential effectiveness across diverse age groups, activity levels, and socioeconomic backgrounds. The focus on college students may have introduced bias in regard to technology literacy, daily schedules, and motivations for physical activity, which may not be representative of the general population. For future research, it would be beneficial to expand the study to include a more diverse sample. Particularly, this should encompass participants with varying levels of digital literacy, ranging from those experienced with AI chatbots to individuals with low technological proficiency or even technology-resistant attitudes. Such an approach would significantly enhance the study's external validity, providing insights into the chatbot's acceptance and usability across a broader range of potential users.

In addition, the small sample size and relatively short intervention period (7 days) limited our ability to detect significant differences between intervention conditions and further examine the role of human–chatbot relationships on physical activity outcomes. To address this, future studies should employ a larger-scale design with an extended intervention period. Increasing the sample size would enhance statistical power, enabling detection of meaningful differences between conditions and allowing for mediation analyses. For instance, extending the intervention to 3 months with an additional 3-month follow-up period would allow for observation of both immediate effects and maintenance 64 of physical activity levels. These changes would provide more robust evidence of the chatbot's effectiveness and insights into the sustainability of AI-driven physical activity interventions.

Lastly, while pedometer data provide objective estimates of step counts, they do not capture all forms of daily physical activity. Future research should incorporate a more comprehensive approach to measuring physical activity, combining self-report questionnaires and/or diaries with devices such as accelerometers and heart rate monitors. 65 This multi-method approach would provide a more complete picture of participants’ activity levels and patterns than relying on a single measure.

Ethical considerations

Chatbots offer significant potential in healthcare and behavior change by providing support to vulnerable populations and tackling challenges related to the high costs of healthcare and behavioral therapy. Yet, they can also bring about new challenges and potential long-term consequences for individuals engaging with these services in healthcare and behavior change contexts. For instance, when a chatbot mimics a human therapist, individuals may develop certain expectations, potentially leading to dependency or blind trust, and anticipating more assistance than the chatbot is capable of providing. 66 Establishing relationships with artificial agents can lead to social and health benefits. 67 However, previous research has documented cases of emotional dependency that may result in addiction and negatively impact individuals’ real-life intimate relationships.68–70 Therefore, researchers need to find a balance in these interactions to minimize potential adverse effects arising from excessive attachment or blind trust.

Conclusions

The rapid advancement of AI chatbot technologies, particularly in relationship-building capacities, offers potentially promising applications in complementing human efforts to promote physical activity. While our findings are suggestive rather than definitive, the present study provides initial evidence that chatbots equipped with relational capabilities may have the potential to effectively motivate individuals to engage in physical activity. However, it should be noted that AI chatbots are not intended to replace human healthcare providers, but rather to complement and extend their efforts, potentially increasing the reach and frequency of interventions. Future research employing larger samples could yield more robust statistical power and further insights into user outcomes. Additionally, it would be beneficial for future studies to incorporate a control condition utilizing an alternative medium (e.g., a website) to establish a more natural health information-providing setting, facilitating a comparison of the effectiveness of chatbots versus other methods in promoting physical activity.

Supplemental Material

Supplemental material, sj-doc-1-dhj-10.1177_20552076251324445 for Enhancing physical activity through a relational artificial intelligence chatbot: A feasibility and usability study by Yoo Jung Oh, Kai-Hui Liang, Diane Dagyong Kim, Xuanming Zhang, Zhou Yu, Yoshimi Fukuoka and Jingwen Zhang in DIGITAL HEALTH

Supplemental material, sj-pdf-2-dhj-10.1177_20552076251324445 for Enhancing physical activity through a relational artificial intelligence chatbot: A feasibility and usability study by Yoo Jung Oh, Kai-Hui Liang, Diane Dagyong Kim, Xuanming Zhang, Zhou Yu, Yoshimi Fukuoka and Jingwen Zhang in DIGITAL HEALTH

Footnotes

Contributorship

The authors confirm their contribution to the paper as follows: YJO and JZ contributed to study conception and design; YJO, K-HL, DDK, and XZ contributed to data collection; YJO, DDK, and JZ contributed to analysis and interpretation of results; YJO, K-HL, DDK, XZ, ZY, YF, and JZ contributed to draft manuscript preparation. All authors reviewed the results and approved the final version of the manuscript.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Ethical approval

This study received approval from the Institutional Review Board (IRB) at the University of California, Davis (approval number: 2033031-1), ensuring adherence to ethical guidelines and standards in academic research. All participants provided informed consent before commencing the study.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Supplemental material

Supplemental material for this article is available online.

References
