Abstract

Objective

Metastatic breast cancer (MBC) is associated with burdensome side effects, including cognitive changes that require ongoing monitoring. Cognitive ecological momentary assessments (EMAs) allow for assessment of individual cognitive functioning in natural environments and can be administered via smartphones. Accordingly, we sought to establish the feasibility, reliability, and validity of a commercially available cognitive EMA platform.

Methods

Using a prospective design, clinical cognitive and psychosocial assessments (cognitive batteries; patient reported outcomes) were collected at baseline, followed by a 28-day daily EMA protocol that included self-ratings for symptoms and mobile cognitive tests (memory, executive functioning, working memory, processing speed). Satisfaction and feedback questions were included in follow-up data collection. Feasibility data were analyzed using mixed descriptive methods. Test-retest reliability was examined using intraclass correlation coefficients (ICCs) for each EMA, and Pearson's correlations were used to evaluate convergent validity between cognitive EMAs and baseline clinical cognitive and psychosocial variables.

Results

Fifty-one women with MBC (n = 51) completed this EMA study. High satisfaction (median 90%), low burden (median 19%), high adherence rates (mean 94%), and a 100% retention rate were observed. ICCs for cognitive tests of working memory, executive function, and processing speed were robust (>0.90), and ICCs for the memory tests were acceptable (>0.66). Other correlational findings indicated strong convergent validity for all cognitive and psychosocial EMAs.

Conclusion

Cognitive EMA monitoring for 28 days is feasible and acceptable in women with MBC, with specific cognitive EMAs (mobile cognitive tests; cognitive function self-ratings) demonstrating strong reliability and validity.

Introduction

It is estimated that 168,000 people are living with metastatic breast cancer (MBC) in the United States. 1 Almost 30% of those diagnosed and treated with early-stage breast cancer will experience recurrent metastatic disease later in their lives,2,3 and 6% to 10% of all new breast cancer diagnoses are metastatic at diagnosis.1,4 Treatment for MBC is complex, longitudinal, and requires ongoing monitoring. Treatments typically consist of chemo- or immunotherapy, endocrine therapy, and targeted agents depending on the cancer subtype and actionable mutations. The treatment goal for MBC is to optimize quality of life (QoL) and prolong survival while minimizing the side effects of living with incurable cancer. Patients with MBC can live for years with advanced disease and the side effects of treatment, which makes symptom control and QoL optimization of paramount importance. 5

Treatment for MBC is associated with burdensome physical, psychological, and spiritual side effects that negatively impact QoL and interfere with participation in everyday life.5–10 Notably, women with MBC describe debilitating neuropsychological symptoms, including fatigue and “chemo-brain,” and report that these symptoms, in addition to other side effects of MBC treatment, decrease their functional status and limit their ability to work and to engage in everyday activities. 10 Cancer-related cognitive impairments (CRCI) are commonly found in early-stage breast cancer and typically present as deficits in attention, executive functioning, processing speed, and memory.11–16 Almost all CRCI research to date has focused on early-stage breast cancer, methodologically excluding those with advanced or metastatic disease. We recently characterized CRCI in women with MBC using traditional subjective (i.e. a cognitive patient reported outcome [PRO]) and objective (i.e. a remote standardized cognitive test battery) assessments in a cross-sectional analysis and found that the majority of our sample (n = 52) had clinically significant CRCI (primarily on PROs and tests of executive functioning and memory) that negatively affected QoL and daily functioning. 17 Considering that cognitive functioning in the context of everyday life is highly variable and subject to contextual demands (e.g. demanding, stressful, or distracting situations) and/or state-dependent factors (e.g. sleep quality, mood), 18 and that those with MBC may be especially vulnerable to CRCI due to complex treatment regimens, changes to treatment plans, potential for brain metastases, and high psychological burden of disease, alternative methods for assessing CRCI in this population should be applied.

QoL, symptoms, and functional status are often assessed using retrospective PRO measures or functional performance tests19,20 that can be influenced by recall bias and may not adequately represent the dynamic and evolving symptoms, function, and QoL of MBC patients across time. Ecological momentary assessments (EMAs) offer one way to capture individuals’ thoughts, feelings, behaviors, and symptoms in natural environments and can be easily administered via smartphones. EMA methods (via paper/pencil diaries and digital tools) have been applied to oncology populations both during and after treatment, and have been used to assess primary and secondary physical, psychosocial, and behavioral outcomes (e.g. fatigue, sleep, exercise, pain, mindfulness, self-efficacy, expectations, mood, distress, and QoL). 20 Digital EMAs have been reported to be feasible and valuable in studies of persons living with advanced cancer that examined stress, 21 loss and life engagement, 22 and pain, anxiety, depression, and medication use. 23

Use of cognitive EMAs, including ecological mobile cognitive tests, is on the rise, and evidence supports their use in cognitively vulnerable populations such as persons with mild cognitive impairment, serious mental illness, and mood disorders.24–28 Cognitive EMAs have only recently been applied to study CRCI in early-stage (i.e. nonmetastatic) breast cancer patients 29 and survivors,30,31 with preliminary data showing feasibility and acceptability of this methodology,29–31 strong reliability and validity for objective cognitive EMAs, and that EMAs may be more sensitive to CRCI than traditional assessments. 31 However, many questions remain regarding how specific cognitive EMAs reflect traditional cognitive measures, what protocols may be most appropriate for various cancer populations, and how interactions among context, behavior, and symptoms explain cognitive variability in cancer survivors with CRCI. To our knowledge, EMAs have not yet been used to study cognitive functioning and associated psychosocial symptoms in persons with MBC. Cognitive EMAs could provide a more accessible and less burdensome means of assessing CRCI in persons with MBC or other cancer patients/survivors and provide a rigorous way to assess outcomes of interventions aimed at improving CRCI, especially modern precision health-type intervention designs such as just-in-time adaptive interventions or ecological momentary interventions. There is also potential to add cognitive EMAs to improve precision in pharmacological trials for MBC.

To address these gaps in knowledge, this study establishes the feasibility, reliability, and validity of a commercially available cognitive EMA platform to assess cognitive functioning and related psychosocial functioning in women living with MBC and describes the psychometric characteristics (practice and fatigue effects, reliability, and convergent validity metrics) of the specific EMAs.

Methods

All study-related procedures were conducted in accordance with the Declaration of Helsinki and approved by the University of Texas at Austin Institutional Review Board (STUDY00002393). All participants provided informed consent.

Sample description

A convenience sample of women who had been diagnosed with MBC participated in the study. To be eligible, participants were required to be 21 years of age or older; to be physically (i.e. able to use a computer and smartphone, with a Karnofsky Performance Scale result of >=70) and cognitively (MiniMoca Telephone screening result of >=11) able to participate32,33; to have access to a computer with internet and a smartphone with cellular or wireless internet connectivity; to be proficient in reading, speaking, and writing in English; and to reside in the United States. Those with major sensory deficits (e.g. deafness or blindness), a prior history of cancer with systemic treatment for cancer (other than breast cancer), or neurological or cognitive comorbidities (e.g. self-reported dementia, substance abuse, unmanaged psychiatric conditions) were excluded.

Data collection procedures

All study procedures were conducted remotely from the University of Texas at Austin. Study information was distributed via social media communications, including through administrators of METAvivor©, a national social media peer support group for those affected by MBC. People interested in participating contacted the study team and completed a pre-screening and enrollment telephone appointment.

Data were collected and managed primarily using Research Electronic Data Capture (REDCap) hosted at the University of Texas at Austin.34,35 Baseline data collection included an intake survey (i.e. written consent, sociodemographic data, clinical variables, cognitive variables, psychosocial variables) and remote administration of a cognitive test battery via BrainCheck (BrainCheck Inc., Austin, TX, USA; a digital cognitive testing and care provision platform). Participants then started their cognitive EMA protocols, which were administered daily for 4 weeks (28 assessments in total per participant). After the cognitive EMA protocol was completed, participants were sent follow-up data collection surveys (cognitive and psychosocial variables, feasibility, satisfaction, utility questions) and a second cognitive BrainCheck testing battery. Participants were given a $78 gift card, a report of their NeuroUX performance across the study (generated by NeuroUX and sent to our study team; see Supplemental Figure S1) and an educational handout “Optimizing Cognitive Function During and After Breast Cancer” (available on our lab website 36 ) as tokens of appreciation for participating.

Measures

Sociodemographic and clinical variables (baseline)

Sociodemographic characteristics (e.g. age, education, race, ethnicity, marital status, children/dependents, income, employment), health history (e.g. co-morbidities, menstrual history, current medications), and cancer history (e.g. breast cancer type/stage, cancer treatment details, end date of chemotherapy) were collected via survey to describe the sample and for use in convergent validity analyses (i.e. age and education).

Clinical cognitive function (baseline and follow-up)

The Functional Assessment of Cancer Therapy-Cognitive Function, Perceived Cognitive Impairments 20-item subscale (FACT-Cog PCI, v3.0) was used to assess self-reported cognitive function in the previous 7 days. 37 A computerized battery of standardized neuropsychological tests via the BrainCheck platform was administered remotely to assess cognitive performance, and included: the Trail Making Tests for attention and processing speed, the Digit Symbol Substitution Test and the Stroop Test for executive functioning, and the Recall Test (list learning) for immediate and delayed verbal memory. 38 Alternative forms of the tests were used with every administration. Participants were sent detailed instructions for accessing and using the platform, including an introductory video, and study staff were available to assist via video conference as needed. Raw test scores from baseline were used in the psychometric analyses (median time between clicks for Trails A and B, Stroop median reaction times, Digit Symbol median reaction time between clicks for all trials and number correct per second, number of correct responses for immediate and delayed memory, and the raw combined score).

Psychosocial variables (baseline and follow-up)

Depressive symptoms, anxiety, and fatigue correlate with self-reported cognitive function in people affected by cancer,14,39–42 thus we examined convergent validity of the EMAs by using the Patient-Reported Outcomes Measurement Information System (PROMIS) scales—Emotional Distress—Anxiety Short Form 8a (“PROMIS Anxiety”), Emotional Distress—Depression Short Form 8a (“PROMIS Depression”), PROMIS Fatigue Short Form 8a (“PROMIS Fatigue”), 43 PROMIS Sleep Disturbance 4a (“PROMIS Sleep”), 44 PROMIS Satisfaction with Social Roles & Activities Short Form 8a (“PROMIS Social”) and the UCLA Loneliness 3-item scale. 45 All PROMIS Scales were transformed to T-scores per their scoring manuals and used in the convergent validity analyses. The UCLA loneliness scale was summed to obtain a raw score (range: 0–9; higher scores indicating more loneliness). Completion of follow-up cognitive and psychosocial data was used to determine study retention rates, but not used in psychometric analyses.

Cognitive EMA protocol

EMA surveys and gamified mobile cognitive tests were administered via the NeuroUX platform (NeuroUX, Inc.; see Figure 1). NeuroUX was chosen to deliver the EMA protocol because this commercially available platform allows for integrated assessment of both traditional EMA surveys (e.g. assessments of mood, context, symptoms, behaviors) and cognitive tests. NeuroUX is a gamified and highly customizable platform designed to support participant engagement and adherence. The platform offers customization of surveys and has a menu of validated cognitive tests for various cognitive domains including memory, psychomotor speed, executive function, social cognition, and attention. The cognitive EMA assessments were sent to participants as weblinks via text messaging, which eliminated the need for participants to install and manage a native app. Weblinks were sent at pseudorandom times between each participant's preferred times in the morning and evening, and varied across morning, midday, and evening throughout the protocol. If participants requested to adjust their “start” and “stop” times for receiving EMA texts during their protocol, our study team made the requested adjustments. Once participants received their text messages, they were given 6 hours to complete the assessment session. Reminder texts were automatically sent if sessions were not completed after 3 hours and then again with 1 hour remaining. In addition to reminder text messages, the study coordinator was available as needed to assist participants. This assistance included providing more instructions for how to complete the specific cognitive tests and practice links for tests if participants requested them.
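The timing rules described above (a pseudorandom send time within the participant's preferred window, a 6-hour completion window, and reminders after 3 hours and with 1 hour remaining) can be illustrated with a minimal sketch. This is not the NeuroUX implementation: the function name, the dictionary keys, and the uniform draw within the window are assumptions made for illustration, and the sketch does not reproduce the balancing across morning, midday, and evening.

```python
import random
from datetime import datetime, timedelta

COMPLETION_WINDOW = timedelta(hours=6)  # time allowed to finish a session (from the text)

def schedule_session(window_start, window_end, rng):
    """Draw one send time uniformly within a participant's preferred window.

    Hypothetical helper: the uniform draw is an assumption, not the
    platform's documented scheduling algorithm.
    """
    span_seconds = (window_end - window_start).total_seconds()
    sent = window_start + timedelta(seconds=rng.uniform(0, span_seconds))
    return {
        "sent": sent,
        # First reminder if the session is still incomplete after 3 hours.
        "first_reminder": sent + timedelta(hours=3),
        # Second reminder when 1 hour of the 6-hour window remains.
        "second_reminder": sent + COMPLETION_WINDOW - timedelta(hours=1),
        "expires": sent + COMPLETION_WINDOW,
    }

if __name__ == "__main__":
    session = schedule_session(
        datetime(2024, 1, 1, 8, 0), datetime(2024, 1, 1, 20, 0), random.Random(0)
    )
    for label, when in session.items():
        print(label, when.strftime("%H:%M"))
```

A send time late in the window leaves the expiry after the participant's preferred stop time, which is the situation some participants later described in their feedback.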

Mobile cognitive tests used in cognitive ecological momentary assessment protocol. (A) N-back; (B) CopyKat; (C) Color Trick; (D) Hand Swype; (E) Matching Pair; (F) Quick Tap 1; (G) Memory Matrix; and (H) Memory List-12.

Each assessment collected single-item Likert-type scale ratings for depressed mood/sadness, anxiety, fatigue, loneliness, cognitive symptoms, cognitive abilities, sleep quality (last night), social engagement, relational quality, social satisfaction, and overall wellbeing (see Supplemental Table S1 for exact items). Each item assessed how bad or good each symptom is ‘right now’. Response options ranged from ‘Not at all’ (0) to ‘Extremely’ (7), consistent with our previous cognitive EMA studies.26,46 After symptoms were assessed, 4 mobile cognitive tests were administered, each 2 minutes long, in the domains of working memory, executive functioning, processing speed, and verbal/spatial memory. These domains were chosen because they are the most commonly impacted by CRCI. 11 Two tests were used for each cognitive domain and balanced throughout the protocol (14 administrations each; Table 1).

Cognitive ecological momentary assessment protocol across 28 assessments.

1: Color trick color-to-meaning version.

2: Color trick yes/no version.

3: Memory List 12 words.

4: Memory List 18 words.

5: 7 color to meaning version, 7 yes/no version.

6: 9 administrations of the 12-word list, 5 administrations of the 18 word list.

For working memory, we used the N-Back with a 2-back design (12 trials each administration) and the CopyKat tests. The N-Back is a common task that requires the participant to remember if a certain number was displayed 2 trials back (total score was used in the analyses). In CopyKat, participants are initially presented with a 2 × 2 matrix of colored tiles in a fixed position, which briefly light up in a random order. Participants are asked to replicate the pattern by pressing on the colored tiles in the correct order. Another feature of the task is that each color plays a distinct tone when highlighted, provided the phone's volume is turned on. The time to complete is variable, but 3 trials take approximately 2 to 3 min (total score was used in the analyses).

For executive functioning, the Color Trick and Hand Swype tests were used. The Color Trick test asks the participant to match the color of the word with its meaning, with 15 trials each test (total score and median reaction time were used in analyses). The Hand Swype test asks the participant to swipe in the direction of a hand symbol or in the direction that matches the way the symbols are moving across the screen. Hand Swype is a time-based task (1 minute every administration; total score and median reaction time were used in analyses).

For processing speed, we used the Matching Pair and Quick Tap 1 tests. For Matching Pair, the participant was asked to quickly identify the matching pair of tiles out of 6 or more tiles. Matching Pair is a time-based task lasting 90 seconds. The grid size increases based on correct responses, and the maximum grid size is 4 × 4 tiles (total score was used in the analyses). For Quick Tap 1, the participant is asked to wait and tap the symbol when it is displayed (12 trials/administration; median reaction time was used in the analyses).

For memory we used the Variable Difficulty List Memory Test (VLMT) and Memory Matrix tests. For the VLMT, participants were provided with a list of 12 or 18 random (i.e. not semantically related) words and given 30 seconds to memorize the list. They were then asked yes/no questions to determine if words were on the list or not, immediately following the memorization time (total words correct was used for analyses). For Memory Matrix, patterns of tiles are quickly displayed to the participant, then they are asked to indicate the pattern that was displayed by touching the tiles that were in the pattern. This test gets progressively harder if responses are correct: the first trial starts with a 2 × 2 grid size, and the size can go up to 7 × 7 tiles until the participant gives three incorrect responses (total score was used in the analyses).

Feasibility and acceptability outcomes

Accrual rates (the percentage of eligible people who contacted our team and subsequently enrolled), adherence rates (number of partial/completed cognitive EMAs across the 28 administrations), and retention rates (proportion of participants who completed baseline and follow-up data collection) were calculated. To assess acceptability of cognitive EMAs, the following questions were asked:

“Overall, how satisfied are you with your experience participating in this study?” Responses ranged: 0–100 (0 = unsatisfied, 50 = neutral, and 100 = very satisfied). “How challenging was it for you to answer the survey questions and do the brain games on your smartphone during the protocol?” Responses ranged: 0–100 (0 = not challenging, 50 = neutral, and 100 = very challenging). “Would you be open to incorporating smartphone based cognitive tasks as part of your ongoing care to monitor your cognitive functioning?” Responses were ‘Yes’, or ‘No’. “If you have any suggestions or feedback about the study, please provide them here”, with an open text box for responses.

Data analysis plan

Preprocessing

Consistent with previously published methods,24,28,47,48 NeuroUX data were cleaned to remove instances of suspected low effort/engagement. The following scores were excluded on this basis: N-Back scores of 0; CopyKat scores less than 4; Color Trick total scores < 3 and reaction times > 10 seconds; Matching Pair scores less than 100; Quick Tap 1 median reaction times from sessions in which < 8 trials were correct (only 2 Quick Tap sessions were excluded based on this criterion); Memory List scores corresponding to below-chance performance, i.e. total score <12 on the 12-word list and total score <18 on the 18-word list (only 4 Memory List sessions were excluded based on these criteria); and Memory Matrix scores for sessions with fewer than 5 correct trials. In addition to the a priori effort thresholds for each test described above, we also excluded scores identified as person-specific outliers (i.e. any score >3 standard deviations [SD] away from a person's own mean performance on that task); 10 total instances across all NeuroUX tests were identified as person-specific mobile cognitive test outliers.
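The exclusion rules above can be expressed as a small set of per-test checks plus a person-specific outlier filter. The following is a minimal sketch only: the test keys, field names, and helper functions are hypothetical (the NeuroUX export format is not described here), and the Color Trick rule is assumed to exclude a session when either condition holds. The thresholds themselves are those stated in the text.

```python
from statistics import mean, stdev

# A priori low-effort rules from the text. Keys and field names are
# hypothetical labels chosen for this sketch.
LOW_EFFORT_RULES = {
    "n_back": lambda s: s["score"] == 0,
    "copykat": lambda s: s["score"] < 4,
    # Assumption: either condition alone triggers exclusion.
    "color_trick": lambda s: s["score"] < 3 or s["median_rt_s"] > 10,
    "matching_pair": lambda s: s["score"] < 100,
    "quick_tap": lambda s: s["n_correct"] < 8,
    "memory_list_12": lambda s: s["score"] < 12,
    "memory_list_18": lambda s: s["score"] < 18,
    "memory_matrix": lambda s: s["n_correct"] < 5,
}

def is_low_effort(test, session):
    """Return True if a session meets the a priori low-effort rule for its test."""
    return LOW_EFFORT_RULES[test](session)

def drop_person_outliers(scores, n_sd=3.0):
    """Drop scores more than n_sd SDs from this person's own mean on one task."""
    if len(scores) < 3:
        return list(scores)  # too few sessions to estimate a person-level SD
    m, sd = mean(scores), stdev(scores)
    if sd == 0:
        return list(scores)
    return [x for x in scores if abs(x - m) <= n_sd * sd]

if __name__ == "__main__":
    print(is_low_effort("copykat", {"score": 2}))
    print(drop_person_outliers([10, 11] * 10 + [100]))
```

The outlier filter is applied per person and per task, so a score that is unremarkable across the sample can still be removed if it is extreme relative to that participant's own performance.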

Feasibility and acceptability outcomes

Descriptive statistics (mean, SD, minimum, maximum, and frequencies) were calculated for adherence and retention rates and satisfaction questions, depending on the level of measurement and normality. A priori rates of 80% for retention and 75% for adherence were used to determine feasibility, consistent with previous rates reported in an EMA study of people with metastatic cancer. 23 EMA session fatigue effects (i.e. the likelihood of a missed cognitive EMA session over time) were evaluated using mixed effects logistic regression. Person-specific random intercepts and effects of time (i.e. study day) were modeled.
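As an illustration of how the feasibility criteria could be checked, the sketch below computes per-person adherence out of 28 sessions and compares mean adherence and retention with the a priori targets (75% and 80%). The function names and the example counts are hypothetical, and the sketch does not cover the mixed effects logistic regression used for session fatigue effects.

```python
N_SESSIONS = 28          # daily assessments in the protocol
ADHERENCE_TARGET = 0.75  # a priori feasibility threshold for adherence
RETENTION_TARGET = 0.80  # a priori feasibility threshold for retention

def adherence_rate(n_completed, n_scheduled=N_SESSIONS):
    """Proportion of scheduled EMA sessions that were partially or fully completed."""
    return n_completed / n_scheduled

def feasibility_summary(completed_per_person, n_retained):
    """Summarize adherence and retention against the a priori targets."""
    rates = [adherence_rate(c) for c in completed_per_person]
    mean_adherence = sum(rates) / len(rates)
    retention = n_retained / len(completed_per_person)
    return {
        "mean_adherence": mean_adherence,
        "retention": retention,
        "adherence_met": mean_adherence >= ADHERENCE_TARGET,
        "retention_met": retention >= RETENTION_TARGET,
    }

if __name__ == "__main__":
    # Hypothetical counts of completed sessions for three participants.
    print(feasibility_summary([28, 21, 14], n_retained=3))
```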

The open-text box responses to “If you have any suggestions about how the study could be improved or any other feedback, please provide that here”, were independently reviewed by 2 co-authors (OFR; SB) using a qualitative content analysis approach.49,50 This approach allows for the distillation of words into fewer content-related categories, which is done manually. 51 Each co-author first read through the full narrative to become familiar with the data, then initiated the line-by-line coding process. Codes were inductively grouped into larger categories that emerged directly from the data, without an organizing framework. 51 Co-authors met to compare and collapse categories and complete the abstraction process which involves forming general descriptions and meanings of the final categories. 51

Psychometric characteristics

Test-retest reliability was examined by calculating intraclass correlation coefficients (ICCs) for administrations of each test. ICC interpretation followed published guidelines for poor (<0.50), moderate (0.50–0.75), good (0.76–0.90), and excellent (>0.90) reliability. 52
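For readers unfamiliar with the ICC, the sketch below computes a one-way random-effects ICC for balanced data, ICC = (MSB − MSW) / (MSB + (k − 1)·MSW), and maps the estimate to the interpretive bands given above. The ICC form is an assumption: the text does not state which form was used, and the study data (with exclusions and missed sessions) would not be balanced. Function names are hypothetical.

```python
def icc_oneway(data):
    """One-way random-effects ICC for balanced data.

    data: list of per-person score lists, each with the same number of
    administrations k. Assumed form; the paper does not specify it.
    """
    n, k = len(data), len(data[0])
    grand_mean = sum(sum(row) for row in data) / (n * k)
    person_means = [sum(row) / k for row in data]
    ss_between = k * sum((m - grand_mean) ** 2 for m in person_means)
    ss_within = sum(
        (x - m) ** 2 for row, m in zip(data, person_means) for x in row
    )
    ms_between = ss_between / (n - 1)
    ms_within = ss_within / (n * (k - 1))
    return (ms_between - ms_within) / (ms_between + (k - 1) * ms_within)

def interpret_icc(icc):
    """Map an ICC to the bands used in the text (poor/moderate/good/excellent)."""
    if icc < 0.50:
        return "poor"
    if icc <= 0.75:
        return "moderate"
    if icc <= 0.90:
        return "good"
    return "excellent"

if __name__ == "__main__":
    scores = [[10, 11, 10], [20, 19, 21], [30, 31, 29]]  # hypothetical scores
    estimate = icc_oneway(scores)
    print(round(estimate, 3), interpret_icc(estimate))
```

When between-person differences are large relative to within-person fluctuation, the estimate approaches 1, which is the pattern expected for a test that ranks people consistently across administrations.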

Linear mixed effects models were used to evaluate practice effects. First, linear and quadratic effects of time (defined as study day) on each test score were examined. Person-specific random intercepts and effects of time were modeled. For scores with significant quadratic practice effects, mixed effects models with linear splines tested whether there was a changepoint at which improvements in performance level off.
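The linear spline (changepoint) idea can be illustrated by splitting study day into two terms at a knot, so that one coefficient is the slope before the changepoint and the other is the slope after it. The sketch below shows only this fixed-effects coding; it is not the mixed effects model itself (no random intercepts or slopes), and the function names, knot, intercept, and slopes are hypothetical values chosen for illustration.

```python
def spline_terms(day, knot):
    """Split study day into pre-knot and post-knot components.

    With this coding, the coefficient on the first term is the slope up to
    the knot and the coefficient on the second term is the slope after it.
    """
    pre = min(day, knot)
    post = max(day - knot, 0)
    return pre, post

def predict(day, knot, intercept, slope_pre, slope_post):
    """Predicted score under a piecewise-linear trend with one changepoint."""
    pre, post = spline_terms(day, knot)
    return intercept + slope_pre * pre + slope_post * post

if __name__ == "__main__":
    # Hypothetical parameters: improvement until day 10, then a plateau.
    for day in (1, 5, 10, 20, 28):
        print(day, predict(day, knot=10, intercept=2.0, slope_pre=0.5, slope_post=0.0))
```

A post-knot slope that is close to zero and not statistically significant is what "leveling off" means in this framing.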

Correlations evaluated convergent validity between cognitive EMA performance and EMA self-report symptoms (mean and within-person variability) and baseline clinical cognitive (BrainCheck for objective cognitive function and FACT-Cog PCI for subjective cognitive function) and psychosocial variables. P values were adjusted using a false discovery rate (FDR) correction. All analyses were conducted in RStudio (Posit Software, version 2024.04.1 + 748; ‘ggplot2’, ‘ggcorrplot’, ‘dplyr’, ‘tidyverse’, ‘magrittr’ libraries).
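The two computational steps in this paragraph, a Pearson correlation and an FDR adjustment across many tests, can be sketched as follows. The analyses reported here were run in R; this Python sketch is illustrative only, and it assumes the Benjamini-Hochberg procedure, which the text does not name explicitly. Function names and example p values are hypothetical.

```python
from math import sqrt

def pearson_r(x, y):
    """Pearson product-moment correlation between two equal-length sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / sqrt(sxx * syy)

def bh_adjust(pvals):
    """Benjamini-Hochberg adjusted p values (assumed FDR method).

    Works from the largest p value down, scaling each by m / rank and
    enforcing monotonicity with a running minimum.
    """
    m = len(pvals)
    order = sorted(range(m), key=lambda i: pvals[i])
    adjusted = [0.0] * m
    running_min = 1.0
    for rank in range(m, 0, -1):
        idx = order[rank - 1]
        running_min = min(running_min, pvals[idx] * m / rank)
        adjusted[idx] = running_min
    return adjusted

if __name__ == "__main__":
    print(pearson_r([1, 2, 3, 4], [2, 4, 5, 9]))
    print(bh_adjust([0.01, 0.04, 0.03, 0.20]))  # hypothetical raw p values
```

Because the adjustment depends on how many tests are in the family, a correlation that is significant on its own can lose significance once it is evaluated alongside many others.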

Results

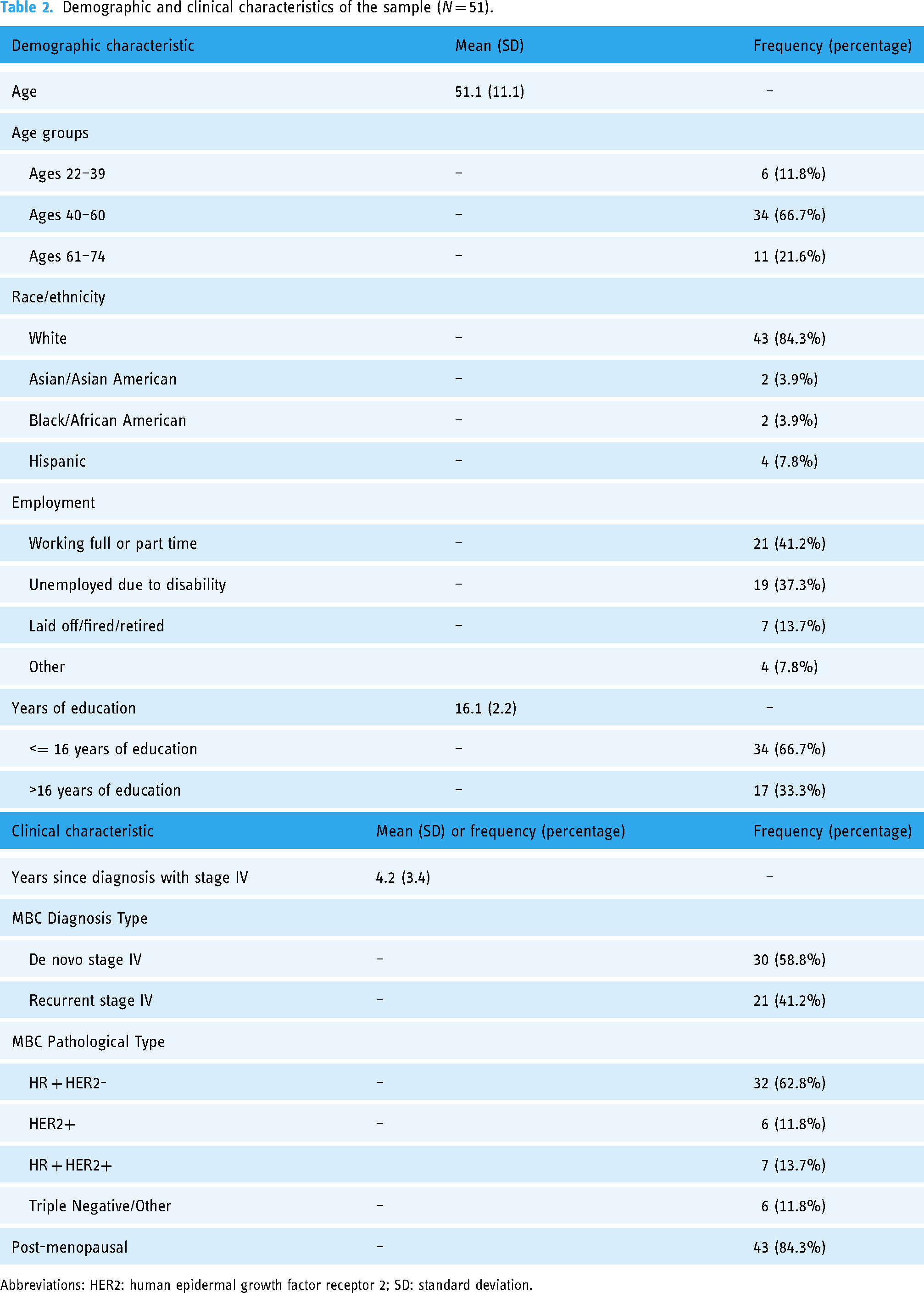

This study included 51 women who were on average 4.2 years post MBC diagnosis, with most diagnosed as de novo MBC (58.8%). Participants' mean age was 51.1 years, the majority were of White non-Hispanic race and ethnicity (84.6%), and 67.3% had received 16 years or less of formal education. See Table 2 for demographic and clinical characteristics.

Demographic and clinical characteristics of the sample (N = 51).

Abbreviations: HER2: human epidermal growth factor receptor 2; SD: standard deviation.

Feasibility and satisfaction

One hundred and sixteen (n = 116) people completed study interest forms, of whom 54 were lost to contact during prescreening scheduling communications. Sixty-two (n = 62) people were screened for enrollment, of whom 10 were ineligible (n = 6 resided outside the United States; n = 2 had Mini Moca scores <11; n = 2 had medical histories that were exclusion criteria). Fifty-two women were consented and 51 enrolled in the cognitive EMA protocol; thus accrual was 84% (51/62). The mean adherence rate for the 28-day cognitive EMA protocol was 94% (SD = 8.5%, range = 61–100%). Participants expressed high satisfaction with the cognitive EMA protocol (mean rating = 88%, SD = 12.5%, median = 90%, minimum = 50%, maximum = 100%). On average, participants reported the EMA protocol as not challenging (mean rating = 32.2%, SD = 30.9%, median = 19%, range = 0–100%). Retention rates were very high, with all participants (100%) completing baseline and follow-up data collection. In response to the question about incorporating smartphone-based cognitive tasks as part of ongoing care to monitor cognitive functioning, 98% of the sample responded ‘Yes’. Non-significant EMA session fatigue effects suggest that participants were not significantly more likely to miss an EMA session over time (OR = 1.02, 95%CI = 0.99–1.05, p = 0.144). The mean time to complete the daily EMA was 11 min 53 s (SD 1 min 36 s).

Qualitative content analyses revealed three categories for the narrative responses to the open-ended questions related to aspects of feasibility and acceptability of the cognitive EMA protocol: (a) tasks at inconvenient times, (b) reminders and time to complete tasks, and (c) unclear instructions. For ‘tasks at inconvenient times’, participants indicated frustration with the pseudorandom timing of the EMA texts across the protocol. For example, “the timing of the tests was all over the map…,” and “the hours I received the text messages were not consistent.” Additionally, participants noted that if they received the text closer to their bedtime, they did not have a full 6 hours to complete their tests before time was up. For ‘reminders and time to complete tasks’, participants noted that they appreciated the 6 hours to complete their EMA and that this was enough time to complete assessments each day. For ‘unclear instructions’, participants noted the instructions for the n-back were not clear and they did not know if they did it correctly, stating “I could not figure out the 2-back letter game.”

Psychometric characteristics

Variability and reliability

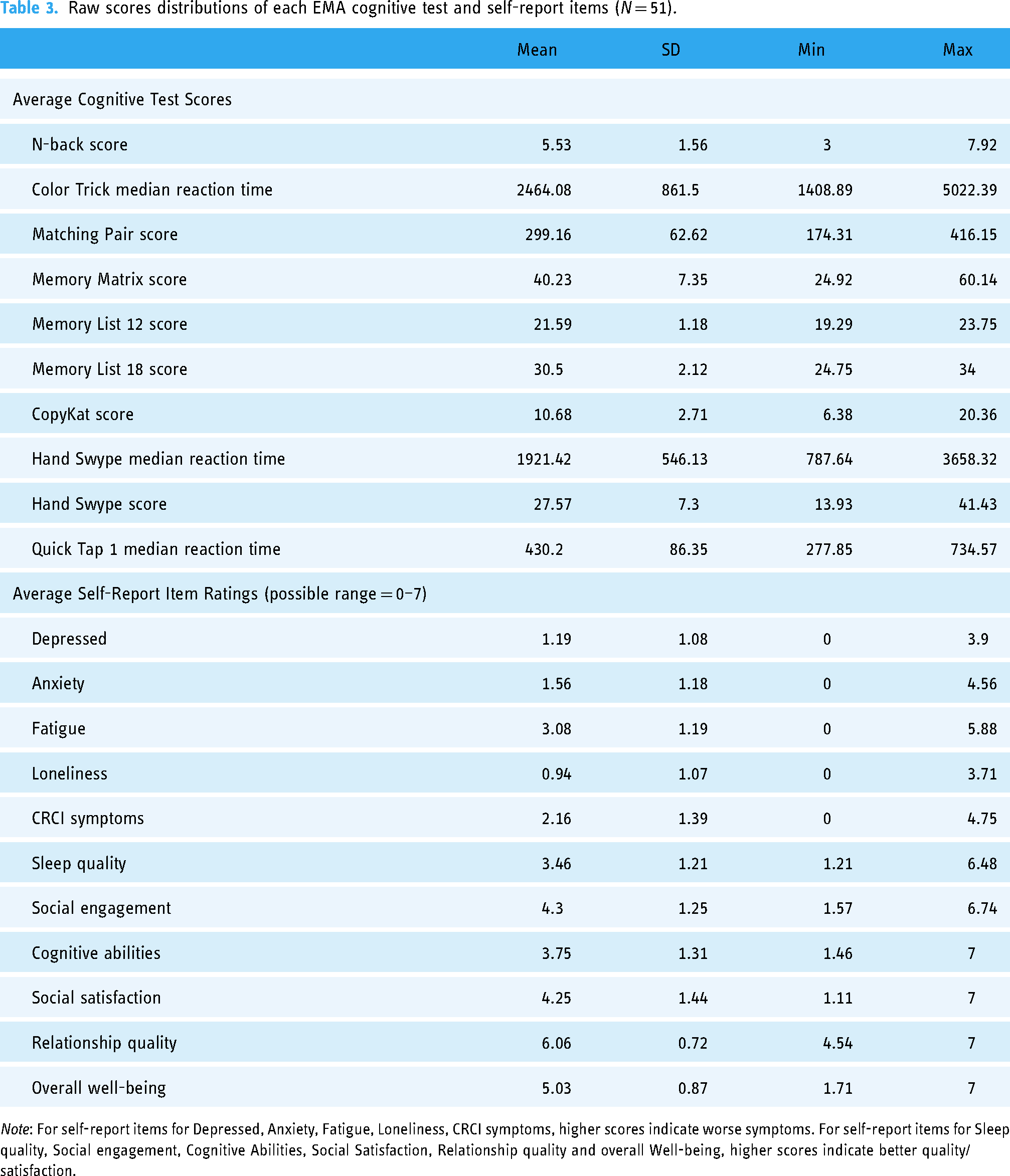

Distributions of cognitive EMA tests and self-report questions are presented in Table 3. Within-person variability (SD) and test-retest reliability estimates across administrations are presented in Table 4. Reliability estimates suggest excellent reliability for nearly all tests (ICCs > 0.90), except for the Memory List 12 and Memory List 18, which showed moderate-to-good test-retest reliability (ICCs > 0.66).

Raw scores distributions of each EMA cognitive test and self-report items (N = 51).

Note: For self-report items for Depressed, Anxiety, Fatigue, Loneliness, CRCI symptoms, higher scores indicate worse symptoms. For self-report items for Sleep quality, Social engagement, Cognitive Abilities, Social Satisfaction, Relationship quality and overall Well-being, higher scores indicate better quality/satisfaction.

Within-person variability and test-retest reliability across administrations of each EMA cognitive test and self-report questions (N = 51).

Practice effects

Linear or non-linear trends over time for each test are shown in Figure 2. Statistically significant linear practice effects (i.e. consistent improvements over time) were observed for Color Trick Reaction Time (day: b = -25.30, SE = 4.18, p < 0.001), Matching Pair Score (day: b = 1.46, SE = 0.26, p < 0.001), and Hand Swype Reaction Time (day: b = -19.16, SE = 1.75, p < 0.001). Non-linear practice effects (i.e. a quadratic effect of time) were observed for N-back, Memory Matrix, Hand Swype Score, and Quick Tap 1. Subsequent mixed effects regression models with linear splines showed that N-back practice effects leveled off after study day 14 (days 1–14: b = 0.13, SE = 0.02, p < 0.001; days 14–28: b = 0.01, SE = 0.01, p = 0.411), Memory Matrix practice effects leveled off after study day 12 (days 1–12: b = 0.22, SE = 0.10, p = 0.036; days 12–28: b = -0.07, SE = 0.07, p = 0.324), Hand Swype Score practice effects leveled off after day 21 (days 1–21: b = 0.61, SE = 0.03, p < 0.001; days 21–28: b = 0.09, SE = 0.09, p = 0.277), and Quick Tap 1 practice effects leveled off after day 6 (days 1–6: b = -10.81, SE = 2.22, p < 0.001; days 6–28: b = -0.30, SE = 0.38, p = 0.435). In contrast, there were no consistent trends in performance over time for Memory List 12 (day: b = -0.003, SE = 0.010, p = 0.735), Memory List 18 (day: b = -0.036, SE = 0.023, p = 0.117), or CopyKat (day: b = -0.020, SE = 0.016, p = 0.220).

Practice effects (linear or non-linear trends) for each EMA cognitive test.

Cognitive and psychosocial EMA convergent validity

Correlations of EMA cognitive tests (mean and within-person variability in performance) with age, education, BrainCheck raw scores, and self-reported cognitive function (FACT-Cog PCI) are shown in Table 5 (mean scores) and Table 6 (SD in scores). Mean performance on Memory List 12 & 18 was most strongly associated with BrainCheck Immediate Recall and Delayed Recall scores. Cognitive EMA tests of processing speed (e.g. Matching Pair, Quick Tap) were most strongly associated with all speeded BrainCheck tests (Trails A, Trails B, Stroop, Digit Symbol). Almost all cognitive EMA tests of executive/working memory (i.e. Color Trick, Memory Matrix, CopyKat, Hand Swype) were associated with BrainCheck executive tests (Trails B, Stroop, Digit Symbol); however, correlations between N-back and BrainCheck tests did not remain significant after FDR p value adjustment. Correlations between variability in cognitive EMA scores, quantified using root mean squared successive differences, and BrainCheck scores are reported in Supplemental Table S2. There were also some significant correlations between self-report and objective cognitive EMAs of processing speed (r's = 0.35 and −0.34, p's < 0.05, see Supplemental Figure S2). In addition, psychosocial EMA items were strongly related to validated measures of psychosocial function and cognitive symptoms (e.g. PROMIS scales and FACT-Cog; see Figure 3).
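The root mean squared successive difference (RMSSD) mentioned above differs from the within-person SD in that it depends on the order of the scores: it summarizes change from one administration to the next. A minimal sketch of the standard formula follows; the function name and example series are hypothetical, and handling of missed or excluded sessions is not addressed.

```python
from math import sqrt
from statistics import stdev

def rmssd(scores):
    """Root mean squared successive difference of an ordered series of scores."""
    diffs = [later - earlier for earlier, later in zip(scores, scores[1:])]
    return sqrt(sum(d * d for d in diffs) / len(diffs))

if __name__ == "__main__":
    steady_trend = [1, 2, 3, 4]  # gradual change across administrations
    fluctuating = [1, 4, 2, 3]   # same values, reordered
    # Both series have the same SD, but their RMSSD values differ.
    print(stdev(steady_trend), rmssd(steady_trend))
    print(stdev(fluctuating), rmssd(fluctuating))
```

Two participants with identical SDs can therefore differ in RMSSD if one improves steadily while the other fluctuates from day to day.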

Pearson r correlations between cognitive and psychosocial self-report EMA items (mean across 28 days) and clinical assessments of psychosocial and cognitive function. Colors represent the strength of the correlation for statistically significant associations using FDR adjusted p values; blank cells indicate p > 0.05.

Pearson r correlations testing convergent validity between average cognitive EMAs and baseline age, education, BrainCheck cognitive scores, and FACT Cog PCI (N = 51).

Note: FDR adjusted p-value used.

* p < 0.05.

** p < 0.01.

*** p < 0.001.

Pearson r correlations testing convergent validity between within-person variability on cognitive EMAs (SD) and baseline age, education, BrainCheck cognitive scores, and FACT Cog PCI (N = 51).

Note: FDR adjusted p-value used.

* p < 0.05.

** p < 0.01.

*** p < 0.001.
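The two within-person variability indices mentioned above, the SD and the root mean squared successive difference (RMSSD), capture different things: the SD ignores the order of assessments, whereas the RMSSD reflects change from one assessment to the next. The sketch below uses hypothetical reaction times (not study data) and a hypothetical helper named `rmssd` to show the distinction.

```python
import math
import statistics


def rmssd(scores):
    """Root mean squared successive difference of a time-ordered series."""
    diffs = [b - a for a, b in zip(scores, scores[1:])]
    return math.sqrt(sum(d * d for d in diffs) / len(diffs))


# Hypothetical daily reaction times (ms): same values, different ordering.
steady = [500, 510, 520, 530, 540, 550]  # gradual drift across days
jumpy = [500, 550, 510, 540, 520, 530]   # large day-to-day swings

sd_steady = statistics.stdev(steady)
sd_jumpy = statistics.stdev(jumpy)
print(sd_steady, sd_jumpy)            # identical: SD ignores ordering
print(rmssd(steady), rmssd(jumpy))    # RMSSD is larger for the jumpy series
```

Because the two series contain the same values, their SDs match, while the RMSSD separates gradual drift from day-to-day fluctuation.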

Discussion

We found that short-term cognitive EMA monitoring (for 28 days) is acceptable and feasible in patients with MBC, as shown by high adherence to the protocol, study retention, and high satisfaction/low burden ratings. The specific cognitive and psychosocial EMAs (mobile cognitive tests and self-ratings for cognitive and psychosocial symptoms) demonstrated strong reliability and validity. High adherence to the EMA protocol (94%) aligned with previously reported adherence rates of e-Health physical activity interventions for women with MBC (94% adherence over 6 months) 53 and a recent EMA study in MBC patients that used a 7-day EMA protocol (80% adherence). 23 The high adherence and satisfaction rates found here may also be attributed to the study procedures that encouraged participant engagement and minimized burden, including incorporating participant preferences into the timing of cognitive EMA texts each day, sending reminder texts, troubleshooting technical difficulties, and providing additional instructional support for the specific mobile cognitive tests administered. Taken together, these findings demonstrate that women with MBC are interested and engaged in e-Health modalities and ongoing symptom monitoring research via EMAs, despite the high burden of metastatic disease.

Since MBC can be a chronic condition, there is a need to help people manage their cognitive changes and live fuller and happier lives. Those with MBC often become active self-managers of their complex treatments, side effects, and inventory of their everyday QoL. 54 Methods for self-monitoring or self-evaluation could empower those with MBC to be more active participants in their treatment plans and provide important data across time to make decisions related to treatments and daily activities. Furthermore, qualitative studies report that women with MBC want to minimize their time at medical appointments and maximize their time ‘living’, 10 so remote digital tools for self-evaluation, such as those employed in this study, may be especially useful to facilitate self-monitoring while living with MBC.

In the context of advanced cancer, EMAs can directly capture variation in symptoms and functional outcomes in real-life settings, provide a useful complement to more macro-level assessments that occur in longitudinal research designs or in clinic visits, and facilitate better personalized care for those impacted by MBC. One of the National Cancer Institute's (United States) research priorities is to “understand and address emerging symptom trajectories of individuals living with metastatic cancers.” 55 Additionally, newly published National Standards for Cancer Survivorship Care from the Office of Cancer Survivorship,56,57 and newly published MASCC-ASCO Standards and Practice Recommendations for people affected by advanced or metastatic cancer58,59 specify the need for oncology organizations to collect data on survivors’ patient-reported outcomes, including QoL, and survivors’ functional capacity. Our findings of low study burden, high utility, and high interest in using the cognitive EMA platform provide foundational evidence for incorporating EMAs for cognitive self-monitoring in clinical settings. Our findings also support the future application of cognitive EMAs for evaluating MBC-specific intervention effects, including mobile/web based supportive interventions that are in the research pipeline.60–63

Qualitative findings in this study highlight the need to provide clearer instructions for some of the mobile cognitive tests on the platform, and to offer scheduled rather than pseudorandom assessment sessions in this population. Our team has incorporated this feedback on instruction clarity into the platform, developing instructional videos for all cognitive tests. True ‘momentary’ assessments in this population may be burdensome, and so longer periods of time for assessment completion, scheduled timing of assessments, and/or participant-initiated assessments should be considered in future EMA studies with persons who have metastatic disease.

The cognitive EMA protocol, including mobile cognitive tests and self-rated symptom assessments, administered via NeuroUX, demonstrated strong reliability across administrations, expected practice effects, and very good convergent validity overall, consistent with previous reports of strong validity and reliability of different cognitive EMAs administered across 14 days in early-stage breast cancer survivors. 31 The lowest reliability scores were found for the Memory List tests, 12-item (0.77) and 18-item (0.67), which is expected since these tests generate new and unrelated lists of words to remember with each administration. Cognitive EMAs are an innovative solution for monitoring the cognitive health of MBC patients, but there is a need for broader validation across diverse cancer types.
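As a rough illustration of what a test-retest ICC summarizes, the sketch below computes a one-way random-effects ICC, often written ICC(1,1), from a participants-by-sessions table. This is only one common formulation and is an assumption here: the ICC model used in the study is not described in this section. The function name `icc_oneway` and all scores are hypothetical.

```python
def icc_oneway(data):
    """ICC(1,1) for a list of rows: one row per participant, one column per session."""
    n = len(data)       # number of participants
    k = len(data[0])    # number of repeated sessions per participant
    grand = sum(sum(row) for row in data) / (n * k)
    row_means = [sum(row) / k for row in data]
    # Between-participant mean square.
    msb = k * sum((m - grand) ** 2 for m in row_means) / (n - 1)
    # Within-participant mean square.
    msw = sum(
        (x - m) ** 2 for row, m in zip(data, row_means) for x in row
    ) / (n * (k - 1))
    return (msb - msw) / (msb + (k - 1) * msw)


# Hypothetical scores: participants keep their rank order across sessions.
stable = [[10, 11, 10], [20, 19, 20], [30, 31, 30]]
# Hypothetical scores: session-to-session noise swamps participant differences.
noisy = [[10, 30, 20], [20, 10, 30], [30, 20, 10]]

icc_stable = icc_oneway(stable)
icc_noisy = icc_oneway(noisy)
print(icc_stable, icc_noisy)
```

When within-person fluctuation is large relative to between-person differences, as might happen when each administration uses a new and unrelated word list, the ICC drops even if the test itself is sound.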

The practice effects that we found are consistent with practice effects for the same mobile cognitive tests found in a community sample of healthy adults. 48 Patterns of nonlinear effects with improvements leveling off after many days were found for the three tests that are the most challenging in the protocol (N-back, Memory Matrix, Hand Swype Score). Recently, researchers have used learning curves (i.e. practice effects) across multiple days, rather than single session re-testing, as clinically relevant predictors of disease or future cognitive decline in populations with subtle cognitive deficits (i.e. preclinical Alzheimer's Disease). 64 The absence of significant practice effects for some mobile cognitive tests in this protocol may be explained by strong alternate forms of these tests (e.g. Memory Lists). Researchers using cognitive EMA protocols in MBC populations should consider practice effects for each mobile cognitive test when designing the length of EMA protocols (i.e. number of assessments over number of days) and consider comparing learning curves of serial cognitive EMAs between those with clinical cognitive impairment and those without and/or those with MBC and matched controls.

This study did not have a matched control group. However, our findings were compared to previously published norms from a community-based sample of adults living in the United States for these same mobile cognitive tests of memory and reaction time. 48 Our sample demonstrated similar scores and variability in memory tests (Memory Matrix, Memory List-12 item), but slower reaction times and greater within-person variability in reaction time on Quick Tap 1 and Hand Swype tests. Greater variability in reaction time on mobile cognitive tests is observed in cognitively symptomatic older adults compared to cognitively normal older adults. 65 CRCI is often described as “brain fog” or slower thinking, so it is possible that variability in reaction time may be clinically relevant in this population.66,67 Future studies using cognitive EMAs in MBC should include a healthy, age-matched control group, and evaluate reaction time performance and variability. Furthermore, this study protocol spanned 28 days; it is possible that more within- and between-person variability in symptoms would emerge in longer prospective studies with MBC, which is another recommendation for future studies.

Overall, the cognitive (mobile cognitive tests and self-rated items) and psychosocial EMAs administered in this sample of women with MBC demonstrated strong convergent validity, except for N-back. Medium-to-strong correlations were evident among baseline clinical cognitive assessments, with strongest correlations identified for tests of specific cognitive domains (e.g. memory tests correlating with BrainCheck tests for immediate and delayed memory). In terms of age and education, correlations were in expected directions (i.e. higher age, lower scores, and longer reaction time; higher education, higher scores, and shorter reaction time); however, most correlations with education were not significant. These findings may be partially explained by minimal variability in education in this sample. However, NeuroUX tests are designed to be minimally influenced by socioeconomic status (SES) factors, ensuring that they provide an equitable assessment of cognitive function across diverse populations. 68 This approach enhances the reliability and reduces bias of the results, making them more applicable and actionable in diverse settings.

All the EMA self-report items showed strong relationships with all the clinical assessments, but the strongest relationships emerged between EMAs and assessments tapping into the same construct, demonstrating strong convergent and ecological validity. For example, social EMAs correlated most strongly with PROMIS Social, and the cognitive symptoms EMA correlated most strongly with the FACT-Cog PCI. The cognitive symptom EMA correlated not only with the clinical cognitive PRO, but also with several of the BrainCheck tests (i.e. Trails A and B, Stroop), suggesting that repeated sampling of cognitive PROs in this population reflects both objective and subjective CRCI, although the adjusted p values for these correlations did not reach the significance threshold (p's = 0.056–0.07). Significant correlations also emerged in this sample among mobile cognitive tests and CRCI symptoms, loneliness, fatigue, depressive symptoms and anxiety across time (Supplemental Figure S2). In the broader CRCI literature, objective cognitive tests rarely correlate with subjective cognitive measures or psychological symptoms.11,69,70 Therefore, our findings provide new insights into the relationship between subjective and objective CRCI at the individual level and suggest that serial mobile cognitive assessments may be sensitive to both objective and subjective CRCI.

This study has several limitations. The relatively small sample size and low variability in race and ethnicity decrease the generalizability of our findings. We also did not examine the within-person effects of the time of day that EMAs were administered (administration times varied for each person across the study). While it is not expected that this within-person effect would change our feasibility, reliability, and validity findings, future research should examine the within-person effect of time of day on cognitive EMAs. We did not collect data on metastases, limiting our interpretation of the cognitive findings related to burden of disease.3,71 This study did not include a matched control group, limiting our interpretation of the cognitive EMA mean scores and variability in this population. Our feasibility and acceptability findings may also represent a biased sample of women who would be more willing to engage with their smartphones for monitoring purposes or those who felt well enough to participate in the study. Last, this study was conducted over 28 days. While promising, it is not clear that this would be feasible for patients who are undergoing treatments that last months to years, and a longer study is needed.

Conclusion

Despite these limitations, this study extends a growing body of literature employing EMA use for symptom monitoring in populations with metastatic disease, and provides foundational knowledge on the feasibility, reliability, and validity of employing cognitive (subjective and objective) EMAs to monitor cognitive functioning across time in women living with MBC. Future large-scale cohort and implementation studies should confirm these findings and determine how cognitive EMAs can be effectively integrated into electronic health records and clinical care.

Supplemental Material

sj-docx-1-dhj-10.1177_20552076241310474 - Supplemental material for Feasibility and psychometric quality of smartphone administered cognitive ecological momentary assessments in women with metastatic breast cancer

Supplemental material, sj-docx-1-dhj-10.1177_20552076241310474 for Feasibility and psychometric quality of smartphone administered cognitive ecological momentary assessments in women with metastatic breast cancer by Ashley M Henneghan, Emily W Paolillo, Kathleen M Van Dyk, Oscar Y Franco-Rocha, Soyeong Bang, Rebecca Tasker, Tara Kaufmann, Darren Haywood, Nicolas H Hart and Raeanne C Moore in DIGITAL HEALTH

Footnotes

Acknowledgements

This research wouldn’t be possible without the patients, survivors, thrivers, and metavivors who graciously volunteered to participate.

Consent to participate

Participants provided informed written consent.

Contributorship

AMH and EWP are responsible for the manuscript's conception and design. EWP conducted the data analyses. SB and OFR conducted the qualitative content analysis. AMH was the PI on this study. AMH, KVD, RCM, DH, OFR, SB, EWP, RT, TK, NHH contributed to revisions of the manuscript. All authors approved the final version.

Data availability

De-identified data will be made available upon reasonable requests to the corresponding author and with data use agreements in place.

Declaration of conflicting interests

The authors declared the following potential conflicts of interest with respect to the research, authorship, and/or publication of this article: RCM is a co-founder of KeyWise AI, Inc. and a co-founder of NeuroUX. The terms of this arrangement have been reviewed and approved by UC San Diego in accordance with its conflict-of-interest policies. AMH was a consultant for Prodeo, Inc. The terms of this arrangement have been reviewed and approved by University of Texas at Austin in accordance with its conflict-of-interest policies.

Ethical approval

This study was performed in line with the principles of the Declaration of Helsinki. The University of Texas at Austin Institutional Review Board reviewed and monitored all study related procedures (STUDY00002393).

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This study was supported by IRG-21-135-01-IRG from the American Cancer Society (AMH). AMH is supported by a grant from the NIH: R21NR020497. NHH is supported by an NHMRC Investigator Fellowship (APP2018070). KVD is supported by grants from the NIH: K08CA241337, R21NR020497, and R35CA283926. DH is a MASCC Cognition Fellow. OYF is a MASCC Equity Fellow.

Guarantor

Ashley M Henneghan, PhD, RN, FAAN.

Supplemental material

Supplemental material for this article is available online.

References
