Abstract

Objective

Motor and cognitive development share biological background within the prefrontal cortex and cerebellum. Monitoring motor development is relevant to identify children at risk of developmental delays. However, access to timely assessment is limited by its availability and cost. Affordable motion capture technology may provide an alternative to human assessment.

Methods

MotorSense uses this technology to guide and assess children executing age-related developmental motor tasks. It incorporates advanced heuristics informed by pattern recognition principles based on the developmental sequences of motor skills. MotorSense was evaluated with 16 children aged 4–6 years from a rural primary school.

Results

A total of 506 jumps, 2415 steps and 831 hops were analysed. The analysis shows that MotorSense Accuracy (MA) in recognising jump forward (89.96%), jump high (83.34%), jump sideways (85.63%), hop (74.58%) and jog (92.34%) is as good as the sensor's precision. The analysis of the tasks’ execution shows a high level of agreement between human and MotorSense's assessment on jump forward (91%), jump high (99%), jump sideways (93%), hop (94%) and jog (92%).

Conclusions

MotorSense helps address the shortage of affordable technologies to support the assessment of motor development using graded age-related developmental motor tasks. Furthermore, it could contribute towards the tele-detection of motor developmental delays.

Keywords

Introduction

Motor development is the age-related process by which children's bones, muscles and ability to move around and manipulate their environment develop. It is a continuous and sequential process which follows predictable patterns, and it is marked by developmental milestones. 1 Motor development and cognitive function share biological background within the prefrontal cortex and cerebellum 2 and at the age of 4 years, motor development has been related to cognitive function. 3 Correlation between language learning opportunities and the development of gross motor skills such as sitting 4 or walking 5 has been reported in infants. In childhood development, a systematic literature review noted evidence of correlation between fine and gross motor skills and learning opportunities in mathematics and reading. 6 The lack of early detection of motor developmental delays leads to late intervention, which may result in poor communication with the family, 7 poor performance at school 8 and risk of poor outcomes in the life course. 9,10 Monitoring motor development is important to identify children at risk of developmental delays. However, timely and ongoing examination of motor skills development is hindered by the availability of trained professionals, time and cost. 11 Thus, there is a need to improve access to, and support professionals in, children's motor development monitoring.

Sensors capable of detecting human movements through intuitive interaction with digital applications based on body motion are available. This technology can support, and increase access to, motor development monitoring from an early stage. MotorSense is a motion-based application developed by the authors which recognises and interprets the execution of locomotor tasks in real time. It is informed by the developmental sequences of motor skills 1 and motor development assessment scales. 12 Currently, it provides activities to perform five motor tasks graded by chronological age and developmental stage: jump forward, jump high, jump sideways, hop and jog on the spot. 13 MotorSense aims to widen access to motor assessment by offloading professional observation onto technology and assessing children in their natural environment. 14 It provides timely and continuous assessment to support the early detection of motor development delays and afford children their maximum learning potential.

We hypothesised MotorSense could support the assessment of locomotor skills in developing children. To validate this hypothesis, we conducted a feasibility study and posed the following Research Questions (RQ):

- To what extent and how accurately can MotorSense recognise and analyse the execution of locomotor skills (RQ1)?
- Is the current accuracy of MotorSense adequate to support locomotor development assessment (RQ2)?

Literature review

Motor development assessment

The assessment of motor functioning in children is conducted through observation by trained professionals, such as paediatricians, occupational therapists and educators, in one-to-one sessions. During these, children undertake motor tasks and professionals evaluate their performance against developmental milestones according to the children's chronological age and developmental stage. To guide their evaluation, professionals use existing assessment frameworks. 15 Among these, the Bruininks-Oseretsky Test of Motor Proficiency – Second Edition (BOT-2) measures motor performance, 16 the Movement Assessment Battery for Children – Second Edition (M-ABC-2) identifies motor impairment, 17 the Test of Gross Motor Development – Second Edition (TGMD-2) assesses gross motor skills taught in physical education 18 and the Peabody Developmental Motor Scales – Second Edition (PDMS-2) evaluates motor skills’ development. 12

The PDMS-2 is a standardised instrument specifically designed to assess children's motor development from birth to age 7. 12 It comprises a series of tests covering observable criteria for fine and gross motor abilities arranged into six subsets: Reflexes; Stationary; Locomotion; Manipulation; Grasping; and Visual Motor Integration. Each subset provides a battery of tasks that test specific skills at different development levels. We used the PDMS-2 to inform the development of MotorSense (Table 1).

MotorSense locomotor skills assessment criteria and scores (extracted from PDMS-2).

*Note: The score represents the performance of each task against criteria provided as follows: 0 if the child cannot execute the task; 1 if the execution is partial; and 2 if the execution is complete.

PDMS-2: Peabody Developmental Motor Scales – Second Edition.

Motion capture technology

Motion capture technology facilitates the recording and recognition of human movements. Using devices, such as optical and non-optical sensors, it allows users to interact with virtual environments by recognising their movements in the real world.

Different motion capture systems provide advantages and present challenges. 19 Non-optical systems such as inertial measurement units (IMUs), which capture acceleration and rotational information via gyroscopes, accelerometers or magnetometers, may have low computational cost but require regular calibrations due to the magnetic distortions from the environment. Optical systems allow users to move freely, but their vision algorithms must take into account the background noise, changes in lighting, camera motion or occlusions. 20

The choice of system will determine the available range of motion recognition. In terms of non-optical technology, IMUs such as the Nintendo Wiimote™ provide acceleration and direction metadata which enable the recognition of upper limb movements, when held by the user, and gait, when attached to the waist. Though this metadata is relevant for motor skills recognition, attaching a controller to children or asking them to hold it while completing motor tasks may be impractical and interfere with the activities and data capture. Pressure mats, such as the Nintendo Wii BalanceBoard™, provide information about the displacement of the body's gravity point, and dance mats offer data on the body's position, usually limited to five spots. While mats can be used to collect motion data, the information provided by these technologies is limited to balance-related motor skills.

In terms of optical technology, a digital skeleton, consisting of the position in space of each joint of the user's body, can be extracted using colour-based, silhouette geometry, deformable shape and motion-based models. 21 In this regard, the Sony EyeToy™ can provide information only on the upper body. In contrast, depth sensors, such as the Microsoft Kinect™, can capture the full body by extracting the 3D position of the joints. The latter provides advantages for motor skills recognition in children. Namely, it captures relevant data of the full body; it is unintrusive, allowing users to move freely as they perform motor tasks; it does not require maintenance or a controlled environment; and it is affordable (<250€).

Although the Microsoft Kinect™ does not meet the gold standard in kinematics measurements due to lack of fine accuracy, it is a trade-off between (a) the accuracy of very expensive Vicon systems for optical sensors, which require a controlled environment and are considered the gold standard in clinical studies and (b) the affordability and accessibility of a device that offers potential as a low-cost analysis tool. 22

Motion capture technology for motor skills recognition

The use of motion capture technology is well documented in wellbeing, 23 education 24 and physical therapy. 25 Studies reporting on the potential of Microsoft Kinect™ to recognise and assess posture, 26 upper-limb coordination, 27 visual motor integration 28 and manipulative motor skills 29 have been conducted. However, its application to assess motor development in children is extremely scarce.

In terms of locomotor skills, Rosenberg et al. 30 developed the Kinect Action Recognition Tool (KART) to detect vertical jumps and side steps. To analyse the accuracy of their algorithms, they recorded the users’ skeletons while playing games. Two evaluators watched the skeletons’ videos, counted the jumps and side steps and compared their results to the algorithms’ output. They reported a system accuracy of 81% for jumps and 58% for side steps. Pfister et al. 31 compared the accuracy of the Microsoft Kinect™ and the Vicon system in detecting users’ gait. They concluded that Kinect™'s gait accuracy is insufficient for clinical use. However, Li et al. 32 reported that the reliability of Kinect™'s skeleton poses can be improved by adding human skeleton constraints. While these works explore the accuracy of motor skills recognition, they do so with adult participants. Thus, the systems were not designed to account for developmental milestones, nor is the reported recognition accuracy applicable to motor skills recognition in developing children.

In this regard, Suzuki et al. 33 developed a model that recognises 13 gross motor skills from 2D videos. They trained and tested their model on data obtained from 22 children aged 4–6 years and obtained a cross-validation detection rate of 99.5%. However, their model is limited to the recognition of the motions without taking into account criteria relevant to the skills, the child's chronological age or their developmental stage.

MotorSense

MotorSense is a motion-based application that recognises and interprets the execution of locomotor tasks in real time. It is informed by the developmental sequences of motor skills 1 and motor development assessment scales 12 and developed with the Unity 3D engine.

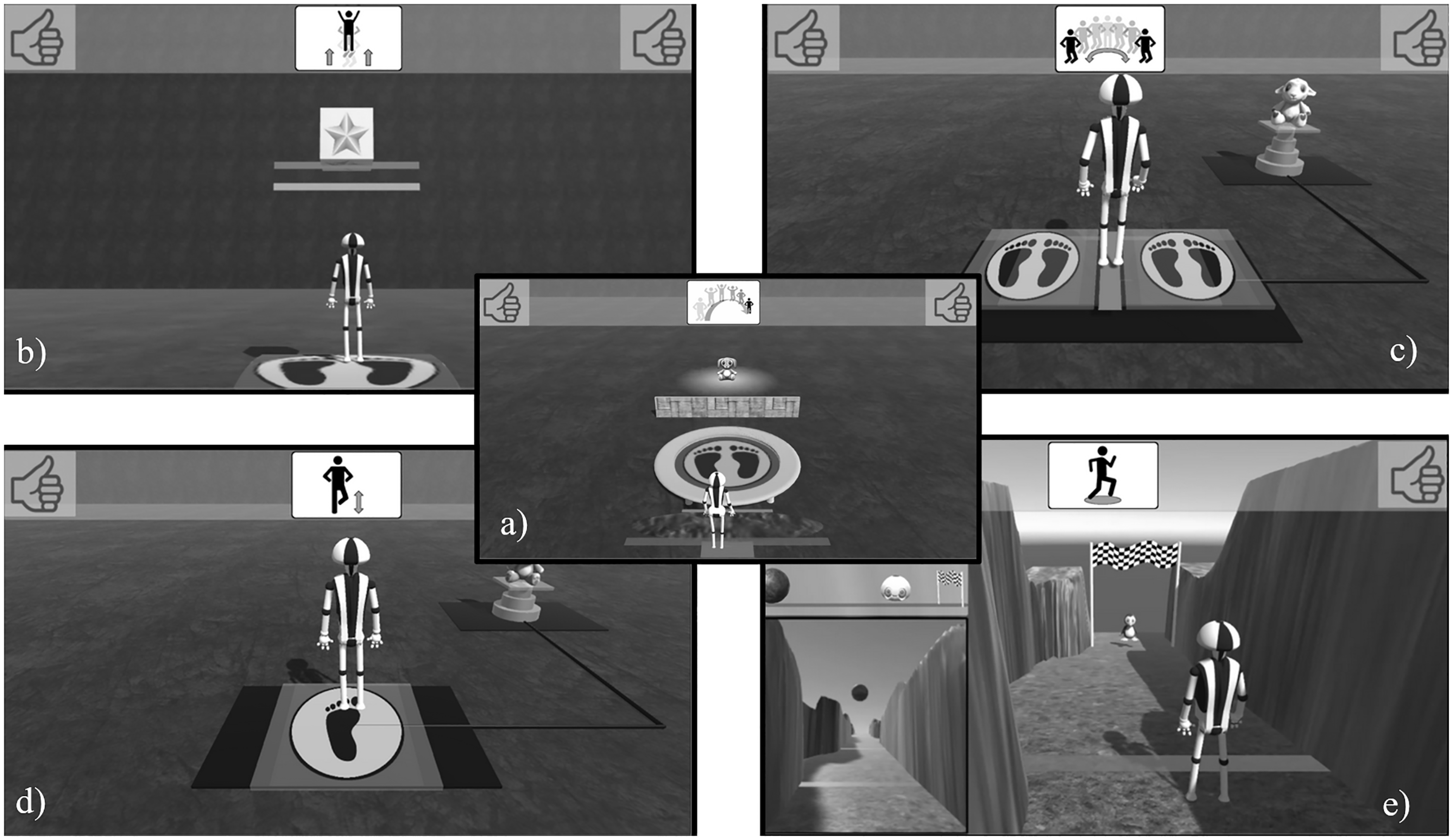

Currently, it provides activities to perform five motor tasks graded by chronological age and developmental stage (Figure 1 (a) to (e)): (a) jump forward, (b) jump high, (c) jump sideways, (d) hop and (e) jog on the spot. 13

MotorSense activities: (a) jump forward, (b) jump high, (c) jump sideways, (d) hop and (e) jog on the spot.

Users interact with and control MotorSense through their body motions as illustrated in Figure 2.

Child performing the motor tasks hop, side jump and jog on the spot (left to right) with the MotorSense activities and Activity Mat.

The MotorSense activities guide users to perform the various motor tasks. Their design is minimalist to cater for children at different cognitive developmental stages and incorporates the following features (Figure 3): (1) an avatar that represents the child in the virtual world and mirrors his/her movements; (2) animated tutorials that model the execution of the tasks; (3) graphical metaphors to prompt the execution of the tasks and guide users. For instance, in the jump forward activity, users are presented with a trampoline to elicit them to jump on it; and (4) graphical metaphors to prevent participants from performing tasks differently to the expected. For example, in the jump forward activity, users are presented with a puddle of water between the avatar and the trampoline to prevent them from walking or running instead of jumping. 13

Example of design features MotorSense illustrated in the jump forward activity.

From a technical perspective, MotorSense connects to the Microsoft Kinect v2™ via the Microsoft software development kit (SDK) to obtain the 3D position of each joint of the user's skeleton. MotorSense uses advanced algorithms to detect the users’ execution of motor skills in real time. The algorithms take the 3D skeleton joint positions provided by the sensor as input, extract the relevant features (positions and angles of specific joints in time and space) and classify the motion. The extraction of features for each motor task is informed by, and adheres to, the criteria provided by the developmental sequence of motor skills. 1 We opted for heuristics, a set of rules programmed to solve a problem accurately, because they have the advantage of meeting real-time constraints without relying on datasets. In this regard, although machine learning models can classify elaborate body motions involving several complex variables, they require large datasets and must integrate temporal and online information to meet real-time constraints. 34

The class diagram of MotorSense (Figure 4) provides an overview of the system. The central class is called ‘MotionDetection’ and its function is to manage the entire module. The ‘MotionDetection’ object owns a ‘KinectController’ whose objective is to read the raw data provided by the relevant SDK and to update the 3D positions of the joints of the ‘Body’ object. The ‘Body’ is the main element of this module: it stores the skeleton position, analyses the skeleton and defines the posture of the skeleton at any point in time. The ‘Body’ object continuously checks whether the user is standing on one foot, on two feet or in the air. To do so, the algorithm takes into account the ground, the position of the feet, the position and angles of the knees and the lower back position of the spine. The resulting state is stored in the ‘FeetState’ attribute, which can be read by any ‘Motion’ detector. We have implemented the detection algorithms for the motions ‘Walk’ and ‘Jump’.

Class diagram of MotorSense.

The ‘Walk’ algorithm detects whether the user is taking steps by making the algorithm track the ‘FeetState’ of the ‘Body’. Accordingly, every time the ‘Body’ changes to ‘RightFootOnGround’ or ‘LeftFootOnGround’ it means a step has been taken. When walking, one foot is always on the ground, so the ‘IsRunning’ boolean is set to true when the ‘OnAir’ state is detected between two steps.
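The step-counting rule described above can be sketched as follows. This is a minimal illustration in Python rather than the Unity implementation; the function name `count_steps`, the state name `BOTH_FEET_ON_GROUND` and the per-frame list of states are assumptions made for the example, while `LeftFootOnGround`, `RightFootOnGround` and `OnAir` follow the names used in the text.

```python
from enum import Enum


class FeetState(Enum):
    BOTH_FEET_ON_GROUND = "BothFeetOnGround"  # hypothetical name, not in the text
    LEFT_FOOT_ON_GROUND = "LeftFootOnGround"
    RIGHT_FOOT_ON_GROUND = "RightFootOnGround"
    ON_AIR = "OnAir"


SINGLE_FOOT = {FeetState.LEFT_FOOT_ON_GROUND, FeetState.RIGHT_FOOT_ON_GROUND}


def count_steps(states):
    """Return (steps, is_running) for a per-frame sequence of FeetState values.

    A step is counted each time the state changes to a single-foot state.
    Running is flagged when an OnAir state is seen between two steps.
    """
    steps = 0
    is_running = False
    airborne_since_last_step = False
    previous = None
    for state in states:
        if state == FeetState.ON_AIR:
            airborne_since_last_step = True
        elif state in SINGLE_FOOT and previous is not None and state != previous:
            steps += 1
            if steps > 1 and airborne_since_last_step:
                is_running = True
            airborne_since_last_step = False
        previous = state
    return steps, is_running


B, L, R, A = (FeetState.BOTH_FEET_ON_GROUND, FeetState.LEFT_FOOT_ON_GROUND,
              FeetState.RIGHT_FOOT_ON_GROUND, FeetState.ON_AIR)
walking = [B, L, L, B, R, B, L]
jogging = [B, L, A, R, A, A, L]
print(count_steps(walking))
print(count_steps(jogging))
```

In this sketch a walking sequence never passes through `OnAir`, so only the jogging sequence sets the running flag.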

The ‘Jump’ algorithm detects all types of jumps including hops. The algorithm tracks the body and stores the last position of the feet before ‘OnAir’ is detected and the first position of the feet when the ‘FeetState’ is no longer ‘OnAir’. From these data it is possible to analyse the type of jump. First, we establish the displacement vector to know whether it is a high, forward, back or side jump. Then, we determine which foot was the last to take off (left, right, both) and which foot was the first to land. If it was the same foot, the user performed a hop. If the two feet took off and landed at the same time, the user executed a jump. If the user took off with one foot and landed with the other, then it is considered a leap.
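The classification rules above can be illustrated with a short Python sketch. The function names, the axis convention (x lateral, y vertical, z depth, with forward meaning towards the sensor) and the 10 cm horizontal threshold are assumptions made for the example; the text does not specify them, nor how mixed one-foot/two-feet cases are handled.

```python
import math


def foot_pattern(takeoff_foot, landing_foot):
    """Classify by the last foot to take off and the first foot to land.

    Both arguments are "left", "right" or "both".
    """
    if takeoff_foot == "both" and landing_foot == "both":
        return "jump"
    if "both" in (takeoff_foot, landing_foot):
        return "unclassified"  # mixed case not described in the text (assumption)
    if takeoff_foot == landing_foot:
        return "hop"
    return "leap"


def jump_direction(takeoff, landing, min_horizontal=0.10):
    """Classify the displacement vector between two (x, y, z) positions in metres.

    min_horizontal is a hypothetical threshold below which the jump is
    treated as a high (vertical) jump.
    """
    dx = landing[0] - takeoff[0]
    dz = landing[2] - takeoff[2]
    if math.hypot(dx, dz) < min_horizontal:
        return "high"
    if abs(dx) > abs(dz):
        return "side"
    return "forward" if dz < 0 else "back"  # assumes the child faces the sensor


def jump_distance(takeoff, landing):
    """Euclidean distance between take-off and landing positions."""
    return math.dist(takeoff, landing)


takeoff, landing = (0.0, 0.0, 2.5), (0.02, 0.0, 1.9)
print(foot_pattern("both", "both"), jump_direction(takeoff, landing),
      round(jump_distance(takeoff, landing), 2))
```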

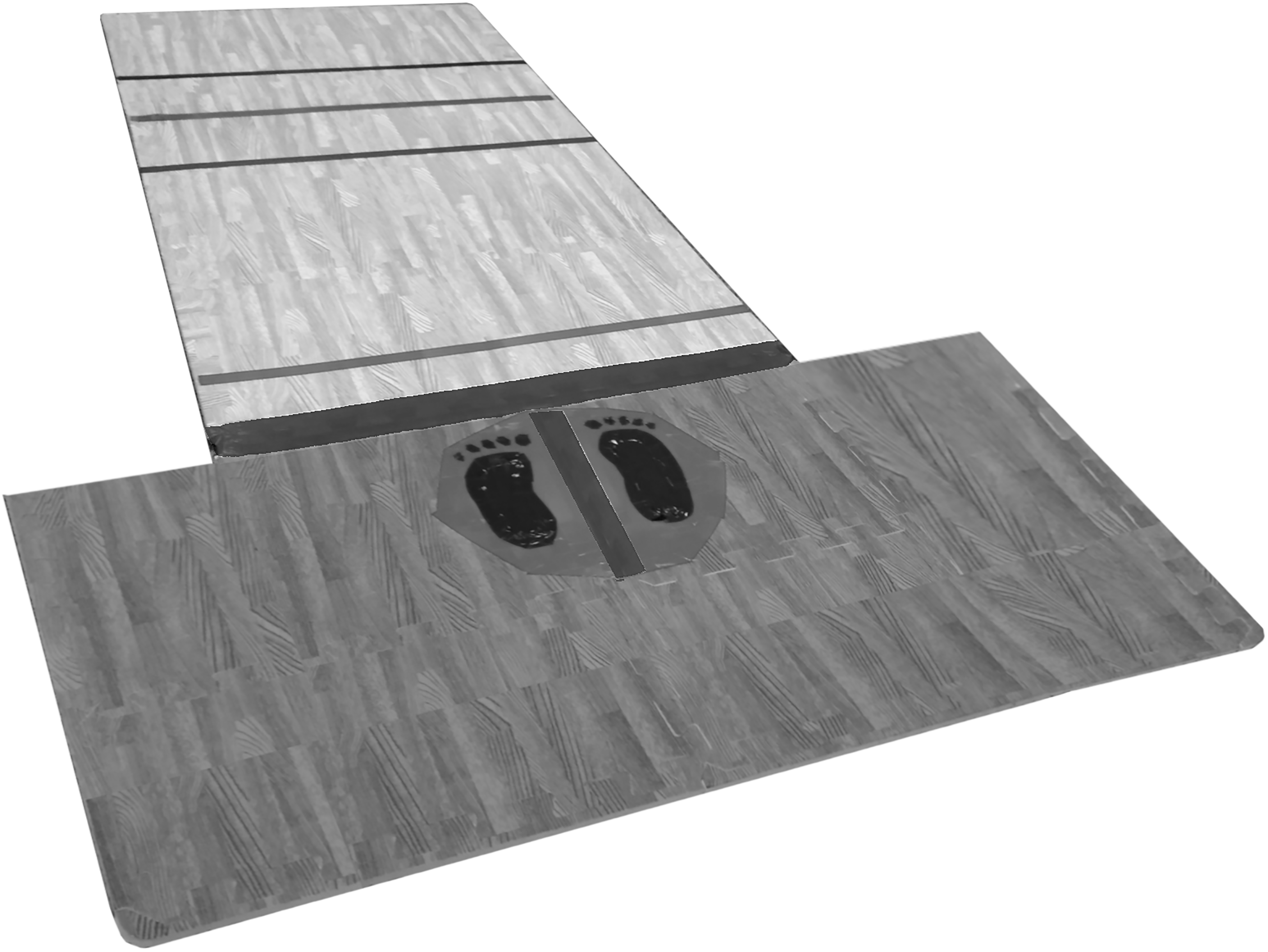

Besides the MotorSense system, we designed the MotorSense Activity Mat, which is a foam mat with markers to provide concrete guidance in the completion of the tasks (Figure 5). Concrete prompts especially support children in earlier cognitive development stages, operating at concrete thinking, who could have difficulties following virtual tasks.

MotorSense Activity Mat.

To report on the users’ assessment MotorSense saves metadata: skeletons (series of 3D positions of the body joints); videos (series of coloured images); and task completion results (execution of locomotor skills); in a database. This database can be accessed by professionals via an interface that contains a series of analysis tools which provide information on the children's performance.

Methods

Study design

We conducted an observational study aimed at observing and recording what happened. Research ethics approval was obtained from the authors’ university research ethics committee. All stakeholders (the children, their legal guardians, the school board and teachers) were informed of the study details and those required to do so signed participation consent forms. Participants were made aware of the video recording and informed they could withdraw from the study at any time. They were aware of the researchers’ presence and its purpose. The researchers did not participate in the execution of the tasks and their involvement was limited to providing instructions on how to do the activities before participants were presented with each task.

Setting

The study was conducted in the sports hall of a rural public primary school in Ireland. Two identical setups were installed at opposite sides of the hall and included a laptop, a projector and a Microsoft Kinect v2™. For setup 1, the MotorSense Activity Mat (Figure 5) was placed on the ground in front of the participants to provide the users with concrete guidance in the real world. For setup 2, a black cross was drawn on the ground to mark the starting position. The objective of the two setups was to explore whether the guidance provided by the MotorSense Activity Mat had any effect on the execution of the motor tasks. To reduce the learning bias effect, the participants entered the sports hall in pairs and were randomly allocated to one of the two setups. After completing the activities, they swapped setups. The participants completed all the activities twice under each setup and were supervised at all times. Although not relevant for this study, on average it took 12 minutes to complete all the activities in each setup.

Participants

An opportunistic sample of 16 children from the same school participated in the study: 8 from the Junior Infant class (4–5 years old) and 8 from the Senior Infant class (5–6 years old). The only inclusion criterion was an age between 2 and 7 years. No exclusion criteria were adopted and none of the participants were diagnosed with motor developmental delay.

Data collection and processing

Data collection was unobtrusive and automated by MotorSense as the participants performed the activities. The following data was collected:

- Anonymised colour videos of the participants performing the activities with a frequency of 30 frames per second obtained by the Microsoft Kinect SDK.
- 3D skeleton animations of the participants performing the tasks corresponding to 19 body joints calculated by the sensor with a frequency of 30 frames per second.
- Performance data of the participants performing the activities calculated by MotorSense.

In total, 672 colour videos and 672 series of skeletons data were recorded and used to extract three types of users’ performance data:

- (a) Performance data from the real execution: Analysis of the coloured videos performed by humans.
- (b) Performance data from the sensor acquisition: Analysis of the 3D skeletons animation performed by humans.
- (c) Performance data calculated by MotorSense: Analysis of the 3D skeletons animation performed by MotorSense.

The following sections define the performance data for each motor skill assessed by MotorSense, describe the methods to evaluate the execution of each skill and outline the objectives of each activity.

Jump: forward, high and sideways

Performance data

The performance data for the three types of jumps is the geometric distance between the positions of the last foot taking off from the ground and the first foot landing back on the ground.

To calculate the distance jumped from the coloured videos and the animation of the 3D skeletons, the application ‘imageMeter’ (https://imagemeter.com/) was used. ‘imageMeter’ takes an image and defines a parallelepiped of known dimensions that represents the ground perspective, from which the real distance between two points can be determined. The parallelepiped was defined using as reference the MotorSense Activity Mat in setup 1 and the black cross on the floor in setup 2. The 2D take-off and landing points of each jump were extracted from each video and skeletons animation and then input into ‘imageMeter’. The distance calculated by ‘imageMeter’ between those two 2D points is the distance jumped by the participants.

Activity objectives

Each activity was divided into sub-levels parameterised by concrete task objectives defined by the PDMS-2 tool (Table 1):

- Jump forward: Given that all the participants were over 4 years old (48 months), we combined the 47- and 53-month target (Table 1) into four sub-levels where the completion objectives were jumping over 30, 60, 76 and 90 cm, respectively.
- Jump high: The oldest age target for this skill is 45 months (Table 1). Therefore, the activity completion objectives were jumping high above 1, 2.5 and 8 cm, respectively.
- Jump sideways: This skill only targets children aged 59 months (Table 1). Therefore, the completion objectives for the sub-levels were jumping sideways back and forth 1, 2 and 3 cycles, respectively.

Hop: On the right/left leg

Performance data

The performance data for the hop skill is the number of times a child hops on the same leg. A hop was counted when the same foot took off the ground and landed back while the other foot remained off the ground. To establish the number of hops executed, the researchers watched the videos and skeletons animation and counted the numbers of hops.

Activity objectives

This motor skill only targets children aged 47 months (Table 1). So, the completion objectives for the sub-levels were 1, 3 and 5 hops per leg.

Jog on spot

The Kinect v2™ sensor implemented for the current study has a range limit of 1.2–3.5 m. This presented a challenge for running activities. To bypass this limitation, participants were asked to jog on the spot when presented with running activities.

Performance data

The performance data for the jog on the spot skill is the number of steps taken with both feet. A step was counted when the same foot took off the ground and landed back. To establish the number of steps taken, the researchers watched the videos and skeletons animation and counted the numbers of steps.

Activity objectives

To score the activity, we estimated the distance run by counting the number of steps taken by each participant, applying the following rationale:

1. The length of a walking step of a 4 to 7-year-old child is between 40 and 60 cm. 35
2. To walk a mile, instead of run it, an average ratio of 1.4 more steps is required. 36
3. The shortest walking step length (40 cm) was multiplied by the previous ratio (1.4). So, one running step is 40 × 1.4 = 56 cm.

Applying this rationale, the objectives for the run skill reported in the PDMS-2, which are 6, 9 and 13.7 m under 6 seconds (Table 1), were converted into the execution of 12, 18 and 27 steps, respectively.

Quantitative variables

To answer RQ1 regarding the capability and accuracy of MotorSense to recognise and analyse the execution of locomotor skills, we extracted the following performance data for each motor skill:

- (a) Performance data from the real execution (coloured videos).
- (b) Performance data from the sensor acquisition (3D skeletons animation).
- (c) Performance data calculated by MotorSense.

Then, we calculated the following two variables:

- Sensor accuracy (SA): which is the percentage error between the performance data of the real execution (a) and the performance data of the sensor acquisition (b). It was calculated as follows:
- MotorSense accuracy (MA): which is the percentage error between the performance data of the real execution (a) and the performance data of MotorSense (c). It was calculated as follows:

To answer RQ2 regarding whether the current accuracy of MotorSense is adequate to support locomotor development assessment, we determined whether participants ‘passed’ or ‘failed’ the locomotor tasks. This was established by comparing the performance data against the activity objectives:

- Real Completion Task: ‘Passed’ if the performance data of the real execution (a) met the activity objective.
- MotorSense Completion Task: ‘Passed’ if the performance data of MotorSense (c) met the activity objective.
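As a minimal sketch of how these variables could be computed, the Python example below assumes that accuracy is taken as 100% minus the absolute percentage error relative to the real execution, and that an objective is met when the performance is greater than or equal to it. Both are assumptions made for illustration, since the exact formulas are not given here; the function names are hypothetical.

```python
def accuracy(real, measured):
    """Accuracy in percent, assumed here to be 100 minus the absolute
    percentage error of `measured` relative to `real`."""
    if real == 0:
        raise ValueError("real performance must be non-zero")
    error = abs(measured - real) / abs(real) * 100.0
    return max(0.0, 100.0 - error)


def completion(performance, objective):
    """'Passed' if the performance meets the activity objective (assumed >=)."""
    return "Passed" if performance >= objective else "Failed"


# Hypothetical forward jump: (a) real = 80 cm, (b) sensor = 76 cm, (c) MotorSense = 72 cm
real, sensor, motorsense = 80.0, 76.0, 72.0
sa = accuracy(real, sensor)        # sensor accuracy (SA)
ma = accuracy(real, motorsense)    # MotorSense accuracy (MA)
print(sa, ma)
print(completion(real, 76), completion(motorsense, 76))
```

With these made-up values, the real execution passes the 76 cm sub-level while the MotorSense measurement does not, which is the kind of disagreement the completion variables are meant to expose.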

Statistical analysis

To validate the internal consistency of the three types of performance data, (a) coloured video, (b) skeletons animation and (c) MotorSense, we ran Cronbach's alpha test with SPSS. An alpha score over 0.8 indicates a high level of consistency. In addition, we ran Cronbach's test to estimate the effect size of the participants, where a value of 0.2 or less means a small effect.
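For readers without SPSS, Cronbach's alpha can be reproduced with the standard formula α = k/(k − 1) × (1 − Σ item variances / variance of the totals), where k is the number of sources. The Python sketch below is illustrative only; the function name and the sample values are made up and are not the study data.

```python
from statistics import variance


def cronbach_alpha(items):
    """Cronbach's alpha for k items (lists of equal length, one value per observation)."""
    k = len(items)
    if k < 2:
        raise ValueError("at least two items are required")
    n = len(items[0])
    if any(len(item) != n for item in items):
        raise ValueError("all items must have the same number of observations")
    totals = [sum(item[i] for item in items) for i in range(n)]
    item_variances = sum(variance(item) for item in items)
    return k / (k - 1) * (1 - item_variances / variance(totals))


# Hypothetical jump distances (cm) from the three sources for five jumps
video = [62.0, 75.0, 48.0, 90.0, 81.0]
skeleton = [60.0, 77.0, 50.0, 88.0, 79.0]
motorsense = [58.0, 74.0, 47.0, 91.0, 83.0]
print(round(cronbach_alpha([video, skeleton, motorsense]), 3))
```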

To answer RQ1 regarding the accuracy of MotorSense to recognise and analyse the execution of locomotor skills, we conducted the following non-parametric analysis with SPSS because the data did not have a normal distribution:

- Two-independent sample Mann–Whitney U test to establish whether there was a significant effect between the SA and MA. A non-significant effect would mean that the detection of the algorithms matches the accuracy of the sensor.
- Two-related sample Wilcoxon signed-rank test on MA taking into account the samples with and without the MotorSense Activity Mat. A significant effect would mean that the MotorSense Activity Mat helps the algorithms detect the motions.
- Two-independent sample Mann–Whitney U test on MA taking into account the age of the participants. A significant effect between the Junior Infant and the Senior Infant groups would mean that the motion detection works better on a specific age group.
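To make the Mann–Whitney comparison concrete, the Python sketch below computes only the U statistic from rank sums, using average ranks for ties. It does not compute the p-value that SPSS reports, and the function name and sample accuracies are hypothetical.

```python
def mann_whitney_u(x, y):
    """Return the Mann-Whitney U statistic (the smaller of U1 and U2)."""
    combined = sorted((value, group) for group, sample in enumerate((x, y))
                      for value in sample)
    ranks = [0.0] * len(combined)
    i = 0
    while i < len(combined):
        j = i
        # Extend j over the run of tied values starting at i
        while j + 1 < len(combined) and combined[j + 1][0] == combined[i][0]:
            j += 1
        average_rank = (i + j) / 2 + 1  # ranks are 1-based
        for k in range(i, j + 1):
            ranks[k] = average_rank
        i = j + 1
    rank_sum_x = sum(r for r, (_, group) in zip(ranks, combined) if group == 0)
    n1, n2 = len(x), len(y)
    u1 = rank_sum_x - n1 * (n1 + 1) / 2
    u2 = n1 * n2 - u1
    return min(u1, u2)


# Hypothetical per-jump accuracies (%) for the sensor (SA) and MotorSense (MA)
sa = [91.0, 88.5, 93.2, 85.0, 90.1]
ma = [89.0, 87.5, 92.0, 84.0, 91.5]
print(mann_whitney_u(sa, ma))
```

A U value close to n1 × n2 / 2 indicates heavily overlapping samples, whereas a value near 0 indicates that one sample ranks almost entirely above the other.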

Finally, to address RQ2 regarding whether the current accuracy of MotorSense is adequate to support locomotor development assessment, we calculated the Cohen's kappa with SPSS to measure the inter-rater reliability between Real Completion Task and MotorSense Completion Task. A kappa > 0.8 shows a high level of agreement. The cross-tabulation also provides positive and negative errors. Negative error is when a participant fails the task, but MotorSense returns a pass. This type of error is important because it may hide developmental delay. A positive error is the opposite, when a participant passes the task but MotorSense returns a fail.
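The agreement measure and the two error types can be sketched in Python as follows. Cohen's kappa is computed with the standard formula κ = (p_o − p_e) / (1 − p_e); the function names and the pass/fail labels in the example are hypothetical, and the error definitions follow the wording used in this study.

```python
from collections import Counter


def cohens_kappa(real, automatic):
    """Cohen's kappa between two equally long lists of labels."""
    n = len(real)
    observed = sum(r == a for r, a in zip(real, automatic)) / n
    real_counts, auto_counts = Counter(real), Counter(automatic)
    labels = set(real) | set(automatic)
    expected = sum(real_counts[label] * auto_counts[label] for label in labels) / n ** 2
    if expected == 1:
        return 1.0  # both raters used a single identical label throughout
    return (observed - expected) / (1 - expected)


def error_counts(real, automatic):
    """Negative error: real 'Failed' but automatic 'Passed'.
    Positive error: real 'Passed' but automatic 'Failed'."""
    pairs = list(zip(real, automatic))
    negative = sum(r == "Failed" and a == "Passed" for r, a in pairs)
    positive = sum(r == "Passed" and a == "Failed" for r, a in pairs)
    return {"negative": negative, "positive": positive}


real_task = ["Passed"] * 5 + ["Failed"] * 5
motorsense_task = ["Passed"] * 4 + ["Failed"] + ["Failed"] * 4 + ["Passed"]
print(round(cohens_kappa(real_task, motorsense_task), 3))
print(error_counts(real_task, motorsense_task))
```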

Results

We processed 116 jumps forward, 88 jumps high, 302 side jumps, 831 hops (415 on the right and 416 on the left leg) and 2415 steps. As described in the data collection section, we extracted performance data for each motor skill from three different sources: (a) coloured video; (b) skeletons animation; and (c) MotorSense. We calculated the internal consistency Cronbach's alpha of these performance data, obtaining the following scores: jumps forward (α = 0.98); jumps high (α = 0.973); side jumps (α = 0.994); hops (α = 0.964); and steps (α = 0.985).

Since the data was highly consistent for each skill, we calculated the two quantitative variables: ‘Sensor accuracy’ (SA) and ‘MotorSense accuracy’ (MA); where SA describes the accuracy of capture of the sensor and MA describes the accuracy of MotorSense recognising the execution of the motor skills.

The results pertaining to RQ1 (To what extent and how accurately can MotorSense recognise and analyse the execution of the five locomotor skills investigated in this study?) are reported in Table 2 and discussed in the Discussion section.

Statistical results of the comparison of the detection accuracies between the Sensor (SA) and MotorSense system (MA) for the five different motor skills (jump forward, jump high, jump sideways, hop and jog on spot).

A: accuracy; CI: interval of confidence; DV: dependent variable; IV: independent variable; JI: Junior Infant (4–5-year old); Low: lower bound of CI; MA: MotorSense accuracy; Mat: data from the setup using the foam mat; No mat: data from the setup without the foam mat; Nb: total number of motor skills recorded; P: number of participants; SA: sensor accuracy; SI: Senior Infant (5–6-year old); SD: standard deviation; Upp: upper bound of CI; U = result of Mann–Whitney U Test; Z = result of Wilcoxon signed-rank test.

P-values in bold with * represent significant outcomes (see in Discussion section).

To answer RQ2 (Is the current accuracy of MotorSense adequate to support locomotor development assessment?), we calculated the Cohen's kappa values that show the level of agreement between Real Task Completion (equivalent to traditional assessment) and the MotorSense Task Completion (automatic assessment). These levels of agreement are jump forward (kappa = 0.712, p < .001); jump high (kappa = 0.794, p < .001); jump sideways (kappa = 0.857, p < .001); hop (kappa = 0.842, p < .001); and jog (kappa = 0.784, p < .001). Figure 6 illustrates the percentage of matches as well as the negative and positive errors between Real Task Completion and MotorSense Task Completion.

Level of agreement between Real Task Completion and MotorSense Task Completion.

Discussion

MotorSense's motion detection accuracy

Jump forward

MotorSense detects the distance jumped with an 89.96% accuracy and with no significant difference with the SA (Table 2), which means the detection error of the algorithm is down to the accuracy of the sensor. While Suzuki et al. 33 reported a 99.5% cross-validation detection rate of jumps by training a machine learning model from videos and Rosenberg et al. 30 reported a detection rate of 81% with the KART system, it is worth noting that these studies only report on the detection of the jump without taking into account the actual distance jumped by the children, which is a core criterion to assess the development of this locomotor skill.

No significant differences in accuracy were found between the Junior and Senior Infant cohorts, which indicates MotorSense's motion detection is robust from the age of 4. The significant effect found in the use of the MotorSense Activity Mat shows that having concrete markers to see the distances to be jumped might have provided children with the impetus to complete the tasks.

Jump high

MotorSense detects the height of jumps with an 83.34% accuracy and with no significant difference with SA (Table 2). This result seems to validate the algorithms. The accuracy for jump high is slightly lower than for jump forward because the feet positions provided by the sensor are usually unstable: fluctuations of a few centimetres from one frame to the next were observed without the user moving in the real world. To minimise the impact of these minor fluctuations, and filter them out, a detection threshold between the feet being on the ground and in the air was set.
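The effect of such a threshold can be illustrated with a simplified Python sketch that considers only the height of each foot above the ground. The 3 cm threshold, the function name and the `BothFeetOnGround` state name are assumptions made for the example; the actual value and the additional joint information used by MotorSense (knees and spine) are not modelled here.

```python
def feet_state(left_height, right_height, ground=0.0, threshold=0.03):
    """Return a feet state from foot heights in metres.

    A foot counts as airborne only if it is more than `threshold` above the
    ground, so sensor jitter of a few centimetres is ignored.
    """
    left_up = left_height - ground > threshold
    right_up = right_height - ground > threshold
    if left_up and right_up:
        return "OnAir"
    if left_up:
        return "RightFootOnGround"
    if right_up:
        return "LeftFootOnGround"
    return "BothFeetOnGround"  # hypothetical state name


print(feet_state(0.01, 0.02))   # jitter while standing still
print(feet_state(0.10, 0.12))   # both feet clearly off the ground
```

The trade-off is that very low or very fast jumps may stay under the threshold and go undetected.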

The detection ratio is robust from the age of 4 since no significant difference was found between the two cohorts of participants. The MotorSense Activity Mat did not affect the result of this motor skill execution.

Jump side

MotorSense detects side jumps with an 85.63% accuracy and with a significant difference with the SA (Table 2). To understand this difference, the animations of the 3D skeletons were examined. Most of the missed side jumps were due to the ‘high’ speed at which participants executed the jumps, which made the positions of the skeleton's feet likely to remain under the ‘OnAir’ threshold.

MotorSense's accuracy for Senior Infants (91.75%) was significantly better than that for Junior Infants (82.31%). This skill is usually acquired around 59 months (Table 1). So, the Junior Infants may not have yet reached the Proficiency Stage 1 for a stable execution of the skill.

The significant effect found in the use of the MotorSense Activity Mat illustrates that having concrete markers to see where to jump helped with the proper execution of the motor task.

Hop

MotorSense detects 74.58% of the hops, with no significant difference from the SA, which stands at 69.13% (Table 2). It is worth noting that when a child is standing on one foot in the hop position, the other foot is hidden from the camera and the sensor's automatic skeleton recognition attempts to estimate the position of the hidden foot. Our ‘Jump’ algorithm identifies these detection misinterpretations, which resulted in an improvement of approximately 5% in MA.

There is a significant difference between the Senior (81.07%) and Junior (68.11%) Infant cohorts. Although children acquire the ability to hop at around 57 months (Table 1), the Junior Infants’ execution of the skill is not as proficient as that of the Senior Infants. 1 No significant difference was found regarding the use of the MotorSense Activity Mat.

Jog

The MotorSense ‘Walk’ algorithm detects the running steps with an accuracy of 92.34% and no significant difference from the SA (Table 2). This validates the algorithm, whose accuracy is limited by the sensor's precision.

Regarding age, we did not find significant differences in accuracy between the two cohorts of participants, which indicates that MotorSense is robust for assessing children from the age of 4. No significant difference was found regarding the use of the Activity Mat either.

The adequacy of the current accuracy of MotorSense to support locomotor development assessment

The Cohen's kappa values show a substantial to high level of agreement on the completion of the motor tasks between the human evaluation of the videos and the automatic results from the MotorSense system. These values are similar to those reported in the literature 27 and suggest that the assessment generated by MotorSense compares well to standard practice.
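For readers unfamiliar with the statistic, the sketch below shows how Cohen's kappa is computed for two raters, using the standard definition κ = (p_o − p_e) / (1 − p_e), where p_o is the observed agreement and p_e the agreement expected by chance. The function name `cohen_kappa` and the pass/fail labels are illustrative assumptions and are not the study's data.

```python
from collections import Counter
from typing import Hashable, Sequence


def cohen_kappa(rater_a: Sequence[Hashable], rater_b: Sequence[Hashable]) -> float:
    """Cohen's kappa for two raters labelling the same items."""
    if len(rater_a) != len(rater_b) or len(rater_a) == 0:
        raise ValueError("Both raters must label the same non-empty set of items.")
    n = len(rater_a)
    # Observed agreement: share of items given the same label by both raters.
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Chance agreement: product of each rater's marginal label frequencies.
    counts_a, counts_b = Counter(rater_a), Counter(rater_b)
    labels = set(counts_a) | set(counts_b)
    expected = sum(counts_a[label] * counts_b[label] for label in labels) / (n * n)
    if expected == 1.0:
        return 1.0  # both raters used a single identical label throughout
    return (observed - expected) / (1.0 - expected)


# Made-up example: a human rater and an automatic system on ten task attempts.
human = ["pass"] * 6 + ["fail"] * 4
system = ["pass"] * 5 + ["fail"] + ["fail"] * 3 + ["pass"]
kappa = cohen_kappa(human, system)
```

In this made-up example the raters agree on 8 of 10 attempts (p_o = 0.80) and the chance agreement is p_e = 0.52, so kappa is about 0.58. This is why kappa is more conservative than raw percentage agreement.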

However, it is important to note the presence of positive errors, when participants pass the task but MotorSense returns a fail, as well as negative errors, when participants fail the task but MotorSense returns a pass. These types of errors are significant and highlight that MotorSense can support rather than replace human expert assessment.
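The two error types defined above can be tallied from paired outcomes as in the following sketch. The function name `tally_errors` and the sample outcomes are hypothetical and serve only to make the definitions concrete: `True` stands for a pass and `False` for a fail.

```python
from typing import Dict, Sequence


def tally_errors(human: Sequence[bool], system: Sequence[bool]) -> Dict[str, int]:
    """Tally agreements and the two error types between human and system outcomes."""
    if len(human) != len(system):
        raise ValueError("Both outcome lists must have the same length.")
    tally = {"agree": 0, "positive_error": 0, "negative_error": 0}
    for human_pass, system_pass in zip(human, system):
        if human_pass == system_pass:
            tally["agree"] += 1
        elif human_pass:
            # Participant passed according to the human, but the system returned a fail.
            tally["positive_error"] += 1
        else:
            # Participant failed according to the human, but the system returned a pass.
            tally["negative_error"] += 1
    return tally


# Made-up outcomes for ten task attempts.
human_outcomes = [True] * 6 + [False] * 4
system_outcomes = [True] * 5 + [False] + [False] * 3 + [True]
result = tally_errors(human_outcomes, system_outcomes)
```

Reporting the two error counts separately, rather than a single agreement figure, makes it visible whether the system tends to under-credit or over-credit children's performance.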

Limitations

The results reported should be interpreted with caution: the study had a small effect size (Cohen's d < 0.2) and a narrow age range (4–6 years old), and the extraction of performance data was conducted by the researchers. Furthermore, the set of skills detected by MotorSense is currently limited to a subset of the overall motor development assessment framework. Additionally, due to the 1.2–3.5 m range limit of the Kinect v2™ sensor used in the current study, the standard evaluation of the ‘run’ task was performed and analysed as ‘jogging on the spot’. The latter is a distinct movement pattern that requires more cognitive function to suppress forward locomotion and presents other movement patterns. 37

Conclusions

Research on the use of motion capture technology to assess motor development in children is very scarce. The assessment of motor development in children is crucial because motor development has been related to cognitive function. 3 Implementing algorithms for decision support to assess motor development in a timely and continuous fashion in children's natural environments is paramount. The evaluation of MotorSense shows a high accuracy of detection of the motor skills: jump forward (89.96%), jump high (83.34%), jump sideway (85.63%), hop (74.58%) and jog (92.34%). Such detection accuracy allows MotorSense to record the performance of the participants and to calculate whether the execution performance meets the expected age and developmental stage parameters. The current study highlights a high level of agreement between the human and MotorSense assessments of task completion for: jump forward (91%), jump high (99%), jump sideway (93%), hop (94%) and jog (92%). These results illustrate the potential of motion capture technology as a feasible alternative to increase access to, and support the tele-assessment of, motor development at schools or even at home.

The overall contributions of this study are:

A framework with valid heuristics, based on pattern recognition, to automatically identify in real time the execution of graded age-related developmental locomotor skills.

The validation of a framework based on low-cost motion capture technology that may support the tele-assessment of motor development and widen its access.

A set of engaging kinaesthetic locomotor activities to guide children executing graded age-related developmental locomotor tasks and assess their locomotor skills.

The premise that motion capture technology can be used to support early detection of motor development delays in order to promote early interventions.

Footnotes

Acknowledgement

The authors would like to thank the rural primary school in Ireland for their availability and time as well as all the children and parents who agreed to participate in our study.

Contributorship

Benoit Bossavit: Conceptualization, Methodology, Data curation, Writing—Original draft preparation, Visualization, Investigation, Writing—Reviewing and Editing. Inmaculada Arnedillo-Sánchez: Conceptualization, Supervision, Writing—Reviewing and Editing. All authors certify that they have participated sufficiently in the work to take public responsibility for the content, including participation in the concept, design, analysis, writing or revision of the manuscript.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Ethics approval

The ethics committee of Trinity College Dublin approved this study (REC number: 20190217).

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the EU H2020 Marie Skłodowska-Curie Career-FIT (grant number 713654).

Guarantor

Benoit Bossavit.