Abstract

Background

The potential for machine learning to disrupt the medical profession is the subject of ongoing debate within biomedical informatics.

Objective

This study aimed to explore psychiatrists’ opinions about the potential impact of innovations in artificial intelligence and machine learning on psychiatric practice.

Methods

In Spring 2019, we conducted a web-based survey of 791 psychiatrists from 22 countries worldwide. The survey measured opinions about the likelihood that future technology would fully replace physicians in performing ten key psychiatric tasks. This study involved qualitative descriptive analysis of written responses (“comments”) to three open-ended questions in the survey.

Results

Comments were classified into four major categories in relation to the impact of future technology on: (1) patient-psychiatrist interactions; (2) the quality of patient medical care; (3) the profession of psychiatry; and (4) health systems. Overwhelmingly, psychiatrists were skeptical that technology could replace human empathy. Many predicted that ‘man and machine’ would increasingly collaborate in undertaking clinical decisions, with mixed opinions about the benefits and harms of such an arrangement. Participants were optimistic that technology might improve efficiencies and access to care, and reduce costs. Ethical and regulatory considerations received limited attention.

Conclusions

This study presents timely information on psychiatrists’ views about the scope of artificial intelligence and machine learning on psychiatric practice. Psychiatrists expressed divergent views about the value and impact of future technology with worrying omissions about practice guidelines, and ethical and regulatory issues.

Keywords

Introduction

Background

Worldwide, it is estimated that 1 in 6 people suffer from a mental health disorder, and the personal and economic fallout is immense. 1 Psychiatric illnesses are among the leading causes of morbidity and mortality; between 2010 and 2030 this burden is estimated to cost the global economy $16 trillion. 2 Among younger people, suicide is the second or third leading cause of death. 2 Older generations are also affected by mental illness: currently, an estimated 50 million people suffer from dementia worldwide, and the World Health Organization (WHO) predicts this will rise to 80 million by 2030. 3 Stigmatization, low funding and lack of resources – including considerable shortages of mental health professionals – pose significant barriers to psychiatric care.4,5 According to recent WHO data, the per-capita availability of psychiatrists in low-income countries is 100 times lower than in affluent countries. 2 Indeed, even in wealthy countries, such as the USA – which has around 28,000 psychiatrists 6 – those living in rural or poverty-stricken urban communities experience inferior access to adequate mental health care. It is anticipated that demographic and societal changes will put even greater pressure on mental health resources in the forthcoming decades. 5 These pressures include: ageing populations; increased urbanization (with associated problems of overcrowding, polluted living conditions, higher levels of violence, illicit drugs, and lower levels of social support); migration, at the highest rate recorded in human history; and the use of electronic communications, which has amplified concerns about the effects of the internet on mental health and sociality.7–10

Against these myriad challenges, recent debate has centered on the potential of big data, machine learning (ML) and artificial intelligence (AI) to revolutionize the delivery of healthcare.11–14 According to AI experts, machine learning has the potential to extract novel insights from “big data” – that is, vast accumulated information about individual persons – by yielding precise patterns relevant to patient behavior and health outcomes.15,16 More than an estimated 10,000 apps related to mental health are now available for download; the vast majority of these apps have not been subject to randomized controlled trials (RCTs), and many may even provide harmful ‘guidance’ to users. 17 Mining this information for regularities, informaticians argue, may produce precision in diagnostics, prognostics, and personalized treatment plans. 18

Aside from health information gathered via electronic health records and patient reports, an exponentially increasing volume of data is being accumulated via

When it comes to humanistic elements of medical care, many AI experts also argue that by outsourcing some aspects of medical care to machine learning, physicians will be freed up to invest more time in higher quality face-to-face doctor-patient interactions. 26 Going even further, and drawing on findings in the nascent field of affective computing, some informaticians speculate that in the long-term, computers may play a critical role in augmenting or replacing human-mediated empathy; for example, emerging studies suggest that under certain conditions, computers can surpass humans when it comes to accurate detection of facial expressions, and personality profiling.27,28

What do patients think about these advances? A study by Boeldt and colleagues found that patients were more comfortable than physicians with the use of technology to perform diagnostics. 29 Surveys suggest a high level of interest among patients in using mobile technologies to monitor their mental health. A recent US survey reported that 70 per cent of patients had an interest in using mobile technologies to track their mental health status. 30 Studies also indicate that patients from diverse socioeconomic and geographical regions express willingness to use apps to support symptom tracking and illness self-management.30,31 Recent findings also indicate that at least some patients with schizophrenia already use technology to manage their symptoms, or for help-seeking.31,32 However, increasing interest has not so far translated into high levels of usage of mHealth, and some surveyed patients express concerns about privacy. 33

Amid the debate, hype, and uncertainties about the impact of AI on the future of medicine, limited attention has been paid to the views of practicing clinicians including psychiatrists 29 – though there is evidence that this is changing.34–36 In 2018, a mixed methods survey of over 500 psychiatrists in France investigated attitudes to the use of disruptive new technologies. 37 The authors reported that there was moderate acceptability of connected wrist bands for digital phenotyping and ML-based blood tests and magnetic resonance imaging, but speculated that attitudes were “more the result of the lack of knowledge about these new technologies rather than a strong rejection”. 37 In addition, psychiatrists expressed concerns about the impact of technologies on the therapeutic relationship, data security and storage, and patient privacy. In 2019 a focus group study by Bucci and colleagues in the UK found that many mental health clinicians believed that more time and resources should be invested in staff training and resources rather than in the adoption of digital technologies, but some expressed fears that aspects of their job could be disintermediated. 38 However, only 4 psychiatrists participated in this study. Another small-scale survey of 131 mental health clinicians (n = 27 psychiatrists) in the USA, Spain and Switzerland investigated clinicians’ intentions to use and recommend e-health applications among patients with postpartum depression. 39 The survey reported that, compared with primary care doctors, midwives and nurses, psychiatrists and clinical psychologists attributed lower utility to e-health applications for assessing, diagnosing, and treating maternal depression.

Objectives

While current surveys into psychiatrists’ attitudes to ML/AI enabled tools provide some insights into clinicians’ attitudes about adoption, and the potential impact of these technologies on aspects of clinical care, our aim in this survey was to build on these findings to focus, more directly, on how psychiatrists envisage the impact of AI/ML technologies on key components of their job. Specifically, we aimed to determine whether psychiatrists believed their profession would be impacted by advances in AI/ML in the short term (25 years from now), and in the long-term, and to identify the possible positive and negative effects of any such developments. Finally, our aim was to expand on existing research by widening the sample of psychiatrists in our study by undertaking a global survey.

Methods

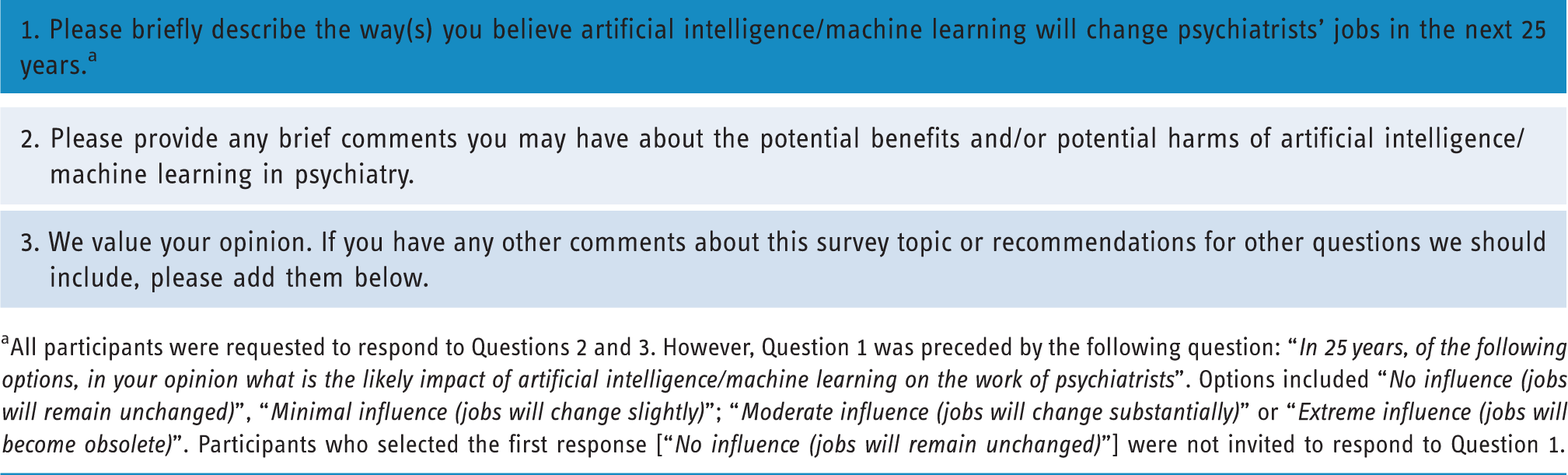

To address these objectives, we adapted a recently published mixed methods survey conducted among UK primary care physicians on the topic of disintermediation.35,36 We employed quantitative methods to investigate the global psychiatric community’s opinions about the potential of future technologies to replace key physician tasks in mental health care. However, in light of the limited research into psychiatrists’ views about the impact of AI/ML on their profession, and on potential harms and benefits of AI/ML, we incorporated 3 open-ended questions into the survey (see Table 1). These open-ended questions were aimed at acquiring more nuanced insights from our study population.

Table 1. Open comment questions embedded in survey.

aAll participants were requested to respond to Questions 2 and 3. However, Question 1 was preceded by the following question: “

Main survey

A complete description of the survey methods and quantitative results has been published previously. 40 In summary, we conducted an anonymous global Web-based survey of psychiatrists registered with Sermo, a secure online social network for physicians that is also used for conducting survey research. 41 Participants were randomly sampled from the membership of Sermo.org [23]. This is one of the largest online medical networks in the world, with 800,000 users from 150 countries across Europe, North and South America, Africa, and Asia, employed in 96 medical specialties. Users are registered and licensed physicians. Invitations were emailed and displayed on the Sermo.org home pages of randomly selected psychiatrists in May 2019, with quasi-stratification. To overcome limitations of small, national samples in existing surveys, our aim was to recruit one third of participants from the USA, one third from Europe, and one third from the rest of the world. As this was an exploratory study, we aimed to target a sample size of roughly 750 participants to approximate a previous survey of general practitioners’ views, on which the current project was based.35,36 The survey was closed with 791 respondents. This was an anonymous survey and an analysis of de-identified survey data was deemed exempt research by Duke University Medical Center Institutional Review Board in April 2019 (reference: Pro00102582). Invited participants were advised that their identity would not be disclosed to the research team, and all respondents gave informed consent before participating.
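The recruitment targets described above (roughly 750 participants, split into thirds across the USA, Europe, and the rest of the world) can be illustrated with a minimal sketch. This is not the procedure used by Sermo, which is not described in detail here; the member list, the `regional_quotas` and `quota_sample` helpers, and the fixed random seed are all hypothetical and serve only to show how equal regional quotas and random selection within each region might be combined.

```python
import random

TARGET_TOTAL = 750  # approximate target sample size stated in the text
REGIONS = ("USA", "Europe", "Rest of world")


def regional_quotas(total, regions):
    """Split a target sample size as evenly as possible across regions."""
    base, remainder = divmod(total, len(regions))
    return {
        region: base + (1 if index < remainder else 0)
        for index, region in enumerate(regions)
    }


def quota_sample(members, quotas, seed=0):
    """Randomly select members within each region, up to that region's quota.

    `members` is an iterable of (member_id, region) pairs.
    """
    rng = random.Random(seed)  # fixed seed so the illustration is repeatable
    pools = {}
    for member_id, region in members:
        pools.setdefault(region, []).append(member_id)
    return {
        region: rng.sample(pools.get(region, []), min(quota, len(pools.get(region, []))))
        for region, quota in quotas.items()
    }


quotas = regional_quotas(TARGET_TOTAL, REGIONS)

# Hypothetical membership list; real member data are not available here.
members = [(f"member-{i}", REGIONS[i % len(REGIONS)]) for i in range(3000)]
invited = quota_sample(members, quotas)

for region, ids in invited.items():
    print(region, len(ids))
```

Under these assumptions each region receives a quota of 250. The actual survey closed with 791 respondents, so the realized regional split need not match these targets exactly.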

The study team devised an original survey instrument specifically designed to investigate psychiatrists’ opinions about the impact of artificial intelligence on psychiatric practice. As outlined in the survey (online Appendix 1), participants were expressly requested to provide their opinions about “artificial intelligence and the future of psychiatric practice”. We avoided terms such as “algorithms” in favor of generic descriptors such as “machines” and “future technology.” This was in part to avoid any confusion among physicians unfamiliar with this terminology and to avert technical debates about the explanatory adequacy or specificity of terms of art, such as ‘machine learning’. We adapted a survey instrument from a previously published primary care survey, 35 to investigate whether psychiatrists believed 10 key aspects of their job could be fully replaced by future technology. To avoid ambiguities in how participants interpreted the question, we focused questions on the possibility of full replacement rather than partial replacement. In addition, Likert scales allowed respondents to provide discretionary views about the likelihood of replacement on each task. The 10 key tasks included:

Among the results of the closed-ended questions, the majority of the 791 psychiatrists surveyed (75%) believed that future technology could fully replace human psychiatrists in updating medical records, with around 1 in 2 (54%) believing that future technology could fully replace psychiatrists in synthesizing clinical information. 40 Only 17% of respondents believed that human psychiatrists could be fully replaced in the provision of empathic care, and overall only 4% believed that future technology would make their job obsolete. 40

Qualitative component

To maximize response rate for the qualitative component, as noted, the survey instrument included three open-ended questions that allowed participants to provide more nuanced feedback on the topic of disintermediation within the questionnaire (see Table 1). Comments not in English were translated by Sermo; this process was undertaken by experienced medical text translators, subject to further proofreading and in-house checks. Descriptive content analysis was used to investigate these responses.42,43 Responses were collated and imported into QCAmap (coUnity Software Development GmbH) for analysis. The comment transcripts were initially read numerous times by CB, CL, and MLC to achieve familiarization with the participant responses. Afterward, an inductive coding process was employed. This widely used method is considered an efficient methodology for qualitative data.44–46 A multistage analytic process was conducted: First, we defined the three open-ended questions as our main research questions. Second, we worked through the responses line by line. Brief descriptive labels (“codes”) were applied to each comment. Multiple codes were applied to comments with multiple meanings. Comments and codes were reviewed by CB, CL and MLC. Third, after working through a significant amount of text, CB, CL and MLC met to discuss coding decisions, and subsequent revisions were made. This process led to a refinement of codes. Finally, first-order codes were grouped into second-order categories based on the commonality of their meaning to provide a descriptive summary of the responses. 47 We followed the rules of summarizing qualitative content analysis for this step. 42
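The final step of this process, grouping first-order codes into second-order categories, can be pictured as a simple mapping. The sketch below is illustrative only and does not reproduce the QCAmap workflow: the category names are those reported in the Results and the code labels are borrowed from the theme headings, while the participant identifiers, the coded comments, and the `group_codes` helper are hypothetical.

```python
from collections import defaultdict

# First-order codes mapped to the second-order categories named in the Results.
# The code labels reuse theme headings from this paper for illustration.
CODE_TO_CATEGORY = {
    "Empathy": "patient-psychiatrist interactions",
    "The therapeutic relationship": "patient-psychiatrist interactions",
    "Medical error": "quality of patient medical care",
    "The status of the profession": "profession of psychiatry",
    "Access to care": "health systems",
    "Costs": "health systems",
}

# Hypothetical coded comments: (participant identifier, codes applied).
# A comment with multiple meanings carries multiple codes.
coded_comments = [
    (101, ["Empathy"]),
    (102, ["Medical error", "Costs"]),
    (103, ["Empathy", "The therapeutic relationship"]),
    (104, ["Access to care"]),
]


def group_codes(comments, code_to_category):
    """Group participant identifiers by second-order category and first-order code."""
    grouped = defaultdict(lambda: defaultdict(set))
    for participant, codes in comments:
        for code in codes:
            category = code_to_category.get(code, "uncategorized")
            grouped[category][code].add(participant)
    # Convert to plain dicts with sorted identifier lists for stable display.
    return {
        category: {code: sorted(ids) for code, ids in codes.items()}
        for category, codes in grouped.items()
    }


summary = group_codes(coded_comments, CODE_TO_CATEGORY)
for category, codes in summary.items():
    print(category, codes)
```

Keeping participant identifiers attached to each code mirrors the way illustrative comments are attributed to individual participants in the Results.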

Results

Overview

As outlined in the quantitative survey, 791 psychiatrists responded from 22 countries representing North America, South America, Europe, and Asia-Pacific. 40 Of the participants, 70% were male; and 61% were aged 45 or older (see Table 2). All respondents left comments (26,470 words) which were typically brief (1 phrase or 2 sentences).
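As a rough check on the description of comments as typically brief, the reported totals can be converted into average lengths. The sketch below uses only the figures given above (26,470 words, 791 respondents, three open-ended questions). The per-question figure assumes every respondent answered all three questions, which may not hold because Question 1 was conditional, so it should be read as an approximation rather than a reported result.

```python
TOTAL_WORDS = 26_470   # total words of comments reported in the text
RESPONDENTS = 791      # respondents, all of whom left comments
OPEN_QUESTIONS = 3     # open-ended questions in the survey

# Average number of words written per respondent across all comments.
words_per_respondent = TOTAL_WORDS / RESPONDENTS

# Approximate average per answer, assuming (hypothetically) that every
# respondent answered all three open-ended questions.
words_per_answer = words_per_respondent / OPEN_QUESTIONS

print(f"Words per respondent: {words_per_respondent:.1f}")
print(f"Words per answer (assuming 3 answers each): {words_per_answer:.1f}")
```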

Table 2. Respondent characteristics.

As a result of the iterative process of content analysis, four major categories were identified in relation to the impact of future technology on: (1) patient-psychiatrist interactions; (2) the quality of patient medical care; (3) the profession of psychiatry; and (4) health systems (see Figure 1). These categories were further subdivided into themes, which are described below with illustrative comments; numbers in parentheses are identifiers ascribing comments to individual participants.

Figure 1. Themes and sub-themes.

Impact of future technology on patient-psychiatrist interactions

A foremost concern about future technology on psychiatry was the perceived “

Empathy

Numerous comments reflected considerable skepticism that future technology could provide empathic care; an underlying assumption was this was necessarily a human capacity. Some participants were adamant about this; for example:

Although most responses were short – for example, “

Only a small minority of comments hinted at the benefits of machine technology in augmenting empathetic care including the detection of emotions:

The therapeutic relationship

Another dominant view concerned the broader implications of technology for the therapeutic relationship, with the majority of comments anticipating communication problems, lack of rapport, and potential harms to patients. Notably, the majority of responses assumed that future technology would entail loss of contact with clinicians and even cause harm. For example:

Some respondents indicated that patients would prefer to seek help from humans; for example:

Taking an opposing view, a few psychiatrists suggested that future technology might improve on human interactions; for example:

Telepsychiatry

Only a few participants predicted an increase in the use of telepsychiatry including the use of “

Trust, privacy, and confidentiality

Similarly, implications for the fiduciary doctor-patient relationship also received very limited attention. However, some comments suggested that patients would not find technology acceptable in their care leading to lower rates of satisfaction, resistance, or even refusal to be treated. For example:

For others, trustful interactions could be vulnerable to exploitation or manipulation from patients, including faking illnesses; for example:

However, one psychiatrist took an opposing and more optimistic view, responding that patients may exhibit greater trust in technology than in clinicians:

Data safety, misuse of data, and questions of privacy received only a small number of truncated comments; for example:

Only one participant suggested that mental health patients may be at greater risk of harm from loss of confidentiality with new technologies:

Impact of future technology on the quality of patient medical care

Implications of future technology for patient care received considerable attention, and a mixture of opinions was offered about potential benefits and harms.

Medical error

Many respondents suggested that technology would reduce errors or improve accuracy in clinical decisions – including in diagnostics and treatment decisions. For example:

A few comments suggested that technology could improve care by identifying drug-drug interactions or potential contraindications to treatment; for example:

More broadly, a minority of comments were very enthusiastic about the role of technology in patient care; for example:

In contrast to these optimistic responses, however, a considerable number of comments suggested that future technology would lead to an increase in medical error. Many of these comments specifically referred to an increased risk of diagnostic error; for example:

Going further, some respondents were adamant about the lack of potential benefits of technology; for example:

Finally, opposing these polarized perspectives, some psychiatrists admitted that they were unfamiliar with the topic of artificial intelligence, and refrained from taking a position; for example:

Avoiding bias in clinical judgments

Many participants anticipated that artificial intelligence would be “

Improved detection and monitoring of mental health

A number of respondents commented on the possibility for improved preventive mental health including earlier diagnosis and increased screening

Other respondents felt technology might facilitate the monitoring of treatment regimens; for example:

On the other hand, some comments were more doubtful that technology might aid preventive services; for example:

Impact of future technology on the profession

Participants expressed a broad range of opinions about the impact of future technology on the profession: from outright replacement of psychiatrists to displacement of key functions of practice, and from skepticism about any change to uncertainty about the future. Responses also indicated a wide array of attitudes about the potential influence on the field, from very negative to very positive, with many psychiatrists displaying neutral perspectives.

The status of the profession

A common perspective was that specific aspects of the job would gradually be replaced by artificial intelligence with some psychiatrists predicting that this would lead to outright elimination; for example:

Some participants viewed change as a threat to the profession; for example:

However, a few disagreed; for example:

Facilitation of work activity

Multiple comments predicted that future technology could facilitate the work of psychiatrists. Although most responses were rather short – for example, “

A considerable number of comments indicated psychiatrists will need to control and verify the technology-based results since machine recommendations would likely be error-prone; for example:

Furthermore, multiple comments suggested that psychiatrists and future technology might have a “

Many comments specified

However, not all participants agreed: a few believed that technology would lead to “

A few psychiatrists anticipated that future technology might play an important role in data-gathering; however, comments were typically truncated. For example:

Some commented on perceived improvements with patient history-taking and the establishment of standardized tests and questionnaires; for example:

A related commonly perceived benefit was the provision of greater “

Many comments indicated a role for “

With regard to decisions about treatment course, many respondents stressed that future technology will influence various areas, such as the formulation of the treatment plan, and medication decisions; for example:

In contrast, only a minority of physicians suggested that future technology will assist in determining the “

Limited or negative impact on work activity

Many responses strongly suggested a risk of “

A minority of comments also suggested that future technology might result in a reduction of psychiatric skills and that psychiatrists may lose their “

Going further, numerous comments were associated with considerable skepticism that future technology might ever replace the “

More strongly, some psychiatrists surveyed stated that they do not expect future technology to impact the general professional status; for example:

Finally, multiple comments expressed uncertainties about the impact of technology on the status of the profession, with many psychiatrists admitting they were “

Consequences of future technology at a systems level

Comments encompassed a number of themes related to the impact of future technology on psychiatry at a systems level. The majority of these responses tended to be optimistic, with comments focusing on greater access to psychiatric care; lower costs; and improved efficiencies.

Access to care

Many participants described ways in which technology could increase access to care, particularly in remote or underserviced settings; for example:

Costs

Some psychiatrists speculated that technology could impact the cost of care. Many of these comments mentioned the potential benefit to health care organizations and insurance companies; for example:

Multiple participants commented on the potential for more efficient provision of care; for example:

Scientific innovation and knowledge translation

Only a few comments highlighted the potential for technology to stimulate scientific advancement, such as the facilitation of knowledge translation, increased knowledge exchange, or more specifically the identification of new biological markers or neuroimaging techniques:

Discussion

Principal findings

This extensive qualitative study provides cross-cultural insight into the views of practicing psychiatrists about the potential influence of future technology on psychiatric care (see Box 1). A dominant perspective was that machines would never be able to replace relational aspects of psychiatric care, including empathy and the development of a therapeutic alliance with patients. For the majority of psychiatrists these facets of care were viewed as essentially human capacities.

Box 1. Key questions and findings.

Psychiatrists expressed divergent views about the influence of future technology on the status of the profession and the quality of medical care. At one extreme, some psychiatrists considered outright replacement of the profession by AI likely; yet others believed technology would bring no changes to psychiatric services. Many speculated that AI would fully undertake administrative tasks such as documentation; the vast majority of participants predicted that ‘man and machine’ would collaborate to undertake key aspects of psychiatric care such as diagnostics and treatment decisions. Participants were split over whether AI would ultimately reduce medical error, or improve diagnostic and treatment decisions. Although many believed that AI could augment doctors’ roles, they were skeptical that technology would ever be able to fully undertake medical decisions without human input. For many participants diagnostics and other clinical decisions were quintessentially human skills. Relatedly, the risk that overdependence on technology would drive medical error was a common concern.

More positively, many respondents felt technology would be fairer and less biased than humans in reaching clinical decisions. Similarly, participants expressed optimism that technology would play a key role in undertaking administrative duties, such as documentation. Other expected benefits from future technology included improved access to psychiatric care, reduced costs, and increased efficiencies in healthcare systems.

Technology and human interactions

Although psychiatrists, like many informaticians, were optimistic that technology would increase access to psychiatric care, particularly among underserved populations, 48 they were cynical that technological advancements could fully replace the provision of human-mediated empathy and relational aspects of care. Interestingly, very few psychiatrists discussed telepsychiatry despite its potential to increase patient access and adherence to care; however, this may have been due to the emphasis on machine learning and artificial intelligence. Technical quality and issues of privacy and confidentiality remain key drawbacks with this medium (see:

The scope of AI in psychiatry

Responses revealed that psychiatrists have myriad, often disparate views about the value of artificial intelligence for the future of their profession. Notwithstanding the wide spectrum of opinion, and similar to the views of many experts, a dominant, overarching theme was speculation about a hybrid collaboration between ‘man and machine’ in undertaking psychiatric care.18,25,26 Like informaticians, in particular, many participants highlighted the potential for AI in risk detection and preventative care. 19 More generally, psychiatrists – like informaticians – were optimistic about the benefits of AI in augmenting patient care, yet ergonomic and human factors remain ongoing issues in the design of technology. Without due attention to “alert fatigue” and clinical workflow, it is unclear whether AI applications will realize their anticipated potential in improving clinical accuracy, in strengthening healthcare efficiencies or in reducing costs.54,55

Although a considerable number of participants conceived of clinical decisions as essentially, and ineffably, a human “art”, biomedical informaticians argue that the ability to mine large scale health data for patterns in diagnosis and behavior is where machine learning presents unprecedented potential to disrupt diagnostic, prognostic, and treatment precision, yielding insights about hitherto undetected subtypes of diseases.15,16,18,23 Against the promise of pattern detection mediated by machine learning, many informaticians acknowledge that current AI is far from sufficient to fully undertake diagnostic decisions unaided, and significant breakthroughs will be necessary if machines are to avoid pitfalls in reasoning, and demonstrate the causal and counterfactual reasoning capacities necessary to reach accurate medical decisions.54,56 Importantly, however, and in contrast to many of the physicians surveyed who considered clinical reasoning to be, in essence, a necessarily human capacity, leading AI experts assume that one cannot rule out, a priori, the possibility that technology may one day be fully capable of fulfilling these key medical tasks.

Technology and data-collection

Disparities between psychiatrists and AI experts were apparent in respect of some key developments and debates about the use of technologies in mental health. For example, only a minority of psychiatrists discussed – whether positively or negatively – the role of smart phones in data gathering. So far, however, encouraging evidence demonstrates that utilizing customized smart phone apps with patient health questionnaires can help to capture patients’ symptoms in real-time, allowing more sensitive diagnostic monitoring.57,58 Scarce reflection on the concept of digital phenotyping and the use of diagnostic and triaging apps among respondents contrasts with the predictions of biomedical informaticians who argue that apps and mobile technologies will play an increasing role in accumulating salient personal health information. Wearable devices, it is argued, will help to facilitate real-time monitoring of signs and symptoms, improving accuracy and precision in information gathering, and helping to avoid barriers associated with routine check-ups, such as missed appointments, personnel shortages, and costs on mental health services.59,60

Patients’ preferences and mobile health

Some psychiatrists argued that interfacing with technology would not be acceptable to many patients who would prefer to receive care from doctors. As noted, previous survey research in mobile health (mHealth) undermines the certitude of these claims; for example, in a recent US survey of 457 adults identifying with schizophrenia and schizoaffective disorders, 42% reported “often” or “very often” listening to music or audio files to help block or manage voices; 38% used calendar functions to manage symptoms, or set alarms or reminders; 25% used technology to develop relationships with other individuals who have a lived experience related to mental illness; and 23% used technology to identify coping strategies. 32 Indeed, previously it was assumed that severity of mental health symptoms would pose a barrier to interest in mHealth 61 ; however, studies show that patients with serious conditions, including psychosis, indicate high levels of interest in the use of mobile applications to manage and track their symptoms and illness.31,32,62 As Torous et al argue, it may be that patients are more comfortable using mobile technology to report and monitor symptoms than earlier methods such as sending text messages to clinicians, and that such a medium reduces stigma. 62 Relatedly, the co-production of medical notes – for example, patients entering information via semi-structured online questions prior to medical appointments – may also play a role in reducing barriers to help-seeking. 63 Although research is ongoing, initial disclosures of symptoms via online patient portals may mitigate stigmatization and feelings of embarrassment in initiating conversations about mental health issues with physicians. 64 Despite patient interest and evidence of high adoption rates for health and wellness apps, there remain well documented problems with drop off rates, and with how to design for continuance – issues that surveyed psychiatrists did not directly discuss.65,66

Regulation of mHealth

Conspicuously, participants provided scarce commentary about the regulatory ramifications of artificial intelligence for patient care. As noted, over 10,000 apps related to mental health are available to download, yet most have not been adequately investigated. 17 While recent meta-analyses and systematic reviews indicate that a number of safe, evidence-based apps exist for monitoring symptoms of depression and schizophrenia, and for reducing symptoms of anxiety, patients and clinicians lack adequate guidelines to facilitate recommendations.67–69 On the other hand, many psychiatrists expressed enthusiasm about the potential of future technology to provide more objective and less biased clinical judgments. This optimism appeared to overlook concerns associated with “algorithmic biases” – the risk of discrimination against patients, associated with inferior design and implementation of machine learning algorithms.70,71 As AI experts and ethicists warn, bias can become baked into algorithms when demographic groups (for example, along the lines of ethnicity, gender, or age) are underrepresented in training phases of machine learning. Without adequate regulatory standards in the design and ongoing evaluation of algorithms, medical decisions informed by machine learning may exacerbate rather than diminish discrimination arising in clinical contexts.

The US Food and Drug Administration (FDA) has so far adopted a deliberately cautious approach to clarifying medical software regulations. 72 Some tech companies have emerged as “default arbiters and agents responsible for releasing (and on some occasions, withdrawing) applications”. 17 As medical legal experts warn, allowing unregulated market forces to determine ‘kitemarks’ of medical standards is inadequate to protect patient health. 73

Ethical issues

Related to regulatory issues, few comments – only nine in total – weighed in on ethical issues related to protections for sensitive personal data. Loss of patient data and privacy remain serious concerns for mobile applications and telepsychiatry. In 2018 the European Union (EU) enacted its ‘General Data Protection Regulation’ (GDPR) aimed at ensuring citizens have control of their data, and provide consent for the utilization of their sensitive personal information. The US has considerably weaker data privacy rules, and while similar legislation to the GDPR is mooted to come into effect in California in January 2020, no comparable laws have been enacted at a federal level in the USA nor is there legislative enthusiasm to do so. Given the gravity of ethical issues surrounding adequate oversight of patient data gathering from apps and mobile technologies, including how they might impact doctor-patient relationships and adequate patient care, and the recent media coverage that these issues have prompted, it was conspicuous that privacy and confidentiality considerations received scarce commentary from surveyed psychiatrists.72,74 Similarly, while many psychiatrists believed future technology would be a boon to patient access, issues of justice related to the ‘digital divide’ – between those who have ready access to the internet and mobile devices, and those who do not – received no attention.

Strengths and limitations

This survey initiated an original qualitative exploration of psychiatrists’ views about how AI/ML will impact their profession. The themes support and expand on findings of an earlier quantitative survey by providing a more refined perspective of psychiatrists’ opinions about AI and the future of their profession. Utilizing the Sermo platform enabled us to gain rapid responses from verified and licensed physicians from across the world, and this survey benefits from a relatively large sample size of participants working in different countries across a broad spectrum of practice settings. The diversity of respondents and the unusually high response rate for questions requesting comments are major strengths of the survey.

The study has a number of limitations. Comments were often brief, and because of the restrictions of online surveys it was not possible to obtain a more nuanced understanding of participants’ views. Therefore, although a rich and diverse range of opinions was gathered, further qualitative work is warranted to obtain more fine-grained analysis of physicians’ views about the impact of AI/ML on the practice of psychiatry and on patient care. Furthermore, we did not gather information on physicians’ level of knowledge of, or exposure to, the topic of AI/ML in medicine, limiting inferences about awareness and the depth of participants’ reflections. Notably, some participants explicitly expressed uncertainty about whether AI could benefit medical judgment, with some admitting they had limited familiarity with the field. The extent to which participants’ views are comparable to laypersons’ opinions about AI in psychiatry is unknown. Finally, the coronavirus crisis has witnessed an abrupt adoption of telemedicine, and new advances in triaging tools. Conceivably, had the survey been administered after this period, psychiatrists’ responses might have been different. 75 We suggest that further in-depth qualitative interviews or focus groups would help to facilitate deeper analysis of psychiatrists’ perspectives and their understanding of AI and its impact on psychiatry.

Conclusions

This study provides a foundational exploration of psychiatrists’ views about the future of their profession. Perceived benefits and limitations of future technology in psychiatric practice, and the future status of the profession, have been elucidated. A variety of perspectives were expressed reflecting a wide range of opinions. Overwhelmingly, participants were skeptical about the role of technology in providing empathetic care in patient interactions. Although some participants expressed anxiety about the future of their job, viewing technology as a threat to the status of their profession, the dominant perspective was a prediction that human medics and future technology would work together. However, participants were divided over whether this collaboration might ultimately improve or harm clinical decisions including diagnostics and treatment recommendations, and overreliance on machine learning was a recurrent theme. Similar to biomedical informaticians, participants were also hopeful that technology might improve care at a systems level, improving access, increasing efficiencies, and lowering healthcare costs.

While psychiatrists’ opinions often mirrored the predictions of AI experts, results also revealed worrying omissions in respondents’ comments. In light of high levels of patient interest in mental health apps, the effectiveness, reliability, and safety of machine learning technologies present serious ethical, legal, and regulatory considerations that require the sustained engagement of the psychiatric community.72,73,76,77 So far, the efficacy and safety of the overwhelming majority of downloadable mHealth apps have yet to be demonstrated. 78 Moreover, in contrast to the views of many leading informaticians, psychiatrists were often enthusiastic that technology would reduce biases in decision-making; however, without further regulatory attention to standards of design within machine learning, it is unclear that algorithms will help to redress rather than deepen healthcare disparities. Against these considerations, steadfast leadership is required from the psychiatric community to help patients navigate mobile health apps, and to advocate for guidelines with respect to digital tools, to ensure that current mHealth as well as emerging technologies do not jeopardize standards of safety and trust in patient care.

Finally, given the sheer breadth of opinion, and oversights, 79 it is conceivable that many practitioners, for understandable reasons including work burdens and time constraints, are disengaged from the literature on healthcare AI.25,36 Some respondents admitted that they did not know much about the topic, and with more exposure to this field, psychiatrists’ views might have been different. Recent physician surveys suggest medical education on health technology “leaves much room for improvement”. 79 For example, an extensive cross-sectional survey of EU medical schools found that fewer than a third (90/302, 30%) offered any kind of health information technology training as part of medical degree courses. Similarly, a recent survey of physicians in South Korea reported that only 6% (40/669) of those surveyed described “good familiarity with AI”. 80 While gaps in knowledge are understandable given the volume of medical course curricula and the time pressures of clinical practice, we conclude that the medical community must do more to raise awareness of AI among current and future physicians. Lacking adequate education about machine learning technology and its potential to impact the lives of patients, psychiatrists will be ill-equipped to steer mental health care in the right direction.

Supplemental Material

sj-pdf-1-dhj-10.1177_2055207620968355 - Supplemental material for Artificial intelligence and the future of psychiatry: Qualitative findings from a global physician survey

Supplemental material, sj-pdf-1-dhj-10.1177_2055207620968355 for Artificial intelligence and the future of psychiatry: Qualitative findings from a global physician survey by C Blease, C Locher, M Leon-Carlyle and M Doraiswamy in Digital Health

Footnotes

Acknowledgements

Contributorship

Conceived & initiated project: CB, MD. Analyzed results: CB, CL, MLC. Wrote first draft: CB. Contributed to revisions: CB, CL, MLC, MD.

Declaration of Conflicting Interests

The author(s) declared the following potential conflicts of interest with respect to the research, authorship, and/or publication of this article: Doraiswamy has received research grants from and/or served as an advisor or board member to government agencies, technology and healthcare businesses, and advocacy groups for other projects in this field.

Ethical approval

This study was deemed exempt research by Duke University Medical Center Institutional Review Board on 22 April 2019 (reference number: Pro00102582).

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: Blease was supported by an Irish Research Council-Marie Skłodowska-Curie Fellowship. Locher was funded by a Swiss National Science Foundation grant (P400PS_180730).

Guarantor

CB is the guarantor of this article.

Peer review

Dr. George Despotou, WMG has reviewed this manuscript.

Supplemental Material

Supplemental material for this article is available online.

References
