Abstract

Background:

Quality metrics or indicators help guide quality improvement work by reporting on measurable aspects of health care upon which improvement efforts can focus. For recipients of in-center hemodialysis (ICHD) in Canada, it is unclear what ICHD quality indicators exist and whether they adequately cover different domains of health care quality.

Objectives:

To identify and evaluate current Canadian ICHD quality metrics to document a starting point for future collaborations and standardization of quality improvement in Canada.

Design:

Environmental scan of quality metrics in ICHD, and subsequent indicator evaluation using a modified Delphi approach.

Setting:

Canadian ICHD units.

Participants:

Sixteen-member pan-Canadian working group with expertise in ICHD and quality improvement.

Measurements:

We classified the existing indicators based on the Institute of Medicine (IOM) and Donabedian frameworks.

Methods:

Each metric was rated by a 5-person subcommittee using a modified Delphi approach based on the American College of Physicians/Agency for Healthcare Research and Quality criteria. We shared these consensus ratings with the entire 16-member panel for additional comments.

Results:

We identified 27 metrics that are tracked across 8 provinces, with only 9 (33%) tracked by multiple provinces (ie, more than 1 province). We rated 9 metrics (33%) as “necessary” to distinguish high-quality from low-quality care, of which only 2 were tracked by multiple provinces (proportion of patients by primary access and rate of vascular access-related bloodstream infections). Most (16/27, 59%) indicators assessed the IOM domains of safe or effective care, and none of the “necessary” indicators measured the IOM domains of timely, patient-centered, or equitable care.

Limitations:

The environmental scan is a nonexhaustive list of quality indicators in Canada. The panel also lacked representation from patients, administrators, and allied health professionals, with more representation from academic sites.

Conclusions:

Quality indicators in Canada mainly focus on safe and effective care, with little provincial overlap. These results highlight current gaps in quality of care measurement for ICHD, and this initial work should provide programs with a starting point to combine highly rated indicators with newly developed indicators into a concise balanced scorecard that supports quality improvement initiatives across all aspects of ICHD care.

Trial Registration:

not applicable.

Introduction

Each year more than 16 000 Canadians with end-stage kidney disease receive in-center hemodialysis (ICHD), representing three-quarters of all dialysis recipients. 1 People who receive ICHD have high health care resource use, frequent hospitalizations, and poor health-related quality of life.2-4 Although many of these poor outcomes, and much of the high resource use, may be related to comorbid medical conditions, a proportion may be related to system-level factors in the provision of hemodialysis that are amenable to quality improvement efforts.

Quality metrics or indicators help guide quality improvement work by reporting on measurable aspects of health care upon which improvement efforts can focus. 5 Over the last decade, quality metrics have been increasingly used to monitor health system performance through comparative reporting and accountability. 6 In some jurisdictions, certain metrics have been linked with remuneration to incentivize consistent performance. 7 Accordingly, quality metrics should be carefully developed, considering whether they are evidence-based, precisely defined, easily and reliably measured, usable for quality improvement activities, and target important improvements in outcomes.6,8 They should also be selected so as to minimize unintended consequences, 6 thereby increasing the value of health care delivery without compromising care. Finally, they should cover different domains and components of health care quality, including the Institute of Medicine (IOM) domains of safe (free from harm), effective (using best available evidence), efficient (limits waste), timely (available when needed), patient-centered (focused on the patient), and equitable (equally available) care, 9 and the Donabedian components of structures (the setting in which care occurs), processes (the care that is done to the patient), and outcomes (how the care ultimately affects the patient). 10

Despite the increased attention devoted to quality indicators and quality improvement in nephrology,11,12 the type and nature of quality metrics currently used in Canadian hemodialysis units have not been documented. Our objectives were to identify, classify, and evaluate ICHD quality metrics that are presently being tracked in Canada, so as to provide a collated resource of existing indicators and inform strategies that may improve future metric development and reporting across Canada.

Methods

Environmental Scan and Indicator Categorization

We performed an environmental scan to identify metrics that are being tracked across provinces (including British Columbia, Alberta, Saskatchewan, Manitoba, Quebec, Ontario, and the Atlantic Provinces) by reaching out to nephrologists at hemodialysis units located in academic teaching hospitals and select community centers via email and phone. We also collected publicly available indicators from provincial and local nephrology programs. We asked nephrologists what metrics related to ICHD are mandated and/or reported across their province, as well as to describe any local quality improvement projects. We stopped the environmental scan once we achieved representation from all the aforementioned provinces/regions.

We combined similar indicators into a single measure and characterized each indicator according to the IOM and Donabedian frameworks of health care quality.9,10 We also included balancing indicators so as to capture measures that look at potential adverse effects of ICHD (eg, infectious complications). 13

Indicator Evaluation

We rated the identified indicators using a modified version of the American College of Physicians/Agency for Healthcare Research and Quality performance measure review criteria, which included the following dimensions (Supplemental Table 1) 14 :

Importance: The metric will lead to measurable and meaningful improvement or there is a clear performance gap;

Evidence-base: The metric is based on high-quality and high-quantity evidence;

Measure specifications: The metric can be clearly defined (ie, numerator and denominator) and reliably captured;

Feasibility and applicability: The metric is under the influence of health care providers and/or the health care system, with data collection and improvement activities both feasible and acceptable.

We rated metrics on a 9-point scale where 1 to 3 indicated “does not meet criteria,” 4 to 6 “meets some criteria,” and 7 to 9 “meets criteria.” Informed by these domain ratings, each indicator then received a final global rating reflecting its overall ability to distinguish good quality from poor quality. 8 We considered indicators as “necessary” if the median global rating was 7, 8, or 9 and there was no disagreement by any member. We considered indicators as “unnecessary” if the median global rating was 1, 2, or 3 and there was no disagreement by any member. We considered all other indicators as “supplemental.”
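The classification rule above can be sketched in Python. This is a minimal illustration under a stated assumption: the article does not define what counts as “disagreement,” so the function hypothetically treats any single global rating in the opposite extreme band (1 to 3 versus 7 to 9) as disagreement. The function name `classify_indicator` is illustrative and not part of the study.

```python
from statistics import median


def classify_indicator(global_ratings: list[int]) -> str:
    """Classify one indicator from its panelists' 1-9 global ratings.

    ASSUMPTION (not defined in the article): a member "disagrees" if their
    rating lies in the opposite extreme band (1-3 vs 7-9) from the median.
    """
    if not global_ratings:
        raise ValueError("at least one rating is required")
    if any(not 1 <= r <= 9 for r in global_ratings):
        raise ValueError("ratings must be on the 1-9 scale")

    med = median(global_ratings)
    # "Necessary": median in 7-9 and nobody rated the indicator 1-3.
    if med >= 7 and not any(r <= 3 for r in global_ratings):
        return "necessary"
    # "Unnecessary": median in 1-3 and nobody rated the indicator 7-9.
    if med <= 3 and not any(r >= 7 for r in global_ratings):
        return "unnecessary"
    # Everything else, including high/low medians with disagreement.
    return "supplemental"


if __name__ == "__main__":
    print(classify_indicator([7, 8, 8, 9, 7]))  # necessary
    print(classify_indicator([7, 8, 9, 8, 2]))  # supplemental
```

Under this assumption, a median of 8 with one panelist rating 2 falls back to “supplemental.” A stricter or looser definition of disagreement would change only the two `any(...)` checks.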

Modified Delphi Process

We used a modified Delphi approach to evaluate the strengths and weaknesses of the metrics identified by the environmental scan using the criteria described above. This process has been used previously to rate performance indicators.15-21 In short, the ICHD subcommittee of 5 members (the authorship group) individually reviewed the quality indicators identified in the environmental scan in advance of a teleconference. Through group discussion, panelists arrived at initial group ratings within each of the American College of Physicians/Agency for Healthcare Research and Quality dimensions. We shared these initial group ratings with each ICHD subcommittee member to compare with their individual rating, with feedback provided as needed. Any disagreements prompted further ICHD subcommittee discussion until consensus was reached. We then shared these consensus ratings with the entire 16-member volunteer committee (representation from 7 of 10 provinces and most possessing advanced training in quality improvement), with further discussion of any ratings that differed by ≥3 points. The final ratings were approved by the full 16-member committee prior to publication.
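The committee-level check for ratings that differed by ≥3 points can be expressed as a short filter. This sketch assumes that each committee member's rating is compared against the subcommittee's consensus rating for the same indicator; the article does not state the comparison reference, and the indicator and member names below are hypothetical.

```python
def ratings_to_discuss(
    consensus: dict[str, int],
    committee_ratings: dict[str, dict[str, int]],
    threshold: int = 3,
) -> list[tuple[str, str, int, int]]:
    """Flag (indicator, member, consensus, member rating) for discussion.

    ASSUMPTION: a rating "differs" when it is at least `threshold` points
    away from the subcommittee consensus for that indicator.
    """
    flagged = []
    for indicator, by_member in committee_ratings.items():
        reference = consensus[indicator]  # KeyError if no consensus exists
        for member, score in by_member.items():
            if abs(score - reference) >= threshold:
                flagged.append((indicator, member, reference, score))
    return flagged


if __name__ == "__main__":
    # Hypothetical indicators and members, for illustration only.
    consensus = {"indicator_A": 8, "indicator_B": 5}
    committee = {
        "indicator_A": {"member_1": 8, "member_2": 5},
        "indicator_B": {"member_1": 6, "member_2": 2},
    }
    for row in ratings_to_discuss(consensus, committee):
        print(row)
```

Keeping the threshold as a parameter makes it easy to see how many items a looser or stricter discussion trigger would surface.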

Ethical Considerations

Formal research ethics board review was not required by Queen’s University based on the Tri-Council Policy Statement for ethical human research, as the focus of the study involved quality indicators and not human participants.

Results

Our environmental scan identified 27 ICHD quality of care metrics across 8 provinces in Canada (Table 1). The IOM domains covered included safe (n = 9, 33%), effective (n = 7, 26%), patient-centered (n = 5, 19%), efficient (n = 3, 11%), timely (n = 2, 7%), and equitable (n = 1, 4%). Donabedian categories included outcome (n = 11, 41%), process (n = 8, 30%), balancing (n = 5, 19%), and structure (n = 3, 11%).
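As a worked check of the percentages above, the counts reported for each framework can be converted to rounded shares of the 27 indicators. The counts come from the text; the helper `percent_breakdown` is illustrative.

```python
# Indicator counts per category, as reported for the 27 scanned metrics.
IOM_COUNTS = {"safe": 9, "effective": 7, "patient-centered": 5,
              "efficient": 3, "timely": 2, "equitable": 1}
DONABEDIAN_COUNTS = {"outcome": 11, "process": 8, "balancing": 5,
                     "structure": 3}


def percent_breakdown(counts: dict[str, int]) -> dict[str, int]:
    """Rounded percentage of the total for each category."""
    total = sum(counts.values())
    return {name: round(100 * n / total) for name, n in counts.items()}


if __name__ == "__main__":
    print(percent_breakdown(IOM_COUNTS))
    print(percent_breakdown(DONABEDIAN_COUNTS))
```

Rounded shares need not sum to exactly 100: the IOM shares sum to 100, whereas the Donabedian shares (41 + 30 + 19 + 11) sum to 101.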

Environmental Scan of Current Canadian Nephrology Quality Indicators.

Note. The denominator is 8 provinces and the table indicates the number of provinces currently using the listed indicator. CKD-MBD = Chronic kidney disease related mineral and bone disorder; ACE = Angiotensin Converting Enzyme; ARB = Angiotensin 2 Receptor Blocker.

Of these 27 indicators, only 9 metrics were tracked in multiple provinces (more than 1 province) and only 4 metrics were tracked in 3 or more provinces (proportion of patients by primary access, proportion of incident patients with goals of care documentation, achievement of anemia targets, and rate of vascular access–related bloodstream infections). None of the metrics measured in multiple provinces assessed efficient or equitable care.

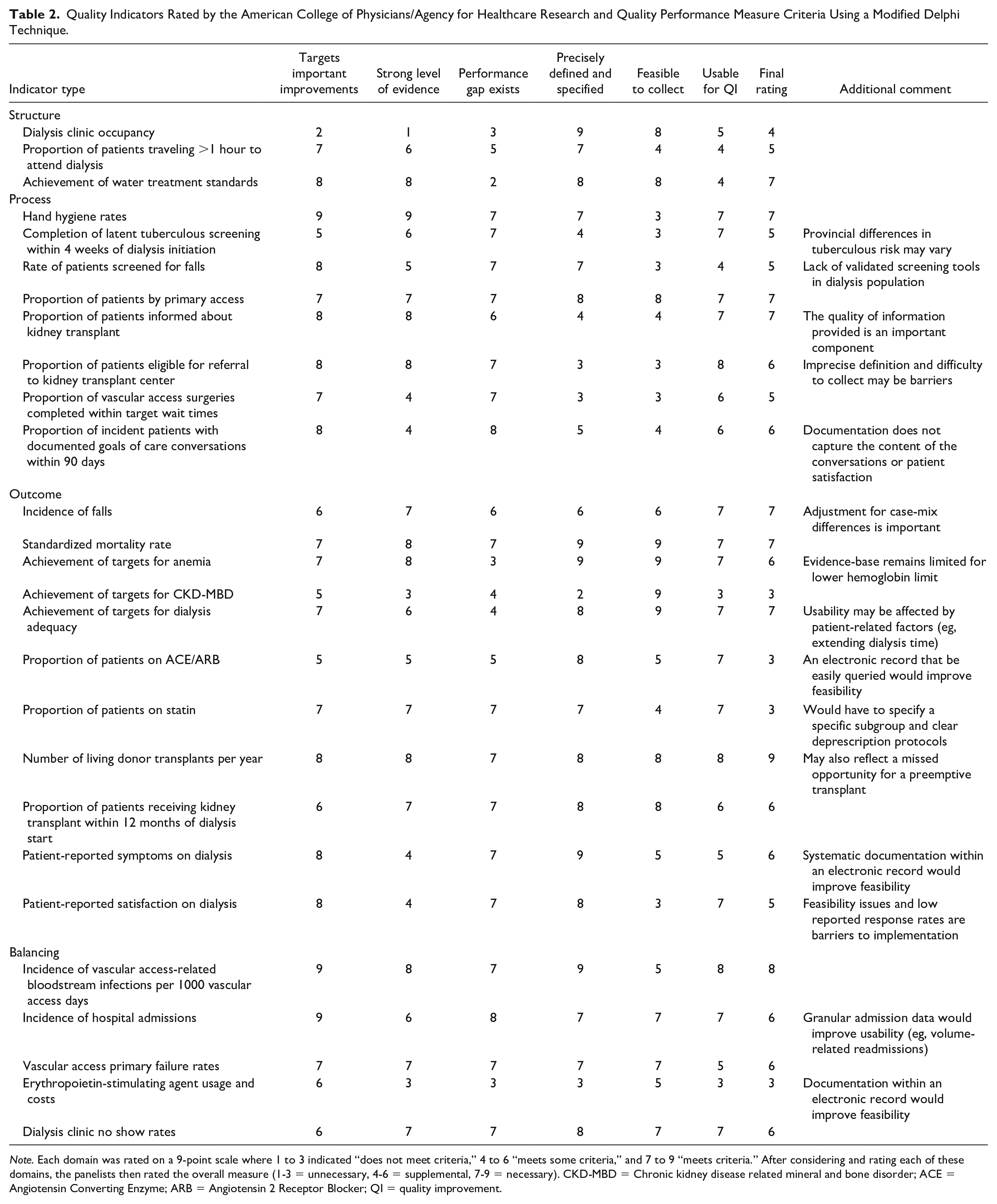

With respect to overall ability to distinguish good quality from poor quality (ie, necessary versus unnecessary for improvement), we rated 9 (33%) indicators as “necessary,” 14 (52%) as “supplemental,” and 4 (15%) as “unnecessary” (Table 2). The 4 “unnecessary” indicators related to laboratory parameters (CKD-MBD targets) or use of medications (ACE/ARB, statins, and erythropoietin-stimulating agents). The 9 “necessary” indicators focused on safe (n = 5, 19% of all 27 indicators), effective (n = 3, 11%), and efficient (n = 1, 4%) care; none assessed timely, patient-centered, or equitable care. Of the 9 indicators measured by multiple provinces, the panel rated only 2 as “necessary”: the proportion of patients by primary access and the rate of vascular access–related bloodstream infections.
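The rating distribution and the domain gaps among the “necessary” indicators can be checked the same way. The counts below are taken from the text and the helper names are illustrative; computing 14 of 27 gives about 52%, and listing the IOM domains with no “necessary” indicator reproduces the gaps described.

```python
# Global-rating categories and IOM domains of the "necessary" indicators,
# as reported in the Results text.
RATING_COUNTS = {"necessary": 9, "supplemental": 14, "unnecessary": 4}
NECESSARY_BY_DOMAIN = {"safe": 5, "effective": 3, "efficient": 1}
IOM_DOMAINS = ("safe", "effective", "efficient", "timely",
               "patient-centered", "equitable")


def shares(counts: dict[str, int]) -> dict[str, int]:
    """Rounded percentage of the total for each category."""
    total = sum(counts.values())
    return {name: round(100 * n / total) for name, n in counts.items()}


def uncovered_domains(counts_by_domain: dict[str, int],
                      domains: tuple[str, ...] = IOM_DOMAINS) -> list[str]:
    """IOM domains with no indicator, in the order given by `domains`."""
    return [d for d in domains if counts_by_domain.get(d, 0) == 0]


if __name__ == "__main__":
    print(shares(RATING_COUNTS))
    print(uncovered_domains(NECESSARY_BY_DOMAIN))
```

The same gap check could be run on any candidate scorecard to confirm that every IOM domain is represented before it is adopted.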

Quality Indicators Rated by the American College of Physicians/Agency for Healthcare Research and Quality Performance Measure Criteria Using a Modified Delphi Technique.

Note. Each domain was rated on a 9-point scale where 1 to 3 indicated “does not meet criteria,” 4 to 6 “meets some criteria,” and 7 to 9 “meets criteria.” After considering and rating each of these domains, the panelists then rated the overall measure (1-3 = unnecessary, 4-6 = supplemental, 7-9 = necessary). CKD-MBD = Chronic kidney disease related mineral and bone disorder; ACE = Angiotensin Converting Enzyme; ARB = Angiotensin 2 Receptor Blocker; QI = quality improvement.

Among the 21 local hemodialysis programs contacted, most quality improvement initiatives beyond those mandated provincially aligned with the “necessary” metrics of standardized mortality rate (eg, hospitalizations, 30-day rehospitalizations, vaccinations, iatrogenic infections) or dialysis adequacy (eg, target weight achievement, rate of ultrafiltration). None focused on efficient, timely, patient-centered, or equitable care, except for local initiatives to improve health literacy.

Four common themes emerged during the rating process. First, the strength of evidence for most indicators was moderate to strong, with 14 indicators receiving ratings of 7 to 9 and only 3 indicators receiving ratings of 1 to 3. Second, most indicators could be precisely defined and specified, but definitions often varied between provinces. For example, some provinces measure “% of vascular access surgeries completed within target wait times,” where the targets varied between provinces. Third, feasibility of data collection varied across the indicators due to differing provincial infrastructure and electronic medical record (EMR) capabilities; this was particularly problematic for measures that involved medications or were patient-reported. Finally, 8 metrics related to vascular access and transplantation, which may not be completely attributable to ICHD units (eg, % of vascular access surgeries completed within target wait times). The same observations apply to indicators that involve primary care and other specialties, such as falls, goals of care, and hospitalizations.
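The comparability problem with wait-time indicators can be made concrete with a numerator/denominator sketch. The wait times and the 30-day and 60-day targets below are hypothetical values chosen for illustration, not study data or actual provincial targets; the point is that identical surgical activity yields different indicator values under different targets.

```python
def pct_within_target(wait_days: list[int], target_days: int) -> float:
    """% of vascular access surgeries completed within the target wait.

    Numerator: surgeries with wait <= target_days.
    Denominator: all surgeries in the reporting period.
    """
    if not wait_days:
        raise ValueError("no surgeries in the reporting period")
    within = sum(1 for days in wait_days if days <= target_days)
    return 100 * within / len(wait_days)


if __name__ == "__main__":
    # Hypothetical wait times (days) for one unit; not data from the study.
    waits = [14, 21, 28, 35, 42, 56, 70, 90]
    print(pct_within_target(waits, target_days=30))  # 37.5
    print(pct_within_target(waits, target_days=60))  # 75.0
```

Because the target is a parameter of the definition, reporting it alongside the result is what allows two provinces' values to be compared at all.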

Discussion

Our environmental scan identified 27 metrics pertaining to ICHD that are currently tracked at the provincial level in Canada. Nine different metrics are being tracked in multiple provinces, but only 2 indicators are tracked in at least 4 provinces. Moreover, we rated only 9 of the 27 metrics as “necessary” to distinguish good-quality from poor-quality care based on the American College of Physicians/Agency for Healthcare Research and Quality criteria, with only 2 of these highly rated metrics used by multiple provinces. The 9 highly rated metrics measured mainly safe and effective care, with a single indicator of efficient care, indicating gaps in the assessment of efficient, timely, patient-centered, and equitable care. This work provides provincial and local hemodialysis programs with a catalog of existing quality metrics from which to choose, and it highlights domains of quality that require new indicators.

Outside of Canada, quality indicators in nephrology have recently been evaluated by the American Society of Nephrology (ASN) Quality Committee. 22 This group rated 44 ICHD-related indicators, yielding 33 unique indicators after removing duplicates. Overall, they rated 16 of 33 (48%) highly based on the American College of Physicians/Agency for Healthcare Research and Quality criteria. These findings are consistent with our observation that less than 50% of ICHD metrics are highly rated, as well as with the inclusion of highly rated indicators related to vascular access, dialysis adequacy, and transplantation work-up. In both reviews, patient-reported outcome measures were rated as important targets for improvement, but they required changes to increase response rates and usability (ie, tied to frontline staff responses) to maximize their impact on quality of care. Another similarity was the discovery of several nephrology indicators also attributable to other specialties (eg, vascular access complications, hospitalizations, falls, and advance care planning), with the ASN review also rating pneumonia/influenza immunizations and smoking cessation highly. 22 While these mixed-attribution indicators should not be completely dismissed, program administrators should recognize that they will require stakeholder engagement outside of nephrology to realize sustained improvement and may be less appropriate as pay-for-performance metrics, given the influences outside the dialysis unit on target achievement.23,24

In contrast, our ratings differed on several of the ASN review’s highly rated metrics, highlighting important considerations in indicator selection and development. 22 While both groups rated advance care planning as an important target for quality improvement, our lower overall score reflects current difficulties in measuring whether this process is delivered effectively enough to improve patient-centered outcomes. Until such an indicator is developed, we are concerned that measuring advance care planning promotes checkbox completion and gaming rather than improvements in the advance care planning process. We also downgraded indicators without a large performance gap (termed “topped-out measures”), 11 such as dialysis adequacy and achievement of anemia or CKD-MBD targets; moreover, these 3 indicators may not be very important to patients, yet dialysis clinicians place a disproportionate amount of focus on them. 25 These metrics could still be followed annually for accountability and patient safety purposes, 26 allowing scarce resources to be dedicated to the frequent assessment of other indicators more in need of quality improvement efforts. Of the ASN review’s highly rated indicators not identified in our environmental scan, 22 several were partly attributable to other specialties (eg, immunizations, smoking cessation), involved difficult-to-measure processes similar to advance care planning (eg, medication accuracy and reconciliation), or required further refinement to avoid unintended consequences (eg, maximum ultrafiltration thresholds). 27

Our environmental scan across Canada further contributes to quality indicator use and development by revealing a striking imbalance across the IOM and Donabedian frameworks. Most indicators are process or outcome measures focusing primarily on safe and effective care. Structure measures are needed to ensure that the resources (eg, water quality) and staff (eg, staffing ratios) exist to deliver high-quality care. 11 However, many structure measures may be “topped-out” in high-income settings such as Canada, making them more appropriate for quality assurance than for daily quality improvement activities. 26 Therefore, we suggest the most important gaps are in the underrepresented IOM domains of efficient, timely, patient-centered, and equitable care. Existing indicators in these domains seemed to lack some combination of measurable processes, accepted targets, and practical strategies for quality improvement; these elements should be prioritized as new indicators are developed and piloted.

To initiate these conversations, we have proposed a balanced quality indicator scorecard for ICHD (Table 3). This incorporates all aspects of the IOM and Donabedian frameworks, as well as several of the highly rated indicators: proportion of patients by primary access, rate of vascular access–related bloodstream infections, standardized mortality rate, and proportion of patients informed about kidney transplant. The transplant metric will require some auditing to ensure the delivery of an effective education program rather than checkbox completion, and it may become “topped-out” over time. Where possible, we modified indicators rated highly in the ASN review so that they became more attributable to nephrology, such as volume-related hospitalizations instead of all-cause hospitalizations and time from hospital discharge to nephrologist review instead of postdischarge medication reconciliation. 22 Other newly proposed indicators are meant to prompt further discussion of how to measure them routinely in a manner that is useful for quality improvement initiatives. Some metrics may need to be modified or replaced based on the existing data infrastructure and EMR capabilities in different provinces to ensure that precise and timely measurement is possible. National collaboration may help overcome these barriers, and we believe the minimal overlap across provinces presents an opportunity to learn from each other before each province develops its own system that limits interprovincial comparisons and joint quality improvement activities.

First Step Toward Development of a Balanced Quality Indicator Scorecard for In-Center Dialysis.

Note. Several highly rated indicators from the environmental scan have been populated (in regular font), with indicator gaps (in bold) and additional work needed to complete the scorecard (in italics).

Strengths of this work include the structured approach to indicator categorization and evaluation, using the IOM and Donabedian frameworks along with the American College of Physicians/Agency for Healthcare Research and Quality criteria. Our panel also included members from most regions of Canada to represent different practice patterns, many of whom possessed advanced training and real-life expertise in ICHD and/or quality improvement, ensuring this work is relevant and translatable to frontline improvement efforts.

Although this article is novel in presenting the current state of quality metrics in Canadian hemodialysis units, there are some limitations. First, reported local and provincial quality metrics were solicited by committee members and cannot be considered an exhaustive list. We also included only items specifically identified as quality indicators and therefore did not automatically include all items from the Canadian Organ Replacement Register (CORR). 28 Academic viewpoints were overrepresented relative to community-based ICHD practices, whose perspectives may be important for understanding how to ultimately achieve quality improvement in ICHD at a national level.

Second, we did not focus on the precise operational definitions of the indicators (ie, numerator, denominator, risk adjustment), which will need to be finalized before use. Third, there is some subjectivity in categorization by the IOM and Donabedian domains, as well as in the distinction between process and outcome measures. This is especially the case for evidence-based processes/surrogates (eg, anemia, dialysis adequacy). 24 Fourth, our committee consisted of physicians and 1 nurse practitioner and did not have representation from allied health professionals, pharmacists, or administrators. The patient perspective was also not represented, which may have overemphasized the views of dialysis providers relative to dialysis recipients. Moving forward, our group intends to involve patients in new indicator development and curation.

Conclusions

By performing a pan-Canadian environmental scan of quality indicators currently in use for ICHD, we identified 27 metrics, of which 9 were considered necessary to differentiate between high- and low-quality care. There was little overlap across provinces, with only 9 indicators used by multiple provinces, of which 2 received global ratings ≥7. More than half of the indicators measured safe or effective care, and none of the “necessary” indicators measured the IOM domains of timely, patient-centered, or equitable care. These results should be viewed as preliminary steps toward the development of a balanced scorecard to measure quality of care in ICHD. Future work will require broad stakeholder engagement to combine current indicators and create new indicators that fill the noted gaps, with the ultimate goal of simplifying performance measurement so that it reinforces frontline quality improvement efforts.

Supplemental Material

sj-pdf-1-cjk-10.1177_2054358120975314 – Supplemental material for An Environmental Scan of Canadian Quality Metrics for Patients on In-Center Hemodialysis

Supplemental material, sj-pdf-1-cjk-10.1177_2054358120975314 for An Environmental Scan of Canadian Quality Metrics for Patients on In-Center Hemodialysis by Daniel Blum, Alison Thomas, Claire Harris, Jay Hingwala, William Beaubien-Souligny and Samuel A. Silver in Canadian Journal of Kidney Health and Disease

Footnotes

Acknowledgements

The authors value the support and guidance from Adeera Levin throughout the design and execution of this work. The other 10 volunteer quality committee members include Lisa Dubrofsky, Tamara Glavinovic, Ali Ibrahim, Amber Molnar, Priya Mysore, Krishna Poinen, Sachin Shah, Karthik Tennankore, Amanda Vinson, and Seychelle Yohanna. S.A.S. is supported by a Kidney Research Scientist Core Education and National Training (KRESCENT) Program New Investigator Award (cofunded by the Kidney Foundation of Canada, Canadian Society of Nephrology, and Canadian Institutes of Health Research).

Ethics Approval and Consent to Participate

Not applicable.

Consent for Publication

All authors consent to the publication of this study.

Availability of Data and Materials

Data and materials may be made available upon request to the corresponding author.

Author Contributions

All co-authors contributed equally to this manuscript.

Declaration of Conflicting Interests

The author(s) declared the following potential conflicts of interest with respect to the research, authorship, and/or publication of this article: S.A.S. has received speaking fees from Sanofi Canada. The remaining authors have no conflicts of interest relevant to this study. All authors approved the final version of the submitted manuscript. We certify that neither this manuscript nor one with substantially similar content has been published or is being considered for publication elsewhere.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.


References
