Abstract

This commentary discusses the role of increasingly artificial intelligence-infused big tech platforms in facilitating and normalising high-emission lifestyles and consumption practices. It introduces the notion of algorithmically facilitated emissions to initiate a shift from a logic of ‘climate collapse by design’ to a logic of ‘emissions reduction by design’. Reducing consumption-based emissions from high-income households and countries is critical for avoiding runaway climate change, and it necessitates redesigning the digital infrastructures that connect production and consumption. Big tech's high-reach artificial intelligence platforms hold a central infrastructural position in many markets and societies. They discursively turn issues into commodities, thereby incentivising unsustainable mass consumption and high-carbon lifestyles. A main argument advanced in the article is that algorithmic and environmental harms are inextricably linked, but neither research nor policy has the terminology to discuss and address this. While the direct negative effects of artificial intelligence development, foundation model training, data centres, and digital devices on the environment are receiving increasing attention in academia and society, the downstream harms resulting from the environmentally unsustainable values underpinning decisions made by artificial intelligence-infused general-purpose platforms remain largely unnoticed. The commentary proposes that the development and impact assessment of big tech platforms ought to be brought in line with a default logic of ‘emissions reduction by design’. For this purpose, we introduce the concept of algorithmically facilitated emissions, defined as downstream emissions that are made possible, more likely, or more intense because people or organisations act in response to or anticipate algorithmic decisions that prioritise high-carbon practices and lifestyles.

Introduction

To mitigate climate change and preserve a habitable planet, the lifestyles of high-income households, which account for a disproportionate share of global consumption-based emissions (Calvin et al., 2023), must change radically (Whitmarsh and Hampton, 2024). The societal transformations, at structural and individual levels, required to steer society away from runaway climate change are both urgent and profound (Tian et al., 2024). To enable the necessary reduction in greenhouse gas (GHG) emissions, both the supply and demand sides of economic output must be directed towards reducing resource use, emissions, and other environmental harms (UNEP, 2024). This cannot be achieved without substantial changes to the corporate digital infrastructures that link supply and demand, production and consumption. Such a transformation needs conceptual tools to identify points of intervention, which in turn requires rethinking the most basic values and assumptions embedded in these infrastructures. In this commentary, we want to draw attention to the role of increasingly artificial intelligence (AI)-infused big tech platforms in facilitating and normalising emissions-intensive lifestyles and consumption practices, and we suggest the term algorithmically facilitated emissions to initiate a shift from a logic of ‘climate collapse by design’ to a logic of ‘emissions reduction by design’.

Big tech and high-reach AI

In recent decades, the infrastructures that connect consumers and producers have moved online and been consolidated by the digital platforms of big tech corporations, such as Google/Alphabet, Amazon, Meta/Facebook, Apple, and Microsoft, but also Alibaba, Tencent, and others (Tambini and Moore, 2021). Big tech's multi-sided platforms algorithmically connect producers and consumers of tangible and intangible products and services, and they monetise the extraction of data from these connections. This includes ubiquitous, general-purpose technologies, such as search engines, social media, or online marketplaces that constantly select, rank, present, summarise, or recommend information, services, products, and other content across everyday private and professional life. Increasingly, these platforms are infused with deep learning algorithms, generative and other forms of so-called AI, which has led to them being described as ‘high-reach AI’ (Söderlund et al., 2024). This term refers to online platforms and search engines that operate as multi-sided businesses and use AI and machine learning technologies alongside other types of data-driven, automated decision-making. They occupy an infrastructural position and already affect most aspects of society, not least consumption patterns and lifestyles. The various machine learning algorithms, subsumed and marketed under the label AI, represent the latest advancement in a series of computational techniques that shape online interaction by commodifying both the interactions themselves and the topics of interaction. This accelerates two significant developments: firstly, people are primarily cast as consumers, and secondly, they are addressed as isolated individuals, a tendency further reinforced by hyperpersonalisation.
Importantly, the ongoing concentration of power is granting an ever-smaller number of corporate actors increasing influence not only over the terms of market exchange and the structure of contemporary data economies but also over social and environmental values more broadly.

This entails two forms of environmental harm: firstly, harms associated with the firms’ tangible assets, and secondly, harms associated with their intangible assets, in particular algorithms and data. Due to the rapid expansion of generative AI, the first category, which includes unsustainable energy demand and associated emissions, excessive water consumption, land use, and hazardous waste related to data centres and other operations, has dominated recent attention (Crawford, 2024; Pasek et al., 2023). Meanwhile, the environmental harms and emissions associated with big tech's main intangible assets, their data regimes and algorithmic decisions, are only superficially considered. Yet it is precisely these that promote and facilitate high-carbon lifestyles and consumption-based GHG emissions; they did so long before the hype around generative AI and will undoubtedly continue to do so after it. It is increasingly recognised that these harms have been overlooked in the AI and data governance landscape (Bossert and Loh, 2025; Hesse et al., 2023; Kaack et al., 2022), and we hope to contribute to this discussion.

Environmental and societal values of big tech AI

Achieving the necessary reduction in consumption-based emissions in the present economic system requires a nuanced understanding and awareness of conflicting values (social, environmental, and economic) embedded in the infrastructures that facilitate consumption and that are dominated by big tech's high-reach AI platforms. Firstly, such an understanding, which we can only outline here, helps to align the notions of risk and harm in environmental and algorithmic impact assessment and, through this, to align high-reach AI platforms with environmental sustainability by design (Griffin, 2023; Hacker, 2024), specifically emissions reduction by design. Secondly, it is a necessary foundation for developing ways to include big tech's downstream emissions in carbon accounting, while also revealing the limits of such accounting. Finally, even if the necessary emissions reductions turn out not to be possible under the current economic and political system, it still provides a basis for demanding accountability, not from consumers or even producers, but from those who own the enabling infrastructure that connects them, and whose default is set to contribute to climate collapse.

Examples

Research shows how social media platforms mainstream high-carbon ideals by design (Berglez and Olausson, 2021) and how search engines and online marketplaces are biased toward suggesting unsustainable practices (Haider et al., 2022), including in domains characterised by high consumption-based emissions (Axelsson, Katarina, et al., 2024; Axelsson, Kaya, et al., 2024). In addition to paid advertisements, this consumption bias also manifests in so-called ‘organic content’, where arrays of interdependent algorithmic and human decisions shape, nudge, and encourage certain attitudes and behaviours, while rendering others less visible and more unconventional.

Examples of algorithmically reinforced consumption biases include search engines and online marketplaces that by default prioritise new products over second-hand products or repairs and routinely rank flight planners above more sustainable public transport options. Other examples are recommender systems that reinforce fast fashion's wear-and-tear logic or meat- and dairy-heavy diets; social media platforms that reward the aestheticisation of air travel, weekend getaways, and holidays in remote destinations; online maps that are designed to foreground commercial, consumption-oriented spaces rather than public ones; and platforms whose algorithms are designed to incentivise extreme political polarisation and inaction in the face of environmental breakdown.

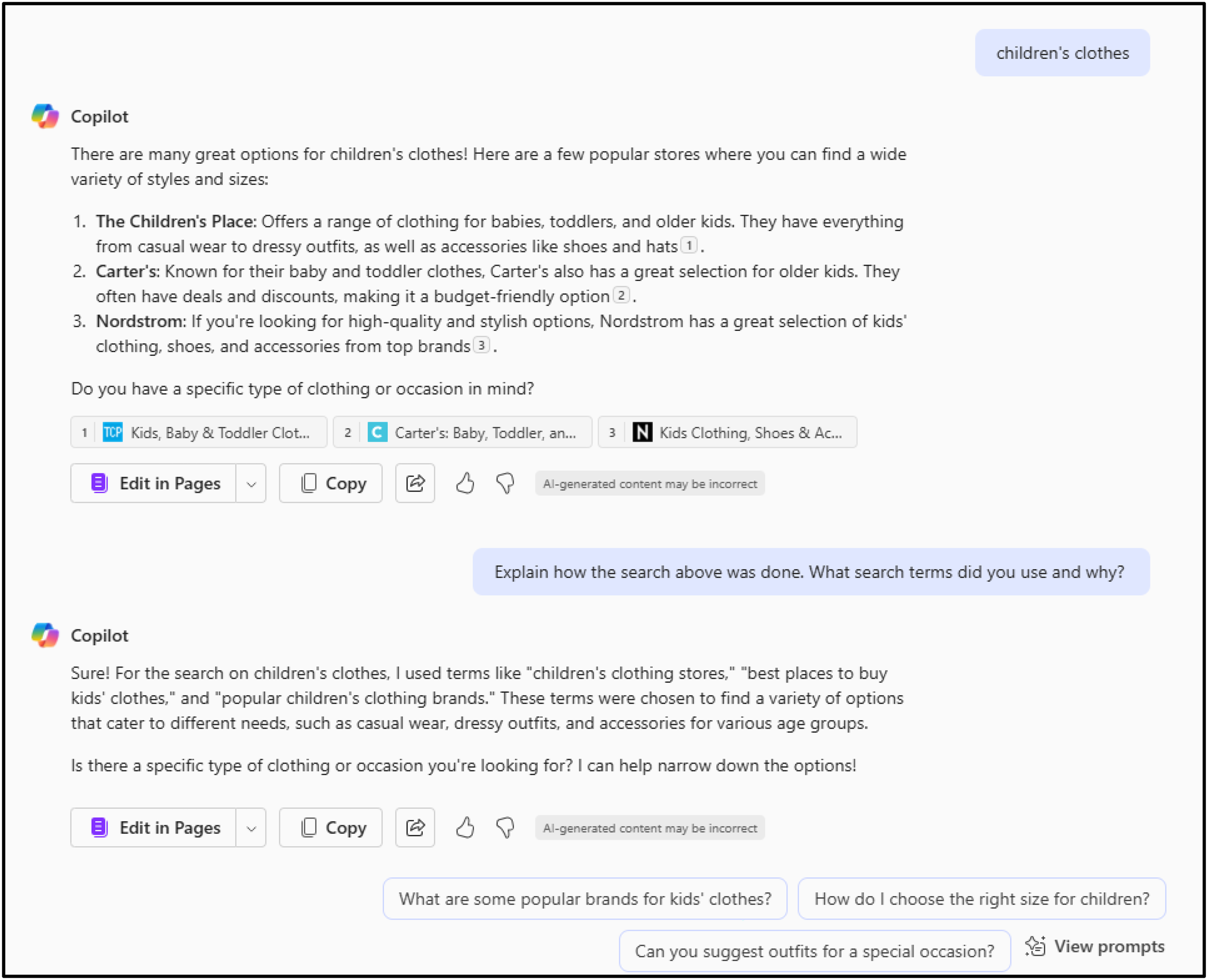

Only one example of many that may illustrate our point: we prompted Bing's Co-Pilot using the generic term ‘children's clothes’ (Figure 1). A commodity relationship was established as it returned a list of ‘popular stores where you can find a wide variety of styles and sizes’ complete with names of stores and links. When we followed up to understand how the search was conducted – although we are unable to verify this – it provided the three queries ‘children's clothing stores’, ‘best places to buy kids’ clothes’, and ‘popular children's clothing brands’. The point is that our search prompt did not specify an interest in buying or even acquiring children's clothes. The response could have mentioned things like typical materials, colours, cultural norms, or historical developments, but it went straight to the commodity relationship.

Screenshot of Copilot response page.

The example illustrates how a search prompt for an object that can be shared, swapped, mended, or learnt about is turned into a commodity that can only be purchased new, thereby manifesting the object's most carbon-intensive form. This commodification default existed in web search and recommender systems before the implementation of LLM-based generative AI summaries and AI-enhanced consumer profiling (Deepak et al., 2024). However, AI fortifies and further normalises these consumption biases. Interactions like these are so commonplace that the algorithmic decisions that underlie them usually go unnoticed. Therefore, the emissions they encourage and – at least potentially – entail are never attributed to these platforms. But they should be. We propose to call them algorithmically facilitated emissions, a critical but actionable concept that places responsibility for emissions at the source of financial value creation, as we discuss in what follows.

The fallacy of individual-level solutions in supply chain capitalism

Big tech's algorithms and data extraction regimes facilitate decisions that, if followed up on, generate consumption-based emissions. Most importantly for our argument on accountability, the platforms monetise this facilitation. As such, emissions and other environmental harms are integral to the ‘supply chain capitalism of AI’ (Valdivia, 2024), intertwining algorithmic and environmental harms. So far, the industry has successfully shifted the responsibility onto its end users. For example, until recently, Google and Amazon presented themselves as advocates of climate action, but their primary tool was individual responsibility exercised through ‘consumer choice’ (Haider and Rödl, 2023). However, individual-level solutions, such as raising awareness or using labels to highlight more sustainable choices, clearly lack effectiveness as mechanisms for governing systemic problems. Indeed, it is by now evident that individual-level solutions have failed to mitigate the causes of climate change (Stoddard et al., 2021).

Furthermore, value creation in digital markets depends on intangible assets (code, data, and algorithms) (Birch et al., 2021). From this perspective, the extent to which high-reach AI platforms influence individual consumer decisions is irrelevant. These platforms operate irrespective of the downstream emissions linked to the decisions they facilitate, positioning themselves as neutral intermediaries (Hwang, 2020). What matters is that they generate revenue from facilitating such decisions in the first place. We argue that applying the polluter pays principle within multi-sided digital markets should entail attributing a share of consumption-based emissions to businesses that profit from digital assets specifically designed to facilitate lifestyles leading to increases in these emissions.

Are algorithmically facilitated emissions Scope 3 emissions?

Recently, attention has turned to the role of advertising and the financial sector in enabling or financing GHG emissions, with relevant terminology being proposed (Axelsson, Katarina, et al., 2024; Axelsson, Kaya, et al., 2024; Kaack et al., 2022). This development is important, but insufficient. High-reach AI platforms perform central infrastructural tasks and incentivise unsustainable mass consumption and high-carbon lifestyles, not only through identifiable advertising but also, to put it crudely, because their algorithmic decisions and the logic of data extraction enact a consumption-by-default bias. This represents a significant blind spot. Those concerned with environmental issues point to the energy consumption of data centres, and those concerned with algorithms, digital markets, or AI tend to view environmental concerns as a technical rather than a societal issue (Galaz, 2025). The wider environmental impacts are likely to be substantial, but difficult to predict and plan for, let alone mitigate (Bashir et al., 2024; Kaack et al., 2022). Many frameworks for AI and algorithmic systems recognise system-level impacts. However, they lack the conceptual nuance to recognise harms that arise not from unintended system failures but from systems operating as intended, as seen in high-reach AI's downstream environmental harms.

The value creation of big tech's platforms is closely tied to algorithmic decisions whose outcomes are mainstreamed because of their market dominance or even monopolies. Therefore, downstream GHG emissions, which some companies report as part of their so-called Scope 3 emissions (WRI, 2011), ought to include the environmental effects of these algorithmic decisions – and yet, they do not. Some of the world's largest companies belong to this group, including market-leading social media platforms, search engines, digital advertising brokers, digital markets, and more. The physical infrastructures and tangible assets of big tech's high-reach AI already have substantial environmental footprints, which continue to grow as they integrate ever more machine learning solutions and process ever more data. Through multi-actor arrangements, their intangible assets, algorithms, and data regimes embed values that transform most situations into opportunities for consumption. This constitutes a consumption-by-default bias facilitating unsustainable lifestyles, with consequences that are both qualitative (i.e. facilitated consumer choices) and quantitative (i.e. increased product sales), resulting in even more GHG emissions.

Identifying, quantifying, and attributing these emissions will be challenging and can only ever be an approximation, but it is essential for understanding their impact on the climate, environment, and nature, as well as people and society. Reducing the downstream emissions of big tech requires mechanisms and policy instruments to identify and attribute them more consistently. To this end, we propose the concept of algorithmically facilitated emissions, which we define as downstream emissions made possible, more likely, or more intense due to individuals or organisations acting in response to, relating to, or anticipating algorithmic decisions that prioritise high-carbon practices and lifestyles. In terms of the GHG Protocol, the most widely used corporate standard for emissions reporting, these considerations are most closely associated with Category 11 of Scope 3 and pertain to the implications of the intended use of products or services (WRI, 2011). However, less evasive definitions and more rigorous reporting requirements are necessary to hold multi-sided platforms fully accountable for their contributions to climate collapse.

What next? From consumption-by-design to emissions-reduction-by-design

Big tech's high-reach AI systems are designed to anticipate and exploit people's emotions and behaviours to encourage engagement, from which data and profits can be extracted. Many engagements will, therefore, at some point, result in purchasing decisions. But other quantifiable engagements, such as views, clicks, or data traffic, are also profitable for the platform business and might contribute to the idealisation and mainstreaming of ever more high-carbon practices and extreme social and political polarisation. We are not concerned with calling out problematic consumer behaviour or even the advertising industry, but with the observation that society's dominant infrastructure turns most subjects of conversation into commodities, thereby inevitably turning people (end-users) into consumers. Other subject positions can easily exist. Our intention is to identify this process and initiate a discussion about alternative visions for the role of different forms of so-called AI in large online platforms.

Big tech corporations have long moved in the shadow of public discourse and acceptability: they have sought to control the media narrative, lobbied for legislation that enabled their monopolies, and avoided local controversy, while also presenting themselves as environmentally conscious stewards of green economic growth for as long as this seemed opportune (Brodie, 2024). There is a real danger that the debate overlooks potentially very significant environmental harms that arise not from unintended side-effects, but from the intended functioning of many of these high-reach AI platforms. Environmental activists, academics, and journalists have expressed alarm and argued for greater attention to the environmental consequences of AI, including the development of accounting requirements and related standards (Luccioni and Hernandez-Garcia, 2023). In the context of the accelerating climate crisis and policies to support a necessary societal transformation, this issue must be properly recognised, including the role of algorithmically facilitated emissions, which are not accounted for in current reporting practices and remain unaddressed in current regulatory attempts.

We hope that the notion of algorithmically facilitated emissions will become part of a wider discussion at this moment of heightened sensitivity to the impact of (generative) AI and other algorithmic decisions and contribute to regulatory efforts that consider environmental and algorithmic harms together. Furthermore, even if it is a ‘seemingly intractable task’ (Bashir et al., 2024), there is a need to devise strategies and frameworks to incentivise providers of high-reach AI to adopt emissions-reduction-by-design approaches. Most importantly, we need to keep asking ourselves if and how digital infrastructure can exist that does not contribute to climate collapse by design.

Acknowledgements

The authors would like to thank the editor and two anonymous reviewers who took the time to provide insightful and occasionally challenging comments. They have really helped us to sharpen our argument.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: The Swedish Foundation for Strategic Environmental Research via Mistra Environmental Communication; Formas: A Swedish Research Council for Sustainable Development (2022-01352_Formas); the Swedish Research Council (2023-01549_VR).

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.