Abstract

Attracted by the promise of a broader and more egalitarian sample of readers than published book reviews provide, researchers are increasingly scraping social reviewing platforms like Goodreads for data about readers’ behavior. Yet, treating online book reviews as direct proxies for readers and books can be problematic, as they are socially and technically constructed artifacts shaped by platform dynamics, whether between developers and users, or book industry stakeholders and reviewers. To uncover these complexities, we computationally curated 331,211 self-identified incentivized book reviews to understand the growth of incentivized content, and how these purportedly equal-access social reviewing spaces are re-inscribing the inequalities of traditional book reviewing and publishing. Our findings underscore the necessity of critical examination of both online book reviewing and cultural datasets derived from social media platforms. With the growing restrictions on access to platform data for research, this study also demonstrates the potential for a mixed-method analysis of historical scraped datasets, an approach that will likely be of interest to many researchers working with cultural data moderated by black-box algorithms. With this method, our research reveals for the first time the scale of incentivized book reviewing, a phenomenon that is well known to users of Goodreads but remains largely anecdotal. Additionally, it illuminates the rise of sponsored content while contributing to broader discussions on computational approaches to digital economies of prestige and the responsible use of platform-mediated cultural datasets across disciplines.

Introduction

In the last two decades, research on socio-cultural practices and communities has increasingly focused on digital spaces, leveraging what is often termed digital trace or social media data. Tweets are tracked to model elections and crises (Hong and Nadler, 2011; Crawford and Finn, 2015), Amazon reviews are analyzed to optimize product sales (Maity et al., 2017), and Reddit discussions are monitored to predict exposures to certain drugs (Barenholtz et al., 2021). However, it is difficult to assess the quality and scholarly usability of such datasets due to most digital platforms’ black-box algorithms and restrictions on data access (Mittelstadt et al., 2016). Previously, researchers partnered with platforms to gain access. However, the rise of data access restrictions and the politics of platforms like Twitter have increasingly made such partnerships fraught. Alternatively, researchers can sometimes overcome these obstacles as “end-users” through web scraping or Application Programming Interfaces. But such approaches are far from a panacea, and datasets derived from these methods often struggle to account for how platform algorithms and interface designs structure the data, let alone broader commercial and political imperatives shaping these platforms.

Among the platforms researchers have studied using these methods, online book review platforms like Goodreads stand out. The proliferation of online book reviews on such platforms has significantly alleviated the once historical and archival bottleneck that made it difficult to conduct empirical research on everyday reading, readerships, and reception of books (Boot, 2013; Milligan, 2016). This shift has implications for many disciplines, particularly Digital Humanities (DH), where researchers have increasingly leveraged social media data in the emerging subfield of computational reception studies (Walsh, 2023). In particular, these studies have conceptualized and treated online book reviews as evidence of readerships, as book reception, or as “crowdsourced” and decentralized amateur reviews to be compared with professional literary criticism. While this work has provided valuable insights into contemporary and historical reading tastes, it also raises a crucial methodological concern: are these online reviews truly organic reflections of amateur readers?

The answer is often difficult to determine. As digital artifacts, online reviews are produced at the nexus of the decades-long conglomeration of the publishing and cultural industries on one hand, and on the other, the fast-growing platform economies and fast-moving online communities (Murray, 2021; Sinykin, 2017; Gillespie, 2024). Therefore, some reviews might serve as advertisements in book marketing campaigns, whereas others may be AI-generated content for malicious review bombing. Such complex dynamics not only limit how researchers can access these datasets, but also pose a challenge for how scholars navigate the gap between (a) the limited sample of platform-curated data and (b) the broader social dynamics they aim to study. This is a major issue not just with DH research of online book reviews, but for all critical data studies, since what the data represents versus how it is used in research can often be misaligned, ultimately impairing researchers’ ability to make in-depth, critical, and contextualized interpretations of these datasets (Crawford and Finn, 2015; Olteanu et al., 2019).

The responsible use of these datasets has implications far beyond scholarly research, particularly as social media platforms become “battlefields” not only for commercial campaigns but also for cultural and political conflicts. How do these forces shape the data produced in these digital cultural spheres? In the case of book reviews, how do these platforms influence which books get reviewed? Which reviewers have a voice? Which groups of authors and readers might be negatively portrayed, or unfairly deprived of visibility on social reviewing platforms (Gillespie, 2024)? For researchers studying reception or taste, these questions may seem far afield, but we argue that the sociotechnical and commercial dynamics that shape what is reviewed and who reviews on a platform are crucial to understanding the complexities––and therefore the reliability––of these cultural datasets. However, this goal is far from straightforward. As previously discussed, platforms’ privately-owned algorithms and restrictions on data access continue to constrain researchers’ ability to understand “the fabric of sociomaterial life” and the “myriad of intrinsic and external interests” that shapes and produces these datasets (Wu, 2023).

Rather than discarding these datasets because of their limitations, we argue for a critical approach that uses them as windows into these platform dynamics rather than treating them as neutral reflections of user behavior or cultural trends. To demonstrate this approach, this research presents a case study on incentivized reviews as a window into the broader dynamics underlying online book reviews among platforms, book industry stakeholders, and individual reviewers. We draw upon a historical dataset of eight million book reviews scraped from Goodreads to trace how incentivized reviews were produced, and how their presence expanded from 2007 to 2017. To the best of our knowledge, this work is one of the first to explore the evolution and impacts of sponsored content in online book reviews at large scale. Methodologically, this research addresses a pressing challenge across disciplines: how can researchers scrutinize and make critical use of cultural traces in the face of limited algorithmic transparency and restricted data access? Our findings underscore the importance of approaching these datasets with caution and critical awareness in scholarly research. More broadly, this work could also help inform the public, particularly everyday readers who may be anecdotally aware of the existence of sponsorship but, given the scale of these platforms, might not fully grasp its impact. By making this phenomenon more visible, our findings highlight the importance of critically evaluating and engaging with book reviews on these social reviewing platforms.

Literature review

Contextualizing online book reviews

Since their emergence in the late 17th century, book reviews have served a variety of purposes, from generating revenue to boosting the reputations of book stakeholders. Historically, publishers provided free copies to critics, journalists, and literary influencers to shape public perception of books. Some reviews were explicitly politicized and partisan critiques, and “contemporary readers would have understood their origins well enough to be able to use them judiciously” (Gael, 2012). In the 19th and 20th centuries, newspapers and magazines frequently featured publisher-sponsored reviews, and some publications even had dedicated advertising sections masquerading as editorial content. But, by the late 20th century, professional book reviews were criticized for being both “a byproduct of the publishing industry” (Fay, 2012) where privileged authors review each other's works; and subject to the structural problems (e.g., gender disparity and racial inequality) of the publishing industries (JSTOR Daily, 2015; So, 2020). While many of these issues persist, the rise of digital platforms has introduced a new layer of complexity: contemporary book reviewing increasingly falls under the broader category of incentivized or sponsored content (sometimes abbreviated as “sponcon”), extending beyond books to a wide range of consumer products.

Although book reviewing is far from novel, the study of online book reviews through “big data” is a relatively recent phenomenon across disciplines. In DH, these reviews are often perceived and presented as a more expansive form of amateur reader responses produced in a more “deinstitutionalized participatory culture” (Murray, 2021) than traditional book reviewing, offering a window into book reception and the evolution of literary genres (Bourrier and Thelwall, 2020; Walsh and Antoniak, 2021). In Library and Information Sciences (LIS), online book reviews are frequently treated as analogous to circulation and patron records (Bates, 2022), and are used to inform cataloging, indexing, and reference services (Bartley, 2009; Lu et al., 2010). Meanwhile, in e-commerce and marketing, online book reviews function as transaction data, or “hedonic consumption” (Hirschman and Holbrook, 1982) reviews, to inspect economic effects and consumption phenomena. These datasets are also popular in computational fields for tasks like review classification, sentiment analysis, and narrative extraction (Wan et al., 2019; Holur et al., 2021). Such multi-disciplinary research on online book reviews leads to divergent and sometimes even conflicting modeling of data (Hu et al., 2023). Yet, despite this scholarly attention, little research directly examines how different disciplines conceptualize and use online book reviews.

Characteristics of incentivized content

At its core, content incentivization refers to the practice of businesses providing free products or services in exchange for promotional exposure. In the case of online book reviews, this typically involves authors, publishers, and advertising companies distributing free or advance copies to reviewers whose profiles align with their promotional goals. In return, these reviewers post about the books on selected platforms, intentionally blurring the lines between organic reader responses and commercially driven discourse.

However, incentivized book reviews differ from other forms of sponsored content in a key way: they do not need to be positive to drive sales. Reviews for utilitarian products, which are typically judged on tangible attributes and utilitarian performance, usually need to be positive to motivate purchases, whereas for books, “any publicity is good publicity” (Sorensen and Rasmussen, 2004). Although positive reviews have a larger positive effect on book sales, negative reviews also increase sales (Sorensen and Rasmussen, 2004). A study of fiction books reviewed in the New York Times found that for books by “relatively unknown authors,” bad reviews increase their sales by 45% on average (Berger, 2012). Incentivized book reviews may contribute to book sales simply by increasing the books’ visibility online, regardless of the sentiment or overall rating. Additionally, the long-standing practice of providing advance/free copies in book marketing has shaped how readers evaluate these reviews. For instance, literary critics, librarians, and scholars have long received free desk copies and provided feedback thanks more to their professional roles than to personal preferences. Their invited feedback, such as book blurbs or scholarly book reviews, while being “bought,” is still valued by many as a trustworthy and high-quality assessment. In these cases, incentivized reviews, which are clearly not independent or egalitarian, are still perceived as valuable due to the expertise of the reviewers.

These dynamics underscore why differentiating between incentivized and other types of book reviews remains challenging and requires nuance. For instance, incentivized book reviews are much more varied in terms of sentiment and ratings than reviews of other forms of incentivized content. When acknowledging the sponsorship, whether their reviews are positive or negative, many book reviewers claim that their opinions were not altered by the incentives, in essence asserting the authenticity and credibility of the reviews. Furthermore, although some users are skeptical of such disclaimers (Cui et al., 2022; Kim et al., 2019), preliminary computational classification conducted on incentivized versus non-sponsored book reviews has not identified significant differences between their review texts (except for the incentive disclosure sentences) or numerical ratings, seemingly endorsing the reviewers’ disclaimers (Hu et al., 2024). These findings suggest that for book reviews, incentivization does not necessarily need to alter a review in order to make a difference. Instead, simply changing the range of books discussed may be a sufficient reward for sponsors.

Changes in the social reviewing landscape

While online book reviewing initially promised to democratize literary discourse (and some independent, community-driven spaces do exist), much of it now operates within the attention economy and remains a “profit good” controlled by commercial platforms. These platforms prioritize engagement-driven algorithms that often reinforce the commercial interests of their stakeholders (Gillespie, 2014). As a result, online book reviewing is shaped not only by traditional cultural institutions like academia and educational curricula (Murray, 2021; Walsh and Antoniak, 2021), but also by accelerating market forces, including the monetization of book content online (Zhu et al., 2025) and word-of-mouth effects that work particularly well at amplifying book visibility. New review formats, such as “Bookstagram” posts and “BookTube” and “BookTok” videos, have been notably successful (Reddan et al., 2024), transforming user engagement and content visibility into commercial value for books. For instance, a book published in 2014 surged back onto the bestseller lists in 2020 after a video of a teenager crying over it went viral on TikTok (Harris, 2021). Platforms dedicated to book content also leverage such trends by offering pay-to-promote tools for publishers and authors, further embedding book reviewing within the platform economy. However, this level of monetization has significant side effects on the social reviewing ecology. For instance, Goodreads's promotional model has been shown to reduce genre diversity among the most popular books and to discourage participation from independent publishers and authors (Zhu et al., 2025).

The intensifying competition for book visibility has also given rise to third-party publicity/marketing companies, ranging from legitimate publicity firms to exploitative businesses that prey on authors and manipulate review systems. On “matchmaking” websites like BookSirens, clients with books to promote can choose among potential reviewers and pay according to the numbers of books listed and copies subsequently downloaded (BookSirens, 2023), whereas reviewers who enroll to review advance reader copies are categorized and listed along with their reviewing history and profile (e.g., number of followers and average book rating) (BookSirens, 2025). The prospect of AI-generated book reviews, already available from companies like Hyperwrite (Hyperwrite, 2025), further complicates credibility by producing content that mimics human-authored reviews. In addition, cyberstalking and extortion scams—such as threats of “review bombing” negative ratings—have emerged as a means of manipulating book visibility (McCluskey, 2021). The rise of these business models has blurred the boundaries between incentivized, paid, and fake reviews, and created an ecosystem where distinguishing “untrustworthy” or “deceptive” content from authentic reviews online is increasingly difficult (David and Pinch, 2006; Fornaciari and Poesio, 2014).

While incentives can be an effective marketing strategy, they can also drive biased and fraudulent reviews in favor of the sponsors’ interest. Research has shown that algorithmic discrimination, censorship, and fraudulent reviews further complicate the authentic exchange of opinions online (Alter, 2017; Chen, 2023; Y. Zhang et al., 2020). In response, agencies like the Federal Trade Commission (Federal Trade Commission, 2024) and platforms like Google Maps have introduced regulations and applied restrictions on incentivized reviews (Chew, 2016; Google Maps, 2025). However, enforcement remains difficult, as disclosure requirements are often ignored or inconsistently reported (Cui et al., 2022; Petrescu et al., 2018). Furthermore, even when regulations are applied, many problems potentially caused by incentivized reviews, like the inflation of reputation and ratings, persist (Park et al., 2023). On platforms like Amazon, incentivized book reviews are notably exempt from general prohibitions on paid reviews (Chew, 2016), which might indicate that incentivized book reviews are more acceptable for readers, compared to other types of product reviews.

Beyond commercialization, user motivations and behaviors are constantly evolving. On one hand, various digital literary, media, and cultural theorists have emphasized that book reviewing is often voluntary and community-driven, with readers actively shaping literary culture and engagement (Jenkins, 2012; Driscoll, 2024). On the other hand, research in psychology, management, and sociology (Chiu et al., 2006; Maltby et al., 2016) reveals that reviewing can be strategically manipulated by people abusing social reviewing systems to seek monetary rewards, social capital, attention, and even conflict. Such tensions among users, communities, and platforms can lead to collective action, including “deplatforming” campaigns, where users delete content or boycott platforms to protest against the platform or other users (Albrechtslund, 2017). Essentially, negative and positive reviews alike are influenced by a range of these sociocultural phenomena, including cancel culture, trolling, spamming, review bombing, and the promotional effects of awards and screen adaptations (Hu et al., 2024).

As book reviewing becomes increasingly embedded within platform economies, traditional models of review credibility are being challenged. Existing research lacks a single theory or model to account for this growing entanglement of algorithmic visibility, financial incentives, and user-driven reviewing behaviors that is shaping incentivized reviews. Instead, there is an increasing awareness among scholars around the necessity of analyzing political economic dynamics, “business partnerships of algorithmic cultural decision-makers,” and “users’ perceptions, strategic behaviors and cultural commentary” to better understand the complexities of book reviewing in the digital age (Murray, 2021; Rebora et al., 2021; Pianzola et al., 2022). In addition, the emergence of generative AI and automated content production makes this line of research more urgent than ever. AI's ability to obscure sponsorship, fabricate engagement, and amplify artificial discourse raises serious concerns about the reliability of online review content.

Built on the aforementioned critical approaches, this research emphasizes that book reviews can no longer be considered isolated literary assessments, but are rather complex artifacts shaped by broader platform logics. Our work investigates (a) how sponsored reviews have developed on Goodreads since 2007; and (b) whether the opportunity to produce incentivized reviews is evenly allocated across books and users. Understanding the scale and distribution of incentivization is especially important to help uncover how much, if at all, the publishing industry is transforming in response to these digital platforms as compared with simply continuing previous trends. To uncover evidence relevant to these questions, this study utilizes historical scraped datasets in ways similar to platform audits. Such an approach involves analyzing a platform's verifiable inputs and outputs to better understand review visibility, credibility, and manipulation (Andrejevic et al., 2015), though previously mentioned platform restrictions are making such investigations difficult. Our approach demonstrates that these reviews do not necessarily align with dominant scholarly conceptualizations: they are neither the amateur reader responses defined in reception studies, nor the consumer-driven reviews presumed in marketing research. Instead, they exist within a broader web of platform incentives, governance structures, and market-driven influences—which, if overlooked or inadequately contextualized, can lead to analytical misinterpretations and distortions in research.

Methods

Data source and pre-processing

To investigate incentivization, this study analyzes a large corpus of book reviews from the platform Goodreads, treating them as natural language text documents. Our choice of platform is intentional. Scholars argue that “Goodreads is above all else a node in platform capitalism” with a “near-monopoly” of reader opinion-influencing, and that “overblown claims of readerly empowerment” on Goodreads “misperceived the exact nature of Goodreads and the power relationships that permeate it” (Murray, 2021). However, empirical investigations into these critiques remain notably scarce.

We retrieved the reviews from the Goodreads Book Graph Datasets, which include multi-genre book reviews posted between 2006 and 2017, along with book and author metadata (Wan and McAuley, 2018; Wan et al., 2019). Our use of the reviews complies with the latest investigation into the legal risks and ethical considerations associated with research on online book reviews (Hu et al., 2023). Table 1 summarizes the distribution of the number of disambiguated reviews across eight genres. Column “Imported” shows the original number of reviews imported from the Goodreads Book Graph datasets, enriched with book and author metadata. Among these metadata-enriched reviews, we selected the reviews that were in English and no shorter than 50 characters for further analysis, which resulted in 7,833,389 reviews. The “Pre-Processed” column shows the genre-wise distributions of the reviews in our corpus. The contents of the “Selected” and “Incentivized” columns are explained in the next subsection.
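
The filtering step described above can be sketched as follows. This is a minimal illustration rather than the pipeline actually used: the `review_text` field name and the `naive_is_english` heuristic are assumptions, and in practice a dedicated language-identification tool would replace the placeholder check.

```python
from typing import Callable, Iterable, List

MIN_CHARS = 50  # from the text: reviews "no shorter than 50 characters"

def naive_is_english(text: str) -> bool:
    """Placeholder language check (an assumption, not the authors' method):
    accept a review if it contains at least two very common English words."""
    common = {"the", "and", "of", "to", "is", "this", "it", "was"}
    words = {w.strip(".,!?;:\"'()").lower() for w in text.split()}
    return len(words & common) >= 2

def preprocess(
    reviews: Iterable[dict],
    is_english: Callable[[str], bool] = naive_is_english,
) -> List[dict]:
    """Keep reviews that are long enough and judged to be in English."""
    kept = []
    for review in reviews:
        text = review["review_text"].strip()  # field name is assumed
        if len(text) >= MIN_CHARS and is_english(text):
            kept.append(review)
    return kept

# Hypothetical records for illustration only.
sample = [
    {"review_text": "Short."},
    {"review_text": "This is the kind of book that stays with you long "
                    "after the last page, and it was a joy to read."},
    {"review_text": "Ce livre est absolument magnifique, je le recommande "
                    "vivement à tous les lecteurs passionnés."},
]
filtered = preprocess(sample)
print(len(filtered))
```

Passing the language check in as a parameter keeps the length rule, which the text states explicitly, separate from the language rule, whose implementation the text does not specify.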

Table 1. Distribution of reviews by genre, categorized by selection status, in our dataset.

Identification of incentivized reviews

In the Goodreads datasets, there was no information provided by the platform regarding whether a review was incentivized or not. Therefore, to construct a dataset of incentivized reviews, we used a dictionary-based approach and focused on the self-identified incentivized reviews (since these reviews were the only group of reviews confirmed to be incentivized). Our method was effective because many incentivized reviews contained explicit sponsorship disclaimers, in compliance with the FTC guidelines or community-based protocols. Figure 1 presents an example of a self-identified incentivized review (text edited and anonymized to respect user privacy and anonymity). Through close reading and manual annotation of a sample of 500 reviews of this type, we developed a dictionary of the 75 most common indicator keywords and phrases in sponsorship disclaimers, such as “unbiased review” and “an ARC” (an acronym for “advance reader copy”). We then searched for reviews that contained at least one of the sponsorship keywords or phrases contained in our dictionary. We refer to the retrieved reviews as “Selected.” Table 1 Column “Selected” shows their genre-wise distributions.
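
A dictionary lookup of this kind might be sketched as below. Only “unbiased review” and “an ARC” come from the text; the other entries are hypothetical stand-ins for the full 75-item dictionary, and counting distinct entries (rather than every occurrence) is an assumption about how matches were tallied.

```python
import re

# Only the first two entries are quoted in the text; the rest are
# hypothetical placeholders for the full 75-item dictionary.
SPONSORSHIP_TERMS = [
    "unbiased review",
    "an ARC",
    "in exchange for",       # hypothetical
    "received a free copy",  # hypothetical
]

# Case-insensitive whole-phrase patterns, compiled once. Note that
# ignoring case lets "an ARC" also match "an arc" (e.g. a story arc),
# which is one source of false positives at a threshold of one.
_PATTERNS = [
    re.compile(r"\b" + re.escape(term) + r"\b", re.IGNORECASE)
    for term in SPONSORSHIP_TERMS
]

def count_sponsorship_terms(text: str) -> int:
    """Number of distinct dictionary entries that appear in the review."""
    return sum(1 for pattern in _PATTERNS if pattern.search(text))

def is_selected(text: str) -> bool:
    """A review is 'Selected' if it contains at least one entry."""
    return count_sponsorship_terms(text) >= 1

disclaimer = ("I received an ARC from the publisher in exchange for "
              "an unbiased review.")
print(count_sponsorship_terms(disclaimer))
print(is_selected("A lovely story about friendship."))
```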

Figure 1. A sample incentivized review.

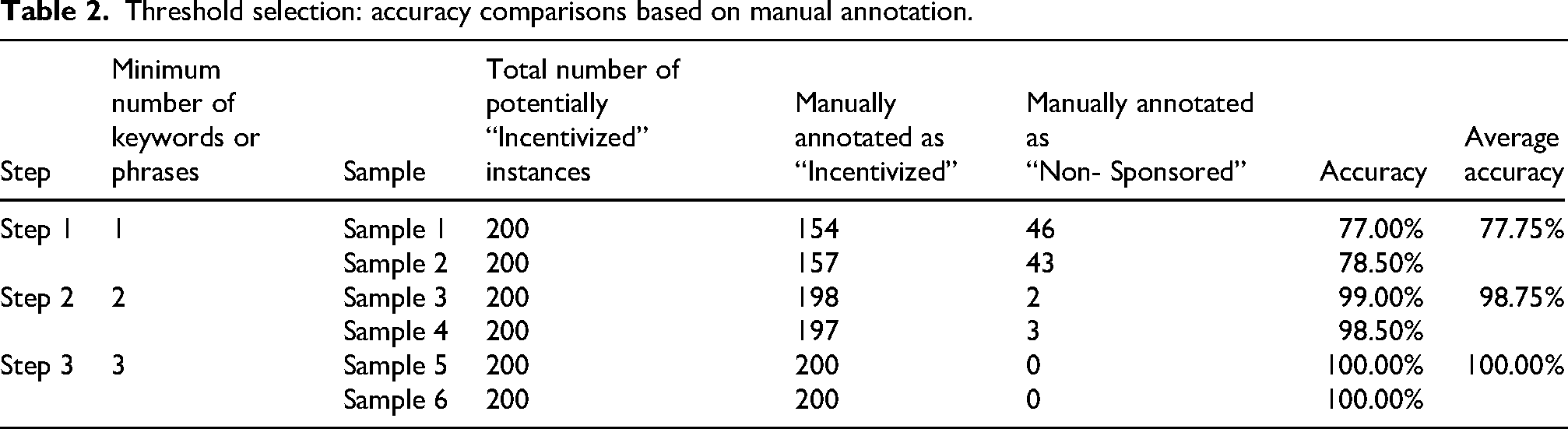

We tagged the “Selected” reviews that contained at least two sponsorship keywords/phrases in the dictionary as “Incentivized” instances. The minimum threshold of two was identified through manual annotation of six samples of “Selected” reviews (sampled randomly and independently without replacement). Table 2 provides details about the manual annotation results. Each sample includes 200 “Selected” reviews: Samples 1 and 2 are reviews with at least one sponsorship keyword/phrase from the dictionary; Samples 3 and 4 are reviews with at least two; Samples 5 and 6 are reviews with at least three.

Table 2. Threshold selection: accuracy comparisons based on manual annotation.

With these identified candidates from our computational pipeline, we manually assessed and annotated these samples to find out how many reviews in each sample were in fact self-identified incentivized reviews. We define the percentage of verified incentivized reviews in each sample as “accuracy” throughout this article. As shown in Table 2, 77% of the reviews in Sample 1 and 78.5% of the reviews in Sample 2 were manually verified as “Incentivized,” with an average accuracy of 77.75%. In comparison, when the minimum number of keywords/phrases was set to 2 and 3, the average accuracy was 98.75% and 100.00%, respectively. This indicates that setting the threshold for the number of sponsorship keywords/phrases to two or above substantially improved the identification of incentivized reviews.
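
As a worked check of the accuracy figures for the threshold of one, the sketch below recomputes them from counts. The counts 154 and 157 are not stated in the text; they are back-calculated here from the reported 77% and 78.5% of 200 reviews per sample.

```python
def accuracy(verified: int, sample_size: int) -> float:
    """Percentage of sampled reviews manually verified as incentivized."""
    return 100.0 * verified / sample_size

SAMPLE_SIZE = 200  # from the text: each sample includes 200 reviews

# Verified counts back-calculated from the reported percentages.
sample_1 = accuracy(154, SAMPLE_SIZE)  # reported as 77%
sample_2 = accuracy(157, SAMPLE_SIZE)  # reported as 78.5%
average = (sample_1 + sample_2) / 2    # reported as 77.75%

print(sample_1, sample_2, average)
```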

To settle on the threshold, we looked into the distribution of “Selected” reviews by their number of sponsorship keywords/phrases, as presented in Table 3. Given the large percentage of “Selected” reviews with exactly two sponsorship keywords/phrases (24.60%), we sampled two sets of them and manually verified that on average 98% of them self-identified as incentivized. Setting the threshold at three or more keywords would likely remove a large number of self-identified incentivized reviews that include two sponsorship keywords/phrases. Therefore, we set the minimum number of keywords/phrases to two to include these instances. Table 1 Column “Incentivized” shows the genre-wise distributions of these incentivized reviews.

Table 3. Distribution of “selected” reviews by the number of sponsorship keywords/phrases they contain.

To evaluate the accuracy of using this threshold, we annotated another two sets of randomly and independently sampled reviews with at least two sponsorship keywords/phrases. The first sample contains 500 reviews that were randomly selected and therefore also unbalanced genre-wise; and the second sample contains 504 reviews that were evenly distributed across the eight genres (63 per genre). The percentages of self-identified incentivized reviews in both samples are above 99%.
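
The genre-balanced sample (63 reviews from each of eight genres, 504 in total) could be drawn along the following lines. This is an illustrative sketch: the `genre` field, the placeholder genre names, and the fixed seed are assumptions, not details from the study.

```python
import random
from collections import defaultdict

def balanced_sample(reviews, per_genre=63, seed=0):
    """Draw the same number of reviews from every genre, without replacement."""
    rng = random.Random(seed)  # fixed seed so the draw is repeatable
    by_genre = defaultdict(list)
    for review in reviews:
        by_genre[review["genre"]].append(review)  # field name is assumed

    sample = []
    for genre in sorted(by_genre):
        pool = by_genre[genre]
        if len(pool) < per_genre:
            raise ValueError(f"genre {genre!r} has only {len(pool)} reviews")
        sample.extend(rng.sample(pool, per_genre))
    return sample

# Hypothetical corpus: eight placeholder genres with 100 reviews each.
corpus = [
    {"genre": f"genre_{g}", "review_id": f"{g}-{i}"}
    for g in range(8)
    for i in range(100)
]
drawn = balanced_sample(corpus)
print(len(drawn))
```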

Limitations of datasets and notes on the “non-sponsored” reviews

While we believe our method is robust, there remain inherent limitations in detecting incentivized reviews that are not self-identified, or that are self-identified with a lexicon outside our dictionary. The former problem is intrinsic due to the lack of ground truth in the original Goodreads datasets, while the latter is unavoidable for dictionary-based approaches, as keyword lists are not exhaustive, suggesting that false negatives can occur. However, manual verification confirmed that selecting reviews with two or more sponsorship keywords gives us a set of incentivized reviews with 98% accuracy.

Selecting reviews with no sponsorship keywords provides a similarly reliable set. We confirmed this, as before, by testing our strategy on two samples: one with 500 genre-wise imbalanced reviews, and the other with 504 genre-wise balanced reviews. Our manual annotation resulted in 14 self-identified incentivized reviews in the first sample and 9 in the second. Instead of labeling this set as “non-incentivized,” we refer to this group of reviews as “non-sponsored” to emphasize that it is not conceptually opposed to the “incentivized” set. For example, reviews containing exactly one sponsorship keyword/phrase are excluded from both groups, as they fall between the two categories and do not meet our predefined selection criteria.
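
The three-way split implied by these definitions can be written compactly, as in the sketch below. The label strings and the `label_review` helper are illustrative names, and the residual rates are simply the counts reported above divided by their sample sizes.

```python
def label_review(n_terms: int) -> str:
    """Map a review's sponsorship-keyword count to an analysis group."""
    if n_terms < 0:
        raise ValueError("keyword count cannot be negative")
    if n_terms >= 2:
        return "incentivized"
    if n_terms == 0:
        return "non-sponsored"
    return "excluded"  # exactly one keyword: set aside from both groups

def residual_rate(found: int, sample_size: int) -> float:
    """Percentage of a 'non-sponsored' sample that still self-identifies."""
    return 100.0 * found / sample_size

imbalanced = residual_rate(14, 500)  # first sample reported in the text
balanced = residual_rate(9, 504)     # second sample reported in the text

print(label_review(0), label_review(1), label_review(2))
print(round(imbalanced, 2), round(balanced, 2))
```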

It is important to note that our study does not attempt to exhaustively identify all types of incentivized reviews. Rather, our research goal is to explore the nature of the difference sponsorship makes on reviews. For this purpose, having well-defined subsets that we can confidently characterize as incentivized vs non-sponsored is more valuable than comprehensively labeling all reviews with potentially more errors. While we might have used other methods such as building classifiers to make probabilistic judgments about boundary cases (i.e., potentially incentivized reviews without disclaimers), our research goal is better served by setting those ambiguous cases aside and focusing on subsets where we have high confidence.

Identification of potential sponsors

Relying on such high accuracy in our samples, we can also start to identify the sponsors of incentivized reviews, using a combination of computational methods, specifically named entity recognition (NER) and fuzzy matching in Python (SeatGeek, 2025; spaCy, 2025), along with more qualitative close reading. First, we applied our NER pipeline to the sponsorship disclaimer sentences extracted from each incentivized review to collect an initial set of relevant named entities. This procedure identified 497,365 named entities from 261,367 of the 331,211 incentivized reviews. We narrowed our analysis down to nine of spaCy's built-in entity types, dropping those that are unlikely to be review sponsors (e.g., date and time). A total of 321,514 named entity instances matched one of these nine types. After deduplication, 57,910 unique named entities remained, including (a) name variations of the same entities, among them misspellings and erroneous references (e.g., “NetGalley,” “netgally,” and “Net-galley”); (b) false positives (e.g., “Ohhhh” and “WOOHOO”); and (c) combinations of numbers, punctuation marks, and other characters (e.g., “∼ Re-read∼” and “future!!I”).

Given the above-mentioned size, heterogeneity, and noise in entity mentions (e.g., misspellings, incorrect references, non-entity phrases), our analysis focused on the 100 most frequently identified entities. We manually aggregated them into 39 unique curated entities. We applied fuzzy matching to the 57,910 unique entities to retrieve potential matches for these 39 most frequent entities, with 80% set as the threshold for positive matches (based on preliminary tests). Then, we updated the total occurrences of each of the 39 entities based on the fuzzy matching outcomes. After this round of manual data aggregation, we checked the remaining entities (excluding the 39 most frequent ones) that appeared no less than 99 times in the incentivized reviews and conducted a second round of data cleaning with fuzzy matching. Another 35 common entities were thus identified and aggregated. In the end, we collected data about 74 named entities that are among the most frequently identified sponsors.
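
The aggregation step can be illustrated with a small sketch. It uses `difflib.SequenceMatcher` from the Python standard library as a stand-in for the fuzzy-matching library cited in the text, so similarity scores will not be identical to those in the study; the mention counts and the choice of “Goodreads” as a second curated entity are hypothetical.

```python
from difflib import SequenceMatcher

THRESHOLD = 80.0  # percent; the text reports an 80% cut-off

def similarity(a: str, b: str) -> float:
    """Case-insensitive similarity score between 0 and 100."""
    return 100.0 * SequenceMatcher(None, a.lower(), b.lower()).ratio()

def aggregate(mention_counts: dict, curated: list) -> dict:
    """Add each raw mention's count to its closest curated entity,
    provided the best similarity reaches the threshold."""
    totals = {name: 0 for name in curated}
    for mention, count in mention_counts.items():
        best = max(curated, key=lambda name: similarity(mention, name))
        if similarity(mention, best) >= THRESHOLD:
            totals[best] += count
    return totals

# Spellings are taken from the text; the counts are hypothetical.
mentions = {
    "NetGalley": 10,
    "netgally": 2,
    "Net-galley": 3,
    "goodreads": 4,
    "Ohhhh": 1,
    "WOOHOO": 1,
}
totals = aggregate(mentions, ["NetGalley", "Goodreads"])
print(totals)
```

Mentions that fall below the threshold for every curated entity, such as the false positives above, are simply left out of the totals, which mirrors the decision to focus on the most frequent sponsors.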

Results

Prevalence and distribution of incentivized reviews

Among all the 7,833,389 reviews after filtering, we identified 818,872 reviews that contained at least one keyword/phrase in the sponsorship dictionary. This indicates that self-identified incentivized reviews might make up as much as 10.45% of the selected Goodreads dataset. At the lower end, the 331,211 reviews with at least two keywords/phrases from the sponsorship dictionary amount to 4.23%. While the broader figure of roughly 10% is relevant, the following analysis focuses on reviews with at least two sponsorship keywords/phrases to ensure the utmost accuracy in discussing incentivization.
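The two thresholds and the resulting percentages can be reproduced with a short sketch. The phrase list below is hypothetical and much smaller than the study's sponsorship dictionary, and simple lowercase substring matching is an assumption about how hits are counted.

```python
# Hypothetical sponsorship phrases; the study's actual dictionary is larger.
SPONSORSHIP_PHRASES = [
    "in exchange for",
    "honest review",
    "free copy",
    "advance copy",
]


def count_hits(review, phrases=SPONSORSHIP_PHRASES):
    """Number of distinct dictionary phrases found in a review."""
    text = review.lower()
    return sum(1 for phrase in phrases if phrase in text)


def share(part, whole):
    """Percentage of `whole` represented by `part`, rounded to 2 decimals."""
    return round(100 * part / whole, 2)


reviews = [
    "I received a free copy in exchange for an honest review.",
    "Got an advance copy from the publisher. Loved it!",
    "Could not put this book down.",
]
hits = [count_hits(r) for r in reviews]
broad = sum(h >= 1 for h in hits)   # at least one phrase
strict = sum(h >= 2 for h in hits)  # at least two phrases

# Shares computed from the counts reported in the text.
upper_share = share(818_872, 7_833_389)
lower_share = share(331_211, 7_833_389)
```

The stricter threshold trades recall for precision: the second toy review is plausibly incentivized but is excluded because it contains only one phrase.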

Figure 2 visualizes the distribution of the incentivized reviews, with blue bars representing the total number of filtered reviews and orange bars indicating the portion of incentivized reviews. From 2007 to 2016, the total number of incentivized reviews increased alongside the total number of filtered reviews, suggesting a positive correlation between the two trends. This correlation was confirmed by a linear regression model fitted with scikit-learn in Python (Pedregosa et al., 2011), which produced an R² of 0.9135, reflecting a reasonably strong relationship between the two trends. These findings suggest that while incentivized reviews are not overtaking the platform, they have become well established. In addition to Figure 2, Table 4 provides (a) detailed numbers of filtered and incentivized reviews by year; and (b) annual percentages of incentivized reviews. Notably, two observations stand out. First, the total numbers of both filtered and incentivized reviews in 2017 were lower than those in 2016. Second, the increasing trend of incentivized reviews from 2007 to 2016 appears to have been reversed in 2017. This downturn is likely explained by the fact that the data collected for 2017 was incomplete, as the original datasets were scraped in late 2017 and the latest book review was added on September 30th, 2017.
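For readers unfamiliar with R², the following sketch computes the coefficient of determination for a simple least-squares line by hand. It assumes the regression relates yearly totals of filtered reviews to yearly counts of incentivized reviews; the yearly figures below are invented for illustration and are not the values in Table 4.

```python
def r_squared(x, y):
    """Coefficient of determination for a simple least-squares line."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    # Residual and total sums of squares.
    ss_res = sum((yi - (intercept + slope * xi)) ** 2 for xi, yi in zip(x, y))
    ss_tot = sum((yi - mean_y) ** 2 for yi in y)
    return 1 - ss_res / ss_tot


# Invented yearly counts (not the study's data).
total_reviews = [100, 400, 900, 1600]
incentivized_reviews = [1, 10, 40, 95]
r2 = r_squared(total_reviews, incentivized_reviews)
```

An R² close to 1 means a straight line explains most of the year-to-year variation in one series from the other; it does not by itself establish why the two series move together.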

Figure 2. Number of total and incentivized reviews from 2007 to 2017 (data for 2017 is incomplete).

Table 4. Number and percentage of total and incentivized reviews by year.

Figure 3 visualizes the distribution of these 331,211 self-identified incentivized reviews by genre (the y-axis) over time (the x-axis). Shades of blue indicate relatively low percentages of incentivized reviews (0–4%); the deeper the blue, the lower the percentage. Shades of red indicate higher percentages (4% and above); the deeper the red, the higher the percentage. Longitudinally, the percentage of incentivized reviews across all genres increased essentially monotonically, from 0.061% in 2007 to 5.903% in 2016. There was a marked increase around 2013: the percentages of incentivized reviews of romance and fantasy_paranormal books doubled from 2012 to 2013. One likely explanation for these increases since 2013 is Amazon's acquisition of Goodreads that year (O’Donovan, 2023).

Figure 3. Percentage of incentivized reviews across eight genres by year.

In addition, the percentages of incentivized reviews have remained relatively high after 2013, particularly among reviews on genre fiction, such as mystery_thriller_crime books (the highest percentage: 7.73% in 2016) and romance (the highest percentage: 7.74% in 2015)—two of the most lucrative book genres. In contrast, incentivized reviews remained sparse in poetry reviews (the highest percentage: 2.8% in 2017). These findings suggest that incentivized reviews were increasingly prevalent on Goodreads, and were heavily focused on the most lucrative genres which have both large market shares and extensive promotional campaigns (Circana, 2024; Grand View Research, 2024).

Production of incentivized reviews by users

The second analysis inspected user review patterns to answer two sets of questions: (a) Which users produced the most incentivized reviews, and what patterns, if any, emerged from their posting?; and (b) Did these users post non-sponsored reviews as well, and if so, what were the ratios of their non-sponsored to incentivized reviews? We used numerical anonymized user IDs throughout our analysis.

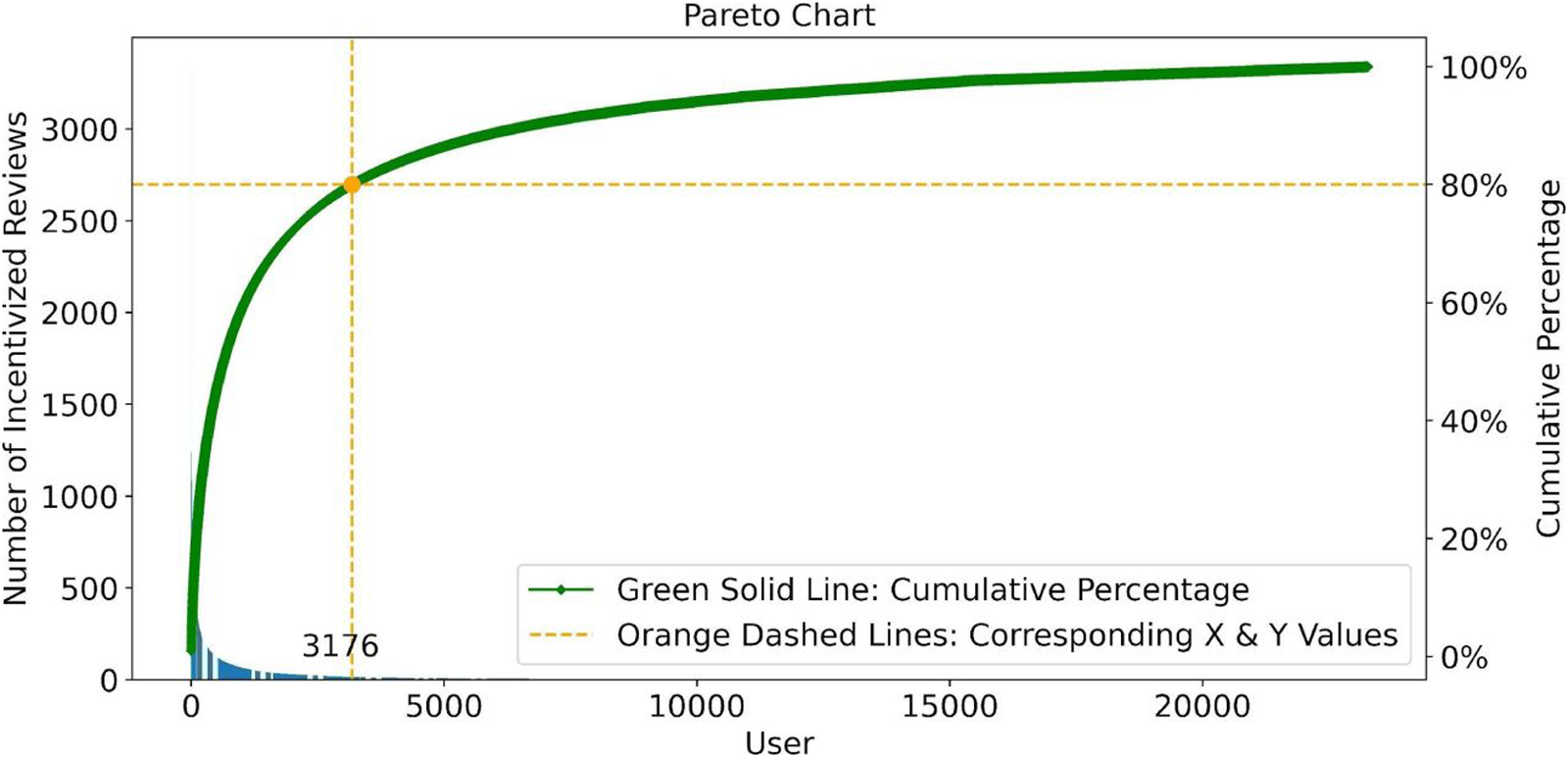

Figure 4 addresses the first set of questions. The horizontal axis represents the 23,261 users who posted incentivized reviews, ranked by the total number of incentivized reviews posted, in descending order from left to right. The vertical axis indicates the number of incentivized reviews posted, ranging from 0 to over 3000. The blue bar chart in the lower left area visualizes the binned number of reviews posted by users. The green curve denotes the corresponding cumulative percentage of incentivized reviews (the running total of the percentage values, from 0 to 100%). For instance, the orange point marked on the green curve at the coordinate of (3176, 80%) indicates that the most productive 3177 users (User No. 0 to User No. 3176, top 13.66%, n = 23,261) produced 80% of all the incentivized reviews in total. This curve indicates that the production of incentivized reviews was not evenly distributed among users; instead, the most productive 13.66% of users produced the majority of incentivized reviews. This signals the Pareto Principle (also known as “the 80/20 rule”) in incentivized review production: less than 20% of users who posted incentivized reviews produced 80% of incentivized reviews.
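The cumulative curve in Figure 4 rests on a simple computation, sketched below: sort users by output, accumulate their reviews, and report the rank at which the running total first reaches the target share. The function name and the toy counts are illustrative assumptions; the last line only re-derives the 13.66% figure from the counts reported above.

```python
def users_needed_for_share(counts, target=0.80):
    """Smallest number of top users whose reviews reach `target` of the total.

    Returns (number_of_users, fraction_of_all_users).
    """
    ordered = sorted(counts, reverse=True)
    total = sum(ordered)
    running = 0
    for rank, count in enumerate(ordered, start=1):
        running += count
        if running >= target * total:
            return rank, rank / len(ordered)
    return len(ordered), 1.0


# Toy per-user counts of incentivized reviews (not the study's data).
toy_counts = [50, 35, 5, 4, 3, 2, 1]
n_users, fraction = users_needed_for_share(toy_counts)

# Share of users implied by the figures reported in the text.
reported_share = round(100 * 3177 / 23_261, 2)
```

In the toy data two of seven users account for 85 of 100 reviews, so the 80% mark is reached at rank 2.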

Figure 4. Number of incentivized reviews posted by individual users.

To address the second set of questions, we compared the number of non-sponsored and incentivized reviews associated with each user, as visualized in Figure 5. Each dot represents two numeric variables associated with the same user: the value on the horizontal axis is the number of non-sponsored reviews posted and the value on the vertical axis shows the number of incentivized reviews. Figure 5 does not suggest any strong or consistent correlation between the number of incentivized and non-sponsored reviews produced per user, and a statistical test points the same way: Spearman's correlation coefficient of the paired values on the horizontal and vertical axes is 0.319, with a reported P-value of 0.0 (i.e., too small to be distinguished from zero at the reported precision). This indicates a statistically detectable but weak monotonic relation between the numbers of incentivized and non-sponsored reviews posted per user. In addition, our data analysis revealed that among the 23,261 users who posted incentivized reviews, 866 (around 3.7%, n = 23,261) solely posted incentivized reviews, and they produced 2505 reviews in total (mean = 2.89, standard deviation = 11.98, minimum = 1, and maximum = 321). The user who posted the largest number of incentivized reviews (3331) only posted 46 non-sponsored reviews; in contrast, the user who posted the largest number of non-sponsored reviews (8443) only posted four incentivized reviews.
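Spearman's coefficient is the Pearson correlation of the ranks of the two variables, which the sketch below computes in plain Python (ties receive average ranks). It is a hand-rolled illustration rather than the routine used for the reported value, and it omits the P-value. The first two toy users reuse the extreme cases mentioned above; the remaining three are invented.

```python
from math import sqrt


def average_ranks(values):
    """1-based ranks, with tied values sharing the mean of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1  # mean of positions i+1 .. j+1
        for k in range(i, j + 1):
            ranks[order[k]] = avg
        i = j + 1
    return ranks


def pearson(x, y):
    """Pearson correlation; assumes neither input is constant."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    return cov / sqrt(var_x * var_y)


def spearman(x, y):
    """Spearman's rho: Pearson correlation of the rank-transformed data."""
    return pearson(average_ranks(x), average_ranks(y))


# Per-user counts: two extreme users from the text plus three invented ones.
non_sponsored = [46, 8443, 120, 15, 300]
incentivized = [3331, 4, 60, 9, 2]
rho = spearman(non_sponsored, incentivized)
```

Because the coefficient depends only on ranks, a handful of extremely prolific accounts cannot dominate it the way they would dominate a Pearson correlation on raw counts.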

Figure 5. Numbers of incentivized and non-sponsored reviews posted by individual users who produced both types of reviews.

Due to the anonymity of users, we could not verify whether the most prolific user accounts were actually group accounts shared among multiple individuals. Neither could we conclude whether producing thousands of quality book reviews over 11 years was realistic for one reviewer. Nevertheless, our analysis reveals that the productivity of incentivized reviews varied significantly by user. Some users were overwhelmingly and even exclusively prolific in posting incentivized reviews, which raises questions regarding the independence and spontaneity of these reviews and suggests users’ uneven access to incentives.

Dynamics among incentivized review stakeholders

The third analysis examined the incentivized review stakeholders from two perspectives: reviewers and sponsors. First, we investigated (a) which book authors and publishers received more incentivized reviews; and (b) how incentivized reviews were distributed author-wise and publisher-wise. Adopting the same graphic design as Figure 4, Figure 6 and Figure 7 visualize the numbers of incentivized reviews associated with book authors and book publishers respectively, with cumulative percentage curves.

Figure 6. Number of incentivized reviews associated with each book author.

Figure 7. Number of incentivized reviews associated with each publisher.

Overall, incentivized reviews addressed books written by 40,133 authors and produced by 15,535 publishers. Figure 6 indicates that of those approximately forty thousand authors, 27% of them received 80% of the identified incentivized reviews (Author No. 0 to Author No. 10753, n = 40,133). Figure 7 reveals that 80% of the incentivized reviews were written for books published by the top 10% of publishers whose books received incentivized reviews (Publisher No. 0 to Publisher No. 1518, n = 15,535). Similar to the case of Figure 4, the uneven distributions of incentivized reviews author-wise (Figure 6) and publisher-wise (Figure 7) suggest that the majority of incentivized reviews were concentrated on books from a relatively small group of authors and publishers, and were slightly more spread out than the non-sponsored reviews, where reviews clustered organically around the most popular books. Bootstrap resampling confirms (P << .0001) that sponsored and non-sponsored reviews cover quite different sets of books. The Jaccard similarities between these samples showed only a third as much overlap as we found between randomly selected groups of reviews.
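The overlap comparison can be illustrated with a minimal sketch. It computes the Jaccard similarity between the sets of books covered by two groups of reviews and compares it with the similarity obtained when the pooled reviews are split at random. The random-split baseline used here is a permutation-style stand-in and may differ from the bootstrap procedure behind the reported result; the book IDs are invented.

```python
import random


def jaccard(a, b):
    """Jaccard similarity of the unique items in two collections."""
    set_a, set_b = set(a), set(b)
    union = set_a | set_b
    return len(set_a & set_b) / len(union) if union else 0.0


def random_split_baseline(ids_a, ids_b, n_iter=1000, seed=0):
    """Jaccard scores for random splits of the pooled reviews.

    Each split keeps the original group sizes, so the scores show how much
    overlap to expect if group membership were unrelated to book choice.
    """
    rng = random.Random(seed)
    pooled = list(ids_a) + list(ids_b)
    size_a = len(ids_a)
    scores = []
    for _ in range(n_iter):
        rng.shuffle(pooled)
        scores.append(jaccard(pooled[:size_a], pooled[size_a:]))
    return scores


# One invented book ID per review (not the study's data).
sponsored = [1, 1, 2, 3, 3, 4]
non_sponsored = [4, 5, 5, 6, 7, 7, 8]

observed = jaccard(sponsored, non_sponsored)
baseline = random_split_baseline(sponsored, non_sponsored, n_iter=200)
# Fraction of random splits with overlap at least as low as the observed one.
fraction_as_low = sum(s <= observed for s in baseline) / len(baseline)
```

A small `fraction_as_low` indicates that the two groups share fewer books than chance alone would produce, which is the pattern described above.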

Second, we inspected review sponsors in terms of (a) which sponsors provided the incentives; and (b) whether and how sponsors were connected, through a case study of the 74 most prominent named sponsors. Figure 8 shows how many times the 74 sponsors were specified in incentivized reviews, ranked in descending order from top to bottom. Note that the horizontal axis is not evenly scaled; the numeric differences between bars on the right side of the graph are substantially larger than they visually appear to be. The 74 sponsors were acknowledged 138,075 times, which accounts for 42.95% of all the 321,514 named entities specified in the incentivized reviews. The number of mentions varied widely across sponsors: the most frequently mentioned sponsor, NetGalley, appeared 83,097 times, or in about 32% of the 261,367 incentivized reviews that specified sponsors; the least frequently mentioned sponsor among the 74, Abingdon Press, appeared only 99 times (which we had set as our lower threshold for inclusion in our analysis). These sponsors can be clustered into five groups: (a) content distributors and advertising/public relations companies like NetGalley; (b) booksellers and social reviewing platforms like Amazon; (c) social reading and reviewing platforms like Goodreads and LibraryThing; (d) publishers such as Random House and Simon & Schuster; and (e) online book clubs such as “Lovers of Paranormal.”

Figure 8. Number of sponsor mentions in incentivized reviews.

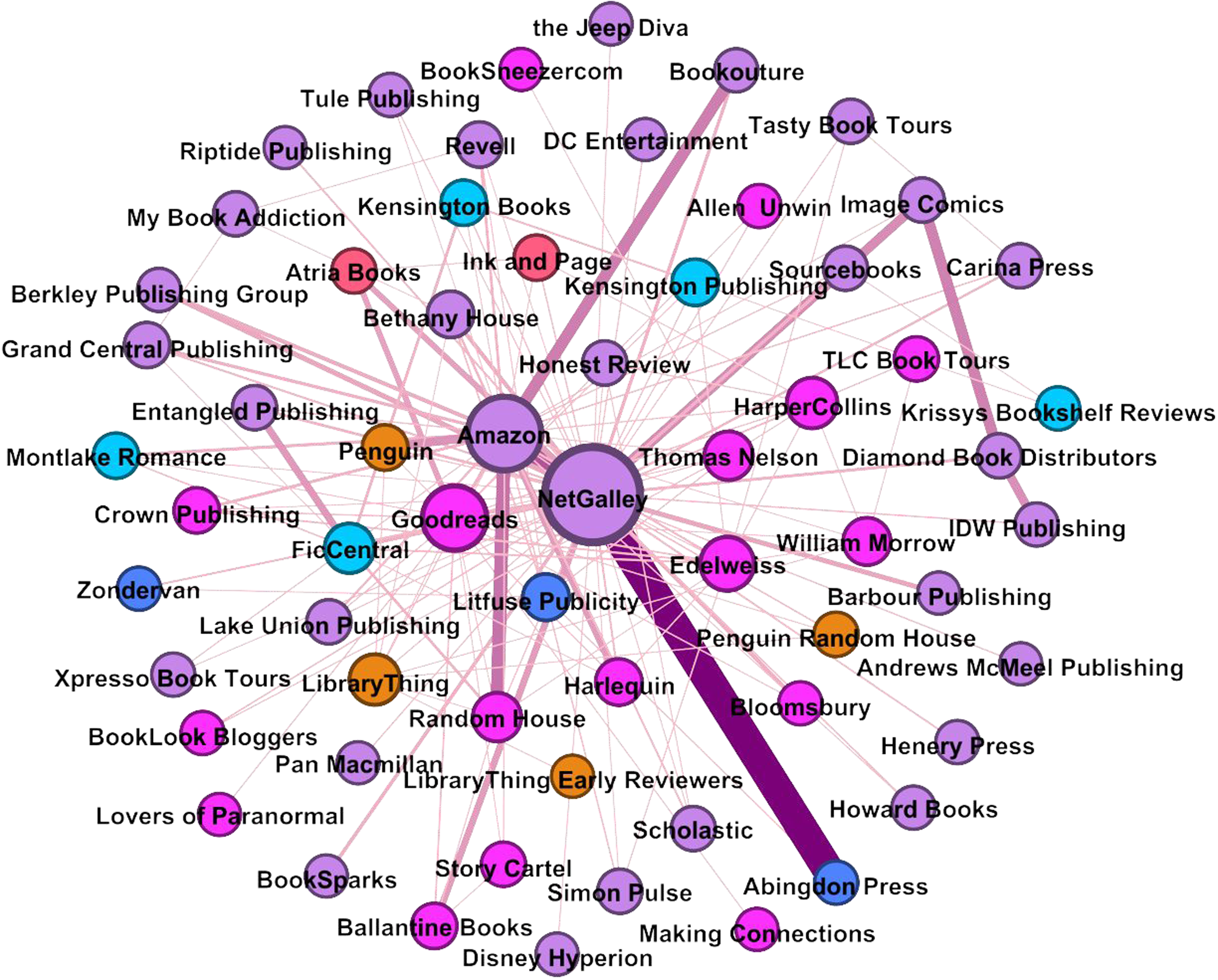

To explore the relationships among the 74 sponsors, we conducted a network analysis based on sponsor name co-occurrence in the same incentivized reviews. For example, if an incentivized review specifies, “Thanks for the advance copy provided by Publisher A and Book Seller B,” we consider this a co-sponsorship between A and B. Figure 9 visualizes the co-sponsorship network, which includes the 62 of the 74 sponsors that collaborated in providing incentives for book reviews, as produced by Gephi (Bastian et al., 2009) using a Fruchterman-Reingold layout. Each colored node in Figure 9 represents one of the 62 sponsors. The larger the node, the more connections it has. Nodes of the same color belong to the same one of the six modularity groups detected by Gephi. The 149 edges connecting the nodes indicate co-sponsorship. The thicker the edge, the more frequently the two sponsors co-sponsored reviews. The average degree of this network is 4.81, which means that each sponsor directly worked with 4.81 co-sponsors on average. The average path length is 2.11, which means that, on average, two sponsors are connected by one intermediary sponsor. Table 5 provides more indexes about the network. According to Table 5, NetGalley ranked the highest across four prominent node centrality indexes, followed by Amazon and Goodreads, which suggests NetGalley's highly central role in this network.
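The construction of the co-sponsorship network can be sketched in a few lines: every pair of sponsors named in the same review contributes one unit of weight to an undirected edge, and the average degree of an undirected network equals twice the number of edges divided by the number of nodes. The helper names and the toy reviews are assumptions; the final line only checks the reported average degree against the reported node and edge counts.

```python
from collections import Counter
from itertools import combinations


def co_sponsorship_edges(reviews_sponsors):
    """Weighted undirected edges: how often two sponsors share a review."""
    edges = Counter()
    for sponsors in reviews_sponsors:
        # Sort so that (A, B) and (B, A) map to the same edge key.
        for a, b in combinations(sorted(set(sponsors)), 2):
            edges[(a, b)] += 1
    return edges


def average_degree(edges):
    """Average number of distinct co-sponsors per connected sponsor."""
    nodes = {name for pair in edges for name in pair}
    return 2 * len(edges) / len(nodes) if nodes else 0.0


# Sponsors named in each toy review (placeholder names).
reviews_sponsors = [
    ["Publisher A", "Book Seller B"],
    ["Publisher A", "Book Seller B", "Platform C"],
    ["Platform C"],  # a single sponsor creates no edge
]
edges = co_sponsorship_edges(reviews_sponsors)
toy_average_degree = average_degree(edges)

# Average degree implied by the 149 edges and 62 nodes reported in the text.
reported_average_degree = round(2 * 149 / 62, 2)
```

Sponsors that never co-occur with another sponsor produce no edges, which is consistent with only 62 of the 74 sponsors appearing in the network.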

Figure 9. Co-sponsorship network of the 62 sponsors.

Table 5. Selected Gephi network indexes of the top 10 ranked co-sponsors.

Our investigation into the entities involved in incentivized reviews revealed their dynamics in two respects. First, incentivized reviews were sponsored by a range of stakeholders; however, these reviews were not evenly distributed among books: around 80% of incentivized reviews centered on books produced by small groups of both authors (top 27%) and publishers (top 10%). Second, 62 of the most prominent sponsors, which together accounted for a large percentage of incentivized reviews, collaborated on co-sponsoring incentivized reviews. These findings suggest that some stakeholders have not only individual but also collective impacts on incentivized reviews on Goodreads. It should be mentioned that many sponsors, such as Sourcebooks, Bethany House, and Berkley Publishing Group, run their own book influencer programs and are popular among book influencers. In addition, some sponsors were not, or are no longer, commercially independent from each other. For instance, Penguin and Random House merged in 2013 (Publishers Weekly, 2012), and Berkley is an imprint of Penguin Random House as of 2024. While this trend toward conglomeration in publishing is well known, our findings underscore that both these formal mergers and co-sponsorships might indicate that these networks and commercial interests are even more intertwined than previously known, although we initially treated these sponsors as distinct named stakeholders.

Discussion

While our research illuminates incentivized reviews on Goodreads, there remain limitations to address and questions to explore. First, as mentioned above, our dictionary-based approach, though very powerful, is inherently restricted to exact phrases or keywords, meaning that we might miss some more subtle wordings that reviewers use to indicate sponsorship. Similarly, our pre-trained NER pipeline, while expansive, cannot identify sponsors that are not explicitly specified in the review, and therefore, we cannot claim that our results represent a definitive list of all sponsors. While these missed incentivized reviews and sponsors should not invalidate our primary findings, continued future refinement of these methods should help elucidate more implicit sponsorships and provide a more comprehensive view of incentivized content.

Second, there are questions specific to Goodreads worthy of further investigation. For instance, the marked increase in the number of incentivized reviews in 2013 and Amazon's acquisition of Goodreads in the same year deserve further study to evaluate whether the two are merely correlated or causally linked, and how external acquisitions affect the ecology of social reviewing platforms. In addition, while our study has primarily utilized quantitative methods, we believe that more qualitative methods can help illuminate user motivations and behaviors. As a preliminary step, we sampled 200 Goodreads incentivized reviews that contain external URLs and manually inspected the URLs. Of these, 76.5% directed readers to reviewers’ personal book blogs, which suggests that reviewers also created incentivized reviews for self-promotion, in addition to fulfilling requests from sponsors. Some incentivized reviews were explicitly incomplete, inviting readers to read the complete review on external web pages. While such phenomena might be explained by social capital theory, surveys and interviews with book reviewers should give us first-hand knowledge of why and how reviewers engage with incentivized content, and deepen our understanding of incentives and their impacts.

Third, we see an important avenue for comparative analysis of data from other sources in order to cross-examine findings based on Goodreads data. For instance, longitudinal distributions of incentivized reviews might vary across platforms, depending on individual business models, policies, and user bases. Due to black-box algorithms, data heterogeneity, and platform dependency, it is challenging to align data across platforms for effective comparative analysis, particularly for comparisons at large scales (Hu et al., 2024). Nevertheless, as many sponsors identified on Goodreads are known to be active across platforms (e.g., YouTube, Instagram, and TikTok), illuminating their roles on Goodreads paves the way for comparative analysis of incentivized reviews across platforms. Additionally, we intend to collect more data from the book industry to further investigate the effects of incentives. For instance, while our research suggests that incentives can direct readers to different sets of books without necessarily changing the sentiment of the reviews, how incentives affect book sales requires further investigation.

Conclusions

Our research set out primarily to consider who gets to decide what is reviewed on Goodreads. This is a complex question, but we believe that examining incentivized book reviews is crucial to any assessment. We limited our analysis to the most clearly identifiable examples: reviews that explicitly self-disclose having received an incentive. While this likely underestimates the full extent of sponsored content, it provides a conservative and reliable basis for our study. From 2007 to 2016, percentages of self-identified incentivized reviews on Goodreads increased roughly 100-fold, from approximately 0.06% to 6%, with particularly high percentages among reviews of the most lucrative genre fiction. This suggests the professional (as opposed to “amateur”) production and profit-driven imperatives of incentivized book reviews. Despite this increase, 80% of the incentivized reviews were attributable to only 13.66% of users who posted incentivized reviews; around 3.7% of these users exclusively posted incentivized reviews. This distribution indicates that even among the subset of users posting sponsored reviews, there remain differences in who gets access to these incentives. When looking at what books get reviewed, the results are just as stark. A similar 80% of incentivized reviews (though, to be clear, not the same 80% of reviews produced by 13.66% of users) focus on books written by 27% of the authors whose books received incentivized reviews, and 80% focus on books from an even smaller 10% of the corresponding publishers. Our research has also revealed that the most impactful sponsors collaborated closely on providing incentives for reviews. NetGalley, a marketing services provider at the center of the network, was named in nearly a third (32%) of the incentivized reviews that specified sponsors. Based on these findings, incentivized book reviews are highly concentrated among both sponsors (stakeholders) and review recipients (books).

Despite platform-specific constraints, this research provides some of the first empirical evidence for the impact of sponsorship of online book reviews. We see our work as part of the growing research on digital platforms across disciplines, and believe that our findings further illuminate how monetizing book content on social reviewing platforms increases market concentration and reduces genre diversity (Zhu et al., 2025). Moreover, the highly uneven distribution of incentivized reviews, both in terms of reviewer contributions and sponsorship dynamics, indicates that contemporary social reviewing might be a far less egalitarian practice than platforms like Goodreads advertise. To be clear, our work has not explored what it is about certain users that makes them more likely to be sponsored beyond their review posting behavior. Nonetheless, we hope that future research will continue to explore whether the distribution of sponsorship is re-inscribing some of the structural problems inherent in traditional book review and publishing industries.

Our work has shown that these datasets are complex cultural objects. Therefore, when considering them to be “everyday” cultural records, or when repurposing such social media data for scholarly research, we must take their economic and sociocultural dynamics into consideration. Meanwhile, as social reviewing platforms increasingly shape culture, we need to understand and preserve the sociotechnical and cultural contexts in which their data is created and used. More broadly speaking, this research demonstrates how to conduct critical examination of cultural data produced on social media, given the platform providers’ restricted data access and intentionally opaque algorithmic moderation. This case study exemplifies how scholars can use limited historical datasets to reveal the underlying dynamics of online book reviewing, as well as elusive collaborations among various stakeholders beyond the platforms. Given the proprietary and exploitative usage of free user data for profit by both social media platforms and the culture industries, such critical examinations can help mitigate the big corporations’ unequal and restrictive control of cultural data (Walsh, 2022). Only insofar as we approach these datasets critically—whether for scholarly inquiry, AI model development, or everyday decisions—can we ensure that our models, our research, and society as a whole are able to account for the complex dynamics underpinning contemporary digital culture.

Supplemental Material

sj-docx-1-bds-10.1177_20539517251359229 - Supplemental material for Who decides what is read on Goodreads? Uncovering sponsorship and its implications for scholarly Research

Supplemental material, sj-docx-1-bds-10.1177_20539517251359229 for Who decides what is read on Goodreads? Uncovering sponsorship and its implications for scholarly Research by Yuerong Hu, Jana Diesner, Ted Underwood, Zoe LeBlanc, Glen Layne-Worthey and John Stephen Downie in Big Data & Society

Supplemental Material

sj-docx-2-bds-10.1177_20539517251359229 - Supplemental material for Who decides what is read on Goodreads? Uncovering sponsorship and its implications for scholarly Research

Supplemental material, sj-docx-2-bds-10.1177_20539517251359229 for Who decides what is read on Goodreads? Uncovering sponsorship and its implications for scholarly Research by Yuerong Hu, Jana Diesner, Ted Underwood, Zoe LeBlanc, Glen Layne-Worthey and John Stephen Downie in Big Data & Society

Footnotes

Acknowledgments

The authors would like to thank Goodreads users who created the review data that enabled this research. The authors are grateful for the support for open access publication charges provided in part by Indiana University Libraries.

Author contributions

Yuerong Hu: conceptualization, data curation, formal analysis, investigation, methodology, software, validation, visualization, writing—original draft, writing—review and editing

Jana Diesner, Ted Underwood, Zoe LeBlanc, Glen Layne-Worthey, J. Stephen Downie: conceptualization, supervision, writing—review and editing

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Supplemental material

Supplemental material for this article is available online.

Notes

References
