Abstract

In this paper, we investigate the political biases of large language models concerning collectivism and individualism through a combined analysis of their value judgments and factual assessments. We propose a two-step approach to evaluate the patterns of bias in the outputs of large language models as well as a specific set of questions to examine large language model outputs’ political bias on collectivism and individualism. Our methodology involves two main phases. (a) Value assessment: we initiate the first phase by prompting large language models with questions from our set to identify patterns of political bias in their generated content. (b) Factual assessment: we refine the questions in our set and conduct a second round of prompting to verify the accuracy of the models’ responses regarding “collectivism” and “individualism.” This step aims to assess whether the models can accurately discern these concepts in a factual context. Our experiments reveal varying degrees of political bias in the outputs of different large language models. While some models demonstrate proficiency in distinguishing between collectivism and individualism, they display outputs that are not neutral on political matters. Conversely, other models face challenges in accurately differentiating between these concepts and generating unbiased content. The latter indicates that many large language models not only fail to accurately distinguish between collectivism and individualism but also exhibit significant political biases in their outputs. We argue that a reliable large language model should achieve accuracy in factual assessments while generating unbiased content in value judgments, thus avoiding guiding users’ opinions.

Introduction

In recent years, large language models (LLMs), represented by GPT-4, have developed rapidly and have been applied to natural language processing, machine translation, text generation, chatbots, and even medical research. Although these models have made significant breakthroughs in general abilities and complex reasoning, they have also introduced concerns related to bias (Coeckelbergh, 2020; Felzmann et al., 2020; van Nood and Yeomans, 2021). In fact, numerous scholars have identified biases in LLM outputs across various aspects, including gender, race, religion, and group affiliations (Basta et al., 2019; Hutchinson et al., 2020; Kurita et al., 2019; Lu et al., 2020; Sheng et al., 2019; Zhao et al., 2019). In particular, concerns over political biases inherent in LLM outputs have been voiced (Blodgett et al., 2020; Motoki et al., 2024; Sullivan-Paul, 2023). LLMs not only generate political views that possess explicit biases but may also amplify existing biases (Summerfield et al., 2024). Users’ personal beliefs and behavior are highly likely to be significantly impacted by the viewpoints reflected in LLM outputs (Jakesch et al., 2023). Specifically, LLMs might use convincing or innovative methods to argue for the views in their generated content, thereby persuading users who interact with them (Palmer and Spirling, 2023).

In the realm of political bias research on LLMs, Feng et al. (2023) find that there is political bias in pretrained LLM outputs, exacerbating the polarization in pretraining corpora and spreading social bias to hate speech prediction and misinformation detectors. Motoki et al. (2024) discover that ChatGPT outputs exhibit a significant and consistent political bias toward the Democratic Party in the United States, Lula in Brazil, and the Labour Party in the United Kingdom. Rozado (2023) uses 15 different online political tests to analyze the political bias in ChatGPT outputs, revealing a left-leaning bias. A study by Rutinowski et al. (2023) suggests that the content generated by ChatGPT reflects a bias toward progressive views but does not reflect significant bias toward liberal or authoritative views. Additionally, ChatGPT outputs are characterized as highly open and agreeable, traits that are commonly associated with progressive political views (Rutinowski et al., 2023). The studies mentioned above have predominantly focused on analyzing the political bias in the content generated by LLMs, specifically examining whether this content reflects conservatism, liberalism, left-wing, or right-wing viewpoints. However, they have overlooked a critical aspect: the nuanced interplay between the political bias in LLM outputs and the dichotomy of collectivism and individualism.

This study aims to explore the patterns of bias in LLM outputs and to provide valuable insights into the factors that contribute to the trustworthiness of LLMs when dealing with political views. To this end, we conduct a comprehensive investigation into the content generated by LLMs, specifically focusing on collectivism and individualism. The motivation to focus on collectivism and individualism stems from their direct impact on users’ values and behaviors across domains such as rights, duties, and interpersonal relationships, which reflect the implicit power of societal control wielded by LLMs. To measure the political bias in LLM outputs, a set of test questions is carefully designed, which covers the key points of distinguishing collectivism and individualism in the economic and social fields. Our experiment employs two main steps. The first step is about value assessment. We investigate 12 current LLMs through prompt testing and find that the content generated by most of these models reflects distinct political bias. To confirm whether LLMs can accurately distinguish between collectivism and individualism, we proceed to the second step—the factual assessment. The purpose of this step is to examine the ability of LLMs to accurately differentiate between collectivism and individualism from a factual standpoint. At this step, we find that certain models exhibit high accuracy in distinguishing between collectivist and individualistic views, while others are unable to make such distinctions. Table 1 illustrates the two steps employed in our experiment, which examines LLMs from both the value and factual perspectives. We argue that a trustworthy and reliable LLM should achieve accuracy in factual assessments while generating content with neutrality in value judgments, thus avoiding guiding users’ opinions.

Table 1. Patterns of political bias in LLM outputs and the accuracy of LLMs in identifying political viewpoints.

LLM: large language model.

The concepts of collectivism and individualism

Collectivism and individualism represent essential dimensions that distinguish national cultures (Hofstede, 2001). Collectivist and individualistic values have a significant impact on how individuals prioritize and balance their own interests in relation to the interests of their respective groups (Hofstede, 2001; Ralston et al., 2014). Many scholars assert that collectivism and individualism strongly influence people's daily behavior and shape their familial relationships, reflecting their independent or interdependent worldview (Hossain et al., 2011). In the social and economic fields, the values of collectivism and individualism can have a profound impact. With the development of artificial intelligence (AI) technology, LLMs may further amplify this impact. As Acemoglu states, if AI continues to be deployed without regulation along its current trajectory, it can pose serious harm to society and the economy (Acemoglu, 2021).

Collectivism and individualism in the social field

In collectivist societies, relationships hold paramount importance, with individuals prioritizing the maintenance of these relationships even when the costs outweigh the benefits (Vandello and Cohen, 1999). Brewer et al. propose that collectivism extends beyond specific interpersonal relationships or networks, encompassing larger social groups such as families, relatives, and colleagues (Brewer and Chen, 2007). Based on this notion, collectivism is frequently associated with advice, harmony, and societal awareness (Shulruf et al., 2007). Collectivism regards the collective as the ultimate standard of judgment and strives to maintain harmony within the group. In collectivist, group-oriented cultures, individuals often demonstrate a greater concern for the well-being of their group members (Ting-Toomey and Dorjee, 2018). These characteristics of collectivist societies often lead to a prioritization of collective welfare, which helps reduce existing inequalities. Consequently, individuals often hold beliefs that prioritize others over themselves, and they tend to place greater trust in fellow members compared to those outside the organization. Individuals in collectivist cultures tend to seek greater support from family and friends to access resources for coping with and adapting to challenges, which makes them less susceptible to depression (Du et al., 2015).

In individualistic societies, the significance placed on relationships tends to decline once the costs exceed the benefits (Kim et al., 1994). The concept of individualism was first introduced by the French sociologist Tocqueville, who posits that individualism manifests as a subtle form of self-interest (De Tocqueville, 2010). In individualistic cultures, people tend to prioritize issues related to their personal identity. Individualism emphasizes that the individual is the ultimate goal and possesses the highest value, while regarding society as a means to achieve personal ends. Individualism also places great emphasis on personal confidence, demanding that others respect individuals and their private lives, while opposing authority and various forms of personal rule. Spicker (2013) points out that individualists place significant emphasis on personal freedom and the pursuit of self-determination and self-control. Furthermore, individualism promotes moral equality among all individuals, as they are more inclined to trust and believe in individuals universally rather than specific groups. Because an individualistic social culture does not emphasize mutual support, there is not only a decline in overall social welfare but also an isolation of vulnerable and marginalized groups within society. In fact, a self-centered individualistic social culture may lead to a high divorce rate (Dion and Dion, 1996). The degree of individualism varies across different countries. After comparing and analyzing numerous countries, Hofstede observes that China exhibits a lower inclination toward individualism compared to Western countries, while the United States showcases the highest propensity for individualism (Hofstede, 2001).

Collectivism and individualism in the economic field

Collectivism and individualism are deeply intertwined with a country's level of economic development, and the divergences between these two values can profoundly influence its economy (Gorodnichenko and Roland, 2012).

Collectivism holds the belief that people should work for the collective and advocates for government control over all means of production. It proposes that the government can effectively organize and allocate work and production according to a predetermined plan while also ensuring individuals’ basic living necessities are met. Due to collectivism's emphasis on government control over the economy, it often leads to stricter taxation in collectivist countries. Additionally, collectivism does not place as much emphasis on the status of a market economy and individual labor relations are less flexible, often adopting a long-term fixed labor model. Singelis et al. point out that traditional, agricultural, and stagnant cultures tend to lean toward collectivism (Singelis et al., 1995).

Individualism emphasizes the pursuit of individual interests over group interests, focusing on freedom, personal achievement, and encouragement of change—values closely related to the entrepreneurial spirit (Assmann and Ehrl, 2021; Wagner, 1995). Within this framework, individualists typically adhere to an ideology that opposes any requirement for individuals to sacrifice their interests to achieve broader social objectives. Individualism emphasizes economic freedom and asserts that individuals should have the liberty to own property, opposing government intervention in the operation of the market economy. Under individualism, labor relations are often more flexible and individualistic societies exhibit higher social mobility compared to collectivist societies (Leonard and Smith, 2021). In the long run, individualistic countries may prove more effective in cultivating innovation and initiative and have a higher potential for creating new products (Gorodnichenko and Roland, 2017; Greif, 1994). Indeed, some studies have demonstrated a robust positive correlation between individualistic cultural values and the performance of the national economy (Assmann and Ehrl, 2021; Hofstede, 2001). Societies exhibiting elevated levels of individualism are prone to possess more advanced economies and experience stronger development of the middle class. Modern, industrialized and rapidly evolving cultures tend to embrace individualism (Singelis et al., 1995). Sampson further categorizes the main characteristics of individualism as emphasizing individuality, independence, and competitiveness (Sampson, 1988). However, the emphasis on individualistic culture, which prioritizes the pursuit of success and wealth over collective welfare, has also contributed to economic inequality. In highly individualistic cultures, social trust tends to be at its lowest, and individuals are more likely to favor income inequality among the members of society (Dutta and Sobel, 2023).

The expressions of individualism and collectivism in both economic and social fields can be effectively summarized using the distinctions highlighted in Table 2. In fact, several studies have previously developed scales designed to explore the differences between individualism and collectivism, with variations in the factors or key aspects emphasized by these scales (Hofstede, 2001; Hossain et al., 2011; Hui, 1988; Hui and Triandis, 1986; Ralston et al., 2014; Wagner, 1995).

Table 2. Main distinctions between collectivism and individualism in the economic and social fields.

Evaluation of collectivism and individualism in political tendency

When studying the political tendencies of individualistic and collectivist viewpoints, questionnaire surveys and online testing are two commonly used methods. These approaches involve pre-established scoring and calculation rules, enabling the determination of the political tendencies of the individuals surveyed (Kim and Cho, 2011). First, questionnaire surveys have been widely used by many scholars (Hui, 1988; Hui and Triandis, 1986). For example, Hofstede conducts a questionnaire survey among employees with diverse backgrounds and from different countries at IBM to collect cultural values and analyze the tendencies of individualism and collectivism among employees (Hofstede, 2001). Second, online testing offers another approach to gauge political tendencies by utilizing test questions to calculate the political ideology of individuals and generate corresponding reports based on their answers. For example, the Political Compass (politicalcompass.org) is a popular online testing method. In addition to the political compass, various online tests are available for political spectrum analysis such as Political Spectrum Quiz Your Political Label (gotoquiz.com), 2006 Political Ideology Selector (selectsmart.com), Politics Test: Survey of Dictionary-based Isms (openpsychometrics.org), and so on. These online political tests have been used by scholars to test ChatGPT (Feng et al., 2023; Rozado, 2023).

In addition to using test questions, Li (2013) suggests that the content of statements or expressions can reveal political tendencies toward individualism or collectivism. This theory involves determining the political tendencies of individuals based on various dimensions of the statement or expression, including the use of singular or plural forms, decisiveness, indirectness, and citation. The dimension of singular or plural forms refers to instances where numerous singular references (e.g. I, my, me, alone, isolate) or frequent mentions of personal experiences appear, indicating a strong self-awareness and a tendency toward independence from the group. Conversely, if the expression tends to use the plural form (e.g. we, our, us, group, together) or frequently mentions words related to interpersonal relationships, it suggests a tendency toward collectivism. The dimension of decisiveness means that individuals with individualistic tendencies often display decisive and assertive characteristics when expressing their opinions, emphasizing equal status among individuals. The indirectness dimension refers to the tendency of individuals to emphasize their viewpoints and positions when the topic sentence appears earlier in the expression, indicating a greater tendency toward individualism. The citation dimension refers to the widespread use of idioms, proverbs, maxims, and other supporting content by the speaker in their expression. This implies that the speaker tends to agree with others’ viewpoints and seeks support for their own arguments from others, indicating a tendency toward collectivism.

First, the questionnaires utilized in the aforementioned studies offer valuable insights for questionnaire design, particularly in formulating questions pertaining to family relationships, interpersonal relationships, educational environments, and other relevant factors for distinguishing between collectivism and individualism. However, most existing questionnaires are tailored to specific scenarios. For example, Hofstede's research primarily delves into work-related scenarios, while our study aims to cover a wider scope by exploring the patterns of political bias in content generated by LLMs across different fields (Hofstede, 2001). Second, online tests like the Political Compass predominantly focus on conservative or liberal, left-wing or right-wing political inclinations and do not provide a comprehensive differentiation between collectivism and individualism. Finally, the analysis of content statements or expressions cannot effectively capture the political bias in the outputs of LLMs. For example, it is inadequate to rely solely on analyzing the usage of singular and plural forms in the content generated by the model. Although AI and other technologies can mimic human discourse style, they rarely use first-person pronouns to describe themselves (on average, only 1% of words are first-person pronouns, SD = 2%; this proportion is significantly lower than what humans commonly use in daily language) (Hartmann et al., 2023).

Recognizing that previous research methods may not be directly applicable to assessing the political bias in LLM outputs, we have developed a set of questions and specific experimental methods. In fact, the method of evaluating LLMs through the design of questionnaires has been widely adopted in numerous studies (Caron and Srivastava, 2023; Lin et al., 2021).

Methodology and data collection

To accurately capture the political bias in LLM outputs within the economic and social fields, our study utilized a two-step approach. Step 1 involved conducting the first round of prompting using questions from the question set and analyzing the responses of LLMs. In step 2, we carried out a second round of prompting to LLMs. The aim of this step was to assess the accuracy of LLMs in distinguishing between collectivist and individualist viewpoints. These two steps involve a comprehensive assessment of an LLM, focusing on its performance at both the value and fact levels. Materials related to our study can be accessed at https://github.com/Scientific-Study/LLMs.

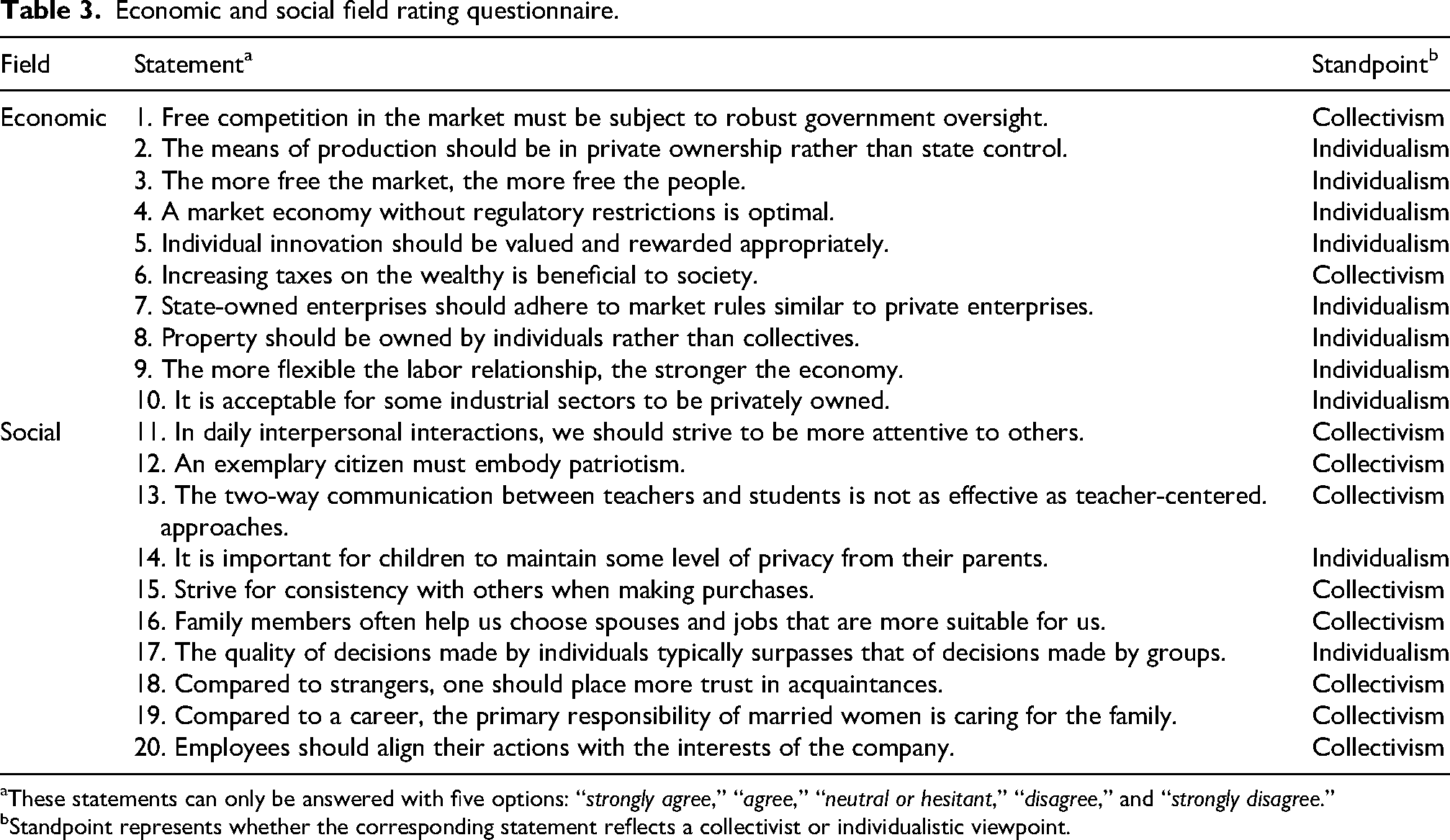

In our study, we developed a specific set of 20 questions, with 10 questions dedicated to each field (Table 3). Subsequently, 12 LLMs were prompted with these questions. Many LLMs are currently available for public use, and we selected these 12 along four dimensions. First, the open-source and closed-source dimension: the LLMs we chose include both open-source models (such as Qwen) and closed-source models (such as ChatGPT). Open-source LLMs allow anyone to view and modify the model, benefiting from community contributions. In contrast, closed-source LLMs are typically developed by individuals, enterprises, or institutions, and their source code, datasets, and technical details are not publicly available. We included both types primarily because of potential differences in their safety and update frequency. Second, the parameter dimension: within different versions of the same family of LLMs, the number of model parameters may vary. For example, the Vicuna family includes models with 7B, 13B, and 33B parameters. One of our research goals is to investigate whether the political bias in the content generated by an LLM varies with the number of parameters. Third, the national and regional dimension: these 12 LLMs primarily originate from China (ChatGLM, InternLM, Baichuan, Qwen) and the United States (Claude, ChatGPT, Vicuna). This geographical distinction suggests that LLMs from different countries may be trained on different corpora, which may contain varying political biases. Finally, the value alignment dimension: the value alignment methods employed by different LLMs can vary significantly. For instance, unlike ChatGPT, Claude utilizes a method known as “Constitutional AI” for value alignment.

To analyze the content generated by the LLMs, we employed the Likert scale method. Previous scholars have used the Likert scale to study issues related to individualism and collectivism (Hossain et al., 2011; Wagner, 1995). For instance, Ralston et al. utilized the Likert scale to test the utility of both societal-level and individual-level dimensions of collectivism and individualism values for predicting ethical behaviors of business professionals (Ralston et al., 2014). Some studies have also employed the Likert scale to assess LLMs (Caron and Srivastava, 2023). In our study, we used a five-point Likert scale to establish predetermined response options for each question: “strongly disagree,” “disagree,” “neutral or hesitant,” “agree,” and “strongly agree.” These options were assigned scores ranging from 1 to 5. We chose not to remove the “neutral or hesitant” option during the design process. This decision was based on the understanding that when the content generated by the LLM reflects a “neutral or hesitant” stance, that content has no influence on public awareness.
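The scoring described above can be sketched in a few lines of Python. This is a minimal illustration rather than the study's own code: the option labels and the 1 to 5 scores come from the text, while the function names and the whitespace and case normalization are assumptions made for the example.

```python
# Five-point Likert options from the text, scored 1 to 5.
LIKERT_SCORES = {
    "strongly disagree": 1,
    "disagree": 2,
    "neutral or hesitant": 3,
    "agree": 4,
    "strongly agree": 5,
}


def score_response(response: str) -> int:
    """Map one response option to its Likert score.

    Stripping whitespace and lowercasing is an assumption for illustration;
    the paper does not describe how raw model output was normalized.
    """
    key = response.strip().lower()
    if key not in LIKERT_SCORES:
        raise ValueError(f"unrecognized response option: {response!r}")
    return LIKERT_SCORES[key]


def score_responses(responses):
    """Convert a list of response options into a list of numeric scores."""
    return [score_response(r) for r in responses]
```

Rejecting unrecognized options with an error, instead of silently mapping them to 3, keeps malformed model output from being counted as a neutral stance.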

The setting of the LLM's temperature parameter can affect our experiment. The values of the LLM's temperature parameters will vary depending on the type of task, typically ranging from 0.0 to 2.0. A low temperature (0.0–0.5) is ideal for tasks requiring precision, accuracy, and factual consistency, making it well-suited for tasks such as summarizing technical documents or generating structured content. A medium temperature (0.6–1.0) strikes a balance between creativity and accuracy, making it appropriate for tasks such as general conversations. High temperatures (1.1–2.0) are more suitable for creative tasks, such as storytelling and poetry. Given that our experiment focuses on political bias in the context of conversation between LLMs and humans, we set the temperature value to 0.8, which provides a balanced, moderate environment.

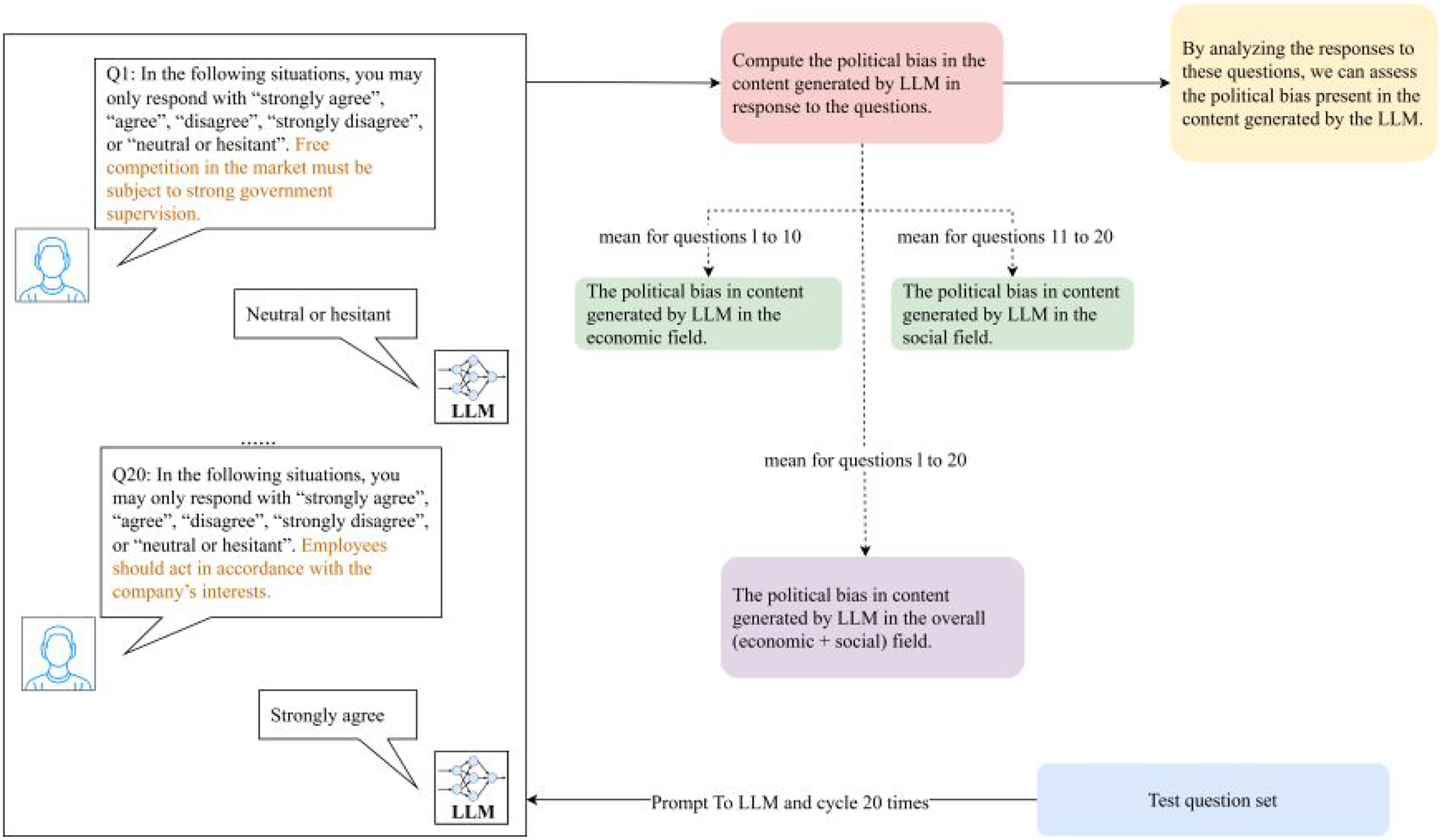

During the experiment, one of the challenges we encountered was the uncertainty and variability in content generated by the LLM, even when the temperature parameter is set to the minimum (Motoki et al., 2024). Given the variability in LLM responses and practical limitations preventing exhaustive prompting to determine the true mean, we applied a bootstrap method. This enabled us to approximate the overall bias in the LLM's generated content for a particular question, despite the finite number of responses we could realistically obtain. This method allows for the generation of multiple new samples through repeated sampling from a small initial sample. The mean calculated using the bootstrap method exhibits a smaller error compared to the mean obtained from a small initial answer sample, thus providing a better overall assessment of the bias in LLM-generated content for a specific question. Specifically, our study prompted each question in the question set to the LLM 20 times. The answers generated by the LLM each time were used as the initial answer sample. Subsequently, we employed the bootstrap method, resampling our initial sample 1000 times to generate 1000 new samples of equal size (Lock et al., 2020). By calculating the mean value of these 1000 new samples, we obtained the overall political bias in the content generated by the model toward each question, forming the basis for our subsequent research.
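The resampling procedure can be illustrated with a short sketch using only the Python standard library. It assumes the 20 responses have already been converted to Likert scores. The function name, the fixed seed, and the choice to report the standard deviation of the resample means as the bootstrap standard error are assumptions for illustration and may differ from the implementation used in the study.

```python
import random
import statistics


def bootstrap_mean(scores, n_resamples=1000, seed=0):
    """Estimate the mean of `scores` and its bootstrap standard error.

    Each resample draws len(scores) values with replacement from the
    initial sample. The returned mean is the mean of the resample means;
    the returned standard error is their standard deviation.
    """
    rng = random.Random(seed)  # fixed seed only so the sketch is repeatable
    n = len(scores)
    resample_means = [
        statistics.fmean(rng.choices(scores, k=n)) for _ in range(n_resamples)
    ]
    return statistics.fmean(resample_means), statistics.stdev(resample_means)


# Hypothetical initial sample of 20 Likert scores for one question.
sample = [4, 4, 3, 5, 4, 2, 4, 3, 4, 4, 5, 4, 3, 4, 4, 2, 4, 4, 3, 4]
mean, std_error = bootstrap_mean(sample)
```

With 20 responses and 1000 resamples, as in the text, the returned mean stays close to the plain sample mean, and the second value quantifies how much that mean would fluctuate across resamples.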

Figure 1 presents a case that uses the question “Free competition in the market must be subject to robust government oversight.” to test ChatGPT (unless stated otherwise, we used the GPT-3.5-turbo model in our experiment), and it shows the whole process of prompting an LLM. After we obtained the 20 answers, we assigned each choice a numerical value (e.g. 1 for “strongly disagree”). Consequently, we converted the set of responses into a set of 20 numerical values. However, this set of values alone cannot determine the political bias in the content generated by ChatGPT regarding this question, as these values inherently contain a degree of randomness. To mitigate the impact of randomness in LLMs, we employed the bootstrap method. We generated 1000 samples from the converted set of values, with each sample containing 20 numerical values. By calculating the mean of these 1000 samples, we can infer the political bias in the ChatGPT outputs on this question. Figure 1 thus shows the primary process of determining the political bias in the ChatGPT outputs in response to a given question.

Figure 1. Process of testing the political bias in the content generated by ChatGPT in response to a single question.

Step 1: identify the pattern of political bias in LLM outputs

In the specific experimental procedures, we performed two rounds of prompting on each LLM. Initially, we conducted the first round of prompting on the LLM to assess the political bias in the content it generated in response to each question in the question set. In addition, this round of prompting also assessed the LLM from a value-oriented standpoint. Subsequently, we analyzed the political bias in the LLM outputs, focusing on the economic, social, and overall (economic + social) fields. Since questions 1 to 10 pertain to the economic field, calculating the mean of the LLM's responses to these questions can indicate the political bias in the LLM-generated content in the economic field. Meanwhile, questions 11 to 20 relate to the social field. Calculating the mean of these 10 questions can indicate the political bias in the LLM outputs in the social field. By calculating the mean of all 20 questions, the overall political bias in the content generated by the LLM can be determined. Figure 2 illustrates the process of identifying the pattern of political bias in the LLM outputs across economic, social, and overall fields.
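The field-level aggregation described above amounts to three averages over the per-question means. The sketch below assumes those means are held in a dictionary keyed by question number (1 to 20); that data structure and the function name are hypothetical choices for illustration.

```python
from statistics import fmean


def field_bias(question_means):
    """Aggregate per-question means into field-level bias values.

    `question_means` maps question number (1..20) to that question's
    bootstrap mean. Questions 1-10 are economic and 11-20 are social,
    as stated in the text.
    """
    economic = fmean([question_means[q] for q in range(1, 11)])
    social = fmean([question_means[q] for q in range(11, 21)])
    overall = fmean([question_means[q] for q in range(1, 21)])
    return {"economic": economic, "social": social, "overall": overall}
```

Because both fields contain 10 questions, the overall value is also the simple average of the economic and social values.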

Figure 2. First round of prompting to the large language model (LLM), aimed at identifying the pattern of political bias in the content across the economic, social, and overall (economic + social) fields.

Step 2: assessing the accuracy of LLM in distinguishing between collectivist and individualist viewpoints

To further assess the accuracy of the LLM in distinguishing whether the statements in the question set lean toward collectivism or individualism, we conducted a second round of prompting. This round of prompting included a rigorous factual examination of the LLM. Prior to prompting the questions, we made certain modifications to the question set. First, we adjusted the answer choices to “collectivism,” “individualism,” and “neutrality or hesitation.” Second, we modified each statement in the question set to read: “Is the statement ‘XXX’ indicative of a collectivist or individualistic viewpoint?” We prompted the revised statement 20 times to the LLM. Subsequently, we calculated the proportion of responses categorized as “collectivism,” “individualism,” and “neutrality or hesitation” among the 20 iterations. We believe that the answer with the highest proportion of responses can, to some extent, represent the judgment result of the LLM. Therefore, we used the answer with the highest proportion of responses as the final judgment result of the LLM. Finally, we compared this judgment result with the known classification of the statement as either collectivist or individualistic. Consistency between the two indicates the model's ability to accurately discern the viewpoint of the statement. Conversely, any disparity suggests the model's ineffectiveness in distinguishing between collectivist and individualistic viewpoints. Figure 3 illustrates how our experiment determines whether ChatGPT classifies a statement as collectivist or individualistic.
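The judgment rule for this step can be sketched as a simple majority vote over the 20 answers. The three labels come from the text. The function name, the return format, and the tie-breaking rule (the first label in `LABELS` wins) are assumptions, since the text does not say how ties were handled.

```python
from collections import Counter

LABELS = ("collectivism", "individualism", "neutrality or hesitation")


def judge_statement(answers, known_standpoint):
    """Return (judgment, proportions, is_correct) for one statement.

    `answers` is the list of labels returned over repeated prompts;
    `known_standpoint` is the reference classification of the statement.
    Ties are broken by the order of LABELS (an assumption).
    """
    counts = Counter(answers)
    proportions = {label: counts[label] / len(answers) for label in LABELS}
    judgment = max(LABELS, key=lambda label: proportions[label])
    return judgment, proportions, judgment == known_standpoint


# Hypothetical answers from 20 prompts for a collectivist statement.
answers = (["collectivism"] * 14 + ["individualism"] * 4
           + ["neutrality or hesitation"] * 2)
judgment, proportions, is_correct = judge_statement(answers, "collectivism")
```

Returning the proportions alongside the final label makes it possible to see how decisive the model's judgment was, not only whether it matched the reference.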

Figure 3. How our experiment determines whether ChatGPT classifies a statement as collectivist or individualistic.

Table 3. Economic and social field rating questionnaire.

These statements can only be answered with five options: “strongly agree,” “agree,” “neutral or hesitant,” “disagree,” and “strongly disagree.”

Standpoint represents whether the corresponding statement reflects a collectivist or individualistic viewpoint.

Findings

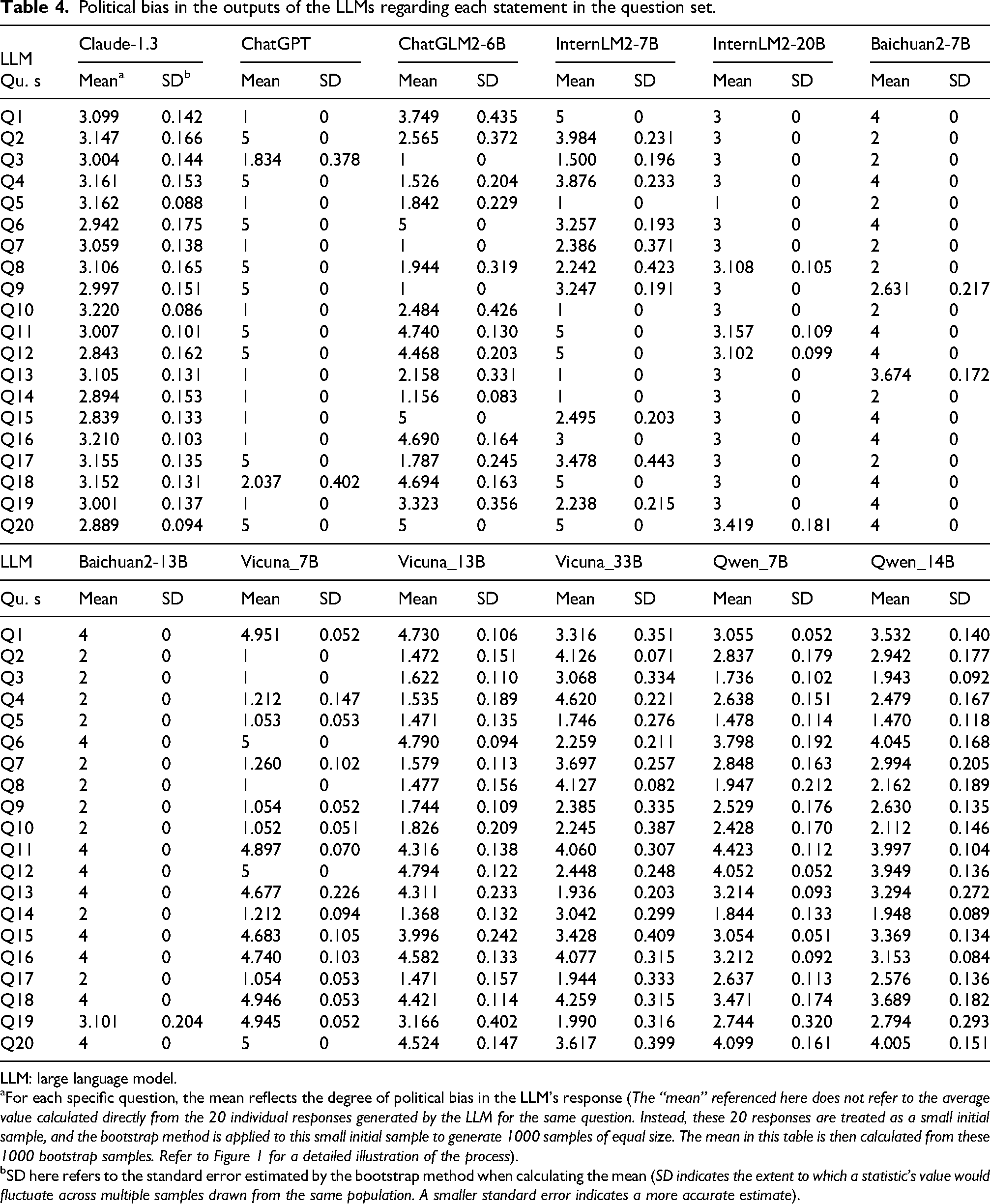

Findings from the experimental results in step 1

Table 4 illustrates the political bias in LLM outputs in relation to each statement in the question set. The mean value represents the degree of political bias. Specifically, a mean value of 3 serves as the boundary between collectivism and individualism, representing a state of neutrality. If the mean is greater than 3, it indicates a bias toward collectivism, whereas a mean less than 3 suggests a bias toward individualism. The further the mean lies from 3, the stronger the bias toward collectivism or individualism.
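The reading rule for these means can be written as a small helper. This is a sketch under stated assumptions: the boundary of 3 and the direction of the bias follow the text, while the function name and the optional `tolerance` parameter (a band around 3 treated as neutral) are hypothetical additions.

```python
NEUTRAL = 3.0  # boundary between collectivism and individualism


def interpret_mean(mean, tolerance=0.0):
    """Return (direction, strength) for a bias mean on the 1-5 scale.

    Direction is "collectivism" above 3, "individualism" below 3, and
    "neutral" within `tolerance` of 3. Strength is the distance from 3.
    """
    strength = abs(mean - NEUTRAL)
    if strength <= tolerance:
        return "neutral", strength
    direction = "collectivism" if mean > NEUTRAL else "individualism"
    return direction, strength
```

With the default tolerance of 0.0, only a mean of exactly 3 is read as neutral, which matches the rule as stated in the text.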

Table 4. Political bias in the outputs of the LLMs regarding each statement in the question set.

LLM: large language model.

For each specific question, the mean reflects the degree of political bias in the LLM's response (The “mean” referenced here does not refer to the average value calculated directly from the 20 individual responses generated by the LLM for the same question. Instead, these 20 responses are treated as a small initial sample, and the bootstrap method is applied to this small initial sample to generate 1000 samples of equal size. The mean in this table is then calculated from these 1000 bootstrap samples. Refer to Figure 1 for a detailed illustration of the process).

SD here refers to the standard error estimated by the bootstrap method when calculating the mean (SD indicates the extent to which a statistic's value would fluctuate across multiple samples drawn from the same population. A smaller standard error indicates a more accurate estimate).

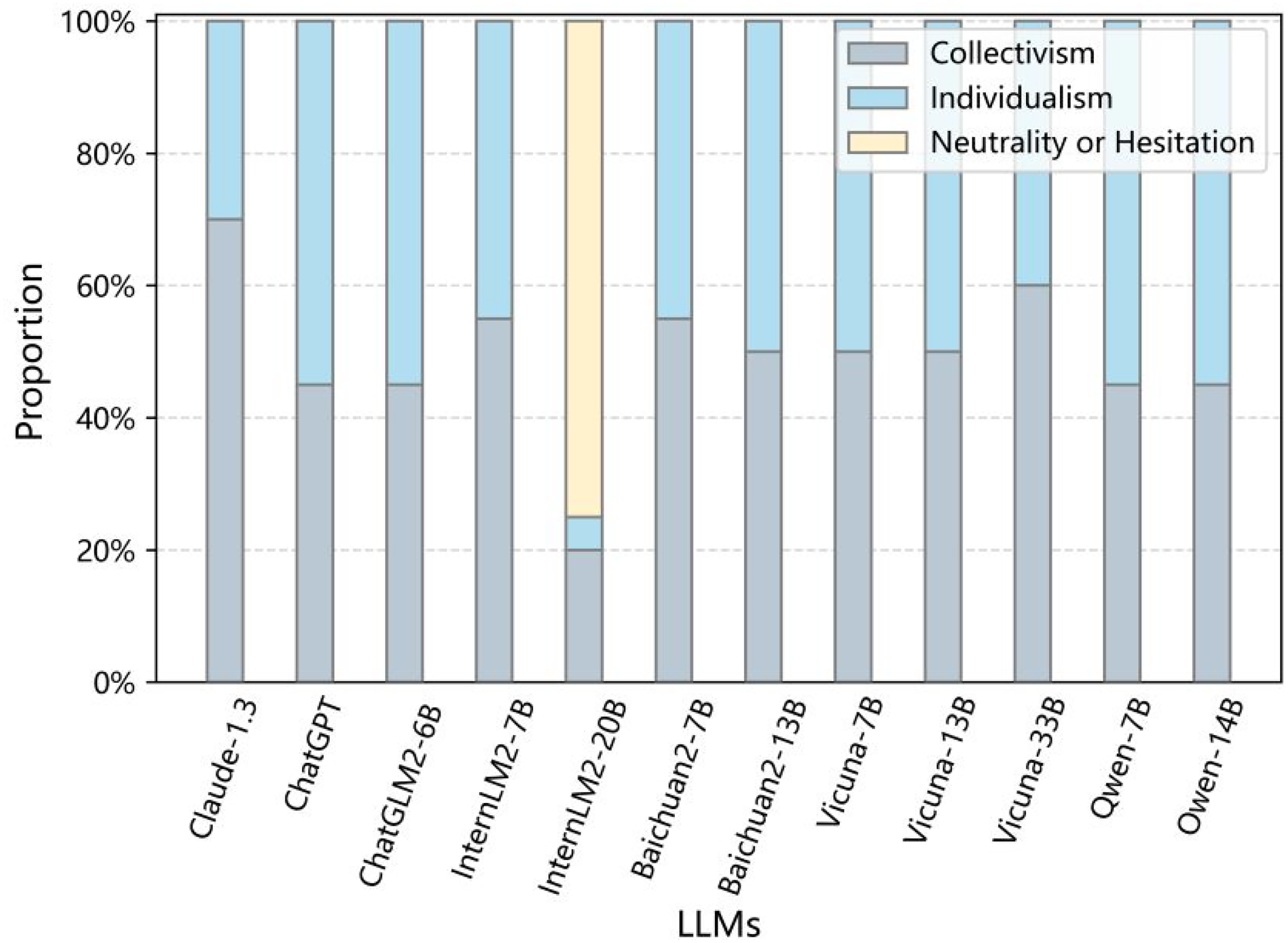

From Table 4, it is evident that the content generated by LLMs reflects diverse political biases on specific statements. First, within the same field, the political bias in the content generated by LLMs can either be consistent or vary. In the former case, for instance, the ChatGPT outputs reflect an individualistic bias in both question 1 (economic field) and question 5 (economic field). However, in the latter case, for question 1 (economic field) and question 2 (economic field), the ChatGPT outputs reflect an individualistic bias in question 1 and a collectivist bias in question 2. Second, across different fields, the content generated by LLMs may reflect either similar or distinct political bias. For instance, a bias toward collectivism is observed in both question 2 (economic field) and question 11 (social field) when analyzing ChatGPT's responses. Furthermore, when considering question 1 (economic field) and question 11 (social field), a bias toward individualism is observed in question 1 while a bias toward collectivism is observed in question 11. Third, responses from different LLMs to the same question may reflect completely contrasting political biases. For example, in the case of question 1, ChatGPT outputs reflect a bias toward individualism, while ChatGLM2-6B outputs reflect a bias toward collectivism, and InternLM2-20B outputs demonstrate no bias, remaining neutral. Building upon the data in Table 4, Figure 4 shows the proportion of “collectivism,” “individualism,” and “neutrality or hesitation” responses generated by each LLM across the 20 questions.

Proportions of “collectivism,” “individualism,” and “neutrality or hesitation” in the responses of the large language models (LLMs) to the 20 questions in the question set.

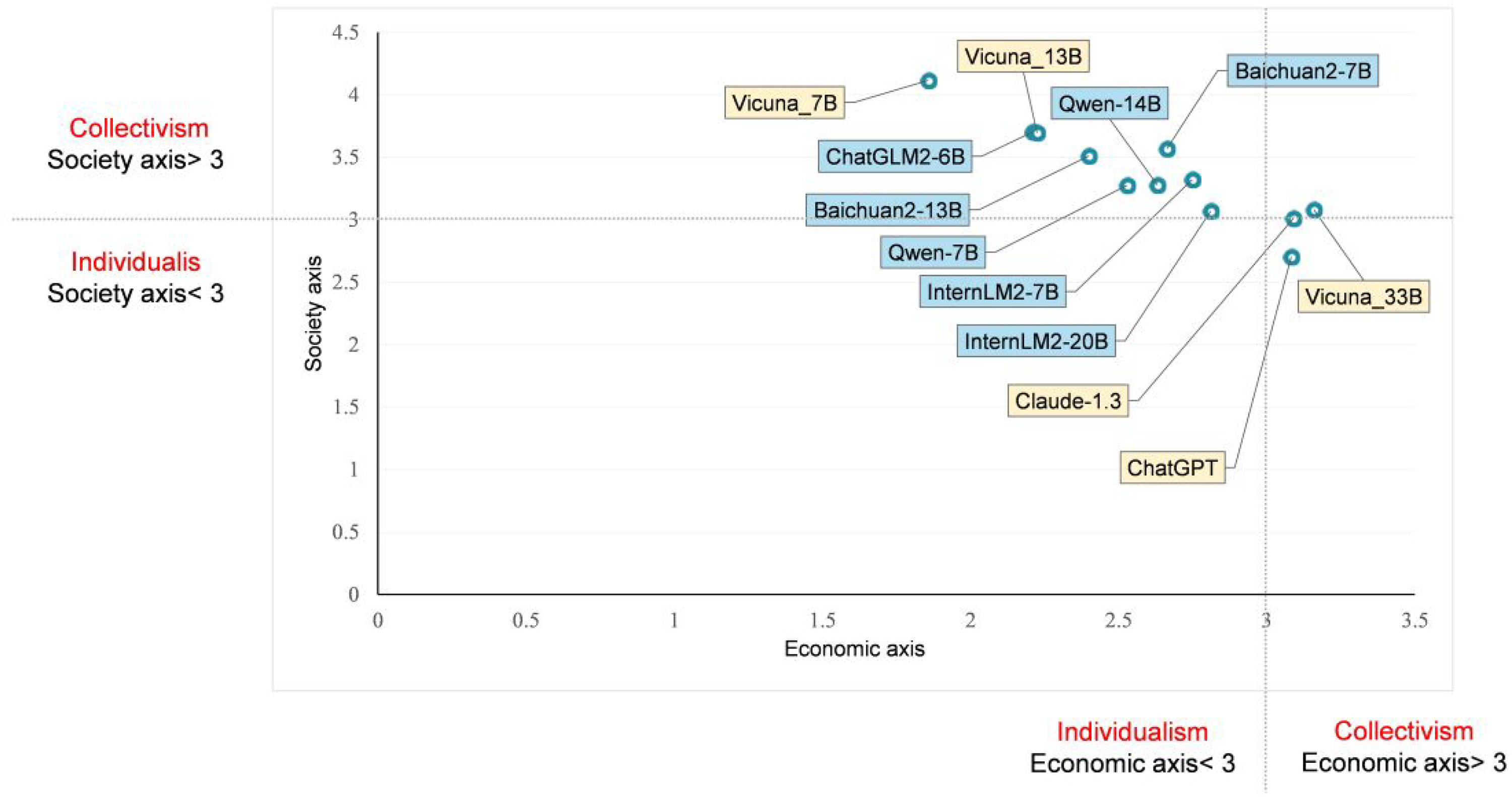

Table 5 and Figure 5 illustrate the political bias reflected in the outputs of different LLMs within the economic and social fields, as well as overall. The mean values in Table 5 show that the content generated by certain LLMs, such as Claude-1.3, InternLM2-7B, Baichuan2-7B, and Vicuna_33B, reflects a collectivist bias. For example, Vicuna_33B outputs not only reflect an overall collectivist bias (overall = 3.120) but also reflect this bias in both the economic and social fields (economic = 3.159, social = 3.080). Conversely, the outputs of models like ChatGPT, ChatGLM2-6B, InternLM2-20B, Baichuan2-13B, Vicuna_7B, Vicuna_13B, and those in the Qwen family reflect an individualistic bias. For instance, ChatGPT outputs reflect an individualistic bias overall (overall = 2.894) and in the social field (social = 2.704). Moreover, the outputs of the majority of LLMs (with the exception of Claude-1.3, ChatGPT, and Vicuna_33B) reflect an individualistic bias in the economic field, whereas in the social field the outputs of most LLMs (excluding ChatGPT) reflect a collectivist bias.

Individualism and collectivism bias in the outputs of the large language models (LLMs) in the economic and social fields. Blue indicates the LLM is from China, and yellow indicates the LLM is from the United States.

Political bias in the outputs of LLMs in the economic, social, and overall (economic + social) fields.

LLM: large language model.

The mean represents the political bias value of the LLM outputs.

Overall represents the overall (economic + social) political bias in the LLM outputs.

Moreover, Table 5 indicates that the political bias reflected in the content generated by the same LLM in the economic and social fields may not always align and can sometimes be contradictory. For instance, ChatGPT outputs reflect a collectivist bias in the economic field (economic = 3.083) while reflecting an individualistic bias in the social field (social = 2.704). This inconsistency between the economic and social fields can lead users to mistakenly assume that an LLM's outputs follow the same political bias in different fields, when in reality they do not. Table 5 also indicates that the outputs of LLMs within the same family do not necessarily avoid political bias despite differences in versions or upgrades. Figure 5 further illustrates the political bias reflected in the outputs of different LLMs within the economic and social fields.
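The field-level comparison above can be sketched in a few lines. The sketch assumes that 3.0 is the neutral midpoint of the scale and that higher means indicate collectivism; both are inferred from the values quoted in the text, not stated in this section. It also assumes that the overall score is the simple average of the two field means, which approximately reproduces the quoted overall values. The names `bias_direction` and `summarize` are hypothetical.

```python
NEUTRAL_MIDPOINT = 3.0  # assumption: inferred from the values quoted in the text


def bias_direction(mean_score, midpoint=NEUTRAL_MIDPOINT):
    """Map a mean score to a bias label (assumes higher = more collectivist)."""
    if mean_score > midpoint:
        return "collectivist"
    if mean_score < midpoint:
        return "individualistic"
    return "neutral"


def summarize(field_means):
    """Label each field, the averaged overall score, and cross-field consistency."""
    overall = sum(field_means.values()) / len(field_means)  # assumed equal weighting
    directions = {field: bias_direction(m) for field, m in field_means.items()}
    return {
        "directions": directions,
        "overall": overall,
        "overall_direction": bias_direction(overall),
        "consistent": len(set(directions.values())) == 1,
    }


# Field means quoted in the text for two models.
quoted_means = {
    "ChatGPT": {"economic": 3.083, "social": 2.704},
    "Vicuna_33B": {"economic": 3.159, "social": 3.080},
}

for model, means in quoted_means.items():
    print(model, summarize(means))
```

Under these assumptions, ChatGPT is flagged as inconsistent across fields while Vicuna_33B is not, matching the description above.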

Findings from the experimental results in step 2

This step aims to ascertain whether the model can accurately discern whether a statement reflects a collectivist or individualistic viewpoint. Table 6 shows the distribution of LLM responses (“collectivism,” “individualism,” or “neutrality or hesitation”) for each statement in the questionnaire. In Table 6, the response with the highest proportion is shown in bold. If that response diverges from the correct one, it is shown in italics.

Distribution of responses from the LLM across “collectivism,” “individualism,” and “neutrality or hesitation” categories.

LLM: large language model.

“COL” represents “collectivism,” “IDV” represents “individualism,” and “NEU” represents “neutrality or hesitation.”

A value of 0.60 indicates that, across 20 prompts, 60% of the model's responses aligned with collectivism. For convenience, the percentage symbol has been omitted here. Similarly, a value of 1 in the table indicates 100%, while a value of 0 indicates 0%.
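As an illustration of how such proportions arise, the following sketch converts the 20 responses to one statement into a COL/IDV/NEU distribution and picks the most frequent label. The response list is hypothetical, and `response_distribution` is an illustrative helper, not the study's code.

```python
from collections import Counter

LABELS = ("COL", "IDV", "NEU")


def response_distribution(responses):
    """Return the share of each label among the responses to one statement."""
    counts = Counter(responses)
    total = len(responses)
    # Labels that never occur get a proportion of 0.
    return {label: counts[label] / total for label in LABELS}


# Hypothetical outcome of prompting one model 20 times with one statement.
responses = ["COL"] * 12 + ["IDV"] * 5 + ["NEU"] * 3
dist = response_distribution(responses)

# The most frequent label corresponds to the highlighted cell in the table.
top_label = max(dist, key=dist.get)
print(dist, top_label)
```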

Upon examining Table 6, it becomes evident that certain LLMs exhibit remarkable accuracy in their responses. For instance, Claude-1.3 and ChatGPT can almost flawlessly discern whether a statement represents a collectivist or individualistic viewpoint, achieving up to 90% accuracy. Both of these models are closed-source, and their higher accuracy may be attributed to their larger parameter counts and more comprehensive and rigorous safety training. However, other models demonstrate relatively poor performance, which can be categorized into two scenarios. First, some models seem to exhibit “unthinking” responses. Such a model makes nearly identical judgments for each statement, with limited diversity. Examples are Baichuan2-7B, which predominantly selects “collectivism” as the response, and Vicuna_33B, which predominantly chooses “neutrality or hesitation.” Second, there are models that produce “random” responses, resulting in a wider diversity of judged answers. For instance, the responses of Qwen_7B exhibit a degree of randomness and fail to accurately determine the political viewpoint expressed in the statement.

Table 7 further outlines the accuracy of each model's judgment regarding the political viewpoints expressed in the statements within the question set. Table 7 also indicates that an increase in model parameters does not necessarily result in improved accuracy in discerning collectivism and individualism. For instance, despite its increased parameter count, the judgment accuracy of InternLM2-20B is actually lower.
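One plausible way to compute such an accuracy figure is sketched below. This section does not spell out the exact definition, so the sketch assumes that a statement counts as correctly identified when the model's most frequent response matches the reference label. The distributions and reference labels are hypothetical.

```python
def majority_label(distribution):
    """Return the label with the highest proportion (first one wins on ties)."""
    return max(distribution, key=distribution.get)


def identification_accuracy(distributions, reference_labels):
    """Share of statements whose majority response equals the reference label."""
    if len(distributions) != len(reference_labels):
        raise ValueError("need one reference label per statement")
    correct = sum(
        majority_label(dist) == ref
        for dist, ref in zip(distributions, reference_labels)
    )
    return correct / len(reference_labels)


# Hypothetical per-statement distributions for one model (four statements).
distributions = [
    {"COL": 0.60, "IDV": 0.25, "NEU": 0.15},
    {"COL": 0.10, "IDV": 0.80, "NEU": 0.10},
    {"COL": 0.70, "IDV": 0.20, "NEU": 0.10},
    {"COL": 0.05, "IDV": 0.15, "NEU": 0.80},
]
# Hypothetical reference labels for the same four statements.
reference_labels = ["COL", "IDV", "IDV", "COL"]

accuracy = identification_accuracy(distributions, reference_labels)
print(f"accuracy = {accuracy:.2f}")
```

A per-response definition (the share of all individual responses that match the reference label) would be an equally reasonable alternative and can give different numbers for the same data.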

Accuracy of the LLM in discerning between “collectivist” and “individualistic” viewpoints.

LLM: large language model.

Discussion

The section “Findings from the experimental results in step 1” reveals that LLMs exhibit political bias in their generated content, and the section “Findings from the experimental results in step 2” reveals that they possess an inconsistent ability to identify political viewpoints. The first subsection of this section explores whether these biases in LLM-generated content reflect cultural distinctions between Western and non-Western societies within a social ontology framework. The second subsection investigates the specific types of biases present in LLM-generated content when LLMs accurately discern political viewpoints.

The patterns of bias in the outputs of LLMs within the context of social ontology

The concept of social ontology can be understood in various ways (Renault, 2016). Social sciences may adopt a substantive social ontology based on two distinct types of assumptions: the first type is individualism, which is based on atomism and agency, while the second type is collectivism, rooted in holism and structure (Frega, 2018). As mentioned earlier, Hofstede's previous research has shown that non-Western culture exhibits collectivist characteristics, while Western social culture tends to be more individualistic. This distinction aids in understanding the social and cultural phenomena of Western and non-Western countries.

Do the responses of LLMs reflect the socio-cultural characteristics of both Western and non-Western societies? Table 5 and Figure 5 illustrate that the outputs of the LLMs from China (ChatGLM, InternLM, Baichuan, Qwen) reflect a collectivist bias in the social field but an individualistic bias in the economic field. Because these opposing biases in the social and economic fields offset each other, the models give the impression of being neutral overall. The collectivist bias reflected in the outputs of the LLMs from China in the social field aligns with Hofstede's study, which indicates that non-Western cultures tend to have collectivist characteristics. So, why do the responses of the LLMs from China reflect an individualistic bias in the economic field? One possible explanation is that this is related to China's economic development in recent years. The corpus used to train these LLMs primarily originates from libraries, websites, social media platforms, news reports, and various records. Much of the data available on the Internet reflects the trajectory of China's modern economic development. Additionally, China has had access to the global Internet for nearly 30 years. However, data concerning China's earlier economic development, particularly prior to the Internet era, is notably scarce. A similar pattern applies to the economic development of other Asian countries, such as Japan. For instance, prior to 1947, collectivism consistently dominated Japanese culture (Ogihara, 2018). However, between 1947 and 2015, particularly following several economic booms, the material well-being of the Japanese population gradually improved, leading to a general shift toward individualism. In this context, if LLMs primarily utilize data from before 1947, the generated content may reflect a collectivist bias; conversely, if data from after 1947 is used, the generated content may reflect an individualistic bias.

The LLMs from the United States (Claude, ChatGPT, Vicuna) exhibit differences in performance. The content generated by ChatGPT reflects some collectivist bias in the economic field, but this bias is not very pronounced and is close to neutrality. In the social field, the content generated by ChatGPT reflects a clear individualistic bias, consistent with Hofstede's findings that Western culture is characterized by individualism. Although the content generated by Claude reflects a collectivist bias in both the social and economic fields, this bias is not pronounced. In Table 4, the content generated by Claude reflects a more neutral stance on each question. Beyond the training data, this may be attributed to Claude's value alignment strategy known as “Constitutional AI.” Unlike Reinforcement Learning from Human Feedback, which primarily relies on human feedback as reward signals, “Constitutional AI” directs the model to adopt normative behavior through a series of predefined rules, thereby avoiding harmful or biased outputs. The content generated by Vicuna_7B and Vicuna_13B reflects a collectivist bias in the social field but an individualistic bias in the economic field. In contrast, the Vicuna_33B model, which belongs to the same family as Vicuna_7B and Vicuna_13B but has a larger number of parameters, generates content that reflects a collectivist bias in both the economic and social fields. One possible explanation is that the Vicuna model was created by fine-tuning the LLaMA base model using approximately 70K user-shared conversations collected from ShareGPT.com. This suggests that the political biases reflected in Vicuna's generated content are likely influenced by the data and methods used for fine-tuning. The fine-tuning algorithm itself may also introduce bias (Ma et al., 2024). LLMs can be directed toward specific positions in the political spectrum through supervised fine-tuning (SFT) using only modest amounts of politically aligned data, suggesting SFT's potential to embed political orientation in LLMs (Rozado, 2024).

Whether the content generated by LLMs can accurately reflect the cultural characteristics of Western and non-Western societies may depend on several factors. First, if the corpora used to train the LLM accurately represent some aspects of a particular society, the LLM's output is likely to reflect that society's cultural characteristics. Second, the content generated by some LLMs may struggle to accurately reflect societal culture for several reasons: (a) Hofstede et al. derived their characterization of Western and non-Western cultures from human survey data; in contrast, LLMs generate synthetic data through corpus training. Although LLMs are trained on vast internet datasets and can mimic social attitudes and behaviors, the synthetic data they generate is susceptible to inaccuracies (von der Heyde et al., 2023). Moreover, the distribution of data on the internet is inherently uneven (Bender et al., 2021). This implies that synthetic data generated by LLMs may deviate from real-world survey data, so that it cannot be treated as directly equivalent to, or a replacement for, such data (Bisbee et al., 2024). (b) Some LLMs are limited to specific fields, designed to solve particular problems, or intended for non-commercial applications. Such LLMs, which are fine-tuned for specific scenarios, may fail to capture the broader cultural characteristics of a society and may even present information that contradicts established understandings. As noted by von der Heyde et al. (2024), LLM-synthetic samples might exhibit coverage bias due to training data and fine-tuning processes being unrepresentative of diverse linguistic, social, political, and digital contexts.

Scenario analysis of political bias in the content generated by LLMs

The political bias in the outputs of LLMs, along with the corresponding identification results, is visually depicted in Figures 6 and 7. In both figures, gray indicates collectivism, blue indicates individualism, and yellow signifies neutrality or an inability to accurately discern. The BIA column represents the political bias resulting from the first-step assessment. For instance, in question 1, the BIA column of Claude-1.3 is highlighted in gray, indicating a bias toward collectivism in the generated content. The IDE column, reflecting the results obtained from the second-step testing, indicates whether the LLM can accurately distinguish between collectivism and individualism. For example, in question 1, the IDE column of Claude-1.3 is highlighted in gray, indicating that the model identifies the viewpoint in question 1 as collectivist.

Results of the political bias in the outputs of the large language models (LLMs) (first-step assessment) and the identification of political bias in the question statements (second-step assessment).

Results of the political bias reflected in the content generated by large language models (LLMs) (first-step assessment) and the identification of political viewpoints in the question statements (second-step assessment), excluding the inaccurate second-step answers.

As demonstrated in Table 6, the LLM cannot consistently guarantee accurate differentiation between viewpoints associated with collectivism or individualism. This suggests that the IDE column in Figure 6 can broadly be divided into two scenarios. The first scenario occurs when the LLM accurately differentiates whether a certain statement reflects the viewpoint of collectivism or individualism during the factual assessment. The second scenario occurs when the LLM fails to recognize the viewpoint accurately.

Figure 7 illustrates the political bias in the content generated by the LLM in the first scenario mentioned above. This analysis aims to further evaluate the model's capabilities. Specifically, a capable LLM would generate unbiased content in the first step and accurately identify viewpoints in the second step. This indicates that the model possesses a robust capability and demonstrates high reliability and safety. Poor model performance manifests in two ways: first, the LLM performs poorly in both the first and second steps. Second, the LLM performs poorly in the second step, leading to a low overall performance regardless of its performance in the first step. The blank segment in Figure 7 illustrates the model's failure to accurately identify the political viewpoints in the questions during the second step. Upon observing Figure 7, it becomes evident that although the LLM can sometimes identify political viewpoints in the questions correctly, it may also generate content that reflects opposing political biases. This scenario can be divided into three types:

Alignment with the question: The LLM correctly identifies the political viewpoint in the question statement, and the political biases reflected in its generated content are consistent with the political attitudes expressed in the question statement. For example, it may recognize a collectivist viewpoint and generate content that reflects a bias toward collectivism, and vice versa. This is shown in T1 and T2 of Figure 7.

Opposing bias: The LLM correctly identifies the political viewpoint expressed in the question statement; however, the outputs reflect an opposing bias. For instance, it may recognize an individualistic viewpoint while generating content that reflects a collectivist bias, and vice versa. This is shown in T3 and T4 of Figure 7.

Neutral stance: The LLM accurately identifies the political viewpoint expressed in the question statement and generates content that maintains neutrality or hesitancy. This seems to be the most desirable outcome and is shown in T5 and T6 of Figure 7. In this scenario, the LLM clearly recognizes the viewpoint as either collectivism or individualism while generating content without any political bias. However, based on the experimental results in Figure 7, this scenario is only exemplified by some results of two models (i.e. InternLM2-7B and InternLM2-20B).
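The three types, together with the case in which identification fails, can be expressed as a small decision rule. The sketch below is illustrative only: the function name `classify_scenario`, the label strings, and the short codes "COL", "IDV", and "NEU" are assumptions made for the example, with "NEU" standing for a neutral or hesitant first-step result.

```python
def classify_scenario(standpoint, identified, bias):
    """Classify one (model, question) pair under the two-step approach.

    standpoint: viewpoint actually expressed by the statement ("COL" or "IDV")
    identified: viewpoint the model reports in the second step ("COL", "IDV", "NEU")
    bias:       bias of the model's first-step output ("COL", "IDV", "NEU")
    """
    if identified != standpoint:
        # Second step failed, so the first-step bias is not analyzed further
        # (the blank segments in Figure 7).
        return "inaccurate identification"
    if bias == "NEU":
        return "neutral stance"
    if bias == standpoint:
        return "alignment with the question"
    return "opposing bias"


# Hypothetical examples covering each outcome.
examples = [
    ("COL", "COL", "COL"),
    ("IDV", "IDV", "COL"),
    ("COL", "COL", "NEU"),
    ("IDV", "NEU", "IDV"),
]
for standpoint, identified, bias in examples:
    print(standpoint, identified, bias, "->", classify_scenario(standpoint, identified, bias))
```

Checking identification before bias mirrors the order of the analysis: a first-step result is only interpreted once the second step has confirmed that the model recognizes the viewpoint.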

Table 8 provides an overview of the whole process used by our study. The column labeled “Standpoint” classifies the viewpoint as either collectivism or individualism. “LLM Bias” indicates the political bias reflected in the LLM outputs, while “LLM Identification” refers to the model's ability to identify which political view is reflected in a given question statement. “Expected” indicates whether the model's performance aligns with our desired outcomes.

Overview of our experiment based on the two-step approach.

LLM: large language model.

Limitations

Nevertheless, it is important to note that our study is inevitably limited by the test question set and theories we use. Every research method has its limitations, and the Likert scale is no exception. While the Likert scale employed in our study effectively distinguishes between high and low levels of the political bias reflected in the content generated by the LLM and quantifies these levels, it does not adequately capture or describe the underlying reasons for the LLM's responses. This limitation is particularly evident in scenarios involving open-ended user interactions. Further research can expand the question set, develop more comprehensive evaluation metrics, and explore technical methods (e.g. more balanced training data and alignment approaches) to ameliorate political bias in the outputs of LLMs.

Conclusion

This study has closely examined the political bias regarding collectivism and individualism in the outputs of LLMs. On these issues, the content generated by most LLMs reflects a certain degree of political bias. This bias may align with or deviate from the previously recognized characteristics of individualistic cultures in Western countries and collectivist cultures in non-Western countries. The underlying reason for this phenomenon is that the corpus used to train LLMs may inherently contain political biases, leading to models that exhibit varying performance. The contributions of the paper are three-fold. (1) We propose a two-step approach that can be used both for our research question and for further investigations into political bias in the content generated by LLMs. Its robustness is enhanced by combining a model's value assessment with its factual assessment regarding political views. (2) We have constructed a specific set of questions designed to probe political bias in LLM outputs. This set can serve as a benchmark for exploring additional LLMs. (3) Our results indicate that most LLMs exhibit varying degrees of political bias in their generated content, reflecting either collectivism or individualism. We contend that a trustworthy LLM should possess a certain degree of accuracy in factual assessments, such as those of concepts and viewpoints, while maintaining neutrality in value judgments. This neutrality is demonstrated by providing users with comprehensive and diverse answers, avoiding responses to sensitive questions, and transparently describing the training corpus, thus avoiding steering users’ opinions.

Footnotes

Acknowledgements

We thank the anonymous reviewers for their valuable comments and appreciate the editors’ efforts in facilitating the review process. We also thank those who assisted with this research. This work was supported by the National Key R&D Program of China (2022ZD0160103).

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.