Abstract

This paper examines the Web ARChive (WARC) file format, revealing how the format has come to play a central role in the development and standardization of interoperable tools and methods for the international web archiving community. In the context of emerging big data approaches, I consider the sociotechnical relationships between material construction of data and information infrastructures for collecting and research. Analysis is inspired by Star and Griesemer's historical case of the Museum of Vertebrate Zoology which reveals how boundary objects and methods standardization are used to enroll actors in the work of collecting for natural history. I extend these concepts by pairing them with frameworks for studying digital materiality and the representational qualities of data artifacts. Through examples drawn from fieldwork observations studying two data-centered research projects, I consider how the materiality of the WARC format influences research methods and approaches to data extraction, selection, and transformation. Findings identify three modalities researchers use to configure WARC data for researcher needs: using indexes to support search queries, constructing derivative formats designed for certain types of analysis, and generating custom-designed datasets tailored for specific research purposes. Findings additionally reveal similarities in how these distinct methods approach automated data extraction by relying upon the WARC's standardized metadata elements. By interrogating whose information needs are being met and taken into account in the design of the WARC's underlying information representation, I reveal effects on the emerging field of web history, and consider alternative approaches to knowledge production with archived web data.

Keywords

Introduction

Web archiving initiatives from libraries and archives aim to preserve digital cultural heritage, recording the changing content of web pages over time. The scale and scope of web collecting has led to coordinated efforts between institutions, and the international community of practitioners has developed a shared set of tools centered around the WebARChive (WARC) file format for recording data. Recent initiatives aim to expand uses of WARC data through research projects analyzing web archives at scale. In this context, I study how WARC data are used by teams of researchers through ethnographic fieldwork in two settings. While prior work addresses the WARC format's structure and resulting challenges for research (Ruest et al., 2022), I take an in-depth approach pairing fieldwork with an investigation of the format's origins, and close reading of its design. My work is motivated by a need to understand how researchers studying archived web data negotiate the particularities of data materiality, and how representational forms ultimately shape and constrain research practices.

My study of the WARC reveals how its design originates from a particular organizational context, and plays a central role in standardizing collection methods for the international web archiving community. I then explore the effects of its design, and the three distinct approaches to extracting and reconfiguring WARC data used by the research projects I studied. Findings reveal how multiple approaches for reconfiguring data are all limited to selection and extraction via the WARC's standardized data elements. By interrogating effects of material design on research methods, I aim to address questions of who makes decisions about data materiality for these collections, and whose interests are served.

Background: Studying web archives as data

Institutional web collecting efforts have captured and preserved websites over the past 25 years, providing access to historic versions of web pages. Interfaces like Internet Archive's Wayback Machine can be used to browse archived versions of web pages, which remains a primary mode of access for many web archives users. For example, Internet Archive's collection has been used as a source for legal and journalistic evidence as well as for scholarly research and finding pages no longer available on the live web (Eltgroth, 2009; Dewey, 2014). Beyond browsing, web archives have increased their engagement of research users over the past decade by investing in new infrastructure for access to collections’ underlying data files. The need for data-centric research uses was highlighted in an early report from Thomas et al. (2010), and the tools and access interfaces emerging from subsequent efforts have resulted in a noted shift from “document-centric” approaches studying individual archived pages, toward approaches studying whole collections and analyzing web archives data at scale (Hockx-Yu, 2014). This shift to data-centered access is seen in the development and testing of interfaces like Internet Archive's “Archive Research Services,” 1 and the British Library's BUDDAH project. 2 Analysis of collections at scale has also revealed issues of data gaps and temporal inconsistencies; Ben-David and Huurdeman (2014) note that taking the whole collection as an object of analysis requires attention to search artifacts resulting from collection methods or selection decisions.

Therefore, alongside these big data approaches, there is a growing recognition of how sociotechnical factors influence archiving practices. Data-centered analysis requires significant technical capacity to support web archives’ large scale of data, but also requires an understanding of legal and ethical constraints, and tacit organizational practices that shape collection and use of archived web data. As a result, web archives are increasingly understood as sociotechnical infrastructures, aligning with the dimensions outlined by Bowker and Star (2000): Summers and Punzalan (2017) address the role of breakdown and repair in collecting practices; Ben-David and Amram (2018) consider how to reveal the “black boxed” processes of collecting; Ogden (2021) identifies how archiving decisions are influenced by organizational norms and membership; and, Hegarty (2022) analyzes the metaphor of “publication” for web materials relating to institutional archives built on the “installed base” of libraries.

My study continues this trajectory, framing web archives as information infrastructures whose sociotechnical dimensions influence possibilities for knowledge production. It is premised on the fact that data-centered “whole collection” analyses of web archives rely upon standardized data artifacts. While past work has identified the use of data derivatives and outlined methodological approaches (Weber, 2018; Ruest et al., 2022), I focus on the construction of data artifacts themselves as a determining factor that ultimately shapes and constrains research. The materiality of archived objects and web pages (relative to live-web counterparts) has been a longstanding topic of interest for web archives scholars (see, Brügger, 2013; Helmond, 2017; Rogers, 2017), however the materiality of archived data remains an undertheorized area.

Beyond web archives, investigations of “collections as data” consider digital transformations made upon cultural heritage material (Padilla, 2017). Related discussions highlight the labor, politics, and infrastructures underlying digitization and processes of transforming physical materials into machine-actionable formats (Lischer-Katz, 2017; Thylstrup, 2018; Ringel and Ribak, 2021). For digital collections, Loukissas examines the rich and varied metadata of the Digital Public Library of America, “assembled from heterogeneous sources, each with their own local conditions” (Loukissas, 2019: 57). For digitized archival sources, Zaagsma considers how “the power relations and political visions embedded in analogue classifications,” are reproduced even when (re)classified for purposes of findability and maintaining a “workable order” (Zaagsma, 2022: 13). Additionally, work from science and technology studies explores the collection and construction of data artifacts as scientific evidence, considering how metadata and organizational contexts influence data's collection, flows and “frictions” (Edwards et al., 2011; Ribes and Jackson, 2013; Bates et al., 2016). I aim to bring web archives into conversation with this scholarship through examining how data standards have become integral to web archiving, and how standardized classifications and metadata are embedded within organizational and technical arrangements.

Analytical framework

Inspired by Star and Griesemer's (1989) study of knowledge production and information infrastructures, I apply their conceptual framing to web archiving's automated methods of born-digital collecting. Where Star and Griesemer's analysis of an early 20th C museum reveals how collecting practices were central to the professionalization and development of natural history as a scientific field, I consider how (or if) current institutional web archiving practices serve a similar role for the emerging field of web history.

Two influential concepts emerge from their Museum of Vertebrate Zoology (MVZ) case: boundary objects and methods standardization. Boundary objects are widely discussed, though frequently mischaracterized and rarely positioned in relation to methods standardization. Introduced in the analysis of MVZ, boundary objects were considered “both plastic enough to adapt to local needs and the constraints of the several parties employing them, yet robust enough to maintain a common identity across sites” (Star and Griesemer, 1989: 393). Subsequent refinement positions them as “objects that both inhabit several communities of practice and satisfy the informational requirements of each” (Bowker and Star, 2000: 16). Star further expands on the etymology: “boundary” is not considered an “edge” but rather a “shared space,” and “object” encompasses material entities and “something people (or, in computer science, other objects and programs) act toward and with” (Star, 2010: 603). While other studies characterize them primarily through interpretive flexibility, boundary objects must be understood in their role of structuring material and organizational relationships.

Material concerns for storage, preservation, and recordkeeping are at the forefront of Star and Griesemer's analysis of MVZ as an information infrastructure. They describe the museum director's concern for preserving certain material qualities of physical animal specimens, and how, “it makes a great deal of difference to ease of measurement, handling and storage whether the limbs are ‘frozen’ at the sides of the body or outstretched, straight or bent” (Star and Griesemer, 1989: 405). Additionally, the director introduced standardized templates to record field notes, a “stringent and simple” system which succeeded in ensuring the quality and integrity of the collected specimens while accommodating the needs of amateur collectors, local trappers, traders, and farmers working in the wilderness. These shared methods for specimen collection served as a “lingua franca” among the museum's diverse actors and social worlds, as they “translate specimens into ecological units via a set of field notes” (Star and Griesemer, 1989: 405). The MVZ's collection relied upon negotiation among actors who were enrolled in the work of gathering specimens for the museum and the resulting repository of specimens are a representative type of boundary object, structuring the museum's work and the development of natural history as a field of study.

I argue and demonstrate here that an analysis of methods standardization and boundary objects similarly applies to the development of web archiving. Just as the material qualities of physical specimens influence their subsequent measurement, handling, and storage, I consider how material choices and methods of collecting data artifacts influence subsequent uses. In order to examine and analyze the material qualities of web archiving's artifacts, I pair Star and Griesemer's framework with recent scholarship studying data practices in relation to digital materiality. For instance, Leonardi is influential in arguing that digital material like software provides “hard constraints and affordances in much the same way as physical artifacts do” (Leonardi, 2010: no pagination). Blanchette (2011) subsequently addresses digital objects as “logical and material entities,” and Kirschenbaum (2012) expands on this by distinguishing “forensic materiality” addressing bits written to media storage devices and drives, from “formal materiality” or the logical aspects of file formats or encoding.

Extending from past work on digital materiality, Dourish introduces the concept of “materialities of information representation” to consider how particular instantiations of data serve to “constrain, enable, limit and shape” subsequent uses and applications (Dourish, 2017: 6). Through several case studies, Dourish “examines the material forms in which digital data are represented and how these forms influence interpretations and lines of action—from the formal syntax of XML to the organizational schemes of relational databases and the abstractions of 1 and 0 over a substrate of continual voltage—and their consequences for particular kinds of representational practice,” ultimately arguing that “material arrangements of information—how it is represented and how that shapes how it can be put to work—matters significantly for our experience of information and information systems” (Dourish, 2017: 4). His analysis provides a template for conducting close readings of digital artifacts at the level of formal construction, and considering multiple scales of analysis simultaneously, understanding their forms as historically situated and evolving. I similarly aim to understand how the choices made in structuring a file format have broad effects on data's curation, analysis, and circulation for knowledge production. My work therefore takes up Dourish's insight that the properties of information representations impact their uses and applications in information systems through examining data artifacts within web archives’ research infrastructures.

My analysis therefore examines specific ways that data materiality is shaped and determined by a range of actors with varying goals, motivations, and needs. I adopt the framework set out by Star and Griesemer studying how methods standardization and boundary objects are used to foster cooperation among diverse actors who must “translate, negotiate, debate, triangulate, and simplify in order to work together” (Star and Griesemer, 1989: 388−9). The authors present several strategies to manage varied information needs of different social worlds: “via a ‘lowest common denominator’ which satisfies the minimal demands of each …; via the use of versatile, plastic, reconfigurable (programmable) objects that each world can mould to its purposes locally …; via storing a complex of objects from which things necessary for each world can be physically extracted and configured for local purposes” (Star and Griesemer, 1989: 404). The discussion of boundary objects describes more specific use of collections as “repositories” or “ordered piles of objects which are indexed in a standardized fashion,” and the use of information gathered through “fillable forms” which support “common communication across dispersed work groups” (Star and Griesemer, 1989: 410−11). My analysis revisits these strategies in conjunction with Dourish's approach to materialities of information representation and explores how translations, negotiations, and simplifications take place in knowledge production centered on data and digital artifacts.

Star notes how boundary objects emerge to meet actors’ evolving needs as, “over time, all standardized systems throw off or generate residual categories” (Star, 2010: 614). For web archives, this study is particularly timely as emerging data-centered infrastructures must rely on pre-existing data standards while also accommodating the newly introduced information needs of researchers. In studying the data practices of web archives researchers, I attend to these tensions, taking an interest in the “others” surfaced when conflicts or “mismatches” arise between actors’ needs or expectations and the more rigidly structured information systems. My analysis therefore addresses two key research questions: whose information needs are embedded in web archives’ materiality of information representation? And, how do standards and boundary objects determine or preclude the objects of study and analysis for web archives research? While I emphasize the effects on research of the archived web, the materiality of the metadata standards and protocols central to web archives extend to implications for knowledge production with networked data broadly.

Methodology

Fieldwork for an ‘ethnography of infrastructure’ “transmogrifies to a combination of historical and literary analysis, traditional tools like interviews and observations, systems analysis, and usability studies,” (Star, 1999: 382). Embracing this approach, my methods involve historical research and document analysis, close reading of digital artifacts, and fieldwork at two sites. Specifically, observations and interviews are used to study data practices from two projects: “Probing a Nation's Web Domain” in Denmark, and “Archives Unleashed” in Canada. These projects represent current developments in data-centered research infrastructures, and both aim to develop extensible methods, technical and organizational capacity, and foster a research community in addition to significant development of software tools. While I consider these as two separate sites, the Canadian and Danish web archiving actors and organizations also collaborate within the international web archiving community. Desk research served to uncover relationships among actors, and the history of the WARC's development.

Fieldwork in Denmark focused on participants from Aarhus University's NetLab and their collaboration with the Royal Library that began in 2016 involving a pilot project for testing and implementing the library's newly-built National Cultural Heritage Cluster (NCHC). 3 The project studies the library's Netarchive which regularly collects all domains registered with the Danish country-code (.dk) and other Danish web materials, mandated by legal deposit law since 2005. 4 The Netarchive is not publicly available due to Danish data protection law, and researchers must apply for access to browse and search the collection. In addition to their own research on web archives, NetLab provides support, services, training courses, and outreach on the study of internet materials for researchers from across Denmark. Researchers from NetLab also have a longstanding relationship with the Royal Library, having served as experts in developing the legal deposit law, and continue to serve on the advisory board. From February to May 2018, I observed five in-person meetings for the project including discussions and demonstrations of code and outputs, ranging from 60 to 120 minutes. The project team includes five individuals: a researcher and data engineer from the Royal Library, and two researchers and one developer from NetLab. I also conducted semi-structured interviews with three participants from NetLab and 12 participants from the Royal Library, including curators and developers outside of the project team. These included individual and group interviews, each approximately 60 minutes. Observations and interviews were supplemented with desk research including review of curatorial and technical documentation for the collection, and project reports.

Fieldwork in Canada focused on participants from Archives Unleashed (AU) datathons. AU is led by a group of researchers from the University of Waterloo and York University whose “datathon” events bring together participants to study web archives (The Archives Unleashed Project, n.d.). 5 Collections studied at each two-day datathon have varied, usually provided by the rotating host organization. In Canada, hosts and collections studied have centered on university libraries. Since there is no overarching collection of the Canadian web, 6 multiple institutions developed their own web archives, primarily using Internet Archive's Archive-It subscription service. For an annual fee, Archive-It subscribers can collect a specified volume of data (their “data budget”) using a web-based interface and technical infrastructure managed by Internet Archive. While Archive-It collections can be accessed openly on the web using an interface similar to the Internet Archive Wayback Machine, AU provides a data analysis toolset compatible with Archive-It collections. Specifically, the Archives Unleashed Cloud service was in development at the time of my study, tailored for Archive-It users and organizations (Ruest et al., 2021). In November 2018, I acted as a participant-observer engaging with three teams during the two-day “datathon” event hosted in Vancouver. I additionally conducted semi-structured individual interviews following the event, both in-person and online, ranging from 30 to 60 minutes. I focus here on the activities of one datathon team of three individuals (from Canadian and international organizations) who studied an Archive-It collection from the University of British Columbia libraries documenting the Site C Project, the development of a hydroelectric dam in the northeast of BC which sparked opposition from First Nations communities and environmental groups.

Deconstructing the WARC

I examine here how the WARC file format has come to play an essential role in web archiving, and the actors driving its development as a standard. While prior work briefly addresses the WARC format's structure and resulting challenges for research (Ruest et al., 2022), my investigation here traces both the format's origins and undertakes a close reading of its design. By drawing parallels to the case of the MVZ, I identify the WARC's role in standardizing collection methods for the web archiving community. I then use Dourish's framework of materialities of information representation to highlight which actors’ information needs are embedded within the format's design. This analysis is necessary to set up an understanding of the WARC's materiality before proceeding to explore examples drawn from fieldwork.

A central actor in the WARC's development is non-profit Internet Archive (IA), a self-described “digital library of Internet sites and other cultural artifacts in digital form” (Internet Archive, n.d.). Based in San Francisco, IA was founded by entrepreneur Brewster Kahle whose Wide Area Information Server internet publishing system was sold to AOL in 1995, after which he founded web analytics company, Alexa Internet, which was acquired by Amazon in 1999 (Feldman, 2004). IA's web collecting began in 1996, and the public Wayback Machine was introduced in 2001, providing an online interface to view and browse historic captures of websites. 7 Kahle's vision for IA centers on the idea “that knowledge is important to fulfilling ourselves as people and for building societies that grow and prosper” (Feldman, 2004). IA's mission “to provide Universal Access to All Knowledge” is reflected in their publicly-accessible archive of over 625 billion webpages and more than 15 petabytes of data (Goel, 2016; Internet Archive, n.d.).

IA's web archiving directly relates to Kahle's past work with Alexa Internet. Web analytics rely on the use of crawlers, automated bots that take ‘seed’ URLs and use those to discover a set of web resources connected through hyperlinks on these pages. Alexa's crawler technologies were repurposed and applied to IA's archiving tasks, programmed to not only discover but also capture and store web resources they encounter. The ARChive (ARC) format (predecessor of the WARC) was created by IA for data from Alexa's crawlers (Burner and Kahle, 1996). While the ARC format has been used by other collectors outside of IA, its development into the standardized WARC represents a shift from IA's internally-focused web archiving practices toward developing a global, collaborative collecting community.

This international community primarily includes members of the International Internet Preservation Consortium (IIPC). The IIPC was formed in 2003 by IA and 11 national libraries, and membership now spans “organizations from over 35 countries, including national, university and regional libraries and archives” (IIPC, n.d.). Members participate in annual meetings and workshops, as well as forums for discussing shared challenges and developing best practices. IIPC's working groups have collaborated on collecting web materials for transnational events like the Olympics, developed software tools, and produced technical reports for quality assurance in collecting. 8 As a founding member of IIPC, the Danish Netarchive actively participates in these technical and curatorial collaborations. The AU project has also become enmeshed with this community especially through work with IA and integration with Archive-It (Bailey, 2020).

The WARC's development began as an IIPC-led project in 2005, alongside two open-source software projects: the Heritrix archival crawler released in 2004, and Open Wayback play back software for accessing and viewing archived web pages in a browser, introduced in 2007 (Kunze, 2005; Mohr et al., 2004; Tofel, 2007). 9 The WARC file format bridges between these applications since data output by the crawler is input to playback software. The three together form a standardized toolset for collection and browser-based access of archived web resources. The WARC was published as an ISO standard in 2009, which has been subsequently updated in 2017, and use of WARC (or ARC) files is now prominent in the international community, used among 47% of 68 institutional web archiving programs surveyed in 2014 (ISO 2009, 2017; Costa et al., 2017). As a data format integral to collection and access, the WARC has become the “installed base” of web archives infrastructure upon which subsequent developments have been built. 10

Analyzing the WARC format in material terms, it provides a “fillable form” or template the crawler populates with data as part of the collecting process. As the crawler discovers and collects web resources—in technical terms “Uniform Resource Identifiers” (URIs) 11 —it writes a new warc-record which inscribes each resource and its metadata. The crawler writes these metadata into a ‘header’ for each record, recording the time, location (IP address), size of the resource (measured in bytes), and other details about the URI specimen and how it was collected (the warc-record structure is illustrated in Figure 1). Within a single WARC file, the crawler writes a series of these warc-records, cataloging its activities by recording each transaction; comparing to collecting methods from MVZ, the WARC records the crawler's “field notes” of its interactions on the web. The WARC's structure also emulates the structure of HTTP transactions which are similarly premised on headers and blocks. This design choice positions the WARC as an element of internet infrastructure which follows the standards set by pre-existing protocols. It also creates a (somewhat confusing) nested structure wherein the HTTP header for a given URI is taken as part of the content “block” of the warc-record.

The “fillable form” structure of a warc-response record.

Looking closely at the WARC's composition of records, headers, and content blocks, I draw attention to the representational structures it employs to organize and order archived materials. The URI is positioned as the primary object of collecting and a central unit of the archive. Additionally, writing WARC files reproduces elements of existing web and Internet standards, directly embedding structures like HTTP status codes, the three-digit codes from 100 to 599 summarizing the outcome of a web transaction such as 301 “moved permanently” or 404 “not found” (Fielding et al., 1999). Other standardized web elements inscribed in the WARC include MIME types, HTML tags, IP addresses, and domain names. In effect, the crawler's production of “field notes” is limited to elements from pre-existing web and Internet protocols and data standards.

The data collected and stored in a WARC can grow very large, very quickly from the crawler's automated discovery processes. The ISO standard recommends a 1GB limit for individual WARC files to optimize storage, meaning that the data from a single crawl can be spread across multiple files. 12 Therefore, a single collection can be stored across hundreds of WARC files listing thousands of URIs resulting in a relatively messy, heterogeneous set of data. With collections spanning decades and crawls captured at regular intervals, the scale of data multiplies quickly and archivists rarely interact with or view WARC data directly. Instead, WARC data is incorporated in other processes: browsing interfaces like the Wayback Machine rely on indexes for search and retrieval, and archivists use pre-generated reports and summaries that characterize and quantify WARC contents. Ultimately, the WARC format was designed to be read and accessed by other software and computational processing.

Recognizing the WARC as one of many possibilities for information representation of archived web data, alternatives could disregard the URI and center other units of analysis. For example, images and videos can capture the experiential aspects of browsing, or web scraping can be used to capture and store data in formats like JSON. The choices embedded in the WARC's design are grounded in the needs and constraints of IA's organizational context, with its use of crawler technologies that focus on large data volume and automated discovery. As the format was standardized, it has allowed IA to enroll a larger set of actors in their approach to collecting. Having established that these standardized methods for collecting web ‘specimens’ and recording field notes in the WARC's fillable form now dominate the practices of international web archiving programs, I explore below the effects of these design choices and impacts on research uses.

Translating the WARC for data-centered research

The WARC is central to methods standardization for web archiving, and the format is designed to ensure compatibility and interoperability with the software ecosystem established by IA and IIPC. Notably, its design was conceived to support particular forms of collecting and access (i.e., using the Heritrix crawler and Wayback playback software), and not constructed to accommodate the newly introduced data-centered methods. In exploring how the heterogeneous nature of data stored within WARC files presents challenges for large-scale research analysis, I consider here if the WARC's data is a sufficient boundary object to meet researchers’ varied information needs.

I present three examples from fieldwork illustrating how the large-scale and heterogeneous structure of WARC data is “translated, negotiated, debated, triangulated, or simplified,” and ultimately made amenable to research analyses. In describing and analyzing the different modalities researchers have developed for managing the difficult material constraints of the WARC format below, I relate them to strategies originally outlined by Star and Griesemer. For the Danish Netarchive's general researcher access, indexes are used to translate the structured data elements in a WARC file into a repository of homogeneous objects that can be efficiently filtered and queried. In the Archives Unleashed project, derivative data formats simplify the content of WARC files to support the use of recommended methods and tools. Finally, for the NCHC pilot project, researchers employ custom data extraction to reconfigure WARC data according to the specific demands of their project. Each approach comes with its own resource needs, requiring varied investments and commitments to maintenance.

Indexing and “ordered piles of objects”

The scale and distribution of WARC files makes retrieving an individual URI challenging, and Wayback-style browsing relies on a back-end index to locate and retrieve resources. 13 The WARC format is both designed to facilitate this kind of indexing based on the data elements in each warc-record header, and requires some form of indexing due to the decisions to segment a single crawl in multiple files based on maximum individual file size limits.

Beyond supporting Wayback playback, indexing is a key component of full-text search functions, which have been identified as an important access step for the web archives community to meet user needs (Thomas et al., 2010). The Danish Netarchive's comprehensive indexing began in 2013 and a full-text search interface was launched for the collection's 10th anniversary in 2015. In early 2018, I spoke with developers working on SolrWayback software, the Netarchive's new search engine (then in beta testing). SolrWayback provides a Google-like search interface, allowing sophisticated text-based queries and filtering of results based on domain, image search, and location-based search. The search engine uses a Solr index created with the “WARC Indexer” tool developed by UK Web Archive. 14

The WARC Indexer processes records at a granular level. Index fields for each URI include metadata fields from the warc-record header (e.g., content length), metadata fields from the URI's HTTP header (e.g., status code or “content type served”), and fields extracted from the URI (e.g., HTML tags and text). However, the WARC Indexer does not only capture pre-existing data fields within the WARC but also annotates records with additional information. For example, new ID and timestamp fields are added in the process of indexing, and additional file format characterization is performed on each URI using two tools: Apache Tika and Droid. Multiple index fields for file format can identify contradictions where the MIME type stated in the HTTP header does not match the URI's format characterized by external tools. Thus, while indexes are generally understood to “delete local uncertainties,” there are also ways to accommodate a degree of ambiguity and conflicting representations in the archived data. After indexing, each URI in the WARC file is represented by over 40 fields. These fields serve as the basis for queries when searching with SolrWayback, allowing a user to refine and filter results based on a combination of fields and values.

Indexing is a resource-intensive process for the Netarchive's collection of over 5.5 million WARC files and 700 TB of data. In 2018, the Royal Library was in the middle of re-indexing all files for a new iteration of the index, requiring months of computational processing to cover over a decade of WARC files. Processing was aided by use of the library's cluster computing power during downtime, when not being used by other projects. Like the segmenting of WARC files, the resulting index produced in this process is itself a large collection of individual files, each of which covers approximately 300 million documents from 10TB of WARC data. The entire Solr index is roughly 70TB or 10% the size of the collection's WARC files. While indexing comes with benefits, managing and maintaining the index comes with costs, and requires significant resources at this institutional scale.

Star and Griesemer reveal how indexing is one approach to managing conflicting viewpoints and negotiating information needs among different actors for the MVZ case. They characterize indexing as developing “an ordered pile of objects” or a repository from which individual units can be drawn as needed, with the benefit of modularity. Structured as a fillable form, the WARC naturally aligns with the creation of an index; indexing fields from each warc-record allows for sorting and extracting URIs as modular “units” from WARC data. However, generating the index requires both up-front costs and ongoing updates to incorporate newly collected items as well as newly desired search fields. Additionally, conceptualizing collected data as an “ordered pile” through this kind of indexing has broader material effects by pre-supposing and imposing a “same-ness” on all the archived materials.

For instance, two developers from the Royal Library described their unorthodox use of the WARC's data structure in recent experiments for capturing data from Twitter and Jodel, a “hyper-local” social media platform. This type of social media collecting involves APIs that transfer JSON data rather than HTTP transactions. Therefore, these data are not associated with specific URIs and are not discovered by crawlers like Heritrix. However, the team developed a way to fit this data into a WARC file, performing a “calculation” of where the data would exist as a URL:

We found that from this experimental week that we just had—that was Jodel and Twitter, but it could be Facebook too—that it actually fit quite well into this WARC format. So that tweet, it doesn’t matter if it's not HTML, it's still a representation of a piece of information on the net.

Well, we are faking the [HTTP] header of course. Because we don’t use the one we get.

[laughs] Yeah—it gets kind of interesting because both Twitter and Jodel have a unique URL that you can view in the web page. That's not the one we harvested though, but we know it's there.

The link is working.

So we harvest the raw data from JSON. And we calculate where can we view this on the live web. But we never visit the live web version. We just kind of say to the WARC file, ‘this would be as if we had taken the data from here from the live web, but it's not.’ So it's lying. The thing we’re doing. And there might be better ways of telling the truth in the WARC files.

Describing this workaround for data storage as “lying” or “faking,” the developers acknowledge their application of the WARC is not as intended in its original design. But this kind of deception serves a specific purpose: by “faking” data to adhere to the WARC format with consistently formatted headers, it allows these social media data to be indexed and searchable as with any other resource in a collection. The index (and the material forms of data it requires) is given primacy when considering how to archive information, and because of the “same-ness” required, the impulse to store data in the WARC format even extends to materials that are not generated with HTTP transactions.

Deleting “extraneous properties” and purpose-built derivative formats

A second approach to the difficulty of working with the WARC's material qualities uses derivative data configured to researcher needs. The AU datathon I observed was the sixth event since 2016 and was the first to introduce derivative data formats, representing an important stage of the evolving approach. This change was prompted by several concerns and constraints. Past datathons had provided participants with a coding toolkit allowing for more free-form exploration of the datasets. While this toolkit was also provided at the Vancouver datathon, many participants were unfamiliar with the Scala syntax it required, and additionally the limited computing resources available meant that only one virtual machine was available per team. The two-day time constraint of the event further limited used of extensive computational processing.

Datathon organizers introduced data derivatives extracted from WARC files to address these challenges, which also aligned with formats under development for the AU Cloud interface. Before the datathon started, organizers completed all processing to generate derivative files for the Archive-It collections studied. The four derivative formats provided for each collection included: a text file listing domain count, the full-text extracted from each HTML page, and two network-graph formats for link analysis. The AU website provided a brief description of derivative formats alongside methodological guidance, tutorials and recommended analysis tools. Using this approach, teams could run analyses in parallel on their own laptops since the computational demands for derivative data are much lower compared to the full-scale WARC files.

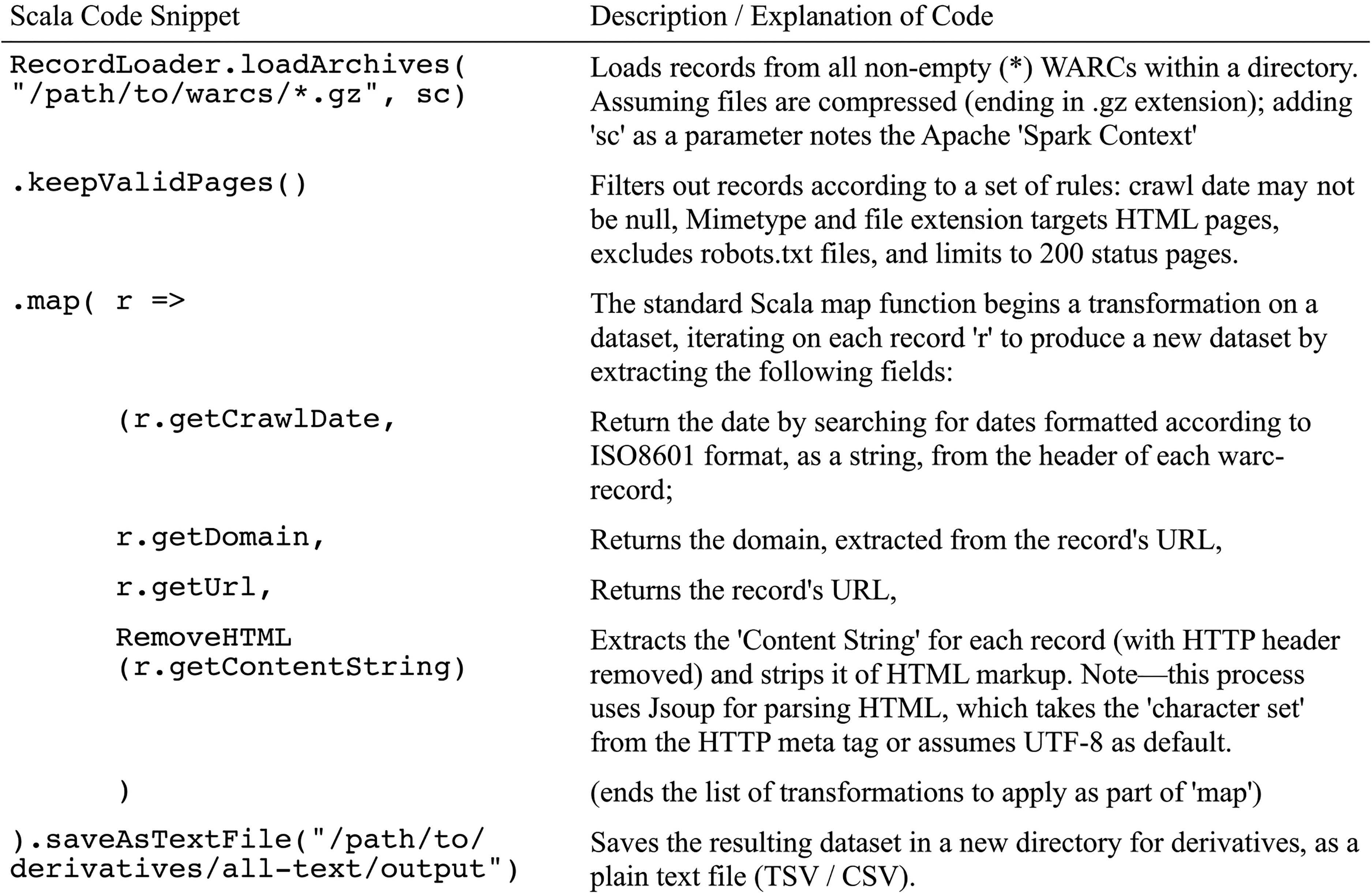

In material terms, each derivative extracts selected data from WARC files, reconfigured for a specific mode of analysis. For example, the AU-generated full-text derivative supports text analysis such as keyword counts and word clouds, sentiment analysis, topic modeling and Named Entity Recognition. A code snippet from organizers (Figure 2) details the process used to create a full-text file, annotated with an explanation of data selection and manipulation in each step. First, all WARC files from a single folder are ingested, and then data is selected by filtering out invalid pages (according to HTTP status codes). A table is created (using the map function), with each row composed of four fields: date, domain, URL, and page content stripped of HTML code. The table is then written in a plain text format to a file directory specified in the code. The resulting derivative is a smaller, more manageable size and in a format which is more familiar and accessible to most researchers (compared to the WARC).

Snippet of scala code used to generate AU full-text derivative (description/explanation by author).

These derivative formats exemplify the approach described by Star and Griesemer where “each participating world can abstract or simplify the object to suit its demands; that is, ‘extraneous’ properties can be deleted or ignored” (Star and Griesemer, 1989: 404). The AU project has adopted this approach of abstraction and simplification by generating purpose-built derivative data formats as a way to make WARC data more easily accessible to researchers. In effect, the full-text derivative simplifies the WARC files to generate textual data for analysis using specific tools. However, deleting what one set of actors determine to be extraneous properties can also lead to challenges for other actors who have differing information needs.

For example, one datathon team I observed encountered challenges with derivatives in their analysis workflow. Throughout the two days, the team tried different approaches to study the Archive-It collection on the Site C Project. Using a full-text analysis, the team noted “letters” as a recurring keyword, and discovered a set of web pages displaying tables including names and addresses as part of a petition or campaign of protest letters. Interested in testing a hypothesis around regional divides and shifting support of Site C's development over time, the team set out to extract geographical locations of these letter-writers based on postal codes in order to generate a time-lapse map visualization. However, as one team member describes, extracting tabular data was not possible using the full-text derivative: … our programmer did have to write a little script to extract tables out. And then needed the HTML tags—we got a different [derivative] dataset than the one that was actually presented to everyone at the beginning. Because the HTML had been stripped out and we needed that. [He] needed that to write his code to extract the tables with first name, last name, location and then letter content.

Though simplified data from the full-text derivative allowed the team to discover “letters” as an important keyword, this form of data was found to be over-simplified for the subsequent analysis. Ultimately, the team members required re-introduction of HTML elements (i.e., the tags denoting tables and cells) to complete their analyses. Luckily, an AU organizer modified the code for generating the full-text derivative and provided the team with a new data file that included HTML elements before the datathon ended. But this case illustrates how the research team's needs and objectives required going beyond the pre-generated derivatives and recommended workflows; their analysis relied on the organizers’ capabilities to supplement the pre-generated full-text derivative.

Navigating archived web data using purpose-built derivative formats promises the simplicity of data preconfigured for analysis for the AU project, a priority for datathons where time and computing resources are limited. However, prioritizing ease of use can result in over-simplification. The AU organizing team has continued revising and adding derivative formats since 2018, but derivatives risk becoming too prescriptive in guiding the forms of analysis possible—open questions remain on who decides what formats are needed and what elements are extraneous.

Custom coding of “reconfigurable, programmable objects”

A third approach for working with the WARC uses custom data extraction to serve the purposes of a specific research project. The “Probing a Nation's Web Domain” pilot project develops a bespoke corpus of data extracted from the Netarchive's systematic crawls. The project aims to track development of each Danish website or domain over a span of ten years, analyzing factors such as the increase or decrease of web domains, linguistic trends, and trends in website design. Analyzing this data with the library's NCHC cluster computing involves technical considerations for the significant scale of the collection (hundreds of Terabytes spanning 10 years), and adhering to specific legal requirements. Danish data protection law applies to the Netarchive's data since crawls can include sensitive personal information 15 and the NCHC is designed accordingly so that all data extracted from the Netarchive collection becomes the legal responsibility of researchers when moved to the cluster's data storage. Additionally, data access and processing is restricted to NCHC's virtual machines, meaning researchers legally cannot store and process data on their own computers.

To create a dataset with a representative set of URIs from each domain for each year the project team began developing specific criteria for which WARC files should be selected for analysis. The computational resources required for processing, in combination with the legal restrictions limiting access, led them to focus on selection by metadata from WARC headers first, after which they could extract additional data for the selected URI resources (such as HTML code and text). During the meetings I observed in 2018, the project team was refining their methods for selection and extraction of URI data from WARC files and documenting all steps in a report on the Extract-Transform-Load (ETL) process. An important outcome of the pilot project is making this ETL process available for future projects to adapt, supporting generation of their own datasets with NCHC.

The ETL process for extracting data embodies what Star and Griesemer describe as “the use of versatile, plastic, reconfigurable (programmable) objects that each world can mould to its purposes locally” (Star and Griesemer, 1989: 404). WARC data presents itself as a malleable object, programmable to specific purposes through use of computational processing tools. The WARC's digital data can presumably be extracted, reconfigured, and transformed to suit specific research needs using the ETL process. Yet, the ‘programmability’ or malleability of WARC data is influenced by other limiting factors and constraints in practice, and sorting archived data into individual domains proved more difficult than anticipated for the project team.

Selecting data according to domain was particularly challenging due to the Netarchive's use of distributed infrastructure with multiple crawler instances running in parallel. Each crawler discovers URIs independently and produces separate WARC data files, meaning that each separate file may contain URIs from the same website or domain, crawled within the same time frame. In theory, this problem could be solved by merging all data from all WARC files and then deduplicating based on URI. However, this option was impractical because of the significant processing power and time required to combine the entire collection into one dataset, as well as the legal restrictions on aggregating data in this way. With these constraints, the research team sought to develop specific methods for efficiently processing and selecting a subset of data based on metadata summaries before extracting URI data from the complete WARC files.

Addressing URI duplication, the research team developed the idea of a “main harvest” to select the WARC files where a domain was used as the “seed” for the crawler, distinct from the “by-harvest” data from additional crawls that unintentionally discover URIs from that domain. In effect, the “main harvest” is centered on a one-to-one relationship between each domain and a single crawl. Quantifying by-harvests also revealed a certain set of domains which specifically presented challenges to the research team's approach to filtering and extraction. These problematic domains with a large number of by-harvests included Twitter, sites hosting user-generated content (e.g., blogger.com, wordpress.com), as well as the PURE research portal used by Danish universities. For these domains, selecting a single “main harvest” was difficult since they were not used as the primary seed for any one crawl. 16 Ultimately, the research team chose to exclude these types of sites from the corpus for analysis due to the data cleaning challenges and inconsistencies they presented. However, I observe that these domains identified as “difficult” also share a common material characteristic in how they structure URIs focused on subdomains, reflecting how each acts as a platform for individual users, rather aggregating webpages all understood as authored by a single entity. The pilot project is centered on a particular vision of a “domain” as a single entity and the core unit of analysis, which broadly aligns with the data ordering and structures imposed by the Royal Library's software for configuring and managing crawls. However, the entities and units of analysis present in the wide breadth of archived web content were found to conflict with that vision, and re-ordering that data for consistent analysis alongside other domains was not simply a matter of selecting and sorting by URIs into domains.

While the WARC is considered to be a reconfigurable data object, specific constraints in this case limit the possibilities for extracting customized datasets. For example, certain approaches to analysis are precluded by both legal restrictions on direct access to the entirety of the collection and by a need to consider computational costs and efficiency in processing. The management of “by-harvests” also reveals how difficult it can be to impose another, alternative ordering upon data which is already materially ordered in a particular way according to individual URIs. In effect, ‘programming’ WARC data to meet local purposes isn’t completely versatile in this (or any) organizational context and technical environment. For the Netarchive, data's malleability relates to the resources available for re-formatting and reconfiguration, resulting in custom datasets that closely align with the pre-existing elements of WARC metadata headers which are most easily extracted and selected.

Discussion: Material complications for data as collections

The above analysis of the WARC's design and use reveals how it pre-configures the URI and its component elements as the primary units of analysis for data-centered studies. Each example from fieldwork illustrates the steps that researchers and developers take to rearrange data in ways that are counter to the organization of materials around metadata fields such as HTTP status codes, MIME types and file size. The Netarchive's developers use workarounds to insert tweets or Twitter data as objects of analysis within the structures of the WARC standard, acknowledging the way that this created a “fake” HTTP header to fit within the indexing scheme. The Site C datathon team required additional coding and computational processing to extract HTML table structures as a more granular object of analysis than provided in the AU full-text derivative. The Probing a Nation's Domain project could not filter WARC data directly based on URI since their conception of domains representing specific entities was not aligned with the structure of domains on the web which include platform users and subdomains. Together these examples demonstrate the significant work required—conceptual re-framing as well as human and technical resources—to re-order WARC data to accommodate researchers’ information needs. I contrast my findings with recent work that positions the WARC as an “unaccessioned collection,” analogous to a “shipping container full of banker boxes holding dozens of jumbled records” which has not yet been processed by archivists (Ruest et al., 2022: 2). I argue here that WARC data is in fact an organized set of records, but ordered according to the logic of the crawler rather than the logics of human users or curators. Evidently, the WARC represents the crawler's limited perspective for interacting with and recording web materials, and was not designed to meet the information needs of researchers. Additionally, my analysis reveals how materiality has real effects on the costs, energy and time for computational processing of web archives collections, revealing how idealized visions of endlessly malleable data archives 17 are stymied by practical limitations on processing and reconfiguration, particularly for often under-resourced cultural heritage institutions.

While the format was not designed with researchers in mind, it has become so embedded in institutional methods that, at present, data-centered uses of web archives are “locked in” to WARC-based workflows and toolsets. Actors working with archived data must learn to adapt to particular material conditions, using various processes of translation, negotiation, debate, triangulation, and simplification. Since the WARC's development has evolved separately from researcher needs, it is distinct from the MVZ case where collecting methods developed alongside natural history; this has resulted in challenges as the field of web archives is only now reckoning with what users require from their data infrastructures. Considering whose perspectives, goals, and priorities are embedded in the materiality of the WARC and its information representation, the format's organization according to crawler logic is also inherently tied to its origins with Internet Archive. With the WARC's standardization, IA has successfully enrolled other actors and established itself as a center of authority, expanding collecting activities in scope, coverage, and reach according to its mission to “provide Universal Access to All Knowledge.” Yet, all-encompassing, universalizing approaches can run counter to the needs of researchers, as Loukissas (2019) has argued with his call to consider the “data setting” over the dataset. Addressing the “data setting” for web archives requires looking beyond the methodologies centered on the data found in the standardized WARC files, toward tacit knowledge and alternative forms of documentation that exist in messier, non-standard formats (Ogden and Maemura, 2021). Recognizing that the WARC format is not in fact a “lingua franca” for collectors and researchers, additional objects, concepts, and terminology are needed to support interpretive flexibility in the study of archived web data. I propose here that expanding on the concept of ‘boundary objects’ can provide web archives with new methods that attend to the needs of researchers and integrate information from “field notes” generated both upstream and downstream of crawling activities. Rather than tailor research questions to align with the processes of analysis that the WARC's materiality affords, knowledge production and development of the field of web history requires deeper consideration for knowledge of the web which is not—and cannot be—written directly into the “source code” of the web and subsequently extracted according to the fields within standardized HTTP or URI-based data.

Unpacking the WARC format reveals how it is designed around automated technologies for accessing and storing data transmitted through network infrastructures, resulting in standardized web elements becoming enrolled, emulated, or reproduced in archival formats. I emphasize here the role that automation plays in structuring data formats, which then necessitates computational methods with their attendant limitations and reliance on pre-existing classifications. While much attention has been given to ‘collections as data’ and the transformations of digitizing archival materials, comparatively less work focuses on material transformations for collections composed of born-digital and born-networked materials. These findings begin to develop an approach for considering how the pre-configured data and metadata of digital sources can, should, and must be similarly examined with a critical eye, and not taken as given. Following work by Zaagsma (2022), analysis of the WARC here demonstrates the effects of specific design choices embedded in data structures, reflecting power relations and politics, which ultimately limit how data can be transformed, manipulated, or analyzed. Beyond web archives, similar material relationships should be examined for diverse fields of study and practical applications premised on capturing, storing and stabilizing digital data and traces from different networked and platform-specific sources including social media studies, computational social sciences, and open source intelligence. As demonstrated here, recording or storing computational data without attention to its material qualities can preclude future uses and interpretations.

Conclusion

By pairing Star and Griesemer's framework of boundary objects and methods standardization with Dourish's approach to information representation, I demonstrate here how we might interrogate whose information needs are being met and taken into account in the design of digital material by closely examining the underlying information structures of a format like the WARC. Analysis reveals how structuring data around URIs stems from practical goals of crawling (stemming from IA's institutional context) but has subsequently become embedded in wide-spread global standards and knowledge production with the archived web. Broadly, this study challenges the assumption that data is always, de facto a boundary object since it can be extracted from, manipulated, or indexed in ways that can appear to meet a variety of information needs. Extending Star and Griesemer's framework reveals that WARC data alone does not meet the information needs of researchers—knowledge production requires re-ordering WARC data through additional labor, resources, and conceptual reframing.

As a standard, the WARC format supports the development of interoperable tools and new methods of research for the international web archiving community. However, reliance upon methods compatible with standard forms of web data also shapes researchers’ objects of analysis and potentially forecloses questions or ideas which cannot be expressed, extracted, reflected or directly translated through these common data elements. This work therefore draws attention to the need for more carefully designed relationships between the materiality of data and the needs, affordances, constraints, and limits of research infrastructures. Beyond the context of web archives collecting and research infrastructure, I hope that this framing can continue to be extended and used to think through the ways that other data formats and standards substantiate knowledge production.

Footnotes

Acknowledgments

The author thanks Katie Mackinnon, Rebecca Noone, Karen Wickett and Kate McDowell for their feedback on an early draft of this article, and the anonymous reviewers for their insightful comments and suggestions.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Social Sciences and Humanities Research Council of Canada (Canada Graduate Scholarship 767-2015-2217, Michael Smith Foreign Study Supplement).