Abstract

The users, sensors and networks of the Internet of Things generate huge amounts of data. Given the sophisticated (artificially intelligent) algorithms, computing power and software available, we would expect governments to have successfully completed their digital transformation into Jane Fountain's (2001) ‘Virtual State’. In practice, despite heavy investments, governments often fail to enact new digital technologies in an efficient, appropriate or fair way. This article provides an overview of techno-rational and socio-political failures and solutions at the macro-, meso- and micro-level to support digital transformation. The reviewed articles suggest a modest approach to digital transformation, with an emphasis on high-quality in-house IT infrastructure and expertise, but also better collaborative networks and strong leadership ensuring human oversight.

Introduction

Since the 1970s, many researchers have promoted the idea that public administration will undergo a data revolution, which will fundamentally reshape governmental structures, processes, and tasks (Shuman, 1975), leading to a full-on digital transformation of the public sector. Half a century later, after witnessing the IT productivity paradox (Brynjolfsson, 1993) and the e-government crisis (Savoldelli et al., 2014; Sorrentino and De Marco, 2013), the same vision is heralded again. Artificial Intelligence (AI) driven by big data is now touted as the next magic ingredient in digital transformation strategies (Guirguis, 2020; Kitchin, 2014; Löfgren and Webster, 2020; Mergel et al., 2019).

The benefits of using data and technology to remake government seem almost infinite. Numerous efforts have been made to identify potential use cases for the public sector and share best practices. For instance, Maciejewski (2017) lists several good examples, such as Singapore's PLANET project, which is used to improve public transport systems. Big data has also proven useful in controlling tax evasion and improving fraud detection (Rogge et al., 2017), and in law enforcement to better understand criminal networks (Chan and Bennett Moses, 2017). Moreover, big data and AI are increasingly used in health care (Agbehadji et al., 2020), which is especially pertinent in the COVID-19 crisis (Kuziemski and Misuraca, 2020).

Still, scholars recognize that digital transformation does not always go as smoothly as planned. A vast amount of scholarly evidence has set out the detrimental consequences of the use of data and technology by the state. Increased datafication has led to ‘dataveillance’ and increased control over citizens, impinging on privacy rights. Moreover, this datafication is flawed, biased and exploitative (Crawford, 2021). The (artificially intelligent) algorithmic decision-making systems that rely on this datafication are similarly biased and opaque, and often lead to a further disadvantaging of already vulnerable groups in society (Criado Perez, 2019; Lyon, 2014). Digital technologies have been shown to be anti-democratic in their effects and consequences (Yeung, 2017).

This has to do with the fact that technologies are not simply objective but enacted (Fountain, 2001). In line with what Jane Fountain (2001) called technological enactment theory, technological possibilities need to be enacted into technological realities by organizational, political, and cultural actors. Therefore, Fountain argues that the ‘Virtual State’ needs to be built, to accommodate the internet, but also other technology. Now that the uptake of data-driven decision making and AI is on the rise, we continue to witness the building of the ‘Virtual State’. However, this construction process, involving processes and politics of institutional change, remains as troublesome as described in Fountain's 2001 book (Kuziemski and Misuraca, 2020; Vydra and Klievink, 2019). For instance, Goh and Arenas (2020) refer to a McKinsey report showing 80% of government digital transformation efforts failing to achieve expected results. Two decades after the publication of Jane Fountain's (2001) seminal book ‘Building the Virtual State’, one might wonder why governments have yet to succeed in doing so. Through a systematic literature review, this article will answer the question of which macro-, meso- and micro-level factors contribute to failed digital transformation in the public sector, and which tentative solutions are presented (if any).

Key concepts & theoretical underpinnings

The question of (un)successful use of data and technology by the state is grounded in a rich history of diverse literature. It is linked to the rationalization of the state, starting from Weber's ideal type of the rational-legal bureaucracy, towards the New Public Management (NPM) paradigm, revolving around further ‘optimized’ and ‘efficient’ evidence-based policy making (EBPM) (Osborne and Gaebler, 1992). Simultaneously, critical scholars have warned against the use of data and technology by the state as a means of (often failed or flawed) control (Desrosières, 1993; Porter, 1995; Scott, 1998).

These different bodies of work have converged in the literature on the ‘digital transformation’ of the state. Digital transformation is used as a catch-all term to denote many things. Across the literature, three features are commonly agreed on: (1) the use of digital (information) technology to provide existing and new government services (e-government); (2) organizational transformation and new relationships with stakeholders and citizens; and (3) the increased use of big data and information (Gong et al., 2020; Mergel et al., 2019).

Though Fountain did not yet use the term ‘digital transformation’ herself, her work on the ‘Virtual State’ has been foundational to the understanding of how information technologies (IT) affect government and state–society relationships (Mergel et al., 2019). Fountain's book dealt with the impact of the internet, which promised to restructure the relationship between state and citizen to be simpler, more interactive, and more efficient. Fountain writes that for governments to exploit the potential of the internet, reform was needed. She then defines the emerging ‘Virtual State’ as ‘A government that is increasingly organized in terms of virtual agencies, cross-agency and public-private networks’ to deliver on this promise of efficiency and interaction (Fountain, 2001: 4).

Since the publication of her book, many more technologies have been introduced to government, often holding the same promises. Today it is the Internet of Things (IoT) that produces big data, and the (artificially intelligent) algorithms that can extract value from it, which will (supposedly) make the relationship between state and citizen simpler, more interactive, and more efficient. But again, to reap these benefits, government reform – digital transformation, or building the Virtual State – is required.

Fountain vividly illustrated the processes by which government actors learned to enact technologies with transformative potential during the 1990s, marking the pressing challenges governments face as they move beyond simply putting information and services on the web (digitization), to the more complex challenges of institutional reform (digital transformation). Fountain already noted that this reform consists of negotiations, conflicts and struggles among bureaucratic policy makers. She notes that these reforms also lead to uncertainties, unanticipated consequences and externalities, both positive and negative. Twenty years after publication, it seems relevant to take stock of the challenges set out by Fountain, as governments have continued the pursuit of a ‘Virtual State’ to harness the potential of data and technology (Gangneux and Joss, 2022).

Methods

Several case studies have been performed to better understand the failure to harness the potential of data and technology (Guenduez et al., 2020; Sanden and Neideck, 2021), and successful digital transformation more generally (Fleischer and Carstens, 2021; Gil-Garcia et al., 2017). Despite the obvious relevance of the subject, there is a notable absence of secondary studies that seek to synthesize the results of those studies. As such, a systematic literature review is a timely and important contribution. Other literature reviews touching on the use of data and technology by the state have focused on the potential of e-government to create public value (Twizeyimana and Andersson, 2019) or compared the success of digital and non-digital innovations (Mu and Wang, 2020). But none have explicitly focused on teasing out the conditions that lead to different dimensions of (un)successful use of data and technology in the public sector.

Data selection and analysis

A PRISMA approach was used to identify eligible studies (Moher et al., 2009), by searching WorldCat using the keywords: ‘Digital Transformation’ OR ‘Big Data’ OR ‘Data Science’ OR ‘AI’ AND ‘public sector’ OR ‘govern*’ AND ‘succe*’ OR ‘fail*’ OR ‘bad’. Further identification criteria were: (1) written in English and (2) published between 2001 and 2021. A total of 352 records were identified and 17 duplicates were excluded. The remaining 335 articles were screened based on title and abstract, and a further 151 articles were excluded. The full text was found for 161 of the remaining 184 articles. After scanning the full texts, 106 articles were included in the review (these are marked with an * in the reference list). The final selection of articles was done on 17 January 2022. The search and selection process is summarized in Figure 1. The inclusion and exclusion criteria are provided in Table 1.
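The record counts in the selection process can be checked with simple arithmetic. The sketch below is only illustrative: the function and key names are hypothetical, the input figures are those reported above, and the two derived figures (full texts not found, and articles excluded after full-text scanning) rest on the assumption that all included articles come from the set of retrieved full texts.

```python
def prisma_flow(identified, duplicates, excluded_on_screening,
                full_text_found, included):
    """Derive the intermediate PRISMA counts from the reported figures."""
    # Records left after removing duplicates; these are screened on title/abstract.
    screened = identified - duplicates
    # Records that pass screening and for which a full text is sought.
    sought = screened - excluded_on_screening
    return {
        "screened": screened,
        "sought_full_text": sought,
        # Assumption: records without a retrievable full text drop out here.
        "full_text_not_found": sought - full_text_found,
        # Assumption: every included article had a retrieved full text.
        "excluded_on_full_text": full_text_found - included,
        "included": included,
    }


# Figures as reported in the text.
flow = prisma_flow(identified=352, duplicates=17, excluded_on_screening=151,
                   full_text_found=161, included=106)
for stage, count in flow.items():
    print(f"{stage}: {count}")
```

Under these assumptions, the reported figures are internally consistent: 335 records are screened and 184 proceed to full-text retrieval, as stated in the text.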

Figure 1. Preferred reporting items for systematic reviews and meta-analyses (PRISMA) flowchart.

Table 1. Inclusion and exclusion criteria.

We coded the articles for measures of success and failure of digital transformation, and additionally coded the given causes for this success or failure under macro, meso or micro-level factors. Following Vydra and Klievink (2019), we additionally divided causes as being either techno-rational (relating to how data is created, handled and analysed, often rooted in engineering and computer science disciplines) or socio-political (relating to how quantitative evidence and the advent of big data interacts with political and bureaucratic decision-making); while recognizing the interrelations between the two following sociotechnical theory (Garson, 2006).
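The two-dimensional coding scheme (level by perspective) can be pictured as a small data structure. The following is a minimal sketch, not the coding instrument actually used in the review: the class names, the article identifiers and the three example entries are hypothetical placeholders chosen only to show how coded causes would be tallied into a level-by-perspective grid.

```python
from collections import Counter
from dataclasses import dataclass
from enum import Enum


class Level(Enum):
    MACRO = "macro"
    MESO = "meso"
    MICRO = "micro"


class Perspective(Enum):
    TECHNO_RATIONAL = "techno-rational"
    SOCIO_POLITICAL = "socio-political"


@dataclass(frozen=True)
class CodedCause:
    article_id: str          # hypothetical identifier, not a real reference
    level: Level
    perspective: Perspective
    cause: str               # free-text label assigned by the coder


def tally(codes):
    """Count coded causes per (level, perspective) cell."""
    return Counter((c.level, c.perspective) for c in codes)


# Placeholder entries for illustration only; not data from the review.
example_codes = [
    CodedCause("article-01", Level.MACRO, Perspective.TECHNO_RATIONAL,
               "databases that cannot be linked"),
    CodedCause("article-02", Level.MACRO, Perspective.SOCIO_POLITICAL,
               "political resistance to data sharing"),
    CodedCause("article-03", Level.MICRO, Perspective.SOCIO_POLITICAL,
               "managers' pre-existing attitudes"),
]

grid = tally(example_codes)
for (level, perspective), n in grid.items():
    print(f"{level.value} / {perspective.value}: {n}")
```

Because a single cause can have both technical and political aspects, a coder could record it twice, once under each perspective, which is one simple way to reflect the interrelations stressed by sociotechnical theory.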

Findings of the systematic literature review

The macro-level: requiem for a paradigm

A first set of problems is situated at the macro level. Several articles identify the New Public Management (NPM) wave of organizational change as an underlying cause for the failure of digital transformation. NPM is a paradigm that lumps together a plethora of policy principles intending to improve public sector performance by making it more efficient (Dunleavy et al., 2005; Reiter and Klenk, 2018). Two main principles came out of the analysis as especially problematic: disaggregation and competition.

Disaggregation

Disaggregating large public sector hierarchies created a diversification of previous government-wide systems and practices across agencies. This has led to a plethora of public bodies with different mandates, cultures and professions (Andrews, 2019; Bailey et al., 2017; Löfgren and Webster, 2020). However, the reviewed literature argues that digitally transformed government agencies should be able to connect seamlessly with closely related programs of other agencies, to present a ‘one-stop’ service provision to citizens, decreasing administrative and cognitive burdens (Asgarkhani, 2007; Kettl, 2018).

Yet the studies show that public agencies still operate in silos without interoperable IT infrastructure and integrated data (Cinar et al., 2018; Malomo and Sena, 2017; Yang et al., 2014). This fragmentation inhibits the integration of datasets into high-quality ‘big data’ that could provide valuable insights and/or predictions for the public sector (Adad et al., 2020; Madanian et al., 2019). As such, the literature suggests that improving inter-organizational information integration should become a key priority; not only to do more, but to do better (Martinez-Mosquera et al., 2019). Current databases are poorly linked, leading to problems when their data is used as input for algorithmic decision-making. Sometimes databases provided by different vendors are not linkable at all (Kuziemski and Misuraca, 2020; Pencheva et al., 2018).

Authors note that few government organizations are willing to invest in linking systems or platforms across departments (Adam, 2020; Zhang et al., 2021). Breaking down data silos takes an active and sustained effort, not to mention a substantial investment with few immediate operational benefits (Deslatte and Stokan, 2020; Leiren and Jacobsen, 2018). Moreover, Zhang et al.'s (2021) case study shows that political power plays can stand in the way of sharing data. Several more critical articles also warn against increased data exchange, often in the light of privacy and security issues of storing and sharing data, as well as mission creep (Bertot et al., 2014; Sun and Medaglia, 2019).

This fragmentation into ‘stovepipes’ that lack interoperability also featured in Fountain's book, which stated that silos stood in the way of joint policy problem solving – a crucial feature of the Virtual State. Fountain remarked that breaking down silos requires a reorganization of institutional arrangements, which still seems to be missing. Reintegration efforts, in some cases mandatory (Giacomini et al., 2018; Mikuła and Kaczmarek, 2019), have been noted in several cases where public administrations explore modernization efforts to work towards a more agile, ‘joined up’ government (Qadadeh and Abdallah, 2020; Soe and Drechsler, 2018). In addition, Yarlagadda (2018: 797) shows how the introduction of DevOps can support integration efforts and foster collaboration. DevOps comprises ‘a set of practices that incorporates IT operations and software development’.

To be sure, a lack of clear legal and organizational guidelines regarding data sharing and reconciliation often stands in the way of safe and secure collaboration, even in countries that did not subscribe to NPM doctrines (Desouza and Jacob, 2017). Several authors do suggest that growing legislation on the access to and reuse of (open) government data might prove helpful (Aula, 2019; Kassen, 2017; Nugroho et al., 2015; Temiz and Brown, 2017).

Competition

The next problematic legacy is the purchaser/provider separation. Under the guise of ‘efficiency through competition’, core areas of state administration were shrunk, and suppliers were diversified. Local systems often have to ‘purchase’ services from each other and are in competition for funds. This hinders the integration and analysis of big data. Governments may find themselves in a situation where the databases of a single system are managed jointly by their own IT department and various other suppliers (Clarke, 2019). When it comes to using the data they have managed to collect, governments are often forced to outsource key IT and data analytical tasks, rather than building in-house expertise (Lobao et al., 2018). Desouza et al. (2020) note that if too much work is outsourced, the organization faces costly support for future deployments, further racking up the cost of digital transformation. Moreover, because of outsourcing, purchasers may have no insight into the algorithms being used, given their proprietary nature, leading to significant information asymmetries (Dickinson and Yates, 2021). Löfgren and Webster (2020) raise issues regarding the control and ownership of, and access to, data, noting that its value is being privatized by commercial interests rather than retained by society via public agencies. This is echoed in literature discussing data colonialism (Viera Magalhães and Couldry, 2021). Several other issues arise, such as contractual disputes, insufficient bidding and tender evaluation processes, and lack of contractor oversight (Patanakul, 2014). Again, this is something that Fountain already lamented, writing (p. 203): ‘Private sector vendors of digital government and professional service firms have aggressively targeted the construction and operation of the virtual state as an enormous and lucrative market to be tapped. (…) outsourcing architecture is effectively the outsourcing of policymaking. Governments must be careful, in their zeal to modernise, not to unwittingly betray the public interest’.

Despite growing criticism regarding NPM reforms in general, the marketization of government services is still increasing (Burkhardt, 2019). A notable exception is Canada, which avoided the IT-outsourcing trend from the very beginning (Clarke, 2019). To be sure, there are some trends of re-governmentalization, involving the reabsorption into the public sector of activities that had previously been outsourced to the private sector (Dunleavy et al., 2005). Still, privatization and public–private partnerships (PPP) are ubiquitous (Kopańska and Asinski, 2019).

Clarke (2019) provides evidence of Digital Government Units (DGUs) as a promising, yet under-researched, response. She explains how the UK government was facing widespread criticism for IT failures, in part due to its largely outsourced IT functions. This culminated in a report tellingly titled ‘Government and IT – a Recipe for Rip Offs: Time for a New Approach’. In response to this report, the UK Government Digital Service (GDS) was introduced in 2011, spurring the creation of DGUs: dedicated in-house units of digital expertise operating at the centre of government. The macro-level failures, causes and tentative potential solutions identified in the literature are presented in Table 2.

Table 2. Macro-level failures, causes and solutions.

The meso-level: ready, willing and able?

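The second arena of importance is the meso-level. A useful concept found in the literature to structure the various meso-level concerns is organizational readiness, discussed here in terms of organizational alignment, organizational maturity and organizational capabilities.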

Organizational alignment and value creation

Organizational alignment refers to how well big data projects can be reconciled with the organization's structure, main activities and strategy (Klievink et al., 2017). It has to do with the need to use big data in the first place (Giest, 2017). Entrepreneurial public officials are quick to see investment in big data as a solution to every possible problem (Criado et al., 2021). Moreover, eager governments are often easy targets for clever salesmen. Newer and larger data-driven projects are not always a good idea. Because of the immense complexity, high costs, and other potential inconveniences of big data projects, the literature suggests that it would be more prudent to first ask whether problems can be solved within current systems (Pedersen, 2018). The legacy of previous IT developments and existing systems is often conveniently forgotten, or the difficulties of integration are downplayed (Choi and Chandler, 2020; de Vries et al., 2018; Gong and Janssen, 2020).

This means that instead of only asking whether an organization can use big data, the more pertinent question is whether it should. Big data projects need to be politically legitimate (serving citizens’ needs) and sustainable, leading to public value creation (Vydra and Klievink, 2019; Yeung, 2018). Meijer (2018) speaks to this, noting that citizens can (and should) be engaged in decisions on the implementation of safety cameras and other IoT devices or smart software to detect criminal behaviour. Normative trade-offs between, for instance, safety and privacy should be made explicitly through democratic debate. An important addition made by Hardy and Maurushat (2017) is that the statutory tasks of public organizations are typically set by laws and regulations. Even if organizations can use big data to fulfil their public duty, they might not be allowed to, given the complex multi-level legal patchwork covering privacy, freedom of information, re-use of public sector data, competition law and intellectual property (Cerrillo-i-Martínez, 2012; Langdon et al., 2012).

Organizational maturity

The second aspect of organizational readiness, organizational maturity, indicates ‘how far organizations have developed towards a state in which they collaborate better with other public organizations (and their IT) and provide more citizen-oriented services and demand-driven policies’ (Klievink et al., 2017: 273). The premise is that more cooperation can make more data available for holistic big data applications that improve public sector performance. Mature organizations will be in the best position to use big data to its full potential. Given the NPM trend towards agencification and diversification of IT systems discussed above, it is no surprise that levels of maturity are generally low, leading to various types of failure. Klievink et al. (2017) provide a five-stage maturity model in their paper, ranging from ‘stove-pipe’ organizations, where IT can only be used to digitize processes such as data entry, to ‘joined-up’ government, where centralized IT facilities support the collection, combination and analysis of big data to create new knowledge or holistic services for citizens.

The literature under review showed that few public sector organizations structurally collaborate with others on activities and information sharing (Klievink et al., 2017; Martens and Zhao, 2021; Micheli et al., 2020; Vezyridis and Timmons, 2017). There are examples of best practices, such as US governments sharing code on GitHub (Mergel, 2015), or Italian municipalities setting up a collaborative network for welfare services (Bonomi et al., 2020), but these remain the exception rather than the rule. Aula (2019) shows that data frictions and larger institutional dynamics are closely connected. Transnational collaboration in particular still seems to be in its infancy (Pencheva et al., 2018; Salas-Vega et al., 2015). The need for (trans)national collaboration was of course exacerbated during the COVID-19 pandemic (Peiffer-Smadja et al., 2020). Finally, some authors argue that collaboration should not be limited to the public sector. Mikhaylov et al. (2018) argue that cross-sectoral collaborations with universities and private parties will also be important for providing high-quality government services, and that missing out on these opportunities for cooperation contributes to the failure to launch big data projects. Others warn of potential problems in using commercial datasets and collaborating with the private sector. Several authors point out risks such as data being potentially more vulnerable to hacking, vendor lock-in, or more practical challenges of managing relationships with multiple stakeholders (Andrews and Entwistle, 2010; Espinoza and Aronczyk, 2021; Patanakul, 2014; Ramon Gil-Garcia et al., 2007).

Organizational capabilities

Organizational capabilities have to do with whether organizations possess the requisite capacities to use big data and create value from it for the organization and society (Klievink et al., 2017). From a techno-rational perspective, this has to do with organizations’ capability to design, develop and maintain suitable IT infrastructure (Abu Bakar et al., 2020).

Liao et al. (2016) empirically show a way out of the IT productivity paradox. In their research on ICT investment, they show that dips in productivity are often due to compatibility issues and the learning curve associated with new technologies. Lim et al. (2018) do show that sectors that heavily rely on data for their operational functioning, such as mobility-oriented organizations, tend to have better systems in place. Another important factor related to capabilities is the combination of techno-rational and socio-political change at the organizational level. Take for instance the research by Garicano and Heaton (2010) comparing the productivity of different precincts of the New York Police Department. The researchers initially found an inverse effect of the digitalization of case files on the number of arrests. Only precincts that accompanied this digitalization with other organizational and cultural changes showed a positive relationship. So, organizations will only make the most of big data when they deal with both techno-rational and socio-political issues simultaneously.

A related finding from the literature is that creating value from big data does not mean blindly following the outcomes of big data analyses. In a case study of late-night traffic in Seoul, Hong et al. (2019) explain that data analyses show that late-night bus routes should be mainly introduced in the (wealthy) Gangnam areas from an efficiency point of view. However, this means governments would exclude poorer areas from public transportation access. Thus, it is key that decisions also include normative considerations. Fountain (2001) had also envisaged this, writing: ‘further rationalization and standardization of the administrative state may allow bureaucrats less opportunity to use their accumulated experience and judgement, or tacit knowledge, to consider exceptional cases that do not conform to standardized rule-based systems’ (p. 206). This is echoed by Porway (2013) stating: ‘As data scientists, we are well equipped to explain the “what” of data, but rarely should we touch the question of “why” on matters we are not experts in’. This of course also touches on wider discussions of technocratic governance (Veale and Brass, 2019), system-level bureaucracies (Bovens and Zouridis, 2002) and the need for humans in the loop in algorithmic decision making (Aldulaimi, 2020; Sousa et al., 2019; Wang et al., 2021). The meso-level failures, causes and potential solutions are presented in Table 3.

Table 3. Meso-level failures, causes and solutions.

The micro-level: fear and loathing in the public sector

The third level of obstacles can be categorized at the micro level, during the seemingly mundane interactions between people, and in day-to-day decision-making processes. Although public sector managers are typically the ones responsible for implementing and using big data, they are often overlooked (Nielsen et al., 2019).

The attitudes of public sector managers

The first issue that came up in the literature is that despite the pervasiveness of big data in current society, for many public managers, it remains unclear what this technology has to offer public administration. In complex and uncertain decision-making processes, people typically rely on pre-existing frames. Hu (2017) poignantly points out that public managers are underprepared for governing in the digital age because they lack key knowledge. He suggests integrating information management, use and technology into more training programs for managers. Research shows that public managers and even Chief Information Officers (CIOs) often decide whether or not to use AI and other technology based on pre-given attitudes and frames (Criado et al., 2021; Guenduez et al., 2020). These frames can range from techno-enthusiasm to techno-scepticism. Moreover, cognitive biases, such as an attachment to the status quo, or overconfidence in one's own judgement, can also affect technology preferences (Moynihan and Lavertu, 2012). A blind faith in technology might blind public officials to the negative qualities of large projects, leading them to overlook the benefits of older, cheaper or simpler solutions (Guenduez et al., 2020).

On top of this, public sector organizations are left vulnerable to what Goldfinch (2007) calls ‘lomanism’. Lomanism is ‘the enthusiasm that sales representatives and other employees develop for their company's products’ (Goldfinch, 2007: 921). When faced with private sales consultants, public sector officials do not necessarily have the technical expertise to assess how feasible the presented plans and solutions are, which calls for more digital literacy among public managers (Mu and Wang, 2020; Wirtz et al., 2019). Casalino et al. (2020) argue that all profiles involved should at least be given some insight into how data is being used and analysed, through entry-level training on which data is collected and how it is analysed. Gray et al. (2018) even suggest expanding this to a general ‘data infrastructure literacy’.

Moreover, some managers actively seek out grand-sounding projects, as they might be more likely to receive funding and get approval. This can lead to governments investing in obfuscated concepts, rather than necessary basic infrastructure (Sanchez et al., 2017). Take for instance a real-time dashboard infused with artificial intelligence to monitor and steer traffic. Whereas only a handful of governments globally are likely to have the data infrastructure needed to support such a system, this will not keep suppliers from convincing governments to buy such technology (Maciejewski, 2017). Ironically, upon the failure of such projects, the suppliers can blame it on the lacking infrastructure.

Interactions between data scientists and public managers

A related important consideration is a specific interaction between data scientists and public managers. It is commonly suggested that big data leads to better information and therefore, almost automatically, better decisions. This implicit functional theory overlooks the interpersonal interactions that shape the decision-making process. The supply-side problem is that no matter how sophisticated their analyses and poignant their conclusions are, the evidence provided by data scientists is just not taken up by public managers (Kettl, 2018). These are of course well-known criticisms from the field of evidence-based policy (Newman, 2016). To mitigate this, visualization and representation tools such as dashboards could help decision makers understand algorithms and data analyses (Alshahrani et al., 2021; Busuioc, 2020; Kempeneer, 2021).

There is also a demand-side problem. Policy officials do not always have the patience for the methods and rigor of data analysts. They often do not understand the language data scientists are speaking (Jarrahi et al., 2021; Kettl, 2018). Because of this, data scientists have considerable power in framing insights from big data, potentially leading to important power shifts. Finally, public managers will often neglect the information that they are given, simply because it does not suit their (political) interests, or goes against their instincts (Deschamps, 2021).

Another important part of this puzzle relates to developing and retaining IT experts. Finding the right profiles of data scientists, data engineers, database experts, and other profiles to work with the data on the micro-level is no mean feat, especially given the overall shortage of these profiles in the labour market. Although we know very little about the practices of hiring and retaining data scientists in the public sector, there are some worrying signals (Brooks-Bartlett, 2018). A key point of contention is the high salaries of data scientists, which are often prohibitive for public sector organizations; outsourcing is typically cheaper (Gantman, 2011).

Leadership and perceived success

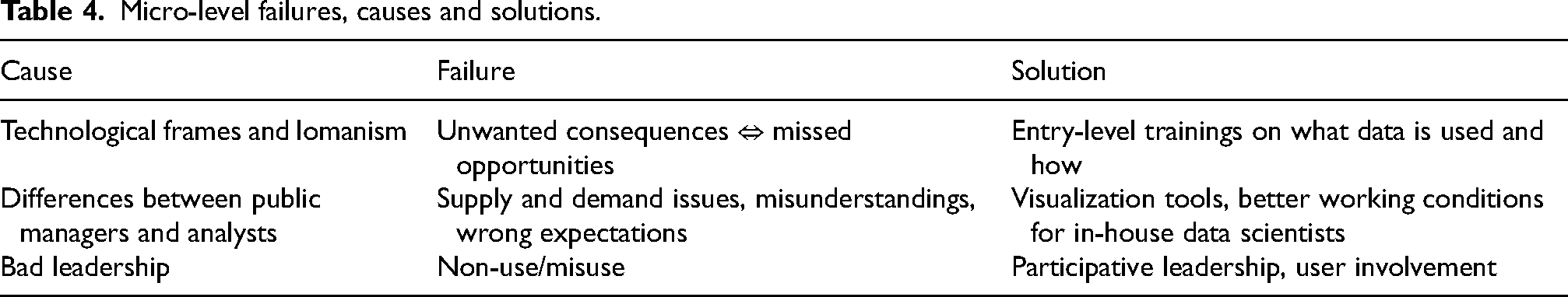

This brings us to a final important consideration: the impact of leadership on the success of data science in the public sector. Despite leadership being identified as a key determinant of the success of digital transformation in the private sector, little literature could be identified that discussed this topic for the public sector (Sow and Aborbie, 2018). The articles that did touch on this claim that good leadership is necessary to create a common sense of purpose and shared vision (Hansen and Nørup, 2017; Sow and Aborbie, 2018). Articles suggest that CIOs increasingly need to shift from a technical/operational focus to a strategic/management focus, along with paying more attention to disaster recovery and collaboration (Green, 2003; Hooper and Bunker, 2013; Pang, 2014). Brock and von Wangenheim (2019) state that the perceived success of data science projects is arguably as important as actual success or failure, as perceptions can work as self-fulfilling prophecies. Even if big data projects lead to better results or information on paper, if the people working with these results do not have faith in them, failure will eventually ensue. Hansen and Nørup (2017) show how participative leadership can increase the chances of success in IT and big data projects in a Danish multisite hospital. Tangi et al. (2021) show that managerial activities are important to overcome organizational barriers. The micro-level failures, causes and potential solutions are presented in Table 4.

Table 4. Micro-level failures, causes and solutions.

Conclusion & discussion

In 2001, Jane Fountain explained that to become a Virtual State, the public sector needed urgent reform to enact digital technologies, such as the internet, in an efficient but also fair manner. Two decades later, this digital transformation has seen mixed success. This article answered the question of which macro-, meso- and micro-level factors contribute to this failed digital transformation in the public sector, and which tentative solutions are presented (if any). Our findings from a review of 106 articles point to a combination of techno-rational and socio-political factors (summarized in Tables 2 to 4).

The first important overarching conclusion is that many of these problems are not new. They bear an eerie resemblance to the problems set out by Fountain two decades ago. Thus, the overarching problem seems to be that the public sector does not learn. For instance, it continues to pursue efficiency over normative goals such as appropriateness, fairness, or legitimacy (Lobao et al., 2018; Yeung, 2018). Attempts to save money through automation and outsourcing to the private sector remain pervasive. The IT infrastructure of the state is hollowed out and organizational structures and cultures are unfit to support a democratically legitimate digital transformation. In some respects, problems have gotten worse. Where Fountain (2001) merely warned of data ownership politics to come, the literature now shows that (partially due to private sector outsourcing) problems like data colonialism arise (Viera Magalhães and Couldry, 2021). This could also be linked to growing concerns about data justice (Bertoni et al., 2022).

Some of the articles under review do point towards tentative solutions or discuss cases of success. Disaggregated government agencies have found successful ways to share data and set up collaborative structures (such as DGUs) (Clarke, 2019). In part this has been spurred by open data legislation, sometimes ensuring the protection of citizens’ privacy (Hardy and Maurushat, 2017). The literature does agree that the most necessary reform is organizational and cultural change – though few articles elaborate on what this looks like exactly. Tentative success is found in having humans in the loop with substantive expertise to review algorithmic recommendations and other data-driven findings (Busuioc, 2020; Wang et al., 2021). Moreover, entry-level training to ensure that everyone in the organization knows what data is being collected and how it is being processed has been shown to be helpful. From a techno-rational point of view, adopting DevOps practices to modernize legacy IT systems has proven useful, along with building more in-house IT and data analytics expertise (Yarlagadda, 2018).

Ultimately, a key piece of advice that many of the articles put forward is to reflect on the need to use data and digital technologies in the first place (Giest, 2017). In many cases, for instance when data quality or infrastructure is insufficient or, more notably, when using big data simply does not create value, it is better to leave it alone (Gong and Janssen, 2020). A slower pace can lead to higher long-term efficiency, but also to better quality. With ever-growing concerns about the bias and discrimination that big data can bring, governments should not try to build the Virtual State overnight. It goes without saying that if data is not captured, stored, cleaned or processed well, and algorithms are trained with this suboptimal data, the outcomes are likely to be suboptimal as well. As the saying goes: ‘garbage in, garbage out’. This is again reminiscent of Fountain's (2001: 203) conclusion, which seems to be a hard – yet increasingly urgent – message to take to heart: ‘Governments must be careful in their zeal to modernize, not to unwittingly betray the public interest. It will remain the province of public servants and elected officials to forge long-term policies that guard the interests of citizens, even when those policies seem inefficient, lacking in strategic power, or unsophisticated relative to “best practice” in the economy’.

To be sure, this research has several limitations. Firstly, because only English-language articles were selected for the review, the findings are probably most suited for the Global West. Future research might see which findings hold in other countries, and which additional causes, failures and solutions present themselves. Secondly, we did not include the search terms ‘innovation’ or ‘smart city’. Though some of the articles under review touched on these concepts, there is probably a greater body of research in these fields that could contribute even more to our understanding of digital transformation. More generally, though a rigorous selection process was followed, it is possible that some key articles and insights have not been considered in this review. As such, these findings should not be seen as exhaustive, but rather as a framework that can easily be expanded by future research.

Several of the factors identified in this paper require further investigation. On the macro level, more work is needed to understand how the difficulties created by (the legacy of) NPM can be overcome, and which further reforms in the public sector are most suitable to facilitate digital transformation. Tied to this, on the meso-level, it is imperative to get a better understanding of the optimal and necessary conditions for successful information sharing and collaboration between government organizations, and whether these require renewed legal frameworks. Additionally, insights are needed on how to reduce an overreliance on external consultants, for instance, by studying how skilled data scientists can be hired and retained by government organizations internally. Finally, on the micro-level, more research is needed on the role of leadership, as well as the attitudes of public sector managers and workers.

For now, the public sector might first have to be disappointed by the modest advances in digital transformation. We would suggest that public sector organizations take on digital transformation one data set and policy question at a time. Though further research remains necessary, the holistic framework provided in this article may be a good starting point to understand the many (and often interrelated) techno-rational and socio-political drivers of the success and failure of digital transformation in the public sector. Rather than hoping for the Virtual State to be built overnight, the public sector should first be concerned with laying a good foundation.

Footnotes

Author's Note

Frederik Heylen is also affiliated with Datamarinier, Antwerp, Belgium.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.