Abstract

As a key constituent of China's approach to fighting COVID-19, Health Code apps (HCAs) not only serve the pandemic control imperatives but also exercise the agency of digital surveillance. As such, HCAs pave a new avenue for ongoing discussions on contact tracing solutions and privacy amid the global pandemic. This article attends to the perceived privacy protection among HCA users via the lens of the contextual integrity theory. Drawing on an online survey of adult HCA users in Wuhan and Hangzhou (N = 1551), we find that users’ perceived convenience, attention towards privacy policy, trust in government, and acceptance of government purposes regarding HCA data management are significant contributors to users’ perceived privacy protection in using the apps. By contrast, users’ frequency of mobile privacy protection behaviors has limited influence, and their degrees of perceived protection do not vary by sociodemographic status. These findings shed new light on China's distinctive approach to pandemic control with respect to the state's expansion of big data-driven surveillance capacity. Also, the findings foreground the heuristic value of contextual integrity theory for examining controversial digital surveillance in non-Western contexts. Put together, our findings contribute to the thriving scholarly conversations around digital privacy and surveillance in China, as well as contact tracing solutions and privacy amid the global pandemic.

Keywords

Introduction: Health Code apps as digital surveillance instrument

Across different national contexts, contact tracing apps have been proven effective in reducing the spread of COVID-19 (Urbaczewski and Lee, 2020). China was the first country to mandate its contact tracing solution, Health Code apps (HCAs), and has upheld nationwide adoption even though domestic COVID cases have been relatively low since March 2020. In response to the initial outbreak, local governments promptly worked with Alibaba and Tencent to develop their own HCAs, then enforced them on local residents and constantly updated them in line with the central government's guidelines for stringent COVID-19 transmission control. Despite data discrepancies among local versions and a slow unification process, HCAs are widely considered essential to the claimed success of domestic pandemic control and a token of accomplishment in China's digital governance (Chen et al., 2022).

Further, HCAs allude to China's distinct approach to digital surveillance, which has been consistently emphasized and enhanced across central and local governments since the late 1990s. Notably, the state has shown unyielding interest in big data technologies such as artificial intelligence, cloud computing, and facial recognition over the past decade (Su et al., 2021). With substantial support from the private sector, a new generation of digital surveillance instruments is built on data-driven algorithms and orchestrated to bolster a more encompassing “architecture of social management and propaganda” (Jiang and Fu, 2018: 382). The social credit system (SCS) is an exemplary case. Lauding big data technologies as a symbol of modernization and a solution to a variety of economic and social issues, the state has turned to Internet giants like Alibaba and Tencent to collaboratively develop SCS based on their technological expertise and data assets (Lv and Luo, 2018). This relationship has made commercial platforms (e.g., Alipay and WeChat) the fundamental infrastructure of SCS, since vast amounts of customer data are aggregated for centralized data analytics on every citizen (Jiang and Fu, 2018; Liang et al., 2018). The resulting social credit ratings set seemingly objective criteria by which the Chinese government can legitimize its regulation of people's Internet-based and Internet-enabled activities in line with preferable social norms and moral values (Kostka, 2019).

Such technical foundation and surveillance approach are further stretched to the design and implementation of HCAs in China. HCAs now primarily function as a proxy to tell, by color-based codes, whether people have been exposed to or have already contracted COVID-19 (Liang, 2020). Like SCS, these apps are based on commercial platforms and serve as data-driven tools that first send self-reported individual data to local data systems. Then, the data is analyzed against a plethora of individual digital records to assign color codes (Yang et al., 2020). Meanwhile, the apps aggregate and channel the processed data to state-level databases for strengthening centralized political power and standardizing local governing practices (Sun and Wang, 2022). In this sense, the everyday use of HCAs connects app users to data systems which synthesize social information from authorities responsible for public health, public transportation, border control, community governance, etc., and ultimately facilitate nationwide restoration of economic and social order.

Thus, we contend that HCAs are another staple instrument that manifests China's endeavors to incorporate big data technologies into its ever-expanding surveillance capacity. Apart from their assumed role in containing virus transmission, HCAs have turned individual health data like medical records and biometrics into a critical asset for reinforcing political control and social management. These apps show how the design and implementation of China's digital surveillance are inextricably tied to the power interplays that shape big data technologies between the central and local governments, as well as between the public and private sectors. Although HCAs and other digital surveillance instruments have earned a significant degree of public support in China (Su et al., 2021), they have posed significant challenges to individual privacy rights on account of the state's limited interest in and capacity for privacy regulation (Lv and Luo, 2018). Citizens’ privacy protection is often deemed less politically consequential as the relevant regulations typically fall short of addressing the public's lack of privacy trust in both commercial and non-commercial online services (Luo and Lv, 2021; Wang and Yu, 2015).

Among the proliferating body of research on pandemic surveillance, several studies have examined the technical architecture and political underpinnings of HCAs, and the public perception during the early launch stage, albeit with limited attention paid towards user privacy (e.g., Liang, 2020; Liu and Graham, 2021; Meng et al., 2021; Sun and Wang, 2022). Also, these studies are yet to fully consider the specific use context of HCAs and extensive user experience against the evolving backdrop of China's digital surveillance. Articulating how Chinese users engage with the privacy dimension of HCAs after mass adoption not only casts new light on the escalating tension over digital privacy in China, but also brings unique evidence to the crucial debates on contact tracing solutions and privacy worldwide (Bengio et al., 2020).

To address the research gaps, this article focuses on Chinese users’ perceived privacy protection of HCAs contextualized in everyday use, especially to chart influential factors from the use context and interpret their respective effects on the privacy perception. We first elaborate on the use context of HCAs and identify a set of factors affecting users’ perceived privacy protection based on the theory of contextual integrity. Then, leveraging survey data from 1551 adult HCA users in Wuhan and Hangzhou, we find a relatively high level of perceived privacy protection among the users, and interpret the substantial impacts of users’ perceived convenience, attention towards privacy policy, trust in government, and acceptance of government data management on the privacy perception. Moreover, we discuss how our findings contribute to ongoing conversations around digital privacy and surveillance in China, as well as contact tracing solutions and privacy amid the global pandemic.

Literature review: A unique use context of HCAs

Examinations of contact tracing apps have been primarily conducted in Western societies. Prior research has delved into the degree of people's willingness to adopt the apps and underscored public concerns over digital privacy and data security as the major hindrance to mass adoption (e.g., Hargittai et al., 2020; Urbaczewski and Lee, 2020). Nonetheless, this analytical approach cannot be simply replicated in our case because the use context of HCAs differs from that of their Western counterparts.

First, the mandatory deployment of HCAs conditions a high diffusion of contact tracing apps in China, whereas it confines users’ opportunity to opt out of HCA adoption and the underlying data collection process. Specifically, when using HCAs for the first time via Alipay or WeChat, users can only agree to a user authorization form that allows personal information to be collected for code generation; besides, canceling or adjusting the authorization would cause HCAs to malfunction, leaving users with considerable difficulties in daily life. Second, the actual user experience of HCAs is not solely determined by data. At the practical level, using HCAs involves human inspectors whose primary duty is to execute code-based passage rules, but the rigorousness of rule enforcement could vary significantly from place to place and time to time (Liu and Graham, 2021). For example, inspectors at a place where an uptick in COVID cases is observed would often adopt a “no code, no pass” rule while strict lockdown measures are in effect, but nevertheless turn perfunctory on code checking soon after the measures are relaxed. Third, despite palpable privacy risks attached to rushed innovations like contact tracing apps, there have been shifts in the political and public discourses to justify public health outweighing individual privacy (Newlands et al., 2020). This is particularly salient in China as the central government marshals its media system to frame the imperative of adopting HCAs and other digital surveillance instruments as a collective value and benefit (Kostka, 2019; Liu, 2021).

This context has subjected users to mandatory information disclosure and data sharing, which may result in social repercussions at the individual level. Yu (2020) argues that HCAs have enabled unprecedented practices of biometric surveillance, which is conducive to gendered information disclosure against women in China. Meng et al. (2021) find that HCAs strengthen social governance to control the flow of people between different areas, yielding regulated physical mobilities that discriminate against those socially and technologically dependent on others. Sun and Wang (2022) portray HCAs as a social infrastructure that repurposes the existing data systems for deterring users from violating pandemic control rules and modifying individual behaviors in accordance with a desirable mode of citizenry. As shown in the case of SCS (Kostka, 2019; Kostka and Antoine, 2020), how users perceive and respond to the privacy dimension of digital surveillance instruments in China is contingent on their individual characteristics, beliefs, and user experience that is tied to the instruments’ functionality. However, it remains unclear how these factors are related to the use context, and how they affect users’ perceived privacy protection.

Contextual integrity in the use of HCAs

We draw on the theory of contextual integrity (CI) to interpret how users engage with the privacy dimension of HCAs in China. Nissenbaum (2004, 2010) coins and develops CI to better steer the privacy debates surrounding public surveillance and emerging technologies. CI sheds light on the role of contexts in characterizing the nature of personal information and the norms of information processing (e.g., transmission and disclosure). Contrary to applying a homogenous understanding of privacy, CI allows for deliberate accounts of whether health information like HCAs data is legitimately collected, aggregated, used, and reused in specific use contexts (Winter and Davidson, 2019). In the same vein, CI challenges the dichotomy of public/private data by highlighting “a multiplicity of information types” premised on contextual specificity and refuting any stoppage of information flows as the only parameter contouring privacy (Nissenbaum, 2019: 230).

Specifically, according to Nissenbaum's (2019) fundamental theses of CI, framing privacy rests on whether personal information flows are appropriate in relation to privacy norms contingent on the context. These norms, presented in varying forms of social standard (e.g., explicit or implicit), are set to “describe, prescribe, proscribe, and establish expectations for characteristic contextual behaviors and practices” (227). As such, privacy is manifested and protected so long as information flows meet people's expectations governed by the norms in a given context. For instance, Vitak and Zimmer (2020) ascribe high US public acceptance of contact tracing solutions proposed by Google and Apple to the accordance between technical features of data management and people's privacy expectations. On the flip side, people may consider their privacy violated when information flows are not aligned with the norms. For instance, Hargittai et al. (2020) highlight that Americans’ resentments toward government contact tracing technologies stem from concerns over potential long-term citizen surveillance attached to the technology use. Viewing HCAs as a digital surveillance instrument, we treat users’ perceived privacy protection of HCAs as synonymous with their reflection on privacy norms that dictate information flows under a public surveillance circumstance. In this vein, the perceived privacy protection denotes the degree to which users’ privacy expectation is fulfilled when being aware of the government's surveillance goals.

The fundamental theses of CI (Nissenbaum, 2019) also set out five key parameters defining privacy norms (subject, sender, recipient, information type, and transmission principle) and bring attention to specifying their respective values in the shaping of norms. Meanwhile, the theses suggest that interrogation of the ethical legitimacy of privacy norms be tied to examining contextual layers, including interests of affected parties, ethical and political values, and contextual functions, purposes, and values. Detailing and synthesizing all these parameters and layers is demanding work far beyond this article's scope. Instead, we leverage the theses as a heuristic perspective to map out a set of key individual-level factors affecting HCA users’ reflection on the privacy norms.

Privacy policy attention and protection behaviors

The transmission principle refers to the constraints on “who sent the information, who received it, about whom it is, what types of information are involved” (Nissenbaum, 2019: 228). Among the parameters defining privacy norms, the transmission principle often plays a pivotal role in rendering information flows appropriate with respect to the context and involved actors. In the context of contact tracing apps, users recognize and comprehend transmission principles by reading privacy policies, which specify steps and rules of data sharing (Bengio et al., 2020). Besides, users’ consent to privacy policies conditions the appropriate flow of information from sender to recipient (Nissenbaum, 2019).

Existing research often contextualizes users’ engagement with privacy policies in commercial Internet services and has demonstrated a pervasiveness of ignoring policies (e.g., Bakos et al., 2014). Even among users who choose to read the policies, the attention is often insufficient despite the intensive privacy concerns they bear (Obar and Oeldorf-Hirsch, 2020). Yet, explicit, succinct, and readable privacy policies are critical to promoting trust in contact tracing apps, and hence society-wide adoption in a timely manner (Bengio et al., 2020). The Standardization Administration of China (SAC) has required that the data collection process via HCAs be premised on users’ consent or authorization along with provision of privacy policies (2020a). As such, we postulate that as HCA users devote sufficient attention to the policies, they would form positive views of their privacy in using the apps.

H1: The degree of HCA users’ attention paid to privacy policies is positively associated with their perception of privacy protection.

In parallel to the transmission principle, actors that include subject, sender, and recipient play another pivotal role in driving the formation and shift of privacy norms. Notably, the respective value of and the relationship between actors account for the very fabric of context (Nissenbaum, 2004, 2019). Applied to the use of HCAs, subject and sender largely overlap in that most Chinese citizens report personal information for themselves, except those lacking digital access and know-how, e.g., seniors. Moreover, the recipient is government agencies responsible for public health administration and Internet surveillance across the central, provincial, and local levels (SAC, 2020b).

To chart the values of actors, one must refer to the contextual ontologies that prescribe how actors’ identities are performed; also, it is essential to factor in the use aspect that concerns a variety of practices around the information flow (Gerdon et al., 2021; Nissenbaum, 2019). In this regard, it is important to note HCA users’ mobile privacy protection behaviors. Studies centered on commercial Internet services, like social networking sites, have shown that users’ practice of privacy protection can reflect the degrees of individual privacy skills and caution against privacy risks on mobile devices (Bright et al., 2021; Büchi and Latzer, 2017; Hargittai and Marwick, 2016). Besides, HCAs are released as mini programs of Alipay and WeChat, two commercial super apps that mediate a myriad of Chinese citizens’ online activities and have become integral to the Chinese mobile experience (S&P Global, 2019). That is to say, the everyday practice of HCAs is built into app environments that are rather familiar to most Chinese users.

People's frequent privacy protection behaviors indicate their high technical familiarity with mobile privacy, which leads them to engage in mobile activities with fewer privacy concerns (Bright et al., 2021; Park, 2013). Along this line of studies, we suggest that users’ mobile privacy protection behaviors have significant implications for their perceived privacy protection in using HCAs. It is possible that the users’ adoption of privacy protection behaviors regarding their everyday mobile activities is instrumental in shaping their perception of HCAs’ privacy protection.

H2: The frequency of HCA users’ mobile privacy protection is positively associated with their perception of privacy protection.

Sociodemographic status

From the perspective of CI, actor values are usually identified with respect to exact occupations or jobs in a given context, such as patient and doctor in a hospital (Nissenbaum, 2019). By contrast, users’ professional background is non-specific to the contexts of contact tracing apps. Sociodemographic status becomes a more influential value to how the subject/sender defines his or her privacy expectation. For instance, drawing on survey data about American adults, Hargittai et al. (2020) find older populations are less willing to adopt contact tracing apps, whereas those better educated are more willing.

Prior research suggests that Chinese perception of online privacy varies by sociodemographic status. For commercial Internet services, males are in general more confident in protecting their online privacy than females (Wang and Yu, 2015). Compared with rural women with limited educational attainment, female college students are more aware of the technical aspect of online privacy and more capable of managing their information disclosure (Wang et al., 2020). For digital surveillance instruments, those who are older, wealthier, better educated, and living in urban areas are inclined to hold supportive views while downplaying the privacy risks of SCS (Kostka, 2019). Also, users having higher incomes and higher educational attainment are most receptive to the norms embedded in SCS, thereby yielding behavioral changes for individual benefits (Kostka and Antoine, 2020). Combining these findings, we posit that certain social groups, i.e., male users, older generations, the wealthy and well-educated, and urban residents, tend to perceive high privacy protection when using HCAs.

H3: Male, older, wealthier, better educated, and urban HCA users are more likely to perceive high privacy protection.

Trust in government

In general, whether people trust the government strongly shapes their attitudes towards, and willingness to adopt, contact tracing apps distributed by government (Bengio et al., 2020; Hargittai et al., 2020). Specifically, trust is pivotal to mitigating people's perceived privacy risks attached to contact tracing apps, thereby leading to an increase in adoption rate (Duan and Deng, 2022). This role of trust is also evident in the context of HCAs from the CI perspective. The relationship between actors in using HCAs is rather straightforward: as the subject/sender, Chinese citizens adopt the apps in accordance with the mandatory deployment and report health, travel, location, and other types of personal information to local and central governments, the recipient. That said, this relationship does not simply derive from a top-down administrative order. Rather, its formation and dynamics are subject to contextual values whose connection with privacy norms is critical to evaluating the appropriateness of information flows (Nissenbaum, 2010). Trust in government is one such value critical to judging whether novel flows like the data transmission of contact tracing apps are acceptable.

Prior research has documented the shortages of privacy trust in government and public service among Chinese citizens for undue data collection and application (Wang and Yu, 2015). Especially for younger generations, concerns of government's infringement on privacy rights would result in their critical views of digital surveillance instruments (Kostka, 2019). Nonetheless, with the state's effective response to COVID-19, the post-pandemic Chinese society has seen increased trust in government and increased stratification with different levels of government body (Wang, 2021). In light of the public support for HCAs (Liu, 2021) and the stipulation of HCA privacy policies (SAC, 2020a), we assume a positive relationship exists between the users’ trust in government and their perception of privacy protection.

H4: The degree of HCA users’ trust in government is positively associated with their perception of privacy protection.

Data management purposes and perceived convenience

In addition to values like trust, contextual purposes matter tremendously to norm-based governance of information flows and evaluation of privacy practice (Nissenbaum, 2010, 2019). In the case of contact tracing apps, the purposes are closely intertwined with the interests of recipient and the political values. For instance, surveying Germans before and after the COVID-19 outbreak, Gerdon et al. (2021) point to an increased acceptance of health data sharing for government's infectious disease control amid the global pandemic. Such a change of attitude is due to clear and acceptable purposes of data use set by government and alludes to the public's great interests in government's responsible data collection and processing (Ienca and Vayena, 2020).

Applied to the use of HCAs, the purposes are to “control risks and to restore economic and social mobility” (Meng et al., 2021: 397), which have been well received among Chinese citizens. As discussed above, these purposes overlap with the aim of the state's digital surveillance approach: maintaining political and social stability. Simultaneously, the purposes cater to the interests of the subject/sender in returning to normal life after months of lockdown and strict controls on individual mobility. Moreover, enacting the purposes is also accompanied by a series of privacy protection practices centered on data security and confidentiality (SAC, 2020a). We thus assume that as they subscribe to the purposes of government data management that legitimize data use for pandemic control only, HCA users would perceive high privacy protection in using the apps.

H5: HCA users’ acceptance of government purposes regarding HCA data management is positively associated with their perception of privacy protection.

On the other hand, in the context of HCA use, the interests of the subject/sender cannot be reduced to restoring the rhythm of life at the practical level. Perceived convenience, defined as the time and effort citizens perceive to be required to use HCAs (Chan et al., 2010), is one salient interest because people must rely on HCAs to pass checkpoints and this has become a normalized routine for everyone in China (Ricci, 2021). Convenience plays an important role in facilitating the mass adoption of contact tracing apps by addressing concerns over app specifications like battery consumption, update procedure, and background running (Trang et al., 2020). The design of HCAs further boosts convenience by plugging into the mature and widely used mini program ecosystems of Alipay and WeChat so that users would have high technical familiarity with the apps (Rehse and Tremöhlen, 2021).

Yet, the benefits brought by convenience may lead to people's negligence of privacy risks and willingness to disclose more information in the process of adopting new technologies (Lau et al., 2018). Moreover, human inspectors often appear in various use contexts of HCAs. Their lax implementation of code-based passage rules, e.g., skimming through multiple codes at the same time, would enhance people's perceived convenience through quicker passage but undermine people's confidence in the apps (Liu and Graham, 2021). It is possible that as people perceive greater convenience in using HCAs, they would find their privacy protected to a lesser degree.

H6: The degree of HCA users’ perceived convenience is negatively associated with their perception of privacy protection.

Method and data

Participants and procedure

This article used online survey data of adult HCA users in two Chinese metropolises, Wuhan and Hangzhou, both megacities with populations of over ten million. The cities were selected on purpose: the first confirmed COVID-19 case was found in Wuhan and the entire city was under lockdown for nearly three months soon after. With celebratory tones, Chinese media often portray what people have experienced in Wuhan as the state's great sacrifice and tremendous victory against the pandemic (China Daily, 2020). By contrast, even though the pandemic did not cause serious local outbreaks, Hangzhou was the first metropolitan area where HCAs were introduced and massively adopted in China. Hangzhou was also the first to experiment with an expanded use of HCAs in other fields. Besides, the city is home to the headquarters of Alibaba, which was first requested to collaborate with the National Health Commission and the State Council to roll out HCAs.

The survey was fielded online using a self-administered and blind opt-in format from January 20 to February 1, 2021, roughly one year after Wuhan's lockdown. We adopted a stratified quota sampling method to achieve diversity regarding age, gender, hukou, and education among respondents. The sampling process was conducted via Diaoyanba, a China-based survey company with a representative pool of millions of respondents. Aiming for 1500 valid survey responses (750 from each city), this process began with sending 3000 email invites in Wuhan and 2500 email invites in Hangzhou based on our sampling approach, each city's sociodemographic distribution, and respondents’ activeness (see Table 1). In total, 2930 invites were answered, which led to a response rate of 53%, and 1622 respondents finished the questionnaire. The analytical sample included 1551 respondents (N = 1551) after excluding those who were under 18 or who reported “uncertain” in terms of reading HCA privacy policy. All the respondents were assured of confidentiality and anonymity, and that their reported data would be used only for academic purposes.
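The recruitment figures reported above can be checked with a few lines of arithmetic. The sketch below uses only the counts stated in the text; the variable names are ours and purely illustrative, and the 71 excluded cases are simply the difference between completed questionnaires and the analytical sample.

```python
# Counts as reported in the text (variable names are illustrative).
invites_wuhan = 3000
invites_hangzhou = 2500
answered = 2930           # invites that were answered
completed = 1622          # respondents who finished the questionnaire
analytical_sample = 1551  # respondents retained for analysis

total_invites = invites_wuhan + invites_hangzhou
response_rate = answered / total_invites
excluded = completed - analytical_sample

print(f"total invites: {total_invites}")
print(f"response rate: {response_rate:.1%}")
print(f"excluded after screening: {excluded}")
```

The response rate here is answered invites divided by all invites sent across both cities, which matches the 53% reported in the text.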

Table 1. Survey sample sociodemographics in comparison with Wuhan and Hangzhou city census data.

Note. Income information was not publicly released in either city's census report.

Source: Wuhan Census Bureau, http://tjj.wuhan.gov.cn/ztzl_49/pczl/202109/t20210916_1779157.shtml

Source: Hangzhou Census Bureau, https://www.hangzhou.gov.cn/art/2021/5/17/art_1229063404_3872501.html

Measures

Dependent variable

Independent variables

Table 2. Sociodemographic status of survey respondents (n = 1551).

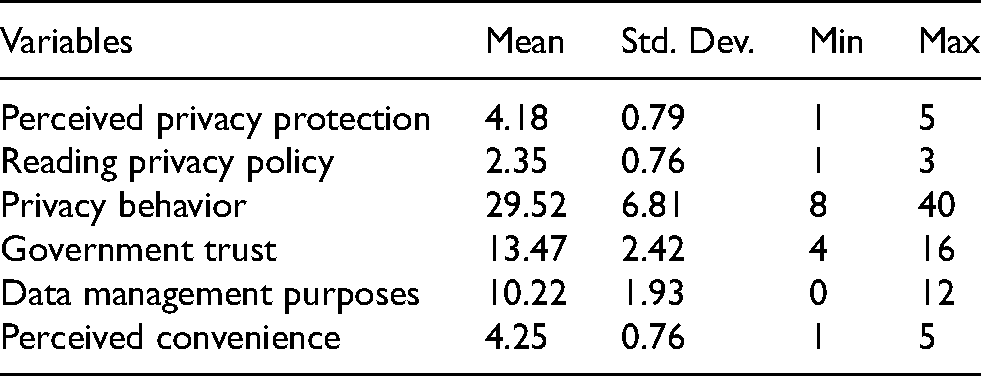

Table 3. Summary of descriptive statistics (n = 1551).

Results

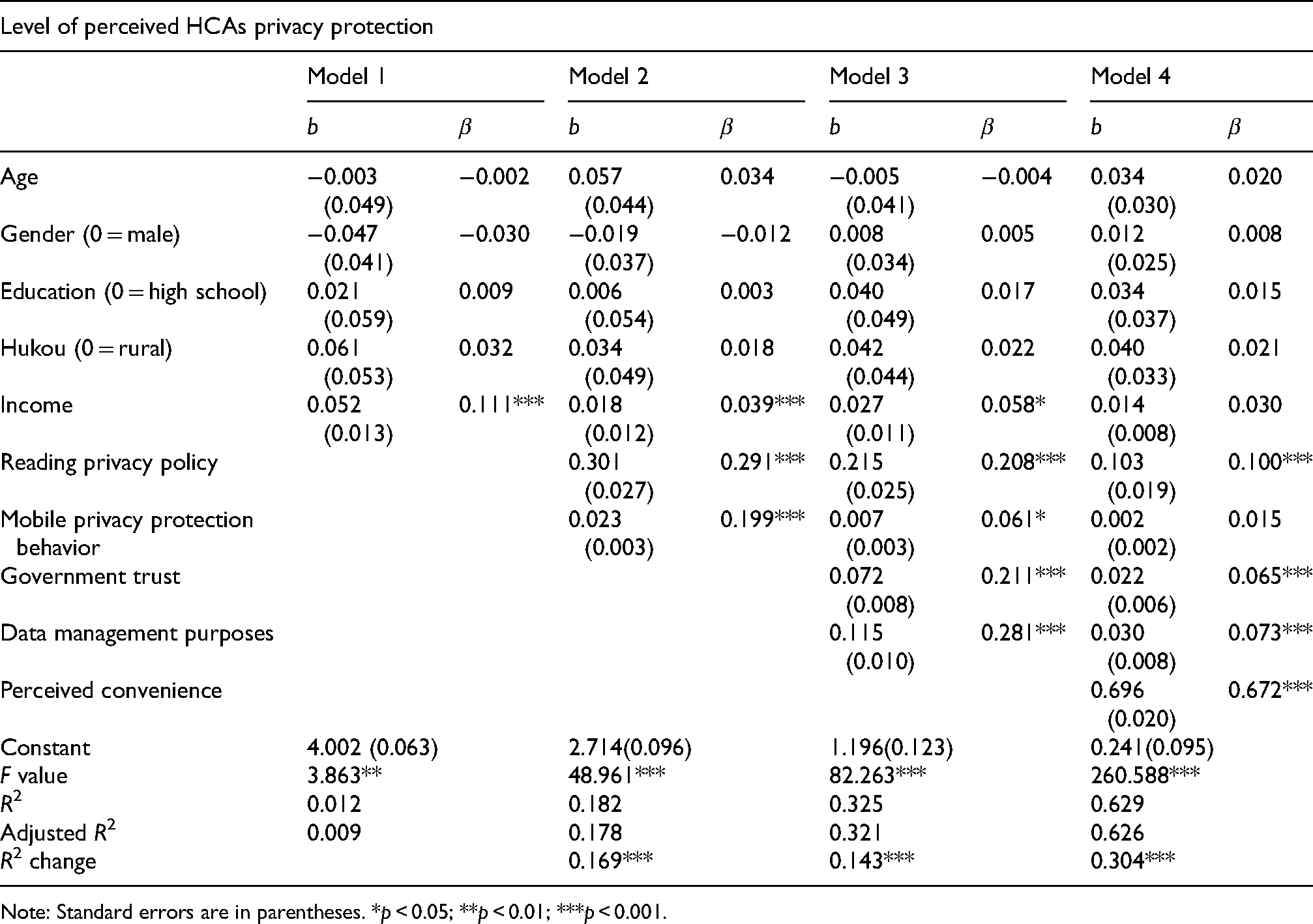

We applied the stepwise regression procedure to generate four models, in which the aforementioned independent variables were entered in sequence so that their respective association with the dependent variable and contribution to model effects can be observed (Henderson and Denison, 1989). Table 4 reports the results of a series of multiple regressions addressing hypotheses concerning how sociodemographic status, attention towards privacy policy, privacy protection behaviors, trust in government, acceptance of government data management purposes, and perceived convenience were related to perceived privacy protection of HCAs. When all the variables were entered, they collectively accounted for 62.9% of the variance in the dependent variable (R² = 0.629, F = 260.588, p < 0.001).
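To make the sequential entry of variable blocks concrete, the following is a minimal sketch of fitting nested ordinary least squares models and tracking R² as each block is added. It is not the code used for this study: the data are synthetic placeholders generated on the spot, the helper names (`r_squared`, `blockwise_r2`) are hypothetical, and the block sizes only mirror the grouping of variables described above.

```python
import numpy as np

def r_squared(X, y):
    """R^2 of an OLS fit of y on X; an intercept column is added here."""
    X1 = np.column_stack([np.ones(len(y)), X])
    beta, *_ = np.linalg.lstsq(X1, y, rcond=None)
    resid = y - X1 @ beta
    ss_res = float(resid @ resid)
    ss_tot = float(((y - y.mean()) ** 2).sum())
    return 1.0 - ss_res / ss_tot

def blockwise_r2(blocks, y):
    """Enter predictor blocks cumulatively; return (name, R^2, change in R^2)."""
    results, prev, cols = [], 0.0, []
    for name, X in blocks:
        cols.append(X)
        r2 = r_squared(np.column_stack(cols), y)
        results.append((name, r2, r2 - prev))
        prev = r2
    return results

# Synthetic placeholder data, NOT the survey data analysed in this article.
rng = np.random.default_rng(0)
n = 500
demo = rng.normal(size=(n, 5))     # stand-in for five sociodemographic variables
privacy = rng.normal(size=(n, 2))  # stand-in for policy attention, protection behaviors
gov = rng.normal(size=(n, 2))      # stand-in for trust, acceptance of purposes
conv = rng.normal(size=(n, 1))     # stand-in for perceived convenience
y = (0.1 * demo[:, 0] + 0.3 * privacy[:, 0] + 0.4 * gov[:, 0]
     + 0.8 * conv[:, 0] + rng.normal(size=n))

steps = blockwise_r2(
    [("Model 1", demo), ("Model 2", privacy), ("Model 3", gov), ("Model 4", conv)],
    y,
)
for name, r2, delta in steps:
    print(f"{name}: R2 = {r2:.3f}, change = {delta:+.3f}")
```

Because each model nests the previous one, R² cannot decrease from one step to the next; the size of each increment indicates how much additional variance the newly entered block explains.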

Table 4. Multiple regressions on individuals’ perceived privacy protection of HCAs.

Note: Standard errors are in parentheses. *p < 0.05; **p < 0.01; ***p < 0.001.

Considering the actor values in this very context, Model 1 in Table 4 introduced basic sociodemographic variables including gender, age, education, hukou, and income. H3 attends to whether HCA users’ sociodemographic status drew a line between their perceptions of HCA privacy protection. It turned out that only income had a significant relationship with perceived privacy protection. Respondents with higher income were more likely to perceive high privacy protection in using HCAs. The other factors had no significant effect on perceived privacy protection.

In addition to the sociodemographic status, Model 2 attended to the transmission principle and the use aspect of context by adding attention towards privacy policy and mobile privacy protection behavior. H1 suggested that the degree of HCA users’ attention paid to privacy policies is positively associated with their perception of privacy protection. The results showed users who read privacy policy more carefully perceived higher privacy protection. Addressing H2, the frequency of HCA users’ mobile privacy protection was positively associated with their perception of privacy protection.

Model 3 further incorporated the contextual value and purpose by adding trust in government and acceptance of government purposes regarding HCA data management. Addressing H4, it turned out greater trust in government institutions was positively related to perceived privacy protection. Addressing H5, the results suggested higher acceptance of government purposes regarding HCA data management was also positively related to perceived privacy protection.

Model 4 introduced perceived convenience to examine the interest of subject/sender. H6 suggested that the degree of HCA users’ perceived convenience is negatively associated with their perception of privacy protection. Rather than the assumed negative relationship, we found a positive relationship: individuals who perceived higher convenience are also more likely to perceive higher privacy protections when using HCAs. Therefore, H6 was not supported.

Taken together, H1, H4, and H5 were supported throughout the models, whereas H2 was not supported in Model 4. While mobile privacy protection behavior was significantly associated with perceived privacy protection in Model 2 and Model 3, it became non-significant in Model 4 once perceived convenience, which is strongly associated with perceived privacy protection (β = 0.672, p < 0.001), was introduced. By the same token, income was significant from Model 1 through Model 3 but became statistically non-significant in Model 4. Trust in government and acceptance of government purposes remained significant after perceived convenience was introduced in Model 4, albeit with drastically decreased effects. Adding perceived convenience also substantially increased the R-squared of the overall model, from 0.325 to 0.629, a relative increase of 93.54%.
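The 93.54% figure is a relative change in R-squared, not a difference in percentage points. A short check, using only the two R-squared values reported in the text (the function name `r2_change` is a hypothetical helper), makes the distinction explicit:

```python
def r2_change(r2_before, r2_after):
    """Return (absolute change, relative change in percent) between two R^2 values."""
    delta = r2_after - r2_before
    relative_pct = delta / r2_before * 100
    return delta, relative_pct

# R^2 before and after adding perceived convenience, as reported in the text.
delta, relative_pct = r2_change(0.325, 0.629)
print(f"absolute change = {delta:.3f}, relative change = {relative_pct:.2f}%")
# prints "absolute change = 0.304, relative change = 93.54%"
```

In other words, the share of explained variance rose by about 0.304 (roughly 30 percentage points), which corresponds to the reported 93.54% relative to the earlier value of 0.325.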

Discussion

The main purpose of this article is to examine how individual characteristics, beliefs, and user experience relate to the perceived privacy protection of HCAs. Drawing on the theory of contextual integrity (CI), we treat the context of HCA use as distinct from similar cases in Western societies and identify a set of key factors contributing to perceived privacy protection. Leveraging an online survey of Chinese HCA users conducted in January and February 2021, we find that users with higher levels of attention to privacy policies, trust in government, acceptance of government data management, and perceived convenience of HCAs are more likely to perceive high privacy protection while using HCAs. By contrast, their frequency of mobile privacy protection behaviors does not exert a significant impact on perceived privacy protection. Also, users’ sociodemographic status, including age, gender, educational attainment, urban/rural residency, and income, is not significantly associated with perceived privacy protection in the final model.

Specifically, our analysis first shows that HCA users, on average, report a relatively high degree of perceived privacy protection across a variety of use contexts. In other words, most users deem their privacy expectations fulfilled, implying an alignment between HCAs’ privacy norms and information flows from the user perspective. This result seems to challenge public opinion voiced during the launch stage, which held that personal privacy had to be given up for pandemic control and that most information collected through HCAs was not especially private (Liu and Graham, 2021). That said, the result is not surprising given that we fielded the survey approximately a year after the first HCA went online. On the user end, despite widespread public privacy concerns during the launch stage, it is possible that HCA users, driven by growing awareness of individual privacy rights, have explored the technical specifications of HCAs and gained a good understanding of the data processing details (Jiang and Fu, 2018). Besides, the responsible government agencies have issued explicit guidelines on privacy protection features in the design of various HCAs (SAC, 2020a). In a similar vein, the state has promulgated the Personal Information Protection Law to address public privacy concerns over commercial platforms and e-government services (NPC, 2021). These administrative and legal efforts may help enhance users’ privacy trust in the apps.

Second, HCA users’ perceived convenience emerges as the most influential factor positively affecting their perceived privacy protection. That is, compared with other values and parameters, the interest of subject/sender takes on paramount significance in evaluating HCAs’ privacy norms. This finding appears to conflict with the privacy-convenience tradeoff often underscored in studies of early adopters of emerging technologies such as IoT (Kang and Oh, 2021; Lau et al., 2018). In those cases, people are drawn to such technologies by their convenience and, after adoption, lapse into a spiral of information disclosure to further that convenience. By contrast, HCA users report a rather balanced perception of convenience and privacy. One possible explanation is that the design of HCAs manages to deliver a combined sense of convenience and privacy (Trang et al., 2020). In particular, Alibaba and Tencent have contributed extensive expertise in big data technologies to the design process, emphasizing user convenience while complying with top-down data protection rules.

Aside from the design aspect, we argue that the finding is more likely attributable to HCA users’ overemphasis on the value of convenience, since they must use the apps multiple times a day to gain access to workplaces, homes, public venues, etc. Prior research mainly looks into controversial instances where people encountered privacy violations in the early stage of HCA deployment (e.g., Yu, 2020). As people become accustomed to such normalized use, convenience may become the salient pragmatic parameter for assessing the entirety of app performance, including privacy protection. This is especially noticeable in the context of mandatory e-government services, where convenience is one of the strongest determinants of citizens’ satisfaction with the service (Chan et al., 2010). Placing the greatest value on perceived convenience, HCA users may take high privacy protection for granted upon passing the checkpoints with satisfaction. Such a user mindset may also explain the decreased effects of attention towards privacy policy, trust in government, and acceptance of government purposes in the final model, because these factors are more of a prerequisite or background to the actual use context and carry little of the pragmatic value that convenience does. Further, in relation to the implementation of surveillance instruments, providing citizens with tangible benefits such as convenience tends to mitigate their privacy concerns (Kostka, 2019).

Third, HCA users’ attention towards privacy policies is instrumental in forming high perceived privacy protection. This finding resonates with related studies that foreground the prominence of the transmission principle in the arrangement of contextual constituents surrounding contact tracing apps (Gerdon et al., 2021; Hargittai et al., 2020; Vitak and Zimmer, 2020). It also suggests that, in addition to increasing public trust in and adoption of contact tracing apps, policies in line with users’ privacy expectations sustain adopters’ perceived privacy in subsequent practical use. Users see privacy policies as statements of institutional privacy assurance that significantly influence their degree of privacy concern (Xu et al., 2011). While Chinese governments have taken a retrospective approach to improving HCAs’ privacy policies and addressing related privacy concerns, users may well notice the policies in light of the policy guidelines and privacy legislation that show the state's commitment to privacy protection (NPC, 2021; SAC, 2020a). So long as users pay attention to the policies, they are likely to perceive high privacy protection over the course of the long-term implementation of HCAs.

Fourth, HCA users’ greater trust in government is associated with higher perceived privacy protection. This conforms to prior findings that people's trust in the providers of contact tracing apps is a determinant of their privacy assessment of the technologies (Hargittai et al., 2020; Kehr et al., 2015). Besides, users’ acceptance of government purposes regarding HCA data management accounts for their perceived privacy protection, echoing the importance of contact tracing data management in circumscribing the privacy risks that users envision (Gasser et al., 2020). From the CI perspective, both findings shed light on how contextual purposes and values shape the privacy norms that prescribe appropriate information flows. Trust in government functions beyond facilitating public adoption: it maintains users’ positive view of the primary providers of HCAs, i.e., the central and local governments, and in turn undergirds the trusting relationship between users and HCAs in actual use (Duan and Deng, 2022; Wang et al., 2022). In a similar vein, users’ agreement with the purposes of government data management, especially those for the benefit of public health, grounds their positive perception of HCAs (Utz et al., 2021).

Last, our analysis shows that HCA users’ frequency of mobile privacy protection behaviors is a rather marginal factor in the degree of perceived privacy protection. This challenges our expectation of a spill-over effect, whereby people's mobile privacy knowledge and skills would carry over within a familiar use environment, Alipay or WeChat, on their mobile devices. This is possibly due to the mandatory nature of HCAs, which leaves users few protection behaviors, such as switching off location services (Boyles et al., 2012), that they can replicate in the context of HCAs. Also, we speculate that people may classify HCAs and Alipay or WeChat into separate app categories and recognize the former as a rather context-specific tool. Thus, there is limited common ground for extending privacy knowledge and skills. As for sociodemographic status, we find that HCA users’ perceived privacy protection does not vary by sociodemographic status. This finding extends existing literature showing that Chinese people's willingness to use contact tracing apps is not related to their sociodemographic background (Utz et al., 2021). It further suggests a pattern of privacy understanding among Chinese users that differs from the patterns reported in prior studies of commercial Internet services and other surveillance instruments (Kostka, 2019; Wang et al., 2020). This is possibly due to users’ broad access to and great technical familiarity with HCAs given the mandate and the presentation form, i.e., mini programs on Alipay and WeChat, as well as the long period of use since the apps’ first launch.

Conclusion

HCAs have characterized China's approach to COVID-19 pandemic control. Aside from their claimed effectiveness in restoring economic and social order, HCAs’ data-driven technical architecture also sustains continuous flows of personal information to data systems at both central and local levels. These apps are de facto digital surveillance instruments that illustrate the state's aggressive embrace of big data technologies in strengthening its surveillance capacity. Interestingly, users’ perceived privacy protection of HCAs in China is rather positive, in contrast to Western criticism of the associated privacy risks and intrusions. Such a stark contrast invites research inquiries into how people engage with the privacy dimension of contact tracing apps in a regime keen to harness the full potential of big data technologies in perfecting digital surveillance.

By addressing Chinese users’ perceived privacy protection of HCAs, our findings contribute to the robust scholarly debates on digital privacy and surveillance in China. First, compared to existing research on HCAs, the findings depict a more holistic picture of how users engage with the apps beyond the early stage of deployment. Specifically, the significant impacts of perceived convenience, attention towards privacy policy, trust in government, and acceptance of government data management on users’ perceived privacy protection can enrich existing scholarly discussions of public opinion on HCAs. Second, leveraging CI to interpret privacy perception in the unique use context of HCAs, we cast new light on the heuristic implications of the theory for articulating the tensions between pandemic surveillance and individual privacy in China. The findings show that the transmission principle, the contextual purposes and values, and the interests of subject/sender saliently account for how users reflect on the privacy norms in this specific context, whereas the actor values and the use aspect of context play a marginal role. Third, the findings advance the existing scholarship that interrogates the supportive views of governments’ digital surveillance instruments among the Chinese public. Beyond state media depiction and governments’ information control (Xu et al., 2021), users’ high perceived privacy protection may underpin public support for and continuous acceptance of the surveillance measures. Last, in terms of methodology, this article draws on original survey data to complement prior HCA research that predominantly adopts qualitative methods, and to address the paucity of quantitative research on this intriguing topic in the broad field of digital privacy and big data surveillance.

Despite HCAs’ mandatory nature, our findings also contribute to ongoing conversations around contact tracing solutions and privacy amid the global pandemic. The examination of HCAs broadens the current scope of research on contact tracing solutions, as most existing studies wrestle with low public adoption rates and ineffective tool design and implementation in the West (Elkhodr et al., 2021). We explain how a set of individual factors affect users’ privacy perception of HCAs in Chinese society, where mass adoption and almost a year of mandatory deployment had occurred. Moreover, given the noticeable success of China's pandemic control in contrast with what most Western societies have achieved, this article echoes the necessity to interrogate China's COVID-19 surveillance measures through a constructive perspective that transcends the dichotomies of West/China, public/private, democratic/authoritarian, etc. (Liu, 2021). The findings also carry practical implications for big data-driven preparations for future pandemics outside the Chinese context. First, the responsible government authorities should prioritize releasing transparent goals of government data management in line with society-wide privacy interests, thereby easing public privacy concerns over long-term surveillance measures. Second, the authorities should pay close attention to the gamut of possible use contexts of surveillance measures and specify what practical benefits users can obtain beyond the purported collective values.

These contributions aside, this article has several limitations that call for future research. First, we focused only on users in Wuhan and Hangzhou, chosen for their respective city characteristics in terms of pandemic control and HCA release. Even so, we were unable to bring all types of HCA users in Wuhan and Hangzhou under scrutiny due to the respondent profile of web surveys and our sampling strategy; moreover, the results cannot be generalized to the entire user population in China. Future research needs to refine the sampling strategy so that minor, senior, and less educated HCA users can be included. Besides, the examination of HCAs should be expanded to a nationally representative sample, especially including those living in rural and technologically disadvantaged areas. Second, we mainly rely on the CI theory to identify important factors related to users’ perceived privacy protection of HCAs. However, it is possible that users’ general privacy attitudes, previous experience with surveillance instruments, and personal travel history contribute to their HCA user experience and practical assessment of the apps. Future research needs to pay attention to such attitudinal and behavioral factors in thoroughly unpacking users’ engagement with the privacy dimension of HCAs. Third, this article pays little attention to other pandemic control measures and local policies that may trivialize HCAs. China's approach to pandemic control is a moving picture, since local governments constantly put forth new big data-driven surveillance instruments, e.g., travel code (行程码) and location code (场所码), to complement the use of HCAs. These instruments derive from local pandemic control policies that vary from place to place, and sometimes outweigh HCAs depending on how inspectors execute the policies. Future research should factor these instruments, along with the variation in local policies, into the examination of public opinion on and user experience of HCAs.

In addition, future research should explore how the downsides of China's zero-COVID policy affect public perception of HCAs. Our analysis rests on survey data collected around the tipping point of China's pandemic control trajectory, when the success of COVID-19 containment drew considerable attention and compliments within the international community. National pride among Chinese citizens was invoked and amplified, as were positive views of HCAs in relation to how poorly most Western societies had handled the pandemic (Liu, 2021). Yet the social crisis tied to Shanghai's COVID lockdown in April 2022 and the incident of HCA data abuse in Henan Province in June 2022 have not only invited intensive criticism from the outside but also jeopardized Chinese public confidence in the state's ability to appropriately manage new domestic outbreaks. These sudden turns are poised to render HCAs less effective, reliable, and trustworthy. Prior research on SCS demonstrates that although public support for this surveillance instrument has been steady in recent years, it may dwindle if the state's positive framing meets unexpected setbacks (Kostka, 2019; Kostka and Antoine, 2020; Xu et al., 2021). Even so, our findings for Wuhan and Hangzhou may not change substantially, since both cities smoothly handled a few local outbreaks in 2022. That said, it is plausible that a replication of the study in Shanghai and Henan would lead to different results.

Footnotes

Acknowledgements

The authors would like to thank the editors and three anonymous reviewers for their constructive comments and detailed editing of this article.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.