Abstract

A major challenge with the increasing use of Artificial Intelligence (AI) applications is to manage the long-term societal impacts of this technology. Two central concerns that have emerged in this respect are that the optimized goals behind the data processing of AI applications usually remain opaque and that the energy footprint of this data processing is growing quickly. This study thus explores how much people value the transparency and environmental sustainability of AI, using the example of personal AI assistants. The results from a choice-based conjoint analysis with a sample of more than 1,000 respondents from Germany indicate that people hardly care about the energy efficiency of AI and that, while they do value transparency through explainable AI, this added value of an application is offset by minor costs. The findings shed light on what kinds of AI people are likely to demand and have important implications for policy and regulation.

Keywords

Introduction

As a general-purpose technology that is expected to gradually penetrate all kinds of social domains, Artificial Intelligence (AI) raises questions about its long-term societal impacts. Besides the expected positive effects of AI, two major concerns have been raised in recent policy debates and scholarly work: possible adverse impacts on people's autonomy and the negative ecological impact of AI. As AI applications are more widely adopted throughout society and as people increasingly rely on them in their daily lives, an important question is to what extent the growing delegation of decisions and tasks to AI respects and preserves personal autonomy (Floridi et al., 2018). The answer will depend on how these applications are designed and how people use them. The same holds true for the possible environmental impacts of AI. Even without AI, the ecological crisis is a serious challenge whose roots can be traced back to technological and policy decisions made decades, if not centuries, ago. With AI, societies may again find themselves at an important crossroads, where decisions taken today and behavioral patterns taking hold now may shape the development and environmental impacts of the technology over the coming decades. Being on the verge of global adoption, AI is hence “not a technology that we can afford to ignore the environmental impacts of” (van Wynsberghe, 2021: 217).

In sum, it will be crucial how AI applications are taken up and for which kinds of applications there is popular demand.

First, existing research has written extensively about the lack of transparency of AI applications, which makes it hard or even impossible for users of AI-based applications to understand how far these applications are aligned with their own interests. For instance, why people see certain content or targeted advertisements on online platforms usually remains opaque to them (Eslami et al., 2019; Klawitter and Hargittai, 2018; Pasquale, 2015). That AI offers an adequate level of transparency and intelligibility of the decisions or recommendations it produces is accordingly seen as a prerequisite for people being able to make informed decisions and to maintain a certain level of personal autonomy (Edwards and Veale, 2017; Lepri et al., 2018; Malgieri and Comandé, 2017). A possibly more far-reaching systemic consequence of opaque AI is that people increasingly let themselves be governed by AI applications in exchange for greater convenience, which effectively leads to paternalist relations (Yeung, 2018).

A second possible negative impact of AI results from its resource and energy footprint. While AI has the potential to provide sustainable solutions to environmental problems (Vinuesa et al., 2020), recent contributions have also highlighted that the spread of AI and the development of ever more sophisticated forms of AI contribute to a considerable and fast-growing consumption of energy (Dauvergne, 2021; Strubell et al., 2019; Thompson et al., 2020). In view of the exponentially growing energy demand of more sophisticated forms of machine learning, realizing green AI is becoming a major challenge (Schwartz et al., 2019). Notably, the share of global CO2 emissions from the entire Information and Communication Technology sector was already estimated at two percent in 2018, which is comparable to that of the aviation industry. Data centers alone account for about one percent of global energy use, and their energy use has similarly seen a steep rise (IEA, 2020). As this demand will grow further with increasingly more energy needed for computing, a more widespread use of AI in society may amplify existing environmental challenges (Cowls et al., 2021). This makes it an important question whether and to what degree consumers, among whom AI applications may become widely adopted, value the energy efficiency of AI, e.g., when installing an AI-based app on their phone. To make an informed decision, they would need to be aware of the energy consumption caused both directly and by external sources such as data centers. In sum, beyond the ethical problems that AI can create for the individual (Mittelstadt et al., 2016), it is also important to think of AI in terms of its long-term and macro-level consequences for society.

The two major concerns described above, the threat to autonomy due to opacity and the threat to the environment due to rising energy consumption, are also reflected in recent policy initiatives by the European Union (EU). With its Declaration on a Green and Digital Transformation (European Commission, 2021), the EU aims to take the lead on energy-efficient AI solutions, promote more environmentally sustainable digital products and services, and provide consumers with better information about the energy efficiency and CO2 footprint of products. Regarding the transparency of AI applications, the EU Digital Services Act (European Commission, 2020) posits that certain AI-based online services will need to provide explanations to consumers, e.g., for why they saw certain content. One main expectation embodied in these initiatives is that environmentally sustainable and transparent AI will be advanced through consumer demand for applications with such characteristics, provided that consumers are enabled to make informed choices. This could be achieved with product labels for AI-based technologies, such as those known from household appliances and other products.

A key question is, however, whether people still opt for transparent and environmentally sustainable AI if an application performs worse or costs more. While previous research has shown that transparent AI systems increase user satisfaction (Shin, 2021; Shin and Park, 2019), it is not yet known what

To obtain valid estimates of people's preferences regarding personal AI assistants, we use a choice-based conjoint design. This indirect method of preference measurement allows for estimating the tradeoffs that respondents make between different features of an application based on their observed choices across different applications. The four features of AI assistants that we test in our study are (1) the performance quality of the assistant, indicated by user satisfaction, (2) its level of transparency, (3) its price, and (4) its external energy consumption in data centers resulting from querying the AI assistant. Our sample of more than 1,000 respondents from a representative German online panel not only provides a strong basis for reliable estimation of effects but also allows for a targeted subgroup analysis. Specifically, we posit that differences between population subgroups in their desire for control and their environmental concern affect how much they value the transparency of AI applications and their energy efficiency, respectively. Our analyses add to existing research on people's perceptions of AI applications (Alexander et al., 2018; Binns, 2018; Juravle et al., 2020; Lee, 2018; Shin, 2021; Shin and Park, 2019) by showing that when having to make tradeoffs, people clearly prioritize the performance and the price of these applications over high transparency standards and especially over high energy efficiency.

Design and method

Choice-based conjoint design

The analysis is based on a survey that has been designed to examine the importance that people assign to several relevant features of personal AI assistants. These assistants are, of course, only one possible form of AI application. Unlike AI making recommendations in other areas, such as health care, finance, or criminal justice, personal AI assistants, which are mainly designed to provide convenience to consumers, involve comparatively low stakes and risks. Importantly, however, personal assistants are the kind of AI application that is likely to become widely used by people in their everyday lives. Thus, while the seriousness of their recommendations or decisions is low, the potential scale of usage makes them highly relevant with respect to possible macro-level impacts of AI on society, including undesirable repercussions for personal autonomy and the environment.

To probe how much people value the transparency and environmental sustainability of personal AI assistants, we use choice-based conjoint analysis as an indirect form of preference measurement that avoids asking people directly how much importance they give to certain object features. Rather, respondents have to choose between presented options that differ with regard to several features (Eggers et al., 2018; Gustafsson et al., 2007; Hainmueller et al., 2015; Rao, 2014). From these observed choices, the latent utility of the presented options and of individual features can be estimated. This design mitigates social desirability bias (Horiuchi et al., 2021) and mirrors real choice situations in which people cannot get all the features they desire and must make tradeoff decisions (see Supplemental material A1 and A2 for further information on the survey design).

Our study participants saw a randomized series of nine choice tasks in which they had to pick one of three AI assistants. These options differed regarding the following four features: (1) service quality, measured as the share of people satisfied so far with the recommendations produced by the AI assistant, (2) the transparency of the AI assistant, (3) the price in the form of monthly costs, and (4) external energy consumption. To make sure that people had a common understanding of what they were asked to evaluate, we carefully formulated two introductory pages in the survey describing the AI assistants and the four features (see Supplemental material A1).

Regarding service quality in terms of

The feature levels of

Regarding the

Finally, we chose feature levels for the

One should note that

The four features and their feature levels are summed up in Table 1. The choice sets seen by the respondents in our study contain configurations of AI assistants that are made up of different combinations of these feature levels. Study participants had to choose between these configurations.

Table 1. Overview of the features and feature levels in the conjoint design.

With four features and each feature having three levels, the conjoint design is kept to a manageable complexity to avoid the problem of fatigue and resulting noise in people's response patterns (Reed Johnson et al., 2013). Four to five features can already be very cognitively taxing on respondents. In a similar vein, it is important to keep the number of choice sets, i.e., the situations in which respondents have to pick from configurations of AI assistants based on the features in Table 1, within certain limits (Rao, 2014: 186). It is common to use no more than around ten sets even with comparatively easy product choices (Eggers et al., 2018: 16).

Using the shifting method by Bunch et al. (1996) to create the choice design, we obtain nine choice sets in total, each with three options. To create the final choice sets, we first reduced all possible configurations to an orthogonal factorial (i.e., a parsimonious subset of all possible combinations whose variation of feature levels allows for estimating the main effects). This factorial consists of nine stimuli, which were then taken as the first option of the nine choice sets (the first option in each choice set shown in Supplemental material A2). The second and third options of these choice sets were created by shifting all feature levels of the initial nine stimuli one level higher (for the second option) and then repeating this process (for the third option). We also added a no-choice option, as some respondents may well prefer having no AI assistant at all to any of the presented options in a given choice set (for details on the resulting conjoint design, see Supplemental material A2).
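The construction of the choice sets can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the study's actual design: the 9-run orthogonal array is generated algebraically from the columns a, b, (a + b) mod 3 and (a + 2b) mod 3, which is one valid main-effects plan for four three-level features but not necessarily the one listed in Supplemental material A2, and each shift is assumed to wrap around modulo 3 (the highest level becomes the lowest). Levels are coded 0, 1, 2 with 0 as the reference level; all function names are hypothetical.

```python
from itertools import combinations, product

N_LEVELS = 3     # every feature has three levels
N_FEATURES = 4   # quality, transparency, price, energy


def orthogonal_main_effects_plan():
    """Return a 9-run orthogonal array for four 3-level features.

    Assumption: columns a, b, (a + b) mod 3, (a + 2b) mod 3 form one valid
    OA(9, 4, 3, 2); the plan actually used in the study may differ.
    """
    return [
        (a, b, (a + b) % N_LEVELS, (a + 2 * b) % N_LEVELS)
        for a, b in product(range(N_LEVELS), repeat=2)
    ]


def shifted_choice_sets(plan, n_options=3):
    """Option s of each set shifts every level of the base stimulus by +s (mod 3)."""
    return [
        [tuple((level + shift) % N_LEVELS for level in row) for shift in range(n_options)]
        for row in plan
    ]


def is_orthogonal(plan):
    """Check that every pair of columns shows all 9 level pairs exactly once."""
    all_pairs = sorted(product(range(N_LEVELS), repeat=2))
    for i, j in combinations(range(N_FEATURES), 2):
        if sorted((row[i], row[j]) for row in plan) != all_pairs:
            return False
    return True


plan = orthogonal_main_effects_plan()
choice_sets = shifted_choice_sets(plan)
print(N_LEVELS ** N_FEATURES, "possible configurations")  # full factorial: 81
for k, options in enumerate(choice_sets, start=1):
    print(f"set {k}:", options)
```

In the resulting sets, each feature shows all three of its levels in every choice set (minimal level overlap), while the 9 base stimuli keep the main effects estimable.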

The conjoint design was tested in a pretest with 38 political science and computer science students (yielding 38 × 9 = 342 registered choices). As the pretest yielded statistically significant and very plausible coefficients (for results, see Supplemental material A3), we kept the design for the main study. We also performed a power analysis for discrete choice designs based on the coefficients obtained from the pretest, assuming an alpha of 0.05 and a beta of 0.2 (i.e., a power of 0.8). This power analysis yielded a required sample size of 309 for the smallest effect in the pretest, whereas for the strongest effect seven cases proved sufficient. Based on this result, we could expect that a sample of 1,000 participants would also allow for analyses of subgroups much smaller than the entire sample.
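The text does not specify the power-analysis procedure. One common parametric approach, assumed here, computes the Fisher information of the multinomial logit for a single respondent over all nine choice sets, inverts it to obtain the per-respondent covariance Σ, and requires N ≥ (z₁₋α/₂ + z₁₋β)² Σₖₖ / βₖ² for each coefficient βₖ. The sketch below uses an illustrative shifting design and a purely hypothetical coefficient vector, not the pretest estimates, so its output will not reproduce the reported 309 and seven cases.

```python
import math
from itertools import product
from statistics import NormalDist

import numpy as np

N_PARAMS = 9  # 4 features x 2 non-reference levels + no-choice constant (last)


def shifted_design():
    """Illustrative 9-set shifting design: OA(9, 4, 3, 2) plus two cyclic shifts."""
    plan = [(a, b, (a + b) % 3, (a + 2 * b) % 3) for a, b in product(range(3), repeat=2)]
    return [[tuple((lv + s) % 3 for lv in row) for s in range(3)] for row in plan]


def encode(levels):
    """Dummy-code four 3-level features; level 0 is the reference category."""
    x = np.zeros(N_PARAMS)
    for f, level in enumerate(levels):
        if level > 0:
            x[2 * f + level - 1] = 1.0
    return x


def set_matrix(options):
    """Stack the three assistants and the no-choice row of one choice set."""
    no_choice = np.zeros(N_PARAMS)
    no_choice[-1] = 1.0
    return np.vstack([encode(o) for o in options] + [no_choice])


def required_sample_sizes(beta, alpha=0.05, power=0.8):
    """Minimum N per coefficient from the single-respondent MNL information matrix."""
    info = np.zeros((N_PARAMS, N_PARAMS))
    for options in shifted_design():
        X = set_matrix(options)
        u = X @ beta
        p = np.exp(u - u.max())
        p /= p.sum()
        info += X.T @ (np.diag(p) - np.outer(p, p)) @ X
    cov = np.linalg.inv(info)  # asymptotic covariance for one respondent
    z = NormalDist().inv_cdf(1 - alpha / 2) + NormalDist().inv_cdf(power)
    return [math.ceil(z ** 2 * cov[k, k] / beta[k] ** 2) for k in range(N_PARAMS)]


# Hypothetical coefficients (order: quality 2-3, transparency low/high,
# price 2-3, energy 2-3, no-choice constant); NOT the pretest estimates.
beta = np.array([0.5, 1.0, 0.3, 0.8, -1.2, -2.0, -0.1, -0.3, -1.0])
print(required_sample_sizes(beta))
```

The required N is driven by the ratio of each coefficient's variance to its squared size, which is why the smallest effect dominates the sample-size requirement.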

Sample

Participants in our study were recruited by respondi AG, a panel provider certified according to the internationally recognized standard ISO 26362. The sample was drawn from a panel that is representative of the German population aged 18 to 74 based on quotas for gender, age, and region (see Supplemental material A4 for sample composition). The German case is particularly instructive for several reasons. First, the German population has been shown to be comparatively risk averse and skeptical towards new technologies (Metag and Marcinkowski, 2014; Mundorf et al., 1996). Second, Germany is characterized by strong post-materialism (Nový et al., 2017) and high levels of environmental concern (Franzen and Meyer, 2010; Gellrich, 2021). Under these conditions, we would expect study participants to be most likely to demand high levels of transparency and environmental sustainability from AI assistants.

Measures and method of analysis

For the subgroup analysis, we draw on two constructs to create the groups in our sample: environmental concern and desire for control. The items for environmental concern are based on a scale developed by the

The questions used to create these constructs were asked in a second part of the survey that followed the choice tasks. To clean the data, we used an attention check included in the desirability of control item battery, a control question asking about the usability of the responses, and a time filter (at least 3 s on average per choice task), leaving 946 cases for the analysis.
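The three exclusion rules can be expressed as a simple filter. The field names below are hypothetical, since the survey's variable names are not reported; only the 3-second threshold is taken from the text, and the average is assumed to be computed over all nine choice tasks.

```python
from dataclasses import dataclass

N_CHOICE_TASKS = 9
MIN_MEAN_SECONDS = 3.0  # time filter from the text: at least 3 s per choice task on average


@dataclass
class Respondent:
    # Hypothetical field names; the survey's own variable names are not reported.
    passed_attention_check: bool     # check embedded in the desirability-of-control battery
    responses_usable: bool           # answer to the control question on response usability
    seconds_on_choice_tasks: float   # total time spent on the nine choice tasks


def keep(r: Respondent) -> bool:
    """Apply all three exclusion rules; a respondent must pass every one."""
    mean_seconds = r.seconds_on_choice_tasks / N_CHOICE_TASKS
    return r.passed_attention_check and r.responses_usable and mean_seconds >= MIN_MEAN_SECONDS


def clean(sample):
    """Return only the respondents that pass all exclusion rules."""
    return [r for r in sample if keep(r)]
```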

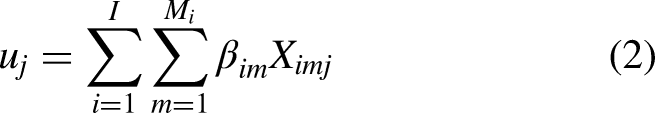

To estimate the partworths of the features, we fit a multinomial logit model, which models the observed choices in terms of the probability of choosing a given option j in a choice set k as a function of the latent utility of the options in the choice set (the presented AI assistants together with the no-choice option):

$$p_{kj} = \frac{\exp(u_{kj})}{\sum_{i=1}^{J} \exp(u_{ki})}$$

$p_{kj}$: probability of choosing option $j$ in choice set $k$.

$u_{kj}$: utility of option $j$ in choice set $k$.

$u_{ki}$: utility of option $i$ in choice set $k$ with $J$ options.

The overall utility of option $j$ is the sum of the individual feature-level partworths over all features present in option $j$:

$$u_j = \sum_{i} \sum_{m} \beta_{im} \, x_{imj}$$

$u_j$: utility of option $j$ (the choice-set index $k$ is suppressed here).

$\beta_{im}$: partworth of feature level $m$ of feature $i$.

$x_{imj}$: dummy variable with the value 1 if option $j$ has level $m$ of feature $i$, and 0 otherwise.

Since the choice design included a no-choice option, the multinomial logistic regression model includes an alternative-specific constant for this no-choice option. This dummy variable takes the value one for the no-choice option and the value zero for each of the three AI assistant options in every choice set.
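To make the estimation step concrete, here is a minimal sketch of fitting the model above by maximum likelihood with Newton-Raphson in NumPy. The dummy coding (level 0 as reference, eight feature-level dummies plus the no-choice constant as the last column) follows the description above; the data are simulated from hypothetical partworths with random choice sets rather than the study's design, and in practice a dedicated conditional-logit routine from a statistics package would normally be used.

```python
import numpy as np

N_PARAMS = 9  # 8 feature-level dummies + no-choice constant (last column)


def encode(levels):
    """Dummy-code four 3-level features; level 0 is the reference category."""
    x = np.zeros(N_PARAMS)
    for f, level in enumerate(levels):
        if level > 0:
            x[2 * f + level - 1] = 1.0
    return x


def choice_probabilities(X, beta):
    """Multinomial logit: softmax of the option utilities u = X @ beta."""
    u = X @ beta
    e = np.exp(u - u.max())  # subtract the max for numerical stability
    return e / e.sum()


def simulate(beta, n_sets, rng):
    """Hypothetical data: random 3-assistant sets plus a no-choice row."""
    no_choice = np.zeros(N_PARAMS)
    no_choice[-1] = 1.0
    sets, choices = [], []
    for _ in range(n_sets):
        options = rng.integers(0, 3, size=(3, 4))
        X = np.vstack([encode(o) for o in options] + [no_choice])
        sets.append(X)
        choices.append(rng.choice(len(X), p=choice_probabilities(X, beta)))
    return sets, choices


def grad_hess(beta, sets, choices):
    """Gradient and Hessian of the MNL log-likelihood."""
    grad = np.zeros(N_PARAMS)
    hess = np.zeros((N_PARAMS, N_PARAMS))
    for X, y in zip(sets, choices):
        p = choice_probabilities(X, beta)
        grad += X[y] - X.T @ p
        hess -= X.T @ (np.diag(p) - np.outer(p, p)) @ X
    return grad, hess


def fit_mnl(sets, choices, max_iter=50, tol=1e-8):
    """Maximum-likelihood partworths via Newton-Raphson; returns (beta, se)."""
    beta = np.zeros(N_PARAMS)
    for _ in range(max_iter):
        grad, hess = grad_hess(beta, sets, choices)
        step = np.linalg.solve(hess, grad)
        beta -= step  # Newton update: beta - H^-1 g (H is negative definite)
        if np.max(np.abs(step)) < tol:
            break
    _, hess = grad_hess(beta, sets, choices)
    se = np.sqrt(np.diag(np.linalg.inv(-hess)))  # standard errors at the optimum
    return beta, se


rng = np.random.default_rng(7)
true_beta = np.array([0.5, 1.0, 0.3, 0.8, -1.2, -2.0, -0.1, -0.3, -1.0])  # hypothetical
sets, choices = simulate(true_beta, 4000, rng)
est, se = fit_mnl(sets, choices)
print(np.round(est, 2), np.round(se, 2))
```

With enough simulated choices, the estimates should recover the hypothetical partworths within a few standard errors.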

Results

Analysis of the entire sample

The results from the multinomial logistic regression based on the data from the choice-based conjoint design are shown in Figure 1. The figure shows the estimated partworth utilities of the feature levels relative to the respective reference category, i.e., 89 percent satisfied, no transparency, free of charge, and 10 queries corresponding to 1 h of energy use of an energy-saving light bulb. Several key findings can be drawn from the depicted partworths.

Figure 1. Estimated feature partworth utilities with 95% confidence intervals. These utilities are the coefficient estimates from a multinomial logistic regression that uses dummy coding of the features and models the relationship between the feature level utilities and the observed choice of one option over other options in a choice set (see main text for the fitted model). Transparency low = basic operating principles are disclosed, transparency high = application provides information on how it arrived at a recommendation/decision, Energy = the external energy use of an AI assistant from 10 queries, expressed in the hours an energy-saving light bulb could be run.

Finally,

What do these partworths mean for the tradeoffs that people make between the features? It seems clear that even a price of 1.99 Euros per month, compared to the application being free, is not easily compensated by other features of the AI assistant. While energy efficiency clearly does not justify this price for respondents, high transparency comes close to offsetting the utility decrease caused by the monthly fee of 1.99 Euros. However, people can only be expected to opt for the “upgrade,” i.e., the more transparent application, if it also offers higher user satisfaction. Without this added user satisfaction, users would still rather choose an AI assistant that lacks the desirable transparency feature but is free.

We can illustrate this tradeoff by means of predicting choices based on our estimated model. Using the coefficients shown in Figure 1, it is possible to calculate the probability of choosing a given configuration over some other configuration. Of particular interest is the comparison between (A) the reference configuration with 89 percent user satisfaction, no transparency, high energy efficiency (reference category), and no costs versus (B) a configuration that only differs by having a high transparency and a monthly cost of 1.99 Euros—hence opaque AI for free versus explainable AI at 1.99 Euros. The probability of choosing (B) over (A) based on our estimates is 43.6 percent with a 95-percent confidence interval between 41.6 and 45.7 percent (estimated using Monte Carlo simulation). This probability is therefore still under the 50-percent threshold above which (B) would be chosen over (A). It is only when increasing user satisfaction for the option (B) to 94 percent that this threshold is crossed, reaching a predicted probability of 51.5 percent (95-percent confidence interval: 48.8; 54.3).
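The pairwise prediction follows directly from the model. Because the multinomial logit has the independence of irrelevant alternatives property, the probability of choosing B over A reduces to a binary logit in the utility difference, and since A consists only of reference levels, u_A = 0. The sketch below assumes that the Monte Carlo interval is obtained by drawing coefficient vectors from a multivariate normal distribution with the estimated covariance matrix; the numbers are hypothetical placeholders, not the estimates in Figure 1, so the output will not reproduce the reported 43.6 percent.

```python
import numpy as np


def prob_b_over_a(delta_u):
    """Binary logit implied by the MNL: P(B over A) = 1 / (1 + exp(-(u_B - u_A)))."""
    return 1.0 / (1.0 + np.exp(-delta_u))


def choice_probability_interval(mean, cov, contrast, n_draws=10_000, seed=0):
    """Point estimate and 95% Monte Carlo interval for P(B over A)."""
    rng = np.random.default_rng(seed)
    draws = rng.multivariate_normal(mean, cov, size=n_draws)  # simulated coefficient vectors
    probs = prob_b_over_a(draws @ contrast)
    point = float(prob_b_over_a(mean @ contrast))
    lower, upper = np.percentile(probs, [2.5, 97.5])
    return point, float(lower), float(upper)


# Hypothetical estimates, NOT the values in Figure 1:
# [transparency high, price 1.99 Euros] and their covariance matrix.
mean = np.array([0.8, -1.0])
cov = np.array([[0.004, 0.001], [0.001, 0.004]])
# A = all reference levels (u_A = 0); B adds high transparency and the 1.99 Euro fee.
contrast = np.array([1.0, 1.0])
print(choice_probability_interval(mean, cov, contrast))
```

Adding a further coefficient to the contrast (for example, the partworth of 94 percent user satisfaction) shows how an extra feature shifts the prediction across the 50-percent threshold.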

In sum, there is a high degree of price sensitivity with personal AI assistants. At the same time, the findings attest to a general disregard for environmentally sustainable AI and a notable demand for explainable AI (the highest transparency feature level). People do seem to value explainable AI but will only accept a small cost for this feature. These results mirror previous findings such as those by Gerpott and Paukert (2013) who find that German households are hardly willing to pay for an environmentally friendly technology (networked electricity metering systems, so-called smart meters) and that trust in the protection of personal data is more important than environmental awareness.

Subgroup analyses

The extent to which people may be ready to trade the transparency and environmental sustainability of AI assistants against other features may well depend on their personal dispositions. Two individual characteristics are directly relevant in the context of this study: desire for control and environmental concern. First, people are likely to attach greater importance to the transparency of an AI-based personal assistant if they strongly value being self-reliant and in control. Desire for control (Burger and Cooper, 1979) has been noted to be an important factor conditioning how much people value interactivity in online interfaces, specifically in advertising (Liu and Shrum, 2002: 62; Wu, 2019), and to shape consumer decisions (Beverland and Farrelly, 2010) and consumer adoption of new products (Faraji-Rad et al., 2017). In a similar vein, Gaudiello et al. (2016) tested the effect of desire for control on trust in robots but did not find any relationship. This may, however, be due to the specific setting of their study, since different realizations of AI may be perceived differently by people (Glikson and Woolley, 2020). With AI-based personal assistants that provide recommendations, users may well appreciate greater transparency, particularly through explainability, if they have a strong desire for control.

The second individual characteristic of interest, environmental concern, is a strong candidate for shaping how much importance people give to the environmental sustainability of an AI application. Evidence on consumer behavior points to environmental concern as an important predictor of choosing green products (Cerri et al., 2018; Tanner and Wölfing Kast, 2003) and suggests that only consumers with a green attitude are willing to pay a higher price for a green product that has no compensatory advantage on other attributes (Olson, 2013). Similar effects may therefore exist with respect to environmental sustainability of AI assistants.

Based on these two relevant individual-level characteristics, we perform subgroup analyses to determine whether the relative importance of AI assistants’ transparency and environmental sustainability differs systematically between segments of the population. Rather than creating these groups via manually chosen thresholds on the two underlying variables (environmental concern and desire for control), we use cluster analysis to derive groups from these two variables based on how they are empirically distributed in our sample. In this way, we avoid creating groups that are hardly relevant or identifiable as such in society. The cluster analysis yielded three subgroups (see Supplemental material A7 for details and group descriptions): the first subgroup is high only on desire for control, the second only on environmental concern, and the third has high mean scores on both variables. Based on these differences, one would expect subgroups two and three to place greater importance on the energy efficiency of the AI assistants, and subgroups one and three to give greater weight to their transparency. Figure 2 shows the partworth estimates for the three subgroups.
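The text does not name the clustering algorithm (details are in Supplemental material A7). Purely as an illustration, the sketch below assumes k-means with k = 3 on standardized desire-for-control and environmental-concern scores, using k-means++ seeding and several restarts; the data in the usage example are synthetic, not survey responses.

```python
import numpy as np


def standardize(X):
    """z-standardize each column (here: desire for control, environmental concern)."""
    return (X - X.mean(axis=0)) / X.std(axis=0)


def kmeans_pp_init(X, k, rng):
    """k-means++ seeding: later centers are drawn proportional to squared distance."""
    centers = [X[rng.integers(len(X))]]
    for _ in range(1, k):
        d2 = np.min([((X - c) ** 2).sum(axis=1) for c in centers], axis=0)
        centers.append(X[rng.choice(len(X), p=d2 / d2.sum())])
    return np.array(centers)


def kmeans(X, k=3, n_init=10, max_iter=100, seed=0):
    """Lloyd's algorithm with restarts; returns labels of the lowest-inertia run."""
    rng = np.random.default_rng(seed)
    best_labels, best_inertia = None, np.inf
    for _ in range(n_init):
        centers = kmeans_pp_init(X, k, rng)
        for _ in range(max_iter):
            dist = ((X[:, None, :] - centers[None, :, :]) ** 2).sum(axis=2)
            labels = dist.argmin(axis=1)  # assign each respondent to the nearest center
            new_centers = np.array([
                X[labels == j].mean(axis=0) if np.any(labels == j) else centers[j]
                for j in range(k)
            ])
            converged = np.allclose(new_centers, centers)
            centers = new_centers
            if converged:
                break
        inertia = ((X - centers[labels]) ** 2).sum()
        if inertia < best_inertia:
            best_labels, best_inertia = labels, inertia
    return best_labels, best_inertia


# Synthetic scores for three hypothetical segments (control, concern).
rng = np.random.default_rng(42)
blobs = [rng.normal(c, 0.3, size=(100, 2)) for c in [(3, 0), (0, 3), (3, 3)]]
X = standardize(np.vstack(blobs))
labels, inertia = kmeans(X)
print(np.bincount(labels))
```

Standardizing first keeps either scale from dominating the distance metric; the number of clusters would in practice be chosen with a fit criterion rather than fixed in advance.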

Figure 2. Estimated feature partworth utilities with 95% confidence intervals. These utilities are the coefficient estimates from a multinomial logistic regression that uses dummy coding of the features and models the relationship between the feature level utilities and the observed choice of one option over other options in a choice set (see main text for the fitted model). Transparency low = basic operating principles are disclosed, transparency high = application provides information on how it arrived at a recommendation/decision, Energy = the external energy use of an AI assistant from 10 queries, expressed in the hours an energy-saving light bulb could be run. Group 1 = high only on desire for control, group 2 = high only on environmental concern, group 3 = high on desire for control and environmental concern.

A first general finding from the subgroup analyses is that there are hardly any differences between the subgroups. It seems that dispositions that are theoretically highly relevant for people's perceived feature importance regarding the presented AI assistants do not emerge at all as relevant conditioning factors in the analysis. Desire for control does not matter for how much people value the transparency of these assistants. Equally, environmental concern does not affect how much people value the energy efficiency of AI assistants, a finding that is in line with results from earlier studies showing a well-known attitude-behavior gap, i.e., between pronounced environmental concern in Germany and environmental behavior and consumption (Gellrich, 2021).

There are only two discernible differences concerning the created subgroups. First, subgroup one (only high on desire for control) appears to be more price sensitive than the others. Second, the third subgroup (high on

This difference does not seem to be due to either the greater environmental concern or the greater desire for control of this subgroup, because in that case we would see a similar pattern for at least one of the other subgroups. Based on variables capturing political attitudes and socio-demographics included in our survey, we can explore, at least to some extent, what might be special about group three. We do so by comparing the three groups with respect to their political positions on pro-welfare versus lower taxes and on pro-environment versus pro-growth, as well as their age, sex, and level of formal education (see Supplemental material A7). We find that group three, i.e., those who place greater value on explainable AI, stands out by much more clearly preferring environmental protection over economic growth. It therefore seems that politically more progressive individuals care more about high transparency of AI assistants. This moderating effect is also statistically significant when a binary environmental policy stance variable, recoded at the median (above the median = 1), is included as an interaction term in the multinomial logistic regression (see Supplemental material A8). Again, it is striking that the partworth utility of higher energy use is not significantly different from zero for those with a strong pro-environment stance.
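The median split and the interaction terms can be sketched as follows. Because a respondent-level variable is constant across the options of a choice set, it cancels out of a multinomial logit on its own and only has an effect through products with option attributes (or with the no-choice constant). The function names are hypothetical, and which feature dummies were interacted in Supplemental material A8 is not stated here.

```python
from statistics import median


def median_split(scores):
    """Recode a continuous stance score: 1 if strictly above the sample median, else 0."""
    m = median(scores)
    return [1 if s > m else 0 for s in scores]


def with_interactions(option_row, moderator):
    """Append option_row x moderator products to one option's dummy-coded row.

    moderator is the respondent's 0/1 stance; it is constant within a choice set,
    so it only enters the model through these products.
    """
    return list(option_row) + [x * moderator for x in option_row]


stance = [1.0, 2.5, 3.0, 4.0, 5.0]   # hypothetical pro-environment scores
dummies = median_split(stance)       # [0, 0, 0, 1, 1]
row = [0, 1, 1, 0]                   # hypothetical dummy-coded option
print(with_interactions(row, dummies[4]))  # [0, 1, 1, 0, 0, 1, 1, 0]
```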

As the negligible importance of energy efficiency deviates from the significant negative partworth found with the student sample in our pretest, we tried to replicate these findings with a subgroup from our representative sample that comes closest to the student group: those with at least higher-tier secondary education, aged 18 to 25 and above the third quartile on the environmental concern variable. For this subgroup, we do indeed find a significant negative partworth for the least efficient energy level (see Supplemental material A9). But even in this group, the importance of energy efficiency is still comparatively low. A further subgroup analysis also indicates that groups which are less versus more likely to show a high price sensitivity, i.e., at least higher-tier secondary education and a market-liberal socio-economic policy stance versus lower formal education and a pro-redistribution policy stance, exhibit no clear differences regarding their partworths (see Supplemental material A10). In other words, there is a generally low willingness to accept a price for the transparency and energy efficiency of AI assistants.

Discussion

Our findings provide important insights into how much people care about the transparency and the environmental sustainability of AI consumer applications. The results show very clearly that people do not care about the energy efficiency of AI assistants. And while they do value explainable AI, i.e., high transparency of the AI assistants, this feature barely offsets even a monthly price of 1.99 Euros compared to no costs. Our findings are hence in line with previous work showing that people value the transparency of AI (Shin, 2021; Shin and Park, 2019), but our design specifically takes into account the tradeoffs that people make. The results are also largely consistent across three theoretically meaningful subgroups, as we find no evidence that people's desire for control and their environmental concern increase their demand for more transparent and environmentally sustainable AI, respectively.

These results need to be seen against the backdrop of several limitations. First, we have kept our choice design to a manageable amount of four features; other configurations with different or additional features would be conceivable. Our study, however, focused on features that are of particular theoretical interest (transparency and energy efficiency) and can be expected to be central in people's calculus (usefulness and price). Besides, the results can at least be said to hold true for these features and their tradeoffs.

Second, while personal AI assistants are suitable for creating choice experiments as they are comparatively close to people's everyday lives, they are only one form of AI with which people may come into contact. Specifically, AI assistants are mainly designed for convenience and help people in situations in which stakes are low, unlike other forms of AI that, e.g., inform health decisions. This might be one reason why people are not willing to pay a small fee for transparent and environmentally sustainable AI assistants. In areas where the stakes of AI decisions or recommendations are higher, people may well care more about the transparency of AI systems than they do with personal assistants. For instance, one can expect this to be the case in the area of health (Juravle et al., 2020), but also in other settings where it is not the individual but the state that uses AI, such as tax fraud detection (Kieslich et al., 2021) or the criminal justice system (Waggoner et al., 2019). Similarly, transparency may be valued more where individuals are not the users of a service but its object, as in recruiting decisions (Langer et al., 2021). The value of transparency may thus well rise in proportion to the severity of the impacts that individual AI system decisions potentially have. The importance of energy efficiency, on the other hand, seems unlikely to differ between contexts. We see no reason why one would rate the energy consumption of an AI differently when using it to, for instance, detect fraud compared to the setting chosen in our study. Moreover, the size of the energy footprint matters less where AI systems are not adopted on a huge scale and thus matters more for AI assistants as investigated in this study. Since many future AI applications can be expected to become prevalent in the realm of consumer appliances, our findings are likely to travel to other applications and contexts and should be tested there.

Third, AI assistants in the form of apps usually come for free. That people have become accustomed to receiving services on the internet in exchange for data instead of money seems to amount to an important psychological anchor that may not similarly exist with other AI-based products and services in fields like medicine or legal tech. It does mean, however, that there exists a major obstacle to introducing transparent and sustainable AI applications for a small to moderate fee in domains where it is common to pay for services with data.

All in all, our findings have important implications for policymakers and educators and are also relevant for businesses that consider offering explainable and energy efficient AI. They strongly suggest that transparency and environmental sustainability—which are both seen as important goals among experts and policymakers—will hardly be induced through demand from consumers for corresponding features of AI applications. Notably, our survey indicates that although people are not generally willing to pay a price for transparent and green AI, they do support regulation of AI. Additional survey items show an average support for restrictive regulation of AI transparency via negative financial incentives, legal standards, and bans above the mid-point of a 5-point scale: with mean values of 3.3, 3.9, and 3.6 respectively. The same is true concerning the regulation of the ecological sustainability of AI, with average values of 3.4, 3.7, and 3.5 respectively. These results suggest that citizens see the need for regulation beyond information-based measures, even though their stated consumption choices regarding transparent and green AI are hardly in line with their attitudes.

In view of these findings, it seems doubtful that simply placing the burden on “the informed consumer” will lead to a demand for transparent and sustainable AI. The challenges with regard to AI use are comparable to those in the realm of privacy, where placing responsibility on the individual alone hardly amounts to an effective safeguard of people's informational autonomy (Acquisti, 2009; van Ooijen and Vrabec, 2019). In a similar vein, autonomy-preserving and environmentally sustainable adoption of AI will hardly be driven by consumer choice. These conclusions gain additional weight because, in our choice design, we confronted participants with features such as energy use of which they are usually not even made aware; in everyday purchasing decisions, such features are likely to be even less salient. Furthermore, our study was fielded in a society that is comparatively postmaterialist and environmentalist, which makes it a most likely case in which we would expect to find notable demand for transparent and environmentally sustainable AI. The recent EU policy initiatives mentioned at the outset clearly have the potential to empower consumers with better information, for instance, through eco-labels, and to offer them more choice. However, it seems unlikely that consumers will make use of these possibilities without at least greater awareness of, and strong concern for, the energy footprint of AI and the value of transparency. Consumers may thus find themselves opting for offers that have undesirable external effects that they themselves would like to avoid.

To shift behavior toward the use of more transparent and sustainable AI, other policies that incentivize corresponding products and services will be needed. Raising awareness of the value of transparency features and of the environmental impact of AI through education, information campaigns, and product labels is only one set of instruments in the policymakers' toolbox, and one that still places the burden on consumers. Against the backdrop of our findings, supply-side measures and regulations that place responsibility on a variety of actors might be more effective. For instance, competition-based instruments that require those hosting or operating AI applications to include these applications in emissions trading systems or to adhere to product-related top-runner/eco-design approaches might be suitable options (Siderius and Nakagami, 2013). Other institutional solutions to foster green tech could include self-regulation by companies and (co-)regulation by state actors that create positive incentives for the responsible development of AI (Tomitsch, 2021). If AI, including in the realm of consumer appliances, continues to show an exponentially growing impact on energy and resource use, there may also be a need for binding legal standards to achieve the desired trajectory of AI development and adoption (Taddeo and Floridi, 2018; van Wynsberghe, 2021). Otherwise, there is a great risk that history repeats itself, with the tech industry now taking the place of the oil industry in “insinuating that climate change was the doing of individuals rather than companies” (Tomitsch, 2021).

The above findings also point to possible future research. First, as already mentioned, we focused on one specific aspect of ecological sustainability (

Second, while our focus has deliberately been on specific features that can be connected to recent policy initiatives regarding explainable AI and green tech at the EU level but also at other (inter-)national levels, future work could also cover other important features of AI. These could include, for example, the certification of algorithmic fairness, which can be seen as a social component of sustainability. Third, the findings above highlight a need to investigate which policy instruments and which policy mix are most suitable for strengthening demand for transparent and environmentally sustainable AI. Fourth and finally, we chose Germany as a case where societal and political values present favorable conditions for identifying demand for transparent and environmentally sustainable AI. Hence, the findings may look different in other settings, and it remains to be seen whether respondents in countries like the US, which to date has no federal privacy law, would value transparency more than German consumers do. Being subject to EU data protection law might lead citizens to value the transparency of AI more highly, but the opposite might also hold true, as individuals might consider this issue to be sufficiently addressed by the existing regulatory framework.

It is apparent that more work needs to be done to understand and steer the social and environmental impact of AI-based technology. Today, we might be at a critical juncture, since the convergence of the twin challenges of sustainability and digital change means that crucial decisions for the future must be made within the next few years. When thinking about AI, more “attention to the environmental impact is mandatory” (van Wynsberghe, 2021: 217), as is attention to the societal repercussions of the technology and to the question of individual autonomy in a society in which human lives are becoming ever more intertwined with forms of AI.

Supplemental Material

sj-pdf-1-bds-10.1177_20539517211069632 - Supplemental material for Consumers are willing to pay a price for explainable, but not for green AI. Evidence from a choice-based conjoint analysis

Supplemental material, sj-pdf-1-bds-10.1177_20539517211069632 for Consumers are willing to pay a price for explainable, but not for green AI. Evidence from a choice-based conjoint analysis by Pascal D König, Stefan Wurster and Markus B Siewert in Big Data & Society

Footnotes

Acknowledgments

We thank the three anonymous reviewers and the editors of Big Data & Society for their valuable comments and suggestions. We extend our gratitude to the students who participated in the pretest of the survey. Last but not least, we also thank Lea Buchholz and Leonardo Giannotti for their feedback and proofreading at various stages.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.


Notes

References
