Abstract

This contribution aims at proposing a framework for articulating different kinds of “normativities” that are and can be attributed to “algorithmic systems.” The technical normativity manifests itself through the lineage of technical objects. The norm expresses a technical scheme’s becoming as it mutates through, but also resists, inventions. The genealogy of neural networks shall provide a powerful illustration of this dynamic by engaging with their concrete functioning as well as their unsuspected potentialities. The socio-technical normativity accounts for the manners in which engineers, as actors folded into socio-technical networks, willingly or unwittingly, infuse technical objects with values materialized in the system. Surveillance systems’ design will serve here to instantiate the ongoing mediation through which algorithmic systems are endowed with specific capacities. The behavioral normativity is the normative activity, in which both organic and mechanical behaviors are actively participating, undoing the identification of machines with “norm following,” and organisms with “norminstitution”. This proposition productively accounts for the singularity of machine learning algorithms, explored here through the case of recommender systems. The paper will provide substantial discussions of the notions of “normative” by cutting across history and philosophy of science, legal, and critical theory, as well as “algorithmics,” and by confronting our studies led in engineering laboratories with critical algorithm studies.

Keywords

This article is a part of special theme on Algorithmic Normativities. To see a full list of all articles in this special theme, please click here: https://journals.sagepub.com/page/bds/collections/algorithmic_normativities.

Introduction

It is generally agreed upon that “algorithmic systems” implementing machine learning techniques have significant normative effects upon the ways items are recommended and consumed, the ways choices are taken and justified. However, the aspect and extent of those “normative effects” are subject to much disagreement. Some claim that “algorithmic systems” significantly affect the way norms are conceived and instituted. Others maintain that algorithmic systems are actually black boxing more traditional normative processes (Diakopoulos, 2013). The problem partly lies in accounting for the different kinds of “normativities” that are, and can be attributed to, “algorithmic systems.” Our paper proposes a substantial discussion of “algorithmic normativities” by accounting for the practices we witnessed in diverse engineering laboratories and by confronting literature in sociology, history and philosophy of technology (see for instance Beer 2017 and Ziewitz 2016). Before going any further let us begin by providing working definitions of “algorithm” and “norm,” the value of which should be measured by the conceptual and practical relations they give rise to when thinking about, or interacting with, algorithmic systems.

From an engineering standpoint, algorithms can be helpfully approached as stabilized and formalized computational techniques that scientists and engineers recognize and manipulate as an object throughout a variety of disparate

From a generic standpoint, vital, social or technical norms can be approached either as a

The following pages further investigate how distinct kinds of normativities come into play through these socio-technical assemblages we call algorithmic systems.

The first section exposes some of the minute actions through which engineers willingly or unwittingly infuse technical systems with social norms. The design of a surveillance system will serve to unfold the ongoing mediations (e.g., collecting data, defining metrics, establishing functions) that allow engineers to normalize the behaviors of algorithmic systems (e.g., how algorithms improve their ability to discriminate between threatening and nonthreatening behaviors). The second section shows how successive changes in algorithms’ structures and operations reveal distinct kinds of The third section proposes to conceive of learning machines as exhibiting a genuine social or

The conceptual focus of the paper does not dispense us from indicating the empirical sources thanks to which we ground our developments. The surveillance system’s empirical material derives mainly from ethnographic data collected between 2012–2015 and 2016–2019 while participating in two Research and Innovation projects (e.g., consortium meetings, interviews with engineers, laboratory visits). The neural network’s empirical material is chiefly informed by readings of original technical literature (Rosenblatt, 1962), contemporary technical literature (Bishop, 2006; Mitchell, 1997) and published historical accounts (McCorduck, 1983; Crevier, 1993; Olazaran, 1993; Pickering, 2010). The recommender system’s material freely draws upon knowledge acquired along an empirical study following a computational experiment on recommender systems conducted between 2016 and 2017, as well as of the consultation of other more traditional resources such as scientific literature and technical blogs.

Socio-technical normativity and surveillance system

The recent applications of machine learning techniques in assisted and automated decision-making raise hopes and concerns. On the one hand, there are hopes about the possibility to have an informational environment tailored to specific ends or the possibility to increase a firm’s commercial revenues. On the other hand, there are concerns about the inconsiderate discriminations machine learning results might conceal or the unprecedented possibilities for governing ushered in by these techniques. Such concerns appear to be exacerbated by the patent difficulties to hold beings accountable, either because the responsibility of the decision is not attributable in any straightforward way, whether to an algorithmic system or to a moral person, or because the understanding of processes leading to case-specific decisions is threadbare (Burrell, 2016).

We propose to address these key issues by identifying

Funded by the European Commission between 2012 and 2015, this European “Research and Innovation” Project gathered a dozen private companies, research universities, and public institutions with the aim of designing a system able to automatically detect threatening behaviors. This rather abstract endeavour inevitably concealed multiple concrete technical challenges, the most predominant of which were the combination of visual and thermal cameras with microwave and acoustic sensors, as well as the development of robust and efficient detection, tracking and classification algorithms (i.e. working in real-time within uncontrolled environments). The consortium rapidly pinned down two “use cases” that would put them to work: a Swedish nuclear power plant (the Oskarshamns Kraftgrupp AB) and a Swedish nuclear waste storage facility (the Centralt mellanlager för använt kärnbränsle). The task impelled the engineers to undertake a socio-technical inquiry enabling them to spell out the various qualities with which users (i.e. the security workers) expected the system to be endowed. 3

Understandably, the security workers expected the system to maintain the number of false alarms below a certain threshold—otherwise the system would end up

The very notions of “false positive” or “false negative” suppose that an algorithm-based classification can be compared to, and overturned by, a human-based classification. This human-based classification, acting as the reference norm against which all machine-based classifications are to be evaluated, is called the

How are these ground truth datasets concretely constituted? Constituting relevant datasets for algorithmic systems presents a genuine socio-technical challenge. Building on their socio-technical inquiry—which involves reading regulations, visiting sites, and interviewing workers—the engineers envisioned scenarios that would seek to encompass the range of threatening and inoffensive behaviors the system was expected to handle (e.g., a jogger running near the nuclear power plant, a boat rapidly approaching the waste storage facility, a truck driving perpendicular to one of the site’s fences, etc.). The engineers then gathered on two occasions, each time for about a week, to enact and record the norms scripted in their scenarios (Figure 1). The scene itself is worth visualizing: engineers awkwardly running through an empty field sprinkled with cables and sensors, mimicking the threatening or inoffensive behaviors they want their algorithms to learn. Returning to their laboratories, they spent a considerable amount of time annotating each frame of each sequence produced by each sensor, e.g. circling moving bodies, labeling their behaviors, etc. 4

With their metrics defined and their data collected, engineers can, at last,

We have argued here—against growing claims that contemporary machine learning practices and techniques are progressively slipping through our hands—that approaches combining empirical and critical perspectives are likely to provide us with the means to productively engage with engineering practices, while also opening up possibilities of critique and intervention. The argument essentially rested on two claims. On the one hand, the “metrics,” which mathematically express the system’s socio-technical norms, equip engineers with a way of assessing the comparative values of different algorithms. On the other hand, the “ground truth dataset,” which comprises the actual and possible instances the system is supposed to handle, provides engineers with local norms for telling the algorithm how to learn in specific instances.

Technical normativity and artificial neural networks

The recent craze surrounding “machine” or “deep” learning is usually explained in terms of “available data” (i.e. infrastructural changes made data collection accessible), “algorithmic advances” (i.e. technical changes made data processing possible) or “economic promises” (i.e. applications will make data particularly valuable). In focusing on the algorithmic advances, the following paragraphs propose to further explore three

The earliest trace of the artificial neural network scheme can be found in a technical report authored by the American psychologist Frank Rosenblatt (Rosenblatt, 1962) when based at the Cornell Aeronautical Laboratory. By that time, several researchers were interested in exploring the nature of learning processes with the embryonic tools of automata theories. Distinctively, Rosenblatt decided to address the problem of learning processes by both investigating neurophysiological systems Personal sketch of the data collection field observed in United Kingdom in June 2014. Personal sketch of a slide used by Robin Devooght on June 2017. Diagram of artificial neural network within an environment. Diagram of the parts composing artificial neural networks.

It has been convincingly argued that the singularity of technical objects is best grasped through the schemes describing their operations within different environments, rather than the uses to which they are subject or the practicalities of their actualization (Simondon, 1958: 19–82). Thus, the singularity of artificial neural networks lies in their technical scheme, rather than the general regression, classification or clustering purposes they serve, or their specific implementations in Python’s Theano or TensorFlow libraries. A technical scheme describes the

In the case of artificial neural networks, the scheme always couples the algorithm to an environment (Figure 3). In Rosenblatt’s design, light sensors allow the network to capture signals from its environment that end up being classified and light emitters enable the network to display the results of its classifications. Crucially, Rosenblatt articulated the artificial neural network and its environment with a genuine feedback mechanism, which would work as an experimental controller for correcting the emitter’s output until it produced the desired response.

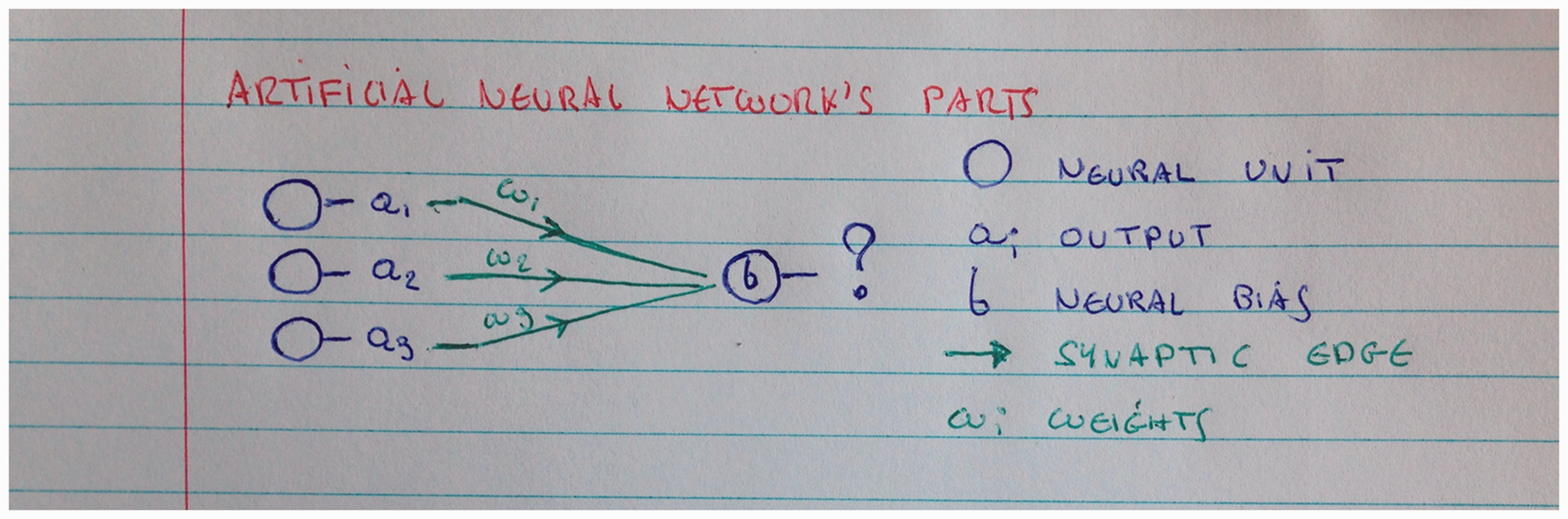

The artificial neural network’s scheme consists of two main parts: neural units and synaptic edges (see Figure 4). Each neural unit

The first operation is prediction. It typically moves from left to right and leaves the network unchanged (see Figure 5). A cascade of operations combines the input signal and the network’s parameters (i.e. synapses’ weights and neurons’ biases) in order to produce specific output values—depending on the problem, the signal is classified as “triangle” or “circle,” as “threatening” or “nonthreatening,” etc.

Diagram of the prediction’s operation in artificial neural networks.

The second operation is learning. It typically moves from right to left and alters the network’s parameters (see Figure 6). A cascade of operations compares the outputs’ values (i.e. the datasequence the algorithm classified) and the ground truth information (i.e. the sequence data the engineer annotated) in order to update and correct the network’s parameters (i.e. synapses weights and neurons’ biases).

Diagram of the learning’s operation in artificial neural networks.

More than a mere description encompassing different but related technical objects, this technical scheme opens a field of possible manipulations for engineers, ranging from minor modifications (How many neurons? How many synapses? etc.) to major modifications, which lead to new lineages of artificial neural networks (What if the neurons’ activation function changes? What if peculiar synaptic connections are allowed? etc.). Thus, technical schemes are always both objectal and imaginal (Beaubois, 2015). If technical objects “have a life of their own,” to borrow Ian Hacking’s (1983: 262–275) words, the problem then lies in accounting for their schemes’ becoming, as it mutates through but also resists, successive inventions (Leroi-Gourhan, 1945; Simondon, 1958: 19–82). The following paragraphs briefly sketch two other episodes neural networks went through between the early 1950s and the late 2000s, further illustrating this dynamic of invention and resistance.

The experimental, mathematical, and commercial successes of artificial neural networks rested upon their ability to adjust synapses’ weights and neurons’ biases in order to recognize incoming patterns—i.e. to learn to classify. However, the limitations of early artificial neural networks rapidly became apparent and problematic (see Olazaran, 1993: 347–350). The single-layer artificial neural networks proved incapable of solving important families of problems, e.g. they could not learn to recognize resized or disconnected geometrical patterns (Rosenblatt, 1962: 67–70). On the other hand, learning capacities of multilayer artificial neural networks appeared to depend more on engineering skills than on their intrinsic qualities, e.g. there existed no procedure guaranteeing that the learning would converge (see Olazaran, 1993: 396–406). These combined limitations to algorithmic learning capacities are traditionally seen as the onset of a significant drop in financial and scientific interest in artificial neural networks and artificial intelligence.

It was not before the mid-1980s that a satisfying learning rule, named “backpropagation,” would be identified for multilayer artificial neural networks—more or less simultaneously and independently by Paul Werbos, David Rumelhart, and Yann Le Cunn (Olazaran, 1993: 390–396). In a nutshell, the learning algorithm measures the difference between the network’s and the environment’s responses (i.e. error function); it then computes the relative contribution of each synapse and neuron to the measured error (e.g., chain rule’s partial derivatives); and finally updates the synapses’ weights and neurons’ biases so as to reduce the overall error. The learning problem is thus recast as an optimization problem, in which the main objective is to minimize classification errors. Backpropagation’s mathematical scheme significantly extended artificial neural networks’ problem-solving capacities.

Thereafter, in the late 1980s multilayer artificial neural networks gave rise to interesting applications in areas such as natural language processing, handwriting recognition, etc. (Olazaran, 1993: 405–411). However, the computational resources required during the learning phases quickly presented a significant impediment: depending on the number of layers, on the size of the datasets and on the power of the processors, it could take up to several weeks to find weights and biases minimizing classification errors. Thus, the applications using artificial neural networks did not stand out from other machine learning algorithms (e.g., “k-nearest neighbors,” “random forests,” “support vector machines,” “matrix factorization”). Their more recent proliferation—often referred to as “deep learning”—appears to be intimately tied to advent and generalization of massively parallel processing units.

In the late 1990s, Nvidia Corporation, one of the largest hardware developers and manufacturers for video games, equipped their graphic cards with specific-purpose processors. Contemporary video games typically involve large-scale mathematical transformations that can be performed in parallel, e.g. the changing values of the millions of pixels displayed on our screens are usually processed simultaneously, instead of being calculated sequentially. In the late 2000s, researchers in computational statistics and machine learning revealed the mathematical affinities shared by the graphical operations in video games and the learning operations in pattern recognition. Indeed, both involved countless matricial calculations that could be distributed over several processing cores and executed independently. This rapid outline indicates the extent to which material developments brought forth or actualized latent algorithmic capacities.

Thus far, our approach has emphasized three kinds of technical norms algorithms impose on engineering practices. The first has to do with the algorithm’s imaginal affordances in relation to the development of technical systems—in this case, a single abstract representation of neural processes brought about multiple lineages of algorithms. The second deals with the mathematical properties of the algorithm’s structures and operations—in this case, the invention of a convergent algorithm significantly extended the abilities of multilayer artificial neural networks. The third bears on the interplay between material constraints and computational possibilities—in this case, the advent of Graphical Processing Units transformed the range of problems algorithms can solve. Thus, the genealogy of artificial neural networks led us not only to a concrete account of algorithmic processes, but more importantly still, to a comprehensive understanding of the imaginal, mathematical and material dynamics that drive their technical becoming.

Behavioral normativity and collaborative filtering

It is generally observed that computers blur an entrenched ontological dichotomy between two kinds of beings: living organisms and automated machines (see Fox-Keller, 2008; 2009). Kant’s far-reaching conception of self-organization famously epitomizes the matter at stake: organisms’ norms are thought to be intrinsic to their activities—organisms are, in this sense, “norm-instituting” beings—and machines’ norms are thought to be extrinsic to their activities—machines are, in this sense, “norm-following” beings (Barandiaran and Egbert, 2014). The most convincing conceptual markers grounding this longstanding dichotomy are generally to be found in the widely shared reluctance to attribute “problems” and “errors” to behaviors exhibited by machines, computers, and algorithms (Bates, 2014). Thus, technical errors are usually thought to be

Contemporary algorithmic systems appear to further complicate any a priori partitions between distinctively organic and machinic behaviors. Indeed,

The case of collaborative filtering recommender systems deployed on commercial platforms such as YouTube or Netflix can help us ground these considerations (Seaver, 2018). In a nutshell, these systems seek to foster unprecedented interactions between items and users by looking at past interactions between similar items and users. Practically speaking, the

The distinction between norm-instituting and norm-following can be further understood as a difference between two processes of determination. Most technical entities, it has been argued, exhibit a relative “margin of indetermination” that makes them more or less receptive to “external information” and responsive in terms of “internal transformations” (see Simondon, 1958: 134–152), e.g. the progressive wear and tear of a bolt connecting metal parts allows for their mutual adjustment, an engine’s governor constantly regulates the train’s speed despite changes of load and pressure, a warehouse logistically adapts to a changing book order, a recommender system responds to newly received interactions. The distinction between norm-instituting and norm-following can thus be reframed as a distinction between two kinds of determination: a determination will be said “convergent” or “divergent” depending on whether it

In this light, the singularity of machine learning applications should be understood in terms of the relative significance of the margin of indetermination exhibited. Indeed, in most machine learning applications, the behavior of the algorithmic system (i.e. the specific predictions displayed) may be periodically transformed (i.e. the values of the model are updated) depending on the relevant incoming information (i.e. particular sets of data). By and large, engineering work is dedicated to restraining the recommender system’s variations—instituting metrics, defining error functions, cleaning datasets—and aligning them with the companies’ interests. On the one hand, the determinations of learning may be seen as converging toward rather specific pre-instituted norms. However, the actual learning process, as Adrian Mackenzie (2018: 82) has argued, has more to do with stochastic function-finding than with deterministic function-execution—the resulting model corresponds to one among many possible configurations (see also Mackenzie 2015). Thus, and on the other hand, the concrete determinations of the system’s behavior can hardly be seen as converging toward any pre-instituted norms. 8

Let us take a closer look at the actual processes that unfold when producing recommendations. Collaborative filtering techniques typically seek to achieve a delicate balance between sameness (always recommending similar items) and difference (only recommending different items) solely by looking at and drawing on patterns of interactions between users and items. The collaborative machines—that may well be instantiated by many different techniques, such as k-nearest neighbors, matrix factorization, neural networks—are usually interpreted by recommendation engineers as capable of producing models that represent

If, indeed, the very process of learning rests on the possibility of erring (Vygotsky, 1978), we propose, following David Bates (Bates and Bassiri, 2016; see also Malabou, 2017), to seize both entangled senses in which erring may be understood: erring as “behaving in unexpected ways” (i.e. errancy), and erring as “susceptible of being corrected” (i.e. error). In both cases, erring supposes that a relation between an individual and an environment be experienced as problematic, that the norms instantiated within behavioral performances are experienced as only certain ones among many possible others. The passage from error to errancy depends on recognizing social activity as open-ended enough for error to appear not only as the mere negation of a norm, but rather as the affirmation of a different norm. We would like to suggest, here, that a more cautious look at learning processes could help us frame these algorithmic systems

Two remarks should suffice here to pin down what is at stake. First, the overall learning process is open-ended: although each learning step stops when the model’s parameters minimize error and overfitting, the overall learning process consists of an indefinite sequence of learning steps, which are periodically picked up again once there is enough fresh data from incoming interactions. Second, the recommendations are “moving targets” (Hacking, 1986): the learning process is shaped by interactions as much as it shapes interactions, i.e. recommender systems learn

The algorithmic system can therefore be seen as genuinely

Thus, the notion of behavioral normativity leads us to reconsider how the redistributions of norm-following and norm-instituting behaviors between humans and machines actively reshape social activities. We might ask then: “What is learning and where is learning occurring within the behavioral distributions of a given activity?” In doing so, the primary

The tension experienced by most people when confronted with technical systems can, in part, be understood in terms of the difficulties they have in experiencing and making sense of how, where, and when machine behaviors perform with ours. The cultural images of technical systems have traditionally allowed us to stabilize the perceptive and motor anticipations determining our relationships with them, e.g. the individual body performing with a simple tool or the industrial ensemble organizing human and machine labor (Simondon, 1965-1966; Leroi-Gourhan, 1965). The problems, in the case of learning machines, are tied to the variable distributions of human and machines behaviors (Collins, 1990: 14) and to the unfamiliar ability algorithmic systems have of instituting norms. The tension can thus in part be reframed as the problem of understanding these behaviors in relation to the social activities within which they unfold and that they take part in shaping.

The concept of behavioral normativity foregrounds the importance of social activity and the afferent margin of indetermination it allows behaviors to inform. If there is no room left for error, then all behaviors that do not execute or follow the established, programmed norm will be disregarded or repressed as useless or inefficient. If, on the other hand, those behaviors that do not perform as expected are attributed value and attended to, they can participate in instituting a new norm-following dynamic. Thereby, machine learning forces us to reconsider long standing divides between machines’ and organisms’ behaviors, between those behaviors that repeat and those that invent norms. Our conceptual proposition invites alternative and more demanding normative expectations to bear on engineering and design practices whereby the margin of indetermination of an algorithmic system would be increased rather than reduced.

Conclusion

The overall aim of this paper was to approach algorithmic normativities in a different light, with different questions in mind, with different norms in sight.

The first investigation into socio-technical normativity showed how engineering practices come to stabilize and embed norms within certain systems by setting up plans or programs for learning machines to execute (metrics, ground truth dataset, optimization function). The socio-technical perspective, although a necessary starting point, is insufficient if taken in and of itself. Indeed, it demands that we be able to define what is “social” and what is “technical” within a given system, and how their given normativities come to be entangled. In this light, we proposed to qualify social and technical normativities in terms of operations they perform, rather than properties they possess.

This led us to look at different algorithmic systems from the standpoints both of their

This normative pluralism can help understand how contemporary algorithmic systems, comprised of multiple structures and operations, simultaneously fulfill engineering aims, express technical resistances and participate in ongoing social processes. Generally speaking we can advance that:

the aims engineers pursue always depend upon certain algorithmic capacities, in this sense algorithmic techniques normalize engineering practices (i.e. the objective function always depends on an efficient learning rule); the socio-technical system’s behavior is subject to engineering aims that normalize its learning activity (i.e. the metrics and the ground truth datasets determine what counts as an error); the algorithmic system’s learning processes unfold genuine norm instituting behaviors (i.e. the system’s outputs periodically affect the very activities that have to be learned).

The value of our approach lies in its ability to provide a nuanced account of what is too often presented as

Footnotes

Acknowledgement

We would like to thank our friends and colleagues Nathan Devos and François Thoreau for discussions and criticisms, the two anonymous reviewers that made very productive critiques and suggestions, as well as the editors of this issue, Francis Lee and Lotta Lotta Björklund Larsen.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This paper was in part made possible thanks to the support of the Belgian FRS-FNRS funded research project “Algorithmic governmentality” which sponsored both Jérémy Grosman's and Tyler Reigeluth's PhD research. In addition, J. Grosman benifited from H2020 funding made available by the European Commission for the “Privacy Preserving Protection Perimeter Project” and “Pervasive and User Focused Biometrics Border Project”.