Abstract

The rapid expansion of Artificial Intelligence (AI) in the workplace has significant political implications. How can we understand perceptions of both personal job risks and opportunities, given each may affect political attitudes differently? We use an original, representative survey from Great Britain to reveal: (i) the degree to which people expect personal AI-based occupational risks versus opportunities; (ii) how closely this perceived exposure corresponds to existing expert-derived occupational AI-exposure measures; (iii) the social groups who expect to be AI winners and AI losers; and (iv) how personal AI expectations are associated with demand for different policies. We find that over 1-in-3 British workers anticipate being an AI winner (10%) or loser (24%) and that, while expectations correlate with classifications of occupational exposure, factors such as education, gender, age, and employment sector also matter. Politically, self-anticipated AI winners and losers show similar support for redistribution, but they differ on investment in education and training and on immigration. Our findings emphasise the importance of considering subjective winners and losers of AI: these patterns cannot be explained by existing occupational classifications of AI exposure.


The potentially transformative impact of artificial intelligence (AI) on global economies has been likened to a new industrial revolution (Devlin, 2023), with some estimates suggesting that up to 40% of jobs globally (60% in rich countries) are exposed to these technologies (Cazzaniga, 2024). How could this shape politics? There is ample evidence that economic transformations can have major electoral consequences. Long-term shifts such as the expansion of home ownership, graduate-professional employment and the female labour force have reshaped politics (Beramendi et al., 2015; Iversen and Soskice, 2019). Rapid shifts such as deindustrialisation, globalisation, and automation are associated with influential economic and cultural grievances (Baccini and Weymouth, 2021; Green et al., 2022; Owen and Johnston, 2017; Scheiring et al., 2024; Thewissen and Rueda, 2019; Walter, 2017). These shifts produce winners and losers, and the political mobilisation of both can be electorally effective (Colantone and Stanig, 2018; Frey et al., 2018; Gallego, Kuo, et al., 2022; Gallego, Kurer, et al., 2022; Steiner et al., 2024; Van Overbeke, 2024; Walter, 2017).

The existing literature identifies the political consequences of technology-induced employment shocks more broadly (see Gallego and Kurer, 2022, for an overview), but there is currently little research on AI specifically. What does exist suggests that AI’s expansion could be effectively politicised (Borwein et al., 2024, 2025). AI might affect the employment prospects of very different sorts of citizens than previous waves of routine-biased technological change: several studies emphasise the exposure of highly educated, high-earning, knowledge-industry professionals (Felten et al., 2021; Gmyrek et al., 2023; Pizzinelli et al., 2023; Schendstok and Wertz, 2024; Webb, 2020; cf. Goos et al., 2014). This raises the potential for a more thorough realignment of the pro- and anti-redistribution coalitions than occurred with previous innovations.

This is more likely if exposure shapes perceptions of personal ‘winner’ or ‘loser’ status. Given that AI is an emerging transformation, and given variability in how people perceive economic threats generally (Green et al., 2024), it is crucial to understand perceptions of both risks and benefits, and whether researchers can be confident that occupational AI-exposure measures align with those perceptions. Such measures may or may not align with subjective assessments, but it is the latter that will drive any political consequences.

Accordingly, we use an original survey from Great Britain to investigate whether assessments of personal AI exposure are predominantly negative or positive; how closely those assessments align with expert-derived AI-exposure measures; which social and economic groups feel positively and negatively exposed; and the extent to which the political attitudes of these groups vary. We find that over 1-in-3 British workers anticipate being an AI winner (10%) or loser (24%) and that, while expectations correlate with objective occupational exposure, factors such as education, gender, and age also matter. Politically, subjective AI ‘winners’ and ‘losers’ are equally supportive of redistribution but differ on immigration and investment in education. These findings inform assessments of the potential political implications of AI.

Data and methods

We surveyed 4,249 currently employed respondents aged 18–69 who participated in Wave 1 of the nationally representative Nuffield-JRF Economic Insecurity Panel Survey (2024). 1

To gauge subjective AI exposure, we asked: ‘Do you think the following (“The use of Artificial Intelligence in my area of work”) will increase, decrease, or have no impact on your employment prospects?’, with responses coded from 1 = worsen my job prospects a lot (2 = a little) to 5 = improve my job prospects a lot (4 = a little). We classify as prospective ‘AI-winners’ those answering 4 or 5, and as prospective ‘AI-losers’ those answering 1 or 2, and compare both with the middle category (3), ‘have no impact on my job prospects’. We also present results for those who are uncertain (‘don’t know’).
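The grouping just described can be sketched as a simple mapping. This is a minimal illustration, assuming responses are stored as integers 1 to 5 with `None` standing in for ‘don’t know’; that encoding is our assumption, and the survey’s actual coding may differ.

```python
from typing import Optional


def classify_ai_expectation(response: Optional[int]) -> str:
    """Map the 1-5 AI job-prospects item to the analytic groups.

    Assumes None encodes 'don't know' (a hypothetical encoding).
    """
    if response is None:
        return "don't know"
    if response in (1, 2):  # worsen a lot / a little
        return "loser"
    if response == 3:  # have no impact on my job prospects
        return "no impact"
    if response in (4, 5):  # improve a little / a lot
        return "winner"
    raise ValueError(f"unexpected response code: {response!r}")


print([classify_ai_expectation(r) for r in [1, 3, 5, None]])
# ['loser', 'no impact', 'winner', "don't know"]
```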

To link respondents to existing expert-derived AI-exposure scores, their descriptions of their job titles and duties were matched to the UK government’s ‘SOC2010’ employment schema. With the assistance of established cross-walks (Dickerson and Morris, 2019; ONS, 2020), occupation codes were then mapped onto the US government’s ‘O*NET-SOC2010’ and the International Labour Organization’s [ILO’s] ‘ISCO-08’ schemas. 2 This enabled us to link respondents to the AI-exposure indices developed by Felten et al. (2021), Pizzinelli et al. (2023), and Gmyrek et al. (2023). These three measures reflect distinct conceptualisations of how jobs might be ‘affected’ by AI, as opposed to by automation or robotisation more generally (as captured by the ‘routine-task intensity’ measures used in many previous studies of technological change).
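The linking step amounts to a chain of lookups from a respondent’s SOC2010 code to a score in the target schema. The sketch below is illustrative only: the codes and scores are hypothetical placeholders, not entries from the actual cross-walks or indices, and we assume, for illustration, that when one SOC2010 code maps to several target codes their scores are averaged without weights (other schemes, such as employment-share weights, are possible).

```python
from statistics import mean
from typing import Optional

# Hypothetical crosswalk: a SOC2010 code may link to one or several target codes
# (O*NET-SOC or ISCO-08). Codes are placeholders, not real occupation codes.
SOC_TO_TARGET = {
    "SOC_A": ["T1", "T2"],
    "SOC_B": ["T3"],
}

# Hypothetical AI-exposure scores keyed by target-schema code.
TARGET_SCORES = {"T1": 1.5, "T2": 0.5, "T3": -0.5}


def exposure_for_soc(soc_code: str) -> Optional[float]:
    """Unweighted mean of the scores of all target codes linked to a SOC code.

    Returns None if the SOC code is unmatched or no linked code has a score.
    """
    linked = SOC_TO_TARGET.get(soc_code, [])
    scores = [TARGET_SCORES[code] for code in linked if code in TARGET_SCORES]
    return mean(scores) if scores else None


print(exposure_for_soc("SOC_A"))  # 1.0
print(exposure_for_soc("SOC_B"))  # -0.5
print(exposure_for_soc("SOC_X"))  # None
```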

The Felten et al. (2021) measure of AI Occupational Exposure [AIOE] scores jobs on the overlap between 10 expert-assessed potential capabilities of AI (e.g. language modelling or reading comprehension) and O*NET’s list of up to 52 abilities needed to perform occupations (e.g. oral expression or manual dexterity). The standardised index does not distinguish between AI technology that might ‘augment’ or ‘complement’ current human labour and AI that could substitute for it completely (‘automation’). 3
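A simplified sketch of an overlap score of this kind follows. As we read Felten et al.’s (2021) general approach, each ability’s exposure is its relatedness summed across AI capabilities, and an occupation’s score averages those ability exposures, weighted by how prevalent and important each ability is in that occupation, before standardising across occupations. All numbers are invented, only two capabilities and three abilities are used, and the level × importance weighting is our assumption to be checked against the original paper.

```python
from statistics import mean, pstdev

# Hypothetical relatedness scores between AI capabilities (rows) and
# O*NET-style abilities (columns). Values are invented for illustration.
ABILITIES = ["oral_expression", "written_comprehension", "manual_dexterity"]
RELATEDNESS = [
    [0.9, 0.8, 0.0],  # capability: language modelling
    [0.4, 0.9, 0.1],  # capability: reading comprehension
]

# Ability-level exposure: relatedness summed over AI capabilities.
ABILITY_EXPOSURE = [sum(column) for column in zip(*RELATEDNESS)]

# Hypothetical (level, importance) ratings for each ability, per occupation.
OCCUPATIONS = {
    "clerk": [(5, 4), (5, 5), (2, 1)],
    "plumber": [(2, 2), (2, 2), (6, 5)],
    "teacher": [(6, 5), (5, 4), (2, 1)],
}


def raw_exposure(ratings):
    """Average ability exposure, weighted by level x importance (assumed weighting)."""
    weights = [level * importance for level, importance in ratings]
    return sum(w * a for w, a in zip(weights, ABILITY_EXPOSURE)) / sum(weights)


raw = {occ: raw_exposure(r) for occ, r in OCCUPATIONS.items()}

# Standardise across occupations (mean 0, SD 1).
values = list(raw.values())
mu, sigma = mean(values), pstdev(values)
aioe = {occ: (score - mu) / sigma for occ, score in raw.items()}

print({occ: round(z, 2) for occ, z in aioe.items()})
```

In this toy example the manual-dexterity-heavy occupation ends up with the lowest standardised score, mirroring the pattern the indices share.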

Pizzinelli et al. (2023) supplement the AIOE with O*NET data on the education and training required for jobs, and on the broader context in which abilities must be used. They argue that extended professional development can enhance workers’ ability to use AI, while physical and social constraints (such as harsh outdoor environments, situations where others must be motivated, empathised with, or convinced, or critical areas requiring accountability) limit the feasibility of deploying non-human actors, regardless of their abstract capabilities. In such cases, AI will more likely complement humans than substitute for them. Pizzinelli et al. (2023) thus distinguish occupations with higher and lower AI exposure, as well as those where AI shows ‘high’ or ‘low’ complementarity to incumbent workers.
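The resulting typology can be sketched as a classification on two dimensions. We assume a three-way grouping in which complementarity is only distinguished among high-exposure occupations; the cut-offs and scores below are hypothetical, and Pizzinelli et al.’s (2023) actual thresholds and scales may differ.

```python
def classify_exposure_complementarity(
    exposure: float,
    complementarity: float,
    exposure_cut: float = 0.0,  # hypothetical threshold
    complementarity_cut: float = 0.5,  # hypothetical threshold
) -> str:
    """Three-way grouping: low exposure, or high exposure split by complementarity."""
    if exposure <= exposure_cut:
        return "low exposure"
    if complementarity > complementarity_cut:
        return "high exposure, high complementarity"
    return "high exposure, low complementarity"


# Hypothetical (exposure, complementarity) scores.
examples = {
    "clerical job": (1.4, 0.2),
    "judicial job": (1.3, 0.9),
    "manual trade": (-1.1, 0.6),
}
for job, (exposure_score, comp_score) in examples.items():
    print(job, "->", classify_exposure_complementarity(exposure_score, comp_score))
```

Under this reading, ‘high exposure, low complementarity’ is the group where substitution risk is greatest.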

Gmyrek et al. (2023) bypass expert forecasts and ask AI software (GPT-4) to evaluate its own ability to perform specific tasks in ISCO occupation descriptions (e.g. ‘piece together components in production lines’ or ‘assign and grade homework’). They classify jobs by combining the mean and standard deviation of their tasks’ ‘automatability’ scores. Low means and low variability indicate that an occupation is unaffected, while high means and low variability suggest ‘automation potential’. Occupations with low means and high standard deviations (i.e. some tasks are automatable but most are not) show ‘augmentation potential’, where AI might handle routine, mundane tasks, freeing incumbent human workers for creative or specialised work. High means and high variability suggest unpredictable, idiosyncratic effects.
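The mean-and-variability logic can be sketched as follows, assuming task scores on a 0 to 1 scale. The cut-offs (0.5 for the mean, 0.2 for the standard deviation) and the use of the population standard deviation are illustrative assumptions, not the values used by Gmyrek et al. (2023).

```python
from statistics import mean, pstdev


def classify_occupation(task_scores, mean_cut=0.5, sd_cut=0.2):
    """Classify an occupation from its tasks' automatability scores (0 to 1).

    mean_cut and sd_cut are hypothetical thresholds for illustration.
    """
    m, sd = mean(task_scores), pstdev(task_scores)
    if m < mean_cut and sd < sd_cut:
        return "not affected"
    if m >= mean_cut and sd < sd_cut:
        return "automation potential"
    if m < mean_cut:
        return "augmentation potential"  # low mean, high variability
    return "unpredictable"  # high mean, high variability


print(classify_occupation([0.8, 0.9, 0.85]))  # automation potential
print(classify_occupation([0.1, 0.9, 0.1, 0.2]))  # augmentation potential
```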

These three indices overlap partially: jobs focused on information processing are consistently classed as highly exposed, while those requiring physical exertion and manual dexterity are classed as having low exposure. Using all three nonetheless minimises the risk that any correspondence between subjective and ‘objective’ exposure is an artefact of a single index. Appendix A reports results using two further objective indices, from Schendstok and Wertz (2024) and Webb (2020). We also matched occupations to measures of routine task-intensity [RTI], based on work by Acemoglu and Autor (2011) and Goos et al. (2014), and of vulnerability to offshoring, based on Blinder (2009). Both were sourced from Owen and Johnston (2017). This allows us to distinguish exposure to AI from employment threats stemming from the earlier automation of routine tasks and from work being moved out of the country altogether.
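For readers unfamiliar with RTI, a common formulation in this literature compares the log of routine task input with the logs of manual and abstract task inputs. We assume that formulation here; the exact variant in the data sourced from Owen and Johnston (2017) may differ, and the task scores below are hypothetical.

```python
import math


def routine_task_intensity(routine: float, manual: float, abstract: float) -> float:
    """RTI = ln(routine) - ln(manual) - ln(abstract); inputs must be positive."""
    if min(routine, manual, abstract) <= 0:
        raise ValueError("task inputs must be positive for the log formulation")
    return math.log(routine) - math.log(manual) - math.log(abstract)


# Hypothetical task scores: a routine-heavy job vs an abstract-heavy job.
routine_heavy = routine_task_intensity(routine=4.0, manual=1.0, abstract=1.0)
abstract_heavy = routine_task_intensity(routine=1.0, manual=1.0, abstract=4.0)
print(round(routine_heavy, 3), round(abstract_heavy, 3))  # 1.386 -1.386
```

Higher values indicate jobs more exposed to earlier, routine-biased automation, which is the risk the RTI control is meant to separate from AI exposure.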

We begin by illustrating respondents’ AI pessimism and optimism, before linking these evaluations with our ‘objective’ AI-exposure indices. Next, we identify demographic patterns in pessimism and optimism, before and after accounting for objective exposure. Finally, we examine whether self-identified AI winners and losers differ politically. We ask: ‘How much would you support or oppose a government doing each of the following’, where 1 = strongly oppose to 5 = strongly support (don’t knows excluded), with respect to: ‘Redistributing incomes from the better off to those who are less well off’, ‘Raising taxes to increase spending on free adult education and re-training’, and ‘Making it easier for immigrants to come to Britain to work’. These policies were selected because ‘tech-losers’ often prioritise compensatory social consumption spending and curbs on further competition from human labour, whereas ‘winners’ prefer social investment spending (e.g. Busemeyer and Tober, 2023; Thewissen and Rueda, 2019; Wu, 2023). 4 Our study allows us to assess whether this also holds for AI, despite the (anticipated) different composition of its ‘winners’ and ‘losers’.

Analyses include, as relevant, controls for demographics: age; gender; university graduate status; employment status (full time or part time); employment sector/type (private; public; charity; self-employed/employer; other); and equivalised household income quintile. When modelling political attitudes, we also control for left-right self-placement (an ordinal measure from 1 = left to 7 = right, or ‘don’t know’), and party identification. All our models are presented stepwise, proceeding from bivariate associations.
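The stepwise structure of the political-attitude models (bivariate, then adding demographics, then left-right ideology and partisanship, then objective exposure) can be expressed as nested covariate lists. The variable names are hypothetical placeholders, and the sketch only builds Patsy-style formula strings; fitting them is left to whichever estimation software is used.

```python
# Hypothetical variable names standing in for the survey measures.
OUTCOME = "support_redistribution"
FOCAL = "C(ai_expectation)"  # winner / loser / no impact / don't know

DEMOGRAPHICS = ["age_group", "gender", "graduate", "full_time", "sector", "income_quintile"]
IDEOLOGY = ["left_right", "party_id"]
OBJECTIVE = ["exposure_felten", "exposure_pizzinelli", "exposure_gmyrek"]


def stepwise_formulas(outcome: str, focal: str) -> dict:
    """Return nested model formulas M1-M4, each adding one block of covariates."""
    blocks = [[], DEMOGRAPHICS, IDEOLOGY, OBJECTIVE]
    formulas, covariates = {}, [focal]
    for i, block in enumerate(blocks, start=1):
        covariates = covariates + block
        formulas[f"M{i}"] = f"{outcome} ~ " + " + ".join(covariates)
    return formulas


for name, formula in stepwise_formulas(OUTCOME, FOCAL).items():
    print(name, formula)
```

Keeping each block as a separate list makes it easy to see which covariates enter at each step, matching the stepwise presentation.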

Results

Figure 1 shows the percentages of respondents who believe that AI expansion will worsen, improve or have ‘no impact’ on their job prospects. Each row sums to 100, with gaps representing ‘don’t know’ responses. We compare these results with similar questions on the effects of immigration, general technological advances, more cheap imported products, carbon reduction efforts, and a recession (randomly ordered; see Figure 1 for exact wording).

Beliefs about how different economic-political trends might impact one’s own job prospects.

AI is widely seen as worsening job prospects: 24% expect it to harm their own prospects, more than for general workplace technological advances (17%), or for the immigration (19%) and cheap imports (11%) on which much ‘grievance politics’ research focuses. Only a recession elicits more concern. Just 10% of British workers believe that AI’s expansion in their area of work will improve their job prospects, fewer than for general technological advances (16%). Nearly half (47%) predict that AI will have ‘no impact’, though this is still a smaller share than for any other trend except a recession. ‘Don’t know’ rates are similar (17–20%) across topics. This indicates widespread awareness of AI, relatively high overall subjective personal occupational exposure (c.1-in-3), and net AI pessimism, given that subjective AI ‘losers’ outnumber ‘winners’ by roughly 2.5:1.
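The headline figures can be checked with simple arithmetic on the rounded percentages reported above. Because the inputs are rounded, the loser-to-winner ratio comes out at 2.4 rather than 2.5, a gap consistent with rounding; the ‘don’t know’ residual assumes each row sums to 100.

```python
# Rounded shares (%) for the AI item, as reported in the text.
worsen, improve, no_impact = 24, 10, 47

exposed = worsen + improve  # share expecting any personal impact
ratio = worsen / improve  # subjective losers per winner
dont_know = 100 - (worsen + improve + no_impact)  # residual 'don't know' share

print(exposed, round(ratio, 1), dont_know)  # 34 2.4 19
```

The residual of 19% sits within the 17–20% range of ‘don’t know’ answers reported across topics.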

Distribution of subjective exposure to AI by objective occupational exposure.

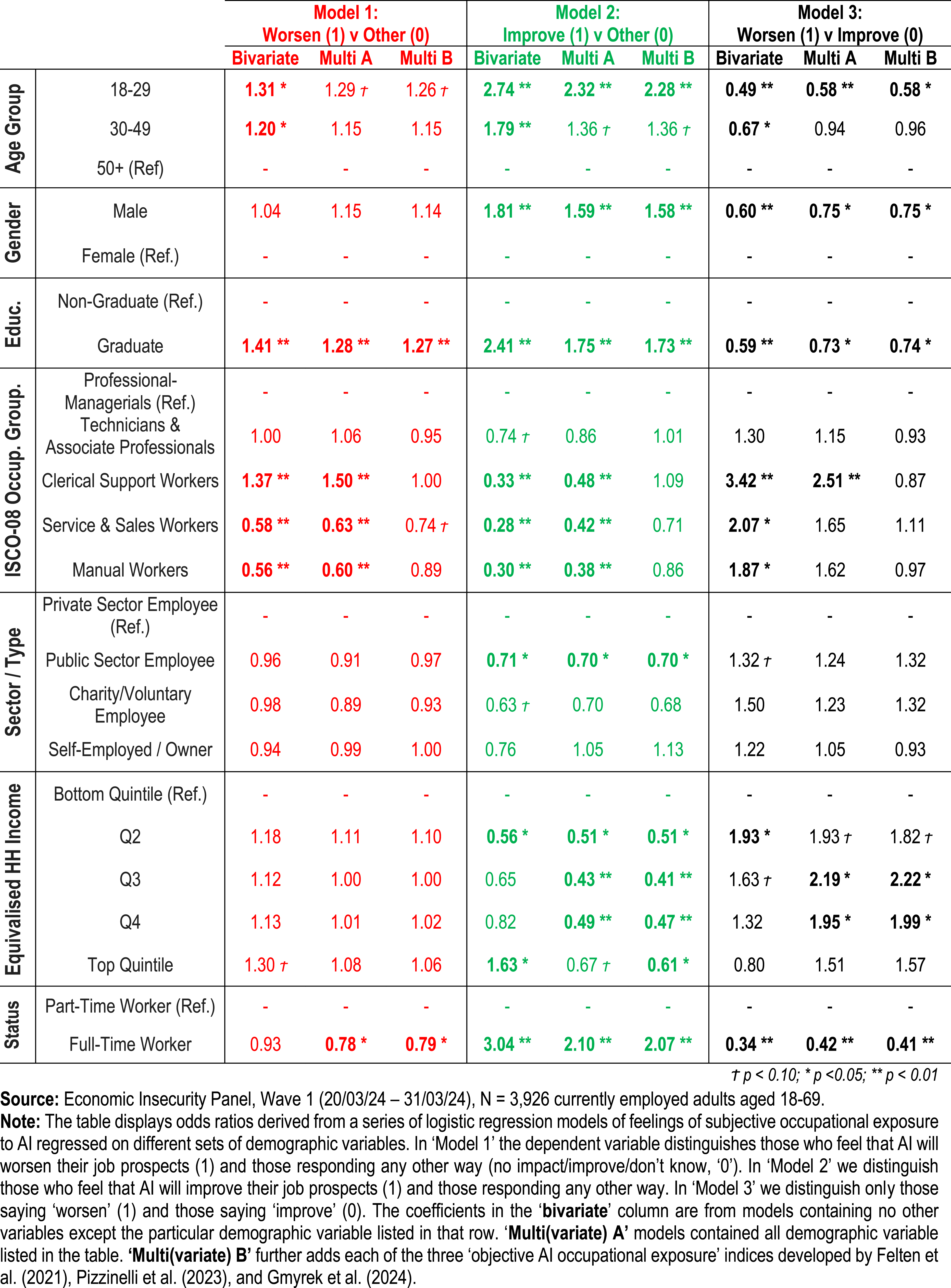

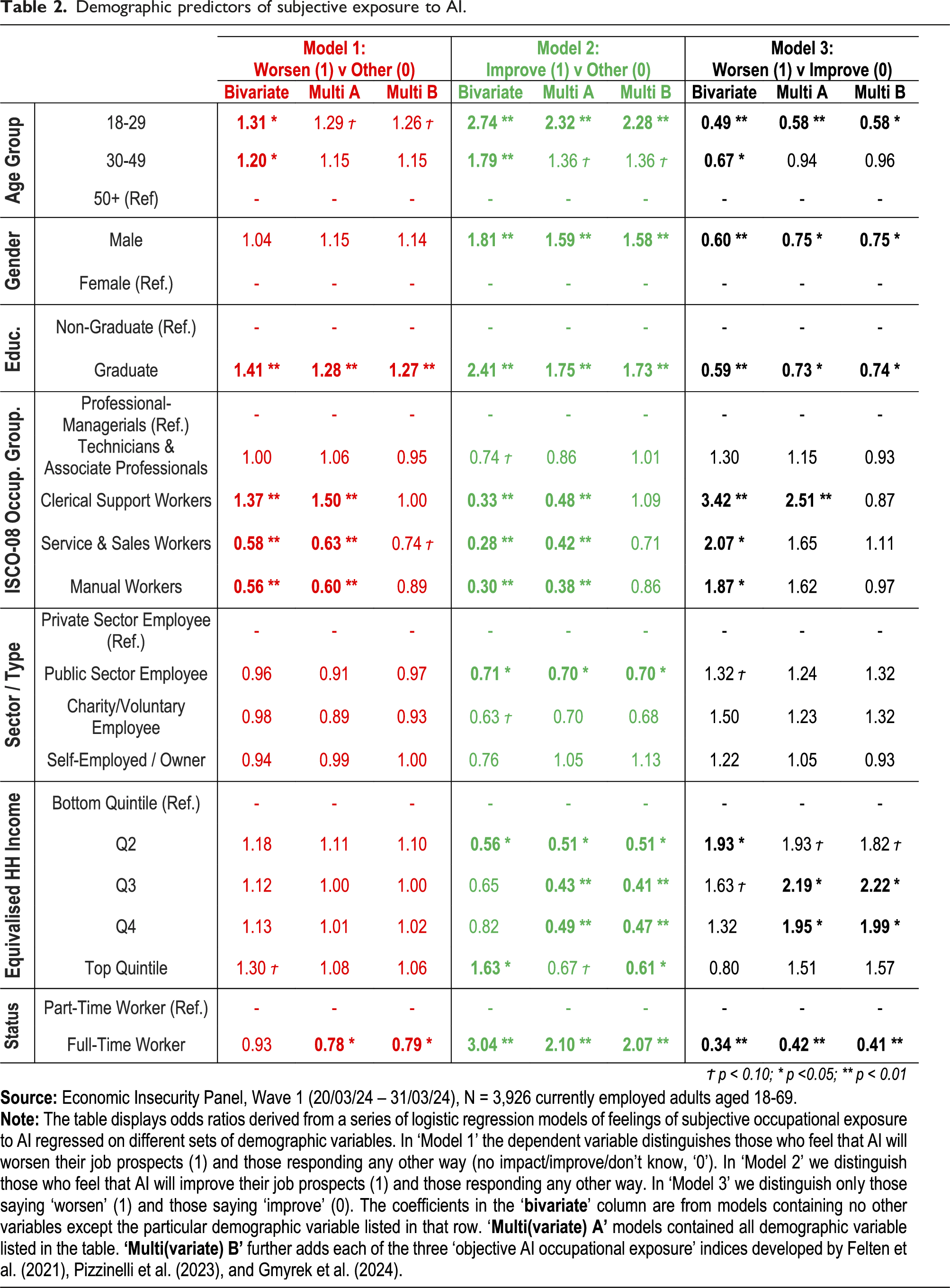

Demographic predictors of subjective exposure to AI.

Felten et al.’s AIOE index is robustly associated with both AI optimism and pessimism but cannot consistently distinguish between the two when they are compared directly. Pizzinelli et al.’s adjustment helps here. Where AI shows ‘low complementarity’ to labour (i.e. a greater risk of substitution), workers are more likely to say AI will worsen rather than improve their job prospects, controlling for demographics, although this difference does not hold once RTI and offshoring proxies for earlier economic risks are also included. Gmyrek et al.’s classification best distinguishes AI pessimists from optimists. Those in occupations with ‘automation’ potential are consistently more likely to believe that AI will worsen their job prospects, and less likely to believe it will improve them, than those classified as unaffected or as having AI ‘augmentation’ potential. That said, while objective exposure aligns with subjective awareness, it does so imperfectly. Substantial minorities in occupations currently considered relatively unaffected by AI nevertheless expect it to worsen (or, to a lesser extent, improve) their job prospects. This underlines the value of understanding subjective AI exposure independently of present occupation.
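Comparisons of this kind rest on cross-tabulating subjective expectations within each objective exposure category. A minimal standard-library sketch follows; the records are invented, and the category labels borrow the Gmyrek et al. groups purely for illustration. Survey weights, which a representative analysis would normally apply, are omitted.

```python
from collections import Counter, defaultdict

# Hypothetical (objective category, subjective expectation) pairs.
records = [
    ("automation potential", "loser"),
    ("automation potential", "loser"),
    ("automation potential", "no impact"),
    ("augmentation potential", "winner"),
    ("augmentation potential", "no impact"),
    ("not affected", "no impact"),
    ("not affected", "loser"),
    ("not affected", "no impact"),
    ("not affected", "no impact"),
]


def row_percentages(pairs):
    """Percentage of each subjective expectation within each objective category."""
    counts = defaultdict(Counter)
    for objective, subjective in pairs:
        counts[objective][subjective] += 1
    return {
        objective: {s: 100 * n / sum(c.values()) for s, n in c.items()}
        for objective, c in counts.items()
    }


for objective, shares in row_percentages(records).items():
    print(objective, {s: round(p, 1) for s, p in shares.items()})
```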

To assess which social groups see themselves as exposed to AI, positively or negatively, Table 2 presents the results of several logistic regression models relating AI risk perceptions to demographics (those in Table 1 plus a 5-category version of the major ISCO-08 occupation schema). 7 The outcomes are beliefs that AI will worsen (Model 1) or improve (Model 2) one’s job prospects, each versus any other response (i.e. AI ‘losers’ and ‘winners’, respectively), and ‘worsen’ versus ‘improve’ among those expecting some impact (Model 3). We present the bivariate associations, then models controlling for all other listed demographic variables (‘Multivariate A’), and then models that also include the three objective occupational exposure indices discussed previously (‘Multivariate B’). These last columns tell us, essentially, who is more positive or negative about AI’s personal impact than we might expect given their present occupational exposure.
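The three binary outcomes can be derived from the grouped responses as sketched below. We assume that ‘improve’ is coded 1 in Model 3 and that respondents outside the two exposed groups (including ‘don’t know’) are dropped from that model; both are our assumptions. Fitting the logits themselves is left to standard software.

```python
from typing import Dict, Optional


def model_outcomes(group: str) -> Dict[str, Optional[int]]:
    """Binary outcomes for the three logit models, given a respondent's group.

    Model 1: loser (1) vs any other response, including 'don't know' (0)
    Model 2: winner (1) vs any other response, including 'don't know' (0)
    Model 3: improve (1) vs worsen (0); all other respondents excluded (None)
    """
    if group == "winner":
        model_3 = 1
    elif group == "loser":
        model_3 = 0
    else:
        model_3 = None
    return {
        "model_1_loser": int(group == "loser"),
        "model_2_winner": int(group == "winner"),
        "model_3_improve_vs_worsen": model_3,
    }


for g in ["loser", "winner", "no impact", "don't know"]:
    print(g, model_outcomes(g))
```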

Before controlling for objective exposure, we see evidence of both intra- and inter-group polarisation. Younger people (<30), graduates, and professional-managerial workers (relative to service, sales and manual workers) are over-represented among both subjective AI ‘winners’ and ‘losers’. By contrast, men are slightly more likely to perceive themselves as ‘winners’, and middle-income respondents and those in the public sector are less likely to do so, but gender, income and sector do not strongly predict subjective ‘loser’ status. The only group consistently more likely to see themselves as ‘losers’ and less likely to identify as ‘winners’ is clerical workers. Looking at subjective winner/loser status conditional on any subjective exposure (Model 3), the young, men, graduates, professional-managerial workers and low-income workers are more likely to say AI will ‘improve’ rather than ‘worsen’ their prospects. Comparing ‘Multivariate A’ and ‘B’, most previously significant relationships remain after controlling for objective occupational exposure; however, the effect of broad occupational group is nullified (understandably, given that both are based on present job). All told, these findings accord with research on objective AI exposure, which suggests that highly educated professionals and mid-ranking clerical workers are the most exposed (Felten et al., 2021; Gmyrek et al., 2023; Pizzinelli et al., 2023; Schendstok and Wertz, 2024; Webb, 2020). Using questions on subjective exposure to other ‘shocks’ (see Figure 1), Appendix D highlights how these findings depart from the literature on immigration and trade grievances, where working-class, low-income non-graduates usually feel most threatened (Dancygier and Walter, 2015; Steiner et al., 2024; Walter, 2017).

The political implications of AI depend as much on political supply (competition over AI, and the politicisation of any associated grievances) as on voter demand. However, we can gain insight into the potential for demand-side effects by examining whether subjective AI winners and losers hold distinct preferences. Figure 2 shows the association between expectations and support for government redistributing incomes, spending more on adult education and training, and liberalising immigration. Our goal is not to estimate the causal effects of AI exposure or to map its political correlates exhaustively. We simply describe the preferences of those who expect to be most affected on three policies that are tied to broader left-right and liberal-authoritarian values and have been used in previous studies of new technology’s political consequences (e.g. Busemeyer and Tober, 2023; Gallego et al., 2022a; Im, 2021; Thewissen and Rueda, 2019; Wu, 2023).


We report bivariate relationships (M1) and models controlling for demographics (M2), left-right ideology and partisanship (M3), and objective exposure (M4).

Subjective exposure to AI and selected political preferences.

In our bivariate models, AI winners and losers show no difference in demand for redistribution, but both ‘exposed’ groups are more supportive than those expecting ‘no impact’, although this difference falls just short of significance for AI winners (p = .066). We see considerably more polarisation on government investment in adult education and training and on immigration: here, subjective AI winners are more supportive than other groups, including AI losers. Introducing demographic controls shrinks these effects somewhat (although AI losers are also significantly more hostile to immigration than the unaffected after conditioning on education specifically), but the patterns remain. That they persist even after controlling for current ‘objective’ expert projections of potential occupational AI exposure highlights the value of interrogating ordinary citizens’ beliefs as these technologies become more widely known.

Collectively, our results show both similarities and contrasts with the existing literature on technological change. Prior studies have also shown that objective occupational exposure to technology (e.g. automation) is often linked to subjective awareness, and that ‘losers’ show more support for compensatory social insurance (e.g. redistribution) but less support for investment in education or for increased immigration (Busemeyer and Tober, 2023; Im, 2021; Thewissen and Rueda, 2019; Wu, 2023; but cf. Gallego et al., 2022a). Unlike these studies, however, ours also highlights the disproportionately high perceived AI exposure among the highly educated; the concentration of subjective ‘winners’ and ‘losers’ in the same social groups; and the fact that AI ‘winners’ are as supportive of redistribution as AI ‘losers’.

Conclusions

Artificial Intelligence is advancing rapidly, with potential political impacts comparable to previous economic transformations. This paper contributes to understanding this emerging technological shock by analysing an original survey of adult workers in Great Britain. It yields several insights.

First, people perceive both AI-related job risks and job benefits, and more people anticipate some personal impact from AI than from other types of shock, such as increased immigration or cheaper imports. Crucially, we show that these risks and benefits are perceived by the same groups: younger people, graduates, and those in professional-managerial occupations. Apart from clerical workers, no group is uniquely over-represented among AI ‘losers’ while under-represented among AI ‘winners’.

Second, research could benefit from using subjective assessments of job threats and benefits. While existing AI occupational exposure measures correlate with perceived exposure, mismatches exist. Some exposed workers remain unaware, while others fear or welcome AI despite experts deeming them unaffected. Understanding these differences is instructive. Longitudinal research tracking rising familiarity with AI’s impacts would be useful, as would additional country cases.

Finally, self-identified AI ‘winners’ and ‘losers’ are politically distinguishable. While both show similar support for redistribution, ‘winners’ are considerably keener on social investment in education and on liberalising immigration. Whether these distinct preferences influence party alignment will depend on whether, and if so how, the risks of AI are politicised. Understanding that potential first requires a better understanding of subjective risks and benefits, as we provide here. Future research could explore support for AI regulation, as Gallego et al. (2022a) have for new technology more generally, or experimentally manipulate AI exposure, following Borwein et al. (2024). Benchmarking pocketbook concerns about AI against sociotropic considerations (both economic and sociopolitical) would clarify the key factors shaping public attitudes here.

Supplemental Material


Supplemental Material for Linking artificial intelligence job exposure to expectations: Understanding AI losers, winners, and their political preferences by Jane Green, Zack Grant, Geoffrey Evans and Gaetano Inglese in Journal of Research & Politics.

Footnotes

Acknowledgements

We are grateful for comments at the European Political Science Association (EPSA) annual conference, 2024, the Elections, Public Opinion and Parties (EPOP) annual conference, 2024, and the Lund University Economics and Politics workshop, 2024.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: Funding for this project was generously provided by the Joseph Rowntree Foundation (a charity registered in England, Wales, and Scotland under numbers 1184957 and SC049712, of The Homestead, 40 Water End, YORK, YO30 6WP) as part of the larger ongoing research project, ‘Economic Insecurity: Finding Answers and Solutions’ (2023–2025).

Ethical statement

Data Availability Statement

Replication materials (data set, syntax files, explanatory memo; Stata log files) have been provided alongside this submission. We are happy for this material to be made publicly accessible – at any preferred relevant public data repository – in the event of the acceptance of our article.


Carnegie Corporation of New York Grant

This publication was made possible (in part) by a grant from the Carnegie Corporation of New York. The statements made and views expressed are solely the responsibility of the author.

Notes

References
