Abstract

This study explores the analysis of public reactions to presidential debates using a largely untapped social media data source: YouTube comments. My findings reveal a striking consistency in topics across YouTube channels, pointing to a shared perspective among viewers that goes beyond ideological differences. These results question the assumption that online behavior mirrors traditional media preferences, suggesting that viewers may not apply ideological biases in their selection of YouTube content and may instead be influenced by accessibility or algorithmic recommendations. My findings also highlight the potential of leveraging social media for identifying significant voter issues and concerns. Lastly, I highlight the distinctive nature of YouTube data and the potential for researchers to use it, especially in light of the limited availability of Twitter data.

Introduction

Partisan media is on the rise; there is an increasing number of ideologically slanted outlets and political commentators gaining influence online (Xu et al., 2020). Lazarsfeld et al. (1948) and Stemple (1961) demonstrate selective exposure in the offline environment, meaning that participants are more likely to encounter information that affirms their existing beliefs than information that challenges their beliefs. Naturally, the emergence of online forums where people can both consume information and spread information via posts, tweets, and comments raises the possibility of selective exposure within such venues. Specifically, when commenting online, people may purposefully select videos or tweets they find appealing or agree with when deciding to comment on topics. For instance, conservatives might choose to comment on videos posted by Fox News accounts because they perceive the outlet to be more agreeable. Liberals might choose to watch CNN videos expecting more comments on topics that matter to them.

This study investigates the degree to which online users exhibit this variant of selective exposure. To do so, I examine a data source not typically utilized by social media research, YouTube comments, and focus on the first presidential debate of the 2020 United States election cycle. The goal is to determine whether there are noticeable variations in dialog among viewers on YouTube channels that host these debates. I consider questions such as whether discourse differs between viewers of the Fox News YouTube channel and viewers of CNN’s YouTube channel, and whether certain topics are more prevalent in discussions among viewers of one channel over another. For instance, is there a higher prevalence of user comments about the Black Lives Matter movement under CNN compared to Fox News?

The findings of this paper indicate that people do not disproportionately post comments on different topics on specific YouTube news channels. Across mainstream media channels in the dataset, topic modeling shows almost no variation in the proportion of topics found in comments. Similarly, the analysis reveals that only two channels deviate from the others in the sentiment score patterns of their comments. Further analysis of previous debates in 2012 and 2016 replicates and extends these findings.¹

Selective exposure in the digital world

The first presidential debate of the 2020 election was a pivotal event, unfolding against the backdrop of a global pandemic and a significant civil rights movement (Breuninger and Wilkie, 2020). Featuring candidates Donald Trump and Joe Biden, the debate was particularly tumultuous, marked by frequent interruptions and verbal exchanges that deviated from traditional debate decorum. In addition, Chris Wallace was unusually participatory in the debate for a moderator (Breuninger and Wilkie, 2020). Following the conclusion of the debate, numerous international media outlets voiced concerns over its implications for American democracy, labeling the event a “national humiliation” for the United States (Lahut, 2020). These provocative circumstances create ideal conditions in which we would expect to see increased levels of polarization in comments across different channels.

Leveraging YouTube data to analyze reactions to political content brings distinct advantages to contemporary research on selective exposure and public sentiment, especially in the context of US Presidential debates. In contrast to traditional post-event surveys where respondents rely on their potentially faulty recollection, YouTube comments capture spontaneous reactions, which not only occur in “real time” (i.e., when the respondent reacts to a specific part of the debate) but are also unprompted. Unlike survey methodologies constrained by predetermined questions, social media analysis allows researchers to uncover issues or aspects of the debates that viewers independently choose to highlight. This form of spontaneous feedback can surface concerns or points of interest that might not be captured through structured survey instruments. Additionally, evidence of comment content variations between ideologically driven channels (e.g., CNN and Fox News), compared to neutral outlets (C-SPAN), would suggest a distinction in their respective viewer bases. For instance, a higher frequency of discussions of Black Lives Matter on CNN might indicate that CNN viewers are more interested in that topic and are also more comfortable expressing themselves on a channel they perceive to be more receptive to their ideas.

Many scholars find evidence of selective exposure’s prevalence among online information seekers (An et al., 2013; Bastos et al., 2018; Cinelli et al., 2020; Ohme and Mothes, 2020; Weeks et al., 2017). Using Facebook data, An et al.’s (2013) work demonstrates that users mainly share news articles that align with their views and avoid those that conflict, with partisans being especially prone to this behavior. Additionally, Weeks et al. (2017) find that when strong partisans are incidentally exposed to opposing views, they are more likely to actively search for and share content that aligns with their political beliefs.

On the other hand, some researchers cast doubt on the prevalence of selective exposure. Feldman et al. (2013) find that methodological choices can significantly impact individuals’ choices in selective exposure experiments, and Fletcher et al. (2020) contend that the level of polarized news engagement is overestimated.² Indeed, numerous studies using varied methodologies and data sources reveal that while individuals often consume news from sources matching their political views, they also regularly engage with centrist and ideologically opposing outlets (Eady et al., 2019; Guess, 2021; Wittenberg, 2023; Yang et al., 2020). Moreover, Taneja et al. (2018) and Mangold et al. (2022) find that social media’s broad, overlapping networks of users facilitate exposure to diverse news sources and viewpoints beyond users’ close connections.

In short, current research is divided when it comes to evidence of online information seeking and dispersion. The methodologies used in such literature vary widely, including experiments, traditional surveys, and social media analyses (Cinelli et al., 2020; Eady et al., 2019; Guess, 2021; Weeks et al., 2017; Wittenberg, 2023). Multiple studies have discussed the role social media plays in political discourse and proposed new methodologies utilizing social media data (Haq et al., 2020; Németh, 2023; Rodriguez-Ibanez, 2021). Many such analyses tend to focus on the platform Twitter (now X), using Natural Language Processing (NLP) to examine political polarization on Twitter (Ali et al., 2022; Belcastro et al., 2020, 2022; Takikawa and Nagayoshi, 2017; Xia et al., 2021). However, the recent unavailability of Twitter data indicates academics must now find a new data source (Fung, 2023).

Research design

To begin, I perform an analysis of the metadata of the videos in question. This approach provides a foundational understanding and adds context to comments. Furthermore, it is important to understand the engagement patterns across different broadcasting platforms, such as which channels capture the most viewer engagement. The analysis next extends to examining sentiments expressed in the comments. This involves assessing tone intensity, gauging whether comments lean more positively or negatively. Additionally, I explore the emotional spectrum within these sentiments (anger, fear, and similar emotions). The final step involves exploratory topic modeling, where I employ the Latent Dirichlet Allocation (LDA) model to discern the variation in topics across different channels (Blei et al., 2003).

While the analysis of the metadata is primarily descriptive, two hypotheses guide the subsequent content analyses grounded in recent work arguing selective exposure is an overestimated phenomenon (Eady et al., 2019; Fletcher et al., 2020; Guess, 2021; Wittenberg, 2023; Yang et al., 2020). The first hypothesis expects minimal variation in sentiment across different channels; the second hypothesis predicts little to no variation in the distribution of topics across channels with differing ideological orientations. That is, viewers tuning into the debate via Fox News’ YouTube channel do not engage in conversations on distinct topics from those who watch on CNN’s platform.

The dataset for this study includes comments extracted from six YouTube videos showing the initial 2020 presidential debate. My selection criteria are that videos have high viewership, include the debate in its entirety, and come from mainstream outlets. The second criterion avoids selection bias that might arise from videos featuring specific moments casting the candidates in a particularly favorable or unfavorable light. I establish these criteria to capture as many mainstream audience opinions as possible.

2020 Presidential debate YouTube videos metadata.

C-SPAN emerges as the platform with the highest level of viewer and comment engagement, followed closely by Fox News. CNN’s metadata presents an intriguing contrast: despite having the fewest views among the channels analyzed, it contains a substantial number of comments, about 25,000 in total. This suggests that while CNN might not have attracted the largest viewing audience, its viewers are especially expressive. CBS, on the other hand, records nearly 5.5 million views but only a modest 6,000 comments, hinting at a less engaged audience in comparison to CNN.
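One way to make the engagement comparison above concrete is to normalize comments by views. The sketch below is only an illustration, not part of the original analysis: the function name `comments_per_thousand_views` is hypothetical, and the only figures used are the approximate CBS numbers quoted in the text.

```python
def comments_per_thousand_views(comments: int, views: int) -> float:
    """Return how many comments a video received per 1,000 views."""
    if views <= 0:
        raise ValueError("views must be positive")
    return 1000 * comments / views


# Approximate CBS figures quoted in the text: ~5.5 million views, ~6,000 comments.
cbs_rate = comments_per_thousand_views(6_000, 5_500_000)
print(f"CBS: {cbs_rate:.2f} comments per 1,000 views")  # prints "CBS: 1.09 comments per 1,000 views"
```

Applying the same ratio to each channel's row of the metadata table would put channels with very different audience sizes on a common scale.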

Sentiment analysis

To establish whether variation exists among commenters of different YouTube channels, I perform sentiment analysis. First, I employ the VADER (Valence Aware Dictionary and sEntiment Reasoner) lexicon, a dictionary and rule-based tool specifically crafted for social media text sentiment analysis³ (Jain et al., 2023). VADER has previously captured public sentiment for tweets pertaining to the 2020 US presidential election (Ali et al., 2022; Nugroho, 2021). Nugroho (2021) shows VADER to be effective in predicting actual results of the 2020 election. Unlike other lexicons, VADER is well-suited for texts like social media comments, as it is fine-tuned for sentiments expressed in short-form content (Fontes et al., 2023). Pai et al. (2022) analyze Twitter data with VADER and show the lexicon is more efficient than traditional algorithms. Thus, while VADER is a simple solution for performing sentiment analysis on social media posts, in the case of YouTube and the 2020 election, it is an effective one.
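To illustrate how a VADER score becomes a comment-level label, the sketch below maps a compound score (a value between -1 and 1) to positive, neutral, or negative and tallies the labels per channel. It rests on assumptions: the ±0.05 cutoff is the convention suggested in VADER's own documentation, and the study's exact cutoff is not stated here. In practice the compound score would typically come from the `vaderSentiment` package (`SentimentIntensityAnalyzer().polarity_scores(text)["compound"]`). The channel names and scores below are hypothetical, not drawn from the dataset.

```python
from collections import Counter


def label_from_compound(compound: float, threshold: float = 0.05) -> str:
    """Map a compound score in [-1, 1] to a sentiment label (assumed cutoff)."""
    if compound >= threshold:
        return "positive"
    if compound <= -threshold:
        return "negative"
    return "neutral"


def count_labels(scored_comments):
    """Tally sentiment labels per channel from (channel, compound) pairs."""
    counts = {}
    for channel, compound in scored_comments:
        counts.setdefault(channel, Counter())[label_from_compound(compound)] += 1
    return counts


# Hypothetical compound scores, for illustration only.
example = [
    ("Channel A", 0.62),
    ("Channel A", -0.40),
    ("Channel A", 0.01),
    ("Channel B", -0.75),
    ("Channel B", 0.05),
]
counts = count_labels(example)
print(counts["Channel A"])
```

Grouping the same tallies by posting date rather than by channel alone would give the kind of per-day counts that a time-series figure plots.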

Figure 1 presents the VADER sentiment analysis outcomes across channels during the 7 days surrounding the debate. For context, recall that C-SPAN received an overwhelming 80,000 comments, in contrast to CBS News’s 6,000, which explains the significant difference seen along the y-axis. The primary focus is on identifying sentiment patterns across channels. Notably, for C-SPAN, CBS News, and NBC News, positive sentiments marginally surpass negative ones, with comments on the Wall Street Journal’s video having a higher prevalence of positive terminology. Fox News and CNN comments exhibit a higher use of negative language, which could reflect more pronounced partisan or ideological biases associated with these channels. I performed Levene’s test for homogeneity of variance to determine whether the variance in scores for Fox News and CNN differs significantly from that of the other channels; the results for positive (0.41), negative (0.48), and neutral (0.35) sentiment scores indicate that the differences in variance are not significant.

VADER sentiment analysis of comments across channels over time. The VADER sentiment analysis assigns positive, neutral, and negative scores to words within a text. In this case, each comment was assigned an overall compound score classifying it into one of those categories. Figure 1 shows a breakdown of the number of positive (solid line), neutral (dotted line), and negative (dashed line) comments across channels over a seven-day period following the debate.
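For readers unfamiliar with Levene's test, the following sketch computes its W statistic from scratch using only the standard library. It is a minimal illustration under stated assumptions, not the code behind the reported results: the helper name `levene_w` and the sample values are hypothetical, deviations are taken from each group's mean (the classic form of the test), and no p-value is computed.

```python
from statistics import mean


def levene_w(groups, center=mean):
    """Levene's W statistic for equality of variances across groups.

    Each group is a sequence of numbers; deviations are measured from
    `center(group)` (the group mean by default).
    """
    k = len(groups)
    if k < 2:
        raise ValueError("need at least two groups")
    n_total = sum(len(g) for g in groups)
    # Absolute deviation of every observation from its own group's center.
    z = [[abs(x - center(g)) for x in g] for g in groups]
    z_means = [mean(zi) for zi in z]
    z_grand = sum(sum(zi) for zi in z) / n_total
    between = sum(len(zi) * (zm - z_grand) ** 2 for zi, zm in zip(z, z_means))
    within = sum((v - zm) ** 2 for zi, zm in zip(z, z_means) for v in zi)
    if within == 0:
        raise ValueError("no within-group variation in the deviations")
    return (n_total - k) / (k - 1) * between / within


# Hypothetical scores for two channels, for illustration only.
w = levene_w([[1, 2, 3], [2, 4, 6]])
print(round(w, 3))  # 0.8
```

Under the null hypothesis of equal variances, W is compared against an F distribution with k - 1 and N - k degrees of freedom. A statistics library such as SciPy (`scipy.stats.levene`) performs the full test, although its default centers on the median rather than the mean.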

To delve deeper into the spectrum of emotional sentiment, I employ the NRC Word-Emotion Association Lexicon, which categorizes words into eight basic emotions (anger, fear, anticipation, trust, surprise, sadness, joy, and disgust) and two sentiments (positive and negative) (Mohammad and Turney, 2010). I analyze the text for its overall sentiment polarity and for the specific types of emotions that are being expressed within the text. The analysis with the NRC lexicon in Figure 2 reveals a notable degree of uniformity across channels. Remarkably, the distribution of emotions and sentiment across each channel has minimal variation.

NRC sentiment analysis by emotion. The NRC lexicon categorizes words with emotions (anger, fear, anticipation, trust, surprise, sadness, joy, and disgust) and sentiments (positive and negative). Figure 2 shows the NRC distribution of words in comments from each channel into emotions and sentiments, revealing a remarkable consistency.
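The lexicon-lookup step can be sketched as follows. This is an assumption-laden illustration rather than the study's pipeline: `TOY_LEXICON` is a made-up stand-in whose entries are not taken from the actual NRC lexicon, the tokenizer is a simple regular expression, and `emotion_proportions` is a hypothetical helper name.

```python
import re
from collections import Counter

# Toy stand-in for a word-emotion lexicon; NOT actual NRC entries.
TOY_LEXICON = {
    "angry": {"anger", "negative"},
    "afraid": {"fear", "negative"},
    "hope": {"anticipation", "joy", "positive"},
    "honest": {"trust", "positive"},
}


def emotion_proportions(comments, lexicon):
    """Share of lexicon matches falling into each emotion or sentiment label."""
    counts = Counter()
    for comment in comments:
        for token in re.findall(r"[a-z']+", comment.lower()):
            # Words absent from the lexicon contribute nothing.
            counts.update(lexicon.get(token, ()))
    total = sum(counts.values())
    return {label: n / total for label, n in counts.items()} if total else {}


# Hypothetical comments, for illustration only.
props = emotion_proportions(["I hope he is honest", "So angry and afraid"], TOY_LEXICON)
print(sorted(props.items()))
```

Computing these proportions separately for each channel yields distributions that can be compared side by side.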

Lastly, I employ LDA topic models, which conceptualize topics as probability distributions over words (Blei et al., 2003). Within this framework, the LDA model posits that each word in a comment is drawn from one of a finite set of topics, with the choice of each word governed by its association with that topic. After careful consideration, I set the number of topics in the LDA model to eleven.⁴
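For reference, the generative assumptions of LDA can be written compactly. The notation below follows the general form in Blei et al. (2003) and is introduced here only for illustration: $\theta_d$ is the topic mixture of comment $d$, $z_{d,n}$ is the topic of its $n$-th word, $\beta_k$ is the word distribution of topic $k$, and $K = 11$ reflects the number of topics chosen above.

```latex
\theta_d \sim \mathrm{Dirichlet}(\alpha), \qquad
z_{d,n} \mid \theta_d \sim \mathrm{Categorical}(\theta_d), \qquad
w_{d,n} \mid z_{d,n} \sim \mathrm{Categorical}(\beta_{z_{d,n}})

p(w_{d,n} = v \mid \theta_d, \beta) = \sum_{k=1}^{K} \theta_{d,k}\, \beta_{k,v}, \qquad K = 11
```

The second line makes the mixture explicit: the probability of seeing word $v$ in a comment is a weighted sum over topics, with weights given by that comment's topic proportions.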

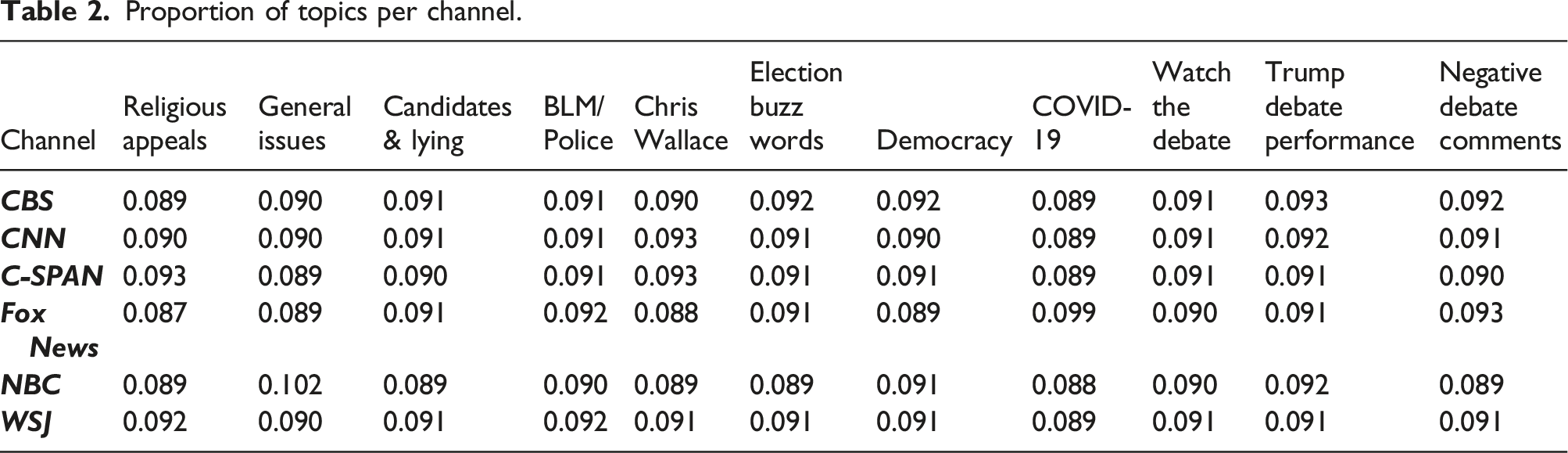

Proportion of topics per channel.

As with the previous sentiment analyses, the core finding here is the uniformity in all topic distributions across different media channels. This uniformity suggests that topics are discussed with similar frequency across channels as diverse as Fox News and CNN. An exploration into whether changing the number of topics (e.g., from eleven to twenty or five) would affect this uniformity yielded no significant changes, indicating a persistent consistency in how topics are proportionately discussed across channels.
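One simple way to quantify how similar two channels' topic distributions are is the total variation distance between their topic proportions. The sketch below is an illustration and not the comparison performed in this study: it assumes each comment has already been assigned a single dominant topic, and the function names and example assignments are hypothetical.

```python
from collections import Counter


def topic_proportions(topic_ids, n_topics):
    """Fraction of comments whose dominant topic is 0, 1, ..., n_topics - 1."""
    if not topic_ids:
        raise ValueError("topic_ids must not be empty")
    counts = Counter(topic_ids)
    return [counts.get(k, 0) / len(topic_ids) for k in range(n_topics)]


def total_variation(p, q):
    """Total variation distance: 0 for identical distributions, 1 for disjoint ones."""
    return 0.5 * sum(abs(a - b) for a, b in zip(p, q))


# Hypothetical dominant-topic assignments with three topics, for illustration only.
channel_a = [0, 0, 1, 2]
channel_b = [0, 1, 1, 2]
p = topic_proportions(channel_a, 3)  # [0.5, 0.25, 0.25]
q = topic_proportions(channel_b, 3)  # [0.25, 0.5, 0.25]
print(total_variation(p, q))  # 0.25
```

A distance near zero for every pair of channels would correspond to the uniformity described above.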

Proportion of topics for CNN & Fox News.

Discussion

Utilizing the VADER lexicon to analyze sentiment in YouTube comments, I find that the overall sentiment trajectory is similar across most channels. While Fox News and CNN comments contain more negative language than other channels, Levene’s test for homogeneity of variance shows this distinction is not significant. The NRC analysis similarly shows no distinct variation in sentiment or emotions across the channels. Moreover, the investigation into topic distribution via LDA modeling demonstrates a remarkable uniformity of topics across channels. This suggests that despite the diverse ideological orientations of two of the channels analyzed, the discourse within the comments maintains a similar thematic focus. This finding is particularly noteworthy, as it suggests a level of consensus or shared interest among viewers that spans different media outlets. Furthermore, this finding holds true for analyses performed on the 2012 and 2016 presidential debates.

These results suggest that when commenting online, people may simply opt for the first video that appears in their search results. Viewers may not deliberately choose to watch the debate on a platform like C-SPAN for its inherent qualities but rather select videos based on accessibility or algorithmic suggestions. These findings imply that concerns regarding “partisan echo chambers” and selective exposure may be slightly overstated. In this way, YouTube comments present a viable alternative to Twitter data, providing viewpoints potentially less subject to bias from selective exposure.

A substantial body of research outlines various NLP techniques for investigating public sentiment on Twitter (Ali et al., 2022; Belcastro et al., 2020, 2022; Takikawa and Nagayoshi, 2017; Xia et al., 2021). Specifically, many analyses rely on hashtags to identify and classify groups of interest or sentiment in texts (Belcastro et al., 2020, 2022; Ding et al., 2024). Others perform community detection and social network analysis to detect political polarization (Takikawa and Nagayoshi, 2017). However, while these methods are certainly intuitive for analyzing tweets, aspects of their methodological approach are not well-suited for YouTube comments.

On Twitter, hashtags allow users to transform specific terms into searchable links that facilitate the aggregation and discovery of tweets pertaining to similar topics. This feature allows users to easily access, contribute to, and engage with content centered around common discussions. While YouTube technically supports hashtags, they are far less prevalent there than on Twitter, where hashtags are a central feature. Additionally, Twitter enables users to post directly from their accounts onto the platform, whereas YouTube requires comments to be associated with specific videos, which makes them context dependent. The tagging of other users and the creation of social networks, while possible on YouTube, are also much less common than on Twitter. Consequently, due to platform-specific differences in user interaction and content structuring, methodologies for Twitter analysis cannot be applied unchanged to YouTube data.

Part of the value of this paper is tapping into a data source that researchers generally do not use. Future scholars should build on this approach; leveraging YouTube comments as a resource to identify the topics and issues that hold significance to voters presents a promising research avenue. Understanding the nature of online discourse, ranging from the candidates’ debate performances to the substantive issues raised, emerges as a valuable pursuit. Such an approach not only sheds light on the electorate’s priorities and concerns but also enriches the analytical depth of content analysis in political communication studies. Moreover, the future inclusion of supervised machine learning techniques in this context could significantly enhance the precision and relevance of content analysis. By applying these computational methods, researchers can more accurately categorize, quantify, and interpret the vast and varied data generated by online discussions.

Supplemental Material

Supplemental Material for From the comments section: Analyzing online public discourse on the 2020 presidential debates by John Eaton in Research & Politics

Footnotes

Acknowledgements

I would like to thank Curtis Bram, Vito D’Orazio, and Karl Ho for their feedback and encouragement. I would also like to thank the two anonymous reviewers and the editors of Research & Politics, who provided constructive comments that improved my manuscript.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Supplemental Material

Supplemental material for this article is available online

Notes

References
