Abstract

What do political scientists think about research ethics? What research practices do they find acceptable? Using a survey conducted with the American Political Science Association, we explore perceptions of ethics among 362 political scientists. We find that political scientists do not place much relative weight on ethics when evaluating research. We do, however, find that researchers view different modes of inquiry as having different potential harms. Furthermore, using a conjoint experiment, we find that factors like author affiliation, study location, method, and sample size shape evaluations of study ethicality. Our results contribute to the growing body of metascience by expanding understanding of ethics in political science research.

Political science scholars (McDermott and Hatemi, 2020), organizations, and journals have recently attempted to formalize the profession’s research ethics. In addition to having a stated set of ethical principles, the American Political Science Review now specifically prompts reviewers to assess whether submitted manuscripts adhere to ethical principles in their design and execution and asks those who submit manuscripts to affirm and defend the ethical nature of their work. Likewise, Comparative Political Studies states that “papers must transparently address any relevant ethical issues with regard to the research process, whether the research involves human subjects or not. Reviewers should feel free to raise questions about research ethics.” The proliferation of ethical guidelines illustrates a growing interest in ethics.

The political science ethics literature can be divided into three strands. One addresses normative topics or guidelines for best practices or how to consider potential harms (Bernstein et al., 2021; Humphreys, 2015; McDermott and Hatemi, 2020; Teele, 2021; Zittel et al., 2021). A second examines what subjects think about the ethicality of different research designs (Naurin and Öhberg, 2021; Desposato, 2018). A third focuses on the recommendations of individual researchers (Campbell and Bolet, 2022; Landgrave, 2020; Slough, 2019; Woliver, 2002; McClendon, 2012; Nathan and White, 2021).

While ethics research is growing, little empirical work has assessed political scientists’ beliefs about research ethics. This gap leaves the profession’s metascience underdeveloped (e.g., Bisbee et al., 2020; Wilke et al., 2021; Munger, 2020b, 2021, 2020a; Schooler, 2014). Some works have surveyed the research community on their views about research ethics (Bruton et al., 2020; Desposato, 2018; Naurin and Öhberg, 2021) but focused on the role of direct factors about research design (e.g., the presence of deception or participants’ consent) and not indirect factors (e.g., a researcher’s institutional affiliation) that could also influence perceptions of research ethics. Likewise, some research has been conducted to examine economists’ varied perceptions of research practices (Krawczyk, 2019), but again, the focus has been on the use of deception in experimental research rather than on more general ethical considerations. It remains unknown whether substantial levels of heterogeneity exist in political scientists’ views about the importance of ethics and how study characteristics may influence the perceived ethics of research.

There are three reasons to expand study of political scientists’ research ethics beliefs. First, researchers discuss ethical issues when evaluating manuscripts both informally and through the official review process. Understanding perceptions can shed light on how research is evaluated and, subsequently, how it is produced. Second, without understanding researchers’ perceptions, it is difficult to have a meaningful conversation about how current research practices might or should change. Third, and arguably most important, absent an understanding of norms, it is hard to evaluate the extent to which any potential projects of concern exist outside the values of the field. Ethical issues are rarely simple—many ethical issues exist in grey areas that depend on hard-to-quantify costs/benefits and normative issues (Kidder, 1995; Paul and Elder, 2003; Sen, 1999; Sidgwick, 2019; Singer, 2011). These grey areas and perceptions of ethics may influence the types of methods researchers use in their own work, which has significant implications for the range and depth of knowledge that the field can generate. Our goal is not to articulate bounds on what is right or wrong in research. Rather, it is to better understand what political scientists think are acceptable research practices. Though previous work exists (e.g., Desposato, 2018; Naurin and Öhberg, 2021), we make several important contributions about scholars’ perceptions of research ethics.

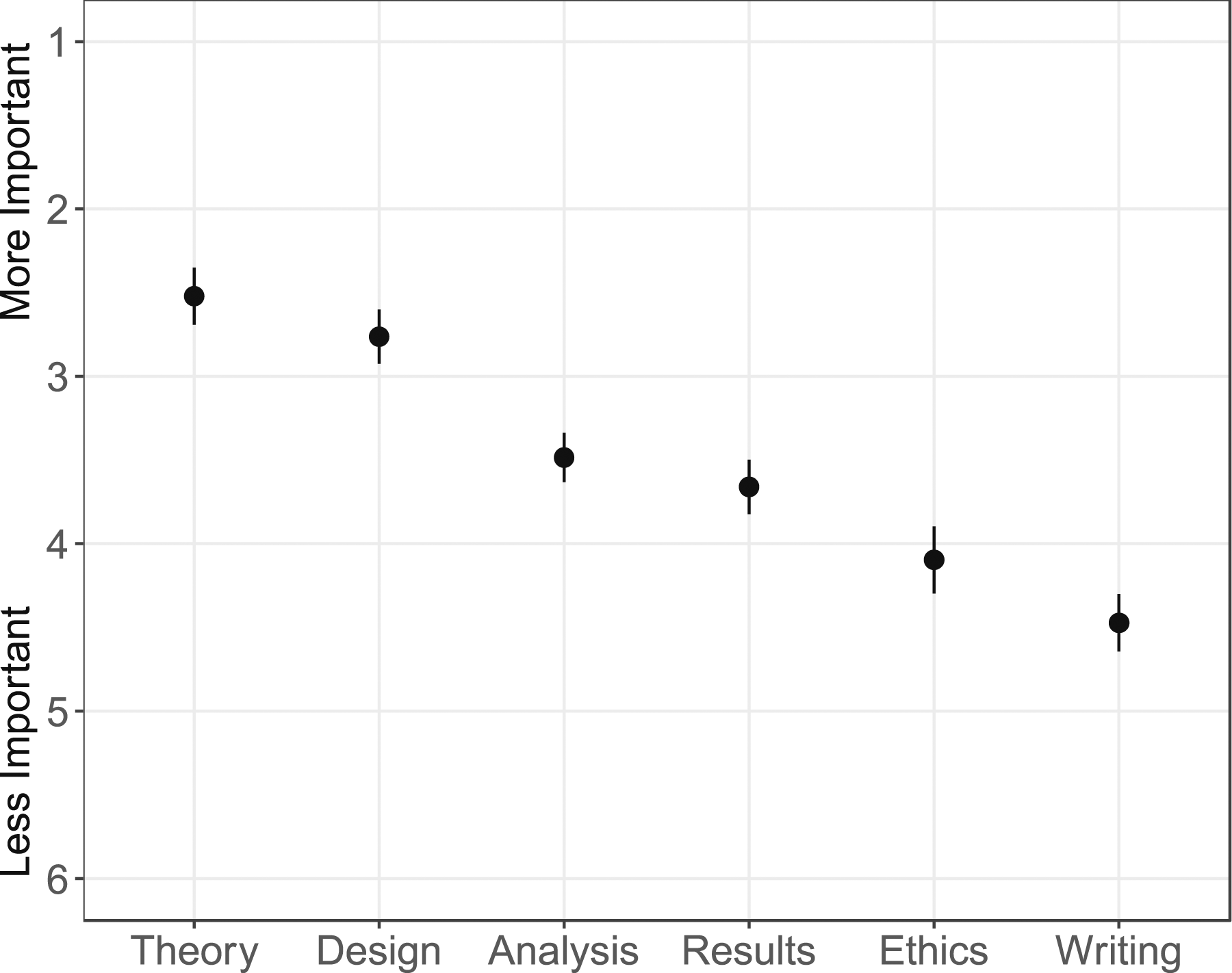

First, we show that researchers do not put much relative weight on ethics when evaluating research, possibly reflecting that most scholars presume that studies follow basic ethical guidelines unless they have a reason to suspect otherwise. The political scientists in our sample, who appear to be representative of the broader APSA membership, rank research ethics below theoretical contribution, research design, analysis, and empirical contribution, on average. This prioritization varies substantially both within and across subfields. Second, we demonstrate that researchers view different modes of inquiry as having different potential harms. Of chief concern are field and lab experiments, which directly manipulate real-world institutions or behaviors. Not all experimental approaches received similar scrutiny. Respondents expressed greater concern about interviews and qualitative work in terms of potential harm to research subjects than audit or survey experiments. Third, we show that several factors not clearly directly related to ethics are associated with perceptions of ethics. Political scientists are more likely to view a study as unethical depending on author affiliation, study location, and the sample size. In contrast, the rank of the scholar and the rank of the publication outlet are not clearly associated with researchers’ considerations. These results suggest that researchers sometimes make inferences about study ethicality based on indirect factors such as author affiliation or based on the mode of study itself.

Taken together, our results illuminate what political scientists believe about research ethics and heterogeneity in those opinions. Our work adds to a growing body of metascience (Ioannidis, 2005; Bisbee et al., 2020; Schooler, 2014; Wilke et al., 2021; Munger, 2020a, 2020b, 2021).

Data

We conducted a survey in collaboration with the American Political Science Association (APSA) in March 2020. A random sample of 3000 (out of 10,504 total) current APSA members were invited to complete our module. A total of 362 respondents consented and completed our module (12.5% response rate). The sample is varied along lines of rank and subfield. Respondents were not compensated for their participation. See the Appendix for more information about the sample, the wording of the survey questions, and a discussion of how our design adheres to ethical guidelines. We included several questions to capture perceptions of research ethics and then presented respondents with a conjoint experiment.

Scholars’ perceptions of research ethics

Respondents ranked the following factors based on how much weight they give them when evaluating research: ethics, quality of the writing, quality of the research design, quality of the analysis, empirical contribution (“results”), and theoretical contribution. Results are shown in Figure 1 in the descending order of mean importance; a score of 1 indicates respondents ranked this item as the most important when evaluating research; a score of 6 indicates respondents ranked this item as the least important. On average, theoretical contribution was ranked the highest (2.5) and the quality of research design was a close second (2.8). Research ethics was one of the least important factors, being ranked only 4.1 on average.

Figure 1. Political scientists rank ethics 5th out of six characteristics in evaluations of study quality. Note: Plot shows average ranking on a scale of 1–6 for how much weight each item is given for evaluating research (1=most weight, 6=least weight). Vertical lines represent 95% confidence intervals. Takeaway: Political scientists report that ethical considerations rank below theory, design, analysis, and results when it comes to evaluating studies’ quality.
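To make the quantities plotted in Figure 1 concrete, the following is a minimal sketch of how a mean rank and a normal-approximation 95% confidence interval could be computed for each item. The rankings are hypothetical, invented purely for illustration, and the function name `mean_rank_ci` is an assumption; the article does not state which interval method was used.

```python
import math
import statistics


def mean_rank_ci(ranks, z=1.96):
    """Return (mean, lower, upper) using a normal-approximation 95% CI."""
    n = len(ranks)
    mean = statistics.fmean(ranks)
    # Standard error of the mean from the sample standard deviation.
    se = statistics.stdev(ranks) / math.sqrt(n)
    return mean, mean - z * se, mean + z * se


# Hypothetical rankings from eight respondents (1 = most weight, 6 = least weight).
hypothetical_ranks = {
    "theoretical contribution": [1, 2, 3, 2, 1, 4, 3, 2],
    "research ethics": [5, 4, 6, 3, 5, 4, 2, 5],
}

summary = {item: mean_rank_ci(r) for item, r in hypothetical_ranks.items()}

# Print items from most to least important (lowest mean rank first).
for item, (mean, lower, upper) in sorted(summary.items(), key=lambda kv: kv[1][0]):
    print(f"{item}: mean rank {mean:.2f} (95% CI {lower:.2f} to {upper:.2f})")
```

A lower mean rank indicates that an item receives more weight, so items are sorted in ascending order of the mean to mirror the ordering described for Figure 1.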

These rankings show that ethics is rated relatively unimportant, on average, compared to other characteristics. There are a few different ways to interpret this finding. First, ethics may rank low because much research does not have obvious, substantial ethical implications. In descriptive survey-based research, for example, risks to subjects are so minimal that scholars do not think to focus on ethical considerations over other research design considerations when evaluating a study. Indeed, blatantly unethical studies are typically filtered out even before the peer review process, by Institutional Review Boards or colleagues, for example. Research ethics might not be considered important relative to these other characteristics precisely because researchers are seldom exposed to clear violations of ethical standards. Most studies may be assumed to meet some minimal ethical “bar.” That is, ethical considerations may function in a lexically structured way; they may not be the initial or primary criteria for evaluating research but are nonetheless important, especially if there are glaring issues.

On the other hand, these patterns have implications for how ethics discussions may manifest in evaluative practices. If scholars assume, even correctly, that most research meets a basic ethical threshold, this may inadvertently influence decisions about how to maintain, develop, or enforce high ethical standards in the field. It may also lead graduate students to infer that ethical concerns are secondary. This is not to say that ethical considerations need always be of primary concern for all types of research, but it is important to have a reflection of the current collective perspective about how ethics stacks up against other concerns. If ethics is not a primary consideration when evaluating a study, then it is difficult to enforce consistent ethical guidelines for research at the discipline level. This may be especially true if there is significant heterogeneity in opinion. As shown in our Appendices (e.g., Figure A3), a low priority is placed on ethics on average, but the heterogeneity is large. There is substantial disagreement about the relative importance of ethics as an evaluation tool, even among those who share the same subfield.

The survey included three additional ranking questions. Respondents were asked to rank seven types of common research methodologies “based on the extent to which they potentially create harmful outcomes” for (1) research participants/subjects, (2) society, and (3) the scientific community. Figure 2 indicates that political scientists view different modes of inquiry as having different potential for harm. Field experiments were viewed as the most harmful. Respondents expressed greater concern about interview research and ethnographic work in terms of harm to research subjects than either audit or survey experiments. Concern about ethics in interviewing, ethnography, and other qualitative work is deep and longstanding in the discipline (Gerring and Yesnowitz, 2006; MacLean, 2013). Given recent work on audit study ethics (see Nathan and White, 2021), the comparatively low concern about audit experiments is a notable finding.

Figure 2. Perceived potential harm of methods to research subjects, society, and scientific community. Note: Plot shows average ranking for how much each methodology harms research subjects (left-hand plot), society (center plot), or the scientific community (right-hand plot) (1 = most potential for harm, 7 = least potential for harm). Vertical lines represent 95% confidence intervals. Takeaway: Political scientists consistently rank field experiments (with the exception of audit studies) and lab experiments as having the most potential for harm and observational designs as having the least potential for harm for research subjects, society, and the broader scientific community.

More research should be conducted to understand what contributes to these perceptions. Are all field and lab experiments viewed as inherently problematic? Or is this a reflection of only certain current practices and executions of field and lab experiments? Unpacking this further could inform guidelines for using these modes of inquiry while lessening the perceived harm for research subjects, society, and the scientific community.

What study features shape ethical evaluations?

We conducted a discrete choice conjoint experiment (Hainmueller et al., 2014) to investigate what factors affect scholars’ ethical evaluations. Respondents were presented with two hypothetical political science studies five separate times. Respondents were told that the purpose of both studies was “to examine whether elected officials are biased against racial/ethnic minorities, compared to non-racial/ethnic minorities.” We picked this focus for the studies in our experiment since there is significant literature on racial discrimination by political elites (Costa, 2017) and we were curious if findings in this area shape ethical perceptions.

We were particularly interested in factors related to the study design and researchers because we view these as the natural next step in research about ethics. Prior work has focused on more direct signals of ethicality but not indirect factors that may be used as heuristics that shape ethical perceptions. Each study included seven attributes: (1) author affiliation, (2) author rank, (3) publication outlet, (4) conclusions of the study, (5) methodology, (6) study location, and (7) sample size. Respondents were presented with two randomly generated profiles and were asked, “If you had to choose, which of the two studies would you say is more ethical?” By fully randomizing the profile characteristics, we can uncover the relative impact of attribute levels on study selection. See the Appendix for the full list of randomized attribute values.
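The randomization described above can be sketched as follows. This is an illustration only: the seven attribute names come from the text, but the levels shown are partly hypothetical placeholders (the full list is in the Appendix, which is not reproduced here), and the helper names `draw_profile` and `draw_tasks` are assumptions rather than the authors' survey code.

```python
import random

# Attribute names follow the text. Levels marked "hypothetical" are placeholders;
# the others are levels mentioned in the results and may be incomplete.
ATTRIBUTES = {
    "author affiliation": [
        "U.S. top research university",
        "European top research university",
        "Non-top R1 university",
    ],
    "author rank": ["Assistant professor", "Full professor"],  # hypothetical
    "publication outlet": ["Top journal", "Lower-ranked journal"],  # hypothetical
    "conclusions": ["Bias found", "No bias found"],  # hypothetical
    "methodology": [
        "Observational",
        "Survey experiment",
        "Lab experiment",
        "Field experiment",
        "Audit study",
    ],
    "study location": ["United States", "China", "Sierra Leone", "Sweden"],
    "sample size": [200, 500, 5000],
}


def draw_profile(rng):
    """Draw one study profile with every attribute level chosen independently."""
    return {attr: rng.choice(levels) for attr, levels in ATTRIBUTES.items()}


def draw_tasks(rng, n_tasks=5):
    """Draw the pairs of profiles one respondent would see across the choice tasks."""
    return [(draw_profile(rng), draw_profile(rng)) for _ in range(n_tasks)]


rng = random.Random(2020)  # fixed seed so the illustration is reproducible
tasks = draw_tasks(rng)
for study_a, study_b in tasks:
    print(study_a["methodology"], "vs.", study_b["methodology"])
```

Because each level is drawn independently of the others, differences in selection rates across levels of one attribute are not confounded with the other attributes, which is what allows the attribute effects to be interpreted causally.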

We treat each profile viewed as the unit of analysis so that there are up to 10 observations for each respondent (two profiles per conjoint task). Of the 362 people who took our survey module, 241 began the conjoint experiment and we have 2330 choice outcomes to analyze. We estimate Average Marginal Component Effects (AMCEs) and use cluster robust standard errors (clustering at the individual level). Since we are interested in the causal effects of each study attribute on selection, instead of mere descriptive differences in preferences, we focus on AMCEs here rather than marginal means (though see the Appendix for that analysis).
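As a rough illustration of this estimation strategy, the sketch below computes the AMCE for a single two-level attribute as a difference in selection rates and derives a standard error clustered by respondent. It is not the authors' code: the data are simulated, the assumed true effect of 0.175 is chosen only to echo the magnitude reported for sample size, and the variance has no finite-sample correction, so statistical packages that apply one will report slightly different standard errors. The function name `amce_clustered` is hypothetical.

```python
import math
import random
from collections import defaultdict


def amce_clustered(rows):
    """Estimate a two-level AMCE with respondent-clustered standard errors.

    rows: list of (respondent_id, level, chosen), where level is 1 for the
    attribute level of interest and 0 for the baseline, and chosen is 0 or 1.
    """
    treated = [y for _, d, y in rows if d == 1]
    control = [y for _, d, y in rows if d == 0]
    n1, n0 = len(treated), len(control)
    mean1, mean0 = sum(treated) / n1, sum(control) / n0

    # Sum each respondent's contributions to the difference in means, so that
    # correlated choices by the same respondent are treated as one cluster.
    influence = defaultdict(float)
    for rid, d, y in rows:
        if d == 1:
            influence[rid] += (y - mean1) / n1
        else:
            influence[rid] -= (y - mean0) / n0

    se = math.sqrt(sum(v * v for v in influence.values()))
    return mean1 - mean0, se


# Simulated forced-choice data: 241 respondents, 5 tasks, 2 profiles per task.
rng = random.Random(0)
rows = []
for rid in range(241):
    for _ in range(5):
        d_a, d_b = rng.randint(0, 1), rng.randint(0, 1)
        p_a = 0.5 + 0.175 * (d_a - d_b)  # assumed true effect, illustration only
        y_a = 1 if rng.random() < p_a else 0
        rows.append((rid, d_a, y_a))
        rows.append((rid, d_b, 1 - y_a))  # exactly one profile is chosen per task

amce, se = amce_clustered(rows)
print(f"AMCE = {amce:.3f}, clustered SE = {se:.3f}")
```

For a single binary contrast, this difference in means equals the coefficient on the level indicator in a linear regression of the choice outcome, and summing residual contributions within respondent reproduces the uncorrected cluster-robust variance of that coefficient. Estimating all seven attributes jointly would require a regression with indicators for every non-baseline level.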

Figure 3 shows the AMCE of each study characteristic compared to the baseline level. Sample size has the largest effect on ethical perceptions. The probability of a study being selected as more ethical increases as its sample size increases. Increasing the sample size from 200 to 500 leads to a 7-point increase in the probability of a study being selected as more ethical (p = .02). Increasing that sample size to 5000 leads to a 17.5-point increase (p < .01). This potentially reflects the idea that bad inferences can be drawn from under-powered studies (e.g., Gelman et al., 2020). It also illustrates a problem faced by scholars when selecting sample size. A growing number of scholars are advocating that researchers reduce sample sizes in studies using deception to reduce the studies’ potential harm (e.g., Bischof et al., 2021). A larger sample size potentially increases costs and harms, but a smaller sample size increases the likelihood of a study having insufficient statistical power and, as a result, leading to mistaken inferences.
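The link between sample size and statistical power can be illustrated with a back-of-the-envelope calculation. The sketch below approximates the power of a two-sided test for a difference in proportions at the three sample sizes mentioned above. The two-arm design, the equal allocation, and the 50% versus 60% response rates are all hypothetical assumptions chosen for illustration; they do not describe the hypothetical studies shown to respondents.

```python
import math
from statistics import NormalDist


def approx_power(n_total, p_control=0.50, p_treated=0.60, alpha=0.05):
    """Approximate power of a two-sided z-test comparing two proportions.

    Assumes two equal-sized arms and ignores the negligible probability of
    rejecting in the wrong direction.
    """
    n_arm = n_total / 2
    se = math.sqrt(
        p_control * (1 - p_control) / n_arm + p_treated * (1 - p_treated) / n_arm
    )
    z_crit = NormalDist().inv_cdf(1 - alpha / 2)
    return NormalDist().cdf(abs(p_treated - p_control) / se - z_crit)


power_by_n = {n: approx_power(n) for n in (200, 500, 5000)}
for n, power in power_by_n.items():
    print(f"n = {n}: approximate power = {power:.2f}")
```

Under these assumed rates, power rises steeply with sample size, which is one way to see why respondents might treat a small sample as a sign that a study risks producing mistaken inferences.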

Figure 3. Effects of study attributes on selecting study as more ethical. Note: Plot shows estimated average marginal component treatment effects using cluster robust standard errors by respondent. Horizontal lines represent 95% confidence intervals. Takeaway: Study sample and method influence respondent perceptions of ethicality.

The next most influential attribute was what type of methodology was used. Here, we are primarily interested in how different modes of experiments are viewed compared to observational studies that do not have a researcher-randomized intervention. As seen in Figure 3’s bottom left-hand plot, the results follow the same patterns in Figure 2. Survey experiments are selected at about the same rate as observational studies, but lab experiments, field experiments, and audit/correspondence studies are generally viewed as potentially less ethical than observational studies. Lab experiments are 9.3 points less likely to be selected as the more potentially ethical study, and field experiments are almost 14 points less likely to be selected (p < .05). Audit studies are perceived as potentially less ethical by about 3.5 points than observational studies, but this difference is relatively small in magnitude and not quite statistically significant (p = .07).

Study location also appears to serve as a proxy for the extent to which the study itself is potentially ethical. Compared to studies in the United States, respondents are 7.9 points less likely to select studies conducted in China as the more ethical study (p = .015), 7.3 points less likely to select studies in Sierra Leone (p = .036), and 7.3 points more likely to select studies in Sweden (p = .05). Since this is an indirect feature, it is difficult to interpret conclusively, but it is possible that study location was interpreted as a proxy for the ethical standards that researchers had to comply with. The United States and most of the Western world have institutional review boards and other regulations regarding human research, but many countries in the global south either have no regulations to protect human research subjects or are unable to enforce them (Aguilar, 2015). Survey participants may believe that Sierra Leone is less likely to provide and/or enforce the regulatory framework necessary to protect human research subjects than Sweden.

When compared to studies by U.S.-based researchers in top research universities, respondents are almost 7 points less likely to select studies conducted by European authors in comparable institutions. The direction of the effect for non-top R1 universities is also negative (5.4 points less likely). Study conclusion, publication outlet, and author rank are not statistically significantly related to respondent choices.

Discussion

We hope these results will generate discussion among scholars about how attributes of a study should be considered when evaluating ethics. Given that the ranking of the program of the author and whether it is based in the U.S. is in our view at best a coarse way of judging the morality of a study’s design, our findings suggest that researchers sometimes rely on noisy signals to evaluate research ethics. This is not to say that these characteristics are always unrelated to ethics. For example, study location may be used as a proxy for potential risks to research subjects or local collaborators. The complexity of ethical challenges in non-democratic regimes such as China, for example, demonstrates that considerations are not homogeneous across all contexts. As for author affiliation, researchers may use institution type to infer training around research ethics or design. Other characteristics involving design-relevant attributes such as informed consent and subject recruitment are more reliably related to research ethics, but more work is needed to further assess whether and how characteristics such as location, author affiliation, and sample size should be used to judge ethicality.

Limitations

We stress that this manuscript is not meant to be exhaustive or the singular authority on research ethics in the political science discipline and that our study does have limitations that future work would do well to build upon. Of course, no single study can cover all potential aspects of research ethics. We acknowledge that our conjoint experiment tested the role of noisy proxies of research ethics. This was an intentional design feature given that previous research has tended to focus on more direct signals of ethicality (e.g., the presence or absence of informed consent, debriefing, etc.). We also acknowledge that the conjoint experiment asked for respondents’ choice between two studies, as opposed to rating the ethicality of a single study. This could have contributed to our results showing that respondents use sometimes irrelevant attributes to adjudicate between the two studies. We think that offering two studies to compare reveals important insights about judgments respondents were willing to make; otherwise, respondents would have answered at random if truly no attribute made a difference in their evaluations. Nonetheless, our results should be understood with this limitation in mind. It is possible that evaluating a single study or offering an explicit option to indicate that neither study is particularly unethical may have resulted in different outcomes and interpretations.

Given constraints, we had to be selective about the scope of this manuscript and our Appendices report additional analyses, but this should not be interpreted to mean that topics omitted are less worthy of being studied. Future work in this literature should focus on other aspects of research ethics, such as the research ethics of interpretative methods or data replication and transparency. More research is also needed to see if there are any interactive effects between direct and indirect signals. In addition, social scientists in other fields should note that our research was restricted to political scientists, given that our sampling frame and fielding come from the pool of political scientists who are members of APSA. Moreover, we acknowledge that our conjoint task was about fictional studies. While previous research has tended to show that conjoint studies often approximate real-world behavior (Horiuchi et al., 2022), we cannot be certain this is the case here. Finally, given our sampling constraints, we are unable to explore all potential moderators in our reported results. Future work should explore the many other potential sources of heterogeneity that may exist in our descriptive and experimental patterns.

Conclusion

The results of our survey of political scientists provide several new insights. Our findings show substantial variation in how political scientists perceive research ethics and that they sometimes use heuristics, such as sample size and study location, to evaluate whether a study is above some ethical bar. These findings have important implications for how to think about ethical guidelines and practices in the discipline. Inconsistent ethical guidelines may lead to inequitable outcomes for some researchers over others, as well as make it difficult to enforce a set of commonly agreed-upon practices or sufficiently prepare graduate students for addressing ethical issues in their future roles in academia or industry.

Future metascience work will be highly valuable in this space. Despite many prominent research organizations charging headlong into ethical reforms, in our view, still far too little is known about the capacity and preparation of scholars to adequately judge the ethicality of scientific research in their field and the consequences of prompting scholars to consider research ethics in their peer reviews. Metascience is vitally important, but, in many cases, woefully under-supported in scientific research (e.g., Bisbee et al., 2020; Wilke et al., 2021; Munger, 2020a, 2020b, 2021; Schooler, 2014). This is especially true when it comes to research ethics, where many vitally important questions remain. For example, we know surprisingly little about just how well political scientists are trained in evaluating research ethics. Similarly, we have essentially no evidence to our knowledge of whether existing ethical training (e.g., required human subjects training courses provided by university IRBs) improves scholars’ capacity to conduct ethical research and properly evaluate whether the research of other scholars is, in fact, ethical or not. We also have little empirical evidence on how ethical guidelines do or do not shape what, where, and how political scientists approach their work, though research considering scholar positionality is informative. Ethical concerns may influence the methodologies that scholars choose to employ in their own work. If some methods are valued or seen as more ethical than others, this shapes not only our research designs but also our research questions and findings themselves. Finally, we have only scratched the surface on the forces that shape scholars’ perceptions of research ethics. Future research would do well to dig deeper into an even broader set of forces that shape scholars’ evaluations of studies’ ethical nature. There is a great deal of room for future research to consider how political scientists perceive research ethics. 
That said, our results take an important step in better understanding the role and influence of research ethics in political science. Our results illuminate what an important community thinks of research ethics, point to several paths forward for future research, and could help inform the ongoing discussion that centers on ethics, experimentation, and best practice.

Supplemental Material

Supplemental Material for Is that ethical? An exploration of political scientists’ views on research ethics by Mia Costa, Charles Crabtree, John B. Holbein, and Michelangelo Landgrave in Research & Politics.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.
