Abstract

Politicians learning about public opinion and responding to their resulting perceptions is one key way in which responsive policy-making comes about. Despite the strong normative importance of politicians’ understanding of public opinion, empirical evidence on how politicians learn about these opinions in the first place is scant. Drawing on survey data collected from almost 900 incumbent politicians in five countries, this study presents unique descriptive evidence on which public opinion sources politicians deem most useful. The findings show that politicians deem direct citizen contact and information from traditional news media the most useful sources of public opinion information, while social media cues and polls are considered much less useful. These findings matter for substantive representation, and for citizens’ feeling of being represented.

Any conceptualization of democracy implies that what elected representatives decide, at least to some extent, matches what the people want (e.g. Dahl, 1998). Indeed, policy responsiveness is widely considered to be a cornerstone of an adequately functioning democracy (e.g. Stimson et al., 1995, Soroka and Wlezien, 2009). One mechanism potentially generating such policy responsiveness is politicians learning about the preferences of the public and then following up on those perceptions, and the other mechanism is politicians following their own opinion (see, famously, Miller and Stokes, 1963). But how do politicians learn about public opinion? What sources do they favour to get a sense of what citizens want?

The short answer is: we do not really know. Although the bulk of the work on democratic representation supposes that politicians somehow sense public opinion, how that ‘sensing’ is accomplished remains elusive and hypothetical. Many scholars of representation content themselves with listing all kinds of possible sources of public opinion information without examining them empirically. See, for instance, Stimson and colleagues (1995: 562), who mention the importance of polls, newspapers, chats with voters, contact with lobbyists, etc., or Kingdon (1984: 149), who comes up with a similar list of sources. Yet empirical evidence about how politicians go about one of the crucial aspects of their job, namely forming an image of what the people want, is scant. This paper is an attempt to start filling that void.

Examining how politicians stay abreast of public opinion is not just relevant from a descriptive point of view; it also has important consequences. After all, which sources politicians (prefer to) consult has a bearing on their perceptions of public opinion and, probably, on the errors and biases in those perceptions (Pilet et al., 2023, Broockman and Skovron, 2018). Estimating public opinion is a tricky undertaking. Politicians cannot directly perceive ‘the’ public or ‘the’ electorate. Instead, they are unavoidably confronted with proxies: samples of public opinion signals that they then aggregate, weigh, and generalize to the general population, to their party voters, or to a particular group of people they aim to represent. The initial, inevitable sample bias must be recalibrated to arrive at a more correct overall estimate. And some of the sources politicians use are likely to provide more biased samples, that is, samples that require more recalibration, than others.

Take, for example, poll results. Such public opinion signals are already aggregated, and they are based on large samples. If the poll is well conducted, politicians need to do little extrapolation and recalibration (but see Holtz-Bacha and Strömbäck, 2012 for a discussion of problems with polls). Politicians also get a lot of public opinion signals via their social media feeds, but this sample of signals is much more skewed given that the feedback mostly comes from their supporters. Another channel that likely yields a very skewed sample of public opinion signals is the contact politicians have with ordinary citizens. Indeed, the literature on elite-citizen contact has provided extensive evidence that the citizens who contact politicians are generally higher educated and wealthier (Schlozman et al., 2012), more politically efficacious and more interested in politics (Aars and Strømsnes, 2007), and more conservative (Broockman and Skovron, 2018). Our point is that which sources of public opinion politicians prefer has a bearing on how they conceive of public opinion.

The channels of public opinion information politicians rely on may not only affect the accuracy and bias of their resulting perceptions; they may matter in other ways as well. The mere act of politicians informing themselves in a certain way about public opinion may have consequences for how citizens look at them. Popular dissatisfaction with politicians has reached all-time highs (Torcal, 2017), and one of the complaints citizens have about their representatives is that they are disconnected from the people, disregard their opinions, and are inaccessible (Clarke et al., 2018). This widely shared complaint implies that people have an opinion about how politicians ought to stay abreast of what they want; it demands interpersonal contact between politicians and their constituents. If we follow this logic, how politicians go about reading public opinion has consequences for how citizens look at them and whether they trust them. The sheer act of talking to ordinary people, in that sense, may be consequential.

For these reasons, this study engages in a systematic effort to empirically grasp how politicians go about estimating public opinion. We draw on survey evidence collected among almost 900 incumbent national and regional politicians in five countries ― Belgium, Switzerland, the Netherlands, Canada, and Germany. We present, for the first time, descriptive evidence about the sources of public opinion information politicians deem most useful. We find that politicians, when wanting to find out what citizens want, mostly prefer direct citizen contact and information from traditional news media.

Politicians’ sources of public opinion information

We know little about the information signals elected representatives use to make sense of what it is that people want. This is all the more surprising since there is evidence that politicians care a lot about knowing the public’s preferences and very frequently try to stay abreast of them (see e.g. Walgrave et al., 2022). But how do they do it then? What sources of public opinion do politicians value?

Kingdon (1968) pioneered with a survey study of U.S. election candidates, asking them how they try to predict future election outcomes, which is, of course, only indirectly related to people’s policy preferences. Kingdon finds that past election results, the public’s reaction when campaigning, talking to party people, and interacting with campaign volunteers all matter to some extent. Interestingly, what he calls the ‘warmth of reception’ on the campaign trail, that is, the reaction of rank-and-file voters to their campaign, was considered the number one predictor of their imminent electoral fate; a majority said they relied on it ‘without qualification’ (Kingdon, 1968: 91).

Through open interviews, Powlick (1995) studied the sources of public opinion specifically of foreign policymakers ― not politicians but career bureaucrats. He finds that news media and politicians are most frequently mentioned as sources by foreign policymakers, while unmediated opinion sources (polls, letters, phone calls, and direct contact) have some relevance as well. Interest groups, it appeared, mattered very little for foreign policymakers.

The most ambitious study about sources of public opinion information used by elites to date was authored by Susan Herbst (1998). In Reading Public Opinion she interviews political actors in the U.S. ― policy advisors, partisan activists, and journalists ― about what they consider public opinion to be, and what sources they use to assess it. She shows that mobilized group opinion (interest groups/lobbying) and mass media content matter a lot, according to the interviewees. Polls are rarely mentioned by Herbst’s interlocutors as a useful means of getting access to public opinion. Mass opinion (grasped through polling but also through letters from constituents, for instance) is not deemed very relevant either because citizens are generally thought of as uninformed and uninterested.

Another notable study is the work by Druckman and Jacobs (2006) who draw on a database of the private polling of the U.S. White House under President Richard Nixon. The study only focuses on private polling as a means to acquire public opinion information, and it cannot establish how important polling is compared to other public opinion sources.

All studies mentioned so far are American. In contrast, Petry (2007) surveyed Canadian officials (both elected politicians and civil servants) by presenting them with a closed battery of 12 ‘indicators’ of public opinion and asking them to rate the importance of each of them. Public consultations, election results, polls, and mass media were ticked most by Canadian officials as being (very) important. Lobbyists, protestors, and party activists were considered less important.

Apart from the handful of studies that directly tackled the matter of how politicians learn about public opinion, there is adjacent work that assessed the possible relevance of specific sources of public opinion. We can refer, for example, to the work on how intensely politicians consult the mass media (e.g. Van Aelst and Walgrave, 2016), how often they interact with interest groups, what they can learn about public opinion from those interactions (e.g. De Bruycker, 2016), and how often they engage in constituency work (e.g. André et al., 2014). Yet, this work does not directly tackle the matter of how relevant these activities and interactions are for politicians to assess public opinion.

When screening the above literature review, the first thing that strikes the eye is its brevity, and this lack of work translates into several weaknesses. To start with, not all studies focus on elected representatives; some instead tackle the public opinion sources non-elected actors rely on (Petry, Powlick, and Herbst). In addition, some studies deal with one specific source, like polls (Druckman and Jacobs), which does not allow for comparison with other sources. Still others focus on one policy domain, which makes generalization difficult (Powlick). Also, none of the studies were comparative, so none could establish a generic pattern travelling across systems. And, importantly, all of the studies date from before the social media age, so a possibly important source of current-day public opinion assessments is lacking. Finally, and not surprisingly, the findings in this small field of inquiry are contradictory, with some stating that interest groups matter (Herbst) and some that they do not (Petry), that mass media matter (Herbst, Powlick, and Petry) and others that they do not (Kingdon), that polls matter (Druckman and Jacobs) or not (Kingdon and Herbst), that interactions with ordinary people matter (Kingdon) or not (Powlick and Herbst), and so on. So, the question remains: How do politicians learn about public opinion?

Data and methods

Our evidence comes from a survey of incumbent national and regional politicians in five countries: Belgium, Canada, the Netherlands, Germany, and Switzerland. These countries, all Western democracies, present a fairly diverse sample of Western political systems, with majoritarian (Canada), very proportional (the Netherlands), moderately proportional (Belgium), mixed (Germany), and sui generis (Switzerland) systems. Given the dearth of empirical work on politicians’ usage of public opinion sources (and in particular the absence of comparative work on the matter), we do not have specific expectations about possible country differences. Yet, this country variation allows us to verify whether the findings on politicians’ preferred public opinion sources are robust across different political systems.

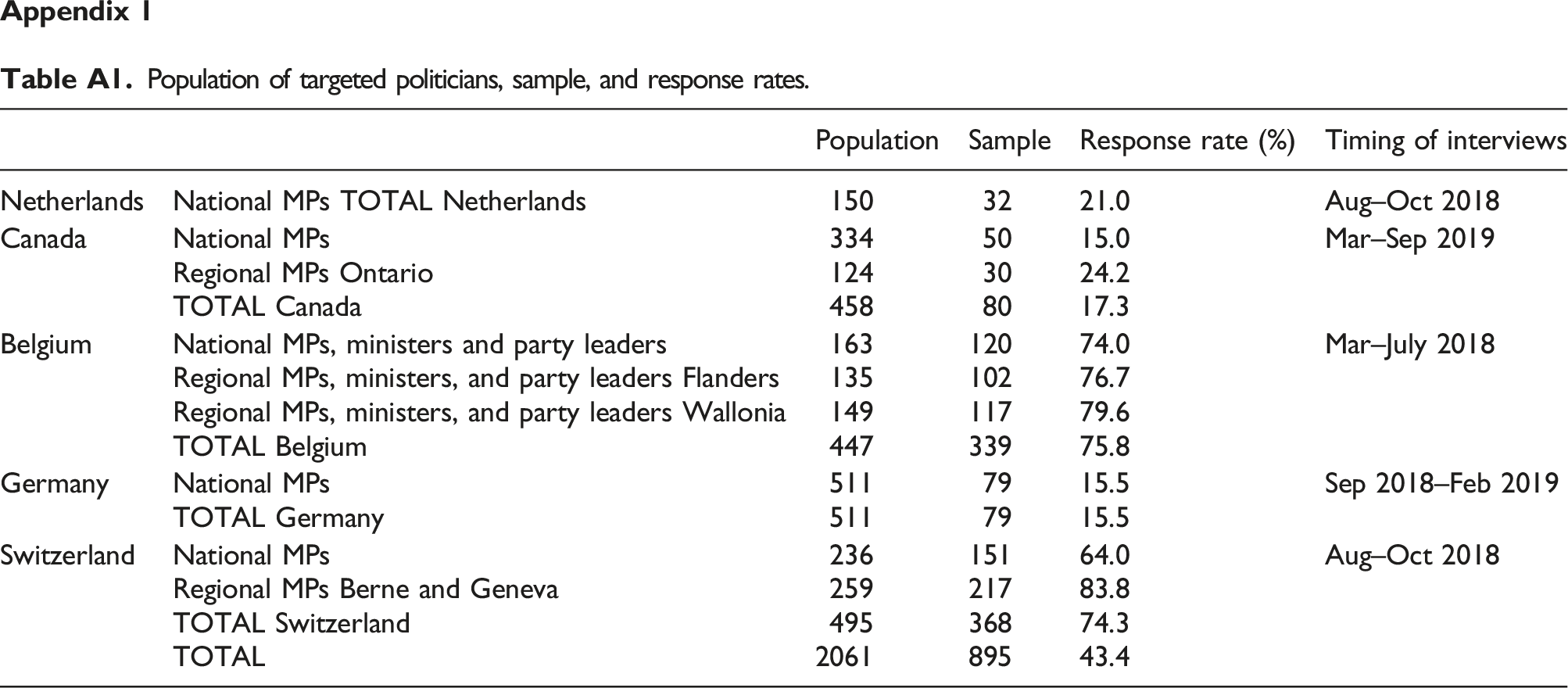

In 2018, a total of 898 politicians from these countries were surveyed. Response rates vary substantially from one country to another, with very high response rates in Belgium (76%) and Switzerland (74%), and lower rates in Germany (16%), Canada (17%), and the Netherlands (21%) (see Appendix 1). Note that even though the response rates vary, the sample of participating politicians appears to be representative of the full population of politicians in all countries (see Appendix 2). The surveys were conducted in situ: respondents completed a survey on a laptop brought by the interviewer; the interviewer did not observe the answers but was present to make sure representatives answered the questions themselves, and to answer clarification questions.

The question we asked was: ‘There are many sources of information that can be useful to inform yourself about what (kind of policy) the general public wants. Below we list several such potential sources. Can you rank these sources based on their usefulness to inform yourself about what the general public wants?’ Politicians then ranked eight possible sources of public opinion that were presented in random order: (1) contact with people I am close to (friends, family, acquaintances, …), (2) direct contact with citizens, (3) opinion polls by my own party, (4) opinion polls by other organizations, (5) messages on social media, (6) reading, listening or watching traditional media, also online, (7) information from social movements and interest groups, and (8) talking to journalists. Politicians who did not rank all sources are not included in the analyses (56 observations dropped). In addition, the question was not asked in the Swiss short version of the survey (67 observations dropped). So, in total, we have complete answers from 775 politicians. For these politicians, we additionally collected information about their gender, seniority, and position, and we asked them about their role conception (trustee vs delegate). Also, we included information about their party: party size (i.e. number of seats), whether it is in government, and its ideological position (left-right placement based on the Chapel Hill expert survey). These variables are introduced as independent variables to explore variation in source usefulness among politicians.
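The ranking task and the sample accounting described above can be sketched in a few lines of Python. This is a minimal illustration, not the authors' procedure: it assumes that a rank is reverse-coded into a usefulness score from 8 (ranked first) to 1 (ranked last), and that confidence intervals use a normal approximation; the paper states neither explicitly. The short source labels, the function names, and the three toy rankings are hypothetical.

```python
import math
import statistics

# Sample accounting as reported in the text.
SURVEYED = 898
INCOMPLETE_RANKINGS = 56   # politicians who did not rank all eight sources
SWISS_SHORT_VERSION = 67   # question not asked in the Swiss short survey
ANALYSED = SURVEYED - INCOMPLETE_RANKINGS - SWISS_SHORT_VERSION

# Shortened labels for the eight sources (labels are illustrative).
SOURCES = [
    "close contacts", "citizens", "own-party polls", "other polls",
    "social media", "traditional media", "movements and interest groups",
    "journalists",
]


def usefulness_scores(ranking):
    """Map a complete ranking (most useful first) to scores 8..1.

    ASSUMPTION: reverse-coded ranks; the paper does not spell out the coding.
    """
    if sorted(ranking) != sorted(SOURCES):
        raise ValueError("ranking must contain each source exactly once")
    return {src: len(SOURCES) - pos for pos, src in enumerate(ranking)}


def mean_with_ci(values, z=1.96):
    """Mean with a normal-approximation 95% interval (assumed method)."""
    mean = statistics.fmean(values)
    half_width = z * statistics.stdev(values) / math.sqrt(len(values))
    return mean, mean - half_width, mean + half_width


# Hypothetical rankings from three respondents (not survey data).
toy_rankings = [
    ["citizens", "traditional media", "close contacts",
     "movements and interest groups", "social media", "other polls",
     "own-party polls", "journalists"],
    ["traditional media", "citizens", "movements and interest groups",
     "close contacts", "other polls", "social media", "own-party polls",
     "journalists"],
    ["citizens", "close contacts", "traditional media", "social media",
     "movements and interest groups", "own-party polls", "other polls",
     "journalists"],
]

citizen_scores = [usefulness_scores(r)["citizens"] for r in toy_rankings]
print(ANALYSED)                      # politicians with complete answers
print(mean_with_ci(citizen_scores))  # (mean, lower bound, upper bound)
```

Under this coding, every respondent's scores sum to 36, so a higher mean for one source necessarily implies lower means elsewhere.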

Results

Average usefulness attributed by politicians to each of the public opinion sources (95% confidence intervals) (N = 775).

Overall, politicians feel that direct contact with citizens (6.34) is the most useful way to get informed about the public’s preferences. In a sense, it is surprising that politicians attribute most indicative power to one of the sources that is least aggregated, and that provides politicians with almost unavoidably skewed signals. Interestingly, this confirms Kingdon’s (1968) finding from almost half a century ago that interactions with ordinary citizens matter for how politicians see the public, but it contradicts other studies that discount the importance of contact with ordinary people (Herbst, 1998, Powlick, 1995). Moreover, the finding supports conclusions of related studies, for instance, on politicians’ ideas about the value of public engagement in policy-making, which show that politicians prefer (spontaneous) conversations with individual citizens to learn about their desires (e.g. Hendriks and Lees-Marshment, 2019). It also confirms findings from interviews with Belgian MPs, who seem to spend a lot of time talking to people: ‘They immerse themselves intensely in a rich social life and interact with ordinary citizens at various occasions’ (Walgrave et al., 2022: 83). It is likely that politicians value those informal meetings not only to learn whether people are in favour of or against a certain policy, but also to ‘feel the public mood’, to see how strongly people care about certain issues and what arguments they might have for those opinions.

Consulting traditional media (5.71) is considered by politicians as the second most useful way to stay abreast of the public’s preferences. This confirms previous theoretical work claiming that politicians learn about public opinion by following the news (Van Aelst and Walgrave, 2016) and it corroborates Herbst’s (1998) key finding that it is through the media that political actors learn about public opinion.

The third most useful source of public opinion, politicians say, is talking to people they are close to (5.06). Interestingly, none of the extant studies pointed to that option. Politicians seem to rely partly on a number of confidants to get a feel of what it is that the people want. Note that, here again, the extrapolation work that politicians need to engage in to generalize the signals they get from these intimates to a larger population is quite daunting. Maybe even more than the ordinary (unknown) people they talk to, politicians’ inner circle of family and friends is substantially skewed both in socio-economic and political terms (e.g. Bovens and Wille, 2017), and this doubtlessly translates into a similarly unrepresentative network.

Social movements and interest groups (4.96) are quite useful as well, politicians claim. This means that they appreciate the organized, mobilized public opinion that they are exposed to via organizations. This is in line with one of the key findings of Herbst (1998), yet it contradicts what Powlick (1995) found about U.S. foreign policy or what Petry (2007) found in Canada.

The last four sources were rated significantly less useful as public opinion sources. Remarkably, social media (3.90) scores rather low. Notwithstanding the ubiquitous presence of social media, and the fact that politicians use social media intensely to send their messages to the public (e.g. Jungherr, 2016), social media signals are not considered to be good indications of what citizens want. The very limited usefulness of polls from other organizations (3.76) and polls from the own party (3.70) is to some extent surprising given that polls offer aggregated public opinion signals that require little extrapolation. In part, the reason may be that polls are simply not available for many issues. Contact with journalists (2.56) brings up the rear. This goes against accounts claiming that politicians like talking to journalists as they learn about public opinion via these interactions (Van Aelst et al., 2010).
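The eight averages reported above can be checked for internal consistency with a short Python sketch. It assumes, as the text does not state explicitly, that usefulness is a reverse-coded rank from 1 to 8; if so, each politician's scores sum to 1 + 2 + … + 8 = 36, the eight means must sum to 36 as well, and 4.5 is the midpoint separating above-average from below-average sources. The dictionary keys are shortened labels chosen for illustration.

```python
# Mean usefulness per source, copied from the results reported in the text.
reported_means = {
    "direct contact with citizens": 6.34,
    "traditional media": 5.71,
    "people close to the politician": 5.06,
    "social movements and interest groups": 4.96,
    "social media": 3.90,
    "polls by other organizations": 3.76,
    "polls by own party": 3.70,
    "talking to journalists": 2.56,
}

# ASSUMPTION: scores are reverse-coded ranks 1..8, so they sum to 36 per person.
expected_total = sum(range(1, 9))
reported_total = round(sum(reported_means.values()), 2)
midpoint = expected_total / len(reported_means)

# Sources rated above the midpoint, in the order listed above.
above_midpoint = [s for s, m in reported_means.items() if m > midpoint]

print(expected_total, reported_total)  # 36 35.99
print(midpoint)                        # 4.5
print(above_midpoint)
```

The reported means add up to 35.99, which matches 36 up to rounding and is consistent with the assumed coding. On that reading, the first four sources sit above the midpoint and the last four below it.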

Our discussion so far related to the combined evidence from the five countries. Are the findings robust across political systems? They largely are, as Figure 2 shows.

Figure 2. Average usefulness attributed by politicians to each of the public opinion sources, by country.

In all countries, politicians consider direct interaction with citizens the most useful source. In political systems that differ from each other in many respects (for example, majoritarian versus proportional electoral systems, weak versus strong party systems), talking to ordinary people is considered most valuable in the process of understanding public opinion. Consulting traditional media scores consistently high as well: in three countries it is the number two source, and in Switzerland and the Netherlands it is ranked third, on average. Talking to one’s close network scores high in most countries too, except for the Netherlands, where it ranks seventh. At the other end, in every country, talking to journalists is considered the least useful public opinion source. Results concerning social media are fairly consistent as well; they do not play an essential role in any of the countries (though they matter most in Germany). Finally, polls and interest groups take a similar place in the five countries, with some differences; in the Netherlands especially, social movements/interest groups are considered important public opinion sources.

Hence, by and large, and notwithstanding the diversity of the political systems in our sample, we do find strikingly similar patterns in all countries. Direct interaction with citizens is key, followed by traditional media and talking to one’s intimate circle. Social media matter surprisingly little, and polls and interactions with journalists even less. This finding places a substantial burden on politicians’ shoulders. Representatives prefer direct, raw, unmediated, and unaggregated public opinion signals (contact with ordinary people and their intimate network) above indirect, mediated, and aggregated signals (such as polls or talking to journalists). Politicians are probably aware of the fact that the ‘ordinary’ people they talk to cannot possibly form a representative sample of the public at large (at least, this is what Walgrave et al., 2022 found in Belgium). Yet, in what way the people they talk to diverge from the rest of the population is tough to judge.

In addition to examining what sources politicians in general deem most useful, we briefly explore variations in how much individual politicians value different sources. The full regressions can be found in Appendix 3, but we summarize the findings here. Most importantly, they show that all politicians, regardless of their status, seniority, gender, or role conception, consider direct citizen contact the most useful public opinion source. We do find some variation when looking at the other sources, though. Elite politicians (those who currently hold or have held the position of parliamentary party group leader, minister, or party leader) value information from social movements/interest groups less as a public opinion source than their backbencher colleagues do. Also, more experienced politicians attribute significantly more importance to their contacts with journalists than more junior politicians do; having been in parliament for a longer time, these seasoned politicians probably have more connections with journalists to begin with. Their more junior (usually younger) colleagues, in turn, rely more on the information they get from social media when assessing public opinion. Finally, we observe substantial gender differences: female politicians rank the usefulness of own polls and social movement/interest group information higher than their male colleagues do. Male politicians, in turn, value contact with their relatives more as a source of public opinion.

Zooming in on party-level predictors, the regressions show that the more right-wing a politician’s party is, the more they value social media, interactions with relatives, and their own polls as a source of public opinion information. The opposite is true for traditional news media, contact with journalists, and information from interest groups and social movements; here it is politicians from left-wing parties who deem these sources more useful than their right-wing colleagues do. Government and opposition status and party size seem to matter less for politicians’ source use (but note that politicians in the opposition value social media more than their colleagues in government).

Conclusion

The best way to learn about popular preferences, politicians say, is through direct interaction with ordinary people. Consuming traditional media and talking with intimates are good indicators of public opinion as well. Social media cues and polls are less useful. This pattern is remarkably consistent across the political systems we looked at. By relying on interactions with ordinary citizens, politicians probably conform to what many people would say a good politician ought to do: listen to the people as directly as possible, be accessible to common people, and remain in close contact with those they are expected to represent.

At the same time, one may question whether these interactions with ordinary citizens give politicians a good grasp of what citizens want. After all, ample scholarly work has shown that the citizens who reach out to politicians are not representative of the full population – they are generally more affluent, higher educated, more conservative, and more politically interested (Schlozman et al., 2012, Broockman and Skovron, 2018). Relying strongly on such direct citizen contact for gauging public preferences, politicians may end up with a biased, inaccurate image of public opinion. Future work could further explore this relationship between politicians’ perceptual accuracy and their source use.

But why do politicians rely so much on direct citizen contact? One answer could be that politicians just declare they value such contacts because they are aware that this is what is expected from them. We cannot rule out this option. But the context in which the surveys took place, with ample guarantees that results would remain private and with the respondents completing the survey unobserved by the interviewer, decreases the chance that social desirability is the driver. Additionally, in some countries, the closed survey was followed by an open interview, and in these interviews politicians, without being cued, often referred to direct citizen contact as an important information source. Many provided us with a whole series of examples of the most mundane occasions on which they interact with ordinary citizens (see Walgrave et al., 2022, for the results of these interviews in Belgium). These anecdotes increase confidence that talking to ordinary citizens is indeed what politicians deem most valuable for understanding public opinion. We suspect that the richness of these direct public opinion signals is part of the explanation for why politicians value such direct interactions with citizens so much. When politicians talk to ordinary people, they not only get information about where these people stand but also get cues about the quality of the opinion these people hold and the intensity with which the opinion is held. This information is important for politicians to have; deeply held, informed positions are to be reckoned with, while superficial non-opinions can more easily be discarded.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

This work was supported by the Flemish Fund for Scientific Research (FWO) [grant number G012517N] and was embedded in the POLPOP project. Stefaan Walgrave (University of Antwerp) is the principal investigator of the POLPOP project in Flanders, Jean-Benoit Pilet and Nathalie Brack in Wallonia, Christian Breunig and Stefanie Bailer in Germany, Rens Vliegenthart in the Netherlands, Frédéric Varone in Switzerland, and Peter Loewen in Canada.

Ethical approval

Ethical approval for this research was provided by the respective universities.

Appendix 1

Population of targeted politicians, sample, and response rates.

| Country | Group | Population | Sample | Response rate (%) | Timing of interviews |
|---|---|---|---|---|---|
| Netherlands | National MPs (TOTAL Netherlands) | 150 | 32 | 21.0 | Aug–Oct 2018 |
| Canada | National MPs | 334 | 50 | 15.0 | Mar–Sep 2019 |
| | Regional MPs Ontario | 124 | 30 | 24.2 | |
| | TOTAL Canada | 458 | 80 | 17.3 | |
| Belgium | National MPs, ministers and party leaders | 163 | 120 | 74.0 | Mar–July 2018 |
| | Regional MPs, ministers, and party leaders Flanders | 135 | 102 | 76.7 | |
| | Regional MPs, ministers, and party leaders Wallonia | 149 | 117 | 79.6 | |
| | TOTAL Belgium | 447 | 339 | 75.8 | |
| Germany | National MPs | 511 | 79 | 15.5 | Sep 2018–Feb 2019 |
| | TOTAL Germany | 511 | 79 | 15.5 | |
| Switzerland | National MPs | 236 | 151 | 64.0 | Aug–Oct 2018 |
| | Regional MPs Berne and Geneva | 259 | 217 | 83.8 | |
| | TOTAL Switzerland | 495 | 368 | 74.3 | |
| TOTAL | | 2061 | 895 | 43.4 | |

Appendix 2

Population of targeted politicians, sample, and response rates.

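| | Netherlands (cooperated) | Netherlands (population) | Canada (cooperated) | Canada (population) | Belgium (cooperated) | Belgium (population) | Germany (cooperated) | Germany (population) | Switzerland (cooperated) | Switzerland (population) |
|---|---|---|---|---|---|---|---|---|---|---|
| Female (%) | 38% | 31% | 39% | 31% | 37% | 39% | 25% | 31% | 32% | 32% |
| Age in years | 46 | 46 | 52 | 52 | 50 | 50 | 50 | 49 | 51 | 52 |
| Seniority in years | 4 | 5 | 6 | 6 | 11 | 11 | 5 | 6 | 10 | 11 |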

Appendix 3

Random effects ordered logistic regression predicting the usefulness (rank order) of each public opinion source (group variable: party).

Direct contact with citizens

Traditional media

Relatives

Movements

Social media

Other polls

Own polls

Journalists

Coef.(S.E.)

P> |z|

Coef.(S.E.)

P> |z|

Coef.(S.E.)

P> |z|

Coef.(S.E.)

P> |z|

Coef.(S.E.)

P> |z|

Coef.(S.E.)

P> |z|

Coef.(S.E.)

P> |z|

Coef.(S.E.)

P> |z|

Elite politician

.13 (.26)

.620

.40 (.26)

.130

-.26 (.26)

.313

-.17 (.25)

.489

-.22 (.28)

.433

-.20 (.26)

.467

Seniority

.00 (.01)

.884

.00 (.01)

.727

.00 (.01)

.675

-.00 (.01)

.999

-.02 (.01)

.104

.00 (.01)

.905

Trustee role

-.05 (.04)

.208

.03 (.04)

.434

.05 (.03)

.187

.01 (.04)

.803

-.04 (.04)

.251

.00 (.04)

.920

-.03 (.03)

.425

.03 (.04)

.368

Female

.07 (.15)

.626

-.11 (.14)

.431

-.08 (.14)

.568

.05 (.14)

.750

Government

.16 (.23)

.500

.32 (.20)

.103

.31 (.14)

.444

.13 (.21)

.524

-.12 (.19)

.535

.01 (.18)

.976

-.13 (.20)

.534

Rightwingness

.08 (.05)

.110

.00 (.04)

.956

Party seat

.00 (.00)

.341

.00 (.00)

.432

.00 (.00)

.417

-.00 (.00)

.325

.00 (.00)

.835

.00 (.00)

.253

-.00 (.00)

.404

Country (ref. = Belgium)

Switzerland

-.32 (.21)

.127

-.24 (.24)

.326

.09 (.21)

.656

.02 (.16)

.870

Netherlands

-.09 (.46)

.837

-.11 (.39)

.777

-.42 (.39)

.280

-.40 (.41)

.326

.14 (.36)

.696

.43 (.37)

.253

Germany

-.75 (.51)

.143

-.36 (.43)

.406

.61 (.39)

.116

-.64 (.47)

.170

.33 (.42)

.426

-.55 (.44)

.206

-.50 (.38)

.189

Canada

.66 (.49)

.179

-.09 (.39)

.809

.32 (.36)

.370

-.54 (.43)

.207

-.48 (.39)

.214

.54 (.34)

.108

.50 (.35)

.158

σ

.22 (.10)

.06 (.05)

.02 (.04)

.14 (.08)

.07 (.06)

.07 (.05)

.00 (.00)

.02 (.05)

N (groups)

748 (43)

748 (43)

748 (43)

748 (43)

748 (43)

748 (43)

748 (43)

748 (43)