Abstract

Since the 1990s, many governments in middle- and low-income countries have used conditional cash transfers to alleviate poverty. However, evidence on the electoral consequences of this type of anti-poverty intervention remains inconclusive. Do voters reward politicians when they implement conditional cash transfers? To address this question, this study conducts a meta-analysis of 10 randomized controlled trials and regression discontinuity designs (35 estimates from six countries in Latin America and Asia). The results show a positive effect of conditional cash transfers on voter support for incumbents and no evidence of publication bias in the selected sample. Estimated effect sizes tend to be larger in observational studies, unpublished manuscripts, and articles published in political science. These results provide more conclusive evidence that poor voters also respond to non-clientelistic strategies of electoral targeting in developing countries.

Introduction

Conditional cash transfers (CCTs) provide income to low-income families on the condition that those households invest in the education and health of their children. By inducing high rates of child immunization and enforcing regular school attendance, CCTs help break the intergenerational cycle of poverty through the development of human capital. Since Mexico and Brazil successfully implemented Progresa and Bolsa Família (pioneering anti-poverty programs of this type, with roots in the 1990s), more than 60 CCTs have been established around the world (Barrientos, 2019).

Many governments, regardless of their political orientation, have become proponents of CCTs. What makes these programs attractive to politicians is their low cost combined with compelling results. For instance, CCTs in Mexico and Brazil cover 6 million and 13 million households, respectively, at a cost of less than 0.5% of each country's GDP (Ibarrarán et al., 2017). Despite this relatively low cost, there is abundant evidence that CCTs alleviate poverty (e.g., Gertler, 2004), increase school enrollment and attendance (e.g., De Janvry et al., 2006), and reduce child mortality (e.g., Rasella et al., 2013).

Therefore, implementing CCTs can be a strategic move for politicians seeking to expand their electoral influence. Indeed, evidence from several countries, such as Brazil (Zucco Jr, 2013), Colombia (Conover et al., 2020), Honduras (Galiani et al., 2019), and Uruguay (Manacorda et al., 2011), suggests that implementing a CCT benefits the ruling party or candidate. In other words, voters are more likely to vote for parties or candidates recognized as the “founders” of CCTs in their countries. However, the empirical record remains mixed. Studies reporting contrary results (e.g., Tobias et al., 2014; Schober, 2016; Corrêa and Cheibub, 2016) call for caution before drawing definitive conclusions.

The debate surrounding the Mexican case illustrates this deadlock well. Imai et al. (2020) recently challenged earlier evidence that the implementation of a CCT in Mexico increased voter support for the incumbent Partido Revolucionario Institucional (PRI). By reanalyzing data from the original study by De La O (2013) and adding new evidence on the implementation process of Progresa, the authors conclude that this anti-poverty intervention had no causal effect on support for the incumbent party. According to the authors, their results “resolve some disagreements in the literature and wind up not supporting the programmatic incumbent support hypothesis” (Imai et al., 2020: 715).

Even if we accept as valid the evidence that programmatic policies did not affect voter support for the PRI in Mexico, should we assume that the implementation of CCTs does not pay off elsewhere? Do voters reward politicians when they implement CCTs? In this paper, I address these questions using a meta-analysis, which systematically reviews the literature by integrating empirical evidence to reach unified and potentially more general conclusions.

The results of a meta-analysis of 10 randomized controlled trials and regression discontinuity designs (35 estimates) show that this type of anti-poverty intervention has a positive causal effect on electoral support for the incumbent party. I then provide complementary evidence by exploring the heterogeneity of results through meta-regression models. Estimated effect sizes tend to be larger in observational studies (regression discontinuity designs), unpublished manuscripts, and articles published in political science (rather than economics). Furthermore, estimates are larger in studies evaluating the electoral effects of CCTs in Latin America, suggesting that Mexico is a potential outlier in the region rather than a representative case. Finally, I show that the overall positive effect in the sample is not driven by publication bias.

These findings, therefore, provide more conclusive evidence that poor voters also respond to non-clientelistic strategies of electoral targeting in developing countries.

Do programmatic policies pay off?

Programmatic policies, that is, policies based on well-known and publicly stated rules that apply regardless of whether an individual supported or opposed the incumbent, are less common in developing countries (e.g., Kitschelt et al., 2007). Since poor people have fewer instruments to hold politicians accountable between elections (e.g., Taylor-Robinson, 2010), they are expected to be more likely to engage in electoral clientelism to obtain short-term benefits (e.g., Stokes et al., 2013).

Yet, contrary to these theoretical expectations, politicians in developing countries often use non-clientelistic strategies to target voters (e.g., Calvo and Murillo, 2019). The implementation of CCTs is a prominent example of such strategies of electoral persuasion. CCTs are income transfers targeted at socially vulnerable populations, and their provision follows well-defined rules. Hence, politicians have minimal discretion over the distribution of these benefits (Ibarrarán et al., 2017). As CCTs tend to be cheaper than other programmatic policies (e.g., investments in education or public health), expanding them can be more attractive for incumbents, particularly under conditions of fiscal stress (Diaz-Cayeros et al., 2016).

While one strand of the literature assumes that investments in human well-being sway voters in favor of the incumbent (e.g., Dahlberg and Johansson, 2002), the economic voting literature typically emphasizes that investing in voters’ well-being does not necessarily translate into electoral gains for incumbents (e.g., Huber et al., 2012). If the poor are unlikely to reward politicians for implementing non-clientelistic programs, politicians have strong incentives to rely solely on clientelistic strategies to obtain electoral support. If, on the other hand, voters recognize and reward politicians who deliver public goods through programmatic strategies, incumbents have an incentive to bypass clientelism even in contexts of high vulnerability (De La O, 2015).

Can incumbents gain electoral credit by implementing programmatic policies in middle- and low-income countries? In this paper, I answer this question by conducting a meta-analysis of all available credible evidence on the effect of CCTs on electoral support for incumbents.

Data

Sample selection is a crucial step in a meta-analysis. A comprehensive review of the literature typically encompasses all available evidence on a topic. Further refinements are then required to ensure that the final sample reflects a specific research question. Given the goal of this study, the comprehensive review should include all studies on the political effects of CCTs, whereas the final sample should include only studies of the impact of this type of anti-poverty intervention on electoral support for incumbents.

I selected the sample of studies for the meta-analysis in three steps. First, I conducted a screening search of the top 30 journals in political science and economics to identify keywords for a draft search strategy. Then, I used the terms “Conditional cash transfers or elections” and “Anti-poverty programs or elections” to search for studies in the online databases Web of Science and Google Scholar.

Second, I applied the key-term search to the repositories of the Social Science Research Network (SSRN); IDEAS/RePEc; the National Bureau of Economic Research (NBER); the Joint Libraries of the World Bank and the International Monetary Fund (JOLIS); and the British Library for Development Studies (BLDS). This strategy allowed for the inclusion of unpublished papers, thereby reducing the risk of selection bias in the sample of studies (Waddington et al., 2012).

Third, I applied the key-term search to the Scientific Electronic Library Online (SciELO) to guard against language bias. SciELO is a cooperative electronic publishing model for open-access journals that evaluates and disseminates scientific literature in Spanish and Portuguese from 16 countries (~1,250 journals) in Latin America. Given the high incidence of CCTs in Latin American nations (Barrientos, 2019), this step was especially important.

Altogether, 54 papers were identified. I excluded articles that investigate the effect of CCTs on political outcomes other than electoral support for the incumbent (e.g., civic engagement, voter turnout, electoral competition, and clientelism). I also excluded studies that did not report quantitative tests, as well as studies whose authors did not provide the coefficients and their respective standard errors in the manuscript. At this stage, 26 articles and 135 estimates remained in the sample.

I adopted a conservative approach by keeping in the sample only studies judged to be at “low risk of bias”, namely randomized controlled trials (RCTs) and regression discontinuity designs (RDDs). When well implemented, these designs allow for causal inference, because the random (or as-if random) assignment of units to the treatment or control group prevents omitted variable bias, as well as other sources of bias that affect estimates relying on observables (Duflo et al., 2007; Sekhon and Titiunik, 2012). The final sample comprises 10 studies and 35 estimates.

Table 1 reports the list of selected studies and their characteristics. The meta-analysis sample spans six countries (Colombia, Honduras, Indonesia, Mexico, the Philippines, and Uruguay) and seven different CCTs (Familias en Acción, PRAF, Bono, PNPM Generasi, Progresa, 4Ps, and Panes) implemented in Latin America and Asia, most of them in Latin America, the region with the highest concentration of CCTs globally.

Table 1. The list of studies included in the meta-analysis.

Note: Compiled by the author based on the literature review conducted for the meta-analysis.

Method

Suppose that there are K independent studies, each reporting several estimates of an unknown effect size together with their standard errors. A meta-analysis provides an analytical solution for combining these estimates into a single result that estimates the population parameter of interest. In doing so, it filters out the net effect of sampling error from empirical findings (Stanley and Doucouliagos, 2012).

In this paper, I modeled the effect sizes using a random-effects (RE) model. RE models assume that true effects vary across studies because of heterogeneity in policy implementation (e.g., study location or the unit selected for the intervention); they therefore calculate an average effect across studies that accounts for differences due to both chance and other factors that affect estimates (Stanley and Doucouliagos, 2012).
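To make the pooling step concrete, the following is a minimal Python sketch of inverse-variance pooling under a common-effect model and under a random-effects model. It assumes the DerSimonian-Laird moment estimator for the between-study variance τ²; this is one standard choice, not necessarily the estimator used in the paper (statistical packages often default to alternatives such as REML). The function name `pool_effects` is hypothetical.

```python
import math


def pool_effects(effects, ses, z=1.96):
    """Pool effect sizes by inverse-variance weighting.

    Returns the common-effect mean, the DerSimonian-Laird estimate of the
    between-study variance (tau2), and the random-effects mean with its CI.
    """
    if len(effects) != len(ses) or len(effects) < 2:
        raise ValueError("need at least two effects with matching standard errors")

    # Common-effect weights: inverse of each estimate's sampling variance.
    w = [1.0 / se ** 2 for se in ses]
    sum_w = sum(w)
    theta_ce = sum(wi * yi for wi, yi in zip(w, effects)) / sum_w

    # Cochran's Q measures dispersion around the common-effect mean.
    q = sum(wi * (yi - theta_ce) ** 2 for wi, yi in zip(w, effects))
    df = len(effects) - 1

    # DerSimonian-Laird moment estimator of tau^2, truncated at zero.
    c = sum_w - sum(wi ** 2 for wi in w) / sum_w
    tau2 = max(0.0, (q - df) / c)

    # Random-effects weights add tau^2 to every sampling variance.
    w_re = [1.0 / (se ** 2 + tau2) for se in ses]
    theta_re = sum(wi * yi for wi, yi in zip(w_re, effects)) / sum(w_re)
    se_re = math.sqrt(1.0 / sum(w_re))

    return {
        "theta_ce": theta_ce,
        "tau2": tau2,
        "theta_re": theta_re,
        "ci_re": (theta_re - z * se_re, theta_re + z * se_re),
    }
```

Because τ² is added to every sampling variance, the random-effects weights are more even than the common-effect weights, so imprecise estimates gain relative influence when between-study heterogeneity is large.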

To identify the effect of CCTs on electoral support for the incumbent, I collected all estimates reported in the main table of results (N = 35) of each study in the final sample (N = 10). I did not include estimates that tested interactive or heterogeneous effects, since conditional coefficients are not directly comparable across studies (Aguinis et al., 2011). As recommended by Doucouliagos and Ulubaşoğlu (2008), these estimates were transformed into partial correlations to make them comparable across studies. Partial correlations are preferable to alternative ways of summarizing results (e.g., vote counting, success rates, or meta-probit analysis) because they account for the sampling error of each estimated effect through weighting (Philips, 2016). In a final step, I calculated the mean (a weighted average of reported effect sizes, the so-called overall effect) and the 95% confidence interval (CI) around this mean.
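The conversion to partial correlations can be sketched as follows. The sketch uses the formulas commonly applied in this literature, r = t / √(t² + df) and SE(r) = √((1 − r²) / df), where t is the coefficient divided by its standard error and df is the residual degrees of freedom of the original regression. The function name and the way df is obtained (often approximated by the number of observations minus the number of regressors) are assumptions for illustration.

```python
import math


def partial_correlation(coef, se, df):
    """Convert a regression coefficient and its standard error into a
    partial correlation and the standard error of that correlation."""
    if se <= 0 or df <= 0:
        raise ValueError("standard error and degrees of freedom must be positive")
    t = coef / se                          # t-statistic of the original estimate
    r = t / math.sqrt(t ** 2 + df)         # partial correlation, keeps the sign of t
    se_r = math.sqrt((1 - r ** 2) / df)    # standard error of r
    return r, se_r


# Hypothetical estimate: coefficient 3.0, standard error 1.0, 16 residual df.
r, se_r = partial_correlation(3.0, 1.0, 16)
```

With t = 3 and df = 16, r = 3 / √25 = 0.6 and SE(r) = √(0.64 / 16) = 0.2. Because r is bounded between −1 and 1 and unit-free, estimates from regressions with differently scaled outcomes (e.g., vote shares versus vote probabilities) become comparable.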

Results

Overall effect

Figure 1 displays the individual and overall effect sizes, their 95% CIs, and each study's percentage of the total weight. By design, more precise estimates (typically those based on more observations) contribute more to the overall effect. In the plot, each study corresponds to a square centered at the point estimate of the effect size, with a horizontal line (whiskers) extending on either side of the square. A summary of the effect sizes (theta) is reported at the bottom of Figure 1. The overall estimate is 0.123, with a 95% CI of [0.051; 0.195]. Substantively, this result indicates a positive causal effect of CCTs on electoral support for incumbents.
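As a quick consistency check on the reported summary, the standard error and z-statistic implied by the overall estimate can be backed out from its 95% CI. This back-of-the-envelope sketch assumes a symmetric, normal-based interval (estimate ± 1.96 × SE); it is not output from the paper's software.

```python
import math

theta, lower, upper = 0.123, 0.051, 0.195   # overall RE estimate and 95% CI (Figure 1)

# Under a normal-based interval, the CI half-width equals 1.96 standard errors.
se = (upper - lower) / (2 * 1.96)
z_stat = theta / se
# Two-sided p-value from the standard normal distribution.
p_value = math.erfc(abs(z_stat) / math.sqrt(2))

print(f"SE ~ {se:.4f}, z ~ {z_stat:.2f}, p ~ {p_value:.4f}")
```

The interval is symmetric around 0.123 (0.072 on each side), which implies an SE of roughly 0.037 and z of about 3.35, so the overall effect is distinguishable from zero at p < 0.001 under this assumption.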

Figure 1. Forest plot: Estimated overall effect size using a random-effects model.

Online Appendix B reports estimates from common-effect (CE) and fixed-effects (FE) meta-analysis models. A CE model assumes a single true effect for all studies in the sample, whereas an FE model restricts inference to the studies included in the sample. In both cases, the results are consistent with those reported in Figure 1 (using an RE model): on average, CCTs have a positive and statistically significant effect on electoral support for incumbents.

A fundamental assumption in meta-analysis is that effect sizes are statistically independent of each other. Because this assumption is more likely to be violated in models using several estimates from the same study, I also estimated RE, FE, and CE models with one estimate per study. Following Card (2015), I selected the most precise effect size (the one based on the largest number of cases). Online Appendix C shows that the overall effect size is not affected by statistical dependence (if any) in the sample.
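The one-estimate-per-study robustness check amounts to keeping, for each study, the estimate based on the most observations. A minimal sketch, assuming each estimate is stored as a dictionary with hypothetical keys `study`, `n`, `r`, and `se` (ties keep the first estimate encountered):

```python
def one_estimate_per_study(estimates):
    """Keep the estimate with the largest number of observations in each study."""
    best = {}
    for est in estimates:
        current = best.get(est["study"])
        if current is None or est["n"] > current["n"]:
            best[est["study"]] = est
    return list(best.values())


# Hypothetical input: two estimates from study "A", one from study "B".
sample = [
    {"study": "A", "n": 1200, "r": 0.10, "se": 0.03},
    {"study": "A", "n": 5400, "r": 0.08, "se": 0.01},
    {"study": "B", "n": 800, "r": 0.15, "se": 0.04},
]
selected = one_estimate_per_study(sample)
```

The reduced list can then be passed to the same pooling routine as the full sample, so any difference in the overall effect isolates the influence of within-study dependence.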

Heterogeneity of results

The presence of heterogeneity among studies can be inferred from the homogeneity test reported at the bottom of Figure 1. The Q statistic is 573.52, with a p-value of 0.001, suggesting that a substantial share of the variability in the effect-size estimates reflects differences between individual studies and is not solely attributable to sampling error. I therefore implemented a meta-regression analysis to check whether this heterogeneity is explained by study-level moderators (covariates) available in the dataset.
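The Q statistic can be complemented by the I² index, which expresses the share of total variability attributable to between-study heterogeneity rather than sampling error. I² is not reported in the text; the sketch below computes Q from raw inputs and derives I² from the reported Q, assuming Q was computed over all 35 estimates (so df = 34). The function names are hypothetical.

```python
def cochran_q(effects, ses):
    """Cochran's Q: weighted squared deviations from the inverse-variance mean."""
    w = [1.0 / se ** 2 for se in ses]
    mean = sum(wi * yi for wi, yi in zip(w, effects)) / sum(w)
    return sum(wi * (yi - mean) ** 2 for wi, yi in zip(w, effects))


def i_squared(q, k):
    """Higgins-Thompson I^2 = (Q - df) / Q with df = k - 1, truncated at zero."""
    if q <= 0:
        return 0.0
    return max(0.0, (q - (k - 1)) / q)


# Reported Q = 573.52 over k = 35 estimates.
i2 = i_squared(573.52, 35)
```

With Q = 573.52 and df = 34, I² = (573.52 − 34) / 573.52 ≈ 0.94, so under this assumption roughly 94% of the observed variability would be attributed to heterogeneity rather than chance, consistent with the decision to model moderators.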

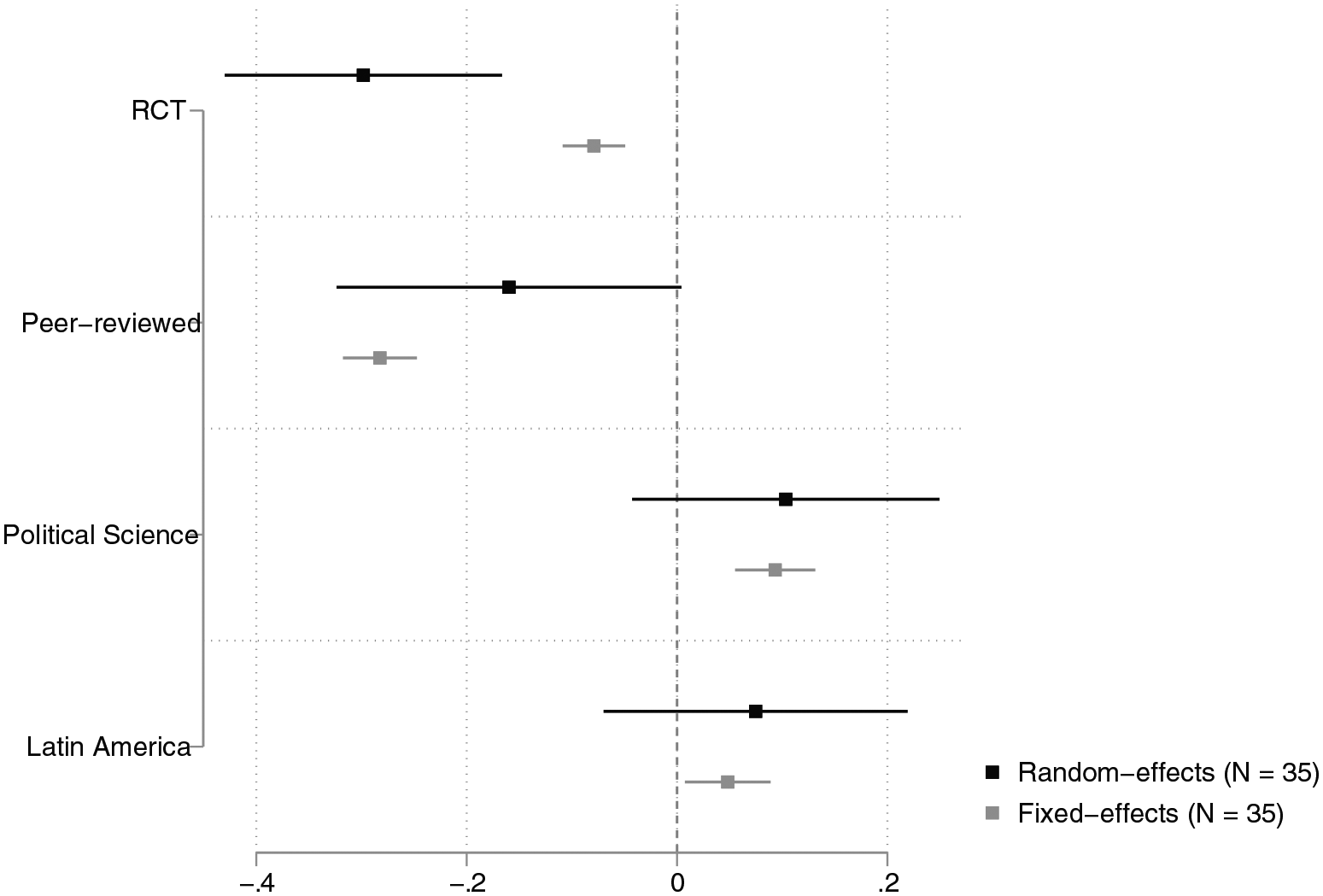

Due to the small number of estimates in the sample (N = 35), I included only a few moderators in the meta-regression. The first moderator (RCT) captures variation between estimates from randomized controlled trials and regression discontinuity designs. The second (Peer-reviewed) tests whether publication status (published in a peer-reviewed journal or not) affects effect sizes. The third (Political Science) checks for variation across fields of publication; specifically, I compare the effect sizes of estimates published in political science with those published in economics. The fourth (Latin America) captures variation in effect sizes according to the region where the CCT was implemented (Latin America or Asia).

A meta-regression performs a weighted linear regression of effect sizes on moderators, which allows testing the effect of a given moderator conditional on the other covariates included in the same model. I performed meta-regressions with both RE and FE models. Since the studies in the meta-analysis represent a sample from a population of interest, one can assume that the effect estimated through an RE model extends beyond the K studies included in the meta-analysis to the entire population of interest. By contrast, FE models are typically used when the researcher wants to make inferences only about the studies included in the sample. In terms of the scope of inference, FE models are therefore more conservative (Borenstein et al., 2010; Stanley and Doucouliagos, 2012).
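A meta-regression of this kind can be sketched as a weighted least-squares regression of effect sizes on moderators. The sketch below estimates the coefficients only (no standard errors) by solving the weighted normal equations in plain Python. It assumes FE-type weights 1/SE² when `tau2` is zero and RE-type weights 1/(SE² + τ²) otherwise, with τ² supplied by the user; the function names and the dummy-coded moderators and effect sizes in the example are hypothetical, not the paper's data.

```python
def weighted_least_squares(X, y, w):
    """Solve (X'WX) b = X'Wy by Gaussian elimination with partial pivoting.

    X is a list of rows; each row must already contain a leading 1 for the intercept.
    """
    n, p = len(X), len(X[0])
    A = [[sum(w[i] * X[i][r] * X[i][c] for i in range(n)) for c in range(p)]
         for r in range(p)]
    b = [sum(w[i] * X[i][r] * y[i] for i in range(n)) for r in range(p)]

    # Forward elimination.
    for col in range(p):
        pivot = max(range(col, p), key=lambda r: abs(A[r][col]))
        if abs(A[pivot][col]) < 1e-12:
            raise ValueError("moderators are perfectly collinear")
        A[col], A[pivot] = A[pivot], A[col]
        b[col], b[pivot] = b[pivot], b[col]
        for r in range(col + 1, p):
            factor = A[r][col] / A[col][col]
            for c in range(col, p):
                A[r][c] -= factor * A[col][c]
            b[r] -= factor * b[col]

    # Back substitution.
    coef = [0.0] * p
    for r in range(p - 1, -1, -1):
        coef[r] = (b[r] - sum(A[r][c] * coef[c] for c in range(r + 1, p))) / A[r][r]
    return coef


def meta_regression(effects, ses, moderators, tau2=0.0):
    """Return [intercept, slope_1, ...] from a weighted meta-regression."""
    X = [[1.0] + list(row) for row in moderators]
    w = [1.0 / (se ** 2 + tau2) for se in ses]
    return weighted_least_squares(X, effects, w)


# Hypothetical dummies per estimate: [RCT, Latin America]; made-up effect sizes
# constructed as exactly 0.15 - 0.05 * RCT + 0.02 * LatinAmerica.
mods = [[0, 0], [1, 0], [0, 1], [1, 1]]
effects = [0.15, 0.10, 0.17, 0.12]
ses = [0.05, 0.10, 0.20, 0.10]
coef = meta_regression(effects, ses, mods)
```

Each slope is the expected change in the effect size associated with a moderator, holding the others constant. Inference (standard errors, p-values) would require additional steps that this sketch omits.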

Figure 2 reports the meta-regression results. The estimated effect sizes tend to be smaller in studies using RCTs than in those using RDDs. This result is statistically significant at conventional levels in both the RE and FE models. The same pattern is observed for the second moderator (Peer-reviewed): on average, estimates published in peer-reviewed journals tend to be smaller than those in unpublished manuscripts, which speaks against the hypothesis of publication bias in the sample.

Figure 2. Meta-regression analysis: the effects of CCTs on electoral support for incumbents.

Perhaps surprisingly, estimates published in political science are larger than those published in economics. This result might reflect the predominance in economics of studies using RCTs (the design that produces smaller effect sizes). Finally, effect sizes are larger in studies evaluating the electoral effects of CCTs in Latin America. Substantively, this result suggests that incumbents in the region are more likely to be rewarded when they implement CCTs.

Publication bias

Because researchers (and journals) prefer to publish statistically significant results, small studies (typically those with non-significant results) are more likely to go unreported than large ones (Abadie, 2020). This so-called publication bias could explain the overall positive effect size reported in Figure 1. To address this concern, I first use a funnel plot to check for small-study bias. In the absence of such bias, the funnel plot should resemble a symmetric inverted funnel. While the distribution of effect sizes in Figure 3 is not perfectly symmetrical, there is no clear evidence of small-study bias. The presence of estimates at the bottom left of the funnel plot indicates that researchers report even estimates with low precision (higher standard errors).

Figure 3. Funnel plot: testing for small-study bias in the sample.

The absence of estimates at the bottom right of the funnel plot, however, suggests that positive effects from small samples may be underreported. I used the nonparametric trim-and-fill method developed by Duval and Tweedie (2000) to correct for this potential bias. The main goal of the trim-and-fill method is to evaluate the impact of publication bias on the overall effect size. The approach imputes the estimates presumed to be missing from the sample; the overall effect is then re-estimated by pooling all estimates (observed and imputed) together.

Recall that the mean effect size based on the 35 observed estimates is 0.123, with a 95% CI of [0.051; 0.195]. As shown in Online Appendix D, 10 hypothetical estimates were imputed. After imputation, the updated overall effect size (based on the 35 observed plus 10 imputed estimates) is 0.179, with a 95% CI of [0.115; 0.244]. Thus, after correcting for publication bias, the overall effect remains positive and is even larger.

However, funnel-plot asymmetry may be caused by factors other than publication bias (e.g., between-study heterogeneity). For this reason, I also implemented Egger's regression-based test for small-study effects (Harbord et al., 2006). The test examines whether the estimated partial correlations are associated with their standard errors; a statistically significant relationship between these two variables would indicate publication bias. The p-value of 0.1599 shows little evidence of small-study effects in the sample (see Online Appendix E). Hence, the results do not support the hypothesis that the overall positive effect depicted in Figure 1 is driven by the omission of non-positive (and non-statistically significant) results from the selected sample.
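The regression behind this test can be sketched as a weighted least-squares regression of each partial correlation on its standard error, with weights 1/SE² (a funnel-asymmetry test). Dividing the equation through by the standard error shows that it is the same regression as Egger's test, in which the standardized effect is regressed on precision: the slope on SE here equals the Egger intercept. The sketch is an illustrative implementation, not the routine used for Online Appendix E, and the function name is hypothetical.

```python
import math


def funnel_asymmetry_test(effects, ses):
    """WLS regression effect_i = b0 + b1 * se_i with weights 1 / se_i^2.

    b1 (slope) captures small-study effects; b0 (intercept) is the effect size
    extrapolated to a perfectly precise study (se = 0).
    """
    n = len(effects)
    if n < 3:
        raise ValueError("need at least three estimates")
    w = [1.0 / se ** 2 for se in ses]
    sum_w = sum(w)
    x_bar = sum(wi * xi for wi, xi in zip(w, ses)) / sum_w
    y_bar = sum(wi * yi for wi, yi in zip(w, effects)) / sum_w
    sxx = sum(wi * (xi - x_bar) ** 2 for wi, xi in zip(w, ses))
    if sxx == 0:
        raise ValueError("standard errors must vary across estimates")
    sxy = sum(wi * (xi - x_bar) * (yi - y_bar) for wi, xi, yi in zip(w, ses, effects))

    slope = sxy / sxx
    intercept = y_bar - slope * x_bar

    # Residual variance of the weighted fit, then the slope's standard error;
    # the t-statistic is compared with a t distribution with n - 2 df.
    resid = [yi - intercept - slope * xi for xi, yi in zip(ses, effects)]
    sigma2 = sum(wi * e ** 2 for wi, e in zip(w, resid)) / (n - 2)
    se_slope = math.sqrt(sigma2 / sxx)
    t_slope = slope / se_slope if se_slope > 0 else float("nan")  # undefined for a perfect fit
    return {"intercept": intercept, "slope": slope, "t_slope": t_slope}


# Hypothetical data with a built-in small-study effect: r = 0.1 + 0.5 * SE.
ses = [0.05, 0.1, 0.2, 0.3]
effects = [0.1 + 0.5 * s for s in ses]
res = funnel_asymmetry_test(effects, ses)
```

A slope close to zero, as implied by the non-significant test reported above, means that less precise estimates are not systematically larger, which is the pattern expected when small positive results are not being selectively published.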

Conclusion

In this paper, I performed a meta-analysis that finds a positive causal effect of CCTs on electoral support for incumbents and no evidence of publication bias in the selected sample. In other words, poor voters reward politicians who implement CCTs, suggesting that they also respond to non-clientelistic strategies of electoral targeting. To my knowledge, this is the first attempt to provide a systematic review of this literature. Future meta-analyses can take advantage of the recent rapid growth of single-case studies covering middle- and low-income countries. What makes CCTs pay off in some contexts but not in others? Does the quality of implementation matter? Are CCTs different from other types of non-clientelistic policies? By answering these questions, researchers will be able to draw more precise conclusions on whether and when the adoption of programmatic policies pays off.

Supplemental Material

sj-eps-1-rap-10.1177_2053168021991715 – Supplemental material for Do anti-poverty policies sway voters? Evidence from a meta-analysis of Conditional Cash Transfers

Supplemental material, sj-eps-1-rap-10.1177_2053168021991715 for Do anti-poverty policies sway voters? Evidence from a meta-analysis of Conditional Cash Transfers by Victor Araújo in Research & Politics


Footnotes

Acknowledgements

For comments and suggestions, I thank Acir Almeida, Lorenzo Casaburi, José Antônio Cheibub, Diego Corrêa, Philipp Kerler, Fernando Limongi, Patrícia Martuscelli, Katharina Michaelowa, and the two anonymous reviewers for their helpful feedback. I am also grateful to Sofie Heintz for excellent research support.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Supplemental materials

The supplemental files are available at http://journals.sagepub.com/doi/suppl/10.1177/2053168021991715. The replication files are available at ![]()

Notes

Carnegie Corporation of New York Grant

This publication was made possible (in part) by a grant from the Carnegie Corporation of New York. The statements made and views expressed are solely the responsibility of the author.

References
