Abstract

Objective:

To integrate the Structure–Process–Outcome framework with the SERVQUAL model to develop a performance evaluation index system tailored for internet hospitals in Qingdao, China. This study provides a scientific tool for assessing and optimizing the service quality of internet hospitals.

Methods:

Following the Structure–Process–Outcome framework and the SERVQUAL model, evaluation indicators were systematically collected through literature review and policy analysis. A preliminary index system was constructed and refined through two rounds of Delphi expert consultations. Experts from hospitals, universities, and research institutions in Qingdao, Nanjing, and Lianyungang participated, providing ratings and recommendations. The research team assessed questionnaire reliability, expert positivity, expert authority, and opinion consistency using statistical methods, including Cronbach’s coefficient alpha, authority coefficient, and Kendall’s coefficient of concordance W.

Results:

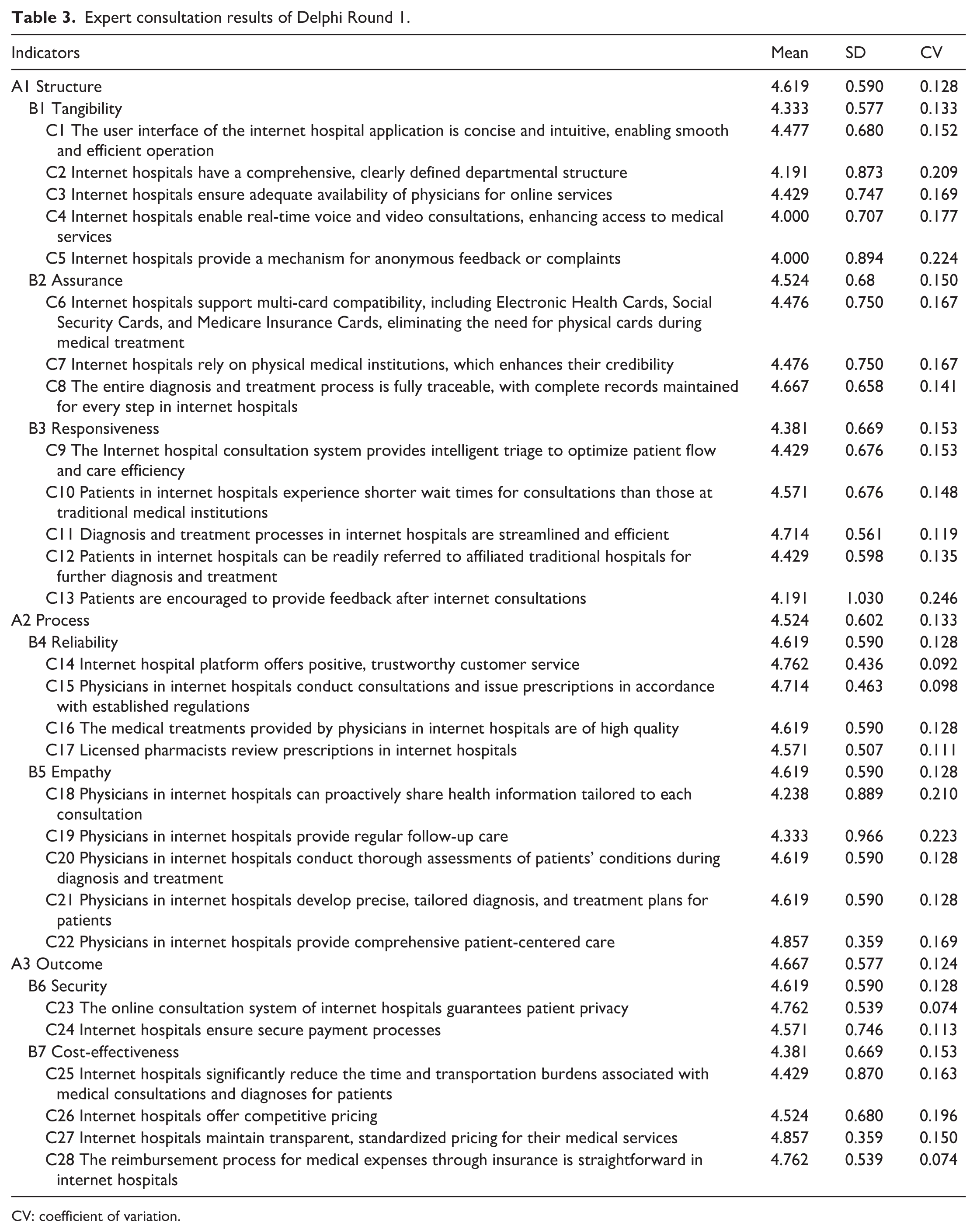

The preliminary index system identified through literature review and a pre-survey included three primary, seven secondary, and 28 tertiary indicators. After Delphi Round 1, some indicators were revised, three tertiary indicators were added, and no indicators were removed. After Delphi Round 2, further revisions were made, and the consultation was concluded when the p value of the test for Kendall’s coefficient of concordance W was <0.001 and the coefficient of variation was within acceptable limits (<0.3). The final index system comprises three primary, seven secondary, and 31 tertiary indicators. The overall Cronbach’s coefficient alpha was 0.962, with values of 0.903, 0.903, and 0.857 for the three primary dimensions, indicating high reliability. The authority coefficient was 0.885, above the 0.7 threshold. Kendall’s coefficient of concordance W values for secondary and tertiary indicators in the second round were 0.286 and 0.285, respectively (both p < 0.001), reflecting moderate consensus among experts.

Conclusion:

This study provides a practical, outcome-oriented, security-first tool for promoting high-quality development of internet hospitals in China, with potential applicability to similar settings. This study contributes by explicitly integrating Structure–Process–Outcome and SERVQUAL into a unified framework, enabling simultaneous assessment and optimization of process quality and multidimensional service quality in internet hospitals.

Keywords

Introduction

Internet hospitals represent an innovative model that delivers outpatient medical services using internet technology. 1 By integrating multidisciplinary teams of doctors, nurses, pharmacists, and administrative staff, these hospitals provide online medical services, including remote consultations, electronic prescriptions, and drug delivery, while facilitating seamless integration with offline diagnoses.1,2 Since its introduction to China in 2011, this field has advanced rapidly due to supportive policies and has become a crucial component of the “Healthy China” strategy. 3 As of February 2024, there were 3340 internet hospitals nationwide, the majority of which were affiliated with public hospitals. 4

Nevertheless, evaluating service quality in internet hospitals continues to pose challenges. Existing studies primarily use two established models: the “Structure–Process–Outcome” (SPO) model proposed by Donabedian, 5 which formulates evaluation standards through multidisciplinary expert groups and is widely employed in healthcare service quality assessments,5–7 and the SERVQUAL model introduced by Parasuraman et al., 8 which is based on the service quality gap theory and has been validated in healthcare service evaluations in countries such as Saudi Arabia, 9 Iran, 10 Jordan, 11 and India. 12 However, the virtual nature and cross-temporal features of internet hospitals limit the adaptability of either model when used alone. The SPO model fails to capture patient-perceived dimensions of online services, while the SERVQUAL model neglects the core security and efficacy objectives of medical care. For instance, studies have identified patient-satisfaction dimensions of telemedicine using the SERVQUAL model,13,14 and the applicability of the SPO model for evaluating service quality in medical-related applications has been validated. 15 Nevertheless, neither model alone satisfies the full-dimensional evaluation requirements of internet hospitals. Beyond SPO and SERVQUAL, healthcare performance evaluation employs diverse frameworks that address quality, security, access, efficiency, and patient experience.16,17 Notable examples include multidimensional tools (Balanced Scorecard, EFQM Excellence Model, RE-AIM, CIPP), process-oriented methodologies (Lean Six Sigma, DMAIC, PDCA), KPI dashboards, and patient-centered measures. Guided by this methodological landscape, we integrate SPO and SERVQUAL to identify internet-hospital performance indicators, refined through Delphi expert consultation.

Policy support for internet hospitals has progressed steadily, creating a favorable environment for performance-evaluation research. Since 2015, the Guiding Opinions on Actively Promoting the “Internet Plus” Action 18 and subsequent policies 19,20 have positioned internet hospitals as a key element of the Healthy China strategy. In this policy context, our study integrates the SPO and SERVQUAL models to identify performance indicators for internet hospitals, refined through Delphi expert consultation to yield a rigorous, policy-aligned evaluation system.

Here, we propose an evaluation framework that integrates two complementary models and is tailored to the distinctive characteristics of internet hospitals. Such a framework is essential for high-quality development and for ensuring compliance with policy and regulatory requirements. We first systematically collected performance evaluation indicators for internet hospitals and developed a comprehensive evaluation framework. We then employed the Delphi method and the analytic hierarchy process (AHP) to refine the indicators and determine their weights. Finally, we established a scientifically grounded index system for evaluating internet hospital performance. This framework aims to guide the effective implementation of performance evaluations in internet hospitals and to inform relevant departments in designing service models tailored to their needs.

Materials and methods

Construction of the performance evaluation framework and collection of indicators

This study adopts the SPO framework combined with the SERVQUAL model as the fundamental framework for evaluating the service quality of internet hospitals. Grounded in Opinion on Promoting the Development of “Internet plus Healthcare” 21 and Administration Measures for Internet Diagnosis and Treatments (Trial), 22 the framework combines the dimensions of both models with the virtual nature and accessibility of internet hospitals to clarify the hierarchical structure and interrelationships among evaluation indicators. First, the SPO dimensions are defined as the primary indicators, corresponding to the structural, process, and outcome dimensions of internet hospital services. Second, the seven dimensions of the optimized SERVQUAL model are adopted as secondary indicators and mapped to the corresponding SPO dimensions: Structure encompasses Tangibility and Assurance (external safeguards for internet hospital services). Process encompasses Responsiveness, Reliability, and Empathy (service delivery actions). Outcome encompasses Security and Cost-effectiveness (economic efficiency), reflecting the service’s ultimate outcomes. Finally, the framework establishes a three-tier indicator system: primary indicators (three SPO dimensions)—secondary indicators (seven SERVQUAL dimensions)—tertiary indicators, yielding a clear hierarchy with tight interdependencies. This model is illustrated in Figure 1.

Figure 1. The service quality model for internet hospitals.

Indicator collection

This study adopts the SPO framework and the SERVQUAL model as theoretical foundations. We integrated internet-hospital service features with policy requirements to construct an indicator pool through extensive literature searches. First, we systematically searched PubMed, Web of Science, CNKI, WanFang, and other databases for research published from 2014 to 2025, using the keywords “internet hospital,” “performance evaluation,” “SPO model,” and “SERVQUAL model.” Studies were screened according to predefined criteria, and 68 articles met the eligibility criteria. From these we extracted 52 initial indicators, comprising 17 structure indicators, 22 process indicators, and 13 outcome indicators. Second, we consulted policy documents, such as Opinion on Promoting the Development of “Internet plus Healthcare” and Administration Measures for Internet Diagnosis and Treatments (Trial), and translated their mandatory requirements into measurable indicators. For example, “diagnosis and treatment process traceability” is operationalized as “the entire diagnosis and treatment process is fully traceable” (tertiary indicator), and “protect patient privacy” is operationalized as “the online consultation system of internet hospitals guarantees patient privacy” (tertiary indicator). This step added eight policy-oriented indicators, giving a pool of 60. Third, we conducted two rounds of discussions with five experts in internet medicine. Indicators with low applicability (24 items) were removed, and overlapping or redundant indicators (eight items) were merged. The resulting preliminary evaluation indicator system comprises three primary, seven secondary, and 28 tertiary indicators.

Delphi method and its implementation process

The Delphi method is a widely used tool for achieving consensus across diverse fields. 23 Developed in the 1950s–1960s, the Delphi method provides a systematic approach for collecting anonymous expert opinions and facilitating iterative feedback. Its defining features are participant anonymity, structured information flow, regular feedback, and the role of the facilitator. 24 In this study, the experts evaluated each indicator across seven dimensions—importance, sensitivity, and accessibility at the structural, process, and outcome levels. Based on their feedback, we implemented modifications, deletions, or combinations of indicators as necessary.

Inclusion and exclusion criteria for experts

There are no established criteria for selecting Delphi experts. Participating experts should have authority and representativeness in the relevant field, and the number of experts should be determined through a comprehensive assessment of the study’s scope and complexity.25,26 Inclusion criteria: (1) conducts research related to internet medicine, hospital management, or health policy; (2) has at least 5 years of experience in internet-hospital-related work, holds an intermediate or higher professional title, possesses a doctoral degree, or holds a mid- to senior-level management position in a related field; (3) is familiar with the operating models of internet hospitals and the associated policy requirements; (4) voluntarily participates in this study and commits to completing two rounds of consultation. Exclusion criteria: (1) has <5 years of internet-hospital-related work experience and does not hold an intermediate or higher professional title, a doctoral degree, or a mid- to senior-level management position in a related field; (2) lacks a clear understanding of internet-hospital performance evaluation; (3) is unable to participate in the full consultation process due to work constraints.

This study employed a Delphi consensus design, conducted from March 2024 to June 2024, to reach consensus on evaluation indicators via structured expert input. Two rounds were conducted with the same panel of 21 experts to ensure continuity of opinions. Drawing on their theoretical and practical knowledge, the experts assessed the importance of each indicator and could propose additional indicators as warranted by real-world needs. The research team reviewed the feedback from the first round. Retention decisions were based on a mean importance score >4 and a coefficient of variation (CV) <0.3, as reported by Romm and Hulka. 23 A revised questionnaire incorporating the optimized indicators and detailed scoring rules was then circulated to the experts for a second evaluation. After two rounds, expert opinions and indicator revisions converged, and the coordination coefficient stabilized, allowing the process to conclude.
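The retention rule described above (mean importance score >4 and CV <0.3) can be illustrated with a minimal Python sketch. The indicator names and ratings below are hypothetical, and the use of the sample standard deviation for the CV is an assumption, since the text does not state which form was used.

```python
from statistics import mean, stdev


def screen_indicator(scores, min_mean=4.0, max_cv=0.3):
    """Return (mean, cv, retained) for one indicator's expert ratings.

    CV is computed as sample standard deviation / mean (an assumption;
    the study does not say whether sample or population SD was used).
    """
    m = mean(scores)
    cv = stdev(scores) / m
    return m, cv, (m > min_mean and cv < max_cv)


# Hypothetical 5-point Likert ratings from a 21-member panel.
ratings = {
    "example_indicator_a": [5, 5, 4, 5, 4, 5, 5, 4, 5, 5, 4,
                            5, 5, 4, 5, 5, 5, 4, 5, 5, 4],
    "example_indicator_b": [5, 2, 4, 1, 5, 3, 2, 5, 4, 1, 3,
                            5, 2, 4, 3, 1, 5, 2, 4, 3, 2],
}

for name, scores in ratings.items():
    m, cv, keep = screen_indicator(scores)
    print(f"{name}: mean={m:.2f}, CV={cv:.2f}, retained={keep}")
```

Applying both thresholds together means an indicator with a high mean but widely dispersed ratings is still flagged for review.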

Questionnaire structure and distribution

This study utilized the Delphi method to establish a systematic framework for assessing the service quality of internet hospitals. Prior to formal distribution, five experts specializing in internet healthcare participated in a pilot test, representing ~23.81% of the planned Delphi panel, and their feedback was used to revise ambiguous expressions and adjust the logic of the indicator descriptions in the questionnaire.

The questionnaire consisted of four main sections: (1) Expert profile, which gathered demographic and professional information, including age, gender, education, work unit, title, position, and years of experience. (2) Indicator scoring, where experts rated the importance of each indicator on a 5-point Likert scale. 27 Specifically, the scale ranged from “1” indicating very unimportant to “5” denoting very important, with “2” representing unimportant, “3” indicating moderate, and “4” implying important. (3) Open-ended questions, which solicited recommendations for developing a performance evaluation index system tailored for internet hospitals. (4) Self-assessment, where experts evaluated their familiarity with the indicators and detailed their judgment criteria. The questionnaire was distributed to experts via email. After completing the questionnaire, experts provided feedback and addressed additional relevant questions via email to the research team.

Statistical analysis

Data entry and database construction were performed using Microsoft Excel 2017 (Microsoft Corp., Redmond, WA, USA), while the data analysis was conducted using the Statistical Package for the Social Sciences software (version 26.0; IBM Corp., Armonk, NY, USA). The Delphi method was applied for indicator screening, and AHP was used for weight calculation, ensuring the scientific rigor and feasibility of the index system.

The reliability of the questionnaire was assessed using Cronbach’s coefficient alpha (Cronbach’s α), which ranges from 0 to 1. Typically, a Cronbach’s α value >0.7 is regarded as acceptable. 28 The Cronbach’s α for the index system was 0.962, and the values for the structure, process, and outcome dimensions were 0.903, 0.903, and 0.857, respectively. All values exceeded 0.7, indicating a high level of overall reliability of the questionnaire. A descriptive analysis of the experts’ basic profiles was conducted to illustrate their professional levels, breadth of knowledge, and work experience related to internet hospitals.

The positive coefficient of experts is the effective response rate to the questionnaire, which is crucial for assessing the reliability and scientific validity of the research outcomes. Within the Delphi method framework, an effective response rate of 50% is regarded as the minimum acceptable threshold, a rate of 60% as moderate, and a rate exceeding 70% as very high. 29 The authority coefficient (Cr) is typically derived from the judgment basis (Ca) and the experts’ familiarity coefficient (Cs). 30 Specifically, Ca reflects the extent to which an expert’s judgment is based on empirical evidence, while Cs indicates how familiar an expert is with a particular issue. A higher Cr value indicates greater expert authority, and reliability is generally considered acceptable when Cr exceeds 0.7. 30

Kendall’s coefficient of concordance W (Kendall’s W) was used to evaluate the degree of coordination of expert opinions. Kendall’s W quantifies the degree of agreement among the experts across all evaluation indicators, with values ranging from 0 to 1; 31 a value closer to 1 indicates a higher level of coordination and consensus. Statistical significance was set at p < 0.05.

After the two rounds of Delphi expert consultation, indicator weights were assigned using the AHP. A hierarchical model was constructed, pairwise judgment matrices reflecting the relative importance of the indicators identified during the expert consultations were built, and the consistency of each matrix was tested. If the consistency ratio (CR, distinct from the authority coefficient Cr) was below 0.10, 32 the judgment matrix was regarded as having acceptable consistency, and the corresponding weights were calculated.
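As an illustration of the AHP step, the sketch below derives weights from a pairwise judgment matrix and checks its consistency ratio against the 0.10 cutoff. It is a sketch under stated assumptions, not the authors' procedure: the row geometric-mean approximation is used in place of an exact eigenvector solution, the random index values are Saaty's commonly tabulated ones, and the 3 × 3 matrix is hypothetical.

```python
import math

# Saaty's commonly tabulated random index (RI) values by matrix order.
RANDOM_INDEX = {1: 0.0, 2: 0.0, 3: 0.58, 4: 0.90, 5: 1.12,
                6: 1.24, 7: 1.32, 8: 1.41, 9: 1.45}


def ahp_weights(matrix):
    """Return (weights, consistency_ratio) for a reciprocal pairwise matrix.

    Weights use the row geometric-mean approximation (an assumption; the
    study does not state which estimation method was applied).
    """
    n = len(matrix)
    geo_means = [math.prod(row) ** (1.0 / n) for row in matrix]
    total = sum(geo_means)
    weights = [g / total for g in geo_means]

    # Estimate the principal eigenvalue as the mean of (A w)_i / w_i.
    aw = [sum(matrix[i][j] * weights[j] for j in range(n)) for i in range(n)]
    lambda_max = sum(aw[i] / weights[i] for i in range(n)) / n

    ci = (lambda_max - n) / (n - 1) if n > 1 else 0.0
    ri = RANDOM_INDEX[n]
    cr = ci / ri if ri > 0 else 0.0
    return weights, cr


# Hypothetical judgments for three dimensions (structure, process, outcome).
judgments = [
    [1.0, 2.0, 1 / 2],
    [1 / 2, 1.0, 1 / 3],
    [2.0, 3.0, 1.0],
]

weights, cr = ahp_weights(judgments)
print([round(w, 3) for w in weights], round(cr, 4))
print("acceptable consistency:", cr < 0.10)
```

A matrix that fails the check would normally be returned to the expert for revision before its weights are used.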

Results

Description of expert profile

Two rounds of Delphi expert consultations were conducted, with the same 21 experts participating in both rounds to ensure the continuity and consistency of opinions. All participating experts were affiliated with hospitals, universities, or research institutes in Qingdao, Nanjing, and Lianyungang, China. The group consisted of 10 males and 11 females, with ages ranging from 35 to 40 years. All experts held at least a bachelor’s degree; 42.86% held a master’s degree and 23.81% a doctoral degree. Among them, 18 (85.71%) worked in hospitals, 19 (90.48%) held intermediate or higher titles, and 14 (66.67%) held administrative positions. Four participants (19.05%) had <5 years of work experience, and 17 (80.95%) had more than 5 years (Supplemental Material).

To enhance the comprehensiveness and authority of weight judgments for the AHP, a total of 32 experts were invited for weight calculation. This AHP expert panel included the original 21 Delphi experts supplemented by 11 additional professionals specializing in health management, medical informatics, and public health policy. The 32 AHP experts comprised 16 males and 16 females, with no significant age differences among the three groups. All experts held at least a bachelor’s degree, and 68.75% had a master’s degree or higher. Among them, 29 (90.63%) worked in hospitals, 30 (93.75%) held intermediate or higher titles, and 17 (53.13%) held administrative positions. The majority (59.38%) had more than 10 years of work experience. A detailed description of the expert panel is presented in Table 1.

Table 1. Background and description of experts.

AHP: analytic hierarchy process.

Expert positivity

In both Delphi rounds, expert positivity remained at 100% (21/21), indicating complete participation and high engagement of the expert panel throughout the process.

Expert authority

The expert authority indicates the reliability of the expert judgments obtained during the consultations. In the two rounds of consultation, the mean values were 0.94 for Ca, 0.83 for Cs, and 0.885 for Cr, all above the 0.70 threshold.
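The reported means are consistent with the commonly used arithmetic-mean form of the authority coefficient. Assuming that form (the text states only that Cr is derived from Ca and Cs), the calculation can be written as:

```latex
C_r = \frac{C_a + C_s}{2} = \frac{0.94 + 0.83}{2} = 0.885
```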

Coordination of expert opinions

In this study, Kendall’s W values for secondary and tertiary indicators were 0.148 and 0.165, respectively, in Delphi Round 1, and 0.286 and 0.285, respectively, in Delphi Round 2 (Table 2), with p < 0.05 in both rounds. These results reflect moderate consensus among experts, with agreement increasing from the first to the second round.
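For readers unfamiliar with the coordination coefficient, the following Python sketch computes Kendall's W from a matrix of expert ratings. It assumes the tie-corrected form of W (Likert ratings produce many tied ranks) and uses small hypothetical panels; the study's analysis was run in SPSS, and this is not a reproduction of it.

```python
from collections import Counter


def average_ranks(values):
    """Rank values in ascending order, giving tied values their mean rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1  # mean of the 1-based positions i+1 .. j+1
        for k in range(i, j + 1):
            ranks[order[k]] = avg
        i = j + 1
    return ranks


def kendalls_w(ratings):
    """ratings[e][k] is the score expert e gave indicator k.

    Returns (W, chi_square), where chi_square = m * (n - 1) * W is referred
    to a chi-square distribution with n - 1 degrees of freedom.
    Raises ZeroDivisionError if every expert rates all indicators identically.
    """
    m, n = len(ratings), len(ratings[0])
    rank_rows = [average_ranks(row) for row in ratings]
    rank_sums = [sum(r[k] for r in rank_rows) for k in range(n)]
    mean_sum = m * (n + 1) / 2
    s = sum((rs - mean_sum) ** 2 for rs in rank_sums)

    # Tie correction: sum of (t^3 - t) over each expert's groups of tied scores.
    tie_term = sum(t ** 3 - t
                   for row in ratings
                   for t in Counter(row).values())
    w = 12 * s / (m ** 2 * (n ** 3 - n) - m * tie_term)
    return w, m * (n - 1) * w


# Hypothetical panels: full agreement and full disagreement.
agree = [[1, 2, 3, 4, 5]] * 3
disagree = [[1, 2, 3], [3, 2, 1]]
print(kendalls_w(agree)[0], kendalls_w(disagree)[0])
```

The p value is obtained from the chi-square statistic with a statistics package (for example `scipy.stats.chi2.sf`), which is omitted here to keep the sketch dependency-free.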

Table 2. Kendall’s W and its test results.

Kendall’s W: Kendall’s coefficient of concordance W.

Indicator screening

In both Delphi rounds, 21 questionnaires were distributed to experts, who provided scores and comments for each indicator. The mean importance scores for primary, secondary, and tertiary indicators were all >4, with CV <0.3 (Tables 3 and 4). Following the consultation, some indicators were revised or added according to the project group’s recommendations, as listed below.

Table 3. Expert consultation results of Delphi Round 1.

CV: coefficient of variation.

Table 4. Expert consultation results of Delphi Round 2.

CV: coefficient of variation.

The tertiary indicator “C4 Internet hospitals enable real-time voice and video consultations, enhancing access to medical services.” was changed to “C4 Internet hospitals enable real-time consultations through images, audio, or video modalities, thereby improving access to healthcare.”

The tertiary indicator “C6 Internet hospitals support multi-card compatibility, including Electronic Health Cards, Social Security Cards, and Medicare Insurance Cards, eliminating the need for physical cards during medical treatment.” was changed to “C6 Internet hospitals support compatibility with multiple card types, including Medical Treatment Cards, Electronic Health Cards, Social Security Cards, and Medicare Insurance Cards, thereby eliminating the need for physical cards for medical consultations.”

The tertiary indicator “C10 Patients in internet hospitals experience shorter wait times for consultations than those at traditional medical institutions.” was changed to “C10 Physicians in internet hospitals promptly respond to medical consultations and resolve them within 24 h.”

The tertiary indicator “C14 Internet hospital platform offers positive, trustworthy customer service.” was changed to “C14 Physicians in internet hospitals demonstrate a positive and professional service attitude.”

The tertiary indicator “C17 The treatment outcomes achieved by physicians in internet hospitals are favorable.” was added.

The tertiary indicator “C21 Physicians in internet hospitals develop precise, tailored diagnosis and treatment plans for patients.” was changed to “C22 Physicians in internet hospitals develop precise and personalized diagnosis and treatment plans for patients, including medication guidance, health-management recommendations, drug dispensing, and online nursing services.”

Two tertiary indicators were added: “C26 Internet hospitals safeguard patient data.” and “C27 Internet hospitals ensure the security of medications prescribed to patients.”

The tertiary indicator “C28 The reimbursement process for medical expenses through insurance is straightforward in internet hospitals.” was changed to “C31 Medical expenses are reimbursed by health insurance, and the reimbursement process is streamlined in internet hospitals.”

After iterative refinement in Delphi Round 1 and subsequent validation through expert consensus in Delphi Round 2, the service quality evaluation index system for internet hospitals was established. This system comprises three primary, seven secondary, and 31 tertiary indicators. A detailed description of expert consultation results is presented in Table 4.

Weights for primary, secondary, and tertiary indicators

Based on the combined weights, the outcome dimension has the largest weight (0.493) among the primary indicators. Among the secondary indicators, security has the largest weight (0.411), followed by assurance (0.207) and reliability (0.109). Among the tertiary indicators, data security in the diagnosis and treatment process has the largest weight (0.164), followed by standardized diagnosis and treatment through process traceability (0.102), privacy protection (0.082), security of the payment process (0.082), and medication security (0.082). Details are provided in Table 5.
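To make the relationship between the levels concrete, the sketch below assumes the standard AHP convention that an indicator's combined weight is the product of the local weights along its path in the hierarchy. The text does not state the exact calculation, so the local weights here are back-calculated from the reported combined weights rather than taken from the study.

```python
def combined_weight(*local_weights):
    """Multiply local weights along one path of the hierarchy."""
    product = 1.0
    for w in local_weights:
        product *= w
    return product


# Combined weights reported in the text.
OUTCOME = 0.493        # primary indicator (outcome dimension)
SECURITY = 0.411       # secondary indicator under outcome
DATA_SECURITY = 0.164  # tertiary indicator under security

# Implied local weights (back-calculated, not reported by the study).
local_security = SECURITY / OUTCOME
local_data_security = DATA_SECURITY / SECURITY
print(round(local_security, 3), round(local_data_security, 3))

# Rebuilding the tertiary combined weight from the path.
rebuilt = combined_weight(OUTCOME, local_security, local_data_security)
print(round(rebuilt, 3))

# Under this convention, children's combined weights sum to the parent's:
# tangibility (0.104) and assurance (0.207) under structure (0.311).
structure_children = {"tangibility": 0.104, "assurance": 0.207}
print(round(sum(structure_children.values()), 3))
```

Under this reading, security accounts for roughly five-sixths of the outcome dimension's weight, which is why it dominates the secondary ranking.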

Table 5. Weights of the service quality evaluation index system for internet hospitals.

Discussion

Using the integrated SPO framework and the SERVQUAL model of service quality gaps, this study develops a service quality evaluation system for internet hospitals based on two rounds of Delphi expert consultations. The system comprises three primary, seven secondary, and 31 tertiary indicators. Drawing on empirical data and the contemporary development of internet-based healthcare, this study analyzes key aspects in depth and offers guidance for theoretical refinement and practical implementation in this field.

Structural characteristics of the indicators

This indicator system is characterized by a distinctive “outcome-oriented, security-first” feature. The outcome dimension (A3) has the highest combined weight at 0.493, with security (B6) at 0.411, ranking first among all secondary indicators. At the tertiary-indicator level, security-related indicators—such as “Patient data security” (C26, combined weight: 0.164), “Privacy protection” (C23, combined weight: 0.082), and “Medication security” (C27, combined weight: 0.082)—collectively exceed 0.30, consistent with the virtual nature of internet hospitals.33–35 Compared with traditional physical hospitals, internet hospitals’ diagnoses and treatments often occur outside offline settings, increasing risks such as privacy breaches, data tampering, and medication errors that can erode trust.36–38 Therefore, security has become the core evaluation dimension in expert consensus. 38 This echoes the principle of ensuring medical quality and security in the Internet Diagnosis and Treatment Management Measures (Trial). 20

In the structural dimension (A1, combined weight 0.311), assurance (B2, combined weight 0.207) outweighs tangibility (B1, combined weight 0.104). Indicators such as “traceability of the entire diagnosis and treatment process” (C8, combined weight 0.102) and “multi-card compatibility” (C6, combined weight 0.065) are prominent. This suggests that internet hospitals’ credibility derives not only from the platform interface (C1, combined weight 0.044) and other visible facilities, but also from the underlying capacity to mobilize resources, such as leveraging physical hospitals to enhance credibility (C7, combined weight 0.041), and from standardized diagnosis and treatment through process traceability (C8). This is consistent with China’s internet hospital development model, which relies on physical hospitals.39,40

The process dimension (A2) has a relatively low weight of 0.196; among its components, empathy (B5, combined weight 0.018) is the smallest. This reflects a current trend in internet hospital evaluation toward functional priority: early development emphasizes service accessibility (e.g. real-time consultations, C4; referral convenience, C12) and reliability (e.g. compliant diagnosis and treatment, C15; prescription review, C18). Humanistic care in doctor–patient interactions (C19, C20) has not yet been identified as a core requirement. This pattern aligns with user demand for efficient and convenient internet medical services and leaves room for future improvements.37,40

Weight interpretation and policy implications

Weights are derived from a hierarchical single-ranking framework and reflect the relative importance of primary, secondary, and tertiary indicators within the overall evaluation. Indicators with higher weights are closely linked to core governance capabilities, including security, privacy protection, and the traceability of the diagnosis and treatment process. Consequently, internet hospital management should prioritize these domains in policy and resource allocation. Weights are based on expert judgment and entail subjectivity. Accordingly, policy implementation should be tailored to the local context, and robustness should be assessed in future work using sensitivity analyses.

Scientific rigor and representativeness of the Delphi consultation process

Two rounds of Delphi consultation supported the reliability of the indicator system. The same 21 experts from hospitals, universities, and research institutions in Qingdao, Nanjing, and Lianyungang took part in both rounds, and the AHP weighting panel was expanded to 32 experts. Among the 32 experts, 90.63% held intermediate or higher professional titles, 68.75% held a master’s degree or higher, and 59.38% had more than 10 years of experience spanning medical practice, academic research, hospital management, and related fields, forming a consulting team with clinical, research, and management expertise. This diverse composition helps ensure that the indicators reflect frontline clinical needs (e.g. physician response time, C10) while aligning with industry supervision and academic norms (e.g. diagnosis and treatment process traceability, C8), thus avoiding the limitations of a single perspective. Regarding the consultation outcomes, the expert positivity coefficient remained at 100%, and Cr (0.885) exceeded the 0.7 threshold, indicating high expert authority and enhancing the credibility of the consultation results. Kendall’s W rose to 0.285–0.286 in the second round, indicating moderate consensus among the experts and supporting the reliability of the Delphi process. Notably, two criteria were used jointly to screen indicators: a mean importance score >4 and a CV <0.3. This approach retains indicators of core value while limiting the dispersion of expert evaluations. Compared with studies that rely solely on importance scores, it further enhances the rigor of the indicator system.41,42

Integration and adaptation of theoretical models

Existing studies evaluating internet-based medical services primarily adopt either the SPO model, which focuses on the full-chain structure–process–outcome closed loop, or the SERVQUAL model, which focuses on service quality dimensions such as tangibility, reliability, and responsiveness. However, the SPO model is difficult to adapt to virtual service scenarios in internet hospitals, 43 while SERVQUAL neglects core medical objectives such as security and effectiveness.44,45 This study combines the two models by using the three SPO dimensions as primary indicators and mapping the seven dimensions of the optimized SERVQUAL model onto them as secondary indicators (the structure dimension includes “tangibility” and “assurance”; the process dimension includes “responsiveness,” “reliability,” and “empathy”; the outcome dimension includes “security” and “cost-effectiveness”). This combination covers internet hospitals’ hardware base (structure), service provision (process), and actual results (outcome), while capturing patients’ perceived service quality, such as response speed and humanistic care, thereby filling the adaptation gap of a single model in virtual medical settings.

Practice-oriented optimization of indicator content

The indicator system’s iterative development reflects both problem-oriented and user-oriented considerations. For example, to address accessibility gaps in online diagnosis and treatment, the original indicator “real-time voice and video consultations” was expanded to “real-time consultations through images, audio, or video” (C4) to accommodate patients’ varying technical abilities. In line with policies promoting medical-insurance electronic credentials, the scope of “multi-card compatibility” (C6) was broadened to include Medical Treatment Cards alongside Electronic Health Cards, Social Security Cards, and Medicare Insurance Cards, reducing reliance on physical cards. To improve physician–patient communication efficiency, C10 now specifies that physicians respond to consultations and resolve them within 24 h, avoiding the ambiguity of terms such as “shorter wait times.” Finally, new indicators on “patient data security” (C26) and “medication security” (C27) were added to address concerns about privacy breaches and medication errors, aligning the indicator system with industry needs.

Providing a quantitative yardstick for the operation and management of internet hospitals

China currently has 3340 internet hospitals, but most institutions focus on construction rather than operation and lack a unified standard for evaluating service quality. 4 The indicator system developed in this study can serve as a diagnostic tool for hospital self-optimization. Because security within the outcome dimension carries the highest weight, hospitals should prioritize measures such as data encryption and prescription review (e.g. C18 “pharmacist prescription review”). Given the relatively low weight of empathy within the process dimension, hospitals should gradually enhance humanistic services, including patient follow-up (C20) and health-knowledge dissemination (C19), to achieve coordinated improvements in security, efficiency, and patient experience. Additionally, indicators such as “simplified medical insurance reimbursement” (C31) and “convenient referral” (C12) can guide hospitals to implement “Internet plus Healthcare” policies more effectively, improving patients’ access.3,18–20

Providing a basis for industry regulation and policy implementation

In recent years, authorities have issued policies such as the Administration Measures for Internet Hospitals (Trial)20 and the Regulations on the Management of Telemedicine Services (Trial),20 which call for stronger quality supervision of internet hospitals but lack concrete evaluation indicators. The indicator system aligns well with these policy requirements: for example, “traceability of the entire diagnosis and treatment process” (C8) corresponds to the policy requirement for medical records traceability; “relying on physical hospitals to enhance credibility” (C7) reflects the regulation that internet hospitals must be affiliated with physical hospitals; and “anonymous feedback channels” (C5) supports the protection of patients’ rights.40,46 This index system therefore gives regulators directional guidance for developing quality-regulation frameworks, identifying potential industry pain points, and formulating corresponding strategic actions.

Limitations of the study

This study has several limitations. First, although explicit criteria and rigorous procedures were employed during the expert consultation, the Delphi method inherently introduces subjectivity that may bias the results. In subsequent assessments, internet hospitals can evaluate the indicators according to their own circumstances to determine the applicability of the indicator system more scientifically. Second, due to time and resource constraints, only 21 experts were included in both rounds of Delphi consultation. At present, there is no established standard for the number of experts to be included. Although the expert group is relatively small, its size is in line with many prior studies, and larger groups may require additional rounds to reach consensus. In future studies, we will broaden the scope and scale of the Delphi expert consultation to enhance representativeness. Third, the questionnaire used in this study has not undergone formal validation in the current population. A pilot test with five participants, representing ~23.81% of the planned sample, was conducted to assess feasibility. The lack of formal validation may affect reliability and construct validity, and future work should include formal validation of the instrument in the target population. Fourth, because of their tight schedules, the experts could not take part in further research or apply the AHP to differentiate indicator weights at the initial stage, so the current weighting may lack rigor. In future surveys, we will adjust the indicator weights using objective weighting methods (e.g. principal component analysis or entropy methods) based on survey feedback. Fifth, the experts came from only three Chinese cities (Qingdao, Nanjing, and Lianyungang), which may limit national representativeness. Broader geographic sampling is therefore needed for future nationwide adaptation.

Finally, this indicator system was developed based on Qingdao’s practical experience, and its applicability to other regions in China and to other countries warrants careful evaluation. Core indicators such as patient data security (C25), medication security (C26), and privacy protection (C23) are universal and address global medical security needs without geographical limitations. However, indicators such as multi-card compatibility (C6) and simplified medical insurance reimbursement (C31) are context-specific and tightly integrated with China’s medical insurance system. These indicators require localization and adaptation when applied in other regions or countries.
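
To illustrate the kind of objective weighting mentioned among the limitations above, the following is a minimal Python sketch of the entropy weight method. It is not the procedure used in this study: the function name `entropy_weights` and the example ratings are hypothetical, and the sketch assumes non-negative ratings (e.g. Likert scores from 1 to 5) arranged with one row per respondent and one column per indicator.

```python
import math


def entropy_weights(scores):
    """Return one weight per indicator (column) via the entropy weight method.

    scores: list of rows, one row per respondent and one column per
    indicator. All values must be non-negative (hypothetical survey ratings).
    """
    n = len(scores)
    if n < 2:
        raise ValueError("need at least two rows to compute entropy")
    m = len(scores[0])
    k = 1.0 / math.log(n)  # rescales Shannon entropy into [0, 1]

    divergences = []
    for j in range(m):
        column = [row[j] for row in scores]
        total = sum(column)
        if total <= 0:
            raise ValueError("each indicator needs a positive column total")
        # Share of each respondent in the column total.
        shares = [value / total for value in column]
        # Shannon entropy of the shares; terms with p == 0 contribute 0.
        entropy = -k * sum(p * math.log(p) for p in shares if p > 0)
        # Degree of divergence, clamped to guard against rounding error.
        divergences.append(max(0.0, 1.0 - entropy))

    total_divergence = sum(divergences)
    if total_divergence < 1e-12:
        # No indicator discriminates between respondents: fall back to
        # equal weights.
        return [1.0 / m] * m
    return [d / total_divergence for d in divergences]


# Hypothetical ratings: 4 respondents x 3 indicators (illustrative only).
example_ratings = [
    [5, 2, 4],
    [5, 4, 4],
    [4, 1, 5],
    [5, 5, 4],
]
print(entropy_weights(example_ratings))
```

In this scheme, indicators whose ratings vary more across respondents receive larger weights, while an indicator rated identically by every respondent receives a weight near zero. Such data-driven weights would complement, rather than replace, expert-derived weights.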

Conclusions

The evaluation index system for internet hospital service quality, developed using the Delphi method, aligns with current healthcare service practices and relevant national policies and demonstrates validity, reliability, and feasibility. The system provides tools to support the evaluation of internet hospital service quality and offers important guidance for service optimization. Going forward, we will establish a dynamic updating mechanism that responds to advances in information technology and policy changes, enabling timely optimization of the indicator content and weights. In addition, multi-scenario pilot applications and testing will be conducted to continuously improve the framework and promote the high-quality development of internet hospitals.

Supplemental Material

Supplemental material, sj-docx-1-smo-10.1177_20503121261429633 for The development of a performance evaluation index system for internet hospitals in Qingdao, China: A Delphi consensus study by Nan Cui, Jian Sun, Xiufen Sui, Ping Ma, Jianping Sun, Zulqarnain Baloch and Jing Cui in SAGE Open Medicine

Footnotes

Acknowledgements

This study was supported by the Youth Fund of the Shandong Provincial Natural Science Foundation (ZR2021QG066) and the Qingdao Outstanding Health Professional Development Fund.

Author contributions

N.C. and J.S. conceived the research idea, designed the study, and supervised its execution. N.C. and J.C. performed data collection and management. J.C., J.S., and X.S. drafted the article. J.C. and P.M. organized and analyzed the data. P.M., J.S., and N.C. critically revised the article. All authors read and approved the final article. All authors had full access to all study data and were responsible for the decision to submit the article for publication.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data availability statement

The data supporting the findings of this study are not publicly available to protect the privacy of the expert panel; however, de-identified data can be obtained from the corresponding author upon reasonable request.

Supplemental material

Supplemental material for this article is available online.

References
