Abstract

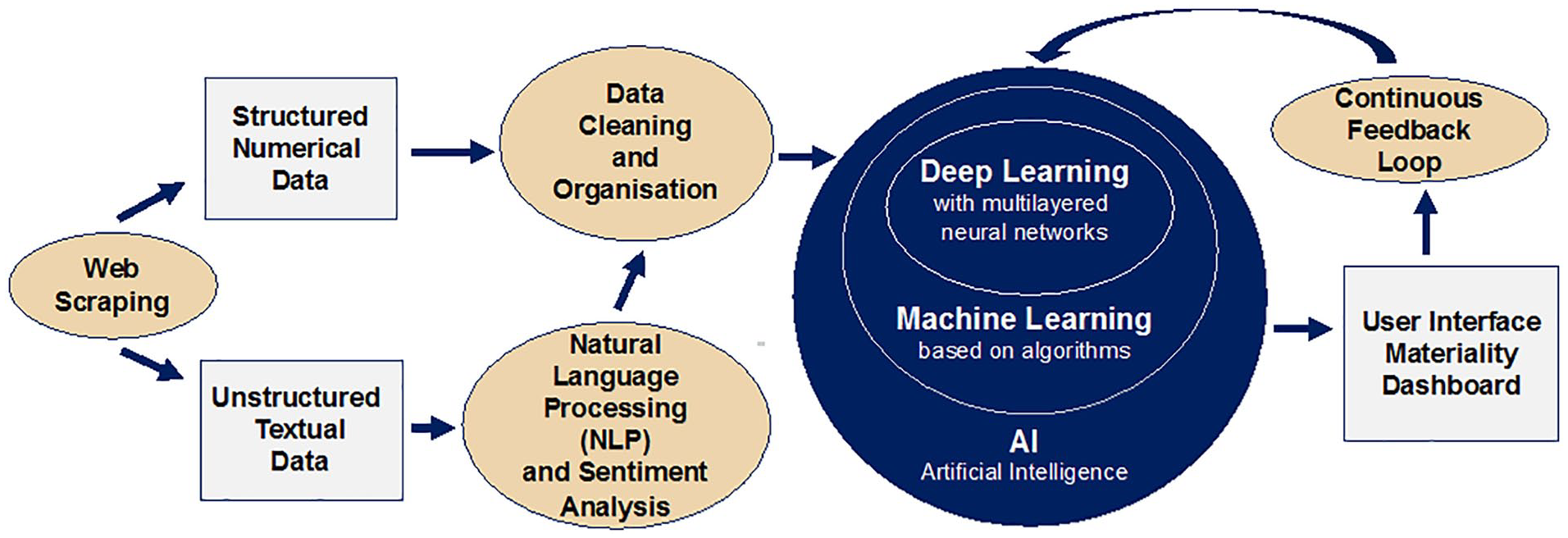

The case focusses on Rho AI, a data science firm, and its attempt to leverage artificial intelligence to encourage environmental, social and governance investments to limit the impact of climate change. Rho AI’s proposed open-source artificial intelligence tool integrates automated web scraping technology and machine learning with natural language processing. The aim of the tool is to enable investors to evaluate the climate impact of companies and to use this evaluation as a basis for making investments in companies. The case study allows students to gain an insight into some of the strategic choices that need to be considered when developing an artificial intelligence–based tool. Students will be able to explore the role of ethics in decision-making related to artificial intelligence, while familiarising themselves with key technical terminology and possible business models. The case encourages students to see beyond the technical granularities and to consider the multi-faceted, wider corporate and societal issues and priorities. This case helps students recognise that business is not conducted in a vacuum and enhances their understanding of the role of business in society at a time of new developments triggered by digital technology.

Keywords

Introduction

It was a Monday morning in early January 2020, and most of the 25 or so US-based staff of the data science and artificial intelligence (AI) company Rho AI were online for the company’s daily stand-up. Issues that needed deeper thought were flagged for smaller discussions later. Gilman Callsen, the company’s Chief Technology Officer, raised the topic of a brand-new project to develop an open-access, open-source environmental, social and governance (ESG) investment tool. ‘In many ways this project puts us into new territory, so we need to discuss the ethics involved in this project and the potential business case, data quality and impact’, he said. Callsen and David McColl, Rho AI’s Head of Commercialisation, agreed to speak later that day to consider the issue.

Background on Rho AI

With the slogan ‘Let’s uncover the value of your data’, Rho AI had evolved from Pit Rho, a company that Callsen and four others had founded in 2012, when they developed an AI product that provided statistical insights into races held by the National Association for Stock Car Auto Racing (NASCAR) (Fischer and Beswick, 2019, Online interview with Gilman Callsen, 4 December; Weaver, 2018). Having made progress with Pit Rho, the founders then set out to build a bigger company that used data science and machine learning to develop products and services that would enable companies to obtain, organise and utilise data to their benefit. ‘We definitely do try to steer the ship towards impact projects whenever possible – it’s deeply rooted in our culture – we are always looking for meaningful projects that have something tangible as an end product. We want to be doing data science that impacts the world. We don’t want to be doing something that analyses tweets, or anything like that’, said Callsen (Fischer and Beswick, 2019, Online interview with Gilman Callsen, 4 December).

Using the Gartner Hype Cycle for Artificial Intelligence, 2019, McColl described the nature of the industry in which Rho AI operated. ‘In the beginning there were a few behemoths that were producing black box AI models that were supposed to be generalized enough to work in any application’, he said.

Things like IBM Watson were supposed to replace everything humans did (Peak of Inflated Expectations). But that approach didn’t prove to be as fruitful as anticipated because AI hadn’t reached a state where it was truly general (Trough of Disillusionment). Then people started to understand the need to develop models in tandem with industry knowledge and make the process more transparent, so many companies started forming around specific verticals and areas of expertise (Slope of Enlightenment). And now there are many companies carving out niches, either making AI platforms for one specific process/category or deeply integrating with the expertise of the partners they work with (Plateau of Productivity). To thrive in this space you need to have a good understanding of both the mathematics of the models and how they apply to the specific use cases. (Fischer and Beswick, 2019, Online interview with David McColl, 21 November; Gartner, 2019)

A desire to foster ESG investment

The ESG investment tool that Rho AI was developing was designed to make it easier for small-scale investors in particular to understand companies’ climate impact and to factor it into their decisions about where to invest. At the start of 2020, appetite for ESG investment was increasing globally. Sustainable investing assets in the major developed economies amounted to approximately US$30.7 trillion in 2018, a 34% increase on 2016 figures and a 68% increase on 2014 figures (Global Sustainable Development Alliance, 2019; McKinsey, 2019). In the United States, ESG assets grew from US$8.7 trillion at the start of 2016 to US$12.0 trillion at the start of 2018, an increase of 38%. ESG assets constituted 26% of all investment assets under professional management in that country (Global Sustainable Development Alliance, 2019).

By the beginning of 2020, climate change had, over the preceding 20 years, become an increasingly important component of ESG investment (Common Fund Institute, 2013), and the need for such investment was coming into ever sharper focus as the effects of global warming became more apparent. With the signing of the United Nations (UN) Paris Agreement in 2016, the signatory countries had undertaken to put in place measures to keep the global rise in temperature well below 2°C above pre-industrial levels and to try to limit the increase to 1.5°C by the end of the century (UN, 2016). The Intergovernmental Panel on Climate Change (IPCC) estimated that achieving this goal would require an annual average investment in the energy system of around US$2.4 trillion between 2016 and 2035, representing about 2.5% of world GDP (IPCC, 2018).

Air pollution, including carbon dioxide and methane released, for example, by burning fossil fuels, contributes to and exacerbates climate change. In addition, once the coronavirus pandemic developed in spring 2020, initial studies of the link between COVID-19 mortality rates and air pollution found a correlation, though not necessarily causation: compared on a regional basis, areas with higher air pollution and higher population density had relatively higher mortality rates (Gross and Hook, 2020).

While the investment performance of ESG-centred portfolios through the COVID-19 crisis was still inconclusive at the time of writing, there was growing evidence that companies that focussed on ESG issues within their organisations performed better over the long term (DB Climate Change Advisors, 2012; McKinsey, 2019). Investing in such companies could therefore also deliver strong financial returns for investors. Despite this, the adoption of ESG metrics in investment decision-making had not reached the levels that the IPCC had indicated were necessary to keep global warming within the desired limits. The main reasons for this related to information. According to the Department for International Development in the United Kingdom, two in three people in the United Kingdom agreed with the statement, ‘I have a responsibility to make the world better, and I want my investment choices to make a difference’. However, 54% of people cited a lack of information as a barrier to sustainable investment (HM Government, 2019).

Research by the Stanford Global Projects Center identified three barriers to integration of ESG data into investment decision-making. These were the following:

Unlike traditional financial data that were structured and quantitative, ESG data tended to be ‘unstructured, qualitative, scattered, and incomplete’ (In et al., 2019, p.5);

Evaluation of ESG data required more robust tools to ensure that they were used appropriately;

Investors were not using new tools of data collection and analytics effectively (In et al., 2019).

Brad Foster and David Tabit, global head of enterprise data content and global head of equity data, respectively, at Bloomberg, remarked that a lack of access to consistent and reliable ESG data was forcing investors to ‘expend an excessive amount of already limited resources trying to standardize and interpret unstructured data, slowing down experienced investors and inhibiting new entrants from joining the field’ (Foster and Tabit, 2019).

It was this lack of information that Rho AI intended to address. The company planned to use automated web scraping technology, natural language processing (NLP) and machine learning to collect and to analyse climate-related data on US-listed companies and to build an open-access software platform that provided transparency on how companies were contributing to, or were tackling, environmental problems. Access to this information would be provided on an intuitive, user-friendly interface. Initially, the project would focus on the areas of pollution monitoring, air quality and efficient use of energy, including companies’ transportation systems and supply chains. The areas covered could expand to include other ESG factors in the future (McColl, 2019, Email correspondence with Isabel Fischer, 17 September).

Implementation of the project

Rho AI’s conventional development methodology was to put in place a road map for each project, with dedicated developers assigned to it who were responsible for hitting their milestones. The development process began with a full technical assessment: ‘everything from how it is going to be used, what our end data will look like, to what format it needs to be in so that our users can use it’, explained McColl.

Then we work backwards: what original data do we need to find, where is it located, and how do we bring it in? Then we start to discuss all the pipelines that handle that data: which databases they are stored in, how to pull data in and out of that database, what types of models we want to use to run this data through, what machine learning techniques we want to use. (Fischer and Beswick, 2019, Online interview with David McColl, 21 November)

When it came to information sources, this project was unusual for Rho AI. Ordinarily, the company worked with a client’s own dataset. In this instance, there was no client, and Rho AI had to obtain information from a variety of public sources. The information would come from structured and unstructured sources, including company reports and social media pages, as well as free online information pages containing ESG metrics, such as the World Benchmarking Alliance and WikiRate (McColl, 2019, Email correspondence with Isabel Fischer, 17 September). ‘That is why we decided to go for public equities first – because that data is readily available’, explained McColl (Fischer and Beswick, 2019, Online interview with David McColl, 21 November).

As was its custom, Rho AI had assembled an advisory group for the project that included domain experts (Fischer and Beswick, 2019, Online interview with Gilman Callsen, 4 December). Among other responsibilities, this team’s role was to establish what constituted current, relevant and ethical data collection and reporting for this platform. ‘Part of the process is judging the quality of the sources. Normally we partner with domain experts who understand what is quality data in this vertical’, explained McColl. ‘At Rho AI, we concern ourselves with whether the data is clean and consistent. Then we turn to the domain expertise to assess whether it is valuable – is it the right data to be used?’ (Fischer and Beswick, 2019, Online interview with David McColl, 21 November).

Using a variety of software tools, including some it had developed in-house, Rho AI would build processes that automatically and regularly downloaded the required data and stored it as unstructured information. The goal of this step was simply to identify text that might be relevant to evaluating a company’s climate impact. After this, NLP models based on methods that included deep learning recurrent neural networks would be used to identify paragraphs, passages, sentences, phrases and/or words associated with climate impact (McColl, 2019, Email correspondence with Isabel Fischer, 17 September).
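The two-stage process described above, collecting raw text and then narrowing it down to climate-related passages, can be illustrated with a minimal Python sketch. It assumes the pages have already been downloaded as HTML, uses the standard library's `html.parser` to pull out paragraph text, and replaces Rho AI's recurrent neural network models with a plain keyword filter. The `CLIMATE_TERMS` list and the function names are hypothetical and are not taken from Rho AI's tool.

```python
import re
from html.parser import HTMLParser

# Hypothetical keyword list: a deliberately simple stand-in for the trained
# NLP models (e.g. recurrent neural networks) described in the case.
CLIMATE_TERMS = {
    "carbon", "climate", "emission", "emissions", "energy",
    "fuel", "methane", "pollution", "renewable",
}


class ParagraphExtractor(HTMLParser):
    """Collects the visible text inside <p> elements of a downloaded page."""

    def __init__(self):
        super().__init__()
        self.paragraphs = []
        self._in_paragraph = False
        self._buffer = []

    def handle_starttag(self, tag, attrs):
        if tag == "p":
            self._in_paragraph = True
            self._buffer = []

    def handle_endtag(self, tag):
        if tag == "p" and self._in_paragraph:
            # Collapse runs of whitespace left over from the HTML layout.
            text = " ".join("".join(self._buffer).split())
            if text:
                self.paragraphs.append(text)
            self._in_paragraph = False

    def handle_data(self, data):
        # Text inside nested tags such as <b> is kept as part of the paragraph.
        if self._in_paragraph:
            self._buffer.append(data)


def relevant_paragraphs(html, terms=CLIMATE_TERMS, min_hits=1):
    """Return the paragraphs that mention at least `min_hits` climate terms."""
    parser = ParagraphExtractor()
    parser.feed(html)
    parser.close()
    selected = []
    for paragraph in parser.paragraphs:
        words = re.findall(r"[a-z]+", paragraph.lower())
        if sum(word in terms for word in words) >= min_hits:
            selected.append(paragraph)
    return selected


if __name__ == "__main__":
    page = (
        "<html><body>"
        "<p>Our delivery fleet switched to <b>renewable</b> energy in 2019.</p>"
        "<p>We opened a new office in Denver.</p>"
        "</body></html>"
    )
    print(relevant_paragraphs(page))
    # ['Our delivery fleet switched to renewable energy in 2019.']
```

A keyword filter cannot tell ‘we cut emissions’ apart from ‘critics say emissions rose’, which is where trained models such as recurrent neural networks would be expected to do better.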

Having done this, Rho AI then planned to use a set of machine learning models to convert the text flagged by the NLP models into structured information (numerical values or rankings). In this way, the tool would produce a numeric estimate of each company’s climate impact, based on the language used in its texts, and impute values where no public comment was made or identified (McColl, 2019, Email correspondence with Isabel Fischer, 17 September).
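One way to picture the conversion from text to structured values, including imputation for companies with no identified public comment, is the following Python sketch. It assumes each flagged passage has already received a score between 0 and 1, averages those scores per company, and fills gaps with the median score of the company's sector peers, falling back to the overall median. The median-imputation rule, the data layout and the function name `company_scores` are illustrative assumptions; the case does not say how Rho AI planned to impute missing values.

```python
from statistics import mean, median


def company_scores(passage_scores, sectors):
    """Turn passage-level scores into one numeric score per company.

    passage_scores: dict mapping company -> list of passage scores in [0, 1];
        an empty or missing list means no public comment was found.
    sectors: dict mapping every company in the universe -> its sector.
    Returns a dict mapping company -> (score, was_imputed).
    """
    # Companies with at least one scored passage: average their passages.
    observed = {
        company: mean(scores)
        for company, scores in passage_scores.items()
        if scores
    }
    # Group observed scores by sector so that gaps can be filled from peers.
    by_sector = {}
    for company, score in observed.items():
        by_sector.setdefault(sectors[company], []).append(score)
    overall = median(observed.values()) if observed else None

    result = {}
    for company, sector in sectors.items():
        if company in observed:
            result[company] = (observed[company], False)
        else:
            # Assumed imputation rule: sector median, else overall median.
            peers = by_sector.get(sector)
            result[company] = (median(peers) if peers else overall, True)
    return result


if __name__ == "__main__":
    scores = {"A": [0.75, 0.25], "B": [0.25], "C": []}
    sector_of = {"A": "energy", "B": "energy", "C": "energy", "D": "retail"}
    for company, (score, imputed) in company_scores(scores, sector_of).items():
        print(company, score, "imputed" if imputed else "observed")
```

Returning an imputed flag alongside each score keeps estimated values distinguishable from observed ones, which fits the transparency goals the case describes for the platform.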

McColl explained the process further:

A lot of the data that we are pulling in is unstructured. So we have to run natural language processing to understand what it is actually trying to say. A lot of it is documents that are more qualitative than quantitative. So we have to be able to take sentences and run a sentiment analysis to gauge how what they are saying will translate into a sustainability score on a certain metric. (Fischer and Beswick, 2019, Online interview with David McColl, 21 November)
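McColl's description of sentiment analysis feeding a sustainability score can be sketched with a simple lexicon-based approach in Python. The word lists, the polarity formula and the 0 to 100 rescaling below are hypothetical choices made for illustration; the case does not specify which sentiment method or score scale Rho AI used.

```python
import re

# Hypothetical, hand-made word lists. A production system would learn such
# associations from labelled examples instead of hard-coding them.
POSITIVE = {"cut", "decreased", "efficient", "offset", "reduced", "renewable"}
NEGATIVE = {"coal", "exceeded", "increased", "penalty", "spill", "violation"}


def sentence_polarity(sentence):
    """Polarity in [-1, 1]: (pos - neg) / (pos + neg), or 0.0 if no hits."""
    words = re.findall(r"[a-z]+", sentence.lower())
    pos = sum(word in POSITIVE for word in words)
    neg = sum(word in NEGATIVE for word in words)
    if pos + neg == 0:
        return 0.0
    return (pos - neg) / (pos + neg)


def metric_score(sentences):
    """Average sentence polarity rescaled from [-1, 1] to a 0-100 score."""
    if not sentences:
        return None
    average = sum(sentence_polarity(s) for s in sentences) / len(sentences)
    return round(50 * (average + 1), 1)


if __name__ == "__main__":
    report = [
        "We reduced emissions by 10%.",
        "Methane leaks increased at two sites.",
    ]
    print(metric_score(report))  # 50.0
```

Sentences with no lexicon hits count as neutral and pull the score towards 50; a real system would need to decide whether such sentences should be dropped instead.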

Using historical data that linked companies’ language with their actual climate impact, Rho AI could build a model of the relationship between the two. It could then use this model to predict how companies’ current language on climate change would translate into action, now and in the future. ‘You have to have something to train it on’, said McColl. ‘Then the tool can look at the new information that is coming in and make its best predictions’ (Fischer and Beswick, 2019, Online interview with David McColl, 21 November).
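The idea of training on historical data and then predicting from new language can be shown with the simplest possible model: an ordinary least squares line relating a past language score to the subsequent change in reported emissions. The training pairs in the example are invented for illustration, and a single-variable linear fit is a stand-in chosen for clarity; the case does not describe Rho AI's actual models in this detail, and they would likely be far richer.

```python
def fit_line(xs, ys):
    """Ordinary least squares fit of y = a + b * x; returns (a, b)."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two equal-length series with at least 2 points")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    if sxx == 0:
        raise ValueError("language scores must vary to fit a slope")
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return intercept, slope


def predict(model, x):
    """Apply a fitted (intercept, slope) pair to a new language score."""
    intercept, slope = model
    return intercept + slope * x


if __name__ == "__main__":
    # Made-up training pairs: past language score (0-100) and the change in
    # reported emissions (%) over the following period.
    past_scores = [20, 40, 60, 80]
    emission_change = [4, 1, -2, -5]
    model = fit_line(past_scores, emission_change)
    print(predict(model, 50))  # -0.5
```

In this toy data, more positive climate language goes with falling emissions, so a new company scoring 50 is predicted to cut emissions slightly; real training data would rarely fit a straight line this neatly.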

McColl continued,

We build into our models our confidence in our predictions – so ‘this language translates to this metric and we’re about 50% confident in it’. We don’t want to be binary with these things. Some things you can be more confident in – so things like SEC data you can have much more confidence in than a LinkedIn post – but we try to develop methodologies for saying how confident we are in a prediction, based on historical data. This confidence is integrated into the score that the company gets in the end. (Fischer and Beswick, 2019, Online interview with David McColl, 21 November)
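McColl's point that predictions carry confidence levels, and that some sources (such as SEC data) merit more trust than others (such as a LinkedIn post), can be expressed as a weighted average. In this Python sketch, each prediction's weight is its model confidence multiplied by a per-source reliability weight. The `SOURCE_WEIGHT` values, the default weight and the multiplicative scheme are assumptions for illustration, not Rho AI's published methodology.

```python
# Hypothetical reliability weights per source type, reflecting the idea that
# regulatory filings deserve more trust than social media posts.
SOURCE_WEIGHT = {
    "sec_filing": 1.0,
    "company_report": 0.8,
    "news_article": 0.5,
    "linkedin_post": 0.2,
}
DEFAULT_WEIGHT = 0.1  # assumed weight for any unlisted source type


def combined_score(predictions):
    """Combine per-text predictions into one company score.

    predictions: list of (score, confidence, source_type) tuples, where score
        is on a 0-100 scale and confidence is the model's estimate in [0, 1].
    Each prediction is weighted by confidence * source weight. Returns
    (score, evidence_weight), where evidence_weight is the summed weight:
    a rough indicator of how much trusted evidence backs the score.
    """
    weighted_sum = 0.0
    total_weight = 0.0
    for score, confidence, source in predictions:
        weight = confidence * SOURCE_WEIGHT.get(source, DEFAULT_WEIGHT)
        weighted_sum += weight * score
        total_weight += weight
    if total_weight == 0:
        return None, 0.0
    return weighted_sum / total_weight, total_weight
```

For example, an SEC-based prediction of 80 and a LinkedIn-based prediction of 20, both at 50% confidence, combine to 70 rather than the unweighted 50, because under these assumed weights the filing counts five times as much.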

‘It’s not a perfect science to do this – there is definitely human input to determining what should be weighted and where’, McColl added. To develop the methodology for this project, Rho AI planned to form a group of its own data scientists working alongside the advisory group. The role of the domain experts was to look beyond the numbers and to evaluate how the data should be factored (Fischer and Beswick, 2019, Online interview with David McColl, 21 November).

These arrangements were important in mitigating personal bias. In addition, Rho AI used a training set to highlight instances of bias caused by technical issues. ‘At the end of the day, if you’re doing something in data science, the model is only as good as the data you build on’, said McColl. ‘The reduction of bias has to come at the very initial stages, when you put together the data set. Because afterwards, this is what the model will listen to’ (Fischer and Beswick, 2019, Online interview with David McColl, 21 November).
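One simple way to use labelled data to surface technical bias is to compare a model's errors across groups of companies. This Python sketch flags any group whose mean absolute error is well above the overall error. The grouping attribute, the 1.5 ratio threshold and the function name are hypothetical, and the case does not say which bias checks Rho AI actually ran.

```python
from collections import defaultdict


def flag_biased_groups(records, ratio=1.5):
    """Flag groups on which a model is markedly less accurate.

    records: iterable of (group, predicted, actual) tuples from labelled data,
        where group is an assumed attribute such as sector or company size.
    A group is flagged when its mean absolute error exceeds `ratio` times the
    overall mean absolute error. Returns {group: group_mae} for flagged groups.
    """
    errors = defaultdict(list)
    for group, predicted, actual in records:
        errors[group].append(abs(predicted - actual))
    all_errors = [e for errs in errors.values() for e in errs]
    if not all_errors:
        return {}
    overall = sum(all_errors) / len(all_errors)
    flagged = {}
    for group, errs in errors.items():
        group_mae = sum(errs) / len(errs)
        if group_mae > ratio * overall:
            flagged[group] = group_mae
    return flagged


if __name__ == "__main__":
    held_out = [
        ("large", 50, 52), ("large", 60, 58),
        ("small", 50, 70), ("small", 40, 55),
    ]
    print(flag_biased_groups(held_out))  # {'small': 17.5}
```

A flagged group does not by itself explain the bias; it points to where the training data, or the way it was collected, deserves a closer look.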

Since the company intended to develop this as an open-source project, Rho AI wanted to ensure transparency and integrity within the platform. The company wanted the platform’s inner workings to be auditable, and for users to be able to discuss and understand how the data were collected, factored, organised and reported, and what the results implied. Similarly, Rho AI intended to work with industry partners to confirm the outputs of its model. Integrating domain expertise would allow the company to assess the accuracy and verify the applicability of the modelling methods used.

AI and ethics under the microscope

In the late 1990s, computing power finally caught up with the ambitions that humans had held for AI for decades, and IBM was able to develop Deep Blue, the chess program that in 1997 defeated the reigning world chess champion Garry Kasparov. Since then, the use of AI has become so ubiquitous that the term ‘Fourth Industrial Revolution’ was coined to describe its impact (Anyoha, 2017; Madiega, 2019; Schwab, 2020). AI tools are involved in an increasingly broad spectrum of activities, including credit scoring, detection of tax evaders, algorithmic share trading, prediction of car robberies, parole decisions, online dating and drone warfare (Rahwan et al., 2019). Writing in Nature, Rahwan et al. (2019: 477) observed, ‘Machines powered by artificial intelligence increasingly mediate our social, cultural, economic and political interactions’.

Among the concerns that Rahwan et al. (2019) expressed relating to AI were the following:

Although the code could be simple, the results could be complex, resulting in ‘black boxes’;

The code could be difficult to interpret;

Imperfections in data could impact on the results produced;

Much of the source code and training data were proprietary.

Some of the algorithm-specific questions Rahwan et al. (2019) raised as being relevant in the domain of machine behaviour were as follows:

Does the algorithm create filter bubbles? Does the algorithm disproportionately censor content? Do algorithms manipulate markets? Does the behaviour of the algorithm increase systemic risk of market crash? Do algorithms of competitors collude to fix prices? Does the algorithm exhibit price discrimination? (p. 479)

European Union (EU) research has shown that while AI technologies can be very beneficial from a social and economic point of view, they raise several ethical, legal and economic concerns relating to risks to fundamental freedoms and human rights. In particular, according to the EU, AI poses risks to privacy and personal data protection, as well as risks of discrimination when algorithms are used to profile people (Madiega, 2019).

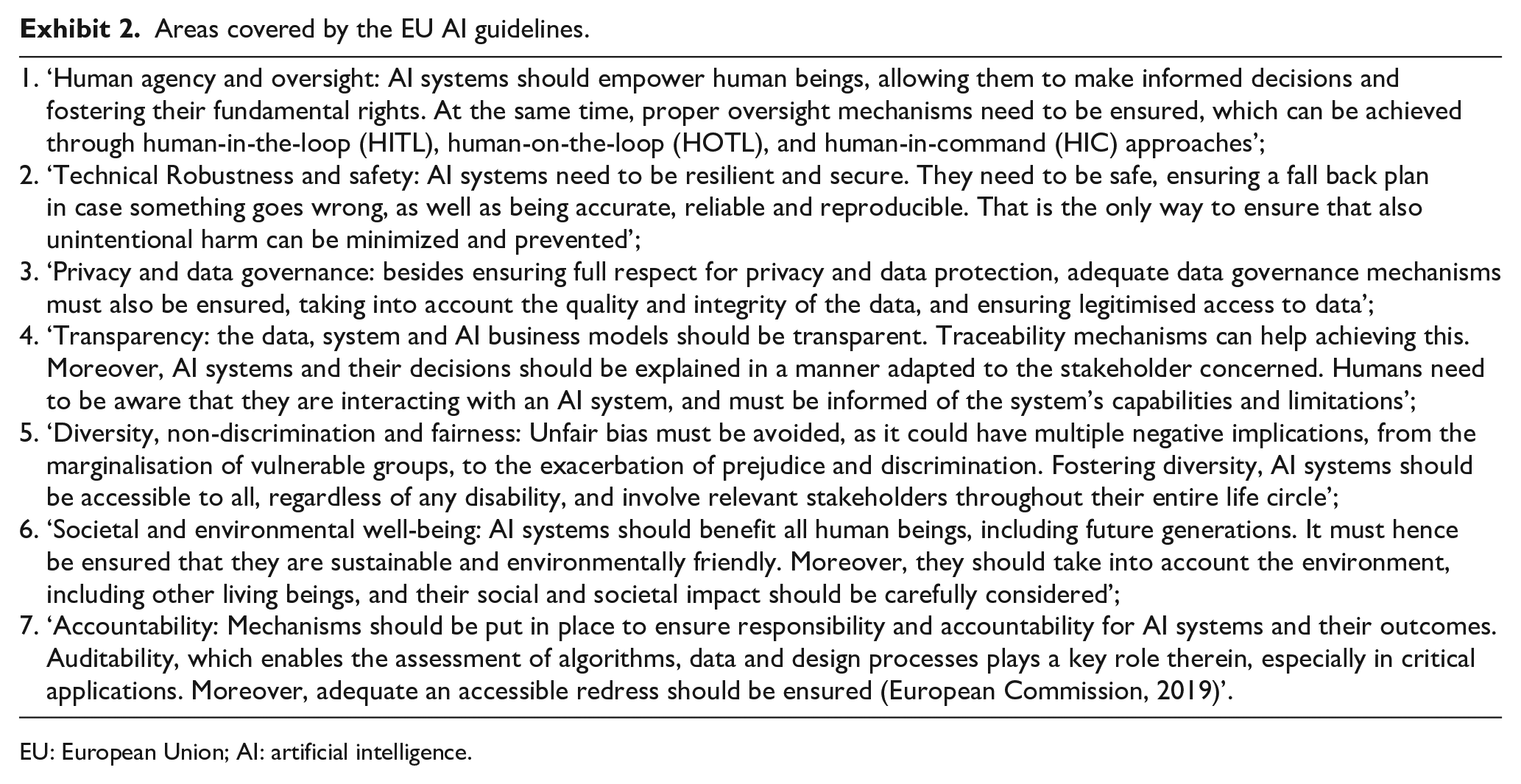

In 2019, the European Commission introduced a set of Ethics Guidelines for Trustworthy Artificial Intelligence, which it is set to confirm in 2020. The guidelines set out seven requirements that AI systems should meet to be regarded as trustworthy: human agency and oversight; technical robustness and safety; privacy and data governance; transparency; diversity, non-discrimination and fairness; societal and environmental well-being; and accountability (European Commission, 2019).

Regarding the broader ethics associated with Rho AI’s products, Callsen said the following:

It is a constant topic on the one hand, and a little in the background on another. Some of our products involve no ethical considerations at all – for example Pit Rho: if a team uses our information and we have a biased training set that gives them the wrong answer, they are not going to win the race and they will stop using our product. We are not endangering anybody, there is no real ethical problem there. (Fischer and Beswick, 2019, Online interview with Gilman Callsen, 4 December)

On the other hand, some of Rho AI’s tools have a real ethical dimension. ‘These can have a truly positive impact’, Callsen said,

but there is a risk there that what our tools predict might have a negative impact on what would otherwise have been good decisions. So we are really focussed on making sure that the tools yield appropriate results for the investment community, so that we can actually be putting capital and resources towards positive, impactful technologies, specifically around carbon mitigation. (Fischer and Beswick, 2019, Online interview with Gilman Callsen, 4 December)

Until then, however, Rho AI had not codified its approach to the ethical development of AI. ‘We are small enough that our methods are far more informal than would be required for a larger organisation’, said Callsen. But as the company grew, it was becoming more necessary for Rho AI to codify its approach. ‘When you’re a team of 10 people, you just don’t need to’, he explained.

You know who you’re working with, everybody gets it, you talk about it with everybody, all the time. That core founding group of maybe 10 people: you’re extremely tight knit. Everyone is walking in lockstep. You don’t need to write anything down. Then you get into the kind of 10-20 people range and you’re starting to feel the growing pains. But in that range, I’d consider any employee to be part of the founding team – with the same ethos of ownership. In the 20-30 range, you will still get people who have that same sense of ownership, but you are going to get people who don’t have that – for whom it is just a job. When you get to that stage, it is important to have things codified. (Fischer and Beswick, 2019, Online interview with Gilman Callsen, 4 December)

Concluding thoughts

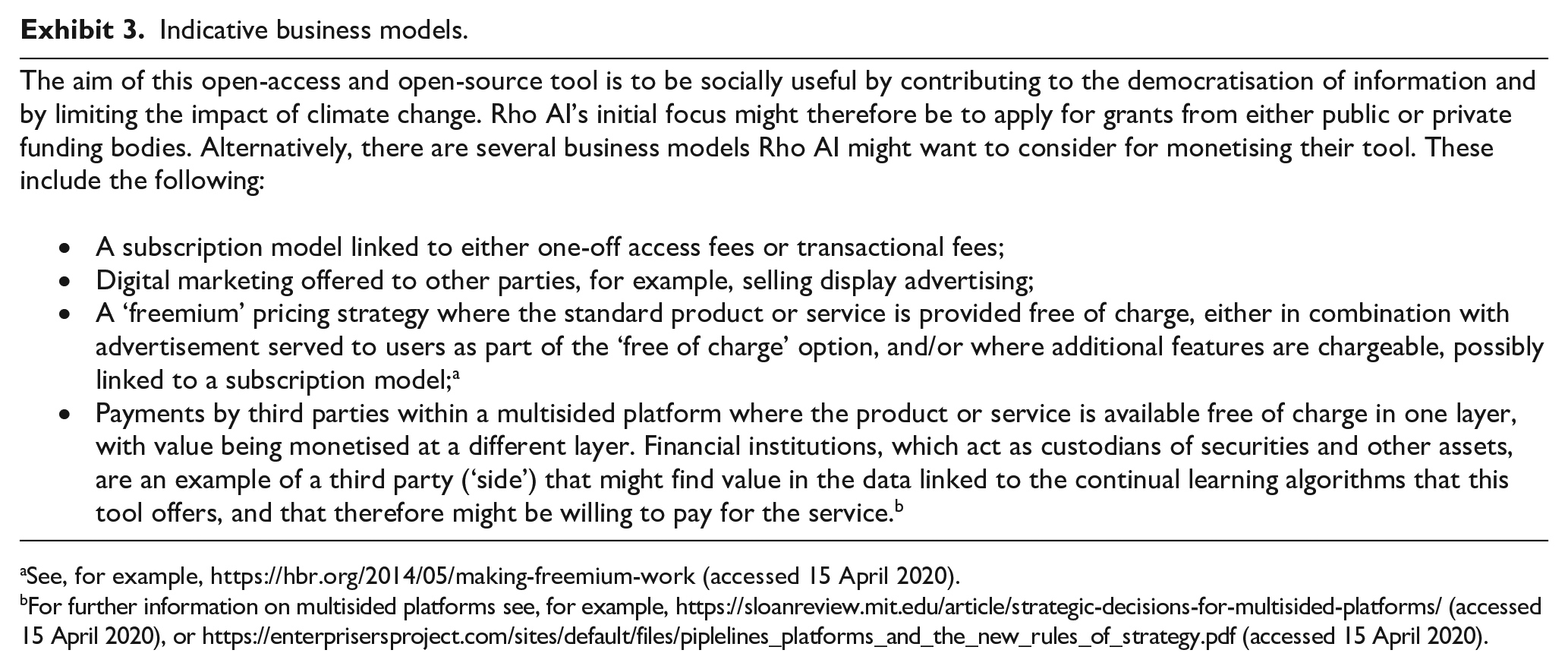

‘So, do you think we have everything covered?’ asked Callsen, as he and McColl spoke later that Monday. McColl was concerned about the business model, in particular the potential conflict between making the product open-source and freely accessible to any user, with transparent training sets, and the fact that it would cost in the region of US$300,000 to develop the beta version. There would be additional costs to roll it out, to maintain it and, potentially, to expand into other areas and regions. Rho AI had not been funded by venture capital and did not have the wherewithal to bankroll this project indefinitely. Perhaps the company could apply for grants, or consider a different business model (see Exhibit 3 for indicative suggestions) to help fund the development and to ensure that the product was of a high-enough specification that others could find value in the data. He and Callsen had to consider whether it was feasible to allow investors to benefit from the insights ‘for free’. Also, would transparency enable competitors to copy the model and then market it on a proprietary basis?

Exhibit 1. Simplified overview of techniques used as part of this project.

Exhibit 2. Areas covered by the EU AI guidelines.

EU: European Union; AI: artificial intelligence.

Exhibit 3. Indicative business models.

See, for example, https://hbr.org/2014/05/making-freemium-work (accessed 15 April 2020).

For further information on multisided platforms see, for example, https://sloanreview.mit.edu/article/strategic-decisions-for-multisided-platforms/ (accessed 15 April 2020), or https://enterprisersproject.com/sites/default/files/piplelines_platforms_and_the_new_rules_of_strategy.pdf (accessed 15 April 2020).

McColl was also reflecting on the data quality that could be achieved by web scraping and interpreting ESG-related unstructured data, and on the impact that the data and the tool overall might have on companies and society. Had the ethical issues been considered fully, and had the potential for bias been reduced as far as possible? Would the tool contribute to the democratisation of information? And would the tool, via the investments of its users, pressure corporations to intensify their focus on limiting their climate impact in a meaningful, effective and sustainable way?

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship and/or publication of this article.