Abstract

The Scrambled Sentences Task (SST) is a robust measure of interpretational processes in psychopathology. However, there is little evidence of its utility in measuring dysfunctional appraisals (DAs) of potentially traumatic events. In an online study, we developed a novel SST for trauma-related DAs and examined its psychometric properties, including convergent validity (correlations with PTSD-related symptoms and self-reported DAs), divergent validity (correlations with, e.g., symptoms of depression and eating disorders), and retest reliability. Our sample (T1: N = 214, T2: N = 145) included participants who reported a potentially traumatic life event still eliciting distress. We found high correlations between the SST and both PTSD-related symptoms (r = .37–.51) and self-report measures of DAs (r = .41–.58), indicating good convergent validity. Internal consistency (split-half = .78–.90) and retest reliability (ICC(3,1) = .73–.81) were also good. However, moderate to large correlations with symptoms of other disorders (r = .17–.58) indicated limited divergent validity. Finally, the SST explained unique variance in PTSD-related symptoms above self-report measures of DAs. The results demonstrate the promise of the SST as a valid and reliable tool to assess DAs in the context of potentially traumatic life events. Further research should investigate the transdiagnostic role of trauma-related DAs in psychopathology and the relationship between the SST and self-report measures of DAs.

Introduction

Dysfunctional appraisals (DAs) are a central element in cognitive models of the development and maintenance of posttraumatic stress disorder (PTSD) (Dalgleish, 2004; Ehlers & Clark, 2000; Foa et al., 1989). For example, the model of Ehlers and Clark (2000) suggests a causal pathway from DAs of the trauma and its sequelae to other aspects of PTSD, such as a current feeling of threat and reoccurring, intrusive memories of the traumatic event. The key role of DAs in PTSD has been further underscored by the inclusion of negative cognitive changes following a trauma as a criterion in the diagnosis of PTSD in the Diagnostic and Statistical Manual of Mental Disorders – Fifth edition (DSM-5) (American Psychiatric Association, 2013).

Dysfunctional appraisals can be understood as negative evaluations of an event or stimulus. In the context of PTSD, dysfunctional appraisals reflect patients’ idiosyncratic appraisals of, or beliefs about, their reactions to the event and their trauma symptoms. To illustrate, patients may appraise their reactions during the traumatic event as a sign of personal weakness or consider their intrusive memories as proof that they are losing control (e.g., Ehlers & Clark, 2000). Accordingly, DAs in the context of PTSD reflect the evaluation of the meaning of a trauma-relevant stimulus and may involve extrapolations to broader, more wide-ranging themes, with implications for oneself, the world, or one’s future. As such, DAs are distinct from other cognitive phenomena in the context of emotional psychopathology, such as interpretation biases. Although interpretation biases are also the product of an evaluative process, they focus more specifically on the evaluation of ambiguous stimuli or situations (cf. Mathews & MacLeod, 2005) and are restricted to narrower themes.

Evidence for a central role of DAs in PTSD comes from several strands of research. To illustrate, studies have found that DAs are associated with symptoms of PTSD, both cross-sectionally (Gómez de La Cuesta et al., 2019) and longitudinally (Bryant & Guthrie, 2005; Ehring et al., 2008; Kleim et al., 2007). Further, the reduction of DAs during treatment has been shown to predict subsequent symptom reduction, but not vice versa (Brown et al., 2019; Kleim et al., 2013). Additional evidence comes from studies with an experimental focus, for example, manipulating DAs in order to test their potential causal effect on analog or actual PTSD-related symptoms. For example, Cheung and Bryant (2017) induced DAs about intrusions via informing participants that intrusive memories of distressing films were indicative of poor psychological functioning. This induction in turn led to more frequent intrusive memories of the films than a non-dysfunctional induction (Schartau et al., 2009). Studies using computerized paradigms developed in the context of Cognitive Bias Modification-Appraisal (CBM-App; MacLeod et al., 2009; McNally & Woud, 2019; Woud & Becker, 2014), however, have revealed more mixed findings. One study (Woud et al., 2012) found fewer intrusions of an analog trauma following training aiming to induce a functional (positive) appraisal style compared to training aiming to induce a dysfunctional (negative) appraisal style (positive vs. negative CBM-App). Other studies found an effect only on intrusion distress, with less distress in the positively trained group compared to the negatively trained group (Woud, Cwik et al., 2018; Woud et al., 2013) or no effects at all on analog trauma symptoms (Vermeulen et al., 2019; Würtz et al., 2021). In a recent randomized controlled trial (RCT) amongst inpatients diagnosed with PTSD, the effects of positive CBM-App as an adjunct to treatment as usual were compared to a sham-control training. 
Positive CBM-App, compared to the control condition, successfully reduced DAs and led to a reduction in PTSD symptoms (Woud et al., 2021). However, in another recent RCT, CBM-App did not reduce DAs in comparison to a neutral control condition, and there was no between-group difference in PTSD symptoms after CBM-App (de Kleine et al., 2019). An important note here is, however, that the latter RCT delivered the CBM-App training online, to outpatients with PTSD while they waited for therapy, and this could partly explain the deviating results.

In summary, there is substantial evidence that DAs are a core feature of PTSD, and this evidence is consistent with the proposal that DAs can causally affect trauma symptoms, supporting key assumptions of cognitive models of PTSD. However, past research has mainly used self-report questionnaires such as the Posttraumatic Cognitions Inventory (PTCI) (Foa et al., 1999) to assess DAs (for exceptions, see Blackwell et al., 2021; Boffa et al., 2018; Lindgren et al., 2013), and it is unclear to what extent this may or may not be a problem. For example, self-report measures are associated with several disadvantages such as response or demand effects (MacLeod et al., 2009). Further, actively evaluating the questionnaires’ statements might conceal aspects of DAs that are difficult to verbalize (Brewin et al., 1996), or that are prone to be actively suppressed (Ehlers & Clark, 2000; Rosebrock et al., 2019). Finally, DAs may involve automatically activated counterparts, as assumed by cognitive models (e.g., Ehlers & Clark, 2000), which self-report questionnaires may not be able to capture. The findings of Lindgren et al. (2013), for example, provide some evidence for the idea that self-report measures might not fully capture DAs relevant to PTSD. In their study, an indirect measure of DAs based on the reaction time of participants to categorize self-related words as either traumatized or non-traumatized (an implicit association test) explained additional variance in PTSD-related symptoms beyond that explained by a self-report measure of DAs (the PTCI).

Importantly, other research areas in psychopathology are also characterized by such limitations, and this has led to the development of various novel assessment tasks. Such tasks can include, for example, measuring responses to ambiguous stimuli (Hirsch et al., 2016; Schoth & Liossi, 2017). As different kinds of tasks may tap into slightly different aspects of a process under investigation, examining new ways of measuring a phenomenon such as DAs can help in delineating the conditions under which the DA is or is not expressed. Further, having access to a broad range of measures can also provide a form of triangulation, increasing our confidence that results found are robust rather than an artifact of one measurement method (Munafo & Davey Smith, 2018). One example of a measure with potential utility in the context of DAs and PTSD is the Scrambled Sentences Task (SST) (Wenzlaff & Bates, 1998), which was initially developed to assess interpretational processes in the context of depression. During the SST, participants are instructed to sort words into grammatically correct sentences (e.g., bright the future very dismal looks), and each set of words is designed such that it can be formed into either a disorder-congruent (The future looks very dismal) or -incongruent (The future looks very bright) sentence. To reduce the impact of active evaluation of the chosen words, the SST is applied with a short time limit and participants are simultaneously engaged in a cognitively demanding task, usually retaining a 6-digit number in mind. The SST is most commonly scored by calculating the percentage or proportion of correctly formed sentences that were unscrambled in a disorder-congruent manner. Performance on the SST has been shown to be associated with symptoms of depression (Lee et al., 2016; O’Connor et al., 2021) and to predict a subsequent diagnosis of major depression (Rude et al., 2010). 
Recent research has successfully adapted the stimuli used in the SST to other psychopathology contexts such as anorexia nervosa or body dissatisfaction (Bradatsch et al., 2020; Brockmeyer et al., 2018), paranoia (Savulich et al., 2020), anxiety sensitivity (Zahler et al., 2020), symptoms of social anxiety (de Voogd et al., 2017), female sexual dysfunction (Zahler et al., 2021), or generalized anxiety disorder (Krahé et al., 2022). Across all these studies, the SST score was associated with symptoms of the target disorder, supporting the validity of the SST as a measure of dysfunctional interpretational processes in various disorders. Further, a recent meta-analysis has shown that the average correlation between the SST score and symptoms of the targeted disorder is moderate (r = .46 [.41, .51]; Würtz et al., 2022). In addition, there is evidence that the SST is a sensitive measure for the assessment of change in interpretational processes in experimental research using CBM-paradigms in both clinical (Yiend et al., 2014) and analog samples (Bradatsch et al., 2020; Würtz et al., 2022). Finally, the recent study by O’Connor et al. (2021) showed that the SST was strongly associated with symptoms of depression, even after taking an explicit measure of dysfunctional interpretational processing into account. In contrast, reaction times derived from the Word-Sentence-Association Paradigm, another indirect measure that uses reaction times to infer interpretational processing, failed to predict depressive symptoms.

Moving in a more trauma-related direction, the SST has also been successfully adapted to assess DAs in the context of negative life events (Viviani, Mahler et al., 2018). In the study by Viviani et al., the SST score was associated with questionnaire measures of DAs related to the event and symptoms of depression. However, that SST was specifically designed to be feasible in an fMRI setting and thus differed from the usual version (Viviani, Dommes et al., 2018). Specifically, participants unscrambled the sentences in their mind to avoid artifacts of hand movement, and no cognitive load was applied since the alternative task instruction already presented a cognitive challenge. Further, the SST stimuli neither referred to a potentially traumatic life event nor targeted directly related cognitions, enabling its application in a sample that was not necessarily exposed to such an event. As such, findings from this study might not be fully generalizable to the SST in its common computerized form or the context of an actual potentially traumatic life event.

To summarize, the SST is a potentially valuable tool to assess DAs in different areas of psychopathology. However, there has only been limited research into its applicability to the kind of DAs relevant to PTSD, that is, DAs in relation to potentially traumatic life events. Given its robust findings in a broad range of disorders, the SST might offer a promising approach also for the assessment of DAs in this context. The current study therefore aimed to develop a novel, computerized version of the SST to assess DAs in relation to a potentially traumatic life event, examining the SST’s psychometric properties including its 2-week test–retest reliability. A second aim was to test whether the SST would be a useful predictor of PTSD-related symptoms over and above self-report. Finally, considering the necessity for measures in experimental research to assess changes in cognitive processing pre-post a manipulation, we aimed to develop two parallel SST versions. These were presented in an online study to a sample of participants who reported a potentially traumatic life event that was still causing distress and therefore potentially the object of negative appraisals. Further, we included different questionnaires that were either directly related to PTSD, as measures of convergent validity, or (relatively) unrelated, as measures of divergent validity. To allow an estimation of test–retest reliability, participants were invited to a second assessment session in which we applied the SST again, together with measures of convergent validity.

Our hypotheses were as follows: We expected the SST score to be strongly correlated with both PTSD-related symptoms and DAs measured via self-report (convergent validity). Correlations with measures not directly related to PTSD symptoms, however, were expected to be relatively weak (divergent validity). Further, we expected that the SST would explain unique variance in symptoms, that is, would be a significant predictor of PTSD-related symptoms after controlling for DAs as measured via self-report and other relevant variables (unique variance). 1

Methods

Recruitment

Prior to the start of participant recruitment, the study was pre-registered by uploading the study protocol to the Open Science Framework (OSF; https://osf.io/bnzp3/). Participants were recruited by distributing the study’s link via social media and the participant noticeboard of Ruhr-University Bochum. Inclusion criteria were age ≥ 18 and prior exposure to a potentially traumatic life event still causing distress (and therefore being potentially subject to negative appraisals). Participants were included when they rated their current distress caused by the event as ≥ 3 on a 10-point scale ranging from 1 (not distressing at all) to 10 (highly distressing) (for a similar approach, see Schartau et al., 2009; Woud et al., 2019). Further, participants could only take part using either a laptop or desktop computer to avoid potential artifacts from the touchscreens of mobile devices. In the study advertisements, participants were informed that high fluency in German (e.g., being a native speaker) was necessary to take part in the study; however, no participants were excluded based on this criterion. Participants received course credit for participation and could take part in a prize draw for a 10€ voucher.

We aimed to recruit a total sample of N = 170 for T1, expecting a dropout of approximately 15%, which would have provided a sample of N = 145 for T2. This sample size was informed by an a priori power analysis (Erdfelder et al., 2009) aiming to achieve 80% power to find a small to medium effect size (f² = .055) of a single predictor in a multiple regression model with ten predictors in total at an alpha level of α = .05. However, during recruitment, it became apparent that the dropout was larger than expected and that some participants would have to be excluded due to technical problems. As a consequence, we recruited a larger sample for T1 than initially planned. Specifically, we did not use the sample size at T1 as a stopping criterion but stopped the recruitment at a time-point when N = 145 participants had provided complete data of both T1 and T2. However, after the recruitment was stopped, participants who had already taken part at T1 could still complete T2.
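The planning figures above can be retraced with a few lines of arithmetic. The following is a minimal sketch, not the original power analysis: it assumes the noncentrality parameterization λ = f² × N that is commonly used for the F test of a single regression coefficient, and all variable names are illustrative. It reproduces the expected T2 sample and the quantities that would enter the power calculation, but it does not compute power itself.

```python
import math

# Values taken from the text; variable names are illustrative.
planned_n_t1 = 170          # planned T1 sample
expected_dropout_pct = 15   # expected dropout between T1 and T2 (percent)
f_squared = 0.055           # targeted effect size (Cohen's f^2)
n_predictors = 10           # predictors in the full regression model

# Expected T2 sample after dropout, rounded up to whole participants.
expected_n_t2 = math.ceil(planned_n_t1 * (100 - expected_dropout_pct) / 100)

# Inputs to the F test of one coefficient (assumed parameterization).
noncentrality = f_squared * expected_n_t2          # lambda = f^2 * N
df_numerator = 1                                   # one tested predictor
df_denominator = expected_n_t2 - n_predictors - 1  # N - predictors - 1

print(expected_n_t2, round(noncentrality, 3), df_numerator, df_denominator)
```

Feeding these inputs into a noncentral F distribution in a statistics package would then give the power for the planned T2 sample.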

Material

Scrambled Sentences Task

The SST (Wenzlaff & Bates, 1998) was implemented as a web application programmed using JavaScript on a designated university-based website (Blackwell et al., 2021), and participants completed the SST in their preferred web browser. During the SST, participants were presented with sets of six words and were instructed to sort these into grammatically correct sentences by selecting five of them. Each trial started with a fixation-cross that was presented for 1s in the middle of the screen. After the fixation-cross disappeared, the word set was presented, and participants could sort the words by clicking on them. After clicking on a word, a number appeared above it, indicating the position of that word in the sentence formed. 2 Participants could not correct their responses. For each trial, there was a time limit of 12s and a trial ended automatically either after the time limit or after five words had been clicked on. As in previous studies, the SST was presented with a cognitive load to reduce participants’ deliberate evaluation of the presented stimuli: At the start of the SST, a 6-digit number was presented for 7s, and participants were instructed to keep the number in mind during the task since they would be asked to recall it afterward. Stimuli for the two SST versions were created based on the German version of the PTCI (Ehlers, 1999; Foa et al., 1999). Each SST version consisted of 20 stimuli, of which 12 were based on the “self,” six on the “world,” and two on the “self-blame” subscale of the PTCI. After the initial stimuli development, the sets were independently discussed with two clinicians outside of the study team who were experienced in the treatment of PTSD. Stimuli were then further adapted to resemble typical trauma-related appraisals of PTSD patients. Word sets were designed such that two words were target words of which one had to be omitted to form a grammatically correct sentence. 
Depending on which word was omitted, the sentence formed represented either a trauma-related functional or dysfunctional appraisal. For example, a word set for the world subscale, “dangerous the safe is very world,” can be unscrambled into a functional appraisal (“the world is very safe”) or a dysfunctional appraisal (“the world is very dangerous”); the target words are “dangerous” and “safe.” The order of the two SST versions was counterbalanced (A first or B first) based on the participant number assigned after providing consent, and the order was the same for T1 and T2 (participants receiving version A first at T1 also received version A first at T2 and vice versa). After each SST version, participants were asked to type in the memorized number, received feedback on whether it was correct, and were invited to take a break. Prior to the first version, five neutral practice trials were presented to familiarize participants with the procedure. During the practice trials, participants were also instructed to keep a 6-digit number in mind, which had to be recalled after the practice sentences.
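The trial-ending rule described above (a 12 s limit, or five clicked words, whichever comes first) can be sketched as follows. This is an illustrative Python sketch and not the JavaScript source of the web application, which is available on the OSF. The function name, the input format, and the treatment of a click at exactly 12 s are assumptions.

```python
def resolve_trial(clicks, time_limit_s=12.0, words_needed=5):
    """Return the words selected in one trial and whether it was completed.

    clicks: list of (seconds_since_word_onset, word), sorted by time.
    Responses cannot be corrected, so every click is final.
    """
    selected = []
    for seconds, word in clicks:
        if seconds > time_limit_s:       # trial already ended by time-out
            break
        selected.append(word)
        if len(selected) == words_needed:  # trial ends after the fifth word
            break
    return selected, len(selected) == words_needed


# Example with the word set quoted in the text.
clicks = [(1.0, "the"), (2.1, "world"), (3.0, "is"),
          (4.2, "very"), (5.5, "safe"), (6.0, "dangerous")]
sentence, completed = resolve_trial(clicks)
```

A trial that times out with fewer than five words returns `completed = False`, which corresponds to the "fewer than five words" error category used in scoring.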

The SST score was calculated by dividing the number of correctly generated, dysfunctional sentences by the total number of correctly formed sentences, resulting in a negativity score between 0 and 1, with higher values indicating a more dysfunctional appraisal style. Grammatically incorrect sentences, sentences formed as questions, and sentences with fewer than five words were counted as errors. A list of correct solutions was uploaded together with the pre-registration. However, some additional correct solutions were added after manual inspection of the collected data. All materials, including the stimuli, the correct solutions, and the source code of the web application, can be found on the OSF (https://osf.io/bnzp3/).
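The scoring rule can be expressed compactly. The sketch below assumes each trial has already been classified as "dysfunctional", "functional", or "error" against the list of correct solutions; these labels and the function name are illustrative and are not taken from the study's source code.

```python
from typing import Optional, Sequence


def sst_score(trial_outcomes: Sequence[str]) -> Optional[float]:
    """Proportion of correctly formed sentences that are dysfunctional.

    Errors (ungrammatical sentences, questions, fewer than five words)
    are ignored. Returns None when no sentence was formed correctly,
    in which case no score is defined.
    """
    n_dysfunctional = sum(1 for o in trial_outcomes if o == "dysfunctional")
    n_functional = sum(1 for o in trial_outcomes if o == "functional")
    n_correct = n_dysfunctional + n_functional
    if n_correct == 0:
        return None
    return n_dysfunctional / n_correct


# Example: 20 trials with 6 dysfunctional, 12 functional, and 2 errors.
example = ["dysfunctional"] * 6 + ["functional"] * 12 + ["error"] * 2
score = sst_score(example)
```

Because errors are dropped from the denominator, two participants with the same number of dysfunctional sentences can receive different scores if they differ in the number of errors.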

Convergent validity

Posttraumatic Stress Disorder Checklist for DSM-5 (PCL-5)

PTSD-related symptoms were assessed using the German translation of the PCL-5 (Krüger-Gottschalk et al., 2017; Weathers, Litz et al., 2013). The PCL-5 consists of 20 items with each item representing a PTSD symptom as specified in the DSM-5. Participants were instructed to rate how much distress was caused by each symptom in the past 2 weeks on a 5-point scale ranging from 0 (not at all) to 4 (very strongly). A total score of ≥ 33 has been suggested as a cut-off for a potential PTSD diagnosis (Krüger-Gottschalk et al., 2017). Internal consistency (Cronbach’s α) was .94 [.93, .95] at T1 and .95 [.94, .97] at T2, and the test–retest reliability was ICC (3, 1) = .79 [.73, .84].

Posttraumatic Cognitions Inventory (PTCI)

DAs related to PTSD were assessed via the German version of the PTCI (Ehlers, 1999; Foa et al., 1999). The PTCI consists of 33 statements representing typical PTSD-related appraisals. Participants were instructed to rate their agreement with each statement on a 7-point scale ranging from 1 (strongly disagree) to 7 (strongly agree), with higher values indicating stronger endorsement of DAs. Internal consistency was .94 [.93, .96] at T1 and .96 [.95, .97] at T2. Test–retest reliability was ICC (3, 1) = .83 [.78, .87].

Divergent validity

Quick Inventory of Depressive Symptomatology (QIDS)

Symptoms of depression were assessed via the QIDS (Roniger et al., 2015; Rush et al., 2003). This questionnaire consists of 16 items grouped into nine depression-related domains. Each domain consists of one item, except for the domains sleep, appetite, and psychomotor agitation/restlessness, which consist of two to four items. Participants rated how strongly they were affected by each symptom in the past week using a 4-point scale ranging from 0 to 3, with higher scores indicating more severe symptoms. The questionnaire is scored by summing the nine domain scores, where the score of a domain with more than one item is its highest item score (Roniger et al., 2015). Total scores of ≤ 5 are indicative of no depression, 6–10 of mild, 11–15 of moderate, 16–20 of severe, and ≥ 21 of very severe depression (Rush et al., 2003). Internal consistency for the full scale was .83 [.80, .86]. 3
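The QIDS scoring rule and severity bands can be illustrated as follows. This is a sketch under stated assumptions: item responses are supplied already grouped by domain, and the domain labels and example responses are hypothetical rather than taken from the instrument or the data.

```python
def qids_total(domain_items):
    """Sum of domain scores; a multi-item domain counts its highest item."""
    return sum(max(items) for items in domain_items.values())


def qids_severity(total):
    """Severity bands as listed in the text."""
    if total <= 5:
        return "none"
    if total <= 10:
        return "mild"
    if total <= 15:
        return "moderate"
    if total <= 20:
        return "severe"
    return "very severe"


# Hypothetical responses (0-3 per item), 16 items in nine domains.
responses = {
    "sleep": [1, 2, 0, 3],
    "sad mood": [2],
    "appetite": [0, 1, 0, 0],
    "concentration": [1],
    "self-view": [2],
    "suicidal ideation": [0],
    "interest": [1],
    "energy": [2],
    "psychomotor": [1, 0],
}
total = qids_total(responses)
band = qids_severity(total)
```

Taking the maximum within a domain caps each domain at 3, so the total ranges from 0 to 27 regardless of how many items a domain contains.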

Ambiguous Scenarios Test for Depression (AST)

Depression-related interpretation biases were assessed using version A of the German AST (Berna et al., 2011; Rohrbacher & Reinecke, 2014). The AST consists of 15 ambiguous scenarios describing everyday life situations, for example, having an oral examination. Participants were instructed to briefly imagine each scenario and to rate its pleasantness on an 11-point Likert scale ranging from −5 (very unpleasant) to 5 (very pleasant), with higher scores indicating a more positive interpretation bias. The AST’s internal consistency was .81 [.77, .84].

Acrophobia Questionnaire – Anxiety Subscale (AQ)

Fear of heights was assessed via the German translation of the Anxiety subscale of the AQ (Cohen, 1977). The Anxiety subscale consists of 20 height-related items. Participants were instructed to rate how anxious they would feel in each situation using a 7-point Likert scale ranging from 0 (not anxious at all) to 6 (very anxious). The AQ’s internal consistency was .93 [.91, .94].

Fear of Spiders Screening (FSS)

Fear of spiders was assessed via the FSS (Rinck et al., 2002), which covers both fear and avoidance of spiders. The questionnaire consists of four statements concerning fear of spiders, associated body symptoms, avoidance, and distress, respectively. Each item is rated on a 7-point Likert scale ranging from 0 (does not apply at all) to 6 (fully applies). Internal consistency was .91 [.89, .93].

Eating Disorder Examination Questionnaire (EDEQ)

Symptoms of eating disorders in the past 4 weeks were assessed via the German translation of the EDEQ (Fairburn & Beglin, 1994; Hilbert et al., 2007). The EDEQ is a 28-item scale consisting of four subscales, Restraint (5 items), Eating concerns (5 items), Weight concerns (5 items), and Shape concerns (8 items), and five additional items that are not used in scoring. Participants were instructed to answer each item on a 7-point scale ranging from 0 to 6, with higher scores indicating more severe eating disorder symptoms. The EDEQ is scored by calculating the mean score of each subscale and then calculating the overall mean of all subscales. Internal consistency for the full scale was .96 [.95, .96]. 4
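The two-step EDEQ scoring (subscale means first, then the mean of those means) can be sketched as follows. The input format and the example responses are hypothetical; only the subscale sizes follow the description above.

```python
from statistics import mean


def edeq_global(subscale_items):
    """Mean of the subscale means (each subscale weighted equally)."""
    return mean(mean(items) for items in subscale_items.values())


# Hypothetical responses (0-6 per item) for the 23 scored items.
responses = {
    "restraint": [0.0, 1.0, 2.0, 1.0, 1.0],
    "eating concerns": [0.0, 0.0, 1.0, 0.0, 4.0],
    "weight concerns": [2.0, 2.0, 2.0, 2.0, 2.0],
    "shape concerns": [3.0] * 8,
}
global_score = edeq_global(responses)
```

Because the subscales differ in length, this global score is not the same as the mean of all 23 items: averaging the subscale means gives the eight Shape concerns items the same total weight as each five-item subscale.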

Additional measures

Demographic data

Participants were first asked to indicate their age and their gender (male, female, or non-binary), and whether they were a native German speaker. Further, they were asked to indicate their self-rated language fluency (“How secure are you in reading German texts?”) on a 5-point scale ranging from 0 (very insecure) to 4 (very secure). Finally, participants were asked for their highest level of formal education.

Life Event Checklist for DSM-5 (LEC-5) and distress ratings

Exposure to potentially traumatic negative life events was assessed via the German translation of the LEC-5 (Weathers, Blake et al., 2013). The LEC-5 is a checklist assessing the exposure to 17 traumatic events following the criteria of DSM-5. For each traumatic event, participants were instructed to indicate whether (i) it happened to them, (ii) they witnessed it, (iii) they learned that it happened to someone close, (iv) it happened in an occupational context, (v) they are not sure whether it happened to them, or (vi) they were not exposed to it. For each item, multiple answers were possible, and we scored the LEC by counting the number of events for which participants selected an answer other than “not exposed.” 5 Scores on the LEC were calculated to better characterize the sample in terms of trauma exposure but were not used in analyses. To obtain further information about the distressing event, we supplemented the LEC with additional questions. That is, participants were asked to indicate which of the selected events caused the most distress, and when the event happened. Further, they had to rate how much distress the event caused when it happened, and how distressing the event is now, using a 10-point scale ranging from 1 (not distressing at all) to 10 (highly distressing).
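The LEC count described above can be sketched as follows. The answer labels, event names, and input format are illustrative. How an event is handled when a participant selects "not exposed" together with another answer is an assumption here (it is counted as exposure).

```python
NOT_EXPOSED = "not exposed"


def lec_count(responses):
    """Number of events with at least one answer other than 'not exposed'.

    responses: dict mapping each event to the set of selected answers
    (multiple answers per event are possible).
    """
    return sum(
        1
        for answers in responses.values()
        if any(answer != NOT_EXPOSED for answer in answers)
    )


# Hypothetical participant with four of the checklist events shown.
example = {
    "natural disaster": {"witnessed it"},
    "transportation accident": {"happened to me", "witnessed it"},
    "fire or explosion": {NOT_EXPOSED},
    "physical assault": {"not sure"},
}
n_events = lec_count(example)
```

Note that under this rule "not sure" and "learned about it" count toward the score, so the count reflects any reported form of exposure rather than direct exposure only.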

Procedure

Prior to participation, all participants were informed that they would be asked about a traumatic event during the study and recommended not to take part if they would anticipate experiencing too much distress from questions related to the event. Participants then gave informed consent by completing an online form. Next, they were asked whether they were using a built-in laptop mouse or external mouse to take part in the study. This was followed by the LEC and the additional questions related to the potentially traumatic event. Participants who rated their current distress < 3 were excluded from further participation (Schartau et al., 2009; Woud et al., 2019). Subsequently, participants completed the SST, after which they were asked for their email address in order to be able to contact them for the second assessment. Next, participants were directed to another survey platform where the questionnaire measures were administered, in the following order: Demographic data, PTCI, PCL-5, QIDS, AST, EDEQ, AQ, and FSS. Two questionnaires assessing resilience and emotion regulation were included at the end of T1 for potential exploratory analyses; however, these are not further analyzed in the present paper (see Supplementary Material for details). Two weeks later, participants received an email containing a link to the second assessment. During this second assessment, participants were asked again to indicate what type of mouse they were using, followed by assessing the current distress caused by the potentially traumatic negative event and the application of the SST. Next, the PTCI and the PCL-5 were completed, again using a separate survey platform. Finally, participants were debriefed, received their course credit, and could enter their information for the prize draw. At the end of each assessment time point, participants were provided with information on an online platform with contact details for (trauma-specialized) psychotherapists. 
All study procedures were approved by the local ethics committee of the Psychology department at Ruhr-University Bochum (approval number: 596).

Statistical approach

For convergent and divergent validity, bivariate correlations were calculated. However, upon visual inspection, both the SST and questionnaire data were found to be positively skewed and not normally distributed. Therefore, we calculated Spearman’s rank correlations with bootstrapped 95% confidence intervals (CIs; 10,000 resamples) instead of the Pearson correlations planned in the pre-registration, in order to obtain a more robust estimation of the examined associations (de Winter et al., 2016). As the investigation of our hypotheses concerning con- and divergent validity depended on multiple significance tests, the significance level of α = .05 was adjusted via the Bonferroni correction. For convergent validity, this yielded α = .05/(3 × 2) = .008 (three SST versions: full SST, version A, and version B; two questionnaires: PTCI and PCL-5). For divergent validity, it yielded α = .05/(3 × 5) = .003 (three SST versions; five questionnaires: QIDS, AST, EDEQ, FSS, and AQ). Whether the SST would explain unique variance in current PTSD-related symptoms above self-report was examined via a series of multiple regression models with the PCL total score of the relevant time point as the outcome. In a first regression step, we controlled for basic characteristics of the participants and the event: age, gender (female vs. non-female 6), distress caused by the negative life event when it occurred, and time passed since the event (in months). In a second step, we then added scores on the SST to examine how much variance in PTSD symptoms it explained above these basic variables. In a third step, we added scores on the PTCI, allowing us to examine whether SST scores explained additional variance in PTSD symptoms above that explained by self-report DAs. Analyses were run separately for both assessment time points. 7 For all regression models, full models were examined for influential outliers (standardized residuals > 3 and Cook’s distance > 1) (Field et al., 2012) and multicollinearity (variance inflation factors; VIFs). Bootstrapped 95% CIs (10,000 resamples) for regression coefficients were calculated as robustness checks.
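The Bonferroni-adjusted significance levels follow directly from the number of tests per hypothesis. A minimal sketch, with illustrative function and variable names:

```python
def bonferroni_alpha(alpha, n_versions, n_questionnaires):
    """Alpha divided by the number of tests (versions x questionnaires)."""
    return alpha / (n_versions * n_questionnaires)


# Three SST scores (full SST, version A, version B) per questionnaire.
alpha_convergent = bonferroni_alpha(0.05, 3, 2)  # PTCI, PCL-5
alpha_divergent = bonferroni_alpha(0.05, 3, 5)   # QIDS, AST, EDEQ, FSS, AQ

print(round(alpha_convergent, 3), round(alpha_divergent, 3))
```

The unrounded thresholds are .05/6 ≈ .0083 and .05/15 ≈ .0033, so the rounded values reported in the text are slightly stricter than the exact ones.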

In exploratory analyses, we investigated Spearman’s rank correlations between the PCL-5 and the PTCI and measures of con- and divergent validity at T1 to investigate the association between the symptoms of different disorder domains and compare the results for the SST to those for the PTCI. Further, we analyzed whether participants who dropped out for T2 differed from participants who did not drop out on their age, self-reported language fluency, number of correct solutions on the SST or their bias score, depressive symptoms, PTSD symptoms, or the PTCI using two-sample t-tests or Welch’s tests where appropriate. Finally, we investigated rehearsal effects and differences between the two SST versions. Specifically, we investigated whether participants were more likely to make errors or to form sentences in a dysfunctional way in the first compared to the second application at each time point, or on one or other of the versions. This was done by fitting mixed model logistic regression with fixed effects of “application” (first and second) and “version” (version A and version B) with random intercepts for “participant” and “stimulus.” Outcomes were “incorrect = 1 versus correct = 0” and “dysfunctional = 1 versus functional = 0,” respectively. Models were fitted separately for T1 and T2 data. 8 We further investigated whether participants were more likely to make errors on the SST or to form sentences in a dysfunctional way, based on how many times the SST had already been applied. This was investigated by fitting mixed model logistic regression with the fixed effect “time” (first, second, third, or fourth application) and random intercepts for “participant” and “stimulus” using maximum likelihood estimation with the outcomes “incorrect = 1 versus correct = 0” and “negative = 1 versus positive = 0,” respectively. Tukey’s pairwise post-hoc comparisons were applied. 
For additional analyses (such as predictive validity analyses and analyses concerning rehearsal effects), the associated statistical approach, and the results, see Supplementary Material.

All analyses were run in R version 4.0.0 (R Core Team, 2021) using RStudio version 1.4.1106 (RStudio Team, 2022). Spearman’s rank correlations and CIs were calculated using the R-package “rcompanion” (Mangiafico, 2021) and the package “stats” (R Core Team, 2021) was used for p-values. Linear regression models were estimated and compared using the package “stats” and bootstrapped CIs for the regression coefficients were calculated using the package “car” (Fox & Weisberg, 2019). Mixed model logistic regression was computed using the package “lme4” (Bates et al., 2015) and the package “stats” for CIs. All reliability indices were calculated using the package “psych” (Revelle, 2019).

Results

Sample

In total, N = 318 participants took part in T1. Out of these, 83.33% (N = 265) rated the current distress caused by the negative life event to be ≥ 3 and were therefore eligible to proceed with the study. Of those, 31 participants were then excluded at T1 as they did not complete any of the questionnaires. Further, two participants were excluded as they dropped out after the demographic questionnaire. Additionally, at T1, a further 14 participants were excluded due to technical difficulties with the saving of the SST data. Finally, we excluded all participants at T1 who formed no grammatically correct sentence in one of the two SST versions (excluding practice trials) (1.83%, n = 4 participants) (Hirsch et al., 2020), resulting in a final sample of N = 214 for T1. For T2, a total of N = 158 participants took part (dropout rate: 31.60% from T1 to T2). Of these, three participants were excluded as they did not answer the questionnaires at T2, and a further nine participants were excluded due to technical difficulties with the saving of the SST data. Finally, one participant (0.7%) was excluded as they did not form a grammatically correct sentence on either one of the SST versions, resulting in a final sample of N = 145 for T2. In total, three participants (partially) took part twice in the study. Data from their first participation were included in all analyses, whereas data from their second participation were excluded.
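The participant flow can be checked with a short arithmetic sketch. The counts are taken from the paragraph above; the variable names are illustrative, and the denominators used for the two exclusion percentages are an assumption that happens to reproduce the reported values.

```python
# Participant flow at T1 (counts as reported in the text).
t1_started = 318
t1_eligible = 265                 # current distress rating >= 3
t1_no_questionnaires = 31
t1_dropped_after_demographics = 2
t1_technical_problems = 14
t1_no_correct_sentence = 4

eligible_pct = round(100 * t1_eligible / t1_started, 2)

# Assumption: the 1.83% refers to the sample remaining before this last step.
t1_before_sst_exclusion = (
    t1_eligible
    - t1_no_questionnaires
    - t1_dropped_after_demographics
    - t1_technical_problems
)
t1_sst_exclusion_pct = round(100 * t1_no_correct_sentence / t1_before_sst_exclusion, 2)
t1_final = t1_before_sst_exclusion - t1_no_correct_sentence

# Participant flow at T2.
t2_started = 158
t2_no_questionnaires = 3
t2_technical_problems = 9
t2_no_correct_sentence = 1

t2_before_sst_exclusion = t2_started - t2_no_questionnaires - t2_technical_problems
t2_sst_exclusion_pct = round(100 * t2_no_correct_sentence / t2_before_sst_exclusion, 1)
t2_final = t2_before_sst_exclusion - t2_no_correct_sentence

print(f"T1: {eligible_pct}% eligible, {t1_sst_exclusion_pct}% SST exclusion, final N = {t1_final}")
print(f"T2: {t2_sst_exclusion_pct}% SST exclusion, final N = {t2_final}")
```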

Sample characteristics at T1 and T2.

PTCI: Posttraumatic Cognitions Inventory; PCL: PTSD Checklist for DSM-5; QIDS: Quick Inventory of Depressive Symptomatology – Self-report; AST: Ambiguous Scenarios Task; EDEQ: Eating Disorder Examination Questionnaire; FSS: Fear of Spiders Screening; AQ: Acrophobia Questionnaire.

aInformation from two participants was excluded due to implausible values as the time since the event exceeded their reported age.

bThe exclusion of participants who formed no correct sentences on one of the SST versions was done separately for T1 and T2; thus, some participants in the final sample in T2 are excluded in T1.

cFor one participant, data on the LEC did not save and is therefore missing.

dOne participant dropped out after the QIDS, and thus, the sample for further questionnaires was N = 213 at T1. At T2, one participant was included who did not answer the questionnaires on T1 and thus demographic data is missing.

Psychometric properties of the Scrambled Sentences Task

Internal consistency at T1 was split-half [95% CI] = .88 [.84, .91] for the full SST, .78 [.71, .84] for version A, and .80 [.73, .85] for version B. Internal consistency at T2 was .90 [.86, .93] for the full SST, .80 [.73, .85] for version A, and .82 [.76, .87] for version B. Test–retest reliability (mean retest interval: 16.39 days) was ICC(3,1) [95% CI] = .81 [.75, .85] for the full SST, .75 [.68, .81] for version A, and .73 [.65, .79] for version B. Parallel versions reliability was .71 [.65, .76] for T1 and .84 [.79, .87] for T2. Reliability between versions across time points was as follows: for version A at T1 and B at T2, ICC(3,1) [95% CI] = .75 [.68, .80], and for version B at T1 and A at T2, .67 [.59, .74]. The cognitive load retention rates at T1 were 77.57% after the practice trials, 50.47% after the first application, and 61.68% after the second application, and for T2, 78.62% after the practice trials, 56.55% after the first application, and 57.24% after the second application. Finally, the error rates and thus the rate of excluded trials were as follows at T1: 19.15% for the full SST, 16.75% for version A, and 21.54% for version B. At T2, the error rates were 14.40% for the full SST, 13.28% for version A, and 15.52% for version B. For further details on error rates and analyses on stimulus level, see the section Exploratory Analyses and the Supplementary Material.
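To make the split-half index concrete, the following is a minimal sketch of one common variant: an odd-even split with Spearman-Brown correction, applied to synthetic binary trial scores. The exact splitting procedure used by the “psych” package in this study is not described here, so the split rule, the data, and the function names are assumptions for illustration only.

```python
import random


def pearson_r(a, b):
    """Pearson correlation between two equally long lists of numbers."""
    n = len(a)
    mean_a = sum(a) / n
    mean_b = sum(b) / n
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    var_a = sum((x - mean_a) ** 2 for x in a)
    var_b = sum((y - mean_b) ** 2 for y in b)
    return cov / (var_a * var_b) ** 0.5


def spearman_brown(r_half):
    """Step the half-test correlation up to full test length."""
    return 2 * r_half / (1 + r_half)


def split_half_reliability(scores):
    """Odd-even split-half reliability for a participants x trials matrix."""
    odd_totals = [sum(row[0::2]) for row in scores]
    even_totals = [sum(row[1::2]) for row in scores]
    return spearman_brown(pearson_r(odd_totals, even_totals))


# Synthetic data (NOT study data): 60 participants, 20 trials each,
# 1 = dysfunctional sentence, 0 = functional sentence.
rng = random.Random(7)
scores = []
for _ in range(60):
    bias = rng.uniform(0.05, 0.60)  # hypothetical person-level tendency
    scores.append([1 if rng.random() < bias else 0 for _ in range(20)])

reliability = split_half_reliability(scores)
print(f"Split-half reliability (Spearman-Brown corrected): {reliability:.2f}")
```

The correction matters because each half contains only half of the trials, so the raw correlation between halves understates the reliability of the full-length task.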

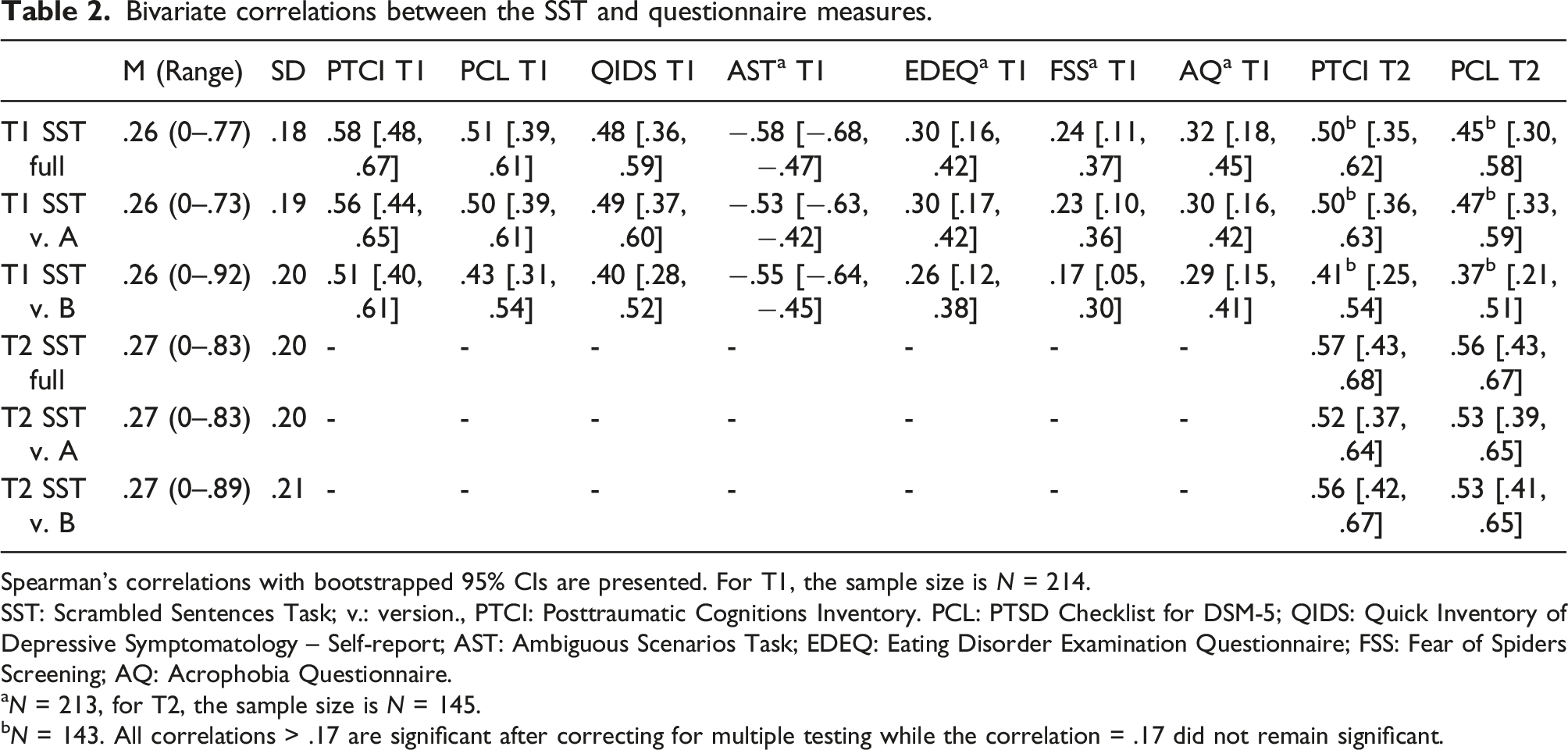

Convergent validity (T1 and T2) and divergent validity (T1)

Bivariate correlations between the SST and questionnaire measures.

Spearman’s correlations with bootstrapped 95% CIs are presented. For T1, the sample size is N = 214.

SST: Scrambled Sentences Task; v.: version; PTCI: Posttraumatic Cognitions Inventory; PCL: PTSD Checklist for DSM-5; QIDS: Quick Inventory of Depressive Symptomatology – Self-report; AST: Ambiguous Scenarios Task; EDEQ: Eating Disorder Examination Questionnaire; FSS: Fear of Spiders Screening; AQ: Acrophobia Questionnaire.

aN = 213. For T2, the sample size is N = 145.

bN = 143. All correlations > .17 are significant after correcting for multiple testing while the correlation = .17 did not remain significant.

Unique variance explained at T1 and T2

Results of stepwise multiple regression testing the unique variance on the concurrent PCL explained by the SST.

CIs for B are bootstrapped. T1: N = 214, T2: N = 144 (for T2, one participant was excluded due to missing values on “Age” and “Gender”). QIDS: Quick Inventory of Depressive Symptomatology – Self-report; PTCI: Posttraumatic Cognitions Inventory; SST: Scrambled Sentences Task score across both versions.

Exploratory analyses—Posttraumatic Cognitions Inventory correlations

Exploratory analyses of associations between the PCL-5, the PTCI, and divergent validity measures.

Bivariate Spearman’s correlations are presented. PTCI: Posttraumatic Cognitions Inventory; PCL: PTSD Checklist for DSM-5; QIDS: Quick Inventory of Depressive Symptomatology – Self-report; AST: Ambiguous Scenarios Task; EDEQ: Eating Disorder Examination Questionnaire; FSS: Fear of Spiders Screening; AQ: Acrophobia Questionnaire.

aN = 213. All correlations are statistically significant at p < .001, with the exception of those with r = .18 and r = .17, which are statistically significant at p < .01 and p < .05, respectively.

Exploratory analyses—Analyses of dropout

Descriptive statistics for the analyses of participants who dropped out.

Exploratory analyses—Rehearsal effects

In further exploratory analyses, we examined rehearsal effects. Investigating error rates at T1, we found that participants were less likely to make an error in the second than in the first application of the SST, as indicated by a significant main effect of application, OR [95% CI] = .62 [.46, .81], SE = .14, p = .001 (error rate first application: 21.92%, second application: 16.38%). This effect did not differ between the two SST versions as indicated by a non-significant main effect of version, OR = 1.53 [.62, 3.80], SE = .46, p = .356, and a non-significant version × application two-way interaction, OR = 1.01 [.62, 1.65], SE = .25, p = .968 (error rate version A: 16.75%, version B: 21.54%). At T2, there were no significant main effects or interactions (ps > .074), indicating that error rates did not differ between the first and second application, or between versions (overall error rate: 14.40%).
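As a reading aid for the odds ratios, the following sketch shows how a logistic regression coefficient on the log-odds scale maps onto an OR with a Wald-type interval. It assumes that the reported SE refers to the log-odds scale and that a Wald interval is a reasonable approximation; the interval method actually used is not specified here, so small deviations from the reported bounds are to be expected. The function name is hypothetical.

```python
import math


def odds_ratio_with_wald_ci(log_odds, se, z=1.96):
    """Exponentiate a log-odds coefficient and its Wald interval bounds."""
    return (
        math.exp(log_odds),
        math.exp(log_odds - z * se),
        math.exp(log_odds + z * se),
    )


# Main effect of application at T1: OR = .62, SE = .14 (values from the text;
# the coefficient is recovered here by taking the log of the rounded OR).
log_or = math.log(0.62)
odds_ratio, ci_lower, ci_upper = odds_ratio_with_wald_ci(log_or, 0.14)
print(f"OR = {odds_ratio:.2f}, approximate 95% CI [{ci_lower:.2f}, {ci_upper:.2f}]")
```

An OR below 1 with an interval that excludes 1 corresponds to lower odds of an error in the second application, in line with the significant main effect reported above.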

Investigating the rates of dysfunctional sentences at T1, we found no significant main effects or interactions (ps > .220), indicating that the likelihood of participants generating sentences in a dysfunctional way did not differ between the first and second application (first: 25.94%, second: 25.23%) or between versions (dysfunctional sentences: version A: 25.85%, version B: 25.28%). The same pattern of results was found for T2 (ps > .341, overall percentage of dysfunctional sentences: 27.21%). In summary, we found that at T1, there was an initial decrease in the error rate after the first application. This effect was not found at T2. Further, rates of dysfunctional sentences did not differ between versions or applications within each assessment time point.

Investigating error rates across time, we found that participants were more likely to make errors on the SST in the first application (error rate first application: 21.92%) compared to the later applications: application 2 (error rate: 16.38%), OR [95% CI] = 1.60 [1.37, 1.87], SE = .10, p < .001, application 3 (error rate: 14.90%), OR = 1.63 [1.35, 1.96], SE = .12, p < .001, and application 4 (error rate: 13.90%), OR = 1.82 [1.50, 2.20], SE = .13, p < .001. However, after the initial decrease, the error rate remained relatively stable as indicated by non-significant pairwise comparisons between the other applications (ps > .310). We further found that participants were less likely to form negative sentences in application 2 (negativity rate: 25.23%) compared to application 4 (negativity rate: 27.51%), OR = .83 [.69, .99], SE = .06, p = .035. Finally, differences between the other applications were non-significant (negativity rate application 1: 25.94%, application 3: 26.90%, ps > .078), indicating that there was a slight increase in negativity rates from the second application at T1 to the second application at T2, but not between the others.

Discussion

The aim of the present study was to develop a novel SST version to assess DAs in the context of potentially traumatic life events and to examine its psychometric properties in a sample exposed to such an event. Further, we aimed to investigate whether the SST would explain unique variance in concurrent PTSD-related symptoms above self-report. Finally, we aimed to develop two parallel versions of the SST to be used in experimental settings.

As expected, the SST was strongly correlated with both concurrent PTSD-related symptoms and PTSD-related DAs. This pattern was found at both time points, indicating good convergent validity. Concerning divergent validity, we found the expected small correlations with symptoms of eating disorders and specific phobias. However, we also found large correlations with both symptoms of depression and depression-related interpretation biases, thus calling the SST’s divergent validity into question. Further, the SST was a significant predictor of concurrent PTSD symptoms when taking the PTCI into account, which indicates that the SST could explain variance in PTSD symptoms beyond self-reported DAs. Concerning reliability, we found good internal consistency, good test–retest reliability, and good consistency between both parallel versions of the SST. In exploratory analyses, we further found that participants made fewer errors on the SST after repeated application, yet their bias score remained stable.

To summarize, results on the SST’s psychometric properties are mostly as expected and mirror those of the earlier study in the context of negative life events. That is, Viviani, Mahler et al. (2018) found that the SST was highly correlated with measures of PTSD-related DAs such as the PTCI; however, the association with depression was also strong. Further, our results are in line with those of a recent meta-analysis investigating the psychometric properties of the SST (Würtz et al., 2022). Here, it was found that the SST generally had good convergent validity as indicated by overall high correlations between the SST and symptoms of the target disorders, yet correlations with unspecific symptom measures were also high. Further, our findings on the SST’s reliability are in line with Würtz et al. (2022) as we replicated good internal consistency and retest reliability. The latter is an important addition to previous research as previous findings on the temporal stability of DAs measured via the SST have been limited to only a small number of studies.

Broadening the scope of our interpretations, our results allow the examination of the SST’s divergent validity in relation to the comorbidity of symptoms of certain disorders. While we found the SST to be strongly correlated with symptoms of both PTSD and depression, correlations with specific phobias and eating disorders were overall weaker. Regarding the former, both depression and PTSD are common disorders following a traumatic event and frequently occur comorbidly while sharing common symptom clusters (e.g., concentration problems or negative affect) (Ehring et al., 2006; Lewis et al., 2019; Marmar et al., 2015). Our findings might therefore indicate that this comorbidity could limit the SST’s divergent validity specifically in relation to PTSD and depression. Conversely, divergent validity may be better in relation to disorders that co-occur less frequently with PTSD (Mitchell et al., 2012). However, in exploratory analyses, we found similar patterns of correlations between the PTCI (and also the PCL) and measures included for assessing divergent validity, suggesting that the limited divergent validity of the SST (in relation to PTSD vs. depression) is not necessarily inherent to the SST itself but may rather reflect a general association between trauma-related DAs and symptoms of this psychopathology following a potentially traumatic life event (Ehring et al., 2006, 2008). Hence, while the aim of our study was to validate an SST assessing DAs specific to PTSD, we interpret our findings as indicating that our SST may currently be best conceptualized as assessing trauma-related DAs. These DAs in turn are associated with symptoms of PTSD, but also symptoms of depression. This could be a result of comorbidity and shared symptoms between depression and PTSD.
Additionally, although results of a recent network analysis amongst children and adolescents suggest that both syndromes have distinct features, trauma-related DAs were found to be equally associated with both depression and PTSD (de Haan et al., 2020). Future work using a prospective design could investigate the relative specificity of the current SST and the original depression-focused version, or specific subsets of their items, in predicting depression versus PTSD outcomes following potentially traumatic life events (for an approach to investigate the SST’s specificity, see Krahé et al., 2022).

Moving on to a further aim of our study, we found preliminary evidence that the SST predicts variance in PTSD-related symptoms beyond self-report as measured via the PTCI. This finding suggests that the SST adds value to the assessment of DAs solely via self-report, which is in line with other studies investigating the benefit of non-self-report measures to assess trauma-related DAs (Lindgren et al., 2013). Our results also support the SST’s general value in the investigation of DAs and interpretation biases. To illustrate, in the context of depression, the inclusion of the cognitive load appears to provide the SST with benefits over simple self-report (e.g., the SST itself without a cognitive load), for example, in predicting a future diagnosis of depression (Rude et al., 2003) or showing differential response to cognitive bias modification versus CBT (Bowler et al., 2012). One explanation for this is that the cognitive load prevents suppression of dysfunctional cognitions that participants would otherwise engage in. More generally, these findings highlight the potential utility of developing DA assessments that tap into more automatic processes than self-report, such as the SST with a cognitive load, and including them in further studies. This may be particularly relevant for interventional studies where demand effects can be especially strong, or longitudinal studies where there is an interest in measuring an underlying cognitive vulnerability while someone is not currently experiencing symptoms of psychopathology. In fact, how useful such measures are in different contexts is an empirical question that can only be addressed by including them in a range of different kinds of studies.

Overall, our findings indicate the potential value and utility of the SST as a measure of DAs in the context of potentially traumatic life events or PTSD, particularly when also taking into account the broader literature on assessing dysfunctional interpretational processing in the context of emotional psychopathology. To illustrate, in earlier studies, the SST has successfully been applied together with eye-tracking measures enabling the simultaneous investigation of DAs/interpretation biases and attentional biases (Sanchez et al., 2015; Sfärlea et al., 2020). While both types of biases play an important role in PTSD (Bomyea et al., 2017) and emotional psychopathology in general, there is little evidence for their interplay. Hence, applying the SST together with measures of other cognitive biases has the potential to advance the field of PTSD and anxiety-relevant research by facilitating the investigation of combined cognitive biases, a strand of research that has made important contributions to the investigation of other disorders (i.e., the combined cognitive bias hypothesis, see Everaert et al., 2017). Further, in a recent study (O’Connor et al., 2021), the SST was compared to purely performance-based (indirect) and self-report measures of interpretation biases. Results showed that the SST’s correlation with disorder-related symptoms was stronger than that for unrelated symptoms. Interestingly, the SST was uncorrelated with fully indirect and only moderately correlated with self-report measures. These results suggest that the SST is a highly useful tool for the assessment of interpretational processing, while the SST seems to be neither a fully direct nor a fully indirect measure (O’Connor et al., 2021). While these results were found in the context of depression, further studies should investigate whether similar results concerning the relationship between the SST and other measures of DAs can be found in the context of negative life events and PTSD.

Previous research has also demonstrated the SST’s potential to validly assess changes in DAs, for example, in experimental settings such as in the context of CBM (Bradatsch et al., 2020), where it has been found that the cognitive load in particular enables the SST to detect effects of CBM training paradigms (Bowler et al., 2012). This potential is further underlined by our promising findings on the psychometric properties of the SST, namely, good retest reliability and the availability of two parallel versions which can be used for the repeated assessment of DAs, and our exploratory findings that although participants make fewer errors after repeated application, their bias score remains stable in the absence of a manipulation. This is especially valuable in research on the manipulation of DAs, for example, in analog or actual trauma. While near-transfer effects, that is, effects of CBM on an Encoding Recognition Task (Mathews & Mackintosh, 2000), are commonly found, the investigation of far-transfer effects on, for example, symptom measures provides a less consistent picture (Woud, Zlomuzica et al., 2018; Würtz et al., 2021). This makes it necessary to cross-validate and triangulate current findings across different measures of DAs to shed light on these issues and potentially open novel routes for the improvement of such paradigms (Munafo & Davey Smith, 2018).

Our results need to be interpreted in the context of a number of limitations. A first limitation of our study is the dropout of approximately 30% from T1 to T2. While our estimations of test–retest reliability were based on a reasonably sized sub-sample, our findings still need to be interpreted cautiously as a potentially non-random dropout could have influenced the results.

As an initial validation study, this study was carried out in an online setting to recruit a large sample. We therefore could not ensure that all participants were highly concentrated during the study, and we could not standardize the application of the SST concerning technical aspects such as screen size or the mouse and browser used. This limitation becomes more apparent considering previously mentioned differences in the presentation of the SST between the web browsers. While we do not consider this difference to be a threat to the validity of our results, which in fact indicate the robustness of the basic task given a potentially sub-optimal implementation, it is possible that a cleaner pattern of results would be found in a more controlled lab administration of this SST. This limitation also becomes relevant considering the deviation from the pre-registered participant exclusion criterion based on the finding that the error rate was higher than expected. While most results did not differ between our pre-registered (more than 30% errors on the SST) and our post-hoc applied participant exclusion criterion (no valid sentences on the SST), our finding that the SST explains variance beyond the PTCI was only found using the more lenient post-hoc criterion. It is encouraging that a strict accuracy criterion is not required to use the measure, as excluding participants based on a strict error rate criterion poses the risk of reducing the generalizability of results obtained and the utility of the SST as a measure. However, the difference in results that this exclusion criterion made means we have to be more cautious about the robustness of our findings.

Some limitations concern our sample. Using the LEC, we aimed to include only participants who had been exposed to a self-reported, potentially traumatic negative life event, but it is unclear whether these events would always fulfill DSM-5 criteria for a traumatic event. Further, our sample was fairly homogeneous, consisting mostly of female participants who were native speakers and had completed at least A-level education. Results from this study therefore might not be generalizable to more diverse samples, especially samples less fluent in German. Finally, our sample consisted of participants with, on average, mild depression and PTSD symptoms on a subclinical level. Our results are therefore potentially not generalizable to participants with higher levels of psychopathology such as patients with PTSD and/or depression. We need to be particularly cautious in relation to the longitudinal data given our exploratory analyses indicating that participants who dropped out made on average more errors and had slightly higher symptoms of PTSD compared to those who completed the study.

In conclusion, we found that the SST for assessing DAs in the context of potentially traumatic life events had good convergent validity, while findings on the divergent validity were more mixed, especially for symptoms of depression. Further results indicated the SST’s capability of explaining variance in PTSD-related symptoms beyond self-report, and its utility was further underlined by our findings of good internal consistency and test–retest reliability. Finally, we found that the two versions were approximately parallel, and applying the SST repeatedly provided a stable assessment of DAs, suggesting that the SST is a potentially valuable tool in research on the experimental manipulation of DAs in the context of potentially traumatic life events and analog trauma.

Supplemental Material

Supplemental Material for The world dangerous it is—The scrambled sentences task in the context of posttraumatic stress symptoms by Felix Würtz, Simon E Blackwell, Jürgen Margraf and Marcella L Woud in Journal of Experimental Psychopathology

Footnotes

Acknowledgments

We would like to thank Dr. Tobias Teismann and Dr. Jan C. Cwik for their feedback on the stimuli for the SST. We would further like to thank Dr. Christian Leson for his support with the server maintenance. We acknowledge support by the Open Access Publication Funds of the Ruhr-Universität Bochum.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

Felix Würtz is supported by a doctoral scholarship of the Studienstiftung des deutschen Volkes. Marcella L. Woud is funded by the Deutsche Forschungsgemeinschaft (DFG) via the Emmy Noether Programme (WO 2018/3-1). Jürgen Margraf and Marcella L. Woud are Principal Investigators of a project within the SFB 1280 “Extinction Learning” awarded by the DFG (Projectnr.: 316803389). Project title: “The impact of modification of stimulus-outcome contingencies on extinction and exposure in anxiety disorders.” The funding bodies had no role in the design of the study, the collection, analysis, and interpretation of the data, or the preparation of the manuscript.

Ethics approval statement

All study procedures were approved by the local ethics committee of the Psychology department at Ruhr-University Bochum (approval number: 596).

Data availability

Supplemental Material

Supplemental material for this article is available online.
