Abstract

Emotional reactivity and recovery are crucial for maintaining well-being. It remains unknown, however, to what extent emotion modulates the time course of recovery assessed using a simple categorization task and how this varies based on individual differences in worry. To address these questions, 35 participants viewed emotional pictures, followed by abstract greeble targets, which were to be categorized. Greebles were presented between 100 ms and 4,000 ms after picture offset. Physiological measures including skin conductance level and the corrugator supercilii were recorded and served as indicators of responsivity to emotional pictures. Measures of reaction time (RT) and accuracy scores were taken as indicators of the impact of emotion on facilitation or interference to the greeble target. Effects of interference and facilitation were observed up to 4,000 ms after emotional pictures on RT and accuracy scores. High worry was associated with greater (1) corrugator supercilii and skin conductance level to negative versus positive and neutral pictures and (2) interference from emotional pictures on accuracy scores. Overall, these findings suggest that subsequent processing is still impacted up to 4,000 ms after the offset of emotional pictures, particularly for negative events in individuals with high worry.

Introduction

Emotional information takes precedence and initiates a cascade of typical behavioral and psychophysiological response tendencies (Davidson, 1998; Frijda, 1986; Lang & Bradley, 2010; Yiend, 2010). Most research has examined how behavioral and physiological response profiles unfold from the onset to the offset of an emotional stimulus. However, an emerging literature has begun to address the dynamic nature of emotional stimulus processing (Davidson, 1998; Kuppens, 2015). In particular, recent work has focused on examining how emotional processing spills over and impacts responding to subsequent task-relevant targets (Bocanegra & Zeelenberg, 2009; Brown et al., 2012; Ciesielski et al., 2010; Grupe et al., 2018; Ihssen & Keil, 2009; Ihssen et al., 2007; Jackson et al., 2003; Kennedy & Most, 2015; Kennedy et al., 2014; Larson et al., 2007; Morriss et al., 2013; Most et al., 2007; Weinberg & Hajcak, 2011). Quantifying emotional recovery via the level of modulation on task-relevant targets that appear after task-irrelevant emotional stimuli may be useful in determining the lingering effect of emotion after offset of the emotion-eliciting event. For instance, interference or facilitation in responding to a following target can be considered a marker of continued processing of emotional stimuli, with the former disrupting processing of following targets, while the latter enables processing of following targets.

A body of behavioral research using rapid serial visual presentation tasks has provided ample evidence that viewing emotional stimuli can both interfere with and facilitate the attentional processing of subsequently presented targets, depending on the temporal proximity between stimuli (Bocanegra & Zeelenberg, 2009; Ciesielski et al., 2010; Ihssen & Keil, 2009; Kennedy & Most, 2015; Most et al., 2007). Bocanegra and Zeelenberg (2009) found that emotional words impaired accuracy on subsequent neutral word targets when distances in time were as small as 50 and 500 ms, while longer time intervals of 1,000 ms improved accuracy. Similarly, Ciesielski et al. (2010) observed that emotional picture distracters, particularly those exhibiting erotic and disgusting content, reduced the participants’ accuracy on a subsequent task during smaller distracter-target lags, including 200, 400, and 600 ms. Longer lags, that is, 800 ms, however, produced facilitation effects in accuracy. In addition, studies using event-related potentials (ERPs) have demonstrated that emotional pictures modulate specific target ERP waveform components over time, indicating that emotional stimuli affect various stages of subsequent target processing (Brown et al., 2012; Ihssen et al., 2007; Kennedy et al., 2014; Weinberg & Hajcak, 2011). For example, Ihssen et al. (2007) found that arousing images disrupted processing of lexical targets, as shown by slower reaction time (RT) and reduced amplitude on two target-locked ERP components: (1) the early attention-specific N1, observed over occipital sites, and time locked to 184–284 ms and (2) the later late positive potential, observed over parieto-central regions and time locked to 412–712 ms. These effects occurred over three different temporal intervals between the emotional image and target, that is, 80, 200, and 440 ms. Furthermore, Weinberg and Hajcak (2011) showed that emotional images slowed RTs and attenuated subsequent P300 amplitude to shape targets which directly followed the images. The electrophysiological findings from these studies overlap with the behavioral research presented above, whereby shorter temporal proximities between an emotional prime and target result in interference.

The extent to which emotional stimuli interfere with and facilitate processing of subsequent stimuli has been found to vary substantially based on individual differences. For example, individuals who report feeling more negative affect show greater interference from emotional images on subsequent targets (Kennedy & Most, 2015). In addition, high trait absorption, that is, the tendency to be engaged by sensory events, predicted greater late positive potential activity to emotional images and reduced P300 activity to subsequent auditory probes presented 3,000–5,000 ms after image offset (Benning et al., 2015). Furthermore, Larson et al. (2007) found healthy participants with (1) depressive symptoms to show blunted startle responses to audio probes presented 1.5 s after positive pictures, compared to neutral pictures and (2) anxious symptoms to show potentiated startle to audio probes presented 1.5 s after unpleasant and pleasant pictures, relative to neutral pictures. A wealth of literature indeed demonstrates that patients with depression and anxiety ruminate and worry over past emotional events (Nolen-Hoeksema et al., 2008), which may be linked to the dysfunction of emotional recovery mechanisms, that is, rumination or worry over emotional events may disrupt completion of other day-to-day tasks. Therefore, it may be informative to unravel how individual differences in worrying predict emotional recovery, to help understand potential mechanisms and treatment targets in associated clinical populations such as depression and anxiety (Trull et al., 2015).

In the study reported here, we used behavioral and psychophysiological measures in conjunction with a categorization task to investigate (1) the extent of recovery from arousing negative and positive stimuli, relative to neutral stimuli and (2) the impact of individual differences in worry upon emotional recovery speed. The experimental task consisted of presenting emotional images, followed by a probe stimulus consisting of a greeble target (a design similar to that of Morriss et al., 2013). Participants were instructed to identify the type of greeble and respond accordingly. In addition, we manipulated the inter-stimulus interval between the images and greeble target in the form of a fixation cross presented for a random period of time varying between 100 ms and 1,000 ms; 1,100 ms and 2,000 ms; 2,100 ms and 3,000 ms; and 3,100 ms and 4,000 ms. Psychophysiological responses (e.g., skin conductance level and corrugator supercilii) to the emotional images were recorded, to serve as metrics of emotional reactivity. Behavioral responses such as reaction time (RT) and accuracy to the greeble targets were recorded, to serve as metrics of emotional recovery, quantified as the level of interference or facilitation on subsequent greeble targets. We used varying temporal intervals to examine how valence and arousal would impact the temporality of emotional recovery speed. We opted for shorter and longer temporal intervals because of the paucity of research examining the impact of preceding emotion-laden stimuli on the processing of targets over a timescale of several seconds. In doing so, we aimed to capture how emotional stimuli might disrupt cognitive processes related to categorizing the target. We used International Affective Picture System (IAPS) images (Lang et al., 2005) as emotional stimuli because they have been shown to induce emotion (Lang & Bradley, 2010), reliably modulate psychophysiological responding and impact subsequent task processing (Brown et al., 2012; Ihssen et al., 2007; Weinberg & Hajcak, 2011). Our subset of IAPS pictures consisted of negative and positive emotional pictures that were matched in arousal, as well as neutral pictures, to assess the influence of valence and arousal upon recovery outcomes. We used greeble stimuli because they are abstract and intrinsically neutral.

We had four main hypotheses. Firstly, we expected arousing pictures, compared to neutral pictures, to elicit greater psychophysiological responses (Lang & Bradley, 2010). Secondly, we expected arousing (vs. non-arousing) pictures to interfere with the subsequent processing of greeble targets, as shown by slower RTs and reduced accuracy on following greeble targets (Ihssen et al., 2007; Weinberg & Hajcak, 2011). Thirdly, modulation of RT and accuracy would be contingent upon the temporal interval between the arousing picture and target. We proposed that interference between an arousing image and target will occur over shorter temporal intervals due to increased competition between the image and the target, thus suggesting a slower recovery speed to emotional images, relative to neutral images (Bocanegra & Zeelenberg, 2009; Ciesielski et al., 2010; Kennedy & Most, 2015; Most et al., 2007; Weinberg & Hajcak, 2011). We expected this to be shown by slower RTs and reduced accuracy to greeble targets. Based on the behavioral findings of Bocanegra and Zeelenberg (2009) and Ciesielski et al. (2010), we predicted that facilitation will ensue when the temporal interval between an arousing picture and target is longer, as the competition between the image and the target will be reduced. We anticipated this to be evidenced by faster RTs and better accuracy to greeble targets. Lastly, we examined how individual differences in worry and anxiety could predict emotional reactivity to the IAPS and emotional recovery, indexed by RTs and accuracy to the greeble target. We expected participants high in worry, compared to participants low in worry, to exhibit greater emotional reactivity to the IAPS and slower emotional recovery, for example, greater impact of emotional images on subsequent greeble targets (Benning et al., 2015; Kennedy & Most, 2015; Larson et al., 2007).

Method

Participants

Thirty-five participants from the local area were recruited for this study (Mean age = 23.4 years, SD = 5.43 years, 19 females and 16 males, 2 left handed and 33 right handed). All participants provided written informed consent and received £10 payment for their participation. The procedure was approved by the University of Reading School of Psychology and Clinical Language Sciences Research Ethics Committee. The sample size was not based on a formal power analysis. We based our sample size on previous studies examining effects of emotion on attention using ERPs (Brown et al., 2012; Ihssen et al., 2007).

Stimuli

A total of 140 pictures were selected from the IAPS (Lang et al., 2005). Based on valence (a response of 1 represented “very unpleasant” and 9 represented “very pleasant”) and arousal ratings (a response of 1 represented “very calm” and 9 represented “very excited”) from the IAPS set, we identified 40 negative (M = 2.16, SD = .39), 40 neutral (M = 4.88, SD = .32), and 40 positive (M = 7.45, SD = .39) pictures. The positive and negative pictures were matched on arousal (negative pictures M = 6.04, SD = .56; neutral M = 3.172, SD = .49; and positive M = 6.076, SD = .53). The positive, neutral, and negative images were matched on picture luminosity, complexity, and social content of the scenes depicted.

Abstract figures, called “greebles” (Gauthier & Tarr, 1997), were used for the judgment task. These greebles share common spatial configurations and can be easily divided into two categories. For the purposes of the current study, we categorized the greebles into two groups (Group A and Group B) based on the orientation of their limbs, selecting a total of 12 greebles. Four greebles were randomly allocated to a valence picture condition, with two of each type (A or B) of greeble.

Task design

All of the tasks were designed using E-Prime 2.0 software (Psychology Software Tools Ltd, Pittsburgh, Pennsylvania, USA). Within each task, the experimental trials were randomized and the response button press on the mouse was counterbalanced. Tasks were presented on a 17-inch LCD flat screen monitor, which had a 60 Hz refresh rate.

Emotional recovery task

Participants were required to passively view emotional pictures and identify the type of a following greeble target by pressing the appropriate button on the mouse. To avoid any spatial attention confounds, a fixation cross was presented between the prime and the target. This fixation period varied in timescale to assess the extent of engagement to the emotional picture prime and subsequent greeble target. The emotional recovery task consisted of 140 trials in a 3 (Valence: negative, neutral, and positive) × 4 (Time: 100–1,000 ms, 1,100–2,000 ms, 2,100–3,000 ms, and 3,100–4,000 ms) design. Each trial comprised a 1,000 ms fixation cross, a 5,000 ms picture prime (negative, neutral, or positive), a fixation cross lasting 100–1,000 ms, 1,100–2,000 ms, 2,100–3,000 ms, or 3,100–4,000 ms, a 500 ms neutral greeble target (A or B), and a 2,000 ms response window (see Figure 1).

A sample trial from the emotional recovery task: A fixation cross was presented at the center of the screen to direct participants’ attention. Next, an IAPS image was presented, followed by a variable temporal interval. Lastly, a greeble target was briefly presented. Participants were instructed to identify the type of greeble target as quickly and as accurately as possible. IAPS: International Affective Picture System.
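The trial timeline described above can be sketched in a few lines of Python. This is only an illustration and not the E-Prime script used in the study: the function name `build_trial`, the event labels, and the uniform sampling of the interval within each bin are assumptions. The events are listed in the order given in the text; whether the response window overlapped the target is not stated.

```python
import random

# Inter-stimulus interval (ISI) bins in ms, as listed in the task description.
ISI_BINS_MS = [(100, 1000), (1100, 2000), (2100, 3000), (3100, 4000)]


def build_trial(valence, bin_index, rng):
    """Return one trial as a list of (event, duration in ms) pairs."""
    low, high = ISI_BINS_MS[bin_index]
    # Assumption: the interval is drawn uniformly within its bin.
    isi = rng.randint(low, high)
    return [
        ("fixation", 1000),
        ("picture_" + valence, 5000),
        ("isi_fixation", isi),
        ("greeble_target", 500),
        ("response_window", 2000),
    ]


rng = random.Random(0)  # fixed seed so the sketch is reproducible
trial = build_trial("negative", 1, rng)
isi_ms = trial[2][1]
total_ms = sum(duration for _, duration in trial)
```

Under these assumptions a trial lasts between 8,600 ms and 12,500 ms, depending on the interval bin.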

IAPS rating task

A total of 140 IAPS pictures from the main experimental task were presented again. The pictures were presented in a random order for 2 s before displaying the valence scale, followed by the arousal scale, with the next trial starting after participants completed both ratings using the keyboard. Each scale consisted of a 9-point Likert-type scale, where participants were given instructions to rate valence, that is, “how positive or negative you felt in response to the picture” and arousal, that is, “the extent to which you felt calm or excited in response to the pictures.” For the valence ratings, a response of 9 represented “very pleasant” and 1 represented “very unpleasant,” while for arousal 1 represented “very calm” and 9 represented “very excited” (Lang et al., 1999).

Questionnaires

To assess anxious disposition, participants were given trait anxiety and worry questionnaires to complete: Spielberger State-Trait Anxiety Inventory—Trait Version (STAIX-2; Spielberger et al., 1983) and the Penn State Worry Questionnaire (PSWQ; Meyer et al., 1990). We were specifically interested in whether markers of reactivity and recovery would be differentially impacted by STAIX-2 and PSWQ. Similar distributions and internal reliability of scores were found for the anxiety measures, STAIX-2 (M = 40.24; SD = 9.28; range = 27–65; α = .90) and PSWQ (M = 45.32; SD = 16; range = 18–76; α = .95).

Procedure

Upon arrival, participants were informed of the experimental procedure and asked to complete a consent form. Participants were then given 12 practice trials of the emotional recovery task. Following this, sensors were placed on the corrugator supercilii, zygomaticus major, and middle of the forehead (which served as a ground). In addition, on the participants’ nondominant hand, a pulse transducer was attached to the distal phalange of the ring finger and two skin conductance sensors were attached to the distal phalanges of the index and middle fingers. Participants were seated in a sound-attenuated room in front of a computer monitor with the distance between the eyes and the computer screen fixed at approximately 60 cm. Next, participants completed the emotional recovery task. After completion of the emotional recovery task, all physiological sensors were removed. Lastly, participants performed the IAPS rating task and self-report questionnaires on the computer.

Physiological measures

Physiological recordings were taken across the emotional recovery task. Recordings were obtained using AD Instruments (AD Instruments Ltd, Chalgrove, Oxfordshire, UK) hardware and software. An ML138 Bio Amp connected to an ML870 PowerLab Unit Model 8/30 amplified the signal for all physiological measures, which were digitized through a 16-bit A/D converter at 1,000 Hz. Pulse-derived interbeat interval was measured using a MLT1010 Electric Pulse Transducer (not analyzed here). Skin conductance level was measured with dry ML116F Bipolar Finger Electrodes. A constant voltage of 22 mVrms at 75 Hz was passed through the electrodes, which were connected to an ML116 GSR Amp. Facial EMG measurements of the left zygomaticus major (not analyzed here) and corrugator supercilii muscles were obtained using two pairs of 4 mm Ag/AgCl bipolar surface electrodes connected to the ML138 Bio Amp. The centers of each pair of bipolar surface electrodes were approximately 15 mm apart. The reference electrode was a singular 8 mm Ag/AgCl electrode, placed upon the middle of the forehead, and connected to the ML138 Bio Amp. Before placing the EMG sensors the skin site was cleaned with distilled water and slightly abraded with isopropyl alcohol skin prep pads, to reduce skin impedance to an acceptable level (below 20 kΩ).

Data reduction and analysis

RTs in the emotional recovery task were scored from correct responses and only those RTs above 250 ms and below 1,500 ms were included (4,204 of 4,320). Accuracy scores from the emotional recovery task were calculated by the proportion of greeble targets correctly identified.
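As a minimal sketch of this scoring step, the function below computes accuracy and a mean RT for one set of trials. The data layout (a list of dictionaries with `rt` and `correct` keys) and the name `score_behaviour` are hypothetical, and the sketch assumes that accuracy is computed over all trials while the RT window applies only to correct responses.

```python
def score_behaviour(trials, rt_min=250, rt_max=1500):
    """Score one condition: proportion correct and mean RT of valid trials.

    trials: list of dicts with 'rt' (ms) and 'correct' (bool).
    """
    # Accuracy: proportion of greeble targets correctly identified.
    accuracy = sum(1 for t in trials if t["correct"]) / len(trials)
    # RTs: correct responses only, strictly above rt_min and below rt_max.
    valid_rts = [
        t["rt"] for t in trials
        if t["correct"] and rt_min < t["rt"] < rt_max
    ]
    mean_rt = sum(valid_rts) / len(valid_rts) if valid_rts else None
    return accuracy, mean_rt


example_trials = [
    {"rt": 600, "correct": True},
    {"rt": 200, "correct": True},   # too fast: excluded from RT
    {"rt": 800, "correct": False},  # error: excluded from RT
    {"rt": 700, "correct": True},
]
accuracy, mean_rt = score_behaviour(example_trials)
```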

The physiological parameters were extracted using AD Instruments software. Raw corrugator was root mean squared online. No transformation or detection method was required for skin conductance level. We extracted baseline-corrected second by second means over the course of the picture (5 s) for corrugator supercilii and skin conductance level. Baseline mean values were taken 1 s before each trial began. A macro program was used to extract values for each metric, condition and time point, by taking the weighted data point mean from each second window. Trials with artifacts due to motion or noise were scored out (5 of 3,960). All subject data were then z-scored to reduce individual difference variability in responsivity. Two subjects were removed from the physiology analysis because of excessive movement, thus, leaving a total of 33 participants.
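The reduction steps above (baseline correction, second-by-second means, and within-subject z-scoring) might look roughly as follows. This is a sketch under stated assumptions rather than the macro used in the study: it uses a plain rather than weighted mean, takes the baseline and picture samples as separate inputs, and z-scores with the population standard deviation. The function names are hypothetical.

```python
from statistics import mean, pstdev


def baseline_corrected_means(baseline, picture, rate_hz=1000):
    """Mean of each 1 s window of `picture` minus the mean of `baseline`."""
    base = mean(baseline)
    n_seconds = len(picture) // rate_hz
    return [
        mean(picture[s * rate_hz:(s + 1) * rate_hz]) - base
        for s in range(n_seconds)
    ]


def z_score(values):
    """Standardise one subject's values (assumes they are not all equal)."""
    m = mean(values)
    sd = pstdev(values)  # assumption: population SD
    return [(v - m) / sd for v in values]


# Toy signal sampled at 2 Hz to keep the example readable.
baseline = [1.0, 1.0]
picture = [2.0, 2.0, 3.0, 3.0, 4.0, 4.0]
per_second = baseline_corrected_means(baseline, picture, rate_hz=2)
z = z_score(per_second)
```

In practice the z-scoring would run over all of a subject's values across trials and conditions, not over a single trial as in this toy example.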

We used a series of multilevel models to assess the impact of emotion, PSWQ, and STAIX-2 on ratings of the pictures, physiological reactivity to pictures, and behavioral responses to the target. For ratings of pictures, we entered Emotion (negative, neutral, and positive) at level 1 and individual subjects at level 2, with PSWQ and STAIX-2 entered as individual difference predictor variables. In addition, for corrugator supercilii activity and skin conductance level to the pictures, we entered Emotion (negative, neutral, and positive) and Post (post picture onset: 0–1,000, 1,000–2,000, 2,000–3,000, 3,000–4,000, and 4,000–5,000 ms) at level 1 and individual subjects at level 2, with PSWQ and STAIX-2 entered as individual difference predictor variables. Similarly, for RT and accuracy to the target, we entered Emotion (negative, neutral, and positive) and Time (greeble target onset: 100–1,000, 1,100–2,000, 2,100–3,000, and 3,100–4,000 ms) at level 1 and individual subjects at level 2, with PSWQ and STAIX-2 entered as individual difference predictor variables. For all models, we used a diagonal covariance matrix for level 1. Random effects included a random intercept for each individual subject, where a variance components covariance structure was used. Fixed effects included Emotion or Emotion and Time. We used a maximum likelihood estimator.
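For orientation, one way to write down the model for the behavioral outcomes is given below. It is a sketch inferred from the description above and not the authors' exact specification. Here $i$ indexes observations and $j$ subjects; $\mathbf{e}_{ij}$ and $\mathbf{t}_{ij}$ are dummy-coded Emotion and Time (or Post, for the physiological models); $W_j$ and $A_j$ are the PSWQ and STAIX-2 scores; and $c(i)$ is the condition cell of observation $i$. All symbols are notation introduced here, and the exact set of trait-by-condition terms is an assumption based on the interactions reported in the Results.

```latex
\begin{aligned}
y_{ij} &= \beta_0
        + \boldsymbol{\beta}_E^{\top}\mathbf{e}_{ij}
        + \boldsymbol{\beta}_T^{\top}\mathbf{t}_{ij}
        + \boldsymbol{\beta}_{ET}^{\top}(\mathbf{e}_{ij}\otimes\mathbf{t}_{ij}) \\
       &\quad + \beta_W W_j + \beta_A A_j
        + (\text{interactions of } W_j,\ A_j \text{ with } \mathbf{e}_{ij},\ \mathbf{t}_{ij})
        + u_j + \varepsilon_{ij}, \\
u_j &\sim \mathcal{N}(0, \sigma_u^2), \qquad
\varepsilon_{ij} \sim \mathcal{N}\bigl(0, \sigma_{c(i)}^2\bigr).
\end{aligned}
```

The random intercept $u_j$ corresponds to the variance components structure at level 2, and the condition-specific residual variances $\sigma_{c(i)}^2$ correspond to the diagonal covariance matrix at level 1.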

We report the specificity of one trait measure with respect to the other where a significant interaction was observed. Then, we performed follow-up pairwise comparisons on the estimated marginal means, adjusted for the predictor variables (PSWQ and STAIX-2). Any interaction with a trait measure was followed up with pairwise comparisons of the means between the conditions for the trait measure estimated at the specific values of +1 SD or −1 SD of the mean. Similar analyses have been reported elsewhere (Morriss, 2019; Morriss & McSorley, 2019).
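The follow-up at +1 SD and −1 SD of a trait measure can be illustrated with simple arithmetic. In a model with a mean-centred trait score, the predicted difference between two conditions at a given trait value is the condition coefficient plus the interaction coefficient multiplied by that value. The coefficients and trait scores below are made-up numbers for illustration only, and the function names are hypothetical.

```python
from statistics import mean, stdev


def condition_difference_at(trait_value, b_condition, b_interaction):
    """Predicted condition difference at a given (centred) trait value."""
    return b_condition + b_interaction * trait_value


def simple_effects(trait_scores, b_condition, b_interaction):
    """Condition differences estimated at -1 SD and +1 SD of the trait."""
    m = mean(trait_scores)
    centred = [s - m for s in trait_scores]
    sd = stdev(centred)  # sample SD of the trait scores
    return {
        "low": condition_difference_at(-sd, b_condition, b_interaction),
        "high": condition_difference_at(+sd, b_condition, b_interaction),
    }


# Hypothetical trait scores and coefficients, not values from the study.
scores = [30, 40, 50, 60]
effects = simple_effects(scores, b_condition=0.2, b_interaction=0.01)
```

With a positive interaction coefficient, the condition difference is larger at +1 SD than at −1 SD, which is the pattern described for high versus low worry.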

Results

Picture ratings

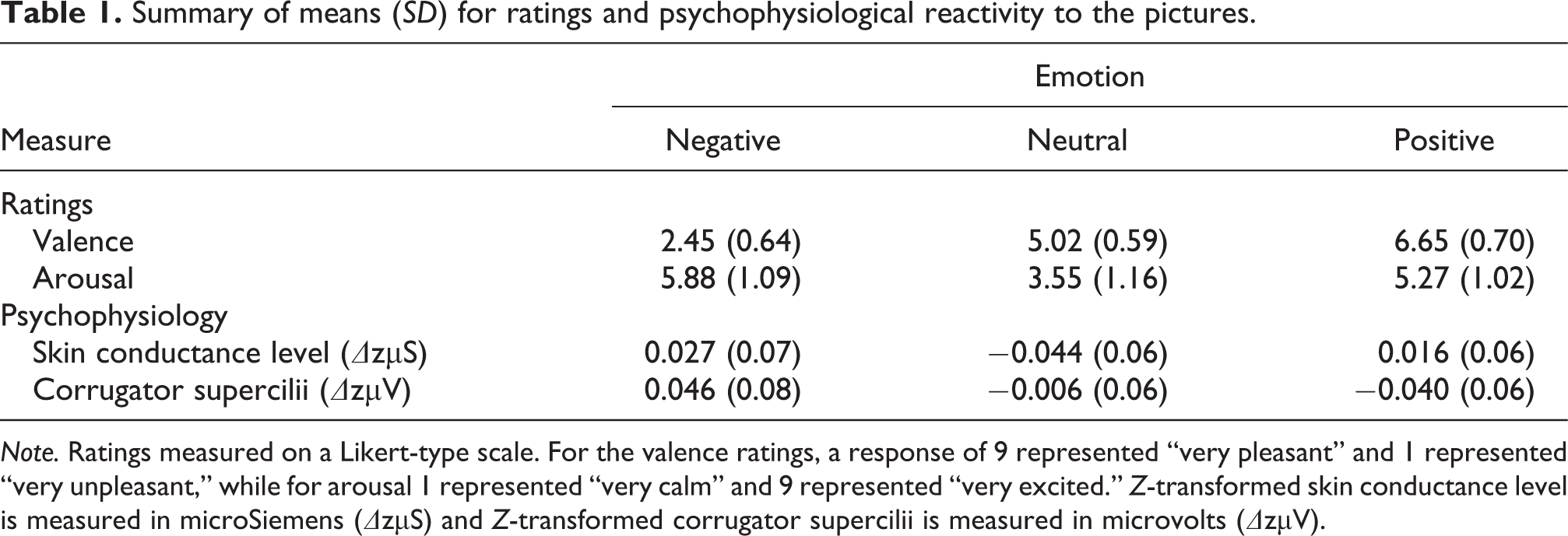

For valence ratings, the multilevel model revealed a significant main effect of Emotion, F(2,66.247) = 467.239, p < .001, and Emotion × PSWQ interaction, F(3,35) = 6.710, p = .001. Negative pictures were rated as the most unpleasant, positive pictures were rated as the most pleasant, and neutral pictures were rated as neither unpleasant nor pleasant, p’s < .001 (see Table 1). Follow-up analyses revealed that high PSWQ individuals rated negative pictures as more negative, and positive pictures as more positive, than low PSWQ individuals, p’s < .001 (see Figure 3(a)). A significant Emotion × STAIX-2 interaction was also found, F(3,35) = 3.696, p = .021, with similar patterns to those found with PSWQ, albeit less pronounced.

Summary of means (SD) for ratings and psychophysiological reactivity to the pictures.

Note. Ratings measured on a Likert-type scale. For the valence ratings, a response of 9 represented “very pleasant” and 1 represented “very unpleasant,” while for arousal 1 represented “very calm” and 9 represented “very excited.” Z-transformed skin conductance level is measured in microSiemens (ΔzµS) and Z-transformed corrugator supercilii is measured in microvolts (ΔzµV).

Furthermore, for arousal ratings we found a significant main effect of Emotion, F(2,72.129) = 44.534, p < .001. Both negative and positive picture arousal ratings were significantly higher than neutral, p < .001 (see Table 1). In addition, in our sample, negative picture arousal ratings were significantly higher than positive arousal picture ratings, p = .013 (see Table 1). There was no interaction between arousal ratings and PSWQ or STAIX-2, F < .1.

Physiological reactivity to the picture stimuli

Skin conductance level

For skin conductance level, significant main effects of Emotion, F(2,267.349) = 9.892, p < .001, and Post, F(4,182.477) = 18.945, p < .001, emerged from the multilevel model (see Table 1 and Figure 2). These effects were carried by higher skin conductance level to positive and negative pictures versus neutral pictures, p’s < .001. In addition, higher skin conductance level was observed 1–3 s after picture onset, relative to 3–5 s after picture onset, p’s < .05. Furthermore, there was an Emotion × PSWQ interaction, F(2, 267.349) = 8.893, p < .001 (see Figure 3(b)), where high PSWQ individuals exhibited higher skin conductance level to both negative and positive pictures, compared to neutral pictures, p’s < .005, while low PSWQ individuals did not show any significant differences for skin conductance level between the picture stimuli, p’s > .06. A similar pattern was observed for Emotion × STAIX-2, F(2, 267.349) = 10.287, p < .001. All other main effects and interactions with PSWQ and STAIX-2 were not significant, max F = 1.627.

Line graphs showing (a) skin conductance level and (b) corrugator supercilii activity to emotional picture stimuli post onset in seconds. Bars represent standard error. Z-transformed skin conductance level measured in microsiemens (ΔµS) and Z-transformed corrugator supercilii measured in microvolts (ΔµV).

Bar graphs depicting +1 SD or −1 SD of the (a) mean PSWQ for valence ratings, (b) skin conductance level, and (c) corrugator supercilii activity to emotional picture stimuli. High PSWQ (worry) versus low PSWQ was associated with greater valence ratings, corrugator supercilii activity and skin conductance level to negative versus positive and neutral pictures. Ratings measured on a Likert scale. For the valence ratings, a response of 9 represented “very pleasant” and 1 represented “very unpleasant.” Bars represent standard error. Z-transformed skin conductance level is measured in microsiemens (ΔµS), and Z-transformed corrugator supercilii is measured in microvolts (ΔµV).

Corrugator supercilii

For the corrugator supercilii, significant main effects of Emotion, F(2,329.260) = 9.544, p < .001, and Post, F(4,161.456) = 2.606, p = .038, emerged from the multilevel model (see Table 1 and Figure 2). These effects were carried by higher corrugator supercilii activity to negative pictures versus neutral and positive pictures, p’s < .005, and neutral versus positive pictures, p = .048. Corrugator supercilii activity was greatest between 2 s and 3 s compared to 1 s and 4–5 s, p’s < .005. Notably, for the corrugator there was a significant interaction between Emotion × PSWQ, F(2, 329.260) = 17.488, p < .001. This interaction was carried by high PSWQ individuals showing larger corrugator responses to negative images versus neutral and positive images, p < .001, and neutral versus positive images, p < .001 (see Figure 3(c)) while low PSWQ individuals showed reduced corrugator responses to negative and neutral images versus positive images, p’s < .05. All other main effects, including Emotion, and interactions with STAIX-2 were not significant, max F = 2.231.

Behavioral reactions to greeble targets

Reaction time

As expected, the multilevel model revealed an interaction between Emotion and Time, F(6, 143.617) = 2.552, p = .022 (see Table 2). Follow-up tests suggest that participants were slower to react (interference) to greeble targets between 1,100 ms and 2,000 ms after negative pictures versus neutral pictures. All other main effects and interactions with STAIX-2 and PSWQ were not significant, max F < .1.

Summary of means (SD) for reaction times and accuracy of greeble targets following picture stimuli.

Note. Reaction time measured in milliseconds (ms) and accuracy scores measured in percent (%).

Accuracy

For accuracy scores, we found significant Emotion × Time, F(6, 123.176) = 4.932, p < .001, Emotion × Time × PSWQ, F(6, 123.176) = 2.342, p = .035, and Time × PSWQ, F(3, 108.128) = 2.768, p = .045, interactions (see Table 2). All participants showed interference to greeble targets between 1,100 ms and 2,000 ms following negative versus neutral images and between 2,100 ms and 3,000 ms following positive versus neutral images, as well as facilitation during 3,100–4,000 ms to the arousing (negative and positive) images versus neutral images, all p’s < .05. High PSWQ individuals showed greater interference to greeble targets between 1,100 ms and 2,000 ms following negative versus neutral images and between 3,100 ms and 4,000 ms after negative versus positive image offsets, as well as facilitation during 3,100–4,000 ms to negative and positive images versus neutral images, all p’s < .05 (see Figure 4(a)). Low PSWQ individuals did not show any significant accuracy differences between conditions, all p’s > .3 (see Figure 4(b)). All other main effects and interactions with STAIX-2 were not significant, max F = 1.741.

Bar graphs depicting +1 SD or −1 SD of the mean PSWQ for accuracy scores to greeble targets following emotional picture stimuli. High PSWQ individuals were less accurate to greeble targets between: (1) 1,100–2,000 ms following negative versus neutral images, (2) 3,100–4,000 ms after negative vs. positive images, and (3) 3,100–4,000 ms after neutral images versus negative and positive images. Low PSWQ individuals did not show any accuracy differences between conditions. Bars represent standard error. Accuracy scores measured in percent (%).

Discussion

In the current study, we assessed the impact of emotional images on subsequent categorization of greeble targets in general and in relation to individual differences in worry. To address these questions, participants viewed negative, positive, and neutral pictures from the IAPS, followed by abstract greeble targets, which were to be categorized. Greebles were presented between 100 ms and 4,000 ms after picture offset to capture the time course of recovery from emotional stimuli. Physiological measures including skin conductance level and corrugator supercilii were recorded and served as indicators of arousal to emotional pictures. Measures of RT and accuracy scores were taken as indicators of the impact of emotion on facilitation or interference to the greeble target. We found all participants to show effects of interference between 1,100 ms and 3,000 ms and facilitation between 3,100 ms and 4,000 ms on RT and accuracy scores to greeble targets after emotional picture stimuli. High worry was associated with greater corrugator supercilii and skin conductance level responsivity to negative versus positive and neutral pictures. Furthermore, high trait worry was associated with substantial interference from emotional pictures on greeble target accuracy scores. These results suggest that subsequent processing is still impacted up to 4,000 ms after the offset of emotional pictures, particularly for negative events in individuals with high worry.

Our behavioral findings suggest that preceding negative and positive stimuli reliably modulate the processing of following targets, as indexed by RTs and accuracy scores to the greeble targets. More specifically, we found that participants were on average slower and less accurate to greeble targets presented at 1,100–2,000 ms after negative versus neutral pictures. In addition, participants displayed on average reduced accuracy to greeble targets presented: (1) 2,100–3,000 ms after positive versus neutral pictures and (2) 3,100–4,000 ms after neutral versus negative and positive pictures. These findings are partially in line with previous behavioral experiments showing greater interference on subsequent targets following emotional pictures (Bocanegra & Zeelenberg, 2009; Ciesielski et al., 2010; Ihssen et al., 2007; Kennedy & Most, 2015; Kennedy et al., 2014; Weinberg & Hajcak, 2011). Notably, prior work has focused on, and found effects for, shorter temporal intervals (e.g., up to 800 ms) across a variety of different tasks. The discrepancy in findings may be due to prior work using a faster experimental paradigm, such as rapid serial visual presentation (RSVP), which also requires a faster response from participants. Future work should assess the extent to which a faster versus slower paradigm may result in different temporal patterns of facilitation/interference. However, here we show that interference and facilitation on subsequent targets after emotional content can occur with even longer temporal intervals (e.g., up to 4,000 ms), similar to our previous work (Morriss et al., 2013). Furthermore, we show that the valence of the emotional content can alter the timing of interference: for example, negative pictures disrupted processing of greeble targets at earlier temporal intervals than positive and neutral pictures. We postulate that these effects reflect differences in priority of valenced information, such that negative events typically require an imminent response to avoid pain or potential threat, while positive events require less immediate action (see also McSorley & van Reekum, 2013).

In this study, we showed individual differences in worry to specifically predict reactivity to, and recovery from, emotional events. High worry, compared to low worry, was associated with more negative ratings of negative pictures, as well as greater corrugator supercilii and skin conductance level to negative versus positive and neutral pictures. Furthermore, high worry was associated with stronger interference on accuracy scores to greeble targets presented between 1,100–2,000 ms and 3,100–4,000 ms following negative pictures. These findings support previous research on individual differences in negative affect and emotional recovery. For example, individuals who report feeling more negative affect or anxious symptoms show greater interference from emotional images on subsequent processing of unrelated stimuli, particularly if the emotional event is negative and subsequent stimuli are presented at longer intervals, for example, 1,500–5,000 ms (Benning et al., 2015; Kennedy & Most, 2015; Larson et al., 2007). Importantly, our findings with worry were specific over trait anxiety, highlighting that worry may be more closely aligned with dysfunction of both emotional reactivity and recovery mechanisms. Further work is needed to clarify the specificity of worry and its role in emotional recovery (Trull et al., 2015).

The experiment had a few shortcomings that should be further addressed in future research to assess the robustness and generalisability of the findings reported here. Firstly, the sample size was rather small for examining effects of individual differences and future studies should look to test larger and more diverse samples. Secondly, physiological measures such as skin conductance are relatively slow, compared to electromyography and ERPs, and therefore may be less optimal for assessing temporal effects of emotion.

Overall, we found all participants to show effects of interference between 1,100 ms and 3,000 ms and facilitation between 3,100 ms and 4,000 ms to greeble targets after emotional pictures. High worry was associated with greater physiological reactivity to negative versus positive and neutral pictures, and substantial interference from emotional pictures on greeble target accuracy scores. These results suggest that subsequent processing is still impacted up to 4,000 ms after the offset of emotional pictures, particularly for negative events in individuals with high worry. These findings highlight the importance of individual differences in worry in predicting emotional reactivity and recovery. More broadly, these findings highlight the potential of worry-based mechanisms to help understand pathological anxiety and mood disorders and inform potential treatment targets.

Acknowledgments

The authors thank the participants who took part in this study and members of the CINN for their advice and feedback. To access the data please email Dr. Jayne Morriss.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was supported by the Centre for Integrative Neuroscience and Neurodynamics (CINN) at the University of Reading and by a British Academy grant (reference SG111611) awarded to Carien van Reekum.