Abstract

Struggling writers, including students with disabilities (SWD), need instructional strategies that support their ability to write independently. Integrating technology-mediated instruction into writing can mitigate students' challenges throughout the writing process and personalize instruction. In the present group design study, teachers taught 11- to 12-year-old sixth graders of varying abilities to use a technology-based graphic organizer (TBGO) when digitally planning and composing a persuasive paragraph. Results indicated that the paragraph writing quality and transition word use of typical and struggling writers who used the TBGO were significantly better than those of students who wrote without it. Additionally, when the TBGO was removed, students in the treatment group maintained gains. Student and teacher participants identified features that were especially supportive of students’ writing behaviors. Implications for practice and future research are discussed.

Writing involves strategic processes, knowledge of topic and audience, and the integration of varied skills (Graham et al., 2017). Already a complex problem-solving process, writing becomes even more challenging in the persuasive genre, a typical requirement of high-stakes assessments in the United States. Persuasive writing is a form of nonfiction in which the writer states his/her opinion on a topic and provides a logical argument to convince others of that opinion. It requires a clear, on-topic stance supported by convincing, high-quality reasoning. Identifying salient reasons may prove challenging for students with learning and/or behavioral disabilities who lack self-determination skills and may not believe their opinions or ideas are worth arguing (Cuenca-Sanchez et al., 2012). Equally challenging for some in this population is the need to consider someone else’s perspective on a topic in order to defend one’s own.

In 2011, the National Center for Education Statistics (NCES) administered the first computer-based National Assessment of Educational Progress (NAEP) writing assessment to 13- to 17-year-olds in the U.S. Students were required to complete two 30-minute tasks, including a persuasive writing task. Fewer than a third of the students were able to produce coherent text with appropriate transitions and varied sentence types (NCES, 2012).

In 2012, when the computer-based writing assessment was piloted with over 10,000 students aged nine and ten to gauge their aptitude for writing on the computer, results were poor. More than 68% of students’ responses scored 3 or below on the 6-point rubric, and 40% scored 1 or 2. Only 1% of students with disabilities (SWD) and 5% of English language learners (ELLs) performed at or above the proficient level (NCES, 2012). In addition to writing scores, the data included text length and information about students' prior exposure to writing on the computer. More computer use for composing texts was “associated with higher NAEP writing test scores” (Mo and Troia, 2017: 764), and the data showed that “low performing students have less exposure to writing on the computer, both inside and outside of school, and that they produce shorter texts” (White et al., 2015: 64). Therefore, introducing technology-mediated instruction and interventions to support essay writing is both relevant and necessary.

Teachers’ integration of technology can support increased student learning and engagement (Darling-Hammond et al., 2014; Williams and Beam, 2019). This is especially important for SWD, who often appear work-avoidant or less engaged when tasked with writing. Technology can enhance writing instruction, mitigate students’ challenges, and personalize instruction (Little et al., 2018). For example, use of a word processor alleviates the linguistic aspects of writing, and its use has shown a moderate effect on writing quality for typical learners and even larger effects for low-achieving writers (Graham and Perin, 2007). Using a word processor along with keyboarding instruction can allow individuals to focus on higher-order tasks such as organizing their ideas. In addition, text-to-speech features can improve spelling accuracy and overall writing quality for SWD (Cullen et al., 2008). Technology-based graphic organizers (TBGOs) have also shown promise for students with learning disabilities (LD) during the prewriting process (Evmenova et al., 2016; Ciullo and Reutebuch, 2013). TBGOs can allow students to see connections between their initial thoughts and their sentences. The process of organizing ideas and planning may take more time, but it will likely improve students’ writing quality (Hauth et al., 2010; Shen and Troia, 2018).

Writing is a complex process. As results of national writing assessments show, writing on the computer is not simply a shift in the mode of expression that leaves the writing process and product unaffected. While research-based instructional interventions exist, the demands of navigating the writing process while also adapting to technology can overwhelm students who already struggle with writing. Meeting the additional support needs of struggling writers, such as students with learning disabilities and/or those for whom English is a second language, within an increasingly technology-based school system may pose challenges for teachers.

Recent research has shown writing with iPads to be an effective means of helping students with disabilities spell and compose sentences (Berninger et al., 2015); however, more research is needed to determine the effectiveness of mobile technology in supporting the writing behaviors of SWD (Cumming and Rodriguez, 2017). Moreover, whereas research-based writing strategies benefit all students, they generally do not involve technology (Gillespie and Graham, 2014). Evidence for comprehensive, effective technology-based writing strategies remains limited (Goldberg et al., 2003; Little et al., 2018; MacArthur, 2009).

Present study

The purpose of this study was to determine the effectiveness of using a TBGO to improve the writing performance of students, particularly struggling writers. We also sought to understand students’ and teachers’ experiences with the TBGO. Teachers taught 11- to 12-year-old students of varying abilities to use a TBGO when planning and composing a persuasive paragraph.

In prior studies, when 11- to 14-year-old SWD used the TBGO in Microsoft Word (Evmenova et al., 2016; Regan et al., 2016a) or on iPad applications (Regan et al., 2017) to plan and compose a persuasive essay, the quantity and quality of their writing improved, and these improvements were maintained after the TBGO was removed. The present study extends this prior research in two ways. First, it involved the students of an entire sixth grade in one school. Second, students used multiple platforms of the TBGO. The research questions were: (a) Does use of a TBGO increase the number of written words, the number of transition words, and the writing quality of persuasive writing composed by 11- to 12-year-old students? (b) To what extent are students able to maintain writing performance gains without the TBGO? (c) How does the TBGO-supported writing performance of struggling writers compare with that of typical peers? (d) How do struggling writers and their teachers experience persuasive writing with and without the TBGO?

Methods

The study used a nonequivalent comparison group quasi-experimental design (Shadish et al., 2002) to determine the effects of a TBGO with embedded self-regulated learning (SRL) strategies on the persuasive writing of a diverse group of 11- to 12-year-olds. Pretest, posttest, and maintenance measures were administered to a writing intervention (treatment) group and a traditional instruction (comparison) group. The design allowed for empirical testing of the TBGO in a naturally occurring environment. Because individual students could not be randomly assigned to classes, each teacher taught two classes: one class was randomly assigned to the treatment condition and the other to the comparison condition. Of the ten classes, six were inclusive, two were self-contained special education classes, and two were general education classes with struggling writers but no SWD. Persuasive writing was the curricular focus.

Participants

A team of teachers expressed interest in adopting a consistent approach to teaching writing. Before the study began, permission was obtained from the school’s and the university’s institutional review boards. Teacher participants provided consent, and students provided assent along with parental consent.

Students

Of the 156 eligible students, 141 students and their parents gave consent for participation. Students were excluded for absence on the day of testing (n = 10), behavioral challenges (n = 2), identification with an intellectual disability (n = 1), and pretest scores at ceiling (n = 4). Subsequent analyses were based on data from 124 students (59 males and 65 females). Of these, 76 were defined as typical writers and 48 were identified as target students. Target students were those identified with a disability (n = 23), those receiving ELL services (n = 8), or those identified by teachers as struggling writers (n = 17). Students were considered to have a disability if they received special education services as documented in their Individualized Education Program (IEP). Students with disabilities who accessed the general education curriculum and participated in state Standards of Learning (SOL) tests were eligible to participate. Students with cognitive developmental delays who accessed an adapted curriculum and consequently did not participate in SOL tests (e.g., students with an intellectual disability) were not eligible. Students with a primary disability of learning disability, other health impairment, speech or language impairment, emotional and/or behavioral disability, autism, and/or attention deficit/hyperactivity disorder therefore met criteria. An additional criterion was that the IEP include at least one goal or objective relevant to writing performance. For the purposes of this study, school district guidelines classified students as ELLs if they had been exempt from standardized testing within the last two school years due to limited English proficiency. The teachers identified struggling writers as students who showed limited or no independence with writing or whose writing consistently showed poor organizational skills.
Prior to the study, the researchers assessed students’ writing fluency using the Woodcock-Johnson III (WJ-III) Writing Fluency subtest. On average, students performed within age-expected norms.

Treatment condition. There were 62 students in the treatment condition: 33 typical and 29 target students. The target students included 14 students receiving special education services (LD, other health impairments, autism), six receiving ELL services (Spanish, Arabic, Chinese), and nine struggling writers. Prior to data collection, target students reported using the computer infrequently in school, primarily for final projects. At home, they used a laptop or desktop computer for playing games, social interaction, and searching the Internet. Five of the 29 target students mentioned using the computer for school. When asked what approach or strategy they used when writing, many students said they just think and find ideas, while others described a process that included prewriting. Twice as many target students preferred writing on the computer as preferred writing by hand.

Comparison condition. There were 62 students in the comparison condition: 43 typical and 19 target students. The target students included nine students receiving special education services (LD, other health impairments, emotional and behavioral disability, autism), two receiving ELL services (Farsi, Spanish), and eight identified as struggling writers. Prior to data collection, target students reported using the computer infrequently in school. At home, the majority had access to a shared computer and used it primarily for gaming and searching the Internet. Three of the 19 students mentioned using it for writing and schoolwork. When asked what approach or strategy they used when writing, students said they think, then write: nine mentioned prewriting, one mentioned drawing, and the remainder said they just started “into the writing.” Twice as many target students preferred writing on the computer as preferred writing by hand.

Teachers

Four licensed general education language arts teachers and one licensed special education teacher agreed to participate. The teachers’ mean age was 39.5 years, and their mean teaching experience was 13.83 years. All teachers held a Bachelor’s degree, and two also held a Master’s degree. Four were female and one was male.

Setting

The study took place in the Mid-Atlantic region of the U.S. at a public school for students aged 11–14. The school enrolled 1,057 students. Fifteen percent of the student population was Asian, 8% was African American, 15% was Hispanic, and 6% was multiple races; the remaining 57% was White. Fourteen percent of the students received special education services, 5% were identified as ELLs, and 17% were identified as economically disadvantaged. Teachers of three treatment and three comparison classes elected to use school laptops shared among teachers. Students in the remaining two treatment and two comparison classes used researcher-provided iPads. Each student had access to headphones and a mouse.

Independent variable

The TBGO with embedded self-regulated learning (SRL) strategies was the writing intervention used in this study. The TBGO was provided on multiple platforms to accommodate the available hardware and teachers’ instructional preferences. Students using laptops worked in a Microsoft Word document version of the TBGO, and students using iPads worked in a mobile application version. The two platforms were essentially the same, with slight variations.

The TBGO guided students through five parts: (1) pick your goal; (2) fill in the table; (3) copy text from the orange box; (4) paste text; and (5) self-evaluate (see Hughes et al., 2019, for a complete description of the TBGO). First, students selected a goal from a drop-down menu specifying how many reasons and elaborations they wanted to include in their paragraph. They then planned and drafted sentences in a four-column table. The first column contained a mnemonic, IDEAS, to help students recall the parts of a persuasive essay (I = Identify your opinion; D = Describe three reasons; E = Elaborate with examples; A = Add transition words; and S = Summarize). After organizing their ideas in the second column, students typed sentences in the third column. In the final column, students self-monitored their progress by checking the statements that were true. TBGO features included color-coding, drop-down menus, text hints, audio comments, text-to-speech (TTS), and SRL strategies. The embedded SRL strategies were informed by the self-regulated strategy development model (Harris and Graham, 1992).

The app version of the TBGO differed slightly. For example, if a student did not begin a sentence with a capital letter, an automatic message appeared prompting correction. Also, in part three of the app, students pressed a button to move their sentences from the table into traditional paragraph form, so cutting and pasting was not necessary.

Instructional lessons

The teachers presented four 50-minute lessons to explicitly teach the TBGO. Lesson one introduced the importance of writing and the IDEAS mnemonic. Lesson two focused on modeling use of the TBGO. Lesson three emphasized self-monitoring and self-evaluation, followed by an opportunity for guided practice. Lesson four was independent practice. Each lesson was video recorded.

Dependent variables

Dependent variables included written words (WW), number of transition words (NTW), and writing quality (WQ). Students’ paragraphs were scored by counting the WW and NTW in each response. WW in each paragraph was recorded using Microsoft Word’s word count feature or the automatically populated word count in the TBGO application. Transition words were words at the beginning of a sentence that signaled a transition between thoughts (e.g., First). Students could select transition words from the drop-down menu in the TBGO or insert their own. Researchers modified a rubric (e.g., Cuenca-Sanchez et al., 2012) to score the paragraphs for WQ on a scale of 0–8. Rubric descriptors addressed the elements of strong persuasive writing, organization, sentence completeness, and cohesiveness. For example, a score of one was given for a complete sentence that included at least one element in its correct form. The rubric also considered each element’s function; for example, a reason was scored if it was relevant and discrete. This descriptor was intended to discourage run-on sentences and repetitive reasons.
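The WW and NTW counts described above can be approximated programmatically. The following is a minimal Python sketch, not the study’s procedure (which used Microsoft Word’s count, the TBGO app’s built-in count, and human scoring). It assumes that words are whitespace-separated tokens and that a sentence opens with a transition if its first word or phrase appears in a fixed list; the list `TRANSITIONS` and both function names are hypothetical.

```python
import re

# Hypothetical transition list for illustration only; it is not the
# TBGO's actual drop-down menu.
TRANSITIONS = ("first", "second", "third", "next", "also", "another",
               "additionally", "finally", "in conclusion")


def count_written_words(text: str) -> int:
    """Approximate a word processor's count: whitespace-separated tokens."""
    return len(text.split())


def count_transition_words(text: str, transitions=TRANSITIONS) -> int:
    """Count sentences that open with a listed transition word or phrase."""
    # Split into sentences at terminal punctuation and drop empty pieces.
    sentences = [s.strip() for s in re.split(r"[.!?]+", text) if s.strip()]
    count = 0
    for sentence in sentences:
        lowered = sentence.lower()
        # \b requires a whole-word match, so "First," counts but "Firstly" does not.
        if any(re.match(re.escape(t) + r"\b", lowered) for t in transitions):
            count += 1
    return count


paragraph = ("Schools should have longer recess. First, students need exercise. "
             "Second, breaks help focus. Finally, recess builds friendships.")
print(count_written_words(paragraph))     # 17
print(count_transition_words(paragraph))  # 3
```

A fixed list cannot judge whether an opening word actually signals a transition between thoughts, so a sketch like this could at most pre-screen paragraphs for a human scorer; word counts may also differ slightly from a word processor’s for hyphenated words or numerals.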

Writing prompts

The researchers randomly selected two prompts for each test from a pool of approximately 30 validated prompts (e.g., Cuenca-Sanchez et al., 2012). All students in both conditions received the same choice of prompts per testing session and were asked to respond to one. Prior to the study, prompts were reviewed to ensure that they were culturally sensitive, age appropriate, and aligned with the persuasive genre.

Procedures

Pre-Interviews

Semi-structured focus group interviews were conducted with groups of 3–6 students from each class in both conditions. The questions focused on target students’ (a) exposure to writing with technology at home and at school, (b) strategies used when writing, and (c) preference for writing by hand or on the computer. Interviews were audio recorded.

Teacher professional development (PD)

Prior to instruction, the five teachers received 6.5 hours of PD on the TBGO across two days. Day one included a project overview, an orientation to the PowerPoint materials for the instructional lessons, an opportunity to use all intervention components, and extensive modeling of the lessons. On day two, participants completed a lesson study in which they delivered portions of the instructional lessons and received feedback from their peers and the researchers (see Regan et al., 2016b).

Pre-test

Following the pre-interviews, four classes of students were provided iPads and six classes were provided laptops. Teachers read a scripted protocol that included the choice of prompts, and students had no longer than 30 minutes to respond.

Instruction

After the pretest, students in the treatment condition received TBGO instruction. Following the four lessons, students participated in a practice session. At the end of this session, target students’ mastery of the TBGO was evaluated with an observational criterion protocol (i.e., a student think-aloud). Students met the criterion if they demonstrated full use of the TBGO; if they did not, the teacher re-taught the content.

Students in the comparison group received their everyday instruction in persuasive writing, which rarely if ever included the use of iPads or laptops for composing. Teachers provided 50-minute lessons on persuasive writing similar to those of the treatment group. They modeled application of the writing process and editing/revision strategies during whole-group instruction, and they gave students an opportunity to practice with the same writing prompts used by the treatment group.

Post-test

Following the practice session, teachers read a scripted protocol to administer a posttest to students in both conditions. Students wrote to one of two persuasive prompts using an iPad/laptop for no longer than 30 minutes. Students in the treatment condition used the TBGO.

Maintenance testing

A week after posttest, maintenance testing was administered. Procedures replicated pretest procedures, with students in both conditions composing responses on the same device used at pretest (i.e., laptop, iPad). The TBGO was not available for maintenance testing.

Post-interviews

After maintenance testing, 5–8 target students from the treatment condition were interviewed in focus groups. Students were asked to share their perceptions of the TBGO, the SRL strategies, and the technological supports. A semi-structured protocol was also used to interview each participating teacher individually about their experience.

Inter-rater reliability (IRR) and fidelity of implementation

Prior to scoring, the lead author trained the research team to score writing probes across all measures. A primary rater reviewed and scored paragraphs from the current study, and an independent rater then scored 33% of the paragraphs from each testing session and group condition. The two raters met to compare scores, discuss discrepancies, and clarify scoring conventions. Agreement was calculated with the total agreement formula (smaller number divided by larger number × 100). For WW and NTW, IRR was 96.4% (range = 92.9% to 100%); for WQ, IRR was 87.3% (range = 81.4% to 93.3%).
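The total agreement formula can be written as a short function. This Python sketch assumes agreement is computed for one pair of rater scores at a time; how the study aggregated pairwise values into the reported percentages and ranges is not described, so the averaging helper `mean_agreement` is a hypothetical illustration, and both function names are assumptions.

```python
from statistics import mean


def total_agreement(score_a: float, score_b: float) -> float:
    """Total agreement for one paragraph: smaller / larger x 100."""
    if score_a == score_b:
        # Identical scores (including both 0) count as full agreement,
        # which also avoids dividing by zero.
        return 100.0
    smaller, larger = sorted((score_a, score_b))
    return smaller / larger * 100


def mean_agreement(pairs):
    """Hypothetical aggregation: average the per-paragraph agreement values."""
    return mean(total_agreement(a, b) for a, b in pairs)


# Example: rater A counts 45 written words, rater B counts 48.
print(total_agreement(45, 48))  # 93.75
```

Because the formula divides by the larger score, it is symmetric in the two raters and never exceeds 100%.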

Fidelity of testing implementation was checked to ensure that scripts were followed, prompts were consistent, and time limits were respected. After watching video recordings of all testing sessions, a researcher reported that testing was administered with 100% fidelity. The teacher and a researcher used a checklist to assess fidelity of instructional implementation (FOI). The checklist included 10–12 items per session, each rated yes (completed) or no (not completed). FOI was calculated by dividing the number of steps completed by the number of steps planned. Each teacher and researcher agreed that FOI was 100% across all classes in all conditions.
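The FOI calculation reduces to the proportion of checklist items rated yes. The sketch below assumes each session’s checklist is represented as a list of yes/no ratings (True = completed); the function name is hypothetical.

```python
from typing import Sequence


def fidelity_of_implementation(checklist: Sequence[bool]) -> float:
    """FOI as a percentage: steps completed divided by steps planned, x 100.

    Each entry is one planned checklist step: True = yes (completed),
    False = no (not completed).
    """
    if len(checklist) == 0:
        raise ValueError("the checklist must contain at least one planned step")
    # True counts as 1 and False as 0, so sum() gives the steps completed.
    return sum(checklist) / len(checklist) * 100


# A 12-item session with every step completed yields 100.0.
session = [True] * 12
print(fidelity_of_implementation(session))  # 100.0
```

An 11-item session with one step missed, for comparison, would yield about 90.9%.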

Data analysis

All responses were scored for WW, NTW, and WQ. Because pretest differences emerged (i.e., in the numbers of typical and target students across conditions), analyses of covariance (ANCOVA) were conducted using the respective pretest scores as covariates. Adjusted means are presented to show overall student performance in both conditions at posttest and maintenance testing. Finally, a descriptive analysis was used to compare target students’ performance with that of their typical peers.
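For readers less familiar with ANCOVA, the standard one-covariate model and the adjusted means it produces can be written as follows. This is the textbook form, offered as an assumption about the analysis; the study does not report its model specification beyond using each pretest as the covariate. Here $Y_{ij}$ is the posttest (or maintenance) score of student $i$ in condition $j$, $X_{ij}$ the corresponding pretest score, $\bar{X}$ the grand pretest mean, $\tau_j$ the condition effect, $\hat{\beta}_w$ the pooled within-condition regression slope, $N$ the number of students analyzed, $g$ the number of conditions, and $c$ the number of covariates.

```latex
Y_{ij} = \mu + \tau_j + \beta_w \left( X_{ij} - \bar{X} \right) + \varepsilon_{ij}

\bar{Y}'_j = \bar{Y}_j - \hat{\beta}_w \left( \bar{X}_j - \bar{X} \right)

df_{\text{error}} = N - g - c = 124 - 2 - 1 = 121
```

The adjusted mean $\bar{Y}'_j$ estimates what each condition’s mean would be if both conditions had the same pretest mean $\bar{X}$. With two conditions, one covariate, and all 124 students, the error degrees of freedom equal 121, as in the WW posttest result; the smaller error df reported for other analyses presumably reflect fewer students with complete scores.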

A qualitative analysis informed by Saldaña (2016) was conducted to examine students’ and teachers’ experiences with the TBGO as a supplement to the quantitative findings. A non-affiliated transcriptionist transcribed all interviews verbatim. An independent qualitative methodologist read all transcripts prior to coding. Initial codes aligned with the TBGO components and included mnemonic (IDEAS), goal setting, brainstorm and sentence columns, transition words, and self-evaluation. A final code captured general perspectives (positive, negative, and neutral) shared about the TBGO.

The transcripts were segmented and underlined by each etic code, and these data were sorted into thematic categories. After these categories were analyzed and synthesized, emergent ideas from the student data were triangulated with the teacher interview data. Specifically, teacher data were used to support or refute the patterns and relationships found in the student data. The methodologist conducted all analyses without software, and the primary researcher reviewed the results for the purposes of peer debriefing.

Results

Because there were differences at pretest, ANCOVAs were conducted using the respective pretest scores as covariates. The ANCOVA on posttest WW, with pretest WW as the covariate, revealed no significant difference between conditions, F(1, 121) = .477, p = .491, nor was there a significant difference at maintenance testing, F(1, 119) = 2.125, p = .148. The ANCOVA on posttest NTW, with pretest NTW as the covariate, was significant, F(1, 118) = 139.14, p < .001, and was also significant at maintenance testing, F(1, 113) = 58.258, p < .001, partial η² = .340. The ANCOVA on posttest WQ, with pretest WQ as the covariate, was significant, F(1, 117) = 11.71, p = .001, but was not significant at maintenance testing, F(1, 110) = 1.604, p = .208. These results indicate that the treatment condition significantly outperformed the comparison condition on NTW at immediate posttest and at maintenance. The treatment condition also significantly outperformed the comparison condition on WQ at immediate posttest; although WQ scores were descriptively higher at maintenance, the difference in overall paragraph quality was not significant.
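Table 1 reports partial eta squared alongside the adjusted means. For a reported F statistic, partial eta squared can be recovered from its degrees of freedom as ηp² = (F × df_effect) / (F × df_effect + df_error). The Python sketch below applies that standard identity; the function name is hypothetical, and the printed values are computed from the F statistics in the text rather than taken from Table 1.

```python
def partial_eta_squared(f_stat: float, df_effect: int, df_error: int) -> float:
    """Partial eta squared from an F ratio: F*df1 / (F*df1 + df2)."""
    numerator = f_stat * df_effect
    return numerator / (numerator + df_error)


# F statistics and degrees of freedom as reported in the Results.
reported = {
    "NTW posttest": (139.14, 1, 118),
    "NTW maintenance": (58.258, 1, 113),
    "WQ posttest": (11.71, 1, 117),
}
for label, (f, df1, df2) in reported.items():
    print(f"{label}: partial eta squared = {partial_eta_squared(f, df1, df2):.3f}")
```

Running it prints .541, .340, and .091; the NTW maintenance value matches the .340 reported with F(1, 113) = 58.258.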

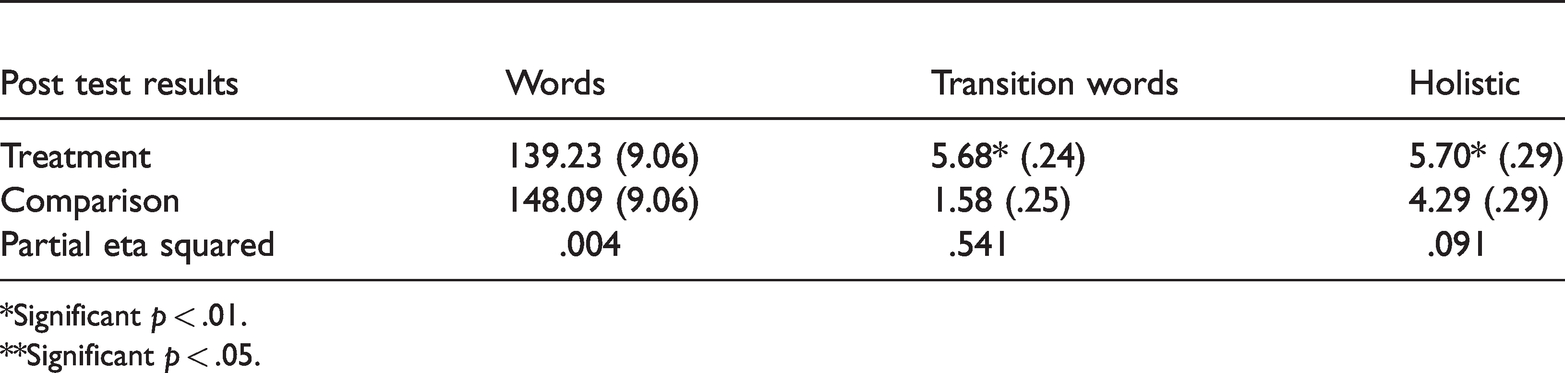

Adjusted means

Adjusted means and standard errors by condition for WW, NTW, and WQ at posttest and maintenance testing are presented in Table 1 to show overall student performance. Treatment students significantly outperformed comparison students on NTW at posttest and maintenance testing and on WQ at posttest. Although the comparison condition had a higher adjusted mean for WW, the additional words were not of sufficient quality to yield higher WQ. Because the WQ advantage at maintenance testing was descriptive but not statistically significant, students, especially ELLs, may have needed a booster session or additional practice with the TBGO to sustain higher performance once it was removed. Performance on WW varied somewhat across conditions but did not reach statistical significance.

Table 1. Adjusted means, standard errors, and partial eta squared at posttest.

*Significant p < .01.

**Significant p < .05.

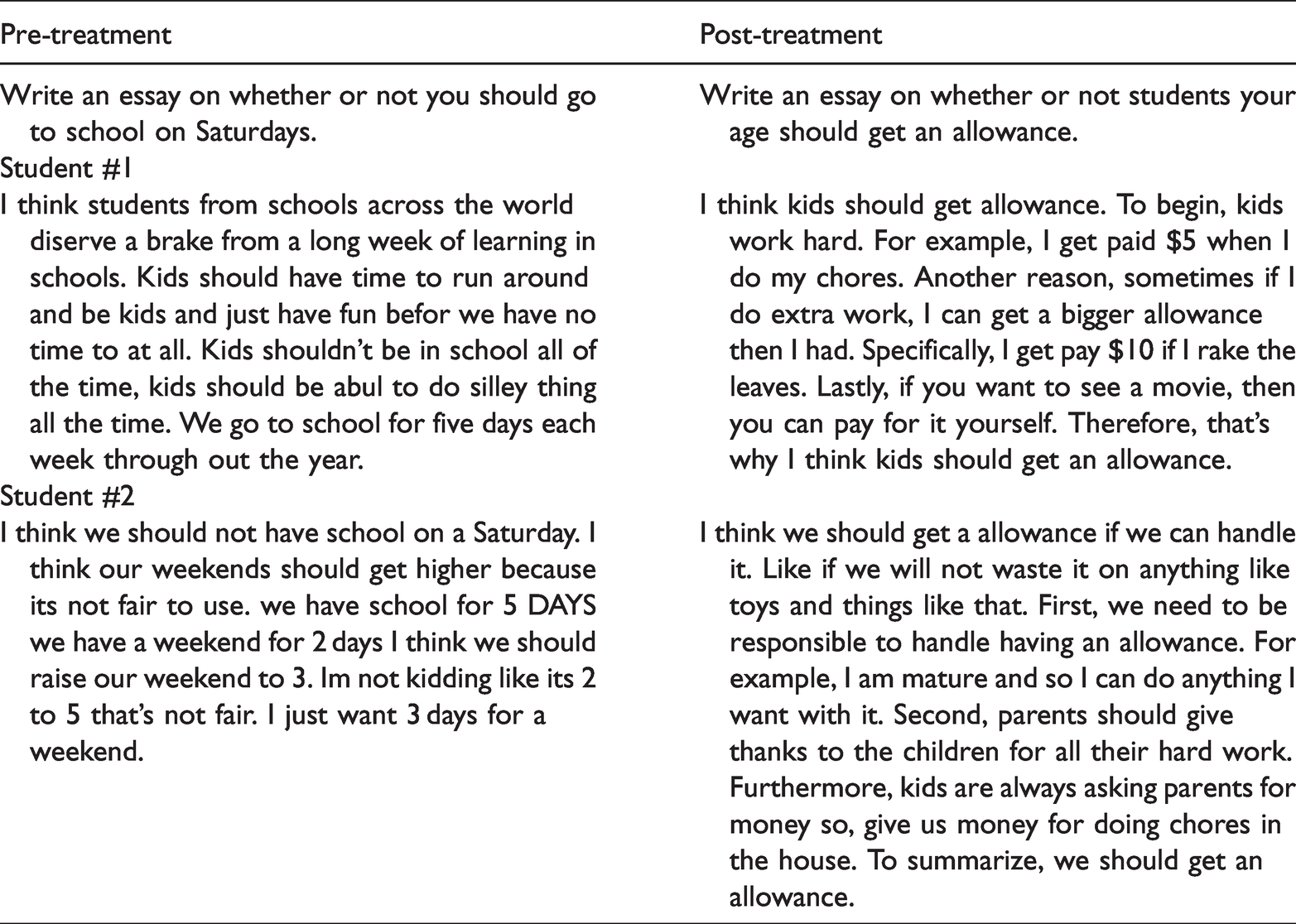

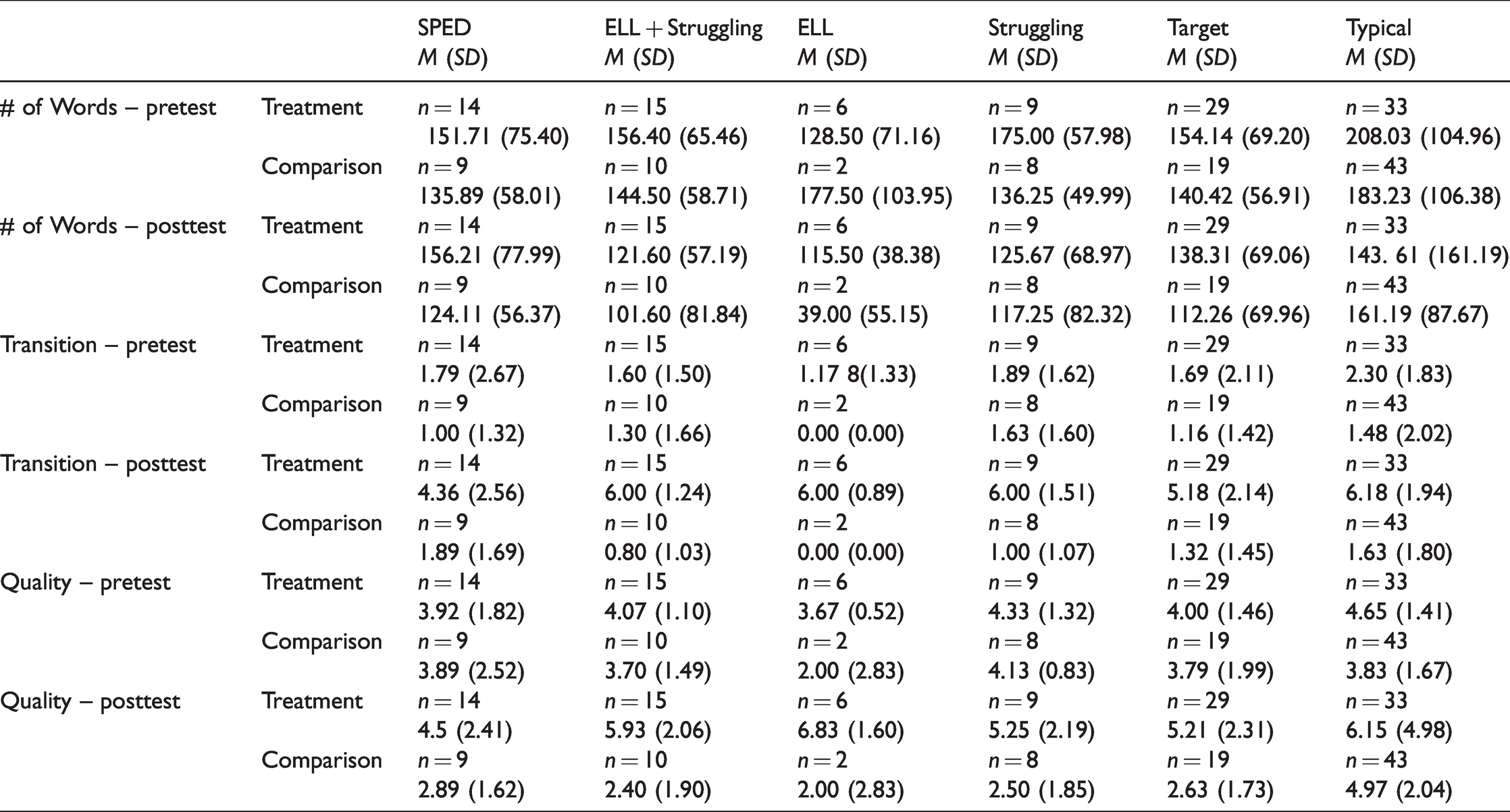

Performance of target and typical students

Target students in this study included SWD, ELLs, and students identified by teachers as struggling writers. Table 2 presents a random selection of written responses from target students in the treatment group. Table 3 provides a descriptive analysis of the data for all target student subgroups and for typical students. Given the very small sizes of these subgroups within the target sample, the descriptive differences could not be tested statistically, even with nonparametric methods. For example, relatively few SWD were enrolled in the inclusive classes (n = 14 in the treatment condition and n = 9 in the comparison condition), so separate statistical analyses of that subgroup were deemed inappropriate. However, descriptive analyses indicate that SWD in the treatment condition outperformed all comparison students with special needs on all posttest and maintenance measures. Moreover, the larger sample of all target students in the treatment condition outperformed the typical writers in the comparison condition on NTW and WQ, suggesting that within inclusive classes, target students benefitted from the TBGO.

Table 2. Representative responses from target students in the treatment group.

Table 3. Writing performance of target students, including subgroups, and typical learners.

Student and teacher experiences

Target students and teacher participants perceived the TBGO positively. Students in the treatment condition stated that the TBGO was helpful, that they liked using it, and that it made writing easier. All teachers valued the TBGO. One teacher explained that “the kids saw the organization and they could follow it.” Another shared, “I think they got it very quickly” and “the experimental group had a pretty easy time writing because of it.” Three of the five teachers mentioned that the TBGO was worthwhile for their lower-performing students, whereas one teacher recognized how all students could benefit from its emphasis on writing quality: “The quality of the organization of their ideas was better…they can get fundamentals and work on length instead of having length and then having to go back and get fundamentals.”

One student did comment that the TBGO “added work” and another said it was “kind of complicated.” The self-contained teacher shared that her students would likely need “a lesson almost every day, a review of it.” Teachers commented that technology and keyboarding were challenges. One student summarized, “If you’re used to technology then it’ll make it easier.” A teacher said, “the kids who didn’t have a lot of background knowledge for technology … they had a hard time … it wasn’t necessarily the platform itself … it was the student technology knowledge that was kind of a barrier.”

Although some students may have been less tech-savvy, most students expressed a preference for the TBGO over handwriting. Students found it “easier,” “faster,” and “better,” noting that “instead of just writing you could just type so your hands won’t hurt.” Students also commented that “Typing was fun,” and “my favorite part was scrolling.” Similarly, students remarked that the TBGO helped them enhance their skills: “This might be weird, but I’m learning; I learned how to use technology and how to paste.” Another student shared that it “helped you be a better typer.” Although this study did not focus on the distinctions users made between platforms, the iPad was perceived positively in the four classes that used it. A teacher remarked, “the kids were more familiar with that type of technology. I can’t even imagine any of my students using a laptop, except for at school. They all have their smartphones.”

Participants also gave feedback on specific features of the TBGO. Students in the focus groups readily recalled the mnemonic, IDEAS, that is embedded in the TBGO. A teacher shared, “I really was impressed with the graphic organizer and the students recalling the strategy.” Most students perceived the brainstorming component of the TBGO favorably. Some students thought it was “helpful because it jots all your ideas down so you won’t get lost.” Others regarded the pre-planning as extra work, remarking, “it was harder to like just sit there and come up with words.”

Student data indicated that the transition word feature of the TBGO was an easy way to increase writing quality. Students stated the transition word menu was “helpful,” allowed students to “remember them later” and “helps you organize your sentences.” One student recollected transition words in other writings, commenting, “…it kind of got stuck in my brain.”

Student participants perceived the SRL features in varying ways. Some students suggested the goal-setting feature helped them to be better writers, to “help(s) work towards what you want,” while others thought it was unnecessary. One student noted, “you can just write it down on a piece of paper.” Additionally, one teacher lamented that students did not use the goal-setting feature in ways that reflected their abilities, such as “some kids always chose just to do one example and they could have done three.”

Students also commented on the self-monitoring checklist. Referring to the iPad application, one exclaimed, “I constantly forgot periods so it makes it so you can’t go on without periods …” Students saw it as a gatekeeper, explaining that it helped to “make sure you could move forward” and “… if you forget something then it won’t let you go on.” Students’ comments about the self-evaluation feature often referred to checking word or sentence counts. For example, a student said it was “helpful because it could tell how many words I had and [I could] try to improve words, sentences.”

Although feedback about audio supports was not explicitly solicited in student interviews, students across focus groups mentioned the TTS feature specifically. Some said it helped them check their work: “I used the voice thing”; “When we had that thing that would read the paragraph to you it was like, ‘yeah’.” Others expressed feeling more confident about their work because of the feature, remarking, “you can hear if from like another person because sometimes you’re reading it in your head to yourself … like sometimes I mess up reading.” Likewise, a teacher observed students using the TTS feature. She noted, “they liked the feature where they could hear it read back to them. I noticed most of them were using that.”

Discussion

This study examined the effectiveness of a technology-based graphic organizer (TBGO) with embedded self-regulated learning strategies on the persuasive writing of typically performing and struggling 11- to 12-year-old writers. Writing quality and number of transition words, for both typical and target students, were significantly better among students who used the TBGO than among those who wrote without it. Additionally, when the TBGO was removed, students in the treatment group maintained their improvements over pretest scores. This suggests that students in the TBGO condition were able to internalize and generalize what they had learned about transition words and about writing quality persuasive paragraphs. These writing outcomes are consistent with previous studies in which target students improved their persuasive writing skills when using the TBGO on mobile platforms (Regan et al., 2017) and laptop computers (Evmenova et al., 2016; Regan et al., 2016a). Results of this study also demonstrate the potential benefit of using the TBGO with all students in inclusive classrooms, adding to the evidence needed for technology-based writing strategies (Goldberg et al., 2003; Little et al., 2018; MacArthur, 2009). Additionally, this study used mobile technologies, illustrating the variety of means available for giving students opportunities to write with technology. Mobile technologies such as tablets (e.g., iPads) and smartphones have increasingly been adopted by school districts worldwide (Ng and Nicholas, 2013), and this study illustrates how students can use an iPad to plan and compose a persuasive paragraph.

In the current study, regardless of condition, students wrote fewer words on average at posttest than at pretest (16 to 28 fewer words). This contrasts with previous TBGO research. We consider two explanations for why the number of written words decreased from pretest to posttest for both target and typical writers in both the treatment and comparison groups. First, prior to this study, students across conditions reported limited use of technology when writing at school and at home. The novelty of using an iPad or a laptop for writing may have inflated word counts at pretest; as that novelty waned by posttest, students wrote less. This is aligned with Goldberg et al.'s (2007) findings that when given a word processor (or, in this case, an iPad) to write with, students initially wrote more.

A second explanation is that during posttest, students devoted more time to thinking and planning before writing. Although students in both conditions reported planning or brainstorming as a pre-writing strategy, this was not true of every student, and previous research indicates that students with learning disabilities may hold an inflated view of their writing skills (Bear et al., 2002). Moreover, prior writing intervention research has also found fewer written words at posttest than at pretest (e.g., Mason et al., 2010). These outcomes suggest that when students plan for writing, they may write less, but the quality of their writing improves.

Implications for practice

The use of technology for writing, such as the TBGO, allows for student independence and personalized learning. For example, some students preferred using the self-monitoring checklist as a reminder to review their work, while others said they did not. The need for flexibility in how one uses technology was a consistent pattern across student and teacher comments. This is unsurprising given the diversity of the student participants across the classrooms. By using the TBGO flexibly, teachers could strategically select which of its features to emphasize for individual learners. Teachers should also be mindful that although students reportedly found the TBGO engaging, they may need ample practice with it in order to generalize and maintain learning in its absence.

Insights shared by students and teachers in this study also suggest that some attributes of the TBGO were made possible by technology. For example, the text-to-speech feature and the transition word drop-down menu were especially helpful to students. Many of the students also favored the embedded self-regulated learning strategies in the TBGO, which helped them persist through the writing process. The TBGO may also be suitable for students who do not respond to paper-and-pencil interventions or who could benefit from assistive technologies when writing. Previous researchers have found that technology-supported instruction may have an advantage over paper-and-pencil conditions (e.g., Englert et al., 2007).

Future research and limitations

This study adds to the limited body of research on the use of mobile technology for students with disabilities (e.g., Cumming and Rodriguez, 2017). However, it has several limitations. First, observing the control condition would have helped to further operationalize typical instruction. Second, the target subgroups were too small to determine differences across conditions, and future research is needed to explore whether the device used makes a difference in the writing performance of target students. Third, the subgroup of struggling writers was selected subjectively by teachers. Norm-referenced writing measures or another explicit criterion for this subgroup would provide a more objective selection procedure. Similarly, measures of English proficiency such as the World-Class Instructional Design and Assessment (WIDA) ACCESS assessment score would provide a more objective description of the English Language Learner participants. Finally, although 20% of the population of the school from which the sample was drawn was economically disadvantaged, these data were not available for the participants in the sample specifically.

Conclusion

One’s ability to communicate and defend ideas in writing is an important lifelong skill. The message must be clear and cohesive, and the reasons must be well planned. For students who struggle to compose text, technology-mediated writing instruction and interventions should be considered. Technology can mitigate writing challenges and reinforce students’ use of strategic practices throughout the writing process so that their writing is of better quality, regardless of length.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was produced under the U.S. Department of Education, Office of Special Education Programs No. H327S120011. The views expressed herein do not necessarily represent the positions or policies of the Department of Education. No official endorsement by the U.S. Department of Education of any product, commodity, service or enterprise mentioned in this publication is intended or should be inferred.