Abstract

Higher education institutions increasingly expect students to work effectively and critically with multiple modes, semiotic resources and digital tools. However, assessment practices are often insufficient to capture how multimodal artefacts represent disciplinary knowledge in complex ways. This study explores and theorises the design and assessment of students’ digitally mediated multimodal work, and it offers insight into how to effectively communicate expectations and evaluate student learning in a digital age. We propose a framework for multimodal assessment that takes account of criticality, creativity, the holistic nature of these assignments and the importance of valuing multimodality.

Introduction

Multimodal assignments are increasingly part of the landscape of higher education, with educators in many disciplines seeking to scaffold students’ competence and engagement with social, visual and interactive information spaces outside formal education into critical and creative capacities to work with and generate knowledge in formal contexts. Such assessments demand that students cultivate multimodal literacy, which draws upon a social semiotic approach to emphasise how multiple modes (e.g. written words, visual images or moving digital images) serve as socially and culturally shaped resources for meaning making. However, assessment rubrics for multimodal assignments have not always kept pace: teachers may be consciously or unconsciously working with ‘a paradigm of assessment rooted in a print-based culture’ (Curwood, 2012: 232).

Consequently, technical and compositional assessment criteria do not always address the richness and complexity of multimodal work. Without criteria that can account for this complexity, instruction, feedforward and feedback cannot fully support students to develop their communicative capacities for future work in digital spaces. There are implications, too, for the development of assessment technologies and automated agents if significant aspects of what it means to express knowledge multimodally and digitally are underexplored.

This article describes findings from a collaborative project between researchers at The University of Sydney in Australia and the University of Edinburgh in Scotland. Our aim was to develop new insights into the nature of digital assignments and methodologies for their design and assessment. This is particularly relevant at a time when universities are rethinking teaching and learning by offering new opportunities for students to collaborate, innovate with technologies and represent their disciplinary knowledge. The project addressed the following research questions:

How do university students use assessment criteria to understand expectations of their multimodal work?

How do teachers in higher education design and assess students’ multimodal work?

In this article, we draw on interviews with students and a tutor as well as analysis of multimodal assignments in an undergraduate class at The University of Sydney, and we introduce a framework for multimodal assessment that can guide pedagogy as well as future research in this area.

Background

Multimodality and multimodal composition: from theory to practice

Learning in a digital age involves the creation and assessment of multiple, multimodal and multifaceted textual representations (Coiro et al., 2008). The construction of multimodal texts includes decisions related to the presence, absence and co-occurrence of alphabetic print with visual, audio, tactile, gestural and spatial representations (Cope and Kalantzis, 2009). Whilst learning and literacy are still grounded in decoding, comprehension and production, the modalities within which they occur extend far beyond written language (Curwood and Gibbons, 2009). As Curwood (2012) notes, a focus on the meanings of multimodal student texts has been a central emphasis of work in this area, but there is still a need for more nuanced understanding of the ‘complex ways in which technical skills, composition elements, modes, and meaning interact’ (242). Greater attention to materiality, including artefacts (Pahl and Rowsell, 2011), movement (Leander and Vasudevan, 2009) and place (Ruitenberg, 2005) enriches this understanding, and this attention is beginning to emerge in discussions of multimodality. As Leander and Vasudevan (2009) argue, ‘the multimodal production of culture [is] characterised by changing dynamics of space and time, dynamics that are changing the meanings and effects of cultural production and distribution’ (130). Consequently, the inclusion of multimodal compositions in formal learning environments needs to consider how the conceptualisation, design and assessment of such texts shape teaching and learning.

Within higher education, student learning in many disciplines has traditionally been assessed through written compositions and oral presentations, often in high-stakes exam environments. For students, this can lead to disengagement or to difficulty sharing, critiquing and generating knowledge in university settings. For teachers, this presents challenges to their pedagogy, including how they formatively and summatively assess student learning. Multimodal texts are often collaborative in nature and can challenge students to critically consider places and mobilities in terms of their content, representation and audience. Moving beyond an audience of one, such texts offer authentic opportunities for students to engage with disciplinary knowledge in ways that are innovative, creative and imaginative. Burn and Parker (2003) highlight the importance of process as well as product, noting that ‘making the moving image is itself a kind of drama, where the burden of representation shifts between participants in the process of making the film’ (26). They argue that it is essential to attend to the material bodies and movements of the actors, and how the ‘filmmakers themselves are caught up in this social drama, as partial observers, and as improvisatory re-makers, carving out a new version of the event’ (26). A key challenge, therefore, is how teachers can effectively assess students’ multimodal, digital and collaborative compositions.

Digital assessment within higher education

Higher education institutions around the world are increasingly incorporating digital tools, spaces and resources, including in assessment tasks, to support teaching and learning and to prepare graduates to work with technology, engage with multimodal artefacts and be leaders within their respective fields (Adams Becker et al., 2017). Many teachers in higher education are seeking new approaches to incorporating technology into disciplinary learning, assessing collaborative, digitally mediated work and facilitating student learning across modes, tools and semiotic resources (Haythornthwaite, 2012). For instance, The University of Sydney’s 2016–20 Strategic Plan highlights how the distinctive Sydney education encourages students to engage in ‘advanced-level study in their primary field, with further development of broad skills for critical thinking, problem solving, communication, digital literacy, inventiveness, and collaboration’ (The University of Sydney, 2016: 34) while they learn how to ‘work effectively and critically with new media, tools, and resources’ (58).

In all forms of assessment, clear standards are a key factor in creating effective learning environments in higher education, with grade descriptors, rubrics and exemplars commonly used to increase the transparency of assessment standards and assist students to develop assessment literacy (Price, 2005). There are varying accounts of how students use and perceive these resources. Some find them helpful and are able to use them to accurately assess their peers’ work, to guide and structure their own work, and as a checklist (Bell et al., 2013; Bloxham and West, 2004; O’Donovan et al., 2001). However, other studies have shown that students find the language used in rubrics and grade descriptors to be subjective and vague (Cox et al., 2010). Providing more detailed criteria can paradoxically increase students’ anxieties and ‘lead them to focus on sometimes quite trivial issues’ (Norton, 2004: 693), with some students leaning heavily on rubrics and exemplars as ‘recipes’ (Bell et al., 2013). These challenges may be particularly pronounced when assignments call on digital literacies and multiple modes. For instance, in one study, only 11% of students found that the assessment criteria for the multimodal assessment were clear (Cox et al., 2010).

Methodology

This project analysed the creation and assessment of work in an undergraduate class at The University of Sydney. Approximately 100 students take the class each year, most of whom are study abroad students, and the class runs in each semester as well as in summer and winter sessions. The final assignment is weighted at 20% and involves students using digital tools and multiple modes to reflect their understanding of Australian culture through the creation of short films. The instructions given to students were to work in pairs to create a three-minute film about their Australian cultural experience, including structured narratives, interviews, cinematic elements and a reflective account of the process.

The first stage of the study was conducted over one semester in 2017 with 35 enrolled students. The two Australia-based researchers collected artefacts such as the unit outline, assessment rubric and student films. They also recruited a representative sample of five students to participate in two focus groups, and conducted multiple in-depth interviews with an additional two focal students and an interview with the tutor. In collaboration with the Scotland-based researcher, we thematically analysed the data from artefacts, interviews and focus groups, and we engaged in multiple cycles of coding (Miles et al., 2014) to identify patterns across data sources and develop descriptive codes to clarify and highlight salient themes.

Our analysis led to a redesign of the assessment task and rubric, which was implemented the following semester in 2018 with 27 enrolled students. The second stage of the study involved one focus group with three students and multiple in-depth interviews with one focal student, and a similar process of data analysis. Given the nature of the case study, and the triangulation of multiple data sources, the sample size was sufficient to establish the trustworthiness of the findings. Because we collected students’ films in both semesters, these artefacts allowed for a comparative analysis of the old and new assignments. Ethics approval was granted by The University of Sydney Human Research Ethics Committee, and pseudonyms have been used for the tutor and students.

Student and teacher understandings of a multimodal assignment: a case study

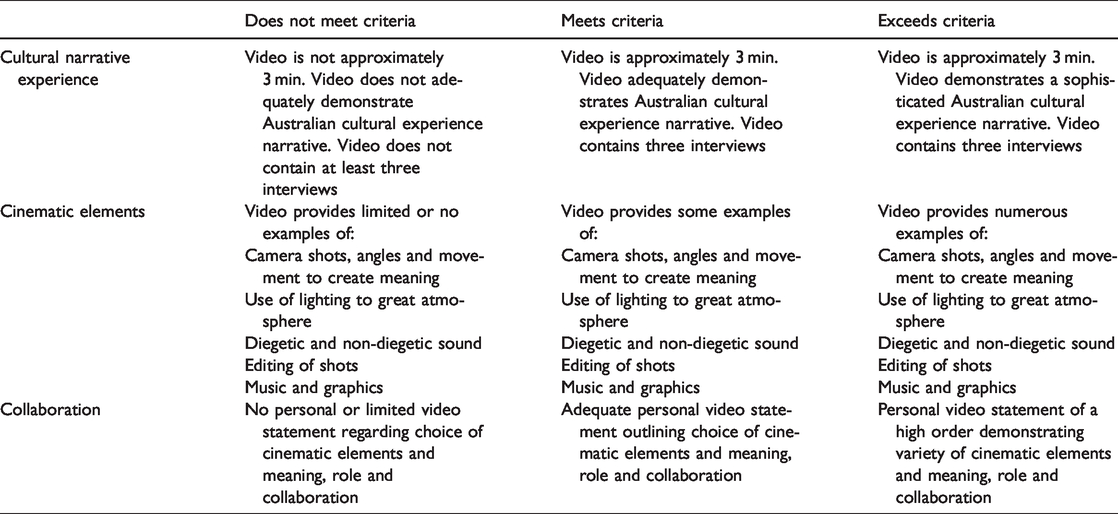

In this section, we focus on the nature of the assignment rubric and how tutors and students engaged with this, drawing on our analysis of interviews, focus groups and artefacts. The original rubric (see Table 1) for this multimodal assignment – a three-minute film – carefully broke down the different elements the students were expected to include in their assignment, under the headings of ‘cultural narrative experience’, ‘cinematic elements’ and ‘collaboration’, with three categories for each criterion (‘does not meet’, ‘meets’ and ‘exceeds’).

Table 1. Smartphone digital project assessment rubric.

The use of these criteria can be characterised as an act of ‘decomposition’ (Bateman, 2012: 18) – where a holistic view of the multimodal artefact is broken down to focus on specific features or compositional elements (for example, use of lighting, diegetic and non-diegetic sound, and transitions). Yancey (2004) warns against using the frameworks and processes of one medium to interpret and evaluate work composed in another. The original rubric sought to capture both the objectives of the assessment as well as the unique features and compositional elements of film.

The adoption and integration of digital tools demand that educators acquire new orientations to time, space, performance, creativity and design (Lewis, 2007). The tutor, Paul, an experienced television producer and educator, described an iterative process for assessing student work that aligned with the rubric but also attempted to achieve a holistic view. First, he watched all videos without making notes, so as to focus on overall impressions and affective aspects. On the second viewing, he took notes according to the rubric and allocated a mark to each film. On the final viewing, he made some adjustments to the marks and added summative feedback as needed. Paul also described how he used his expert judgment in interpreting the rubric:

For example, [one pair] used one interview but used it extremely well. I’m quite flexible and adaptable when it comes within the criteria. If something is absolutely brilliant, of which this one was overall, then I wouldn’t penalise them. They really still came up here in the ‘exceeds criteria’ which is why they ended up getting a high distinction. (Paul, tutor, Interview 1, 2017)

Burke and Hammett (2009) argue that ‘by its very nature, assessing is a political act – an act of power – that is usually carried out by gatekeepers who define and codify knowledge’ (7). Consequently, university tutors and lecturers need to be self-reflective about how they develop, communicate and implement assessment practices and the implications this has for student learning. As Paul observed, ‘In the end, we see how we are all potentially filmmakers these days’.

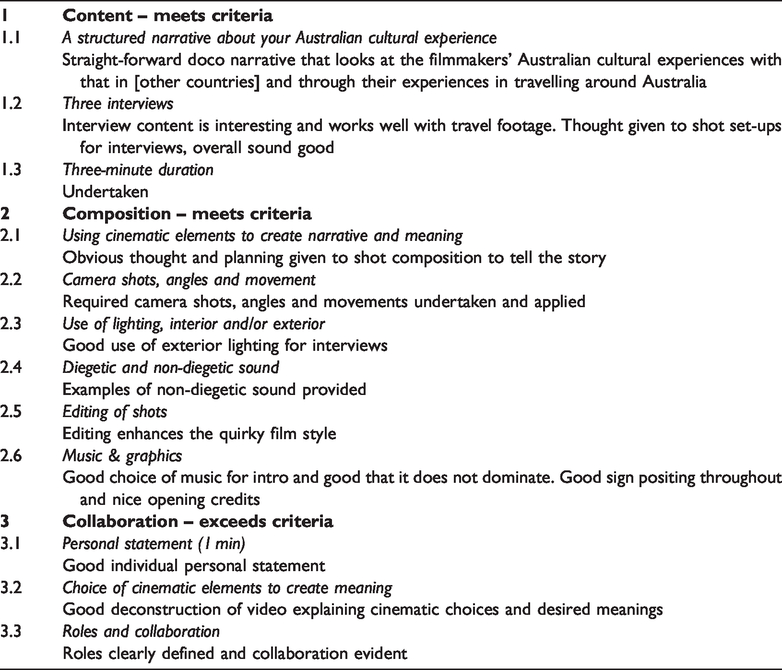

The feedback given to students included brief comments in each of the sections, numbered according to the elements the markers were looking to see. For example, the feedback shown in Table 2 highlights the overall judgment of the marker (meets criteria for content, meets criteria for composition and exceeds criteria for collaboration) and notes how each element was addressed. This assignment received a mark of 15 out of 20, a Distinction, which The University of Sydney describes as being ‘Awarded when you demonstrate the learning outcomes for the unit at a very high standard, as defined by grade descriptors or exemplars outlined by your faculty or school’ (The University of Sydney, 2018).

Table 2. Example of feedback given on smartphone film.

Table 3. Revised smartphone digital project assessment rubric.

The question of what can be contained within rubrics and what, by necessity, goes beyond them in these types of assessments, was a central one for this research. Students described using the original rubric as a guideline and checklist to confirm that they had covered all requirements of the assignment, to give them some structure and to get started:

We knew we needed a lot of cool angles, and different shots, so we started thinking ‘What would be really neat and catching to the eye?’ The thing we struggled with looking at the rubric was the narrative, having a narrative, but everything else we were able to look at and make sure was in the project. (Carla, Focus Group 2, 2017)

The original rubric guided students in the use of discipline-specific vocabulary and highlighted the importance of collaboration in reflecting on the meaning of Australian culture and representing it within a multimodal composition.

Students also felt that the rubric ‘left a lot of room for interpretation’. As Carla added, ‘The Australian cultural experience from the videos [viewed as a class after submission] meant so many different things. I liked that it was open … but then again that’s also the challenge’. Carla’s observation highlights the importance of agency and creativity, but a tension exists with the tutor’s responsibility to communicate expectations and fairly assess student learning. One student noted:

When it says ‘the video demonstrates a sophisticated Australian cultural experience narrative’, I don’t really know what [the tutor] means by sophisticated. Personally our project was more humorous, I don’t think you’d look at our video and say ‘That’s a sophisticated piece of art’. … But I still got really high marks on my assignment, and so really vague words like ‘sophisticated’, I think really limits people’s creativity. … [Students] don’t exactly know what [tutors] want. (Sarah, Focus Group 1, 2017)

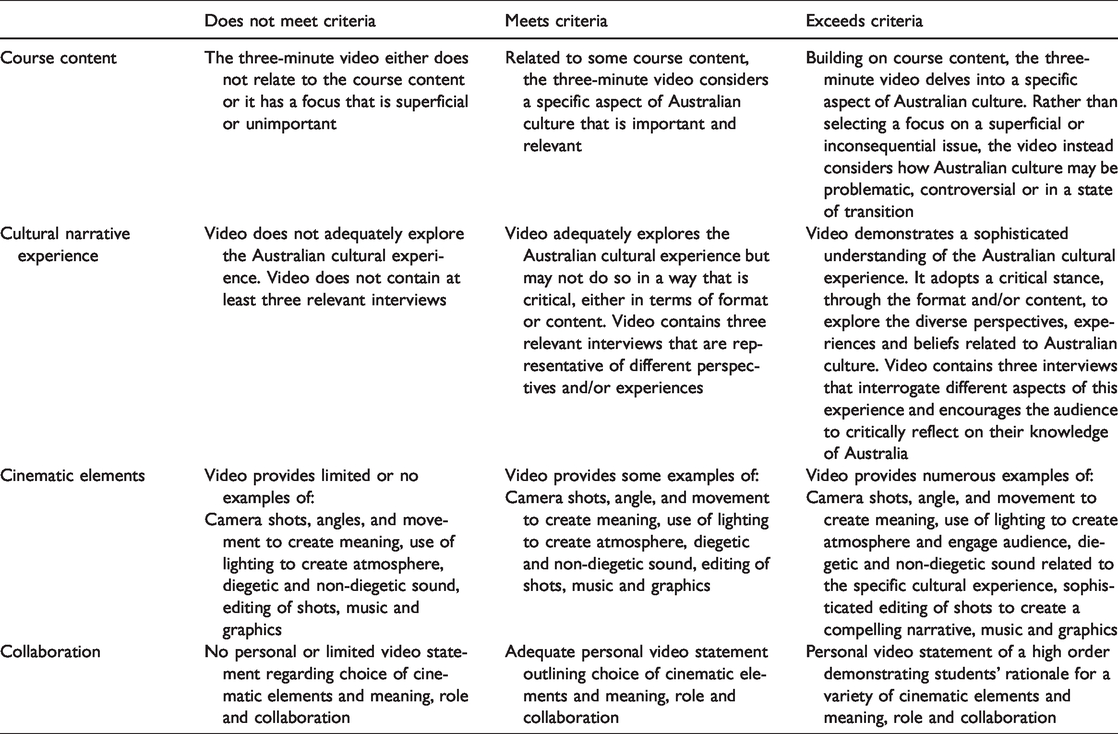

Based on our analysis of data sources, and our conversations with the tutor, we implemented a revised rubric in 2018 (see Table 3). We considered how the assignment could capture more nuanced elements, including how compositional choices build or create tensions with the narrative, how multimodal elements can critique or oversimplify cultural meanings and how multimodal arguments are constructed within the context of short films.

The revised rubric had a new category: Course Content, which focused on what the tutors expected to see from the content of the video itself. It also added concepts of criticality to the cultural narrative experience criteria and highlighted how this could be expressed through form, content or a combination of the two. Finally, it emphasised that the technical elements would be assessed in terms of their meaning-making effects.

Reflecting on using the new rubric to plan her video project, one student commented that she was concerned that her idea (to focus on the differences in language use between her country of origin and Australia) might not consider ‘how Australian culture may be problematic, controversial or in a state of transition’:

It’s not like the language barrier is the worst thing on the planet, it’s not necessarily a massive issue, and it’s not probably a nationwide issue, it’s pretty specifically for, what my experience is, study abroad students. I work at [a call centre] so I’m on the phone with Australians. So in that way it’s not really a massive deal. I’m a little bit nervous on how I’m going to connect that. (Katrina, Interview 1, 2018)

Like students from the previous semester, Katrina was attuned to the assessment criteria, and these were asking her to consider something that the previous students had not been explicitly asked for. As the conversation with the interviewer continued, she began to discuss not only differences in terminology, but also the way that language might convey different attitudes to political and social issues, how these might be confronted and the weight that personal experiences should have in understanding such differences. Ultimately, she and her partner decided to focus on unanticipated communication challenges, in part because they felt they needed to go ‘deeper’:

We really looked at this first [criterion]: consider how Australian culture may be problematic, controversial, or in a state of transition. We looked at that a lot to try and find – because what we were saying before about general language. It’s a problem but it’s not specific enough. So that’s why we decided to go a bit deeper. (Katrina, Interview 1, 2018)

Katrina and her partner also moved from a plan for a scripted film to one based on interviews, to allow for more richness and surprises in the responses from their interviewees. The video form ended up feeling constraining:

I wish I had a longer time or a paper to get into it, because this is my favourite thing we’ve done in this class so far, getting into this conversation. Being forced to talk with an Australian and my American friend and have this very upfront conversation that we all kind of talked about casually [before this project]. But to actually deeply talk about it was really interesting. (Katrina, Interview 1, 2018)

It emerged, though, that for Katrina, the new criteria were not matched by attention in class and tutorials to what it might mean to explore a topic of personal interest or experience in a way that reflected the critical lessons about Australian culture they were seeing in the films and theatre on their course. She felt that Australian classmates were in a stronger position to be able to reflect critically on their own culture, and that others may have needed more help and support. In his interview, however, Paul emphasised that ‘there are no right or wrong answers’ and he elaborated, ‘It is their story that they’re telling, so it really is, in terms of content, how they’ve been able to really reflect on and explore cultural diversity in Australia’.

This helps illustrate in practice that developing approaches to multimodal assignments along the lines proposed in the next section makes demands on the module – perhaps the programme – as a whole. Moving against ‘decomposition’ in assessing multimodal work, and developing students’ multimodal assessment literacy, is a major undertaking that should not be underplayed. Emphasising criticality, creativity and a more holistic view of the relationship between form and content may have consequences for the kinds of support students need at all stages of their learning. We now go on to explore these dimensions in more detail, as we introduce and discuss our multimodal assessment framework.

Multimodal assessment framework

Our study reveals the complexity of multimodal assignments and the need for criteria and processes that can account for this. Students need support to develop multimodal assessment literacy, which involves making meaning with diverse semiotic resources and multiple modes, parsing rubrics and criteria, understanding the assessment outcomes and how to meet them and also understanding this process as a dialogue rather than a fixed and objective measurement.

The four dimensions of our framework are intended to support teachers to develop criteria for assessing multimodal work. These dimensions are criticality, cultivating creativity, taking a holistic approach and valuing multimodality.

Form, as well as content, is a vitally important site of criticality in multimodal work. We need to consider how to support our students to create a ‘multimodal argument’. Fostering students’ creative dispositions and agency is a key benefit of introducing multimodal assignments, but these must be carefully designed to support such development. There is tension between constraint and creativity that can be developed constructively, and teachers should be attuned to how creative constraints are operating in the assignments students produce. The intra-action of form and content must be recognised in the assessment process, and teachers must seek ways to look holistically at multimodal assignments and to explore with students what this means in practice. Last but not least, teachers have to consider what they are asking students to do, and how to value it appropriately. A multimodal assignment is not a throwaway task. It often involves substantial learning, work and creativity and its weighting within the course – in terms of time and assessment – needs to be carefully considered.

We now explore each of these dimensions in greater detail.

Criticality

Digital assignments that include multimodal elements such as sound, image, hyperlinks and navigation need attention to how those different modes, separately and in interaction, contribute to an argument. We need to apply the same level of critical engagement to use of image, sound and other elements as we do to the words in a digital assignment. For students, this means considering their choices on an aesthetic and technical level but also in terms of the ‘larger trajectory’ of the text they are constructing (DePalma and Alexander, 2015: 196) and the genres they are employing (Williams, 2016). Images, for example, do not merely illustrate a point made in text but contribute to the overall meaning of the work (Archer, 2010).

Images chosen or created without careful consideration of their impact may serve to weaken a scholarly argument by inadvertently contradicting or oversimplifying it. A critical use of multimodal elements in an assignment can add nuance, challenge assumptions and contribute new perspectives to a narrative. As Yancey (2004) notes, there is a need to consider the work that different aspects of the assignment are doing, and the coherence between modes. Where there is dissonance, students should be deliberate about this, even while they recognise the ambiguity that non-textual elements can introduce (Gourlay, 2016).

For teachers, developing approaches to assessing multimodal assignments requires consideration of how to guide students to think critically about their choices. Assignment descriptions and rubrics need to convey how technical and narrative elements work together to construct an argument. Judgements about the quality and criticality of the argument should be foregrounded, and the technical and narrative elements understood as contributing to this. The rubric has to be usable by students as more than a checklist; it should be a resource that can scaffold ‘student understanding of complicated concepts and complex conditions’ (Bowen, 2017: 710) and recognise ‘the risk taken in compelling texts’ (Charlton, 2014: 33).

Cultivating creativity

Creativity is now recognised as one of the most important skills for contemporary learners, who live in a complex and often unpredictable world (Gibson and Ewing, 2011; Jefferson and Anderson, 2017; Sawyer, 2012). Creativity involves the ‘construction of personal meaning’ (Runco, 2003) and can be conceptualised as ‘a form of knowledge creation’ (Craft, 2005). Notably, one of the major aims within the Melbourne Declaration on Educational Goals for Young Australians is for students to become ‘confident and creative individuals’ (Ministerial Council for Education, Employment, Training and Youth Affairs, 2008: 9). Jefferson and Anderson’s (2017) 4Cs model of education posits that creativity, critical reflection, communication and collaboration are central to learning experiences. They argue that without these skills, students are ill equipped to survive, let alone thrive. Despite research and policy that supports the centrality of creativity to learning, education agendas that emphasise standardisation and accountability can serve to undermine the cultivation of creativity in formal learning contexts.

In a working paper from the Organisation for Economic Co-operation and Development, Lucas et al. (2013) identify five core dispositions for the creative process: persistent, imaginative, collaborative, disciplined and inquisitive. With that in mind, how can educators design assessments that nurture students’ creativity and encourage their engagement with multimodality? Without disregarding the imperative of fostering creativity, Ferrari et al. (2009) assert that innovative teachers provide a:

nurturing environment to kindle the creative spark, an environment where students feel rewarded, are active learners, have a sense of ownership, and can freely discuss their problems; where teachers are coaches and promote cooperative learning methods, thus making learning relevant to life experiences. (22)

Within the classroom, teachers must design learning opportunities that allow students to cultivate core creative dispositions, exercise agency, engage in creative processes and produce innovative artefacts, including through multimodal assessments.

Holism

Form and content intra-act to deliver the impact of multimodal work. The various elements of multimodal work (for example, images, music, voice and written words) combine to form a total effect that has an impact on the assessor and/or audience. We encourage educators to consider how to preserve the aesthetic judgment inherent in multimodal composition. Rubrics, especially where they specify technical elements, can easily tend towards ‘multimodal decomposition’ (Bateman, 2012). For students, this can mean an inclination to focus on each element within the rubric – ‘following a recipe’ – without enough consideration of the overall piece of work. While this unintended consequence of providing students with a rubric is not unique to multimodal work, it is perhaps writ large when students are struggling to make sense of a complex assessment task that involves several modes.

For educators, even when using a rubric to assess student work, there is an element of overall judgement that ‘involves both attending to particular aspects that draw attention to themselves, and allowing an appreciation of the quality of the work as a whole to emerge’ (Sadler, 2009: 161). For assessors, such judgments are often ‘unconscious when the individual has had significant experience and expertise in making evaluative judgements in a specific area’ (Tai et al., 2018: 472). We therefore need to focus on how to support students to develop the skills to make a holistic evaluation of their work and to understand how it might appear to others.

Since Sadler (1989) wrote about ‘evaluative knowledge’ and ‘evaluative expertise’ 30 years ago, there has been a renewed focus on the need for students to develop ‘evaluative judgment’ – the ability to assess the overall quality of a piece of work (Tai et al., 2018). Tai et al. argue that using rubrics that students have not been involved in discussing, and having rubrics supplant evaluative judgment, are sub-optimal ways for students to develop evaluative judgment. Instead, they propose, and we concur, that rubrics be co-created with students and ‘represent how evaluative judgments are actually made in the particular discipline’ (474).

Valuing multimodality

Designing, supporting and assessing multimodal work, and understanding and creating multimodal assessments, is complex for both educators and students. Such complexity needs to be valued accordingly in the curriculum and in workload models. Multimodal assessments are sometimes viewed by students as relatively small and inconsequential parts of the class, particularly if the assessment value is low in comparison to more traditional assessment forms, such as essays or exams. This led us to ask: Would students value multimodal assessments more highly if they were more central to how they are evaluated on content knowledge? And how can university teachers build iteration into multimodal assessment, so that students can learn from and expand on their multimodal work?

As educators, we can help students to value multimodality by emphasising meaning making. This focus on meaning is central to Cope and Kalantzis’ (2009) grammar of multimodality. They highlight five key elements (representational, social, organisational, contextual and ideological) and ask five corresponding questions: ‘What do the meanings refer to? How do the meanings connect the persons they involve? How do the meanings hang together? How do the meanings fit into the larger world of meaning? Whose interests are the meanings skewed to serve?’ (365). By foregrounding the meaning of multimodal texts, Unsworth (2008) and Cope and Kalantzis (2009) examine how meaning is a textual, personal, cultural and critical construction within multimodal texts. While composition elements and technical skills are relevant in such a framework, the focus is instead on the construction and interpretation of meaning through multiple modes and within specific contexts.

Operationalising the framework

For educators whose experience with knowledge representation has been dominated by written and spoken words, the implementation of multimodal assessment challenges their approach to design and evaluation. In order to value the learning and knowing that occur within and through multiple, multimodal and multifaceted textual representations, they must ‘engage students in a critical discussion of the affordances and constraints of modes, mediums, and tools for given purposes’ and work with students to ‘jointly construct formative and summative assessments that can capture the design process, modal choices, and meaning making’ (Curwood, 2012: 242). In that sense, the scope for creativity, criticality and holism within multimodal assessment begins in the discussion, contestation and negotiation of how modes and semiotic resources can afford or constrain knowledge representation.

For this reason, a process of developing a shared understanding of creativity and criticality and what these can look like in multimodal artefacts needs to be part of any teaching and learning process that will lead to multimodal assessment. This may happen at a programme or module level, but it is important that it addresses multimodality explicitly, since, as discussed, multimodal knowledge production requires a holistic approach to both criticality and creativity. Thoughtful use of exemplars can support this shared understanding, but there are pitfalls to be avoided – primarily the risk of students using exemplars as ‘recipes’ (Bell et al., 2013). A recipe-following approach tends to be taken when students do not ‘realise the imprecise nature of standards and construct their own interpretations of standards aligned with that of lecturers’ (Bell et al., 2013: 775). Critical approaches, then, are needed both at the level of assignment creation and at the level of the assessment process itself. Developing these, along with a shared understanding of what is expected, requires discussion and practice. Such practice can take the form of formatively assessed multimodal tasks. On the fully online Education and Digital Cultures module at the University of Edinburgh, for instance, students are assessed in part on a digital essay, worth 50% of the final mark for the course, which requires students to:

‘explore the possibilities presented by digital, networked media for representing formal academic knowledge. … Technical prowess is not formally assessed – we are rather looking for imaginative and rigorous ways of presenting your academic work online, and critical engagement with the course themes.’

This high-stakes digital essay is preceded by several formative tasks, including a visual artefact which is produced and published early in the semester for other students, and tutors, to discuss. This gives students the opportunity to practise representing knowledge in visual ways; importantly, formative tutor feedback also gives them insight into the kinds of considerations their tutors make in relation to creativity and criticality, and what they expect to see. Through discussion, teachers and students explore how meaning is expressed and negotiated in multimodal work.

More ambitious approaches are also possible – for example, co-constructing a rubric, or iterative development of a multimodal assignment with rounds of feedback and further development. These and other methods of developing shared understanding are time-consuming but may be particularly appropriate for programmes making widespread use of multimodal assessment.

Conclusion

Digital practices help to bridge the gap between academic knowledge representation and the creative, personal and highly social modes prevalent in web-based communication. For students and prospective students, this gap can lead to a lack of confidence in their ability to share, critique and generate disciplinary knowledge. This research explored methods for more engaging, imaginative assessment of student understanding and knowledge, using multimodal approaches. As universities increasingly emphasise the incorporation of technology to enhance teaching and learning, our research highlights how this impacts the assessment of work that occurs across modes, tools and semiotic resources. The four dimensions of our multimodal assessment framework – (a) criticality, (b) cultivating creativity, (c) holism and (d) valuing multimodality – provide some guidance for educators who are designing, supporting and assessing multimodal work. Future work will advance the theoretical and methodological dimensions of place-based multimodal composition across formal and informal learning contexts in higher education, the cultural heritage sector and schools, seeking to develop the ideas from the current collaboration and understand their application in different learning settings, with different age groups, and across discipline areas.

Supplemental Material

Supplemental material, sj-pdf-1-ldm-10.1177_2042753020927201 for A multimodal assessment framework for higher education by Jen Ross, Jen Scott Curwood and Amani Bell in E-Learning and Digital Media

Supplemental material, sj-pdf-2-ldm-10.1177_2042753020927201 for A multimodal assessment framework for higher education by Jen Ross, Jen Scott Curwood and Amani Bell in E-Learning and Digital Media

Footnotes

Acknowledgements

The authors would like to dedicate this study to the memory of Peter Wasson, an incredible mentor, teacher, and friend. They are also grateful to Siân Bayne, James Lamb, and Yi-Shan Tsai for their helpful feedback on an earlier draft of this manuscript.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was supported through a Partnership Collaboration Award from the University of Sydney and the University of Edinburgh.
