Abstract

This article investigates open and participative development (e.g. beta testing, Kickstarter projects), i.e. extended prototyping, in digital entertainment as a potential source of insights for instructional interventions. Despite the increasing popularity of this practice and its potential implications for educators and instructional designers, little effort has been made to shed light on the topic. This study aims to address this gap by building a bridge between Instructional Design and Game Design through an empirical inquiry. A total of 130 subjects (beta testers and Steam Early Access and Kickstarter users) completed a quantitative questionnaire about their contribution to instances of open development. Behavioral patterns, effective policies and management practices, and subjective profiles and opinions were gathered and tied to instructional design models and concepts. Results point to successful techniques for designing and applying such a process, and also highlight mistakes and unproductive tactics. To summarize, instruction can take advantage of increased participation by targeted audiences/learners in its development phases. Benefits span transparency, engagement, and commitment. However, poor communication and incoherence between testing and the final product may weaken the overall outcome.

Introduction

Instructional design (in the following, ID) can be described as the process through which an instructor plans an educational intervention to lead students toward specific learning goals. Game design (in the following, GD) might be depicted as the technique for building an engaging ludic experience. Over the last decades, these two approaches have thrived, informing teaching strategies and engaging activities, respectively. It can be argued that both have achieved considerable maturity and a well-established relation with media and technology. Moreover, although their paths seem to diverge, they share more than just the term design. Indeed, several concepts and models are shared between the two disciplines, and insights can be transferred from one to the other (e.g. rapid prototyping, user-centered design). This interplay suggests that digital entertainment trends can provide stimuli to instructional designers and vice versa. Merrill and Willson (2007: 435) observe that “more could be done to apply gaming and simulation principles to instructional design,” and we are only beginning to explore how gamification trends (i.e. applying ludic mechanics to nonescapist settings) and game characteristics can fit into ID (Landers, 2014). Moreover, instructional designers often make mistakes in harnessing game dynamics, from avoiding playtesting to missing the link between ludic rules and learning goals (Boller and Kapp, 2017).

The objective of this article is to contribute to such a potential synergy by adopting an alternative perspective, i.e. looking at the game industry as a possible repository of stimuli. Specifically, the focus is on examining a widespread practice in game development, i.e. participative phases in which players are actively involved (e.g. on online platforms like Steam Early Access or Kickstarter), and on reflecting on its potential implications for ID. To reach this goal, n:130 subjects were recruited for a quantitative survey about perceptions, best practices, and issues in experiencing such a bottom-up phenomenon, which can be interpreted as “extended prototyping” (or “open development”) aimed at improving a final product or intervention. Theoretical references follow a multidisciplinary approach spanning GD, game studies, ID, and media studies.

The article is structured as follows: the first section uncovers bridges between ID and GD and addresses bottom-up development as a potential crossroad. The second describes the research design and the third illustrates the results. Finally, the fourth presents the discussion and conclusion, highlighting implications, limitations, and possible steps forward. To summarize, extended prototyping can play a significant role in education by harnessing its debugging and open orientation. However, to maximize the outcome, learners should be highly motivated and continuously supported by content providers in a bidirectional exchange.

Seeking a crossroad between instruction and gaming

ID and GD

ID is a “systemic process, usually conducted by a team of professionals (…) [that] involves analysis of a performance problem, and the design development, implementation, and evaluation of an instructional solution to the problem or need” (Gustafson and Brunch, 2007: 14). ID (and technology) addresses the evaluation of performance problems and learners, and then designs, develops, and tests an array of instructional and noninstructional interventions aimed at solving performance issues. The leading premise is that instructors are active designers rather than just content deliverers; therefore, they are asked to plan, shape, and craft curricula iteratively while trying to satisfy the students’ needs (Brown and Green, 2011; Reiser, 2007: 7; Wiggins and McTighe, 2005: 13). To summarize, instructional designers aim to create, handle, and finalize a learning process through which specific goals are set and (hopefully) reached. Usually, a list of objectives is set first, then assessment modalities are designed, and proper educational strategies follow (e.g. see the Understanding by Design (UbD) model by Wiggins and McTighe (2005)).

Several models have been developed to guide instructional interventions, from the well-known ADDIE (Molenda, 2003) to the systems approach (Dick and Carey, 2014) and Kemp (Morrison et al., 2010) models. In addition, ways to visualize thinking and differentiate between multiple types of content and practices have been advanced (e.g. Ritchhart et al., 2011; Wiggins and McTighe, 2005). ID embraces different models and approaches with a broad range of scope and specificity, with an increasing emphasis on flexibility and adaptability (Gibbons et al., 2014). The increasing influence of constructivist theory has led to open approaches such as the so-called research design, which harnesses educational proto-theories (emergent, developmental theories) in its own development (Jonassen et al., 2007: 48), and grounded design, i.e. an open-minded approach that combines multiple perspectives, frameworks, and methodologies (Hannafin and Hill, 2007: 56). This openness is especially evident in how the field embraces suggestions from other disciplines. For instance, rapid prototyping, i.e. a production approach based on increasingly developed prototypes from the initial idea to the final product (Brown and Green, 2011), is a technique widely adopted from software development. It implies a progressive process that overturns the classical design/production sequence in order to save time, prevent high-cost revisions, and better match clients’ expectations and needs.

GD can be described as a creative process aimed at involving potential players in a ludic system. Swain (2008: 119) considers “Game design (…) the art of crafting player experiences,” and this view can be found in other definitions (e.g. Bateman and Boon, 2006: 4; Fullerton, 2008: 115; Sylvester, 2013: 4). Several game designers have advocated for the centrality of iteration, i.e. developing and trying increasingly advanced versions of a game until the designer’s criteria are met (Fullerton, 2008: 14), within this creative process. This ongoing formative evaluation has been highlighted as an essential strategy to refine a product (Schell, 2008; Swain, 2008), and prototypes play a key role in such an approach (Brathwaite and Schreiber, 2008), with rapid prototyping considered an essential step in testing cycles. Flanagan (2009) attaches the same importance to reaching a perfect match between core design elements and game mechanics (i.e. the game rules and processes) (see also Adams and Dormans, 2012; Sylvester, 2013).

Similarly to ID, GD is often open to other domains, from architecture (Adams and Dormans, 2012; Schell, 2008) to education. The latter domain in particular is increasingly associated with video games and ludic mechanics, which can provide significant opportunities for fostering learning, metacognition, and critical thinking (Gee, 2007; Merchant et al., 2014). Mirroring the most recent approaches in ID, GD for learning is embracing insights from constructionism by highlighting the shared dimension of play (Egenfeldt-Nielsen, 2007). Indeed, this medium has been shown to improve collaboration, prosocial attitudes, and metacognition (e.g. Badatala et al., 2016; Dale and Shawn Green, 2017).

Such a potential goes beyond the so-called edutainment titles, i.e. games mainly designed for instructional purposes (Egenfeldt-Nielsen, 2006), and addresses leading genres and practices in the game industry and in game consumption (Gandolfi, 2017; Lombardi, 2012). Indeed, mainstream trends inform current expectations about the medium, and studying them is crucial for understanding ludic engagement and involvement, which are key dimensions to consider if we intend to approach video games with educational aims (Egenfeldt-Nielsen, 2007). The increasing importance of the shared dimension of digital play (Consalvo, 2007) demands closer attention to game communities and environments, in which game (learning) outcomes are framed, shaped, and reformulated (Egenfeldt-Nielsen, 2007; Gandolfi, 2017). This lens implies that players are active and social learners. Therefore, building a bridge between ID and GD means reflecting on the leading game dynamics and processes in digital entertainment itself, even when our objectives are instructional at their core. Such an approach expands the scope with which educators approach video games, going beyond their formal features (e.g. rules, dynamics, mechanics) and targeting topics like (game) production, consumption, and community.

Combining perspectives

Before examining this bond more closely, it is worth mentioning two other similarities between GD and ID. The first is the idea that knowledge can be split into two categories: core and specific. According to Bateman and Boon (2006: 110), the former informs the so-called Tight Design, which “use the minimum quantity of elements required to support the desired gameplay”; neither distractions nor ephemeral details are included in such a category (Sylvester, 2013: 167). The latter is addressed by the Elastic Design, which relies on “having more resources than you need with the express purpose of whittling the set of components down to the best minimal set that the production constraints allow” (Bateman and Boon, 2006: 114). The combination of these orientations is crucial to stage a well-balanced experience (Bateman and Boon, 2006: 116; see also Fullerton, 2008: 198). However, the risks span excessive costs, frustrating experiences, and poorly balanced gameplay (Sylvester, 2013: 332; see also Adams and Dormans, 2012). In ID, we can see this difference in the relation between big ideas and specific information. The former refer to the core of a subject area and need to be uncovered, while the latter concerns secondary details (Wiggins and McTighe, 2005: 65–70). For instance, in learning about the American Civil War, slavery is a big idea because it concerns the main causes of the conflict. By contrast, knowing the second battle won by the Northern army tells us less about the big picture. This distinction can also be noticed in the difference between supportive information and specific learning tasks in the 4C/ID model (Van Merrienboer, 2007: 78) and in the distinction between whole learning problems and single examples (Merrill, 2007: 65).

Second, the user-centered approach is gaining popularity in both fields. Indeed, GD stresses the importance of the player: “the sooner you can bring the player into the equation, the better, and the first way to do this is to set ‘player experience goals’” (Fullerton, 2008: 10). Brathwaite and Schreiber (2008: 2) observe that ideal GD means informing and shaping experiences that players perceive as relevant. In ID, the idea that learning is multiple and variegated rather than sequential and hierarchical is flourishing (Ritchhart et al., 2011: 6–7); Gustafson and Brunch (2007: 14) consider ID “an empirical process that is learner centered and goals oriented.” The research and grounded designs described above highlight the openness of this approach. In other words, the need to listen to final users is becoming crucial. Sugar and Luterbach (2016) interviewed several instructional designers, who reported positive experiences when social connections are established with students and collaboration with subject matter experts, instructors, and clients is included in the design process (see also Reiser, 2017). Digital entertainment is embracing such a trend by developing several models of participative design production, from open beta testing to crowdsourcing. Such processes can be referred to as “extended prototyping.” Several approaches and procedures can be deployed, with substantial use of social media for communication between producers and testers. More specifically, we can categorize this phenomenon into two main orientations:

Crowdsourcing: interested users (future players, in this case) economically support the development from the preliminary phases (e.g. on online portals like Kickstarter and Indiegogo) or join an in-progress version of the game (on Steam Early Access). Their role is significant in terms of both funding and feedback.

Testing: developers ask the game community to test a preliminary version of the software in order to refine it. Testing can be alpha (preliminary) or beta (almost finished), and closed or public (the former relies on selected testers and the latter is open to everyone).

Despite the increasing popularity of these initiatives, little effort has been made to understand how testers perceive their own efforts. Banks (2013) suggested that participating players are a complex audience, whose traits go beyond the mere distinction between exploited labor and active involvement, and Consalvo and Begy (2015) showed that the relation between developers and the supporting community is variegated and multidimensional. At the core of audience studies, it has been found that media consumers are moved by different drivers, from interpretative efforts to the need to produce (Couldry, 2005; Gandolfi, 2016). This article aims to shed light on this phenomenon, which is still underexplored, with specific attention to how ID can incorporate its features and processes. Extended prototyping is indeed a thriving technique and affects how playing and game testing are widely perceived; it matches the call for understanding mainstream trends in digital entertainment in order to develop a better educational use of the sector (from production to consumption) (Egenfeldt-Nielsen, 2007). It may be interpreted as a novel way to handle formative evaluation, i.e. the assessment of instructional materials and strategies during their development (Smith and Ragan, 1999), in online contexts and learning.

Research design

According to the previous premises, two research questions lead the present article:

RQ1: How do testers perceive open development and which motivations lead them to be involved? From an ID perspective, which learners can this technique attract and engage?

RQ2: What are the best practices and weaknesses in staging open development sessions? From an ID perspective, what are the parameters to consider for staging a similar formative evaluation?

The present analysis focuses on four types of extended prototyping in gaming, selected for their popularity and mutual differences. The first two are closed and open testing, which the developer usually makes available for download for a limited amount of time. In recent years, millions of players have participated in these sessions (e.g. 6 million for The Division, 6.5 million for For Honor; see https://www.ubisoft.com/en-US/company/investor_center/annual_report.aspx). The former type, which relies on selected testers, tends to let participants assess relevant gaming features; the latter usually comes afterward, is open (and therefore broader), and focuses on more marginal traits. The third is Steam Early Access (in the following, Steam EA); launched in 2013, EA is a specific section of Steam, i.e. an online platform owned by Valve Inc with multiple functions, from online digital purchase to forums and UGC galleries, which counts millions of users (Makuch, 2015). Publishers can sell unfinished versions of their games and ask funders to help them finalize the development process. As of 3 June 2017, almost 1700 games are enrolled in this system at several stages, from rough alpha versions to almost finished products. The fourth is Kickstarter, the most famous crowdfunding platform, with over $3.1 billion in pledges from 13 million backers (i.e. funders) supporting 257,000 creative initiatives. Digital games represent a leading component of this landscape, with 31,628 launched projects and $650.33 million raised (Kickstarter, 2017). Usually backers fund a project and then, if it is successful, they are updated about the development and sometimes asked to give opinions and even to participate in testing phases.

Methods

A quantitative questionnaire (see Appendix 1) was designed to gather testers’, EA users’, and backers’ opinions and statements about their involvement. Aside from sociodemographic information (i.e. gender, age, nationality, education, occupation), the survey presented two parts.

The first included four sections about gaming habits and preferences (weekly hours, favorite genre, favorite gaming platform, motivation to play), and then one for gaming expertise (about favorite games, favorite genre, and digital entertainment per se) and affiliation as a gamer. The latter is a scale about gaming identity that has proven reliable (Gandolfi, 2016). It is composed of four items addressing internal attribution, external attribution, priority of the attribution, and sharing of the attribution regarding the word “gamer.” Participants were then asked to complete sections related to their concrete experience with closed testing, open testing, Steam EA, and Kickstarter. For each category, the number of involvements and the most targeted genres and platforms were reported. In addition, motivations to contribute, behavior, differences from nonparticipative players, and community feelings perceived during the support were addressed. The intent was also to understand whether we can refer to a “community of practice” (Wenger, 1998), i.e. a proactive learning environment based on a shared domain, a strong community, and reiterated practices (Buysse et al., 2003). Subjects who reported good-high scores in stating that testers are unlike common gaming audiences were asked to write down the reasons. This first part aimed to understand testers’ characteristics and attitudes toward the domain (in ID, the content taught) targeted by their support. The objective was to address RQ1 by framing them as potential learners during a formative evaluation.

The second part targeted RQ2. Orientation, relation, and experience in testing were assessed (each with seven items) to uncover the bottom-up support itself. The first parameter concerned the perceived utility of such an effort, spanning refinement, fixing, and innovation; the second regarded the relation with developers, which is indeed highly debated; the third focused on the experiential orientation of the activity. The rationale was to understand the main traits of extended prototyping, shedding light on how subjects perceived their own efforts and dealt with the producers. From an ID perspective, orientation can be tied to the relevance of the problem solved by an instructional intervention; relation may correspond to the one with possible ID managers and staff, who “are responsible for researching, designing, and developing the instructional product” (Litchfield, 2007: 117); experience concerns the affective side (Reeve, 2001) of the learning process, which can vary from engaging to stressful. Finally, participants were also asked about their best and worst testing experiences and titles through a free text entry, in order to acquire further and more spontaneous information.

For items eliciting personal positions, 1–4 Likert scales were adopted to prevent subjects’ tendency to select the midpoint (Marradi, 2007). For the rest (e.g. weekly consumption of video games), a more common 1–5 Likert scale was preferred. Quantitative data were processed with IBM SPSS Statistics, while textual outputs (from open questions) were analyzed with NVivo v.10. For the latter, a “discourse analysis” (Gee, 2012) was carried out to gather the main criteria of difference from other players and the strong and weak points of the targeted experiences. The related coding followed a two-cycle process (Saldana, 2016: 234). First, several tags were applied to map statements in detail (e.g. wrong policies by developers, controversial design choices, single experiences); then, a thematic summary was produced (e.g. coherence, community) to regroup results for a clearer overview.

In conclusion, this inquiry was designed to support an exploratory analysis, i.e. an investigation with “no initial hypothesis, even the initial selection of relevant variables becomes a critical step. (…) [that] aims at summarizing the main characteristics of a given dataset by inspecting it” (Canossa, 2013: 259). Due to the scarcity of studies on the topic, the main objective was indeed to outline a first overview of the phenomenon in order to facilitate further research and more structured arguments.

The survey was distributed online from October 2016 to February 2017 with the prerequisites of age (18 years old or older) and suitability (being involved with at least one of the four types of open development). Social media (e.g. Facebook, Reddit, Twitter) served as the main dissemination channels, with additional use of the academic mailing-list gamesnetwork.com. Moreover, the creative director of a game frequently mentioned by participants was contacted for an e-mail interview (conducted in January 2017) about his experience with Steam EA. The research was approved and monitored by the IRB committee of the author’s institution.
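
As a minimal sketch of how shares such as “good-high scores” could be derived from the four-point items, the following Python snippet groups Likert responses into bands and computes the percentage falling in each band. The cut-off (3 and 4 counted as good-high, 1 and 2 as low-average, on a 1–4 scale), the function name, and the sample responses are assumptions made purely for illustration; they are not taken from the survey data or from the SPSS procedure used in the study.

```python
from collections import Counter


def share_in_band(responses, band):
    """Return the percentage of responses whose value falls in `band`."""
    if not responses:
        raise ValueError("responses must not be empty")
    counts = Counter(responses)
    in_band = sum(counts[value] for value in band)
    return round(100 * in_band / len(responses), 2)


# Hypothetical answers to one 1-4 Likert item (not real survey data).
example_item = [1, 2, 3, 4, 4, 3, 2, 4, 3, 3]

# Assumption: on a 1-4 scale, 3 and 4 form the "good-high" band
# and 1 and 2 form the "low-average" band.
good_high = share_in_band(example_item, band={3, 4})
low_average = share_in_band(example_item, band={1, 2})
```

Because a four-point scale has no midpoint, the two bands in this sketch are complementary; on the five-point items, an “average-high” band would have to be defined separately (for instance as 3 to 5, again an assumption).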

Results

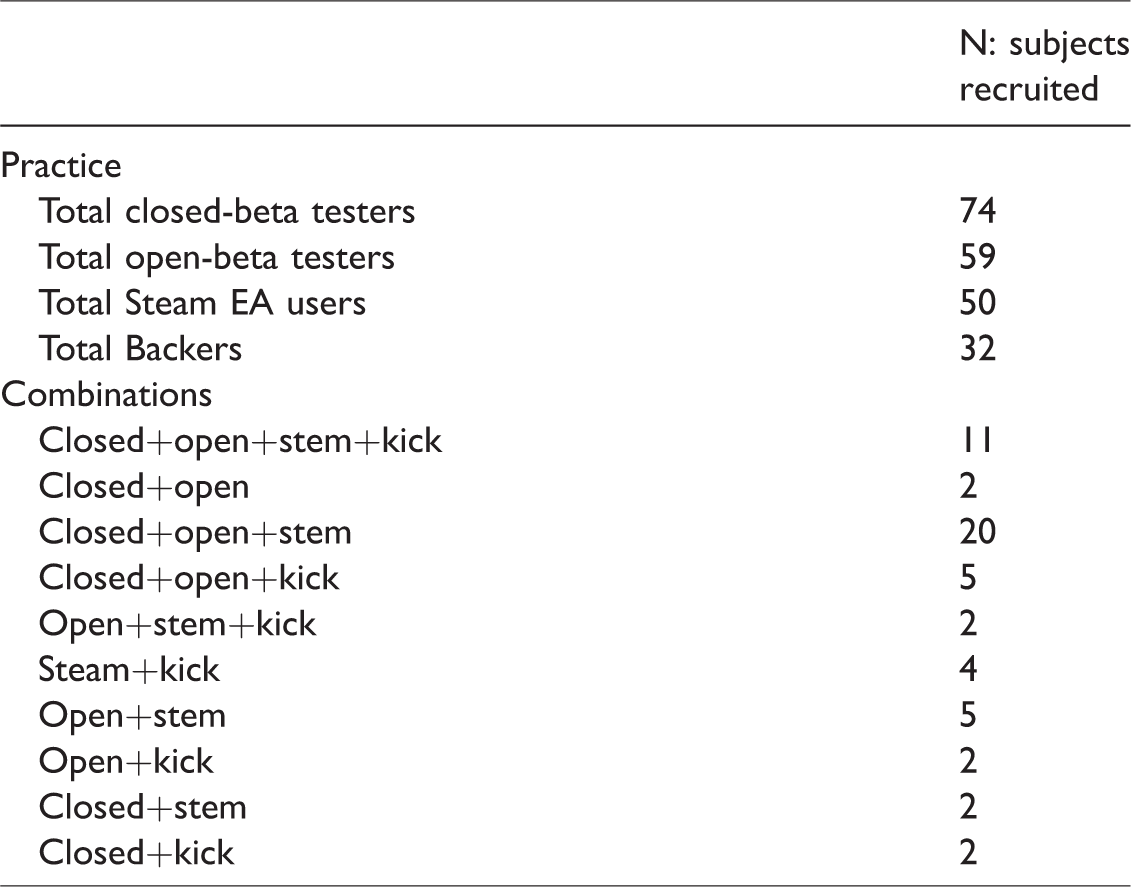

N: 130 participants were recruited (age: m: 29.746; st. dev.: 8.855; n:100 male; n:27 female; n:3 other). Subgroup sizes are reported in Table 1.

Table 1. Sample composition.

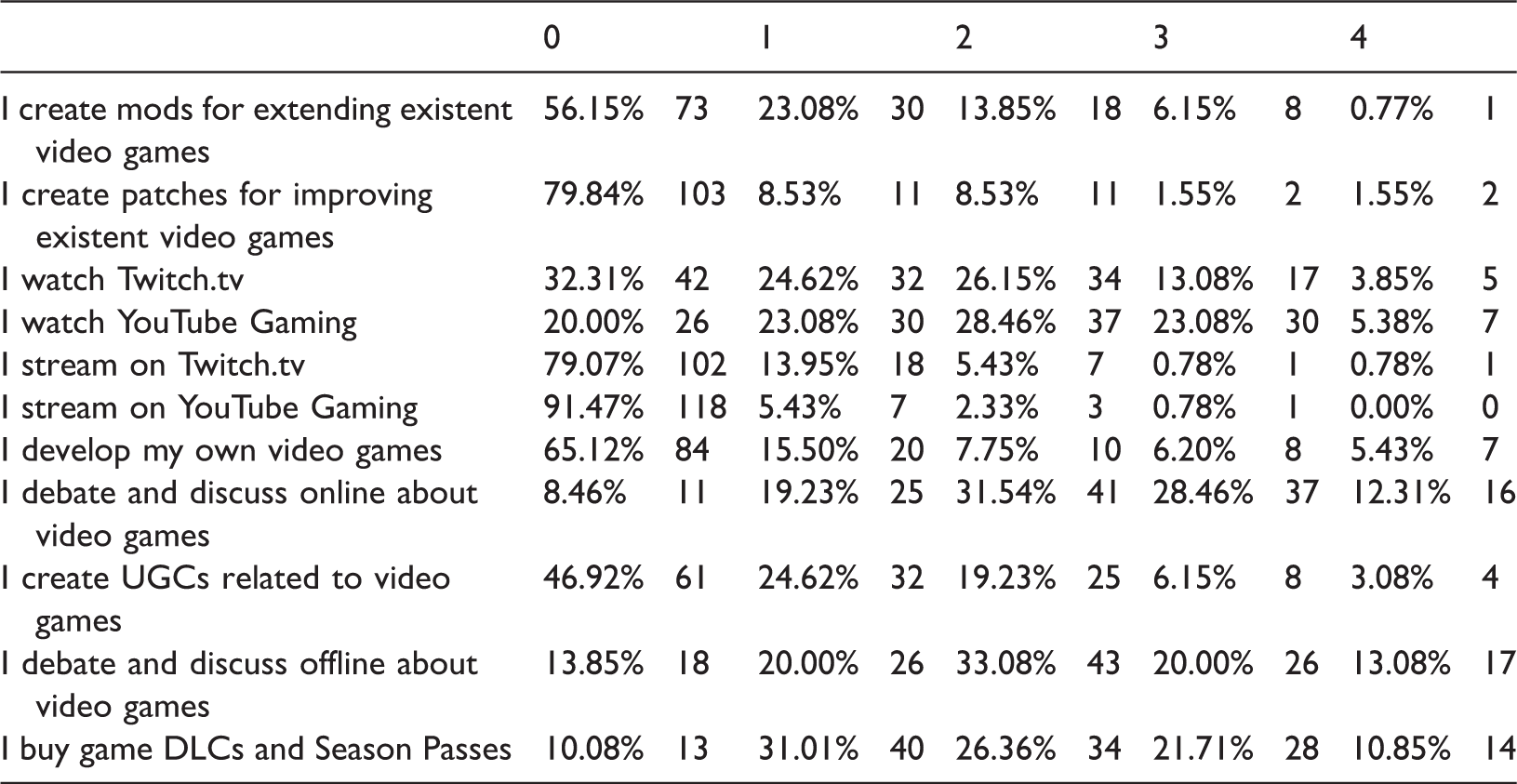

Subjects mainly come from the U.S. (n:45; 34.62%), the United Kingdom (n:16; 12.31%), Italy (n:16; 12.31%), Canada (n:7; 5.43%), and Germany (n:6; 4.61%). Other geographic origins are the Netherlands, Denmark, Switzerland, Sweden, India, Greece, Hungary, France, Malaysia, Indonesia, Norway, etc. The largest group has a College degree (n:40; 30.76%), followed by a Bachelor Degree (n:28; 21.71%), Master Degree (n:23; 17.69%), and High School degree (n:20; 15.50%). The most common occupations include student (n:34; 26.15%); computer and mathematical (n:23; 17.69%); education, training, and library (n:13; 10.00%); and unemployed (n:13; 10.00%). Regarding their game habits, almost half (n:63; 48.46%) of the sample reported more than 11 h weekly. A further n:33 (25.38%) spend 8–11 h with digital games, n:24 (18.46%) spend 4–7 h, and n:10 (7.69%) spend less. Favorite game genres widely span Western Role-Playing Games (RPGs) (n:45; 34.62%), Turn-Based Strategy (TBS) (n:36; 27.69%), and Simulations (n:31; 23.85%). PC is by far the favorite game platform (n:119; 91.54%), while smartphones and PlayStation 4 stop at n:21 (16.15%) each. Regarding the motivation to play, fun (n:79; 60.77%), challenge (n:47; 36.15%), and immersion (n:44; 33.85%) have a crucial influence; other important drivers are dissociation from everyday routine (n:21; 16.15%) and narrative (n:19; 14.62%). Table 2 summarizes the activity outside the game per se. Among the gaming practices, only a minority is actively involved with the development of games, patches, or additional content, but a majority tends to discuss digital entertainment online (72.31% of average-high scores) as well as offline (66.16% of average-high scores), and to purchase additional content (58.92% of average-high scores).

Table 2. Practices.

DLC: downloadable content; UGC: user generated content.

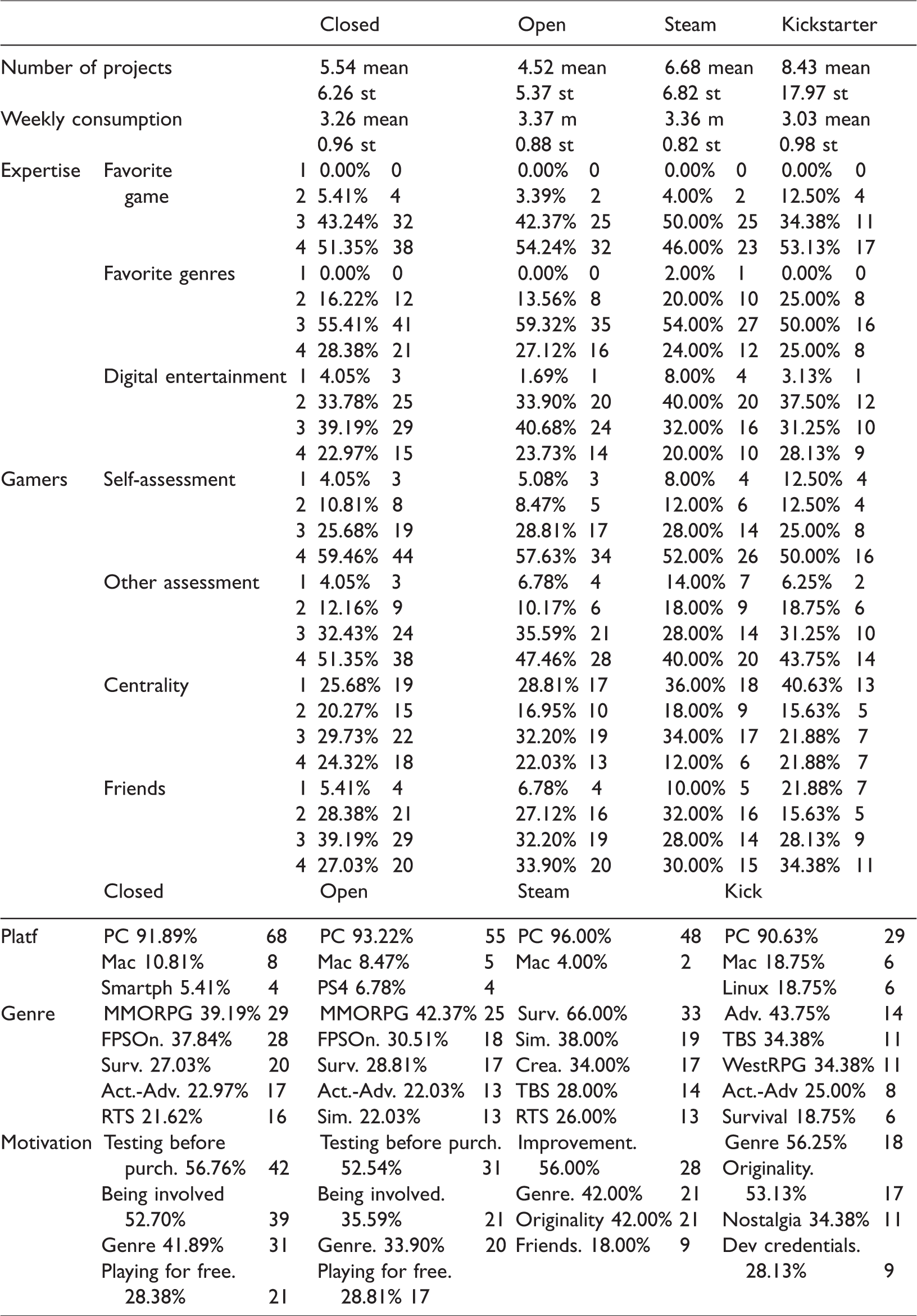

Table 3 reports the first tendencies of the subgroups. The Kickstarter group leads the way in terms of projects funded, despite the considerable standard deviation. Weekly consumption tends to be high across the four categories. A shared expertise in favorite games and genres is easy to notice, with a clear majority of good-high scores. This tendency holds, although more weakly, for expertise about the sector as a whole. The label “gamer” is strongly felt by each category; however, subjects consider its centrality average and sometimes marginal. PC is the reference testing platform, with Mac and Linux machines reported as secondary ones. The targeted genres are common to closed and open testing, spanning MMORPG, online FPS, and Survival games. The latter comes first in Steam EA preferences, followed by Simulations and Creative games. Finally, Kickstarter is practically dominated by old-fashioned genres, from adventures to TBS and Western RPGs. It is interesting to notice how many of these genres are online and multiplayer, pointing to a shared and social testing experience. Closed and open testing are also similar regarding motivation; testing before purchase, being involved, genre, and playing for free are the reference drivers. Conversely, Steam EA attracts users primarily through the opportunity to improve the game, followed by genre, originality of the idea, and presence of friends. Kickstarter is affected by genre and originality as well, but nostalgia and developers’ credentials also play a key role.

Table 3. Results (part 1).

Table 4. Results (part 2).

DLC: downloadable content.

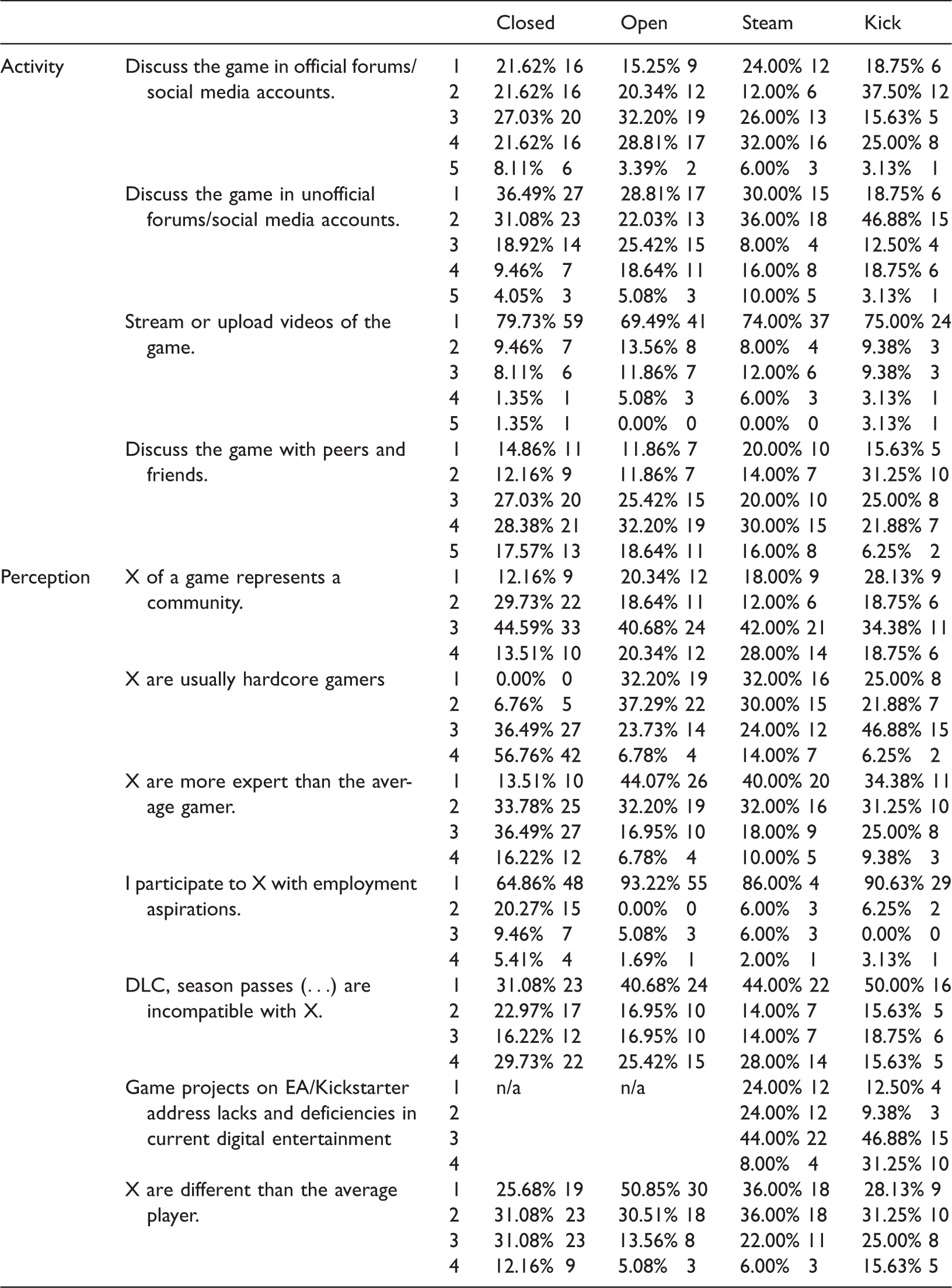

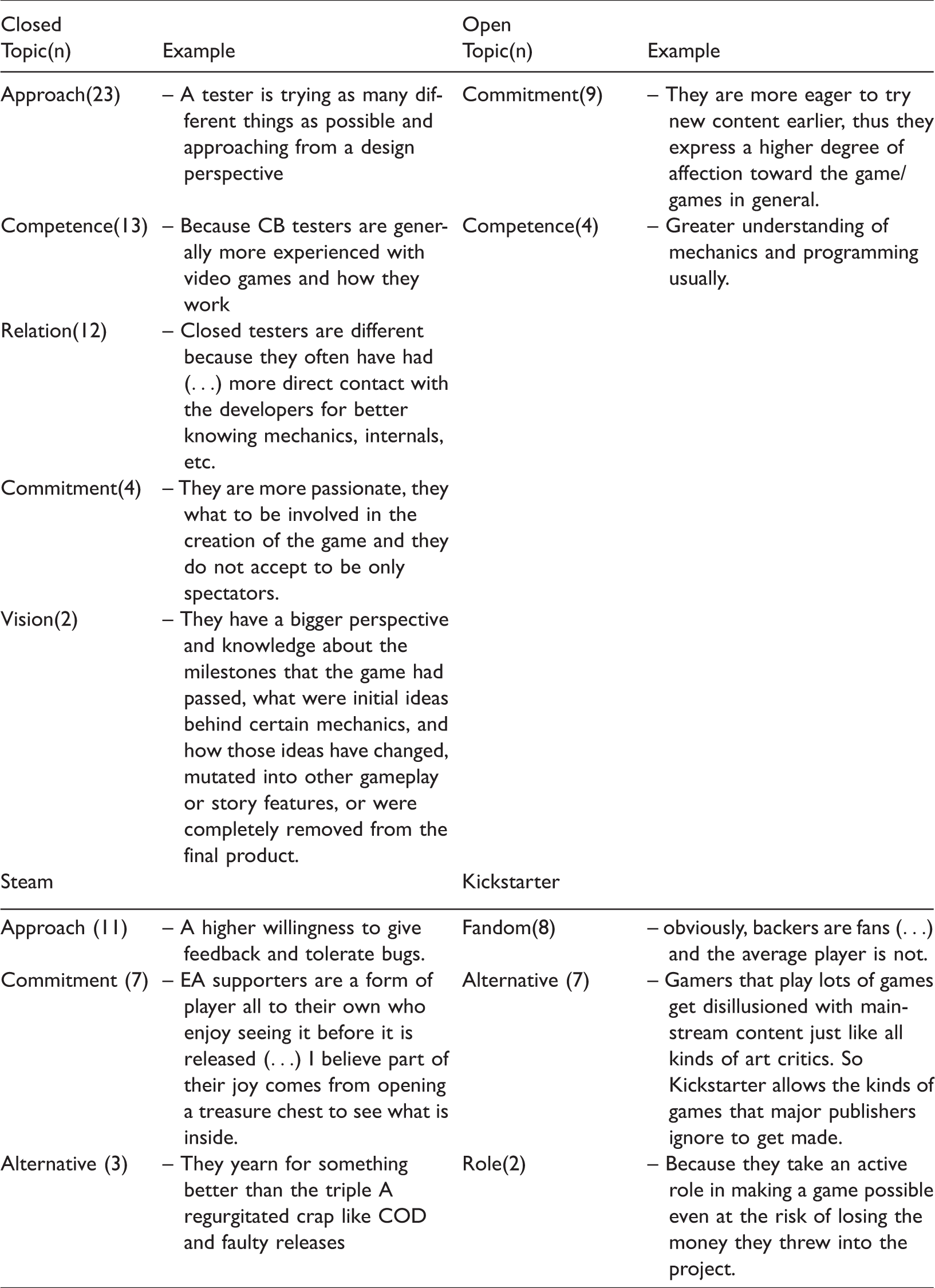

Regarding what differentiates a tester from an average player, Table 5 includes the main criteria of difference reported by the subjects, with main themes (with n: of references) and indicative quotes from the survey. Closed beta testers would be characterized by an analytic approach (i.e. assessing the system, finding bugs and issues, giving feedback), followed by competence (related to the overall experience), relation with the developer, commitment (i.e. passion and attitude toward the project tested), and vision of the whole productive process. Open beta testers share commitment and competence, but not the rest. Especially concerning closed testing, the leading trait is the ability to debug the ludic system and improve it issue by issue. Such a process relies on patience but also on excitement in observing the product getting “better in the future.” Such an opportunity decreases with open beta testing because “most [of them] are a chance to play the game early and input is rare” and “tend to be more stress-tests of the game servers or similar to make sure the game is ready.” Some subjects observe that they sometimes do not participate in open betas “because it is more difficult to communicate with developers, (…) the game is in an almost finished state (…) - while during CB there are usually more margins.” Steam EA adopters seem to have a peculiar approach (similar to the debugging one mentioned above), relevant commitment, and also the willingness to support alternative products. Backers are fans who also want to assist novel games and play a role in the production per se, but their effort is mainly economic.

Table 5. Testers’ peculiarities.

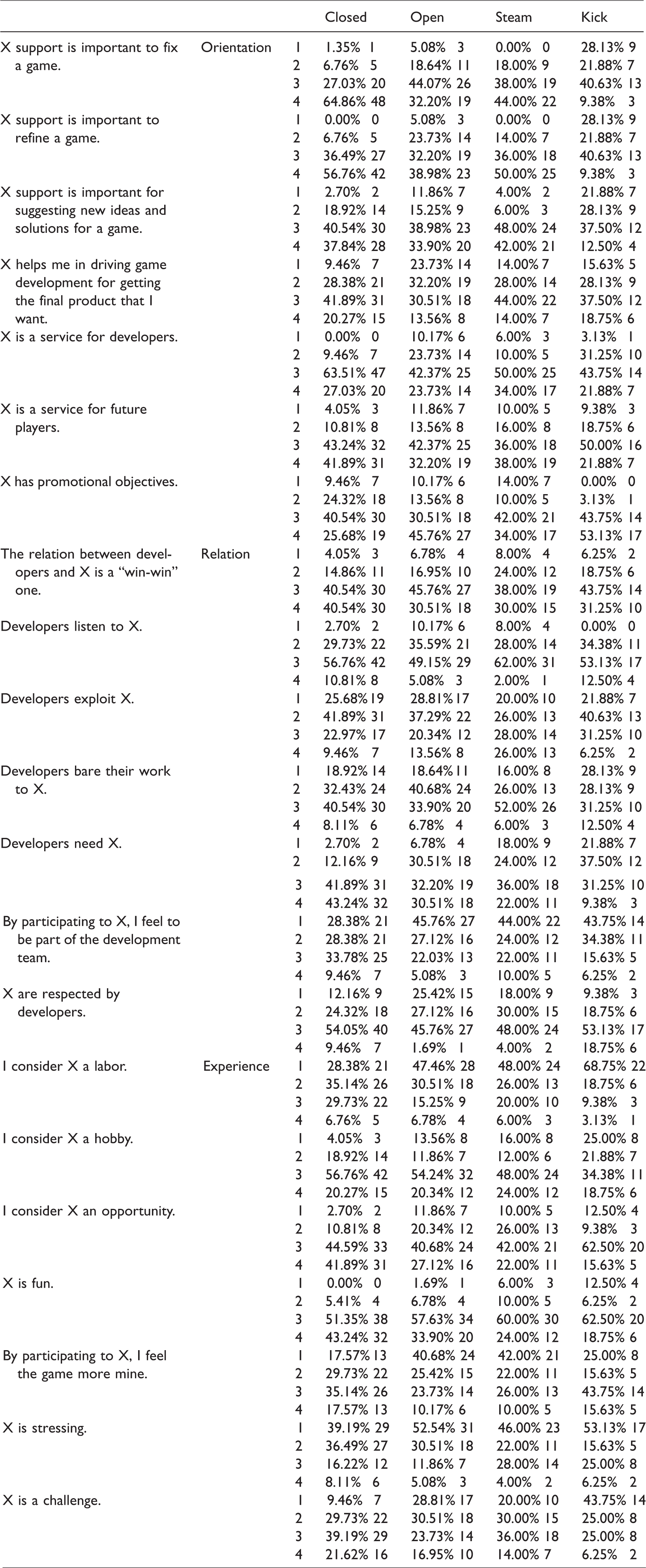

The assessment of extended prototyping per se is presented in Table 6. The orientation of closed testing is broad, from fixing and refining to suggesting innovative ideas and increasing bottom-up participation. Therefore, it is a service for both developers and future players. Open testing is perceived similarly, but with less autonomy for testers in influencing the outcome. The scope of Steam EA and Kickstarter is particularly wide too, but the latter presents lower scores in fixing and refining goals. Regardless, all four subgroups consider their experience affected by promotional drivers. The relation with developers is usually positive, with reciprocal understanding and respect (the only exception is open testers, with 52.54% of low-average scores). Most Steam EA users felt exploited by developers (54% of good-high scores), but also that they bare their work (58% of good-high scores); for the other categories, this situation is reversed. Only among backers does a majority (59.33% of low-average scores) think that developers do not need them, but the feeling of being part of the team is marginal in each subgroup. Regarding the experience per se, extended prototyping is rarely perceived as labor or as a stressful experience. On the contrary, it is mainly considered fun, a hobby, and an opportunity by each subgroup. Only closed testers and backers mainly felt that being involved made the game more theirs, while the challenging dimension was highlighted by EA users and closed beta testers.

Table 6. Perception of the procedure.

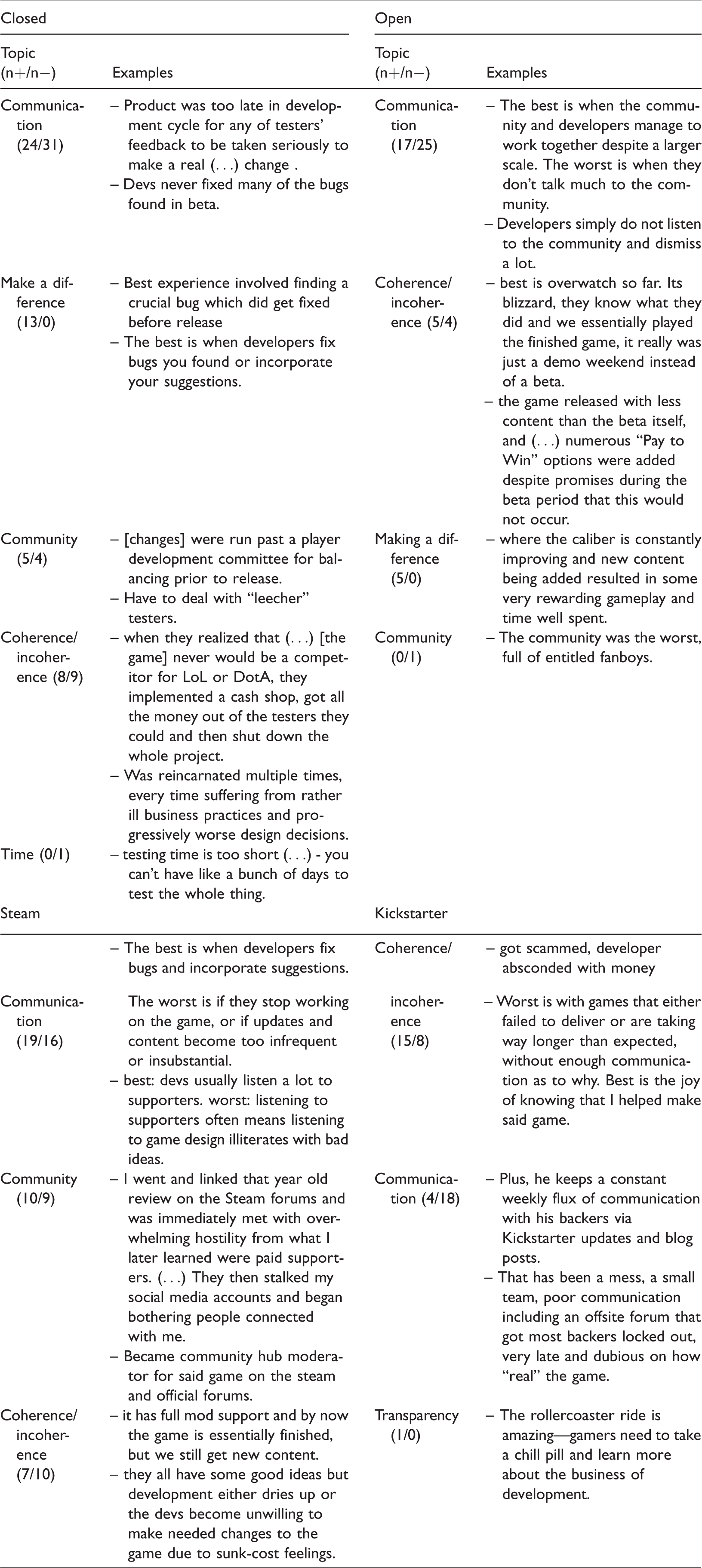

The description of worst and best experiences made it possible to frame the strengths and weaknesses of extended prototyping. Table 7 includes the main highlights reported by participants (main themes with numbers of positive, n+, and negative, n−, references and indicative quotes). The importance of communication is reported across the subgroups, as is the coherence between premises and final development. Therefore, a constant exchange with testers and productive continuity should be a priority in planning such an involvement. This is not a surprise if we consider the importance of the analytic challenge experienced through testing, which requires adequate feedback to be fully realized. Aside from Kickstarter, community plays a key role in both positive and negative terms, while the feeling of making a difference is relevant in closed and open testing. Other insights concern the limited time for closed testing and the opportunity to see the whole process of production associated with Kickstarter.
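
The tallies of positive (n+) and negative (n−) references per theme can be thought of as a simple aggregation over coded statements. The Python sketch below assumes that each statement has already received a first-cycle tag and a polarity, and that a lookup table regroups tags into second-cycle themes. The tags, the tag-to-theme mapping, and the coded statements are hypothetical placeholders, not the coding carried out in NVivo for this study.

```python
from collections import defaultdict

# Hypothetical lookup from first-cycle tags to second-cycle themes.
TAG_TO_THEME = {
    "developer updates": "communication",
    "ignored feedback": "communication",
    "changed design goals": "coherence",
    "helpful forum": "community",
}

# Hypothetical coded statements: (first-cycle tag, polarity).
coded_statements = [
    ("developer updates", "+"),
    ("ignored feedback", "-"),
    ("changed design goals", "-"),
    ("helpful forum", "+"),
    ("developer updates", "+"),
]


def tally_by_theme(statements, tag_to_theme):
    """Count positive and negative references for each theme."""
    tallies = defaultdict(lambda: {"+": 0, "-": 0})
    for tag, polarity in statements:
        theme = tag_to_theme[tag]  # raises KeyError for an unmapped tag
        tallies[theme][polarity] += 1
    return dict(tallies)


theme_tallies = tally_by_theme(coded_statements, TAG_TO_THEME)
```

Keeping the tag-to-theme mapping in one explicit table makes the second coding cycle easy to revise: regrouping a tag under a different theme changes a single entry rather than every coded statement.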

Table 7. Pros and cons.

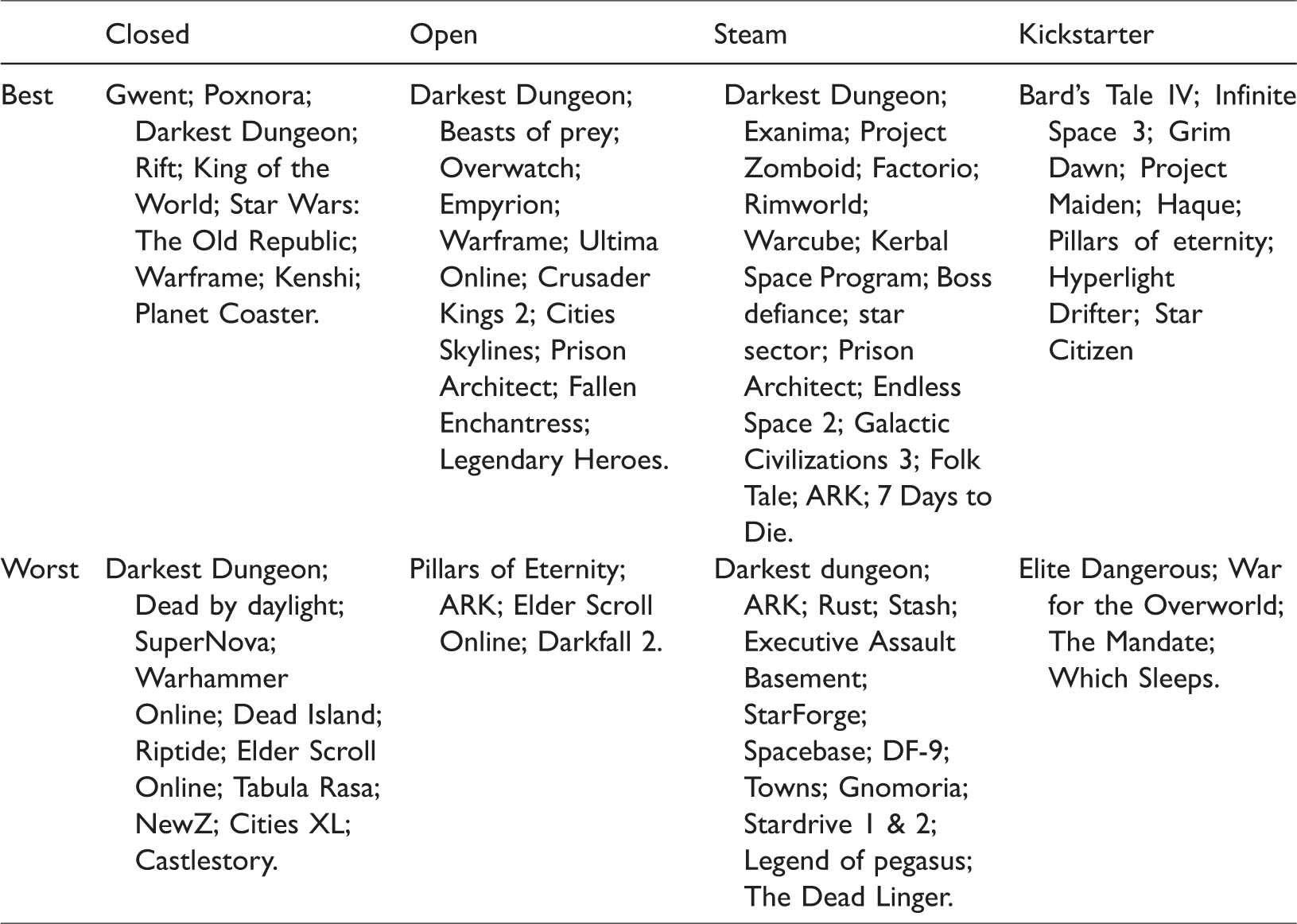

Table 8 lists the best and worst projects reported by the participants. It is quite surprising that some appear in both categories. Darkest Dungeon is one of the most referenced, but Pillars of Eternity and Rimworld were also highly debated.

Table 8. Best and worst experiences.

Prompted by this outcome, the author interviewed via e-mail Chris Bourassa, the creative director of Darkest Dungeon, a turn-based RPG developed by Red Hook Studios and released on Steam EA in 2015. He observed that Steam EA was essential for this game because his team was entirely self-funded and in need of testers: “Early access enabled us to earn a sustainable living while continuing to enhance and grow the game.” The Steam EA community was described as “enthusiastic, excited, and provided us with hundreds of pages of constructive critiques, feedback, and observations.” Moreover, the first testers served as the “bedrock of our community,” supporting and helping new players. The range of refinement was broad but especially focused on balance, tuning, and bugs, which are usually hard for a small software house to detect. In order to handle such a number of inputs, the studio hired a community manager, whose job is to monitor Steam forums, Reddit, Twitter, and Facebook and to select promising insights to share with the rest of the development team. “We try to address as many issues as we can, but we don’t always take feedback at face value (…) we use the community feedback to identify areas of concern - for example, a heated debate over a hero’s skills usually means something is either out of whack, or not being communicated clearly enough.” Bourassa and his colleagues speak positively of Steam EA. Consistent with the survey results, he claimed that “Regular communication from a developer to their community is crucial in early access, and it does place additional responsibilities/demands on the team. However, we felt it was worthwhile.”

Discussion

It is challenging to summarize such an overview, which was developed to provide a first picture of extended prototyping through an ID lens. This effort follows the call to look at mainstream trends in digital entertainment to stage a more effective GD for learning (Egenfeldt-Nielsen, 2007). It can be argued that extended prototyping seems to work as a specific type of formative evaluation. Indeed, alpha and beta testing have already been described in these terms (Smith and Ragan, 1999), and the techniques of field test and operational tryout (Smith and Ragan, 1999; Tessmer, 1993) share several similarities with it (e.g. the almost final state of the materials, the range of testing, the context of application). However, open development seems to be characterized by bigger audiences and a relevant social dimension, which developers are not always able to manage.

Answering RQ1, it is worth beginning by stating that testers considered extended prototyping an engaging opportunity. A social cognition fueled by a perceived efficacy (Bandura, 1997) seems a relevant driver, especially considering that instrumental expectations (Wigfield and Eccles, 1992) (e.g. of employment) are irrelevant and the promotional dimension of open development has been highlighted. Along this line, a strong motivation for attending closed and open testing is to try the product before purchase, and the recruited subjects tend to buy downloadable content, i.e. additional upgrades (e.g. new missions, weapons, esthetics), considering it compatible with extended prototyping. In essence, they are heavy consumers and thus potential final users of the tested product; it is not surprising that the majority feel similar to the average gamer and play for fun and challenge. This result is aligned with ideal ID formative evaluations, which tend to recruit normal learners rather than expert ones.

In allowing audiences to try, debug, and contribute to an evolving product, open development raised positive feelings. Indeed, being involved is a relevant reason for participating, and the related motivational design relies on a passionate and analytical predisposition toward a system to improve. This is especially true for closed testing and Steam EA, which usually let players affect core game features. A correlation between commitment and perceived impact occurs, indicating that online audiences may want to be involved with tasks aside from mere escapism. Open testing and Kickstarter are perceived less in this way; the former is considered a sort of stress test (e.g. for game servers), while the latter relies on an economic investment and thus a variable involvement (often just waiting for the final release with marginal online activities). In ID terms, this means that, for testing an educational product/plan, testers need to be selected for their commitment and analytic attitude. Therefore, and addressing RQ2, designers are asked to be up to this exchange by providing constant feedback and listening to bottom-up suggestions.

In comparison with other types of formative evaluation, extended prototyping is affected by a particularly active role of the testing community, which demands to be informed about the outcome of its own feedback. Therefore, (game/instructional) designers are also asked to explain, motivate, and reflect on the consequent implications within the development. Being forced to take a stand in this way (a pressure exacerbated by the open development’s online dimension in terms of assessment itself and/or debating settings like forums and social media) may entail a more aware and iterative ID, which is an essential step to take for current instructional designers (Gibbons et al., 2014). Moreover, it can prepare this field for the challenges and pitfalls of online and blended learning, which is increasingly social and interconnected. Finally, it entails an emphasis on the role of the instructor per se, which has to be legitimized and explained, especially when changes and updates occur.

Despite their own peculiarities, the four types of extended prototyping share this point. In ID terms, the challenge is to find a potential audience with notions of the targeted field but in need of advancement (conversely, the risk is to have a nonsupportive or misleading community). For instance, for an extended prototyping of a graduate course in ID, a productive choice would be to recruit students who have already dealt with it in their undergraduate program. Otherwise, older students can be asked to contribute to new and updated editions of a course according to their final assessments. The same process may be applied to professional development with specific formative sessions related to targeted tasks (referring to the ARCS model; Keller, 1987) for testing broader interventions. Therefore (and referring to Bourassa’s words), ID experts must constantly follow the participants and explain the rationale of their own choices. It entails a matter of coherence, self-honesty, and continuity, which should always be followed and preserved. A tacit agreement occurs between designers and testers; breaking it weakens their involvement and trust. In addition, this engagement entails commitment and satisfaction, and promotes transparency, i.e. one of the pros reported by participants. Echoing Kemp and UbD models about students’ profiles and expectations, these testers should always know which specific audience will be targeted with the final product. If we want to overturn the exchange from GD to ID and reflect on how the latter can support the former, these insights fit into models like ARCS and Universal Design for Learning (UDL) (CAST, 2011), which stress the need to provide clear goals, milestones, and rubrics during the instruction for making its effectiveness more inclusive and significant. Several of their guidelines (e.g. UDL representation’s principles, the importance of Relevance while explaining changes, updates, and top-down decisions) can assist game developers in handling the communication with their testers. Indeed, a successful extended prototyping seems to rely on the concrete behavior of the producer rather than on its credentials, which count but are informed by previous conduct (in ID, a sort of instructional history). Online learning may make this process particularly challenging due to the extended range of audiences and feedback, and hiring a community manager can be an effective solution (see Bourassa’s strategy) to filter suggestions and interact with student testers.

Finally, community seems a crucial parameter according to the results related to the tag “gamer,” the tendency to share game-related information with peers, and its importance in shaping the testing experience. Therefore, in ID the suggestion is to recruit cohorts of students/employees with shared experiences and objectives, and facilitate their mutual exchange. Addressing potential scenarios, extended prototyping can be deployed by making instructional milestones or first modules accessible in order to inform the whole educational process in the long term. This would allow a better knowledge and an improved quality rather than waiting for the first rounds of final assessments, which may come too late to prevent significant critiques. In other words, pilots may be shared with selected individuals, whose involvement can provide formative rather than summative evaluations (Rossett and Sheldon, 2001) and trigger their commitment in promoting the final product; even peer tutoring/mentoring might be considered (Bowman-Perrott et al., 2013) as a potential addition. Collaboration and mutual understanding are productive instruments to enhance learning, social skills, and students’ behavior beyond top-down instructions (e.g. Jeong and Chi, 2007; Klavina and Block, 2008). Extended prototyping can provide not just a significant testing opportunity, but also a chance to improve collaborative thinking, knowledge promotion, and empathy among students (Stahl, 2006; Topping, 2008) in places like forums, chats, and virtual environments. These reflections should be kept in mind when technology issues are at stake, from improving an online infrastructure to refining the interface design of learning-oriented software.

Regardless, this study presents three main vulnerabilities, which lead to possible future developments. First, its orientation is explorative and aims to outline a first overview of the topic. Further and more structured inquiries are required to take a step forward, and pilot studies are necessary to evaluate open development’s feasibility and scope. Second, the sample recruited is small and unequally distributed across the subgroups and provides just one perspective on the topic. Developers and platform owners should be addressed to get a complete picture of this phenomenon. Bourassa was contacted to start covering this dimension, which might provide further insights and indications. Third, this article is characterized by a speculative orientation. By depicting a potential bridge between two domains, it could raise mixed reactions due to the explorative findings gathered and the leap from game audiences to learners.

Despite these deficiencies, the implications are noteworthy for both researchers and practitioners. The former group now has a starting point to develop in either the GD or ID field. The latter can take advantage of an array of insights and suggestions to follow in instruction and/or game development. Extended prototyping is increasingly popular and it will probably flourish beyond the game industry (e.g. Kickstarter hosts projects of several types). Moreover, it is becoming a familiar practice for new generations, who might be more receptive to its use in learning. Finally, it suggests strategies and malleable factors in designing and handling concrete applications, from management to community, which may support ID projects and performance systems on several levels. The desired outcome of this article is to offer a preliminary step in this direction by contributing to the exchange between ID and GD, which shows a promising potential but requires ongoing attention to be effectively pursued.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.