Abstract

Summary

This paper presents a systematic evaluation of the Core Medical Training Curriculum in the UK. The authors critically review the curriculum from a medical education perspective, drawing mainly on the medical education literature as well as their personal experience of this curriculum. They conclude with practical recommendations and suggestions which, if adopted, could improve the design and implementation of this postgraduate curriculum. The systematic evaluation approach described in this paper is transferable to the evaluation of other undergraduate or postgraduate curricula, and could be a helpful guide for medical teachers involved in the delivery and evaluation of any medical curriculum.

Introduction

The core medical training (CMT) programme is a 2-year programme consisting of 4–6 placements in medical specialties, which is designed to deliver core training in general internal medicine and acute internal medicine by acquisition of knowledge, skills and attitudes.

The CMT curriculum was reviewed and rewritten by the Joint Royal Colleges of Physicians Training Board in 2009 and has since undergone a major review in 2011 and a minor review in 2012. 1

In this paper, we critically evaluate the current CMT curriculum from a medical education perspective and identify both its strengths and weaknesses, which are not always apparent to trainees, medical teachers and curriculum designers. Our evaluation results in practical recommendations which are relevant to all parties involved in the design and implementation of the curriculum. In addition, our systematic evaluation approach is transferable to the evaluation of other undergraduate or postgraduate curricula and could be a helpful guide for medical teachers involved in the delivery and evaluation of any medical curriculum.

Methods

Relevant literature was identified through a search of the online databases (MEDLINE, EMBASE and the Royal College of Physicians of London online library) using a number of keywords (e.g. curriculum, evaluation, workplace-based assessments, ward rounds) either alone or in combination. A range of publications was retrieved, including research reports, editorials, letters and books, and a subset of 29 relevant articles and textbooks was used for our reference list. The majority of the works cited are original articles dating from 1995 onwards. However, pioneering and seminal articles on curriculum development published earlier have also been included.

Characteristics of the CMT curriculum: A medical education perspective

A curriculum is not just a syllabus. According to Harden ‘a curriculum is a programme of study where the whole is greater than the sum of the individual parts’. 2 In his ‘Ten questions’ seminal paper on curriculum development, he describes a ‘wider curriculum’ which does not include only content and examinations, but also the aims, learning methods and the organisation of content. 3 Genn gives an even broader definition of curriculum which includes ‘everything that happens in relation to the educational programme’. 4 In this evaluation, curriculum will be considered in terms of its wider rather than its narrow form.

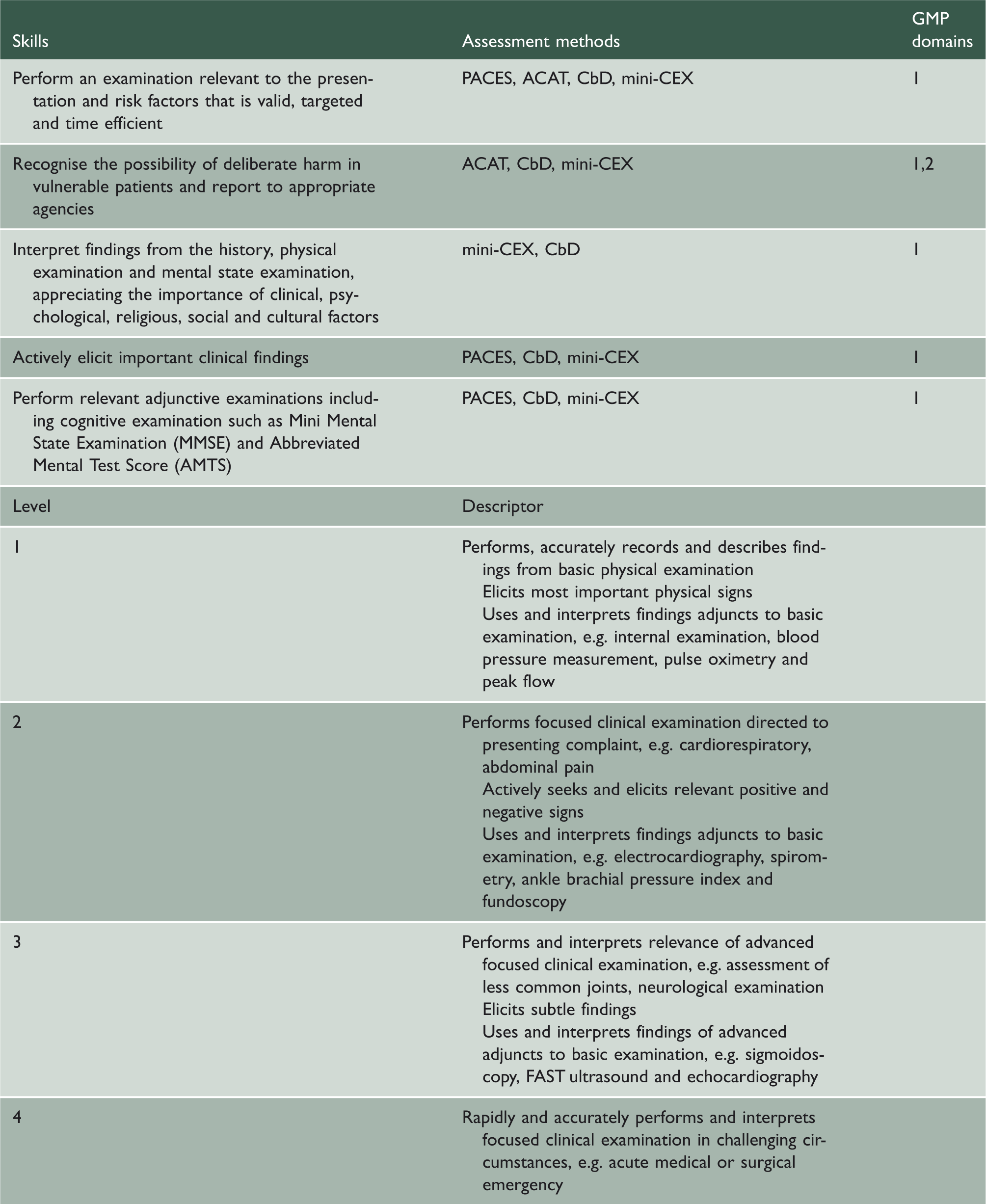

Table 1. Skills, assessment methods and GMP domains relating to clinical examination in the CMT curriculum.

In addition, the CMT curriculum is an integrated curriculum. Harden described 11 steps in the level of integration from isolation to transdisciplinary teaching and learning, which are presented in the form of an integration ladder. 6 Integration can be achieved both horizontally and vertically. 7 For example, the clinical skills in assessing and managing a patient with chest pain require good knowledge of parallel specialties, such as cardiology (to diagnose cardiac chest pain) and respiratory medicine (to diagnose pleuritic pain). In addition, it requires knowledge of disciplines traditionally taught in different phases of the curriculum, such as physiology (to interpret the blood gas results) and radiology (to interpret the chest radiograph).

The CMT curriculum (as a syllabus) is based mainly on statements of objectives and can therefore be viewed as following a product approach. Kelly described three major ideologies: curriculum as content and education as transmission, curriculum as product and education as instrumental, and curriculum as process and education as development. 8 As shown in Table 1, the trainee should be competent in specific skills, such as performing a relevant clinical examination. This approach is useful in ensuring that core medical trainees will have achieved certain skills or targets by the end of the programme. Although it may seem that trainee performance can be easily measured by the meeting of objectives, this is sometimes difficult. Performance in certain skills, such as procedural skills, may be easy to assess, but complex cognitive skills, such as clinical judgement across a variety of clinical scenarios and settings, are harder to measure accurately in this way. Furthermore, with this approach, unanticipated learning is overlooked and the achievement of the objectives may be viewed as a tick-box exercise by the trainees. This in turn may lead to a surface rather than a deep approach to learning, as the main aim of the trainees becomes the satisfactory completion of specific tasks that will be sufficient for ‘sign-off’ by the Annual Review of Competence Progression (ARCP) panel or their educational supervisor. Trainers, too, may misunderstand the purpose of the assessment process and view it as a tick-box exercise. They may complete a number of workplace-based assessments for their trainees so that the trainees achieve a satisfactory outcome in the ARCP reviews, instead of using these as a tool to provide honest and useful feedback.

Curriculum aims and objectives

The aim of the CMT curriculum is that ‘trainees completing core training will have a solid platform from which to continue into specialty training’. This requires the acquisition of competencies in four main domains, which are specified by the General Medical Council:

Domain 1: Knowledge, skills and performance

Domain 2: Safety and quality

Domain 3: Communication, partnership and teamwork

Domain 4: Maintaining trust

The CMT curriculum also includes learning objectives for core trainees in a number of different areas, which are divided into the following broad categories: common competencies, symptom-based competencies, system-specific competencies and procedural competencies.

The learning objectives could be analysed in terms of completeness, appropriateness, soundness and feasibility. 9

The learning objectives in terms of clinical skills are appropriate with regards to completeness, as these cover the vast majority of presentations that a core trainee would encounter and would be expected to deal with. These objectives are listed with the four main domains that have been specified by the General Medical Council, thus meeting the criterion of appropriateness. In addition, they meet the criterion of soundness, because they adhere to the principles of:

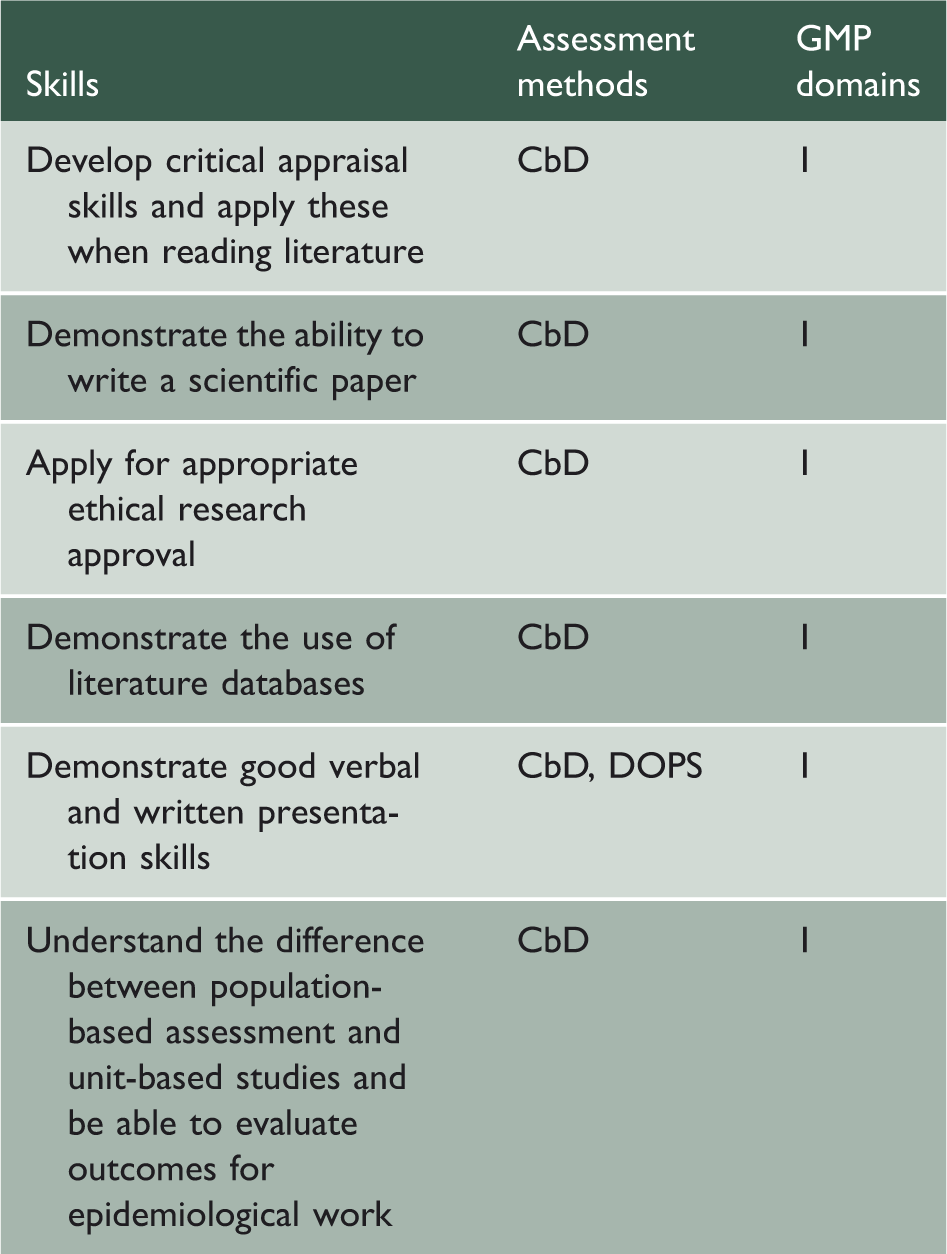

Readiness: They are appropriate to the experiential background of the trainees who have completed the Foundation Programme; Motivation: They are related to the needs of the trainees in their preparation for specialty training; Retention: Learning outcomes, such as history taking, are likely to be permanent. In addition, the spiral nature of the curriculum also helps with the retention of learning outcomes, as the same topics are revisited; Transfer: Learning outcomes, such as the management of anaphylaxis using an ABC approach, are also applicable to the management of a shocked patient. Skills, assessment methods and GMP domains relating to ethical research in the CMT curriculum.

Finally, these objectives meet the criterion of feasibility, as they can generally be achieved over a 2-year period. Johnson et al. in their pilot survey of CMT trainees and supervisors in the Mersey Deanery concluded that the CMT curriculum objectives are feasible. 10

However, this may not be the case for a few objectives, such as the skills required for ethical research, which include critical appraisal skills, the ability to write a scientific paper, apply for ethical approval and perform a literature review (Table 2). For example, a CMT trainee in a district general hospital may not have the opportunity to develop the necessary skills to write an article or submit applications for ethical approval due to lack of research facilities.

Learning

A wide range of opportunities for learning and learning resources available to core trainees are described in this curriculum, including learning with peers, work-based experiential learning, formal postgraduate teaching, self-directed learning and formal study courses.

In his seminal paper, ‘Approaches to Curriculum Planning’, Harden mentioned that ‘the educational value of a method is dependent as much on how the method is used, as on the choice of the method’. 11 With regard to teaching clinical skills, the quality of the teaching is of significant importance. Claridge reported that ward rounds are a good opportunity to learn clinical skills, but their educational value is limited by lack of time, absence of team consistency and increased workload. The learning opportunities for procedural skills may also be limited by the increase in the number of trainees and by the introduction of the European Working Time Directive, which has led to a reduction in the length of clinical shifts. 12 Finally, formal courses, such as simulation courses in clinical skills labs, may be useful. In particular, simulation is considered an effective and safe way of teaching clinical skills. 13

There are three levels of a curriculum that can be recognised in an education programme (Prideaux): 14

The planned curriculum is what is intended by the designers;

The delivered curriculum includes what is taught by the teachers. This might be different from the planned curriculum. For instance, consultant-led ward rounds are considered an invaluable learning opportunity in the planned curriculum, but this may not be the case in practice;

The experienced curriculum is what is learnt by the students. For example, consultant-led ward rounds may be very educational but the trainees may be focused on getting the jobs done, rather than listening to the consultant teaching, thus missing out on the available educational opportunities.

Stanley 15 made useful recommendations on how to improve the educational value of ward rounds, which could be helpful in maximising the learning opportunities for CMT trainees. For example, pre- and post-ward round sessions, either in a medical or a multidisciplinary environment can provide opportunities for learning by discussing diagnostic and management options, receiving honest feedback and learning from other healthcare professionals. In addition, dedicating time to teaching and learning during the ward round, even in the form of a ‘3-minute round-up’, may be very helpful. 15 Dewhurst acknowledges the time constraints on post-take ward rounds and makes some interesting suggestions which could improve their educational value in a timely manner. He mentions that trainee involvement by presenting cases to the consultant during the round, asking questions and obtaining feedback is important, instead of just focusing on the mechanics of the ward round to ensure that it runs smoothly. In addition, consultant involvement by asking trainees questions, ‘thinking out loud’ so that trainees can listen to their clinical thought processes, and acting as a role model, is equally important. Learning from role models occurs through observation and reflection and is a complex mix of conscious and unconscious activities. 16

Assessment strategy

A number of assessment methods are used to assess clinical skills in the CMT curriculum: mini-clinical evaluation exercise (mini-CEX), case-based discussion (CbD), direct observation of procedural skills (DOPS) and multisource feedback (MSF). Other assessment techniques, apart from workplace-based assessments, include reports by supervisors and traditional examinations.

The mini-CEX, in which trainees are observed performing clinical tasks, such as taking a focused history, is a valid and reliable tool, although its reliability depends on sufficient sampling. 17,18 In a systematic review comparing clinical skills assessment tools for medical trainees, the mini-CEX was found to have the strongest validity evidence. 19 In addition, one of its main strengths as an assessment and learning tool is that it can be used to evaluate a wide range of skills in a timely manner. 20 However, different levels of training among assessors in utilising this assessment tool can have an effect on its reliability and discriminatory value. 21

The CbD, in which the trainee is assessed in one or more aspects of a case, such as clinical assessment, investigation or treatment, is also considered a valid tool. 17 The CbD is considered by trainees to be an educationally valuable assessment, which can be helpful in promoting reflective learning, improving decision-making skills and effecting a change in practice. The CbD can be particularly useful if both the supervisor and trainee are committed to the educational aspects of the assessment, the case is appropriate for the trainee's level and the assessment is undertaken in a quiet environment with sufficient time for discussion and feedback. 22

The DOPS focuses on evaluating the procedural skills of postgraduate trainees by observing them in the workplace setting. 18 The DOPS has been found to be reliable and is generally acceptable to medical trainees. 23 In other specialties, however, such as anaesthetics, it has been considered to be a tick-box exercise that does not necessarily reflect trainee competence. 24 In a systematic review, the authors concluded that there was no evidence that DOPS assessments lead to objective performance improvement, although subjectively trainees felt that direct observation could help them improve their clinical skills. 25

The MSF, which represents a systematic collection of performance data and feedback for an individual trainee, has been found to be feasible and reliable for formative purposes. 17,26 However, caution is needed when interpreting and acting on the results of this assessment tool, because ratings are often influenced by characteristics of the assessors and the context in which feedback is provided. For example, respondent characteristics, such as the assessor’s age and ethnicity, the rater’s professional group (medical/non-medical), the length and context of the rater’s working relationship with the trainee and the rater’s familiarity with the trainee’s practice, have been found to influence responses. 27 In addition, ratings are usually highly skewed towards favourable impressions of doctor performance, 27 and occasionally there is a lack of useful comments from assessors, which limits the ‘catalytic effect’ of this assessment tool; that is, its potential to lead to change in doctors’ practice. 26

Some other limitations of these assessment methods are that their usefulness depends on the quality of the assessor (including knowledge of the process, lack of time or personal interest) and also the fact that clinician educators are often reluctant to provide honest feedback, particularly in the face of poor performance. 10,17

The alignment of learning objectives, teaching methods and assessment may be satisfactory in some cases but inadequate in others. For example, procedural skills, such as central line insertion, are specific learning objectives which can be taught in a clinical skills lab and then practised on patients under direct supervision. They can also be assessed with a DOPS assessment. However, alignment is not adequate for more complex skills, such as clinical reasoning. In this case, the objectives are broader and cannot be taught in a skills lab, but can probably be learnt during the trainee’s clinical training by observing the way senior clinicians reach decisions. The assessment of these skills can be even more difficult, as they need to be assessed in a variety of different settings and by many different assessors, rather than with a single CbD assessment.

An important consideration, in order to improve the usefulness of the workplace-based assessments, would be to encourage busy clinicians to engage with these assessment methods by providing them with protected time and thus recognising their educational contributions. Another strategy would be to identify a core group of faculty whose only educational job is trainee assessment and feedback. Faculty training is also important in allowing assessors to provide useful feedback. 17 In addition, trainees could also improve the educational impact of assessment by encouraging their supervisors to provide specific and honest feedback and also by reflecting on the feedback they have received and creating an action plan, which they regularly review. 17 Finally, given the practical issue of time constraints in a busy environment, it would be helpful to undertake the workplace-based assessments in a planned manner by organising meetings in advance at regular intervals and by ensuring that assessments are performed in a timely manner, rather than at the end of each rotation. This will improve the quality of the feedback and allow trainees to revisit their action plans, thus increasing the opportunities for reflective learning. 22

Evaluation strategy

Evaluation is an essential quality assurance process, which has been described as analogous to clinical audit and enables the curriculum to evolve and constantly develop in response to the needs of trainees, institutions and society. 28

A number of methods exist that are used locally to evaluate the delivery of the CMT curriculum: trainee feedback, ARCP outcomes, performance in membership exams and outcomes in progression to specialty training. These evaluation methods reflect a shift from a process-oriented to an outcomes-oriented system of education, in which the measurement of educational outcomes based on

Some of these evaluation methods have limitations. For example, performance in exams may provide a misleading measure of the effectiveness of a course. 3 The trainees’ achievement may be despite the teaching rather than because of it. If the quality of the teaching is poor, then trainees are encouraged to study harder and this may lead to better performance. In addition, ARCP outcomes may not always be a useful evaluation measure of a curriculum. They simply reflect the fact that trainees have achieved, for example, a satisfactory number of assessments, a satisfactory attendance record in CMT teaching sessions and an adequate number of clinics, but they do not necessarily indicate that the quality of the CMT teaching was good or that the assessments were done properly and were beneficial for the trainees. On the other hand, trainee feedback is a useful way of evaluating the curriculum.

Malik and Malik suggest that evaluation should be based on student and staff feedback at regular intervals, external examiner comments and students’ performance in assessment exercises. 7 Carr, Celenza and Lake, as well as Morrison, also agree that it is important to develop transparent evaluation by collecting feedback broadly from students, trainers and staff within the clinical setting and that closing the loop by ensuring feedback is provided to all stakeholders is equally important. In addition, a useful way of evaluating and improving the quality of the teaching is peer observation of teaching. This can be used both formatively and summatively to evaluate the effectiveness of a teacher. 28,30

One of the strengths of the evaluation strategy of the CMT curriculum is that it is based on a number of different methods. However, it would be helpful if there were a systematic way of collecting feedback at regular intervals from trainers as well, including consultants, educational and clinical supervisors and training programme directors, whose opinions and comments would be useful in such an evaluation. This could be achieved by collecting feedback through online surveys or by organising meetings at the Deanery, to which consultants would be invited and at which they would be encouraged to give feedback to curriculum designers. Additionally, external examiners, such as training programme directors in institutions based in other countries, would probably be able to contribute usefully with their comments to this evaluation.

Conclusions

In conclusion, the CMT curriculum is a postgraduate curriculum that can be described as spiral, integrated and product-orientated. Some action points identified in this review that could be helpful to those implementing the curriculum include the following:

Modifying the structure of ward rounds. For example, this could be improved by organising pre- and post-ward round sessions, in which discussion accompanied by feedback could take place in a quiet environment, or by dedicating time for teaching during the ward round;

Offering protected time to trainers in order to be able to carry out their educational activities;

Training faculty in order to be able to assess trainees appropriately and provide useful feedback. Current weaknesses amongst faculty who assess include the fact that the quality of the feedback is often poor, that there is reluctance to give honest feedback especially in the face of poor performance, that faculty’s assessment of trainee performance may not be accurate, that feedback is sometimes not provided immediately after the assessment event and does not always translate into an action plan for the trainee; 17

Evaluating the curriculum by collecting information from many different sources, including feedback from supervisors and external examiners. This could be easily collected with online surveys, which would not take a significant amount of time to complete, but could be extremely useful in highlighting issues relating to the curriculum.

The implementation of some of these suggestions will undoubtedly be influenced by real-world parameters, involving time, resources and available expertise. However, some recommendations described earlier in this paper, such as those relating to the organisation of ward rounds and workplace-based assessments, could be implemented successfully despite the time pressures that clinicians face. This requires a degree of motivation from both trainees and medical teachers, as well as some preparation and organisation. For example, planning meetings in advance at regular intervals for the completion of workplace-based assessments or offering a ‘3-minute round-up’ at the end of the ward round to summarise some learning points are only a few of the interventions described in this paper that could improve the educational value of ward-based teaching without the need for additional resources. These recommendations, if implemented successfully, will result in a more highly trained, competent physician workforce and therefore institutions should invest sufficient resources into faculty development and curriculum planning.